1. Introduction

The face has long been considered a window onto our emotions [1]. Facial expressions are regarded as one of the most natural and efficient cues enabling people to interact and communicate with others in a nonverbal manner [2]. With the systematic analysis of facial expression [3], the link between facial expression and emotion has been demonstrated empirically in the psychology literature [1,4]. Decades of behavioral research have revealed that facial expression carries information about a wide range of phenomena, from psychopathology to consumer preferences [5,6,7]. Recent advances in electronics and computational technologies allow facial expressions to be recorded at increasingly high resolutions and have improved analysis performance. A better understanding of facial expressions can contribute to human-computer interaction and to emerging practical applications that employ facial expression recognition, such as education, entertainment, interactive games, clinical diagnostics, and many others.

When and how to capture spontaneous facial expressions, as well as the methods to interpret associated mental states and the underlying neurological mechanisms, are growing research areas [8,9,10]. In this study, we extended our previous work [11] to investigate the relationship between spontaneous human facial emotion analysis and brain signals generated in reaction to both static (image) and dynamic (video) stimuli. We jointly analyze the affective states by using multimodal brain activity measurements. The facial emotion recognition method utilizes image processing, pattern recognition, and classification to decode the universal emotion types [12]. Namely, these primitive emotions are anger, disgust, fear, happiness, sadness, and surprise [13]. Facial expressions can be coded by the facial action coding system (FACS), which describes an expression through the action units (AUs) of individual muscles [14]. Although facial expression descriptions may be precise, automatic recognition of the emotions behind specific facial expressions from images remains a challenge when context information is unavailable [10]. Some existing image classification methods have achieved high recognition rates for facial emotions on benchmark databases containing a variety of posed facial emotions [15,16]. However, these datasets are built from images of subjects performing exaggerated expressions that are quite different from spontaneous and natural presentations [17].

The neural mechanisms of emotion processing have been a fundamental research area in cognitive neuroscience and psychiatry, in part due to clinical applications relating to mood disorders [18,19]. Researchers have shown that neurophysiological changes are induced by non-consciously perceived emotional stimuli [20]. In particular, the prefrontal cortex (PFC) has been identified as an important region that facilitates emotion regulation and, as a result, functional neuroimaging of the PFC has been used to investigate neural correlates of emotion processing [21,22,23,24,25]. Findings from these studies have suggested that monitoring PFC activity using non-invasive neuroimaging approaches, including functional near-infrared spectroscopy (fNIRS) [26] and electroencephalography (EEG) [27], presents an opportunity for automatic emotion recognition. These tools enable measuring brain activity in natural everyday settings with minimal restrictions on participants during measurement. Hence, they are ideal tools for the Neuroergonomics approach [28,29,30], which focuses on studying the brain in real or realistic settings as opposed to artificial lab settings. Findings from these tools can be used for mapping brain function as well as decoding mental states.

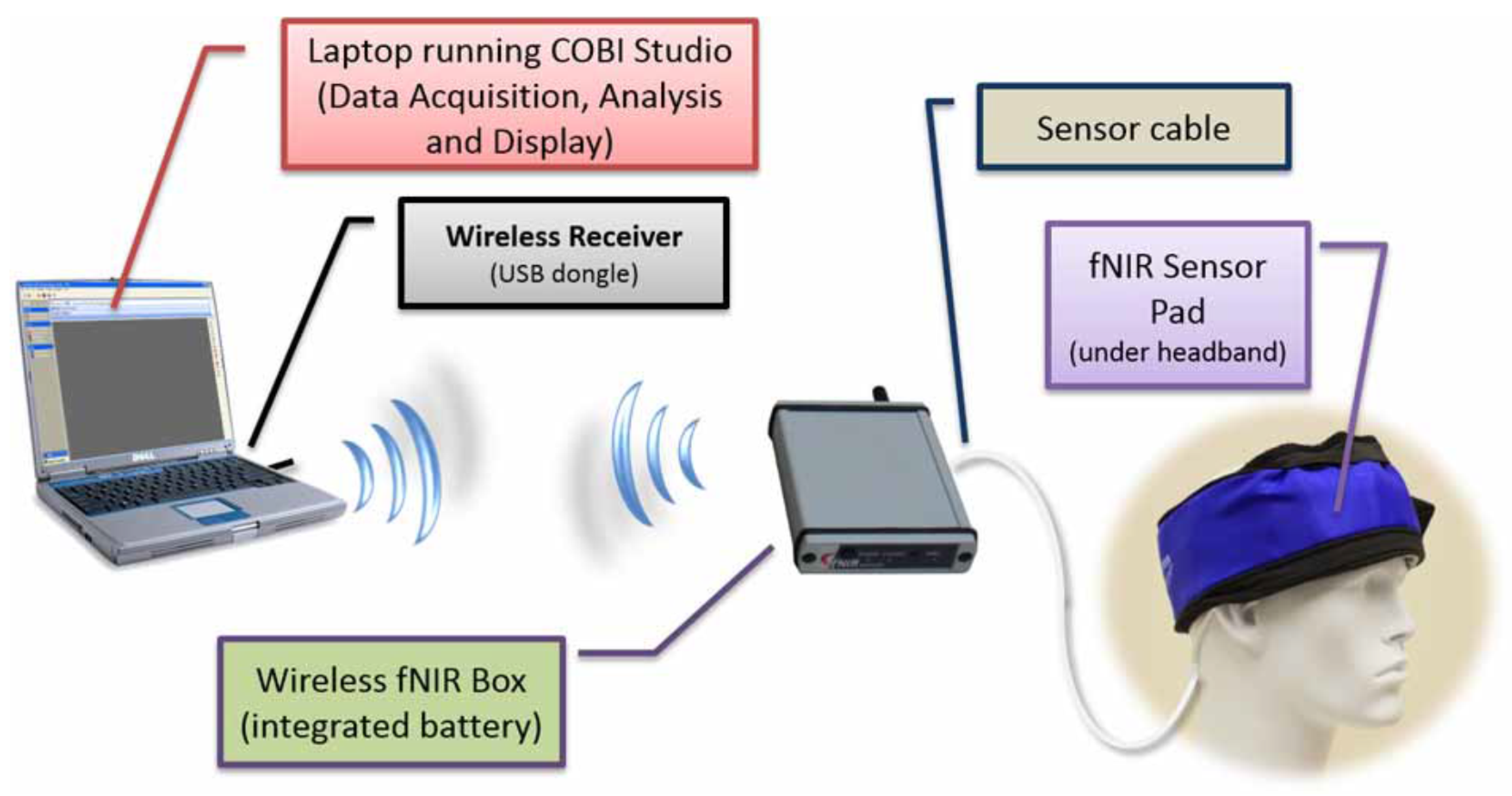

fNIRS is a non-invasive and portable neuroimaging method that can quantify changes in cerebral oxygenated and deoxygenated hemoglobin concentrations using near-infrared light attenuation. fNIRS measures the cortical hemodynamic response similarly to functional magnetic resonance imaging (fMRI), but without limitations and restrictions on the subject such as staying in a supine position within a confined space or exposure to loud noises [31]. As a portable and cost-effective functional neuroimaging modality, fNIRS is uniquely suitable for studying cognition and emotion processing-related brain activity due to its relatively high spatial resolution and practical sensor setup [22,31,32,33,34]. EEG is a non-invasive, portable, and widely adopted neuroimaging technique used to detect brain electrophysiological patterns. It measures electrical potentials through electrodes placed on the scalp. Due to its high temporal resolution, EEG is an ideal candidate for monitoring event-related brain dynamics. Furthermore, EEG has been widely used to investigate the brain signals implicated in emotion processing [35,36]. It has been reported that asymmetric brain activity in the frontal region is a key biomarker observed for emotional stimuli using EEG, fNIRS and fMRI [37,38,39,40]. Davidson et al. proposed that activity differences between the left and right PFC hemispheres, as acquired by EEG, were associated with the processing of positive and negative affect [41]. According to this view of frontal asymmetry, left prefrontal cortex activity is thought to be associated with positive affect, and right prefrontal cortex activity with negative affect [42].
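The frontal asymmetry view described above is often operationalized in the EEG literature as a log-ratio of band power at homologous left and right frontal electrodes. The following is a minimal sketch of that idea, not the procedure used in this study. The use of alpha-band power, the choice of electrode pair (for example F3/F4), the function name `frontal_asymmetry_index`, and the numeric values are all assumptions made for illustration.

```python
import math


def frontal_asymmetry_index(left_alpha_power: float, right_alpha_power: float) -> float:
    """Return ln(right) - ln(left) for alpha-band power at a frontal electrode pair.

    Assumption: alpha power is treated as inversely related to cortical activity,
    so a positive index is conventionally read as relatively greater LEFT frontal
    activity, and a negative index as relatively greater RIGHT frontal activity.
    """
    if left_alpha_power <= 0 or right_alpha_power <= 0:
        raise ValueError("band power must be positive")
    return math.log(right_alpha_power) - math.log(left_alpha_power)


# Hypothetical alpha power values (arbitrary units), for illustration only.
fai = frontal_asymmetry_index(left_alpha_power=3.0, right_alpha_power=4.5)
print(f"Frontal asymmetry index: {fai:.3f}")
```

Under the stated convention, a positive index would be read as consistent with the left-lateralized pattern associated with positive affect, although the mapping from such an index to affect depends on the band, reference, and electrode montage chosen.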

The measurement of neural correlates of cognitive and affective processes using concurrent EEG and fNIRS, multimodal functional neuroimaging, has seen growing interest [

43,

44,

45,

46]. As fNIRS and EEG measure complementary aspects of brain activity (hemodynamic and electrophysiological, respectively), a hybrid brain data incorporates more information and enabling higher mental decoding accuracy [

43] confirming earlier findings [

47]. Specifically, in [

43] we showed that body physiological measures (heart rate and breathing) did not contribute any new information to fNIRS + EEG based classification of cognitive workload. Another recent study reported in [

48] utilized fNIRS and EEG as well as with autonomic nervous system measures, including skin conductance responses and heart rate, for emotion analysis. Authors reported strong effects observed in fNIRS and EEG when comparing positive and negative valence. And, they confirmed prefrontal lateralization for valence. Finally, heart rate didn’t show any effect, but skin conductance response demonstrated a difference although no comparison was done if this adds to EEG or fNIRS. In a more recent study, authors used prefrontal cortex based fNIRS signals recording during emotional video clips to recognize different positive emotions [

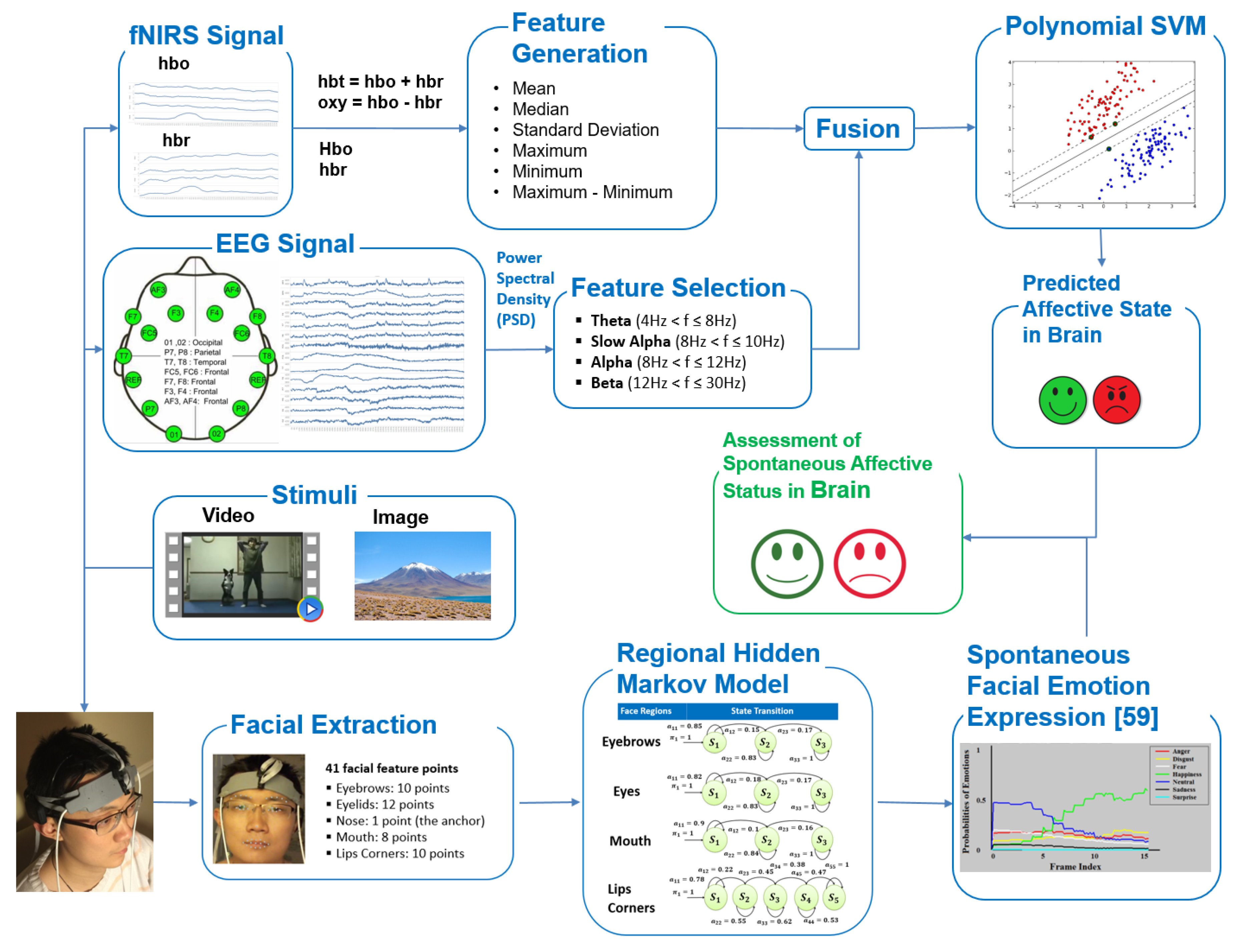

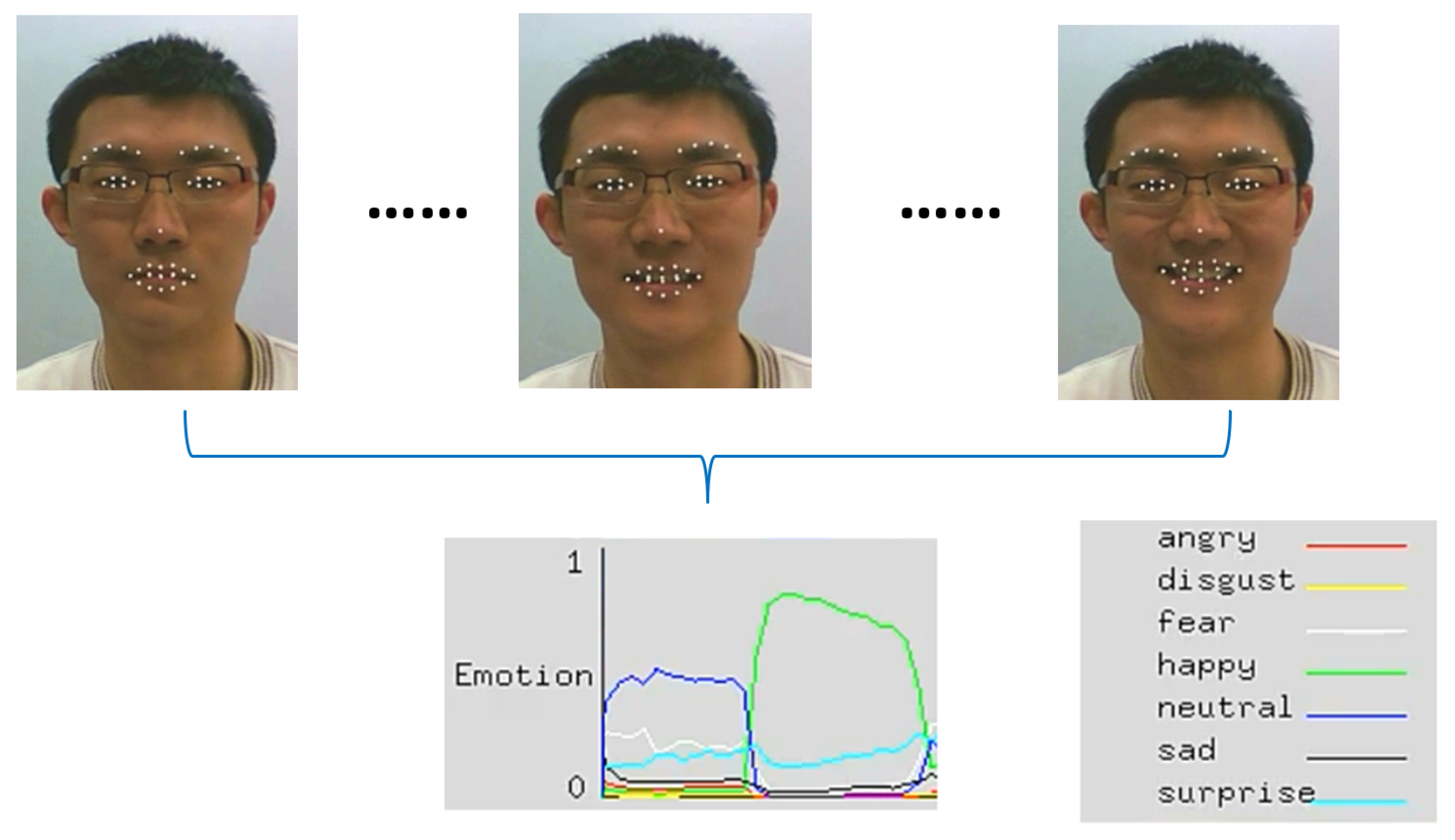

49]. In this study, we investigated spontaneous facial affective expressions and brain activity simultaneously recorded using both fNIRS and EEG modalities for affective state estimation. The block diagram of the system is displayed in

Figure 1.

This paper highlights the benefits of multimodal wearable neuroimaging using ultra-portable, battery-operated, wireless sensors that allow for the untethered measurement of participants and can potentially be used in everyday settings. The major contributions of the paper are summarized as follows:

- To the best of our knowledge, this is the first attempt to explore the relationship between spontaneous human facial affective states and relevant brain activity by simultaneously using fNIRS, EEG, and facial expressions registered in captured video.
- The spontaneous facial affective expressions recorded by a video camera are demonstrated to be in line with the affective states coded by brain activity. This is consistent with Neuroergonomics [30] and mobile brain/body imaging approaches [50].
- The experimental results show that the proposed multimodal technique outperforms methods using a subset of these signal types for the same task.

The remainder of the paper is organized as follows. Section 2 details the approach and methods as well as the experimental design used in the study. Section 3 reviews analytical details and presents the results. Then, the discussion and concluding remarks are given in the last section of the paper.

4. Discussion

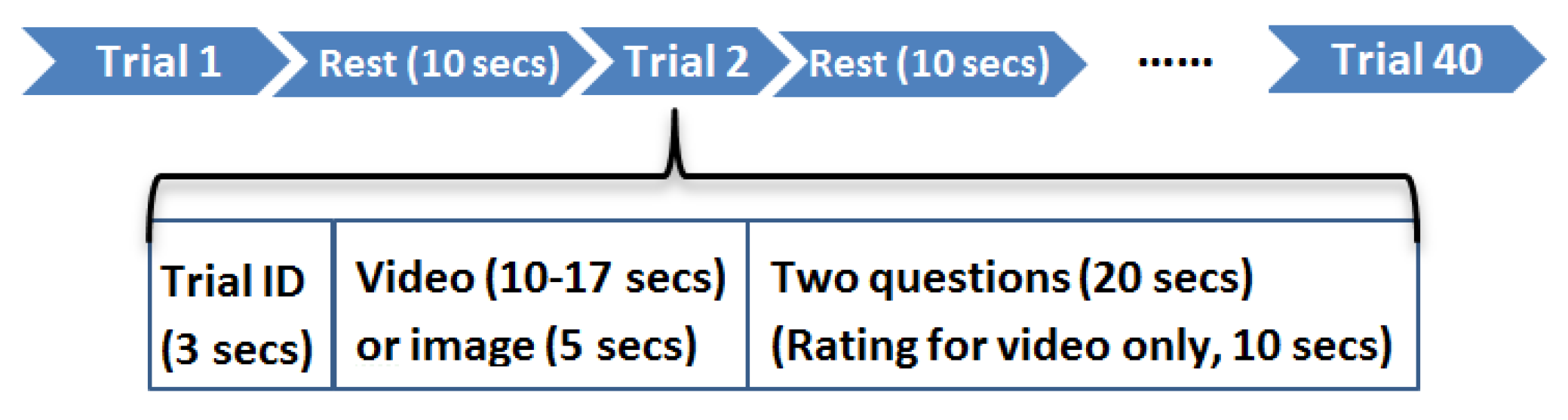

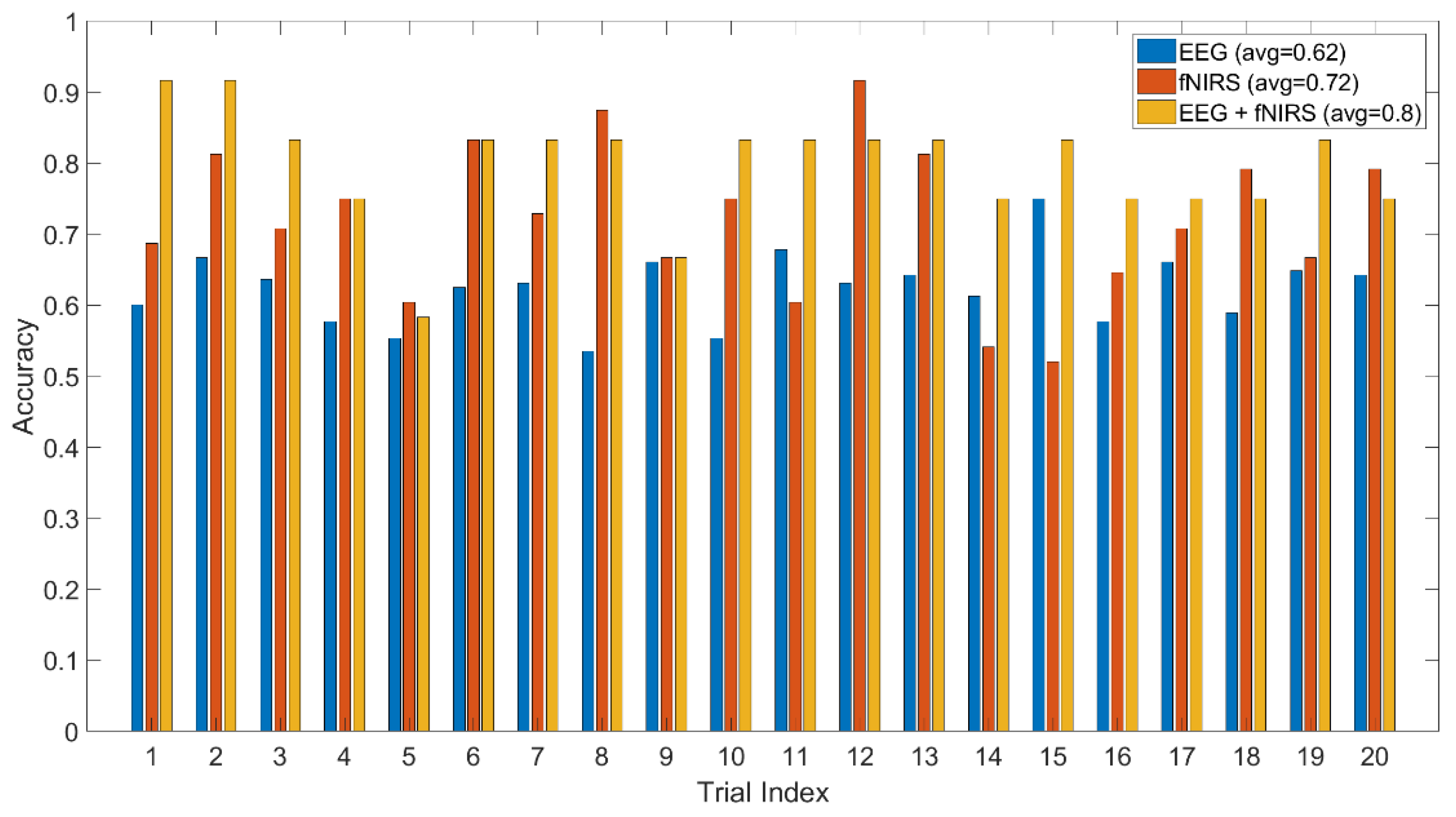

This study provides new insights for the exploration and analysis of spontaneous facial affective expression associated with simultaneous multimodal brain activity, recorded with two wearable and portable neuroimaging techniques, fNIRS and EEG, which measure hemodynamic and electrophysiological changes, respectively. We have demonstrated that affective states can be estimated from human spontaneous facial expressions and brain activity via wearable sensors. The experimental results are founded on the premise that participants have no knowledge of the stimuli prior to the experiment. The spontaneous facial expressions of participants can be triggered by emotional stimuli. Moreover, specific neural activity changes are found in response to the perception of the emotional stimuli. In addition, we found that video-content stimuli more readily induce the participants’ affective states than image-content stimuli. This can be explained by the fact that a dynamic (video) stimulus provides more contextual information than a static (image) one. Compared with static (image) stimuli, dynamic (video) ones trigger enhanced emotion as reflected in brain activity, as also shown in [67].

In this study, the findings were derived from the combined analysis of cortical hemodynamic and electrophysiological signals. The neural activities were measured by two non-invasive, wearable and complementary neuroimaging techniques, fNIRS and EEG. The complementary nature of fNIRS and EEG has been reported in the literature with multimodality studies [

43,

47,

68,

69,

70]. Particularly, both of them have received considerable attention on emotion inference and emotional mapping on brain activities [

21,

41,

71]. The proposed hybrid method for affective state detection jointly using fNIRS and EEG signals outperforms techniques that employ only EEG or only fNIRS. The same results are observed using both video or image-content types of stimuli. The method jointly using fNIRS and EEG features shows 0.8 accuracy (video-content stimuli) and 0.75 accuracy (image-content stimuli) which outperforms the techniques where only one of them is utilized. The results here confirm earlier multimodal fNIRS + EEG studies and highlight the complementary information content in both signal streams [

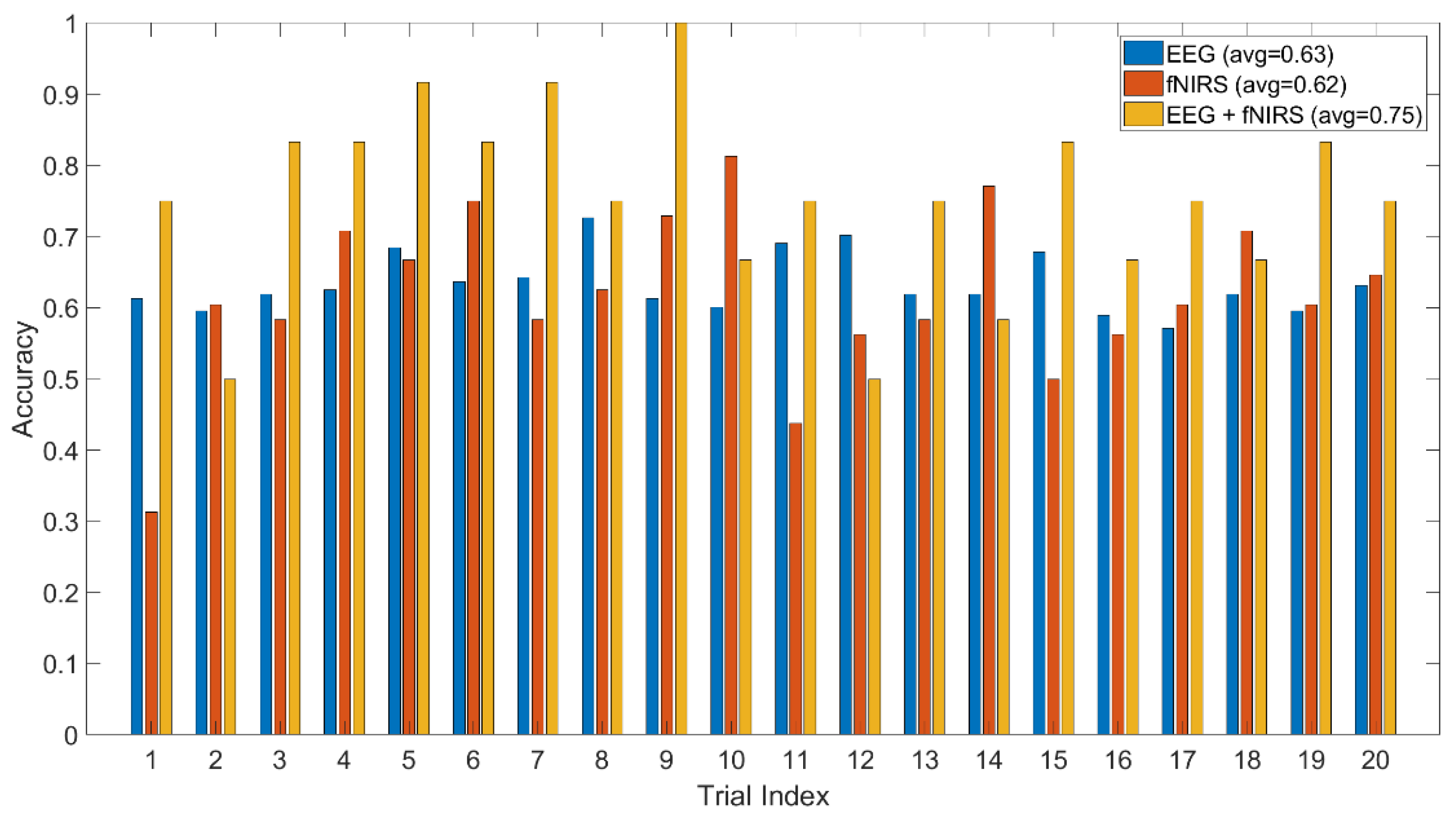

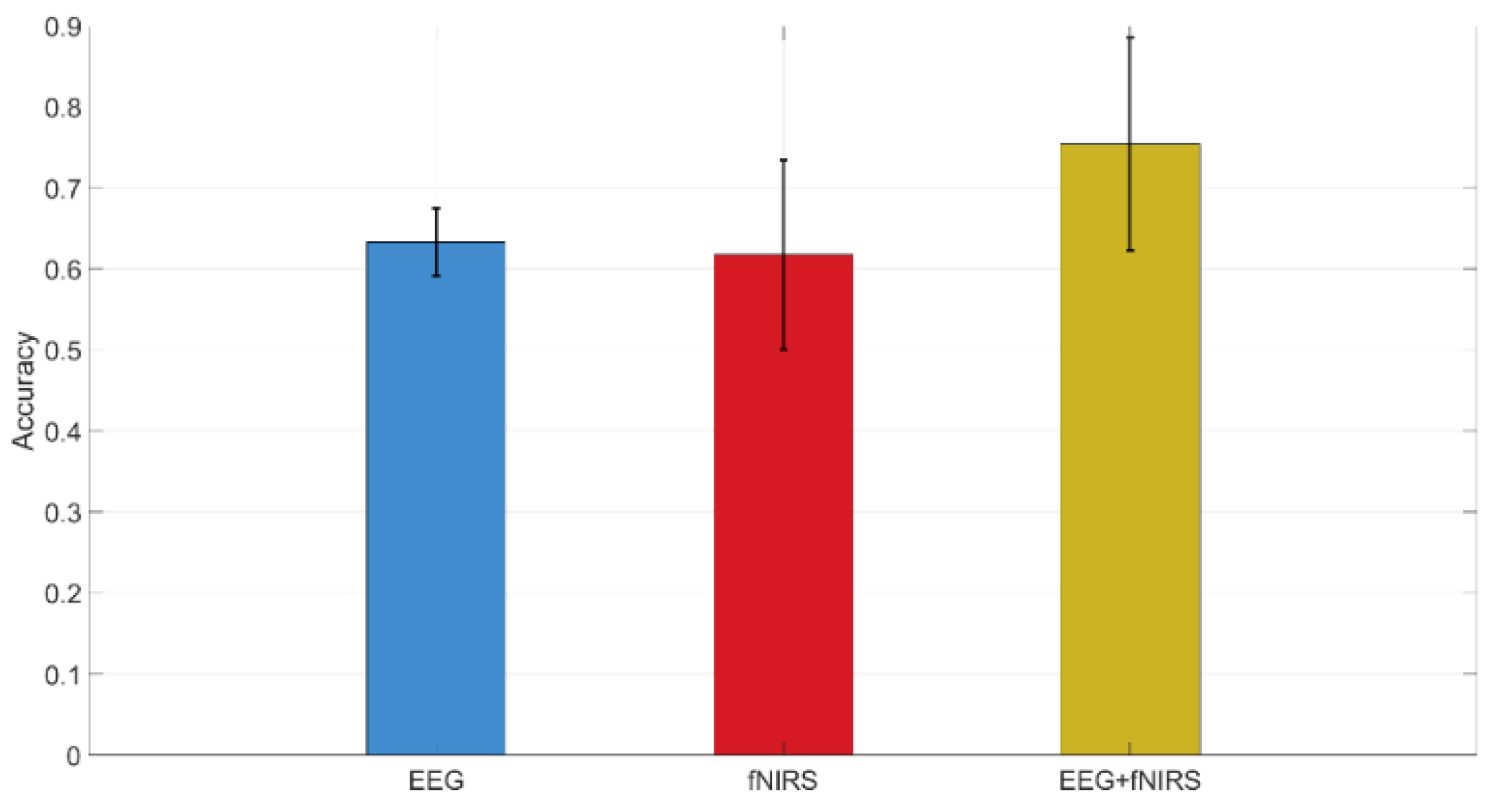

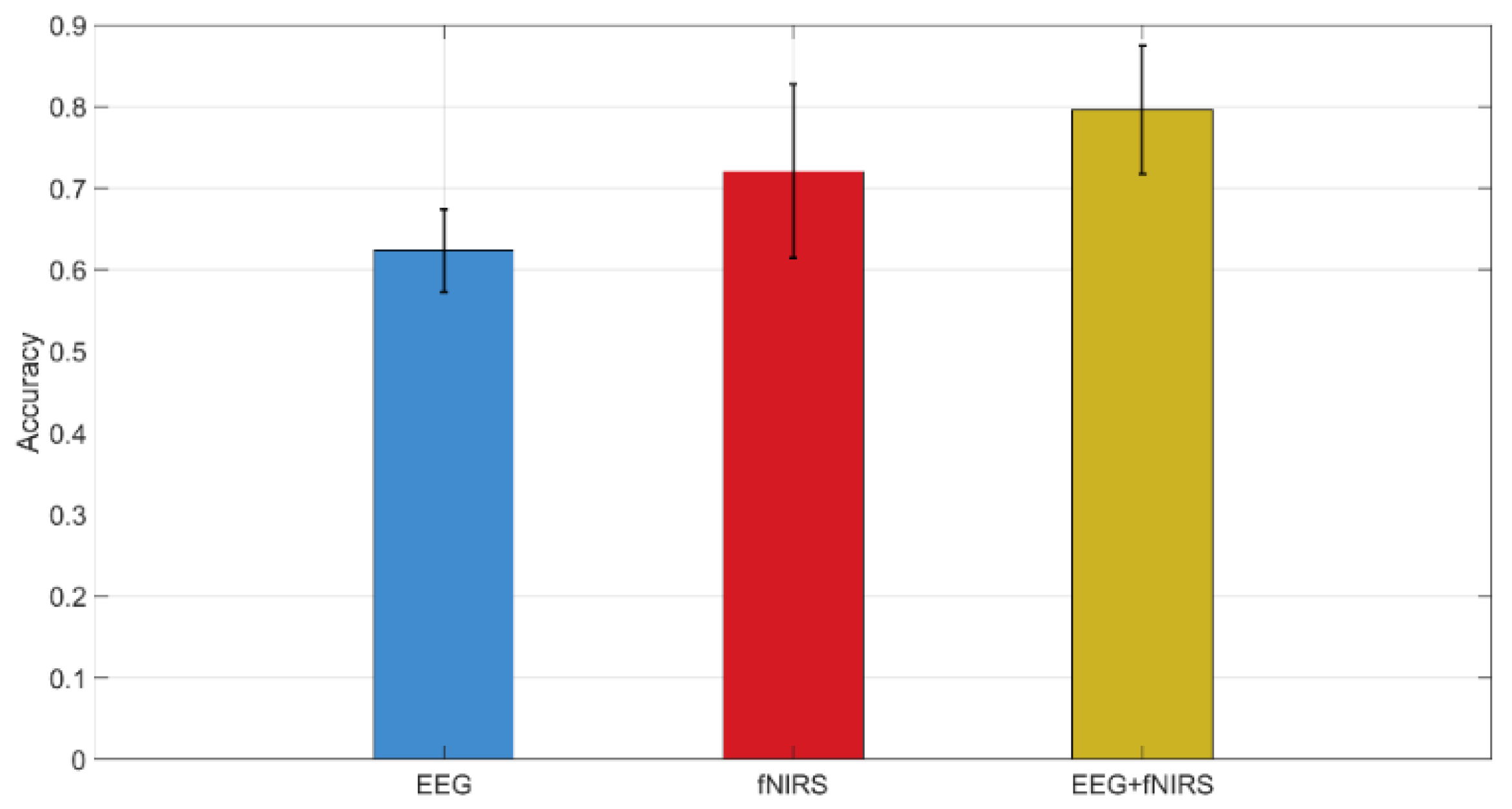

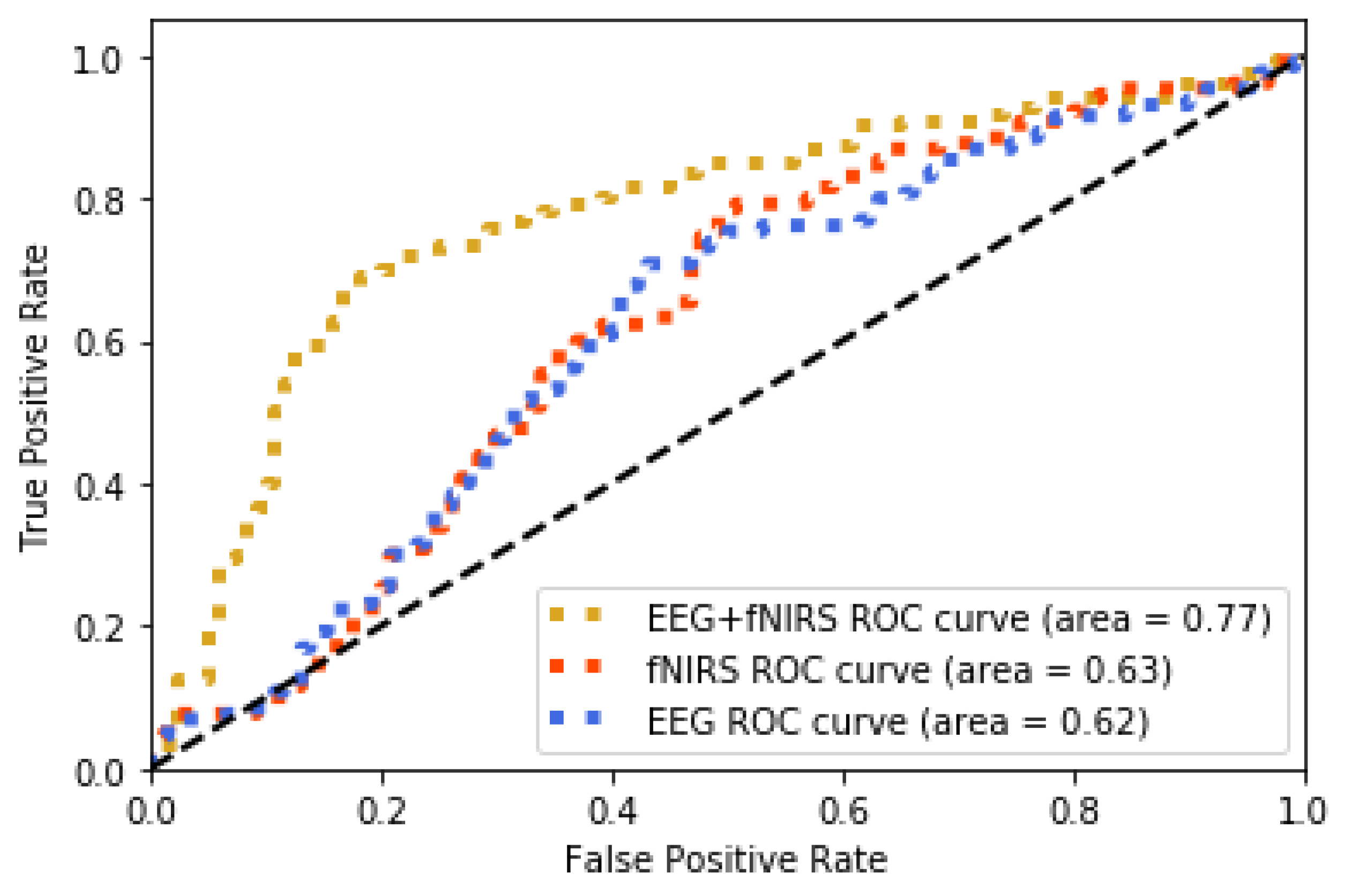

47].
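One common way to use fNIRS and EEG features jointly is feature-level fusion: standardize each modality's features across trials and concatenate them into a single vector per trial before classification. The sketch below illustrates only that general idea. It is an assumption for illustration, not a description of the feature set or classifier used in this study, and the names `zscore_columns` and `fuse_features` as well as the toy values are hypothetical.

```python
from statistics import mean, pstdev


def zscore_columns(rows):
    """Standardize each feature column to zero mean and unit variance across trials."""
    columns = list(zip(*rows))
    # A constant column has zero spread; fall back to 1.0 to avoid division by zero.
    stats = [(mean(col), pstdev(col) or 1.0) for col in columns]
    return [[(value - m) / s for value, (m, s) in zip(row, stats)] for row in rows]


def fuse_features(fnirs_rows, eeg_rows):
    """Concatenate per-trial fNIRS and EEG feature vectors after standardization."""
    if len(fnirs_rows) != len(eeg_rows):
        raise ValueError("both modalities must cover the same trials")
    fnirs_z = zscore_columns(fnirs_rows)
    eeg_z = zscore_columns(eeg_rows)
    return [f + e for f, e in zip(fnirs_z, eeg_z)]


# Toy example: 2 trials, 2 hypothetical fNIRS features and 1 hypothetical EEG feature.
fused = fuse_features([[1.0, 2.0], [3.0, 4.0]], [[10.0], [20.0]])
print(fused)
```

Standardizing per modality keeps features with large numeric ranges from dominating the fused vector. In a real evaluation, the mean and spread would be estimated on training trials only and then applied to test trials.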

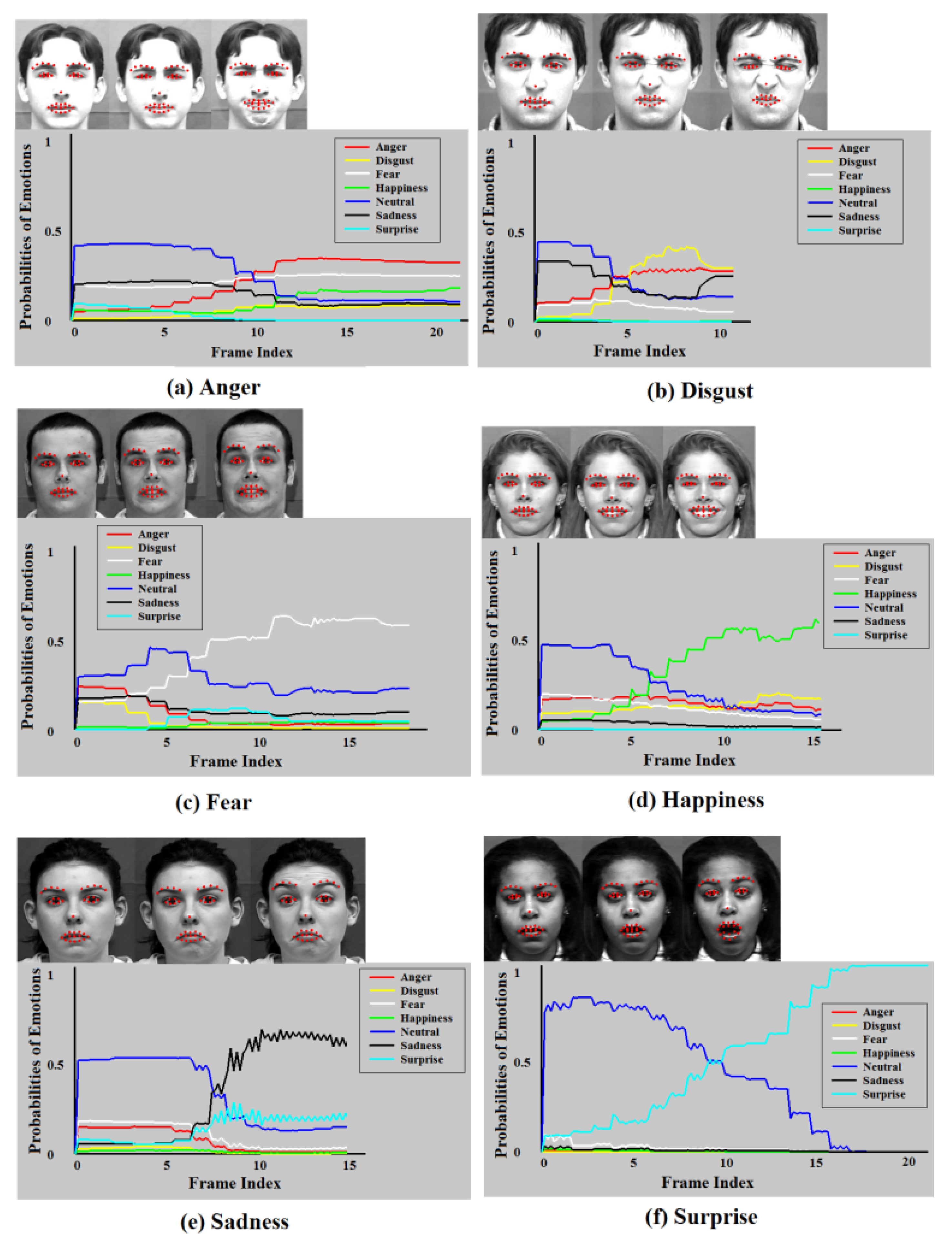

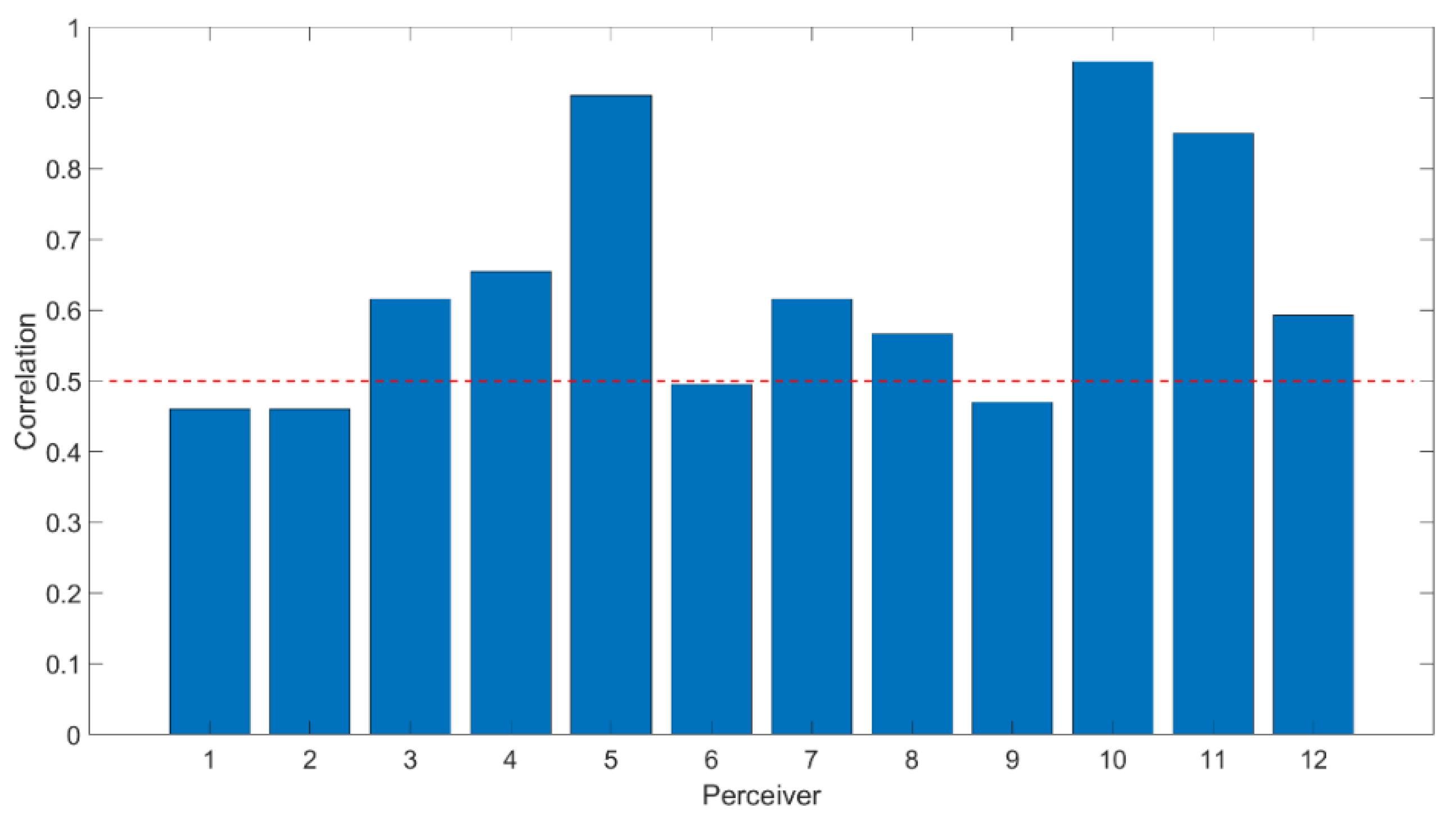

Using the video stream to measure facial reactions to different stimuli offers prompt, objective, and accurate recognition performance in continuous time. The regional facial features are highlighted since they convey significant information relevant to expressions. It is natural to classify the states of each facial region rather than considering the holistic features of the entire face for recognition [72]. The experimental results support our hypothesis by showing a high correlation between recognized facial affective expressions and the ground truth for all trials (the given labels on image stimuli and participants’ self-assessment on video stimuli).

The study described here provides important, albeit preliminary, information about wearable and ultra-portable neuroimaging sensors. It is important to highlight the fact that EPOC EEG electrodes are sensitive to external interference and non-brain signal sources such as muscle activity. Long, thick hair could prevent electrodes from touching the scalp properly and thus from collecting “clean” brain signals. The challenging nature of measuring EEG signals may have an adverse effect on our analysis of the relationship between facial activity and the affective states reflected in brain signals. Moreover, the fNIRS measures of the PFC hemodynamic response were used based on earlier studies [21]; however, monitoring other brain areas could increase the overall classification accuracy. Finally, some prior work has shown that men and women differ in the neural mechanisms underlying their expression of specific emotions [73]. All subjects involved in this study were male; future work may extend this study and its findings to all sexes.

The video sequences and images used in this study display short-duration content, and all participants stated that they were able to understand all stimuli. However, it is of interest to address in future studies how participants react to stimuli of longer duration. The findings in this study indicate that spontaneous facial affective expressions are interrelated with the measured brain activity. It is likely that facial reactions to longer-duration stimuli might differ in frequency. The audience’s physiological responses to two-hour-long movies were measured in [74] and revealed significant variations in affective states throughout the media. An extension of this work might benefit applications that require feedback on longer-duration content, such as online education and entertainment. Also, the accuracy score per subject must be interpreted with caution. In a two-class problem with ten testing trials per class, chosen to fit the experimental constraints, classification performance should be higher than 70% to be statistically significant (p < 0.05) [75,76]. Considering both image-content and video-content stimuli, the average performance of the classifier with EEG + fNIRS exceeded this limit. Further improvements in preprocessing methods and/or machine learning methodologies could improve and optimize the classifier performance.
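The significance limit quoted above can be illustrated with a short calculation. The sketch below assumes a one-sided binomial test against a 50% chance level with 20 test trials in total (two classes, ten trials each). The cited references may use a different procedure, and the function names `binomial_tail` and `min_significant_correct` are hypothetical.

```python
from math import comb


def binomial_tail(n_trials: int, n_correct: int, chance: float = 0.5) -> float:
    """P(X >= n_correct) when each of n_trials is correct with probability `chance`."""
    return sum(
        comb(n_trials, k) * chance**k * (1 - chance) ** (n_trials - k)
        for k in range(n_correct, n_trials + 1)
    )


def min_significant_correct(n_trials: int, chance: float = 0.5, alpha: float = 0.05):
    """Smallest number of correct trials whose one-sided p-value falls below alpha."""
    for k in range(n_trials + 1):
        if binomial_tail(n_trials, k, chance) < alpha:
            return k
    return None  # no achievable count is significant at this alpha


n = 20  # assumption: 2 classes x 10 test trials per class
k = min_significant_correct(n)
print(k, k / n)                         # 15 0.75
print(f"{binomial_tail(n, 14):.4f}")    # 0.0577 (14/20 = 70% is not significant)
print(f"{binomial_tail(n, 15):.4f}")    # 0.0207 (15/20 = 75% is significant)
```

Under these assumptions, 14 correct trials out of 20 (exactly 70%) does not reach p < 0.05, while 15 out of 20 does, which is consistent with the statement that performance must be higher than 70%.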