Objective Assessment of Attention-Deficit Hyperactivity Disorder (ADHD) Using an Infinite Runner-Based Computer Game: A Pilot Study

Abstract

1. Introduction

2. Bibliographic Review

3. Materials and Methods

3.1. Participants

3.2. Running Raccoon Game

3.3. Inattention SWAN Rating Subscale

3.4. Statistical Analysis

3.5. Ethics Procedures

4. Results

5. Discussion and Conclusions

Author Contributions

Funding

Conflicts of Interest

References

| Block | Trunk | Gap | Speed | IS Time | Block | Trunk | Gap | Speed | IS Time |
|---|---|---|---|---|---|---|---|---|---|
| B1 | 8 | 2 | 5 | 1.6 | B10 | 11 | 3 | 7 | 1.6 |
| B2 | 13 | 2 | 5 | 2.6 | B11 | 18 | 3 | 7 | 2.6 |
| B3 | 18 | 2 | 5 | 3.6 | B12 | 25 | 3 | 7 | 3.6 |
| B4 | 7 | 3 | 5 | 1.4 | B13 | 9.5 | 4.5 | 7 | 1.4 |
| B5 | 12 | 3 | 5 | 2.4 | B14 | 16.5 | 4.5 | 7 | 2.4 |
| B6 | 17 | 3 | 5 | 3.4 | B15 | 23.5 | 4.5 | 7 | 3.4 |
| B7 | 6 | 4 | 5 | 1.2 | B16 | 8 | 6 | 7 | 1.2 |
| B8 | 11 | 4 | 5 | 2.2 | B17 | 15 | 6 | 7 | 2.2 |
| B9 | 16 | 4 | 5 | 3.2 | B18 | 22 | 6 | 7 | 3.2 |
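
IS Time and the units of the block parameters are not defined in this section. Reading the numbers, and assuming Trunk and Gap are distances and Speed is distance per second, (Trunk + Gap)/Speed comes out at exactly 2, 3 or 4 s for every block, while Trunk/Speed reproduces IS Time exactly for the speed-5 blocks and to within 0.06 for the speed-7 blocks. The short check below recomputes both quantities from the tabulated values; it is an observation on the table, not a formula stated by the authors, and the helper names are hypothetical.

```python
# Block parameters transcribed from the table above: (block, trunk, gap, speed, is_time).
BLOCKS = [
    ("B1", 8, 2, 5, 1.6), ("B2", 13, 2, 5, 2.6), ("B3", 18, 2, 5, 3.6),
    ("B4", 7, 3, 5, 1.4), ("B5", 12, 3, 5, 2.4), ("B6", 17, 3, 5, 3.4),
    ("B7", 6, 4, 5, 1.2), ("B8", 11, 4, 5, 2.2), ("B9", 16, 4, 5, 3.2),
    ("B10", 11, 3, 7, 1.6), ("B11", 18, 3, 7, 2.6), ("B12", 25, 3, 7, 3.6),
    ("B13", 9.5, 4.5, 7, 1.4), ("B14", 16.5, 4.5, 7, 2.4), ("B15", 23.5, 4.5, 7, 3.4),
    ("B16", 8, 6, 7, 1.2), ("B17", 15, 6, 7, 2.2), ("B18", 22, 6, 7, 3.2),
]


def cycle_time(trunk, gap, speed):
    """Hypothetical helper: time to cover one trunk plus one gap at constant speed."""
    return (trunk + gap) / speed


def trunk_time(trunk, speed):
    """Hypothetical helper: time spent running along one trunk at constant speed."""
    return trunk / speed


if __name__ == "__main__":
    for name, trunk, gap, speed, is_time in BLOCKS:
        print(f"{name}: (trunk+gap)/speed={cycle_time(trunk, gap, speed):.2f}  "
              f"trunk/speed={trunk_time(trunk, speed):.2f}  IS Time={is_time}")
```

The speed-7 blocks scale the speed-5 gaps by 1.5 (2, 3, 4 become 3, 4.5, 6), which keeps the cycle times identical across the two speeds while shifting Trunk/Speed slightly below the listed IS Time.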

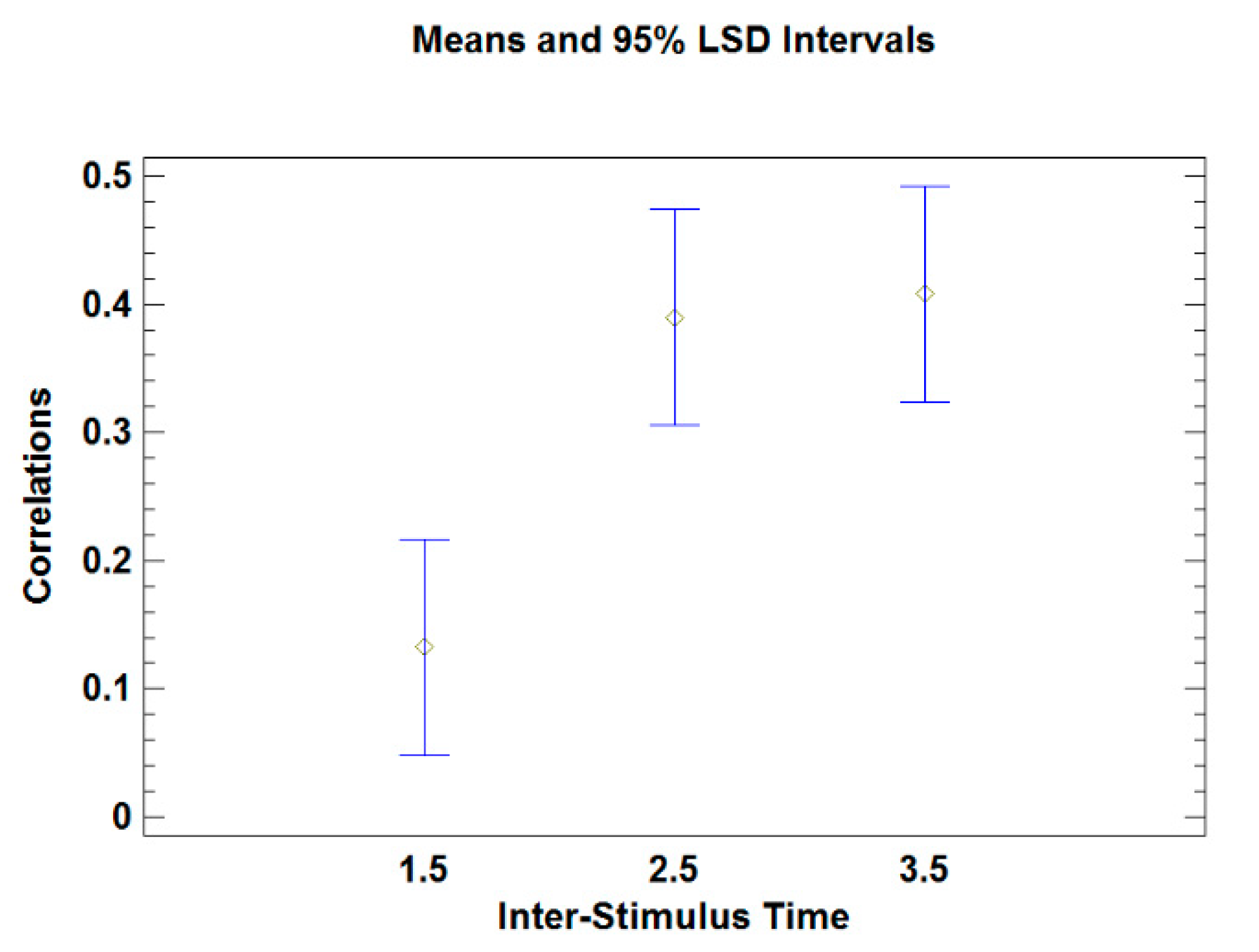

| | All | 1.5 s | 2.5 s | 3.5 s |
|---|---|---|---|---|
| Median | 0.48 (<0.01) | 0.23 (0.21) | 0.55 (<0.01) | 0.52 (<0.01) |
| IQR | 0.43 (0.01) | 0.11 (0.56) | 0.40 (0.02) | 0.41 (0.02) |
| Omissions | −0.39 (0.03) | −0.21 (0.25) | −0.49 (<0.01) | −0.41 (0.02) |
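
Each cell in the table above reports a coefficient followed by a p value in parentheses; this section does not say which coefficient or test was used, so the sketch below only reads the cells as printed. It parses the "coef (p)" strings, including the Unicode minus sign and "<" bounds, and lists the entries that fall below a chosen significance level. The names `parse_cell` and `significant` are hypothetical.

```python
import re

# Cells transcribed from the table above (columns: All, 1.5 s, 2.5 s, 3.5 s).
TABLE = {
    "Median":    ["0.48 (<0.01)", "0.23 (0.21)", "0.55 (<0.01)", "0.52 (<0.01)"],
    "IQR":       ["0.43 (0.01)", "0.11 (0.56)", "0.40 (0.02)", "0.41 (0.02)"],
    "Omissions": ["−0.39 (0.03)", "−0.21 (0.25)", "−0.49 (<0.01)", "−0.41 (0.02)"],
}
COLUMNS = ["All", "1.5 s", "2.5 s", "3.5 s"]

# Coefficient (ASCII '-' or U+2212 minus), then "(p)" or "(<p)".
CELL = re.compile(r"^\s*([−-]?\d+(?:\.\d+)?)\s*\(\s*(<?)\s*(\d+(?:\.\d+)?)\s*\)\s*$")


def parse_cell(cell):
    """Return (coefficient, p_value, is_upper_bound) from a 'coef (p)' string."""
    m = CELL.match(cell)
    if m is None:
        raise ValueError(f"unrecognised cell: {cell!r}")
    coef = float(m.group(1).replace("−", "-"))  # normalise the Unicode minus sign
    return coef, float(m.group(3)), m.group(2) == "<"


def significant(alpha=0.05):
    """List (row, column) pairs whose reported p value lies below alpha."""
    hits = []
    for row, cells in TABLE.items():
        for col, cell in zip(COLUMNS, cells):
            _, p, is_bound = parse_cell(cell)
            # "<p" means the true p value is below the bound, so p <= alpha suffices.
            if p < alpha or (is_bound and p <= alpha):
                hits.append((row, col))
    return hits


if __name__ == "__main__":
    print(significant())
```

At α = 0.05 every entry qualifies except the three in the 1.5 s column.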

| Variables Included in the Model | Median | IQR | Omissions |
|---|---|---|---|
| Median | 2.98 (0.005) | - | - |
| IQR | - | 2.59 (0.014) | - |
| Omissions | - | - | −2.54 (0.016) |
| Median+IQR | 1.32 (0.196) | 0.18 (0.855) | - |
| Median+Omissions | 1.86 (0.073) | - | −0.73 (0.468) |
| IQR+Omissions | - | 1.79 (0.083) | −1.43 (0.161) |
| Median+IQR+Omissions | 0.62 (0.524) | 0.42 (0.678) | −0.81 (0.42) |
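
The table above lists, for each combination of game variables entered together, a statistic per variable with a p value in parentheses. Each variable reaches p < 0.05 on its own, but none does once two or more are combined, which is consistent with the variables sharing much of the same information. This section does not specify the model. Assuming ordinary least squares with a continuous outcome, where the tabulated values would be coefficient t statistics, the sketch below shows how such a table can be produced with NumPy. The data are synthetic, the variable names are reused only as labels, and the helpers `ols_t_stats` and `subset_table` are hypothetical.

```python
from itertools import combinations

import numpy as np


def ols_t_stats(X, y):
    """OLS with an intercept; return the t statistics of the slope coefficients."""
    n, k = X.shape
    A = np.column_stack([np.ones(n), X])          # design matrix with intercept column
    beta, *_ = np.linalg.lstsq(A, y, rcond=None)  # least-squares coefficients
    resid = y - A @ beta
    sigma2 = resid @ resid / (n - k - 1)          # unbiased residual variance
    cov = sigma2 * np.linalg.inv(A.T @ A)         # coefficient covariance matrix
    se = np.sqrt(np.diag(cov))
    return beta[1:] / se[1:]                      # drop the intercept


def subset_table(X, y, names):
    """t statistics for every non-empty subset of predictors, keyed like 'A+B'."""
    out = {}
    for r in range(1, len(names) + 1):
        for idx in combinations(range(len(names)), r):
            t = ols_t_stats(X[:, list(idx)], y)
            out["+".join(names[i] for i in idx)] = dict(zip((names[i] for i in idx), t))
    return out


if __name__ == "__main__":
    # Synthetic, deliberately correlated predictors (NOT the study's data).
    rng = np.random.default_rng(0)
    n = 35
    latent = rng.normal(size=n)
    X = np.column_stack([latent + 0.5 * rng.normal(size=n) for _ in range(3)])
    y = latent + rng.normal(size=n)
    for model, stats in subset_table(X, y, ["Median", "IQR", "Omissions"]).items():
        print(model, {k: round(float(v), 2) for k, v in stats.items()})
```

With a single predictor the slope t statistic equals r·√(n − 2)/√(1 − r²), where r is the sample Pearson correlation, which is one way to sanity-check the helper.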

© 2020 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).

Delgado-Gómez, D.; Sújar, A.; Ardoy-Cuadros, J.; Bejarano-Gómez, A.; Aguado, D.; Miguelez-Fernandez, C.; Blasco-Fontecilla, H.; Peñuelas-Calvo, I. Objective Assessment of Attention-Deficit Hyperactivity Disorder (ADHD) Using an Infinite Runner-Based Computer Game: A Pilot Study. Brain Sci. 2020, 10, 716. https://doi.org/10.3390/brainsci10100716
