1. Introduction

In the machine learning literature, one of the most important challenges involves estimating parameters accurately and selecting relevant variables from highly correlated, high-dimensional data. Researchers have noticed many highly correlated features in high-dimensional data [

1]. Models often overfit or underfit high-dimensional data because they have a large number of variables but only a few of them are actually relevant; most others are irrelevant or redundant. An underfitting model contributes to estimation bias (i.e., high bias and low variance) in the model fitting because it keeps out relevant variables, whereas an overfitting raises estimation error (i.e., low bias and high variance) since it includes irrelevant variables in the model.

To illustrate an application of our proposed method, consider a study of gene expression data. This is a high-dimensional dataset and contains highly correlated genes. The geneticist always likes to determine which variants/genes contribute to changes in biological phenomena (e.g., increases in blood cholesterol level, etc.) [

2]. Therefore, the aim is to explicitly identify all relevant variants. The penalized regularization models such as

,

, and so forth have recently become a topic of great interest within machine learning, statistics [

1], and optimization [

3] communities as classic approaches to estimate parameters. The

-based method is not a preferred selection method for groups of variables among which pairwise correlations are significant because the lasso arbitrarily selects a single variable from the group without any consideration of which one to select [

4]. Furthermore, if the selected value of a parameter is too small, the

-based method would select many irrelevant variables, thus degrading its performance. On the other hand, a large value of the parameter would yield a large bias [

5]. Another point worth noting is that few

regularization methods are either adaptive, computationally tractable, or distributed, but no such method contains all three properties together. Therefore, the aim of this study is to develop a model for parameter estimation and to determine relevant variables in highly correlated, high-dimensional data based on the ordered

. This model has the following three properties all together: adaptive (Our method is adaptive in the sense that it reduces the cost of including new relevant variables as more variables are added to the model due to rank-based penalization properties.), tractable (A computationally intractable method is a computer algorithm that takes a very long time to execute a mathematical solution. A computationally tractable method is exactly the opposite of intractable method.), and distributed.

Several adaptive and nonadaptive methods have been proposed for parameter estimation and variable selection in large-scale datasets. Different principles are adopted in these procedures to estimate parameters. For example, an adaptive solution (i.e., an ordered

) [

5] is a norm and, therefore, convex. Regularization parameters are sorted in non-increasing order in the ordered

, in which the ordered regularization penalizes regression coefficients according to their order, with higher orders closer to the top and having larger penalties. Pan et al. [

6] proposed a partial sorted

norm, which is non-convex and non-smooth. In contrast, the ordered

regularization is convex and smooth, just as the standard

norm is convex and smooth in Reference [

7]. Pan et al. [

6] considered

p-values between

that do not cover

,

norms, and so forth. Pan et al. [

6] also did not provide details of other partially sorted norms when

and used random projection and the partial sorted

norm to complete the parameter estimation, whereas we have used ADMM with the ordered

. A nonadaptive solution (i.e., an elastic net) [

8] is a mixture of both ordinary

and

. In particular, it is a useful model when the number of predictors (p) is much larger than the number of observations (n) or in any situation where the predictor variables are correlated.

Table 1 presents the important properties of the regularizers. As seen in

Table 1,

and the ordered

regularizers are suitable methods for highly correlated, high-dimensional grouping data rather than

and the ordered

regularizers. The ordered

encourages grouping, whereas most of

-based methods promote sparsity. Here, grouping signifies a group of strongly correlated variables in high-dimensional data. We used the ordered

regularization in our method instead of

regularization because the ordered

regularization is adaptive. Finally, ADMM has a parallel behavior for solving large-scale convex optimization problems. Our model also employs ADMM and inherits distributed properties of native ADMM [

9]. Hence, our model is also distributed. Bogdan et al. [

5] did not provide any details about how they applied ADMM in the ordered

regularization.

In this paper, we propose “Parameter Estimation with the Ordered

Regularization via ADMM” called ADMM-O

to find relevant parameters from a model.

is a ridge regression; similarly, the ordered

becomes an ordered ridge regression. The main contribution of this paper is not to present a superior method but rather to introduce a quasi-version of the

regularization method and to concurrently raise awareness of the existing methods. As part of this research, we introduced a modern ordered

regularization method and proved that the square root of the ordered

is a norm and, thus, convex. Therefore, it is also tractable. In addition, the regularization method used an ordered elastic net method to combine the widely used ordered

penalty with modern ordered

penalty for ridge regression. The ordered elastic net is also proposed by the scholars in this paper. To the best of our knowledge, this is one of the first method to use the ordered

regularization with ADMM for parameter estimation and variable selection. In

Section 3 and

Section 4, we explain the integration of ADMM with the ordered

and further details about it.

The rest of the paper is arranged as follows. Related works are discussed in

Section 2, along with a presentation of the ordered

regularization in

Section 3.

Section 4 describes the application of ADMM to the ordered

.

Section 5 presents the experiments conducted. Finally,

Section 6 closes the paper with a conclusion.

4. Applying ADMM to the Ordered Ridge Regression

In order to apply ADMM to the problem in Equation (

5), we first transform it into an equivalent form of the problem in Equation (

1) by introducing an auxiliary variable

z.

We can see that Equation (

6) has two blocks of variables (i.e.,

x and

z). Its objective function is separable in the form of Equation (

1) since

and

, where

and

. Therefore, ADMM is applicable to Equation (

6). An augmented Lagrangian form of Equation (

6) can be defined as follows:

where

is a Lagrangian multiplier and

denotes a penalty parameter. Next, we apply ADMM to the augmented Lagrangian equation of Equation (

7) (Reference [

9], Section 3.1), which renders ADMM iterations as follows:

Proximal gradient methods are well known for solving convex optimization problems for which the objective function is the sum of a smooth loss function and a non-smooth penalty function [

9,

29,

30]. A well-studied example is

regularized least squares [

1,

5]. It should be noted that an ordered

norm is convex but not smooth. Therefore, these researchers used a proximal gradient method. In contrast, we have employed an ADMM method because ADMM can solve convex optimization problems for which the objective function is either the sum of a smooth loss function and a non-smooth penalty function or both loss and penalty function are smooth and ADMM also supports parallelism. In the ordered ridge regression, both loss and penalty function are smooth, whereas, in the ordered elastic net, loss function is smooth and penalty function is non-smooth.

4.1. Scaled Form

We can also define ADMM in scaled form by merging a linear and a quadratic term in augmented Lagrangian and then a scaled dual variable, which is shorter and more appropriate. The scaled dual form of ADMM iterations in Equation (

8) can be expressed as follows:

where

and

u is the scaled dual variable. Next, we can minimize the augmented Lagrangian in Equation (

7) with respect to

x and

z, successively. Minimizing Equation (

7) with respect to

x becomes the

x subproblem of Equation (9a), and it can be expressed as follows:

After computing a derivative of Equation (10a) with respect to

x, then the setting of the derivative of

x becomes equal to zero. Notice that this is a convex problem; therefore, it minimizes to solve the following linear system of Equation (10b):

Minimizing problem Equation (

7) w.r.t.

z, we obtain Equation (9b), and it results in the following

z subproblem:

After computing a derivative of Equation (11a) with respect to

z, then the setting of the derivative of

z becomes equal to zero. Notice that this is a convex problem; therefore, it minimizes to solve the following linear system of Equation (11b):

Finally, the multiplier (i.e., the scaled dual variable

u) is updated in the following way:

Optimality conditions: Primal and dual feasibility are essential and adequate optimality conditions for ADMM in Equation (

6) [

9]. Dual residual (

) and primal residual (

) can be defined as follows:

Stopping criteria: The stopping criterion for an ordered ridge regression is that primal and dual residuals must be small:

We set

and

. For further details about this choice, see Reference [

9], Section 3).

4.2. Over-Relaxed ADMM Algorithm

By comparing Equations (

1) and (

6), we can write Equation (

6) in the over-relaxation form as follows:

Substituting

with Equation (

13) into

z of Equation (11b) and

u of Equation (

12) updates the results in a relaxation form. Algorithm 1 presents an ADMM iteration for the ordered ridge regression of Equation (

6).

| Algorithm 1: Over-relaxed ADMM for the ordered ridge regression |

- 1:

Initialize , , , - 2:

while () do - 3:

- 4:

; - 5:

- 6:

- 7:

end while

|

We observed that ADMM Algorithm 1 computes an exact solution for each subproblem and that their convergence is guaranteed by existing ADMM theory [

24,

25,

31]. The most important and computationally intensive operation here is matrix inversion in line 3 of Algorithm 1. Here, matrix A is high-dimensional (

) and

takes

and its inverse (i.e.,

) takes

. We compute

and

outside loop; then, we are left with (inverse *

), which is

, while addition and subtraction take

.

is also cacheable, so the complexity is just

+ k *

heuristically with k number of iteration.

Generating the ordered parameter: As mentioned in the beginning, we set out to identify a computationally tractable and adaptive solution. The regularizing sequences play a vital role in achieving this goal. Therefore, we generated adaptive values of

such that regressor coefficients are penalized according to their respective order. Our regularizing sequence procedure is motivated by the BHq procedure [

27]. The BHq method generates

sequences as follows:

where

,

is

th quantile of a standard normal distribution, and

q is a parameter, namely

. We started with

as an initial value of the ordered parameter

.

Algorithm 2 presents a method for generating sorted

. The difference between lines 5 and 6 in Algorithm 2 is that line 5 is for low-dimensional (

) data and that line 6 is for high-dimensional data (

). Finally, we used the ordered

from Algorithm 2 (i.e., the adaptive value of

) in the ordered ridge regression of Equations (

6) and (

7) instead of ordinary

. This makes the ordered

adaptive and different from standard

.

| Algorithm 2: Sorted Lambda |

- 1:

Initialize - 2:

; - 3:

fordo - 4:

- 5:

; - 6:

; - 7:

end for

|

4.3. The Ordered Elastic Net

A standard

(or an ordered

) regularization is a commonly used tool to estimate parameters for microarray datasets (strongly correlated grouping). However, a key drawback of the

regularization is that it cannot automatically select relevant variables because the

regularization shrinks coefficient estimates closer but not exactly equal to zero (Reference [

12], Chapter 6.2). On the other hand, a standard

(or an ordered

) regularization can automatically determine relevant variables due to its sparsity property. However, the

regularization also has a limitation. Especially when different variables are highly correlated, the

regularization tends to pick only a few of them and to remove the remaining ones—even important ones that might be better predictors. To overcome the limitations of both

and

regularization, we proposed another method called an ordered elastic net (the ordered

regularization or ADMM-O

or -O

), similar to a standard elastic net [

8], by combining the ordered

regularization with the ordered

regularization and the elastic net. By doing so, the ordered

regularization automatically selects relevant variables in a way similar to the ordered

regularization. In addition, it can select groups of strongly correlated variables. The key difference between the ordered elastic net and the standard elastic net is a regularization term. We apply the ordered

and

regularization in the ordered elastic net instead of the standard

and

regularization. This approach means that the ordered elastic net inherits the sparsity, grouping, and adaptive properties of the ordered

and

regularization. We have also employed ADMM to solve the ordered

regularized loss minimization as follows:

For simplicity, let

and

. The ordered elastic net becomes

Now, we can transform the above ordered elastic net equation into an equivalent form of Equation (

1) by introducing an auxiliary variable

z.

We can minimize Equation (

16) w.r.t.

x and

z in the same way as we minimized the ordered

regularization in

Section 4 and

Section 4.1 and

Section 4.2. Therefore, we directly present the final results below without any details. The + sign means to select max (0,value).

5. Experiments

A series of experiments were conducted on both simulated and real data to examine the performance of a proposed method. In this section, first, a concept to select a correct sequence of s is discussed. Second, an experiment on synthetic data is presented that describes the convergence of the lasso, SortedL1, ADMM-O, and ADMM-O. Finally, the proposed method is applied to a real feature selection dataset. The performance of the ADMM-O method is analyzed in comparison with state-of-the-art methods: the lasso and SortedL1. These two methods are chosen for comparison because they are very similar to the ADMM-O method except they use the regular lasso and the ordered lasso, respectively, while ADMM-O model employs the ordered regularization with ADMM.

Experimental setting: The algorithms were implemented on Scala Spark™ with Scala code in both distributed and non-distributed versions. A distributed version of experiments was carried out in a cluster of virtual machines with four nodes: one master and three slaves. Each node has 10 GB of memory, 8 cores, CentOS release 6.2, and amd64: core-4.0-noarch. Apache spark™ 1.5.1 was deployed on it. The scholars also used IntelliJ IDEA 15 ULTIMATE as a Scala editor, interactive build tool sbt version 0.13.8, and Scala version 2.10.4. The standalone machine is a Lenovo desktop running Windows 7 Ultimate with an Intel™ Core™ i

3 Duo 3.20 GHz CPU and 4 GB of memory. The scholars used MATLAB™ version 8.2.0.701 running on a single machine to draw all figures. The source codes of the lasso, SortedL1, and ADMM-O

are available at References [

32,

33,

34], respectively.

5.1. Adjusting the Regularizing Sequence for the Ordered Ridge Regression

Figure 1 was drawn using Algorithm (2), where

p = 5000. As seen in

Figure 1, when the value of a parameter (

) becomes larger, the sequence

decreases, while

increases for a small value of

. However, the goal is to obtain a non-increasing order of sequence

by adjusting the value of

q, which stimulates convergence. Here, adjusting means tuning the value of the parameter

q using the BHq procedure to yield a suitable sequence

such that it improves performance.

5.2. Experimental Results of Synthetic Data

In this section, numerical examples show the convergences of ADMM-O

, ADMM-O

, and other methods. A tiny, dense example of an ordered

regularization is examined, where the feature matrix A has

n = 1500 examples and

p = 5000 features. Synthetic data is generated as follows: create a matrix

A and choose

using

and then normalize columns of the matrix

A to have the unit

norm.

is generated such that each sampled from

is a Gaussian distribution. Label b is calculated as b = A*

+ v, where v ∼

, which is the Gaussian noise. A penalty parameter

= 1.0, an over-relaxed parameter

= 1.0, and termination tolerances

and

are used. Variables

and

are initialized to be zero.

is a non-increasing ordered sequence according to

Section 5.1 and Algorithm 2.

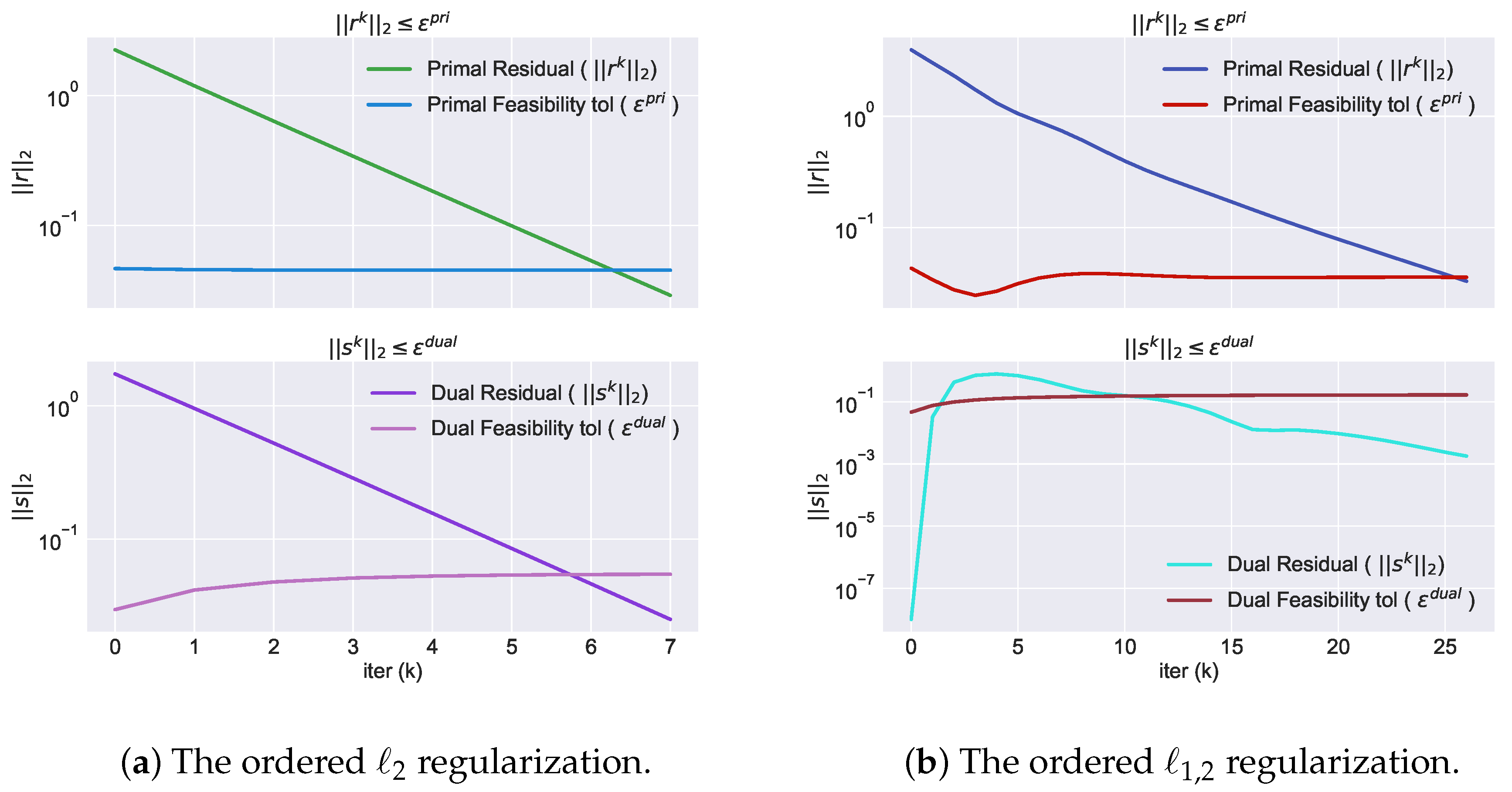

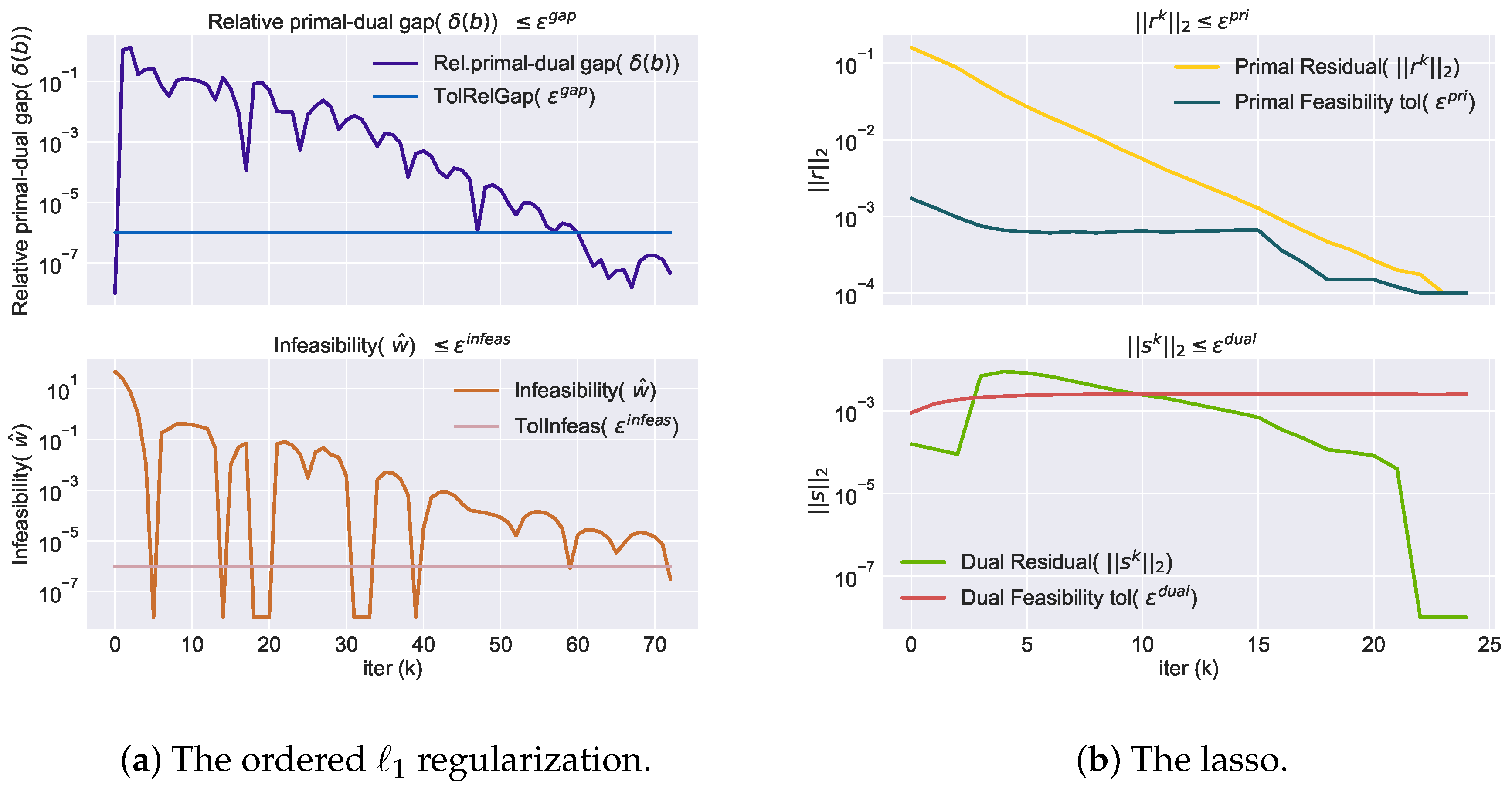

Figure 2a,b indicates the convergence of ADMM-O

and ADMM-O

, respectively.

Figure 3a,b shows the convergence of the ordered

regularization and the lasso, respectively. It can be seen from the

Figure 2 and

Figure 3 that the ordered

regularization converges faster than all algorithms. The ordered

, lasso, ordered

, and ordered

take less than 80, 30, 30, and 10 iterations, respectively to converge. Dual is not guaranteed to be feasible. Therefore, a level of infeasibility of dual is also needed to compute. A numerical experiment terminates whenever both the infeasibility (

) and relative primal-dual gap (

) are less than equal to

(Tolinfeas (

) and TolRelGap (

), respectively) the ordered

regularization harness synthetic data provided by Reference [

5]. The same data is generated for the lasso as for the ordered

regularization except for an initial value of

. For the lasso, set

, where

. The researchers also use 10-fold cross-validation (cv) with the lasso. For further details about this step, see Reference [

9].

5.3. Experimental Results of Real Data

Variable selection difficulty arises when the number of features (p) are greater than the number of instances (n). The proposed method genuinely handles these types of issues. The practical application of the ADMM method is in many domains such as computer vision and graphics [

35], analysis of biological data [

36,

37], and smart electric grids [

38,

39]. A biological leukaemia dataset [

40] was used to demonstrate the performance of the proposed method. Leukemia is a type of cancer that impairs the body’s ability to build healthy blood cells. Leukemia begins in the bone marrow. There are many types of leukemia, such as acute lymphoblastic leukemia, acute myeloid leukemia, and chronic lymphocytic leukemia. The following two types of leukemia are used in this experiment: acute lymphoblastic leukemia and acute myeloid leukemia. The leukemia dataset consists of 7129 genes and 72 samples [

41]. Randomly split the data into training and test sets. In the training set, there are 38 samples, among which 27 are type I ALL (acute lymphoblastic leukemia) and 11 are type II AML (acute myeloid leukemia). The remaining 34 samples allowed us to test the prediction accuracy. The test set contains 20 type I ALL and 14 type II AML. The data were labeled according to the type of leukemia (ALL or AML). Therefore, before applying an ordered elastic net, the type of leukemia (ALL = −1, or AML = 1) is converted as a (−1, 1) response y. Predicted response

is set to 1 if

; otherwise, it is set to −1.

is a non-increasing, ordered sequence generated according to

Section 5.1 and Algorithm 2. For the regular lasso,

is a single scalar value generated using Equation (

14).

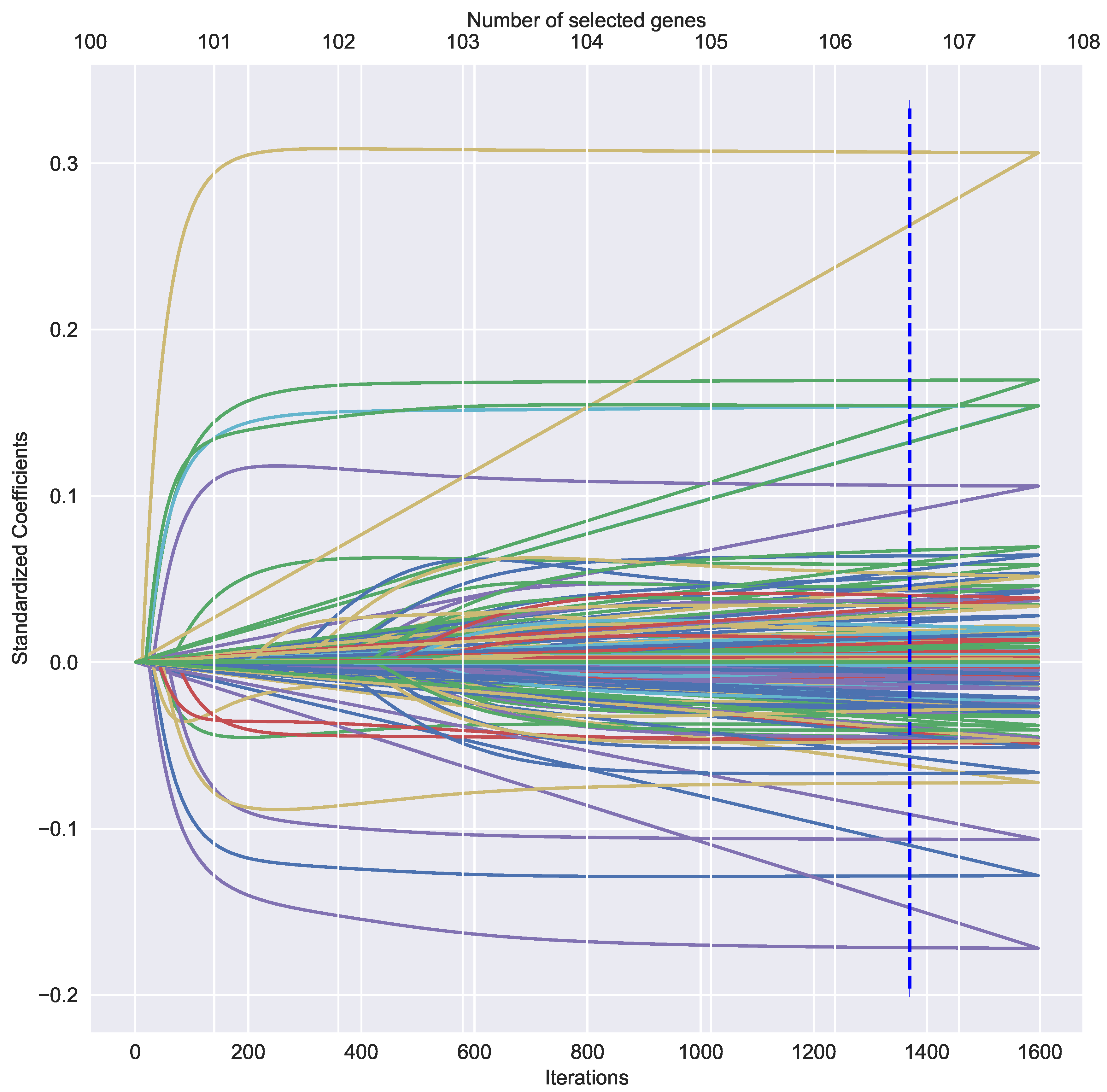

is used for the leukemia dataset. All other settings are the same as experiment with synthetic data.

Table 3 illustrates the experiment results of the leukemia dataset for different types of regularization. The lowest average mean square error (MSE) of regularization is the ordered

, followed by the ordered

and lasso, while the highest average MSE can be seen in the ordered

. Looking at

Table 3 first, it is clear that the ordered

converges the fastest among all the regularizations. The second fastest converging regularization is the ordered

, while the slowest converging regularization is the ordered

. The ordered

takes an average iteration around 190 and an average time around 0.15 s to converge. On the other hand, the ordered

, the ordered

, and lasso take average iterations around 1381, 10,000, and 10,000, respectively, and average times around: 1.0, 14.0, and 5.0 s, respectively, to converge. It can also be seen from the data in

Table 3 that the ordered

selected all the variables but that the goal is to select only the relevant variables from strongly correlated, high-dimensional dataset. Therefore, the ordered elastic net was proposed, which only selects relevant variables and discards irrelevant variables. As can be seen from

Table 3, average MSE, time, and iteration in the ordered

regularization and lasso are significantly more than the ordered

regularization, although an average gene selection in the ordered

regularization is more than that of the ordered

regularization and lasso. The ordered

and lasso select averages around 84 and 7 variables, respectively, whereas the ordered

selects an average around 107 variables. The lasso performs poorly on the leukemia dataset. The reason for this is that strongly correlated variables are present in the leukemia dataset. In general, the ordered elastic net performs better than the ordered

and lasso.

Figure 4 shows the ordered elastic net solution paths and the variable selection results.

6. Conclusions

In this paper, we showed a method for optimizing an ordered problem under an ADMM framework, called ADMM-O. As an implementation of ADMM-O, the ridge regression with the ordered regularization is shown. We also presented a method for variable selection, called ADMM-O which employs the ordered and . We see the ordered as a generalization of the ordered , which is shown as an important tool for model fitting, feature selection, and parameter estimation.

Experimental results show that the ADMM-O method correctly estimates parameter, selects relevant variables, and excludes irrelevant variables for microarray data. Our method is also computationally tractable, adaptive, and distributed. The ordered regularization is convex and can be optimized efficiently with faster convergence rate. Additionally, we have shown that our algorithm has complexity + k * heuristically, where k is the number of iterations. In future work, we plan to apply our method to other regularization models with complex penalties.