3D Facial Expression Recognition for Defining Users’ Inner Requirements—An Emotional Design Case Study

Abstract

1. Introduction

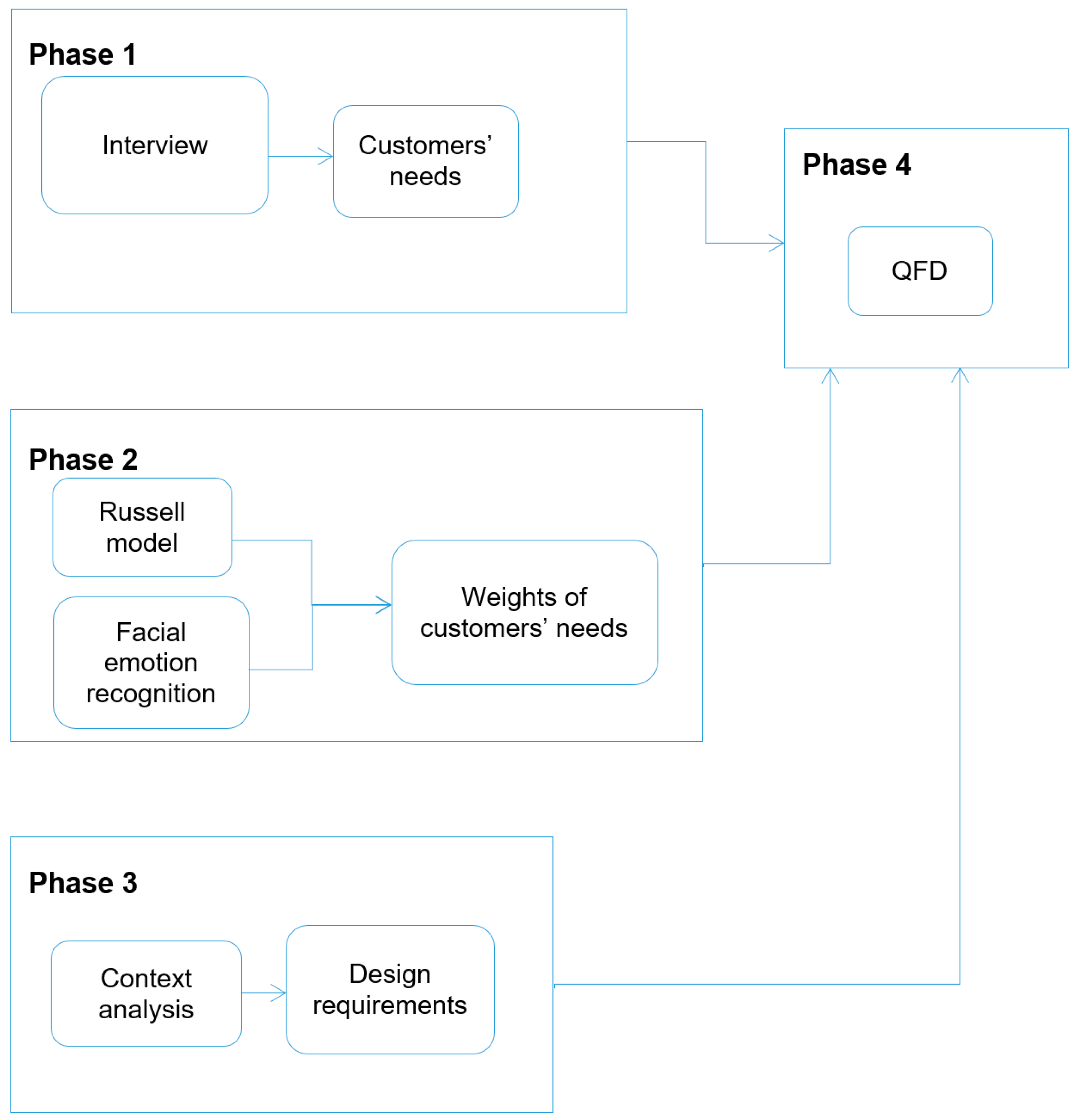

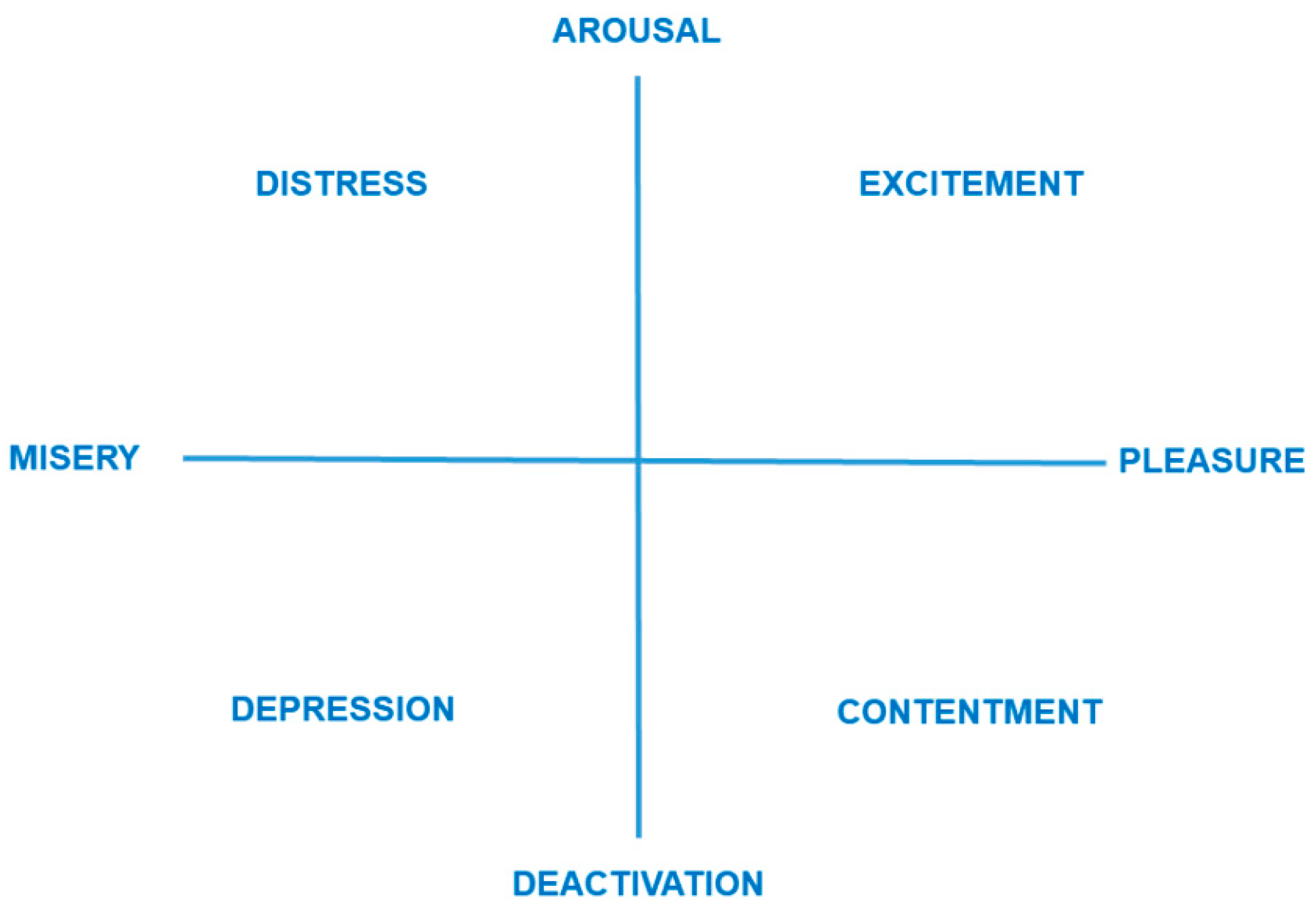

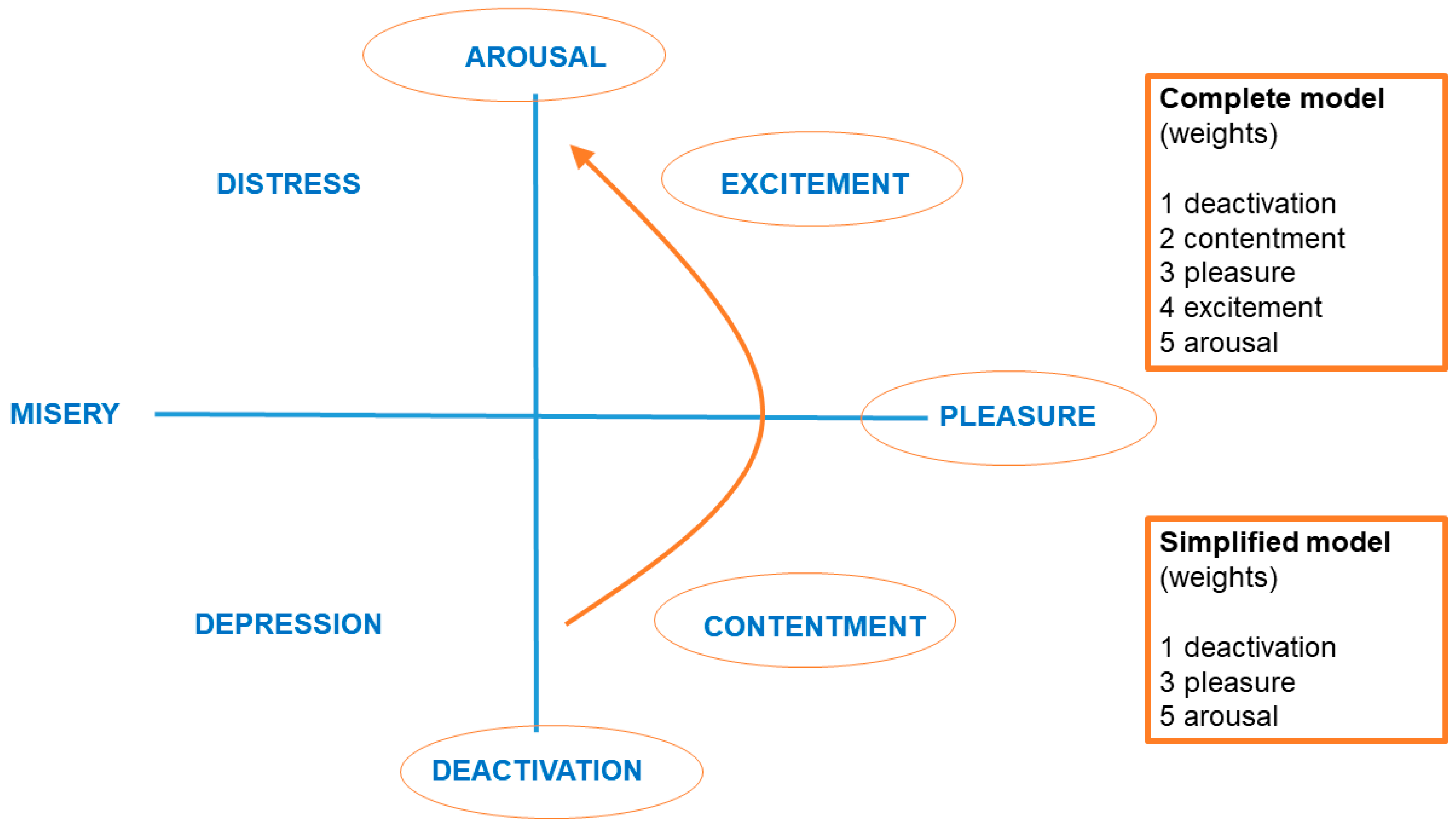

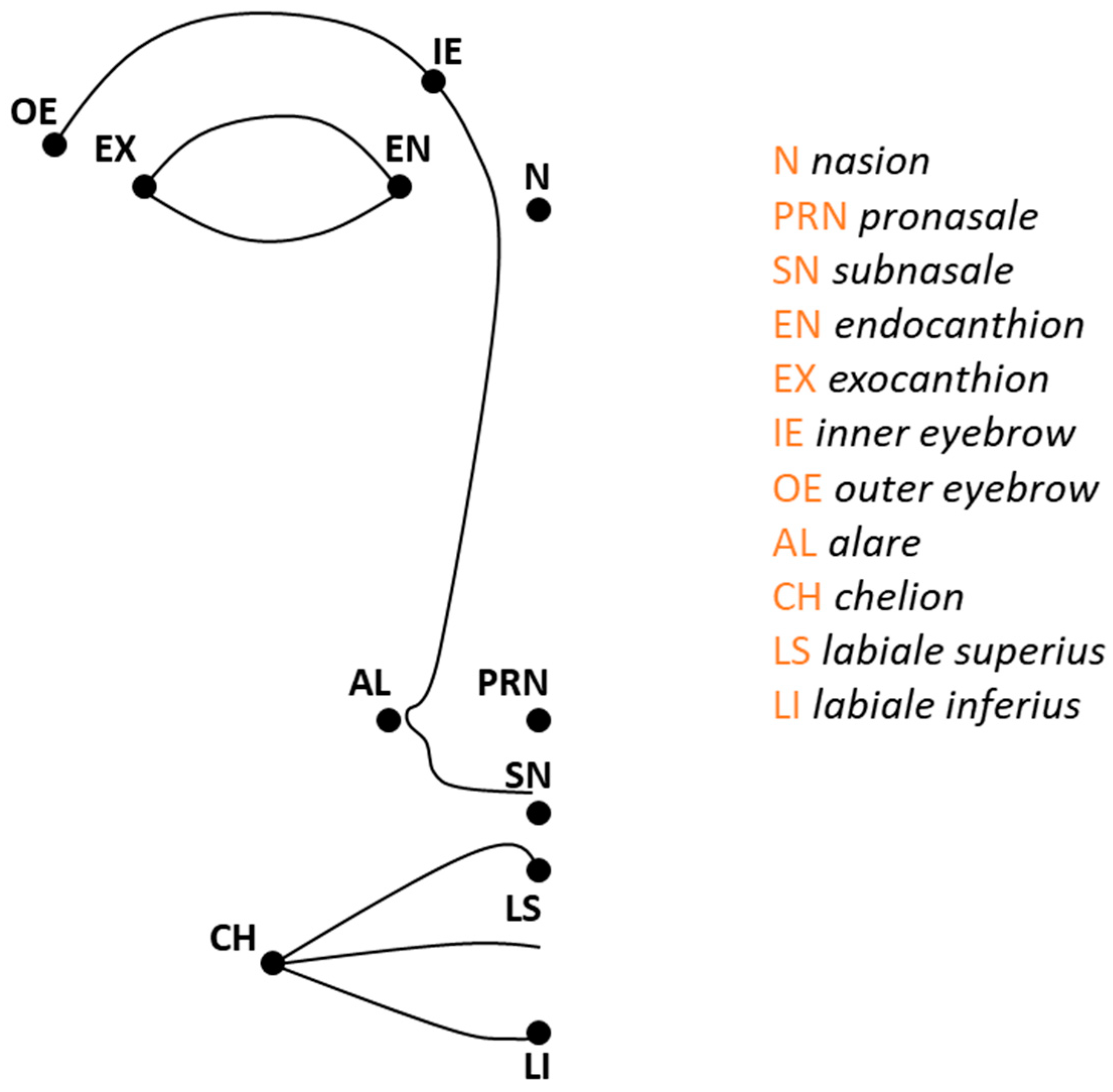

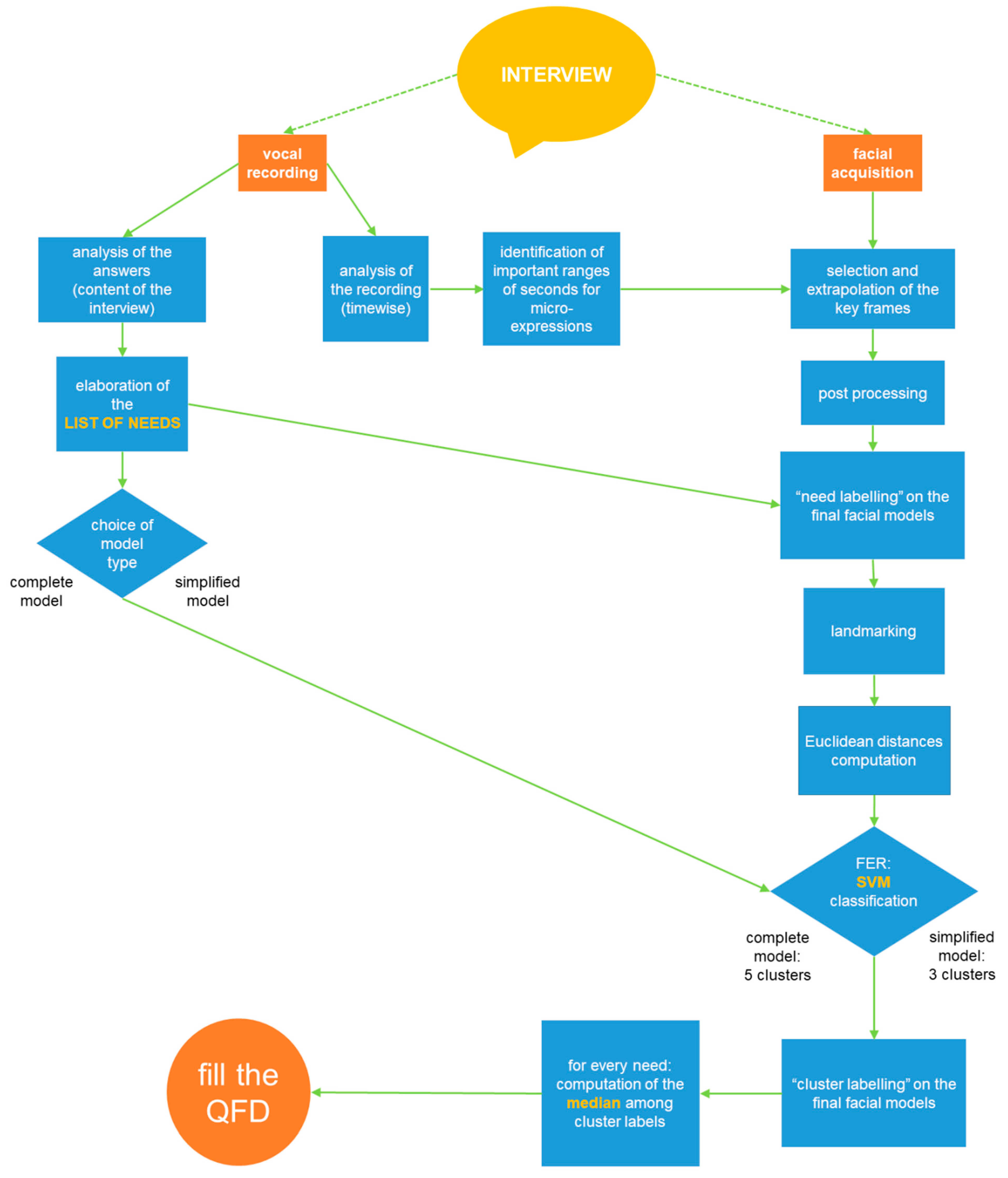

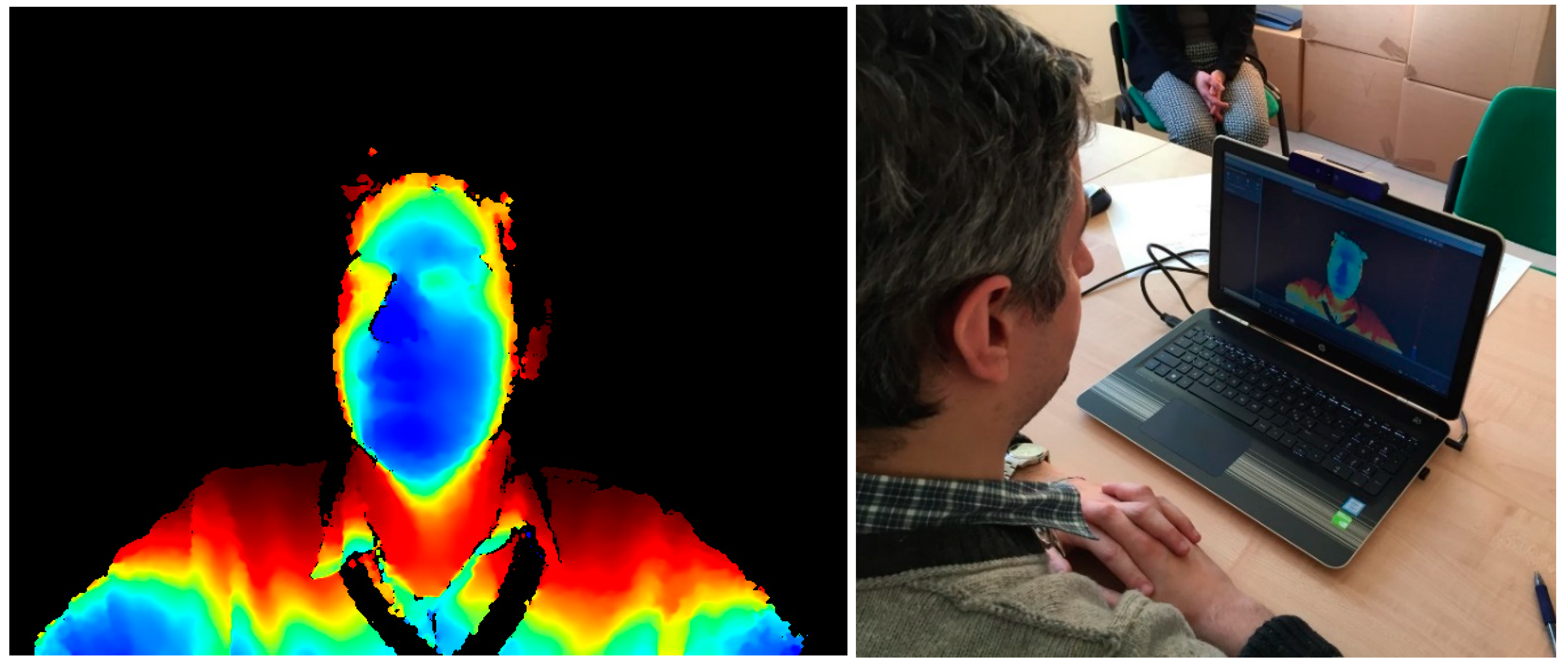

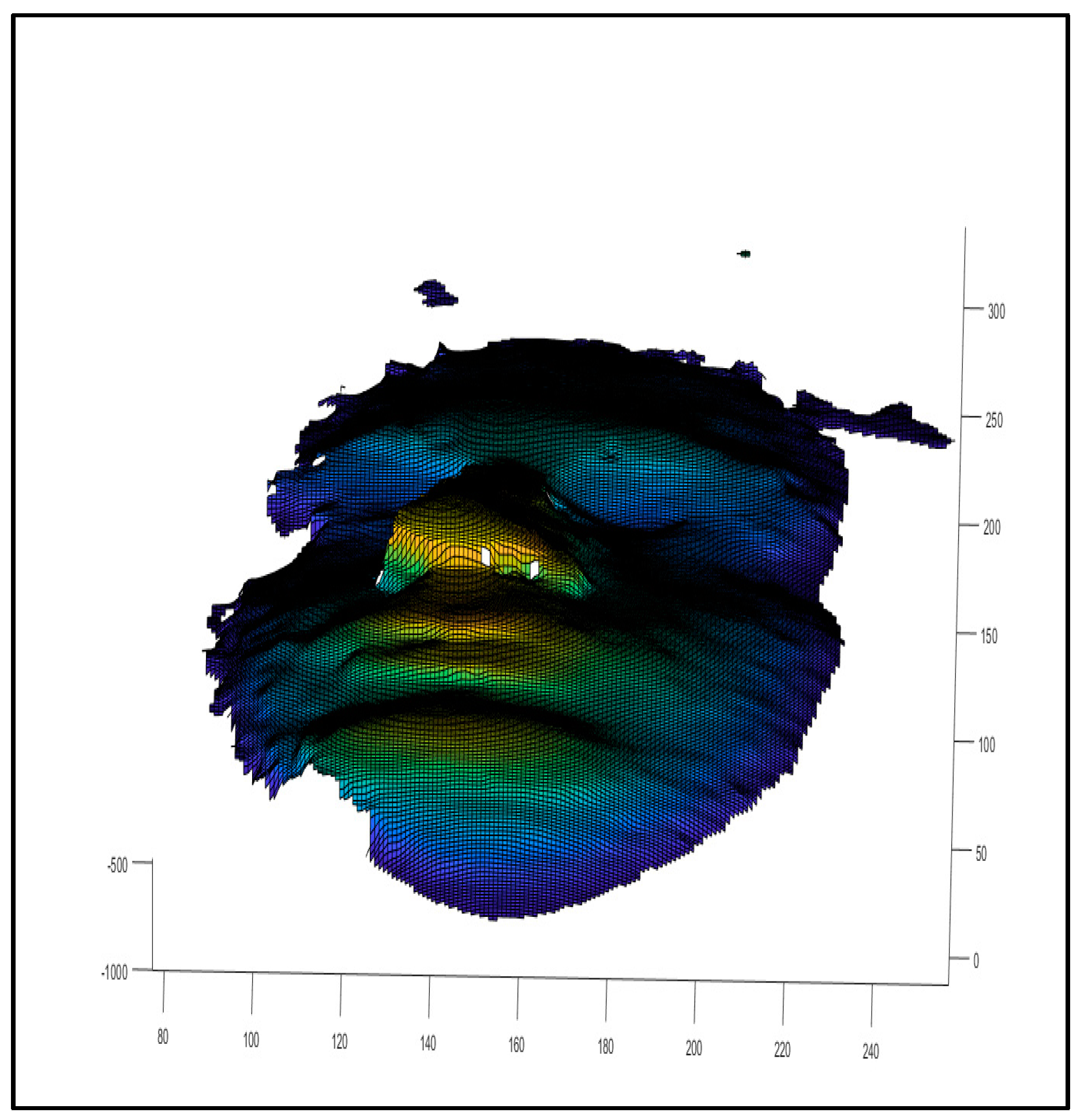

2. Method

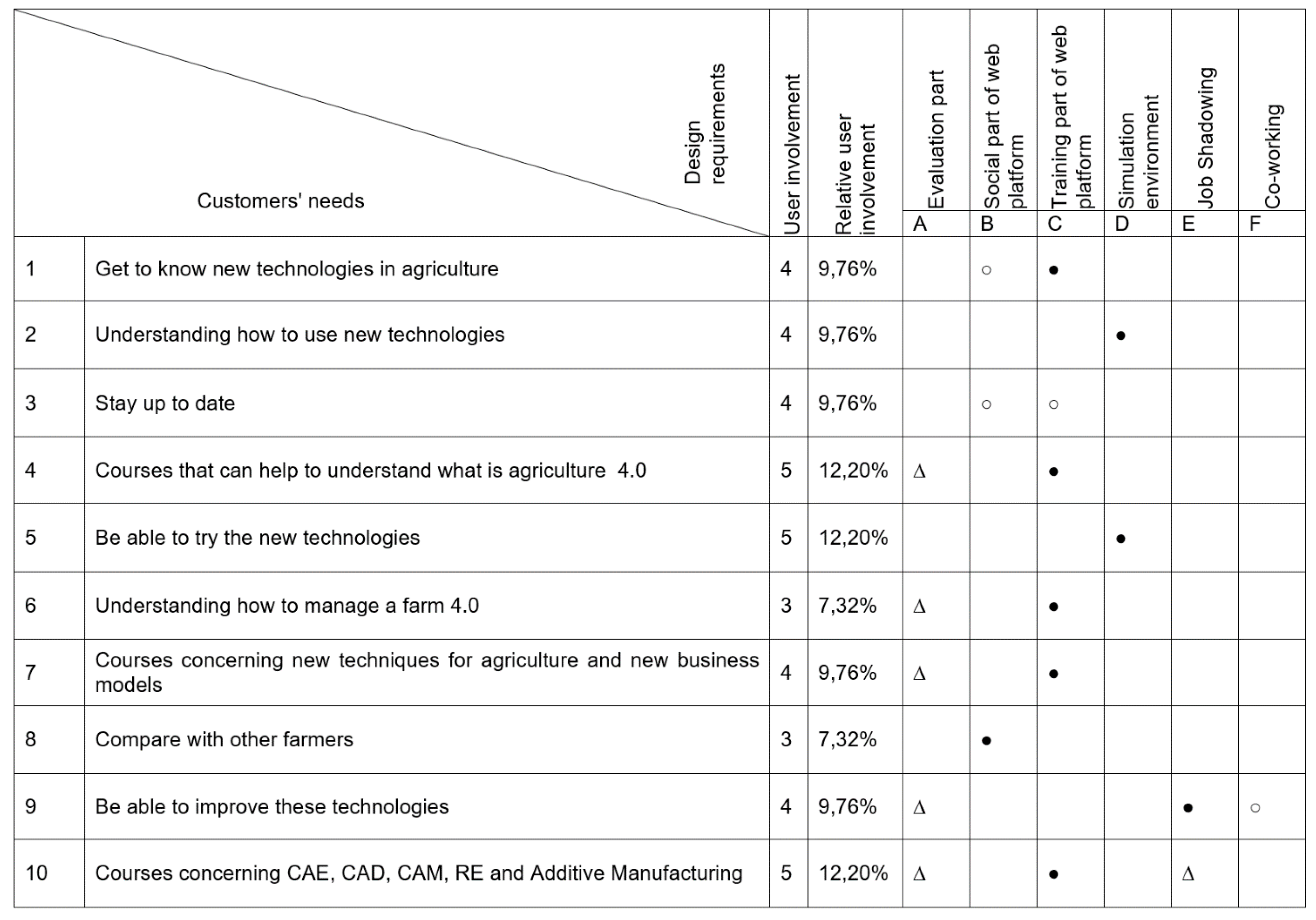

Case Study

3. Discussion

4. Conclusions

Author Contributions

Funding

Conflicts of Interest

Appendix A

Interview for Project Farmer 4.0

- Do you know the different legal forms to give to a company (family, individual, small company, and so on)?

- What is your knowledge about new technologies in the agricultural field?

- What advantages in production do you think are derived from a systematic use of ground and air robots?

- How much can the innovative cultivation processes favour the presence of women in agricultural entrepreneurship?

- The importance of new technologies for agricultural safety?

- What are the advantages for farms that use underground sensors?

- The Internet of Things (IoT) and the chips in animals?

- The IoT to improve the efficiency of farms in terms of monitoring the production and organization of daily activities: the campaign notebook?

- Agriculture 4.0 and the new business models?

- The new Arduino software for remote control via smartphone (lights, sprinkler, music, etc.)?

- How easy is it to become aware of new equipment/technologies mentioned above to facilitate farm work? And those for the transformation of the product?

- And, knowing the new equipment/technologies mentioned above, what are the difficulties in obtaining and using them?

- How easy is it to stay up to date?

- How suitable are new product transformation technologies for small businesses? (Here, it must be kept in mind that a young man who intends to open a micro-farm will have small quantities of product to be processed and, if the machinery is not suitable even for small quantities, it will be difficult to close the supply chain. Moreover, above all at the beginning, he cannot afford to have employees.)

- How useful (on a scale of 1 to 10) are the following competences from the perspective of the Global Farm 4.0?

  - Knowing funding sources for starting, maintaining, and expanding one's own business______________

  - Understanding the business culture of the country of work______________________________

  - Entering local and/or international markets_________________________________________

  - Developing fundamental skills for being a successful entrepreneur_____________________

  - Information and Communications Technology (ICT) knowledge for managing a Farm 4.0____________________________________________

- Are there other competences to take into consideration?

- In the area of “actions”: which of the following skills do you think are to be developed more in a 4.0 perspective? (Select one or more alternatives).

  - taking the initiative________________________________________________________

  - planning and management__________________________________________________

  - coping with ambiguity, uncertainty, and risk____________________

  - working with others____________________

  - learning through experiences_________________________________________________

- In the area of “resources”: which of the following skills do you think are to be developed more in a 4.0 perspective? (Select one or more alternatives).

  - self-awareness and self-efficacy__________________________________________________

  - motivation and perseverance____________________________________________________

  - mobilising resources___________________________________________________________

  - financial and economic literacy___________________________________________________

  - mobilising others_______________________________________________________________

- In the area of “ideas and opportunities”: which of the following skills do you think are to be developed more in a 4.0 perspective? (Select one or more alternatives).

  - ethical and sustainable thinking___________________________________________________

  - valuing ideas__________________________________________________________________

  - vision and creativity____________________________________________________________

  - spotting opportunities__________________________________________________________

- Which social networks do you consider most important? Facebook or Instagram?

- How much and how can social networks give visibility to your company?

- Do you believe that social networks can be used for your business in terms of: (Select yes or no)

  - Buying raw materials: Yes / No

  - Selling your production: Yes / No

  - Exchanging best practices: Yes / No

  - Asking opinions from other farmers to solve practical problems: Yes / No

- How much do you know about FabLab?

- Do you know what CAE, CAD, CAM, and RE are?

- Do you know what 3D printers are? How are they used?

- Regarding the FabLab approach, on a scale from 1 to 10, how much do you consider the following design and manufacturing tools to be useful?

  - CAD: Computer aided design_____________________________________________

  - CAE: Computer aided engineering__________________________________________

  - RE: Reverse engineering__________________________________________________

  - CAM: Computer aided manufacturing________________________________________

  - Additive Manufacturing—3D Printing________________________________________

- Do you think these tools can be used to support your work?

- Can they be useful and efficient in the agricultural sector? If yes, how?

- What do you think should be the main topics to be included in an interactive entrepreneurship course for Farmer 4.0?

- Regarding our scheme of the project, how important do you consider the following phases? (from 1 to 10):

  - The evaluation path of the user in terms of skills and abilities________________________________________________________________________

  - The training path________________________________________________________________________

  - The simulative environment________________________________________________________________________

  - The job shadowing________________________________________________________________________

  - The co-working________________________________________________________________________

- Could it be useful to represent in the simulation environment a virtual FabLab in which the user can move at 360° to view typical FabLab equipment and tools and learn how they work?

References

- Norman, D.A. Emotional Design: Why We Love (or Hate) Everyday Things; Basic Civitas Books: New York, NY, USA, 2004. [Google Scholar]

- Norman, D.A. Cognitive engineering. User Cent. Syst. Des. 1986, 31, 61. [Google Scholar]

- Jordan, P.W. Designing Pleasurable Products: An Introduction to the New Human Factors; CRC Press: London, UK, 2003. [Google Scholar]

- Green, W.S.; Jordan, P.W. Pleasure with Products: Beyond Usability; CRC Press: London, UK, 2002. [Google Scholar]

- Triberti, S.; Chirico, A.; la Rocca, G.; Riva, G. Developing emotional design: Emotions as cognitive processes and their role in the design of interactive technologies. Front. Psychol. 2017, 8, 1773. [Google Scholar] [CrossRef] [PubMed]

- Van Gorp, T.; Adams, E. Design for Emotions; Elsevier: Waltham, MA, USA, 2012. [Google Scholar]

- Sáenz, D.C.; Domínguez, C.E.D.; Llorach-Massana, P.; García, A.A.; Arellano, J.L.H. A series of recommendations for industrial design conceptualizing based on emotional design. In Managing Innovation in Highly Restrictive Environments; di Cortés-Robles, G., García-Alcaraz, J.L., Alor-Hernández, G., Eds.; Springer: Cham, Switzerland, 2019; pp. 167–185. [Google Scholar]

- Lin, C.J.; Cheng, L.Y. Product attributes and user experience design: How to convey product information through user-centered service. J. Intell. Manuf. 2017, 28, 1743–1754. [Google Scholar] [CrossRef]

- Pucillo, F.; Cascini, G. A framework for user experience, needs and affordances. Des. Stud. 2014, 35, 160–179. [Google Scholar] [CrossRef]

- Yang, B.; Liu, Y.; Liang, Y.; Tang, M. Exploiting user experience from online customer reviews for product design. Int. J. Inf. Manag. 2019, 46, 173–186. [Google Scholar] [CrossRef]

- Hassenzahl, M. The interplay of beauty, goodness, and usability in interactive products. Hum.-Comput. Interact. 2004, 19, 319–349. [Google Scholar] [CrossRef]

- Mugge, R.; Schifferstein, H.N.; Schoormans, J.P. Product attachment and satisfaction: Understanding consumers’ post-purchase behavior. J. Consum. Mark. 2010, 27, 271–282. [Google Scholar] [CrossRef]

- Desmet, P.M.; Hekkert, P. Framework of product experience. Int. J. Des. 2007, 1, 57–66. [Google Scholar]

- Mahut, T.; Bouchard, C.; Omhover, J.F.; Favart, C.; Esquivel, D. Interdependency between user experience and interaction: A kansei design approach. Int. J. Interact. Des. Manuf. (IJIDeM) 2018, 12, 105–132. [Google Scholar] [CrossRef]

- Vezzetti, E.; Marcolin, F.; Guerra, A.L. QFD 3D: A new C-shaped matrix diagram quality approach. Int. J. Qual. Reliab. Manag. 2016, 33, 178–196. [Google Scholar] [CrossRef]

- Osgood, C.; Suci, G.; Tannenbaum, P. The Measurement of Meaning; University of Illinois Press: Urbana-Champaign, IL, USA, 1967. [Google Scholar]

- Green, P.E.; Srinivasan, V. Conjoint Analysis in consumer research: Issues and outlook. J. Consum. Res. 1978, 5, 103–123. [Google Scholar] [CrossRef]

- Violante, M.G.; Vezzetti, E. Kano qualitative vs quantitative approaches: An assessment framework for products attributes analysis. Comput. Ind. 2017, 86, 15–25. [Google Scholar] [CrossRef]

- Nagamachi, M. Kansei/Affective Engineering; CRC Press: London, UK, 2016. [Google Scholar]

- Hartono, M.; Tan, K.C.; Peacock, J.B. Applying Kansei Engineering, the Kano model and QFD to services. Int. J. Serv. Econ. Manag. 2013, 5, 256–274. [Google Scholar] [CrossRef]

- Lee, S.; Harada, A.; Stappers, P.J. Pleasure with products: Design based on Kansei. In Pleasure with Products: Beyond Usability; CRC Press: London, UK, 2002; pp. 219–229. [Google Scholar]

- Huang, Y.; Chen, C.H.; Khoo, L.P. Products classification in emotional design using a basic-emotion based semantic differential method. Int. J. Ind. Ergon. 2012, 42, 569–580. [Google Scholar] [CrossRef]

- Barone, S.; Lombardo, A.; Tarantino, P. A weighted logistic regression for conjoint analysis and Kansei engineering. Qual. Reliab. Eng. Int. 2007, 23, 689–706. [Google Scholar] [CrossRef]

- Violante, M.G.; Vezzetti, E. Virtual interactive e-learning application: An evaluation of the student satisfaction. Comput. Appl. Eng. Educ. 2015, 23, 72–91. [Google Scholar] [CrossRef]

- Violante, M.G.; Vezzetti, E. A methodology for supporting requirement management tools (RMt) design in the PLM scenario: An user-based strategy. Comput. Ind. 2014, 65, 1065–1075. [Google Scholar] [CrossRef]

- Violante, M.G.; Vezzetti, E.; Alemanni, M. An integrated approach to support the Requirement Management (RM) tool customization for a collaborative scenario. Int. J. Interact. Des. Manuf. (IJIDeM) 2017, 11, 191–204. [Google Scholar] [CrossRef]

- Violante, M.G.; Vezzetti, E. Implementing a new approach for the design of an e-learning platform in engineering education. Comput. Appl. Eng. Educ. 2014, 22, 708–727. [Google Scholar] [CrossRef]

- Violante, M.G.; Vezzetti, E. Guidelines to design engineering education in the twenty-first century for supporting innovative product development. Eur. J. Eng. Educ. 2017, 42, 1344–1364. [Google Scholar] [CrossRef]

- Fukuda, S. Emotional Engineering: Service Development; Springer Science & Business Media: Berlin/Heidelberg, Germany, 2010. [Google Scholar]

- Castellano, G.; Mortillaro, M.; Camurri, A.; Volpe, G.; Scherer, K. Automated analysis of body movement in emotionally expressive piano performances. Music Percept. Interdiscip. J. 2008, 26, 103–119. [Google Scholar] [CrossRef]

- Schmidt, S.; Stock, W.G. Collective indexing of emotions in images. A study in emotional information retrieval. J. Am. Soc. Inf. Sci. Technol. 2009, 60, 863–876. [Google Scholar] [CrossRef]

- Sullivan, L.P. Quality Function Deployment; Quality Progress (ASQC): Milwaukee, WI, USA, 1986; pp. 39–50. [Google Scholar]

- Akao, Y.; Mazur, G.H. The leading edge in QFD: Past, present and future. Int. J. Qual. Reliab. Manag. 2003, 20, 20–35. [Google Scholar] [CrossRef]

- Vezzetti, E.; Tornincasa, S.; Moos, S.; Marcolin, F. 3D Human Face Analysis: Automatic Expression Recognition, Biomedical Engineering; ACTA Press: Innsbruck, Austria, 2016. [Google Scholar]

- Tornincasa, S.; Vezzetti, E.; Moos, S.; Violante, M.G.; Marcolin, F.; Dagnes, N.; Ulrich, L.; Tregnaghi, G.F. 3D Facial Action Units and Expression Recognition using a Crisp Logic. Comput.-Aided Des. Appl. 2019, 16, 256–268. [Google Scholar] [CrossRef]

- Russell, J.A. A circumplex model of affect. J. Personal. Soc. Psychol. 1980, 39, 1161. [Google Scholar] [CrossRef]

- Russell, J.A.; Fehr, B. Relativity in the perception of emotion in facial expressions. J. Exp. Psychol. Gen. 1987, 116, 223. [Google Scholar] [CrossRef]

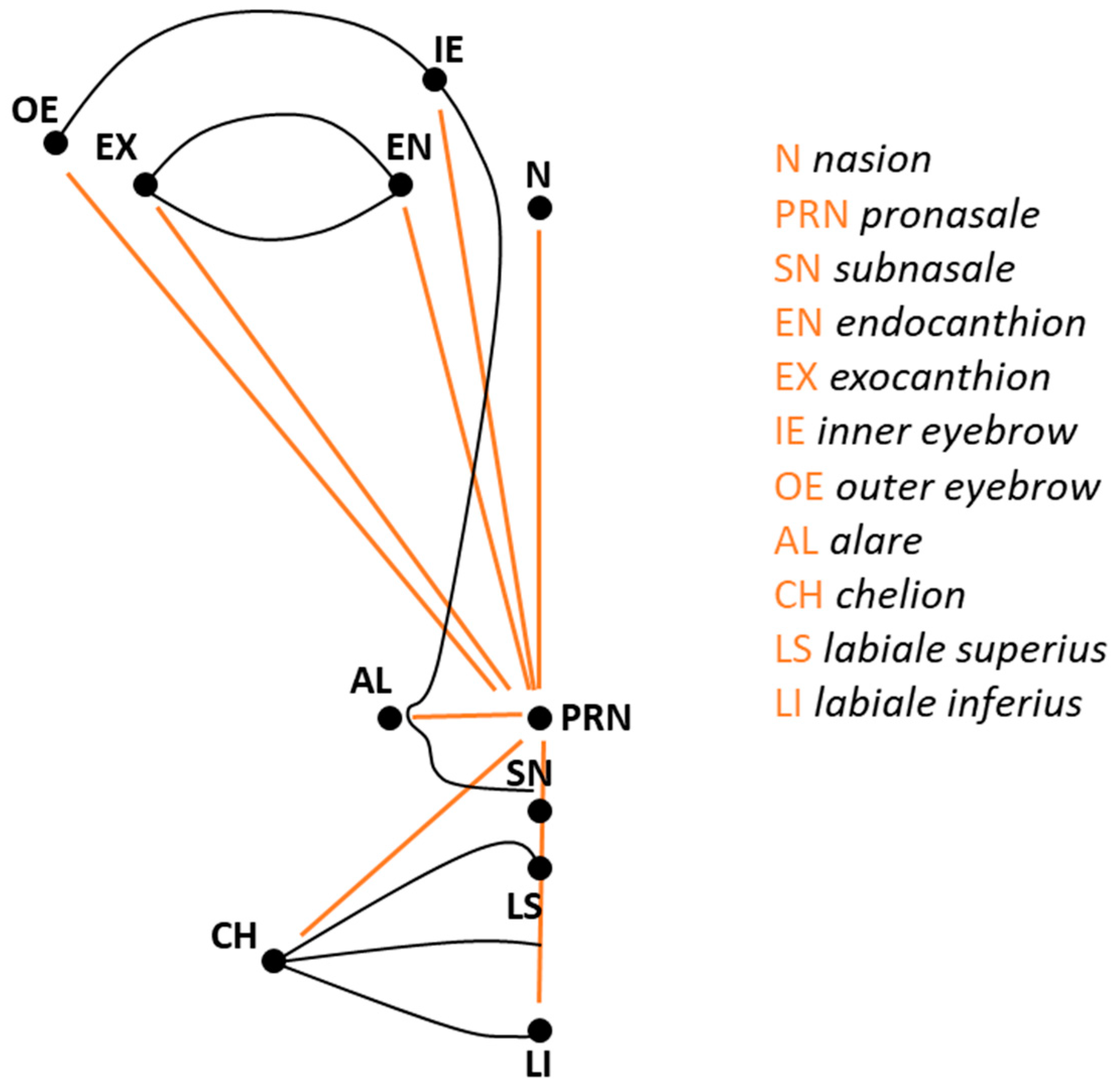

- Marcolin, F. Miscellaneous expertise of 3D facial landmarks in recent literature. Int. J. Biom. 2017, 9, 279–304. [Google Scholar] [CrossRef]

- Vezzetti, E.; Marcolin, F.; Tornincasa, S.; Ulrich, L.; Dagnes, N. 3D geometry-based automatic landmark localization in presence of facial occlusions. Multimed. Tools Appl. 2017. [Google Scholar] [CrossRef]

- Marcolin, F.; Vezzetti, E. Novel descriptors for geometrical 3D face analysis. Multimed. Tools Appl. 2017, 76, 13805–13834. [Google Scholar] [CrossRef]

- Swennen, G.R.; Schutyser, F.A.; Hausamen, J.E. Three-Dimensional Cephalometry: A Color Atlas and Manual; Springer Science & Business Media: Berlin/Heidelberg, Germany, 2005. [Google Scholar]

- Avikal, S.; Singh, R.; Rashmi, R. QFD and fuzzy kano model based approach for classification of aesthetic attributes of SUV car profile. J. Intell. Manuf. 2018, 1–14. [Google Scholar] [CrossRef]

- Dolgun, L.E.; Köksal, G. Effective use of quality function deployment and Kansei engineering for product planning with sensory customer requirements: A plain yogurt case. Qual. Eng. 2018, 30, 569–582. [Google Scholar] [CrossRef]

- Han, D.I.D.; Jung, T.; Tom Dieck, M.C. Translating tourist requirements into mobile AR application engineering through QFD. Int. J. Hum.–Comput. Int. 2019, 1–17. [Google Scholar] [CrossRef]

- Ho, W.C.; Lee, A.W.; Lee, S.J.; Lin, G.T. The Application of quality function deployment to smart watches–the house of quality for improved product design. J. Sci. Ind. Res. 2018, 77, 149–152. [Google Scholar]

- Benner, M.; Linnemann, A.R.; Jongen, W.M.F.; Folstar, P. Quality Function Deployment (QFD)—Can it be used to develop food products? Food Qual. Prefer. 2003, 14, 327–339. [Google Scholar] [CrossRef]

- Bouchereau, V.; Rowlands, H. Methods and techniques to help quality function deployment (QFD). Benchmarking Int. J. 2000, 7, 8–20. [Google Scholar] [CrossRef]

- Chan, L.K.; Wu, M.L. Quality function deployment: A comprehensive review of its concepts and methods. Qual. Eng. 2002, 15, 23–35. [Google Scholar] [CrossRef]

- Zare Mehrjerdi, Y. Quality function deployment and its extensions. Int. J. Qual. Reliab. Manag. 2010, 27, 616–640. [Google Scholar] [CrossRef]

© 2019 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).

Violante, M.G.; Marcolin, F.; Vezzetti, E.; Ulrich, L.; Billia, G.; Di Grazia, L. 3D Facial Expression Recognition for Defining Users’ Inner Requirements—An Emotional Design Case Study. Appl. Sci. 2019, 9, 2218. https://doi.org/10.3390/app9112218
