Corpus Augmentation for Neural Machine Translation with Chinese-Japanese Parallel Corpora

Abstract

Featured Application

1. Introduction

2. Related Work

3. Neural Machine Translation and ASPEC-JC Corpus

3.1. Neural Machine Translation

3.2. ASPEC-JC Corpus

4. Corpus Augmentation by Sentence Segmentation

4.1. Generating Parallel Partial Sentences

- Obtain the word alignment information from tokenized Japanese-Chinese parallel sentences.

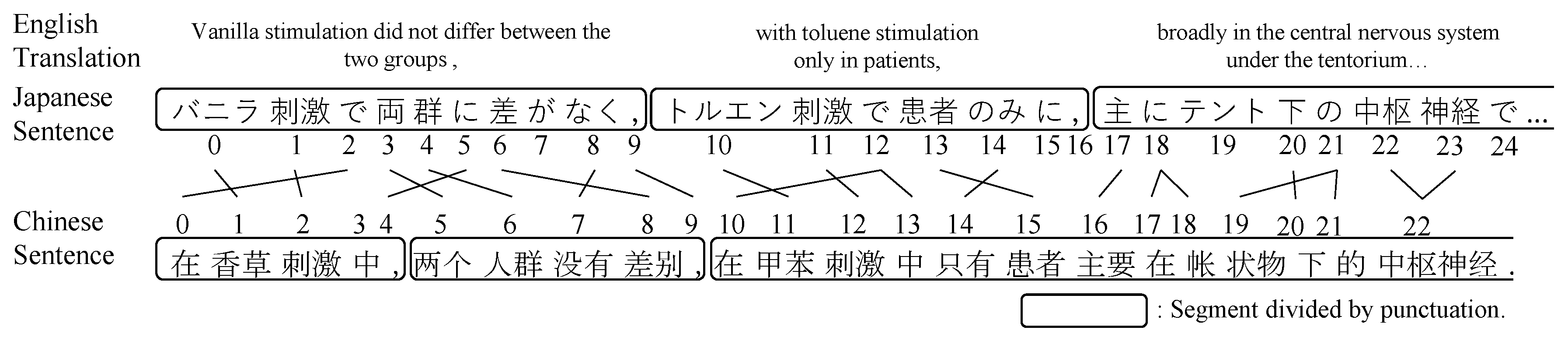

- Split the long parallel sentences into segments at the punctuation symbols, such as “,”, “;”, and “:”. Figure 1 presents an example of word alignment information and the segments of a sentence pair.

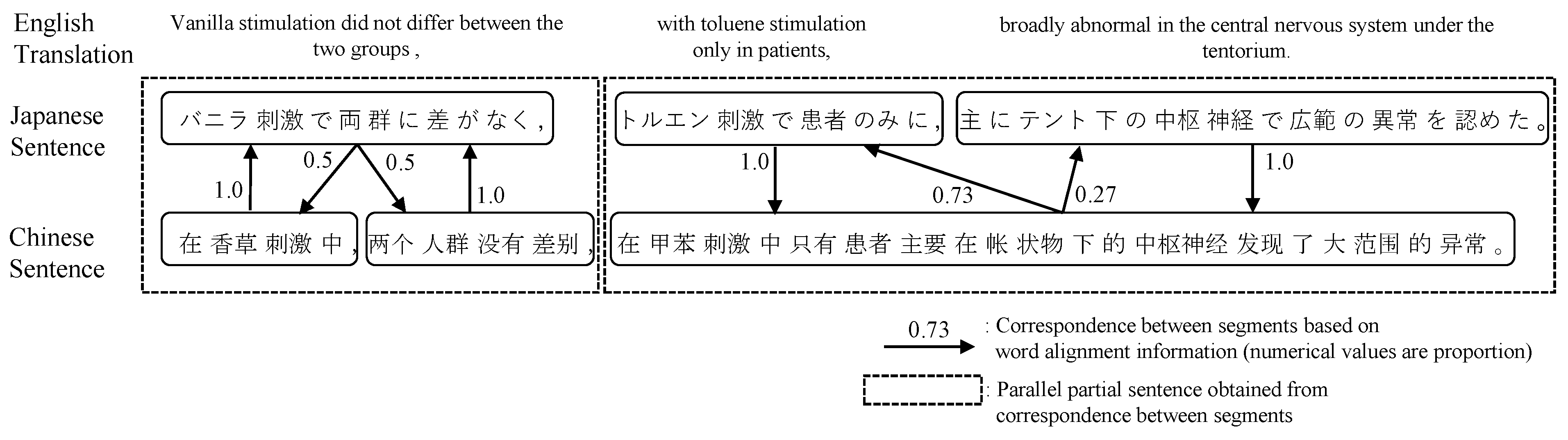

- Obtain source-target segment alignments: For each source segment s-segi and target segment t-segj, count the words in s-segi that correspond to the words in t-segj according to the word alignment information. The numerical values on the arrows in Figure 2 represent the rate of the correspondence relation between the segments. We infer that s-segi corresponds to t-segj if the rate is greater than or equal to a threshold value .

- Obtain target-source segment alignments: According to the procedure in 3.

- Concatenate multiple segments to form a one-to-one relation if there is a one-to-many or many-to-many relation between the segments.

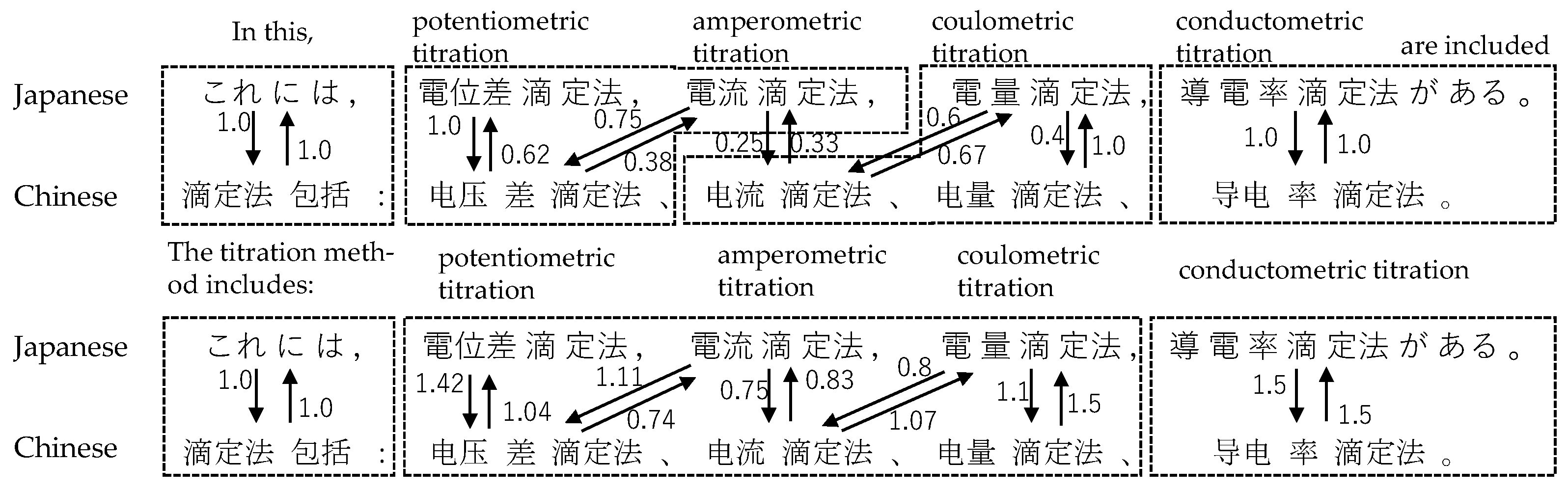
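The segmentation and segment-alignment steps of this section can be sketched in Python as follows. This is an illustrative sketch only, not the authors' implementation. The function names (`split_segments`, `correspondence_rates`, `align_segments`), the definition of the rate as the aligned-word count divided by the length of the segment, the default threshold of 0.5, and the merging of the two alignment directions by union are all assumptions made for the example. Word alignments are assumed to be given as (source index, target index) pairs from an external word aligner.

```python
from collections import defaultdict

# Punctuation tokens that close a segment (ASCII and full-width forms).
PUNCT = {",", ";", ":", ",", ";", ":"}


def split_segments(tokens):
    """Split a token list into segments of token indices, closing a segment after each punctuation token."""
    segments, current = [], []
    for idx, tok in enumerate(tokens):
        current.append(idx)
        if tok in PUNCT:
            segments.append(current)
            current = []
    if current:
        segments.append(current)
    return segments


def correspondence_rates(from_segs, to_segs, links):
    """Return {(i, j): rate}, the share of words in from-segment i linked to to-segment j.

    Assumption: the rate is the count of linked words divided by the segment length.
    """
    to_seg_of = {t: j for j, seg in enumerate(to_segs) for t in seg}
    linked = defaultdict(set)
    for f, t in links:
        linked[f].add(to_seg_of[t])
    rates = {}
    for i, seg in enumerate(from_segs):
        counts = defaultdict(int)
        for f in seg:
            for j in linked.get(f, ()):
                counts[j] += 1
        for j, c in counts.items():
            rates[(i, j)] = c / len(seg)
    return rates


def align_segments(src_tokens, tgt_tokens, links, theta=0.5):
    """Return one-to-one groups as (source segment ids, target segment ids)."""
    src_segs = split_segments(src_tokens)
    tgt_segs = split_segments(tgt_tokens)
    s2t = correspondence_rates(src_segs, tgt_segs, links)
    t2s = correspondence_rates(tgt_segs, src_segs, [(t, s) for s, t in links])
    # Assumption: keep a segment pair if either direction reaches the threshold.
    pairs = {(i, j) for (i, j), r in s2t.items() if r >= theta}
    pairs |= {(i, j) for (j, i), r in t2s.items() if r >= theta}

    # Union-find: segments linked directly or indirectly end up in one group,
    # which turns one-to-many and many-to-many relations into one-to-one groups.
    parent = {}

    def find(x):
        parent.setdefault(x, x)
        while parent[x] != x:
            parent[x] = parent[parent[x]]
            x = parent[x]
        return x

    for i, j in pairs:
        parent[find(("s", i))] = find(("t", j))

    groups = defaultdict(lambda: ([], []))
    for i, j in sorted(pairs):
        src_ids, tgt_ids = groups[find(("s", i))]
        if i not in src_ids:
            src_ids.append(i)
        if j not in tgt_ids:
            tgt_ids.append(j)
    return sorted(groups.values())
```

Each returned group lists the source segments and target segments that would be concatenated into one parallel partial sentence. The sketch does not check that the merged segments are adjacent in the sentence, which a practical implementation would probably need to do.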

4.2. Correcting Segments’ Correspondence Information Using Common Chinese Characters

4.3. Corpus Augmentation by Generated Parallel Partial Sentences

- Back-translate the target partial sentences into the source language with a translation model built from parallel data.

- For each sentence, create a pseudo-source sentence that is partly different from the original source sentence by replacing a part of the original sentence with a partial sentence obtained using back-translation. As a result, it is possible to generate the same number of variations of pseudo-source language sentences as the number of partial sentences. For example, if a sentence is divided into two partial sentences, two pseudo-source sentences will be created. Table 1 shows the pseudo-source language sentences generated from the Japanese sentence of Figure 2.

- Copy the target sentences corresponding to the created pseudo-source sentences to produce pseudo-parallel sentences.

- Add the generated pseudo-parallel sentences to the original parallel corpus.
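The four steps above can be sketched as follows. This is a minimal illustration under stated assumptions, not the authors' code: `back_translate` is a hypothetical callable standing in for the target-to-source translation model, each sentence pair is assumed to be already divided into aligned partial sentences, and `sep` is the string used to join the parts (empty by default, as for unsegmented Japanese or Chinese text).

```python
def make_pseudo_pairs(src_parts, tgt_parts, back_translate, sep=""):
    """Create one pseudo-parallel pair per partial sentence.

    src_parts[k] and tgt_parts[k] are assumed to be aligned partial sentences.
    back_translate is a hypothetical target-to-source translation function.
    """
    target = sep.join(tgt_parts)  # the target sentence is copied unchanged
    pseudo_pairs = []
    for k, tgt_part in enumerate(tgt_parts):
        replaced = list(src_parts)
        # Replace only the k-th source part with its back-translation.
        replaced[k] = back_translate(tgt_part)
        pseudo_pairs.append((sep.join(replaced), target))
    return pseudo_pairs


def augment_corpus(corpus, segmented_pairs, back_translate, sep=""):
    """Return the original corpus followed by all generated pseudo-parallel pairs."""
    augmented = list(corpus)
    for src_parts, tgt_parts in segmented_pairs:
        augmented.extend(make_pseudo_pairs(src_parts, tgt_parts, back_translate, sep))
    return augmented
```

A sentence pair divided into two partial sentences yields two pseudo-source sentences, each paired with the same unchanged target sentence, which matches the count described in the second step.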

4.4. Use of Sentences Not Divided into Partial Sentences

1. Extract target sentences from the undivided sentence pairs that can be split into multiple segments.

2. Back-translate the extracted target language sentences into the source language.

3. Split the target language sentence and its back-translation result into segments in the manner used for Step 2 in Section 4.1.

4. Let $t$ and $\hat{s}$ be a target sentence and its back-translation result, respectively, and let $n$ and $m$ be the numbers of segments of $t$ and $\hat{s}$, respectively; i.e., $t = t_1 t_2 \cdots t_n$ and $\hat{s} = \hat{s}_1 \hat{s}_2 \cdots \hat{s}_m$. Extract sentence pairs such that $n = m$ and $n \geq 2$.

   - (4-1) Back-translate each segment $t_i$ of $t$ to $\tilde{s}_i$.

   - (4-2) For $i$ ($1 \leq i \leq n$), replace $\hat{s}_i$ in $\hat{s}$ with $\tilde{s}_i$ to generate $n$ pseudo-source sentences: $\hat{s}^{(1)}$, $\hat{s}^{(2)}$, …, $\hat{s}^{(n)}$.

   - (4-3) Make $n$ pseudo-parallel sentence pairs from each obtained pseudo-source sentence and the target sentence $t$.

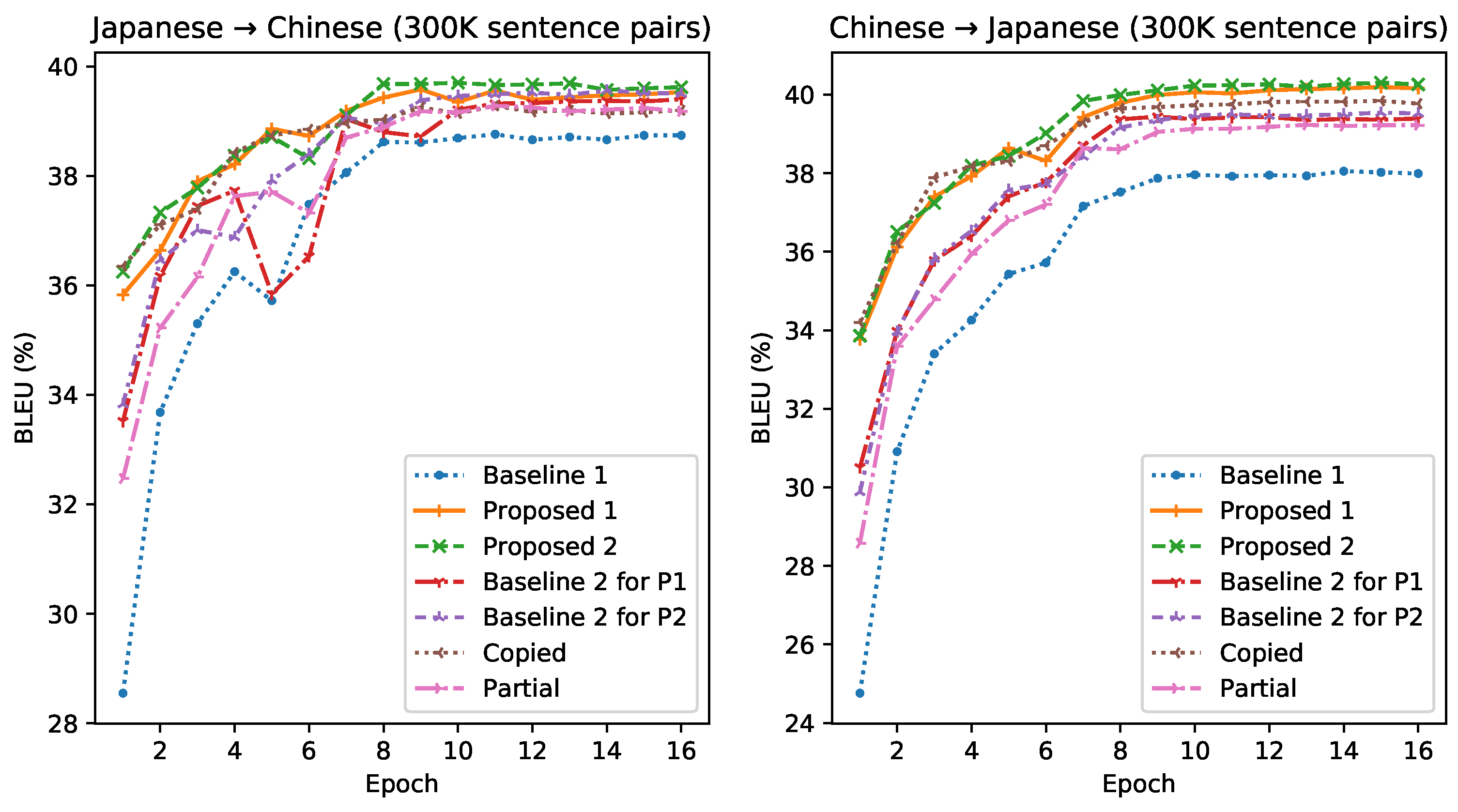

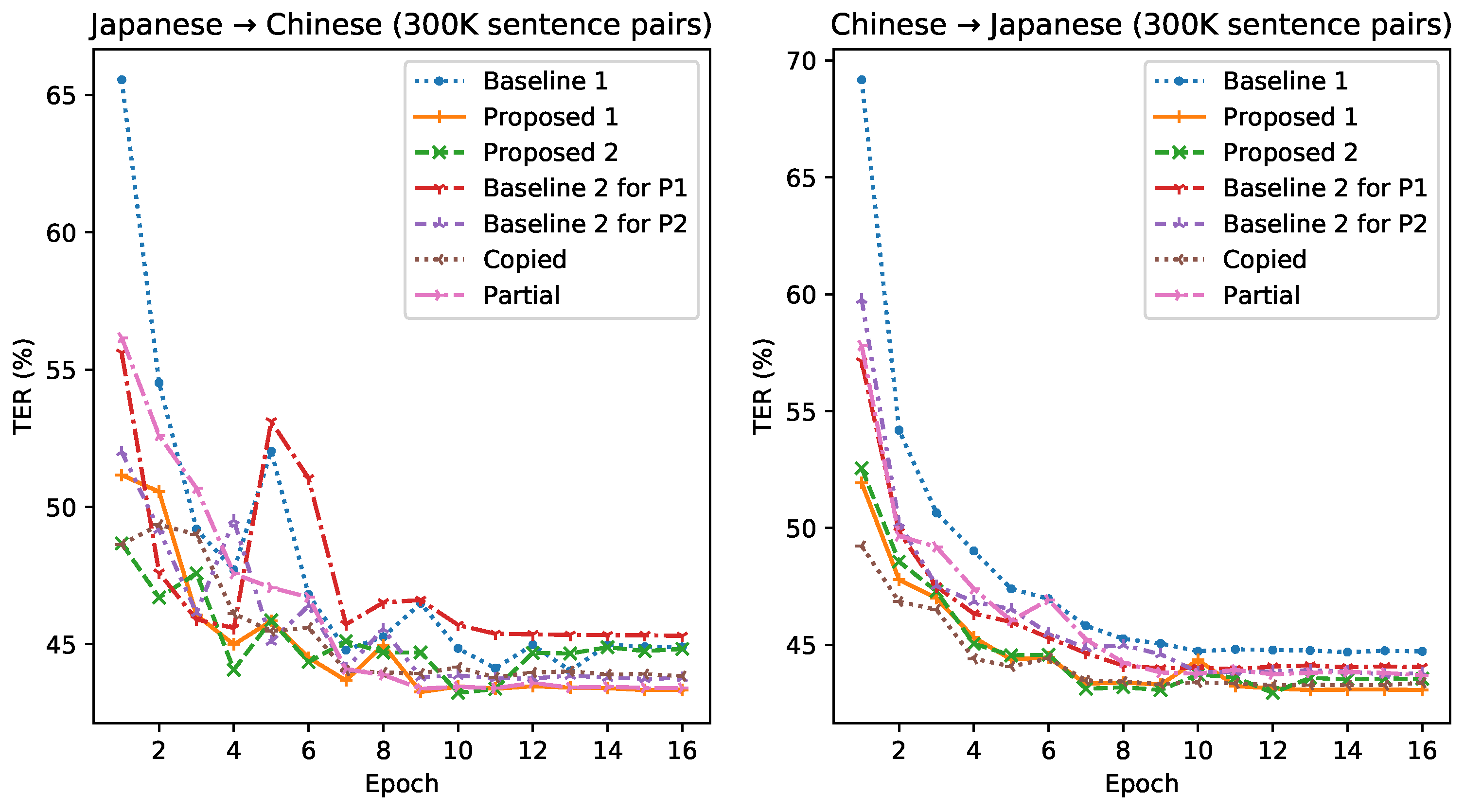

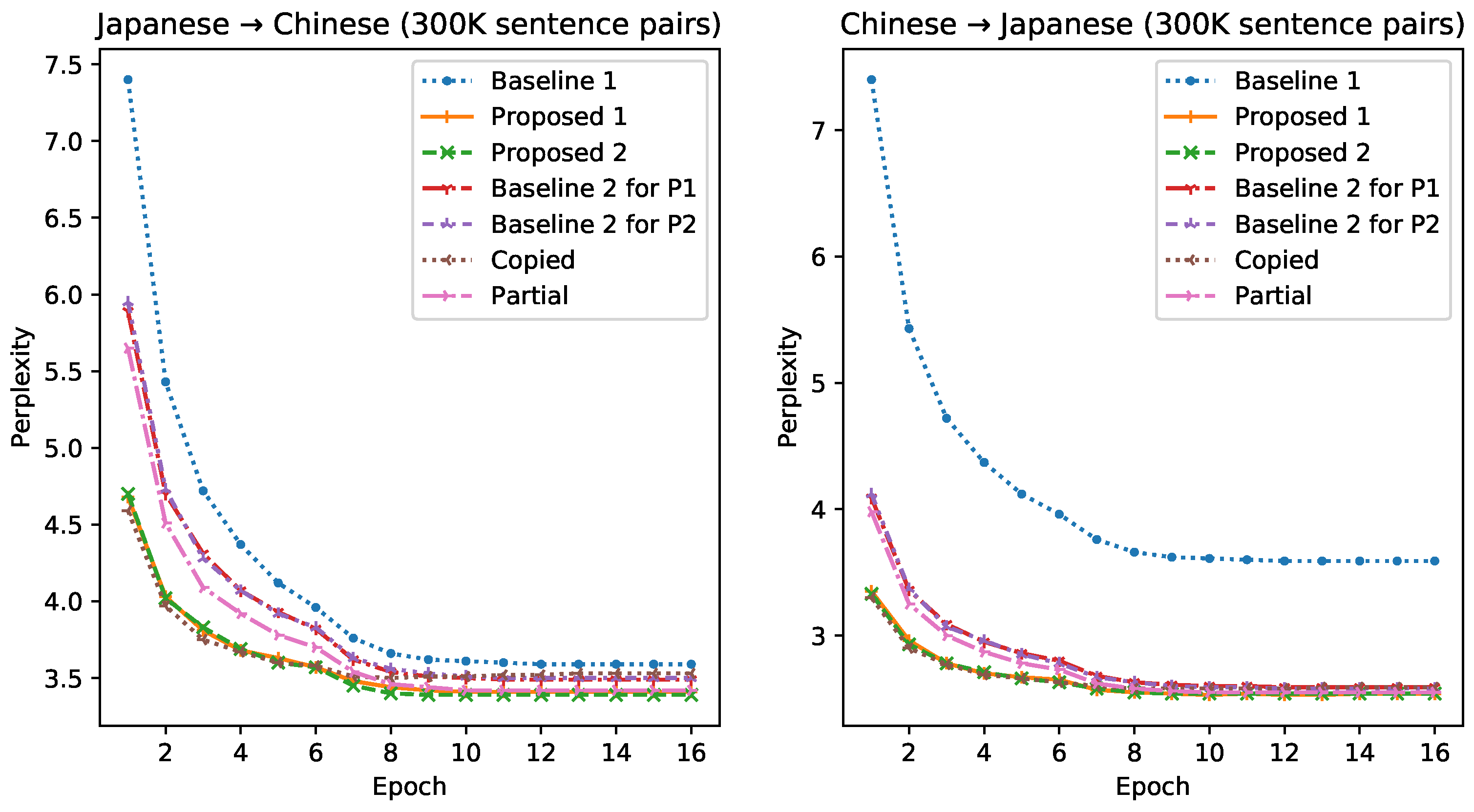
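The procedure of this section can be sketched as follows. This is a hedged sketch, not the authors' implementation: `back_translate` is a hypothetical callable for the target-to-source model, segments are split on raw text after each comma, semicolon, or colon rather than on tokens, and the extraction condition is assumed to be that the target sentence and its whole-sentence back-translation split into the same number of segments, with at least two segments each.

```python
import re

# A segment is a run of non-punctuation characters closed by one punctuation mark,
# or a trailing run without a closing mark (ASCII and full-width forms).
_SEGMENT = re.compile(r"[^,;:,;:]*[,;:,;:]|[^,;:,;:]+")


def split_text_segments(sentence):
    """Split a sentence into segments, keeping each punctuation mark with its segment."""
    return _SEGMENT.findall(sentence)


def pseudo_pairs_for_undivided(target, back_translate):
    """Generate pseudo-parallel pairs from a target sentence of an undivided pair.

    back_translate is a hypothetical target-to-source translation function.
    """
    t_segs = split_text_segments(target)
    bt_segs = split_text_segments(back_translate(target))
    n, m = len(t_segs), len(bt_segs)
    # Assumed extraction condition: equal segment counts, at least two segments.
    if n != m or n < 2:
        return []
    pairs = []
    for i, t_seg in enumerate(t_segs):
        replaced = list(bt_segs)
        # Replace the i-th segment of the whole-sentence back-translation
        # with the back-translation of the i-th target segment alone.
        replaced[i] = back_translate(t_seg)
        pairs.append(("".join(replaced), target))
    return pairs
```

Under these assumptions a target sentence with n segments yields n pseudo-source sentences, each differing from the whole-sentence back-translation in exactly one segment.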

5. Evaluation and Translation Results

5.1. Experiment Settings

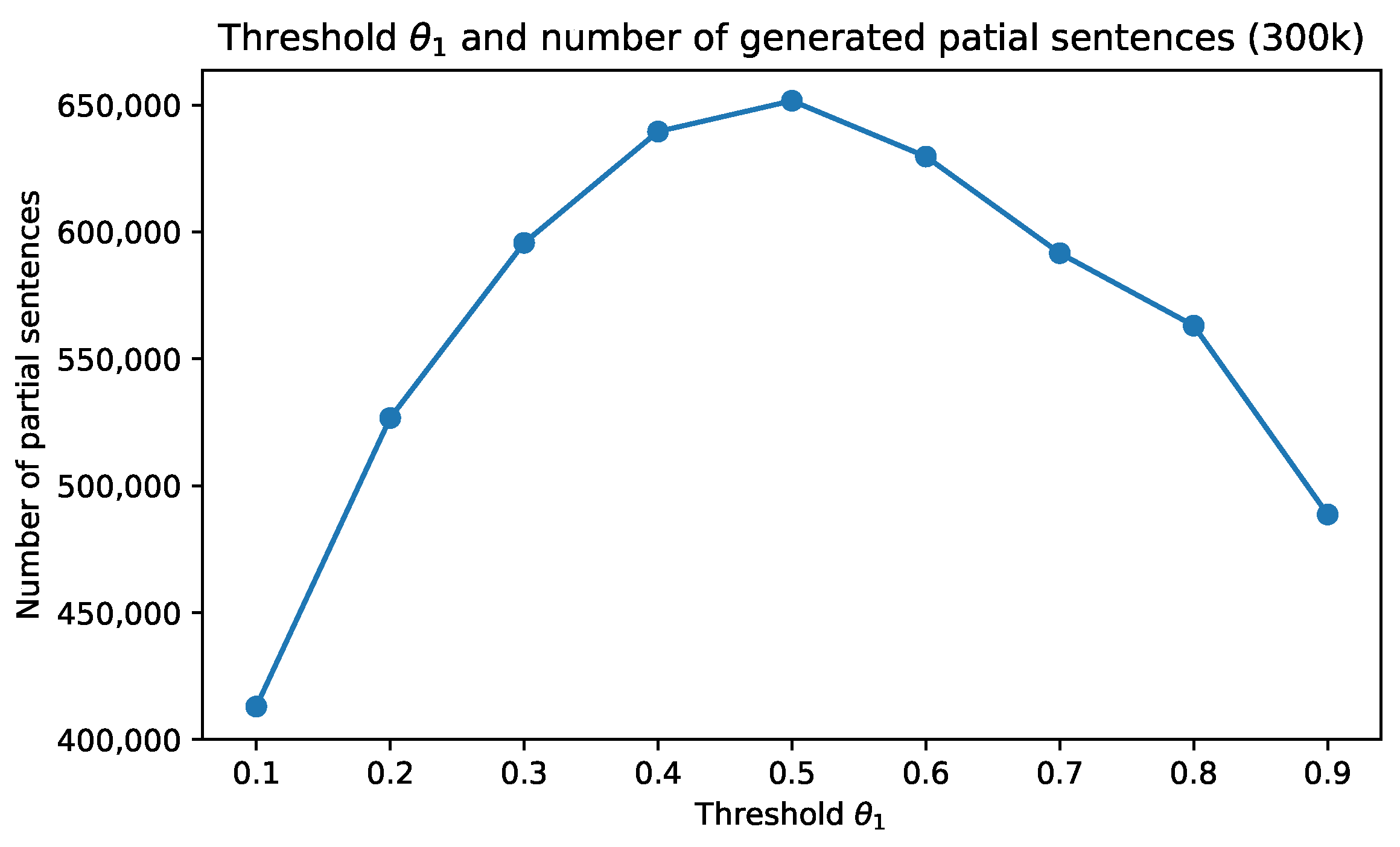

5.2. Selection of Thresholds and Weight w

5.3. Experiment Results and Discussion

6. Conclusions and Future Work

Supplementary Materials

Author Contributions

Funding

Acknowledgments

Conflicts of Interest

| Original/Generated Sentences | Input Japanese Sentence | English Translation of the Input Japanese Sentence |
|---|---|---|
| Source sentence (original) | バニラ刺激で両群に差がなく,// トルエン刺激で患者のみに,主にテント下の中枢神経で広範の異常を認めた。 | Vanilla stimulation did not differ between the two groups, // with toluene stimulation only in patients, broadly abnormal in the central nervous system under the tentorium. |
| Pseudo-source sentence 1 (pseudo- and original) | 香草刺激では,両群に差はなかった // トルエン刺激で患者のみに,主にテント下の中枢神経で広範の異常を認めた。 | There was no difference between the two groups with vanilla stimulation // only in patients, toluene stimulation showed extensive abnormalities, mainly in the central nervous system under the tent. |
| Pseudo-source sentence 2 (original and pseudo-) | バニラ刺激で両群に差がなく, // トルエン刺激には主に帳票物下の中枢神経で広範囲の異常が認められた。 | With vanilla stimulation there was no difference between both groups, // a wide range of abnormality was confirmed mainly in the central nervous system under the slap for toluene stimulation. |

The Error Rate of Aligned Sentences (%) (cc: common Chinese characters)

| Without cc | With cc | Without cc | With cc | Without cc | With cc |
|---|---|---|---|---|---|
| 8.8 | 6.8 | 7.6 | 5.6 | 9.2 | 9.0 |
| 2.1 | 1.7 | 1.7 | 0.8 | 3.3 | 2.2 |
| 7.0 | 3.0 | 6.0 | 3.8 | 7.6 | 7.2 |

| ASPEC-JC Corpus | Number of Sentence Pairs |
|---|---|
| Training data | 672,315 |
| Development (dev) data | 2090 |
| Development-test (dev-test) data | 2148 |
| Test data | 2107 |

Chinese→Japanese

| Method | # Sentences (Raw) | # Sentences (Used) | # Back-Translated | ppl (Dev) | BLEU (%) Dev-Test | BLEU (%) Test | TER (%) Dev-Test | TER (%) Test |
|---|---|---|---|---|---|---|---|---|
| Baseline 1 | 300k | 300k | 0 | 3.6 | 38.5 | 38.7 | 44.0 | 44.8 |
| Baseline 2 for P1 | 518k | 518k | 218k | 3.5 | 39.1 | 39.4 | 43.9 | 45.3 |
| Baseline 2 for P2 | 531k | 531k | 231k | 3.5 | 39.2 | 39.5 | 43.0 | 43.8 |
| Copied | 977k | 977k | 0 | 3.5 | 38.9 | 39.2 | 43.7 | 43.9 |
| Partial | 984k | 984k | 0 | 3.5 | 38.9 | 39.2 | 43.0 | 43.4 |
| Proposed 1 | 952k | 923k | 218k | 3.4 | 39.2 | 39.5 | 44.2 | 43.4 |
| Proposed 2 | 977k | 945k | 231k | 3.4 | 39.3 | 39.7 | 42.9 | 43.4 |

Chinese→Japanese

| Method | # Sentences (Raw) | # Sentences (Used) | # Back-Translated | ppl (Dev) | BLEU (%) Dev-Test | BLEU (%) Test | TER (%) Dev-Test | TER (%) Test |
|---|---|---|---|---|---|---|---|---|
| Baseline 1 | 300k | 300k | 0 | 2.7 | 38.1 | 38.0 | 44.9 | 44.8 |
| Baseline 2 for P1 | 518k | 518k | 218k | 2.6 | 40.0 | 39.4 | 44.5 | 44.1 |
| Baseline 2 for P2 | 529k | 529k | 229k | 2.6 | 40.1 | 39.5 | 43.6 | 43.8 |
| Copied | 972k | 972k | 0 | 2.6 | 39.7 | 39.8 | 43.5 | 43.3 |
| Partial | 984k | 984k | 0 | 2.6 | 39.0 | 39.2 | 43.9 | 43.8 |
| Proposed 1 | 952k | 947k | 218k | 2.5 | 40.2 | 40.1 | 42.9 | 43.3 |
| Proposed 2 | 972k | 967k | 229k | 2.5 | 40.5 | 40.2 | 43.6 | 43.4 |

Chinese→Japanese

| Method | # Sentences (Raw) | # Sentences (Used) | # Back-Translated | ppl (Dev) | BLEU (%) Dev-Test | BLEU (%) Test | TER (%) Dev-Test | TER (%) Test |
|---|---|---|---|---|---|---|---|---|
| Baseline 1 | 150k | 150k | 0 | 4.3 | 36.5 | 36.5 | 48.5 | 50.1 |
| Baseline 2 + mono (522k) | 672k | 672k | 522k | 3.8 | 38.8 | 39.1 | 44.6 | 44.7 |
| 150k + mono (522k) + P2 | 2313k | 2201k | 525k | 3.7 | 38.9 | 39.1 | 43.9 | 44.4 |

Chinese→Japanese

| Method | # Sentences (Raw) | # Sentences (Used) | # Back-Translated | ppl (Dev) | BLEU (%) Dev-Test | BLEU (%) Test | TER (%) Dev-Test | TER (%) Test |
|---|---|---|---|---|---|---|---|---|
| Baseline 1 | 150k | 150k | 0 | 3.1 | 35.4 | 35.5 | 48.4 | 47.5 |
| Baseline 2 + mono (522k) | 672k | 672k | 522k | 2.8 | 39.3 | 39.1 | 45.1 | 44.6 |
| 150k + mono (522k) + P2 | 2239k | 2134k | 515k | 2.7 | 40.6 | 40.1 | 43.7 | 43.7 |

Chinese→Japanese

| Method | # Sentences (Raw) | # Sentences (Used) | # Back-Translated | ppl (Dev) | BLEU (%) Dev-Test | BLEU (%) Test | TER (%) Dev-Test | TER (%) Test |
|---|---|---|---|---|---|---|---|---|
| Baseline 1 | 300k | 300k | 0 | 3.6 | 38.5 | 38.7 | 44.0 | 44.8 |
| Baseline 2 + mono (372k) | 672k | 672k | 372k | 3.4 | 39.6 | 39.7 | 42.8 | 44.2 |
| 300k + mono (372k) + P2 | 2287k | 2234k | 522k | 3.4 | 39.8 | 40.1 | 42.6 | 43.3 |

Chinese→Japanese

| Method | # Sentences (Raw) | # Sentences (Used) | # Back-Translated | ppl (Dev) | BLEU (%) Dev-Test | BLEU (%) Test | TER (%) Dev-Test | TER (%) Test |
|---|---|---|---|---|---|---|---|---|
| Baseline 1 | 300k | 300k | 0 | 2.7 | 38.1 | 38.0 | 44.9 | 44.8 |
| Baseline 2 + mono (372k) | 672k | 672k | 372k | 2.6 | 40.5 | 39.9 | 43.3 | 43.3 |
| 300k + mono (372k) + P2 | 2223k | 2213k | 501k | 2.5 | 41.8 | 41.4 | 42.2 | 42.5 |

© 2019 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).

Zhang, J.; Matsumoto, T. Corpus Augmentation for Neural Machine Translation with Chinese-Japanese Parallel Corpora. Appl. Sci. 2019, 9, 2036. https://doi.org/10.3390/app9102036