A Meta-Learning-Based Framework for Cellular Traffic Forecasting

Abstract

1. Introduction

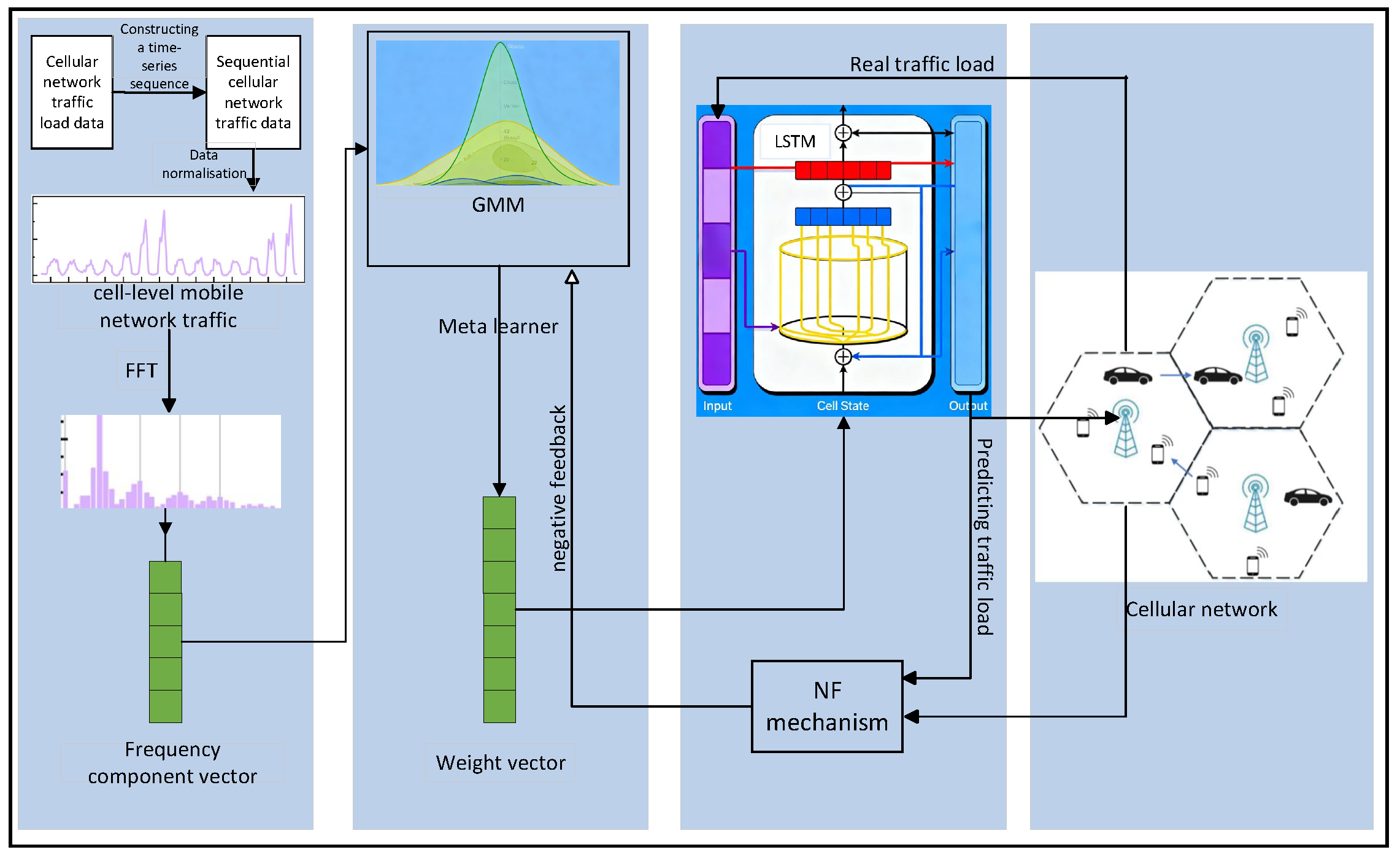

- A GMM-based meta-learner is proposed to replace the KNN meta-learner in ML-TP. The GMM enables probabilistic modelling of the meta-feature space, capturing latent structures in task distributions.

- The MCM is introduced to overcome the limitations of KNN’s rigid assignment in ML-TP. MCM initialises the base learner for new tasks by synthesising weight vectors from multiple Gaussian components.

- A prediction–correction negative feedback mechanism (NF) is designed to dynamically adjust GMM parameters during long-term predictions.
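The soft initialisation described in the second contribution can be illustrated with a short sketch. This is not the authors' implementation: it assumes a fitted GMM given by hypothetical arrays `pi`, `mu`, and `cov`, and one stored base-learner weight vector per component (`component_weights`, also hypothetical). It computes the posterior responsibilities of a new task's meta-feature and blends the component weight vectors accordingly, instead of copying the weights of a single nearest neighbour.

```python
import numpy as np

def gaussian_log_pdf(x, mean, cov):
    """Log density of a multivariate normal evaluated at x."""
    d = x.shape[0]
    diff = x - mean
    _, logdet = np.linalg.slogdet(cov)
    maha = diff @ np.linalg.solve(cov, diff)
    return -0.5 * (d * np.log(2.0 * np.pi) + logdet + maha)

def responsibilities(x, pi, mu, cov):
    """Posterior probability of each Gaussian component given meta-feature x."""
    log_p = np.array([np.log(pi[k]) + gaussian_log_pdf(x, mu[k], cov[k])
                      for k in range(len(pi))])
    log_p -= log_p.max()  # subtract the maximum for numerical stability
    p = np.exp(log_p)
    return p / p.sum()

def synthesise_initial_weights(x, pi, mu, cov, component_weights):
    """Responsibility-weighted blend of the per-component weight vectors."""
    gamma = responsibilities(x, pi, mu, cov)
    return gamma @ component_weights, gamma

# Toy setup; every value here is hypothetical.
pi = np.array([0.5, 0.5])
mu = np.array([[0.0, 0.0], [4.0, 4.0]])
cov = np.array([np.eye(2), np.eye(2)])
component_weights = np.array([[1.0, 0.0, 0.0],
                              [0.0, 1.0, 0.0]])

x_new = np.array([2.0, 2.0])  # equidistant from both component centres
w0, gamma = synthesise_initial_weights(x_new, pi, mu, cov, component_weights)
```

In this toy case the new task sits halfway between the two components, so both contribute equally to the synthesised initial weights. A hard assignment would have to pick one of them arbitrarily.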

2. Related Work

- Hybrid prediction models based on signal decomposition. Their core concept employs a “decomposition–prediction–reconstruction” paradigm: first utilising signal processing techniques such as empirical mode decomposition or wavelet transforms to decompose non-stationary raw traffic sequences into relatively stationary sub-components, then predicting and fusing these separately to reduce modelling complexity. Such methods demonstrate unique advantages in handling complex fluctuation patterns.

- Deep learning-based end-to-end prediction models, which represent the current mainstream research direction. These can be further subdivided based on network architecture and spatio-temporal information processing approaches: recurrent neural networks (RNNs/LSTM/GRUs) and their hybrid variants, which focus on capturing temporal dependencies; convolutional neural networks (CNNs) and their spatio-temporal fusion variants, adept at extracting spatial features; and graph neural networks (GNNs), capable of directly modelling base station network topologies.

- Emerging models adapted from other domains, such as Transformers utilising self-attention mechanisms to capture long-range dependencies, and multi-task learning frameworks designed to enhance generalisation capabilities through cross-task knowledge sharing.

2.1. Signal Decomposition-Based Hybrid Forecasting Models

2.2. Deep Learning-Based End-to-End Forecasting Models

2.2.1. Recurrent Neural Networks and Their Variants

2.2.2. Convolutional Neural Networks and Spatio-Temporal Fusion Models

2.2.3. Graph Neural Networks (GNNs) and Network Topology Modelling

2.3. Transformers and Other Emerging Models

2.4. Summary

3. GMM-ML-TP Cellular Traffic Forecasting Model

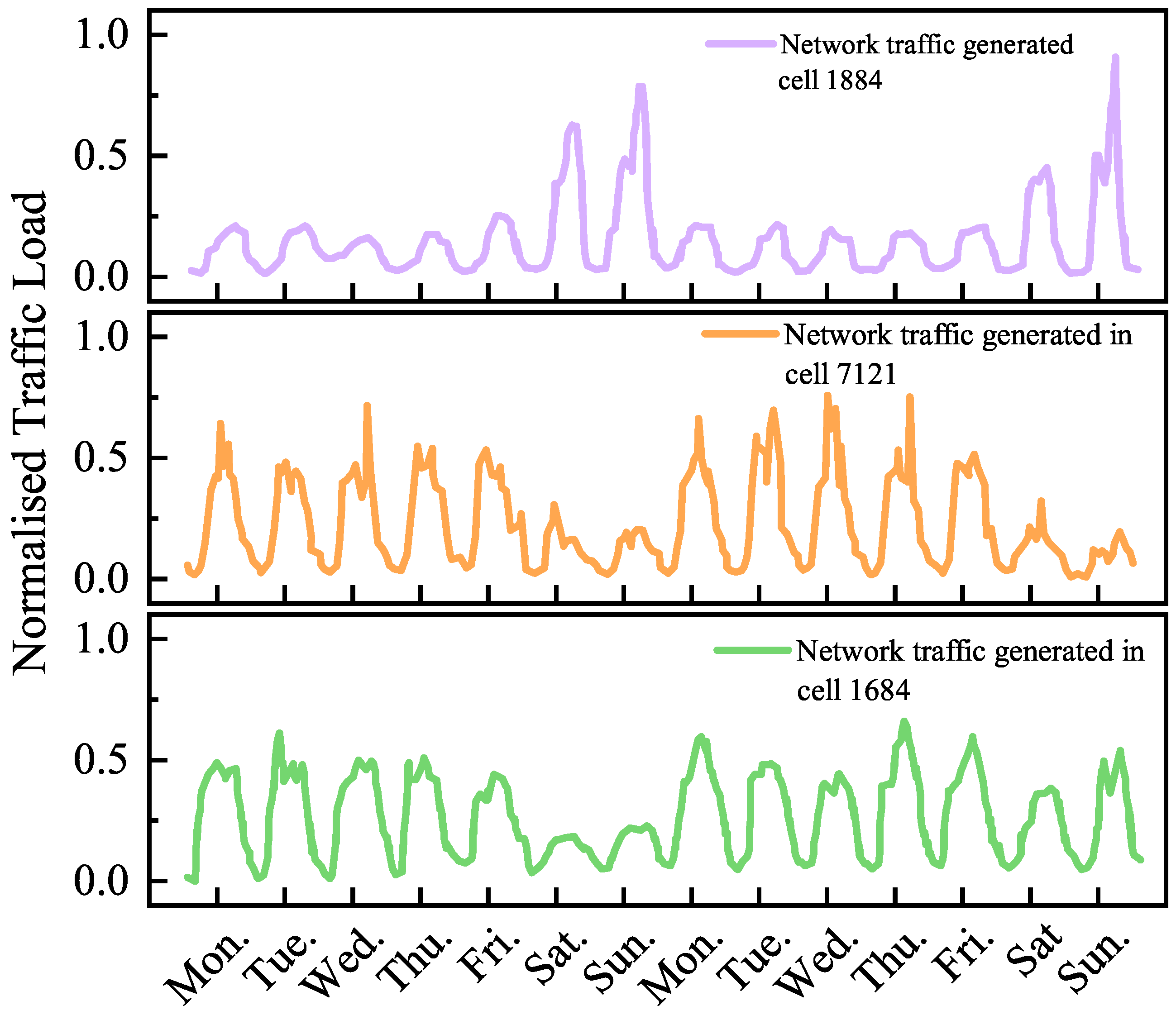

3.1. Dataset and Preliminary Analysis

- (1)

- Spatial Gridding and Cell Definition

- (2)

- Time Series Construction

- (3)

- Data Normalisation

- (4)

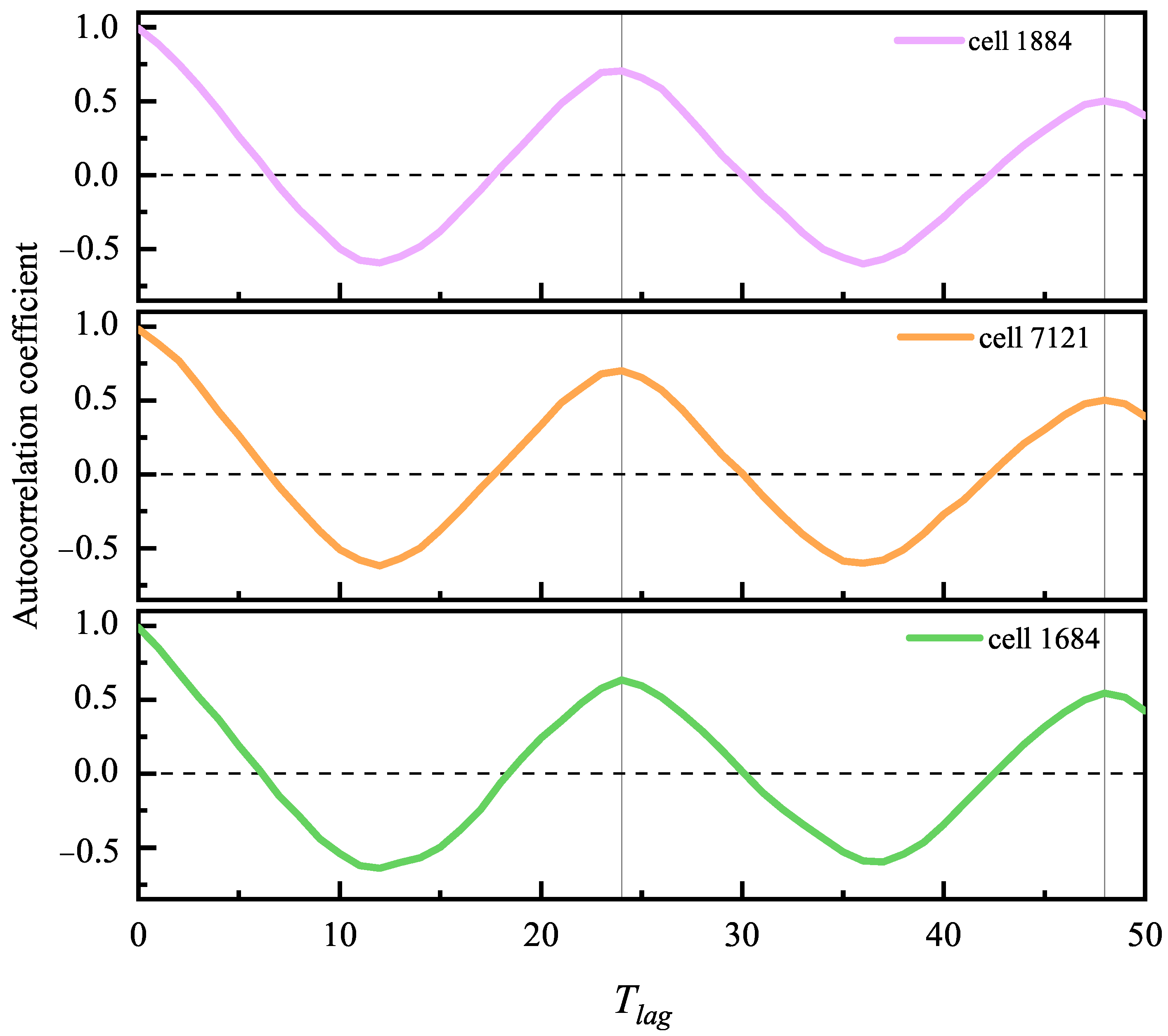

- Meta-feature extraction

- (1) Generation of feature candidate pool based on global energy spectrum

- (2) To ensure the universality of these five frequency components, we further examined their significance across different cellular subpopulations.

- (3) Interpretability of physical significance
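The normalisation and spectrum-based meta-feature steps listed above can be sketched as follows. The details are assumptions made for illustration: min-max scaling stands in for the paper's normalisation, and the five strongest non-DC bins of the FFT energy spectrum stand in for the five selected frequency components. The function names and the synthetic series are hypothetical.

```python
import numpy as np

def min_max_normalise(series):
    """Scale a traffic series to [0, 1]; a constant series maps to zeros."""
    series = np.asarray(series, dtype=float)
    span = series.max() - series.min()
    if span == 0:
        return np.zeros_like(series)
    return (series - series.min()) / span

def top_frequency_features(series, n_components=5):
    """Return the indices and normalised energies of the strongest non-DC bins."""
    x = np.asarray(series, dtype=float)
    spectrum = np.abs(np.fft.rfft(x - x.mean())) ** 2
    spectrum[0] = 0.0  # ignore the DC bin
    total = spectrum.sum()
    top = np.argsort(spectrum)[::-1][:n_components]
    energies = spectrum[top] / total if total > 0 else np.zeros(len(top))
    return top, energies

# Synthetic hourly series over 4 weeks: a daily cycle plus a weaker weekly cycle.
hours = np.arange(24 * 28)
traffic = (1.0
           + np.sin(2 * np.pi * hours / 24)
           + 0.5 * np.sin(2 * np.pi * hours / 168))

norm = min_max_normalise(traffic)
bins, energies = top_frequency_features(norm)
# With 672 samples, bin 28 is the 24 h cycle and bin 4 is the 168 h cycle.
```

On this synthetic series the two leading bins are the daily and weekly cycles, which is the kind of periodic structure the component table later in the paper labels semantically.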

3.2. Probabilistic Modelling in Feature Space

3.3. Verification of Feature Distribution Assumptions

3.3.1. Intra-Component Multinormality Test

3.3.2. Model Comparison and Goodness-of-Fit Assessment

3.3.3. Cluster Structure Validation

3.4. Prediction Correction Mechanism Based on the SCM

- (1) Initial Weight Allocation

- (2) Prediction Error Calculation

- (3) Weighting Coefficient Update

3.5. Prediction Correction Mechanism Based on MCM

- (1) Initial Weight Synthesis

- (2) Responsibility Weight Calculation

- (3) Multi-Component Collaborative Error Correction

- (4) Convergence Analysis

- (5) Comparison of the Correction Mechanism with Standard Methods

  - (1) Differences from the standard EM algorithm:

  - (2) Differences from reinforcement learning:
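The update rule of the correction mechanism is not reproduced in this text, so the sketch below only illustrates the general idea of responsibility-weighted negative feedback. It swaps in a simple multiplicative update on the mixing coefficients, which is an assumption and not the paper's formula. The names `negative_feedback_step`, `error_ref`, and `lr` are hypothetical.

```python
import numpy as np

def negative_feedback_step(pi, gamma, error, error_ref, lr=1.0):
    """One illustrative correction step on the mixing coefficients.

    Components that carried more responsibility for an error above the
    reference level are down-weighted; the result is renormalised to sum to 1.
    This multiplicative rule is an assumption, not the paper's update.
    """
    pi = np.asarray(pi, dtype=float)
    gamma = np.asarray(gamma, dtype=float)
    excess = error - error_ref  # positive when the prediction was worse than the reference
    updated = pi * np.exp(-lr * gamma * excess)
    return updated / updated.sum()

pi = np.array([0.5, 0.3, 0.2])      # current mixing coefficients (toy values)
gamma = np.array([0.7, 0.2, 0.1])   # responsibilities for the current task
new_pi = negative_feedback_step(pi, gamma, error=0.08, error_ref=0.03)
```

Because the feedback is driven by prediction error rather than data likelihood, the component most responsible for a poor forecast loses mixing weight, while the others gain a little after renormalisation.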

4. Experimental Analysis

4.1. Experimental Objectives

- Evaluate the efficacy of core innovations: Quantify improvements in predictive performance from the MCM and responsibility-weighted negative feedback mechanism (MCM-NF) through systematic comparisons with traditional deep learning baselines and meta-learning baselines (ML-TP).

- Conduct ablation analysis to quantify component contributions: By controlling variables, isolate and evaluate the individual impacts of the GMM, MCM, and NF on the model’s overall performance.

- Test model robustness in small-sample scenarios: Evaluate model generalisation capability under varying training data volumes, with particular emphasis on prediction stability under extreme data scarcity.

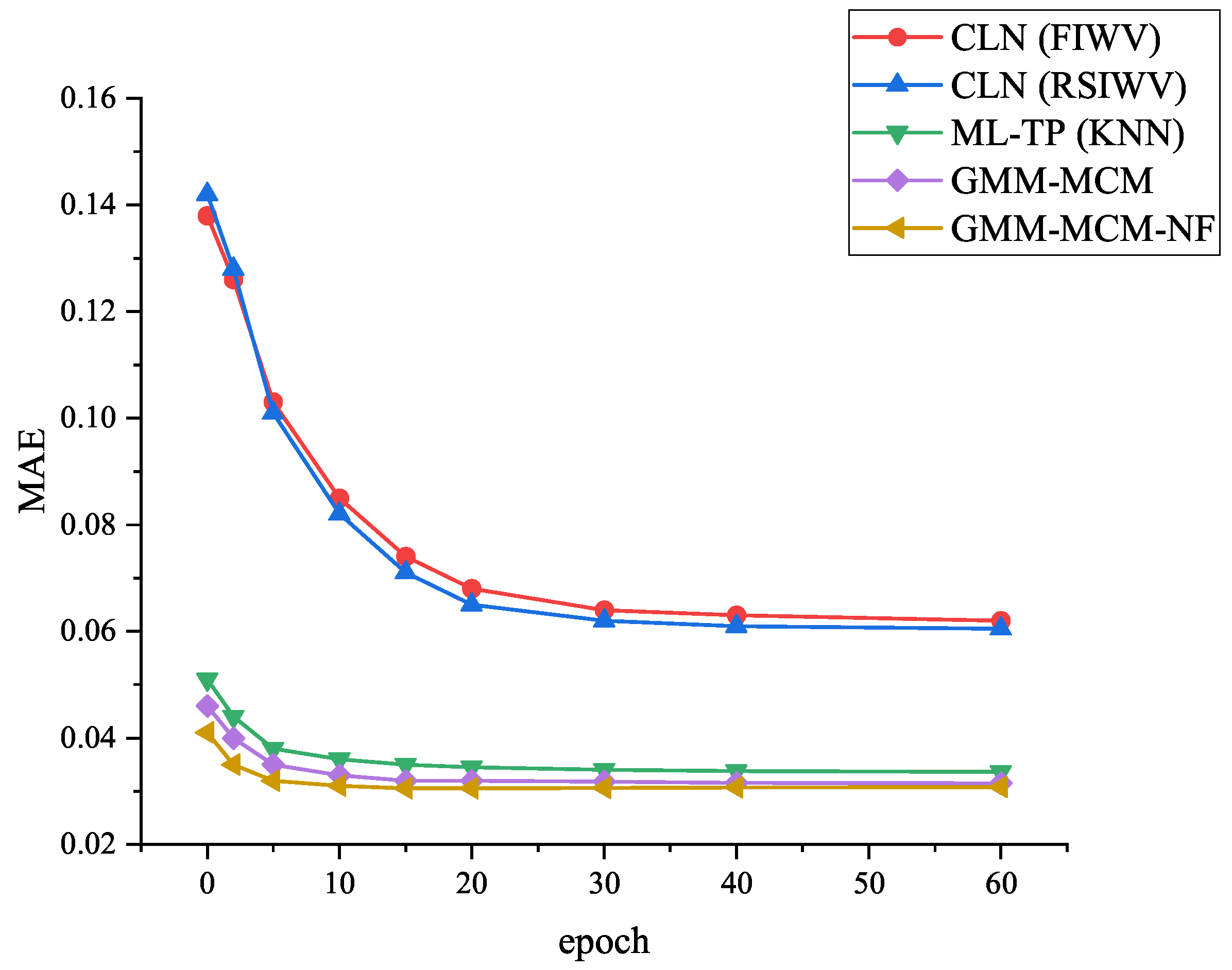

- Analyse model learning efficiency: Compare the proposed model with baseline methods in terms of convergence speed and the training data volume required to achieve equivalent performance.

4.2. Datasets and Preprocessing

- (1) Time Series Construction:

- (2) Data Normalisation:

- (3) Meta-Feature Extraction:

- (4) Foundational Sample Construction:

- (5) Dataset Partitioning:

4.2.1. Base Learner Configuration

4.2.2. Component Learner and Correction Parameters

4.3. Comparison of Algorithms

4.4. Evaluation Metrics
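The result tables report MAE, RMSE, and R². As a reference, these can be computed as in the sketch below, which uses their standard definitions. The sample values are the first five (real load, GMM-MCM-NF prediction) pairs from the paper's first prediction comparison table; the function names are illustrative.

```python
import numpy as np

def mae(y_true, y_pred):
    """Mean absolute error."""
    y_true, y_pred = np.asarray(y_true, float), np.asarray(y_pred, float)
    return float(np.mean(np.abs(y_true - y_pred)))

def rmse(y_true, y_pred):
    """Root mean square error."""
    y_true, y_pred = np.asarray(y_true, float), np.asarray(y_pred, float)
    return float(np.sqrt(np.mean((y_true - y_pred) ** 2)))

def r2(y_true, y_pred):
    """Coefficient of determination: 1 - SS_res / SS_tot."""
    y_true, y_pred = np.asarray(y_true, float), np.asarray(y_pred, float)
    ss_res = np.sum((y_true - y_pred) ** 2)
    ss_tot = np.sum((y_true - y_true.mean()) ** 2)
    return float(1.0 - ss_res / ss_tot)

real = [0.15, 0.12, 0.08, 0.20, 0.45]
pred = [0.155, 0.125, 0.085, 0.205, 0.445]

scores = {"MAE": mae(real, pred), "RMSE": rmse(real, pred), "R2": r2(real, pred)}
```

Every prediction in this small sample is off by 0.005, so MAE and RMSE coincide. In general RMSE exceeds MAE whenever the errors vary in size, since it penalises large errors more heavily.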

4.5. Results and Discussion

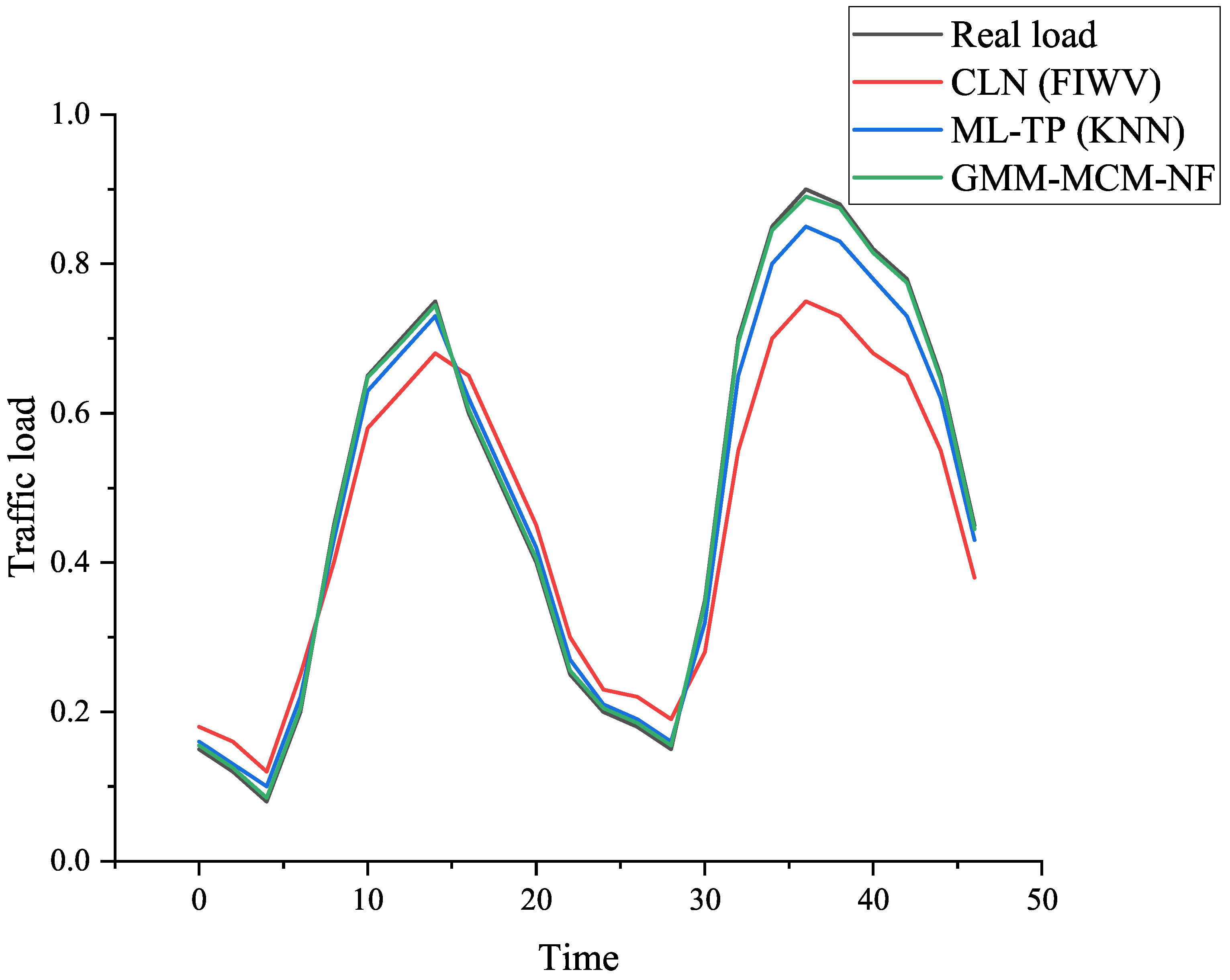

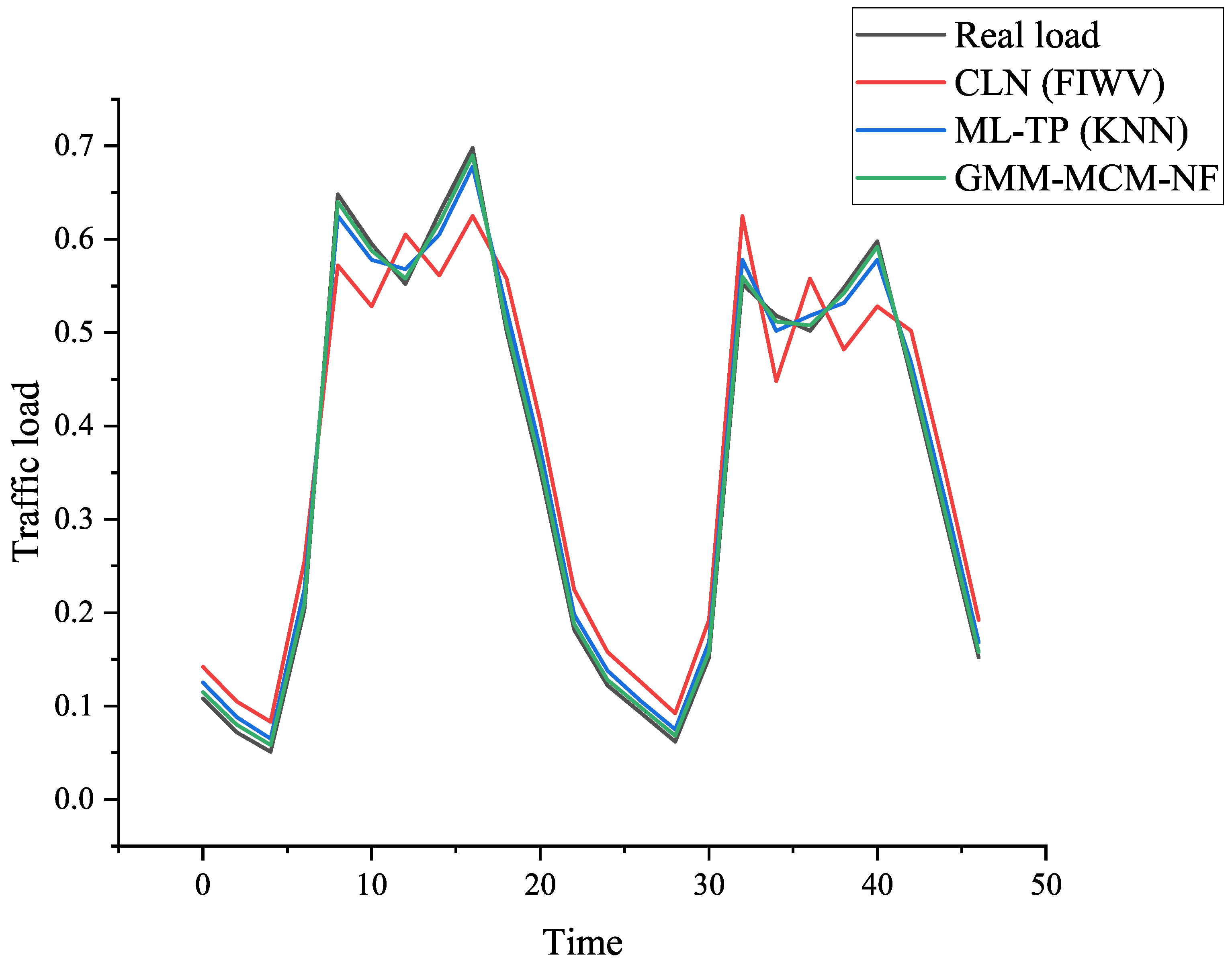

4.5.1. Overall Performance Comparison

4.5.2. Multi-Step Forecasting Performance Analysis

4.5.3. Learning Efficiency Analysis

4.5.4. Robustness Testing in Low-Sample-Size Scenarios

4.5.5. Model Component Ablation Experiments

4.5.6. Comprehensive Comparison with GNN Baselines

4.5.7. Validation of Cross-Dataset Generalisation Capability

- (1) Dataset Partitioning and Simulation Rationale

- (2) Experimental Setup

- (3) Results and Analysis

4.5.8. Hyperparameter Sensitivity Analysis

4.5.9. Model Explainability and Failure Mode Analysis

- (1) Meta-feature-component semantic mapping:

- (2) Attribution analysis for prediction failures:

- Responsibility weight analysis: Calculate the responsibility weights within the MCM correction mechanism. Each weight quantifies the contribution of component k to the current error. The primary responsibility components are then defined from these weights.

- Meta-feature anomaly detection: Calculate the Mahalanobis distance from the new task’s meta-feature to the centre of its responsible component. If this distance exceeds a preset threshold (e.g., a chosen quantile), the base station’s actual traffic pattern falls outside the existing experience range of its assigned component and constitutes an out-of-distribution (OOD) sample. This represents a primary cause of model failure.

- Feedback signal interpretation: The corrective mechanism’s specific operations provide direct diagnostic information. If the mixing coefficient of component k is significantly reduced, the component has performed poorly in recent tasks, suggesting that the “experience” it provides may be outdated or inaccurate. Conversely, if a component’s mean or representational weight is substantially updated, the system is learning a new or evolving traffic pattern.
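The anomaly check in the second bullet can be sketched as below. The component mean, covariance, and threshold are toy values chosen for illustration. The paper derives its threshold from a quantile, which is not reproduced here, so `threshold` is passed in as a hypothetical parameter.

```python
import numpy as np

def mahalanobis(x, mean, cov):
    """Mahalanobis distance from x to a Gaussian component (mean, cov)."""
    diff = np.asarray(x, dtype=float) - np.asarray(mean, dtype=float)
    return float(np.sqrt(diff @ np.linalg.solve(cov, diff)))

def is_out_of_distribution(x, mean, cov, threshold):
    """Flag a meta-feature whose distance to its component exceeds the threshold."""
    return mahalanobis(x, mean, cov) > threshold

# Toy responsible component; all values are hypothetical.
mean = np.array([0.0, 0.0])
cov = np.array([[4.0, 0.0],
                [0.0, 1.0]])
threshold = 3.0

d_in = mahalanobis([2.0, 1.0], mean, cov)    # sqrt(2^2/4 + 1^2/1) = sqrt(2)
d_out = mahalanobis([8.0, 3.0], mean, cov)   # sqrt(8^2/4 + 3^2/1) = 5
flag_in = is_out_of_distribution([2.0, 1.0], mean, cov, threshold)
flag_out = is_out_of_distribution([8.0, 3.0], mean, cov, threshold)
```

Unlike the Euclidean distance, the Mahalanobis distance scales each direction by the component's own spread, so a large deviation along a high-variance meta-feature is not automatically treated as anomalous.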

4.5.10. Uncertainty Analysis and Calibration

- (1)

- Performance Stability

- (2)

- Characteristics of Prediction Bias and Error Distribution

- (3)

- Error Calibration and Reliability Assessment

5. Conclusions

- In terms of prediction accuracy and generalisation capability, the GMM-MCM-NF model outperforms traditional deep learning models and baseline meta-learning models. On both homogeneous datasets and simulated heterogeneous scenarios spanning multiple operators, it achieves better MAE, RMSE, and R² values, confirming its stronger knowledge transfer and task adaptation capabilities.

- Regarding learning efficiency, owing to high-quality initialisation, the proposed framework converges at a markedly faster rate (requiring approximately 40–50% fewer training iterations) while maintaining robust predictive performance under extreme small-sample conditions (e.g., with only 24 h of data). This holds considerable practical value for rapid deployment and energy-efficient management of new base stations.

- Regarding model robustness, ablation experiments confirm the synergistic effect of the three core components—GMM, MCM, and NF—which collectively contribute over 10% performance improvement. Sensitivity analysis indicates the model exhibits stability near optimal parameters, reducing fine-tuning complexity in practical deployment.

- Model lightweighting and online learning mechanisms: While the current framework achieves optimal performance with large-scale meta-training datasets, its storage and computational overhead increase with the number of tasks. Future work will investigate lightweighting techniques such as model pruning and knowledge distillation, alongside exploring more efficient online meta-learning algorithms to enable real-time, low-overhead adaptation to dynamically changing traffic patterns.

- Fusion of multimodal meta-features: This paper primarily utilises frequency-domain traffic features. Future work may incorporate richer meta-features, such as POI information around base stations, real-time weather data, and social event data, to construct a multimodal meta-learning framework. This would enable more precise characterisation of task contexts and further enhance initialisation quality.

- Validation in real cross-operator and B5G/6G scenarios: While we simulated cross-operator scenarios through regional partitioning, ultimate validation requires real-world data encompassing more operators. Furthermore, with the development of B5G/6G, network slicing, and integrated air-ground-space networks, traffic patterns will exhibit novel characteristics. Applying this framework to these emerging scenarios to test and extend its applicability holds significant research value.

Author Contributions

Funding

Institutional Review Board Statement

Informed Consent Statement

Data Availability Statement

Conflicts of Interest

References

- Jiang, W. Cellular traffic prediction with machine learning: A survey. Expert Syst. Appl. 2022, 201, 117163.

- Wang, X.; Wang, Z.; Yang, K.; Song, Z.; Bian, C.; Feng, J.; Deng, C. A survey on deep learning for cellular traffic prediction. Intell. Comput. 2024, 3, 54.

- Duan, A.; Zhang, Z. Cellular traffic prediction using a hybrid neural network based on quadratic decomposition. Syst. Eng. Electron. 2025, 47, 1687–1697.

- Jiang, D.; Zhao, H.; Wang, Z. A Long-Term Cellular Network Traffic Forecasting Method Based on EWT and NeuralProphet-MLP. Mod. Inf. Technol. 2024, 8, 52–57.

- Yan, W. Research on Key Energy-Saving Technologies Based on Traffic Forecasting in Cellular Networks. Ph.D. Thesis, Zhejiang University, Hangzhou, China, 2012.

- Gu, M.C. Traffic forecasting method for EMD-LSTM networks based on noise statistics. Comput. Meas. Control 2023, 31, 21–27.

- Zang, Y.; Ni, F.; Feng, Z.; Cui, S.; Ding, Z. Wavelet transform processing for cellular traffic prediction in machine learning networks. In Proceedings of the 2015 IEEE China Summit and International Conference on Signal and Information Processing (ChinaSIP), Chengdu, China, 12–15 July 2015; pp. 458–462.

- Zhang, L. Wavelet-scale particle swarm variable step detection for multi-cluster network traffic. Sci. Technol. Bull. 2015, 31, 215–217.

- Zhang, Z.; Wu, D.; Zhang, C. Research on cellular traffic prediction based on multi-channel sparse LSTM. Comput. Sci. 2021, 48, 296–300.

- Jaffry, S.; Hasan, S.F. Cellular traffic prediction using recurrent neural networks. In Proceedings of the 2020 IEEE 5th International Symposium on Telecommunication Technologies (ISTT), Shah Alam, Malaysia, 9–11 November 2020; pp. 94–98.

- Jaffry, S. Cellular traffic prediction with recurrent neural network. arXiv 2020, arXiv:2003.02807.

- Li, W.; Jia, H.; Shen, C.; Wu, Y. LSTM-TCN base station traffic prediction algorithm based on multi-head self-attention mechanism. Mod. Electron. Technol. 2024, 47, 125–130.

- Alsaade, F.W.; Hmoud Al-Adhaileh, M. Cellular traffic prediction based on an intelligent model. Mob. Inf. Syst. 2021, 2021, 6050627.

- Azari, A.; Papapetrou, P.; Denic, S.; Peters, G. Cellular traffic prediction and classification: A comparative evaluation of LSTM and ARIMA. In Proceedings of the International Conference on Discovery Science, Split, Croatia, 28–30 October 2019; pp. 129–144.

- Kurri, V.; Raja, V.; Prakasam, P. Cellular traffic prediction on blockchain-based mobile networks using LSTM model in 4G LTE network. Peer-to-Peer Netw. Appl. 2021, 14, 1088–1105.

- Li, H.; Xu, Y.; Guo, Y. Time series forecasting based on LSTM hybrid models. Yangtze River Inf. Commun. 2022, 35, 38–40.

- Zheng, S.; Zhang, X.; Zhang, Y.; Wang, X.; Yuan, G. Low-Complexity Cellular Traffic Forecasting Method Based on Lightweight Convolutional Neural Networks. Radio Commun. Technol. 2024, 50, 921–931.

- Huang, D.; Yang, B.; Wu, Z.; Kuang, J.; Yan, Z. Spatiotemporal fully connected convolutional networks for citywide cellular traffic prediction. Comput. Eng. Appl. 2021, 57, 168–175.

- Zhang, C.; Zhang, H.; Yuan, D.; Zhang, M. Citywide cellular traffic prediction based on densely connected convolutional neural networks. IEEE Commun. Lett. 2018, 22, 1656–1659.

- Zhang, D.; Ren, J. Cellular network traffic prediction based on multi-temporal granularity spatio-temporal graph networks. Comput. Technol. Dev. 2024, 34, 24–30.

- Feng, J.; Chen, X.; Gao, R.; Zeng, M.; Li, Y. Deeptp: An end-to-end neural network for mobile cellular traffic prediction. IEEE Netw. 2018, 32, 108–115.

- Zhang, D.; Liu, L.; Xie, C.; Yang, B.; Liu, Q. Citywide cellular traffic prediction based on a hybrid spatiotemporal network. Algorithms 2020, 13, 20.

- Ni, F. Cellular network traffic prediction based on an improved wavelet-Elman neural network algorithm. Electron. Des. Eng. 2017, 25, 171–175.

- Li, Z.; Song, W.; Wang, C. Cellular network traffic prediction based on weighted multi-graph neural networks. Electron. Des. Eng. 2025, 33, 17–21.

- Guo, X.; Ma, M.; Zhou, Z.; Lu, Z.; Zhang, B. Mobile Cellular Network Traffic Forecasting Based on Spatio-Temporal Graph Convolutional Neural Networks. Sci. Ocean. Story Rev. 2023, 25–27.

- Wang, Y.; Fan, Y.; Sun, Y.; Xiong, J.; Jiang, T.; Zhou, Y.; Han, Z.; Li, Z.; Wang, Z. Research on Dynamic Base Station Switching Based on Deep Reinforcement Learning. Radio Commun. Technol. 2024, 50, 815–822.

- Fu, B.; Liu, S.; Liao, G.; Liu, Q.; Li, Z. Intelligent Decision System for Energy Conservation and Emission Reduction in 4G/5G Base Stations Based on T-GCN. Radio Commun. Technol. 2024, 50, 631–639.

- Wang, Z.; Hu, J.; Min, G.; Zhao, Z.; Chang, Z.; Wang, Z. Spatial-temporal cellular traffic prediction for 5G and beyond: A graph neural networks-based approach. IEEE Trans. Ind. Inform. 2022, 19, 5722–5731.

- Yao, Y.; Gu, B.; Su, Z.; Guizani, M. MVSTGN: A multi-view spatial-temporal graph network for cellular traffic prediction. IEEE Trans. Mob. Comput. 2021, 22, 2837–2849.

- Zhao, N.; Wu, A.; Pei, Y.; Liang, Y.C.; Niyato, D. Spatial-temporal aggregation graph convolution network for efficient mobile cellular traffic prediction. IEEE Commun. Lett. 2021, 26, 587–591.

- Zhou, X.; Zhang, Y.; Li, Z.; Wang, X.; Zhao, J.; Zhang, Z. Large-scale cellular traffic prediction based on graph convolutional networks with transfer learning. Neural Comput. Appl. 2022, 34, 5549–5559.

- Zhao, S.; Jiang, X.; Jacobson, G.; Jana, R.; Hsu, W.L.; Rustamov, R.; Talasila, M.; Aftab, S.A.; Chen, Y.; Borcea, C. Cellular network traffic prediction incorporating handover: A graph convolutional approach. In Proceedings of the 2020 17th Annual IEEE International Conference on Sensing, Communication, and Networking (SECON), Como, Italy, 22–25 June 2020; pp. 1–9.

- Liu, Q.; Li, J.; Lu, Z. ST-Tran: Spatial-temporal transformer for cellular traffic prediction. IEEE Commun. Lett. 2021, 25, 3325–3329.

- Gu, B.; Zhan, J.; Gong, S.; Liu, W.; Su, Z.; Guizani, M. A spatial-temporal transformer network for city-level cellular traffic analysis and prediction. IEEE Trans. Wirel. Commun. 2023, 22, 9412–9423.

- Wei, B. Research on Spatio-Temporal Prediction Methods for Metropolitan Cellular Traffic Based on Deep Multi-Task Learning. Ph.D. Thesis, North China University of Technology, Beijing, China, 2022.

- Zhang, J.; Sun, L. Deep learning-based network anomaly detection and intelligent traffic prediction methods. Radio Commun. Technol. 2022, 48, 81–88.

- Cai, D.; Chen, K.; Lin, Z.; Li, D.; Zhou, T.; Leung, M.F. JointSTNet: Joint pre-training for spatial-temporal traffic forecasting. IEEE Trans. Consum. Electron. 2024, 71, 6239–6252.

- Wan, Y.; Wang, N.; Liu, X.; Wang, Y.; Blaabjerg, F.; Chen, Z. Inertia-Emulation-Based Fast Frequency Response From EVs: A Multi-Level Framework With Game-Theoretic Incentives and DRL. IEEE Trans. Smart Grid 2025, in press.

- Mehri, H.; Chen, H.; Mehrpouyan, H. Cellular Traffic Prediction Using Online Prediction Algorithms. arXiv 2024, arXiv:2405.05239.

- Santos Escriche, E.; Vassaki, S.; Peters, G. A comparative study of cellular traffic prediction mechanisms. Wirel. Netw. 2023, 29, 2371–2389.

- Zhang, C.; Zhang, H.; Qiao, J.; Yuan, D.; Zhang, M. Deep transfer learning for intelligent cellular traffic prediction based on cross-domain big data. IEEE J. Sel. Areas Commun. 2019, 37, 1389–1401.

- Zhao, N.; Ye, Z.; Pei, Y.; Liang, Y.C.; Niyato, D. Spatial-temporal attention-convolution network for citywide cellular traffic prediction. IEEE Commun. Lett. 2020, 24, 2532–2536.

- Barlacchi, G.; De Nadai, M.; Larcher, R.; Casella, A.; Chitic, C.; Torrisi, G.; Antonelli, F.; Vespignani, A.; Pentland, A.; Lepri, B. A multi-source dataset of urban life in the city of Milan and the Province of Trentino. Sci. Data 2015, 2, 150055.

- Mexwell. Telecom Shanghai Dataset. 2023. Available online: https://www.kaggle.com/datasets/mexwell/telecom-shanghai-dataset (accessed on 15 January 2024).

| Researcher (Year) | Model Used | Method/Features | Principal Contributions/Conclusions |

|---|---|---|---|

| Zhang Zhengwan et al. (2021) [9] | Multi-channel sparse LSTM | The introduction of multi-source inputs and sparse connections enables the model to adaptively focus on different time points. | Enhances the model’s ability and flexibility to capture multi-source traffic information. |

| Jaffry and Hasan (2020) [10] | RNN | Validates the fundamental efficacy of RNN-based models for cellular traffic forecasting tasks. | Confirmed the potential of RNN-type models for handling such time series problems. |

| Jaffry (2020) [11] | LSTM | Conducted comparative experiments with ARIMA and FFNN. | Noted that LSTM possesses advantages in training speed, making it more suitable for scenarios requiring rapid response. |

| Li Weiyue et al. (2024) [12] | LSTM-TCN-MHSA | A hybrid model incorporating Multi-Head Self-Attention (MHSA), utilising LSTM and TCN to capture long-term/short-term and global dependencies, respectively. | Enhances spatio-temporal feature extraction by introducing an attention mechanism to handle complex dependencies. |

| Alsaade and Al-Adhaileh (2021) [13] | SES-LSTM | Data is preprocessed using Single Exponential Smoothing (SES) before prediction by LSTM. | Data smoothing preprocessing enhances LSTM prediction performance on complex traffic data. |

| Azari et al. (2019) [14] | LSTM | Compared with ARIMA models under conditions of large-scale, high-sampling-frequency data. | It was demonstrated that, when sufficient data is available, LSTM generally outperforms traditional ARIMA models. |

| Kurri et al. (2021) [15] | LSTM | Applied LSTM to blockchain-based 4G/LTE network traffic forecasting. | Demonstrated the flexibility and applicability of LSTM models across different network architectures and application scenarios. |

| Li Huidong et al. (2022) [16] | LSTM-ANFIS | Combining LSTM with Adaptive Neuro-Fuzzy Inference Systems (ANFISs) to construct a hybrid model. | Through hybrid modelling, the performance and robustness of the prediction model are further enhanced. |

| Researcher | Model Employed | Method/Core Approach | Principal Contributions/Characteristics |

|---|---|---|---|

| Zheng Songzhi et al. [17] | Lightweight CNN | Employing a parallel branch architecture to extract recent and periodic features, respectively, whilst incorporating base station density derived from K-Means clustering as an external feature. | Achieves low-complexity, high-precision forecasting whilst emphasising the effective integration of external features. |

| Huang Dongyi et al. [18] | ST-FCCNet (Spatio-Temporal Fully Connected Convolutional Network) | Designed with a special unit structure to capture spatial dependencies between any two regions within a city, while integrating external information. | Achieves modelling of extensive spatial dependencies, overcoming the limitations of local convolutions. |

| Zhang et al. [19] | Dense-Connected CNN (DenseNet) | Among the earliest to apply densely connected CNNs to city-level cellular traffic forecasting. | This validated the immense potential and effectiveness of CNN architectures in this domain. |

| Zhang Deyang et al. [20] | 1D-CNN + GAT (Graph Attention Network) | Employed a one-dimensional CNN to extract features at different temporal granularities, subsequently aggregated via a Graph Attention Network (GAT). | Effectively fuses temporal features with complex spatial (graph structure) correlations. |

| Feng et al. [21] | DeepTP (End-to-End Neural Network) | Designed an end-to-end deep learning model specifically for mobile cellular traffic forecasting. | Provides a complete end-to-end forecasting solution, simplifying the workflow. |

| Zhang et al. [22] | HSTNet (Hybrid Spatio-Temporal Network) | Extends CNNs by incorporating deformable convolutions and attention mechanisms. | Enhances the model’s adaptability and robustness to complex spatio-temporal patterns. |

| Ni Feixiang et al. [23] | k-NN + Wavelet-Elman Neural Network | Combines k-NN algorithm analysis of spatio-temporal correlations with a wavelet-Elman neural network for prediction. | Provides valuable insights for hybrid models integrating spatio-temporal information at an early stage. |

| Researcher (Year) | Model/Method Used | Core Idea/Technical Features | Principal Contributions/Application Objectives |

|---|---|---|---|

| Li Zhehui et al. (2025) [24] | Weighted Multi-Graph Convolutional Network | Constructs multi-graph relationships through distance, correlation, and attention mechanisms, while weighting and integrating features. | More finely characterises the complex multi-spatial dependencies between base stations. |

| Guo Xinyu et al. (2023) [25] | Spatio-Temporal Graph Convolutional Network (STGCN) | Designs an STGCN-based framework to simultaneously capture spatio-temporal dynamic correlations. | Improves the modelling and prediction accuracy of dynamic spatio-temporal correlations. |

| Wang Yu et al. (2024) [26]/Fu Bohan et al. (2024) [27] | T-GCN (Temporal Graph Convolutional Network) | Utilises GNN prediction outputs (e.g., future load) as inputs to dynamically control base station switching. | Applies predictive models to network energy conservation, achieving a closed-loop system of prediction and optimisation. |

| Wang et al. (2022) [28] | GNN | Applies GNNs to traffic forecasting problems in 5G and B5G networks. | Validating the efficacy and potential of GNNs in modelling future advanced network traffic. |

| Yao et al. (2021) [29] | Multi-view Spatio-temporal Graph Network (MVSTGN) | Constructing graph structures from multiple perspectives (views) to capture complex spatio-temporal dependencies. | Enhances the model’s understanding of complex spatio-temporal patterns through multi-view learning. |

| Zhao et al. (2021) [30] | Spatio-Temporal Aggregated Graph Convolutional Network | Designs novel aggregation mechanisms aimed at enhancing the efficiency of spatio-temporal information propagation and aggregation. | Achieve more efficient, computationally less costly flow prediction. |

| Zhou et al. (2022) [31] | Graph Convolutional Networks (GCN) + Transfer Learning | Employ GCNs to model spatial relationships, combined with transfer learning to address data scarcity issues. | Transferring learned knowledge to new domains to tackle the challenge of small-sample prediction in large-scale networks. |

| Zhao et al. (2020) [32] | GNN + User Handover Information | Integrates user handover behaviour information across different base stations into the graph neural network model. | Further enhances prediction accuracy by introducing mobility semantic features. |

| Structure | Number of Layers | Hidden Layer Dimensions | Dropout Rate | Learning Rate |

|---|---|---|---|---|

| Structure I | 3 | [64,64,32] | 0.2 | 0.001 |

| Structure II | 4 | [128,64] | 0.1 | |

| Algorithm | Architecture 1 (MAE/RMSE/R2) | Architecture 2 (MAE/RMSE/R2) |

|---|---|---|

| CLN (FIWV) | 0.0612 / 0.0851 / 0.6685 | 0.0621 / 0.0863 / 0.7053 |

| CLN (RSIWV) | 0.0595 / 0.0828 / 0.6721 | 0.0584 / 0.0812 / 0.7312 |

| ML-TP (KNN) | 0.0331 / 0.0489 / 0.9153 | 0.0352 / 0.0518 / 0.9076 |

| GMM-SCM | 0.0323 / 0.0476 / 0.9175 | 0.0340 / 0.0501 / 0.9102 |

| GMM-SCM-NF | 0.0315 / 0.0464 / 0.9198 | 0.0331 / 0.0488 / 0.9129 |

| GMM-MCM | 0.0311 / 0.0458 / 0.9208 | 0.0326 / 0.0481 / 0.9135 |

| GMM-MCM-NF | 0.0296 / 0.0431 / 0.9242 | 0.0311 / 0.0454 / 0.9176 |

| Time | Real Load | CLN (FIWV) Prediction | ML-TP (KNN) Prediction | ML-TP (GMM-MCM-NF) Prediction |

|---|---|---|---|---|

| 0 | 0.15 | 0.18 | 0.16 | 0.155 |

| 2 | 0.12 | 0.16 | 0.13 | 0.125 |

| 4 | 0.08 | 0.12 | 0.10 | 0.085 |

| 6 | 0.20 | 0.25 | 0.22 | 0.205 |

| 8 | 0.45 | 0.40 | 0.43 | 0.445 |

| 10 | 0.65 | 0.58 | 0.63 | 0.648 |

| 12 | 0.70 | 0.63 | 0.68 | 0.695 |

| 14 | 0.75 | 0.68 | 0.73 | 0.745 |

| 16 | 0.60 | 0.65 | 0.62 | 0.605 |

| 18 | 0.50 | 0.55 | 0.52 | 0.505 |

| 20 | 0.40 | 0.45 | 0.42 | 0.405 |

| 22 | 0.25 | 0.30 | 0.27 | 0.255 |

| 24 | 0.20 | 0.23 | 0.21 | 0.205 |

| 26 | 0.18 | 0.22 | 0.19 | 0.185 |

| 28 | 0.15 | 0.19 | 0.16 | 0.155 |

| 30 | 0.35 | 0.28 | 0.32 | 0.345 |

| 32 | 0.70 | 0.55 | 0.65 | 0.695 |

| 34 | 0.85 | 0.70 | 0.80 | 0.845 |

| 36 | 0.90 | 0.75 | 0.85 | 0.890 |

| 38 | 0.88 | 0.73 | 0.83 | 0.875 |

| 40 | 0.82 | 0.68 | 0.78 | 0.815 |

| 42 | 0.78 | 0.65 | 0.73 | 0.775 |

| 44 | 0.65 | 0.55 | 0.62 | 0.645 |

| 46 | 0.45 | 0.38 | 0.43 | 0.445 |

| Time | Real Load | CLN (FIWV) Prediction | ML-TP (KNN) Prediction | ML-TP (GMM-MCM-NF) Prediction |

|---|---|---|---|---|

| 0 | 0.108 | 0.142 | 0.125 | 0.115 |

| 2 | 0.072 | 0.105 | 0.088 | 0.080 |

| 4 | 0.051 | 0.083 | 0.065 | 0.058 |

| 6 | 0.203 | 0.255 | 0.225 | 0.210 |

| 8 | 0.648 | 0.572 | 0.625 | 0.640 |

| 10 | 0.595 | 0.528 | 0.578 | 0.588 |

| 12 | 0.552 | 0.605 | 0.568 | 0.558 |

| 14 | 0.628 | 0.561 | 0.605 | 0.618 |

| 16 | 0.698 | 0.625 | 0.678 | 0.690 |

| 18 | 0.502 | 0.558 | 0.525 | 0.508 |

| 20 | 0.352 | 0.405 | 0.375 | 0.360 |

| 22 | 0.182 | 0.225 | 0.198 | 0.188 |

| 24 | 0.122 | 0.158 | 0.138 | 0.128 |

| 26 | 0.092 | 0.125 | 0.105 | 0.098 |

| 28 | 0.062 | 0.092 | 0.075 | 0.068 |

| 30 | 0.152 | 0.192 | 0.168 | 0.158 |

| 32 | 0.552 | 0.625 | 0.578 | 0.560 |

| 34 | 0.518 | 0.448 | 0.502 | 0.512 |

| 36 | 0.502 | 0.558 | 0.518 | 0.508 |

| 38 | 0.548 | 0.482 | 0.532 | 0.542 |

| 40 | 0.598 | 0.528 | 0.578 | 0.592 |

| 42 | 0.452 | 0.502 | 0.468 | 0.458 |

| 44 | 0.302 | 0.352 | 0.322 | 0.308 |

| 46 | 0.152 | 0.192 | 0.168 | 0.158 |

| Model | 1 h | 6 h | 12 h |

|---|---|---|---|

| CLN (FIWV) | 0.0643 | 0.0789 | 0.0855 |

| ML-TP (KNN) | 0.0346 | 0.0451 | 0.0513 |

| GMM-MCM-NF | 0.0310 | 0.0395 | 0.0442 |

| Epoch | CLN (FIWV) | CLN (RSIWV) | ML-TP (KNN) | GMM-MCM | GMM-MCM-NF |

|---|---|---|---|---|---|

| 0 | 0.138 | 0.142 | 0.051 | 0.046 | 0.041 |

| 2 | 0.126 | 0.128 | 0.044 | 0.040 | 0.035 |

| 5 | 0.103 | 0.101 | 0.038 | 0.035 | 0.032 |

| 10 | 0.085 | 0.082 | 0.036 | 0.033 | 0.031 |

| 15 | 0.074 | 0.071 | 0.035 | 0.032 | 0.0305 |

| 20 | 0.068 | 0.065 | 0.0345 | 0.032 | 0.0305 |

| 30 | 0.064 | 0.062 | 0.034 | 0.0318 | 0.0306 |

| 40 | 0.063 | 0.061 | 0.0338 | 0.0316 | 0.0307 |

| 60 | 0.062 | 0.0605 | 0.0336 | 0.0315 | 0.0308 |

| Number of Samples | CLN (FIWV) | ML-TP (KNN) | GMM-MCM-NF |

|---|---|---|---|

| 12 | 0.152/0.207 | 0.086/0.119 | 0.071/0.097 |

| 24 | 0.145/0.198 | 0.081/0.113 | 0.059/0.081 |

| 48 | 0.112/0.154 | 0.065/0.091 | 0.046/0.063 |

| 96 | 0.085/0.117 | 0.050/0.069 | 0.038/0.052 |

| 168 | 0.061/0.085 | 0.033/0.049 | 0.030/0.043 |

| 336 | 0.046/0.064 | 0.028/0.040 | 0.024/0.034 |

| 672 | 0.035/0.050 | 0.025/0.036 | 0.021/0.030 |

| Model Variants | MAE | RMSE | R2 | Number of Convergence Iterations | Relative Improvement |

|---|---|---|---|---|---|

| Base (KNN) | 0.0331 | 0.0489 | 0.915 | 22 | – |

| +GMM | 0.0318 | 0.0469 | 0.918 | 19 | +3.9% |

| +GMM+MCM | 0.0306 | 0.0451 | 0.921 | 16 | +7.6% |

| +GMM+MCM+NF | 0.0296 | 0.0431 | 0.924 | 13 | +10.6% |
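The "Relative Improvement" column in the ablation table above appears to be the relative MAE reduction over the KNN baseline. This is an inference from the reported numbers, not a stated definition. The short check below recomputes the column from the MAE values in the table.

```python
baseline_mae = 0.0331  # Base (KNN) MAE from the ablation table
variant_mae = {
    "+GMM": 0.0318,
    "+GMM+MCM": 0.0306,
    "+GMM+MCM+NF": 0.0296,
}

def relative_improvement(baseline, value):
    """Percentage reduction of an error metric relative to the baseline."""
    return (baseline - value) / baseline * 100.0

improvements = {
    name: round(relative_improvement(baseline_mae, value), 1)
    for name, value in variant_mae.items()
}
```

The recomputed values agree with the table (3.9%, 7.6%, and 10.6%), so each added component contributes a further MAE reduction of roughly three to four percentage points.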

| Model | MAE (Structure 1) | R2 (Structure 1) | Training Time (s) | MAE (Structure 2) | R2 (Structure 2) | Training Time (s) |

|---|---|---|---|---|---|---|

| STGCN | 0.0381 | 0.892 | 285 | 0.0395 | 0.885 | 312 |

| GWNET | 0.0368 | 0.901 | 320 | 0.0372 | 0.894 | 345 |

| GTS | 0.0352 | 0.908 | 298 | 0.0361 | 0.899 | 325 |

| AGCRN | 0.0345 | 0.910 | 265 | 0.0358 | 0.902 | 290 |

| ML-TP (KNN) | 0.0346 | 0.910 | 45 | 0.0368 | 0.902 | 52 |

| GMM-MCM-NF | 0.0310 | 0.918 | 38 | 0.0325 | 0.912 | 42 |

| Model | MAE (Shanghai) | R2 (Shanghai) |

|---|---|---|

| CLN-RSIWV | 0.1328 | 0.501 |

| CLN-FIWV | 0.1246 | 0.561 |

| ML-TP (KNN) | 0.1082 | 0.631 |

| dmTP (DNN) | 0.1019 | 0.664 |

| GMM-MCM-NF | 0.0943 | 0.708 |

| Model | Region-A (Intra-Region Performance) | Region-B (Inter-Region Performance) |

|---|---|---|

| CLN (FIWV) | 0.1215 / 0.1632 / 0.645 | 0.1458 / 0.1951 / 0.521 |

| CLN (RSIWV) | 0.1158 / 0.1554 / 0.678 | 0.1382 / 0.1853 / 0.558 |

| ML-TP (KNN) | 0.0983 / 0.1356 / 0.722 | 0.1156 / 0.1592 / 0.635 |

| GMM-MCM-NF | 0.0862 / 0.1189 / 0.768 | 0.0988 / 0.1365 / 0.695 |

| Parameter Combination | | | | Stability Analysis |

|---|---|---|---|---|

| | 0.0335 | 0.0331 | 0.0338 | Performance fluctuations are relatively minor but overall suboptimal |

| | 0.0318 | 0.0310 | 0.0319 | Optimal region, robust performance |

| | 0.0329 | 0.0323 | 0.0332 | Performance degradation, unstable training |

| Component ID | Dominant Frequency Pattern | Inferred Semantic Label | Typical Traffic Characteristics |

|---|---|---|---|

| 1 | Daily cycle (strong), weekly cycle (moderate) | Commercial district | Significant daytime traffic peaks on weekdays, with flatter patterns at weekends |

| 2 | Daily cycle (moderate), uniform across all cycles | Residential Area | Pronounced morning and evening peaks, with relatively high night-time traffic |

| 3 | Weekly cycle (strong), daily cycle (weak) | Entertainment District | Weekend traffic surges, weekday traffic low |

| ... | ... | ... | ... |

| Metric | Mean | Standard Deviation | Coefficient of Variation (%) |

|---|---|---|---|

| MAE | 0.0296 | 0.0005 | 1.69 |

| RMSE | 0.0431 | 0.0007 | 1.62 |

| MAPE (%) | 7.82 | 0.14 | 1.79 |

| R2 | 0.9242 | 0.0015 | 0.16 |

© 2025 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (https://creativecommons.org/licenses/by/4.0/).

Liu, X.; Li, Y.; Zhu, S.; Su, Q.; Li, C. A Meta-Learning-Based Framework for Cellular Traffic Forecasting. Appl. Sci. 2025, 15, 11616. https://doi.org/10.3390/app152111616