1. Introduction

Maintaining a healthy diet and balanced intake of essential nutrients plays a crucial role in modern lifestyles [1]. Unhealthy eating habits can lead to a variety of health issues, such as diabetes and cardiovascular diseases. By analyzing users’ dietary patterns, personalized nutrition advice and health guidance can be provided to help individuals achieve their health goals [2]. For example, a dietary health management system can identify food types, analyze nutritional components, calculate the quantities of each component in the food, and provide users with appropriate portion sizes to manage their dietary health. The foundation of this process is food image recognition. Therefore, food image recognition technology has become an important research topic in personalized health management.

Food image recognition is challenged by significant intra-class variations and high inter-class similarities [3]. First, high intra-class variability refers to significant differences in appearance among foods of the same category, which can be attributed to diverse preparation methods, ingredient combinations, presentation styles, and shooting angles. As shown in Figure 1, all three images depict chicken salad, but due to different preparation methods and presentation styles, they exhibit visual differences. Such variability may cause the recognition system to incorrectly classify them into different food categories, thereby affecting classification accuracy. Second, high inter-class similarity refers to the fact that foods from different categories are visually very similar. The three images in Figure 2 depict carrot soup, squash soup, and tomato soup, respectively. Although they belong to different categories, their texture and form are highly similar, making them easily misclassified as the same food category, thereby reducing recognition accuracy.

Food image recognition techniques fall into two categories: conventional approaches that rely on engineered visual descriptors, and modern data-driven models that learn hierarchical representations directly from data. Kong et al. [4] generated food features using the Scale-Invariant Feature Transform and Difference of Gaussians, and classified them using a k-nearest neighbors algorithm. Tammachat et al. [5] extracted visual features using a combination of color histograms, Bag-of-Features, and Segmentation-Based Fractal Texture Analysis, and subsequently classified them using a Support Vector Machine (SVM) model. Pouladzadeh et al. [6] extracted features related to color, texture, size, and shape, and used SVM to process images. Anthimopoulos et al. [7] reported that expanding the visual vocabulary size enhances classification performance, while a linear SVM outperforms other classifiers in food recognition tasks. Kawano et al. [8] encoded features using Fisher Vectors (FV), thereby improving image recognition speed. However, these methods generally exhibit limited generalization capabilities when dealing with diverse appearances [9].

Advances in deep learning have established Convolutional Neural Networks (CNNs) as a solution for food recognition applications. CNNs extract features layer by layer through the local receptive fields of convolution kernels, while pooling layers reduce spatial dimensions and gradually expand the receptive field [10]. This architecture is well-suited for capturing detailed characteristics such as ingredient textures and edge contours. Bossard et al. [11] constructed the Food-101 dataset and employed AlexNet for dish classification. Yanai et al. [12] used a CNN model for multi-category dish recognition. Zhu et al. [13] fine-tuned AlexNet for fruit and vegetable identification. Ciocca et al. [14] applied CNNs to recognize different states of food. Hou et al. [15] proposed HybridNet to fuse coarse and fine-grained features for fruit and vegetable classification. Hassannejad et al. [16] adopted Inception-V3 for dish recognition. McAllister et al. [17] combined ResNet-152 and GoogleNet for multi-feature fusion-based recognition. Metwalli et al. [18] proposed DenseFood, integrating DenseNet with a center loss function to enhance fine-grained recognition performance. Patino et al. [19] designed Fruit-AlexNet for tropical fruit recognition. Katarzyna et al. [20] proposed a dual-channel CNN for identifying retail fruit images. CNNs have powerful local feature extraction capabilities and spatial invariance, so they can accurately identify the same food category even when the appearance varies due to different ingredient combinations and plating methods [21]. However, because they lack the ability to model global context, they often struggle to accurately distinguish between different food categories with similar appearances, which degrades classification performance [22].

To compensate for the global modeling deficiency of CNNs, researchers have explored Vision Transformer (ViT). Each image in ViT is segmented into equally sized patches that act as input tokens. By computing attention weights among image patches, ViT models contextual interactions across diverse regions [23], leveraging transformer-based mechanisms to extract extended contextual dependencies and enhance holistic representation learning for food imagery. Cai et al. [24] explored the use of ViT under semi-supervised learning settings, enhancing model performance by utilizing large amounts of unlabeled data. Khan et al. [25] applied ViT to tackle the challenge of recognizing cooking states in dishes, such as slicing or doneness. When identifying various types of food that are visually similar in terms of color, shape or structure, ViT’s global modeling capability can analyze the overall layout and high-level semantic patterns of the image, achieving accurate classification [25]. However, its ability to perceive local details is relatively weak. When recognizing visually diverse instances within the same food category, it is difficult to accurately extract discriminative information, thereby reducing the recognition effect in complex food images [26].

Current methods mainly leverage attention mechanisms to enhance the model’s focus on salient image areas, leading to more robust categorization outcomes. Recently, the integration of various techniques such as self-attention, cross-modal attention, and multi-head attention has not only improved the ability to capture local details but also enhanced global modeling capabilities. He et al. [27] incorporated a spatial attention mechanism into CNNs, combining feature extraction with focus on salient regions. The model significantly outperformed baseline methods without attention on both self-built datasets and Food-101, demonstrating that attention mechanisms enhance the model’s responsiveness to crucial visual cues. Chen et al. [28] constructed a multi-layer attention feature fusion network to unify features from different CNN intermediate layers, enhancing performance in food image classification, and achieving superior results compared to conventional CNN architectures. Alahmari et al. [29] utilized attention mechanisms and mask fusion strategies to achieve food type and state recognition.

Although clustering itself does not directly provide supervised labels, it can indirectly enhance model performance in self-supervised settings by guiding feature aggregation and separation. Cai et al. [30] used text descriptions automatically generated from images to guide image clustering, combining visual and linguistic information for clustering construction, thereby improving semantic consistency of clusters on general food images. He et al. [31] proposed a Color-Cluster Attention Network, combining Kohonen clustering with self-attention mechanisms to iteratively refine features, successfully enhancing intra-class consistency and inter-class boundary discrimination.

Pretrained visual transformers demonstrate strong general visual understanding in food recognition. These models benefit from large data pretraining and transfer successfully to downstream food tasks with high precision. Gao et al. [32] employed the ViT-B/16 architecture initialized with ImageNet pre-training weights, followed by the integration of a feature enhancement module. Pre-training provides robust image representation capabilities, enabling the model to achieve excellent image recognition performance even on medium-sized and small-scale food datasets. Liu et al. [33] fine-tuned the large pre-trained ViT-B/16 model, retaining only useful tokens, and added Dense Adapters to help the network maintain good performance with fewer tokens, thereby reducing computational load while maintaining accuracy.

In food image recognition tasks, the diversity of cooking methods, ingredient types, and plating styles brings about significant visual differences for the same type of food. At the same time, different food categories often exhibit strong similarities in color, shape, or texture. This visual confusion increases the complexity of feature learning and makes food recognition more difficult. In response to the shortcomings of current approaches, we present FFFNet, a Food Feature Fusion Network designed to improve the discriminative capability of complex food images through a multi-head cross-attention mechanism and self-supervised clustering strategies. First, the multi-head cross-attention mechanism integrates the local detail capturing capability of CNN with the global modeling capability of ViT [34], achieving effective feature fusion and dynamically focusing on discriminative food regions such as ingredients and textures. This enables the model to accurately extract key discriminative information even when identifying food types with high visual confusion. Additionally, a self-supervised clustering module is introduced, which generates pseudo-labels based on feature space distributions. To optimize the distribution of the feature space, a clustering loss function based on Kullback–Leibler (KL) divergence is introduced, which maximizes similarity between features and their corresponding cluster centers while minimizing similarity to non-corresponding ones. This promotes the aggregation of similar sample features and the separation of dissimilar sample features, thereby effectively enhancing the discriminative and structural properties of feature representation. This mechanism helps alleviate recognition difficulties caused by visual confusion [35,36,37]. Finally, the supervised classification loss is integrated with a self-supervised clustering loss to construct a joint optimization objective, guiding the learning process toward acquiring highly discriminative and well-organized representations during classification tasks [38]. Empirical evaluations demonstrate that FFFNet outperforms existing methods on ISIA Food-500 and ETHZ Food-101. Our contributions include the following:

A feature fusion approach utilizing multi-head cross-attention is introduced, integrating the local detail extraction strength of CNNs with the global modeling capacity of ViTs to enhance the model’s feature representation capabilities. This method is particularly effective in capturing key discriminative features in complex scenes with visual confusion.

A self-supervised clustering constraint strategy is introduced to guide features to form a structured distribution in the latent space, enhancing intra-class compactness and inter-class separability and thereby alleviating the issue of category confusion.

The proposed method achieves accurate classification on the ISIA Food-500 and ETHZ Food-101 datasets, performing better than existing methods.

2. Materials and Methods

2.1. Architecture

Figure 3 presents the overall framework of FFFNet. The network architecture incorporates the following key components: a CNN block, a ViT block, a multi-head cross-attention module, a self-supervised clustering module, and a supervised classification module. The CNN block extracts fine-grained local characteristics of food items in images, referred to below as the local features. The ViT block captures global structural patterns of food items in images, referred to below as the global features. The multi-head cross-attention module integrates the local and global features by leveraging multiple attention heads across diverse dimensions, enabling the model to capture richer and more comprehensive representations. This enhances feature representation and enables the model to dynamically focus on discriminative food regions, allowing it to attend to and extract key informative cues. Within a self-supervised learning framework, the clustering module leverages cluster centers derived from clustering algorithms as pseudo-labels. By enhancing the alignment of features with their assigned cluster centers and reducing similarity to unrelated ones, the model learns more discriminative representations, promoting tighter grouping of similar instances and greater separation between distinct classes. The loss function of this module is the KL divergence. Supervised classification, guided by labels, explicitly optimizes the model’s prediction capability and improves the final classification accuracy; its loss function is the cross-entropy loss. The output of this module is taken as the final classification prediction. Throughout training, the loss function combines clustering loss and classification loss. Clustering loss compensates for the shortcomings of classification loss in modeling feature structures, whereas classification loss corrects any semantic deviations that may arise during the clustering process.

Specifically, input images are first preprocessed before being fed into both the CNN and ViT blocks for feature extraction. Local features obtained from the images through the CNN block are input as queries (Q) into the multi-head cross-attention module. Global features obtained from the images through the ViT block are input as keys (K) and values (V) into the same module. After fusion by the multi-head cross-attention module, the fused features are obtained. The self-supervised clustering module calculates the similarity between the fused features and the learned cluster centers, generates a predicted probability distribution, and computes the KL divergence with the target distribution to obtain the self-supervised clustering loss. The supervised classification module generates category prediction probabilities from the fused features and uses these probabilities together with the true labels Lab to calculate the cross-entropy loss, obtaining the supervised classification loss. The total loss function aggregates the clustering loss and the classification loss using two fixed weights, and serves as the training objective for backpropagation. Additionally, the model’s performance is assessed using Top-1 and Top-5 accuracy metrics derived from the classification outputs.
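The joint objective described above can be illustrated with a minimal NumPy sketch. Several details here are assumptions rather than the exact formulation of FFFNet: the soft assignment is taken as a softmax over cosine similarities to the cluster centers, the target distribution is the sharpened form used in Deep Embedded Clustering, and the weights `w_cls` and `w_clu` as well as all function names are hypothetical.

```python
import numpy as np

def softmax(x, axis=-1):
    x = x - x.max(axis=axis, keepdims=True)  # subtract the max for numerical stability
    e = np.exp(x)
    return e / e.sum(axis=axis, keepdims=True)

def cluster_assignments(features, centers):
    """Soft assignment q[i, k] from cosine similarity to each center (assumed form)."""
    f = features / np.linalg.norm(features, axis=1, keepdims=True)
    c = centers / np.linalg.norm(centers, axis=1, keepdims=True)
    return softmax(f @ c.T, axis=1)

def target_distribution(q):
    """Sharpened target p, proportional to q^2 / cluster frequency (DEC-style assumption)."""
    w = q ** 2 / q.sum(axis=0, keepdims=True)
    return w / w.sum(axis=1, keepdims=True)

def kl_clustering_loss(p, q, eps=1e-12):
    """Mean KL(p || q) over the batch."""
    return float(np.mean(np.sum(p * (np.log(p + eps) - np.log(q + eps)), axis=1)))

def cross_entropy(logits, labels):
    probs = softmax(logits, axis=1)
    return float(-np.mean(np.log(probs[np.arange(len(labels)), labels] + 1e-12)))

def joint_loss(features, centers, logits, labels, w_cls=1.0, w_clu=0.1):
    """Weighted sum of classification and clustering losses (weights are hypothetical)."""
    q = cluster_assignments(features, centers)
    p = target_distribution(q)
    return w_cls * cross_entropy(logits, labels) + w_clu * kl_clustering_loss(p, q)

rng = np.random.default_rng(0)
features = rng.normal(size=(8, 16))   # stand-in for fused features
centers = rng.normal(size=(4, 16))    # stand-in for learned cluster centers
logits = rng.normal(size=(8, 5))      # stand-in for classifier outputs
labels = rng.integers(0, 5, size=8)
total = joint_loss(features, centers, logits, labels)
```

In this sketch the clustering term vanishes when the predicted assignment already equals the target, so it only contributes a gradient signal while clusters are still diffuse.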

The algorithm is as follows (Algorithm 1):

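Algorithm 1: Algorithm of FFFNet

    input: images Img, labels Lab, number of training epochs E, batch size B, model M
    output: Top-1 accuracy, Top-5 accuracy

    Initialize all weights
    for i ∈ {1,…,E} do
        Divide {Img, Lab} into minibatches of size B
        for j ∈ minibatches do
            extract local features with the CNN block and global features with the ViT block
            fuse the local and global features with the multi-head cross-attention module
            compute the self-supervised clustering loss and the supervised classification loss
            combine both losses with fixed weights into the total loss
            backward()
            update all weights
        end
    end
    Compute Top-1 and Top-5 Accuracy
    return Top-1 accuracy, Top-5 accuracy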
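As an illustration of the evaluation step, the following sketch computes Top-k accuracy from per-class scores. The function name `topk_accuracy` and the toy scores are hypothetical; with only three classes in the toy data, Top-2 stands in for Top-5.

```python
def topk_accuracy(scores, labels, k):
    """Fraction of samples whose true label is among the k highest-scoring classes."""
    hits = 0
    for row, label in zip(scores, labels):
        ranked = sorted(range(len(row)), key=lambda c: row[c], reverse=True)
        if label in ranked[:k]:
            hits += 1
    return hits / len(labels)

scores = [
    [0.10, 0.60, 0.30],  # highest score: class 1
    [0.50, 0.20, 0.30],  # highest score: class 0
    [0.20, 0.30, 0.50],  # highest score: class 2
    [0.40, 0.35, 0.25],  # highest score: class 0
]
labels = [1, 2, 2, 1]

top1 = topk_accuracy(scores, labels, k=1)  # 0.5: samples 1 and 3 are correct
top2 = topk_accuracy(scores, labels, k=2)  # 1.0: every true label is in the top two
```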

2.2. Image Data

The evaluation was conducted on publicly accessible benchmark datasets for food recognition: ISIA Food-500 [39], ETHZ Food-101 [11] and UEC Food256 [40].

Comprising food samples from diverse geographical regions, the ISIA Food-500 dataset encompasses a wide variety of culinary traditions, such as Chinese dishes (e.g., fried dough sticks, meat-stuffed buns, and fried noodles with sauce) and Western fare (e.g., bruschetta, caramel pudding, and steak). Containing 399,726 images, the dataset has an average of around 800 samples per category. Following the official configuration, we selected 60% of the images from each category for the training set, 10% for the validation set, and 30% for the test set.

The ETHZ Food-101 dataset includes 101 common food categories, covering both Chinese and Western cuisine, such as hamburgers, sushi, pizza, cake, and French fries, with strong category diversity and visual complexity. The dataset contains a total of 101,000 images, with 1000 images per food category. To maintain consistency with the official ETHZ Food-101 settings, we kept the test set unchanged in our experiments and continued to use the official 250 test images per category. The remaining 750 images per category are randomly divided, with 650 assigned to training and 100 reserved for validation. This division scheme ensures stability and comparability of the test set while introducing a validation set to support reasonable model parameter tuning and early stopping, ultimately improving the model’s capacity to generalize.
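The per-category division of the official training images can be sketched as follows. The helper name `split_train_val`, the fixed seed, and the image identifiers are hypothetical; only the 650/100 proportions come from the text.

```python
import random

def split_train_val(train_ids, n_val=100, seed=0):
    """Shuffle one category's official training images and hold out n_val for validation."""
    ids = list(train_ids)
    random.Random(seed).shuffle(ids)  # seeded so the split is reproducible
    return ids[n_val:], ids[:n_val]

# Hypothetical identifiers for the 750 official training images of one category.
official_train = [f"apple_pie/{i}" for i in range(750)]
train, val = split_train_val(official_train)

# Dataset-level totals implied by the per-category counts.
n_categories = 101
totals = {
    "train": n_categories * len(train),  # 65,650
    "val": n_categories * len(val),      # 10,100
    "test": n_categories * 250,          # 25,250
}
```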

UEC Food256 comprises 256 categories of commonly encountered foods, spanning Chinese cuisine, Western meals and Japanese dishes, thereby exhibiting both categorical diversity and cross-cultural characteristics. The dataset contains a total of 25,088 images, which, in our experiments, were partitioned for each category into training, validation, and test sets in a 6:1:3 ratio.

2.3. Transfer Learning

Effective deep learning models are often built upon large-scale datasets, reflecting the method’s high demand for training data. However, the ISIA Food-500 dataset contains only 500 food categories with approximately 400,000 images, which is modest compared with the datasets on which modern backbones are pre-trained. Additionally, training a model from scratch under limited computational resources demands significant time. Moreover, with limited data, models are prone to overfitting, which can lead to poor generalization performance.

To tackle these challenges, transfer learning has become a widely used approach, improving model performance in the target task through the adaptation of representations acquired in a different but related domain [41].

The source domain provides a large amount of training data. For example, the ImageNet dataset contains about 20,000 categories and 14 million images, and the LVD-142M dataset, which is assembled from publicly available datasets such as ImageNet, Google-Landmarks, and web datasets, totals 142 million images. Models are typically trained on the source dataset for thousands of hours using multiple graphics cards. Reusing such pre-trained weights therefore reduces the training time and data required on the target task and mitigates overfitting.

2.4. Image Preprocessing Module

The ISIA Food-500 dataset encompasses 500 distinct food categories, totaling 399,726 images, but it is imbalanced across categories. For example, Sauteed_mushrooms has 2029 images, while Green_bean_casserole has only 501. Class imbalance impacts model training, leading to poor performance on certain categories and degrading overall recognition accuracy [42].

To address class imbalance in food image datasets, we apply data augmentation to increase sample size within minority classes, with the goal of promoting a more uniform distribution across all categories. This balancing strategy not only enables the model to learn effectively from diverse categories, but also avoids overfitting to dominant classes at the expense of minority ones and enhances overall classification performance [43]. Furthermore, data augmentation generates diverse training samples through techniques such as brightness variation, image flipping, and deformation, simulating different viewpoints and environmental conditions. This supports better model robustness in practical environments, reduces overfitting, and enhances generalization performance [44].

Specifically, we generate diverse training samples through operations such as brightness reduction, brightness increase, horizontal flipping, compression, and stretching, as shown in Figure 4. These operations simulate different environmental brightness levels and viewing angles in real-world environments, thereby improving the model’s robustness for food images in complex environments. To balance category distribution in the training set while maintaining sufficient diversity of original samples, the images from all food categories in the ISIA Food-500 dataset were allocated across training, validation, and test subsets in a 6:1:3 proportion. Each category in the dataset averages approximately 800 images, so after division according to this ratio the training subset of each category contains approximately 480 images on average. For categories with fewer original images, the training subset may have fewer than 480 images. Data augmentation is applied to expand these training subsets, ensuring that each category reaches a uniform size of 480 images. To preserve evaluation integrity, only original images are used in the validation and test subsets, avoiding artificial distortions and ensuring unbiased and authentic model assessment.
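The balancing arithmetic can be made concrete with a short sketch. It assumes the training share is rounded down and that the shortfall is spread evenly over the five operations; the function name `augmentation_plan` and the operation labels are hypothetical, and neither assumption is stated in the text.

```python
TARGET_TRAIN_SIZE = 480
AUGMENTATIONS = ["darken", "brighten", "hflip", "compress", "stretch"]

def augmentation_plan(n_images, target=TARGET_TRAIN_SIZE):
    """Number of augmented images to create per operation for one category."""
    n_train = n_images * 6 // 10           # 60% training share, rounded down (assumption)
    deficit = max(0, target - n_train)     # images still missing to reach the target
    base, extra = divmod(deficit, len(AUGMENTATIONS))
    # Spread the deficit evenly; the first `extra` operations receive one more image.
    return {op: base + (1 if i < extra else 0) for i, op in enumerate(AUGMENTATIONS)}

# Green_bean_casserole: 501 images -> 300 for training -> 180 augmented images needed.
small_category = augmentation_plan(501)
# Sauteed_mushrooms: 2029 images -> 1217 for training -> no augmentation needed.
large_category = augmentation_plan(2029)
```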

When fine-tuning a pre-trained model, applying the same data normalization as in pre-training improves training efficiency. A randomly selected region of each image is cropped to a 224 × 224 RGB image and normalized per channel using a given mean and standard deviation. The mean values are [0.485, 0.456, 0.406] and the standard deviations are [0.229, 0.224, 0.225], which were obtained by calculating the mean and standard deviation of the pixel values of the entire ImageNet dataset [45].
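A minimal sketch of the per-channel normalization, assuming 8-bit pixel values are first scaled to [0, 1] before the mean and standard deviation are applied (the usual convention for these ImageNet statistics). The function name `normalize_pixel` is hypothetical.

```python
MEAN = (0.485, 0.456, 0.406)  # per-channel ImageNet means (R, G, B)
STD = (0.229, 0.224, 0.225)   # per-channel ImageNet standard deviations

def normalize_pixel(rgb):
    """Scale an 8-bit RGB pixel to [0, 1], then standardize each channel."""
    return tuple((value / 255.0 - m) / s for value, m, s in zip(rgb, MEAN, STD))

white = normalize_pixel((255, 255, 255))
black = normalize_pixel((0, 0, 0))
```

After normalization, a pure white pixel maps to roughly 2.2 to 2.6 per channel and a pure black pixel to roughly -2.1 to -1.8, which is the input range the pre-trained weights were fitted on.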

2.5. CNN Block

CNNs construct local connection patterns on images by using small convolutional kernels such as 3 × 3 or 5 × 5. Each neuron perceives only a small area of the input image, thereby focusing on local details in the image, such as edge textures, granular structures, and local color changes. For certain food categories, such as braised pork, the same type of sample may exhibit differences in appearance due to variations in cooking methods, sauce concentration, ingredient combinations, and shooting angles. In such cases, critical discriminative information is often hidden in local details, such as the gloss of the meat, the state of sauce adhesion, or the texture of charred edges. The high sensitivity of CNNs to fine-grained features enables them to better capture fine-grained differences between similar samples, effectively mitigating the interference caused by visual differences among similar samples.

This block uses TransXNet [46] and loads a model pre-trained on ImageNet. TransXNet incorporates two key architectural modules, the Dual Dynamic Token Mixer (D-Mixer) and the Multi-Scale Feed-Forward Network (MS-FFN), which demonstrate advantages in food image recognition tasks characterized by large intra-class variation.

Convolution kernel weights are dynamically generated by the D-Mixer module from local input features, allowing the receptive field to be adaptively adjusted. In the case of “braised pork” dishes, for instance, image samples may vary drastically in appearance: some pieces appear bright red with caramelized edges, while others are darker, fattier, and differ in size, orientation, or lighting conditions. D-Mixer adapts its receptive field size according to the local structure of each image, accurately extracting textural and structural features across different samples. For example, in images where the pork is well-seared, D-Mixer can focus on high-frequency edge textures; in samples with thicker fat layers or blurred textures, the receptive field can expand to capture more contextual information. This dynamic perception capability greatly enhances the model’s adaptability to intra-class diversity.

In contrast, ResNet50 [47] uses filters of fixed size, such as 1 × 1 and 3 × 3, and its receptive field remains static after training. When dealing with the complex visual variations within the “braised pork” category, a fixed receptive field often fails to capture key discriminative features effectively. For instance, when the meat chunks are large or have indistinct edges, ResNet50’s fixed-size convolutions may not cover critical regions sufficiently, causing the model to miss important cues and leading to decreased classification accuracy.

The MS-FFN module in TransXNet enhances multi-scale feature extraction by applying parallel depthwise separable convolutions using varied kernel sizes across one layer. In images of braised pork, samples may contain fine caramelized textures on the surface, glossy fat reflections, and coarse color contrasts between the meat and background. MS-FFN enables the model to jointly capture local details (e.g., seared meat edges) and global structural features (e.g., spatial distribution of meat and fat), resulting in richer and more discriminative feature representations at a fine-grained level.

By comparison, ResNet50’s multi-scale capabilities rely on stacking fixed convolutional layers, and features at different scales are only integrated at deeper stages of the network. Unlike MS-FFN, it lacks a mechanism for directly aligning and fusing multi-scale features within the same layer. This structural rigidity limits its ability to jointly capture local and global visual cues in complex “braised pork” images—especially when faced with intricate fat textures, varied lighting, or unconventional plating—resulting in misclassification or intra-class confusion.

In summary, TransXNet, through its D-Mixer and MS-FFN modules, improves the model’s capacity to manage significant within-class variation in food images such as braised pork. In contrast, the fixed-structure ResNet50 is limited in its capacity for adaptive receptive field adjustment and multi-scale feature fusion, making it less suitable for complex scenarios. Therefore, choosing TransXNet as the backbone network offers stronger performance in recognizing food categories with rich intra-class diversity.

This component extracts the final-layer feature $F_{c} \in \mathbb{R}^{B \times C_{c} \times H \times W}$ from TransXNet, where B is the batch size, $C_{c}$ indicates the channel count, and H, W represent the spatial dimensions of the feature map, each set to 7. We reshape $F_{c}$ to match the feature dimension of the ViT feature, enabling more comprehensive modeling of feature interdependencies and enhanced feature representation within the multi-head cross-attention fusion process. Specifically, a 2D convolutional layer (Conv2d) is applied to $F_{c}$, where the input channel number is $C_{c}$, the output channel number is D, with D set to 1024, and the kernel size is 1 × 1. This process yields the desired feature $F'_{c}$. This transformation is formulated as:

$$F'_{c} = \mathrm{Conv2d}_{1 \times 1}(F_{c})$$
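To make the shape bookkeeping concrete, the sketch below applies a 1 × 1 convolution as a per-position linear map over channels. The channel counts are deliberately small placeholders (the text uses D = 1024), and the final flattening into a sequence of 49 tokens is an assumption about how the result is passed to the attention module.

```python
import numpy as np

def conv1x1(x, weight, bias):
    """1x1 convolution: x (B, C, H, W), weight (D, C), bias (D,) -> (B, D, H, W)."""
    return np.einsum("bchw,dc->bdhw", x, weight) + bias[None, :, None, None]

B, C, H, W, D = 2, 8, 7, 7, 16  # C and D are small placeholder values
rng = np.random.default_rng(0)
x = rng.normal(size=(B, C, H, W))
weight = rng.normal(size=(D, C))
bias = rng.normal(size=D)

projected = conv1x1(x, weight, bias)  # (B, D, 7, 7)

# Assumed token layout for attention: one token per spatial position.
tokens = projected.reshape(B, D, H * W).transpose(0, 2, 1)  # (B, 49, D)
```

Because the kernel is 1 × 1, each spatial position is projected independently, so the 7 × 7 layout is preserved and only the channel dimension changes.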

2.6. ViT Block

ViT uses multi-head attention to capture contextual relationships across the whole image, allowing focus on overall structure and semantic hierarchy during encoding. This allows it to extract discriminative global representations, such as the relative positions of main ingredients and side dishes, overall color distribution, and cooking style characteristics. For certain food categories, such as carrot soup and squash soup, these two foods are highly similar in color, texture, and shape, making it challenging for traditional models based on local details to effectively distinguish between them. However, ViT can model across multiple levels, from overall layout and color distribution to semantic patterns, identifying global features such as the thickness of the soup and the distribution of surface ingredients. This modeling approach enables ViT to better capture semantic differences between categories, making it particularly suitable for handling food categories with highly similar visual characteristics.

This block uses DINOv2 [48] and loads a model pre-trained on LVD-142M. In food recognition tasks, high inter-class similarity, such as between different types of soups, can hinder accurate model classification. The crux of this challenge lies in the model’s need not only to capture basic visual features but also to understand the implicit relationships between ingredients and their dynamic patterns of change. The design differences between DINOv2 and ViT-B/16 [23] precisely reflect their respective abilities to address these demands.

The core advantage of DINOv2 stems from its self-supervised pretraining framework. By integrating contrastive learning and denoising training on the large-scale unlabeled dataset LVD-142M, this framework enables the model to transcend the limitations of predefined class labels and learn cross-domain semantic associations among ingredients. For instance, in the high inter-class similarity scenario of “Japanese chawanmushi” and “Chinese steamed egg,” DINOv2 leverages the co-occurrence pattern of “white egg custard base + surface toppings” captured during pretraining to accurately localize key discriminative cues. Although the two dishes differ in preparation methods—leading to variations in topping distribution and coagulation state—DINOv2 can identify decisive differences such as the scattered arrangement of mushrooms and goji berries in chawanmushi versus the clustered layout of scallions and shrimp in steamed egg. This transfer of prior knowledge based on “visual co-occurrence patterns” enables the model to detect defining distinctions even in cases of visually similar but semantically different samples—for example, differences in the distribution pattern of toppings or the texture at the edges of the custard.

In contrast, ViT-B/16, which relies on supervised training, is effective at learning general visual features but exhibits clear limitations in high inter-class similarity food scenarios. Its training is guided by fixed category labels such as “steamed egg,” “soup,” or “staple food,” which do not force the model to distinguish nuanced intra-class differences such as those between chawanmushi and steamed egg. As a result, in ViT’s learned feature space, class representations for “steamed egg” variants tend to overlap. The supervised paradigm, which focuses on broad class boundaries rather than fine-grained relational understanding, can cause the model to be misled by shared visual traits (e.g., pale custard base, small topping granules), making it difficult to capture decisive cues such as the topping types and their arrangement.

Moreover, DINOv2, through its multi-view consistency learning mechanism under the teacher-student framework, guides the student network to learn stable and discriminative semantic representations from images at different scales and viewpoints in the self-supervised training stage. This mechanism encourages the model to maintain feature consistency across views even when the input image undergoes slight deformations or local perturbations, thereby enhancing its sensitivity to fine-grained variations in ingredients (e.g., positional shifts in mushroom pieces on chawanmushi or the density distribution of scallions on steamed egg). By aligning features across multiple views, DINOv2 constructs robust representations that enable it to accurately capture decisive details and delineate clear semantic boundaries—even in high-similarity scenarios.

In contrast, ViT-B/16 employs a static self-attention mechanism and a supervised learning paradigm. Its feature learning is constrained by single-label guidance and a focus on global representations, making it less adaptable to local variations. In food recognition settings involving visually similar yet detail-critical categories—such as chawanmushi and steamed egg—ViT-B/16 is more prone to confusion from shared visual regions (e.g., the pale custard base or granular topping distribution), often resulting in blurred class boundaries.

In summary, DINOv2, through its self-supervised pretraining strategy and multi-view consistency mechanism under a teacher-student architecture, demonstrates significant advantages in food recognition tasks involving visually similar but semantically distinct classes. In contrast, ViT-B/16, constrained by static supervision and global modeling, struggles to capture local variations and fine details, limiting its performance in fine-grained classification. Therefore, when identifying food images with high visual similarity, DINOv2 is a more adaptive and discriminative backbone model.

This part extracts the last-layer feature from DINOv2, a tensor whose dimensions are the batch size B, the sequence length N = 256, and the ViT feature dimension. To better capture the complex relationships between features and enhance feature representation during the multi-head cross-attention fusion process, we adjust the shape of this feature to match the feature dimension of the CNN feature. Specifically, a linear layer (Linear) is applied to the DINOv2 feature, where the input size is the ViT feature dimension and the output size is D, with D set to 1024. This process yields the desired feature, whose dimensions are B, N, and D. The transformation is a single linear projection applied along the feature dimension.

2.7. Multi-Head Cross-Attention Module

Currently, there are many mechanisms for feature fusion, such as multi-head cross-attention, efficient attention variants, state space models, and xLSTM [49]. In the task of image feature fusion, the local texture features extracted by CNNs usually exist in a regular spatial structure, while the global semantics extracted by ViT are represented in a flattened token sequence. These two types of features differ in terms of representational structure, spatial encoding, and modeling paradigm. Multi-head cross-attention directly models inputs from different sources by explicitly constructing associations among Query, Key, and Value, thereby achieving spatial matching and semantic fusion.

Efficient attention variants, such as Performer [50], approximate the original Softmax attention with a linear inner-product form to address the high computational complexity of traditional attention, thereby reducing time and space complexity. However, this approximation risks losing critical semantic content and can therefore underperform multi-head cross-attention. For future applications on resource-constrained mobile devices, adapting this method could be a promising direction.

State space models (SSMs) rely on input-driven updates across time steps and implicit state evolution. Modern SSMs represented by Mamba [51] adopt a discretized design, and their modeling process still mainly focuses on implicit state evolution, lacking the ability to directly model explicit interactions between different positions. This characteristic may lead to insufficient spatial alignment capabilities in the task of image feature fusion, making it difficult to accurately capture the regional correspondence between heterogeneous features.

xLSTM uses gating mechanisms to accumulate state information across sequences, which is suitable for extracting deep semantics from continuous features from the same source. However, in the task of image feature fusion, the structures and semantics of the outputs from CNNs and ViTs are quite different. The incremental accumulation approach of xLSTM makes it difficult to simultaneously align the spatial structures of the two heterogeneous feature sources, resulting in inferior feature fusion performance compared to cross-attention.

Moreover, both state space models and xLSTM operate on one-dimensional sequences, requiring the feature representations to be flattened into sequential inputs. This flattening process may disrupt the original two-dimensional spatial structure of images, causing a degradation in spatial positional encoding and complicating the preservation of spatial relationships among local regions. Therefore, these two methods are not considered suitable for heterogeneous feature fusion in the current study.

The CNN-derived feature contains local detail information from the image, such as the texture, edges, and shape of food items. Using these local features as queries (Q) directs attention toward critical details during computation, thereby enhancing the model’s ability to recognize food items.

The ViT-derived feature captures the image’s holistic visual characteristics, allowing the model to understand the overall structure and contextual information. Using these global features as keys (K) and values (V) ensures that the model fully considers the global structure during attention computation, thus better understanding the position of food items within the entire image and their relationships with other objects.

Multi-head cross-attention computes attention weights among Q, K, and V through multiple attention heads, each attending to distinct dimensions or semantic aspects. This architecture supports richer feature representation and enhances understanding of complex images. Moreover, the mechanism effectively fuses local representations from the CNN with global contextual information from the ViT. The CNN-derived fine-grained details guide the ViT in better interpreting structural nuances within the global context, while the ViT-extracted holistic semantics help the CNN better understand the broader meaning of local patterns. As a result, the model can simultaneously leverage both local details and global structures, leading to a more comprehensive feature representation that better describes the characteristics of food images. Therefore, the multi-head cross-attention mechanism can boost the network’s extraction proficiency, especially in complex scenes with visual confusion, effectively capturing key discriminative features such as the spatial structure of main and secondary ingredients, local textures, and overall color schemes, thereby improving overall recognition performance.

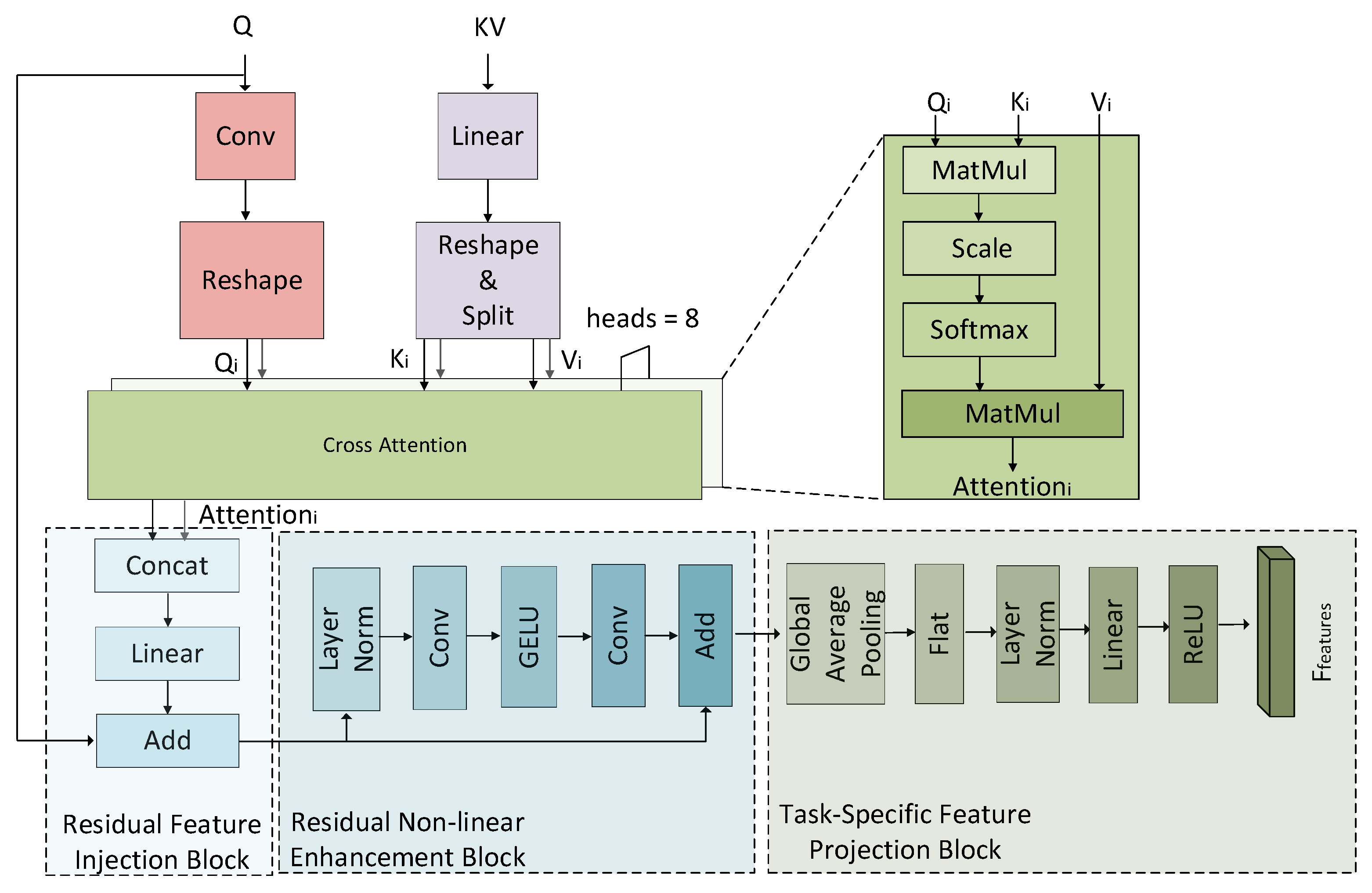

The framework of the multi-head cross-attention is shown in Figure 5. It consists of the cross-attention mechanism with multiple heads, the residual feature injection block, the residual non-linear enhancement block, and the task-specific feature projection block.

The cross-attention mechanism with multiple heads enhances the model’s perception of different feature patterns by mapping inputs into multiple distinct subspaces for parallel computation. In FFFNet, the number of attention heads is set to 8 (heads = 8). Specifically, Q has shape B × D × H × W and is derived from the CNN feature, where B denotes batch size, D denotes feature channel count, H denotes feature map height, and W denotes feature map width. KV has shape B × N × D and is derived from the ViT feature, where B denotes batch size, N denotes sequence length, and D denotes feature dimension.

We apply a 2D convolutional layer (Conv2d) to Q, where the input channel number is D, the output channel number is also D, and the kernel size is 1 × 1. The resulting feature is then reshaped into a multi-head format, yielding the final Query tensor.

We apply a linear layer to KV, where the input channel number is D and the output channel number is 2D. After adjusting the shape, we obtain a combined Key-Value tensor. Finally, this tensor is split into Key (K) and Value (V), which have identical shapes.

For each head i, the output is computed using the attention mechanism formula as follows:

$$\mathrm{head}_i = \mathrm{Softmax}\left(\frac{Q_i K_i^{\top}}{\sqrt{d_k}}\right) V_i$$

where $Q_i$ represents the Query matrix for head i, $K_i$ represents the Key matrix for head i, $V_i$ represents the Value matrix for head i, and $d_k$ is the dimension of the key vectors, whose value is D/heads.

Matrix multiplication of $Q_i$ and $K_i^{\top}$ yields a measure of their similarity. This result is then divided by $\sqrt{d_k}$ to prevent the attention scores from becoming excessively large. Softmax normalizes the scaled attention scores, producing a probability distribution in which each row gives the attention weights of one query element over all key elements. The weighted value features are derived in the final stage by combining the value matrix with the normalized alignment scores.
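As an illustration of the per-head computation and the subsequent concatenation of heads, the following is a minimal pure-Python sketch of multi-head cross-attention on toy sizes (D = 4, heads = 2). It is not the FFFNet implementation: the learned Conv2d and linear projections are omitted, all function names are hypothetical, and the toy queries merely stand in for flattened CNN positions while the keys and values stand in for ViT tokens.

```python
import math

def softmax(row):
    """Numerically stable softmax over a list of scores."""
    m = max(row)
    exps = [math.exp(v - m) for v in row]
    total = sum(exps)
    return [e / total for e in exps]

def attention_head(Q, K, V):
    """Scaled dot-product attention for one head.

    Q holds one vector per query position; K and V hold one vector per
    key position. Returns the attended outputs and the attention weights.
    """
    d_k = len(K[0])
    outputs, weights = [], []
    for q in Q:
        # Similarity of this query with every key, scaled by sqrt(d_k).
        scores = [sum(a * b for a, b in zip(q, k)) / math.sqrt(d_k) for k in K]
        w = softmax(scores)
        weights.append(w)
        # Weighted sum of the value vectors.
        outputs.append([sum(w_j * v[c] for w_j, v in zip(w, V))
                        for c in range(len(V[0]))])
    return outputs, weights

def split_heads(X, heads):
    """Split each D-dimensional vector into `heads` chunks of size D // heads."""
    d = len(X[0]) // heads
    return [[x[h * d:(h + 1) * d] for x in X] for h in range(heads)]

def multi_head_cross_attention(Q, K, V, heads):
    """Run attention per head, then concatenate head outputs per query."""
    per_head = [attention_head(q, k, v)[0]
                for q, k, v in zip(split_heads(Q, heads),
                                   split_heads(K, heads),
                                   split_heads(V, heads))]
    return [[value for h in range(heads) for value in per_head[h][i]]
            for i in range(len(Q))]

# Toy inputs (hypothetical values): 3 query positions, 2 key/value tokens, D = 4.
Q = [[1.0, 0.0, 0.0, 1.0], [0.0, 1.0, 1.0, 0.0], [1.0, 1.0, 0.0, 0.0]]
K = [[1.0, 0.0, 0.0, 1.0], [0.0, 1.0, 1.0, 0.0]]
V = [[1.0, 2.0, 3.0, 4.0], [5.0, 6.0, 7.0, 8.0]]
fused = multi_head_cross_attention(Q, K, V, heads=2)
```

In the third toy query, both keys score equally within each head, so the output is the plain average of the two value vectors. This makes the role of the Softmax weights easy to check by hand.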

First, the output features from multiple attention heads are concatenated to form a unified representation. Then, the concatenated features are transformed into the final output dimension through a linear transformation. This linear layer further integrates information from all heads, producing a richer feature representation, where both the input and output dimensions are D. Finally, the feature tensor is reshaped back into image form. The corresponding formulas are as follows:

In order to capture more complex patterns at deeper levels of the network while retaining key information from the input features, we introduce a residual feature injection block to make the model more stable during training and to address issues such as overfitting or vanishing gradients.

Since Q contains local detail information, which is crucial for identifying food categories in images, adding the reshaped attention output to Q enhances its discriminative power, enabling more accurate recognition of food categories. The corresponding formula is as follows:

The residual non-linear enhancement block uses non-linear changes to further enhance the expressive power of features. Specifically, after adding the reshaped attention output to Q, we apply Layer Normalization (LayerNorm) to the resulting features to stabilize the training process. Layer Normalization normalizes the summed features across the feature dimension, which helps in maintaining a stable gradient flow and accelerating convergence. The formula is as follows:

After normalizing the features, a non-linear mapping is introduced to further enhance the feature representation. This transformation is implemented as a sequence consisting of a convolutional layer, a GELU activation, and a second convolutional layer applied to the normalized input. Specifically, the first convolutional layer has an input channel dimension of D and an output channel dimension of 4D, with a kernel size of 1 × 1. The GELU activation function incorporates non-linear characteristics within the model. The second convolutional layer reduces the feature dimension back from 4D to D, also using a 1 × 1 kernel. Finally, the output of this transformation is added back through a skip connection, which helps preserve information flow and stabilize training.

The GELU activation function provides a smoother non-linearity, which can theoretically help mitigate issues related to gradient degradation during training. The corresponding formula is as follows:

$$\mathrm{GELU}(x) = x \, \Phi(x)$$

where Φ(x) gives the cumulative probability up to x for a standard normal distribution, given by:

$$\Phi(x) = \frac{1}{2}\left[1 + \mathrm{erf}\left(\frac{x}{\sqrt{2}}\right)\right]$$

Here, erf refers to the error function.
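The exact (erf-based) form of GELU can be checked numerically with a few lines of standard-library Python. This is only a sketch for scalar inputs; the function names `phi` and `gelu` are illustrative and not taken from the FFFNet code.

```python
import math

def phi(x):
    """Cumulative distribution function of the standard normal distribution."""
    return 0.5 * (1.0 + math.erf(x / math.sqrt(2.0)))

def gelu(x):
    """Exact GELU: the input scaled by the probability mass below it."""
    return x * phi(x)

# A few sample points: large positive inputs pass almost unchanged,
# large negative inputs are pushed toward zero.
samples = {x: gelu(x) for x in (-3.0, -1.0, 0.0, 1.0, 3.0)}
```

Unlike ReLU, the function is smooth around zero and slightly negative for small negative inputs, which is the behavior the text refers to as a smoother non-linearity.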

In order to further enhance feature expressiveness and adapt to classification and clustering requirements, we introduce a task-specific feature projection block. Specifically, the processing of the enhanced feature involves a series of operations: global average pooling (GAP), flattening (Flat), layer normalization, linear transformation, and ReLU activation. In this sequence, the global average pooling layer produces an output of size [1, 1], effectively reducing the spatial dimensions while preserving channel-wise information. The linear layer operates on input features of size D and projects them to an output space of dimension D/2.

ReLU is a simple non-linear operation that maps negative inputs to zero and preserves positive ones without modification. This straightforward form makes it computationally efficient and contributes to its excellent performance in many deep learning tasks. The ReLU function is defined by:

$$\mathrm{ReLU}(x) = \max(0, x)$$
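The sequence of operations in the task-specific feature projection block (global average pooling, flattening, layer normalization, linear projection from D to D/2, ReLU) can be sketched in plain Python on a toy feature map. The sketch assumes a layer normalization without learnable scale and shift, uses hand-picked hypothetical weights, and works on a single sample with D = 4 rather than D = 1024.

```python
import math

def global_average_pool(fmap):
    """fmap is indexed [channel][row][col]; returns one mean per channel."""
    return [sum(sum(row) for row in ch) / (len(ch) * len(ch[0])) for ch in fmap]

def layer_norm(x, eps=1e-5):
    """Normalize a vector to zero mean and unit variance (no affine terms)."""
    mean = sum(x) / len(x)
    var = sum((v - mean) ** 2 for v in x) / len(x)
    return [(v - mean) / math.sqrt(var + eps) for v in x]

def linear(x, weight, bias):
    """weight has one row per output unit."""
    return [sum(w * v for w, v in zip(row, x)) + b for row, b in zip(weight, bias)]

def relu(x):
    return [max(0.0, v) for v in x]

def project(fmap, weight, bias):
    """GAP -> flatten (implicit: GAP already returns a flat vector) -> LN -> Linear -> ReLU."""
    return relu(linear(layer_norm(global_average_pool(fmap)), weight, bias))

# Toy feature map with D = 4 channels of size 2 x 2 (hypothetical values).
fmap = [[[1.0, 1.0], [1.0, 1.0]],
        [[2.0, 2.0], [2.0, 2.0]],
        [[3.0, 3.0], [3.0, 3.0]],
        [[4.0, 4.0], [4.0, 4.0]]]
# Hypothetical projection from D = 4 to D / 2 = 2 that picks the first and last channel.
weight = [[1.0, 0.0, 0.0, 0.0],
          [0.0, 0.0, 0.0, 1.0]]
bias = [0.0, 0.0]
pooled = global_average_pool(fmap)
projected = project(fmap, weight, bias)
```

The first output unit receives a negative normalized value and is clipped to zero by ReLU, while the second stays positive.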

2.8. Self-Supervised Clustering Module

A clustering module based on self-supervised learning was proposed to boost the model’s performance in distinguishing ambiguous visual patterns. This module constrains the spatial distribution of features by dynamically generating target distributions, while leveraging self-supervised learning to provide additional learning signals.

By utilizing the intrinsic structure of unlabeled data, self-supervised learning enables more informative feature learning in models.

First, structured feature distribution is achieved by optimizing the KL divergence, encouraging features to form organized clusters. Samples from the same category converge toward a shared cluster center, while those from different categories are separated into distinct clusters. This structured distribution enhances intra-class compactness and inter-class separability [52]. Second, robustness is improved. Self-supervised learning enhances the model’s generalization ability against data noise and visual differences by utilizing the underlying structure of unlabeled data, making it particularly suitable for handling visually confusing scenarios in food images [53].

The cluster centers are initialized as learnable parameters, which are continuously updated during training to better represent the data distribution. Specifically, we assign a randomly initialized vector to each cluster center [54]. The number of cluster centers is fixed at 500 to align with the number of food categories, and the dimensionality of each center is set to match the size of the input feature vector.

To ensure that the computed similarity is based on direction rather than magnitude and to make the similarity computation more stable [55], we perform vector normalization on both the input features and the clustering centers, obtaining a normalized version of each.

The raw similarity between each sample and all cluster centers is obtained by computing the dot product between the normalized features and the normalized cluster centers. This similarity matrix is then divided by a temperature coefficient (τ) to regulate the concentration of the soft assignment distribution, resulting in a scaled similarity matrix.

The smaller the temperature value τ, the sharper the distribution, and the more distinct the model’s discrimination of features. In experiments, τ is set to 0.1.

A Softmax operator is applied to the scaled similarity matrix to generate the soft assignment matrix, which denotes the assignment probabilities of samples across all cluster centers.

We compute the mean of the soft assignment matrix along the batch dimension to derive a global class distribution, referred to as the pseudo-target distribution, Target. This approach guides the learning of a balanced and stable clustering distribution, thus improving within-class cohesion and between-class distinction. In this average, the sum runs over the total number of samples, and each term is the probability distribution vector predicted for sample i by the self-supervised clustering module.

The loss of this module is based on the KL divergence. By measuring the KL divergence from the predicted distribution to the target distribution, the model continuously optimizes both the feature representations and the cluster centers. This enables samples to be assigned to more discriminative clusters, thereby facilitating effective self-supervised feature learning.

The smaller the KL divergence, the more closely the model’s predicted distribution aligns with the target distribution, thus facilitating the formation of a structured distribution in the feature space [56].
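The clustering pipeline described in this subsection (normalization, temperature-scaled similarity, Softmax assignment, batch-mean target, KL-based loss) can be sketched as follows. This is a toy illustration under stated assumptions rather than the FFFNet code: L2 normalization is assumed for the vector normalization step, the KL direction KL(Target ‖ prediction) averaged over the batch is an assumption, the cluster centers are fixed instead of learned, and all function names are hypothetical.

```python
import math

def l2_normalize(v):
    """Scale a vector to unit length so dot products depend only on direction."""
    norm = math.sqrt(sum(x * x for x in v))
    return [x / norm for x in v]

def softmax(row):
    m = max(row)
    exps = [math.exp(v - m) for v in row]
    total = sum(exps)
    return [e / total for e in exps]

def soft_assignments(features, centers, tau=0.1):
    """Assignment probabilities of each sample over all cluster centers."""
    f = [l2_normalize(x) for x in features]
    c = [l2_normalize(x) for x in centers]
    return [softmax([sum(a * b for a, b in zip(x, k)) / tau for k in c]) for x in f]

def batch_target(P):
    """Pseudo-target distribution: mean of the assignments over the batch."""
    n = len(P)
    return [sum(p[j] for p in P) / n for j in range(len(P[0]))]

def kl_loss(P, target, eps=1e-12):
    """Mean over samples of KL(target || p_i); the direction is an assumption."""
    total = 0.0
    for p in P:
        total += sum(t * math.log((t + eps) / (p_j + eps)) for t, p_j in zip(target, p))
    return total / len(P)

# Two toy samples and two toy cluster centers (hypothetical values).
features = [[2.0, 0.0], [0.0, 3.0]]
centers = [[1.0, 0.0], [0.0, 1.0]]
P = soft_assignments(features, centers, tau=0.1)
target = batch_target(P)
loss = kl_loss(P, target)
```

With τ = 0.1 each toy sample is assigned almost entirely to its nearest center, which illustrates how a small temperature sharpens the assignment distribution.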

2.9. Supervised Classification Module

Supervised classification, guided by ground-truth labels, directly enhances predictive performance and boosts final accuracy.

The supervised classification module applies a linear mapping to the input features to generate predicted logits for each class. The linear layer’s input dimension equals the dimension of the input features, and its output dimension equals the number of food categories, which is set to 500.

The predicted logits are transformed into a predicted probability distribution through application of the Softmax operator. Each element in this predicted distribution indicates the likelihood that a given instance belongs to the corresponding class. By applying the Softmax transformation, the model produces a normalized output vector with positive entries that sum to one, forming a valid probability distribution over the classes. This distribution serves as the final prediction output. For a logit vector $z$ over $C$ classes, the Softmax transformation is defined by the equation:

$$\mathrm{Softmax}(z)_j = \frac{e^{z_j}}{\sum_{c=1}^{C} e^{z_c}}$$

The supervised classification module employs cross-entropy loss to enhance model performance by leveraging labeled data. The loss is computed over all samples: for each sample i, it compares the one-hot encoded ground-truth label vector with the predicted probability distribution vector produced by the supervised classification module.
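A minimal sketch of Softmax followed by cross-entropy is given here for illustration. It assumes the loss is averaged over the samples and represents each one-hot label by its class index, in which case the cross-entropy for a sample reduces to the negative log-probability of the true class. The function names and toy logits are hypothetical.

```python
import math

def softmax(logits):
    """Convert logits into a probability distribution (numerically stable)."""
    m = max(logits)
    exps = [math.exp(z - m) for z in logits]
    total = sum(exps)
    return [e / total for e in exps]

def cross_entropy(logits_batch, labels):
    """Mean cross-entropy; labels are class indices standing in for one-hot vectors."""
    total = 0.0
    for logits, y in zip(logits_batch, labels):
        probs = softmax(logits)
        total += -math.log(probs[y])
    return total / len(labels)

# Toy batch: two samples, three classes (hypothetical logits).
logits_batch = [[2.0, 0.5, -1.0], [0.1, 0.2, 3.0]]
labels = [0, 2]
loss = cross_entropy(logits_batch, labels)
```

A confident correct prediction yields a loss close to zero, whereas placing the same confidence on a wrong class is penalized heavily.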

In the validation and testing stages, the predicted class index is determined by selecting the argmax of the output probabilities for each input sample. If this index matches the true label, it is counted as a correct Top-1 prediction. Similarly, the five class indices with the highest probabilities are retrieved. If the true label is among these five, it is counted as a correct Top-5 prediction. After aggregating over the total number of samples, Top-1 accuracy and Top-5 accuracy are computed as the number of correct Top-1 and Top-5 predictions, respectively, divided by the total number of samples.
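The Top-k counting rule can be written compactly as below. This is a sketch with a hypothetical function name and toy probabilities; to keep the example small it uses four classes and k = 2 in place of k = 5.

```python
def topk_accuracy(probs, labels, k):
    """Fraction of samples whose true label is among the k most probable classes."""
    correct = 0
    for p, y in zip(probs, labels):
        # Class indices sorted by descending probability, truncated to k entries.
        topk = sorted(range(len(p)), key=lambda j: p[j], reverse=True)[:k]
        if y in topk:
            correct += 1
    return correct / len(labels)

# Toy predictions for three samples over four classes (hypothetical values).
probs = [[0.10, 0.60, 0.20, 0.10],
         [0.50, 0.30, 0.10, 0.10],
         [0.30, 0.25, 0.40, 0.05]]
labels = [1, 1, 0]
top1 = topk_accuracy(probs, labels, k=1)
top2 = topk_accuracy(probs, labels, k=2)
```

Only the first sample is correct under the Top-1 rule, while all three true labels fall within the two most probable classes.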

2.10. Loss Function

The final loss combines the self-supervised clustering loss and the supervised classification loss as a weighted sum, in which each loss term is multiplied by its own loss weight. By optimizing the self-supervised clustering loss, the model learns feature embeddings where within-class variance is reduced and between-class differences are amplified, resulting in more structured and discriminative representations. However, due to the lack of explicit label supervision, self-supervised clustering methods may fail to accurately align with the true semantic categories. This issue is particularly pronounced when handling semantically ambiguous boundaries or inter-class similarity, where pseudo-labels generated based on feature similarity are prone to errors, potentially leading to error accumulation and propagation during training. To strengthen the learning signal, we incorporate a supervised classification loss during training and jointly optimize it with the self-supervised clustering loss. In this framework, pseudo-labels serve as soft guidance for auxiliary supervision, and appropriate loss weights are set to balance the contributions of the two objectives. This design preserves the benefits of self-supervised clustering in modeling structural relationships within the feature space, while retaining semantic anchors from ground-truth labels to correct potential deviations during clustering, thus improving overall discriminability and semantic consistency.

A grid search was conducted over different weight combinations of the self-supervised clustering loss and the supervised classification loss to evaluate their impact on model performance and select the optimal configuration. Specifically, we tested multiple combinations of the two loss weight parameters. When both weights were set to 0.5, the model achieved optimal performance on the test set. This result indicates that, with equal weights on the two loss terms, the model can effectively combine the structural modeling capability of the clustering loss with the semantic guidance capability of the classification loss, ultimately achieving more discriminative and generalizable feature learning.

3. Results

3.1. Experimental Environment

The experimental platform was configured with an Intel(R) Xeon(R) Gold 6226R CPU and an NVIDIA GeForce RTX 3090 GPU, running the Ubuntu 18.04.6 operating system, with Python 3.9.19, PyTorch 2.0.0, and CUDA 11.7 installed for deep learning computations.

We use a learning rate of 0.0001, the Adam optimizer, a batch size of 20, and train for 20 epochs, with each epoch taking approximately 2 h. TransXNet is used as the CNN backbone and DINOv2 as the ViT backbone. In the loss function, both loss weights are set to 0.5. Top-1 accuracy (Top-1 Acc.) and Top-5 accuracy (Top-5 Acc.) serve as the evaluation metrics.

3.2. Experimental Results

We evaluate FFFNet on three food image datasets: ISIA Food-500, ETHZ Food-101, and UEC Food256. All trials were conducted using identical hardware and software environments to maintain consistency and comparability of the experimental settings. We conducted comparisons with a set of representative image classification techniques, including VGG-16 [45], ResNet-18 [47], TransXNet [46], PVT [57], DeiT [58], DINOv2 [48], SGLANet [39], EHFR-Net [59], and CAN [31]. Among these, VGG-16, ResNet-18, and TransXNet are CNN-based architectures; PVT, DeiT, and DINOv2 belong to the ViT family; SGLANet employs a hybrid attention scheme combining global and local contexts; EHFR-Net integrates both CNN and ViT modules; and CAN adopts a clustering-driven strategy. The principal evaluation metrics are Top-1 and Top-5 accuracies, with each experiment independently repeated three times. For every metric, the standard deviation (Std.) and the 95% confidence interval (95% CI) are reported to provide a comprehensive assessment of the model’s stability and reliability. To further verify whether the performance improvements of FFFNet are statistically significant, we conducted independent t-tests on the results of the three independent runs, comparing FFFNet with each baseline method in terms of Top-1 and Top-5 accuracy and computing the corresponding p-values.
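To make the reporting procedure concrete, the sketch below computes the mean, sample standard deviation, and 95% confidence interval for three runs, together with an independent two-sample t statistic. Several points are assumptions: the accuracy values are made-up placeholders and not results from this study, the confidence interval is assumed to be t-based, the t-test is assumed to be the pooled-variance (Student) form, and significance is judged by comparing the statistic with tabulated critical values instead of computing an exact p-value.

```python
import math
import statistics

# Two-sided 95% critical values of the t distribution (standard table values).
T_CRIT_DF2 = 4.303  # 2 degrees of freedom: confidence interval from 3 runs
T_CRIT_DF4 = 2.776  # 4 degrees of freedom: two groups of 3 runs each

def summarize(runs):
    """Mean, sample standard deviation, and 95% CI for exactly three runs."""
    mean = statistics.mean(runs)
    std = statistics.stdev(runs)
    half_width = T_CRIT_DF2 * std / math.sqrt(len(runs))
    return mean, std, (mean - half_width, mean + half_width)

def t_statistic(a, b):
    """Pooled-variance t statistic for two groups of equal size."""
    n = len(a)
    pooled_var = (statistics.variance(a) + statistics.variance(b)) / 2.0
    return (statistics.mean(a) - statistics.mean(b)) / math.sqrt(pooled_var * 2.0 / n)

# Placeholder Top-1 accuracies (%) for two methods; NOT values from the paper.
method_a = [65.2, 65.3, 65.4]
method_b = [63.0, 63.1, 63.2]

mean_a, std_a, ci_a = summarize(method_a)
t_value = t_statistic(method_a, method_b)
significant = abs(t_value) > T_CRIT_DF4  # equivalent to p < 0.05, two-sided
```

With only three runs per method the critical values are large, so a difference is flagged as significant only when it clearly exceeds the run-to-run spread.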

The results are shown in Table 1. FFFNet was evaluated against three representative baselines: the classical CNN framework ResNet-18, the ViT-based PVT, and the current state-of-the-art SGLANet. On ISIA Food-500, FFFNet yielded Top-1/Top-5 accuracy gains of 15.07%/13.66%, 11.83%/10.33%, and 2.18%/1.59% over ResNet-18, PVT, and SGLANet, respectively. Corresponding improvements on ETHZ Food-101 reached 11.56%/6.28%, 7.68%/2.82%, and 1.32%/0.84%, while on UEC Food256 the increases were 12.38%/8.91%, 9.12%/5.45%, and 1.59%/1.07%. All reported differences are statistically significant at p < 0.05, indicating that FFFNet achieves not only higher accuracy, but also consistent and dependable performance across datasets.

These performance gains arise from effectively fusing CNN’s proficiency in local detail representation with ViT’s capacity for holistic scene understanding, enabled by cross-attention across multiple heads. This integration supports rich feature learning that simultaneously resolves fine structures and global layout patterns in food images, improving the identification of distinctive features in challenging food classes. In contrast, EHFR-Net merely fuses CNN and ViT outputs through summation and concatenation, resulting in shallow feature interactions. Consequently, FFFNet surpasses EHFR-Net with Top-1/Top-5 accuracy gains of 8.02%/3.33% on ISIA Food-500, 4.14%/2.16% on ETHZ Food-101, and 7.82%/2.65% on UEC Food256, with all improvements statistically significant (p < 0.05).

Furthermore, this work introduces a self-supervised clustering module that constructs pseudo-labels based on sample similarities and optimizes feature representations and cluster centers using KL divergence loss. This design not only enhances within-class cohesion and between-class distinction but also facilitates the learning of more discriminative features without requiring additional labeled data. In contrast, CAN uses clustering merely as an auxiliary feature-processing step and does not explicitly enforce the geometric structure of the feature space. Accordingly, FFFNet achieves Top-1/Top-5 accuracy improvements of 9.29%/5.04% on ISIA Food-500, 5.25%/2.24% on ETHZ Food-101, and 8.77%/3.78% on UEC Food256, with all gains statistically significant (p < 0.05).

In summary, the architectural designs collectively enhance the robustness and generalization capability of the model in food image recognition tasks.

The training loss and validation accuracy curves of FFFNet are shown in Figure 6. The validation accuracy includes Top-1 and Top-5 accuracy. Figure 6a illustrates FFFNet’s performance on ISIA Food-500, Figure 6b on ETHZ Food-101, and Figure 6c on UEC Food256. On all three datasets, the training loss of FFFNet decreases rapidly within the first 10 epochs and then stabilizes gradually, indicating that the model converges quickly and the optimization process is stable. The Top-1 and Top-5 accuracy rates continue to rise within the first 10 epochs. Additionally, Top-5 accuracy approaches 90% on ISIA Food-500, and exceeds 90% on both ETHZ Food-101 and UEC Food256, reflecting the model’s robustness in distinguishing fine-grained food categories. These results indicate that FFFNet can achieve rapid convergence within 20 epochs while maintaining strong generalization capabilities.

3.3. Ablation Experiments

Firstly, we evaluated the role of the main components in food image recognition using the ISIA Food-500 dataset. In Table 2, “TransXNet” denotes food image recognition performed using only the TransXNet model. “DINOv2” denotes food image recognition conducted solely with the DINOv2 model. “Multi-Head Cross-Attention” denotes the integration of multi-head cross-attention mechanisms between TransXNet and DINOv2. “Multi-Head Cross-Attention + Clustering” denotes our proposed approach, which incorporates clustering into the Multi-Head Cross-Attention framework.

Analysis of the results in Table 2 indicates that our method outperforms both the standalone TransXNet and DINOv2 models. Applying the multi-head cross-attention increases Top-1 and Top-5 accuracy by 3.77% and 2.44%, respectively, compared with TransXNet. When both multi-head cross-attention and self-supervised clustering are employed, Top-1 and Top-5 accuracy rise by 5.60% and 3.53% relative to TransXNet, and by 1.83% and 1.09% compared with using the multi-head cross-attention alone.

The multi-head cross-attention mechanism successfully combines CNN’s strength in capturing fine-grained details with ViT’s capacity for modeling global features, thereby achieving higher-quality feature interaction and integration. Multi-head mechanisms allow the model to capture different types of attention patterns in parallel across multiple subspaces, extracting complex and diverse semantic information from various perspectives. By focusing on different regions, scales, and semantic levels through multiple attention heads, the model exhibits enhanced expressiveness and robustness when dealing with diverse and structurally complex food images. Moreover, cross-attention strengthens the interaction between features extracted by CNN and ViT, exploiting their complementary characteristics. As a result, the model can extract edge and texture details while also representing overall spatial layouts and ingredient relationships as global semantic information, thereby dynamically focusing on discriminative regions such as ingredients and textures.

Additionally, the introduction of self-supervised clustering allows the model to generate pseudo-labels based on the feature space distribution and continuously optimize feature representations using clustering loss functions, resulting in a more stable and structurally guided learning process. This process helps the network uncover latent category boundary information, guiding it to learn more discriminative and generalizable feature representations, thus further enhancing overall recognition performance.

Secondly, we assessed the effectiveness of different models as the backbone for CNN and ViT, as shown in Table 3. We selected two high-performing CNN models (VGG-16 and TransXNet) and two high-performing ViT models (DeiT and DINOv2) as the backbone, and conducted cross combinations of these models. Experimental results indicate that when the CNN is fixed as VGG-16, replacing the ViT from DeiT to DINOv2 increases Top-1 and Top-5 accuracy by 6.58% and 4.97%, respectively, indicating that DINOv2 enhances the overall performance. When the ViT is fixed as DeiT, replacing the CNN from VGG-16 to TransXNet increases Top-1 and Top-5 accuracy by 5.06% and 4.12%, showing that TransXNet also improves overall performance. When both the best-performing TransXNet and DINOv2 are combined, Top-1 and Top-5 accuracy rise by 8.88% and 6.49% relative to the baseline VGG-16–DeiT combination, demonstrating the synergistic gain of this combination.

The TransXNet-DINOv2 combination effectively leverages the complementary strengths of advanced CNN and ViT architectures. Specifically, TransXNet incorporates a Dual Dynamic Token Mixer module and a multi-scale convolution design, making it particularly effective at capturing local details and texture features in food images. This enables the model to better handle subtle differences in texture and structure among various ingredients. DINOv2, on the other hand, benefits from large-scale pre-training and powerful global modeling capabilities, allowing it to capture spatial structures and high-level semantic relationships between ingredients through cross-region attention mechanisms.

Compared with other combinations, TransXNet-DINOv2 achieves a better balance between local and global feature extraction. It preserves detailed local structural information while effectively modeling the overall spatial layout. This enables it to demonstrate higher recognition capabilities and stronger generalization performance in complex and variable food scenes.

Thirdly, the ISIA Food-500 dataset was partitioned with a 6:1:3 split across training, validation, and test subsets. Subsequently, brightness and geometric transformations were applied to the training subset to enhance data diversity. Brightness augmentation involved reducing and increasing brightness, while geometric augmentation included horizontal flipping, compression, and stretching. This ensured that each food category had at least 480 training images. To assess how augmentation strategies affect generalization, we evaluated the model on four variants of the ISIA Food-500 dataset: the ISIA Food-500 original dataset without data augmentation, the augmented dataset with only brightness augmentation, the augmented dataset with only geometric augmentation, and the augmented dataset with both brightness and geometric augmentation. In all cases, only the ISIA Food-500 training data was modified, while the validation and testing portions remained unchanged.
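The augmentation operations named above can be illustrated on a tiny grayscale image stored as nested lists. This is only a sketch: the function names, brightness factors, and nearest-neighbour resampling are assumptions chosen for illustration, and the study's actual augmentation parameters are not specified here.

```python
def adjust_brightness(img, factor):
    """Scale pixel intensities and clip to the valid 0..255 range."""
    return [[min(255, max(0, round(p * factor))) for p in row] for row in img]

def horizontal_flip(img):
    """Mirror the image left to right."""
    return [row[::-1] for row in img]

def resize_width(img, new_w):
    """Compress or stretch horizontally using nearest-neighbour sampling."""
    w = len(img[0])
    return [[row[min(w - 1, int(j * w / new_w))] for j in range(new_w)] for row in img]

# A 2 x 2 toy image (hypothetical pixel values).
img = [[10, 20],
       [30, 40]]
brighter = adjust_brightness(img, 1.5)   # brightness increase
darker = adjust_brightness(img, 0.5)     # brightness reduction
flipped = horizontal_flip(img)
stretched = resize_width(img, 4)         # horizontal stretching
compressed = resize_width(stretched, 2)  # horizontal compression
```

Each function returns a new image and leaves the original untouched, which fits a setup where only the training copies are augmented.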

As shown in Table 4, compared to the dataset without data augmentation, applying brightness augmentation alone increases Top-1 and Top-5 accuracy by 0.06% and 0.22%, respectively. Applying geometric augmentation alone increases Top-1 and Top-5 accuracy by 0.08% and 0.19%, respectively. Combining brightness and geometric augmentation achieves the best performance, with Top-1 and Top-5 accuracy improving by 0.15% and 0.38%, respectively.

The overall improvement is small, primarily because data augmentation targets food categories with fewer images, but these categories account for a low proportion of the overall sample, so their impact on the overall metrics is also weak.

We selected three food categories with fewer images and tested their Top-1 accuracy on the ISIA Food-500 original dataset and the augmented datasets generated by three different augmentation strategies. The three selected food categories are Curry_goat, Green_bean_casserole, and Hae_mee. In the original ISIA Food-500 dataset, these categories contain 500, 501, and 502 images in total, respectively.

As shown in Table 5, compared to the dataset without data augmentation, the accuracy of Curry_goat increased by 8% after geometric augmentation, the accuracy of Green_bean_casserole increased by 11.26% after both brightness and geometric augmentation, and the accuracy of Hae_mee increased by 6.61% after brightness augmentation. These per-category gains are far larger than the change in overall accuracy, showing that food categories with fewer images benefit most from augmentation.

These results highlight the value of data augmentation in improving model performance, effectively mitigating class imbalance in the source dataset and enabling more balanced learning of food features. By simulating changes in brightness, angle, and shape of images, the model’s adaptability to image diversity is enhanced, enabling it to focus on more stable and representative discriminative features—such as the texture and structure of ingredients—even when faced with perturbations. This enhances the robustness and generalization capability of feature extraction, thereby improving classification performance.

Fourth, we assess model behavior under different loss weight configurations.

Table 6 demonstrates that peak performance is attained when the weight of the self-supervised clustering loss and the weight of the supervised classification loss each take the value of 0.5.

With both loss weights set to 0.5, the self-supervised clustering loss and the supervised classification loss maintain a relatively balanced contribution during optimization, each entering the gradient with equal weight. This setting effectively leverages the complementary strengths of both components. On one hand, the self-supervised clustering loss encourages the model to form clearer inter-class boundaries and more compact intra-class distributions in the embedding space, thus improving the structural integrity of feature representations. On the other hand, the classification loss, guided by ground-truth labels, helps correct the semantic misalignment that may arise from clustering, thereby improving discriminative power and generalization. In contrast, if one loss dominates excessively, the model may overfit to either structural modeling or semantic supervision, leading to performance degradation.

To further analyze the model’s sensitivity to the loss weight ratio, we tested multiple combinations of the two loss weights. The results show that Top-1 accuracy ranges from 63.82% to 65.31%, and Top-5 accuracy ranges from 87.93% to 88.94%. The best performance is achieved when both weights equal 0.5, while the extreme configurations, in which a weight is 0 or 1, result in the largest performance drops. Compared with one extreme setting, the balanced setting increases Top-1 accuracy by 1.07% and Top-5 accuracy by 0.89%. Compared with the other extreme, it improves Top-1 and Top-5 accuracy by 1.49% and 1.01%, respectively, which corresponds to the full range reported above. The model is therefore sensitive to the loss weight ratio, with performance varying noticeably across configurations.
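As a rough illustration of how such a sweep could be organized, the sketch below combines the two losses as a weighted sum and enumerates candidate weight pairs. The names `combined_loss`, `weight_grid`, `w_cluster`, and `w_cls` are hypothetical, and the constraint that the two weights sum to 1 is an assumption inferred from the extreme values of 0 and 1 mentioned in the text, not something the source states explicitly.

```python
def combined_loss(cluster_loss: float, cls_loss: float,
                  w_cluster: float, w_cls: float) -> float:
    """Weighted sum of the clustering loss and the classification loss."""
    return w_cluster * cluster_loss + w_cls * cls_loss

def weight_grid(step: float = 0.25) -> list[tuple[float, float]]:
    """Candidate (w_cluster, w_cls) pairs, assuming the weights sum to 1."""
    n = round(1 / step)
    return [(i / n, 1 - i / n) for i in range(n + 1)]

# Example: the balanced setting averages the two losses.
balanced = combined_loss(2.0, 4.0, 0.5, 0.5)
```

Each pair from `weight_grid` would correspond to one training run, with Top-1 and Top-5 accuracy recorded per run.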

3.4. Visualization Analysis

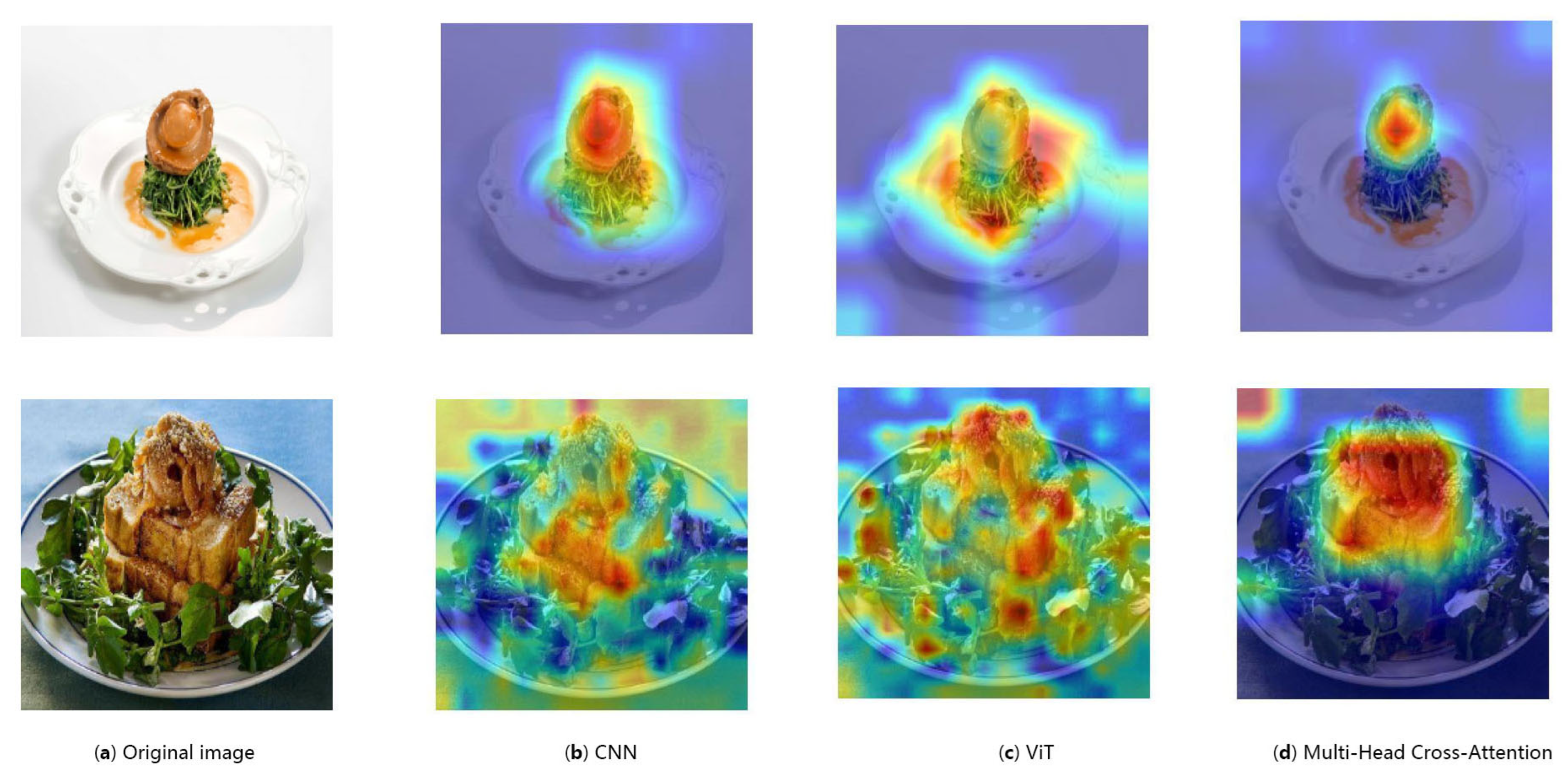

To visually demonstrate the role of multi-head attention, we created a series of feature heatmaps, as shown in Figure 7. Areas of interest to the model are displayed in red, while areas of no interest are displayed in blue.

Figure 7a shows the original input image.

Figure 7b shows the feature heatmap extracted using a CNN. Since CNNs are good at capturing local features, the heatmap typically shows high activation values in certain local regions of the image, such as tabletop textures, background details, food materials, and food textures.

Figure 7c shows the feature heatmap extracted using a ViT. Since ViTs capture global features through self-attention mechanisms, the heatmap typically shows attention to the overall shape of the food.

Figure 7d shows the feature heatmap extracted using a multi-head cross-attention mechanism that combines CNN and ViT. Since the multi-head cross-attention mechanism fuses CNN’s capability to extract fine-grained local patterns with ViT’s strength in capturing global structures, the heatmap highlights the overall shape, materials, and texture of the food.

To visually demonstrate the effectiveness of self-supervised clustering, we plotted a feature scatter plot, as shown in Figure 8, which illustrates the distribution of food images in a two-dimensional feature space, where points of different colors represent food images of different categories.

Figure 8a shows the visualization results obtained using only self-supervised clustering. Self-supervised clustering can uncover the intrinsic structure of the data, enhance within-class cohesion, and promote between-class discrimination. As a result, points of the same color are relatively compact. However, the lack of ground-truth semantic guidance leads to blurred and overlapping cluster boundaries. For example, the red cluster in the bottom right is highly compact but overlaps with the blue cluster below it.

Figure 8b shows the visualization results obtained using only supervised classification. Supervised classification methods rely on real labels for training, effectively improving prediction accuracy and to some extent promoting the aggregation of similar samples. For example, the red points on the right are more concentrated, indicating that the model attempts to compress them into the same decision region. However, due to the lack of feature space structure modeling in this method, the distribution is relatively loose, the area is large, and the boundaries between classes are not clear, thereby limiting the model’s generalization performance.

Figure 8c shows the visualization results obtained using both self-supervised clustering and supervised classification. Self-supervised clustering improves intra-class compactness and inter-class separability, and supervised fine-tuning then ensures that the final classification is accurate and aligned with the true semantics. The distribution in the figure reflects both advantages. For example, in the lower right corner, self-supervised clustering pulls the red samples closer together in the feature space, so the cluster occupies a smaller area than with supervised classification alone. Supervised classification, in turn, optimizes the decision boundary using ground-truth labels and pushes other categories away, so the red region shows less color overlap than with self-supervised clustering alone.

The scatter plots in the feature space indicate that the best result is achieved when training combines self-supervised clustering with supervised classification. Self-supervised clustering improves within-class cohesion and between-class distinction, while supervised classification provides explicit category labels, improving classification accuracy and enhancing inter-class separability. The combination of the two achieves a better balance between feature structure and semantic alignment, effectively improving the model’s discriminative ability and generalization performance.
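The visual impression of compactness and separation could also be quantified. The sketch below is one possible way to do so and is not a metric reported in the paper: it assumes a feature matrix and integer labels are available, and the function name `cluster_quality` is hypothetical. It returns the mean distance of samples to their class centroid (lower means more compact) and the mean distance between class centroids (higher means better separated).

```python
import numpy as np

def cluster_quality(features: np.ndarray, labels: np.ndarray) -> tuple[float, float]:
    """Return (mean intra-class distance to centroid, mean inter-centroid distance).

    features: (N, D) embeddings; labels: (N,) class ids with at least two classes.
    """
    classes = np.unique(labels)
    centroids = np.stack([features[labels == c].mean(axis=0) for c in classes])
    # Average, over classes, of the mean sample-to-centroid distance.
    intra = np.mean([
        np.linalg.norm(features[labels == c] - centroids[i], axis=1).mean()
        for i, c in enumerate(classes)
    ])
    # Pairwise distances between centroids, upper triangle only.
    diffs = centroids[:, None, :] - centroids[None, :, :]
    dists = np.linalg.norm(diffs, axis=-1)
    inter = dists[np.triu_indices(len(classes), k=1)].mean()
    return float(intra), float(inter)
```

Comparing these two numbers across the three training settings would give a numerical counterpart to the three panels of the scatter plot.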

4. Discussion

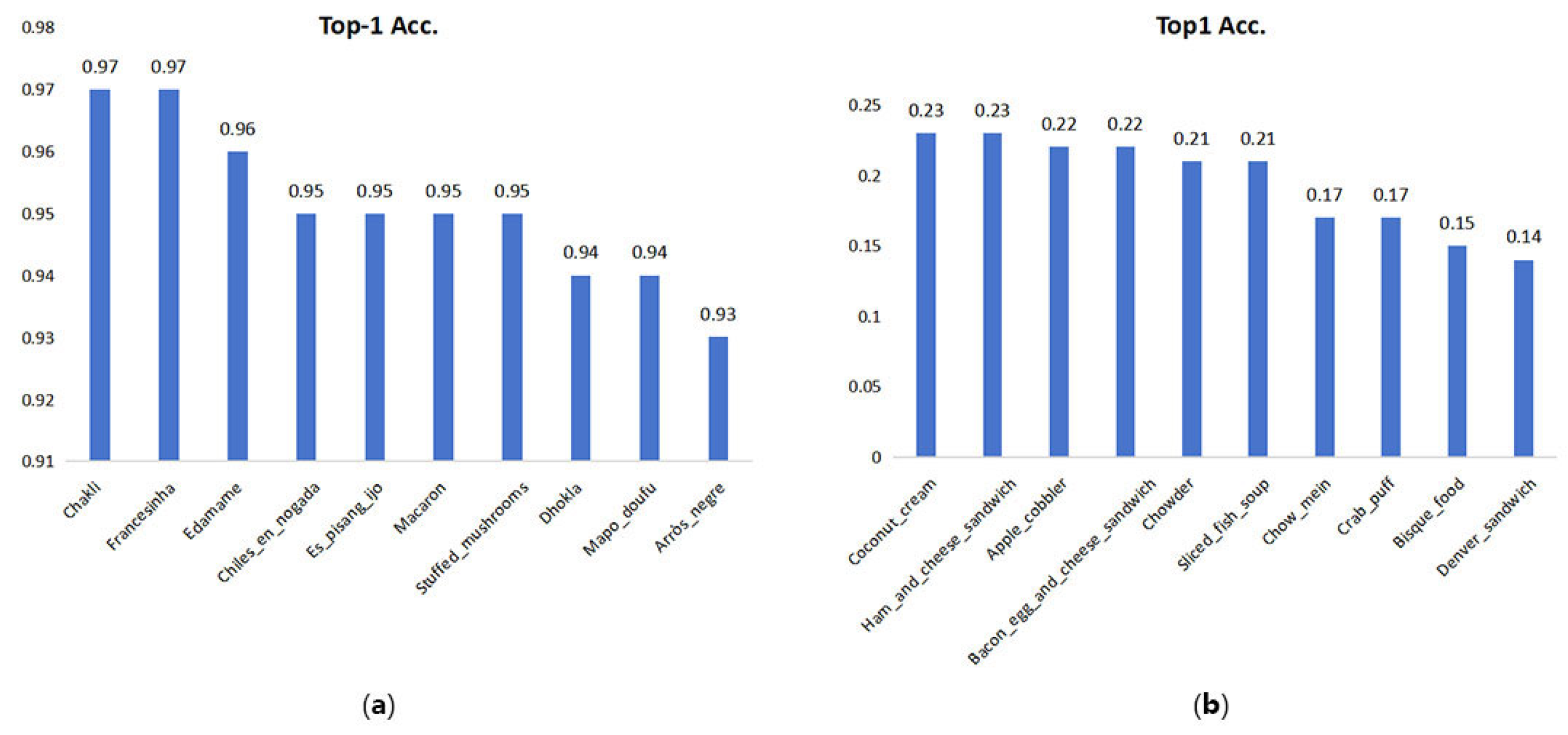

Due to the high similarity in appearance of some foods and the existence of multiple forms for certain foods, the classification results for different categories of food images vary.

Figure 9 illustrates the 10 food categories with the best and worst Top-1 accuracy in the ISIA Food-500 dataset. Specifically, Figure 9a shows the 10 categories that perform best in classification, indicating that some categories are very easy to recognize, such as Chakli, Francesinha, and Edamame, all of which have a Top-1 accuracy exceeding 96%. Figure 9b shows the 10 categories with the poorest classification performance, revealing that some categories are difficult to identify, including Chow_mein, Crab_puff, Bisque_food, and Denver_sandwich, all of which have a Top-1 accuracy below 20%.
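A per-category ranking of this kind can be derived from the model's class scores. The following is a minimal sketch under the assumption that a score matrix of shape (samples, classes) and integer ground-truth labels are available; the function names are hypothetical and the code is not taken from the paper.

```python
import numpy as np

def per_class_topk_accuracy(scores: np.ndarray, labels: np.ndarray, k: int = 1) -> dict:
    """Map each class id to its top-k accuracy.

    scores: (N, C) class scores; labels: (N,) integer ground-truth ids.
    """
    topk = np.argsort(-scores, axis=1)[:, :k]          # k highest-scoring classes
    hits = (topk == labels[:, None]).any(axis=1)       # true label among them?
    return {int(c): float(hits[labels == c].mean()) for c in np.unique(labels)}

def rank_classes(acc: dict, n: int = 10) -> tuple[list, list]:
    """Return (n best, n worst) class ids by accuracy."""
    ordered = sorted(acc, key=acc.get)                 # ascending accuracy
    return ordered[-n:][::-1], ordered[:n]
```

Calling `rank_classes(per_class_topk_accuracy(scores, labels, k=1), n=10)` would yield the two lists of categories that a figure of this kind visualizes.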

Figure 10 shows the three foods with the highest recognition accuracy.

Figure 10a shows Chakli, a food with a spiral structure, serrated edges, and a grainy surface texture. This fried food has a yellow color and a crispy texture. It has a clear outline and stable recognition boundaries.

Figure 10b shows Francesinha, a food with a layered structure, featuring a thick square sandwich as the main component, topped with a fried egg, and wrapped in a layer of sauce, giving it a distinctive shape.

Figure 10c shows Edamame, which appears as green pods, often served in piles, forming a highly standardized image pattern that enhances the model’s memory and recognition capabilities. The high recognition accuracy of these food types is primarily due to their stable appearance, uniform color, and clear structure. These factors enable the multi-head cross-attention mechanism to accurately identify key discriminative information, while self-supervised clustering further reinforces intra-class consistency, thereby achieving higher recognition accuracy.

Figure 11 shows the four foods with the worst recognition results, whose true labels are Chow_mein, Crab_puff, Bisque_food, and Denver_sandwich, together with the corresponding incorrect predictions. The low recognition accuracy of these categories may have the following causes. First, foods with similar shapes are difficult to distinguish: Bisque_food, Lobster_bisque, Fish_chowder, and She-crab_soup are all seafood soups that differ mainly in the seafood ingredients used, so their appearance is very similar. Second, foods with the same ingredients are difficult to distinguish: Crab_puff, Crab_rangoon, Crab_cake, and Crab_dip are all crab-based foods, and although their shapes differ, they remain hard to tell apart. Denver_sandwich and Chow_mein are likewise confused with foods of similar shape and ingredients. When key features are not prominent, the multi-head cross-attention mechanism tends to spread attention weights evenly across multiple similar regions instead of focusing, which yields feature representations with insufficient discriminative power. Self-supervised clustering on these categories is also prone to feature overlap between classes, which blurs class boundaries and reduces overall discriminative performance.

To assess the computational efficiency and deployment feasibility of the model, we measured its size and cost. The entire model contains approximately 150.75 M parameters, and inference on a single image requires 31.48 GFLOPs. In our experimental environment, the average inference latency is 160.76 ms. Of these totals, the DINOv2 module accounts for 87.08 M parameters and 22.08 GFLOPs, or 58% of the parameters and 70% of the computation. Although the self-attention mechanism makes this module expensive, it enhances the global representation ability and improves recognition accuracy. The model is suitable for deployment on servers, and its computational cost remains acceptable for offline inference or high-performance scenarios. In practice, a user can upload photos to the server from a mobile app, and the server processes the image and returns the result to the user.
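The reported shares follow directly from the figures above, as the short check below shows. The throughput estimate at the end is an added back-of-the-envelope figure that assumes images are processed one at a time with no batching; it is not a number reported in the source.

```python
# Figures reported in the text.
total_params_m = 150.75     # total parameters, in millions
dinov2_params_m = 87.08     # DINOv2 parameters, in millions
total_gflops = 31.48        # cost of one forward pass
dinov2_gflops = 22.08       # DINOv2 share of that cost
latency_ms = 160.76         # average single-image latency

param_share = dinov2_params_m / total_params_m   # about 0.58
flop_share = dinov2_gflops / total_gflops        # about 0.70

# Assumption: sequential, unbatched inference.
images_per_second = 1000.0 / latency_ms          # a little over 6 images per second

print(f"parameter share: {param_share:.1%}")
print(f"FLOP share: {flop_share:.1%}")
print(f"throughput: {images_per_second:.2f} images/s")
```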

Recognition performance could be further improved by optimizing data augmentation and feature fusion. For data augmentation, the brightness augmentation we use both decreases and increases brightness. More diverse variations, such as changes in lighting color, could be introduced to promote more robust generalization across diverse visual conditions. For feature fusion, the multi-head cross-attention currently uses CNN features as Q and ViT features as K and V. Replacing it with multi-head bidirectional cross-attention would let both types of features participate equally in the fusion process and reduce the bias in feature fusion.
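The difference between the two fusion schemes can be sketched in NumPy. This is a simplified illustration rather than the authors' implementation: the learned Q, K, V, and output projections are omitted (features are used directly), both feature sets are assumed to share the same channel dimension, and the function names are hypothetical. The one-directional function uses the first argument as queries and the second as keys and values; the bidirectional variant simply runs it in both directions.

```python
import numpy as np

def softmax(x: np.ndarray, axis: int = -1) -> np.ndarray:
    """Numerically stable softmax."""
    e = np.exp(x - x.max(axis=axis, keepdims=True))
    return e / e.sum(axis=axis, keepdims=True)

def multi_head_cross_attention(query_feats: np.ndarray,
                               context_feats: np.ndarray,
                               num_heads: int) -> np.ndarray:
    """query_feats: (Nq, D) used as Q; context_feats: (Nk, D) used as K and V."""
    n_q, dim = query_feats.shape
    n_k = context_feats.shape[0]
    if dim % num_heads != 0:
        raise ValueError("feature dimension must be divisible by num_heads")
    head_dim = dim // num_heads
    # Split channels into heads: (H, N, d).
    q = query_feats.reshape(n_q, num_heads, head_dim).transpose(1, 0, 2)
    k = context_feats.reshape(n_k, num_heads, head_dim).transpose(1, 0, 2)
    v = k  # no learned projections in this sketch, so K and V coincide
    weights = softmax(q @ k.transpose(0, 2, 1) / np.sqrt(head_dim))  # (H, Nq, Nk)
    out = weights @ v                                                # (H, Nq, d)
    return out.transpose(1, 0, 2).reshape(n_q, dim)                  # merge heads

def bidirectional_cross_attention(cnn_feats: np.ndarray,
                                  vit_feats: np.ndarray,
                                  num_heads: int) -> tuple[np.ndarray, np.ndarray]:
    """Run cross-attention in both directions so each branch queries the other."""
    cnn_attended = multi_head_cross_attention(cnn_feats, vit_feats, num_heads)
    vit_attended = multi_head_cross_attention(vit_feats, cnn_feats, num_heads)
    return cnn_attended, vit_attended
```

How the two attended outputs are then merged (for example, by concatenation or addition after pooling) is a further design choice that the text leaves open.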