1. Introduction

With the rapid advancement of the global intelligent vehicle sector, autonomous driving technology has become a pivotal element in transforming and enhancing the global automotive industry [1]. Object detection, a crucial component of environmental perception systems, is essential for the safety performance and decision-making efficiency of autonomous driving systems due to its precision and real-time capabilities [2]. In complex and dynamic environments, intelligent vehicles utilize sensors such as cameras and radars, along with object detection technologies, to perceive and gather information about the surrounding roadway environment [3]. Traffic cones, often used as standard indicators in temporary road planning and traffic incident management, present a significant challenge for accurate recognition in autonomous driving systems [4].

The Formula Student Autonomous China (FSAC) competition, with its enclosed tracks delineated by traffic cones, provides a standardized platform for the empirical testing and advancement of autonomous driving technologies. The dense arrangement of traffic cones on the track, combined with fluctuating lighting and motion blur from high speeds, closely mimics the challenges of real-world traffic cone identification, imposing significant demands on the efficacy and resilience of object detection systems.

This study focuses on optimizing object detection algorithms for an autonomous formula racing car. Figure 1 illustrates the actual vehicle used in this research. The car is equipped with a variety of sensors to perceive its surrounding road environment and features an integrated drive-by-wire steering system and an electro-hydraulic braking system. Within the autonomous system, a Jetson Orin NX industrial control computer serves as the central computing unit, responsible for executing the software functionalities of the unmanned system. Efficient communication between algorithmic modules is achieved through the Robot Operating System (ROS) [5]. Furthermore, the upper-level controller integrates data from a ZED 2i stereo camera and a Huahai INS570D integrated inertial navigation system to enable autonomous driving capabilities.

While the existing YOLOv13s model stands as the state-of-the-art in the YOLO series for real-time detection, it encounters critical limitations in the FSAC-specific scenario—limitations that previous small object detection studies have yet to fully address. Specifically, it exhibits insufficient feature extraction for small traffic cones, particularly during long-range detection, which often results in missed or false detections; it suffers from post-processing delays caused by Non-Maximum Suppression (NMS) when dealing with densely arranged cones, an issue that impairs real-time performance under high-speed motion; it carries excessive parameters and computational load, rendering it incompatible with resource-constrained onboard terminals such as the Jetson Orin NX; and it lacks robustness against dynamic environmental disturbances such as backlighting and low light, which undermines detection stability on outdoor enclosed tracks. These gaps collectively define the core research problem of this study: developing an object detection algorithm that balances high accuracy, real-time performance, and lightweight design to enable effective traffic cone detection in autonomous formula racing.

To address these challenges, this study implements a three-stage optimization strategy for YOLOv13s, with method selection explicitly aligned to resolve the aforementioned limitations and ensure result reliability:

First, we refine the YOLOv13 network architecture by integrating the DS-C3k2_UIB module, an enhanced variant of the Universal Inverted Bottleneck (UIB), into the backbone. This module is selected for its depthwise separable convolutions, which capture local features in each channel at a finer granularity and thus extract the features of small objects more effectively. Meanwhile, the UIB integrates channel information and generates new feature representations through linear combination, thereby enriching the feature description of small objects. We also employ the NMS-free ConeDetect detection head in the head of the network. This design eliminates NMS-induced post-processing delays, a critical improvement for high-speed scenarios with dense cones. In addition, the Tunable Neural Architecture Search (TuNAS) technique is employed to optimize the network architecture, thereby improving detection accuracy.

Secondly, the network is pruned using two methodologies: a Batch Normalization (BN) layer sparsity-based pruning strategy and a structural reparameterization-based pruning strategy, to reduce computational load for onboard deployment. The structural reparameterization strategy is ultimately selected because comparative trials show that it better retains detection accuracy. Unlike BN layer sparsity, it decouples training and pruning via gradient penalty terms, thereby mitigating the conflict between feature fitting and channel importance assessment that is common in conventional pruning.

Finally, the TensorRT framework is used for 8-bit integer (INT8) quantization of the model. This choice is justified by TensorRT’s ability to resolve the Jetson Orin NX’s memory bandwidth bottleneck via inter-layer fusion, and its KL divergence calibration minimizes quantization errors, ensuring accuracy is preserved while cutting storage and computational demands.

To verify the effectiveness of each optimization component and ensure result reliability and validity, we design a controlled ablation study: each module is incrementally added to the baseline YOLOv13s, with all experiments conducted under identical hyperparameters. After the model undergoes pruning and quantization, it is fine-tuned on the traffic cone dataset to restore potential accuracy losses. Final evaluations are further performed on the actual Jetson Orin NX-based racing car platform, ensuring results reflect real-world deployment validity.

The remainder of this paper is organized as follows. Section 2 reviews related work in object detection, focusing on the development and limitations of the YOLO series algorithms. Section 3 details the design of YOLOv13-Cone-Lite, including network architecture optimization, pruning, and quantization. Section 4 presents the experimental verification, analyzing the ablation studies, the effects of pruning and quantization, and the detection performance in different scenarios. Section 5 summarizes the conclusions and points out the limitations and prospective future directions. This structure presents the complete research process, from problem formulation and method design to experimental verification, and provides a technical reference for object detection in enclosed environments.

2. Related Works

Object detection, a fundamental task in computer vision, generally falls into two main categories: classical techniques and deep learning-based algorithms. Conventional object detection methods, which rely heavily on hand-crafted feature descriptors, often suffer from high computational complexity [6] and limited robustness [7]. This makes it challenging for them to meet the stringent demands of autonomous driving in intelligent vehicles operating under complex and dynamic road conditions. However, with the rapid advancements in computing hardware and continuous refinement of Convolutional Neural Network (CNN) architectures, deep learning-based object detection algorithms have rapidly developed and emerged as the dominant approach in recent years.

Object detection methods based on deep learning can be broadly categorized into two types: two-stage algorithms utilizing region proposal networks and single-stage algorithms that directly regress bounding boxes. Due to the comparatively slower detection speed of two-stage models, research efforts have increasingly focused on advancing single-stage object detection techniques. Redmon et al. introduced the YOLOv1 (You Only Look Once) algorithm, which reformulated object detection as a regression problem by directly predicting object locations and classes from a single image input, achieving a detection speed of 150 frames per second [8]. Liu et al. proposed the SSD (Single Shot MultiBox Detector) algorithm, which employs a pyramid architecture to detect objects across multiple feature map levels, thereby enhancing detection performance for small objects [9]. To address the limited detection accuracy of YOLOv1, Redmon and Farhadi successively developed YOLOv2 [10] and YOLOv3 [11], improving recall and localization precision through enhancements in network design, training strategies, and multi-scale detection. Bochkovskiy et al. presented YOLOv4, which integrates a feature pyramid network to boost feature extraction capability and introduces a novel image augmentation technique to improve robustness [12]. Subsequently, Ultralytics released YOLOv5, featuring a lightweight network architecture, an adaptive anchor mechanism to improve generalization, and Mosaic data augmentation to further enhance robustness [13]. Li et al. optimized the YOLOv6 architecture by incorporating the reparameterization principle from the RepVGG framework, which significantly increased detection speed [14]. In the same year, Wang et al. introduced YOLOv7, which enhances the network structure with an Efficient Layer Aggregation Network (ELAN) and improves parameter utilization efficiency through a gradient path design, thus accelerating feature extraction [15]. Ultralytics developed YOLOv8, adopting an anchor-free approach to better handle irregularly shaped objects and decoupling the classification and bounding box regression tasks in the detection head, thereby improving overall model performance [16]. Wang et al. proposed the concept of programmable gradient information and designed YOLOv9, which leverages the Generalized Efficient Layer Aggregation Network (GELAN) to fuse multi-scale feature information, maintaining a lightweight model with real-time performance while enhancing accuracy [17]. Wang et al. subsequently introduced YOLOv10, which eliminates the need for conventional NMS by designing an end-to-end detection head, markedly improving detection speed [18]. In the same year, Ultralytics incorporated various optimization strategies from previous YOLO versions, refined the network architecture, and added new functionalities to develop YOLOv11 [19]. YOLOv12 adopts an attention-centric design that combines the Residual Efficient Layer Aggregation Network (R-ELAN) with area attention (A2) and Flash Attention, collectively enhancing robustness and accuracy while preserving real-time speed [20]. To overcome limitations in capturing complex global many-to-many high-order relationships, YOLOv13 introduces the Hypergraph-based Adaptive Correlation Enhancement (HyperACE) mechanism and the Full-process Aggregation and Distribution (FullPAD) framework. It replaces traditional large-kernel convolutions with depthwise separable convolutions, improving detection performance while reducing parameters and computational load [21]. Among the YOLO series, YOLOv13 is currently the most accurate model that retains real-time capability.

The development of object detection models showcases a persistent drive to tackle challenges in feature extraction, real-time processing, and adaptation to specific application contexts. YOLOv1, as an early-stage model, initiated the single-stage detection paradigm, laying a fundamental framework. However, it lacked advanced techniques to cope with the complexities of diverse object features. As the series evolved, models like YOLOv3 introduced multi-scale feature fusion, representing progress in capturing objects of different sizes, yet they still operated within relatively conventional design scopes. Subsequent models, ranging from YOLOv6 to YOLOv13, started integrating more refined techniques. Reparameterization, anchor-free design, and attention mechanisms were gradually adopted, aiming to boost detection accuracy and efficiency. Nevertheless, these enhancements were often generalized for wide-ranging object detection tasks and failed to meet the unique demands of traffic cone detection in autonomous racing scenarios. For instance, while attention mechanisms in later YOLO models improved feature discrimination, they did not account for the specific hurdles of detecting small, densely packed traffic cones under dynamic environmental factors such as motion blur or changing lighting.

Our YOLOv13-Cone-Lite sets itself apart by addressing these unfulfilled needs. Unlike previous models that either focused on general enhancements or partial improvements, it combines multiple advanced techniques. The integration of reparameterization for structural efficiency, NMS-free design for real-time detection of dense objects, and targeted small-object optimization for traffic cones enables it to tackle the trio of challenges: high precision, real-time performance, and lightweight deployment, crucial for autonomous formula racing. By aligning technical innovations with the specific constraints, our work builds on the cumulative advancements of the YOLO series while redirecting it to fill the niche of specialized object detection. This not only pushes the state-of-the-art in object detection for this particular scenario but also demonstrates the adaptability of the YOLO framework to domain-specific problems.

To systematically distill the evolutionary logic of detection frameworks and clarify our study’s positioning, Table 1 concisely organizes core technical dimensions.

3. Methodology

3.1. Network Architecture Optimization for Object Detection Algorithms

For the object detection task in autonomous formula racing cars, this study proposes a traffic cone detection algorithm based on YOLOv13s. The original YOLOv13s algorithm, however, still exhibits limitations in detection accuracy.

Integrating recent advancements in deep learning, we enhance the network architecture of YOLOv13s and design our method, YOLOv13-Cone, for traffic cone identification, optimizing both accuracy and computational efficiency. In the Backbone, the original DS-C3k2 module is restructured into the more efficient DS-C3k2_UIB module by incorporating the UIB structure. This modification improves the model’s feature extraction capacity while maintaining computational efficiency.

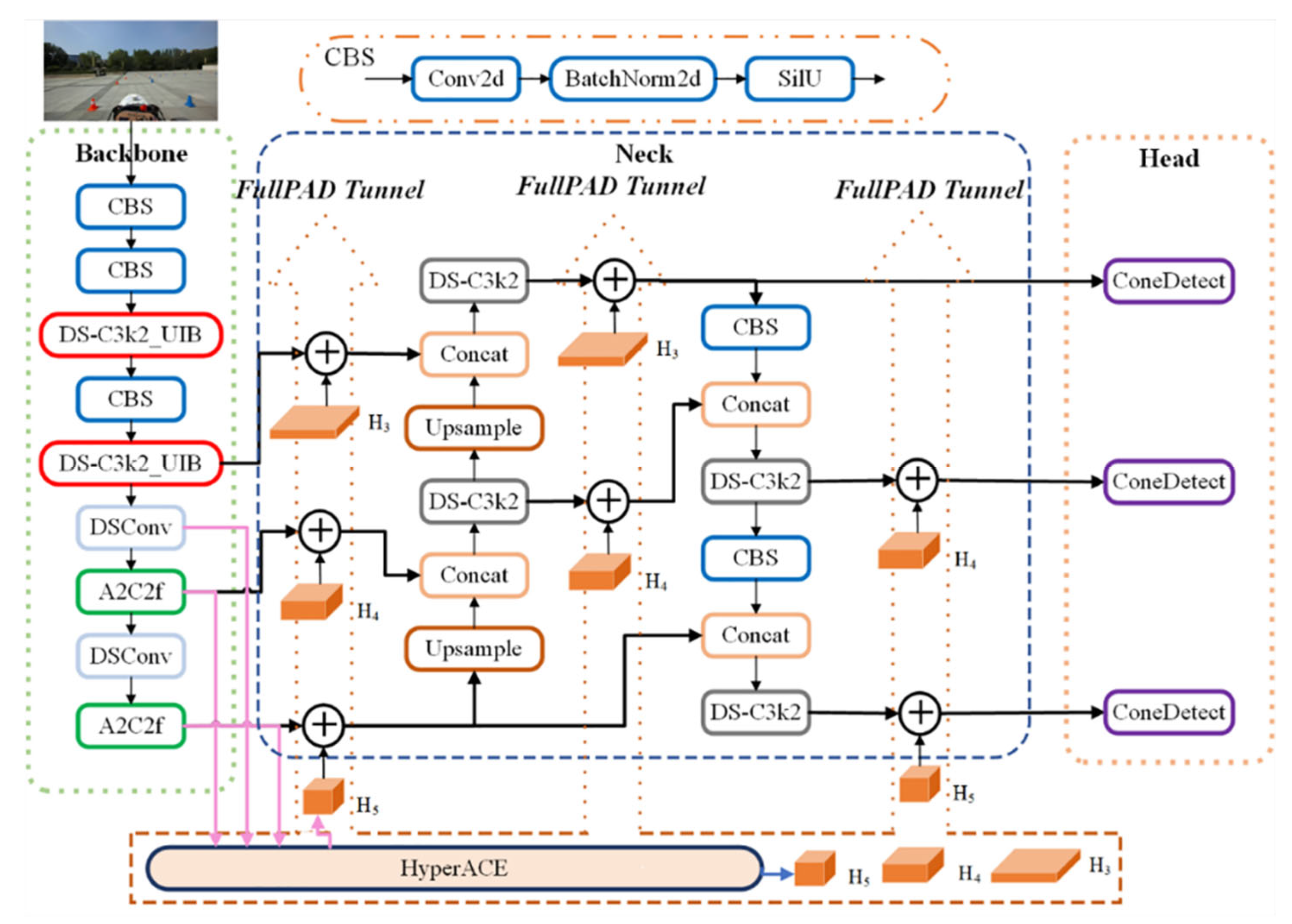

Furthermore, given the high concentration of traffic cones in racetrack settings, the model frequently generates an excessive number of predictions. Traditional methods rely on NMS during post-processing, which is computationally intensive and can adversely affect real-time performance. To mitigate this, the network’s head is restructured using an NMS-free approach, leading to the introduction of a new detection head module, ConeDetect. This reduces post-processing latency and enhances overall inference speed. The optimized network architecture is illustrated in Figure 2.

3.1.1. Design of the DS-C3k2_UIB Module

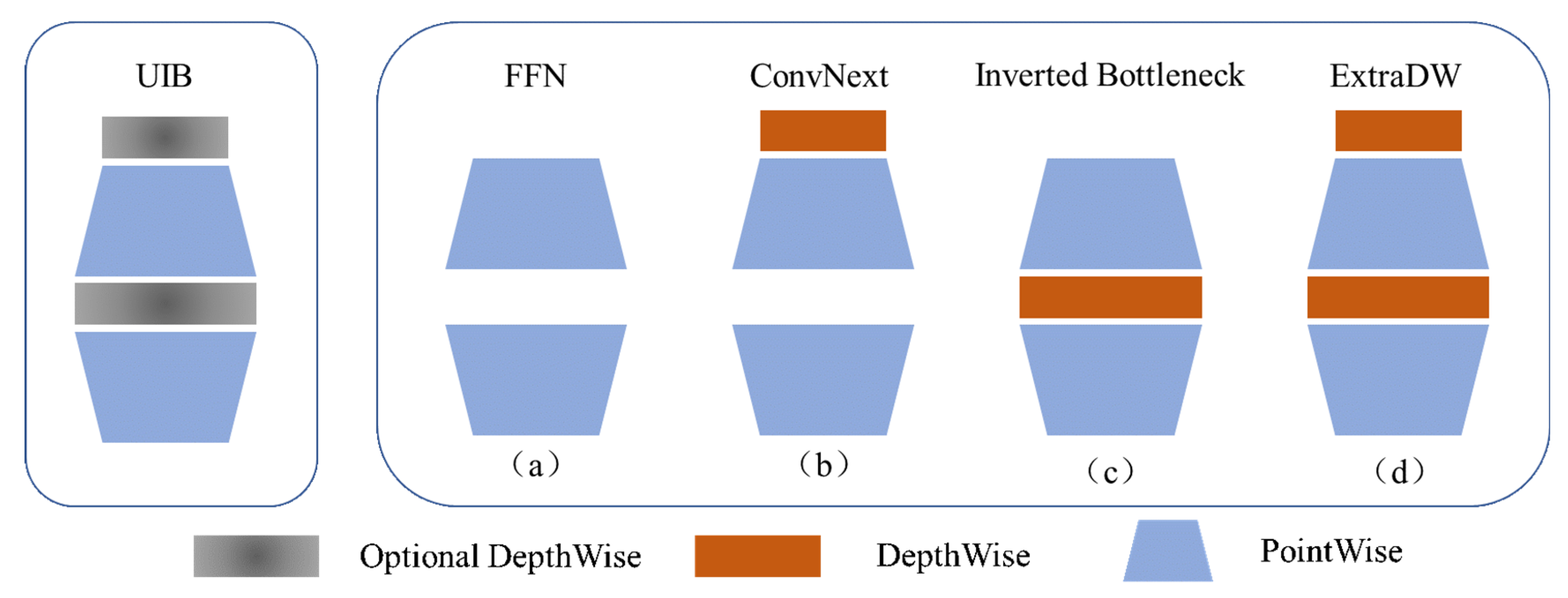

MobileNetV4 introduces the Universal Inverted Bottleneck (UIB), an architecture designed for efficient deployment on resource-constrained mobile devices [22]. It extends the traditional inverted bottleneck with two optional Depthwise Convolution (DWConv) components. Because each component can be enabled or disabled independently, the design yields four interchangeable structural variants, facilitating a more adaptive and efficient network architecture. The UIB module’s structure is shown in Figure 3.
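The four variants can be enumerated as a short sketch. It assumes, following the MobileNetV4 description, that one optional DWConv sits before the expansion layer and the other after it; the variant names are those used in the MobileNetV4 paper, while the function name `uib_layers` and the layer strings are illustrative only and are not taken from this study.

```python
from itertools import product

# Names of the four UIB instantiations as given in the MobileNetV4 paper.
# Key: (DWConv before expansion?, DWConv after expansion?)
VARIANT_NAMES = {
    (False, False): "FFN",
    (False, True): "Inverted Bottleneck",
    (True, False): "ConvNext-like",
    (True, True): "ExtraDW",
}


def uib_layers(start_dw, mid_dw):
    """Return the (illustrative) layer sequence of one UIB variant."""
    layers = []
    if start_dw:
        layers.append("DWConv (before expansion)")
    layers.append("1x1 Conv (expand)")
    if mid_dw:
        layers.append("DWConv (after expansion)")
    layers.append("1x1 Conv (project)")
    return layers


# Two optional components give 2 x 2 = 4 structural variants.
variants = {
    VARIANT_NAMES[flags]: uib_layers(*flags)
    for flags in product([False, True], repeat=2)
}

for name, layers in variants.items():
    print(f"{name:20s}: {' -> '.join(layers)}")
```

A search procedure such as TuNAS can then choose one of these four options per block instead of fixing a single variant for the whole network.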

The YOLOv13 network is further optimized by combining the UIB module with an enhanced neural architecture search method, TuNAS, so that the improved model can exploit the benefits of the different UIB structural variants. Compared to models employing a single UIB variant, the network architecture refined by TuNAS is better suited to the traffic cone detection task: it improves detection precision while preserving computational efficiency.

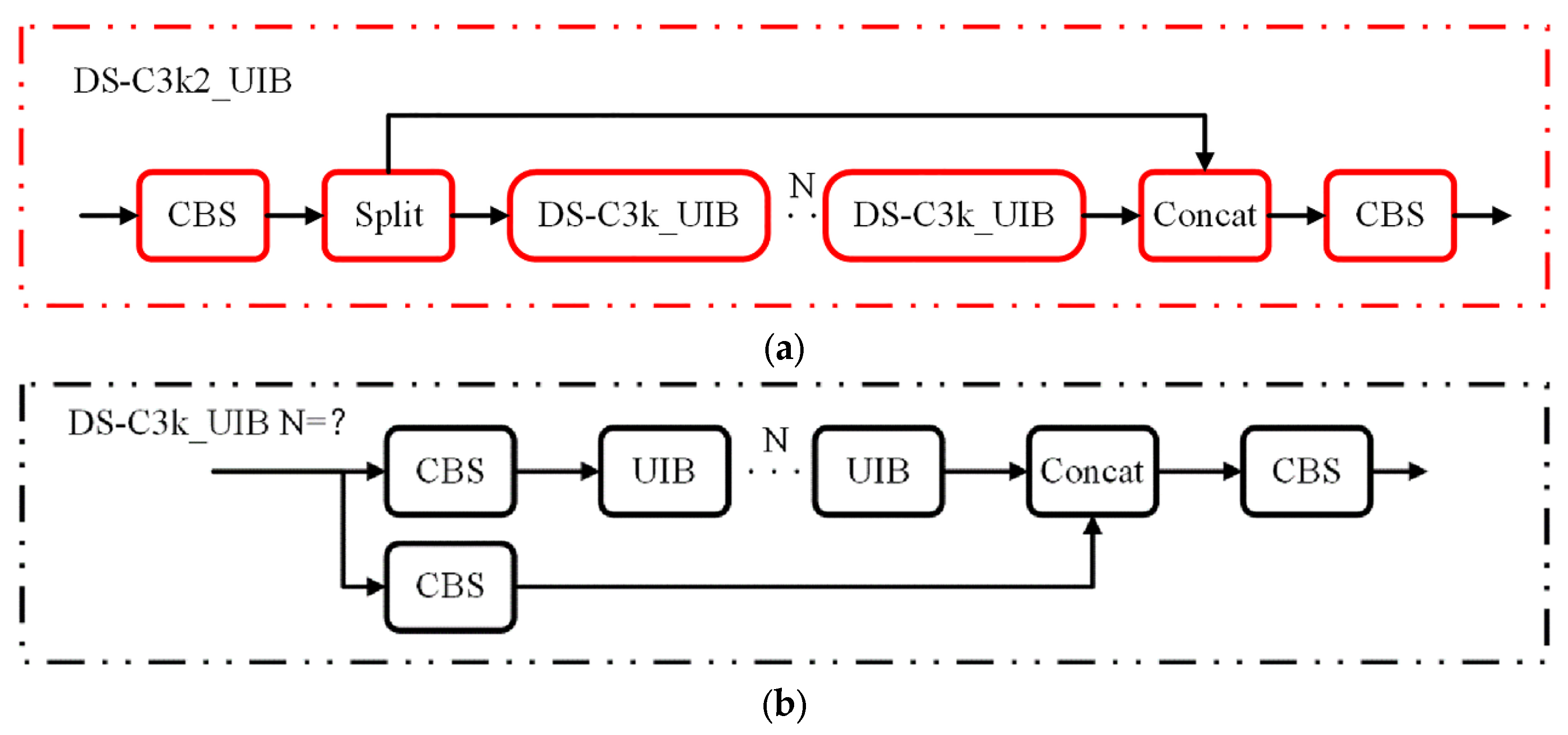

We integrate the UIB module to enhance the DS-C3k2 module within the Backbone of the YOLOv13s architecture, leading to the newly developed DS-C3k2_UIB module, as depicted in Figure 4a. Figure 4b further illustrates the internal structure of a specific sub-component of the DS-C3k2_UIB module. This design enhances the Backbone’s feature extraction capability while significantly reducing model complexity and computational demands, thereby enabling deployment on resource-constrained terminals.

3.1.2. Design of the ConeDetect Detection Head

Conventional YOLO-series algorithms typically use a one-to-many label assignment technique, in which a single ground truth object can correspond to multiple predicted bounding boxes during inference. This necessitates NMS in post-processing, which retains the highest-confidence prediction and suppresses the overlapping boxes whose Intersection over Union (IoU) with it exceeds a threshold. However, in images with high object density, NMS can significantly prolong the post-processing phase due to its computational overhead [23]. To address this, RT-DETR introduced an end-to-end architecture based on Transformer networks, thereby obviating the need for NMS in post-processing and achieving real-time end-to-end object detection [24]. Similarly, algorithms like OneNet employ a one-to-one label assignment technique, associating each ground truth object with a single predicted bounding box. This approach markedly reduces redundant predictions, eliminates the need for NMS in post-processing, and improves the runtime efficiency of the detection model [25].
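To make the post-processing cost concrete, the following is a minimal sketch of greedy NMS in plain Python. It is a generic textbook version, not the implementation used in YOLOv13; the boxes, scores, and the 0.5 threshold are made-up illustrative values. Each kept box is compared against all remaining candidates, so the work grows roughly quadratically with the number of overlapping predictions, which is why dense cone scenes are expensive.

```python
def iou(a, b):
    """Intersection over Union of two boxes given as (x1, y1, x2, y2)."""
    ix1, iy1 = max(a[0], b[0]), max(a[1], b[1])
    ix2, iy2 = min(a[2], b[2]), min(a[3], b[3])
    inter = max(0.0, ix2 - ix1) * max(0.0, iy2 - iy1)
    area_a = (a[2] - a[0]) * (a[3] - a[1])
    area_b = (b[2] - b[0]) * (b[3] - b[1])
    union = area_a + area_b - inter
    return inter / union if union > 0 else 0.0


def greedy_nms(boxes, scores, iou_threshold=0.5):
    """Return indices of the boxes kept after greedy NMS."""
    # Visit candidates from the highest to the lowest confidence.
    order = sorted(range(len(boxes)), key=lambda i: scores[i], reverse=True)
    keep = []
    while order:
        best = order.pop(0)
        keep.append(best)
        # Drop every remaining box that overlaps the kept box too much.
        order = [i for i in order if iou(boxes[best], boxes[i]) <= iou_threshold]
    return keep


# Two near-duplicate predictions of one cone and one separate cone.
boxes = [(10, 10, 30, 50), (12, 11, 31, 52), (100, 20, 120, 60)]
scores = [0.90, 0.75, 0.80]
kept = greedy_nms(boxes, scores)
print(kept)  # indices of the surviving boxes
```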

Unlike the conventional one-to-many label assignment strategy, the one-to-one label assignment strategy assigns a single bounding box to each ground truth object. While this simplifies post-processing, it often leads to a limited number of positive samples, which complicates model convergence during training and can reduce detection accuracy [26]. To address this, this study employs a dual-label assignment technique that combines the one-to-one label assignment with the original one-to-many assignment mechanism.

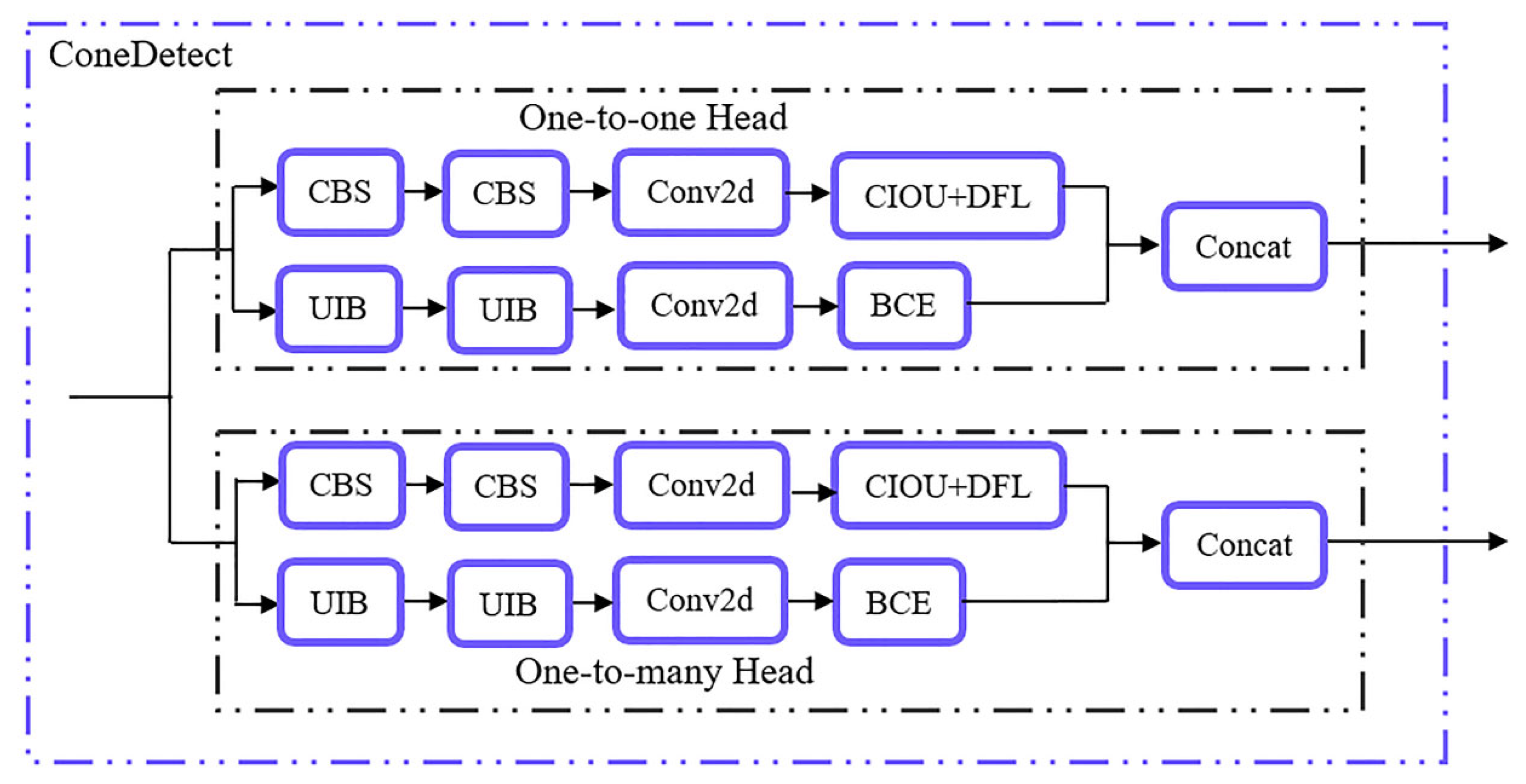

A novel detection head, termed ConeDetect, is presented, sharing an identical architecture for both assignment approaches. During training, the two detection heads are optimized concurrently, with gradients from the one-to-many assignment head guiding the optimization of the one-to-one assignment head. Critically, during the inference phase, only the one-to-one assignment detection head is employed. This design obviates the need for NMS and facilitates real-time, end-to-end detection of traffic cone targets.
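The difference between the two assignment branches can be illustrated with a toy example. This is only a sketch under stated assumptions: it supposes that both branches rank candidate predictions by the same matching score and differ only in how many predictions they keep per ground truth (top-k versus top-1), as in YOLOv10-style dual assignment. The score matrix, the function name `assign`, and the value k = 2 are hypothetical and do not come from this study.

```python
def assign(match_scores, k):
    """For each ground truth (row), return the indices of its k best predictions."""
    assignments = []
    for row in match_scores:
        ranked = sorted(range(len(row)), key=lambda j: row[j], reverse=True)
        assignments.append(ranked[:k])
    return assignments


# Rows: 2 ground-truth cones; columns: 5 candidate predictions.
# Entries are hypothetical matching scores (higher means a better match).
match_scores = [
    [0.82, 0.75, 0.10, 0.05, 0.60],
    [0.08, 0.12, 0.91, 0.70, 0.20],
]

# Training-only branch: several positives per cone give richer supervision.
one_to_many = assign(match_scores, k=2)
# Branch kept at inference: exactly one positive per cone, so no NMS is needed.
one_to_one = assign(match_scores, k=1)

print("one-to-many:", one_to_many)
print("one-to-one: ", one_to_one)
```

Because the one-to-one positive is always among the one-to-many positives in this setup, the two branches are supervised consistently, which is the property that lets the richer branch guide the sparser one.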

Additionally, the original detection head of the YOLOv13s architecture is further optimized by integrating the previously proposed UIB module into its regression branch, thereby augmenting the model’s computational efficiency. The architecture of the ConeDetect detection head is illustrated in Figure 5.

3.2. Lightweight Design for Object Detection Algorithm Based on Model Pruning

Environment perception algorithms for autonomous formula cars require rigorous real-time performance. However, hardware constraints concerning computational resources and power consumption limit the YOLOv13-Cone model’s real-time inference speed, necessitating further optimization [27]. To address this, we employ model pruning to optimize the algorithm’s execution performance on resource-limited industrial control computers. The primary objective is to reduce computational cost by removing superfluous parameters from the model, thereby enhancing the algorithm’s inference speed.

Model pruning techniques are primarily categorized into structured and unstructured pruning, based on their operational granularity and optimization goals. Unstructured pruning removes individual weights, offering significant flexibility and compatibility with diverse network architectures [28]. However, this technique typically yields sparse matrices, which require specialized sparse matrix libraries and dedicated hardware support to deliver a speed-up, thereby limiting its practical efficacy [29]. Conversely, structured pruning removes complete structural elements, such as convolutional kernels or channels, so that the pruned model remains dense and runs efficiently on standard hardware, making it more suitable for practical implementation [30]. Therefore, this section focuses on structured model pruning and employs two strategies, BN layer sparsity and structural reparameterization, to achieve lightweight optimization of the YOLOv13-Cone model.

3.2.1. Model Pruning Strategy Based on Batch Normalization Layer Sparsity

As the depth of neural networks increases, the number of parameters in algorithmic models increases significantly. However, some of these parameters contribute little to the output, and pruning them has minimal impact on the model’s overall performance. To improve computational performance, this study employs a model pruning strategy focused on sparsity within BN layers. We add an L1 sparsity regularization term on the scaling factors of the BN layers of a pre-trained model. Through limited training, the scaling factors of specific channels in the BN layers converge towards zero. This process identifies the neuron channels that minimally impact the model’s performance. By eliminating these less significant channels, redundant parameters are minimized, leading to a smaller model and better real-time runtime performance [31]. The complete algorithmic process is detailed in Algorithm 1.

| Algorithm 1 Workflow of model pruning based on the BN layer sparsity |
| --- |
| Input: Initial network |
| Output: Compact network |
| 1. Train with channel sparsity |
| 2. Set pruning ratio α = {0.1, 0.3, 0.5, 0.7} |
| 3. for i ∈ α do |
| 4. Prune channels with pruning ratio i |
| 5. Fine-tune the pruned network |
| 6. Find the best compact network |

Placing a BN layer after each convolutional layer enables convolutional neural networks to efficiently standardize the output of convolutions. This keeps the inputs to subsequent layers at a stable distribution with approximately zero mean and unit variance before scaling and shifting. This standardization process significantly accelerates model convergence and enhances training efficiency. The computation of the BN layer is shown in Equation (1).

$$z_{out} = \gamma \, \frac{z_{in} - \mu_{B}}{\sqrt{\sigma_{B}^{2} + \epsilon}} + \beta \tag{1}$$

where $z_{in}$ and $z_{out}$ denote the input and output of the BN layer, respectively; $\mu_{B}$ and $\sigma_{B}^{2}$ represent the mean and variance of the input data to the BN layer; $\epsilon$ is a small constant (typically set to $10^{-6}$) added to prevent numerical instability; and $\gamma$ and $\beta$ are the learnable scaling and shifting parameters that modulate the distribution of the output values.
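As a small numerical illustration of the BN computation for one channel, the sketch below normalizes a handful of activations and then applies the learnable scale and shift. The function name `batch_norm` and the sample values are illustrative assumptions, and the default `eps` follows the value quoted above. The second call shows why a scaling factor near zero marks a prunable channel: the output no longer depends on the input.

```python
import math


def batch_norm(values, gamma=1.0, beta=0.0, eps=1e-6):
    """Normalize one channel's activations, then scale by gamma and shift by beta."""
    mean = sum(values) / len(values)
    var = sum((v - mean) ** 2 for v in values) / len(values)
    return [gamma * (v - mean) / math.sqrt(var + eps) + beta for v in values]


activations = [2.0, 4.0, 6.0, 8.0]  # made-up activations of a single channel

# gamma = 1, beta = 0: the output has (almost exactly) zero mean and unit variance.
normalized = batch_norm(activations)

# gamma = 0: every output equals beta, so the channel carries no information.
silenced = batch_norm(activations, gamma=0.0, beta=0.5)

print(normalized)
print(silenced)
```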

The structured pruning strategy leverages channel sparsification by incorporating scaling factors into the neural network channels. During the sparsity-inducing training phase, additional sparsity regularization is applied to these scaling factors. Channels with scaling factors approaching zero are then identified as having negligible influence on the overall model performance and are subsequently eliminated. The pruned model is then fine-tuned to maintain its performance while preserving its lightweight characteristics. The loss function used in the sparsity training process is defined in Equation (2).

$$L = \sum_{(x, y)} l\big(f(x, W), y\big) + \lambda \sum_{\gamma \in \Gamma} g(\gamma) \tag{2}$$

where $x$ and $y$ denote the input and output of the model, respectively; $W$ represents the trainable weights of the model; $l(\cdot)$ is the original loss function of the model; $\lambda$ denotes the scaling factor that balances the two terms; $\gamma$ indicates the neuron parameters to be scaled; $\Gamma$ is the set of neurons subject to scaling; and $g(\cdot)$ refers to the sparsity-regularized loss function. In this work, the L1 regularization is employed as the sparsity-inducing loss function, i.e., $g(\gamma) = |\gamma|$.
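The effect of the L1 term can be seen in a few lines of plain Python. This is a minimal sketch, assuming a plain (sub)gradient step; the function names, the scaling factors, and the values of `lam` and `lr` are illustrative and are not the settings used in this study. With the task gradient set to zero, the penalty alone pulls every scaling factor toward zero by the same amount per step, so factors that the task loss does not defend end up near zero.

```python
def sparsity_loss(task_loss, gammas, lam):
    """Original loss plus the L1 penalty on the scaling factors."""
    return task_loss + lam * sum(abs(g) for g in gammas)


def sign(v):
    """Subgradient of |v| (0 is used at v == 0)."""
    return (v > 0) - (v < 0)


def sparse_step(gammas, task_grads, lam, lr):
    """One gradient step on the scaling factors under the penalized loss."""
    return [g - lr * (tg + lam * sign(g)) for g, tg in zip(gammas, task_grads)]


gammas = [0.90, -0.40, 0.02]  # made-up scaling factors of three channels
total = sparsity_loss(task_loss=1.25, gammas=gammas, lam=0.01)

# With a zero task gradient, only the penalty acts on the factors.
updated = sparse_step(gammas, task_grads=[0.0, 0.0, 0.0], lam=0.01, lr=0.1)

print(total)
print(updated)
```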

This study uses the scaling parameter of the BN layer (denoted as $\gamma$) as the sparsity-inducing factor to expedite model convergence during sparse training, thereby eliminating the need for additional scaling layers. This method not only streamlines the network architecture but also exploits the intrinsic properties of BN layers, thus enhancing training efficiency. Since both convolutional and BN layers perform linear transformations, they are commonly fused during inference. The sparsity achieved within the BN layers can then be efficiently utilized for global structural pruning of the network. The pruned model is then fine-tuned on the traffic cone dataset to optimize the remaining weights, enhance generalization, and recover the accuracy lost through pruning.
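A minimal sketch of the global channel selection step follows. It assumes that channels are ranked by the absolute value of their BN scaling factor across all layers and that the smallest fraction is removed; the layer names, the scaling factors, and the safeguard of keeping at least one channel per layer are illustrative assumptions rather than details taken from this study.

```python
def select_channels(gammas_per_layer, prune_ratio):
    """Return, per layer, the channel indices kept under one global threshold."""
    all_gammas = sorted(abs(g) for layer in gammas_per_layer.values() for g in layer)
    n_prune = int(len(all_gammas) * prune_ratio)
    # Channels whose |gamma| is at or below the threshold are pruned
    # (ties at the threshold are pruned as well).
    threshold = all_gammas[n_prune - 1] if n_prune > 0 else -1.0
    keep = {}
    for name, layer in gammas_per_layer.items():
        kept = [i for i, g in enumerate(layer) if abs(g) > threshold]
        if not kept:
            # Assumed safeguard: never remove a whole layer.
            kept = [max(range(len(layer)), key=lambda i: abs(layer[i]))]
        keep[name] = kept
    return keep


# Made-up scaling factors after sparse training.
gammas = {
    "conv1": [0.80, 0.01, 0.35, 0.002],
    "conv2": [0.03, 0.60, 0.45, 0.004],
}

keep_half = select_channels(gammas, prune_ratio=0.5)

# Sweep over the same pruning ratios as the pruning workflow.
for ratio in (0.1, 0.3, 0.5, 0.7):
    kept = select_channels(gammas, ratio)
    print(ratio, {name: len(idx) for name, idx in kept.items()})
```

In a full pipeline, each candidate would be fine-tuned and evaluated before the best compact network is chosen.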

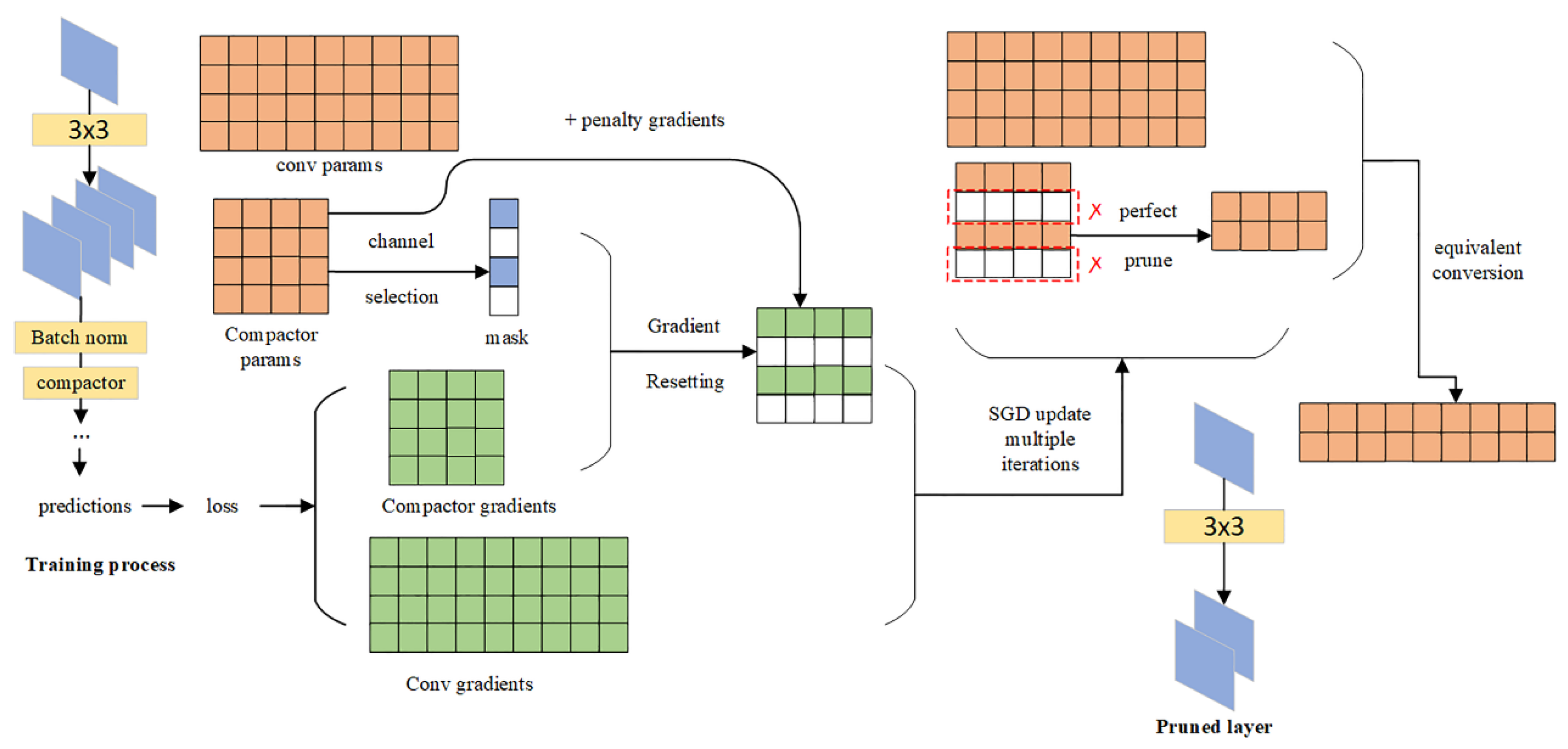

3.2.2. Model Pruning Strategy Based on Structural Reparameterization

The concept of structural reparameterization was initially introduced by the RepConv [32] and RepVGG [33] networks. This approach employs a complex multi-branch architecture during the training phase, which enhances feature extraction and accelerates convergence. Crucially, during inference, this multi-branch structure is reparameterized into an equivalent single-branch architecture. This transformation significantly improves computational efficiency while maintaining model accuracy.

The concept of structural reparameterization is founded on the principle of kernel equivalence transformation. During inference, convolutional layers are first fused with their corresponding BN layers. Subsequently, identity mappings within the multi-branch architecture are transformed into 1 × 1 convolutional kernels with identity matrix weights. Notably, a 1 × 1 convolutional kernel can be reinterpreted as a 3 × 3 convolutional kernel by zero-padding it around its center. By utilizing these transformations, all convolutional kernels from different branches can be unified into 3 × 3 kernels and then merged by summation. This converts the complex multi-branch structure into a streamlined single-branch architecture and significantly simplifies the inference process. The principle of this approach is illustrated in Figure 6.
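The kernel equivalence can be checked numerically. The sketch below is a simplified single-channel illustration in plain Python and assumes that the BN layers have already been folded into the kernels; the kernel values and the 3 × 3 input are made up. It compares the sum of a 3 × 3 branch, a 1 × 1 branch, and an identity branch against one merged 3 × 3 convolution.

```python
def conv3x3_same(image, kernel):
    """3x3 cross-correlation with zero padding, so the output keeps the input size."""
    h, w = len(image), len(image[0])
    out = [[0.0] * w for _ in range(h)]
    for r in range(h):
        for c in range(w):
            acc = 0.0
            for dr in range(3):
                for dc in range(3):
                    rr, cc = r + dr - 1, c + dc - 1
                    if 0 <= rr < h and 0 <= cc < w:
                        acc += kernel[dr][dc] * image[rr][cc]
            out[r][c] = acc
    return out


def pad_1x1_to_3x3(weight):
    """Embed a 1x1 kernel at the center of an all-zero 3x3 kernel."""
    return [[0.0, 0.0, 0.0], [0.0, weight, 0.0], [0.0, 0.0, 0.0]]


def add_kernels(*kernels):
    """Element-wise sum of 3x3 kernels."""
    return [[sum(k[r][c] for k in kernels) for c in range(3)] for r in range(3)]


k3 = [[0.1, -0.2, 0.0], [0.5, 0.3, -0.1], [0.0, 0.2, 0.4]]  # 3x3 branch
k1 = 0.7                                                     # 1x1 branch
image = [[1.0, 2.0, 3.0], [4.0, 5.0, 6.0], [7.0, 8.0, 9.0]]

# Training-time form: three parallel branches whose outputs are summed.
branch3 = conv3x3_same(image, k3)
multi = [[branch3[r][c] + k1 * image[r][c] + image[r][c] for c in range(3)]
         for r in range(3)]

# Inference-time form: the identity is a 1x1 kernel with weight 1, and both
# 1x1 kernels are zero-padded to 3x3 before all kernels are summed.
merged_kernel = add_kernels(k3, pad_1x1_to_3x3(k1), pad_1x1_to_3x3(1.0))
single = conv3x3_same(image, merged_kernel)

max_diff = max(abs(multi[r][c] - single[r][c]) for r in range(3) for c in range(3))
print(max_diff)  # difference between the two forms (floating-point rounding only)
```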

The BN layer sparsity-based pruning strategy identifies and removes less important neuron channels whose scaling factors fall below a predetermined threshold, thereby lightening the model through sparse training. However, this approach typically leads to a noticeable decline in model accuracy. Moreover, conventional pruning techniques often combine channel importance assessment with the feature fitting of convolutional layers. This simultaneous optimization creates conflicts during gradient descent, which in turn reduces pruning efficacy. To overcome these limitations, this study introduces a structural reparameterization-based pruning technique that decouples the training and pruning processes. This approach maintains model performance while reducing the accuracy loss commonly associated with traditional pruning strategies [34]. The overall pruning pipeline is illustrated in Figure 7.

The structural reparameterization-based model pruning strategy introduces a 1 × 1 identity convolutional kernel after each convolutional layer. This ensures the original convolutional structures can continue to effectively fit image features from the dataset during sparse training. Crucially, the convolution operation and loss computation remain unchanged. The newly added identity kernel solely evaluates the importance of each neuron channel. To effectively decouple the training and pruning tasks, a gradient penalty term is imposed on this identity kernel, specifically preventing it from participating in feature extraction. This approach ensures the pruning process does not interfere with the model’s feature extraction capabilities, allowing the model to maintain high accuracy while achieving a lightweight design. The loss function applied to the identity kernel during sparse training is defined in Equation (3).

$$L_{total} = L_{cone} + \lambda L_{1} \tag{3}$$

where $L_{cone}$ denotes the loss function of the YOLOv13-Cone algorithm; $\lambda$ represents the sparsity-inducing scaling factor in the model; and $L_{1}$ is the L1 regularization loss function.

Based on Equation (3), the gradient computation formula for the identity convolution kernel during the model’s sparse training process can be derived, as shown in Equation (4).

where

represents the gradient penalty term, which is set to zero to ensure that the identity convolution kernel performs only the pruning task, thereby decoupling it from the model training task. Meanwhile, the presence of the gradient penalty drives certain channels of the identity convolution kernel toward zero after sparse training, which enables the identification of less important neuron channels.

Upon concluding sparse training, neuron channels within the identity convolutional kernel whose scaling factors approach zero are eliminated. Subsequently, the original convolutional kernel is fused with the identity kernel into a single equivalent kernel through structural reparameterization, thereby achieving channel pruning. This channel pruning technique, based on structural reparameterization, effectively alleviates the detrimental effects of sparse training on the original network architecture. It reduces the typical decline in model accuracy associated with channel pruning, thus achieving model lightweighting while preserving the stability and integrity of model performance.
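The fusion step can be sketched with small matrices. The example assumes that each output channel of the original kernel is flattened into one row, so that applying the 1 × 1 identity kernel afterwards is a matrix product over channels; the kernel values, the threshold of 0.01, and the helper names are illustrative assumptions. Removing a near-zero row of the identity kernel and multiplying the two kernels yields one smaller kernel that gives the same output as running both layers in sequence.

```python
def matmul(a, b):
    """Plain matrix product of two nested-list matrices."""
    return [[sum(a[i][k] * b[k][j] for k in range(len(b)))
             for j in range(len(b[0]))] for i in range(len(a))]


def prune_rows(kernel, threshold):
    """Drop output channels (rows) whose weights are all close to zero."""
    return [row for row in kernel if max(abs(v) for v in row) > threshold]


# Original kernel: 3 output channels, each row holds the flattened weights.
conv_kernel = [
    [0.2, -0.1, 0.4, 0.3],
    [0.5, 0.1, -0.3, 0.2],
    [-0.2, 0.6, 0.1, 0.0],
]

# Identity (1x1) kernel after sparse training: channel 1 was driven to ~0.
identity_kernel = [
    [0.9, 0.0, 0.0],
    [0.0, 1e-4, 0.0],
    [0.0, 0.0, 1.1],
]

pruned_identity = prune_rows(identity_kernel, threshold=1e-2)  # 2 x 3
merged = matmul(pruned_identity, conv_kernel)                  # 2 x 4

# Check on one flattened input patch: two layers versus the merged kernel.
patch = [[1.0], [2.0], [-1.0], [0.5]]
two_step = matmul(pruned_identity, matmul(conv_kernel, patch))
one_step = matmul(merged, patch)

print(len(conv_kernel), "->", len(merged), "output channels")
```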

3.3. Lightweight Design of Object Detection Algorithm Based on Model Quantization

Model quantization is a widely used method for compressing deep neural networks. It reduces both storage demands and computational expenses by converting high-precision weights and activations into lower-precision representations [35]. This process not only cuts down memory usage but also boosts inference efficiency and resource utilization while largely preserving model accuracy. Consequently, quantization is particularly well-suited for deployment on resource-limited embedded systems [36].

Although the YOLOv13-Cone algorithm’s real-time performance improves significantly after pruning with the structural reparameterization-based technique, there is still room for further enhancement. To further boost the algorithm’s inference efficiency on the Jetson Orin NX embedded platform, this study applies model quantization with TensorRT. This approach creates a lightweight version of the YOLOv13-Cone model, leading to improved performance and resource utilization efficiency.

3.3.1. Model Lightweight Design Based on TensorRT

TensorRT, developed by NVIDIA, is a high-performance toolkit for inference optimization and model quantization, specifically designed for deployment on NVIDIA GPUs. It boosts inference efficiency and optimizes resource consumption through techniques like layer fusion and parameter quantization, making it widely used for efficient deployment of deep learning models. At its core, TensorRT parses the network architecture to identify the most efficient GPU kernel for each operation. It fuses compatible layers, reduces weight precision, and builds a standalone inference engine. This eliminates runtime dependencies on frameworks like PyTorch, allowing direct invocation of CUDA kernels for inference execution. This study leverages TensorRT for the lightweight optimization of the YOLOv13-Cone model. The detailed quantization workflow is presented in Algorithm 2.

| Algorithm 2 Workflow of model quantization. |
| --- |
| Input: Initial network |
| Output: Quantized network |
| 1. Convert the trained model to ONNX format |
| 2. Create objects and networks, and read weight coefficients |
| 3. Optimize the network based on input, output, and network structure |
| 4. Build the optimized network as an engine object and serialize it into a file |
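The steps of Algorithm 2 can be sketched with `trtexec`, the command-line tool that ships with TensorRT. The snippet below only assembles the command, so it runs without a GPU. The helper name `build_trtexec_command` and the file names are hypothetical placeholders, not the authors' pipeline, and the ONNX file is assumed to have been exported from the trained model beforehand (step 1).

```python
from typing import List, Optional


def build_trtexec_command(onnx_path: str, engine_path: str,
                          precision: str = "fp32",
                          calib_cache: Optional[str] = None) -> List[str]:
    """Assemble a trtexec call that parses an ONNX model (step 2), lets
    TensorRT optimize it (step 3), and serializes the engine (step 4)."""
    if precision not in ("fp32", "fp16", "int8"):
        raise ValueError(f"unsupported precision: {precision}")
    cmd = ["trtexec", f"--onnx={onnx_path}", f"--saveEngine={engine_path}"]
    if precision == "fp16":
        cmd.append("--fp16")
    elif precision == "int8":
        cmd.append("--int8")
        if calib_cache is not None:
            # Calibration cache produced by a separate calibration run.
            cmd.append(f"--calib={calib_cache}")
    return cmd


# Placeholder file names, for illustration only.
command = build_trtexec_command("yolov13_cone.onnx", "yolov13_cone_int8.engine",
                                precision="int8", calib_cache="calib.cache")
print(" ".join(command))
```

The returned list can be passed to `subprocess.run` on a machine where TensorRT is installed; the resulting engine is specific to that TensorRT version and GPU.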

3.3.2. Principle of Layer Fusion

Deep learning algorithms heavily rely on the parallel computing capabilities of high-performance GPUs for intensive data processing. While the Jetson Orin NX embedded device boasts a powerful 1024-core Ampere GPU architecture and 32 Tensor Cores, delivering up to 100 TOPS INT8 computation capability, its memory bandwidth is constrained to 102.4 GB/s. This notable disparity between data transfer rate and processing capacity creates a significant performance bottleneck [37]. Such an imbalance, where computational capacity outpaces memory bandwidth, is common in embedded systems. The problem intensifies with increased model complexity, leading to prolonged data transfer durations.
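As a rough, back-of-the-envelope illustration of this imbalance, the two figures quoted above can be turned into the number of INT8 operations that must be performed per byte fetched from memory before the GPU, rather than the memory bus, becomes the limit. The function name is hypothetical and the estimate treats both figures as sustained peaks, which is an idealization.

```python
PEAK_INT8_OPS_PER_S = 100e12          # 100 TOPS, as quoted above
MEM_BANDWIDTH_BYTES_PER_S = 102.4e9   # 102.4 GB/s, as quoted above


def ops_per_byte_to_saturate(peak_ops: float, bandwidth: float) -> float:
    """Operations needed per byte of memory traffic to keep the GPU busy."""
    return peak_ops / bandwidth


balance = ops_per_byte_to_saturate(PEAK_INT8_OPS_PER_S, MEM_BANDWIDTH_BYTES_PER_S)
print(f"{balance:.0f} INT8 operations per byte")  # about 977
```

Layers that perform far fewer operations per byte than this are limited by memory traffic, which is why reducing data movement matters on this platform.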

To address this issue, TensorRT employs a layer fusion strategy to enhance the network architecture. Specifically, convolutional layers, BN layers, and activation functions are fused vertically, while convolutional layers with identical kernel configurations are merged horizontally. These fusion operations significantly reduce the frequency of data transfers between memory and computational units. This, in turn, decreases resource consumption and substantially enhances inference efficiency. As illustrated in Figure 8, this layer fusion strategy also significantly simplifies the network architecture.

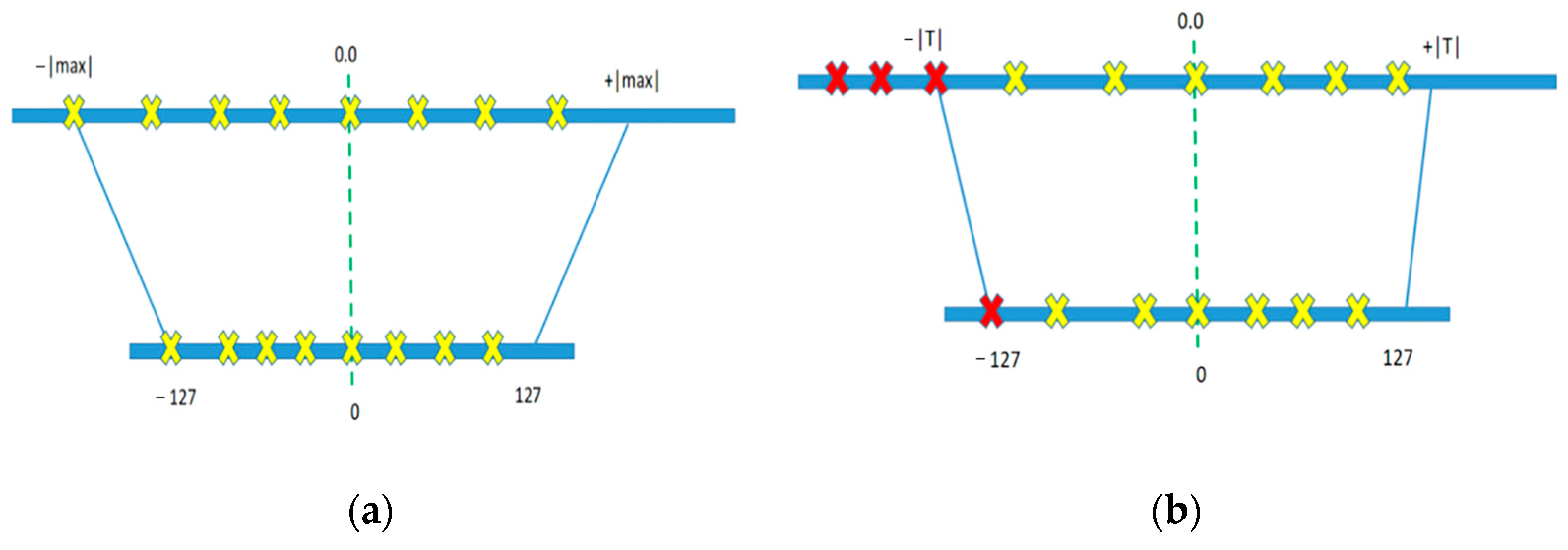
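The vertical fusion of a convolution with its BN layer can be illustrated by folding the BN statistics into the convolution weights. The sketch below is a minimal pure-Python illustration for a 1×1 convolution evaluated at a single pixel; all function names and numeric values are made up for the example, and TensorRT performs this kind of folding internally rather than through user code.

```python
import math
from typing import List, Tuple


def conv1x1(x: List[float], weight: List[List[float]], bias: List[float]) -> List[float]:
    """1x1 convolution at one pixel: a matrix-vector product plus bias."""
    return [sum(w * v for w, v in zip(row, x)) + b for row, b in zip(weight, bias)]


def batch_norm(y, gamma, beta, mean, var, eps=1e-5) -> List[float]:
    """Inference-time batch normalization, applied per output channel."""
    return [g * (v - m) / math.sqrt(s + eps) + b
            for v, g, b, m, s in zip(y, gamma, beta, mean, var)]


def fold_bn(weight, bias, gamma, beta, mean, var, eps=1e-5) -> Tuple[list, list]:
    """Fold BN parameters into the convolution so one layer does both jobs."""
    fused_w, fused_b = [], []
    for row, b, g, bt, m, s in zip(weight, bias, gamma, beta, mean, var):
        scale = g / math.sqrt(s + eps)
        fused_w.append([w * scale for w in row])
        fused_b.append((b - m) * scale + bt)
    return fused_w, fused_b


# Illustrative values: 3 input channels, 2 output channels.
x = [1.0, -2.0, 0.5]
weight = [[0.2, -0.1, 0.4], [0.7, 0.3, -0.5]]
bias = [0.1, -0.2]
gamma, beta = [1.5, 0.8], [0.05, -0.1]
mean, var = [0.3, -0.4], [0.9, 1.6]

reference = batch_norm(conv1x1(x, weight, bias), gamma, beta, mean, var)
fused = conv1x1(x, *fold_bn(weight, bias, gamma, beta, mean, var))
print(all(abs(a - b) < 1e-9 for a, b in zip(reference, fused)))
```

The fused layer produces the same output with one pass over the data instead of two, which is the source of the reduced memory traffic described above.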

3.3.3. INT8 Quantization Principle

Mainstream deep learning models typically store weights in 32-bit floating-point (FP32) format to maintain computational precision. However, FP32 consumes 4 bytes per weight and involves intricate computations, which leads to longer inference times compared to integer arithmetic [38]. Conversely, the 8-bit integer (INT8) format uses only 1 byte per weight, significantly reducing memory usage and bandwidth requirements. Moreover, INT8 arithmetic is well suited to GPU acceleration, offering enhanced parallelism and reduced power consumption. Consequently, in this study, we quantize model weights from FP32 to INT8.

The quantization process employs a linear mapping strategy, which is classified into two main approaches: non-saturating and saturating quantization. The non-saturating method maps the full value range based on the maximum absolute weight, directly scaling values to the range of [−127, 127]. However, this approach is highly sensitive to outliers, often resulting in a reduced effective quantization range and consequently decreased accuracy. In contrast, the saturating method determines a threshold from the weight distribution: parameters falling within this threshold are linearly scaled, while those exceeding it are clipped to ±127. This technique effectively mitigates the impact of outliers, achieving a superior balance between inference accuracy and computational efficiency. The contrast between saturating and non-saturating quantization is visually represented in Figure 9.
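The difference between the two mappings can be seen on a small example. The following is a minimal sketch assuming symmetric linear quantization with a single threshold; the weight values and the hand-picked threshold of 0.12 are illustrative only, and `quantize_int8` is a hypothetical helper name.

```python
from typing import List


def quantize_int8(values: List[float], threshold: float) -> List[int]:
    """Map [-threshold, threshold] linearly onto [-127, 127];
    values beyond the threshold saturate at +/-127."""
    scale = 127.0 / threshold
    return [max(-127, min(127, round(v * scale))) for v in values]


weights = [0.02, -0.05, 0.11, -0.08, 0.04, 6.0]  # a single outlier at 6.0

# Non-saturating: the threshold is the maximum absolute value.
non_saturating = quantize_int8(weights, max(abs(w) for w in weights))
# Saturating: a smaller threshold clips the outlier to 127.
saturating = quantize_int8(weights, 0.12)

print(non_saturating)  # [0, -1, 2, -2, 1, 127]
print(saturating)      # [21, -53, 116, -85, 42, 127]
```

With the non-saturating mapping the outlier consumes almost the entire integer range and the five ordinary weights collapse onto a handful of levels, whereas the saturating mapping keeps them well separated at the cost of clipping one value.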

This study employs the saturating mapping approach to quantize the model’s neural network weights. The Kullback–Leibler (KL) divergence calibration method is used to determine optimal quantization thresholds, aiming to minimize computational errors from quantization and maintain stable inference accuracy. During the quantization process, a subset of the dataset is first designated as a calibration set. This set is then analyzed using the original FP32 model to gather intermediate activation statistics. Subsequently, the KL divergence technique determines the ideal clipping threshold for each layer of the neural network, thereby improving the overall quality of quantization.

KL divergence, or relative entropy, quantifies the disparity between two probability distributions. In model quantization, it measures the distributional shift between the weights before and after quantization and therefore reflects the error that quantization introduces [39]. It also guides the selection of optimal quantization thresholds [40], reducing inference latency while ensuring that the quantized model retains as much accuracy as possible. Its formulation is given in Equation (5).

$$D_{KL}(P \,\|\, Q) = \sum_{i} P(x_i) \log \frac{P(x_i)}{Q(x_i)} \qquad (5)$$

where $P$ is the probability density function of the pre-quantization weight distribution, and $Q$ represents that of the quantized weight distribution. $D_{KL}(P \,\|\, Q)$ is the KL divergence value, with a smaller value indicating that the quantized distribution more closely approximates the original distribution.

The KL divergence calibration method begins by collecting the weight parameters of each neural network layer. These parameters are then randomly partitioned into multiple subsets to construct corresponding histograms, which visually depict the weight distributions. Next, a set of candidate clipping thresholds is selected based on these histograms. Under each threshold, the neuron weights are quantized. For each candidate, the KL divergence between the original and quantized distributions is calculated. This step assesses the degree of distributional distortion caused by quantization.

The threshold that minimizes the KL divergence is selected as the optimal clipping value for that layer. This calibration method effectively preserves the statistical characteristics of the original weight distributions while minimizing quantization-induced errors, thus helping ensure that the streamlined model maintains robust performance.
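The threshold search described above can be sketched as follows. This is a simplified, pure-Python illustration of histogram-based KL calibration, not the TensorRT implementation: the bin count of 1024, the 128 quantization levels, the floor `eps` used to keep the logarithm finite, and the synthetic data are all assumptions made for the example.

```python
import math
import random
from typing import Sequence


def kl_divergence(p: Sequence[float], q: Sequence[float], eps: float = 1e-9) -> float:
    """D_KL(P || Q) of two histograms over the same bins, after normalization.
    Empty bins of Q are floored at eps (a smoothing assumption)."""
    p_sum, q_sum = float(sum(p)), float(sum(q))
    if q_sum == 0.0:
        return math.inf
    total = 0.0
    for p_i, q_i in zip(p, q):
        if p_i > 0:
            p_n = p_i / p_sum
            q_n = max(q_i / q_sum, eps)
            total += p_n * math.log(p_n / q_n)
    return total


def kl_threshold(values: Sequence[float], num_bins: int = 1024,
                 num_levels: int = 128) -> float:
    """Return the clipping threshold whose quantized histogram is closest
    (in KL divergence) to the histogram of |values|."""
    magnitudes = [abs(v) for v in values]
    max_val = max(magnitudes)
    if max_val == 0.0:
        raise ValueError("all values are zero; nothing to calibrate")
    bin_width = max_val / num_bins
    hist = [0] * num_bins
    for m in magnitudes:
        hist[min(int(m / bin_width), num_bins - 1)] += 1

    best_kl, best_edge = math.inf, num_bins
    for edge in range(num_levels, num_bins + 1):
        # Reference P: keep the first `edge` bins, fold clipped mass into the last.
        p = hist[:edge]
        p[-1] += sum(hist[edge:])
        # Candidate Q: merge those bins into num_levels groups, then spread each
        # group's count evenly over the bins that are non-empty in P.
        q = [0.0] * edge
        for level in range(num_levels):
            start = level * edge // num_levels
            stop = (level + 1) * edge // num_levels
            occupied = [i for i in range(start, stop) if p[i] > 0]
            if occupied:
                share = sum(hist[start:stop]) / len(occupied)
                for i in occupied:
                    q[i] = share
        kl = kl_divergence(p, q)
        if kl < best_kl:
            best_kl, best_edge = kl, edge
    return best_edge * bin_width


random.seed(0)
samples = [random.gauss(0.0, 1.0) for _ in range(2000)] + [50.0]  # synthetic outlier
threshold = kl_threshold(samples)
print(f"max |x| = {max(abs(s) for s in samples):.1f}, KL threshold = {threshold:.2f}")
```

The returned threshold can then be used as the clipping value of a saturating INT8 mapping for that layer.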

4. Experiment

Experiments were executed on a fixed Jetson Orin NX platform (16 GB variant) to mitigate system-induced variability. This platform is equipped with a 1024-core NVIDIA Ampere architecture GPU featuring 32 Tensor Cores and runs on the Ubuntu 20.04 operating system. Key software configurations for the perception algorithm are detailed in Table 2.

For reliability assurance, a traffic cone dataset was constructed, covering 5 typical scenarios (normal light, backlight, shadow, long-range small targets, dense arrangement) with 10,000 images. Data were split into training, validation, and test sets at a 7:2:1 ratio, and all annotations underwent cross-verification by 3 annotators, controlling the annotation error rate below 2%. Model training employed fixed hyperparameters (learning rate = 0.001, epochs = 300, batch size = 16) and was repeated 3 times. The average result is reported, with the standard deviation controlled below 0.5%.
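As an illustration of the 7:2:1 split, the sketch below partitions 10,000 image names reproducibly. The file names are hypothetical, and reusing the value 42 as the shuffle seed is an assumption made for the example rather than a detail reported for the split itself.

```python
import random
from typing import List, Sequence, Tuple


def split_dataset(items: Sequence[str], ratios: Tuple[int, int, int] = (7, 2, 1),
                  seed: int = 42) -> Tuple[List[str], List[str], List[str]]:
    """Shuffle deterministically, then cut into train/validation/test parts."""
    shuffled = list(items)
    random.Random(seed).shuffle(shuffled)
    total = sum(ratios)
    n_train = len(shuffled) * ratios[0] // total
    n_val = len(shuffled) * ratios[1] // total
    train = shuffled[:n_train]
    val = shuffled[n_train:n_train + n_val]
    test = shuffled[n_train + n_val:]
    return train, val, test


image_names = [f"cone_{i:05d}.jpg" for i in range(10_000)]  # hypothetical names
train, val, test = split_dataset(image_names)
print(len(train), len(val), len(test))  # 7000 2000 1000
```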

To account for uncertainty in data collection, where the data distribution may fluctuate with camera shooting angle (deviation of ±5°), distance (error of ±0.5 m), and illumination intensity (fluctuation of ±100 lux), the data augmentation stage applies random rotation (−10° to 10°), scaling (0.8 to 1.2 times), and brightness adjustment (0.5 to 1.5 times). This enhances the model’s tolerance to input perturbations.
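The augmentation ranges above can be expressed as a small parameter sampler. This sketch assumes each parameter is drawn uniformly and independently, which the text does not specify; `AugmentParams` and `sample_augmentation` are hypothetical names, and applying the parameters to an image is left to the training framework.

```python
import random
from dataclasses import dataclass


@dataclass
class AugmentParams:
    angle_deg: float   # rotation in degrees
    scale: float       # multiplicative scaling factor
    brightness: float  # multiplicative brightness factor


def sample_augmentation(rng: random.Random) -> AugmentParams:
    """Draw one set of augmentation parameters from the stated ranges."""
    return AugmentParams(
        angle_deg=rng.uniform(-10.0, 10.0),
        scale=rng.uniform(0.8, 1.2),
        brightness=rng.uniform(0.5, 1.5),
    )


rng = random.Random(42)
print(sample_augmentation(rng))
```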

To limit randomness in model training, which arises from the random initialization of network weights and the data loading order, the random seed was fixed (seed = 42) and multi-round training was used to select the best model. The performance difference across training rounds is thereby kept within 1%.

To limit uncertainty in hardware computation, since fluctuations in the temperature and memory usage of the industrial personal computer may affect inference speed, the ambient temperature was held at a constant 25 °C and redundant background processes were closed during the experiments. This stabilizes the GPU utilization rate at 90% ± 5% and keeps the FPS measurement error within 2 Hz.

4.1. Experimental Analysis of Network Architecture Optimization

The YOLOv13s network was enhanced by integrating the refined DS-C3k2_UIB module and the ConeDetect module. To evaluate the effectiveness of these improvements, the modified models were trained on a custom traffic cone dataset. To ensure an equitable comparison, the training protocols for both the original and improved models were executed on Chang’an University’s high-performance computing nodes, maintaining identical hyperparameters.

To further optimize the architecture, the TuNAS technique was employed for neural architecture search. This method combines reinforcement learning with loss function optimization to iteratively explore a shared-weight search space. To accelerate this search process, the convolutional kernel size of the UIB module was fixed at 3, and the dilation rate at 4. Additionally, the search space was limited exclusively to depthwise convolution operations.

For the internal design of the ConeDetect module, we adopted a unified optimization strategy. This was driven by the structural similarity between detection heads employing one-to-one and one-to-many label assignment methods. This strategy helps prevent fine-tuning failures that can arise from structural inconsistencies. By adaptively combining four UIB variants, we conducted an architecture search on the pre-trained YOLOv13-Cone model. This process ultimately led to enhanced UIB module performance. The final configuration of the optimized UIB module is presented in Table 3.

In the table, the Structure column details the hierarchical order of the YOLOv13-Cone network and specifies the precise placement of each UIB module within the DS-C3k2_UIB and ConeDetect components. The Block column indicates the variant type of the UIB module as optimized by TuNAS. DW k1 and DW k2 denote the configurations of the optimized depthwise convolutions.

After architectural optimization, the model was fine-tuned using the same hyperparameter settings as in the pretraining phase. After 290 training epochs, image augmentation was disabled. An additional 10 epochs of training were then conducted using original images to enhance the model’s adaptability to real-world deployment scenarios.
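The two-phase fine-tuning schedule can be written as a simple per-epoch switch. This is a minimal sketch with a hypothetical helper name and 0-based epoch indices; how the flag is wired into the data loader depends on the training framework.

```python
def augmentation_enabled(epoch: int, total_epochs: int = 300,
                         clean_tail: int = 10) -> bool:
    """True for the first 290 epochs (0-based), False for the last 10,
    which train on the original, unaugmented images."""
    return epoch < total_epochs - clean_tail


schedule = [augmentation_enabled(e) for e in range(300)]
print(sum(schedule), schedule[289], schedule[290])  # 290 True False
```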

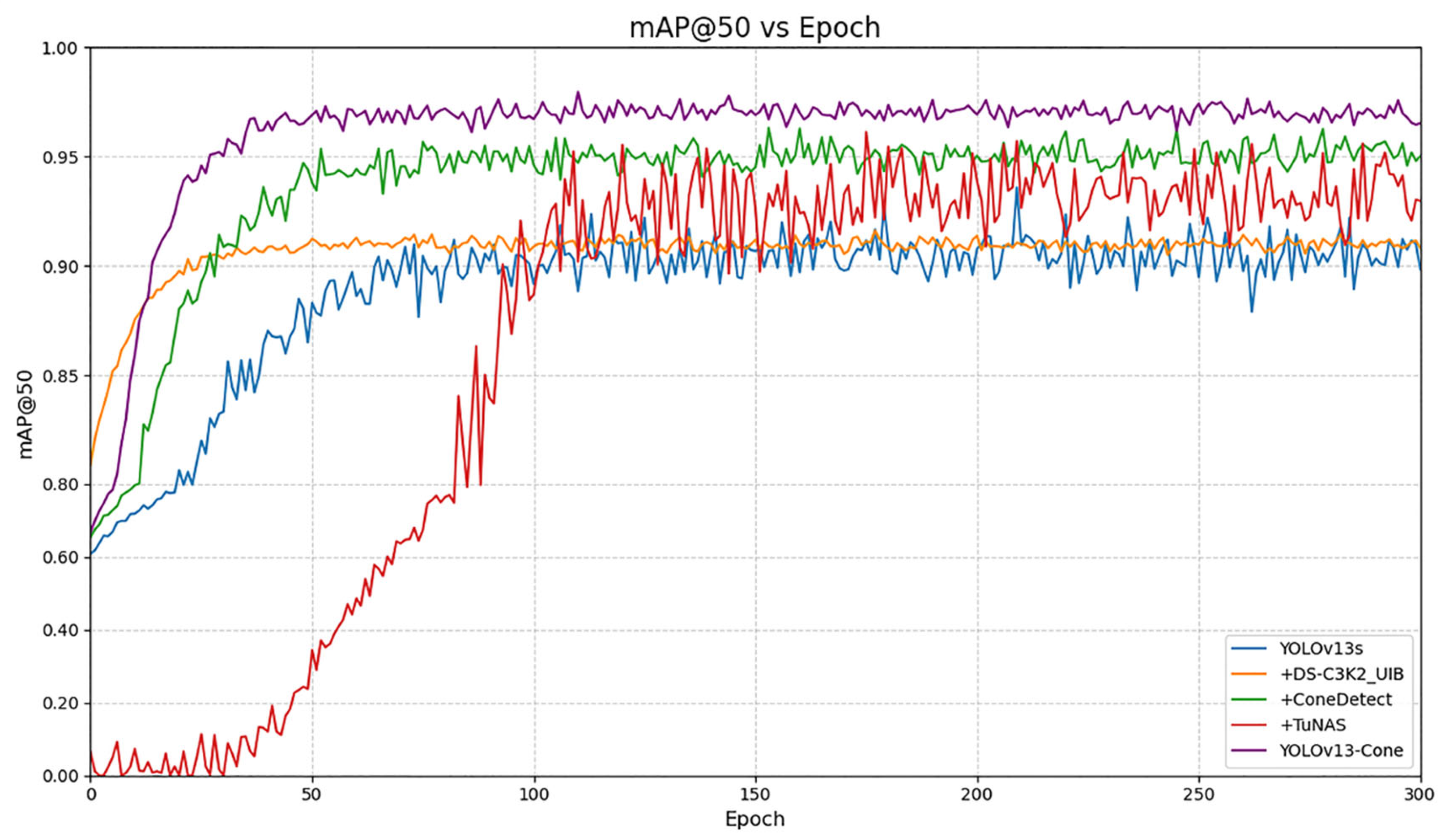

We conducted an ablation study on the traffic cone validation set to assess the performance of the proposed YOLOv13-Cone algorithm. The assessment metrics include mean Average Precision at an IoU threshold of 0.5 (mAP50), Frames Per Second (FPS), and the total number of parameters. These results are presented in Table 4.

In the table, YOLOv13s serves as the baseline model for our ablation study, where each subsequent network configuration represents an incremental alteration from its predecessor. YOLOv13-Cone denotes the final, optimized model after fine-tuning, with the table detailing performance metrics after each structural modification.

The ablation results show that integrating the enhanced DS-C3k2_UIB module into the Backbone raises the model’s mAP50 from 88.6% to 91.1%, substantially improving its capacity to extract image features and its detection precision. However, the initial use of ExtraDw as the default UIB variant (before TuNAS modification) introduced additional structural complexity, which reduced inference speed: the frame rate dropped from 51 Hz to 44 Hz. In the Head, incorporating the ConeDetect module further raised mAP50 from 91.1% to 93.2% and increased the frame rate from 44 Hz to 48 Hz. This improvement is twofold: it not only boosts detection accuracy but also eliminates the inference latency caused by the NMS algorithm during post-processing, thereby enhancing overall inference efficiency. Finally, the TuNAS technique was applied for structural optimization, followed by fine-tuning. This raised mAP50 from 93.2% to 94.4% and the frame rate from 48 Hz to 57 Hz, improving both detection precision and real-time performance.

Figure 10 illustrates the mAP50 comparison curve from the ablation study. Compared to the original model, the mAP50 increased from 88.6% to 94.4%, and the FPS rose from 51 Hz to 57 Hz. These represent relative improvements of approximately 6.5% and 11.8%, respectively. These findings indicate that the proposed structural alterations, which include the UIB and ConeDetect modules, significantly enhance both the accuracy and real-time efficiency of the YOLOv13s network.

As illustrated in Figure 11, a comparison of the confusion matrices (before and after improvements) reveals that the enhanced YOLOv13-Cone model significantly outperforms the baseline YOLOv13s in detecting all three colors of traffic cones. Specifically, the mAP50 for yellow cones increased from 85% to 94%, for blue cones from 91% to 96%, and for red cones from 86% to 96%. These results confirm that the proposed optimization strategies substantially improve the YOLOv13s model’s performance in traffic cone detection. This not only leads to a significant enhancement in detection accuracy but also further strengthens the overall efficacy of the perception algorithm.

When operating on outdoor enclosed tracks, autonomous formula racing cars are susceptible to environmental disturbances, such as varying lighting conditions, which can adversely affect camera-based perception. To ensure accurate acquisition of road environment data, the object detection algorithm must exhibit strong robustness.

In this study, the enhanced YOLOv13-Cone model was used to detect traffic cones under both standard illumination and challenging conditions, including backlighting and low-light scenarios (as shown in Figure 12 and Figure 13). The experimental results demonstrate that the algorithm consistently achieves accurate detection of traffic cones across these diverse lighting environments, highlighting its strong robustness and practical applicability.

4.2. Experimental Analysis of Model Pruning

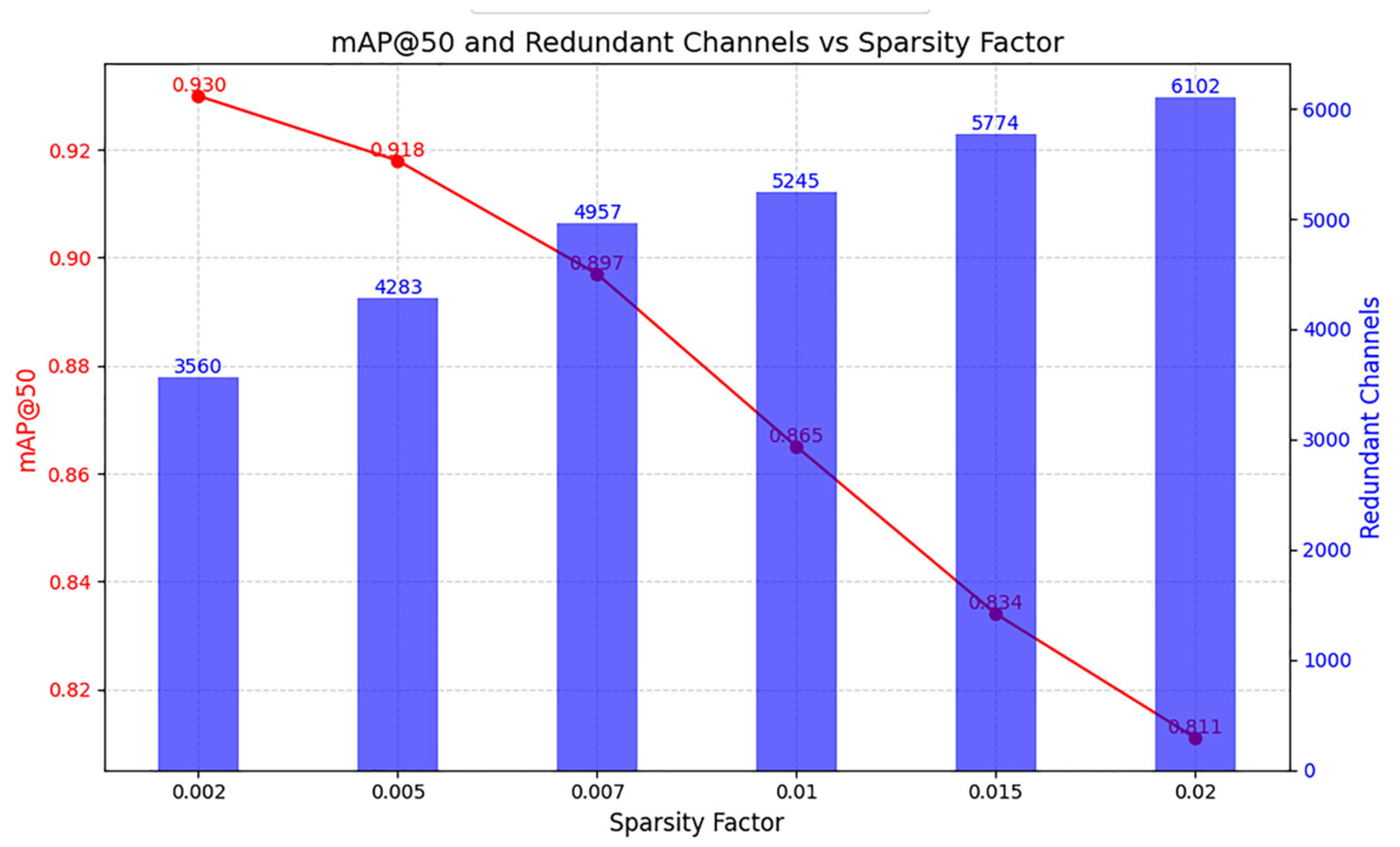

To preserve the algorithm’s performance after pruning, this study compresses the YOLOv13-Cone architecture by applying pruning exclusively to the Conv-BN-SiLU (CBS) modules within its Backbone and Neck components. The structure of all other modules is preserved. Two lightweight design strategies are applied to the YOLOv13-Cone network during pruning: one based on BN layer sparsity and the other on structural reparameterization. Both approaches involve sparsity training on a pretrained model to identify redundant channels with weight coefficients approaching zero.

The model pruning strategy based on BN layer sparsity necessitates selecting an appropriate sparsity coefficient. In this study, we applied various sparsity coefficients to the pretrained YOLOv13-Cone model for sparsity training. We then analyzed the coefficient’s impact on both mAP50 and the number of redundant channels. The experimental results are shown in Figure 14.

In Figure 14, the horizontal axis represents the sparsity coefficient, while the left and right vertical axes correspond to mAP50 and the number of redundant channels, respectively. As the sparsity coefficient increases, the number of redundant channels also rises. However, the mAP50 significantly degrades after sparsity training at higher coefficients. To ensure the pruning process minimally affects model accuracy, a sparsity coefficient of 0.005 was ultimately selected for channel pruning based on BN layer sparsity.

This study uniformly prunes neural channels with weight coefficients below 10⁻³ to ensure a consistent comparison of pruning effects. Subsequently, the pruned models are deployed on an industrial control computer and evaluated on the same validation dataset to assess their performance. The experimental results are presented in Table 5.
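The channel selection rule can be sketched as follows. The example assumes that sparsity training adds an L1 penalty on the BN scaling factors weighted by the sparsity coefficient, a common formulation that is an assumption here; the scaling factors are made-up values and both helper names are hypothetical.

```python
from typing import List, Sequence


def l1_sparsity_penalty(gammas: Sequence[float], coefficient: float = 0.005) -> float:
    """Extra loss term that pushes BN scaling factors toward zero (assumed L1 form)."""
    return coefficient * sum(abs(g) for g in gammas)


def channels_to_keep(gammas: Sequence[float], threshold: float = 1e-3) -> List[int]:
    """Indices of channels whose scaling factor magnitude reaches the threshold."""
    return [i for i, g in enumerate(gammas) if abs(g) >= threshold]


gammas = [0.82, 0.0004, -0.31, 0.0009, 0.05, -0.0002]  # illustrative values
print(channels_to_keep(gammas))  # [0, 2, 4]
print(l1_sparsity_penalty(gammas))
```

Channels 1, 3, and 5 fall below the 10⁻³ threshold and would be removed, together with the corresponding filters in the adjacent convolutions.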

Experimental findings show that the channel pruning strategy based on BN layer sparsity reduces the model size from 20.1 MB to 15.7 MB and raises the frame rate from 28 Hz to 41 Hz, a notable improvement in compactness and speed. However, it also lowers mAP50 from 94.2% to 92.7%, a drop of 1.5 percentage points in detection accuracy. In contrast, the pruning method based on structural reparameterization reduces the model size to 16.3 MB and increases the frame rate to 37 Hz while lowering mAP50 only slightly, from 94.2% to 93.8%, thereby demonstrating superior retention of detection performance after pruning.

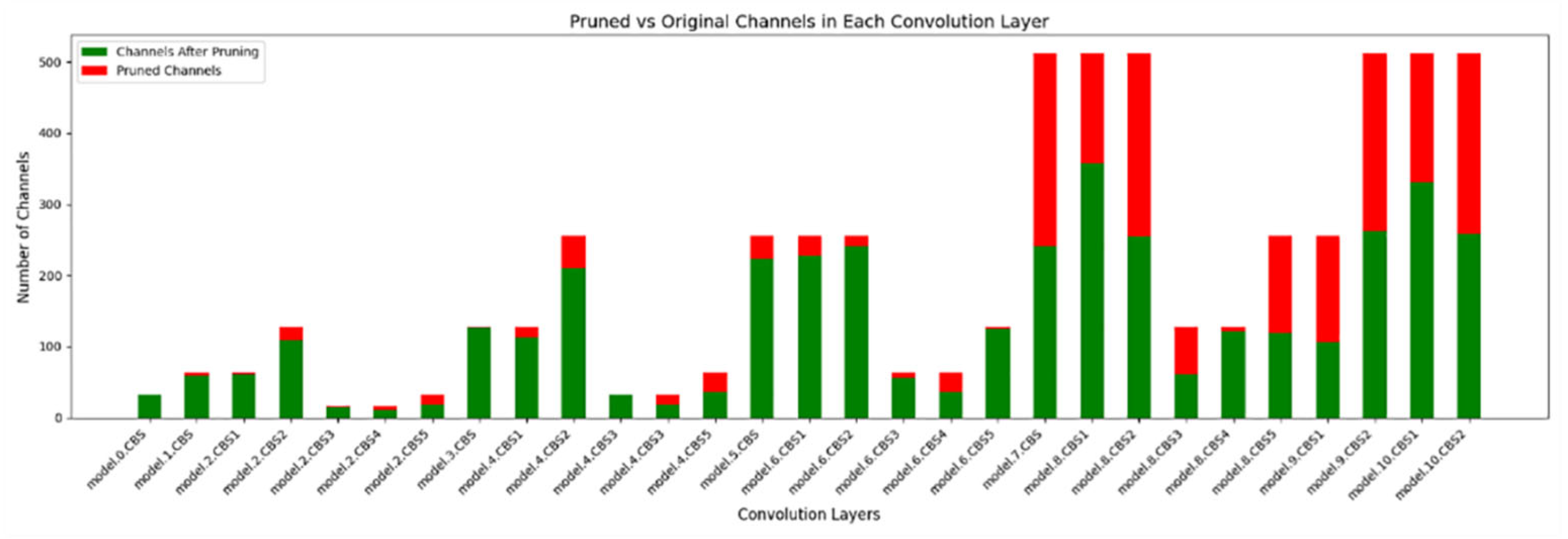

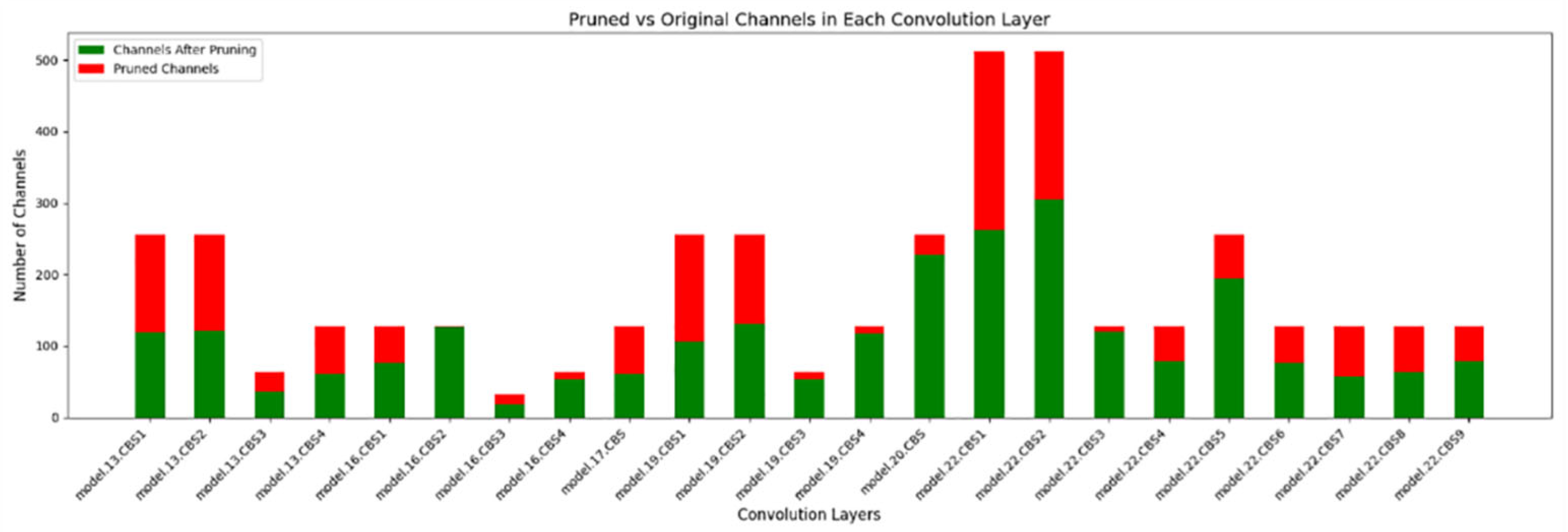

Although the pruning strategy based on structural reparameterization achieves slightly less model compression than the BN layer sparsity approach, it preserves detection accuracy considerably better. Therefore, this study ultimately adopts the structural reparameterization method to compress the YOLOv13-Cone model. The comparison of channel distributions before and after pruning is shown in Figure 15 and Figure 16.

4.3. Experimental Analysis of Quantization

In this study, the pruned YOLOv13-Cone model is quantized using the TensorRT framework. Engines are built at FP32, FP16, and INT8 precision to evaluate the feasibility of layer fusion and reduced-precision strategies in real-world deployment scenarios. TensorRT is an optimization toolkit that operates on the CUDA architecture of NVIDIA GPUs. Consequently, the quantized models are closely tied to the specific TensorRT version and the corresponding GPU hardware, which introduces compatibility challenges across different devices. All quantization and deployment experiments are therefore conducted on the same industrial control computer. The results are summarized in Table 6.

Experimental results indicate that building the pruned YOLOv13-Cone model as an FP32 engine, which optimizes the network structure solely through layer fusion without modifying weight precision, enhances real-time performance: the delay is reduced from 27.0 ms (baseline model) to 23.3 ms with only a minor reduction in detection accuracy, confirming the effectiveness of the layer fusion strategy. Compared with FP32, further quantization to FP16 and INT8 introduces some accuracy loss due to reduced weight precision but significantly improves inference speed. The delay drops to 19.6 ms for FP16 and to 14.7 ms for INT8, verifying the practicality of quantization, particularly for deployment on resource-constrained industrial control computers.

This study applies INT8 quantization via the TensorRT framework to the pruned YOLOv13-Cone architecture to derive the final lightweight model, YOLOv13-Cone-Lite. Compared with the pre-quantized model, the mAP50 decreases slightly from 93.8% to 92.9%, a reduction of only 0.9 percentage points. The inference speed improves significantly, with FPS increasing from 37 Hz to 68 Hz, nearly doubling throughput. In addition, the model size is reduced from 16.3 MB to 8.7 MB, substantially lowering storage requirements. These results validate the effectiveness of the proposed quantization method, demonstrating reduced inference latency while maintaining competitive detection accuracy. This meets the practical demands of autonomous formula racing cars, which require both high precision and real-time perception capabilities.
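The reported latencies and frame rates are two views of the same measurement, which a short calculation makes explicit. The sketch below uses only the figures quoted in this section; the helper names are hypothetical.

```python
def fps_from_latency(latency_ms: float) -> float:
    """Frames per second for a given per-frame latency in milliseconds."""
    return 1000.0 / latency_ms


def relative_reduction(before: float, after: float) -> float:
    """Fractional reduction from `before` to `after`."""
    return (before - after) / before


for name, ms in [("pruned baseline", 27.0), ("FP32", 23.3),
                 ("FP16", 19.6), ("INT8", 14.7)]:
    print(f"{name}: {fps_from_latency(ms):.1f} FPS")

size_cut = relative_reduction(16.3, 8.7)
print(f"model size reduction: {size_cut:.1%}")  # 46.6%
```

A latency of 27.0 ms corresponds to about 37 Hz and 14.7 ms to about 68 Hz, matching the frame rates stated for the pruned and INT8 models.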

Using the same test dataset, object detection was performed with both the original YOLOv13s model and the optimized YOLOv13-Cone-Lite model. Representative detection results are presented in Figure 17. A comparative analysis reveals that the proposed YOLOv13-Cone-Lite model achieves a notable improvement in detection accuracy. Specifically, for traffic cone targets, the enhanced model is capable of accurately identifying cones within the image, effectively addressing the missed and false detections that occur with distant targets in the original YOLOv13s model.

To further contextualize the performance of YOLOv13-Cone-Lite, we compare it with recent YOLO models; the results are shown in Table 7.

Among these models, YOLOv13-Cone-Lite stands out for its overall balance of accuracy, speed, and size. Its mAP50 of 92.9% reflects a strong ability to identify traffic cones accurately across different scenarios. Its frame rate of 68 Hz enables efficient real-time detection, which is crucial for applications like autonomous driving where timely responses are essential. With a model size of 8.7 MB, it also retains a lightweight advantage. Overall, compared with other contemporary YOLO models, YOLOv13-Cone-Lite demonstrates enhanced effectiveness and adaptability in traffic cone detection tasks, showcasing the value of the proposed algorithmic design.

5. Conclusions

This study presents a comprehensive optimization strategy for object detection algorithms specifically tailored for autonomous formula racing cars, targeting deployment on resource-constrained industrial control computers. The proposed approach focuses on enhancing both detection accuracy and inference efficiency despite hardware limitations.

We improved the YOLOv13 architecture by integrating a DS-C3k2_UIB module (based on an enhanced UIB design) into its Backbone. Additionally, we adopted an NMS-free inspired ConeDetect detection head. Combined with the TuNAS strategy for architecture refinement, the resulting optimized model is designated as YOLOv13-Cone.

To compress the network, we investigated two pruning strategies: one leveraging BN layer sparsity and the other based on structural reparameterization. Experimental comparisons revealed that structural reparameterization offers superior accuracy retention while effectively reducing inference latency. Consequently, we adopted this method for structured pruning, ensuring high performance in limited computing environments.

The pruned model was further quantized using TensorRT with INT8 precision. Through layer fusion and reduced-precision strategies, this quantization achieved a substantial decrease in both storage footprint and computational overhead, all while minimizing performance degradation. This multi-stage optimization thus yielded the final lightweight model, YOLOv13-Cone-Lite.

Simulation results validate the effectiveness of our proposed methodology. On an industrial control platform, the mAP50 improved from 88.4% to 92.9%, and FPS increased from 23 Hz to 68 Hz, significantly enhancing both detection accuracy and real-time performance.

Beyond the technical achievements, this study holds practical implications for both scholars and practitioners in the fields of computer vision and autonomous driving. For academic researchers, the proposed optimization framework, integrating structural pruning with TensorRT-based quantization, provides a replicable paradigm for lightweighting object detection models in edge computing scenarios. It also enriches the research on scenario-specific model adaptation, offering insights into balancing precision and efficiency for small-target detection tasks. For industry practitioners, YOLOv13-Cone-Lite delivers a ready-to-deploy solution for closed-environment traffic scenarios, such as temporary roadworks and accident zones. Its compatibility with industrial control computers and low latency meet the real-time requirements of on-board decision-making, while its compact size reduces hardware resource occupancy, lowering the cost of deployment in industrial control systems.

Despite the contributions mentioned above, the study has certain limitations that merit acknowledgment. In terms of hardware deployment flexibility, the model’s quantization process relies on TensorRT, a toolkit tightly bound to NVIDIA GPU architectures. This dependence restricts the model’s deployment on non-NVIDIA hardware platforms, limiting its scalability across diverse industrial environments. In terms of scenario applicability, the current validation is conducted primarily on closed tracks, a controlled environment with relatively uniform background interference. While the model performs well in backlighting and low-light conditions, its adaptability to open, complex traffic environments remains untested, and its robustness to extreme interference needs further verification.

To address the above limitations and further advance the research, targeted future directions are proposed. We will expand the detection target scope, extending the model’s capability from traffic cones to other dynamic objects to adapt to complex open traffic scenarios. This will require constructing a multi-category dataset with richer environmental variations. Concurrently, we aim to enhance open-environment adaptability by optimizing the model architecture: integrating multi-scale feature fusion modules and adaptive interference suppression mechanisms to improve robustness against background clutter and dynamic obstacles in open settings. We also plan to explore cross-hardware lightweight solutions, reducing dependence on NVIDIA-specific tools by adapting the model to hardware-agnostic frameworks and developing quantization strategies compatible with non-NVIDIA platforms, thereby enhancing deployment flexibility. By advancing these directions, the research can further bridge the gap between lightweight model design and real-world autonomous driving applications, providing more comprehensive technical support for object detection systems.