Controllable Speech-Driven Gesture Generation with Selective Activation of Weakly Supervised Controls

Abstract

1. Introduction

- Managing redundancy and enabling selective control activation: gesture control parameters often overlap or conflict, for example, when both gesture speed and emotion affect motion dynamics. To manage this, we introduce two masked training mechanisms: (i) single-mask training, where one random control is masked during each iteration, and (ii) single-control training, where only one control is active while others are masked.

- Effective control and mask integration into the model: it is important that the model follows the control input and is not affected by mask values when the specific control is off. Therefore, we systematically evaluate three conditioning methods: concatenation, Feature-wise Linear Modulation (FiLM) [12], and Adaptive Instance Normalization (AdaIN) [13]. These methods are assessed based on their influence on the output gesture motion and the gesture quality, which is evaluated by the proposed objective metric.

- Temporal dynamics of controls: if the control vector holds a single constant value across the entire audio segment, it does not reflect the time-varying control values seen during training. To introduce more dynamic control vectors, we propose two strategies: (i) random sampling within a specific range based on a normal distribution, and (ii) scaling based on voice activity detection (VAD) to align control values with speech dynamics.

- Cont-Gest: A Transformer-based gesture generation model supporting both explicit (motion-level) controls and implicit controls (high-level style attributes such as emotion or speaker traits).

- Weakly-supervised controls: We extract control parameters from raw data using both automatic methods and pretrained models, reducing the need for manual annotation and enhancing scalability.

- Selective control training: A masked control paradigm that enables control activation and deactivation.

- Temporal dynamics strategies: Techniques for maintaining natural gesture flow through dynamic control variation.

- Objective evaluation metric: A novel gesture quality metric, based on the Kinetic Feature Extractor and the Hellinger distance, that correlates strongly with subjective human-likeness ratings (correlation of 0.70 for 6D features).

- Graphical interface tool: An interactive tool for real-time control, observation, manipulation, gesture visualization, and evaluation feedback.

2. Related Work

2.1. Post-Processing Paradigm for Gesture Control

2.2. Input Control Paradigm

2.2.1. Explicit Gesture Control

2.2.2. Implicit Gesture Control

2.3. Control Parameter Extraction

2.4. Model Interface

2.5. Contrasting Our Work with Previous Work

3. Methods and Materials

3.1. Data and Preprocessing

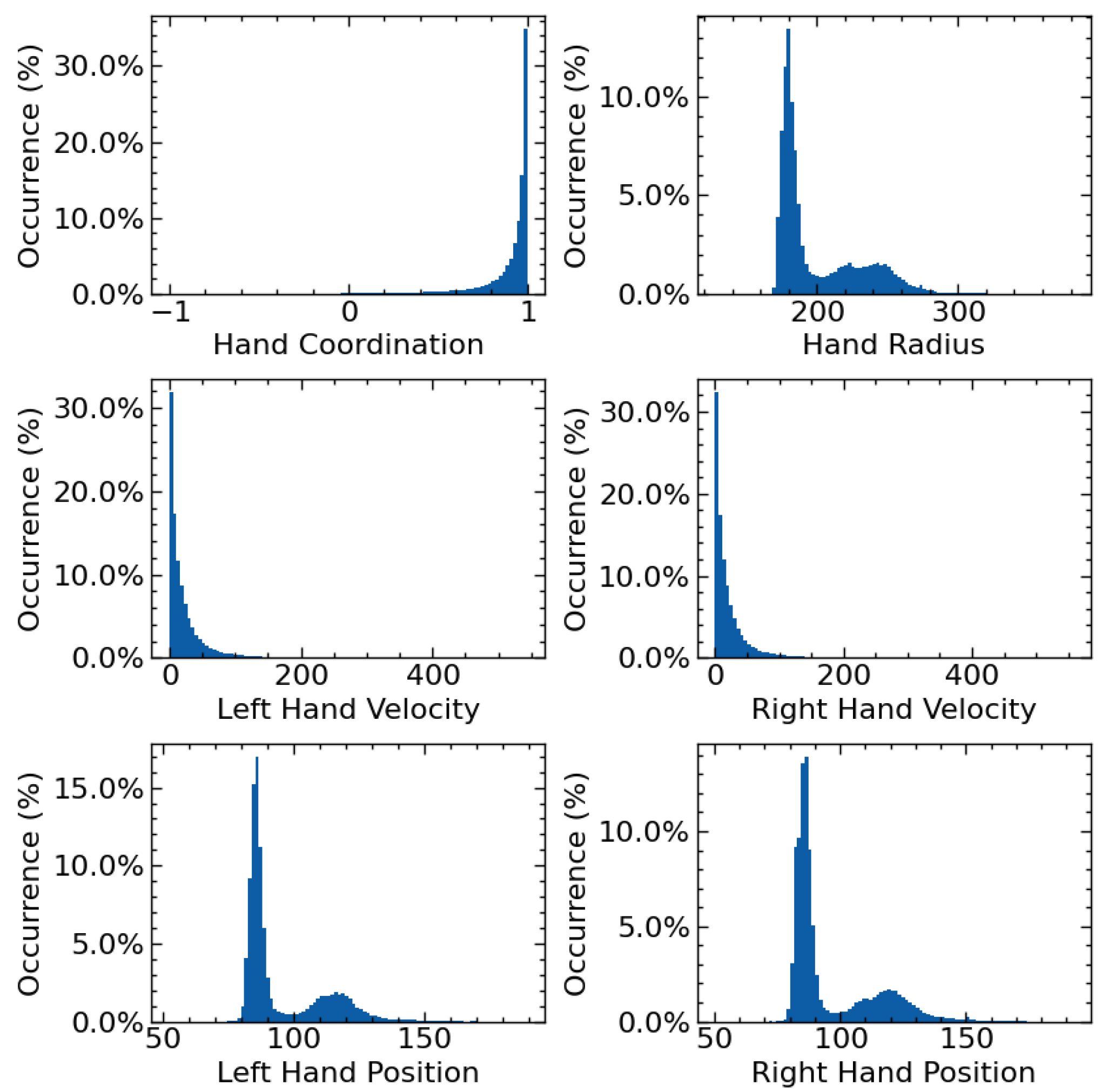

3.2. Extraction of Control Parameters

3.3. Controllable Gesture Model (Cont-Gest)

3.3.1. Control Fusion Module

- Concatenation-based conditioning: the most straightforward approach, where auxiliary control parameters are appended directly to the intermediate model features.

- Feature-wise Linear Modulation (FiLM) [12]: utilizes a conditional affine transformation to modulate the features of the intermediate model representation based on the control parameters. It scales (multiplies) and shifts (adds bias) using the information extracted from the control parameters. FiLM offers more control than concatenation as it allows for independent scaling and shifting of features. Mathematically, it is expressed as follows:

  $$\mathrm{FiLM}(x \mid c) = \gamma(c) \odot x + \beta(c),$$

  where $x$ is the feature vector on which conditioning is being applied, $c$ is the conditioning vector, and $\gamma(c)$ and $\beta(c)$ are learned vectors for scaling and shifting the input feature vector.

- Adaptive Instance Normalization (AdaIN) [13]: focuses on aligning the statistical properties (mean and variance) of the primary input features with those of the control parameter. By doing this, AdaIN ensures that the distribution of the input features matches the “style” information encoded in the control parameter. AdaIN does not introduce additional learnable parameters. AdaIN can be represented mathematically as follows:

  $$\mathrm{AdaIN}(x, c) = \sigma(c)\,\frac{x - \mu(x)}{\sigma(x)} + \mu(c),$$

  where $\sigma(\cdot)$ is the standard deviation (the square root of the variance) and $\mu(\cdot)$ is the mean, each computed over the input feature vector $x$ or the control vector $c$.
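The three conditioning methods above can be illustrated with a minimal, framework-free sketch. It is not the Cont-Gest implementation: the function names are hypothetical, vectors are plain Python lists, and `gamma` and `beta` are passed in directly, whereas in a trained model they would be produced from the control parameters by learned layers. The small `eps` term in the AdaIN variant is likewise an assumption, added for numerical stability.

```python
from statistics import fmean, pstdev


def concat_fusion(features, control):
    """Concatenation: append the control values to the feature vector."""
    return list(features) + list(control)


def film_fusion(features, gamma, beta):
    """FiLM: scale each feature by gamma and shift it by beta.

    Assumption: gamma and beta are given; a real model would predict
    them from the control vector with learned layers.
    """
    return [g * x + b for x, g, b in zip(features, gamma, beta)]


def adain_fusion(features, control, eps=1e-5):
    """AdaIN: normalize the features, then match the control's statistics."""
    mu_x, sigma_x = fmean(features), pstdev(features)
    mu_c, sigma_c = fmean(control), pstdev(control)
    return [sigma_c * (x - mu_x) / (sigma_x + eps) + mu_c for x in features]


features = [1.0, 2.0, 3.0]
control = [10.0, 20.0]
fused_concat = concat_fusion(features, control)
fused_film = film_fusion(features, gamma=[2.0, 2.0, 2.0], beta=[0.5, 0.5, 0.5])
fused_adain = adain_fusion(features, control)
```

Note the structural difference: concatenation changes the feature dimensionality, while FiLM and AdaIN keep it fixed and only rescale and shift the existing features.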

3.3.2. Selective Control Activation
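As a rough illustration of the two masked training mechanisms named in the Introduction (single-mask and single-control training), the following sketch draws a binary activation mask for one training iteration. The function names, the 0/1 mask encoding, the random choice of the active control in single-control training, and the `mask_value` placeholder are assumptions made for illustration; the actual masking scheme may differ.

```python
import random


def single_mask(num_controls, rng):
    """Single-mask training: every control is active except one random one."""
    mask = [1] * num_controls
    mask[rng.randrange(num_controls)] = 0
    return mask


def single_control(num_controls, rng):
    """Single-control training: exactly one (here: random) control is active."""
    mask = [0] * num_controls
    mask[rng.randrange(num_controls)] = 1
    return mask


def apply_mask(controls, mask, mask_value=0.0):
    """Replace masked-out controls with a placeholder value (assumed 0.0)."""
    return [c if m else mask_value for c, m in zip(controls, mask)]


rng = random.Random(0)  # seeded only to make the example repeatable
controls = [0.96, 184.57, 11.23, 11.25, 87.06, 87.27]  # illustrative values
mask_a = single_mask(len(controls), rng)
mask_b = single_control(len(controls), rng)
masked_controls = apply_mask(controls, mask_b)
```

In a model that also receives the mask as input, the mask tells the network which control values to follow and which to ignore.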

4. Experimental Setup

4.1. Model Training and Development

4.2. Experiments

4.2.1. Experiment 1—Masked Control Training

4.2.2. Experiment 2—Control Fusion

4.2.3. Experiment 3—Control Dynamics
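The two control-dynamics strategies introduced earlier, random sampling from a normal distribution within a range and scaling by voice activity detection (VAD), can be sketched as follows. This is a hedged illustration only: the function names, the clipping to `[low, high]`, the `rest_value` used in silent frames, and the linear interpolation by the VAD value are all assumptions rather than the procedure used in the experiments.

```python
import random


def sample_dynamic_control(target, spread, low, high, num_frames, rng):
    """Strategy (i): per-frame values drawn around `target`, clipped to a range."""
    return [
        min(high, max(low, rng.gauss(target, spread)))
        for _ in range(num_frames)
    ]


def vad_scaled_control(target, rest_value, vad):
    """Strategy (ii): move from `rest_value` toward `target` as speech activity rises.

    `vad` holds one value per frame in [0, 1] (0 = silence, 1 = speech).
    """
    return [rest_value + v * (target - rest_value) for v in vad]


rng = random.Random(1)  # seeded only to make the example repeatable
sampled = sample_dynamic_control(
    target=11.0, spread=2.0, low=2.0, high=39.0, num_frames=50, rng=rng
)
scaled = vad_scaled_control(target=30.0, rest_value=5.0, vad=[0.0, 1.0, 0.5])
```

Both functions return one control value per frame, so the result can replace a constant control vector without changing its shape.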

4.3. Evaluation and Model Selection

4.3.1. Objective Evaluation
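The proposed objective metric combines the Kinetic Feature Extractor with the Hellinger distance. The sketch below covers only the Hellinger distance between two histograms, using its standard definition $H(P, Q) = \frac{1}{\sqrt{2}} \sqrt{\sum_i (\sqrt{p_i} - \sqrt{q_i})^2}$. How the kinetic features are extracted and binned is not shown, and the function name and example histograms are hypothetical.

```python
import math


def hellinger(p, q):
    """Hellinger distance between two non-negative histograms of equal length.

    Both histograms are normalized to sum to 1 before comparison, so raw
    bin counts can be passed in directly.
    """
    p_sum, q_sum = sum(p), sum(q)
    squared = sum(
        (math.sqrt(a / p_sum) - math.sqrt(b / q_sum)) ** 2
        for a, b in zip(p, q)
    )
    return math.sqrt(squared / 2.0)


reference_hist = [4, 10, 6]  # hypothetical bin counts of reference motion
generated_hist = [5, 9, 6]   # hypothetical bin counts of generated motion
distance = hellinger(reference_hist, generated_hist)
```

The distance is bounded between 0 (identical distributions) and 1 (distributions with no overlap), which makes scores comparable across feature types.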

4.3.2. Validation of the Kinetic–Hellinger Metric

4.3.3. Graphical Interface for Visual Feedback and Control Specification

5. Results

5.1. Experiment 1—Effect of Control Training Paradigm

5.2. Experiment 2—Effect of Control Fusion

5.3. Experiment 3—Effect of Control Dynamics

5.4. Extended Control Analysis

5.4.1. No Control Analysis

5.4.2. High-Level Control Analysis

6. Conclusions

Author Contributions

Funding

Institutional Review Board Statement

Informed Consent Statement

Data Availability Statement

Conflicts of Interest

Abbreviations

| Abbreviation | Definition |
|---|---|
| ECA | Embodied Conversational Agent |
| EDD | Evaluation-driven Development |
| FiLM | Feature-wise Linear Modulation |
| AdaIN | Adaptive Instance Normalization |
| VAD | Voice Activity Detection |

References

1. Alexanderson, S.; Henter, G.E.; Kucherenko, T.; Beskow, J. Style-Controllable Speech-Driven Gesture Synthesis Using Normalising Flows. Comput. Graph. Forum 2020, 39, 487–496.

2. Ao, T.; Zhang, Z.; Liu, L. GestureDiffuCLIP: Gesture Diffusion Model with CLIP Latents. ACM Trans. Graph. 2023, 42, 42.

3. Ghorbani, S.; Ferstl, Y.; Holden, D.; Troje, N.F.; Carbonneau, M.-A. ZeroEGGS: Zero-Shot Example-Based Gesture Generation from Speech. Comput. Graph. Forum 2023, 42, 206–216.

4. Nyatsanga, S.; Kucherenko, T.; Ahuja, C.; Henter, G.E.; Neff, M. A Comprehensive Review of Data-Driven Co-Speech Gesture Generation. Comput. Graph. Forum 2023, 42, 569–596.

5. Ribet, S.; Wannous, H.; Vandeborre, J.-P. Survey on Style in 3D Human Body Motion: Taxonomy, Data, Recognition and Its Applications. IEEE Trans. Affect. Comput. 2021, 12, 928–948.

6. Castillo, G.; Neff, M. What Do We Express Without Knowing? Emotion in Gesture. In Proceedings of the 18th International Conference on Autonomous Agents and MultiAgent Systems, Montreal, QC, Canada, 13–17 May 2019; pp. 702–710.

7. Smith, H.J.; Neff, M. Understanding the Impact of Animated Gesture Performance on Personality Perceptions. ACM Trans. Graph. 2017, 36, 49.

8. Feine, J.; Gnewuch, U.; Morana, S.; Maedche, A. A Taxonomy of Social Cues for Conversational Agents. Int. J. Hum.-Comput. Stud. 2019, 132, 138–161.

9. Yoon, Y.; Cha, B.; Lee, J.-H.; Jang, M.; Lee, J.; Kim, J.; Lee, G. Speech Gesture Generation from the Trimodal Context of Text, Audio, and Speaker Identity. ACM Trans. Graph. 2020, 39, 222.

10. Vaswani, A.; Shazeer, N.; Parmar, N.; Uszkoreit, J.; Jones, L.; Gomez, A.N.; Kaiser, Ł.; Polosukhin, I. Attention Is All You Need. In Proceedings of the 31st International Conference on Neural Information Processing Systems, Long Beach, CA, USA, 4–9 December 2017; pp. 6000–6010.

11. Radford, A.; Kim, J.W.; Xu, T.; Brockman, G.; McLeavey, C.; Sutskever, I. Robust Speech Recognition via Large-Scale Weak Supervision. In Proceedings of the 40th International Conference on Machine Learning, Honolulu, HI, USA, 23–29 July 2023; Volume 202, pp. 28492–28518.

12. Perez, E.; Strub, F.; de Vries, H.; Dumoulin, V.; Courville, A. FiLM: Visual Reasoning with a General Conditioning Layer. In Proceedings of the Thirty-Second AAAI Conference on Artificial Intelligence and Thirtieth Innovative Applications of Artificial Intelligence Conference and Eighth AAAI Symposium on Educational Advances in Artificial Intelligence, New Orleans, LA, USA, 2–7 February 2018; pp. 3942–3951.

13. Huang, X.; Belongie, S. Arbitrary Style Transfer in Real-Time with Adaptive Instance Normalization. In Proceedings of the 2017 IEEE International Conference on Computer Vision (ICCV), Venice, Italy, 22–29 October 2017; pp. 1510–1519.

14. Wolfert, P.; Henter, G.E.; Belpaeme, T. Exploring the Effectiveness of Evaluation Practices for Computer-Generated Nonverbal Behaviour. Appl. Sci. 2024, 14, 1460.

15. Onuma, K.; Faloutsos, C.; Hodgins, J.K. FMDistance: A Fast and Effective Distance Function for Motion Capture Data. In Proceedings of the Eurographics 2008—Short Papers; Mania, K., Reinhard, E., Eds.; The Eurographics Association: Eindhoven, The Netherlands, 2008.

16. Crnek, K.; Močnik, G.; Rojc, M. Advancing Objective Evaluation of Speech-Driven Gesture Generation for Embodied Conversational Agents. Int. J. Hum.–Comput. Interact. 2025, 1–17.

17. Park, J.; Cho, S.; Kim, D.; Bailo, O.; Park, H.; Hong, S.; Park, J. A Body Part Embedding Model with Datasets for Measuring 2D Human Motion Similarity. IEEE Access 2021, 9, 36547–36558.

18. Yoon, Y.; Park, K.; Jang, M.; Kim, J.; Lee, G. SGToolkit: An Interactive Gesture Authoring Toolkit for Embodied Conversational Agents. In Proceedings of the 34th Annual ACM Symposium on User Interface Software and Technology, New York, NY, USA, 10–14 October 2021; pp. 826–840.

19. Chi, D.; Costa, M.; Zhao, L.; Badler, N. The EMOTE Model for Effort and Shape. In Proceedings of the 27th Annual Conference on Computer Graphics and Interactive Techniques, New Orleans, LA, USA, 23–28 July 2000; pp. 173–182.

20. Durupinar, F.; Kapadia, M.; Deutsch, S.; Neff, M.; Badler, N.I. PERFORM: Perceptual Approach for Adding OCEAN Personality to Human Motion Using Laban Movement Analysis. ACM Trans. Graph. 2016, 36, 6.

21. Hartmann, B.; Mancini, M.; Pelachaud, C. Implementing Expressive Gesture Synthesis for Embodied Conversational Agents. In The Gesture in Human-Computer Interaction and Simulation, Proceedings of the 6th International Gesture Workshop, GW 2005, Berder Island, France, 18–20 May 2005; Gibet, S., Courty, N., Kamp, J.-F., Eds.; Springer: Berlin/Heidelberg, Germany, 2006; pp. 188–199.

22. Sonlu, S.; Güdükbay, U.; Durupinar, F. A Conversational Agent Framework with Multi-Modal Personality Expression. ACM Trans. Graph. 2021, 40, 7.

23. Gebhard, P. ALMA: A Layered Model of Affect. In Proceedings of the Fourth International Joint Conference on Autonomous Agents and Multiagent Systems, Utrecht, The Netherlands, 25–29 July 2005; pp. 29–36.

24. Shvo, M.; Buhmann, J.; Kapadia, M. An Interdependent Model of Personality, Motivation, Emotion, and Mood for Intelligent Virtual Agents. In Proceedings of the 19th ACM International Conference on Intelligent Virtual Agents, Paris, France, 2–5 July 2019; pp. 65–72.

25. Qian, S.; Tu, Z.; Zhi, Y.; Liu, W.; Gao, S. Speech Drives Templates: Co-Speech Gesture Synthesis with Learned Templates. In Proceedings of the 2021 IEEE/CVF International Conference on Computer Vision (ICCV), Montreal, QC, Canada, 10–17 October 2021; pp. 11057–11066.

26. Habibie, I.; Elgharib, M.; Sarkar, K.; Abdullah, A.; Nyatsanga, S.; Neff, M.; Theobalt, C. A Motion Matching-Based Framework for Controllable Gesture Synthesis from Speech. In Proceedings of the Special Interest Group on Computer Graphics and Interactive Techniques Conference, Vancouver, BC, Canada, 7–11 August 2022; pp. 1–9.

27. Alexanderson, S.; Nagy, R.; Beskow, J.; Henter, G.E. Listen, Denoise, Action! Audio-Driven Motion Synthesis with Diffusion Models. ACM Trans. Graph. 2023, 42, 44.

28. Wu, B.; Liu, C.; Ishi, C.T.; Shi, J.; Ishiguro, H. Extrovert or Introvert? GAN-Based Humanoid Upper-Body Gesture Generation for Different Impressions. Int. J. Soc. Robot. 2023, 17, 457–472.

29. Kucherenko, T.; Nagy, R.; Neff, M.; Kjellström, H.; Henter, G.E. Multimodal Analysis of the Predictability of Hand-Gesture Properties. In Proceedings of the 21st International Conference on Autonomous Agents and Multiagent Systems, Virtual, 9–13 May 2022; pp. 770–779.

30. Kucherenko, T.; Nagy, R.; Jonell, P.; Neff, M.; Kjellström, H.; Henter, G.E. Speech2Properties2Gestures: Gesture-Property Prediction as a Tool for Generating Representational Gestures from Speech. In Proceedings of the 21st ACM International Conference on Intelligent Virtual Agents, Virtual, 14–17 September 2021; pp. 145–147.

31. Ferstl, Y.; Neff, M.; McDonnell, R. ExpressGesture: Expressive Gesture Generation from Speech Through Database Matching. Comput. Animat. Virtual Worlds 2021, 32, e2016.

32. Zhang, F.; Wang, Z.; Lyu, X.; Zhao, S.; Li, M.; Geng, W.; Ji, N.; Du, H.; Gao, F.; Wu, H.; et al. Speech-Driven Personalized Gesture Synthetics: Harnessing Automatic Fuzzy Feature Inference. IEEE Trans. Vis. Comput. Graph. 2024, 30, 6984–6996.

33. Bozkurt, E.; Yemez, Y.; Erzin, E. Affective Synthesis and Animation of Arm Gestures from Speech Prosody. Speech Commun. 2020, 119, 1–11.

34. Kucherenko, T.; Nagy, R.; Yoon, Y.; Woo, J.; Nikolov, T.; Tsakov, M.; Henter, G.E. The GENEA Challenge 2023: A Large-Scale Evaluation of Gesture Generation Models in Monadic and Dyadic Settings. In Proceedings of the 25th International Conference on Multimodal Interaction, Paris, France, 9–13 October 2023; pp. 792–801.

35. Lee, G.; Deng, Z.; Ma, S.; Shiratori, T.; Srinivasa, S.; Sheikh, Y. Talking with Hands 16.2M: A Large-Scale Dataset of Synchronized Body-Finger Motion and Audio for Conversational Motion Analysis and Synthesis. In Proceedings of the 2019 IEEE/CVF International Conference on Computer Vision (ICCV), Seoul, Republic of Korea, 27 October–2 November 2019; pp. 763–772.

36. Kucherenko, T.; Jonell, P.; Yoon, Y.; Wolfert, P.; Henter, G.E. A Large, Crowdsourced Evaluation of Gesture Generation Systems on Common Data: The GENEA Challenge 2020. In Proceedings of the 26th International Conference on Intelligent User Interfaces, College Station, TX, USA, 14–17 April 2021; pp. 11–21.

37. Deichler, A.; Mehta, S.; Alexanderson, S.; Beskow, J. Diffusion-Based Co-Speech Gesture Generation Using Joint Text and Audio Representation. In Proceedings of the 25th International Conference on Multimodal Interaction, Paris, France, 9–13 October 2023; pp. 755–762.

38. Schroter, H.; Escalante-B, A.N.; Rosenkranz, T.; Maier, A. Deepfilternet: A Low Complexity Speech Enhancement Framework for Full-Band Audio Based On Deep Filtering. In Proceedings of the ICASSP 2022—2022 IEEE International Conference on Acoustics, Speech and Signal Processing (ICASSP), Singapore, 22–27 May 2022; pp. 7407–7411.

39. Intel/Openvino-Plugins-Ai-Audacity: A Set of AI-Enabled Effects, Generators, and Analyzers for Audacity®. Available online: https://github.com/intel/openvino-plugins-ai-audacity (accessed on 13 March 2025).

40. Audacity® | Free Audio Editor, Recorder, Music Making and More! Available online: https://www.audacityteam.org/ (accessed on 13 March 2025).

41. Ravanelli, M.; Parcollet, T.; Plantinga, P.; Rouhe, A.; Cornell, S.; Lugosch, L.; Subakan, C.; Dawalatabad, N.; Heba, A.; Zhong, J.; et al. SpeechBrain: A General-Purpose Speech Toolkit. arXiv 2021, arXiv:2106.04624.

42. Wang, Y.; Ravanelli, M.; Yacoubi, A. Speech Emotion Diarization: Which Emotion Appears When? In Proceedings of the 2023 IEEE Automatic Speech Recognition and Understanding Workshop (ASRU), Taipei, Taiwan, 16–20 December 2023; pp. 1–7.

43. Burkhardt, F.; Wagner, J.; Wierstorf, H.; Eyben, F.; Schuller, B. Speech-Based Age and Gender Prediction with Transformers. In Proceedings of the 15th ITG Conference on Speech Communication, Aachen, Germany, 20–22 September 2023; pp. 46–50.

44. Zhou, Y.; Barnes, C.; Lu, J.; Yang, J.; Li, H. On the Continuity of Rotation Representations in Neural Networks. In Proceedings of the 2019 IEEE/CVF Conference on Computer Vision and Pattern Recognition (CVPR), Long Beach, CA, USA, 15–20 June 2019; pp. 5738–5746.

45. Paszke, A.; Gross, S.; Massa, F.; Lerer, A.; Bradbury, J.; Chanan, G.; Killeen, T.; Lin, Z.; Gimelshein, N.; Antiga, L.; et al. PyTorch: An Imperative Style, High-Performance Deep Learning Library. In Proceedings of the 33rd International Conference on Neural Information Processing Systems, Vancouver, BC, Canada, 8–14 December 2019; pp. 8026–8037.

46. Grassia, F.S. Practical Parameterization of Rotations Using the Exponential Map. J. Graph. Tools 1998, 3, 29–48.

| Control Parameter | 15th | 50th (Median) | 85th |
|---|---|---|---|
| Correlation | 0.79 | 0.96 | 0.99 |
| Radius | 176.65 | 184.57 | 239.59 |
| Velocity Left | 2.28 | 11.23 | 39.21 |
| Velocity Right | 2.22 | 11.25 | 38.57 |
| Left-hand Height | 84.06 | 87.06 | 116.00 |
| Right-hand Height | 83.31 | 87.27 | 118.99 |
| Age | 27 | 35 | 38 |

| Features | Human-Likeness Correlation | p-Value |
|---|---|---|
| Position | 0.4778 | 0.0182 |
| Axis-angle | 0.6778 | 0.0008 |
| 6D | 0.7000 | 0.0005 |

| Fusion | Control Training | Kin–Hel-6D ↑ | Left-Hand Height | Right-Hand Height | Correlation | Radius | Left-Hand Velocity | Right-Hand Velocity |
|---|---|---|---|---|---|---|---|---|
| FiLM | Single-control | 0.2073 | 98.03 ± 0.13 | 96.47 ± 0.12 | 0.69 ± 0.003 | 206.39 ± 0.21 | 31.08 ± 0.24 | 27.49 ± 0.24 |
| AdaIN | Single-control | 0.2229 | 104.06 ± 0.17 | 88.36 ± 0.11 | 0.81 ± 0.003 | 202.16 ± 0.23 | 18.12 ± 0.18 | 20.17 ± 0.20 |
| FiLM | Single-mask | 0.2312 | 101.41 ± 0.12 | 90.01 ± 0.11 | 0.79 ± 0.003 | 199.89 ± 0.18 | 18.01 ± 0.19 | 18.21 ± 0.21 |
| Concat. | Single-control | 0.2373 | 90.91 ± 0.10 | 92.53 ± 0.13 | 0.84 ± 0.002 | 190.03 ± 0.21 | 21.35 ± 0.18 | 20.49 ± 0.21 |
| AdaIN | Single-mask | 0.2810 | 104.72 ± 0.15 | 118.33 ± 0.17 | 0.71 ± 0.003 | 231.02 ± 0.28 | 24.81 ± 0.25 | 32.51 ± 0.28 |
| Concat. | Single-mask | 0.3954 | 112.00 ± 0.16 | 128.56 ± 0.16 | 0.79 ± 0.002 | 245.18 ± 0.26 | 25.73 ± 0.25 | 29.92 ± 0.30 |

| Control Parameter | Control Value | Kin–Hel-6D | Left-Hand Height | Right-Hand Height | Correlation | Radius | Left-Hand Velocity | Right-Hand Velocity |
|---|---|---|---|---|---|---|---|---|
| Age | 27 (15th) | 0.2386 | 101.69 ± 0.11 | 90.54 ± 0.11 | 0.77 ± 0.003 | 199.45 ± 0.17 | 16.24 ± 0.17 | 18.24 ± 0.19 |
| Age | 35 (50th) | 0.2357 | 102.01 ± 0.11 | 90.73 ± 0.11 | 0.79 ± 0.003 | 200.06 ± 0.17 | 16.39 ± 0.16 | 17.98 ± 0.19 |
| Age | 38 (85th) | 0.2343 | 100.57 ± 0.11 | 90.41 ± 0.11 | 0.79 ± 0.003 | 198.97 ± 0.17 | 16.87 ± 0.17 | 19.59 ± 0.21 |
| Emotion | Angry | 0.2308 | 101.64 ± 0.12 | 90.04 ± 0.10 | 0.76 ± 0.003 | 200.09 ± 0.18 | 17.19 ± 0.17 | 19.34 ± 0.22 |
| Emotion | Happy | 0.2295 | 99.41 ± 0.12 | 90.81 ± 0.11 | 0.78 ± 0.003 | 198.43 ± 0.18 | 16.32 ± 0.17 | 20.18 ± 0.21 |
| Emotion | Neutral | 0.2277 | 99.29 ± 0.11 | 91.20 ± 0.12 | 0.78 ± 0.003 | 199.03 ± 0.19 | 16.90 ± 0.18 | 21.06 ± 0.22 |
| Emotion | Sad | 0.2198 | 98.15 ± 0.11 | 92.06 ± 0.13 | 0.79 ± 0.003 | 198.69 ± 0.19 | 16.17 ± 0.16 | 19.76 ± 0.21 |
| Gender | Child | 0.2361 | 101.36 ± 0.13 | 90.81 ± 0.11 | 0.78 ± 0.003 | 200.25 ± 0.19 | 17.27 ± 0.19 | 20.19 ± 0.22 |
| Gender | Female | 0.2297 | 100.38 ± 0.11 | 92.39 ± 0.12 | 0.76 ± 0.003 | 201.53 ± 0.18 | 18.60 ± 0.18 | 21.57 ± 0.22 |
| Gender | Male | 0.2268 | 100.04 ± 0.12 | 90.16 ± 0.10 | 0.78 ± 0.003 | 198.78 ± 0.18 | 17.05 ± 0.17 | 19.97 ± 0.21 |

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2025 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (https://creativecommons.org/licenses/by/4.0/).


Crnek, K.; Rojc, M. Controllable Speech-Driven Gesture Generation with Selective Activation of Weakly Supervised Controls. Appl. Sci. 2025, 15, 9467. https://doi.org/10.3390/app15179467
