1. Introduction

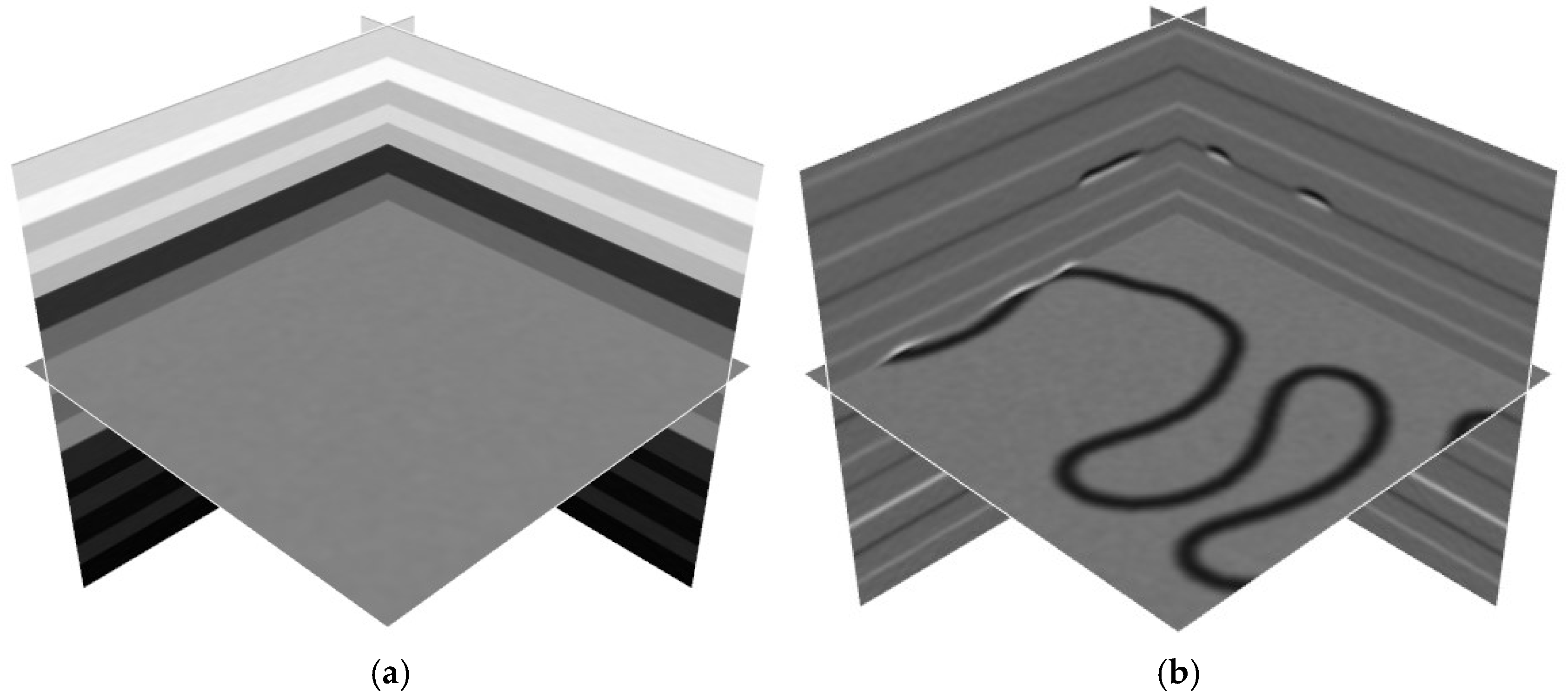

Channels, as key components of geological sedimentary environments, play a crucial role in the transportation and deposition of sediments, profoundly influencing the spatial distribution and structural characteristics of subsurface reservoirs [1]. Accurately identifying and characterizing the location and morphology of channels not only significantly improves the accuracy of oil and gas reservoir prediction and development, but also provides essential geological support for well placement in oil and gas exploration [2]. Traditional channel interpretation methods typically rely on manually extracting seismic attributes such as curvature [3], coherence [4], attenuation attributes [5], time–frequency analysis [6,7], and principal component analysis [8]. However, these methods often require substantial human effort and are highly susceptible to the quality of seismic data and the subjective judgment of interpreters. In particular, in regions with poor seismic data quality or complex geological structures, traditional seismic attributes may not effectively capture channel characteristics.

With the continuous advancement of deep learning technologies, particularly the successful application of convolutional neural networks (CNNs), such as U-Net [9], in fields like medical image segmentation, related research has gradually introduced these methods into seismic image processing tasks. CNNs are now widely used in seismic structural interpretation, including fault detection [10,11,12,13], horizon picking [14], paleokarst detection [15,16], and salt boundary detection [17,18]. Seismic channel recognition, as a complex 3D semantic segmentation problem, benefits from the advantages of deep learning in spatial perception and multi-scale feature extraction. However, the application of deep learning models in seismic interpretation still faces several challenges, including the scarcity of training samples, high model complexity, and limited generalization ability on field seismic datasets.

To address the issue of insufficient labeled samples, Wang et al. proposed a method that combines geological channel simulation with geophysical forward modeling [19], constructing a large-scale labeled 3D synthetic seismic channel dataset that effectively supports the training of deep learning models. Gao et al. introduced CNNs and trained the model with synthetic data generated from geological numerical simulations, successfully achieving channel recognition in field seismic volumes [20]. Li et al. proposed a channel recognition method based on an end-to-end 3D CNN and successfully achieved efficient and accurate separation of river channel features in complex seismic structures [21]. Zhong et al. combined Frangi filtering with Attention R2U-Net, significantly improving the accuracy of channel linear structure recognition and multi-scale feature expression [22]. Despite these advances, recognizing natural channels remains challenging due to their complex and highly variable morphology, making accurate and stable identification difficult with current methods.

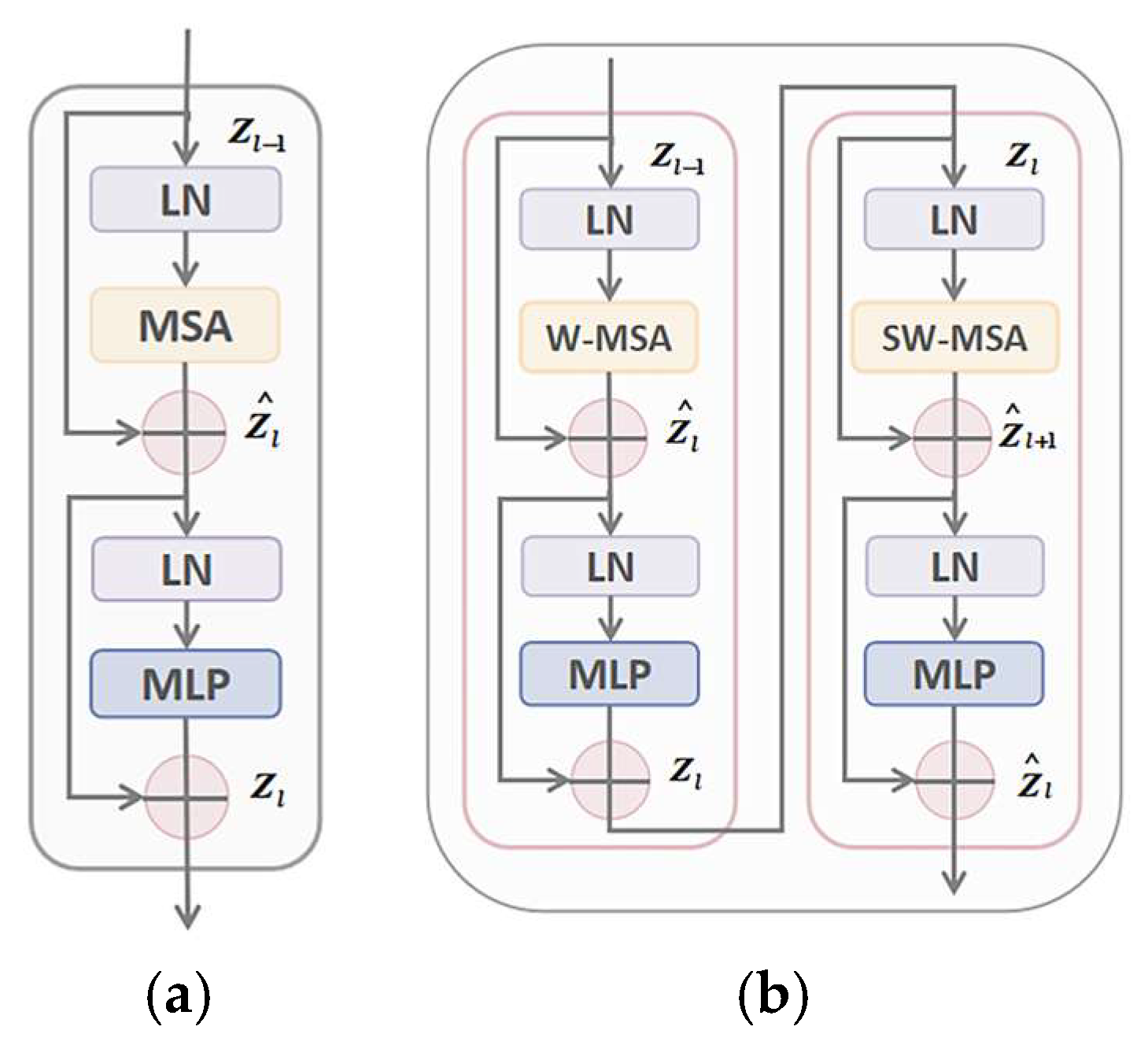

CNNs have advantages in feature extraction. However, their performance in certain complex tasks is limited due to their restricted receptive field, inability to model long-range dependencies, sensitivity to transformations, susceptibility to overfitting, and lack of global contextual information [

23]. In contrast, models incorporating the Transformer structure demonstrate greater advantages in global feature extraction. In recent years, many researchers have explored various Transformer model variants and achieved significant results [

24,

25]. Lin et al. proposed a novel 2D deep medical image segmentation framework [

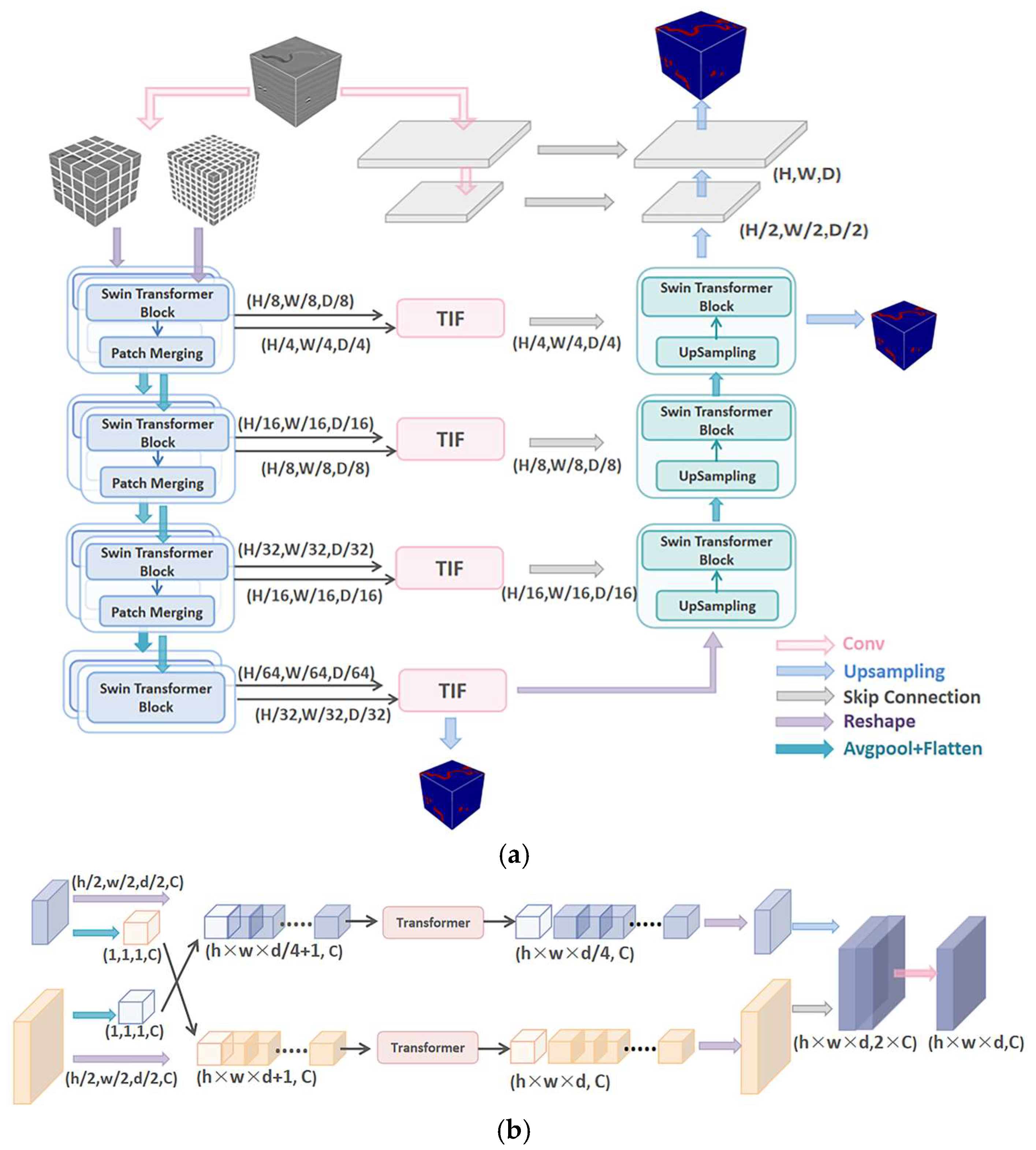

26], which aims to incorporate the hierarchical Swin Transformer into both the encoder and the decoder of the standard U-shaped architecture. Meanwhile, a well-designed transformer interactive fusion (TIF) module is introduced to effectively perform multi-scale information fusion through the self-attention mechanism.

In this study, to accommodate the processing requirements of 3D seismic data, we modified the DS-TransUnet model by adjusting the sliding window configuration of the Swin Transformer to operate in three-dimensional space and replacing all 2D convolution operations in the encoder with 3D convolutions. We then applied the enhanced model to channel recognition in 3D seismic images. The optimization approach proposed in this study demonstrates significant advantages in channel identification and boundary delineation, while also achieving effective suppression of interference from similar features. This paper is structured as follows: Section 2 describes in detail the process of constructing the synthetic dataset. In Section 3, we present the theoretical foundation of the 3D DS-TransUnet model, highlighting its strengths in the field of image segmentation. Section 4 describes the training process of the proposed model on this dataset and compares its performance with that of the traditional U-Net and TransUnet. In Section 5, we analyze in depth the proposed model’s performance in handling complex seismic data and further validate its accuracy and robustness using two field seismic datasets. Section 6 provides a systematic summary of the methodologies adopted in this study, highlighting the core advantages of the model while objectively analyzing its current limitations and areas for improvement.

4. Training and Model Evaluation

4.1. Training and Validation

Based on the workflow established in Section 2, which defines the simulation procedure, we generated 300 pairs of synthetic data for training the network model. We selected 150 pairs of synthetic images (labeled 0–149) as the training set and 40 pairs (labeled 200–239) as the validation set. The development platform is based on PyTorch 2.8.0, utilizing the Adam optimizer and the binary cross-entropy loss function. The batch size was set to 1, with an initial learning rate of 0.0001. Training was performed over 50 epochs on an Intel® Xeon® Platinum 8352V CPU (Intel Corporation, Santa Clara, CA, USA) with 120 GB of memory, and the optimal model was obtained at epoch 43.
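For reference, the binary cross-entropy loss named above has the following standard form. The symbols are introduced here for illustration only: $N$ is the number of voxels in a volume, $y_i \in \{0, 1\}$ is the label of voxel $i$, and $p_i \in (0, 1)$ is the predicted channel probability. Averaging over voxels is an assumption, since the text does not state the reduction used.

$$\mathcal{L}_{\mathrm{BCE}} = -\frac{1}{N} \sum_{i=1}^{N} \left[ y_i \log p_i + (1 - y_i) \log (1 - p_i) \right]$$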

To increase both the quantity and diversity of the training data, various data augmentation techniques were applied to the 150 pairs of synthetic images during training. The first augmentation method involved rotating the images by 0°, 90°, 180°, and 270° around the vertical axis. The second method involved randomly cropping smaller sub-volumes from the original images. To match the input size required by the model, these cropped volumes were resized to 128 × 128 × 128. We also applied Mean-Std normalization to the data using the following formula:

$$x' = \frac{x - \mu}{\sigma}$$

where $x$ denotes the original value, $\mu$ and $\sigma$ represent the mean and standard deviation of the data, and $x'$ is the normalized value.
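The preprocessing steps described above can be sketched with NumPy as follows. This is a minimal illustration and not the authors' code: the function names, the toy volume sizes, and the convention that axis 0 is the vertical axis are assumptions, and the resizing of cropped volumes to 128 × 128 × 128 is omitted.

```python
import numpy as np


def mean_std_normalize(volume: np.ndarray, eps: float = 1e-8) -> np.ndarray:
    """Subtract the mean and divide by the standard deviation of the volume."""
    return (volume - volume.mean()) / (volume.std() + eps)


def rotate_about_vertical(volume: np.ndarray, k: int) -> np.ndarray:
    """Rotate by k * 90 degrees about the vertical axis.

    Assumes axis 0 is vertical (time or depth), so the rotation acts in the
    inline/crossline plane spanned by axes 1 and 2.
    """
    return np.rot90(volume, k=k, axes=(1, 2))


def random_crop_pair(seismic, label, size, rng):
    """Cut the same randomly placed sub-volume from a seismic/label pair."""
    starts = [int(rng.integers(0, dim - s + 1)) for dim, s in zip(seismic.shape, size)]
    window = tuple(slice(st, st + s) for st, s in zip(starts, size))
    return seismic[window], label[window]


rng = np.random.default_rng(0)
seismic = rng.normal(size=(32, 32, 32)).astype(np.float32)  # toy stand-in volume
label = (rng.random((32, 32, 32)) > 0.9).astype(np.uint8)   # toy channel mask

k = int(rng.integers(0, 4))  # 0, 90, 180, or 270 degrees
seismic_aug = rotate_about_vertical(seismic, k)
label_aug = rotate_about_vertical(label, k)  # the label gets the same rotation
seismic_aug, label_aug = random_crop_pair(seismic_aug, label_aug, (16, 16, 16), rng)
seismic_aug = mean_std_normalize(seismic_aug)
```

The seismic volume and its label must receive the same rotation and the same crop window, otherwise the pair no longer lines up voxel by voxel. Only the seismic amplitudes are normalized.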

4.2. Evaluation Metrics

In this paper, the performance metrics used are Intersection over Union (IoU), Precision, Recall, and F1 score. These metrics offer a comprehensive evaluation of the model’s performance in segmentation tasks. They are defined as follows:

$$\mathrm{IoU} = \frac{TP}{TP + FP + FN}$$

$$\mathrm{Precision} = \frac{TP}{TP + FP}$$

$$\mathrm{Recall} = \frac{TP}{TP + FN}$$

$$\mathrm{F1} = \frac{2 \times \mathrm{Precision} \times \mathrm{Recall}}{\mathrm{Precision} + \mathrm{Recall}}$$

where TP represents the number of correctly identified channel samples, FP refers to the number of non-channel samples incorrectly predicted as channels, and FN indicates the number of channel samples that were incorrectly classified as non-channel.
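A minimal sketch of how these four metrics can be computed from a binary prediction and a binary label is given below. The function name and the choice to return 0 when a denominator is zero are assumptions made for illustration.

```python
import numpy as np


def segmentation_metrics(pred: np.ndarray, label: np.ndarray) -> dict:
    """IoU, precision, recall, and F1 score for a pair of binary masks."""
    pred = pred.astype(bool)
    label = label.astype(bool)
    tp = int(np.sum(pred & label))    # predicted positive, truly positive
    fp = int(np.sum(pred & ~label))   # predicted positive, truly negative
    fn = int(np.sum(~pred & label))   # predicted negative, truly positive

    def safe_div(num, den):
        # Return 0.0 instead of dividing by zero (an illustrative convention).
        return num / den if den > 0 else 0.0

    precision = safe_div(tp, tp + fp)
    recall = safe_div(tp, tp + fn)
    return {
        "iou": safe_div(tp, tp + fp + fn),
        "precision": precision,
        "recall": recall,
        "f1": safe_div(2 * precision * recall, precision + recall),
    }


# Toy example with TP = 2, FP = 1, FN = 1.
pred = np.array([1, 1, 1, 0, 0, 0])
label = np.array([1, 1, 0, 1, 0, 0])
metrics = segmentation_metrics(pred, label)
```

None of the four metrics uses true negatives, which keeps them informative when the positive class occupies only a small fraction of the volume.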

4.3. Contrast Experiments for Training and Validation

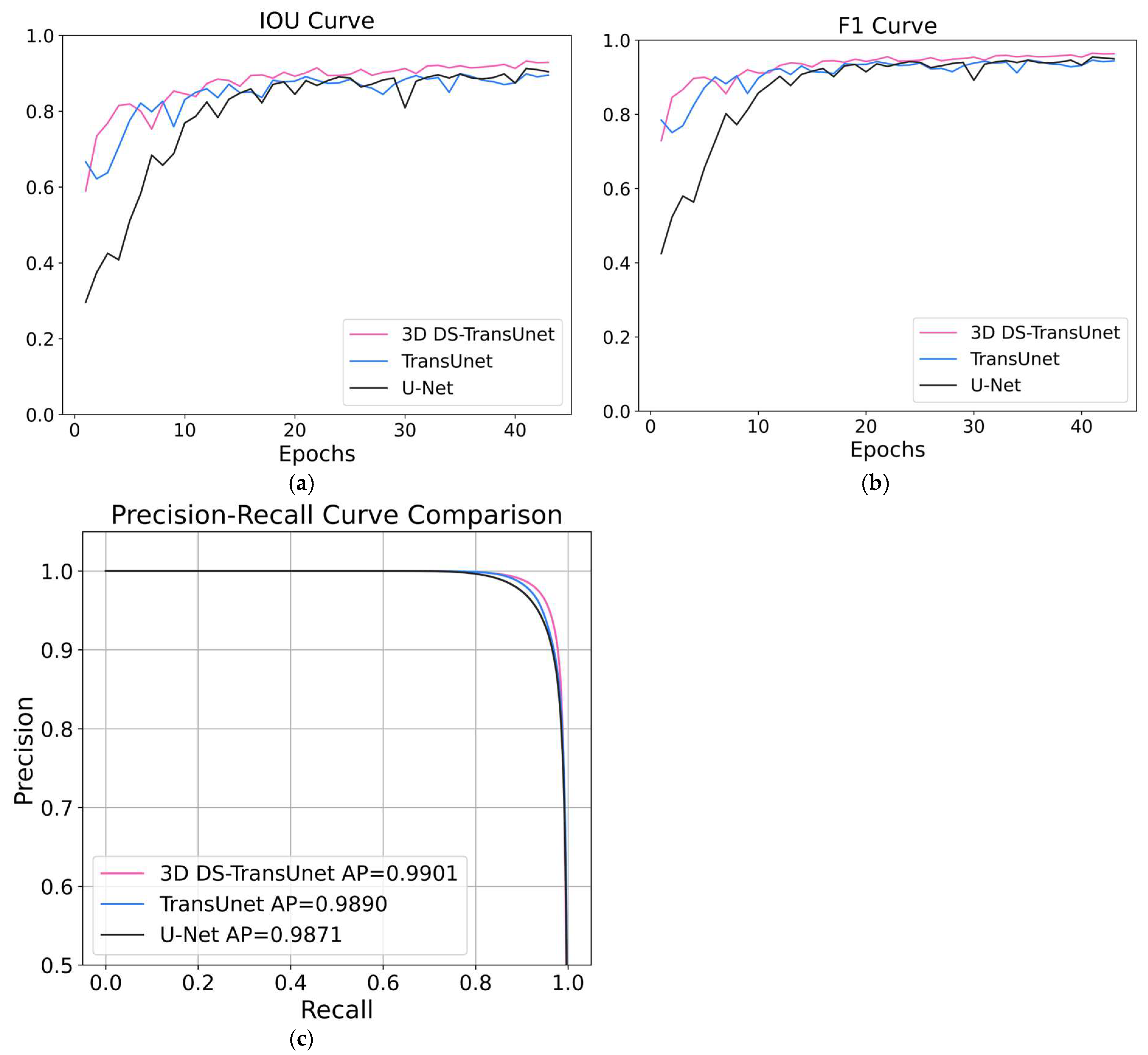

The training and validation results of U-Net, TransUnet, and 3D DS-TransUnet are evaluated using loss and precision as metrics on the aforementioned synthetic data. Figure 4 depicts the comparisons of accuracy and loss curves for these models. It can be seen that the 3D DS-TransUnet model converges faster than the other two models, with both the training and validation sets achieving a loss of 0.02. The Transformer structure’s core lies in the self-attention mechanism, while the feedforward neural network effectively facilitates gradient propagation and focuses on global context information. This allows the model to swiftly identify key features in the data. The global perspective enables the model to find the optimal solution faster, reduce instability, and accelerate convergence.

The Swin Transformer blocks in 3D DS-TransUnet contribute to reducing redundant computations and enhancing feature information transfer between layers through parameter sharing and cross-layer information fusion. Additionally, this structure gradually expands the receptive field, enabling the network to capture long-range dependencies while preserving local feature details. This strong global perception capability enables the model to adapt more quickly to different tasks, reduces the number of training iterations required, and enhances the model’s stability and convergence speed.

Figure 5 compares the performances of U-Net, TransUnet, and 3D DS-TransUnet on several evaluation metrics, namely IoU, F1 score, and the precision–recall (PR) curve (with a threshold range of 0–1), as the number of iterations increases. The PR curve illustrates the relationship between precision and recall, making it particularly suitable for class-imbalanced tasks. The average precision (AP) value, defined as the area under the PR curve, quantifies the model’s overall trade-off between precision and recall across all thresholds. A higher AP indicates that the model maintains more stable performance under varying decision thresholds.
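As an illustration of how a PR curve and its AP value can be obtained from predicted probabilities, a minimal NumPy sketch follows. The evenly spaced thresholds, the trapezoidal integration, and the anchoring of the curve at recall 0 with precision 1 are assumptions made here, so the authors' exact procedure may differ.

```python
import numpy as np


def pr_curve(prob: np.ndarray, label: np.ndarray, n_thresholds: int = 101):
    """Precision and recall at thresholds swept from 1 down to 0.

    Sweeping downward makes recall non-decreasing along the returned arrays.
    """
    label = label.astype(bool).ravel()
    prob = prob.ravel()
    precisions, recalls = [], []
    for t in np.linspace(1.0, 0.0, n_thresholds):
        pred = prob >= t
        tp = np.sum(pred & label)
        fp = np.sum(pred & ~label)
        fn = np.sum(~pred & label)
        # Convention: precision is 1 when nothing is predicted positive.
        precisions.append(tp / (tp + fp) if tp + fp > 0 else 1.0)
        recalls.append(tp / (tp + fn) if tp + fn > 0 else 0.0)
    return np.array(precisions), np.array(recalls)


def average_precision(precisions: np.ndarray, recalls: np.ndarray) -> float:
    """Area under the PR curve by the trapezoidal rule."""
    # Anchor the curve at recall 0 with precision 1 (a common convention).
    p = np.concatenate(([1.0], precisions))
    r = np.concatenate(([0.0], recalls))
    return float(np.sum(0.5 * (p[1:] + p[:-1]) * (r[1:] - r[:-1])))


# Toy example: predicted probabilities and binary labels for eight voxels.
prob = np.array([0.95, 0.85, 0.70, 0.55, 0.40, 0.30, 0.15, 0.05])
label = np.array([1, 1, 0, 1, 0, 0, 1, 0])
precisions, recalls = pr_curve(prob, label)
ap = average_precision(precisions, recalls)
```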

The results clearly show that the 3D DS-TransUnet model outperforms the others in all metrics. This superiority is primarily attributed to the incorporation of the Dual-Scale Swin Transformer structure in the encoder, which enables the extraction of higher-quality features in the model’s shallow layers. As a result, the 3D DS-TransUnet model achieves high performance early in the training process. Furthermore, by introducing the TIF block at the skip connections, multi-scale feature representations are effectively fused, bridging the semantic gap caused by differences in the hierarchical levels of intermediate features.

To provide a more reliable reference, we conducted additional testing on ten extra pairs of synthetic data (outside the training and validation sets). The metrics include precision, IoU, and F1 score. Table 1 compares the performance of the three models, U-Net, TransUnet, and ours (3D DS-TransUnet), each tested at its optimal iteration. As shown in Table 1, 3D DS-TransUnet achieves superior performance over the original TransUnet in synthetic data recognition.

5. Application

To test the recognition ability and generalization of the model from the optimal iteration, we applied it to a pair of synthetic data (labeled 251) and to field seismic data. To mitigate clipping and boundary artifacts when a volume is predicted block by block, overlapping regions are introduced between adjacent blocks, and trilinear interpolation is applied to the predictions. This smooths the transition areas and improves the accuracy and consistency of the final predictions. The interpolation uses only four traces near the boundary, far fewer than the total number of traces, so it does not affect the overall performance. Although the overlapping block strategy may increase computational overhead, we optimized memory management to minimize computational redundancy while ensuring the stability and performance of the model.
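One way to realize overlapping block prediction with smooth transitions is sketched below. It is not the authors' implementation: the weighting scheme (a product of three 1D linear ramps, which tapers each block trilinearly toward its faces), the function names, and the block and overlap sizes are all assumptions, and `predict_fn` is a hypothetical stand-in for the trained network.

```python
import numpy as np


def ramp_weights(length: int, overlap: int) -> np.ndarray:
    """1D weights that rise linearly over `overlap` samples at each end."""
    w = np.ones(length, dtype=np.float64)
    if overlap > 0:
        ramp = np.arange(1, overlap + 1) / (overlap + 1)
        w[:overlap] = ramp
        w[-overlap:] = ramp[::-1]
    return w


def block_starts(dim: int, block: int, step: int) -> list:
    """Start indices covering [0, dim), with the last block flush to the end.

    Assumes dim >= block.
    """
    starts = list(range(0, dim - block + 1, step))
    if starts[-1] != dim - block:
        starts.append(dim - block)
    return starts


def predict_blocks(volume, predict_fn, block=(64, 64, 64), overlap=4):
    """Predict block by block and blend the overlaps with separable weights."""
    wz, wy, wx = (ramp_weights(b, overlap) for b in block)
    weight = wz[:, None, None] * wy[None, :, None] * wx[None, None, :]
    out = np.zeros(volume.shape, dtype=np.float64)
    norm = np.zeros(volume.shape, dtype=np.float64)
    steps = [b - overlap for b in block]
    for z in block_starts(volume.shape[0], block[0], steps[0]):
        for y in block_starts(volume.shape[1], block[1], steps[1]):
            for x in block_starts(volume.shape[2], block[2], steps[2]):
                sl = (slice(z, z + block[0]),
                      slice(y, y + block[1]),
                      slice(x, x + block[2]))
                out[sl] += weight * predict_fn(volume[sl])
                norm[sl] += weight
    # Every voxel is covered by at least one block, so norm is positive.
    return out / norm
```

With an overlap of four samples, only a thin shell around each block is blended, so most voxels keep the prediction of a single block unchanged.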

5.1. Comparisons on the Synthetic Datasets

This section applies the three trained models to a synthetic seismic dataset with dimensions of 256 × 256 × 256. Figure 6a shows the label of the synthetic data, which serves as the reference for comparison. Figure 6b–d present the vertical slice at 194, showing the predicted results of U-Net, TransUnet, and 3D DS-TransUnet, respectively.

The comparison clearly demonstrates that the model proposed in this study, 3D DS-TransUnet, improves the accuracy of feature identification. As indicated by the yellow arrows, the model provides more reliable results: Arrow 1 marks a boundary that is delineated with higher precision, while Arrows 2 and 3 highlight a discontinuity that is more clearly recognized by the model. Overall, these results show that 3D DS-TransUnet delivers the most accurate and consistent recognition performance among the compared models.

This improvement underscores the model’s effectiveness in preserving the integrity of features throughout the seismic data. The self-attention mechanism in the Transformer structure strengthens long-range dependencies between patches. However, by treating the image as a sequence of non-overlapping patches, it overlooks the pixel-level intrinsic structural features within each patch, which can result in the loss of shallow features such as edges and lines.

In contrast, the Dual-Scale Swin Transformer, used in 3D DS-TransUnet, performs feature extraction by dividing the image into patches of different scales during encoding, allowing the patches to complement each other. This approach preserves local continuity during feature extraction, thereby enhancing the model’s robustness and improving segmentation performance.

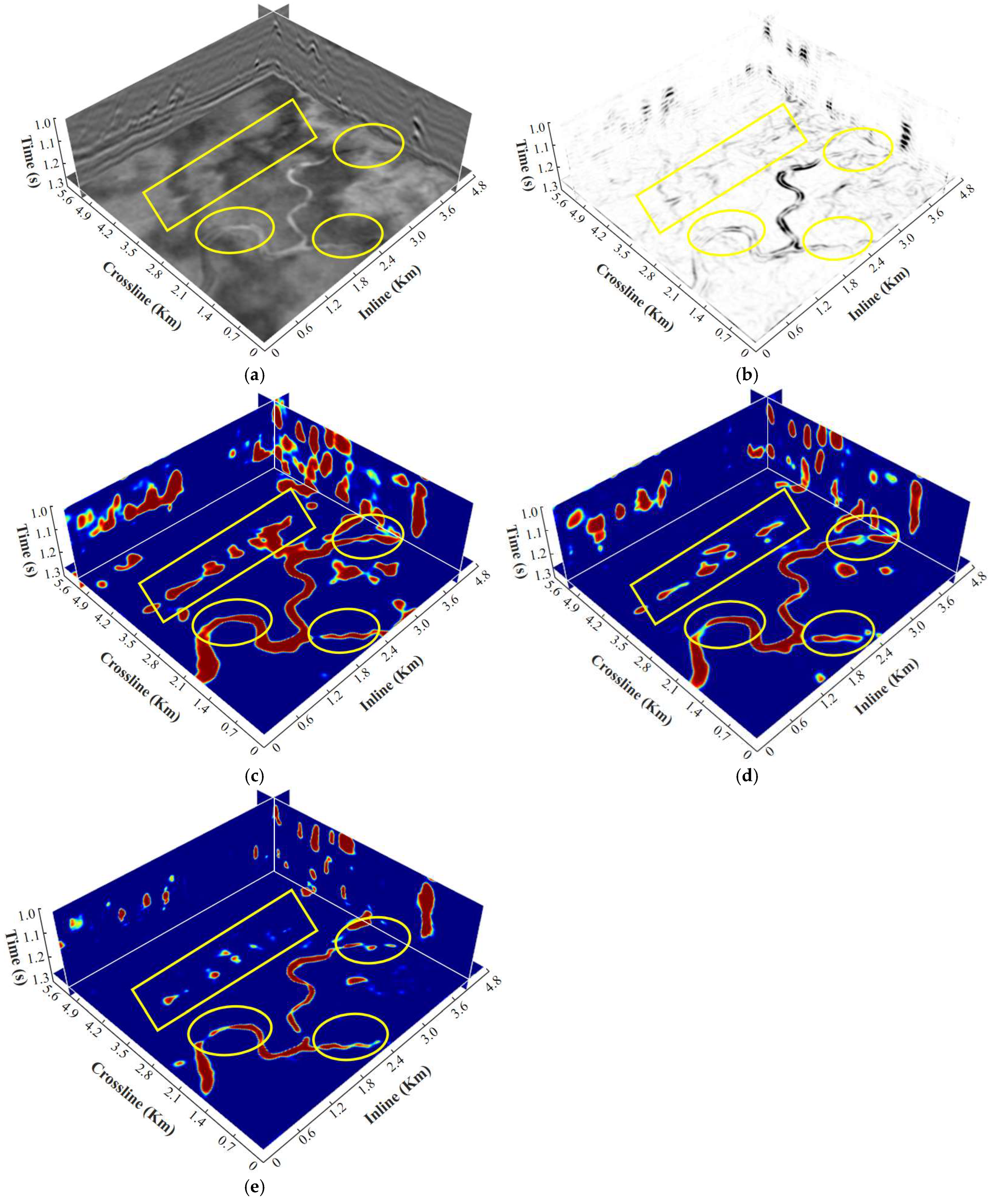

5.2. Evaluations on Field Seismic Data from Tarim Oilfield

To better evaluate the generalization capability of the model, a small 3D field seismic dataset from the Tarim oilfield in northwest China is used for testing in this study. The dimensions of the seismic data are 86 [vertical] × 241 [inline] × 256 [crossline], with a time sampling interval of 2 ms. Figure 7 compares the time slices of the recognition results obtained by the different models. Figure 7a shows the original amplitude image of the seismic data, with a time slice at 1.27 s. Figure 7b displays the channel boundary delineation obtained using the coherence attribute, which serves as a baseline derived from traditional seismic attribute analysis. Figure 7c–e present the results predicted by U-Net, TransUnet, and 3D DS-TransUnet, respectively. Compared with the other models, the results of 3D DS-TransUnet show closer agreement with the coherence attribute, further validating its effectiveness and reliability. In summary, the proposed 3D DS-TransUnet model demonstrates outstanding performance in channel boundary delineation and exhibits stronger suppression of interference.

Compared to U-Net in Figure 7c, TransUnet in Figure 7d achieves higher accuracy in boundary recognition, primarily due to its ability to process features from multiple layers and locations simultaneously. This capability enhances the model’s ability to capture global information and handle complex image structures, enabling the extraction of more detailed and abstract features. However, the 3D DS-TransUnet model demonstrates even greater advantages in feature localization and fine feature recognition, as indicated by the yellow ovals. This improvement is primarily attributed to the Dual-Scale Swin Transformer used in the encoder stage. The Swin Transformer employs a cross-window information exchange mechanism through shifted windows, allowing information sharing between different windows. This design helps overcome the information isolation issue that can arise from relying solely on local window-based self-attention. The window shifting facilitates connections between different regions, effectively capturing long-range dependencies.
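The shifted-window idea can be illustrated in three dimensions with a short NumPy sketch. It shows only how tokens are grouped into cubic windows before and after a cyclic shift of half a window. The attention computation itself and the masking that Swin applies to wrapped-around tokens are omitted, and the function names, the (D, H, W, C) layout, and the sizes are assumptions.

```python
import numpy as np


def window_partition_3d(x: np.ndarray, window: int) -> np.ndarray:
    """Split a (D, H, W, C) feature volume into non-overlapping cubic windows.

    Returns an array of shape (num_windows, window**3, C). Self-attention
    would then be computed independently inside each window.
    Assumes D, H, and W are divisible by `window`.
    """
    d, h, w, c = x.shape
    x = x.reshape(d // window, window, h // window, window, w // window, window, c)
    x = x.transpose(0, 2, 4, 1, 3, 5, 6)
    return x.reshape(-1, window ** 3, c)


def shifted_window_partition_3d(x: np.ndarray, window: int) -> np.ndarray:
    """Cyclically shift by half a window along each axis, then partition."""
    shift = window // 2
    shifted = np.roll(x, shift=(-shift, -shift, -shift), axis=(0, 1, 2))
    return window_partition_3d(shifted, window)


features = np.arange(8 * 8 * 8, dtype=np.float32).reshape(8, 8, 8, 1)
regular = window_partition_3d(features, window=4)
shifted = shifted_window_partition_3d(features, window=4)
```

In this toy case the first regular window holds the tokens at indices 0 to 3 along each axis, while the first shifted window holds indices 2 to 5 and therefore straddles all eight regular windows. Alternating the two partitions across layers is what lets information cross window borders.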

Additionally, the Swin Transformer effectively addresses the computational and memory bottlenecks of traditional global self-attention mechanisms, while preserving strong capabilities for modeling local features. By reducing computation and memory usage, it significantly enhances the model’s efficiency.
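The saving can be made concrete by extending the 2D complexity expressions from the Swin Transformer paper to cubic windows. This extension is an assumption about how the 3D variant is configured, and the symbols are introduced here for illustration: $N = D \times H \times W$ is the number of tokens in a feature volume, $C$ is the channel dimension, and $M$ is the window size along each axis.

$$\Omega(\text{global attention}) = 4NC^2 + 2N^2C$$

$$\Omega(\text{window attention}) = 4NC^2 + 2M^3NC$$

The second term is quadratic in $N$ for global attention but linear in $N$ for window attention. For example, with $N = 32^3 = 32768$ tokens and $M = 4$, the second term shrinks by a factor of $N / M^3 = 32768 / 64 = 512$.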

As evidenced by the yellow rectangles in Figure 7c–e, 3D DS-TransUnet demonstrates robust anti-interference capabilities. In fact, as indicated in Figure 7b, the coherence attribute results reveal that this region does not display the typical meandering morphology of channels.

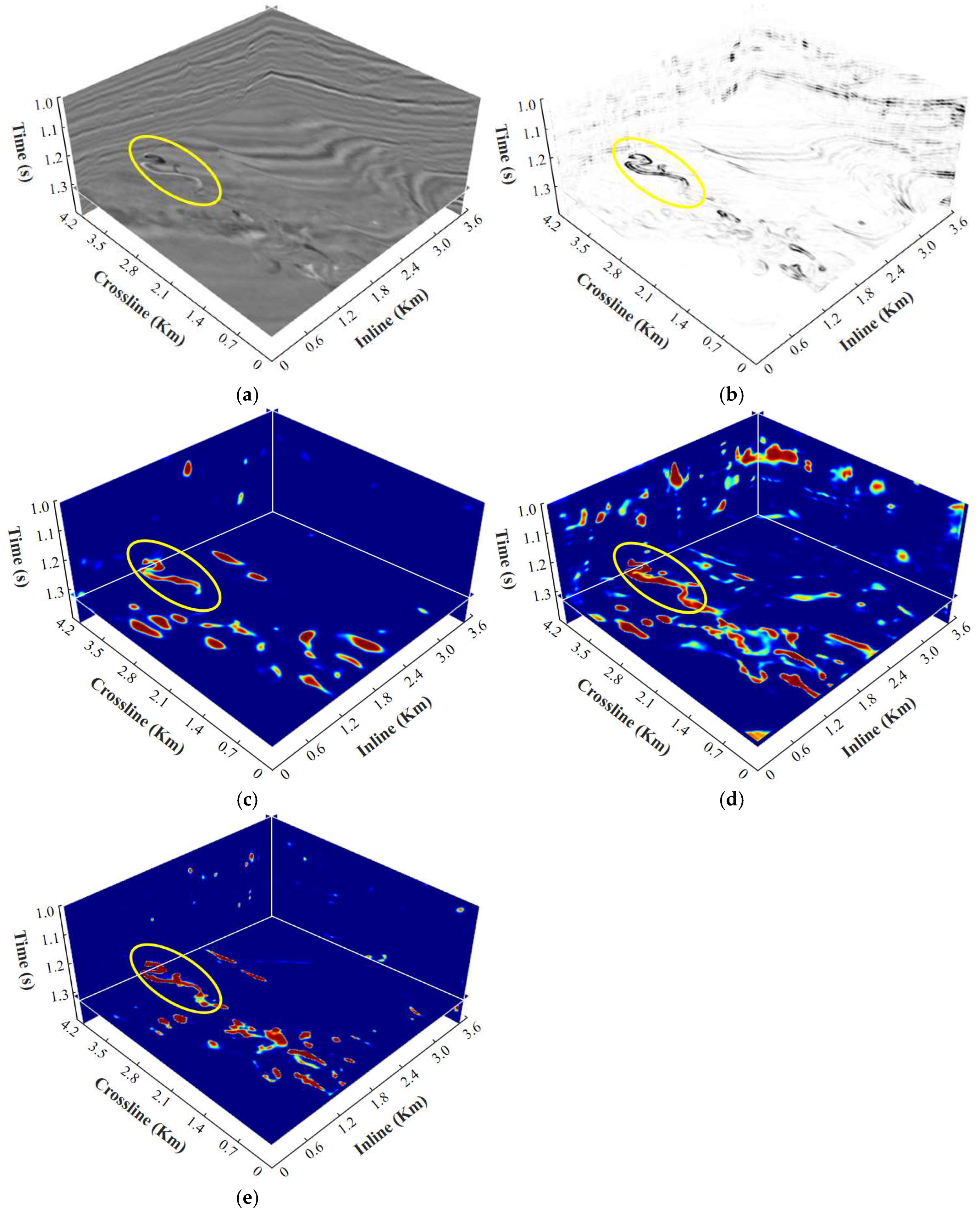

5.3. Evaluations on Field Seismic Data from Parihaka3D

To further evaluate the generalization capability of the proposed model and verify the effectiveness of our method, we conducted experiments using the publicly available Parihaka3D seismic dataset, which contains a series of meandering channels. The dataset has an original sampling interval of 3 ms. It was partitioned into multiple sub-volumes with dimensions of 128 [vertical] × 256 [inline] × 256 [crossline], consistent with the model input size, to ensure spatial continuity in the segmentation results.

Figure 8a shows the original seismic amplitude, while Figure 8b presents the identification result using the coherence attribute, which serves as the interpretation reference. In the time slice, a small meandering channel is clearly visible, accompanied by a minor distributary branch. Observation of the seismic profile indicates that there is no strong seismic response above the selected slice. Figure 8c–e display the prediction outputs of the U-Net, TransUnet, and 3D DS-TransUnet models, respectively.

It is evident that Figure 8e demonstrates a significant advantage in channel boundary delineation, as highlighted by the yellow-marked regions. Compared with the coherence attribute result in Figure 8b, this region exhibits the typical meandering morphology of channels as well as small distributary branches. In comparison with U-Net and TransUnet, 3D DS-TransUnet provides more accurate delineation of channel boundaries and better preservation of fine details. Moreover, the model exhibits superior suppression of interference, particularly in the seismic profile dimension.

This advantage is primarily attributed to the introduction of the TIF block in the skip connections. The module effectively fuses the output features from the dual-scale encoder, allowing information from different scales to interact and merge. This overcomes the semantic gap between scales that is common in traditional methods, while preserving the integrity of global information and preventing the loss or confusion of local features. Moreover, the introduction of the Swin Transformer in the decoder enhances the model’s ability to capture long-range dependencies and significantly improves decoding accuracy during the upsampling process, resulting in more precise final outputs. Especially under complex interference conditions, the model demonstrates stable recognition of target features. These factors collectively contribute to 3D DS-TransUnet’s excellent performance in the identification and segmentation of complex geological features, showcasing its strong robustness and outstanding decoding capabilities.

6. Conclusions

In this study, we introduce the DS-TransUnet model to the field of intelligent seismic interpretation, with the goal of improving the accuracy and robustness of feature recognition in 3D seismic images. To accommodate the input of 3D seismic data, we innovatively modify the convolutional module to use 3D convolution operations. Experimental results demonstrate that, in tests on both synthetic data and field seismic profiles, 3D DS-TransUnet not only significantly enhances the accuracy of channel recognition under complex geological conditions, but also effectively suppresses interference, while exhibiting outstanding robustness and stability. However, due to the high similarity of seismic profiles, the model faces challenges in distinguishing between channel features and karst cave features, especially in the case of continuous karst cave structures. Relying solely on a deep learning framework still makes it difficult to accurately differentiate these similar features in seismic images. Meanwhile, there remains room for improvement in the continuity recognition of channels. To address these limitations, future research will explore two directions:

Incorporating geological background knowledge and conventional seismic attributes will provide the model with explicit prior constraints and enhance its understanding of complex geological structures. Expert-driven geological knowledge graphs are expected to guide the model in more accurately distinguishing between channels and similarly responding features such as karst caves, thereby compensating for the semantic limitations of conventional deep learning.

To further enhance model robustness and generalization, future work will focus on constructing more diverse and representative training and testing datasets that encompass a broader range of geological settings and noise patterns, thereby improving the model’s adaptability, stability, and reliability in field seismic interpretation tasks.