Current Status and Challenges in Usability Evaluation Research on Human–Machine Interaction in Intelligent Vehicles: A Systematic Review

Abstract

Featured Application

1. Introduction

2. Materials and Methods

2.1. Research Framework

2.2. Phase 1: Acquiring Literature

- Operational Procedures

- Important Notes

- Execution Results

2.3. Phase 2: Arranging Literature

2.3.1. Literature Screening

- Operational Procedures

- Important Notes

- Execution Results

2.3.2. Literature Coding

- Operational Procedures

- Important Notes

- Execution Results

2.3.3. Literature Counting

2.4. Phase 3: Analyzing Literature

- Operational Procedures

- Important Notes

- Execution Results

3. Results and Discussion

3.1. Current Development Status of Usability Evaluation Research on Human–Machine Interaction in Intelligent Vehicles (Addressing Research Question 1)

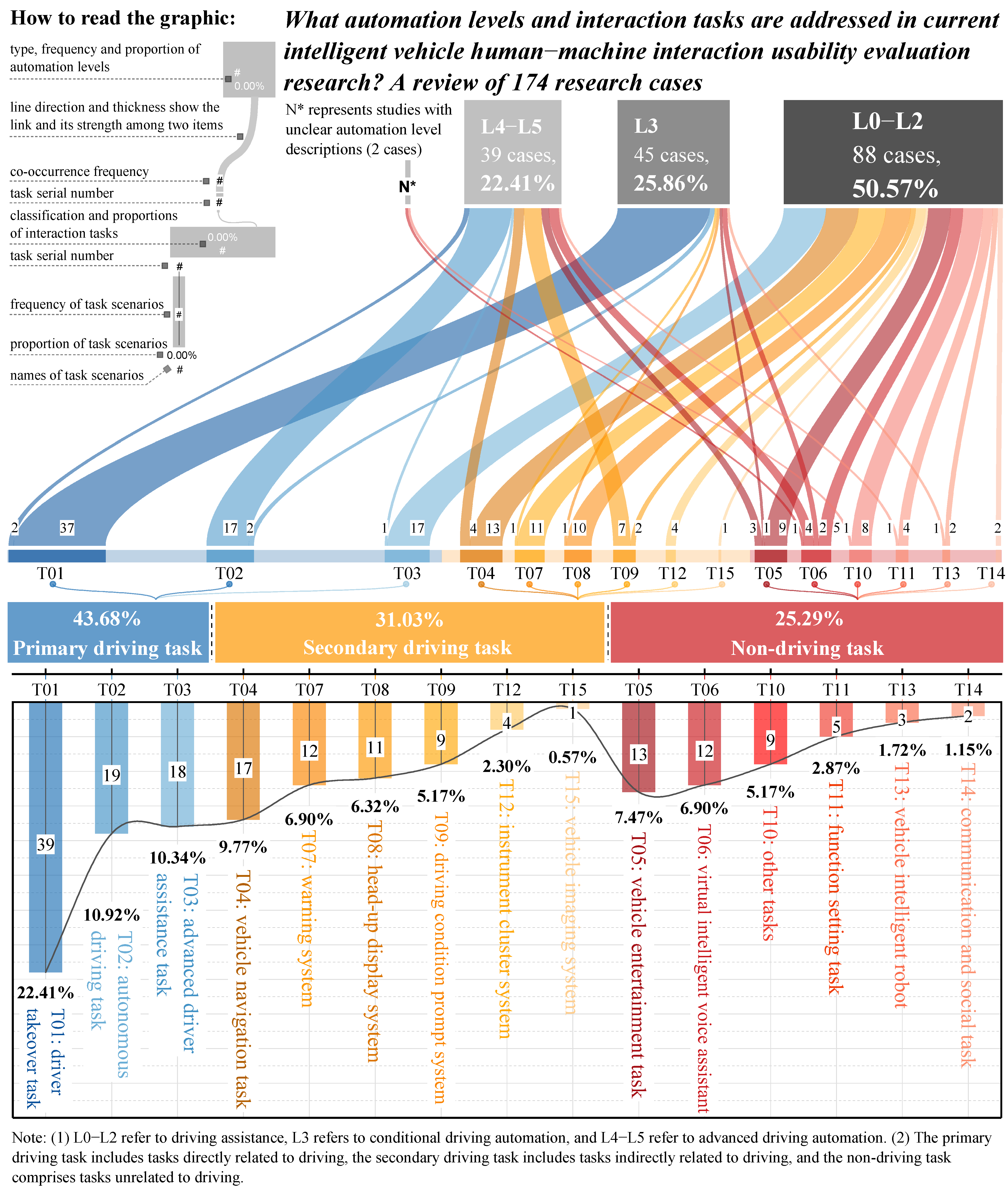

3.1.1. Research Status 1: Existing Human–Machine Interaction Usability Evaluation Research Primarily Focuses on L0–L2 Driving-Assistance Scenarios

3.1.2. Research Status 2: Current Research Focuses on Primary Driving Tasks, While Non-Driving Tasks Receive Limited Attention

3.1.3. Research Status 3: Each Automation Level Triggers Specific Human Factors Issues

- At L0–L2 levels (driving assistance), drivers remain the primary agents for vehicle control and environmental monitoring. Accordingly, current human–machine interaction usability evaluation research focuses on optimizing the effectiveness of task scenarios such as advanced driver assistance systems, warning systems, vehicle navigation tasks, and head-up display systems. In other words, the usability goal at the L0–L2 levels is to provide effective assistance to drivers who remain focused on driving [54], so that information delivery is both efficient and safe.

- When automation levels reach L3 (conditional driving automation), human–machine interaction conflicts concentrate on the critical point of “human–machine co-driving” [55]. Accordingly, the vast majority of current human–machine interaction usability evaluation research converges on driving takeover as the core task (82.22%, 37/45). Designing efficient and reliable takeover requests, so that drivers can safely and promptly regain control from the system, has become the most critical and challenging human factors problem at this stage [56,57].

- When automation levels enter L4–L5 (advanced driving automation), the driver’s role completely transforms to that of a passenger. Accordingly, current human–machine interaction usability evaluation research priorities also shift to two aspects: first, human–machine interaction surrounding autonomous driving tasks themselves, e.g., how to effectively communicate the vehicle’s driving intentions and decision-making rationale to passengers [58]; second, maintaining passengers’ situational awareness of the vehicle and surrounding environment through driving condition prompt systems [59]. However, it is noteworthy that although passengers are liberated from driving tasks, current research on non-driving tasks such as virtual intelligent voice assistants, vehicle entertainment, and communication and social tasks remains relatively limited. This gap poses potential constraints on realizing the value of advanced automated driving (see discussion of “Potential Challenge 1” below).
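The three level groups above can be summarized as a small lookup table. The following is only an illustrative sketch: the names `LevelProfile`, `PROFILES`, and `profile_for` are hypothetical, and the role and focus strings are paraphrases of the bullets above rather than terms defined by the review or by any standard.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class LevelProfile:
    """One automation-level group as described in the bullets above."""
    levels: tuple[int, ...]            # automation levels in the group
    human_role: str                    # paraphrased, not an official term
    evaluation_focus: tuple[str, ...]  # task scenarios named in the text


# Hypothetical encoding of the three groups; wording is a paraphrase.
PROFILES = (
    LevelProfile(
        levels=(0, 1, 2),
        human_role="driver controls the vehicle and monitors the environment",
        evaluation_focus=(
            "advanced driver assistance systems",
            "warning systems",
            "vehicle navigation tasks",
            "head-up display systems",
        ),
    ),
    LevelProfile(
        levels=(3,),
        human_role="driver shares control with the system (co-driving)",
        evaluation_focus=("driving takeover",),
    ),
    LevelProfile(
        levels=(4, 5),
        human_role="passenger",
        evaluation_focus=(
            "communicating driving intentions and decision rationale",
            "driving condition prompts for situational awareness",
        ),
    ),
)


def profile_for(level: int) -> LevelProfile:
    """Return the group that contains the given automation level."""
    for profile in PROFILES:
        if level in profile.levels:
            return profile
    raise ValueError(f"unknown automation level: {level}")
```

For example, `profile_for(3).evaluation_focus` returns the single-item tuple containing driving takeover, which mirrors the concentration of L3 research on that task.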

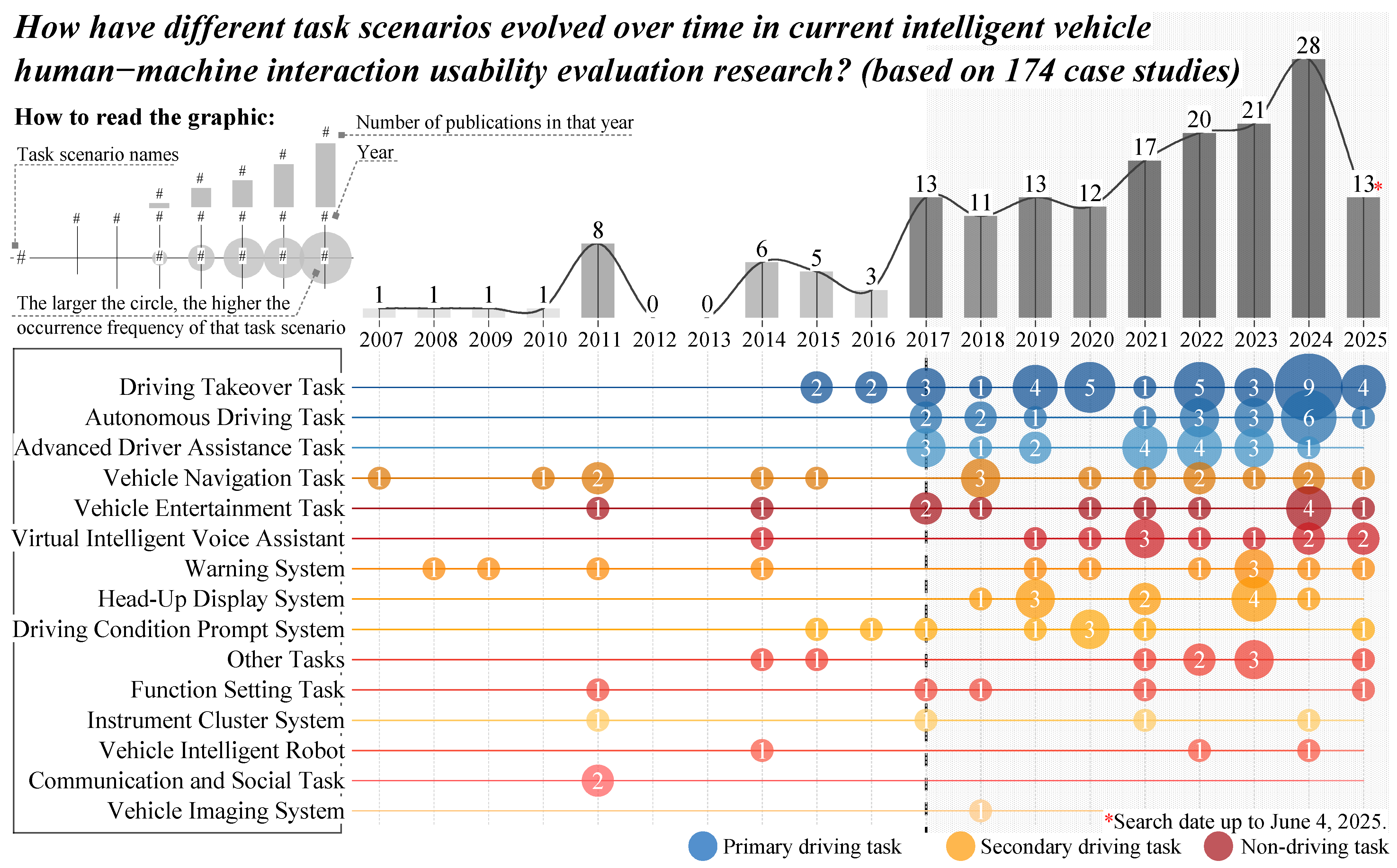

3.1.4. Research Status 4: The Scope of Task Scenarios in Usability Evaluation Research on Human–Machine Interaction in Intelligent Vehicles Is Expanding (Breadth) and Diversifying (Depth)

- As indicated by the blue circles in Figure 3, since 2017, usability evaluation research focusing on primary driving task scenarios has begun to receive intensive attention, including driving takeover tasks, advanced driver assistance, and autonomous driving tasks. Although research on these primary driving tasks started relatively late, their growth momentum is strong, occupying a significant proportion (43.68%, 76/174) in a short period. This rapid convergence of research topics essentially means that as automated driving levels improve, designing safe, efficient, and trustworthy human–machine interaction for primary driving tasks has become a frontier issue in the current field of human–machine interaction usability evaluation.

- As indicated by the yellow circles in Figure 3, since 2017, usability evaluation research focusing on secondary driving task scenarios has also begun to receive intensive attention, for example, vehicle navigation tasks, warning systems, head-up display systems (HUDs), and driving condition prompt systems. This trend indicates that the academic community has begun to systematically study navigation, HUD, and other functions as an independent “secondary driving” task cluster to better support primary driving tasks.

- As indicated by the red circles in Figure 3, since 2017, the number of usability evaluation studies focusing on non-driving tasks has continued to rise, such as vehicle entertainment tasks, virtual intelligent voice assistants, other tasks, and function-setting tasks. This trend is closely coupled with the development of intelligent vehicle technology [60,61], where technological progress has not only enriched vehicle functions but also transformed the driver’s role. Therefore, how to enable drivers to safely and conveniently engage in non-driving activities during the driving process has become a new research hotspot.

- As shown in Figure 3, research has long addressed “traditional” task scenarios such as vehicle navigation tasks, vehicle entertainment tasks, virtual voice assistants, and vehicle function settings (such as air conditioning, windows, and seat adjustment), but before 2017 this work was scattered and failed to attract widespread attention. After 2017, research on these traditional tasks became more concentrated, and the number of studies increased significantly. This suggests that, as intelligent cockpits (with large touch screens and multimodal interaction) become mainstream configurations, the interaction methods for traditional task scenarios are being reshaped [62]. The academic community therefore needs to re-examine and optimize the usability of these traditional interaction tasks from new evaluation perspectives to adapt to new hardware platforms and user expectations.

- As shown in Figure 3, in recent years, some studies have begun to focus on other tasks that were previously overlooked, namely, edge tasks or atypical tasks. Examples include the usability of touchscreen size, interface layout, and key design [63,64,65,66]; the usability of handwriting input box size in vehicle information systems [67]; the usability of T-shaped panel layout design [68]; the interface layout design of vehicle information systems [69]; the font design of central control interfaces [70]; and adaptive vehicle system design [71]. This shift reflects rising user expectations for human–machine interaction usability, moving from meeting basic functional needs to pursuing refined, detailed experiences. It also indicates that human–machine interaction design is moving further toward “user-centered” approaches, which emphasize that optimizing the details of non-core functions, in addition to technological innovation, can enhance overall user satisfaction and product competitiveness.

3.1.5. Research Status 5: Usability of Vehicle Navigation Tasks Remains a Consistently Important Research Topic

3.1.6. Research Status 6: Current Research Tends to Use Multimodal Interaction When Exploring the Usability of Driving Takeover Tasks While Highly Concentrating on Single-Modal Optimization and Application for Secondary Driving Tasks and Non-Driving Tasks

- In research related to secondary driving tasks and non-driving tasks, 87.31% (117/134) of studies focus on unimodal interaction, while research exploring multimodal interaction usability is limited, accounting for only 12.69% (17/134). This indicates that existing research tends to optimize single interaction modalities when handling non-primary driving tasks such as infotainment and navigation settings.

- In contrast to the aforementioned trend, when exploring the usability of driving takeover tasks, existing research leans toward multimodal interaction. Specifically, multimodal interaction research on driving takeover tasks accounts for 57.38% (35/61), exceeding unimodal interaction research (42.62%, 26/61). This pattern arises because the core objective of driving takeover tasks is to ensure that drivers can quickly and accurately receive and understand critical information, and multimodal interaction is key to achieving this goal [8,86,87,88]. Multimodal interaction exploits the synergy and complementarity of information across channels, enabling more reliable transmission of critical information to drivers [88,89,90]. This not only enhances system fault tolerance but also effectively alerts drivers and helps them quickly integrate information to establish comprehensive situational awareness.
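The modality shares in this subsection follow directly from the study counts. As a minimal sketch for readers who want to recompute them, the snippet below derives each percentage from the counts reported above; the helper names `share` and `modality_shares` and the dictionary layout are hypothetical, and rounding to two decimals is an assumption about how the percentages were reported.

```python
def share(count: int, total: int) -> float:
    """Return count/total as a percentage rounded to two decimals."""
    if total <= 0:
        raise ValueError("total must be positive")
    return round(100 * count / total, 2)


# Study counts as reported in this subsection.
counts = {
    "secondary and non-driving tasks": {"unimodal": 117, "multimodal": 17},
    "driving takeover tasks": {"unimodal": 26, "multimodal": 35},
}


def modality_shares(group: dict[str, int]) -> dict[str, float]:
    """Convert per-modality study counts into percentage shares."""
    total = sum(group.values())
    return {modality: share(n, total) for modality, n in group.items()}


if __name__ == "__main__":
    for task, group in counts.items():
        print(task, modality_shares(group))
```

Because each share is rounded independently, the two percentages in a group may not always sum to exactly 100.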

3.1.7. Research Status 7: From the Perspective of Modality Types, Existing Research Has Explored Diverse Interaction Modalities

- In terms of output modality applications, current research presents the following characteristics. First, visual output modalities represented by HUD interfaces, central control interfaces, and dashboard interfaces are the most widely applied. Among these, the frequent application of HUD interfaces is particularly noteworthy and reflects their advantages in reducing driver gaze deviation from the road and enhancing driving safety [91]. Second, among non-visual modalities, auditory feedback (such as speech and acoustic signals) has gained widespread recognition due to its advantages in reducing driving distraction risks [92]. Additionally, haptic vibration has received attention as an auxiliary feedback method, which suggests that in driving contexts with high visual and cognitive load, timely haptic cues can effectively supplement information and enhance the immediacy and reliability of interaction [93,94].

- Beyond the aforementioned mainstream output modalities, some studies have begun to explore the application value of emerging interaction technologies. For example, some researchers have attempted to use changes in indicator light colors and flashing frequencies to convey warning or status information [95,96,97,98]. Meanwhile, some studies have started focusing on special modalities such as olfactory or haptic temperature feedback [99], aiming to relieve driving fatigue and help drivers maintain alertness through the release of specific odors or the provision of temperature stimulation. Additionally, other research has explored the feasibility of replacing traditional optical rearview mirrors with electronic rearview mirrors [100]. It should be noted that although these emerging modalities show certain application prospects, their applicability, stability, and user acceptance in real vehicle environments still require more comprehensive empirical validation.

- In terms of input modality applications, current research presents the following characteristics. First, touch screens are among the most frequently studied input devices, which reflects the heavy reliance of current intelligent vehicles on information integration and operational flexibility in central control interaction. However, although touch screens offer rich interaction capabilities and visual expressiveness, their distraction risks during driving are equally noteworthy. Second, speech interaction has become a research focus because it frees the hands and suits multitasking. Additionally, natural or physical interaction modalities such as gestures, steering wheel keys, and central control keys also play indispensable roles in specific scenarios [101,102], which supports their continued relevance.

3.1.8. Research Status 8: Fragmented Research on Multimodal Interaction Usability in Intelligent Vehicles, Particularly for Driving Takeover Tasks

3.2. Potential Challenges in Usability Evaluation Research on Human–Machine Interaction in Intelligent Vehicles (Addressing Research Question 2)

3.2.1. Potential Challenge 1: Insufficient Attention to Non-Driving Tasks in Existing Research, Which Poses a Potential Constraint on the Value Realization of Advanced Automated Driving

3.2.2. Potential Challenge 2: For L3 Conditional Driving Automation Scenarios, Existing Research Overly Focuses on Driving Takeover Tasks, Which May Mask Systemic Safety Risks

3.2.3. Potential Challenge 3: Significant Gaps in Usability Research on Multimodal Interaction in Secondary and Non-Driving Tasks Will Constrain the Overall Development of Intelligent Vehicles

3.2.4. Potential Challenge 4: The Absence of Usability Standards for Multimodal Interaction in Intelligent Vehicles Hinders Industry Development

3.3. Development Recommendations for Usability Evaluation Research on Human–Machine Interaction in Intelligent Vehicles (Addressing Research Question 3)

3.3.1. Development Recommendation 1: Future Research Should Strengthen Usability Studies of Non-Driving Tasks in L4–L5 Advanced Driving Automation Scenarios

3.3.2. Development Recommendation 2: Future Research Needs to Conduct Specialized Usability Studies for Task Scenarios at Each Automation Level

3.3.3. Development Recommendation 3: For Task Scenarios Under L3 Level, Future Research Should Expand Its Scope from Single Takeover Tasks to the Complete Interaction Cycle of L3 Automated Driving

3.3.4. Development Recommendation 4: Future Research Needs to Promptly Adjust Usability Evaluation Work to Meet Increasingly Complex Evaluation Demands

3.3.5. Development Recommendation 5: Future Research Should Continuously Focus on How to Design and Evaluate Vehicle Navigation Tasks

- With the increasing maturity of technologies such as augmented reality head-up displays (AR-HUDs), high-precision speech recognition, and natural language processing (NLP), the presentation and interaction methods of vehicle navigation information are undergoing a paradigm shift [133,134]. The focus of future usability research on vehicle navigation tasks will shift from traditional two-dimensional screen visual optimization toward the deep integration of visual, auditory, and even haptic feedback, aiming to build seamless, immersive, and highly intuitive multimodal interaction experiences.

- Vehicle navigation systems are transforming from independent functions into the “data brain” of intelligent vehicles, capable of providing data support for advanced driver assistance systems (ADASs) and obtaining real-time traffic information through vehicle-to-everything (V2X) communication [135]. Therefore, a key design and evaluation challenge is how to integrate navigation, driving assistance, and external environment information on the interface so that drivers can accurately understand the vehicle’s overall operational status and decision intentions.

3.3.6. Development Recommendation 6: Future Research Should Establish a Standardized Framework or Practical Guidelines for Multimodal Interaction Usability in Intelligent Vehicles to Meet Development Needs

4. Limitations of the Research

5. Conclusions and Future Work

5.1. Research Conclusions

5.2. Future Work

Supplementary Materials

Author Contributions

Funding

Institutional Review Board Statement

Informed Consent Statement

Data Availability Statement

Acknowledgments

Conflicts of Interest

References

- Li, W.; Cao, D.; Tan, R.; Shi, T.; Gao, Z.; Ma, J.; Guo, G.; Hu, H.; Feng, J.; Wang, L. Intelligent Cockpit for Intelligent Connected Vehicles: Definition, Taxonomy, Technology and Evaluation. IEEE Trans. Intell. Veh. 2024, 9, 3140–3153.

- Chen, L.; Li, Y.; Huang, C.; Xing, Y.; Tian, D.; Li, L.; Hu, Z.; Teng, S.; Lv, C.; Wang, J.; et al. Milestones in Autonomous Driving and Intelligent Vehicles—Part I: Control, Computing System Design, Communication, HD Map, Testing, and Human Behaviors. IEEE Trans. Syst. Man Cybern. Syst. 2023, 53, 5831–5847.

- Cao, J.; Lin, L.; Zhang, J.; Zhang, L.; Wang, Y.; Wang, J. The development and validation of the perceived safety of intelligent connected vehicles scale. Accid. Anal. Prev. 2021, 154, 106092.

- Tufano, F.; Bahadure, S.W.; Tufo, M.; Novella, L.; Fiengo, G.; Santini, S. An Optimization Framework for Information Management in Adaptive Automotive Human–Machine Interfaces. Appl. Sci. 2023, 13, 10687.

- Li, J.; Liu, J.; Wang, X.; Liu, L. The Impact of Transparency on Driver Trust and Reliance in Highly Automated Driving: Presenting Appropriate Transparency in Automotive HMI. Appl. Sci. 2024, 14, 3203.

- Bellani, P.; Picardi, A.; Caruso, F.; Gaetani, F.; Brevi, F.; Arquilla, V.; Caruso, G. Enhancing User Engagement in Shared Autonomous Vehicles: An Innovative Gesture-Based Windshield Interaction System. Appl. Sci. 2023, 13, 9901.

- Kim, S.; Oh, J.; Seong, M.; Jeon, E.; Moon, Y.-K.; Kim, S. Assessing the Impact of AR HUDs and Risk Level on User Experience in Self-Driving Cars: Results from a Realistic Driving Simulation. Appl. Sci. 2023, 13, 4952.

- Tan, Z.; Dai, N.; Su, Y.; Zhang, R.; Li, Y.; Wu, D.; Li, S. Human–machine interaction in intelligent and connected vehicles: A review of status quo, issues, and opportunities. IEEE Trans. Intell. Transp. Syst. 2021, 23, 13954–13975.

- Noy, I.Y.; Shinar, D.; Horrey, W.J. Automated driving: Safety blind spots. Saf. Sci. 2018, 102, 68–78.

- Hancock, P.A.; Kajaks, T.; Caird, J.K.; Chignell, M.H.; Mizobuchi, S.; Burns, P.C.; Feng, J.; Fernie, G.R.; Lavallière, M.; Noy, I.Y.; et al. Challenges to Human Drivers in Increasingly Automated Vehicles. Hum. Factors 2020, 62, 310–328.

- Yan, M.; Rampino, L.; Caruso, G. Comparing User Acceptance in Human–Machine Interfaces Assessments of Shared Autonomous Vehicles: A Standardized Test Procedure. Appl. Sci. 2025, 15, 45.

- Biondi, F.; Alvarez, I.; Jeong, K.-A. Human–Vehicle Cooperation in Automated Driving: A Multidisciplinary Review and Appraisal. Int. J. Hum.–Comput. Interact. 2019, 35, 932–946.

- Graichen, L.; Graichen, M.; Krems, J.F. Effects of Gesture-Based Interaction on Driving Behavior: A Driving Simulator Study Using the Projection-Based Vehicle-in-the-Loop. Hum. Factors 2022, 64, 324–342.

- Roche, F.; Somieski, A.; Brandenburg, S. Behavioral Changes to Repeated Takeovers in Highly Automated Driving: Effects of the Takeover-Request Design and the Nondriving-Related Task Modality. Hum. Factors 2019, 61, 839–849.

- van de Merwe, K.; Mallam, S.; Nazir, S. Agent Transparency, Situation Awareness, Mental Workload, and Operator Performance: A Systematic Literature Review. Hum. Factors 2024, 66, 180–208.

- François, M.; François, O.; Alexandra, F.; Philippe, C.; Navarro, J. Automotive HMI design and participatory user involvement: Review and perspectives. Ergonomics 2017, 60, 541–552.

- Harms, I.M.; Auerbach, D.A.M.; Papadimitriou, E.; Hagenzieker, M.P. Frequently Used Vehicle Controls While Driving: A Real-World Driving Study Assessing Internal Human–Machine Interface Task Frequencies and Influencing Factors. Appl. Sci. 2025, 15, 5230.

- Li, L.; Huang, W.-L.; Liu, Y.; Zheng, N.-N.; Wang, F.-Y. Intelligence testing for autonomous vehicles: A new approach. IEEE Trans. Intell. Veh. 2016, 1, 158–166.

- Naujoks, F.; Hergeth, S.; Wiedemann, K.; Schömig, N.; Keinath, A. Use Cases for Assessing, Testing, and Validating the Human Machine Interface of Automated Driving Systems. Proc. Hum. Factors Ergon. Soc. Annu. Meet. 2018, 62, 1873–1877.

- Zhou, Y.; Sun, Y.; Tang, Y.; Chen, Y.; Sun, J.; Poskitt, C.M.; Liu, Y.; Yang, Z. Specification-based autonomous driving system testing. IEEE Trans. Softw. Eng. 2023, 49, 3391–3410.

- Forster, Y.; Hergeth, S.; Naujoks, F.; Beggiato, M.; Krems, J.F.; Keinath, A. Learning to use automation: Behavioral changes in interaction with automated driving systems. Transp. Res. Part F Traffic Psychol. Behav. 2019, 62, 599–614.

- Forster, Y.; Hergeth, S.; Naujoks, F.; Krems, J.; Keinath, A. User Education in Automated Driving: Owner’s Manual and Interactive Tutorial Support Mental Model Formation and Human-Automation Interaction. Information 2019, 10, 143.

- Tang, S.; Zhang, Z.; Zhang, Y.; Zhou, J.; Guo, Y.; Liu, S.; Guo, S.; Li, Y.-F.; Ma, L.; Xue, Y.; et al. A Survey on Automated Driving System Testing: Landscapes and Trends. ACM Trans. Softw. Eng. Methodol. 2023, 32, 1–62.

- Forster, Y.; Frison, A.-K.; Wintersberger, P.; Geisel, V.; Hergeth, S.; Riener, A. Where we come from and where we are going: A review of automated driving studies. In Proceedings of the AutomotiveUI ‘19: Proceedings of the 11th International Conference on Automotive User Interfaces and Interactive Vehicular Applications: Adjunct Proceedings, Utrecht, The Netherlands, 21–25 September 2019; pp. 140–145.

- Su, Y.; Tan, Z.; Dai, N. Changes in Usability Evaluation of Human-Machine Interfaces from the Perspective of Automated Vehicles. In Proceedings of the AHFE 2021 Virtual Conferences on Usability and User Experience, Human Factors and Wearable Technologies, Human Factors in Virtual Environments and Game Design, and Human Factors and Assistive Technology, Virtual, 25–29 July 2021; pp. 886–893.

- Albers, D.; Radlmayr, J.; Loew, A.; Hergeth, S.; Naujoks, F.; Keinath, A.; Bengler, K. Usability Evaluation—Advances in Experimental Design in the Context of Automated Driving Human–Machine Interfaces. Information 2020, 11, 240.

- Paul, J.; Lim, W.M.; O’Cass, A.; Hao, A.W.; Bresciani, S. Scientific procedures and rationales for systematic literature reviews (SPAR-4-SLR). Int. J. Consum. Stud. 2021, 45, 1147.

- Page, M.J.; McKenzie, J.E.; Bossuyt, P.M.; Boutron, I.; Hoffmann, T.C.; Mulrow, C.D.; Shamseer, L.; Tetzlaff, J.M.; Akl, E.A.; Brennan, S.E.; et al. The PRISMA 2020 statement: An updated guideline for reporting systematic reviews. BMJ 2021, 372, n71.

- Page, M.J.; Moher, D.; Bossuyt, P.M.; Boutron, I.; Hoffmann, T.C.; Mulrow, C.D.; Shamseer, L.; Tetzlaff, J.M.; Akl, E.A.; Brennan, S.E.; et al. PRISMA 2020 explanation and elaboration: Updated guidance and exemplars for reporting systematic reviews. BMJ 2021, 372, n160.

- Boboc, R.G.; Gîrbacia, F.; Butilă, E.V. The Application of Augmented Reality in the Automotive Industry: A Systematic Literature Review. Appl. Sci. 2020, 10, 4259.

- Alanazi, F. A Systematic Literature Review of Autonomous and Connected Vehicles in Traffic Management. Appl. Sci. 2023, 13, 1789.

- ISO/SAE PAS 22736:2021; Taxonomy and Definitions for Terms Related to Driving Automation Systems for On-Road Motor Vehicles. International Organization for Standardization: London, UK, 2021.

- J3016_202104; Taxonomy and Definitions for Terms Related to Driving Automation Systems for On-Road Motor Vehicles. SAE International: Warrendale, PA, USA, 2021.

- GB/T 40429—2021; Taxonomy of Driving Automation for Vehicles. China National Standardization Management Committee: Beijing, China, 2021. (In Chinese)

- Llaneras, R.; Glaser, Y.; Green, C.; August, M.; Landry, S. Operational Evaluation of a Pre-Production L2 System HMI; SAE International: Warrendale, PA, USA, 2025.

- Shen, S.; Neyens, D.M. Assessing drivers’ response during automated driver support system failures with non-driving tasks. J. Saf. Res. 2017, 61, 149–155.

- Perrier, M.J.R.; Louw, T.L.; Carsten, O. User-centred design evaluation of symbols for adaptive cruise control (ACC) and lane-keeping assistance (LKA). Cogn. Technol. Work 2021, 23, 685–703.

- Roberts, S.; Ebadi, Y.; Talreja, N.; Michael A Knodler, J.; Fisher, D.L. Designing and Evaluating an Informative Interface for Transfer of Control in a Level 2 Automated Driving System. In Proceedings of the 14th International Conference on Automotive User Interfaces and Interactive Vehicular Applications, Seoul, Republic of Korea, 17–20 September 2022; pp. 253–262.

- Sánchez–Mateo, S.; Pérez–Moreno, E.; Jiménez, F. Driver Monitoring for a Driver-Centered Design and Assessment of a Merging Assistance System Based on V2V Communications. Sensors 2020, 20, 5582.

- Monsaingeon, N.; Caroux, L.; Langlois, S.; Lemercier, C. Earcons to reduce mode confusions in partially automated vehicles: Development and application of an evaluation method. Int. J. Hum.-Comput. Stud. 2023, 176, 103044.

- Bai, X.; Feng, J. Awakening the Disengaged: Can Driving-Related Prompts Engage Drivers in Partial Automation? Hum. Factors 2025, 67, 731–752.

- Marcano, M.; Tango, F.; Sarabia, J.; Chiesa, S.; Pérez, J.; Díaz, S. Can Shared Control Improve Overtaking Performance? Combining Human and Automation Strengths for a Safer Maneuver. Sensors 2022, 22, 9093.

- Takada, Y.; Boer, E.R.; Sawaragi, T. Driver assist system for human–machine interaction. Cogn. Technol. Work 2017, 19, 819–836.

- Liu, Y.; Zhang, J.; Li, Y.; Hansen, P.; Wang, J. Human-Computer Collaborative Interaction Design of Intelligent Vehicle—A Case Study of HMI of Adaptive Cruise Control. In Proceedings of the Third International Conference, MobiTAS 2021, Held as Part of the 23rd HCI International Conference, HCII 2021, Virtual Event, 24–29 July 2021; pp. 296–314.

- Lee, J.M.; Ju, D.Y. Classification of Human-Vehicle Interaction: User Perspectives on Design. Soc. Behav. Personal. Int. J. 2018, 46, 1057–1070.

- ISO 17287:2003; Road Vehicles—Ergonomic Aspects of Transport Information and Control Systems—Procedure for Assessing Suitability for Use While Driving. International Organization for Standardization: London, UK, 2003.

- Pfleging, B.; Schmidt, A. (Non-) Driving-Related Activities in the Car: Defining Driver Activities for Manual and Automated Driving. In Proceedings of the Workshop on Experiencing Autonomous Vehicles: Crossing the Boundaries between a Drive and a Ride at CHI’15, Seoul, Republic of Korea, 18–23 April 2015.

- Murali, P.K.; Kaboli, M.; Dahiya, R. Intelligent in-vehicle interaction technologies. Adv. Intell. Syst. 2022, 4, 2100122.

- ISO 17488:2016; Road Vehicles—Transport Information and Control Systems—Detection-Response Task (DRT) for Assessing Attentional Effects of Cognitive Load in Driving. International Organization for Standardization: London, UK, 2016.

- ISO/TR 21959-1:2020; Road Vehicles—Human Performance and State in the Context of Automated Driving—Part 1: Common Underlying Concepts. International Organization for Standardization: London, UK, 2020.

- Liu, Y.; Wu, C.; Zhang, H.; Ding, N.; Xiao, Y.; Zhang, Q.; Tian, K. Safety evaluation and prediction of takeover performance in automated driving considering drivers’ cognitive load: A driving simulator study. Transp. Res. Part F Traffic Psychol. Behav. 2024, 103, 35–52.

- Scharfe, M.S.L.; Zeeb, K.; Russwinkel, N. The Impact of Situational Complexity and Familiarity on Takeover Quality in Uncritical Highly Automated Driving Scenarios. Information 2020, 11, 115.

- Johansson, M.; Mullaart Söderholm, M.; Novakazi, F.; Rydström, A. The Decline of User Experience in Transition from Automated Driving to Manual Driving. Information 2021, 12, 126.

- Du, H.; Tao, S.; Feng, X.; Ma, J.; Wang, H. From Passive to Active: Towards Conversational In-Vehicle Navigation Through Large Language Models. In Proceedings of the Design, User Experience, and Usability, HCII 2024, Cham, Switzerland, 15 June 2024; pp. 159–172.

- Chen, Y.; Wang, J.; Jia, F.; Wu, X.; Xiao, Q.; Wang, Z.; You, F. Is control necessary for drivers? Exploring the influence of human–machine collaboration modes on driving behavior and subjective perception under different hazard visibility scenarios. Accid. Anal. Prev. 2025, 217, 108067.

- Karimi, A.; Barbin, A.H.; Hazoor, A.; Marinelli, G.; Bassani, M. Comparative safety analysis of take-over control mechanisms of conditionally automated vehicles. Accid. Anal. Prev. 2025, 217, 108068.

- Salubre, K.J.; Nathan-Roberts, D. Takeover Request Design in Automated Driving: A Systematic Review. Proc. Hum. Factors Ergon. Soc. Annu. Meet. 2021, 65, 868–872.

- Tinga, A.M.; Cleij, D.; Jansen, R.J.; van der Kint, S.; van Nes, N. Human machine interface design for continuous support of mode awareness during automated driving: An online simulation. Transp. Res. Part F Traffic Psychol. Behav. 2022, 87, 102–119.

- Oliveira, L.; Burns, C.; Luton, J.; Iyer, S.; Birrell, S. The influence of system transparency on trust: Evaluating interfaces in a highly automated vehicle. Transp. Res. Part F Traffic Psychol. Behav. 2020, 72, 280–296.

- Stampf, A.; Colley, M.; Rukzio, E. Towards Implicit Interaction in Highly Automated Vehicles-A Systematic Literature Review. Proc. ACM Hum.-Comput. Interact. 2022, 6, 1–21.

- Yang, L.; Yang, T.Y.; Liu, H.; Shan, X.; Brighton, J.; Skrypchuk, L.; Mouzakitis, A.; Zhao, Y. A Refined Non-Driving Activity Classification Using a Two-Stream Convolutional Neural Network. IEEE Sens. J. 2021, 21, 15574–15583.

- Ebel, P.; Christoph, L.; Vogelsang, A. Multitasking While Driving: How Drivers Self-Regulate Their Interaction with In-Vehicle Touchscreens in Automated Driving. Int. J. Hum.–Comput. Interact. 2023, 39, 3162–3179.

- Yang, J.J.; Chen, Y.M.; Xing, S.S.; Qiu, R.Z. A comfort evaluation method based on an intelligent car cockpit. Hum. Factors Ergon. Manuf. Serv. Ind. 2023, 33, 104–117.

- Feng, F.; Yili, L.; Chen, Y. Effects of Quantity and Size of Buttons of In-Vehicle Touch Screen on Drivers’ Eye Glance Behavior. Int. J. Hum.–Comput. Interact. 2018, 34, 1105–1118.

- Jung, S.; Jaehyun, P.; Jungchul, P.; Mungyeong, C.; Taehun, K.; Myungbin, C.; Lee, S. Effect of Touch Button Interface on In-Vehicle Information Systems Usability. Int. J. Hum.–Comput. Interact. 2021, 37, 1404–1422.

- Wu, Z.; Kaiyue, R.; Weixing, D.; Yixi, B.; Jin, T. The Effect of Touchscreen Layout Division on the Usability and Driving Safety of In-Vehicle Information Systems. Int. J. Hum.–Comput. Interact. 2025, 41, 3340–3351.

- Zhong, Q.; Guo, G.; Zhi, J. Chinese handwriting while driving: Effects of handwritten box size on in-vehicle information systems usability and driver distraction. Traffic Inj. Prev. 2023, 24, 26–31.

- Yang, H.; Zhang, J.; Wang, Y.; Jia, R. Exploring relationships between design features and system usability of intelligent car human–machine interface. Robot. Auton. Syst. 2021, 143, 103829.

- Cao, J.; Hsuan-Lin, C.; Yan-Lin, C.; Chih-Hsing, C.; Ying-Yin, H.; Lee, Y.-J. Enhancing in-car interface efficiency: The influence of menu configuration on cognitive load and visuospatial memory. Ergonomics 2025, 1–17.

- Shao, J.; Yang, Z.; Li, Y.; Li, X.; Huang, Y.; Deng, J. Research on Chinese Font Size of Automobile Central Control Interface Driving. In Proceedings of the HCI in Mobility, Transport, and Automotive Systems: 5th International Conference, MobiTAS 2023, Held as Part of the 25th HCI International Conference, HCII 2023, Copenhagen, Denmark, 23–28 July 2023; pp. 210–223.

- Graefe, J.; Rittger, L.; Carollo, G.; Engelhardt, D.; Bengler, K. Evaluating the Potential of Interactivity in Explanations for User-Adaptive In-Vehicle Systems—Insights from a Real-World Driving Study. In Proceedings of the HCI International 2023—Late Breaking Papers: 25th International Conference on Human-Computer Interaction, HCII 2023, Copenhagen, Denmark, 23–28 July 2023; pp. 294–312.

- Lin, M.-C.; Lin, Y.-H.; Lin, C.-C.; Lin, J.-Y. A Study on the Interface Design of a Functional Menu and Icons for In-Vehicle Navigation Systems. In Proceedings of the 16th International Conference, HCI International 2014, Heraklion, Crete, Greece, 22–27 June 2014; pp. 261–272.

- Metz, B.; Schoch, S.; Just, M.; Kuhn, F. How do drivers interact with navigation systems in real life conditions?: Results of a field-operational-test on navigation systems. Transp. Res. Part F Traffic Psychol. Behav. 2014, 24, 146–157.

- Knapper, A.; Nes, N.V.; Christoph, M.; Hagenzieker, M.; Brookhuis, K. The use of navigation systems in naturalistic driving. Traffic Inj. Prev. 2016, 17, 264–270.

- Yared, T.; Patterson, P. The impact of navigation system display size and environmental illumination on young driver mental workload. Transp. Res. Part F Traffic Psychol. Behav. 2020, 74, 330–344. [Google Scholar] [CrossRef]

- Ro, S.; Van Nguyen, H.; Jung, W.; Pae, Y.W.; Munson, J.P.; Woo, J.; Yu, S.; Lee, K. A usability evaluation on the XVC framework for in-vehicle user interfaces. IEICE Trans. Inf. Syst. 2010, E93-D, 3321–3330. [Google Scholar] [CrossRef]

- Amditis, A.; Pagle, K.; Joshi, S.; Bekiaris, E. Driver–Vehicle–Environment monitoring for on-board driver support systems: Lessons learned from design and implementation. Appl. Ergon. 2010, 41, 225–235. [Google Scholar] [CrossRef]

- Bian, Y.; Zhang, X.; Wu, Y.; Zhao, X.; Liu, H.; Su, Y. Influence of prompt timing and messages of an audio navigation system on driver behavior on an urban expressway with five exits. Accid. Anal. Prev. 2021, 157, 106155. [Google Scholar] [CrossRef]

- Kim, J.C.; Laine, T.H.; Åhlund, C. Multimodal Interaction Systems Based on Internet of Things and Augmented Reality: A Systematic Literature Review. Appl. Sci. 2021, 11, 1738. [Google Scholar] [CrossRef]

- Turk, M. Multimodal interaction: A review. Pattern Recognit. Lett. 2014, 36, 189–195. [Google Scholar] [CrossRef]

- ISO 9241-112:2017; Ergonomics of Human-System Interaction—Part 112: Principles for the Presentation of Information. International Organization for Standardization: London, UK, 2017.

- Tan, Z.; Wang, J.; Dai, N.; Zhang, R. Qualitative Research of the Multimodal In-Vehicle Interaction Systems Latency Perception. Proc. ACM Hum.-Comput. Interact. 2025, 9, 1–25. [Google Scholar] [CrossRef]

- Tan, J.; He, W. The Application Value of Human-Vehicle Interaction Theory in Intelligent Cockpit Design. Front. Bus. Econ. Manag. 2024, 13, 174–177. [Google Scholar] [CrossRef]

- Meena, Y.K.; Arya, K.V. Multimodal interaction and IoT applications. Multimed. Tools Appl. 2023, 82, 4781–4785. [Google Scholar] [CrossRef]

- Manawadu, U.E.; Kamezaki, M.; Ishikawa, M.; Kawano, T.; Sugano, S. A multimodal human-machine interface enabling situation-adaptive control inputs for highly automated vehicles. In Proceedings of the 2017 IEEE Intelligent Vehicles Symposium (IV), Los Angeles, CA, USA, 11–14 June 2017; pp. 1195–1200. [Google Scholar]

- Jin, L.; Liu, X.; Guo, B.; Han, Z.; Wang, Y.; Cao, Y.; Yang, X.; Shi, J. Impact of non-driving related task types, request modalities, and automation on driver takeover: A meta-analysis. Saf. Sci. 2025, 181, 106704. [Google Scholar] [CrossRef]

- Hong, J.; Kim, S.; Jeon, G.; Boo, S.; Jo, J.; Park, J.; Jung, S.; Park, J.; Lee, S. A Preliminary Study of Multimodal Feedback Interfaces in Takeover Transition of Semi-Autonomous Vehicles. In Proceedings of the Adjunct Proceedings of the 14th International Conference on Automotive User Interfaces and Interactive Vehicular Applications, Seoul, Republic of Korea, 17–20 September 2022; pp. 108–113. [Google Scholar]

- Gruden, T.; Tomažič, S.; Sodnik, J.; Jakus, G. A user study of directional tactile and auditory user interfaces for take-over requests in conditionally automated vehicles. Accid. Anal. Prev. 2022, 174, 106766. [Google Scholar] [CrossRef]

- Hong, S.; Yang, J.H. Effect of multimodal takeover request issued through A-pillar LED light, earcon, speech message, and haptic seat in conditionally automated driving. Transp. Res. Part F Traffic Psychol. Behav. 2022, 89, 488–500. [Google Scholar] [CrossRef]

- Bazilinskyy, P.; Petermeijer, S.M.; Petrovych, V.; Dodou, D.; de Winter, J.C.F. Take-over requests in highly automated driving: A crowdsourcing survey on auditory, vibrotactile, and visual displays. Transp. Res. Part F Traffic Psychol. Behav. 2018, 56, 82–98. [Google Scholar] [CrossRef]

- Li, J.; Weihua, Z.; Zhongxiang, F.; Liyang, W.; Tang, T.; Gu, T. Effects of Head-Up Display Information Layout Design on Driver Performance: Driving Simulator Studies. Int. J. Hum.–Comput. Interact. 2024, 41, 8829–8845. [Google Scholar] [CrossRef]

- Nees, M.A.; Helbein, B.; Porter, A. Speech Auditory Alerts Promote Memory for Alerted Events in a Video-Simulated Self-Driving Car Ride. Hum. Factors 2016, 58, 416–426. [Google Scholar] [CrossRef]

- Chang, W.; Wonil, H.; Ji, Y.G. Haptic Seat Interfaces for Driver Information and Warning Systems. Int. J. Hum.–Comput. Interact. 2011, 27, 1119–1132. [Google Scholar] [CrossRef]

- Wan, J.; Wu, C. The Effects of Vibration Patterns of Take-Over Request and Non-Driving Tasks on Taking-Over Control of Automated Vehicles. Int. J. Hum.–Comput. Interact. 2018, 34, 987–998. [Google Scholar] [CrossRef]

- García-Díaz, J.M.; García-Ruiz, M.A.; Aquino-Santos, R.; Edwards-Block, A. Evaluation of a Driving Simulator with a Visual and Auditory Interface. In Proceedings of the 6th Latin American Conference, CLIHC 2013, Carrillo, Costa Rica, 2–6 December 2013; pp. 131–139. [Google Scholar]

- Löcken, A.; Frison, A.-K.; Fahn, V.; Kreppold, D.; Götz, M.; Riener, A. Increasing User Experience and Trust in Automated Vehicles via an Ambient Light Display. In Proceedings of the 22nd International Conference on Human-Computer Interaction with Mobile Devices and Services, Oldenburg, Germany, 5–8 October 2020; p. 38. [Google Scholar]

- Peintner, J.; Manger, C.; Riener, A. Communication of Uncertainty Information in Cooperative, Automated Driving: A Comparative Study of Different Modalities. In Proceedings of the 15th International Conference on Automotive User Interfaces and Interactive Vehicular Applications, Ingolstadt, Germany, 18–22 September 2023; pp. 322–332. [Google Scholar]

- Kuhlmann, K.; Borowy, M.; Vollrath, M. Illuminating Safety: Can Light Cues Enhance Situation Awareness During Take-Overs in Conditional Automated Driving? In Proceedings of the Adjunct Proceedings of the 16th International Conference on Automotive User Interfaces and Interactive Vehicular Applications, Stanford, CA, USA, 22–25 September 2024; pp. 123–127. [Google Scholar]

- Lim, C.; Villarreal, R.T.; Nasir, M.; Yu-Chin, C.; Yu, D. REViVe: Development of a reactive environmental vigilance in-vehicle system to mitigate drowsiness-induced inattention during automated driving. Accid. Anal. Prev. 2025, 217, 108045. [Google Scholar] [CrossRef]

- Murata, A.; Kohno, Y. Effectiveness of replacement of automotive side mirrors by in-vehicle LCD–Effect of location and size of LCD on safety and efficiency–. Int. J. Ind. Ergon. 2018, 66, 177–186. [Google Scholar] [CrossRef]

- Cao, Y.; Lingyu, L.; Jiehao, Y.; Jeon, M. Head-up Displays Improve Drivers’ Performance and Subjective Perceptions with the In-Vehicle Gesture Interaction System. Int. J. Hum.–Comput. Interact. 2025, 41, 6921–6935. [Google Scholar] [CrossRef]

- Xu, W.; Jingyi, Z.; Jiateng, L.; Xu, Z.; Hongwei, H.; Zaiyan, G.; Ma, J. Effects of Smart Cockpit Steering Wheel Control Gestures on List Selection Tasks. Int. J. Hum.–Comput. Interact. 2025, 1–16. [Google Scholar] [CrossRef]

- Brandenburg, S.; Epple, S. Drivers’ Individual Design Preferences of Takeover Requests in Highly Automated Driving. i-com 2019, 18, 167–178. [Google Scholar] [CrossRef]

- Pakdamanian, E.; Sheng, S.; Baee, S.; Heo, S.; Kraus, S.; Feng, L. DeepTake: Prediction of Driver Takeover Behavior using Multimodal Data. In Proceedings of the 2021 CHI Conference on Human Factors in Computing Systems, Yokohama, Japan, 8–13 May 2021; p. 103. [Google Scholar]

- Capallera, M.; Meteier, Q.; Salis, E.D.; Widmer, M.; Angelini, L.; Carrino, S.; Sonderegger, A.; Khaled, O.A.; Mugellini, E. A Contextual Multimodal System for Increasing Situation Awareness and Takeover Quality in Conditionally Automated Driving. IEEE Access 2023, 11, 5746–5771. [Google Scholar] [CrossRef]

- Liu, W.; Qingkun, L.; Zhenyuan, W.; Wenjun, W.; Chao, Z.; Cheng, B. A Literature Review on Additional Semantic Information Conveyed from Driving Automation Systems to Drivers through Advanced In-Vehicle HMI Just Before, During, and Right After Takeover Request. Int. J. Hum.–Comput. Interact. 2023, 39, 1995–2015. [Google Scholar] [CrossRef]

- Heng, Z.; Sun, Y.; Boyang, Z.; Che, Y. The Verification of Human-Machine Interaction Design of Intelligent Connected Vehicles Based on Augmented Reality. In Proceedings of the 13th International Conference on Applied Human Factors and Ergonomics (AHFE 2022), New York, NY, USA, 24–28 July 2022. [Google Scholar]

- Kun, A.L. Human-Machine Interaction for Vehicles: Review and Outlook. Found. Trends® Hum.–Comput. Interact. 2018, 11, 201–293. [Google Scholar] [CrossRef]

- Ge, X.; Li, X.; Wang, Y. Methodologies for Evaluating and Optimizing Multimodal Human-Machine-Interface of Autonomous Vehicles; 2018-01-0494; SAE International: Warrendale, PA, USA, 2018; p. 12. [Google Scholar]

- Detjen, H.; Faltaous, S.; Pfleging, B.; Geisler, S.; Schneegass, S. How to increase automated vehicles’ acceptance through in-vehicle interaction design: A review. Int. J. Hum.–Comput. Interact. 2021, 37, 308–330. [Google Scholar] [CrossRef]

- Lu, Z.; Zhang, B.; Feldhütter, A.; Happee, R.; Martens, M.; De Winter, J.C.F. Beyond mere take-over requests: The effects of monitoring requests on driver attention, take-over performance, and acceptance. Transp. Res. Part F Traffic Psychol. Behav. 2019, 63, 22–37. [Google Scholar] [CrossRef]

- Gerber, M.A.; Schroeter, R.; Ho, B. A human factors perspective on how to keep SAE Level 3 conditional automated driving safe. Transp. Res. Interdiscip. Perspect. 2023, 22, 100959. [Google Scholar] [CrossRef]

- Schnelle-Walka, D.; McGee, D.R.; Pfleging, B. Multimodal interaction in automotive applications. J. Multimodal User Interfaces 2019, 13, 53–54. [Google Scholar] [CrossRef]

- Ataya, A.; Kim, W.; Elsharkawy, A.; Kim, S. Gaze-Head Input: Examining Potential Interaction with Immediate Experience Sampling in an Autonomous Vehicle. Appl. Sci. 2020, 10, 9011. [Google Scholar] [CrossRef]

- Roider, F.; Rümelin, S.; Pfleging, B.; Gross, T. The Effects of Situational Demands on Gaze, Speech and Gesture Input in the Vehicle. In Proceedings of the AutomotiveUI ’17: Proceedings of the 9th International Conference on Automotive User Interfaces and Interactive Vehicular Applications, Oldenburg, Germany, 24–27 September 2017; pp. 94–102. [Google Scholar]

- Ma, J.; Zuo, Y.; Gong, Z.; Meng, Y. Design methodology and evaluation of multimodal interaction for enhancing driving safety and experience in secondary tasks of IVIS. Displays 2025, 87, 102991. [Google Scholar] [CrossRef]

- Ataya, A.; Kim, W.; Elsharkawy, A.; Kim, S. How to Interact with a Fully Autonomous Vehicle: Naturalistic Ways for Drivers to Intervene in the Vehicle System While Performing Non-Driving Related Tasks. Sensors 2021, 21, 2206. [Google Scholar] [CrossRef]

- Farooq, A.; Nukarinen, T.; Sand, A.; Venesvirta, H.; Spakov, O.; Surakka, V. Where’s My Cellphone: Non-contact based Hand-Gestures and Ultrasound haptic feedback for Secondary Task Interaction while Driving. In Proceedings of the 2021 IEEE Sensors, Sydney, Australia, 31 October 2021; pp. 1–4. [Google Scholar]

- Schnelle-Walka, D.; Radomski, S. Automotive multimodal human-machine interface. In The Handbook of Multimodal-Multisensor Interfaces: Language Processing, Software, Commercialization, and Emerging Directions; Oviatt, S., Schuller, B., Cohen, P.R., Sonntag, D., Potamianos, G., Krüger, A., Eds.; Association for Computing Machinery and Morgan & Claypool: San Rafael, CA, USA, 2019; pp. 477–522. [Google Scholar]

- Yin, H.; Li, R.; Victor Chen, Y. From hardware to software integration: A comparative study of usability and safety in vehicle interaction modes. Displays 2024, 85, 102869. [Google Scholar] [CrossRef]

- Wang, J.; Xue, J.; Fu, T.; Gong, H.; Ye, L.; Li, C. Risk quantification and prediction of non-driving-related tasks on drivers’ critical intervention behavior in autonomous driving scenarios. Int. J. Transp. Sci. Technol. 2024, 15, 1–23. [Google Scholar] [CrossRef]

- Choi, I.-K.; Kim, W.-S.; Lee, D.; Kwon, D.-S. A weighted QFD-based usability evaluation method for elderly in smart cars. Int. J. Hum.–Comput. Interact. 2015, 31, 703–716. [Google Scholar] [CrossRef]

- Harvey, C.; Stanton, N.A.; Pickering, C.A.; McDonald, M.; Zheng, P. To twist or poke? A method for identifying usability issues with the rotary controller and touch screen for control of in-vehicle information systems. Ergonomics 2011, 54, 609–625. [Google Scholar] [CrossRef]

- Oliveira, L.; Luton, J.; Iyer, S.; Burns, C.; Mouzakitis, A.; Jennings, P.; Birrell, S. Evaluating How Interfaces Influence the User Interaction with Fully Autonomous Vehicles. In Proceedings of the AutomotiveUI ’18: Proceedings of the 10th International Conference on Automotive User Interfaces and Interactive Vehicular Applications, Toronto, ON, Canada, 23–25 September 2018; pp. 320–331. [Google Scholar]

- Morgan, P.L.; Voinescu, A.; Alford, C.; Caleb-Solly, P. Exploring the Usability of a Connected Autonomous Vehicle Human Machine Interface Designed for Older Adults. In Proceedings of the AHFE 2018 International Conference on Human Factors in Transportation, Orlando, FL, USA, 21–25 July 2019; pp. 591–603. [Google Scholar]

- Naujoks, F.; Hergeth, S.; Keinath, A.; Schömig, N.; Wiedemann, K. Editorial for Special Issue: Test and Evaluation Methods for Human-Machine Interfaces of Automated Vehicles. Information 2020, 11, 403. [Google Scholar] [CrossRef]

- He, B.; Wang, X.; Luo, C.; Guo, X. Bi-Directional Transparency Interaction for L3 Co-Driving System. In Proceedings of the 2024 5th International Seminar on Artificial Intelligence, Networking and Information Technology (AINIT), Nanjing, China, 29–31 March 2024; pp. 308–315. [Google Scholar]

- Li, M.; Feng, Z.; Zhang, W.; Wang, L.; Wei, L.; Wang, C. How much situation awareness does the driver have when driving autonomously? A study based on driver attention allocation. Transp. Res. Part C Emerg. Technol. 2023, 156, 104324. [Google Scholar] [CrossRef]

- Tan, H.; Jiahao, S.; Wang, W.; Zhu, C. User Experience & Usability of Driving: A Bibliometric Analysis of 2000–2019. Int. J. Hum.–Comput. Interact. 2021, 37, 297–307. [Google Scholar] [CrossRef]

- Forster, Y.; Hergeth, S.; Naujoks, F.; Krems, J.; Keinath, A. Tell Them How They Did: Feedback on Operator Performance Helps Calibrate Perceived Ease of Use in Automated Driving. Multimodal Technol. Interact. 2019, 3, 29. [Google Scholar] [CrossRef]

- Albers, D.; Grabbe, N.; Janetzko, D.; Bengler, K. Saluton! How do you evaluate usability?—Virtual Workshop on Usability Assessments of Automated Driving Systems. In Proceedings of the AutomotiveUI ’20: 12th International Conference on Automotive User Interfaces and Interactive Vehicular Applications, Virtual Event, DC, USA, 21–22 September 2020; pp. 109–112. [Google Scholar]

- Mandujano-Granillo, J.A.; Candela-Leal, M.O.; Ortiz-Vazquez, J.J.; Ramirez-Moreno, M.A.; Tudon-Martinez, J.C.; Felix-Herran, L.C.; Galvan-Galvan, A.; Lozoya-Santos, J.D.J. Human–Machine Interfaces: A Review for Autonomous Electric Vehicles. IEEE Access 2024, 12, 121635–121658. [Google Scholar] [CrossRef]

- Hou, G.; Qi, D.; Wang, H. The Effect of Dynamic Effects and Color Transparency of AR-HUD Navigation Graphics on Driving Behavior Regarding Inattentional Blindness. Int. J. Hum.–Comput. Interact. 2025, 41, 7581–7592. [Google Scholar] [CrossRef]

- Winkler, M.; Soleimani, M. A Review of Augmented Reality Heads Up Display in Vehicles: Effectiveness, Application, and Safety. Int. J. Hum.–Comput. Interact. 2025, 1–16. [Google Scholar] [CrossRef]

- Adnan Yusuf, S.; Khan, A.; Souissi, R. Vehicle-to-everything (V2X) in the autonomous vehicles domain—A technical review of communication, sensor, and AI technologies for road user safety. Transp. Res. Interdiscip. Perspect. 2024, 23, 100980. [Google Scholar] [CrossRef]

- Yee, S.; Nguyen, L.; Green, P.; Oberholtzer, J.; Miller, B. Visual, Auditory, Cognitive, and Psychomotor Demands of Real in-Vehicle Tasks; UMTRI-2006-20; University of Michigan, Ann Arbor, Transportation Research Institute: Ann Arbor, MI, USA, 2007. [Google Scholar]

- Wickens, C.D. Multiple resources and performance prediction. Theor. Issues Ergon. Sci. 2002, 3, 159–177. [Google Scholar] [CrossRef]

- Baber, C.; Mellor, B. Using critical path analysis to model multimodal human–computer interaction. Int. J. Hum.-Comput. Stud. 2001, 54, 613–636. [Google Scholar] [CrossRef]

- Oviatt, S. Ten myths of multimodal interaction. Commun. ACM 1999, 42, 74–81. [Google Scholar] [CrossRef]

- Wechsung, I. An Evaluation Framework for Multimodal Interaction—Determining Quality Aspects and Modality Choice; Springer International Publishing: Berlin/Heidelberg, Germany, 2014. [Google Scholar]

| Group A | Group B | Group C ¹ |
|---|---|---|
| Usability | Evaluation, Assessment | Intelligent Vehicle, Intelligent Connected Vehicle, Smart Vehicle; Intelligent Driving, Smart Driving; Intelligent Cockpit, Smart Cockpit; Intelligent Car, Smart Car, Intelligent Connected Car; Autonomous Vehicle, Automated Vehicle, Automatic Vehicle, Self-Driving Vehicle; Autonomous Driving, Automated Driving, Automatic Driving; Autonomous Car, Automated Car, Automatic Car, Self-Driving Car |
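
The three term groups in the table above can be combined mechanically into a database search string. The following is a minimal sketch, assuming that terms within a group are joined with OR and that the groups are joined with AND. That combination rule, the helper name `build_query`, and the quoting style are illustrative assumptions rather than the authors' documented search string, and only a few Group C terms are shown to keep the example short.

```python
# Hypothetical helper (not from the source): builds one Boolean search string.
# Assumption: terms inside a group are ORed, and the groups are ANDed.

def build_query(groups):
    """Join each group's terms with OR, then join the groups with AND."""
    clauses = []
    for terms in groups:
        quoted = " OR ".join(f'"{term}"' for term in terms)
        clauses.append(f"({quoted})")
    return " AND ".join(clauses)


group_a = ["Usability"]
group_b = ["Evaluation", "Assessment"]
# Only a subset of the Group C terms is listed here for brevity.
group_c = ["Intelligent Vehicle", "Smart Cockpit", "Self-Driving Car"]

query = build_query([group_a, group_b, group_c])
print(query)
```

Individual databases differ in their field tags and phrase syntax, so a string built this way would still need adapting to each search interface.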

| No. | Item ¹ | Inclusion and Exclusion Criteria ² |
|---|---|---|
| 1 | Language | Inclusion: Only English-language literature was included. Exclusion: Non-English literature was excluded. |
| 2 | Type | Inclusion: Only journal articles and conference papers were included. Exclusion: Literature from non-academic sources (not peer-reviewed) such as market reports, news articles, white papers, and working papers was excluded. Additionally, books and dissertations were also excluded; although they may contain relevant research, their comprehensive content makes them inconvenient for rapid reading and analysis. |
| 3 | Permission | Inclusion: Only literature with accessible full text was included. Exclusion: Literature with copyright restrictions was excluded. |
| 4 | Domain | Inclusion: Literature focusing on “HMI (human-machine interaction)” as the research subject was included. Exclusion: Literature outside the “HMI (human-machine interaction)” research field was excluded. |
| 5 | Subject | Inclusion: Literature focusing on in-vehicle interaction in passenger vehicles as the research subject was included. Exclusion: Literature discussing topics such as public transportation vehicles, traffic accidents, traffic network systems, automated driving roads, and intelligent transportation was excluded. |
| 6 | Divergence | Temporarily include disputed literature: For research literature for which relevance cannot be clearly determined, it is recommended to retain it initially and make decisions after subsequent discussion, thereby avoiding potential selection bias. |
| 7 | Redundancy | Exclusion of duplicate literature: Before conducting full-text review, duplicate literature should be excluded. Duplication here not only refers to general duplication but also includes literature with overlapping content published by the same author, even when these publications use different titles. |
| 8 | Content | Inclusion: This study primarily included applied research literature focusing on HMI design practice evaluation, for example, feasibility validation of novel interaction design solutions, comparative evaluation of multiple interaction design solutions, and user interaction performance and experience studies under specific driving scenarios. In other words, this study prioritized research cases related to actual project development. Exclusion: This study excluded fundamental research literature primarily focused on theoretical research, such as studies aimed at constructing theoretical frameworks, exploring basic principles and patterns of human–machine interaction, validating psychological models, constructing behavioral models, and investigating related influencing factors. |
| 9 | Method | Inclusion: Literature that conducted empirical analysis was included. Exclusion: Articles that were narrative reviews, comparative studies, survey research, or other types of reviews were excluded. Additionally, studies that only discussed interaction design concepts or user interaction experiences were also excluded. |
| 10 | Quality | Inclusion: Literature with relatively well-designed research methodology was included. Exclusion: Research literature that did not provide clear and detailed usability evaluation methods was excluded. |

| Data Entry | Extraction Items | Terminology Classification: Primary | Terminology Classification: Secondary |
|---|---|---|---|
| Data 1 (for addressing Questions 1–3) | Automation Level | Driving Assistance (L0–L2) | Level 0: no driving automation (emergency assistance); level 1: driving assistance (partial driving assistance); level 2: partial driving automation (combined driving assistance) |
| | | Conditional Driving Automation (L3) | Level 3: conditional driving automation |
| | | Advanced Driving Automation (L4–L5) | Level 4: high driving automation; level 5: full driving automation |
| Data 2 (for addressing Questions 1–3) | Task Scenario | Primary Driving Task | Autonomous driving task; driving takeover task; advanced driver assistance task |
| | | Secondary Driving Task | Vehicle navigation task; instrument cluster system; driving condition prompt system; warning system; head-up display system; vehicle imaging system |
| | | Non-driving Task | Virtual intelligent voice assistant; function-setting task; vehicle entertainment task; vehicle intelligent robot; communication and social task; other tasks (some task scenarios that are difficult to categorize, such as interface layout, font design, screen size, personalized interface design, etc., also called edge tasks) |
| Data 3 (for addressing Questions 1–3) | Interaction Modality ¹ | Input Modality | In-touch screen; in-steering wheel key; in-central control key; in-gestures; in-speech; in-multimodal |
| | | Output Modality | Out-indicating light; out-acoustic; out-speech; out-central control interface; out-dashboard interface; out-HUD interface; out-haptic vibration; out-haptic temperature feedback; out-olfactory; out-electronic rearview mirror monitoring; out-robot facial expression; out-other interface (some interaction modalities that are difficult to categorize or overly complex, such as head-mounted displays); out-multimodal |
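
A two-level coding scheme like the one in the table above maps naturally onto a lookup structure that can be used to tally coded studies by primary category. The sketch below is a hypothetical illustration for the task-scenario dimension only. The dictionary and function names are assumptions, the sample records are invented purely to exercise the code, and none of the resulting numbers are findings from the review.

```python
from collections import Counter

# Primary -> secondary task-scenario categories, transcribed from the coding table.
TASK_SCENARIOS = {
    "Primary Driving Task": [
        "autonomous driving task",
        "driving takeover task",
        "advanced driver assistance task",
    ],
    "Secondary Driving Task": [
        "vehicle navigation task",
        "instrument cluster system",
        "driving condition prompt system",
        "warning system",
        "head-up display system",
        "vehicle imaging system",
    ],
    "Non-driving Task": [
        "virtual intelligent voice assistant",
        "function-setting task",
        "vehicle entertainment task",
        "vehicle intelligent robot",
        "communication and social task",
        "other tasks",
    ],
}

# Reverse lookup: secondary category -> primary category.
SECONDARY_TO_PRIMARY = {
    secondary: primary
    for primary, secondaries in TASK_SCENARIOS.items()
    for secondary in secondaries
}


def tally_primary(coded_studies):
    """Return {primary category: (count, percentage share)} for coded studies."""
    counts = Counter(SECONDARY_TO_PRIMARY[code] for code in coded_studies)
    total = sum(counts.values())
    return {cat: (n, round(100 * n / total, 2)) for cat, n in counts.items()}


# Invented sample codes, one secondary category per study (illustration only).
sample = [
    "driving takeover task",
    "driving takeover task",
    "warning system",
    "vehicle navigation task",
]
result = tally_primary(sample)
print(result)
```

A study coded with a label that is not in the scheme raises a `KeyError` here, which is one simple way to surface coding inconsistencies before counting.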

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2025 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (https://creativecommons.org/licenses/by/4.0/).

Share and Cite

Zhou, D.; Yuan, X.; Sun, Y.; Wu, Y. Current Status and Challenges in Usability Evaluation Research on Human–Machine Interaction in Intelligent Vehicles: A Systematic Review. Appl. Sci. 2025, 15, 9384. https://doi.org/10.3390/app15179384
