Ultrasonic Nondestructive Testing Image Enhancement Model Based on Super-Resolution Imaging

Abstract

1. Introduction

- (1)

- The deep learning super-resolution model is applied to the field of laser ultrasound image signal enhancement, providing a new solution for enhancing laser ultrasound images.

- (2)

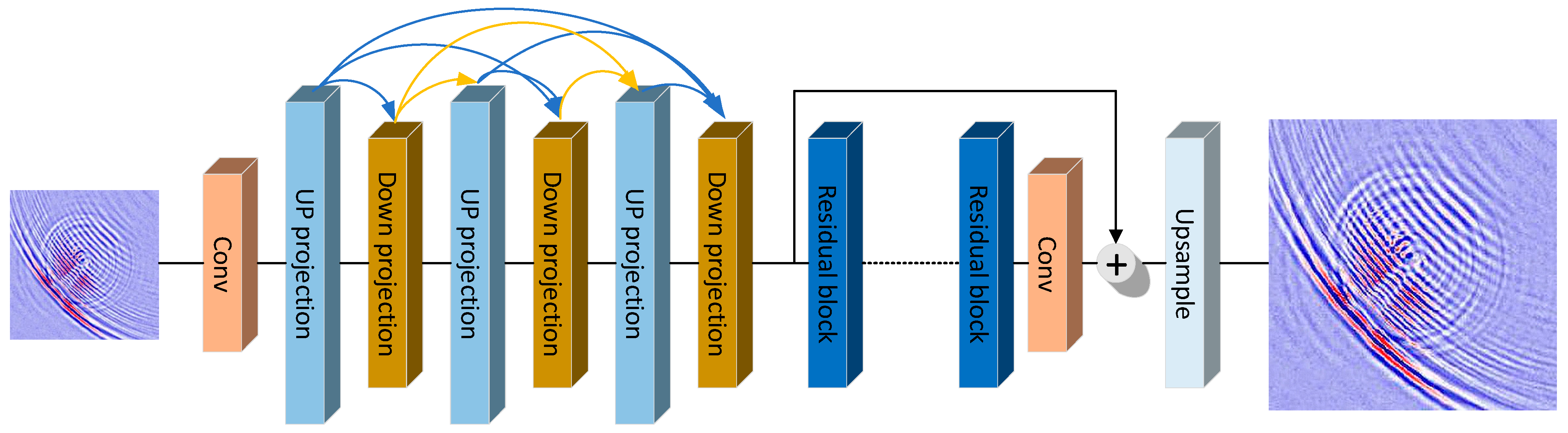

- A new end-to-end ultrasonic image super-resolution model is proposed, which combines up and down projection layers, deep residual network, and Charbonnier loss to solve the problem of ultrasonic image super-resolution data enhancement. The model does not require manual feature extraction or annotation of ultrasound images based on a large amount of prior knowledge.

- (3)

- Super-resolution data enhancement of ultrasound images can be directly realized without any modification to the existing laser ultrasound equipment. Compared with the existing method of increasing image resolution through hardware, the cost of industrial applications is reduced.

- (4)

- The model was compared with the classic super-resolution imaging model in the actual ultrasonic nondestructive testing data set under the indicators of peak signal-to-noise ratio (PSNR) and structural similarity index measurement (SSIM). The model showed better imaging results and provided a better detection signal for subsequent defect identification.

2. Theory and Method

2.1. Degradation Model

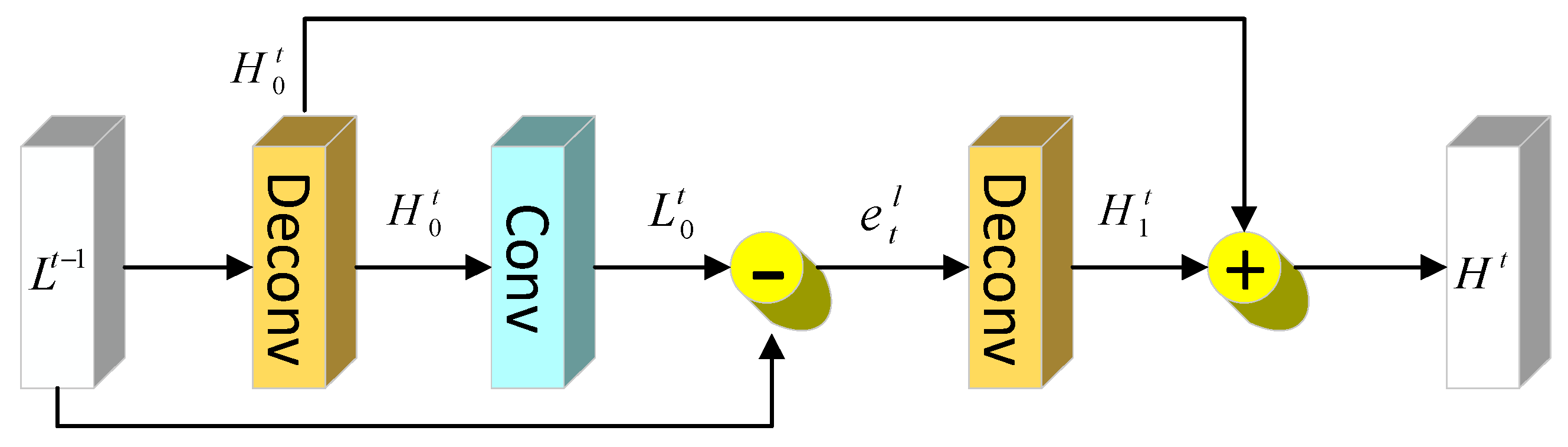
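As a minimal sketch of what a degradation model of this kind typically looks like, the low-resolution image is often written as `y = downsample(blur(x)) + n`, where `x` is the high-resolution image and `n` is additive noise. The code below assumes that standard form; the box blur kernel, the scale factor, the noise level, and the function name `degrade` are illustrative assumptions, not values or names taken from the experiments.

```python
import numpy as np

def degrade(hr, scale=2, blur_size=3, noise_std=0.01, seed=0):
    """Blur a 2D image with a box kernel, subsample it, and add Gaussian noise.

    All parameter defaults are hypothetical; blur_size is assumed to be odd.
    """
    rng = np.random.default_rng(seed)
    hr = np.asarray(hr, dtype=np.float64)
    pad = blur_size // 2
    padded = np.pad(hr, pad, mode="edge")

    # Box blur: average the blur_size x blur_size neighbourhood of each pixel.
    blurred = np.zeros_like(hr)
    for dy in range(blur_size):
        for dx in range(blur_size):
            blurred += padded[dy:dy + hr.shape[0], dx:dx + hr.shape[1]]
    blurred /= blur_size ** 2

    # Keep every `scale`-th pixel, then add zero-mean Gaussian noise.
    lr = blurred[::scale, ::scale]
    return lr + rng.normal(0.0, noise_std, lr.shape)
```

A super-resolution network is then trained to map the output of such a degradation back to the original high-resolution image.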

2.2. Up and Down Projection Block

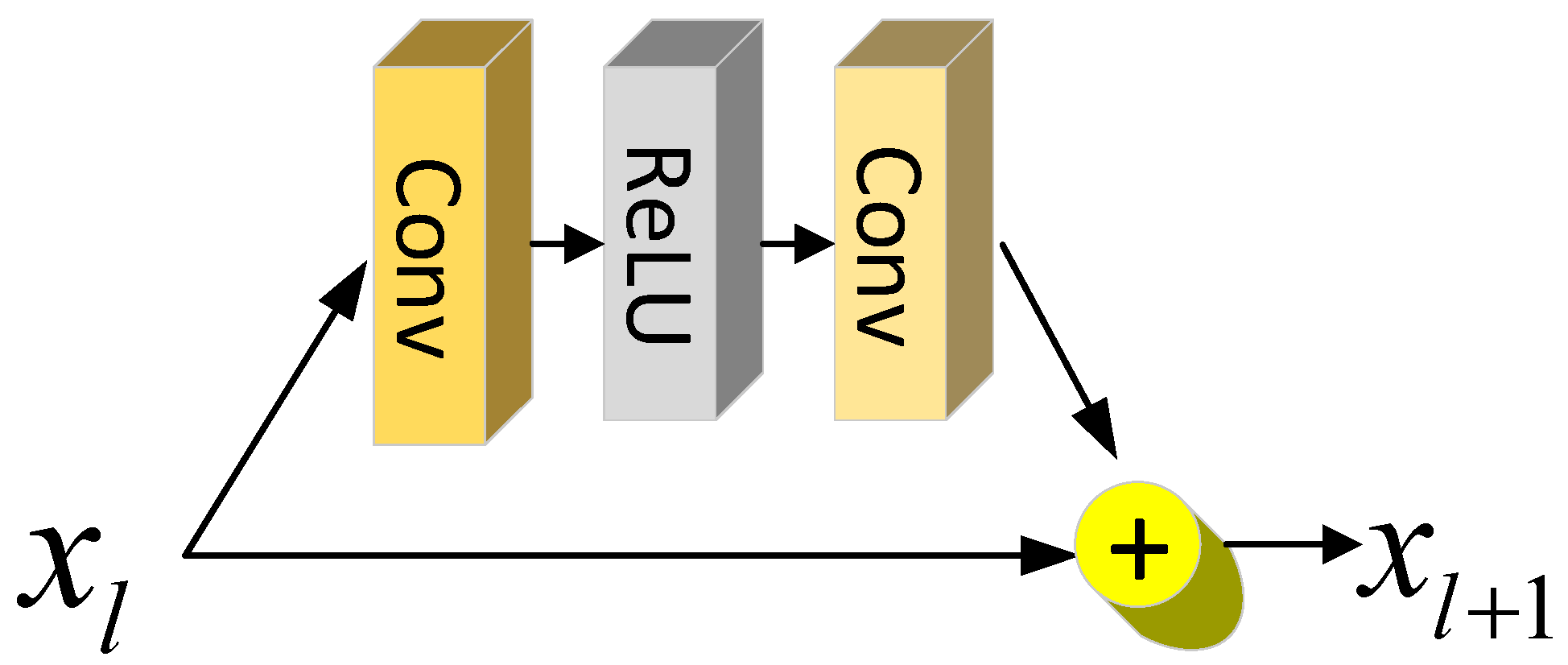

2.3. Residual Block

2.4. Charbonnier Loss
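The Charbonnier penalty is commonly defined as `sqrt((pred - target)^2 + eps^2)`, a smooth approximation of the L1 loss that stays differentiable at zero. The sketch below assumes this common form averaged over all pixels; the value of `eps` and the function name are assumptions, since the setting used for the proposed model is not stated here.

```python
import numpy as np

def charbonnier_loss(pred, target, eps=1e-3):
    """Mean Charbonnier penalty between two arrays of equal shape.

    eps is a small constant (hypothetical default) that keeps the
    gradient finite where pred == target.
    """
    diff = np.asarray(pred, dtype=np.float64) - np.asarray(target, dtype=np.float64)
    return float(np.mean(np.sqrt(diff * diff + eps * eps)))
```

For large errors the penalty behaves like the absolute error, and for identical inputs it reduces to `eps` rather than zero.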

2.5. Laser Ultrasound Super-Resolution Network

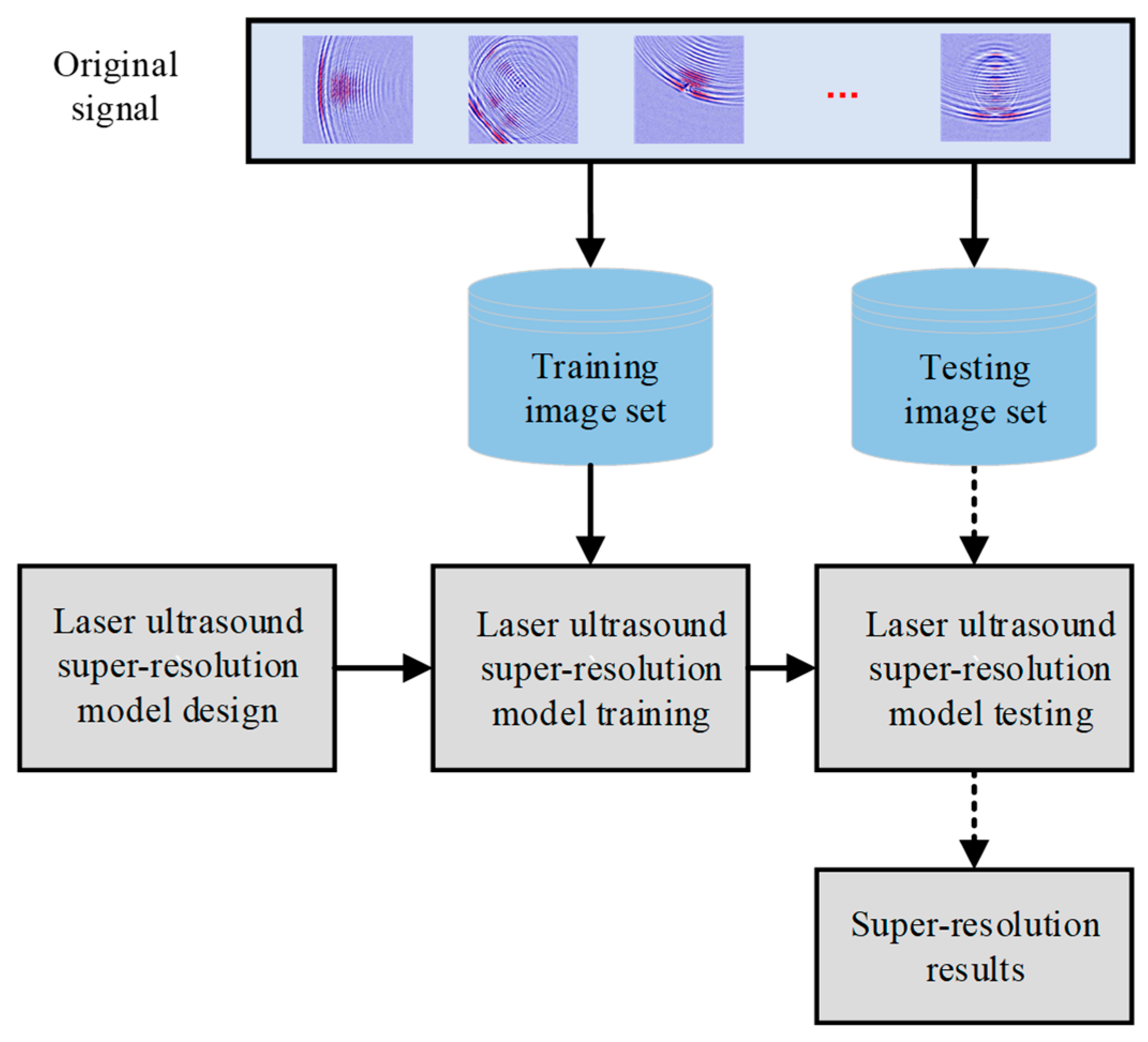

- (1) Acquire the laser ultrasound image data set from the material under test using the laser ultrasound equipment.

- (2) Divide the data set into two parts according to a chosen ratio: the training set, used to train the super-resolution model, and the test set, used to evaluate its performance.

- (3) Design the ultrasound super-resolution model.

- (4) Train the ultrasound super-resolution model on the training set until a satisfactory result is obtained.

- (5) Input the test set into the trained model to perform super-resolution imaging of the laser ultrasound images.

- (6) Output the super-resolution results.
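Step (2) of the procedure above can be sketched as a shuffled split. This is an illustration only: the 80/20 ratio, the fixed seed, and the function name `split_dataset` are assumptions, because the ratio actually used is not specified here.

```python
import numpy as np

def split_dataset(images, train_ratio=0.8, seed=0):
    """Shuffle a list of images and split it into training and test sets.

    train_ratio is a hypothetical value; the seed makes the split repeatable.
    """
    rng = np.random.default_rng(seed)
    order = rng.permutation(len(images))
    n_train = int(round(train_ratio * len(images)))
    train = [images[i] for i in order[:n_train]]
    test = [images[i] for i in order[n_train:]]
    return train, test
```

Keeping the test set disjoint from the training set ensures that the reported metrics reflect images the model has not seen during training.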

3. Experiments

3.1. Experimental Setup and Test Specimens

3.2. Quantitative Evaluation Metrics

3.2.1. Peak Signal-to-Noise Ratio (PSNR)
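PSNR is conventionally defined as `10 * log10(MAX^2 / MSE)`, where `MAX` is the largest possible pixel value and `MSE` is the mean squared error between the reference and the reconstructed image. The sketch below assumes images scaled to the range [0, 1]; the function name and the `max_value` default are assumptions.

```python
import numpy as np

def psnr(reference, estimate, max_value=1.0):
    """Peak signal-to-noise ratio in dB between two images of equal shape."""
    ref = np.asarray(reference, dtype=np.float64)
    est = np.asarray(estimate, dtype=np.float64)
    mse = np.mean((ref - est) ** 2)
    if mse == 0:
        return float("inf")  # identical images
    return float(10.0 * np.log10(max_value ** 2 / mse))
```

A higher PSNR means a smaller pixel-wise error relative to the dynamic range of the image.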

3.2.2. Structural Similarity Index Measure (SSIM)
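SSIM compares the means, variances, and covariance of two images, with small constants `C1 = (k1 * MAX)^2` and `C2 = (k2 * MAX)^2` for numerical stability. The sketch below is a simplification: it computes a single SSIM value over the whole image, whereas the usual implementation averages SSIM over local sliding windows. The function name and the defaults `k1 = 0.01`, `k2 = 0.03` follow common practice and are assumptions here.

```python
import numpy as np

def ssim_global(x, y, max_value=1.0, k1=0.01, k2=0.03):
    """Single-window (global) SSIM between two images of equal shape."""
    x = np.asarray(x, dtype=np.float64)
    y = np.asarray(y, dtype=np.float64)
    c1 = (k1 * max_value) ** 2
    c2 = (k2 * max_value) ** 2

    mu_x, mu_y = x.mean(), y.mean()
    var_x, var_y = x.var(), y.var()
    cov_xy = np.mean((x - mu_x) * (y - mu_y))

    numerator = (2 * mu_x * mu_y + c1) * (2 * cov_xy + c2)
    denominator = (mu_x ** 2 + mu_y ** 2 + c1) * (var_x + var_y + c2)
    return float(numerator / denominator)
```

The value equals 1 for identical images and decreases as luminance, contrast, or structure diverge.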

4. Results and Discussion

5. Conclusions and Future Work

Author Contributions

Funding

Institutional Review Board Statement

Informed Consent Statement

Data Availability Statement

Conflicts of Interest


| Specimen | Flaw Type | Depth | Transducer Side | Defect Size (mm) |
|---|---|---|---|---|
| 1–3 | Hole | Penetrated | Front | 1/3/5 |
| 4–6 | Hole | 1.5 mm | Front | 1/3/5 |
| 7–9 | Hole | 1.5 mm | Back | 1/3/5 |
| 10–11 | Slit | Penetrated | Front | 5/10 |
| 12–14 | Slit | 1.5 mm | Front | 3/5/10 |
| 15–17 | Slit | 1.5 mm | Back | 3/5/10 |

| Method | PSNR (dB) | SSIM |
|---|---|---|
| EDSR | 29.371 | 0.903 |
| DBPN | 30.681 | 0.924 |
| MZSR | 27.663 | 0.877 |
| Ours | 33.122 | 0.955 |
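The margins in the comparison table above can be made explicit with a short calculation. The numbers are copied from the table; the dictionary layout and the helper name `gains_over` are illustrative assumptions.

```python
# (PSNR, SSIM) per method, copied from the comparison table.
results = {
    "EDSR": (29.371, 0.903),
    "DBPN": (30.681, 0.924),
    "MZSR": (27.663, 0.877),
    "Ours": (33.122, 0.955),
}

def gains_over(baseline, method="Ours", table=results):
    """Return (PSNR gain, SSIM gain) of `method` over `baseline`."""
    psnr_gain = table[method][0] - table[baseline][0]
    ssim_gain = table[method][1] - table[baseline][1]
    return round(psnr_gain, 3), round(ssim_gain, 3)

for name in ("EDSR", "DBPN", "MZSR"):
    print(name, gains_over(name))
```

Against the strongest baseline in the table, DBPN, the proposed model gains 2.441 in PSNR and 0.031 in SSIM; the gains over EDSR are 3.751 and 0.052, and over MZSR 5.459 and 0.078.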


© 2025 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (https://creativecommons.org/licenses/by/4.0/).


Zhu, J.; Wang, G.; Luo, K.; Zhang, X. Ultrasonic Nondestructive Testing Image Enhancement Model Based on Super-Resolution Imaging. Appl. Sci. 2025, 15, 8339. https://doi.org/10.3390/app15158339
