Intelligent Time Delay Control of Telepresence Robots Using Novel Deep Reinforcement Learning Algorithm to Interact with Patients

Abstract

1. Introduction

2. Related Works

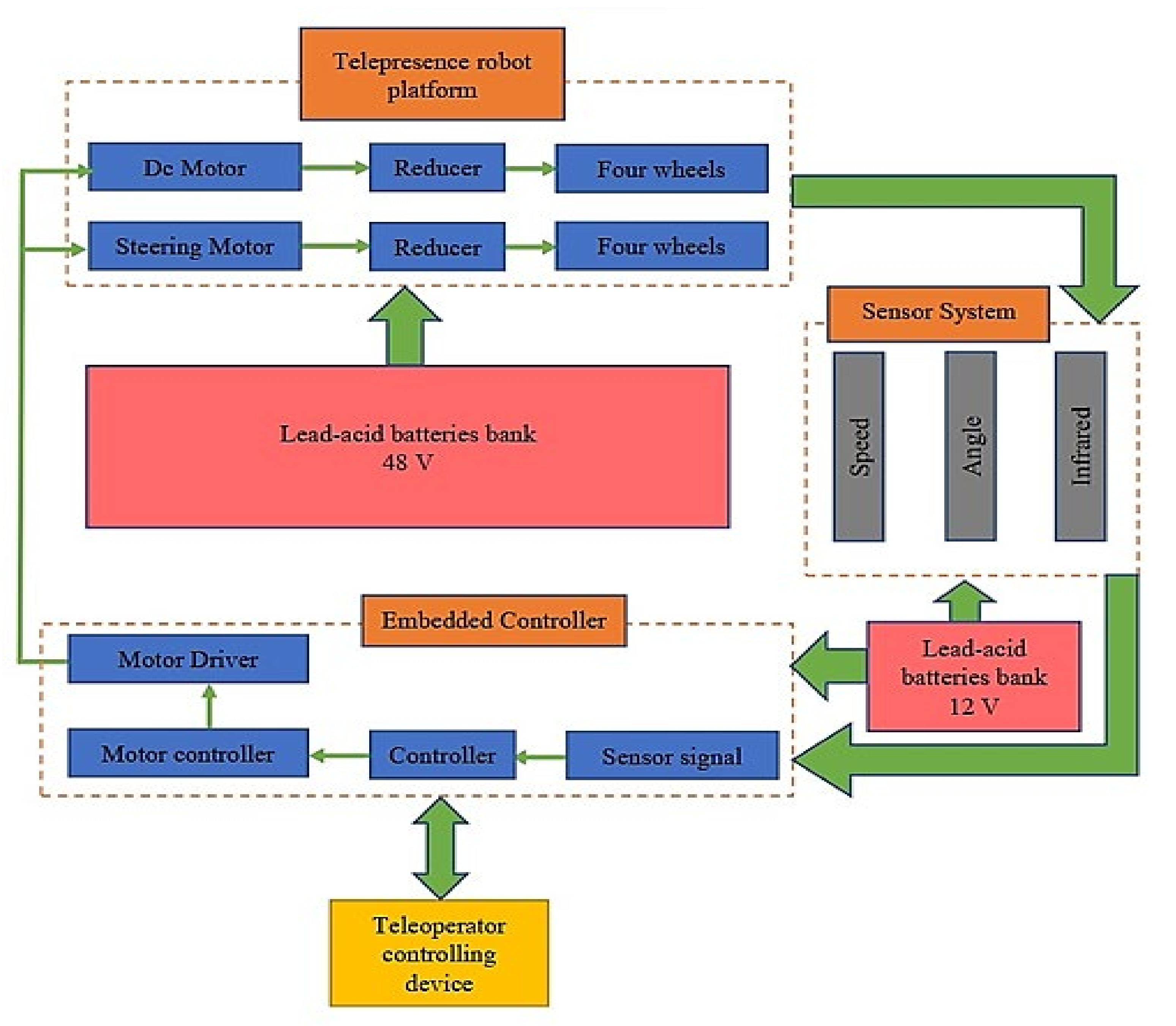

3. System Development

3.1. Telepresence Robot Design

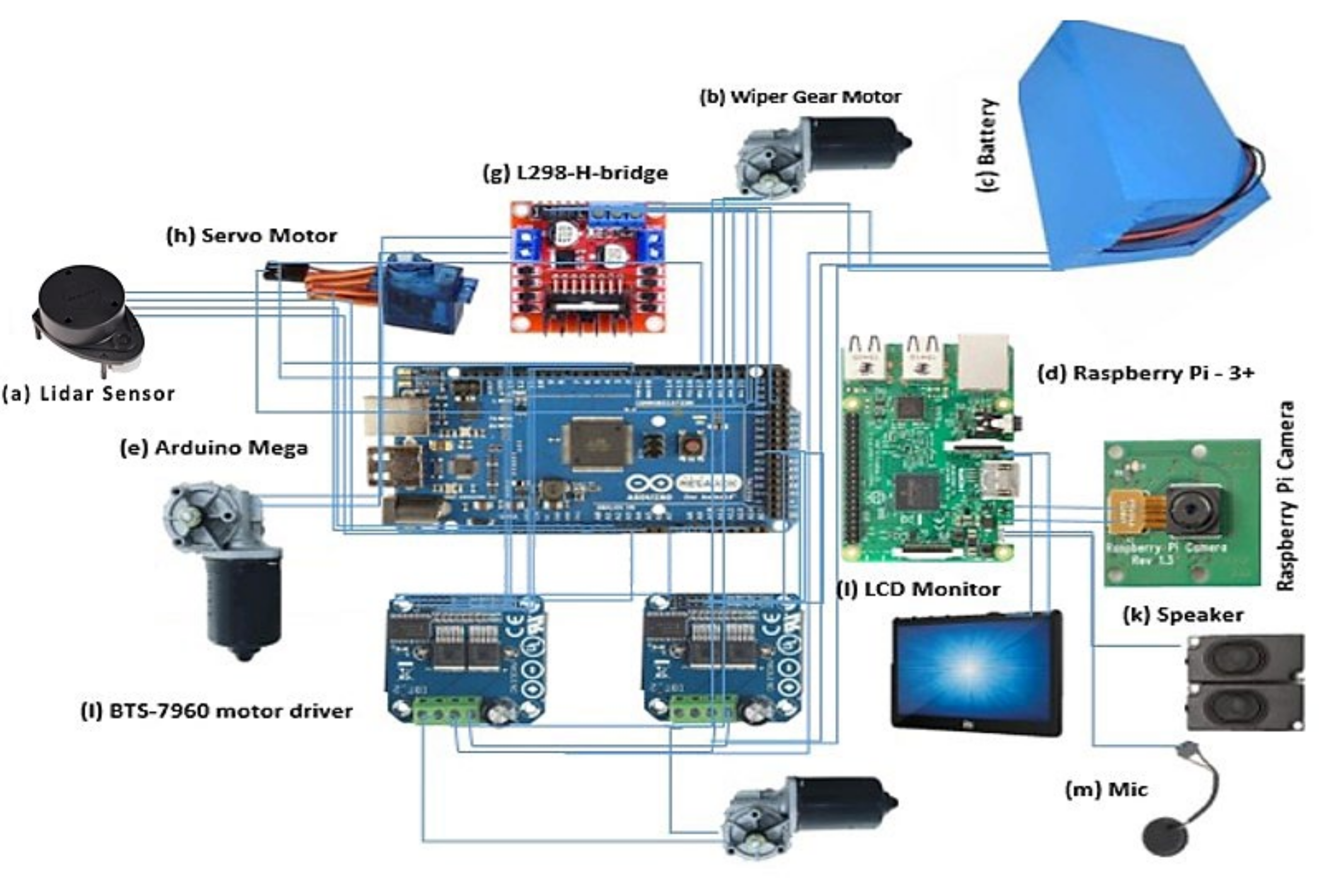

3.2. Telepresence Robot Hardware

3.2.1. Raspberry Pi Model 3B

3.2.2. Arduino Mega 2560

3.2.3. Arduino Pi Camera

3.2.4. Motor Driver IBT2-BTS7960

3.2.5. Lidar Sensor-RPLiDAR-A1M8-360

3.3. Current Status of Telepresence Robot

3.4. Design Parameters of Telepresence Robot

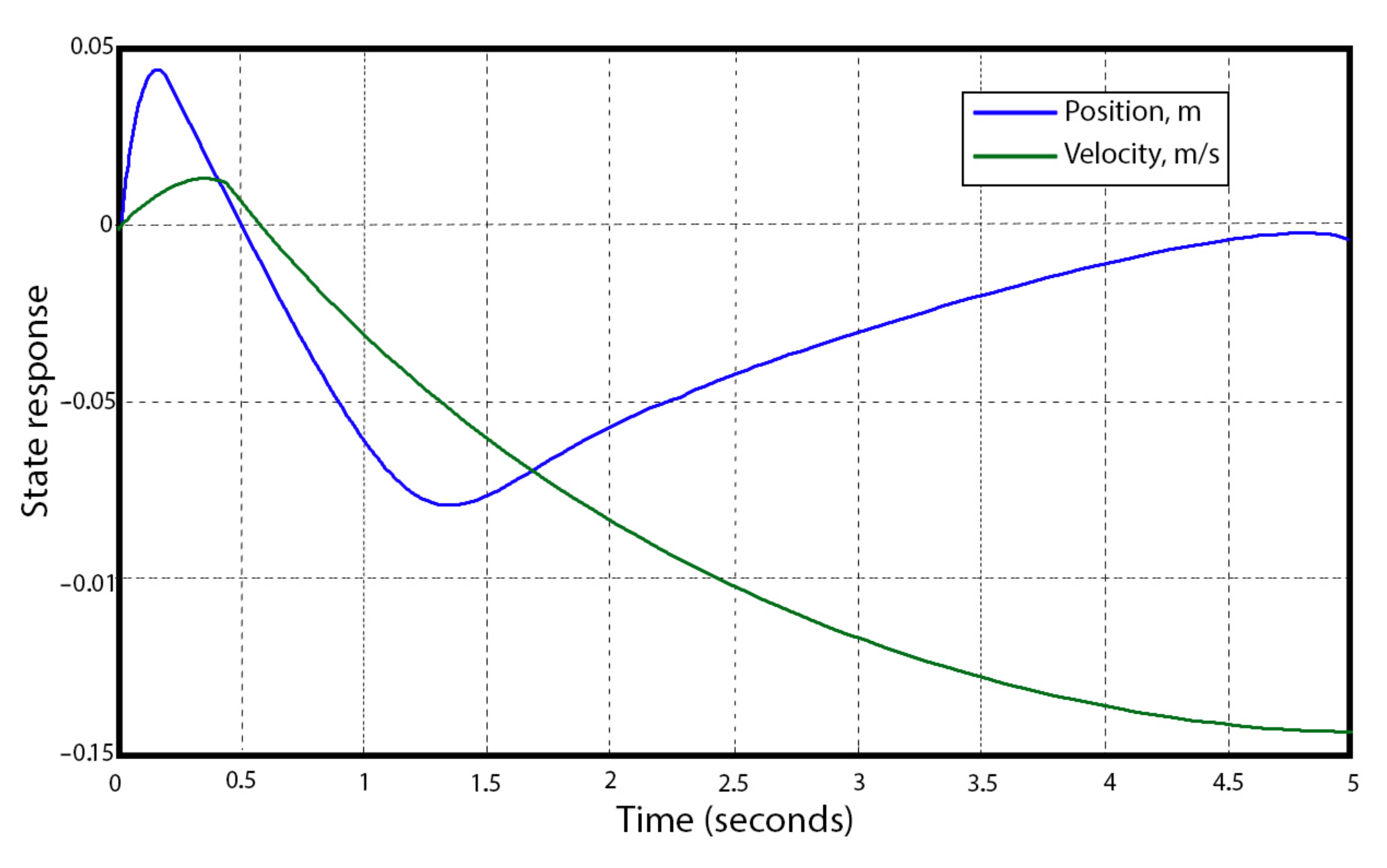

3.5. Mathematical Modeling of Telepresence Robot

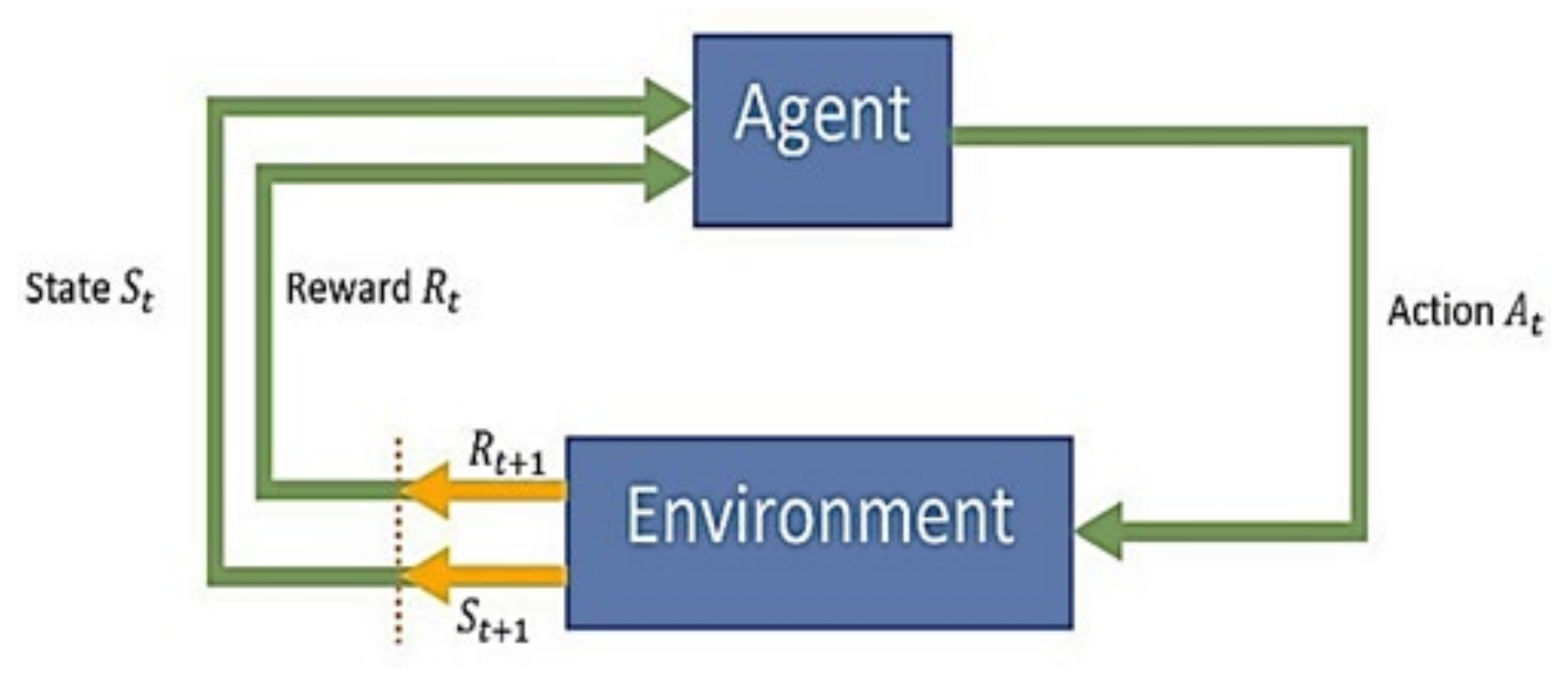

4. The Proposed Framework Approach

4.1. Implementation of the Proposed DRL Framework

| Step | Algorithm 1. Double Deep Q-Networks Learning (DDQN) |
|---|---|
| 1: | Initialize: online network $Q_\theta$, replay buffer $D$, and target network $Q_{\theta'}$ with weights $\theta' \leftarrow \theta$ |
| 2: | for each episode do |
| 3: | observe the current state $s_t$ |
| 4: | for each step in the environment do |
| 5: | select action $a_t$ according to the policy $\pi$ |
| 6: | execute action $a_t$ |
| 7: | observe the next state $s_{t+1}$ and reward $r_t$ |
| 8: | store the transition in replay buffer $D$ |
| 9: | update the current state $s_t \leftarrow s_{t+1}$ |
| 10: | end for |
| 11: | for each update step do |
| 12: | sample $N$ experiences from replay buffer $D$ |
| 13: | calculate the expected Q values |
| 14: | calculate the loss $L$ |
| 15: | take a stochastic gradient step on $L$ |
| 16: | update the target network parameters $\theta'$ |
| 17: | end for |
| 18: | end for |
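Steps 13 to 16 refer to equations that did not survive extraction. For reference, the standard Double DQN quantities are written below in the notation of Algorithm 1. This is the textbook formulation, not necessarily the paper's exact one: the discount factor $\gamma$ and the soft-update rate $\tau$ are assumptions ($\tau = 1$ recovers the hard copy $\theta' \leftarrow \theta$ of step 1), and for terminal transitions the target reduces to $y_t = r_t$.

```latex
\begin{aligned}
a^{*} &= \arg\max_{a} Q_{\theta}(s_{t+1}, a) \\
y_t &= r_t + \gamma \, Q_{\theta'}\bigl(s_{t+1}, a^{*}\bigr) \\
L(\theta) &= \frac{1}{N} \sum_{i=1}^{N} \bigl( y_i - Q_{\theta}(s_i, a_i) \bigr)^{2} \\
\theta' &\leftarrow \tau \, \theta + (1 - \tau) \, \theta'
\end{aligned}
```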

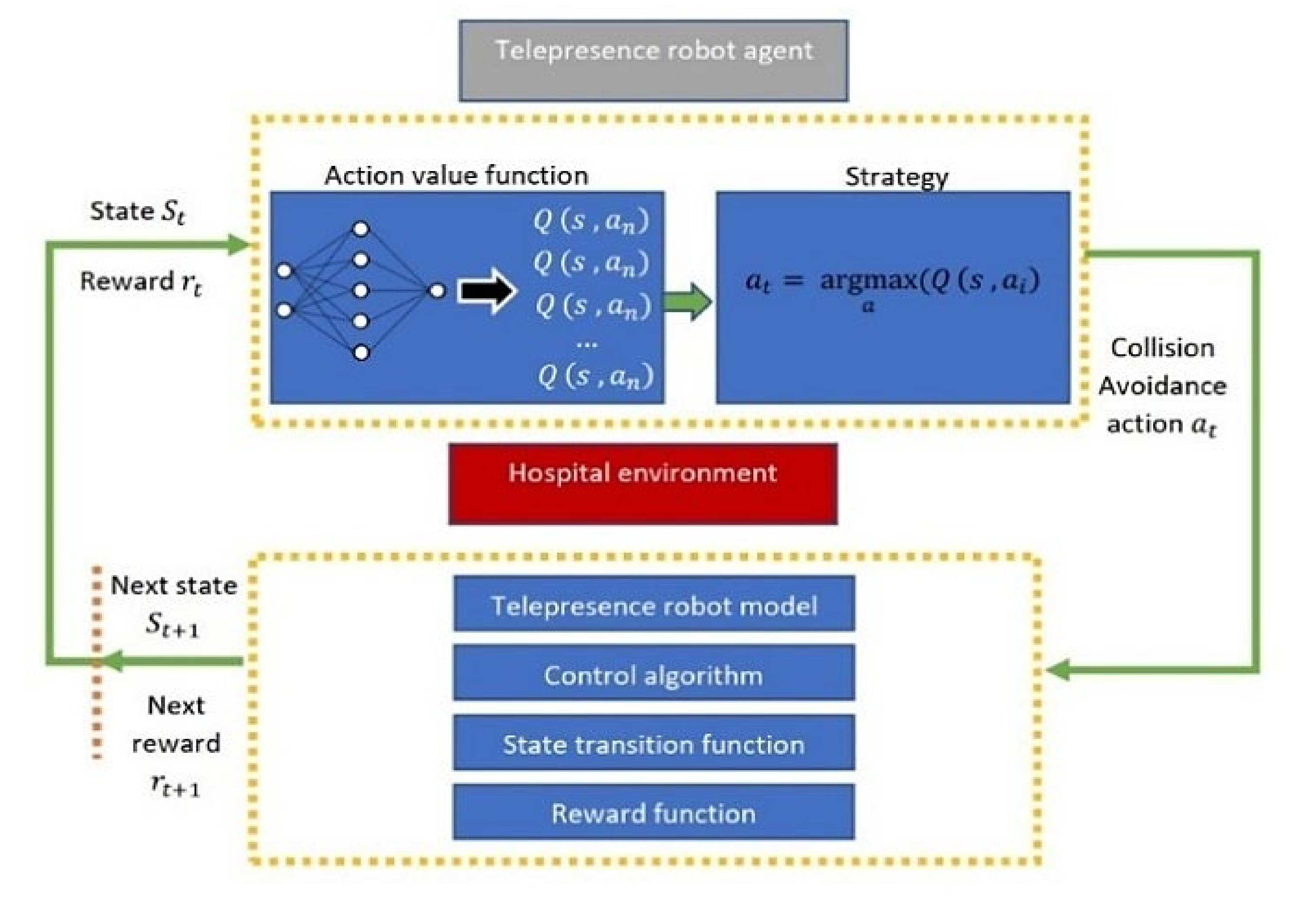
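The loop in Algorithm 1 can be sketched in NumPy as follows. This is a minimal illustration under stated assumptions, not the authors' implementation: a linear Q function `Q(s) = s @ W` stands in for the deep network, ε-greedy exploration is assumed for the policy π in step 5 (the source does not name it), and the helpers `ReplayBuffer`, `epsilon_greedy`, `ddqn_targets`, and `sgd_step` are hypothetical names.

```python
import random
from collections import deque

import numpy as np


class ReplayBuffer:
    """Fixed-capacity FIFO store of transitions (Algorithm 1, steps 8 and 12)."""

    def __init__(self, capacity: int) -> None:
        self.buffer = deque(maxlen=capacity)

    def push(self, s, a, r, s_next, done) -> None:
        self.buffer.append((s, a, r, s_next, float(done)))

    def sample(self, n: int):
        # Uniform sampling without replacement, returned as stacked arrays
        batch = random.sample(list(self.buffer), n)
        s, a, r, s_next, done = (np.array(x) for x in zip(*batch))
        return s, a, r, s_next, done

    def __len__(self) -> int:
        return len(self.buffer)


def epsilon_greedy(q_values, epsilon, rng):
    """Step 5 (assumed policy): explore with probability epsilon, else act greedily."""
    if rng.random() < epsilon:
        return int(rng.integers(len(q_values)))
    return int(np.argmax(q_values))


def ddqn_targets(q_online_next, q_target_next, rewards, dones, gamma=0.99):
    """Step 13: the online network selects a*, the target network evaluates it."""
    best = np.argmax(q_online_next, axis=1)
    evaluated = q_target_next[np.arange(len(best)), best]
    # Terminal transitions (done = 1) do not bootstrap
    return rewards + gamma * (1.0 - dones) * evaluated


def sgd_step(W, s, a, y, lr=0.01):
    """Steps 14-15: one gradient step on L = mean((y - Q(s, a))^2), Q(s) = s @ W.

    Returns the updated weights and the loss measured before the step.
    """
    n = len(a)
    td_error = y - (s @ W)[np.arange(n), a]
    grad_t = np.zeros((W.shape[1], W.shape[0]))  # shape (n_actions, state_dim)
    # dL/dW[:, a_i] = -(2 / n) * td_error_i * s_i, accumulated per sampled action
    np.add.at(grad_t, a, -(2.0 / n) * td_error[:, None] * s)
    return W - lr * grad_t.T, float(np.mean(td_error ** 2))
```

In a full loop, step 16 would refresh the target weights, for example with `W_target = W.copy()` every few updates; the paper does not state its update schedule.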

4.2. Agent Model for Telepresence Robot

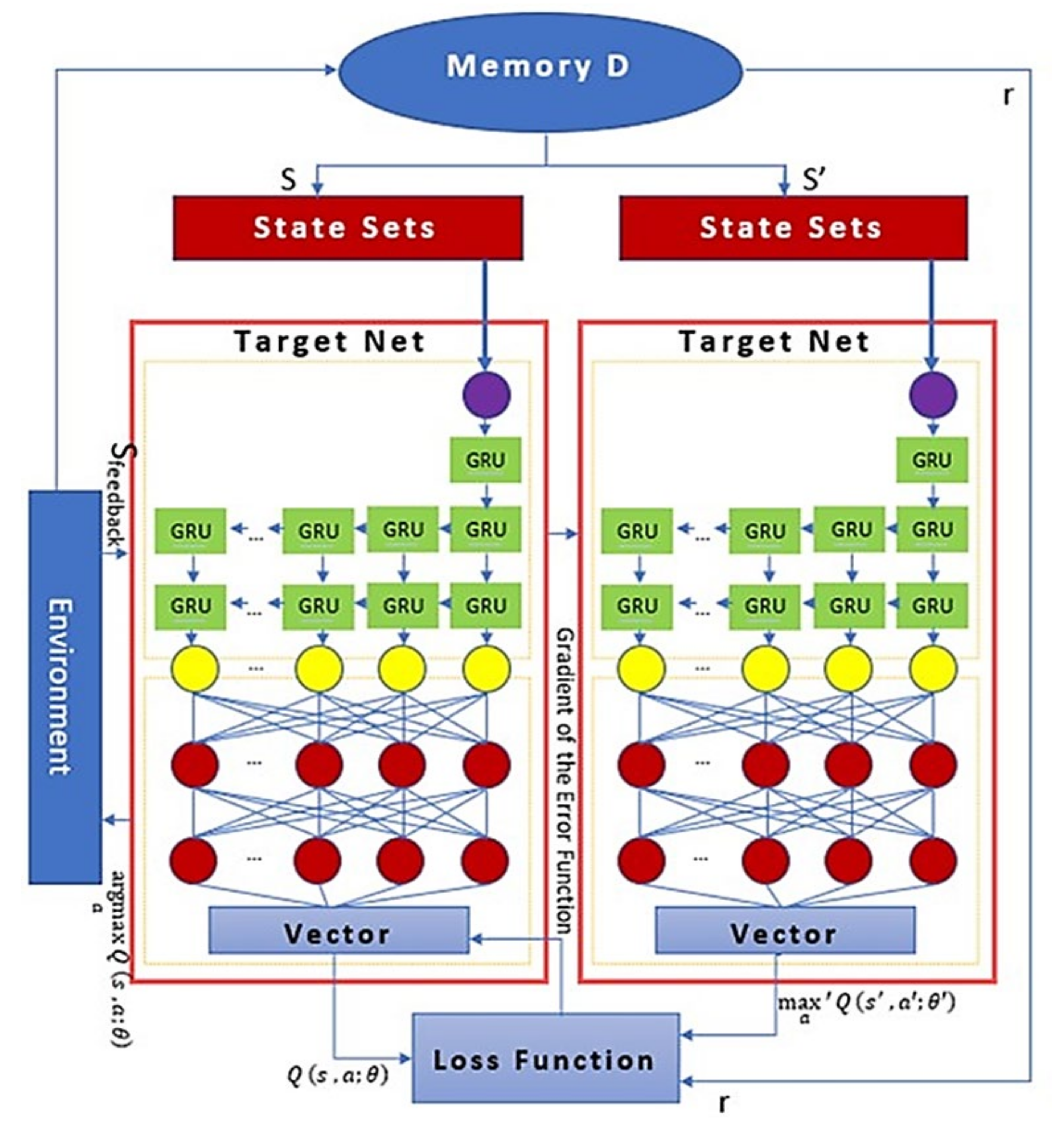

| Step | Algorithm 2. Gated Recurrent Unit (GRU) with Double Deep Q-Networks Learning (DDQN) |
|---|---|
| 1: | Initialize: input data; update the associated state information from memory $D$ |
| 2: | for each episode do |
| 3: | send the input to the main and target networks |
| 4: | send the input data to the GRU unit, which has eight behavior sets (columns) |
| 5: | for each step in the GRU unit do |
| 6: | the GRU layers process the $n = 8$ inputs, and the intermediate output is fed to two FC layers |
| 7: | the FC layer parameters are an $8 \times 64$ and a $64 \times 8$ matrix; the FC neurons use the rectified linear (ReLU) activation function; a dropout structure is set in the GRU and FC layers to prevent overfitting |
| 8: | end for |
| 9: | for each update step do |
| 10: | find the action with the largest Q value using the main network |
| 11: | then find that action in the target network |
| 12: | calculate the $Q_{\text{target}}$ value using this Q value |
| 13: | because this Q value is not necessarily the largest in the target network, selecting an overestimated suboptimal action is avoided |
| 14: | the memory pool provides the training data |
| 15: | calculate the loss function |
| 16: | end for |
| 17: | end for |
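The GRU and FC structure of Algorithm 2 can be sketched as a NumPy forward pass. This is an illustrative sketch under assumptions, not the authors' network: the eight behavior sets are treated as 8 input features per time step, the GRU hidden size is taken as 8 so that it feeds the 8 × 64 FC layer, the 64 × 8 output layer is read as Q values for 8 actions, and the dropout rate (0.2), weight initialization, and names `GRUQNetwork` and `double_q_value` are hypothetical. The gate update follows the common convention $h_t = (1 - z_t) \odot h_{t-1} + z_t \odot \tilde{h}_t$.

```python
import numpy as np


def sigmoid(x):
    return 1.0 / (1.0 + np.exp(-x))


class GRUQNetwork:
    """GRU encoder, then FC 8 x 64 (ReLU) and FC 64 x 8 producing Q values."""

    def __init__(self, n_in=8, n_hidden=8, n_fc=64, n_actions=8, dropout=0.2, seed=0):
        self.rng = np.random.default_rng(seed)

        def init(*shape):
            return self.rng.normal(0.0, 0.1, size=shape)

        # Input (W), recurrent (U) and bias (b) parameters of the
        # update (z), reset (r) and candidate (h) gates
        self.W = {g: init(n_hidden, n_in) for g in "zrh"}
        self.U = {g: init(n_hidden, n_hidden) for g in "zrh"}
        self.b = {g: np.zeros(n_hidden) for g in "zrh"}
        self.fc1, self.b1 = init(n_hidden, n_fc), np.zeros(n_fc)        # 8 x 64
        self.fc2, self.b2 = init(n_fc, n_actions), np.zeros(n_actions)  # 64 x 8
        self.dropout = dropout
        self.n_hidden = n_hidden

    def _drop(self, x, training):
        # Inverted dropout: active only while training, identity at inference
        if not training or self.dropout == 0.0:
            return x
        keep = self.rng.random(x.shape) >= self.dropout
        return x * keep / (1.0 - self.dropout)

    def forward(self, seq, training=False):
        """seq has shape (T, n_in); returns a vector of n_actions Q values."""
        h = np.zeros(self.n_hidden)
        for x in seq:
            z = sigmoid(self.W["z"] @ x + self.U["z"] @ h + self.b["z"])
            r = sigmoid(self.W["r"] @ x + self.U["r"] @ h + self.b["r"])
            h_cand = np.tanh(self.W["h"] @ x + self.U["h"] @ (r * h) + self.b["h"])
            h = (1.0 - z) * h + z * h_cand
        h = self._drop(h, training)
        hidden = self._drop(np.maximum(0.0, h @ self.fc1 + self.b1), training)  # ReLU
        return hidden @ self.fc2 + self.b2


def double_q_value(main, target, seq_next):
    """Steps 10-12: pick a* with the main network, read its value from the target."""
    a_star = int(np.argmax(main.forward(seq_next)))
    return a_star, float(target.forward(seq_next)[a_star])
```

Keeping dropout inside `_drop` with a `training` flag means the same network gives deterministic Q values when it is used for action selection and target evaluation.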

1. The neurons in the FC layers use the rectified linear unit (ReLU) activation function.
2. A dropout structure is set in the GRU and FC layers to prevent overfitting.
3. First, we select the action with the largest Q value using the main network, and then we find that action in the target network.
   - The $Q_{\text{target}}$ value is calculated using this Q value.
   - Because this Q value is not necessarily the highest in the target network, we can avoid choosing an overestimated suboptimal action.
   - The memory pool provides the training data.
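The overestimation argument in the list above can be illustrated numerically. The sketch below is an idealized toy, not part of the paper's method: it assumes every true action value is 0 and models the main and target networks as two independent noisy estimates (in practice the target network is a lagged copy of the main network, so the effect is smaller). The function name `overestimation_demo` is hypothetical.

```python
import numpy as np


def overestimation_demo(n_actions=8, n_trials=10_000, noise=1.0, seed=0):
    """Compare single- and double-estimator values when every true Q value is 0.

    Returns (single, double): the mean value assigned to the chosen action when
    the same estimate both selects and evaluates it (DQN-style), and when one
    estimate selects and an independent one evaluates it (DDQN-style).
    """
    rng = np.random.default_rng(seed)
    # Two independent noisy estimates of the same all-zero action values
    q_main = rng.normal(0.0, noise, size=(n_trials, n_actions))
    q_target = rng.normal(0.0, noise, size=(n_trials, n_actions))

    single = q_main.max(axis=1).mean()                     # select and evaluate with q_main
    chosen = q_main.argmax(axis=1)                         # select with q_main ...
    double = q_target[np.arange(n_trials), chosen].mean()  # ... evaluate with q_target
    return float(single), float(double)


single, double = overestimation_demo()
print(f"single estimator: {single:.3f}, double estimator: {double:.3f}")
```

With eight actions, the single estimator lands near 1.4 (the expected maximum of eight standard normal draws) even though every true value is 0, while the double estimator stays close to 0.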

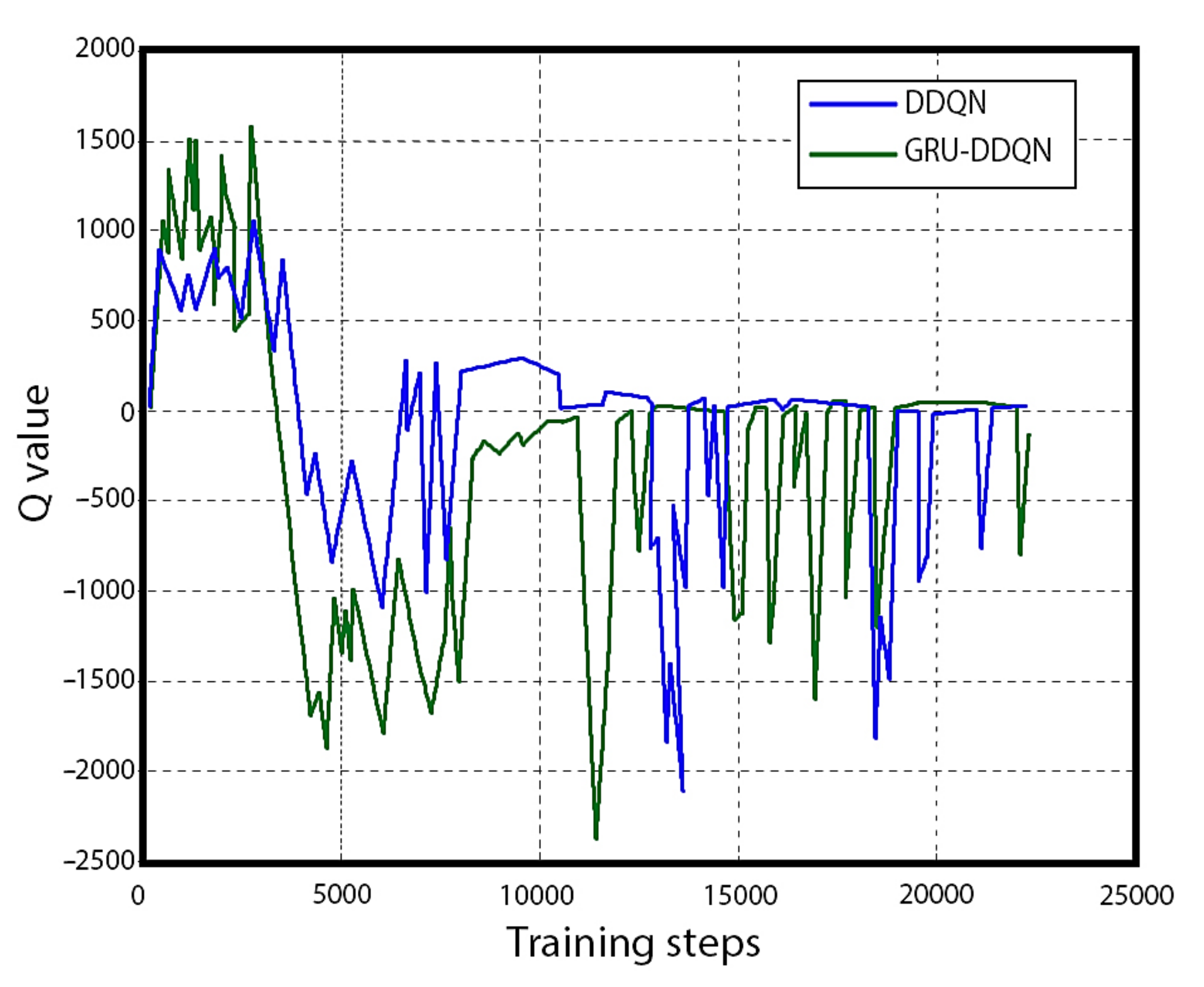

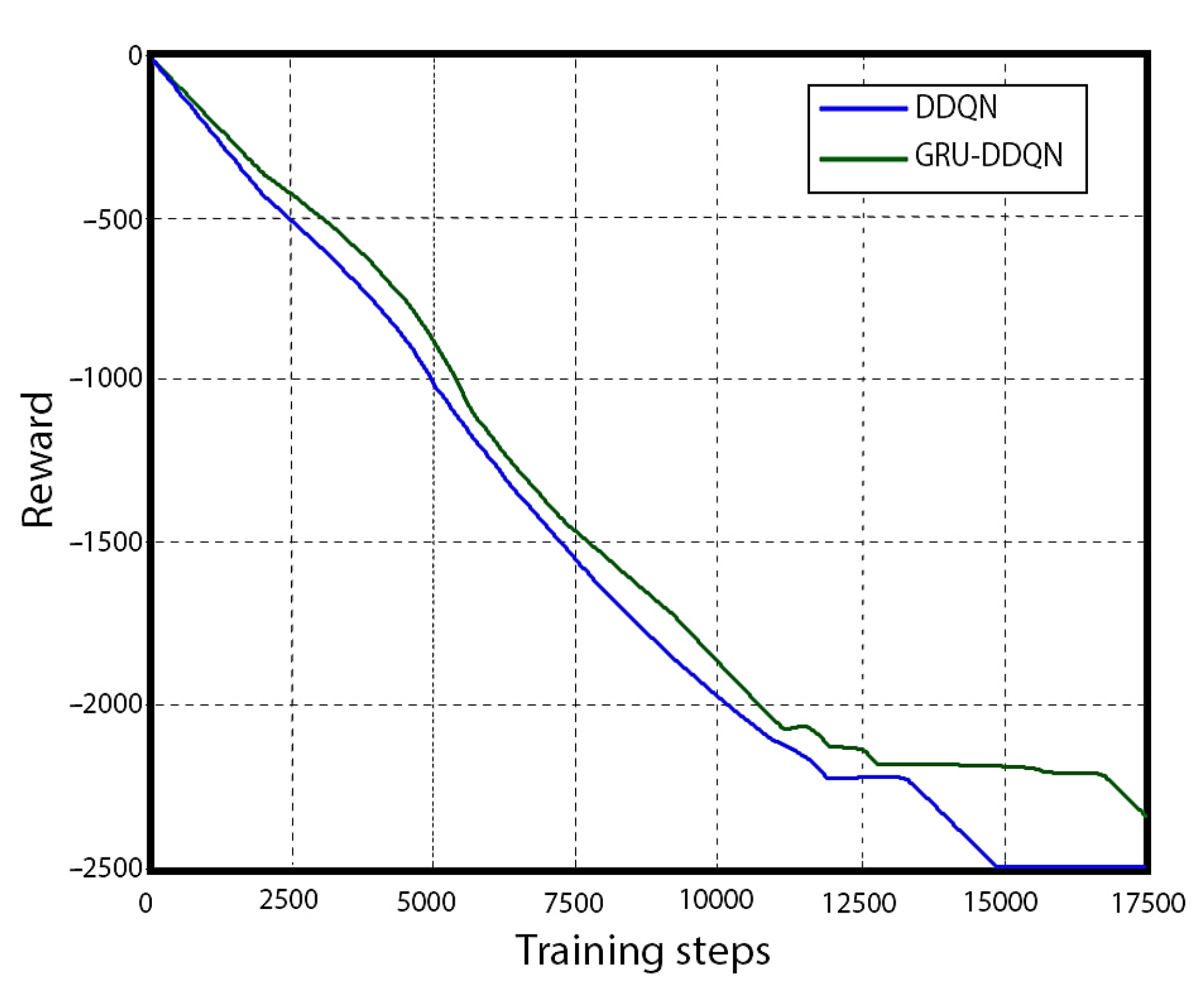

5. Experimental Setup and Results

6. Conclusions

Author Contributions

Funding

Institutional Review Board Statement

Informed Consent Statement

Data Availability Statement

Conflicts of Interest


| Reference Approach | Advantages | Disadvantages |
|---|---|---|
| Sample-Based | Probabilistic integrity | Discretizes state and action space, high time complexity |
| Interpolation-Based | High path continuity | Complex approach |
| Numerical Optimization | Globally optimal trajectory | Increased complexity and time-consuming |
| Fuzzy Logic | Robust to uncertainties | Less precise |
| Master-Slave | Guaranteed idle time | High communication delay reduces passive loop gain |
| Route Planning | Effective in remote environments | Considerable communication delay |
| Learning-Based Navigation | Improved navigation | Little research on compensating for undefined behavior during actual operation |
| DRL | Effective in multi-robot coordination | Computationally complex |
| Proposed Approach | More dynamic and efficient method | Large data requirement |

| Technical Specification | Unit | Min–Max Value |
|---|---|---|
| Robot speed | m/s | 0–3.25 |
| Robot torque | N·m | 0–0.93 |
| Robot height | ft, in | 5′3″ |
| Robot width | ft, in | 1′5″ |
| Robot breadth | ft, in | 0′6″ |
| Robot weight | kg | 14 |
| Robot battery capacity | Ah | 35 |

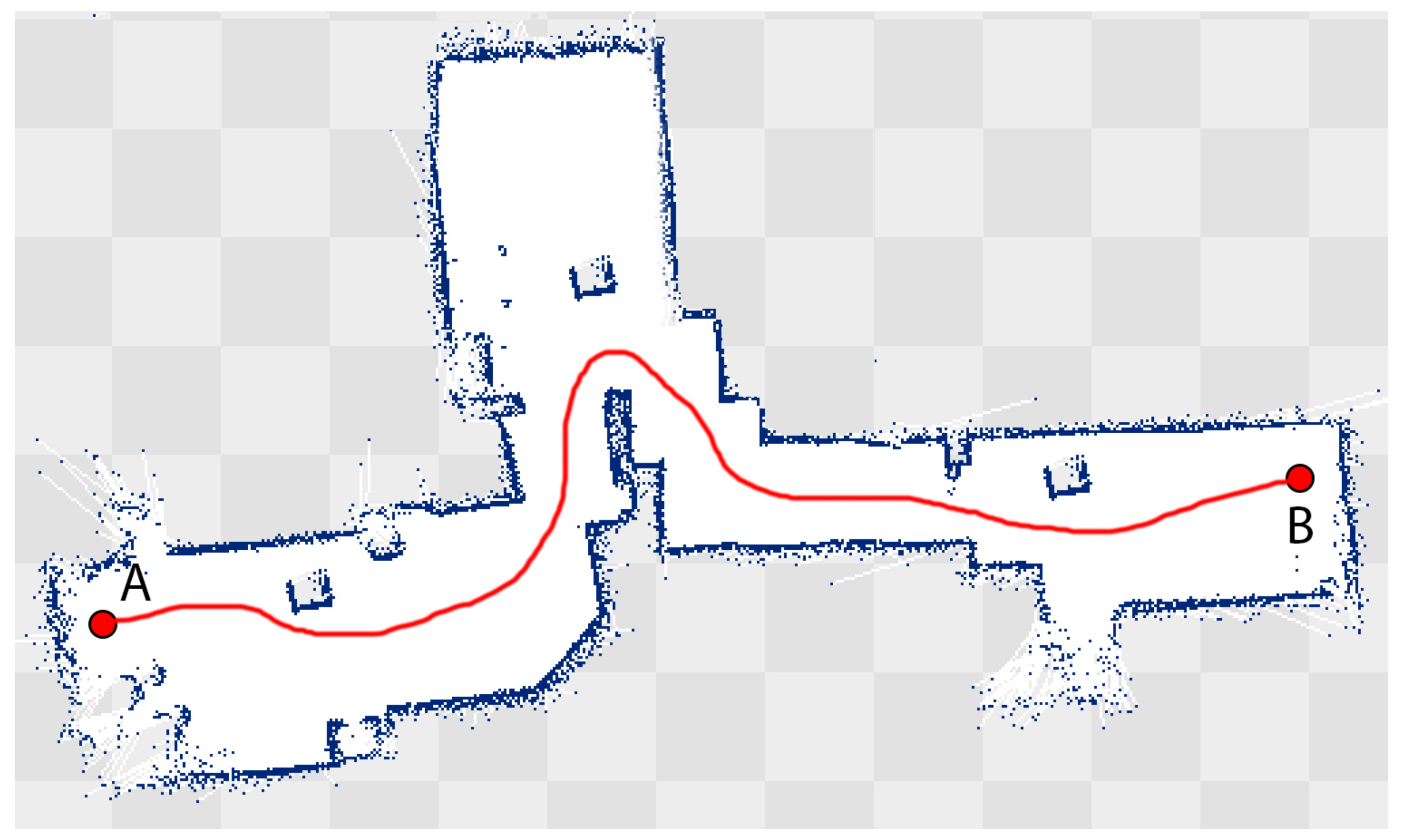

| Parameter | Unit | Value |
|---|---|---|
| Static obstacles (4 × 4 ft each) | count | 8 |
| Dynamic obstacles | - | - |
| Path (curved) | count | 1 |
| Starting point | - | A (main entrance) |
| End point | - | B (patient ward) |
| Coverage | m | 140 |

| Algorithm | Trip | Trial 1 (distance/time) | Trial 2 (distance/time) | Trial 3 (distance/time) | Mean (distance/time) |
|---|---|---|---|---|---|
| A* | First trip | 8.7 m/91 s | 9.9 m/99 s | 9.1 m/95 s | 9.2 m/95 s |
| | Second trip | 9.9 m/90 s | 9.1 m/87 s | 8.1 m/88 s | 9.0 m/88 s |
| | Third trip | 9.1 m/98 s | 9.0 m/89 s | 9.0 m/75 s | 9.0 m/87 s |
| | Fourth trip | 8.4 m/87 s | 9.8 m/72 s | 9.9 m/61 s | 9.3 m/73 s |
| DDQN | First trip | 8.8 m/84 s | 9.6 m/76 s | 8.9 m/95 s | 9.1 m/85 s |
| | Second trip | 8.4 m/86 s | 7.8 m/87 s | 7.9 m/88 s | 8.0 m/87 s |
| | Third trip | 9.1 m/80 s | 8.4 m/79 s | 8.3 m/75 s | 8.6 m/78 s |
| | Fourth trip | 9.0 m/77 s | 7.9 m/61 s | 7.8 m/58 s | 8.2 m/65 s |
| Proposed Framework | First trip | 8.1 m/84 s | 8.8 m/89 s | 7.7 m/85 s | 8.2 m/86 s |
| | Second trip | 7.8 m/86 s | 7.5 m/87 s | 8.1 m/88 s | 7.8 m/87 s |
| | Third trip | 8.5 m/85 s | 8.4 m/79 s | 7.7 m/75 s | 8.2 m/79 s |
| | Fourth trip | 7.9 m/73 s | 8.1 m/69 s | 7.3 m/71 s | 7.7 m/71 s |
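The Mean column is the arithmetic mean of the three trials. A short check for the proposed-framework rows, with the trial values copied from the table (the dictionary layout and the helper name `trip_means` are illustrative only):

```python
from statistics import mean

# (distance in m, time in s) per trial for the proposed framework, from the table
proposed = {
    "First trip": [(8.1, 84), (8.8, 89), (7.7, 85)],
    "Second trip": [(7.8, 86), (7.5, 87), (8.1, 88)],
    "Third trip": [(8.5, 85), (8.4, 79), (7.7, 75)],
    "Fourth trip": [(7.9, 73), (8.1, 69), (7.3, 71)],
}


def trip_means(trials):
    """Return (mean distance, mean time) over the trials of one trip."""
    distances, times = zip(*trials)
    return mean(distances), mean(times)


for trip, trials in proposed.items():
    d, t = trip_means(trials)
    print(f"{trip}: {d:.2f} m / {t:.1f} s")
```

Printed to this precision, the third trip gives 79.7 s and the fourth trip 7.77 m, which the table lists as 79 s and 7.7 m, so those Mean entries appear to be truncated rather than rounded.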

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2023 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (https://creativecommons.org/licenses/by/4.0/).


Naseer, F.; Khan, M.N.; Altalbe, A. Intelligent Time Delay Control of Telepresence Robots Using Novel Deep Reinforcement Learning Algorithm to Interact with Patients. Appl. Sci. 2023, 13, 2462. https://doi.org/10.3390/app13042462
