Self-Supervised Learning for the Distinction between Computer-Graphics Images and Natural Images

Abstract

1. Introduction

- We conduct and report, to our knowledge, a first and comprehensive study on utilizing self-supervised learning for CG forensics.

- We propose a new self-supervised learning method, with an appropriate pretext task, for the distinction between CG images and NIs. Different from existing pretext tasks, targeted at image content understanding, our proposed pretext task copes better with the considered forensic analysis problem.

- We carry out experiments under different evaluation scenarios for the assessment of our method and comparisons with existing self-supervised schemes. The obtained results showed that our method performed better for the CG forensics problem, leading to more accurate classification of CG images and NIs in different scenarios.

2. Related Work

2.1. Detection of CG Images

2.2. Self-Supervised Learning

3. The Proposed Method

3.1. Motivation

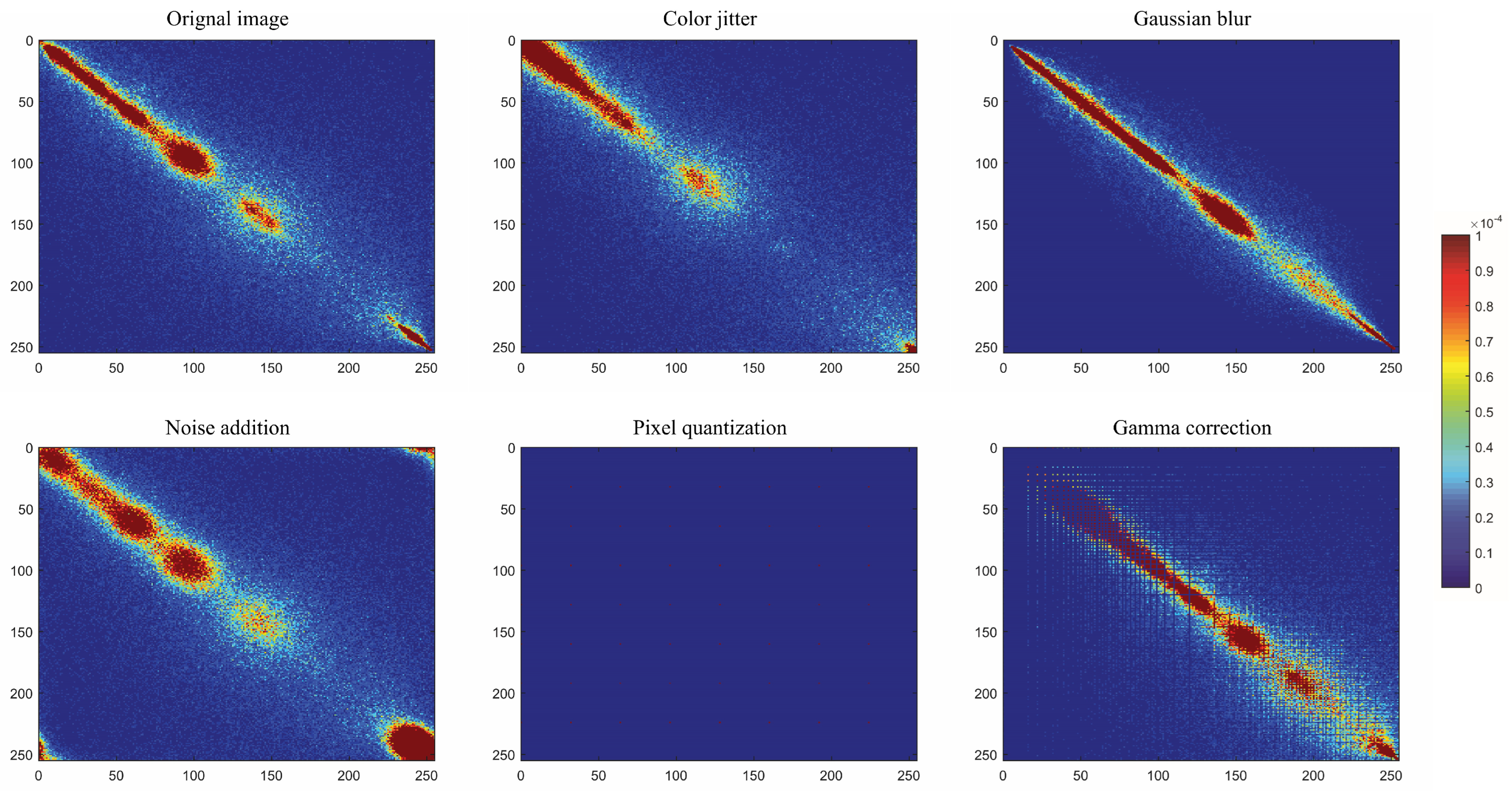

3.2. Self-Supervised Learning Task

4. Experimental Results

4.1. Implementation Details and Datasets

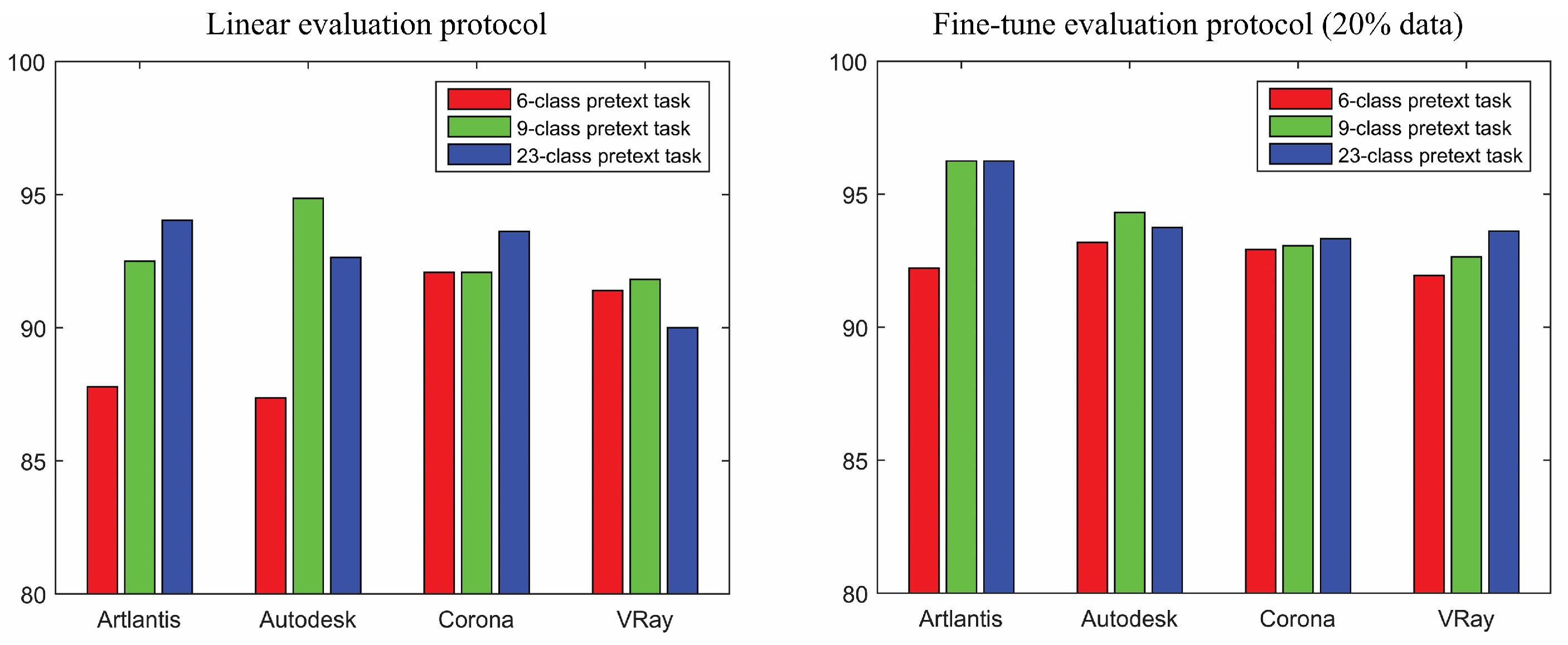

4.2. Linear Classification Evaluation Protocol

4.3. Fine-Tuning Evaluation Protocol

- Multi-class CG forensics. We further considered a more challenging CG forensics problem for the fine-tuning evaluation: multi-class classification, in which the objective was to correctly classify NIs and CG images created by different rendering engines. This problem has practical utility because it may help forensic analysts identify the source of a given image: not only discriminating between NIs and CG images, but also identifying the source rendering engine of a CG image. In our study, this was a 5-class classification problem, the five classes being NIs and four kinds of CG images created, respectively, by Artlantis, Autodesk, Corona and VRay. The dataset was obtained in a simple way from the four datasets used above: CG images were taken directly from the corresponding dataset, while NIs were randomly drawn from all the NIs in the four datasets. This was done separately for the training and testing sets, yielding a training set of 25,200 images (5040 images for each of the five classes) and a testing set of 1800 images (360 images for each class). As in the experiments described above, we considered two versions of fine-tuning: on the whole training set and on 20% of the training set. Table 5 presents the obtained results. All self-supervised methods used the same data and parameters for fine-tuning. We slightly modified the last classification layer of ENet so that it could cope with the 5-class classification problem; the performance of this adapted ENet is also reported in Table 5. For fair comparison, all methods were fine-tuned or trained for the same number of epochs (105).
It can be noticed from Table 5 that for this challenging CG forensics problem (a random guess over five balanced classes gives 20% accuracy), existing self-supervised methods achieved rather limited performance, especially when fine-tuned on only 20% of the training data. In this case, our self-supervised method performed much better than the existing methods, with a large margin over the second-best self-supervised method, MoCo-V2, in both fine-tuning scenarios (see Table 5). In addition, although the supervised, adapted ENet clearly outperformed our self-supervised method when fine-tuning was carried out on the whole training set, the performances were comparable when fine-tuning on 20% of the data, with ours being even slightly better. This may indicate the importance and advantage of a properly designed forensics-oriented pretext task for a self-supervised learning method, in particular under data scarcity and for a very challenging forensic problem.
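The construction of the 5-class dataset described above can be sketched in a few lines of Python. This is only an illustrative sketch, not the code used in the study: the function names (`build_five_class_split`, `stratified_subset`, `toy_datasets`) and the image identifiers are hypothetical, the toy data assumes that each of the four datasets provides exactly as many CG images and NIs as needed per class, and drawing the 20% subset per class (stratified) is an assumption, since the text does not state how the subset was selected.

```python
import random
from collections import Counter

CG_SOURCES = ["Artlantis", "Autodesk", "Corona", "VRay"]
CLASSES = ["NI"] + CG_SOURCES  # label 0 = natural image, labels 1..4 = rendering engines


def build_five_class_split(datasets, per_class, rng):
    """Assemble (image_id, label) pairs; datasets maps source -> {"cg": [...], "ni": [...]}."""
    samples = []
    # CG images are taken directly from the corresponding dataset.
    for label, source in enumerate(CG_SOURCES, start=1):
        samples += [(img, label) for img in datasets[source]["cg"][:per_class]]
    # NIs are drawn at random from the pooled NIs of all four datasets.
    ni_pool = [img for source in CG_SOURCES for img in datasets[source]["ni"]]
    samples += [(img, 0) for img in rng.sample(ni_pool, per_class)]
    return samples


def stratified_subset(samples, fraction, rng):
    """Keep the same fraction of every class (assumption: per-class sampling)."""
    by_label = {}
    for img, label in samples:
        by_label.setdefault(label, []).append((img, label))
    subset = []
    for label in sorted(by_label):
        k = round(len(by_label[label]) * fraction)
        subset += rng.sample(by_label[label], k)
    return subset


def toy_datasets(n):
    """Hypothetical placeholder ids standing in for real image files."""
    return {s: {"cg": [f"{s}_cg_{i}" for i in range(n)],
                "ni": [f"{s}_ni_{i}" for i in range(n)]} for s in CG_SOURCES}


rng = random.Random(0)
train = build_five_class_split(toy_datasets(5040), 5040, rng)
test = build_five_class_split(toy_datasets(360), 360, rng)
train_20 = stratified_subset(train, 0.2, rng)
counts_20 = Counter(label for _, label in train_20)
chance_accuracy = 1 / len(CLASSES)  # random guess over five balanced classes

print(len(train), len(test), len(train_20), chance_accuracy)
# prints: 25200 1800 5040 0.2
```

With these sizes the sketch reproduces the counts given in the text (25,200 training and 1800 testing images), and the 20% subset keeps 1008 images per class under the stratification assumption.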

4.4. Additional Experiments

4.4.1. Impact of Manipulations

4.4.2. Self-Supervised Training on Whole ImageNet Dataset

4.5. Discussion

5. Conclusions and Future Work

Funding

Institutional Review Board Statement

Informed Consent Statement

Data Availability Statement

Acknowledgments

Conflicts of Interest

References

- Farid, H. Digital Image Forensics, 2012. Tutorial and Course Notes. Available online: https://farid.berkeley.edu/downloads/tutorials/digitalimageforensics.pdf (accessed on 27 December 2022).

- Piva, A. An overview on image forensics. ISRN Signal Process. 2013, 2013, 496701.

- Verdoliva, L. Media forensics and deepfakes: An overview. IEEE J. Sel. Top. Signal Process. 2020, 14, 910–932.

- Castillo Camacho, I.; Wang, K. A comprehensive review of deep-learning-based methods for image forensics. J. Imaging 2021, 7, 69.

- Ng, T.T.; Chang, S.F. Discrimination of Computer Synthesized or Recaptured Images from Real Images. In Digital Image Forensics; Sencar, H.T., Memon, N., Eds.; Springer: New York, NY, USA, 2013; pp. 275–309.

- Quan, W.; Wang, K.; Yan, D.M.; Zhang, X. Distinguishing between natural and computer-generated images using convolutional neural networks. IEEE Trans. Inf. Forensics Secur. 2018, 13, 2772–2787.

- Yang, P.; Baracchi, D.; Ni, R.; Zhao, Y.; Argenti, F.; Piva, A. A survey of deep learning-based source image forensics. J. Imaging 2020, 6, 9.

- Jing, L.; Tian, Y. Self-supervised visual feature learning with deep neural networks: A survey. IEEE Trans. Pattern Anal. Mach. Intell. 2021, 43, 4037–4058.

- Chaosgroup Gallery. Available online: https://www.chaosgroup.com/gallery (accessed on 27 December 2022).

- Learn V-Ray Gallery. Available online: https://www.learnvray.com/fotogallery/ (accessed on 27 December 2022).

- Corona Renderer Gallery. Available online: https://corona-renderer.com/gallery (accessed on 27 December 2022).

- Shullani, D.; Fontani, M.; Iuliani, M.; Shaya, O.A.; Piva, A. VISION: A video and image dataset for source identification. EURASIP J. Inf. Secur. 2017, 2017, 15.

- Dang-Nguyen, D.T.; Pasquini, C.; Conotter, V.; Boato, G. RAISE: A raw images dataset for digital image forensics. In Proceedings of the ACM Multimedia Systems Conference, Portland, OR, USA, 18–20 March 2015; pp. 219–224.

- Ng, T.T.; Chang, S.F.; Hsu, J.; Xie, L.; Tsui, M.P. Physics-motivated features for distinguishing photographic images and computer graphics. In Proceedings of the ACM International Conference on Multimedia, Singapore, Singapore, 6–11 November 2005; pp. 239–248.

- Pan, F.; Chen, J.; Huang, J. Discriminating between photorealistic computer graphics and natural images using fractal geometry. Sci. China Ser. Inf. Sci. 2009, 52, 329–337.

- Zhang, R.; Wang, R.D.; Ng, T.T. Distinguishing photographic images and photorealistic computer graphics using visual vocabulary on local image edges. In Proceedings of the International Workshop on Digital-forensics and Watermarking, Shanghai, China, 31 October–3 November 2012; pp. 292–305.

- Peng, F.; Zhou, D.L. Discriminating natural images and computer generated graphics based on the impact of CFA interpolation on the correlation of PRNU. Digit. Investig. 2014, 11, 111–119.

- Sankar, G.; Zhao, V.; Yang, Y.H. Feature based classification of computer graphics and real images. In Proceedings of the IEEE International Conference on Acoustics, Speech, and Signal Processing, Taipei, Taiwan, 19–24 April 2009; pp. 1513–1516.

- Lyu, S.; Farid, H. How realistic is photorealistic? IEEE Trans. Signal Process. 2005, 53, 845–850.

- Wang, J.; Li, T.; Shi, Y.Q.; Lian, S.; Ye, J. Forensics feature analysis in quaternion wavelet domain for distinguishing photographic images and computer graphics. Multimed. Tools Appl. 2017, 76, 23721–23737.

- Chen, W.; Shi, Y.Q.; Xuan, G. Identifying computer graphics using HSV color model and statistical moments of characteristic functions. In Proceedings of the IEEE International Conference on Multimedia & Expo, Beijing, China, 2–5 July 2007; pp. 1123–1126.

- Özparlak, L.; Avcibas, I. Differentiating between images using wavelet-based transforms: A comparative study. IEEE Trans. Inf. Forensics Secur. 2011, 6, 1418–1431.

- Krizhevsky, A.; Sutskever, I.; Hinton, G.E. ImageNet classification with deep convolutional neural networks. In Proceedings of the Advances in Neural Information Processing Systems, Lake Tahoe, NV, USA, 3–6 December 2012; pp. 1097–1105.

- Goodfellow, I.; Bengio, Y.; Courville, A. Deep Learning; MIT Press: Cambridge, MA, USA, 2016; Available online: http://www.deeplearningbook.org (accessed on 27 December 2022).

- Rahmouni, N.; Nozick, V.; Yamagishi, J.; Echizen, I. Distinguishing computer graphics from natural images using convolution neural networks. In Proceedings of the IEEE International Workshop on Information Forensics and Security, Rennes, France, 4–7 December 2017; pp. 1–6.

- Yao, Y.; Hu, W.; Zhang, W.; Wu, T.; Shi, Y.Q. Distinguishing computer-generated graphics from natural images based on sensor pattern noise and deep learning. Sensors 2018, 18, 1296.

- Quan, W.; Wang, K.; Yan, D.M.; Zhang, X.; Pellerin, D. Learn with diversity and from harder samples: Improving the generalization of CNN-based detection of computer-generated images. Forensic Sci. Int. Digit. Investig. 2020, 35, 301023.

- He, P.; Li, H.; Wang, H.; Zhang, R. Detection of computer graphics using attention-based dual-branch convolutional neural network from fused color components. Sensors 2020, 20, 4743.

- Zhang, R.; Quan, W.; Fan, L.; Hu, L.; Yan, D.M. Distinguishing computer-generated images from natural images using channel and pixel correlation. J. Comput. Sci. Technol. 2020, 35, 592–602.

- Bai, W.; Zhang, Z.; Li, B.; Wang, P.; Li, Y.; Zhang, C.; Hu, W. Robust texture-aware computer-generated image forensic: Benchmark and algorithm. IEEE Trans. Image Process. 2021, 30, 8439–8453.

- Yao, Y.; Zhang, Z.; Ni, X.; Shen, Z.; Chen, L.; Xu, D. CGNet: Detecting computer-generated images based on transfer learning with attention module. Signal Process. Image Commun. 2022, 105, 116692.

- Nguyen, H.H.; Yamagishi, J.; Echizen, I. Capsule-forensics: Using Capsule networks to detect forged images and videos. In Proceedings of the IEEE International Conference on Acoustics, Speech and Signal Processing, Brighton, UK, 12–17 May 2019; pp. 2307–2311.

- He, P.; Jiang, X.; Sun, T.; Li, H. Computer graphics identification combining convolutional and recurrent neural networks. IEEE Signal Process. Lett. 2018, 25, 1369–1373.

- Bhalang Tarianga, D.; Senguptab, P.; Roy, A.; Subhra Chakraborty, R.; Naskar, R. Classification of computer generated and natural images based on efficient deep convolutional recurrent attention model. In Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition Workshops, Long Beach, CA, USA, 15–20 June 2019; pp. 146–152.

- Liu, S.; Mallol-Ragolta, A.; Parada-Cabaleiro, E.; Qian, K.; Jing, X.; Kathan, A.; Hu, B.; Schuller, B.W. Audio self-supervised learning: A survey. Patterns 2022, 3, 100616.

- Krishnan, R.; Rajpurkar, P.; Topol, E.J. Self-supervised learning in medicine and healthcare. Nat. Biomed. Eng. 2022, 6, 1346–1352.

- Liu, Y.; Jin, M.; Pan, S.; Zhou, C.; Zheng, Y.; Xia, F.; Yu, P. Graph self-supervised learning: A survey. IEEE Trans. Knowl. Data Eng. 2022, 1–20.

- Gidaris, S.; Singh, P.; Komodakis, N. Unsupervised representation learning by predicting image rotations. In Proceedings of the International Conference on Learning Representations, Vancouver, BC, Canada, 30 April–3 May 2018; pp. 1–16.

- Zhang, R.; Isola, P.; Efros, A.A. Colorful image colorization. In Proceedings of the European Conference on Computer Vision, Amsterdam, The Netherlands, 11–14 October 2016; pp. 649–666.

- Doersch, C.; Gupta, A.; Efros, A.A. Unsupervised visual representation learning by context prediction. In Proceedings of the IEEE International Conference on Computer Vision, Santiago, Chile, 7–13 December 2015; pp. 1422–1430.

- Noroozi, M.; Favaro, P. Unsupervised learning of visual representations by solving jigsaw puzzles. In Proceedings of the European Conference on Computer Vision, Amsterdam, The Netherlands, 11–14 October 2016; pp. 69–84.

- Chen, T.; Kornblith, S.; Norouzi, M.; Hinton, G.E. A simple framework for contrastive learning of visual representations. In Proceedings of the International Conference on Machine Learning, Virtual Event, 12–18 July 2020; pp. 1597–1607.

- He, K.; Fan, H.; Wu, Y.; Xie, S.; Girshick, R. Momentum contrast for unsupervised visual representation learning. In Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition, Seattle, WA, USA, 13–19 June 2020; pp. 9729–9738.

- Chen, X.; Fan, H.; Girshick, R.; He, K. Improved baselines with momentum contrastive learning. CoRR 2020, 1–3.

- Grill, J.B.; Strub, F.; Altché, F.; Tallec, C.; Richemond, P.H.; Buchatskaya, E.; Doersch, C.; Pires, B.A.; Guo, Z.D.; Azar, M.G.; et al. Bootstrap your own latent: A new approach to self-supervised learning. In Proceedings of the Advances in Neural Information Processing Systems, Virtual Event, 6–12 December 2020; pp. 21271–21284.

- Zbontar, J.; Jing, L.; Misra, I.; LeCun, Y.; Deny, S. Barlow Twins: Self-supervised learning via redundancy reduction. In Proceedings of the International Conference on Machine Learning, Virtual Event, 18–24 July 2021; pp. 12310–12320.

- Bayar, B.; Stamm, M.C. Constrained convolutional neural networks: A new approach towards general purpose image manipulation detection. IEEE Trans. Inf. Forensics Secur. 2018, 13, 2691–2706.

- Castillo Camacho, I.; Wang, K. Convolutional neural network initialization approaches for image manipulation detection. Digit. Signal Process. 2022, 122, 103376.

- Deng, J.; Dong, W.; Socher, R.; Li, L.; Li, K.; Fei-Fei, L. ImageNet: A large-scale hierarchical image database. In Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition, Miami, FL, USA, 20–25 June 2009; pp. 248–255.

- Hyvärinen, A.; Hurri, J.; Hoyer, P.O. Natural Image Statistics—A Probabilistic Approach to Early Computational Vision; Springer: London, UK, 2009.

- Goyal, P.; Duval, Q.; Reizenstein, J.; Leavitt, M.; Xu, M.; Lefaudeux, B.; Singh, M.; Reis, V.; Caron, M.; Bojanowski, P.; et al. VISSL (Computer VIsion Library for State-of-the-Art Self-Supervised Learning). 2021. Available online: https://github.com/facebookresearch/vissl (accessed on 27 December 2022).

- Artlantis Gallery. Available online: https://artlantis.com/en/gallery/ (accessed on 27 December 2022).

- Autodesk A360 Rendering Gallery. Available online: https://gallery.autodesk.com/a360rendering/ (accessed on 27 December 2022).

- He, K.; Zhang, X.; Ren, S.; Sun, J. Deep residual learning for image recognition. In Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition, Las Vegas, NV, USA, 27–30 June 2016; pp. 770–778.

- Xie, Q.; Dai, Z.; Hovy, E.; Luong, M.T.; Le, Q.V. Unsupervised data augmentation for consistency training. In Proceedings of the Advances in Neural Information Processing Systems, Virtual Event, 6–12 December 2020; pp. 6256–6268.

- Haghighi, F.; Taher, M.R.H.; Gotway, M.B.; Liang, J. DiRA: Discriminative, restorative, and adversarial learning for self-supervised medical image analysis. In Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition, New Orleans, LA, USA, 18–24 June 2022; pp. 20824–20834.

- Liu, X.; Sanchez, P.; Thermos, S.; O’Neil, A.Q.; Tsaftaris, S.A. Learning disentangled representations in the imaging domain. Med. Image Anal. 2022, 80, 102516.

- Tong, Z.; Song, Y.; Wang, J.; Wang, L. VideoMAE: Masked autoencoders are data-efficient learners for self-supervised video pre-training. In Proceedings of the Advances in Neural Information Processing Systems, New Orleans, LA, USA, 28 November–9 December 2022; pp. 1–15.

- Wei, C.; Fan, H.; Xie, S.; Wu, C.Y.; Yuille, A.; Feichtenhofer, C. Masked feature prediction for self-supervised visual pre-training. In Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition, New Orleans, LA, USA, 18–24 June 2022; pp. 14668–14678.

| Manipulation | Parameter | Index |
|---|---|---|
| Color jitter | | 1 |
| RGB color rescaling | | 2 |
| Gaussian blurring | | 3 |
| Random Gaussian noise | | 4 |
| Sharpness enhancement | | 5 |
| Histogram equalization | − | 6 |
| Pixel value quantization | | 7 |
| Gamma correction | | 8 |

| Method | Artlantis | Autodesk | Corona | VRay |
|---|---|---|---|---|
| RotNet [38] | | | | |
| Jigsaw [41] | | | | |
| BarlowTwins [46] | | | | |
| SimCLR [42] | | | | |
| MoCo-V2 [44] | | | | |
| ImageNetClassi | | | | |
| Ours | | | | |

| Method | Artlantis | Autodesk | Corona | VRay |
|---|---|---|---|---|
| RotNet [38] | | | | |
| Jigsaw [41] | | | | |
| BarlowTwins [46] | | | | |
| SimCLR [42] | | | | |
| MoCo-V2 [44] | | | | |
| ImageNetClassi | | | | |
| ENet (supervised) [27] | | | | |
| Ours | | | | |

| Method | Artlantis | Autodesk | Corona | VRay |
|---|---|---|---|---|
| RotNet [38] | | | | |
| Jigsaw [41] | | | | |
| BarlowTwins [46] | | | | |
| SimCLR [42] | | | | |
| MoCo-V2 [44] | | | | |
| ImageNetClassi | | | | |
| ENet (supervised) [27] | | | | |
| Ours | | | | |

| Method | Fine-Tune on Whole Train Set | Fine-Tune on 20% Train Set |
|---|---|---|
| RotNet [38] | | |
| Jigsaw [41] | | |
| BarlowTwins [46] | | |
| SimCLR [42] | | |
| MoCo-V2 [44] | | |
| ImageNetClassi | | |
| ENet adapted (supervised) | | |
| Ours | | |

| Method | Fine-Tune on Whole Train Set | Fine-Tune on 20% Train Set |
|---|---|---|
| RotNet [38] | | |
| Jigsaw [41] | | |
| BarlowTwins [46] | | |
| SimCLR [42] | | |
| MoCo-V2 [44] | | |
| ImageNetClassi | | |
| ENet adapted (supervised) | | |
| Ours | | |

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2023 by the author. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (https://creativecommons.org/licenses/by/4.0/).


Wang, K. Self-Supervised Learning for the Distinction between Computer-Graphics Images and Natural Images. Appl. Sci. 2023, 13, 1887. https://doi.org/10.3390/app13031887
