DLALoc: Deep-Learning Accelerated Visual Localization Based on Mesh Representation

Abstract

1. Introduction

2. Related Work

3. System Illustration

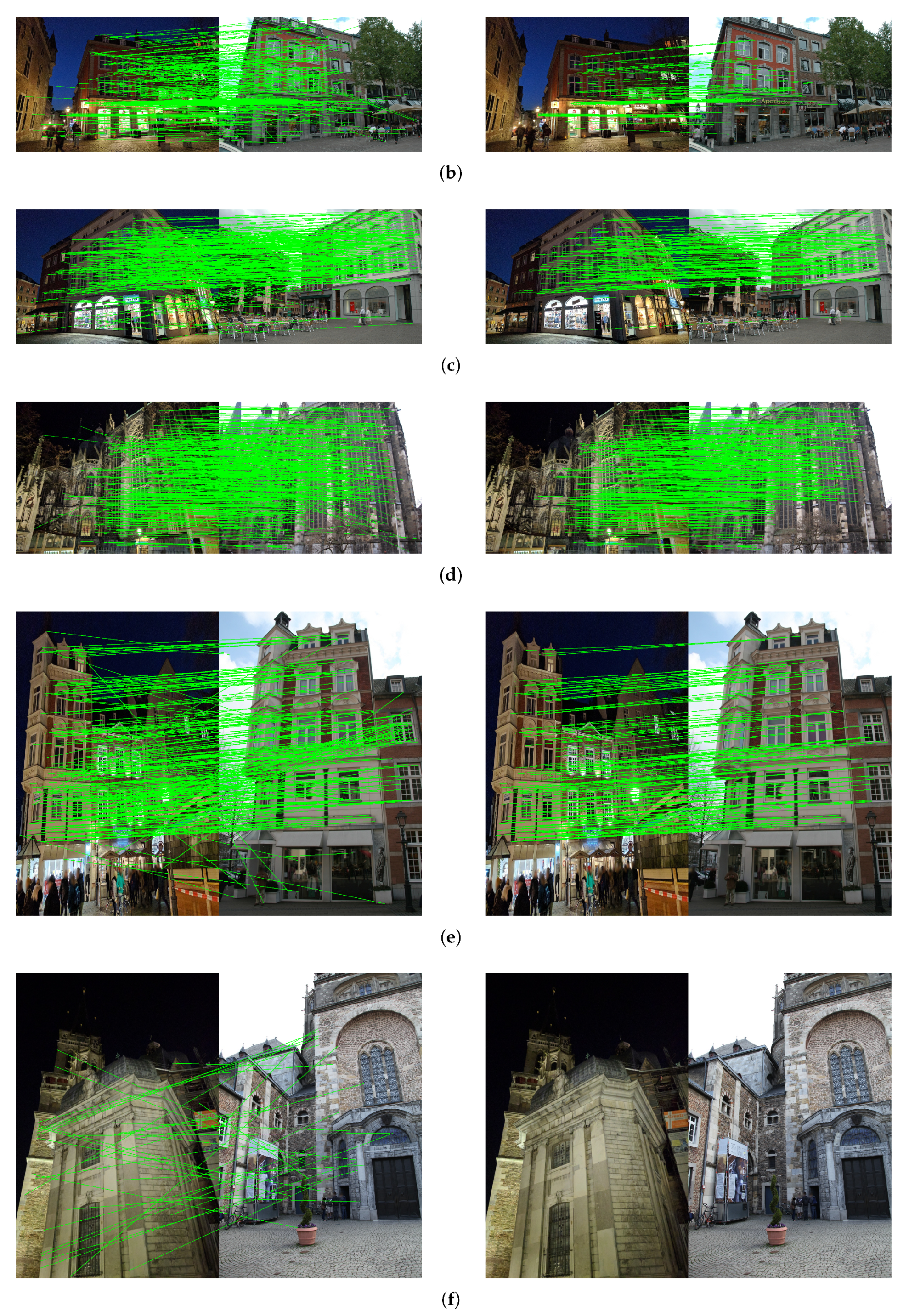

4. Methods and Material

4.1. Mesh-Based Visual Localization

4.2. Learning-Based Relative Pose Estimation

4.3. Efficient RANSAC Using Scale Consistency

Algorithm 1. Filtration algorithm using scale consistency

5. Experimental Evaluation

5.1. Experiment Prerequisites and Dataset

5.2. Methods and Metrics

- The overall accuracy of localization is defined by the position error and the orientation error. The position error is the Euclidean distance between the estimated and ground-truth camera positions. The orientation error is the absolute angular error, in degrees, computed from the estimated and ground-truth camera rotation matrices. Additionally, we calculate the percentage of query images localized within three error thresholds, ranging from high precision (0.25 m, 2°) through medium precision (0.5 m, 5°) to low precision (5 m, 10°).

- Matching time is the time used to establish 2D–3D matches between query and database images. It includes the time spent on image retrieval, on extracting local features from the query images, and on matching these features to the features of the retrieved database images.

- P3P time is the time spent in the P3P method, as illustrated in Step 4; supplying high-quality 2D–3D matches can significantly reduce the time for this step.

- P3P points is the average number of 3D points used to localize a query image. The fewer P3P points needed for the 2D–3D matches built on them to reach a given level of quality for localization, the better the algorithm performs.

- MLP time is the total time used for the additional pose estimation combined with filtering 2D correspondence outliers. The net time reduction of our algorithm can be estimated as the decrease in P3P time offset by this additional time.
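The accuracy metrics above can be sketched in a few lines of Python. This is a minimal illustration rather than the authors' evaluation code: it assumes the orientation error follows the relation commonly used in localization benchmarks, 2 cos|α| = trace(R_gt⁻¹ R_est) − 1, and it treats "within a threshold" as less than or equal to it. The function names, the `THRESHOLDS` constant, and the sample error values are hypothetical.

```python
import math

# (position [m], orientation [deg]) thresholds: high, medium, low precision.
THRESHOLDS = ((0.25, 2.0), (0.5, 5.0), (5.0, 10.0))


def position_error(c_est, c_gt):
    """Euclidean distance between estimated and ground-truth camera positions."""
    return math.dist(c_est, c_gt)


def orientation_error_deg(R_est, R_gt):
    """Absolute rotation angle (degrees) between two 3x3 rotation matrices.

    Assumes 2*cos|alpha| = trace(R_gt^-1 R_est) - 1. For rotation matrices
    R_gt^-1 = R_gt^T, and trace(R_gt^T R_est) is the sum of the element-wise
    products of the two matrices.
    """
    tr = sum(R_gt[i][j] * R_est[i][j] for i in range(3) for j in range(3))
    # Clamp to [-1, 1] to guard against rounding just outside acos's domain.
    cos_alpha = max(-1.0, min(1.0, (tr - 1.0) / 2.0))
    return math.degrees(math.acos(cos_alpha))


def recall_at_thresholds(errors, thresholds=THRESHOLDS):
    """Percentage of queries whose (position, orientation) error meets each threshold pair."""
    n = len(errors)
    return [
        100.0 * sum(1 for p, r in errors if p <= t_pos and r <= t_rot) / n
        for t_pos, t_rot in thresholds
    ]


def rot_z(deg):
    """Helper for the example: rotation about the z-axis by `deg` degrees."""
    c, s = math.cos(math.radians(deg)), math.sin(math.radians(deg))
    return [[c, -s, 0.0], [s, c, 0.0], [0.0, 0.0, 1.0]]


if __name__ == "__main__":
    # One query whose estimate is 0.3 m off in position and rotated 3 degrees.
    p_err = position_error((0.3, 0.0, 0.0), (0.0, 0.0, 0.0))
    r_err = orientation_error_deg(rot_z(3.0), rot_z(0.0))
    print(p_err, r_err)

    # Made-up errors for four queries; the last one fails every threshold.
    sample = [(0.1, 1.0), (0.4, 3.0), (2.0, 8.0), (7.0, 1.0)]
    print(recall_at_thresholds(sample))
```

A query counts toward a threshold only when both its position and its orientation error satisfy it, which is why the last sample query (small rotation error, 7 m position error) contributes to none of the three percentages.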

5.3. Experimental Results and Analysis

6. Conclusions

Author Contributions

Funding

Conflicts of Interest

| RobotCar Seasons/Aachen [%] | Number of anchors | | | | |
|---|---|---|---|---|---|
| RANSAC Sampling Times | 40 | 80 | 120 | 160 | 200 |
| 5 | 1.5/4.8 | 4.7/7.2 | 8.5/16.4 | 8.9/17.6 | 8.8/17.9 |
| 10 | 3.5/6.9 | 6.1/11.3 | 12.5/21.7 | 12.9/22.3 | 13.5/24.3 |
| 30 | 5.1/7.9 | 5.4/13.2 | 13.7/25.4 | 12.8/23.2 | 13.3/24.6 |
| 50 | 5.7/6.2 | 6.9/14.7 | 14.8/28.9 | 14.5/22.5 | 15.2/24.8 |
| 100 | 6.1/9.5 | 7.3/15.1 | 15.7/29.1 | 15.6/23.4 | 15.5/21.9 |

| Hierarchical Localization Baseline | Aachen Day | Aachen Night | RobotCar Dusk | RobotCar Sun | RobotCar Night |
|---|---|---|---|---|---|
| Distance [m] | 0.25/0.50/5.0 | 0.5/1.0/5.0 | 0.25/0.50/5.0 | 0.25/0.50/5.0 | 0.25/0.50/5.0 |
| Orient. [deg] | 2/5/10 | 2/5/10 | 2/5/10 | 2/5/10 | 2/5/10 |
| NV+SIFT+NN | 82.6/87.3/92.9 | 34.2/46.8/60.1 | 56.7/83.5/94.9 | 46.1/67.8/91.0 | 5.1/10.9/23.9 |
| NV+SIFT+SG | 84.6/88.7/93.7 | 54.5/71.7/86.9 | 63.2/87.9/95.4 | 61.1/74.9/93.5 | 7.2/18.4/33.6 |
| NV+SP+NN | 83.7/88.4/93.2 | 40.8/56.4/73.7 | 59.2/86.7/95.1 | 58.5/69.7/93.3 | 6.7/17.3/31.1 |
| NV+SP+SG | 88.0/94.9/97.8 | 70.2/87.4/98.4 | 67.9/88.9/96.9 | 57.4/67.8/92.6 | 6.4/18.1/30.9 |
| HF-Net+NN | 75.7/88.3/90.1 | 45.9/61.1/78.9 | 55.6/85.6/95.2 | 46.7/74.6/95.9 | 3.2/6.9/17.7 |
| HF-Net+SG | 87.6/92.4/93.7 | 65.4/85.9/94.4 | 64.8/87.1/95.8 | 46.3/67.4/90.9 | 4.7/17.5/24.9 |

| DLALoc with 100 Anchors and 25 RANSAC Sampling Times | Aachen Day | Aachen Night | RobotCar Dusk | RobotCar Sun | RobotCar Night |
|---|---|---|---|---|---|
| Distance [m] | 0.25/0.50/5.0 | 0.5/1.0/5.0 | 0.25/0.50/5.0 | 0.25/0.50/5.0 | 0.25/0.50/5.0 |
| Orient. [deg] | 2/5/10 | 2/5/10 | 2/5/10 | 2/5/10 | 2/5/10 |
| NV+SIFT+NN | 81.9/87.1/93.2 | 34.4/47.1/63.1 | 56.3/84.5/94.9 | 45.9/68.1/92.4 | 5.0/10.6/24.7 |
| NV+SIFT+SG | 83.8/87.6/94.5 | 54.3/72.5/88.1 | 61.2/86.2/95.3 | 60.9/73.8/94.1 | 7.4/18.6/36.6 |
| NV+SP+NN | 82.9/88.1/93.9 | 41.1/57.3/75.2 | 58.9/85.9/96.1 | 58.1/70.2/93.1 | 6.9/18.4/32.9 |
| NV+SP+SG | 78.6/95.1/97.7 | 70.4/87.4/98.6 | 65.4/88.4/95.8 | 55.9/68.8/93.7 | 6.8/18.8/31.3 |
| HF-Net+NN | 75.6/87.9/91.1 | 46.2/62.9/79.6 | 55.4/86.5/95.6 | 46.9/74.7/95.9 | 3.6/7.1/18.5 |
| HF-Net+SG | 87.4/93.2/94.5 | 64.4/84.2/94.1 | 64.5/87.2/96.2 | 45.9/67.2/91.0 | 4.6/18.5/26.9 |

| DLALoc with 100 Anchors and 50 RANSAC Sampling Times | Aachen Day | Aachen Night | RobotCar Dusk | RobotCar Sun | RobotCar Night |
|---|---|---|---|---|---|
| Distance [m] | 0.25/0.50/5.0 | 0.5/1.0/5.0 | 0.25/0.50/5.0 | 0.25/0.50/5.0 | 0.25/0.50/5.0 |
| Orient. [deg] | 2/5/10 | 2/5/10 | 2/5/10 | 2/5/10 | 2/5/10 |
| NV+SIFT+NN | 84.9/88.4/94.3 | 45.4/58.1/76.2 | 58.2/86.3/95.1 | 50.1/71.2/93.1 | 7.8/13.6/26.1 |
| NV+SIFT+SG | 84.9/89.1/95.6 | 57.6/74.8/89.9 | 63.4/88.9/96.2 | 64.1/75.6/94.8 | 8.5/20.3/37.9 |
| NV+SP+NN | 84.7/89.6/93.9 | 42.6/58.9/76.9 | 60.1/87.1/96.4 | 59.8/72.8/93.9 | 7.5/19.6/34.2 |
| NV+SP+SG | 78.9/95.4/97.9 | 74.6/89.6.4/98.8 | 68.4/88.9/96.9 | 57.2/69.9/94.5 | 7.9/19.6/32.4 |
| HF-Net+NN | 74.6/90.9/94.5 | 55.6/68.9/93.8 | 58.4/89.5/96.4 | 49.3/77.9/96.9 | 4.5/8.6/20.4 |
| HF-Net+SG | 88.6/94.4/95.6 | 66.1/87.2/96.6 | 57.8/89.4/96.7 | 47.1/68.9/92.4 | 5.0/19.6/27.5 |

| DLALoc with 100 Anchors and 100 RANSAC Sampling Times | Aachen Day | Aachen Night | RobotCar Dusk | RobotCar Sun | RobotCar Night |
|---|---|---|---|---|---|
| Distance [m] | 0.25/0.50/5.0 | 0.5/1.0/5.0 | 0.25/0.50/5.0 | 0.25/0.50/5.0 | 0.25/0.50/5.0 |
| Orient. [deg] | 2/5/10 | 2/5/10 | 2/5/10 | 2/5/10 | 2/5/10 |
| NV+SIFT+NN | 85.1/88.6/94.7 | 45.6/58.2/76.4 | 58.4/87.2/95.3 | 51.2/72.3/93.0 | 8.2/14.2/27.4 |
| NV+SIFT+SG | 84.9/89.7/95.8 | 57.7/75.2/90.1 | 63.6/88.4/96.6 | 65.0/75.4/94.9 | 8.9/21.4/38.1 |
| NV+SP+NN | 86.7/89.9/94.2 | 42.8/59.4/77.6 | 60.5/86.8/96.3 | 60.3/73.6/94.2 | 7.9/20.4/35.1 |
| NV+SP+SG | 79.0/95.7/98.2 | 74.4/89.6/98.7 | 69.2/89.3/97.2 | 57.5/70.3/94.9 | 8.6/19.7/34.1 |
| HF-Net+NN | 74.6/91.5/94.8 | 56.6/69.3/94.2 | 60.6/89.9/96.9 | 51.2/78.4/97.2 | 4.7/9.3/21.5 |
| HF-Net+SG | 89.3/94.8/95.3 | 67.5/87.7/97.7 | 58.7/89.9/97.2 | 47.1/68.9/92.4 | 5.9/20.3/28.4 |

| Method | Matching Time (min) | P3P Time (min) | MLP Time (min) | Total Time (min) | Improvement | Local Features | P3P Points |
|---|---|---|---|---|---|---|---|
| NV+SP+NN | 64 | 35.6 | - | 35.6 | - | 2492 | 2096 |
| DLALoc(NV+SP+NN) | 64 | 5 | 0.5 | 5.5 | 84.5% | 2492 | 746 |
| NV+SP+SG | 258 | 20.4 | - | 20.4 | - | 1764 | 1657 |
| DLALoc(NV+SP+SG) | 258 | 4.1 | 0.5 | 4.6 | 76.7% | 1764 | 1032 |
| HF-Net+NN | 61 | 25.6 | - | 25.6 | - | 1432 | 1324 |
| DLALoc(HF-Net+NN) | 61 | 4.3 | 0.5 | 4.8 | 81.3% | 1432 | 998 |
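As a rough illustration of how the Total Time and Improvement columns relate, the sketch below assumes that Total Time is the sum of P3P time and MLP time (matching time is identical for a baseline and its DLALoc variant, so it is left out) and that Improvement is the relative reduction of Total Time against the baseline. The function name `improvement_percent` is hypothetical and not taken from the paper.

```python
def improvement_percent(baseline_total_min, p3p_min, mlp_min):
    """Relative reduction (in percent) of pose-estimation time over the baseline.

    Assumption: the accelerated total is P3P time plus the extra MLP time,
    and the baseline total is its P3P time alone.
    """
    accelerated_total = p3p_min + mlp_min
    return 100.0 * (baseline_total_min - accelerated_total) / baseline_total_min


if __name__ == "__main__":
    # NV+SP+NN row: baseline 35.6 min; DLALoc 5 min P3P + 0.5 min MLP.
    print(f"{improvement_percent(35.6, 5.0, 0.5):.2f}%")  # roughly 84.5%
```

The MLP time enters as a cost: the saving comes from the shorter P3P stage, and the extra 0.5 min spent on filtering is subtracted from it.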

© 2023 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (https://creativecommons.org/licenses/by/4.0/).

Zhang, P.; Liu, W. DLALoc: Deep-Learning Accelerated Visual Localization Based on Mesh Representation. Appl. Sci. 2023, 13, 1076. https://doi.org/10.3390/app13021076
