Dynamic Obstacle Avoidance and Path Planning through Reinforcement Learning

Abstract

1. Introduction

1.1. Contributions of the Manuscript

1.2. What Is Known

1.2.1. Application of Reinforcement Learning Algorithms in Mobile Robots

- Agent: the learner, which is equipped with a collection of sensors and actuators to interact with the environment;

- Environment: the world around an agent, that is, everything that interacts with it;

- Policy: a mapping from the set of observed states, S, to the set of actions, A;

- Reward function: a mapping from state–action pairs to a scalar value;

- Q value: the total reward that an agent can expect to accumulate over time, starting from a given state and action.

- Observes its current state s_t;

- Chooses and takes an action a_t;

- Observes the subsequent state s_{t+1};

- Receives an immediate reward r_t.
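The interaction cycle listed above (observe the state, act, observe the next state, receive a reward) can be illustrated with a minimal tabular Q-learning sketch. This is not the method of any reviewed study: the 4x4 grid, the single static obstacle, the reward values, and the hyperparameters are all assumptions chosen only to keep the example small.

```python
import random

# Hypothetical toy world (all values below are illustrative assumptions).
SIZE = 4
START, GOAL = (0, 0), (3, 3)
OBSTACLE = (1, 1)  # one static obstacle, for brevity
ACTIONS = [(-1, 0), (1, 0), (0, -1), (0, 1)]  # up, down, left, right


def step(state, action):
    """Environment: return (next_state, reward, done) for one move."""
    row, col = state[0] + action[0], state[1] + action[1]
    if not (0 <= row < SIZE and 0 <= col < SIZE):
        return state, -1.0, False   # bumped into a wall: stay in place
    if (row, col) == OBSTACLE:
        return state, -10.0, False  # collision penalty: stay in place
    if (row, col) == GOAL:
        return (row, col), 10.0, True
    return (row, col), -1.0, False  # ordinary move costs one step


def train(episodes=3000, alpha=0.5, gamma=0.9, epsilon=0.2, seed=0):
    """Learn a Q table with epsilon-greedy exploration."""
    rng = random.Random(seed)
    q = {(r, c): [0.0] * len(ACTIONS)
         for r in range(SIZE) for c in range(SIZE)}
    for _ in range(episodes):
        s = START                                  # observe s_t
        for _ in range(50):                        # cap the episode length
            if rng.random() < epsilon:             # choose a_t
                a = rng.randrange(len(ACTIONS))
            else:
                a = max(range(len(ACTIONS)), key=lambda i: q[s][i])
            s_next, r, done = step(s, ACTIONS[a])  # observe s_{t+1} and r_t
            target = r if done else r + gamma * max(q[s_next])
            q[s][a] += alpha * (target - q[s][a])  # Q-learning update
            s = s_next
            if done:
                break
    return q


def greedy_path(q, max_steps=20):
    """Follow the learned policy greedily from START."""
    s, path = START, [START]
    for _ in range(max_steps):
        a = max(range(len(ACTIONS)), key=lambda i: q[s][i])
        s, _, done = step(s, ACTIONS[a])
        path.append(s)
        if done:
            break
    return path


q_table = train()
path = greedy_path(q_table)
print(path)
```

Here the policy is implicit in the Q table (pick the action with the largest Q value), and the reward function is the scalar returned by `step`. A dynamic-obstacle setting would need the obstacle position to be part of the state, which this sketch deliberately omits.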

1.2.2. Description of Terms

- Mobile Robot

- Reinforcement Learning

- Autonomous Navigation

- Propagation Models

- Positive

- Negative

2. Materials and Methods

2.1. Protocol Development (PD)

Research Questions (RQs)

- RQ1: What are the technical background and applications of reinforcement learning?

- RQ2: What are the principles of dynamic obstacle avoidance/path planning?

- RQ3: What is the propagation model?

- RQ4: What are the performance metrics and evaluation methods for reinforcement learning-based obstacle avoidance algorithms in a dynamic environment?

- RQ5: What is the gap in the literature?

- RQ1: The scientific involvement in RL;

- RQ2: The principles of dynamic obstacle avoidance/path planning;

- RQ3: The various propagation models used;

- RQ4: Performance metrics and evaluation model;

- RQ5: Research gap.

2.2. Search Strategy

2.2.1. Search Terms or Keywords

2.2.2. Resources

2.2.3. Eligibility Criteria

Exclusion Criteria (EC):

- EC1: Articles published in languages other than English were not considered.

- EC2: Studies not focusing on path planning or dynamic obstacle avoidance in reinforcement learning were excluded.

- EC3: Papers that did not discuss reinforcement learning were excluded.

- EC4: Studies that did not provide an answer to one or more of the review questions were removed.

Inclusion Criteria (IC):

- IC1: All publications from 2018 to 2022 were considered in the study.

- IC2: Only articles published in English that also met the other inclusion criteria were considered.

- IC3: Studies focusing on path planning or dynamic obstacle avoidance in reinforcement learning were included.

- IC4: Articles focusing on the review question(s) were selected.

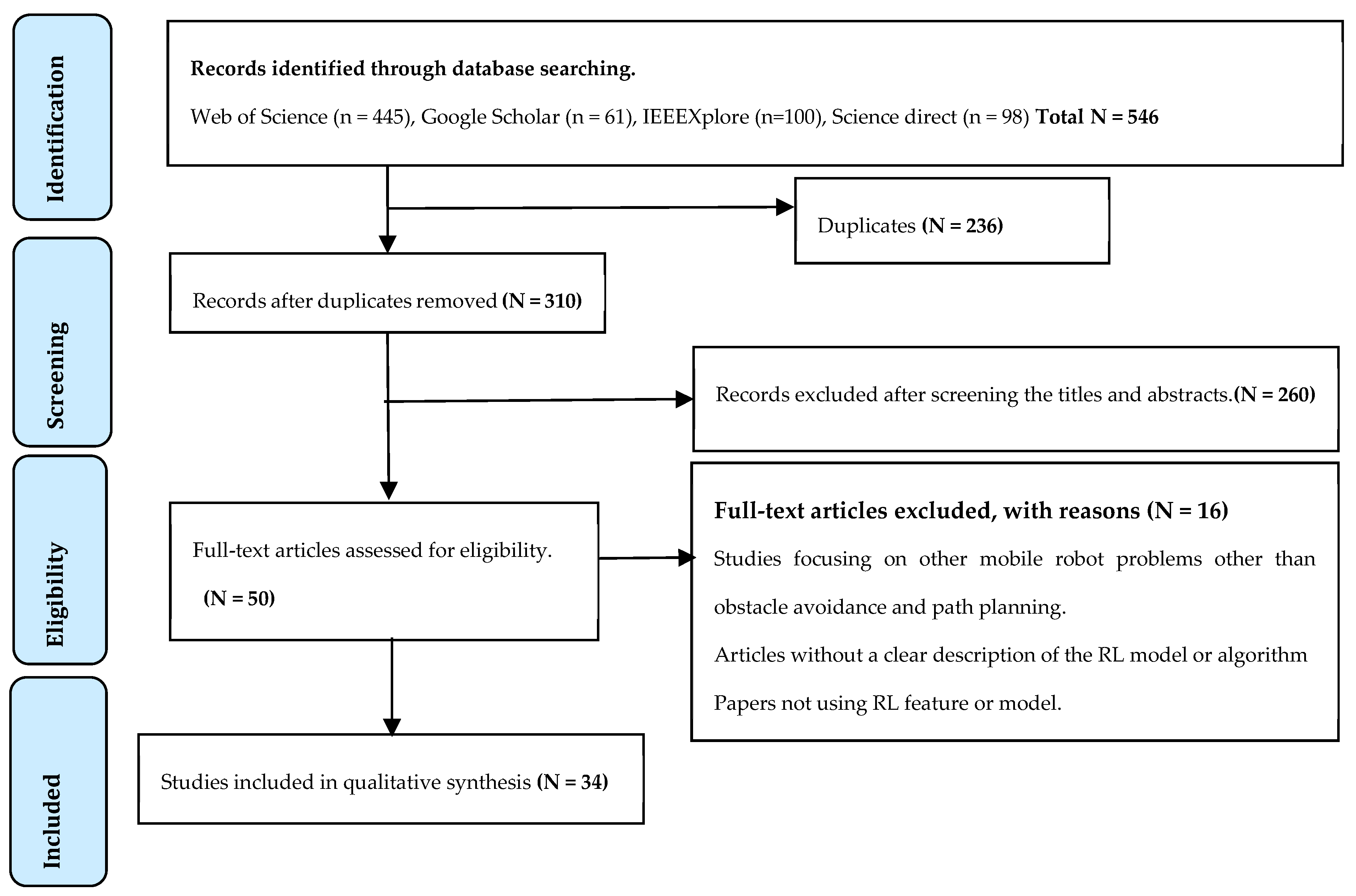

2.2.4. Database Search Outcome

2.3. Quality Assessment

- QC1: Were inclusion criteria clearly defined (choice of a robot)?

- QC2: Were the study subject (mobile robot) and the settings (environment) clearly defined?

- QC3: Was the proposed model clearly defined?

- QC4: Did the study use standard criteria and objectives to design the model?

- QC5: Did it identify the confounding factors?

- QC6: Were strategies for dealing with the confounding factors discussed?

- QC7: Were the outcomes measured in a valid and reliable way?

- QC8: Did the study use appropriate performance metrics?

2.4. Data Extraction

2.5. Data Synthesis

3. Results

3.1. Data Selection

3.2. Quality Assessment

3.3. Synthesized Result

4. Discussion

4.1. Application of Reinforcement Learning Algorithms in the Development of Mobile Robots

4.1.1. Path Planning, Optimization, and Obstacle Avoidance with RL

4.1.2. Navigation and Learning with RL

4.1.3. Motion Control and Adaptation with RL

4.2. Principles of Dynamic Obstacle Avoidance and Path Planning

4.3. Propagation Model of RL

4.4. Performance Metric and Evaluation Method of RL Propagation Model in Autonomous Mobile Robot

4.5. Research Gap in Reinforcement Learning Application in Mobile Robot Obstacle Avoidance

5. Conclusions

Author Contributions

Funding

Institutional Review Board Statement

Informed Consent Statement

Data Availability Statement

Conflicts of Interest

| Sources | URL |
|---|---|
| Web of Science | https://webofscience.com (accessed on 7 December 2022) |
| Google Scholar | https://scholar.google.com/ (accessed on 7 December 2022) |
| IEEE Xplore | https://ieeexplore.ieee.org/ (accessed on 7 December 2022) |
| Science Direct | https://sciencedirect.com/ (accessed on 7 December 2022) |

| Sources | Before |
|---|---|
| Web of Science | 261 |
| Google Scholar | 87 |
| IEEE Xplore | 100 |
| Science Direct | 98 |
| Total | 546 |

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2023 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (https://creativecommons.org/licenses/by/4.0/).

Share and Cite

Almazrouei, K.; Kamel, I.; Rabie, T. Dynamic Obstacle Avoidance and Path Planning through Reinforcement Learning. Appl. Sci. 2023, 13, 8174. https://doi.org/10.3390/app13148174
