The Interface of Privacy and Data Security in Automated City Shuttles: The GDPR Analysis

Abstract

1. Introduction

- An extensive analysis of how the GDPR addresses the principles of data processing, the rights of data subjects, and the roles and responsibilities of the stakeholders (data controllers, data processors, sub-processors, etc.) before, during, and after the processing of personal data collected from the ACS.

- Categorization of the main privacy-preserving techniques that are applicable to the ACS environment.

- Presentation of the gaps between the legal definitions and technological implementation of privacy-preserving schemes recommended by the GDPR, which are mainly pseudonymization and anonymization techniques.

- Investigation, through interdisciplinary efforts, into the shortcomings and pitfalls of the GDPR data processing principles in protecting personal data within the complex ACS context.

2. Related Work

2.1. GDPR in Driverless Landscape

2.2. Data Privacy Challenges within Vehicular Environment

2.3. Data Privacy-Preserving Methods

- Presenting an interdisciplinary approach regarding data protection requirements in the ACS ecosystem by assessing and addressing simultaneously both regulatory and technical challenges.

- Providing an in-depth analysis of the GDPR provisions and limitations that are relevant to the ACS.

- Examining more closely how the legal requirements fit with the technologies deployed in the ACS.

- Analyzing the inconsistencies between the legal definitions of pseudonymization and anonymization in the GDPR and their technical implementation, through a comprehensive categorization of the techniques most applicable to the ACS landscape.

- Developing a significant reference point for academic research on ACS, public transportation operators, automobile manufacturers (OEMs), policymakers, and service providers that are acquiring or planning to deploy ACSs within their systems.

3. GDPR Implications

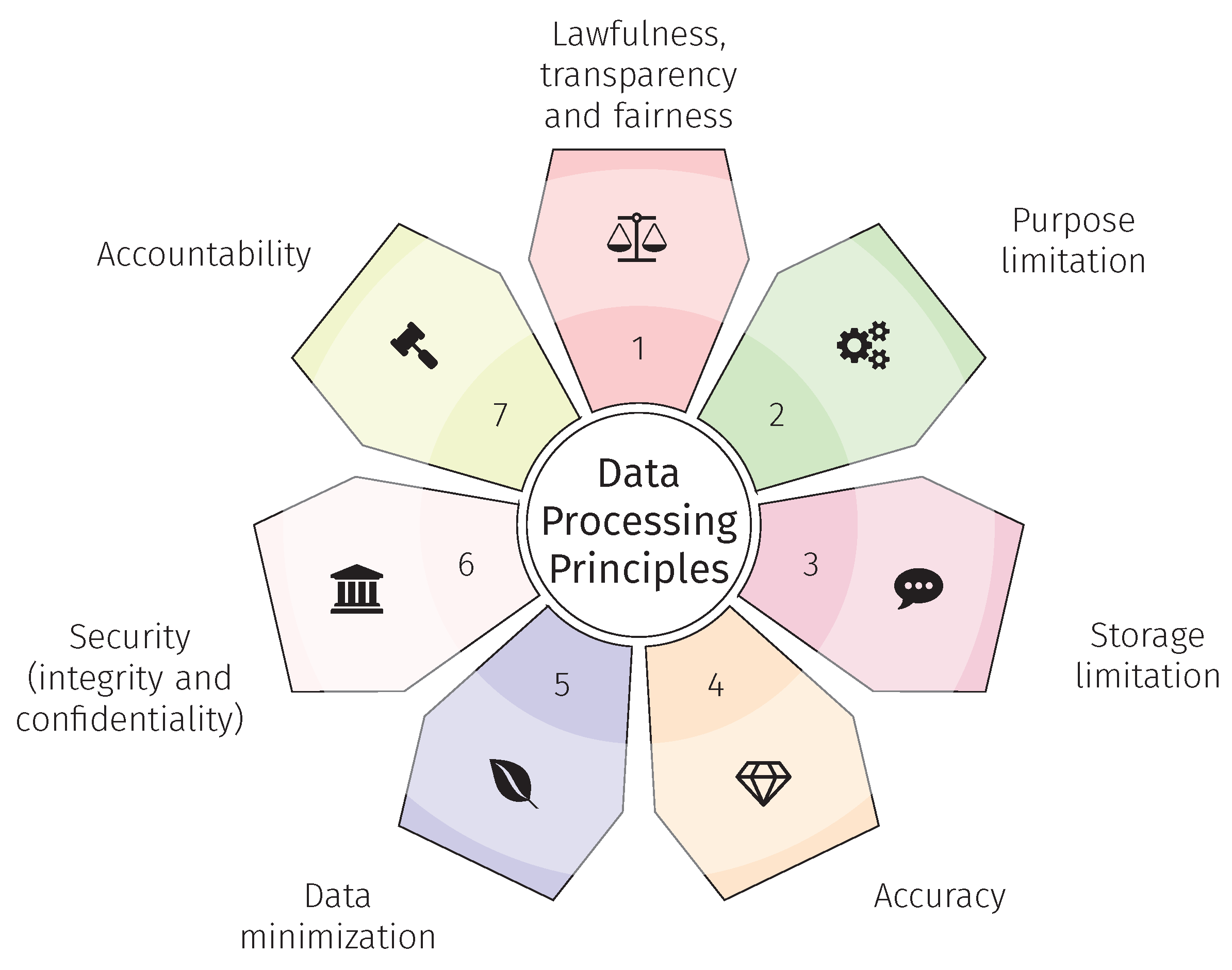

3.1. Data Processing Principles

- Lawfulness, transparency, and fairness: where data collection practices are conducted based on a thorough understanding of the GDPR and without hiding from the data subjects the type of data collected or the reason for its processing (Article 5 Section 1.a and Article 6).

- Purpose limitation: where the processing is carried out for specified, explicit, and legitimate purposes, with no further processing in a manner that is incompatible with those agreed-on purposes (Article 5 Section 1.b).

- Storage limitation: calling for data to be stored no longer than is necessary for the purpose for which the personal data is processed (Article 5 Section 1.e).

- Accuracy: where controllers should take the necessary measures to process only correct data (Article 5 Section 1.d).

- Data minimization: aiming to limit the amount of processed data to the lowest level and requiring data destruction once the purpose of the processing is completed (Article 5 Section 1.c).

- Security: requiring data controllers to employ the appropriate technical and organizational measures designed to effectively implement integrity and confidentiality through PbD and PbDf principles (Article 25 Sections 1 & 2).

- Accountability: requiring data controllers to put in place appropriate privacy-preserving measures that can demonstrate compliance with the regulation at any stage (Article 5 Section 2).
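As an illustration of how the storage limitation and data minimization principles listed above might translate into code, the following is a minimal sketch only. The purposes, retention periods, field names, and the `Record` type are hypothetical and do not come from the GDPR or from any ACS deployment; actual retention periods have to be set by the controller for each purpose.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta

# Hypothetical retention periods per processing purpose (illustrative values only).
RETENTION = {
    "ticketing": timedelta(days=30),
    "incident_video": timedelta(days=7),
}

# Hypothetical allow-list of the fields each purpose actually needs.
ALLOWED_FIELDS = {
    "ticketing": {"trip_id", "fare_zone"},
    "incident_video": {"trip_id", "clip_ref"},
}


@dataclass
class Record:
    purpose: str
    collected_at: datetime
    fields: dict


def minimize(record: Record) -> Record:
    """Data minimization: keep only the fields the stated purpose requires."""
    allowed = ALLOWED_FIELDS[record.purpose]
    kept = {k: v for k, v in record.fields.items() if k in allowed}
    return Record(record.purpose, record.collected_at, kept)


def is_expired(record: Record, now: datetime) -> bool:
    """Storage limitation: true once the retention period has elapsed."""
    return now - record.collected_at > RETENTION[record.purpose]


def purge(records, now):
    """Drop expired records and minimize the ones that remain."""
    return [minimize(r) for r in records if not is_expired(r, now)]


now = datetime(2022, 4, 1)
records = [
    Record("ticketing", datetime(2022, 3, 20),
           {"trip_id": "t1", "fare_zone": "A", "passenger_name": "X"}),
    Record("incident_video", datetime(2022, 3, 1),
           {"trip_id": "t2", "clip_ref": "c9"}),
]
remaining = purge(records, now)
```

In this toy run the video record is dropped because it is older than its assumed seven-day period, and the ticketing record loses the `passenger_name` field that its purpose does not need.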

3.2. Data Subjects Rights

3.3. Data Controllers’ and Data Processors’ Compliance

- The implementation of the data processing principles (Articles 5–11).

- The information of data subjects as elaborated in Section 3.2 and the securing of their rights (Articles 12–23).

- The implementation of security measures such as the deployment of privacy-preserving techniques discussed in Section 4 (Articles 5, 25, and 32).

- The arrangements with the joint controller (if any) (Article 26).

- The engagement of processors (Article 28).

- The notification of personal data breach to the relevant data protection authority (Article 33).

- The communication of personal data breach to the data subjects (Article 34).

- The realization of DPIA (Article 35).

- The designation of the Data Protection Officer (Article 37).

- The transfer of data to third countries (Chapter V, Articles 44–50).

- The communication with the DPAs (Articles 31, 36, and 37).

- The compliance with specific processing situations (Articles 85–91).

- The retention of all necessary documentation and records (as listed throughout the GDPR articles).

3.4. Data Protection Impact Assessment

- A systematic and extensive evaluation based on automated processing, including profiling and similar activities, that produces legal effects on, or similarly significantly affects, the data subjects.

- Large-scale processing of special categories of data, such as racial or ethnic origin or political opinions, or of personal data relating to criminal convictions and offenses.

- A systematic monitoring of a publicly accessible area on a large scale.

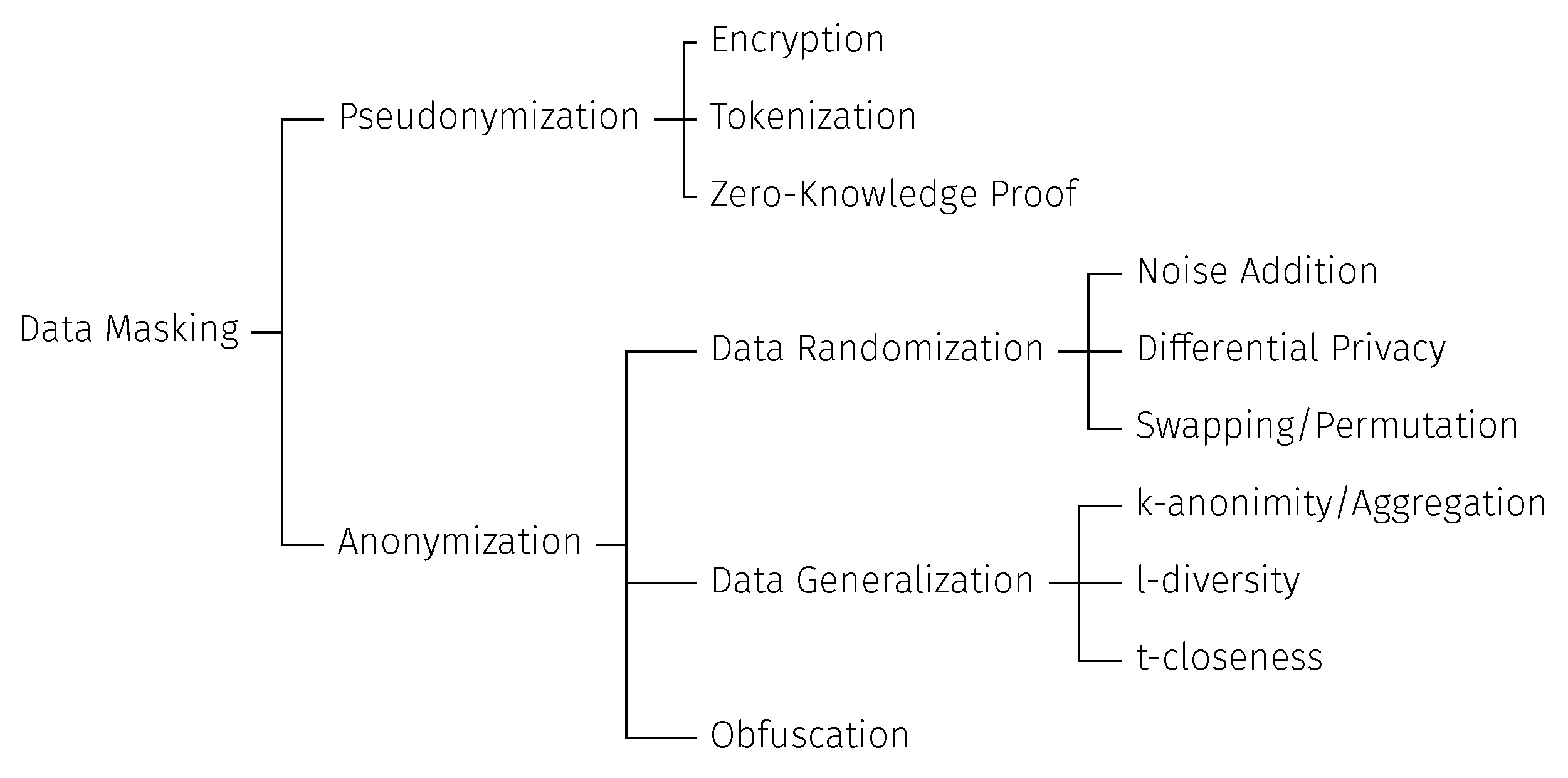

4. The Interface of Privacy and Data Security in ACSs

4.1. Privacy-Preserving Techniques Overview

- “Singling out”: when a data subject’s data are isolated to identify the natural person’s attributes or track their location.

- “Linkability”: when the correlation of at least two records of the same individual leads to the identification of the person.

- “Inference”: when a data subject’s data are deduced from a dataset or through additional information, leading to their identification [30].
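The three risks above can be made concrete with a toy example. The sketch below is illustrative only: the trip and ticket records, the field names, and the helper functions `singled_out` and `link` are hypothetical and do not describe any real ACS dataset.

```python
from collections import Counter


def _key(record, quasi_ids):
    """Tuple of the record's quasi-identifier values."""
    return tuple(record[q] for q in quasi_ids)


def singled_out(records, quasi_ids):
    """Singling out: records whose quasi-identifier combination is unique."""
    counts = Counter(_key(r, quasi_ids) for r in records)
    return [r for r in records if counts[_key(r, quasi_ids)] == 1]


def link(records_a, records_b, quasi_ids):
    """Linkability: pair records from two datasets sharing quasi-identifiers."""
    index = {}
    for r in records_b:
        index.setdefault(_key(r, quasi_ids), []).append(r)
    return [(a, b) for a in records_a for b in index.get(_key(a, quasi_ids), [])]


# Hypothetical shuttle trip log without names.
trips = [
    {"stop": "A", "hour": 8, "dest": "Clinic"},
    {"stop": "A", "hour": 8, "dest": "Mall"},
    {"stop": "B", "hour": 23, "dest": "Casino"},
]
# Hypothetical second dataset that does carry a name.
tickets = [{"stop": "B", "hour": 23, "name": "Alice"}]

unique_trips = singled_out(trips, ["stop", "hour"])
pairs = link(tickets, trips, ["stop", "hour"])
```

Here the late-night trip from stop B is unique in the log (singling out), it matches exactly one ticket record (linkability), and the pair reveals a destination for a named person (inference).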

4.1.1. Pseudonymization

4.1.2. Anonymization

- k-anonymity/aggregation: hides a data subject in a crowd (also called an equivalence class) by grouping their attributes with those of k-1 other individuals. The scheme offers good protection against singling out, though the inference risk remains significant [28].

- l-diversity: addresses this limitation of k-anonymity by ensuring that every equivalence class contains at least l different values of the sensitive attribute. This diversity reduces the inference risk but does not eliminate it completely [59].

- t-closeness: refines l-diversity by setting a threshold t on the distance between the distribution of a sensitive value within an equivalence class and the distribution of that attribute in the whole dataset [49].
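The three measures above can be computed on a small table, as in the following hedged sketch. The dataset and column names are hypothetical. For t-closeness the sketch uses the total variation distance between distributions, which is an assumption made for simplicity (it suits a categorical attribute whose values are all treated as equally far apart); other distance measures are used in practice.

```python
from collections import Counter, defaultdict


def equivalence_classes(records, quasi_ids):
    """Group records that share the same quasi-identifier values."""
    groups = defaultdict(list)
    for r in records:
        groups[tuple(r[q] for q in quasi_ids)].append(r)
    return list(groups.values())


def k_anonymity(records, quasi_ids):
    """Size of the smallest equivalence class."""
    return min(len(g) for g in equivalence_classes(records, quasi_ids))


def l_diversity(records, quasi_ids, sensitive):
    """Smallest number of distinct sensitive values in any class."""
    return min(len({r[sensitive] for r in g})
               for g in equivalence_classes(records, quasi_ids))


def distribution(records, sensitive):
    """Relative frequency of each sensitive value."""
    counts = Counter(r[sensitive] for r in records)
    return {v: c / len(records) for v, c in counts.items()}


def t_closeness(records, quasi_ids, sensitive):
    """Largest total variation distance between a class and the whole dataset."""
    overall = distribution(records, sensitive)
    worst = 0.0
    for g in equivalence_classes(records, quasi_ids):
        local = distribution(g, sensitive)
        dist = 0.5 * sum(abs(local.get(v, 0.0) - p) for v, p in overall.items())
        worst = max(worst, dist)
    return worst


# Hypothetical generalized trip records; "dest" is the sensitive attribute.
rows = [
    {"zone": "North", "hour": "08", "dest": "Hospital"},
    {"zone": "North", "hour": "08", "dest": "Hospital"},
    {"zone": "South", "hour": "08", "dest": "Hospital"},
    {"zone": "South", "hour": "08", "dest": "School"},
]
k = k_anonymity(rows, ["zone", "hour"])
l = l_diversity(rows, ["zone", "hour"], "dest")
t = t_closeness(rows, ["zone", "hour"], "dest")
```

The toy table is 2-anonymous, yet the North class holds a single destination value, so its l-diversity is 1 and anyone known to be in that class can be inferred to have travelled to the hospital.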

4.2. Privacy-Preserving Pitfalls

- The GDPR names pseudonymization as an appropriate “technical and organizational measure” for data protection (Article 25(1)) [7], but it does not propose combining pseudonymization and anonymization schemes to raise the level of privacy protection further.

- The GDPR considers pseudonymization to be a reversible process and anonymization to be permanent, without highlighting the risk of de-anonymization over time.

- WP29 Opinion 05/2014 introduced the main re-identification risks of anonymization and the corresponding mitigations; however, its recommendations have not kept pace with rapidly evolving technologies, and further risks and countermeasures remain to be covered by the WP29, which has since been succeeded by the EDPB.

- The re-identification likelihood can never be zero.

- Anonymization is not everlasting, as it may be reversed in the future. Rather than being treated as a one-time operation, it should be assessed continuously.

- The choice of privacy-preserving scheme depends on the nature of the attribute of the private data itself. To illustrate, techniques applied to anonymize a data subject’s name or data entry identifier might not be suitable for location data.

- As pointed out in Section 4.1.2, data masking is commonly misunderstood as a subcategory of anonymization techniques. The technique is in fact broad enough to cover both pseudonymization and anonymization, which are subject to different data protection obligations.

- Some researchers incorrectly present encryption as an anonymization scheme, whereas it should be considered a powerful pseudonymization technique.

- No single solution fits all processing; the privacy-preserving technique should be selected case by case, depending on the technologies involved within the ACS.
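To illustrate the distinction drawn above between key-dependent pseudonymization and attribute-specific treatment of location data, the following is a minimal sketch under stated assumptions. The keyed hash (HMAC-SHA256) and coordinate rounding are chosen here purely as examples and are not techniques prescribed by the GDPR; the identifiers, keys, and coordinates are hypothetical.

```python
import hashlib
import hmac


def pseudonymize(identifier: str, key: bytes) -> str:
    """Keyed hash of an identifier.

    The pseudonym is stable for a given key, so whoever holds the key can
    recompute it for a known identifier and re-link the records. This is why
    the result is pseudonymized data rather than anonymized data.
    """
    return hmac.new(key, identifier.encode("utf-8"), hashlib.sha256).hexdigest()


def generalize_location(lat: float, lon: float, decimals: int = 2) -> tuple:
    """Coarsen coordinates by rounding.

    Rounding discards precision, but whether the output is anonymous still
    depends on a re-identification assessment of the whole dataset.
    """
    return (round(lat, decimals), round(lon, decimals))


# Hypothetical key and identifier, for illustration only.
key = b"example-secret-key"
pseudonym = pseudonymize("passenger-42", key)
same_again = pseudonymize("passenger-42", key)
other_key = pseudonymize("passenger-42", b"another-key")
coarse = generalize_location(46.20439, 6.14316)
```

A technique that suits an identifier field, such as the keyed hash, does nothing useful for a coordinate pair, which is one reason the choice has to follow the attribute type.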

5. Conclusions and Future Work

Author Contributions

Funding

Institutional Review Board Statement

Informed Consent Statement

Data Availability Statement

Acknowledgments

Conflicts of Interest

Abbreviations

| Abbreviation | Definition |
|---|---|
| ACS | Automated city shuttle |
| AI | Artificial intelligence |
| CAV | Connected automated vehicle |
| CJEU | Court of Justice of the European Union |
| CPPA | Conditional privacy-preserving authentication |
| DPA | Data protection authority |
| DPIA | Data protection impact assessment |
| EDPB | European Data Protection Board |
| ENISA | European Union Agency for Cybersecurity |
| EU | European Union |
| GDPR | General Data Protection Regulation |
| GPS | Global Positioning System |
| GSIS | Group signature and identity-based signature |
| IaaS | Infrastructure as a service |
| IoT | Internet of Things |
| IoV | Internet of Vehicles |
| ITS | Intelligent transport system |
| LBS | Location-based services |
| MaaS | Mobility as a service |
| MNO | Mobile network operator |
| NIS | Network and information security |
| OEM | Automobile manufacturer |
| PaaS | Platform as a service |
| PbD | Privacy by design |
| PbDf | Privacy by default |
| PIPEDA | Personal Information Protection and Electronic Documents Act |
| PTO | Public transport operator |
| RSU | Roadside unit |
| SaaS | Software as a service |
| SAE | Society of Automotive Engineers |
| V2C | Vehicle-to-cloud |
| V2I | Vehicle-to-infrastructure |
| V2P | Vehicle-to-pedestrian |
| V2V | Vehicle-to-vehicle |
| V2X | Vehicle-to-everything |
| WP29 | Article 29 Working Party |
| ZKP | Zero-knowledge proof |

References

- Balboni, P.; Botsi, A.; Francis, K.; Barata, M.T. Designing Connected and Automated Vehicles around Legal and Ethical Concerns: Data Protection as a Corporate Social Responsibility. In Proceedings of the WAIEL2020, Athens, Greece, 3 September 2020.

- Ainsalu, J.; Arffman, V.; Bellone, M.; Ellner, M.; Haapamäki, T.; Haavisto, N.; Josefson, E.; Ismailogullari, A.; Lee, B.; Madland, O.; et al. State of the art of automated buses. Sustainability 2018, 10, 3118.

- Konstantas, D. From Demonstrator to Public Service: The AVENUE Experience. In The Robomobility Revolution of Urban Public Transport; Mira-Bonnardel, S., Antonialli, F., Attias, D., Eds.; Springer: Cham, Switzerland, 2021; pp. 107–130.

- Taxonomy and Definitions for Terms Related to Driving Automation Systems for On-Road Motor Vehicles. SAE Technical Report J3016_202104. 2018. Available online: https://www.sae.org/standards/content/j3016_202104 (accessed on 23 March 2022).

- Elliott, D.; Keen, W.; Miao, L. Recent advances in connected and automated vehicles. J. Traffic Transp. Eng. (Engl. Ed.) 2019, 6, 109–131.

- Veitas, V.K.; Delaere, S. In-vehicle data recording, storage and access management in autonomous vehicles. arXiv 2018, arXiv:1806.03243.

- European Union. Regulation (EU) 2016/679: The European Parliament and of the Council of 27 April 2016 on the Protection of Natural Persons with Regard to the Processing of Personal Data and on the Free Movement of Such Data. Off. J. Eur. Communities 2016, L119, 1–88.

- Smith, G. Making Mobility-as-a-Service; Chalmers University of Technology: Gothenburg, Sweden, 2020.

- Article 29 Data Protection Working Party. Opinion 03/2017 on Processing Personal Data in the Context of Cooperative Intelligent Transport Systems (C-ITS)-217/EN-WP 252; Technical Report October; European Commission: Brussels, Belgium, 2017.

- European Union. Directive 2002/58/EC: The European Parliament and of the Council of 12 July 2002 Concerning the Processing of Personal Data and the Protection of Privacy in the Electronic Communications Sector (Directive on Privacy and Electronic Communications) (L 201). Off. J. Eur. Communities 2002, L201, 37–47.

- The European Parliament and the Council of the European Union. Directive (EU) 2016/1148 The European Parliament and of The Council—NIS Directive 1; Technical Report; European Commission: Brussels, Belgium, 2016.

- The European Parliament and the Council of the European Union. Proposal for a Directive (EU) 2016/1148 of the European Parliament and of the Council—NIS Directive 2; Technical Report; European Commission: Brussels, Belgium, 2020.

- Costantini, F.; Thomopoulos, N.; Steibel, F.; Curl, A.; Lugano, G.; Kováčiková, T. Autonomous vehicles in a GDPR era: An international comparison. Adv. Transp. Policy Plan. 2020, 5, 191–213.

- OneTrust Data Guidance. Comparing Privacy Laws: GDPR vs. PIPEDA. Technical Report. 2020. Available online: https://www.dataguidance.com/sites/default/files/gdpr_v_pipeda.pdf (accessed on 15 April 2022).

- Australia, N. Regulating Government Access to C-ITS and Automated Vehicle Data; Technical Report September; National Transport Commission: Melbourne, VIC, Australia, 2018.

- George, D.; Reutimann, K.; Larrieux, A.T. GDPR bypass by design? Transient processing of data under the GDPR. Int. Data Priv. Law 2019, 9, 285–298.

- Taeihagh, A.; Lim, H.S.M. Governing autonomous vehicles: Emerging responses for safety, liability, privacy, cybersecurity, and industry risks. Transp. Rev. 2019, 39, 103–128.

- Lim, H.S.M.; Taeihagh, A. Autonomous vehicles for smart and sustainable cities: An in-depth exploration of privacy and cybersecurity implications. Energies 2018, 11, 1062.

- Pattinson, J.A.; Chen, H.; Basu, S. Legal issues in automated vehicles: Critically considering the potential role of consent and interactive digital interfaces. Humanit. Soc. Sci. Commun. 2020, 7, 1–10.

- Vallet, F. The GDPR and Its Application in Connected Vehicles—Compliance and Good Practices. In Electronic Components and Systems for Automotive Applications; Springer: Cham, Switzerland, 2019; pp. 245–254.

- Krontiris, I.; Grammenou, K.; Terzidou, K.; Zacharopoulou, M.; Tsikintikou, M.; Baladima, F.; Sakellari, C.; Kaouras, K. Autonomous Vehicles: Data Protection and Ethical Considerations. In Proceedings of the CSCS 2020: ACM Computer Science in Cars Symposium, Feldkirchen, Germany, 2 December 2020.

- Bastos, D.; El-Mousa, F.; Giubilo, F. GDPR Privacy Implications for the Internet of Things. In Proceedings of the 4th Annual IoT Security Foundation Conference, London, UK, 4 December 2018.

- Collingwood, L. Privacy implications and liability issues of autonomous vehicles. Inf. Commun. Technol. Law 2017, 26, 32–45.

- Glancy, D.J. Privacy in Autonomous Vehicles. Santa Clara Law Rev. 2012, 52, 12–14.

- Karnouskos, S.; Kerschbaum, F. Privacy and integrity considerations in hyperconnected autonomous vehicles. Proc. IEEE 2018, 106, 160–170.

- Hes, R.L.; Borking, J.J. Privacy-Enhancing Technologies: The Path to Anonymity; Registratiekamer: The Hague, The Netherlands, 1988.

- Mulder, T.; Vellinga, N.E. Exploring data protection challenges of automated driving. Comput. Law Secur. Rev. 2021, 40, 105530.

- Ribeiro, S.L.; Nakamura, E.T. Privacy Protection with Pseudonymization and Anonymization in a Health IoT System: Results from OCARIoT. In Proceedings of the 2019 IEEE 19th International Conference on Bioinformatics and Bioengineering (BIBE), Athens, Greece, 28–30 October 2019; pp. 904–908.

- Brasher, E.A. Addressing the Failure of Anonymization: Guidance from the European Union’s General Data Protection Regulation. Columbia Bus. Law Rev. 2018, 2018, 209–253.

- Li, H.; Ma, D.; Medjahed, B.; Kim, Y.S.; Mitra, P. Analyzing and Preventing Data Privacy Leakage in Connected Vehicle Services. SAE Int. J. Adv. Curr. Pract. Mobil. 2019, 1, 1035–1045.

- Löbner, S.; Tronnier, F.; Pape, S.; Rannenberg, K. Comparison of De-Identification Techniques for privacy-preserving Data Analysis in Vehicular Data Sharing. In Computer Science in Cars Symposium; ACM: New York, NY, USA, 2021; pp. 1–11.

- ENISA. Data Pseudonymisation: Advanced Techniques & Use Cases; Technical Report; ENISA: Athens, Greece, 2021.

- European Union Agency for Cybersecurity. Data Protection Engineering; Technical Report; ENISA: Athens, Greece, 2022.

- Lim, J.; Yu, H.; Kim, K.; Kim, M.; Lee, S.B. Preserving Location Privacy of Connected Vehicles with Highly Accurate Location Updates. IEEE Commun. Lett. 2017, 21, 540–543.

- Article 29 Data Protection Working Party. Opinion 05/2014 on Anonymisation Techniques; Technical Report April; European Commission: Brussels, Belgium, 2014.

- EDPB. Guidelines 07/2020 on the Concepts of Controller and Processor in the GDPR; Technical Report; EDPB: Brussels, Belgium, 2020.

- EDPB. Guidelines 1/2020 on Processing Personal Data in the Context of Connected Vehicles and Mobility Related Applications; Technical Report March; European Data Protection Board: Brussels, Belgium, 2021.

- Article 29 Data Protection Working Party. Guidelines on Automated Individual Decision-Making and Profiling for the Purposes of Regulation 2016/679; Technical Report; Article 29 WP; European Commission: Brussels, Belgium, 2018.

- Curia Caselaw. Judgment of The Court. 2018. Available online: https://curia.europa.eu/juris/document/document.jsf?docid=202543&doclang=EN (accessed on 25 February 2022).

- Curia Caselaw. Judgment of the Court on Facebook Ireland Ltd. 2019. Available online: https://curia.europa.eu/juris/document/document.jsf?docid=216555&mode=req&pageIndex=1&dir=&occ=first&part=1&text=&doclang=EN&cid=4232790 (accessed on 10 March 2022).

- European Data Protection Supervisor. EDPS Guidelines on the Concepts of Controller, Processor and Joint Controllership under Regulation (EU) 2018/1725; Technical Report; EDPS: Brussels, Belgium, 2019.

- Mulder, T.; Vellinga, N. Handing over the Wheel, Giving up Your Privacy? In Proceedings of the 13th ITS Europe Congress, Eindhoven, The Netherlands, 3–6 June 2019.

- Article 29 Data Protection Working Party. Guidelines on Data Protection Impact Assessment (DPIA) and Determining Whether Processing Is “Likely to Result in a High Risk” for the Purposes of Regulation 2016/679; Technical Report; European Commission: Brussels, Belgium, 2017.

- Bu-Pasha, S. Location Data, Personal Data Protection and Privacy in Mobile Device Usage: An EU Law Perspective. Ph.D. Thesis, Faculty of Law, Helsinki, Finland, 2018.

- AEPD. Ten Misunderstandings Related to Anonymisation; Technical Report 1; AEPD: Madrid, Spain, 2019.

- Vokinger, K.N.; Stekhoven, D.J.; Krauthammer, M. Lost in Anonymization—A Data Anonymization Reference Classification Merging Legal and Technical Considerations. J. Law Med. Ethics 2020, 48, 228–231.

- Manivannan, D.; Moni, S.S.; Zeadally, S. Secure authentication and privacy-preserving techniques in Vehicular Ad-hoc NETworks (VANETs). Veh. Commun. 2020, 25, 100247.

- Dibaei, M.; Zheng, X.; Jiang, K.; Abbas, R.; Liu, S.; Zhang, Y.; Xiang, Y.; Yu, S. Attacks and defences on intelligent connected vehicles: A survey. Digit. Commun. Netw. 2020, 6, 399–421.

- Ouazzani, Z.E.; Bakkali, H.E. A Classification of non-Cryptographic Anonymization Techniques ensuring Privacy in Big Data. Int. J. Commun. Netw. Inf. Secur. (IJCNIS) 2020, 12, 142–152.

- De Montjoye, Y.A.; Hidalgo, C.A.; Verleysen, M.; Blondel, V.D. Unique in the Crowd: The privacy bounds of human mobility. Sci. Rep. 2013, 3, 1376.

- Wan, Z.; Guan, Z.; Zhou, Y.; Ren, K. Zk-AuthFeed: How to feed authenticated data into smart contract with zero knowledge. In Proceedings of the 2019 2nd IEEE International Conference on Blockchain, Blockchain 2019, Atlanta, GA, USA, 14–17 July 2019; pp. 83–90.

- Gabay, D.; Akkaya, K.; Cebe, M. Privacy-Preserving Authentication Scheme for Connected Electric Vehicles Using Blockchain and Zero Knowledge Proofs. IEEE Trans. Veh. Technol. 2020, 69, 5760–5772.

- Takbiri, N.; Houmansadr, A.; Goeckel, D.L.; Pishro-Nik, H. Limits of location privacy under anonymization and obfuscation. In Proceedings of the 2017 IEEE International Symposium on Information Theory (ISIT), Aachen, Germany, 25–30 June 2017; pp. 764–768.

- Dwork, C.; Kohli, N.; Mulligan, D. Differential Privacy in Practice: Expose your Epsilons! J. Priv. Confidentiality 2019, 9.

- Ha, T.; Dang, T.K.; Dang, T.T.; Truong, T.A.; Nguyen, M.T. Differential Privacy in Deep Learning: An Overview. In Proceedings of the 2019 International Conference on Advanced Computing and Applications (ACOMP), Nha Trang, Vietnam, 26–28 November 2019; pp. 97–102.

- Tachepun, C.; Thammaboosadee, S. A Data Masking Guideline for Optimizing Insights and Privacy Under GDPR Compliance. In Proceedings of the 11th International Conference on Advances in Information Technology, Bangkok, Thailand, 1–3 July 2020; ACM: New York, NY, USA, 2020; pp. 1–9.

- Murthy, S.; Abu Bakar, A.; Abdul Rahim, F.; Ramli, R. A Comparative Study of Data Anonymization Techniques. In Proceedings of the 2019 IEEE 5th International Conference on Big Data Security on Cloud (BigDataSecurity), IEEE International Conference on High Performance and Smart Computing, (HPSC) and IEEE International Conference on Intelligent Data and Security (IDS), Washington, DC, USA, 27–29 May 2019; pp. 306–309.

- Wang, J.; Cai, Z.; Yu, J. Achieving Personalized k-Anonymity-Based Content Privacy for Autonomous Vehicles in CPS. IEEE Trans. Ind. Inform. 2020, 16, 4242–4251.

- Sangeetha, S.; Sudha Sadasivam, G. Privacy of Big Data: A Review. In Handbook of Big Data and IoT Security; Springer: Cham, Switzerland, 2019; pp. 5–23.

- Kawamoto, Y.; Murakami, T. On the Anonymization of Differentially Private Location Obfuscation. In Proceedings of the 2018 International Symposium on Information Theory and Its Applications (ISITA), Singapore, 28–31 October 2018.

- Lu, Z.; Qu, G.; Liu, Z. A Survey on Recent Advances in Vehicular Network Security, Trust, and Privacy. IEEE Trans. Intell. Transp. Syst. 2019, 20, 760–776.

- Murakami, T. A Succinct Model for Re-identification of Mobility Traces Based on Small Training Data. In Proceedings of the 2018 International Symposium on Information Theory and Its Applications (ISITA), Singapore, 28–31 October 2018.

- Wadhwani, P.; Saha, P. Autonomous Bus Market Trends 2022–2028, Size Analysis Report; Technical Report; Global Market Insights: Selbyville, DE, USA, 2021.

- Center for Strategic and International Studies. European Union Releases Draft Mandatory Human Rights and Environmental Due Diligence Directive; Center for Strategic and International Studies: Washington, DC, USA, 2022.

- Evas, T.; Heflich, A. Artificial Intelligence in Road Transport; Technical Report; European Parliament: Strasbourg, France, 2021.

| Related Work | Year | Scope: ACS | Scope: CAV | Scope: IoT | Scope: IT | PC a | GDPR Implications: Principles | GDPR Implications: DS Rights b | GDPR Implications: DC Obligations c | GDPR Implications: Roles | GDPR Implications: DPIA | PP d: Pseudonymization | PP d: Anonymization | PP d: Risks | GDPR Pitfalls |
|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|
| ENISA [33] | 2022 | ✗ | ✗ | ✗ | ✓ | ✗ | ✗ | ✗ | ✗ | ✓ | ✓ | ✓ | ✓ | ✓ | ✓ |
| Mulder and Vellinga [27] | 2021 | ✗ | ✓ | ✗ | ✗ | ✓ | ✓ | ✓ | ✓ | ✓ | ✓ | ✓ | ✓ | ✗ | ✗ |
| Löbner et al. [31] | 2021 | ✗ | ✓ | ✗ | ✗ | ✓ | ✗ | ✗ | ✗ | ✗ | ✗ | ✗ | ✓ | ✓ | ✗ |
| ENISA [32] | 2021 | ✗ | ✓ | ✗ | ✗ | ✓ | ✗ | ✗ | ✗ | ✗ | ✗ | ✓ | ✗ | ✗ | ✗ |
| Pattinson et al. [19] | 2020 | ✗ | ✓ | ✗ | ✗ | ✓ | ✓ | ✓ | ✓ | ✗ | ✗ | ✗ | ✗ | ✗ | ✗ |
| Krontiris et al. [21] | 2020 | ✗ | ✓ | ✗ | ✗ | ✓ | ✓ | ✓ | ✓ | ✗ | ✓ | ✗ | ✗ | ✗ | ✗ |
| Costantini et al. [13] | 2020 | ✗ | ✓ | ✗ | ✗ | ✓ | ✗ | ✓ | ✗ | ✓ | ✗ | ✗ | ✗ | ✗ | ✗ |
| Vallet [20] | 2019 | ✗ | ✓ | ✗ | ✗ | ✓ | ✓ | ✓ | ✗ | ✗ | ✗ | ✓ | ✗ | ✗ | ✗ |
| Ribeiro and Nakamura [28] | 2019 | ✗ | ✗ | ✓ | ✗ | ✓ | ✗ | ✗ | ✗ | ✗ | ✗ | ✓ | ✓ | ✗ | ✗ |
| Li et al. [30] | 2019 | ✗ | ✓ | ✗ | ✗ | ✓ | ✗ | ✗ | ✗ | ✗ | ✗ | ✗ | ✓ | ✓ | ✗ |
| Taeihagh and Lim [17,18] | 2018 | ✗ | ✓ | ✗ | ✗ | ✓ | ✓ | ✓ | ✗ | ✗ | ✗ | ✗ | ✗ | ✗ | ✗ |
| Veitas and Delaere [6] | 2018 | ✗ | ✓ | ✗ | ✗ | ✓ | ✓ | ✗ | ✗ | ✓ | ✗ | ✗ | ✗ | ✗ | ✗ |
| Bastos et al. [22] | 2018 | ✗ | ✗ | ✓ | ✗ | ✗ | ✓ | ✓ | ✓ | ✗ | ✓ | ✓ | ✓ | ✗ | ✗ |
| Ainsalu et al. [2] | 2018 | ✓ | ✗ | ✗ | ✗ | ✓ | ✗ | ✗ | ✗ | ✗ | ✗ | ✗ | ✗ | ✗ | ✗ |
| Karnouskos and Kerschbaum [25] | 2018 | ✗ | ✓ | ✗ | ✗ | ✓ | ✗ | ✗ | ✗ | ✗ | ✗ | ✓ | ✓ | ✗ | ✗ |
| Brasher [29] | 2018 | ✗ | ✗ | ✗ | ✓ | ✓ | ✗ | ✗ | ✗ | ✗ | ✗ | ✓ | ✓ | ✓ | ✓ |
| Collingwood [23] | 2017 | ✗ | ✓ | ✗ | ✗ | ✓ | ✗ | ✗ | ✗ | ✗ | ✗ | ✓ | ✓ | ✗ | ✗ |
| WP29 [35] | 2014 | ✗ | ✗ | ✗ | ✓ | ✗ | ✗ | ✗ | ✗ | ✗ | ✗ | ✓ | ✓ | ✓ | ✗ |
| Glancy [24] | 2012 | ✗ | ✓ | ✗ | ✗ | ✓ | ✗ | ✗ | ✗ | ✗ | ✗ | ✓ | ✓ | ✓ | ✗ |
| This work | | ✓ | ✗ | ✗ | ✗ | ✓ | ✓ | ✓ | ✓ | ✓ | ✓ | ✓ | ✓ | ✓ | ✓ |

Publisher’s Note: MDPI stays neutral with regard to jurisdictional claims in published maps and institutional affiliations.

© 2022 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (https://creativecommons.org/licenses/by/4.0/).

Benyahya, M.; Kechagia, S.; Collen, A.; Nijdam, N.A. The Interface of Privacy and Data Security in Automated City Shuttles: The GDPR Analysis. Appl. Sci. 2022, 12, 4413. https://doi.org/10.3390/app12094413
