A Novel Data Augmentation-Based Brain Tumor Detection Using Convolutional Neural Network

Abstract

1. Introduction

Contribution

- We detect brain tumors in MRI datasets using deep learning with convolutional neural networks.

- Medical imaging datasets are often small, so we rely on data augmentation to obtain enough training samples for the detection algorithms we develop.

- We use data augmentation techniques to improve brain tumor detection with the VGG-16 model.

- Experimental results show that expanding a dataset with flipping, rotation, and translation helps train the VGG model.

2. Related Works

3. A Taxonomy of Deep Convolutional Neural Networks

3.1. LeNet

3.2. AlexNet

3.3. GoogleNet

3.4. ResNet

3.5. VGGNet

3.6. DenseNet

3.7. SqueezeNet

- Replacing 3 × 3 filters with 1 × 1 filters to reduce the number of parameters.

- Reducing the number of input channels fed to the remaining 3 × 3 filters.

- Downsampling late in the network so that the convolutional layers have large activation maps.
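The effect of the first two strategies on model size can be checked with simple arithmetic. The sketch below counts convolution weights for a hypothetical layer; the channel counts (128 in, 128 out, 16 after squeezing) are illustrative assumptions, not values taken from this paper or from the SqueezeNet configuration.

```python
def conv_params(in_channels: int, out_channels: int, kernel: int) -> int:
    """Number of weights in a square-kernel convolution layer (biases ignored)."""
    return in_channels * out_channels * kernel * kernel


# Hypothetical layer sizes, chosen only for illustration.
in_ch, out_ch = 128, 128

plain_3x3 = conv_params(in_ch, out_ch, 3)
plain_1x1 = conv_params(in_ch, out_ch, 1)

# Strategy 2: first squeeze to a few channels with 1x1 filters,
# then let the 3x3 filters see only those squeezed channels.
squeeze_ch = 16  # assumed squeeze width
squeezed = conv_params(in_ch, squeeze_ch, 1) + conv_params(squeeze_ch, out_ch, 3)

print(plain_3x3, plain_1x1, squeezed)
```

A 1 × 1 filter has nine times fewer weights than a 3 × 3 filter over the same channels, and squeezing the input channels shrinks the cost of the 3 × 3 filters that remain.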

3.8. MobileNet

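- Depthwise convolution: a single filter is applied to each input channel separately.

- Pointwise convolution: a 1 × 1 convolution that combines the outputs of the depthwise step across channels.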
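The two steps can be sketched on plain nested lists. This is a minimal, dependency-free illustration that assumes stride 1 and no padding; the function names are hypothetical, and, as in most deep learning frameworks, "convolution" here means cross-correlation (the kernel is not flipped).

```python
def depthwise_conv(x, kernels):
    """Apply one k x k kernel per channel (no padding, stride 1).

    x: list of channels, each an H x W list of lists.
    kernels: one k x k kernel per input channel.
    """
    out = []
    for channel, kernel in zip(x, kernels):
        k = len(kernel)
        h, w = len(channel), len(channel[0])
        out.append([
            [
                sum(channel[i + di][j + dj] * kernel[di][dj]
                    for di in range(k) for dj in range(k))
                for j in range(w - k + 1)
            ]
            for i in range(h - k + 1)
        ])
    return out


def pointwise_conv(x, weights):
    """Mix channels with 1 x 1 filters; weights[o][c] maps input c to output o."""
    h, w = len(x[0]), len(x[0][0])
    return [
        [[sum(row[c] * x[c][i][j] for c in range(len(x))) for j in range(w)]
         for i in range(h)]
        for row in weights
    ]


# Two 3 x 3 input channels and one all-ones 3 x 3 kernel per channel.
x = [[[1] * 3 for _ in range(3)], [[2] * 3 for _ in range(3)]]
ones = [[1] * 3 for _ in range(3)]

spatial = depthwise_conv(x, [ones, ones])            # filters each channel alone
mixed = pointwise_conv(spatial, [[1, 1], [1, -1]])   # combines the channels
```

Splitting the work this way needs k·k·C_in + C_in·C_out weights instead of k·k·C_in·C_out for a standard convolution.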

4. Methodology

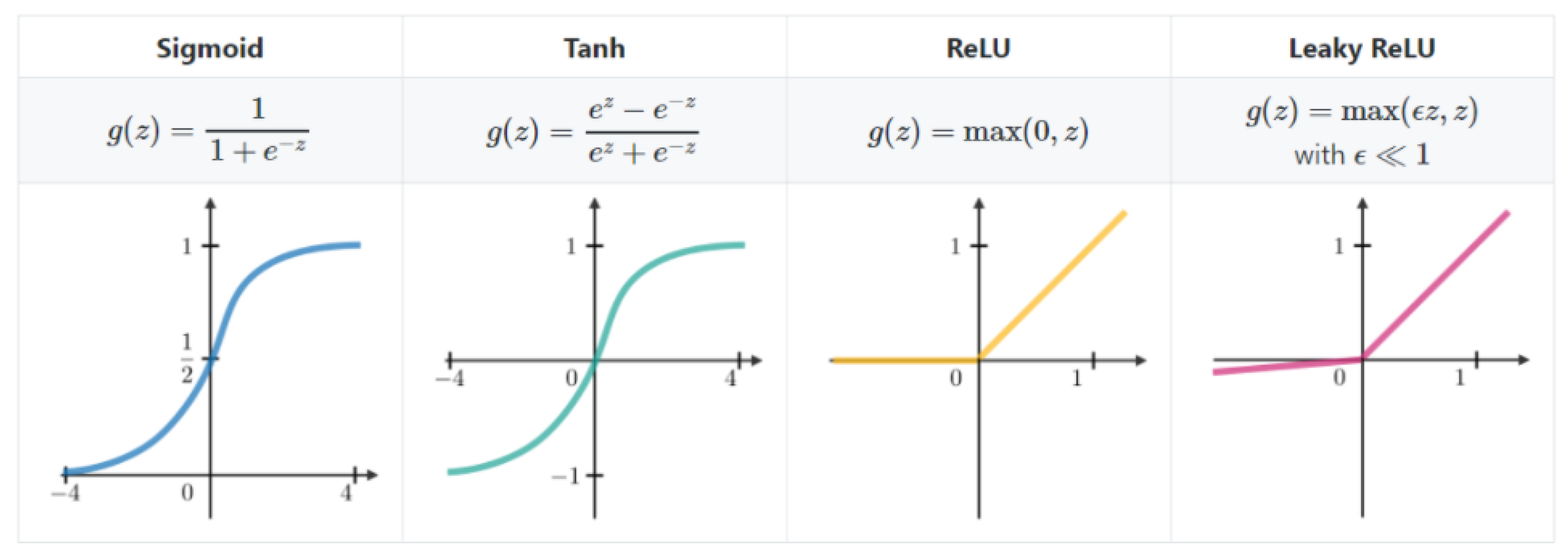

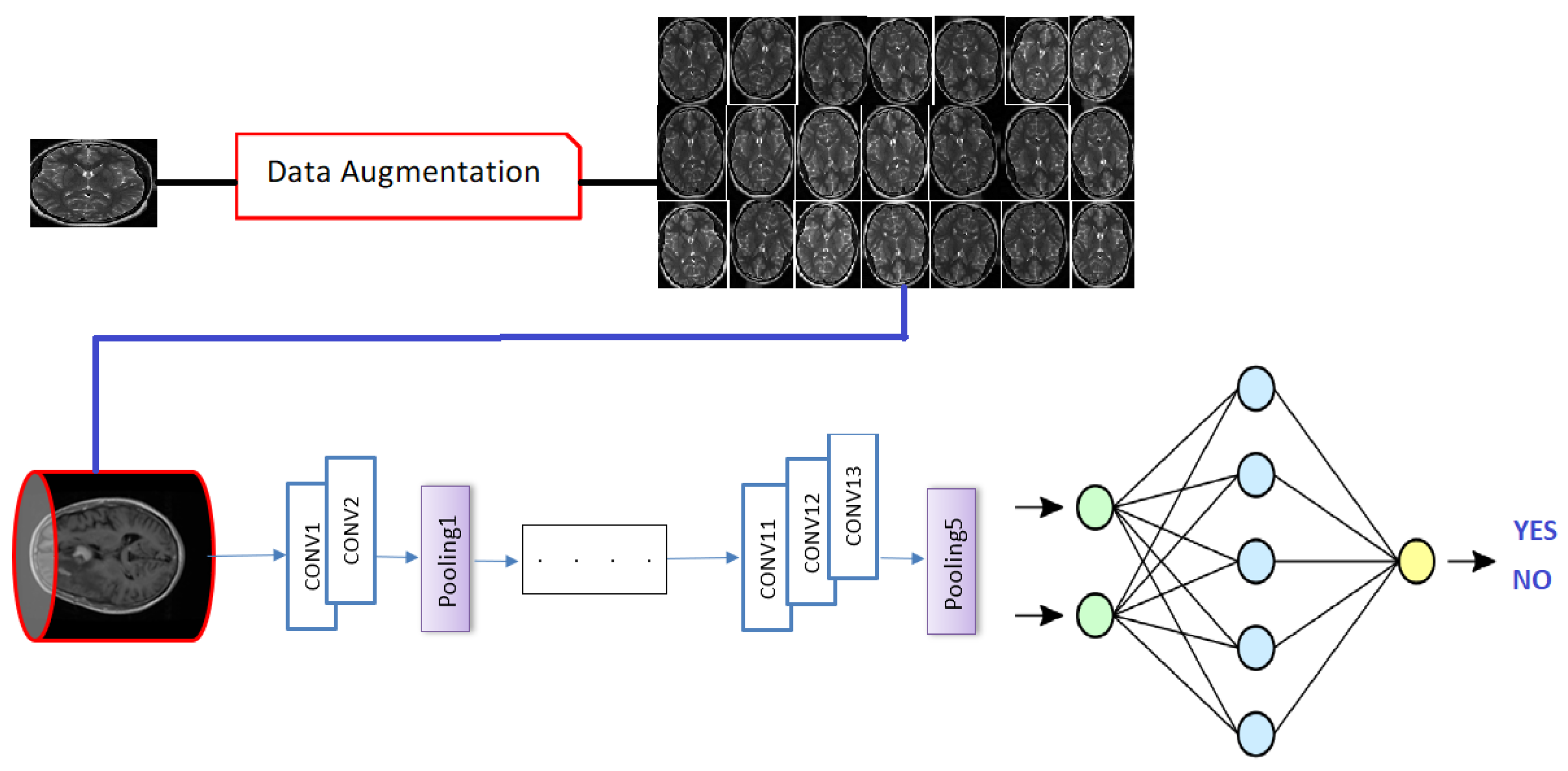

4.1. Deep Convolutional Neural Network

4.1.1. Convolution Layer

4.1.2. Back Propagation

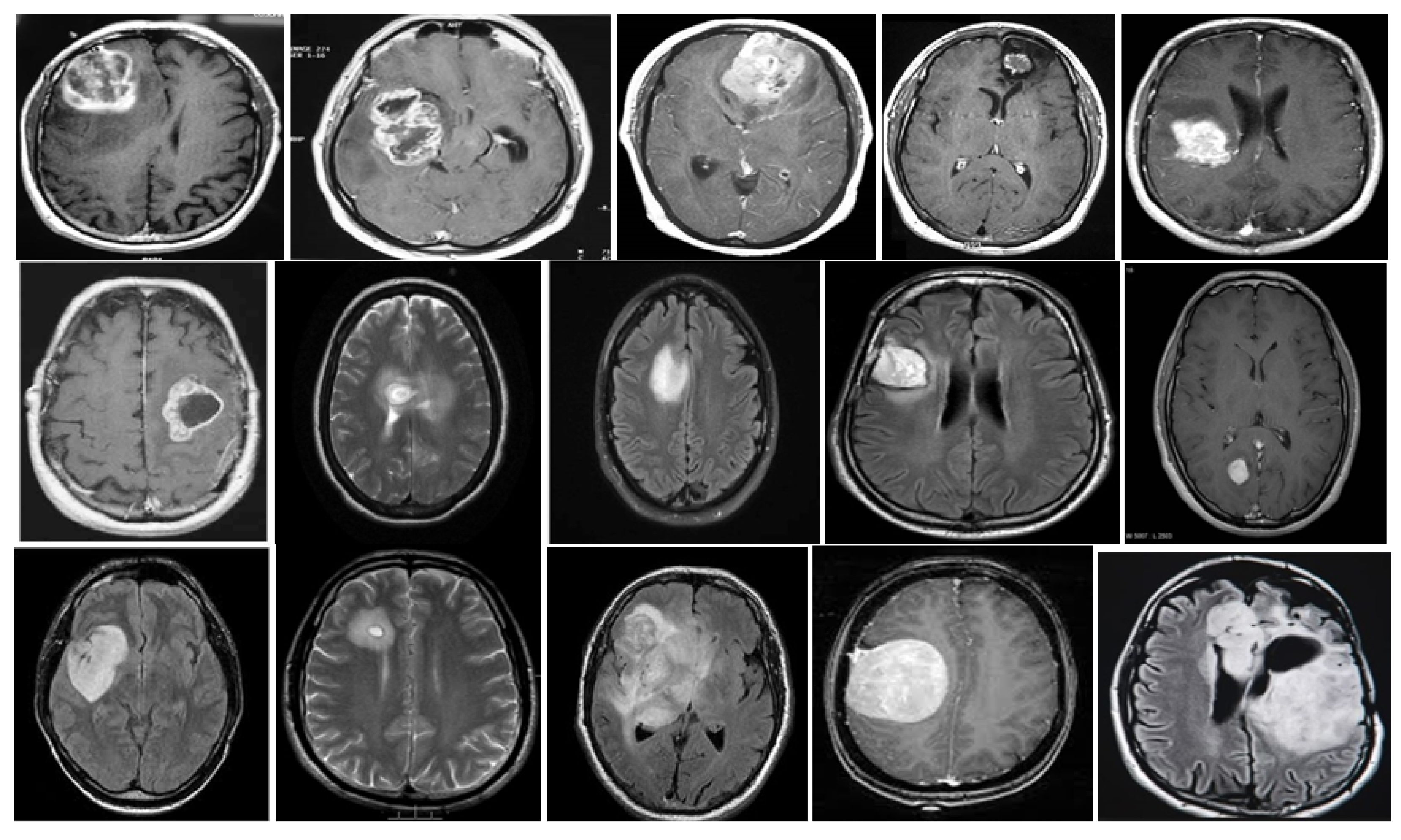

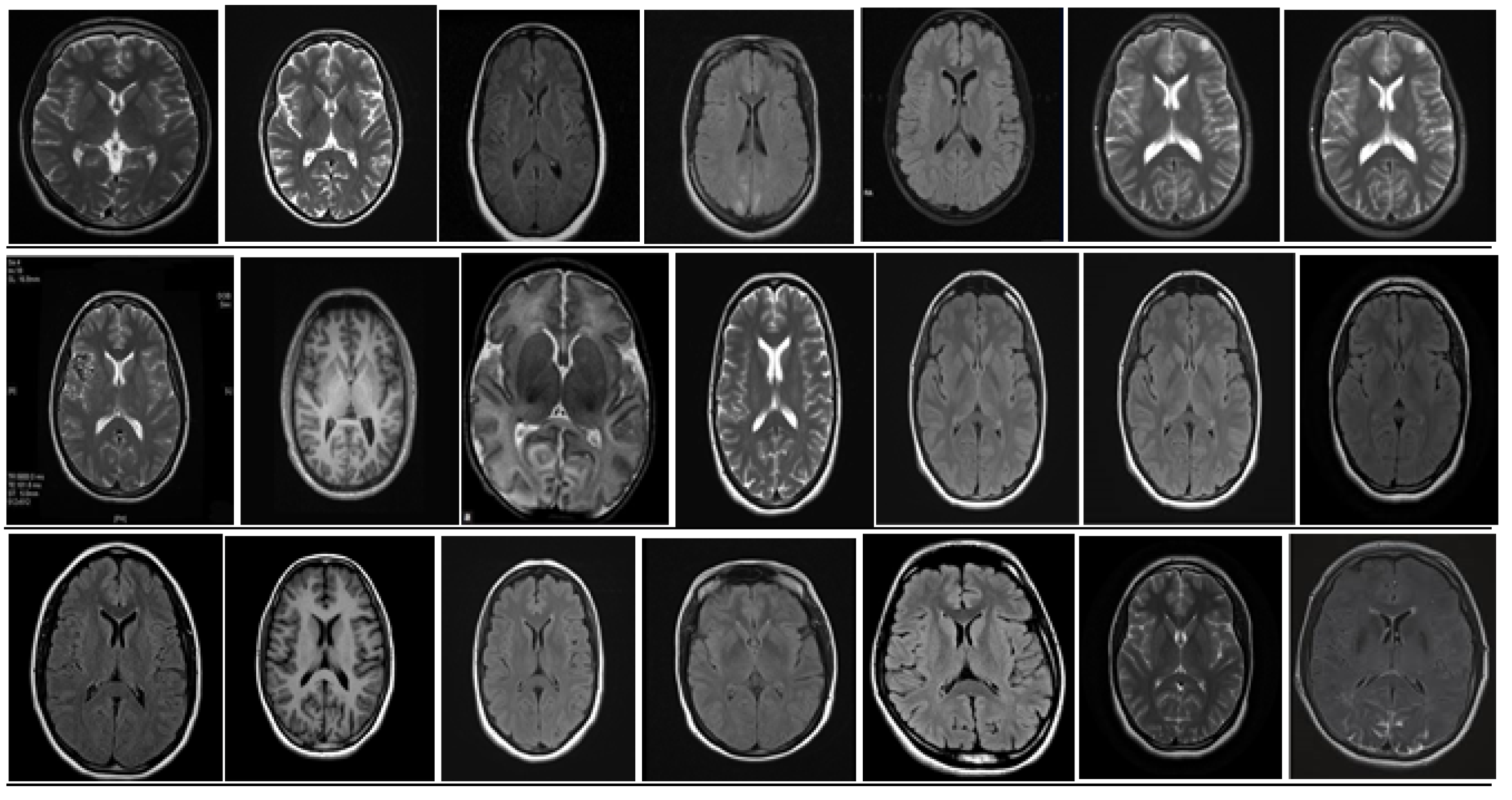

5. Database and Dataset

5.1. Database Collection

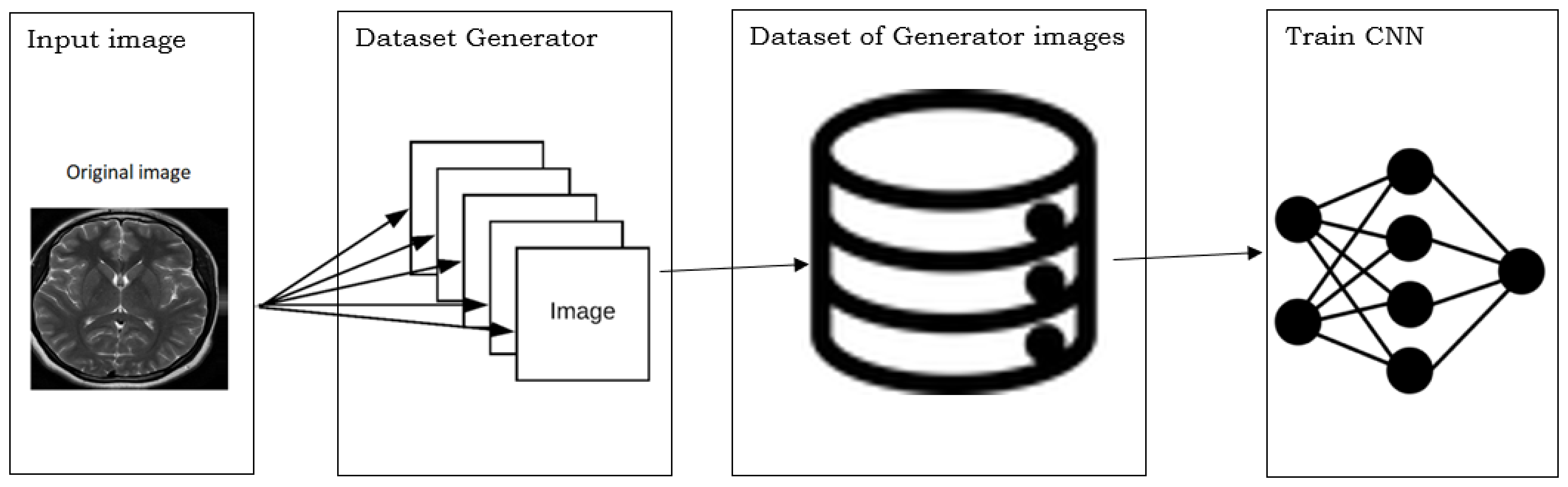

5.2. Database Augmentation

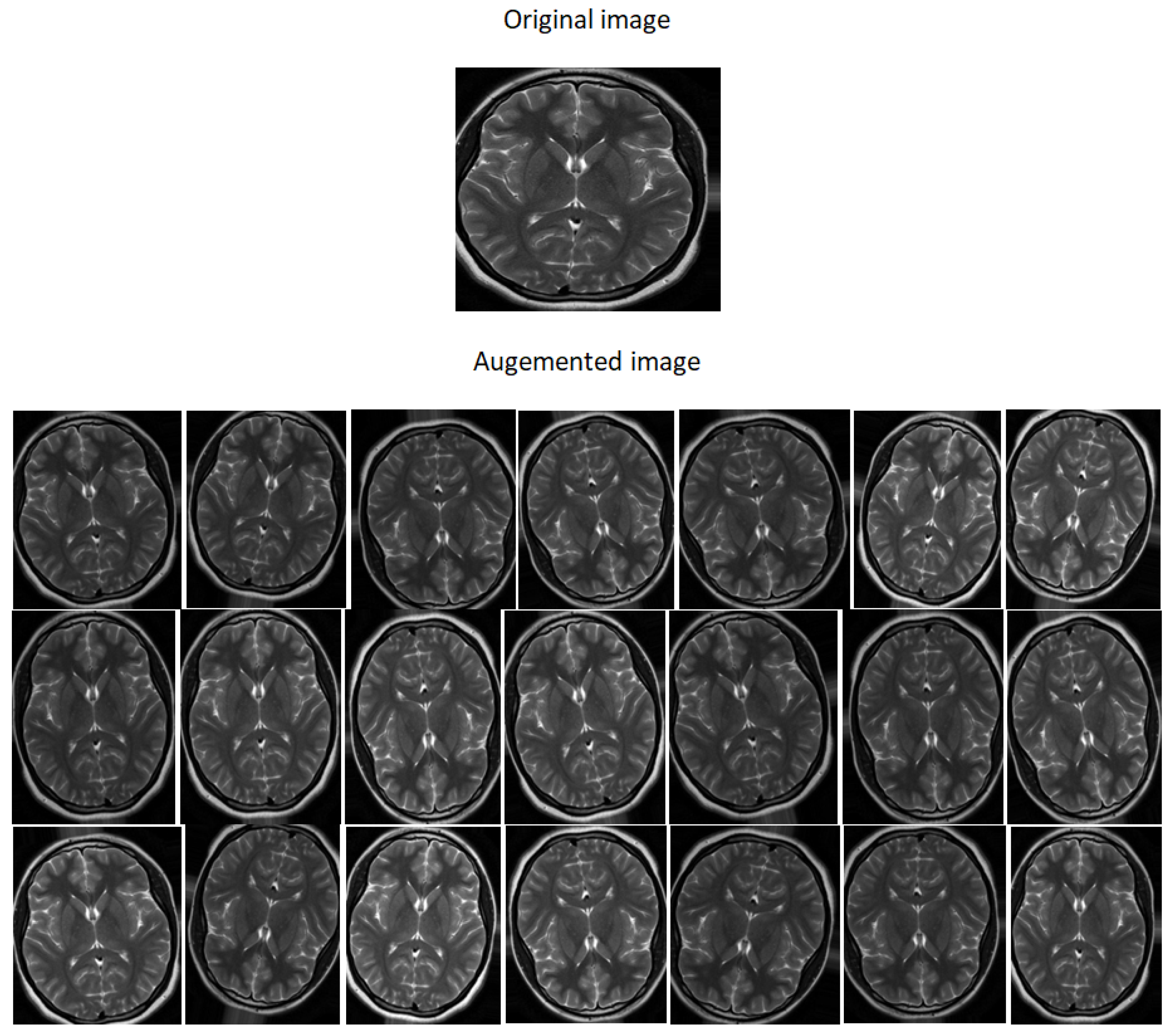

- Dataset generation and expanding an existing dataset (Figure 5)

- In-place/on-the-fly data augmentation

- Combining dataset generation and in-place augmentation.

- Flipping: creates a mirror reflection of the original image.

- Rotation: rotates the image by a given angle around its center pixel.

- Translation: moves the image along the X direction, the Y direction, or both.
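The three transformations can be sketched without any image library. The code below is a minimal illustration on grayscale images stored as nested lists; the function names, the zero fill value, and the nearest-neighbour sampling are assumptions made for the example, not details taken from the paper.

```python
import math


def flip_horizontal(img):
    """Mirror the image left to right."""
    return [row[::-1] for row in img]


def translate(img, dx, dy, fill=0):
    """Shift by dx columns and dy rows; vacated pixels get `fill`."""
    h, w = len(img), len(img[0])
    out = [[fill] * w for _ in range(h)]
    for y in range(h):
        for x in range(w):
            ny, nx = y + dy, x + dx
            if 0 <= ny < h and 0 <= nx < w:
                out[ny][nx] = img[y][x]
    return out


def rotate(img, degrees, fill=0):
    """Rotate about the image centre using nearest-neighbour sampling."""
    h, w = len(img), len(img[0])
    cy, cx = (h - 1) / 2, (w - 1) / 2
    theta = math.radians(degrees)
    cos_t, sin_t = math.cos(theta), math.sin(theta)
    out = [[fill] * w for _ in range(h)]
    for y in range(h):
        for x in range(w):
            # Inverse mapping: locate the source pixel for each output pixel.
            sx = cos_t * (x - cx) + sin_t * (y - cy) + cx
            sy = -sin_t * (x - cx) + cos_t * (y - cy) + cy
            ix, iy = round(sx), round(sy)
            if 0 <= iy < h and 0 <= ix < w:
                out[y][x] = img[iy][ix]
    return out


img = [[1, 2, 3],
       [4, 5, 6],
       [7, 8, 9]]

flipped = flip_horizontal(img)
shifted = translate(img, dx=1, dy=0)
turned = rotate(img, 90)
```

With the row index growing downward, a positive angle turns the image clockwise on screen. Pixels that leave the frame are dropped and the gaps are filled with `fill`; a real pipeline would typically interpolate rather than take the nearest neighbour.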

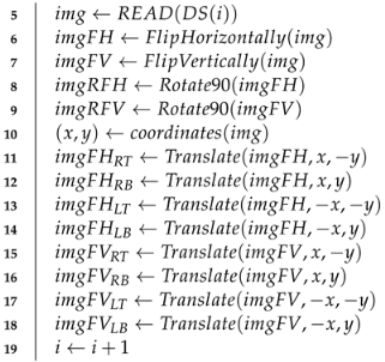

| Algorithm 1: Data Augmentation |
|---|
| Input: DataSet (DS) |
| Output: Augmented images |
| DataAugmentation: obtain the number of images in DS, then run a while loop (steps 4 to 20) that produces the augmented images. |
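As a rough illustration of offline dataset expansion (not a reconstruction of Algorithm 1), the sketch below loops over a dataset and appends randomly chosen transformed copies of each image. The function `expand_dataset`, the two flip helpers, and the `copies_per_image` parameter are all hypothetical.

```python
import random


def hflip(img):
    """Mirror left to right."""
    return [row[::-1] for row in img]


def vflip(img):
    """Mirror top to bottom."""
    return [row[:] for row in reversed(img)]


def expand_dataset(images, transforms, copies_per_image, seed=0):
    """Return the originals plus `copies_per_image` transformed copies of each."""
    rng = random.Random(seed)  # fixed seed keeps the expansion reproducible
    augmented = []
    for img in images:
        augmented.append(img)  # keep the original image
        for _ in range(copies_per_image):
            transform = rng.choice(transforms)
            augmented.append(transform(img))
    return augmented


dataset = [[[1, 2], [3, 4]], [[5, 6], [7, 8]]]  # two tiny 2 x 2 "images"
expanded = expand_dataset(dataset, [hflip, vflip], copies_per_image=3)
```

Each original contributes `1 + copies_per_image` images, so the expanded set here holds eight images.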

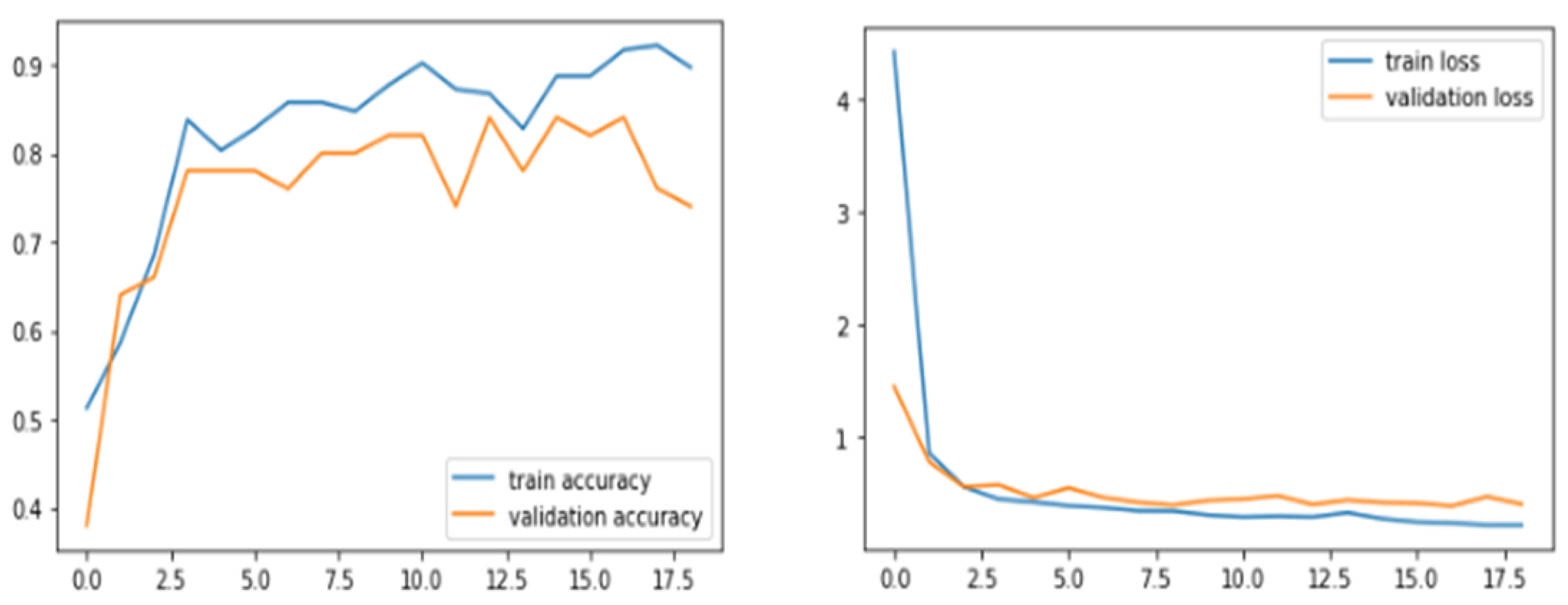

6. Results and Discussions

| Model | Accuracy | Precision | Recall | F1-Score |
|---|---|---|---|---|
| VGG-16 | 0.96 | 0.93 | 1.00 | 0.97 |
| ResNet-50 | 0.89 | 0.87 | 0.93 | 0.90 |
| VGG-19 | 0.93 | 0.94 | 0.93 | 0.93 |
| Inception-V3 | 0.75 | 0.77 | 0.71 | 0.74 |
| ResNet-101 | 0.74 | 0.74 | 0.74 | 0.73 |
| DenseNet121 | 0.49 | 0.50 | 0.48 | 0.49 |
| [69] | 0.97 | 0.98 | 0.95 | 0.96 |
| [70] | 0.96 | 0.96 | 0.98 | 0.95 |
| [71] | 0.97 | 0.97 | 0.97 | 0.97 |
| [72] | 0.79 | 0.76 | 0.86 | 0.81 |
| [73] | 0.96 | 0.97 | 0.80 | 0.88 |
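The four metrics reported in the table are standard functions of the confusion-matrix counts. The sketch below shows how they are computed; the counts in the example are hypothetical and are not the confusion matrix behind any row of the table.

```python
def classification_metrics(tp, fp, fn, tn):
    """Accuracy, precision, recall and F1 from binary confusion-matrix counts."""
    accuracy = (tp + tn) / (tp + fp + fn + tn)
    precision = tp / (tp + fp) if tp + fp else 0.0
    recall = tp / (tp + fn) if tp + fn else 0.0
    f1 = (2 * precision * recall / (precision + recall)
          if precision + recall else 0.0)
    return {"accuracy": accuracy, "precision": precision,
            "recall": recall, "f1": f1}


# Hypothetical counts for a 20-image test set (illustration only).
metrics = classification_metrics(tp=12, fp=1, fn=0, tn=7)
for name, value in metrics.items():
    print(f"{name}: {value:.2f}")
```

A recall of 1.00 means no tumor image was missed (no false negatives), which is why recall is usually weighted heavily in screening settings.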

7. Conclusions

Author Contributions

Funding

Data Availability Statement

Conflicts of Interest

References

- Sung, H.; Ferlay, J.; Siegel, R.L.; Laversanne, M.; Soerjomataram, I.; Jemal, A.; Bray, F. Global cancer statistics 2020: GLOBOCAN estimates of incidence and mortality worldwide for 36 cancers in 185 countries. CA A Cancer J. Clin. 2021, 71, 209–249.

- Toufiq, D.M.; Ali Makki Sagheer, H. A Review on Brain Tumor Classification in MRI Images. Turk. J. Comput. Math. Educ. (TURCOMAT) 2021, 12, 1958–1969.

- Magadza, T.; Viriri, S. Deep Learning for Brain Tumor Segmentation: A Survey of State-of-the-Art. J. Imaging 2021, 7, 19.

- Chauhan, S.; More, A.; Uikey, R.; Malviya, P.; Moghe, A. Brain tumor detection and classification in MRI images using image and data mining. In Proceedings of the 2017 International Conference on Recent Innovations in Signal Processing and Embedded Systems (RISE), Bhopal, India, 27–29 October 2017; IEEE: Piscataway, NJ, USA, 2017; pp. 223–231.

- Wang, X.; Wang, Z. The method for image retrieval based on multi-factors correlation utilizing block truncation coding. Pattern Recognit. 2014, 47, 3293–3303.

- Unar, S.; Wang, X.; Wang, C.; Wang, Y. A decisive content based image retrieval approach for feature fusion in visual and textual images. Knowl.-Based Syst. 2019, 179, 8–20.

- Wang, X.Y.; Chen, Z.F.; Yun, J.J. An effective method for color image retrieval based on texture. Comput. Stand. Interfaces 2012, 34, 31–35.

- Wang, C.; Wang, X.; Xia, Z.; Ma, B.; Shi, Y.Q. Image description with polar harmonic Fourier moments. IEEE Trans. Circuits Syst. Video Technol. 2019, 30, 4440–4452.

- Wang, C.; Wang, X.; Xia, Z.; Zhang, C. Ternary radial harmonic Fourier moments based robust stereo image zero-watermarking algorithm. Inf. Sci. 2019, 470, 109–120.

- Bhoi, A.K.; Mallick, P.K.; Liu, C.M.; Balas, V.E. Bio-Inspired Neurocomputing; Springer: Berlin/Heidelberg, Germany, 2021.

- Jyotiyana, M.; Kesswani, N. A Study on Deep Learning in Neurodegenerative Diseases and Other Brain Disorders. In Rising Threats in Expert Applications and Solutions; Springer: Berlin/Heidelberg, Germany, 2021; pp. 791–799.

- Montemurro, N.; Condino, S.; Cattari, N.; D’Amato, R.; Ferrari, V.; Cutolo, F. Augmented Reality-Assisted Craniotomy for Parasagittal and Convexity En Plaque Meningiomas and Custom-Made Cranio-Plasty: A Preliminary Laboratory Report. Int. J. Environ. Res. Public Health 2021, 18, 9955.

- Condino, S.; Montemurro, N.; Cattari, N.; D’Amato, R.; Thomale, U.; Ferrari, V.; Cutolo, F. Evaluation of a wearable AR platform for guiding complex craniotomies in neurosurgery. Ann. Biomed. Eng. 2021, 49, 2590–2605.

- Yildirim, M.; Cinar, A.C. Classification of White Blood Cells by Deep Learning Methods for Diagnosing Disease. Rev. D’Intell. Artif. 2019, 33, 335–340.

- Hassan, T.M.; Elmogy, M.; Sallam, E.S. Diagnosis of focal liver diseases based on deep learning technique for ultrasound images. Arab. J. Sci. Eng. 2017, 42, 3127–3140.

- Arjmand, A.; Angelis, C.T.; Christou, V.; Tzallas, A.T.; Tsipouras, M.G.; Glavas, E.; Forlano, R.; Manousou, P.; Giannakeas, N. Training of deep convolutional neural networks to identify critical liver alterations in histopathology image samples. Appl. Sci. 2020, 10, 42.

- Tabrizchi, H.; Mosavi, A.; Szabo-Gali, A.; Felde, I.; Nadai, L. Rapid COVID-19 diagnosis using deep learning of the computerized tomography Scans. In Proceedings of the 2020 IEEE 3rd International Conference and Workshop in Óbuda on Electrical and Power Engineering (CANDO-EPE), Budapest, Hungary, 18–19 November 2020; IEEE: Piscataway, NJ, USA, 2020; pp. 000173–000178.

- Sandhya, Y.; Sahoo, P.K.; Eswaran, K. Malaria Disease Detection Using Deep Learning Technique. Int. J. Adv. Sci. Technol. 2020, 29, 7736–7745.

- Murtaza, G.; Shuib, L.; Mujtaba, G.; Raza, G. Breast cancer multi-classification through deep neural network and hierarchical classification approach. Multimed. Tools Appl. 2020, 79, 15481–15511.

- Kieu, S.T.H.; Bade, A.; Hijazi, M.H.A.; Kolivand, H. A Survey of Deep Learning for Lung Disease Detection on Medical Images: State-of-the-Art, Taxonomy, Issues and Future Directions. J. Imaging 2020, 6, 131.

- McBee, M.P.; Awan, O.A.; Colucci, A.T.; Ghobadi, C.W.; Kadom, N.; Kansagra, A.P.; Tridandapani, S.; Auffermann, W.F. Deep learning in radiology. Acad. Radiol. 2018, 25, 1472–1480.

- Mazurowski, M.A.; Buda, M.; Saha, A.; Bashir, M.R. Deep learning in radiology: An overview of the concepts and a survey of the state of the art. arXiv 2018, arXiv:1802.08717.

- Basheera, S.; Ram, M.S.S. Classification of brain tumors using deep features extracted using CNN. In Journal of Physics: Conference Series; IOP Publishing: Secunderabad, India, 2019; Volume 1172, p. 012016.

- Sajjad, M.; Khan, S.; Muhammad, K.; Wu, W.; Ullah, A.; Baik, S.W. Multi-grade brain tumor classification using deep CNN with extensive data augmentation. J. Comput. Sci. 2019, 30, 174–182.

- Das, S.; Aranya, O.R.R.; Labiba, N.N. Brain tumor classification using convolutional neural network. In Proceedings of the 2019 1st International Conference on Advances in Science, Engineering and Robotics Technology (ICASERT), Dhaka, Bangladesh, 3–5 May 2019; IEEE: Piscataway, NJ, USA, 2019; pp. 1–5.

- Talo, M.; Baloglu, U.B.; Yıldırım, Ö.; Acharya, U.R. Application of deep transfer learning for automated brain abnormality classification using MR images. Cogn. Syst. Res. 2019, 54, 176–188.

- Çinar, A.; Yildirim, M. Detection of tumors on brain MRI images using the hybrid convolutional neural network architecture. Med. Hypotheses 2020, 139, 109684.

- Khawaldeh, S.; Pervaiz, U.; Rafiq, A.; Alkhawaldeh, R.S. Noninvasive grading of glioma tumor using magnetic resonance imaging with convolutional neural networks. Appl. Sci. 2017, 8, 27.

- Sharma, A.K.; Nandal, A.; Dhaka, A.; Koundal, D.; Bogatinoska, D.C.; Alyami, H. Enhanced Watershed Segmentation Algorithm-Based Modified ResNet50 Model for Brain Tumor Detection. BioMed Res. Int. 2022, 2022, 7348344.

- Arif, M.; Ajesh, F.; Shamsudheen, S.; Geman, O.; Izdrui, D.; Vicoveanu, D. Brain Tumor Detection and Classification by MRI Using Biologically Inspired Orthogonal Wavelet Transform and Deep Learning Techniques. J. Healthc. Eng. 2022, 2022, 2693621.

- Mamatha, S.; Krishnappa, H.; Shalini, N. Graph Theory Based Segmentation of Magnetic Resonance Images for Brain Tumor Detection. Pattern Recognit. Image Anal. 2022, 32, 153–161.

- Belfin, R.; Anitha, J.; Nainan, A.; Thomas, L. An Efficient Approach for Brain Tumor Detection Using Deep Learning Techniques. In Proceedings of the International Conference on Innovative Computing and Communications, Singapore, 12–13 July 2022; Springer: Berlin/Heidelberg, Germany, 2022; pp. 297–312.

- LeCun, Y.; Bottou, L.; Bengio, Y.; Haffner, P. Gradient-based learning applied to document recognition. Proc. IEEE 1998, 86, 2278–2324.

- Krizhevsky, A.; Sutskever, I.; Hinton, G.E. Imagenet classification with deep convolutional neural networks. Adv. Neural Inf. Process. Syst. 2012, 25, 1097–1105.

- Mikołajczyk, A.; Grochowski, M. Data augmentation for improving deep learning in image classification problem. In Proceedings of the 2018 International Interdisciplinary PhD Workshop (IIPhDW), Swinoujscie, Poland, 9–12 May 2018; IEEE: Piscataway, NJ, USA, 2018; pp. 117–122.

- Engstrom, L.; Tran, B.; Tsipras, D.; Schmidt, L.; Madry, A. A Rotation and a Translation Suffice: Fooling CNNs with Simple Transformations. 2018. Available online: https://openreview.net/forum?id=BJfvknCqFQ (accessed on 20 February 2022).

- Goodfellow, I.; Pouget-Abadie, J.; Mirza, M.; Xu, B.; Warde-Farley, D.; Ozair, S.; Courville, A.; Bengio, Y. Generative adversarial nets. Adv. Neural Inf. Process. Syst. 2014, 27.

- Nayak, D.R.; Padhy, N.; Mallick, P.K.; Zymbler, M.; Kumar, S. Brain Tumor Classification Using Dense Efficient-Net. Axioms 2022, 11, 34.

- Wei, K.; Li, T.; Huang, F.; Chen, J.; He, Z. Cancer classification with data augmentation based on generative adversarial networks. Front. Comput. Sci. 2022, 16, 162601.

- Mzoughi, H.; Njeh, I.; Wali, A.; Slima, M.B.; BenHamida, A.; Mhiri, C.; Mahfoudhe, K.B. Deep multi-scale 3D convolutional neural network (CNN) for MRI gliomas brain tumor classification. J. Digit. Imaging 2020, 33, 903–915.

- LeCun, Y.; Jackel, L.; Bottou, L.; Brunot, A.; Cortes, C.; Denker, J.; Drucker, H.; Guyon, I.; Muller, U.; Sackinger, E.; et al. Comparison of learning algorithms for handwritten digit recognition. In Proceedings of the International Conference on Artificial Neural Networks, Perth, Australia, 27 November–1 December 1995; Volume 60, pp. 53–60.

- Wang, G.; Gong, J. Facial expression recognition based on improved LeNet-5 CNN. In Proceedings of the 2019 Chinese Control And Decision Conference (CCDC), Nanchang, China, 3–5 June 2019; IEEE: Piscataway, NJ, USA, 2019; pp. 5655–5660.

- Zhang, Z.H.; Yang, Z.; Sun, Y.; Wu, Y.F.; Xing, Y.D. Lenet-5 Convolution Neural Network with Mish Activation Function and Fixed Memory Step Gradient Descent Method. In Proceedings of the 2019 16th International Computer Conference on Wavelet Active Media Technology and Information Processing, Chengdu, China, 13–15 December 2019; IEEE: Piscataway, NJ, USA, 2019; pp. 196–199.

- Rongshi, D.; Yongming, T. Accelerator implementation of Lenet-5 convolution neural network based on FPGA with HLS. In Proceedings of the 2019 3rd International Conference on Circuits, System and Simulation (ICCSS), Nanjing, China, 20–22 July 2019; IEEE: Piscataway, NJ, USA, 2019; pp. 64–67.

- Szegedy, C.; Liu, W.; Jia, Y.; Sermanet, P.; Reed, S.; Anguelov, D.; Erhan, D.; Vanhoucke, V.; Rabinovich, A. Going deeper with convolutions. In Proceedings of the IEEE Conference on Computer Vision and Pattern Recognition, Boston, MA, USA, 7–12 June 2015; pp. 1–9.

- He, K.; Zhang, X.; Ren, S.; Sun, J. Deep residual learning for image recognition. In Proceedings of the IEEE Conference on Computer Vision and Pattern Recognition, Las Vegas, NV, USA, 27–30 June 2016; pp. 770–778.

- Simonyan, K.; Zisserman, A. Very deep convolutional networks for large-scale image recognition. arXiv 2014, arXiv:1409.1556.

- Huang, G.; Liu, Z.; Van Der Maaten, L.; Weinberger, K.Q. Densely connected convolutional networks. In Proceedings of the IEEE Conference on Computer Vision and Pattern Recognition, Honolulu, HI, USA, 21–26 July 2017; pp. 4700–4708.

- Shah, U.; Harpale, A. A Review of Deep Learning Models for Computer Vision. In Proceedings of the 2018 IEEE Punecon, Pune, India, 30 November–2 December 2018; IEEE: Piscataway, NJ, USA, 2018; pp. 1–6.

- Peters, J.F. Foundations of Computer Vision: Computational Geometry, Visual Image Structures and Object Shape Detection; Springer: Berlin/Heidelberg, Germany, 2017; Volume 124.

- Li, Y.H.; Aslam, M.S.; Yang, K.L.; Kao, C.A.; Teng, S.Y. Classification of body constitution based on TCM philosophy and deep learning. Symmetry 2020, 12, 803.

- Chen, Q.; Xie, Q.; Yuan, Q.; Huang, H.; Li, Y. Research on a real-time monitoring method for the wear state of a tool based on a convolutional bidirectional LSTM model. Symmetry 2019, 11, 1233.

- Iandola, F.N.; Han, S.; Moskewicz, M.W.; Ashraf, K.; Dally, W.J.; Keutzer, K. SqueezeNet: AlexNet-level accuracy with 50x fewer parameters and <0.5 MB model size. arXiv 2016, arXiv:1602.07360.

- Howard, A.G.; Zhu, M.; Chen, B.; Kalenichenko, D.; Wang, W.; Weyand, T.; Andreetto, M.; Adam, H. Mobilenets: Efficient convolutional neural networks for mobile vision applications. arXiv 2017, arXiv:1704.04861.

- Wang, N.; Li, Y.; Liu, H. Reinforced Neighbour Feature Fusion Object Detection with Deep Learning. Symmetry 2021, 13, 1623.

- Zhang, J.; Liu, J.; Wang, Z. Convolutional Neural Network for Crowd Counting on Metro Platforms. Symmetry 2021, 13, 703.

- LeCun, Y.; Jackel, L.D.; Bottou, L.; Cortes, C.; Denker, J.S.; Drucker, H.; Guyon, I.; Muller, U.A.; Sackinger, E.; Simard, P.; et al. Learning algorithms for classification: A comparison on handwritten digit recognition. Neural Netw. Stat. Mech. Perspect. 1995, 261, 2.

- Deng, L. The mnist database of handwritten digit images for machine learning research [best of the web]. IEEE Signal Process. Mag. 2012, 29, 141–142.

- Zhu, X.; Bain, M. B-CNN: Branch convolutional neural network for hierarchical classification. arXiv 2017, arXiv:1709.09890.

- Kanwal, K.; Ahmad, K.T.; Khan, R.; Abbasi, A.T.; Li, J. Deep learning using symmetry, fast scores, shape-based filtering and spatial mapping integrated with cnn for large scale image retrieval. Symmetry 2020, 12, 612.

- Abd El Kader, I.; Xu, G.; Shuai, Z.; Saminu, S.; Javaid, I.; Salim Ahmad, I. Differential deep convolutional neural network model for brain tumor classification. Brain Sci. 2021, 11, 352.

- Perez, L.; Wang, J. The effectiveness of data augmentation in image classification using deep learning. arXiv 2017, arXiv:1712.04621.

- Wong, S.C.; Gatt, A.; Stamatescu, V.; McDonnell, M.D. Understanding data augmentation for classification: When to warp? In Proceedings of the 2016 International Conference on Digital Image Computing: Techniques and Applications (DICTA), Gold Coast, Australia, 30 November–2 December 2016; IEEE: Piscataway, NJ, USA, 2016; pp. 1–6.

- Khan, H.A.; Jue, W.; Mushtaq, M.; Mushtaq, M.U. Brain tumor classification in MRI image using convolutional neural network. Math. Biosci. Eng. 2020, 17, 6203.

- Li, Q.; Yu, Z.; Wang, Y.; Zheng, H. TumorGAN: A multi-modal data augmentation framework for brain tumor segmentation. Sensors 2020, 20, 4203.

- Işın, A.; Direkoğlu, C.; Şah, M. Review of MRI-based brain tumor image segmentation using deep learning methods. Procedia Comput. Sci. 2016, 102, 317–324.

- Aslan, M.F.; Unlersen, M.F.; Sabanci, K.; Durdu, A. CNN-based transfer learning–BiLSTM network: A novel approach for COVID-19 infection detection. Appl. Soft Comput. 2021, 98, 106912.

- Aslan, M.F.; Sabanci, K.; Durdu, A.; Unlersen, M.F. COVID-19 diagnosis using state-of-the-art CNN architecture features and Bayesian Optimization. Comput. Biol. Med. 2022, 2022, 105244.

- Sujit, S.J.; Bonfante, E.; Aein, A.; Coronado, I.; Riascos-Castaneda, R.; Giancardo, L. Deep learning enabled brain shunt valve identification using mobile phones. Comput. Methods Programs Biomed. 2021, 210, 106356.

- Ghosh, A.; Soni, B. An Automatic Tumor Identification Process to Classify MRI Brain Images. In Data Science; Springer: Berlin/Heidelberg, Germany, 2021; pp. 315–327.

- Hossain, M.F.; Islam, M.A.; Hussain, S.N.; Das, D.; Amin, R.; Alam, M.S. Brain Tumor Classification from MRI Images Using Convolutional Neural Network. In Proceedings of the 2021 IEEE International Conference on Artificial Intelligence in Engineering and Technology (IICAIET), Kota Kinabalu, Malaysia, 13–15 September 2021; IEEE: Piscataway, NJ, USA, 2021; pp. 1–6.

- Wahid, R.R.; Anggraeni, F.T.; Nugroho, B. Brain Tumor Classification with Hybrid Algorithm Convolutional Neural Network-Extreme Learning Machine. Ijconsist J. 2021, 3, 29–33.

- Zhaputri, A.; Hayaty, M.; Laksito, A.D. Classification of Brain Tumour MRI Images using Efficient Network. In Proceedings of the 2021 4th International Conference on Information and Communications Technology (ICOIACT), Virtually, 30–31 August 2021; IEEE: Piscataway, NJ, USA, 2021; pp. 108–113.

| Layer | Filters | Kernel Size | Stride | Size of Feature Maps |
|---|---|---|---|---|
| Input | - | 3 × 3 | - | 224 × 224 × 3 |
| Conv(1) | 64 | 3 × 3 | - | 224 × 224 × 64 |
| Conv(2) | 64 | 3 × 3 | - | 224 × 224 × 64 |
| Pooling(1) | 64 | - | 2 × 2 | 112 × 112 × 64 |
| Conv(3) | 128 | 3 × 3 | - | 112 × 112 × 128 |
| Conv(4) | 128 | 3 × 3 | - | 112 × 112 × 128 |
| Pooling(2) | 128 | - | 2 × 2 | 56 × 56 × 128 |
| Conv(5) | 256 | 3 × 3 | - | 56 × 56 × 256 |
| Conv(6) | 256 | 3 × 3 | - | 56 × 56 × 256 |
| Conv(7) | 256 | 3 × 3 | - | 56 × 56 × 256 |
| Pooling(3) | 256 | - | 2 × 2 | 28 × 28 × 256 |
| Conv(8) | 512 | 3 × 3 | - | 28 × 28 × 512 |
| Conv(9) | 512 | 3 × 3 | - | 28 × 28 × 512 |
| Conv(10) | 512 | 3 × 3 | - | 28 × 28 × 512 |
| Pooling(4) | 512 | - | 2 × 2 | 14 × 14 × 512 |
| Conv(11) | 512 | 3 × 3 | - | 14 × 14 × 512 |
| Conv(12) | 512 | 3 × 3 | - | 14 × 14 × 512 |
| Conv(13) | 512 | 3 × 3 | - | 14 × 14 × 512 |
| Pooling(5) | 512 | - | 2 × 2 | 7 × 7 × 512 |
| F1 | - | - | - | 25,088 |
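The feature-map sizes in the table can be checked by tracing the shape through the network. The sketch below assumes that each 3 × 3 convolution uses stride 1 with one pixel of padding (so height and width are unchanged, as the table's sizes suggest) and that each pooling step halves height and width; the names `VGG16_BLOCKS` and `trace_shapes` are hypothetical.

```python
# (number of 3x3 conv layers, output channels) for the five blocks in the table
VGG16_BLOCKS = [(2, 64), (2, 128), (3, 256), (3, 512), (3, 512)]


def trace_shapes(height=224, width=224):
    """Return the (H, W, C) output shape of every conv and pooling layer."""
    shapes = []
    for n_convs, channels in VGG16_BLOCKS:
        # Assumed: padded 3x3 convs with stride 1 keep H and W unchanged.
        shapes.extend([(height, width, channels)] * n_convs)
        # 2x2 pooling with stride 2 halves H and W.
        height, width = height // 2, width // 2
        shapes.append((height, width, channels))
    return shapes


shapes = trace_shapes()
h, w, c = shapes[-1]
flattened = h * w * c  # length of the vector fed to the first dense layer
print(shapes[-1], flattened)
```

Five halvings take 224 down to 7, so the last pooling layer outputs 7 × 7 × 512 = 25,088 values, which is the size listed for F1.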

| Networks | Without Augmentation | With Augmentation | Whole | Core | Enhanced | Mean |
|---|---|---|---|---|---|---|
| Cascaded Net | X | | 0.848 | 0.748 | 0.643 | 0.746 |
| Cascaded Net | | X | 0.853 | 0.791 | 0.692 | 0.778 |
| U-Net | X | | 0.783 | 0.672 | 0.609 | 0.687 |
| U-Net | | X | 0.806 | 0.704 | 0.611 | 0.706 |
| Deeplab-v3 | X | | 0.820 | 0.700 | 0.571 | 0.697 |
| Deeplab-v3 | | X | 0.831 | 0.762 | 0.584 | 0.725 |

| Dataset | Number of Images |
|---|---|
| Original dataset | 253 |
| Tumor brain MRI images | 155 |
| Non-tumor MRI images | 98 |
| After augmentation | 3700 |
| Training | 185 |
| Validation | 48 |
| Test | 20 |
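The training, validation, and test counts add up to the 253 original images (185 + 48 + 20). A split of that shape can be sketched as below; the random shuffling, the seed, and the function name `split_indices` are assumptions, since the table does not say how the images were assigned or whether the split was stratified by class.

```python
import random


def split_indices(n_items, n_train, n_val, seed=42):
    """Shuffle item indices and cut them into train / validation / test lists."""
    if n_train + n_val > n_items:
        raise ValueError("train and validation sizes exceed the dataset size")
    indices = list(range(n_items))
    random.Random(seed).shuffle(indices)  # seeded for reproducibility
    train = indices[:n_train]
    val = indices[n_train:n_train + n_val]
    test = indices[n_train + n_val:]      # whatever remains
    return train, val, test


train, val, test = split_indices(n_items=253, n_train=185, n_val=48)
print(len(train), len(val), len(test))
```

Splitting before augmenting, and augmenting only the training part, keeps transformed copies of a test image out of the training set.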

Publisher’s Note: MDPI stays neutral with regard to jurisdictional claims in published maps and institutional affiliations.

© 2022 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (https://creativecommons.org/licenses/by/4.0/).

Alsaif, H.; Guesmi, R.; Alshammari, B.M.; Hamrouni, T.; Guesmi, T.; Alzamil, A.; Belguesmi, L. A Novel Data Augmentation-Based Brain Tumor Detection Using Convolutional Neural Network. Appl. Sci. 2022, 12, 3773. https://doi.org/10.3390/app12083773