1. Introduction

Ensuring the health, livelihood and safety of people is a main concern in modern times. The World Health Organization reports that more than 1.3 million deaths are caused by road accidents each year [1]. Climate change is another concern that affects people's health and quality of life. In 2018, transport was responsible for 24% of global CO2 emissions, most of which came from road passenger (45.1%) and road freight (29.4%) transport [2]. These concerns are being addressed, as reflected by megatrends in mobility such as electrification, shared mobility, connected mobility and automated driving. In this study, we focus on automated driving and, in particular, its link to road infrastructure. The primary goal of automated driving systems (ADS) is enhancing road safety. Eichberger et al. [3] showed, in 2011, that lane keeping assistance (LKA) would prevent 17% of fatal traffic accidents in Austria, and further enhance it by an additional 13%. Kusano et al. [4] investigated the influence of forward collision warning (FCW) and lane departure warning (LDW) technology on traffic accidents. The authors concluded that FCW could prevent up to 69% of moderate to fatal driver injuries in rear-end collisions, and that LDW could prevent up to 22% of serious to fatal injuries caused by drift-out-of-lane crashes. Benson et al. [5] estimated the benefits of combining multiple advanced driver assistance systems (ADAS) for accident prevention. The research estimated that approximately 40% of all passenger-vehicle crashes, 37% of injuries and 29% of deaths in such crashes could be prevented. More recent ADAS technology such as intelligent speed assistance (ISA) has the potential to reduce accidents by 30% and deaths by 20% [6]. However, misuse of advanced technology potentially leads to distracted driving, which is already showing an increasing trend as an accident factor. Moreover, partial automation causes earlier signs of sleepiness than manual driving [7]. Therefore, developing highly reliable ADAS is essential.

To achieve a high level of reliability, ADAS must be improved through onboard perception sensors, decision logic and controllers. However, this may not be enough. Improvements in the road infrastructure also play a role in achieving the desired level of reliability; yet road infrastructure design has so far been guided by the needs of human drivers. Ambiguous traffic signs and road markings can lead to recognition failure by ADAS, regardless of the cause, be it design patterns, insufficiently maintained road infrastructure or nonharmonised appearances of traffic signs and road markings. Sensor fusion and digitalisation of the road network through vehicular communication and digital maps offer promising solutions to increase the reliability of the entire system by providing redundancy. Moreover, such an approach promotes the implementation of a safety culture as a vital part of establishing functional safety within an organisation and the life cycle of a product, as recommended by ISO 26262 [8].

The synergy between ADS and road infrastructure is a well-recognised topic of inquiry that has been increasingly addressed in recent years. One of the first comprehensive studies on this topic was conducted in the US as part of the National Cooperative Highway Research Program project [9]. The investigation was based on a field study examining the effect of the quality of longitudinal white and yellow road markings on their detectability and readability by machine vision (MV) systems in vehicles. Similar extensive studies were conducted by Austroads, which examined the performance of lane keeping assist (LKA) and traffic sign recognition (TSR) based on literature research, stakeholder consultation and on-road and off-road tests [10,11]. They aimed to specify recommendations for changes in Australian and New Zealand road infrastructure as well as the level of maintenance required to support MV systems. Austroads also conducted extensive studies regarding connected and automated vehicles (CAVs) and infrastructure changes to support automated vehicles (AVs). The studies were conducted through five modules in which the authors examined road standards and gaps in physical and digital road infrastructure, mostly related to traffic signs and road markings [12,13,14,15,16]. They concluded that, in Australia and New Zealand, only a few roads are fully suitable for automated driving over an extended distance. They also reported that the main reasons why road authorities do not develop new standards are a lack of guidance and the associated costs. In addition to those five modules, Austroads carried out a study on open data policies through literature research and interviews with stakeholders involved in the CAV data distribution chain [17]. The Permanent International Association of Road Congresses (PIARC) reported on challenges and opportunities for road operators and road authorities in the domain of CAVs [18,19]. The European Commission (EC) is also very active with EU-funded research and directives to support future trends in mobility. One such example is the INFRAMIX project, which introduced so-called infrastructure support levels for automated driving (ISAD) to categorise road infrastructure based on its capability to support automated driving [20].

The focus of this paper is to provide a clear and concise overview of the synergy and existing limitations between road infrastructure and ADS. This paper is intended as an addition to reports that identify challenges and opportunities on this particular topic. An extensive technology survey and cost–benefit analysis are not in the scope of this paper. Our target audience is researchers who are new to the field, road authorities and bodies involved in international activities towards defining the requirements of road infrastructure for the successful adoption of ADS. For this audience, a basic overview of state-of-the-art ADS is a valuable source of information, as it can clarify the root causes of the limitations that ADS face. As a whole, the contributions of this paper are:

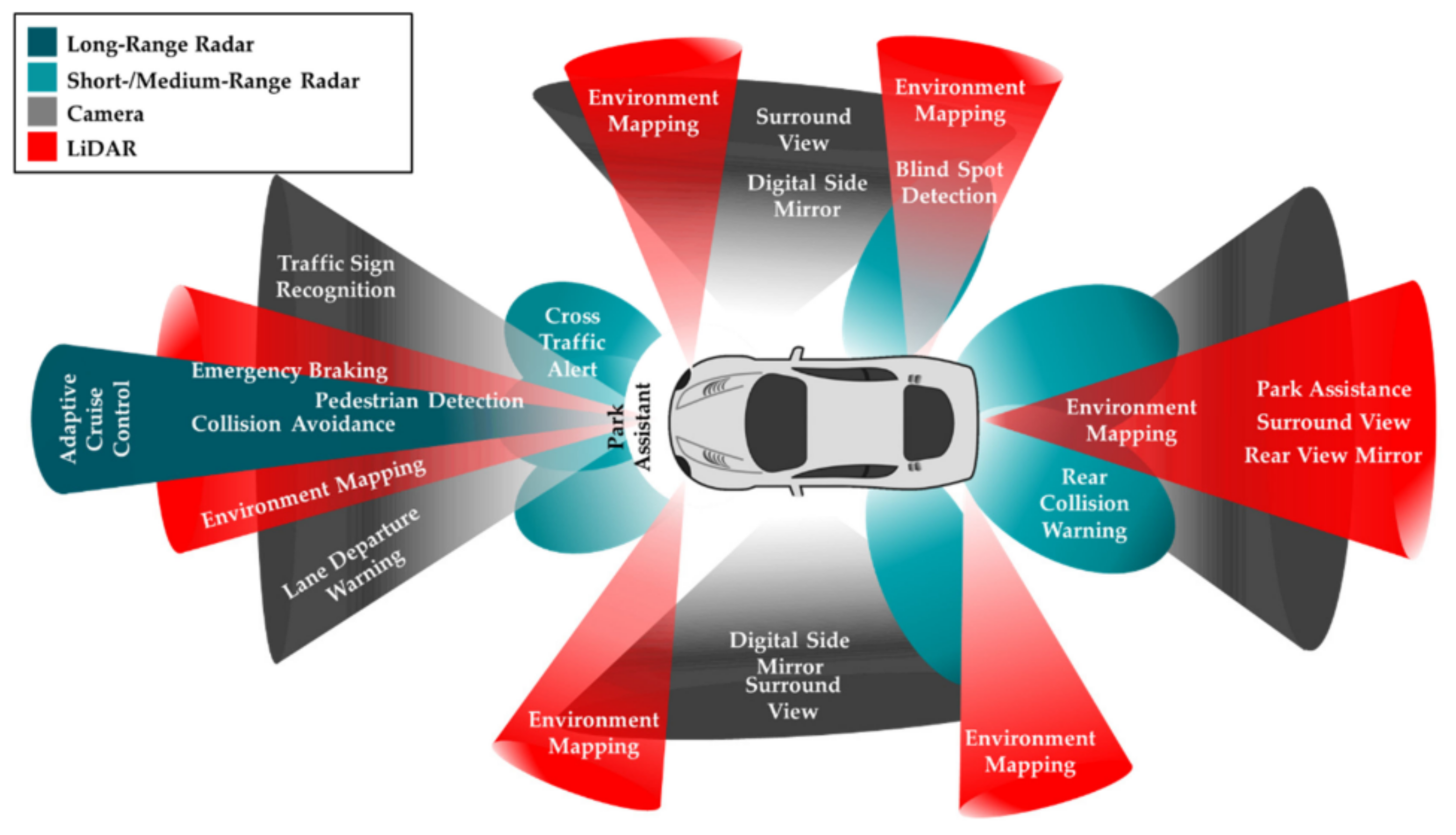

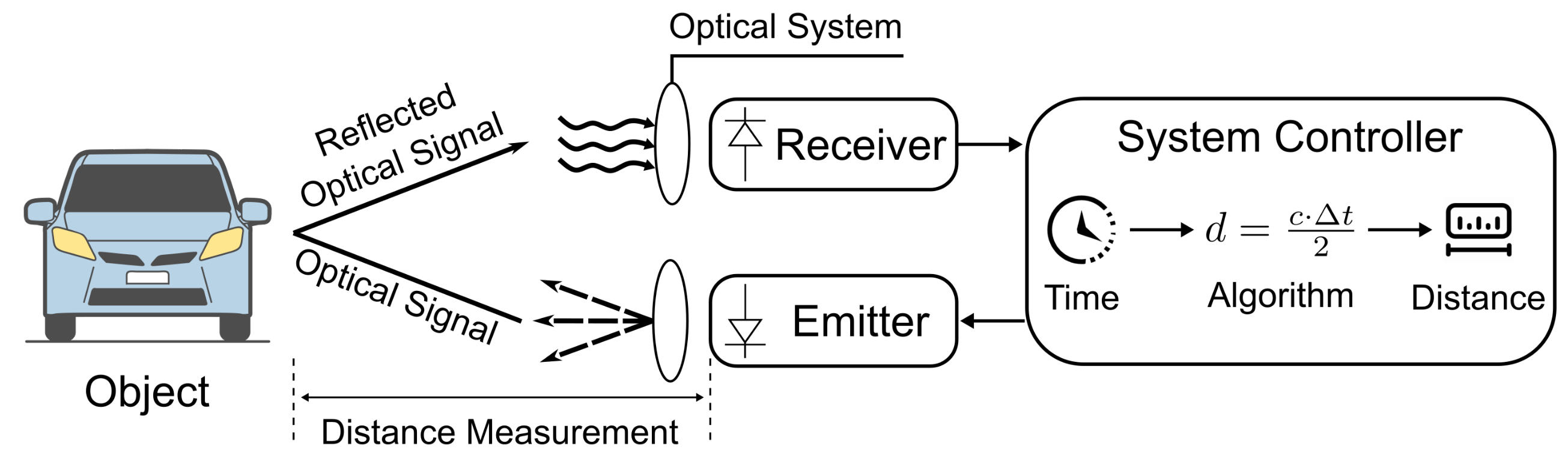

Provision of a short overview and the basic work principles of the most used and promising state-of-the-art ADS technologies.

Determination of the limitations and advances of ADS concerning road infrastructure (particularly for road markings and traffic signs).

Categorisation of the gaps and limitations according to challenges that should be addressed to support the transition towards higher levels of automation.

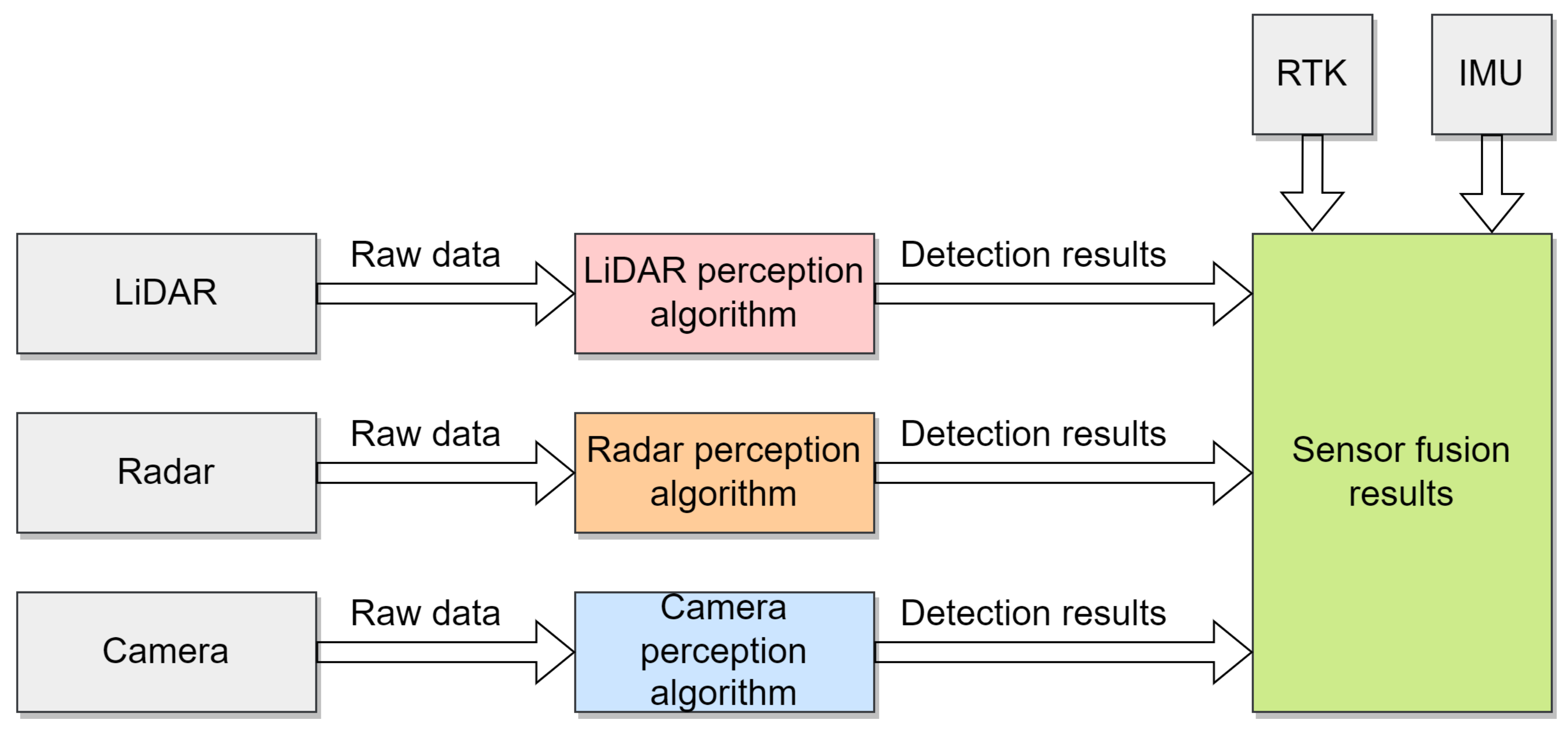

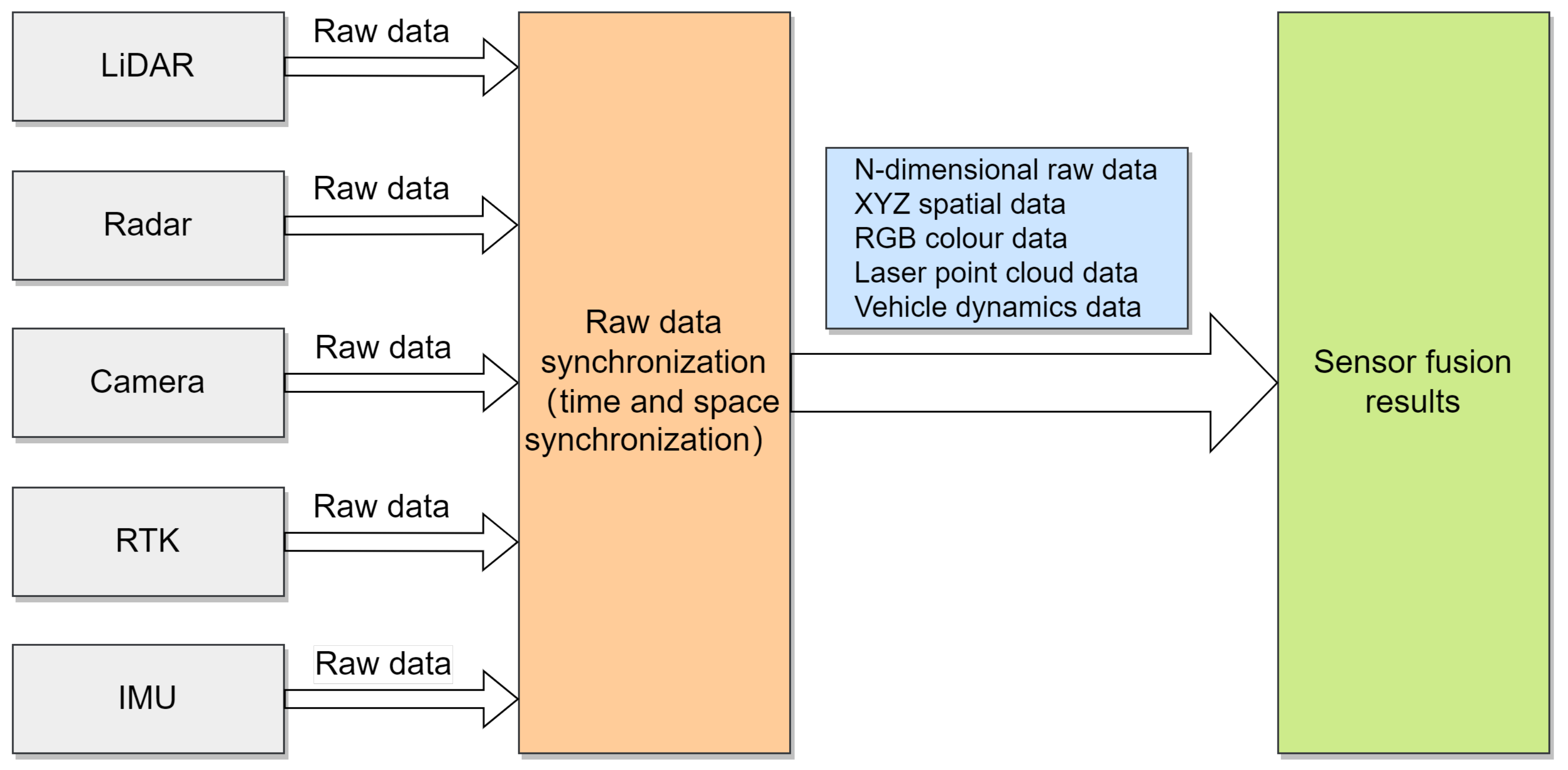

We approach the problem by first providing a short introduction to perception sensors and their basic working principles. This is the key to identifying the root cause of the limitations. In addition to this overview, sensor fusion, digital maps and vehicular communication are introduced, which provide insight into emerging and promising technologies that aim to enhance ADS reliability. This state-of-the-art overview is given in Section 2. In Section 3, we introduce known limitations and advances in ADS technology from the perspective of road infrastructure. To address this topic properly, we selected traffic sign recognition systems (TSRS) and lane support systems (LSS) as reference advanced driver assistance systems (ADAS). Both are essential and mature technologies for automated vehicles (AVs) and are directly linked to the road infrastructure, which makes them ideal candidates for this paper. From the identified limitations, we derive gaps that could be addressed in future research activities. Moreover, those limitations are grouped into five challenges, and a summary of their mutual impacts and dependencies is included. The challenges are organised into the following categories: international harmonisation, revision in the maintenance of the road infrastructure, review of common design patterns, digitalisation of road networks, and interdisciplinarity. Discussion of the challenges is found in Section 4. The paper concludes with Section 6.

Publications considered for this review are reports compiled by governmental organisations or transport associations, classical peer-reviewed research publications, and web research to support findings with existing or emerging commercial solutions. As this is a fast-growing field, we focused our research on more recent studies published after 2015, with a few exceptions that we still considered state-of-the-art. Due to the limited number of peer-reviewed academic publications related to the limitations and performance of current ADAS regarding road infrastructure, the reports are considered the primary information resource. Through exhaustive reports, usually associated with projects led by governmental organisations, we were able to review the limitations and thresholds that could be considered adequate performance of ADS for given infrastructure bounds. Some of those publications are based on extensive literature research, stakeholder interviews and experiments and, therefore, they provide not only scientific but also real-world experience. However, due to the lack of research papers that could distinguish the significance of the parameters of infrastructure regarding ADAS performance, we are not, at this stage, able to propose adequate thresholds for each parameter.

4. Identification of Challenges

After reviewing the state-of-the-art and the literature, we identified five challenges: international harmonisation of traffic signs and road markings, revision in the maintenance of the road infrastructure to support future mobility, review of current design patterns used for road infrastructure, digitalisation through digital maps and vehicular communication, and finally interdisciplinarity, which is needed to cope with jobs that may arise due to the transition towards self-driving.

4.1. Harmonisation

Studies that investigated improvements of traffic signs and road markings through a literature review, stakeholder interviews and tests have highlighted harmonisation as one of the main challenges for a smooth transition towards a higher level of automation [10,11,19]. Despite standards for traffic signs, such as the US Manual on Uniform Traffic Control Devices (MUTCD) and the Vienna Convention, many countries and jurisdictions apply their own rules and implementations. Nonharmonised signs and markings result in higher efforts required in data collection and training of recognition algorithms, which is also of increased interest for digitalisation. Besides data collection, digitalisation also requires the harmonisation of formats and protocols for data transfer.

When discussing harmonisation, many questions that require addressing can be identified:

Who should be involved in the harmonisation of road infrastructure?

Who benefits the most and what are the benefits of harmonisation?

How long should the adaptation period be?

To what extent should harmonisation be realised?

Harmonisation extends to the international level and, therefore, it is important that it is managed by international legislative bodies and organisations. However, road authorities and vehicle manufacturers are the ones that need to define requirements and technical content. Vehicle manufacturers, together with their suppliers and research institutes, are aware of the technical capabilities and limitations of ADAS; it is their responsibility to provide requirements to increase the reliability of ADAS. Road authorities, however, are the ones with the authority to change the road infrastructure. Therefore, to successfully adopt harmonisation, cooperation between those two stakeholders should be well established. Harmonisation would remove the big burden of data collection and training of recognition algorithms. That is the reason why vehicle manufacturers may benefit the most from it, though this would, to some extent, also be of benefit to the human driver in the long term. Reaching a consensus and changing signs and markings takes time and comes at some cost, especially for road authorities, which may result in resentment. However, clear guidance and extensive cost–benefit analysis could soften the process. Moreover, in the long term, additional costs could, to some extent, be compensated through the simplification of future digitalisation processes and the exchange of common expertise and processes, which could be one of the areas of increased demand in the foreseeable future, as digitalisation will open new jobs and opportunities. All these actions, whether reaching consensus or achieving compliance of human drivers, take time; thus, harmonisation should be looked at as a long-term process that could take place in parallel with the transition towards self-driving. To avoid unnecessary costs, the replacement can be done during maintenance.

How far this harmonisation should extend is a question of geography, culture and the daily implications of the transition for people, so it would make sense to include at least the largest landmasses under common regulation. Yet, several studies raised concern about the comprehensibility of traffic signs from a tourist perspective, which would lead to extending the harmonisation even further [74,75]. Although the parameters of road infrastructure such as shape, colours and fonts for traffic signs or colour and width for road markings are well recognised among all people, the text that often accompanies them is more a matter of culture and language and, therefore, is more difficult to harmonise. However, through digitalisation and the appearance of smart signs and markings, translation of the text to a machine-readable format is achievable.

4.2. Maintenance

Ensuring sufficient visibility of traffic signs and road markings through proper maintenance is one of the important tasks of road authorities. With the penetration of ADAS into the market, maintenance becomes even more relevant, as ADAS require clearly visible and unobstructed road infrastructure. This means that reflectivity and the contrast ratio should be kept within the range at which the reliability of ADAS is ensured. Moreover, road authorities should regularly repair damage and remove natural obstacles, such as bushes or snow. Therefore, there are a few questions we can ask ourselves when it comes to maintenance:

What are the minimum thresholds and important parameters for good visibility?

How can potential issues be detected in time?

What influence does digitalisation have on maintenance?

Will the costs of maintenance increase?

To investigate the implications of the road infrastructure for MV, many countries and organisations around the globe have already conducted extensive research [9,10,11]. Their findings are provided in previous sections through Table 5 and Table 7, which summarise the proposals for good detectability of the parameters, such as visibility, colours, width and material of the road markings and traffic signs. However, the maintenance periods cannot be generalised. Each region, even if it belongs to the same jurisdiction, can have certain characteristics that make it an exception. Examples are alpine roads that, due to harsh winters and frequent snow clearing, experience fast deterioration of road markings, which is especially important in discussions about more advanced technology, such as profiled markings that are beneficial under wet or rainy conditions. With digitalisation and further advances in automated driving, real-time maintenance, updates of digital maps and warning of obstacles ahead will be of huge importance. Information on the local road conditions is of high interest for automated driving functions that have to adapt their strategies, such as the distance to other traffic participants and the decision making concerning braking and steering interventions, to the coefficient of friction between tire and road. The potential of combining vehicle and infrastructure data, e.g., for maintenance, is shown by [76] and with weather data by [77]. This offers both opportunities and additional tasks for road authorities. To ensure the proper operation of ADAS, especially concerning higher levels of automation, the road should be subjected to stricter maintenance, which means all obstacles, ghost markings, potholes, etc., must be recognised and addressed in a timely manner. It is hard to imagine that it will be possible for all roads to be inspected simply through road monitoring by authorised vehicles. Therefore, information could be collected through public and private transport via crowdsourcing [43,44]. Whether this will completely relieve road authorities from the burden of manually updating databases, and to what extent collaboration between road authorities, map providers and vehicle manufacturers regarding data transfer remains a necessity, are open questions, but they are more a subject of digitalisation than maintenance. Crowdsourcing will, however, certainly reduce some of the expenses associated with monitoring but, in general, a reorganisation of the operational activities of conventional road authorities is needed. Digitalisation will involve additional expenses for maintenance and installation as well as a need for new expertise. At the same time, shared mobility tends to reduce the number of vehicles, which could lead to longer maintenance intervals, but at this point it is very hard to forecast in which direction traffic will develop.
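The crowdsourcing idea described above can be illustrated with a minimal Python sketch. It assumes that vehicles send simple reports tagged with a road segment and an issue type, and that an issue is only forwarded to the road authority once several distinct vehicles have reported it. The `Report` fields, the segment identifiers and the `min_vehicles` threshold are hypothetical and are not taken from the cited studies.

```python
from collections import defaultdict
from dataclasses import dataclass


@dataclass(frozen=True)
class Report:
    vehicle_id: str  # hypothetical pseudonymous vehicle identifier
    segment_id: str  # hypothetical road-segment identifier
    issue: str       # e.g. "pothole", "ghost_marking", "obstructed_sign"


def confirmed_issues(reports, min_vehicles=3):
    """Return {(segment_id, issue): n_vehicles} for every issue that was
    reported by at least `min_vehicles` distinct vehicles."""
    reporters = defaultdict(set)
    for r in reports:
        # A set ignores repeated reports from the same vehicle.
        reporters[(r.segment_id, r.issue)].add(r.vehicle_id)
    return {
        key: len(vehicles)
        for key, vehicles in reporters.items()
        if len(vehicles) >= min_vehicles
    }


reports = [
    Report("veh-1", "A2-km17", "pothole"),
    Report("veh-2", "A2-km17", "pothole"),
    Report("veh-2", "A2-km17", "pothole"),  # duplicate from the same vehicle
    Report("veh-3", "A2-km17", "pothole"),
    Report("veh-4", "B70-km3", "ghost_marking"),
]
print(confirmed_issues(reports))
```

Counting distinct vehicles rather than raw reports is one simple way to reduce the influence of a single faulty sensor; a real system would also need to consider time windows, positioning accuracy and data access rules.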

Maintenance is a complex challenge in which the burden is mostly placed on road authorities. Yet, it is still too early to precisely define the required actions. As support in performing their task, policies could be a good way to define clear guidance. However, before that, many other activities should be settled, such as open data policies for vehicular communication [18] and a clear definition of the most significant factors of road infrastructure that influence the performance of ADAS.

4.3. Design

Historically, road infrastructure has been developed with human drivers in mind. With the infiltration of ADS and ADAS, the perception of how road infrastructure is experienced changes. However, in the foreseeable future, until full automation is reached, both “worlds” should be satisfied. Moreover, the physical road infrastructure and onboard sensors are currently the priority and the most desirable forms. With advances in ADAS, however, the need for digitalisation will increase. Today, digital maps are only a supplement to physical infrastructure and onboard sensors, but this could change in the future. The questions we could ask to help us define and explain challenges regarding the design of traffic signs and road markings are the following:

To recap the design limitations of traffic signs and road markings, it is worth looking, once again, at the list of design limitations in Table 5 and Table 7 as well as the list of advances in Table 6 and Table 8. As we can see, ADAS have a problem in interpreting collocated traffic signs, textual messages or irregular and worn-out road markings. This problem can be solved partially with sensor fusion and digitalisation. Moreover, some of the problems are already solvable. Flickering of electronic signs is reported only in some cases and depends on the sign manufacturers and, therefore, could be solved by regulating the refresh rates of electronic signs. Another example is increasing the width of road markings from 100 to 150 mm, which is already adopted in the US [79]. Implementation of RFID, infrared barcodes and other smart solutions could be a solution for the transition period [59,60,61,62]. However, more drastic measures such as replacing dashed with solid lines to achieve better continuity are not viable in the foreseeable future, and solving these issues would therefore require some support from digitalisation. Another more drastic measure is dedicated lanes for AVs. There are mainly two ways to implement this approach: either to use current infrastructure and assign some of the lanes to AVs only, or to build completely new lanes. The latter is a less viable solution due to high costs and space limitations. Be that as it may, the interaction between human drivers and AVs is inevitable, and extensive traffic flow simulations and simulator studies are necessary to see the whole picture and the real traffic benefits [80]. Nevertheless, dedicated lanes would still require changes in the design of road markings and signs, stricter maintenance, harmonisation and digitalisation. A study on dedicated lanes for roundabouts came to the conclusion that dedicated lanes do not result in benefits at lower penetration rates of AVs and only confer a slight advantage at higher penetration rates [81]. Dedicated lanes, magnet markers and magnetic-induction lines are a possible option in some areas but not for all roads and not for wide application [82,83].

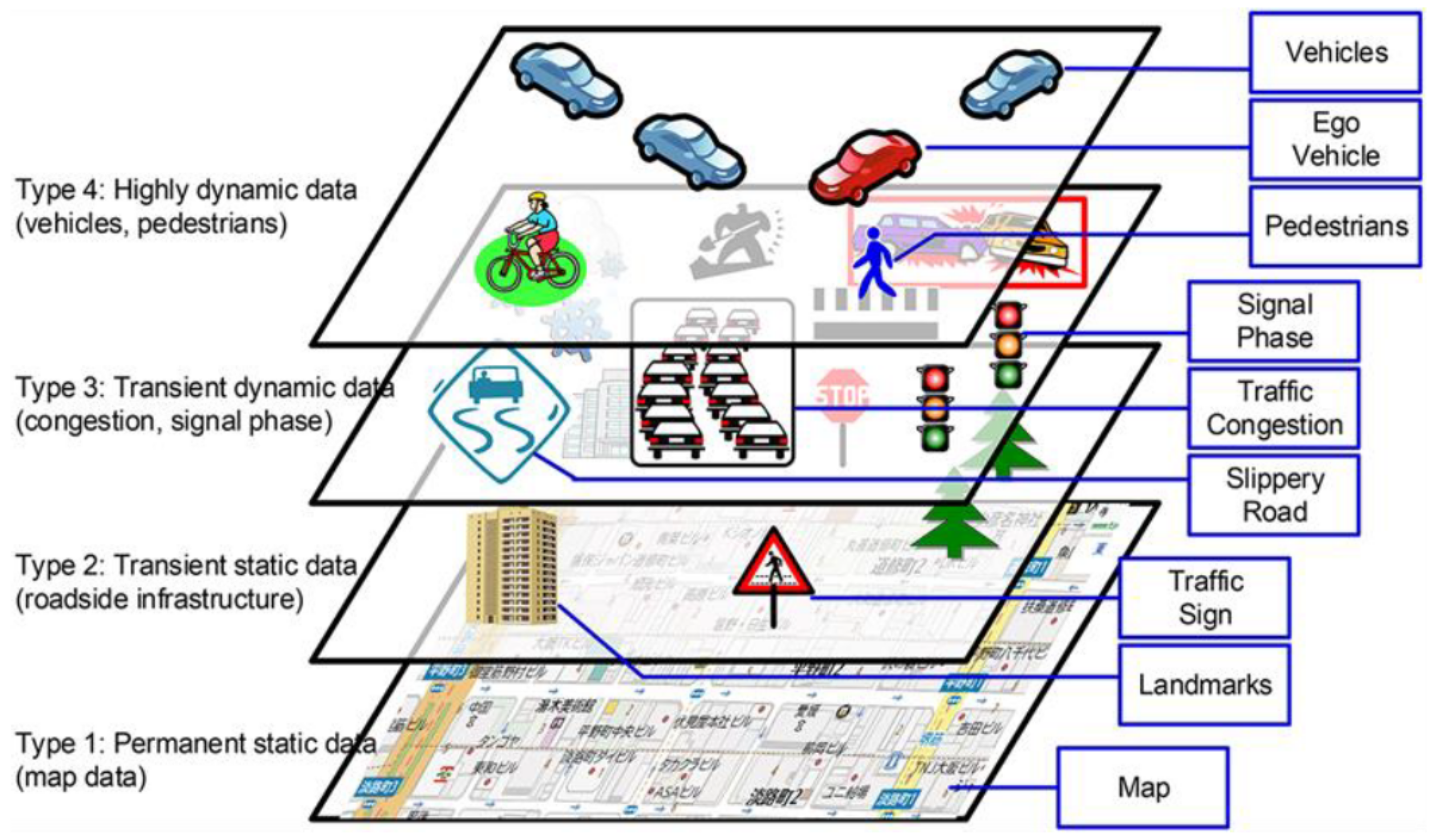

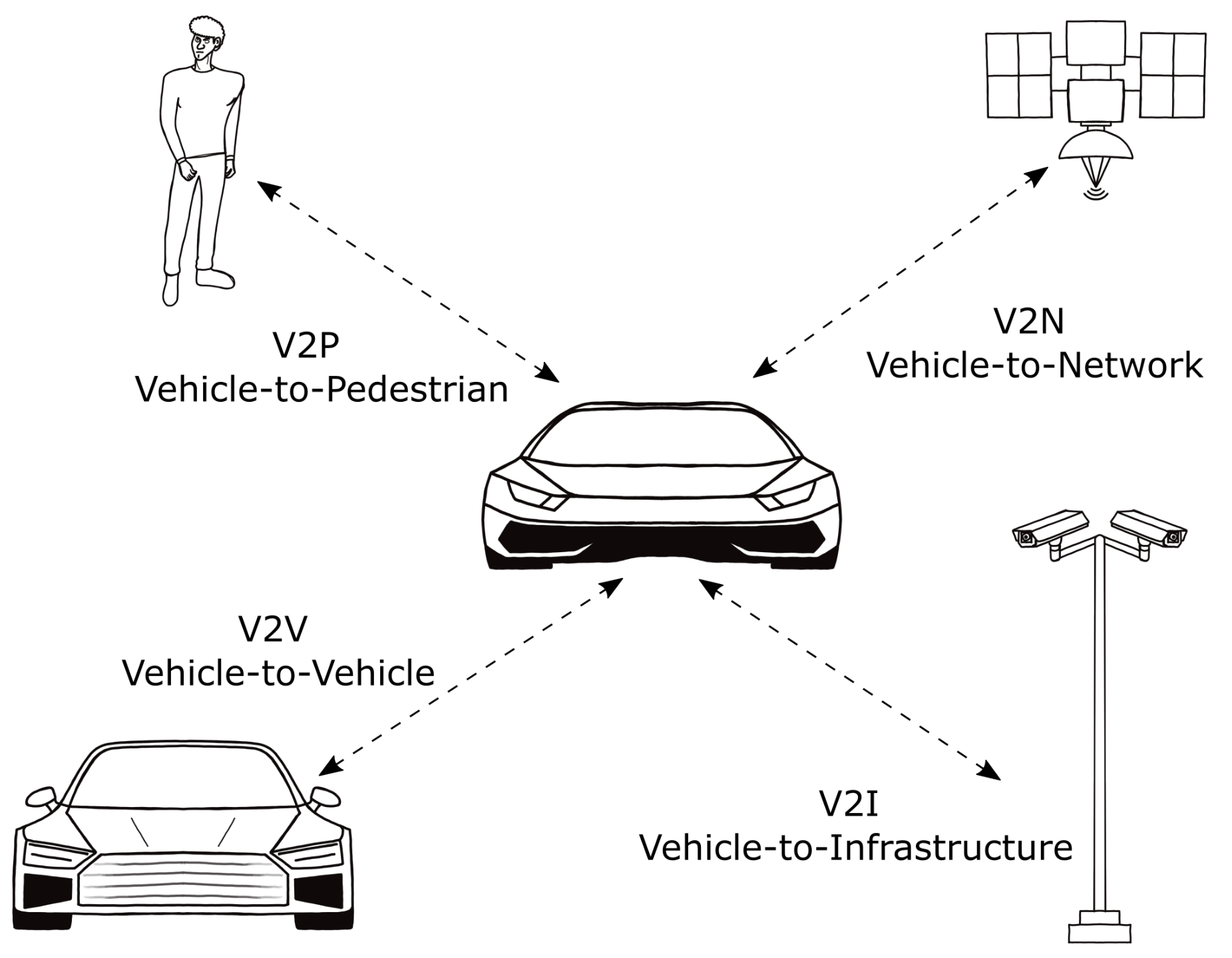

4.4. Digitalisation

Digitalisation of infrastructure is represented by V2I and V2N communication and digital maps. Many limitations that are present when onboard perception is used can be solved with digitalisation. Digitalisation supports AVs with information from outside the FOV and under harsh environmental conditions. Moreover, it represents a redundant system that increases the reliability of ADAS. The questions that could be asked to address the challenges are:

What are the requirements for digitalisation?

Is modern technology for digitalisation standardised?

What influences the penetration and acceptance of modern technology?

Is security advanced enough to resist cyber-attacks?

What are the responsibilities and opportunities related to large data collection?

To support AVs, especially at higher automation levels, it is important to ensure real-time information and the transfer of a large amount of data with low latency. Moreover, this large amount of data should be analysed and sent from vehicle to infrastructure and vice versa. Therefore, it is necessary to implement technology with such capability. Currently, high power computing and 5G networks show promising results, as they can handle the required data rate of 2.2 Gbit/s and latency of 10 ms [48,50,84]. However, those requirements are for safety-critical applications. Some applications do not require that level of performance, such as automated valet parking, where latency can be significantly higher and the range that needs to be covered is approximately 50 to 100 m, so only a moderate vehicle density of, e.g., 50 users is required. Such a requirement-based approach is used by 5GAA, which defines ADS use cases and categorises them as safety-related, vehicle operation management, autonomous driving, platooning, traffic efficiency and more [85,86]. Therefore, one of the challenges of digitalisation is to precisely define requirements and correctly identify technologies that can handle them. An example of a complication that could arise is overloading of a certain frequency band, e.g., 5.9 GHz for short-range communication, and a requirements-based approach could support strategies for prioritisation of certain functions. Overloading implies the importance of integrating different technologies, in which the definition of data types and standardisation would play an important role. However, huge efforts have already been invested in standardisation, and it is an open topic managed by organisations such as the European Telecommunications Standards Institute (ETSI), SAE and the European Committee for Standardization (CEN), among others. Moreover, integration of communication and computing of AI algorithms and switching from vehicle computing to cloud computing to gain the most out of collected data is another advance that is gaining increasing attention and, once again, posing challenges regarding the standardisation and technical requirements of digitalisation [48,87]. However, the efficiency of cooperative driving and smart road infrastructure is highly dependent on the number of vehicles equipped with such technology, e.g., to make the most of traffic flow and energy efficiency through smart intersections and traffic lights, and the vast amount of data that needs to be collected is only available if many cars already have such systems. Penetration of such vehicles is also a precondition for HD digital maps because, without real application, they do not make economic sense for map providers.
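The requirement-based approach discussed above can be sketched as a simple matching of use-case requirements against link capabilities. The following Python example is only an illustration: the safety-critical figures (10 ms, 2.2 Gbit/s) are taken from the text, whereas the valet parking requirements and both link profiles (`link_a`, `link_b`) are hypothetical placeholders and do not describe any real technology.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class UseCase:
    name: str
    max_latency_ms: float      # highest tolerable latency
    min_data_rate_mbps: float  # lowest acceptable data rate


@dataclass(frozen=True)
class Link:
    name: str
    latency_ms: float
    data_rate_mbps: float


def suitable_links(use_case, links):
    """Return the names of all links that meet both requirements."""
    return [
        link.name
        for link in links
        if link.latency_ms <= use_case.max_latency_ms
        and link.data_rate_mbps >= use_case.min_data_rate_mbps
    ]


# Requirements quoted in the text for safety-critical applications.
safety_critical = UseCase("safety_critical", max_latency_ms=10, min_data_rate_mbps=2200)
# Hypothetical, relaxed requirements for automated valet parking.
valet_parking = UseCase("valet_parking", max_latency_ms=500, min_data_rate_mbps=10)

# Hypothetical link profiles used only to exercise the function.
links = [
    Link("link_a", latency_ms=5, data_rate_mbps=3000),
    Link("link_b", latency_ms=100, data_rate_mbps=50),
]

for use_case in (safety_critical, valet_parking):
    print(use_case.name, suitable_links(use_case, links))
```

Under these assumptions, only the faster link qualifies for the safety-critical use case, while both links are acceptable for valet parking, which shows how less demanding functions could be moved to other channels when a band is overloaded.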

With higher penetration, however, higher security demands arise. Cyber-attacks are a huge threat to safety, allowing diverse attack possibilities in which even complete car controllers can be taken over [88,89,90]. The authors of [48] suggested that a cryptography-based approach might not be sufficient to tackle V2X security. Yet, emerging technology, such as blockchains, shows promising results [91,92,93].

With data collection come new business opportunities, but questions regarding data liability also arise. One study [48] addresses the issue that data monetisation could generate high revenues and open the door to new business models. However, it also comes with great responsibility because, to make the most of digitalisation, data should be collected from different entities and shared among them [94]. On the one hand, we have road authorities that will collect a huge amount of data through infrastructure and provide them to vehicles. However, they are public entities and obey national regulations, thus resulting in an open data policy. The private sector, on the other hand, comprising vehicle manufacturers and map providers, is not affected by national regulation and their data are confidential due to competition. Therefore, cooperation models will play a significant role [19]. Yet, in Europe, there exists legislation that deals with access to data by the private sector [95]. Moreover, liability issues regarding data have not been solved up to this point but will need to be addressed in the future. Liability for provided data is a sensitive topic and is currently rejected by road authorities [17]. This may change in the future if quality-based policies for data are established. Moreover, according to [17], the industry stated that liability is not a barrier for them, as other complementary data sources are available. Nevertheless, that should not be a reason for neglecting the requirement for accurate and reliable data.

4.5. Interdisciplinarity

The complexity of future mobility requires a wide range of expertise. Therefore, interdisciplinarity is essential when dealing with emerging technology. Yet, this is one aspect of the changes that is often neglected. The emergence of new technology has brought about challenges for road authorities and industry to find people capable of dealing with this complexity. If we just look at AVs, we can see that they combine mechanical engineering, electrical engineering and computer science in a way that can no longer be separated, as it was in the conventional development process. Moreover, AVs and ADAS are highly dependent on traffic and, still, on human drivers, who would need to be monitored during driving. Therefore, traffic sciences, psychology and even aspects of biotechnology are involved. This is, e.g., supported by the findings of the study in [96], where a system-theoretic process analysis was applied to improve existing road safety management. This shows the complex and interdisciplinary interactions required to maintain road safety. Without a doubt, this requires new expertise from the responsible persons. In addition, this study shows that feedback from human drivers on the perception of roads, markings and speed limitations is crucial in this process. When dealing with fully automated vehicles in the future, this feedback to the road authorities will be required from AVs. So there are questions we could ask ourselves:

Through this study, we saw that harmonisation, digitalisation and maintenance require a diversity of expertise. Regarding interdisciplinarity, we can take a global perspective, where the focus is on collaboration to define necessary policies, directives, regulations and standards that contribute to reliability and safety. Another perspective is more local, which affects a specific group of people such as academia or road authorities. Nevertheless, that is something that we can already see in academia and the projects that are established to support future mobility. To some extent, this can be applied to road authorities. Digitalisation may make some jobs redundant, but new opportunities will arise, especially in the field of big data and AI computing. For road authorities, one way to deal with this is through collaboration with vehicle manufacturers, telecommunication companies and others. However, integrating expertise in traffic and data analysis or communication is rather something that would be constantly needed, so there is a justified reason as to why they may want to deal with it internally.

5. Discussion

In the previous section, we derived five challenges that should be overcome to deal with future mobility. To summarise:

Harmonisation: It is a long-term process that involves local road authorities, vehicle manufacturers and legislative bodies as a managing organisation. Harmonisation should aim to accept a common interpretation of the existing standards (MUTCD or Vienna Convention) and upcoming formats and standards for digitalisation on the basis of large landmasses. The subject of harmonisation could be parameters that are easily distinguishable among different cultures, such as shape, colour and fonts, while textual and collocated signs could be solved through digitalisation. Nonharmonised road markings and signs among countries increase the complexity of technology design and hinder the acceptance of upcoming ADAS.

Maintenance: In recent years, many reports were written on recommendations for the maintenance and visibility of road markings and traffic signs. AVs will require stricter rules for maintenance, but digitalisation allows potential improvements in real-time road monitoring and maintenance period scheduling. These real-time updates will require access to data from public and private transport, which is part of data access regulation. Moreover, to keep a high level of maintenance and cope with oncoming technology, the road authorities will need to reform their finances and expertise. Yet, there may be a need for clear and precise guidance and policies to support the activities of road authorities.

Design: Through literature research, it was observed that some limitations of ADAS are caused by the design and placement of traffic signs and road markings, which do not normally cause issues for human drivers. Some of those limitations are solvable with sensor fusion, digitalisation or through new regulation. Issues that have viable solutions could be addressed in the next maintenance period, while others, such as changing the appearance of lines from dashed to solid, are not viable at least while human drivers are present. However, those particular issues are solvable through digitalisation.

Digitalisation: Digitalisation is an important and promising step with the potential to increase reliability and support environmental, maintenance and design limitations. However, it is also dependent on strict maintenance and real-time updates. To take full advantage of digitalisation, data access should first be regulated and standardised. Nevertheless, security must remain up-to-date with the progress of digitalisation and sufficiently resist or at least recognise cyber-attacks.

Interdisciplinarity: The need for people with strong skills in multiple fields, such as mechanical engineering, electrical engineering, computer science, traffic sciences and even psychology and biotechnology, is already a necessity and will be increasingly required in the future. To cope with this, universities and research activities already nurture multidisciplinarity. This is also of great importance for road authorities, which will need to strengthen their collaboration with research, vehicle manufacturers and telecommunication companies in addition to establishing new job opportunities and extending their in-house expertise.

We can observe strong links among the challenges that are defined through this study. We have noted that digitalisation solves many existing problems, not just in improving the reliability of ADS but also in supporting maintenance through real-time road monitoring via public and private transportation. To enable successful data exchange among road authorities, vehicle manufacturers, digital map providers and other stakeholders, a common consensus about data formats and standards should be achieved, which should also undergo harmonisation to allow normal traffic flow across the regions of different road jurisdictions. The same implementation of standards as in MUTCD or the Vienna Convention would simplify the process of digitalisation and the market penetration of ADS. Moreover, in the long term, digitalisation and smart solutions offer a promising alternative to designs that are convenient for human drivers and cannot be replaced so long as human drivers are present. We can see the strong connection of digitalisation with other challenges. For example, both harmonisation and design are long-term processes that should be updated along with the penetration of ADS technology and in harmony with the maintenance periods. We can also observe that the maintenance of road infrastructure will acquire new aspects in the future through digital infrastructure, which will require proper maintenance by a workforce that can cope with those tasks. Due to the collection of large datasets, new opportunities for road authorities will open up, and data analysis and AI will be fields that road authorities need to handle. Such expertise will make it possible to optimise traffic flows and energy efficiency. Yet, all those challenges should be looked at as long-term processes, some of whose applications will not be visible in the foreseeable future.