1. Introduction

Traffic prediction is an important part of the Intelligent Transportation System (ITS), which can reasonably allocate road resources, alleviate traffic congestion, and improve road traffic efficiency [

1,

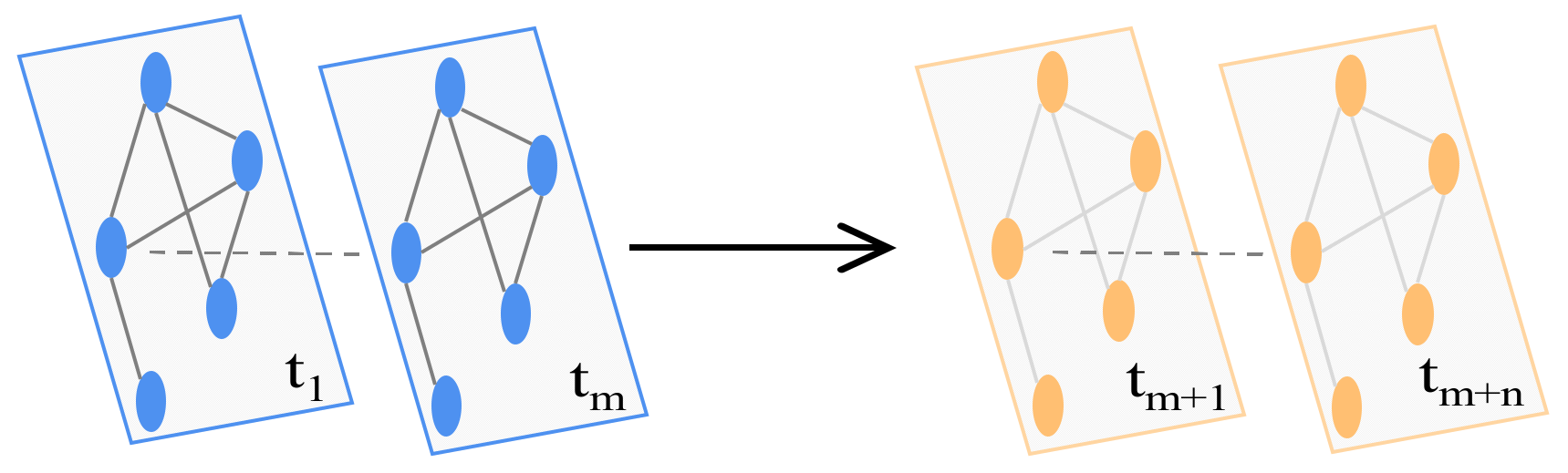

2]. Traffic prediction has always been extremely challenging because traffic in road networks changes dynamically over time. There is a complex, nonlinear and multimodal spatio-temporal dependency between historical traffic and predicted traffic. This temporal dependence relationship is expressed as the mutual influence of traffic at different times within the road network. The spatial dependence relationship is expressed as the mutual influence of the traffic between different roads within the road network, as shown in

Figure 1.

With the vigorous development of neural networks, deep learning has achieved success in obtaining complex nonlinear relationships [3]. Graph Convolutional Networks (GCNs) are good at processing nonlinear structural data and are widely used in traffic prediction [4,5,6,7]. Most existing traffic prediction studies are aimed at short-term prediction (15 min) [1,8,9,10]. DCRNN [8] uses the graph convolution operation to replace the original linear transformation in the recursive unit of the Recurrent Neural Network (RNN) for obtaining spatio-temporal information. To avoid the complex gating operation of RNN, STGCN [9] uses graph convolution and 1D convolution to obtain spatio-temporal information, reduce parameters, and achieve a good short-term prediction effect. GWnet [10] further uses graph convolution and block stacking of the Temporal Convolution Network (TCN) to achieve better short-term prediction. STSGCN [11] constructs a local spatio-temporal graph for convolution to obtain a better short-term prediction effect. GMAN [12] pointed out that existing models pay more attention to short-term prediction, and therefore used spatio-temporal attention and an encoder–decoder mechanism to obtain better long-term predictions (1 h). However, this did not significantly help with short-term predictions. To account for both short-term and long-term traffic prediction, we propose a new deep learning model, the Multi-Stage Spatio-Temporal Fusion Diffusion Graph Convolutional Network (MFDGCN).

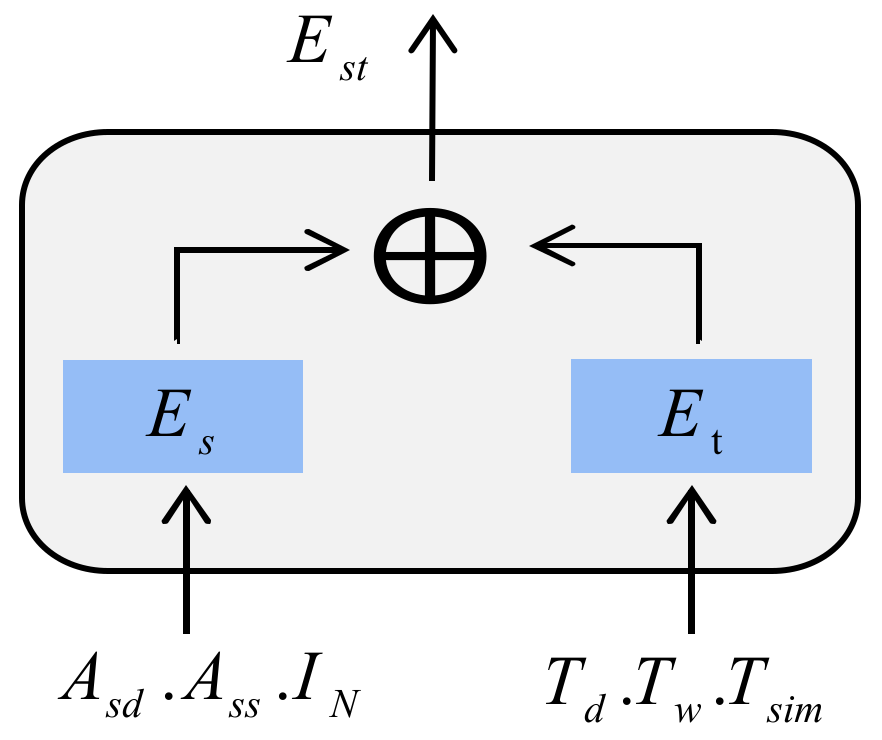

First, we propose a new spatio-temporal multi-association graph generation method, which obtains the static and dynamic representations of traffic flow in the spatial dimension based on spatial distance and spatial similarity. We then obtain the static and dynamic representations in the temporal dimension from the temporal connection relationship and temporal similarity. By contrast, modeling based on a simple distance graph [8,9,10] ignores the deep relationships between non-adjacent positions in the spatial and temporal dimensions and is less able to capture the interaction of traffic between different roads. While using weather and POI (Point of Interest) data as features to build models is possible [1,13], these data are not easy to obtain.

Second, we propose a multi-stage hybrid spatio-temporal fusion method that can capture longer-term spatio-temporal relationships. It first uses basic fusion operations to capture early low-level spatio-temporal information, then uses an adaptive gating mechanism to fuse high-level spatio-temporal information after convolution, and finally captures deeper, more complex spatio-temporal information through a multi-head attention mechanism [14]. The spatial and temporal data in traffic prediction can be regarded as multimodal data with different dimensions. However, simple concatenation or addition operations are not sufficient to deeply mine information from the mutual fusion of different modalities [8,9,10,15]. For example, STGCN [9] and HetGAT [16] use separate modules to sequentially capture spatial and temporal relationships, thereby splitting the association between the two dimensions. STGODE [17] and AST-MTL [18] add the separately obtained spatio-temporal relationships, which is not conducive to establishing long-term spatio-temporal fusion.

The main contributions of this paper are summarized as follows:

We propose a new spatio-temporal multi-association graph generation method to enhance features and capture richer spatio-temporal static and dynamic traffic features;

Considering the complex and changing spatio-temporal information of the traffic network, we propose a multi-stage hybrid spatio-temporal fusion method, which can capture longer-term spatio-temporal relationships;

We propose a new deep learning model MFDGCN, which can not only predict short-term traffic well, but also achieves good performance in long-term prediction;

We perform extensive validation using data from a real road network, and our model MFDGCN shows better performance in long and short-term traffic prediction compared to existing advanced baselines.

2. Related Work

Spatio-temporal prediction refers to the prediction of unknown system states in spatial and temporal dimensions and is widely used in many real-world scenarios, such as weather prediction, traffic prediction, and earthquake prediction. Traffic prediction is a typical spatio-temporal prediction problem [2,9]. Traditional traffic prediction utilized model-driven methods, such as Historical Average (HA) [19] and Autoregressive Integrated Moving Average (ARIMA). These models are based on linear analysis and are therefore less accurate in complex nonlinear traffic prediction. Subsequently, data-driven methods gradually became mainstream. Early work used machine learning methods such as Vector Auto Regression (VAR) [20], Support Vector Regression (SVR) [21], and K-Nearest Neighbor (KNN) [22], but these required more detailed feature engineering and had higher time complexity. With the rapid development of deep learning technology, Recurrent Neural Networks (RNNs) have been widely used in time series prediction tasks [23,24,25]. However, RNNs cannot consider the correlation of nodes in the spatial dimension. As such, Convolutional Neural Networks (CNNs), originally developed for image processing, became a popular research topic; these methods divide the space into grids and apply convolution to capture spatial traffic dependencies [26]. However, regular grid data cannot properly reflect the irregular structure of a traffic network. Therefore, Graph Convolutional Networks (GCNs) [4,5,6,7] have received extensive attention and are widely used in traffic prediction to extract spatial dependencies.

GCN is divided into spectral domain graph convolution [4] and spatial domain graph convolution [5,6,7,27]. Spectral domain graph convolution uses the Fourier transform to convert the graph signal to the spectral domain and then performs the convolution operation, which involves a rather complicated calculation. Spatial domain graph convolution directly defines the neighborhood nodes of the convolution on the graph and then performs the convolution operation, which is more intuitive and flexible. For example, GraphSage [6] introduces an aggregation function to aggregate the neighbor information of nodes. GAT [7] uses an attention mechanism to determine the importance of each neighbor node. DCNN [27] regards graph convolution as a diffusion process, with graph convolution realized via the probability transition matrix between nodes.
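To make the diffusion view of graph convolution concrete, the following is a minimal sketch in plain Python. It assumes a scalar signal per node, a row-normalised transition matrix P = D⁻¹A, and one scalar weight per diffusion step; the function names and the toy graph are illustrative and are not taken from DCNN or from MFDGCN.

```python
def transition_matrix(adj):
    """Row-normalise an adjacency matrix: P = D^-1 A (random-walk transition matrix)."""
    matrix = []
    for row in adj:
        degree = sum(row)
        matrix.append([v / degree if degree else 0.0 for v in row])
    return matrix


def matvec(matrix, vec):
    """Multiply a matrix (list of rows) by a vector."""
    return [sum(m * v for m, v in zip(row, vec)) for row in matrix]


def diffusion_conv(adj, signal, thetas):
    """Compute sum_k thetas[k] * P^k x for a scalar node signal x.

    thetas[0] weights the signal itself, thetas[1] the one-step
    diffusion, and so on (scalar weights are a simplifying assumption).
    """
    p = transition_matrix(adj)
    out = [0.0] * len(signal)
    current = list(signal)  # P^0 x
    for theta in thetas:
        out = [o + theta * c for o, c in zip(out, current)]
        current = matvec(p, current)  # advance one diffusion step
    return out


# Toy 3-node path graph 0 - 1 - 2 with a unit signal on node 0.
adjacency = [[0, 1, 0],
             [1, 0, 1],
             [0, 1, 0]]
result = diffusion_conv(adjacency, [1.0, 0.0, 0.0], thetas=[1.0, 0.5, 0.25])
print(result)
```

With two diffusion steps, node 2 receives a nonzero value even though it is not adjacent to node 0, which illustrates how the neighborhood order controls how far information spreads.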

GCN uses graph edge information to aggregate node information and generate new node representations, so the adjacency matrix representing the edge relationships is particularly important. In a traffic network graph, an adjacency matrix is usually constructed based on the distance or connectivity between nodes. DCRNN [8] models the traffic flow as a diffusion process on a directed graph, constructs an adjacency matrix based on the distance graph, and uses diffusion graph convolution to extract the spatial representation. STGCN [9] builds an adjacency matrix based on the distance graph and uses spectral domain GCN to extract the spatial representation. HetGAT [16] builds an adjacency matrix based on the distance graph between nodes as well as the relationship between nodes and sites, and uses GAT to extract the spatial representation. AST-MTL [18] builds an adjacency matrix based on the connectivity between nodes and extracts the spatial representation using spectral domain GCN. A simple distance graph captures only the spatial relationship between nodes and easily ignores the correlation between nodes. STSGCN [11] connects adjacent nodes to directly capture the local correlation between close nodes and their spatio-temporal neighbors, which limits its ability to capture correlations between distant nodes. HGCN [28] builds an adjacency matrix based on the distance graph and uses the distance between nodes to generate a regional graph for obtaining node similarity; DCNN is then used to extract the spatial representation, but the regional similarity tends to ignore differences between individual nodes. STGODE [17] and STFGNN [29] use DTW (Dynamic Time Warping) to compare similarities between time series, but this is computationally expensive. AST-GCN [1], MS-Net [13], and DMVST-Net [26] comprehensively model actual traffic data by integrating various external information such as POI (Point of Interest) distribution, weather, and holiday notifications, but these data are difficult to obtain in many scenarios.
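As an illustration of how a distance-based adjacency matrix can be built, the sketch below uses a thresholded Gaussian kernel, which is a common choice in this literature. The kernel form, the default threshold of 0.1, and the use of the standard deviation of off-diagonal distances as the scale are assumptions made for this example; they are not stated in the text above.

```python
import math


def gaussian_adjacency(dist, threshold=0.1):
    """Thresholded Gaussian kernel on pairwise distances.

    w_ij = exp(-d_ij^2 / sigma^2) if that value is >= threshold, else 0,
    where sigma is the standard deviation of the off-diagonal distances.
    Expects at least two nodes.
    """
    n = len(dist)
    off_diag = [dist[i][j] for i in range(n) for j in range(n) if i != j]
    mean = sum(off_diag) / len(off_diag)
    variance = sum((d - mean) ** 2 for d in off_diag) / len(off_diag)
    if variance == 0.0:
        variance = 1.0  # all distances equal: fall back to unit scale
    adj = [[0.0] * n for _ in range(n)]
    for i in range(n):
        for j in range(n):
            weight = math.exp(-dist[i][j] ** 2 / variance)
            if weight >= threshold:
                adj[i][j] = weight  # keep only sufficiently close pairs
    return adj


# Hypothetical road distances (e.g. in km) between three sensors.
distances = [[0.0, 1.0, 5.0],
             [1.0, 0.0, 3.0],
             [5.0, 3.0, 0.0]]
adjacency = gaussian_adjacency(distances)
for row in adjacency:
    print([round(w, 3) for w in row])
```

In this toy example only sensors 0 and 1 remain connected; the more distant pairs fall below the threshold, which shows why a pure distance graph cannot represent similarity between far-apart nodes.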

Traffic prediction models can be divided into two categories according to how they acquire temporal dependencies: RNN-based [1,2,8,18] or CNN-based [10,16,17,29]. RNN-based models extract temporal dependencies through RNN, Long Short-Term Memory (LSTM), and Gated Recurrent Unit (GRU). AST-GCN [1] and DCRNN [8] use the graph convolution operation to replace the original linear transformation in the recursive unit to obtain spatio-temporal information. GST-GAT [2] and TGC-LSTM [30] use LSTM [31] to extract temporal information. AST-MTL [18] uses GRU to obtain spatio-temporal information after graph convolution. However, when a traffic prediction data set is relatively large, the complex gating in RNNs generates a large computation workload. Because RNNs rely on the memory of the previous step, it is difficult for them to capture large traffic flow fluctuations during peak traffic conditions [9]. Other studies therefore use CNNs to model temporal relationships in traffic prediction. CNN-based studies apply convolution operations along the temporal axis to model temporal dependencies and expand the receptive field through dilated convolution to obtain a wider range of temporal relationships [20]. GWnet [10] and HGCN [28] use stacked 1D and 2D dilated convolutions after graph convolution to increase the temporal receptive field of the model and show better short-term prediction results. STGODE [17] uses 1D dilated convolution after graph convolution to extract temporal information. STFGNN [29] uses graph convolution and 1D dilated convolution with a large dilation rate to extract spatio-temporal information in parallel. HetGAT [16] uses graph attention convolution after 1D and 2D dilated convolutions to extract temporal information.
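The effect of dilation on the temporal receptive field can be checked with a small sketch. For a stack of 1D convolutions with kernel size k and dilation factors d_l, the receptive field is 1 + Σ_l (k − 1)·d_l. The code below is illustrative only: the doubling dilation schedule (1, 2, 4, 8) and the all-ones kernel are assumptions chosen to make the result easy to verify, not settings taken from any of the cited models.

```python
def receptive_field(kernel_size, dilations):
    """Receptive field of stacked dilated 1D convolutions: 1 + sum((k - 1) * d)."""
    field = 1
    for d in dilations:
        field += (kernel_size - 1) * d
    return field


def dilated_conv1d(series, weights, dilation):
    """Valid (unpadded) dilated 1D convolution along the time axis."""
    span = (len(weights) - 1) * dilation
    return [
        sum(w * series[t + j * dilation] for j, w in enumerate(weights))
        for t in range(len(series) - span)
    ]


# Stack four layers with kernel size 2 and dilations 1, 2, 4, 8 on 16 time steps.
dilations = [1, 2, 4, 8]
hidden = [float(i) for i in range(16)]
for d in dilations:
    hidden = dilated_conv1d(hidden, [1.0, 1.0], d)

print(receptive_field(2, dilations))  # 16
print(hidden)                         # [120.0], the sum of all 16 inputs
```

Four layers with only two weights each are enough for a single output to depend on all 16 input steps, which is why dilated convolutions are attractive for covering long histories with few parameters.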

Spatial and temporal modalities in traffic prediction are usually processed with different methods to extract information, so the mutual fusion of spatio-temporal information plays a crucial role. STGCN [9] and HetGAT [16] use separate modules to sequentially capture spatial and temporal relationships, thereby splitting the association between spatial and temporal dimensions. STSGCN [11] and MS-Net [13] concatenate the separately obtained spatio-temporal relationships; GWnet [10], STGODE [17], and AST-MTL [18] add them; and STFGNN [29] multiplies them. However, these simple methods are not conducive to establishing long-term spatio-temporal fusion.
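The fusion strategies discussed above can be contrasted in a few lines. The sketch below operates on two small feature vectors, one spatial and one temporal; the gated variant uses a generic sigmoid gate with per-feature scalar weights, which is an assumption made for illustration and is not necessarily the gating formulation used in MFDGCN.

```python
import math


def sigmoid(value):
    return 1.0 / (1.0 + math.exp(-value))


def fuse_concat(h_s, h_t):
    """Concatenate spatial and temporal features."""
    return list(h_s) + list(h_t)


def fuse_add(h_s, h_t):
    """Element-wise addition."""
    return [s + t for s, t in zip(h_s, h_t)]


def fuse_multiply(h_s, h_t):
    """Element-wise multiplication."""
    return [s * t for s, t in zip(h_s, h_t)]


def fuse_gated(h_s, h_t, w_s, w_t, bias):
    """Gated fusion: g = sigmoid(w_s*h_s + w_t*h_t + b); out = g*h_s + (1 - g)*h_t.

    The per-feature scalar weights stand in for learned linear layers.
    """
    fused = []
    for s, t, ws, wt, b in zip(h_s, h_t, w_s, w_t, bias):
        gate = sigmoid(ws * s + wt * t + b)
        fused.append(gate * s + (1.0 - gate) * t)
    return fused


spatial = [1.0, 2.0]
temporal = [3.0, -2.0]
print(fuse_concat(spatial, temporal))
print(fuse_add(spatial, temporal))
print(fuse_multiply(spatial, temporal))
# With zero weights and bias the gate is 0.5, i.e. a plain average.
print(fuse_gated(spatial, temporal, [0.0, 0.0], [0.0, 0.0], [0.0, 0.0]))
```

Unlike the fixed operations, the gate depends on the inputs, so the mixing ratio between spatial and temporal features can change from sample to sample.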

4. Experiments and Discussion

In this section, we evaluate and analyze the experimental results of the proposed MFDGCN model and compare it against eight basic and advanced traffic prediction baseline models on a real data set.

4.1. Data Set

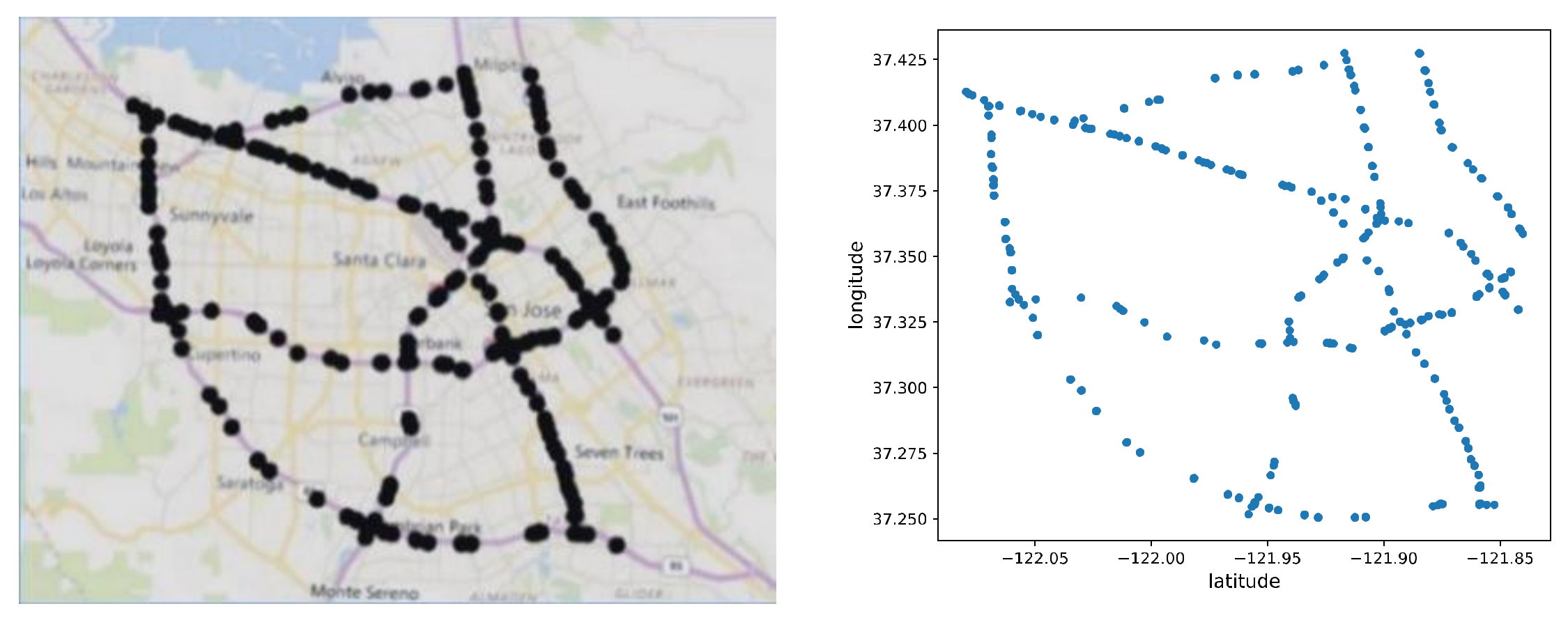

We comprehensively evaluated the MFDGCN model on the real road network data set PeMS_BAY [8], which is shown in Figure 7. This data set contains the data of 325 sensors in the highway network collected by the CalTrans Performance Measurement System (PeMS). We selected data at 30 s intervals for 6 months from 1 January 2017 to 31 May 2017. Before the experiment, we aggregated the 30 s data into time steps of 5 min as the traffic flow input feature of the model and generated an adjacency matrix based on the distance and traffic similarity of each sensor. For the traffic flow data, we used 70% of the data for training, 20% for testing, and 10% for validation. In our work, the number of time steps in an hour is set to 12, and on this basis, we sample features x and labels y by a sliding window with a step size of 1.
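The preprocessing described above can be sketched for a single sensor as follows. This is a minimal illustration under stated assumptions: aggregation is done by averaging ten consecutive 30 s readings, the label window is 12 steps long (one hour), and the 70/10/20 split is chronological. None of these details are confirmed by the text, and the function names are hypothetical.

```python
def aggregate(readings, factor=10):
    """Average consecutive groups of `factor` readings (10 x 30 s = 5 min)."""
    usable = len(readings) - len(readings) % factor
    return [
        sum(readings[i:i + factor]) / factor
        for i in range(0, usable, factor)
    ]


def sliding_windows(series, history=12, horizon=12, stride=1):
    """Return (x, y) pairs: `history` input steps followed by `horizon` label steps."""
    pairs = []
    last_start = len(series) - history - horizon
    for start in range(0, last_start + 1, stride):
        x = series[start:start + history]
        y = series[start + history:start + history + horizon]
        pairs.append((x, y))
    return pairs


def chronological_split(samples, train=0.7, val=0.1):
    """Split samples in time order into train / validation / test parts."""
    n_train = int(len(samples) * train)
    n_val = int(len(samples) * val)
    return (samples[:n_train],
            samples[n_train:n_train + n_val],
            samples[n_train + n_val:])


# Toy series: 400 raw 30 s readings become 40 five-minute steps.
raw = [float(i % 50) for i in range(400)]
five_min = aggregate(raw)
samples = sliding_windows(five_min)
train_set, val_set, test_set = chronological_split(samples)
print(len(five_min), len(samples), len(train_set), len(val_set), len(test_set))
```

For the full data set the same windowing would be applied to every sensor at once, typically on an array of shape (time steps, sensors).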

4.2. Experimental Settings

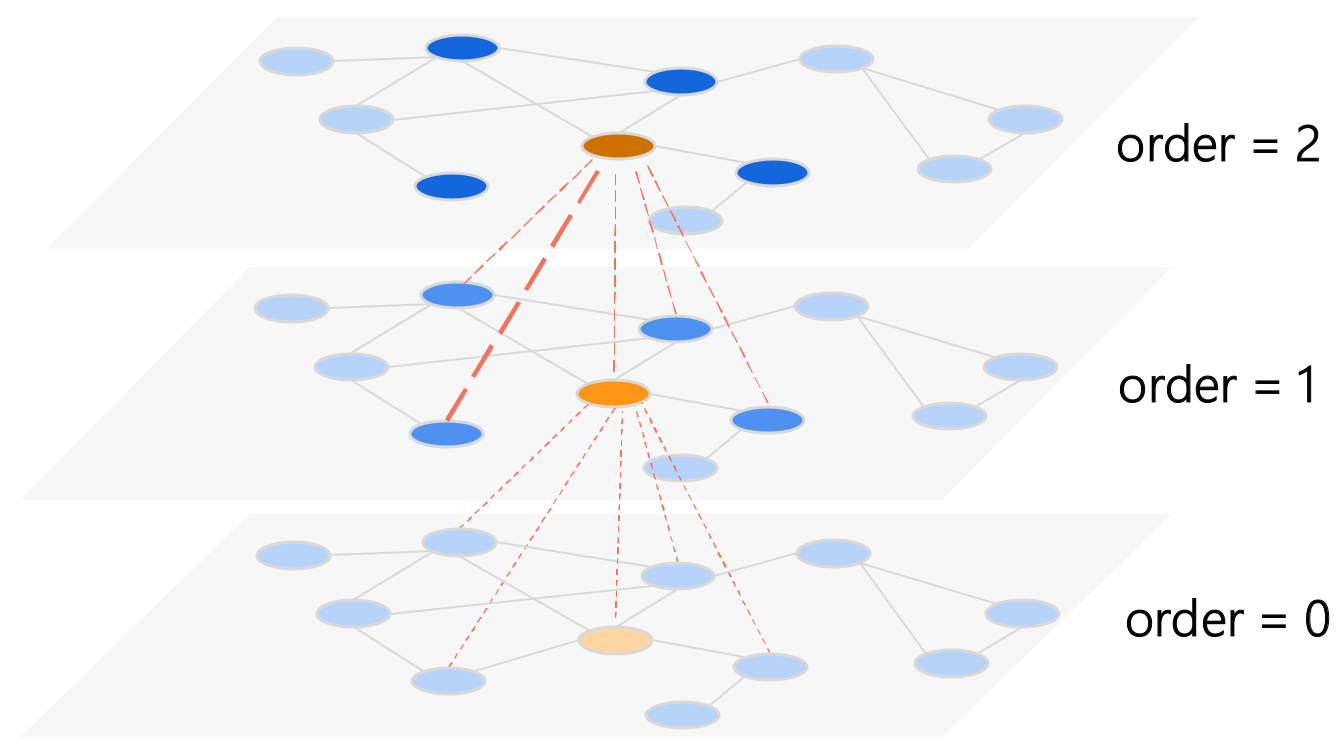

We used PyTorch 1.10 to conduct experiments on a GeForce RTX 2080Ti GPU. The batch size was 32, the initial learning rate was 0.001, the learning rate decay was performed every 5 epochs, and an early stopping mechanism was used during training. The number of FDGCN layers was 8, and residual connections were added to each layer. In the temporal convolutional network, the kernel size was 2 and the dilation factors were 1, 2, 1, 4, 1, 4, 1, 2. In the graph convolutional network, the convolutional neighborhood order was 2. The number of attention heads was 8, and the time step was 12. We used the Adam optimizer [36] to train the model. Traffic prediction in our work is a regression problem, so in the performance evaluation stage we adopted the commonly used regression metrics: mean absolute error (MAE), root mean square error (RMSE), and mean absolute percentage error (MAPE).
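For reference, the three evaluation metrics can be computed as in the sketch below. The handling of near-zero ground-truth values in MAPE (they are skipped) is an assumption for this example; the text does not say how missing or zero readings were treated.

```python
import math


def mae(y_true, y_pred):
    """Mean absolute error."""
    return sum(abs(t - p) for t, p in zip(y_true, y_pred)) / len(y_true)


def rmse(y_true, y_pred):
    """Root mean square error."""
    squared = sum((t - p) ** 2 for t, p in zip(y_true, y_pred))
    return math.sqrt(squared / len(y_true))


def mape(y_true, y_pred, eps=1e-8):
    """Mean absolute percentage error in percent, skipping near-zero targets."""
    terms = [abs((t - p) / t) for t, p in zip(y_true, y_pred) if abs(t) > eps]
    if not terms:
        return float("nan")  # undefined when every target is (near) zero
    return 100.0 * sum(terms) / len(terms)


# Hypothetical ground-truth and predicted values for four time steps.
truth = [60.0, 50.0, 40.0, 20.0]
prediction = [58.0, 53.0, 40.0, 25.0]
print(mae(truth, prediction))
print(round(rmse(truth, prediction), 3))
print(round(mape(truth, prediction), 2))
```

MAE and RMSE are in the units of the data, with RMSE penalising large errors more heavily, while MAPE is relative and therefore sensitive to small ground-truth values.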

4.3. Baselines

In the experiments, we compared MFDGCN against the following eight baseline methods:

HA [19]: Uses the average value of all historical records in a certain period as the predicted value;

VAR [20]: Used in the analysis of multivariate time series models; it can better reflect the fluctuation of traffic flow and reduce uncertainty;

SVR [21]: Curve fitting and regression analysis using SVM;

DCRNN [8]: Traffic prediction using the encoder–decoder framework and diffusion graph convolution;

STGCN [9]: Neural networks designed with convolutional layers for spatio-temporal prediction, with fewer parameters and faster training;

STSGCN [11]: Multiple modules designed for different time periods to effectively capture heterogeneity in the local spatio-temporal graph;

GWnet [10]: Stacked spatio-temporal layers for better short-term predictions;

GMAN [12]: Multi-attention mechanism modeling to address poor long-term traffic prediction.

4.4. Experimental Results

This section presents the traffic flow prediction results of MFDGCN and compares them with those of the baseline models. The results are shown in Table 1. We report the MAE, RMSE, and MAPE metrics for the predictions of each model over the next 15, 30, and 60 min on the PeMS_BAY data set. In this experiment, five-fold cross-validation was performed on the neural network models and the average value was taken as the final result.

Table 1 shows that the non-neural network models (HA, VAR, SVR) perform poorly for traffic flow prediction, mainly because they cannot directly model spatio-temporal dependencies in the traffic flow. The neural network models have strong feature learning ability and achieve good results in traffic flow prediction. Considering long-term and short-term prediction together, our MFDGCN model outperformed the other baseline models in MAE, RMSE, and MAPE.

As shown in the table, compared with GMAN, which has better long-term prediction performance, MFDGCN has a 4% lower MAE and a 7% lower RMSE for short-term prediction at 15 min. Compared with GWnet, which has better short-term prediction performance, MFDGCN has a 7% lower MAE and a 19% lower RMSE for long-term prediction at 60 min. In the comprehensive comparison, the MFDGCN model goes further than the GWnet and GMAN models. It fuses spatio-temporal features better, obtains richer spatio-temporal dependencies, and achieves better results across short-term and long-term traffic prediction. As the prediction horizon increases, the performance of every model declines, but the decline for MFDGCN is smaller and its advantage is more obvious.

Compared with STGCN, DCRNN, GWnet, and GMAN, which are modeled using a simple distance graph, and STSGCN, which is modeled with a local node correlation graph, MFDGCN uses dynamic and static fusion graph modeling in the spatial and temporal dimensions. This captures relevant information not only from local nodes in the road traffic network but also from global nodes. It can learn the similarity relationship between non-adjacent nodes and improve prediction performance. Compared with STGCN, which splits the spatio-temporal association, STSGCN, which concatenates spatio-temporal information, and GWnet, which adds the stacked spatio-temporal information, MFDGCN uses an adaptive gating mechanism to fuse the convolutional spatio-temporal information. It then fuses the embedded spatio-temporal information through a multi-head attention mechanism, which can capture longer-term spatio-temporal relationships and achieve better long-term prediction performance.

MFDGCN shows better short-term prediction performance by using multi-layer stacked and dilated temporal convolutions. Compared with GMAN, which uses a transformer, its MAE is 4% lower for short-term prediction at 15 min and 1% lower at 30 min. However, MFDGCN is slightly behind GMAN in long-term prediction, with the MAE at 60 min differing by 2% in favor of GMAN. In general, the comprehensive performance of MFDGCN is better.

The comparison of the prediction performance of each model at each time step on the PeMS_BAY data set is shown in

Figure 8.

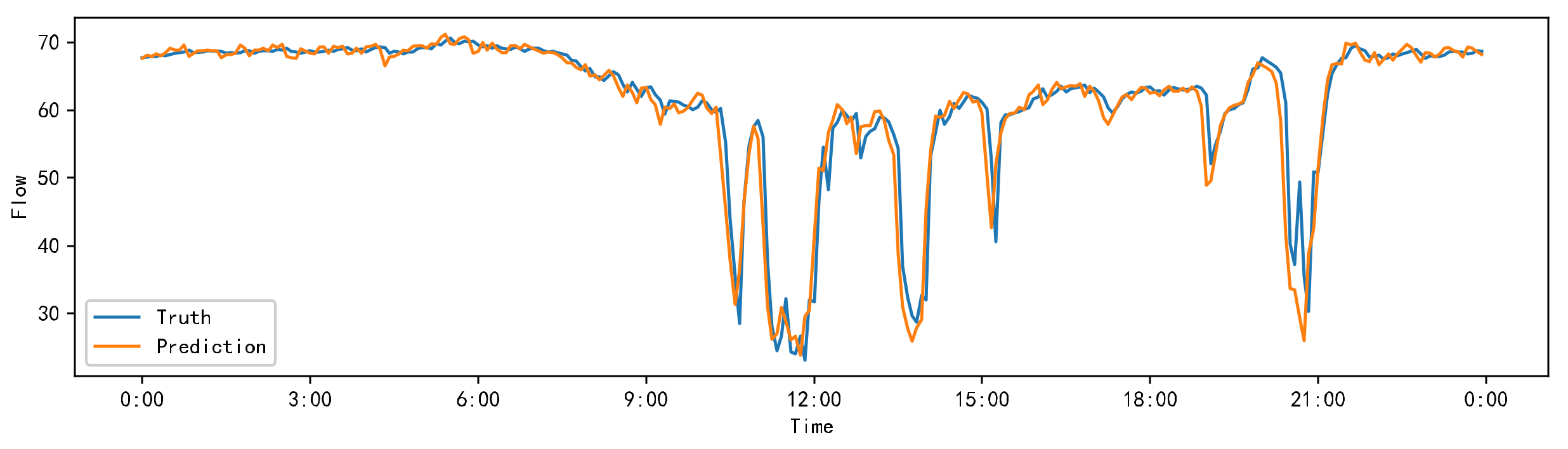

Figure 9 shows the comparison of the truth and predicted values of MFDGCN for a certain day on the PeMS_BAY data set.

4.5. Effects of Components and Parameters

We verified the effectiveness of each component in MFDGCN, namely the proposed multi-association graph generation and multi-stage fusion methods, and compared the selection of important model parameters. We removed the spatial similarity graph from the multi-association graph and named the resulting model No-simg. We also removed the gate from the multi-stage fusion and named the resulting model No-gate. The MAE values of their prediction results at 15 min are compared with those of the full MFDGCN model in Figure 10. The results show that stronger features can be obtained by using the multi-association graph and that the spatio-temporal multimodal fusion module improves performance.

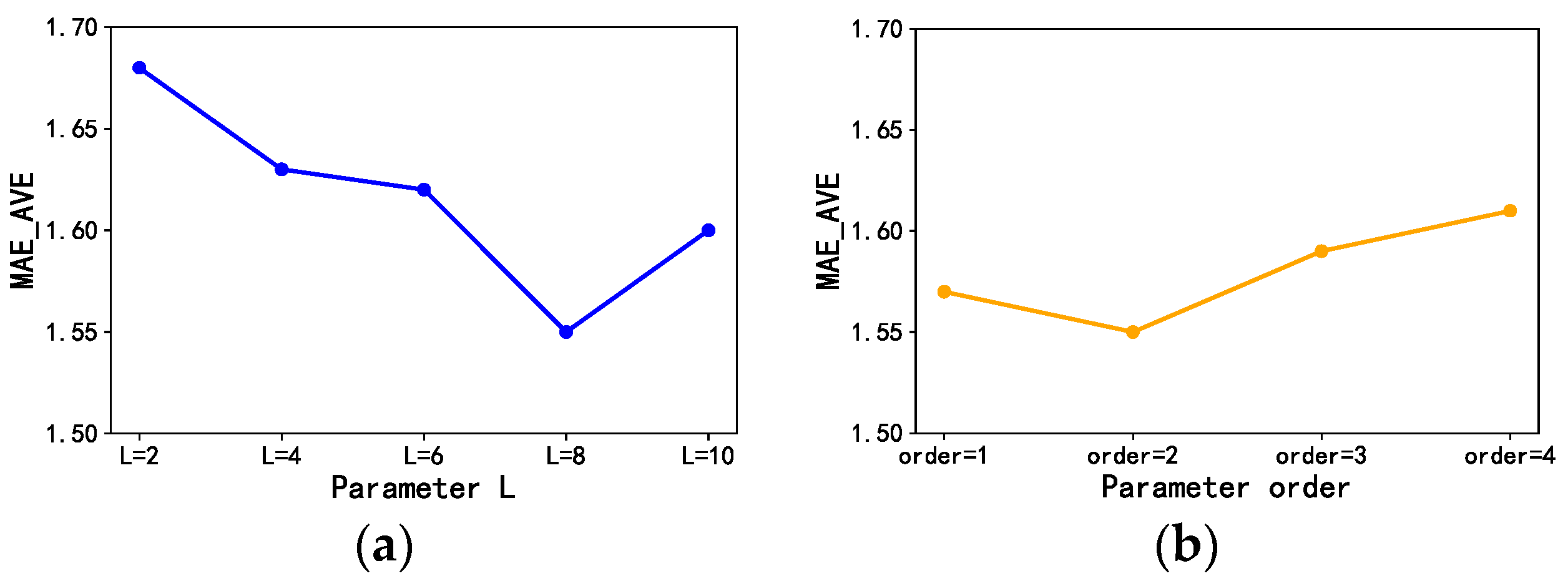

We used L to represent the number of FDGCN layers, fixed each component in FDGCN, and tested the model prediction results when L was set to 2, 4, 6, 8, and 10. The results are the average of all MAE values within an hour. As shown in Figure 11a, the model works best when L = 8. The results show that the model needs a certain depth to obtain richer spatio-temporal information, but too much depth degrades performance, so the depth needs to be selected according to the data and model.

We fixed the number of FDGCN layers at L = 8 and tested the model prediction results when the graph convolutional network neighborhood order was set to 1, 2, 3, and 4. The results are the average of all MAE values within an hour. As shown in Figure 11b, the model works best when order = 2. The results show that a larger neighborhood order is not always better for graph convolution, and an appropriate value should be selected according to the data and model.