GenoMus: Representing Procedural Musical Structures with an Encoded Functional Grammar Optimized for Metaprogramming and Machine Learning

Abstract

Featured Application


1. Introduction

1.1. New Challenges for Modeling Musical Creativity

1.2. Composing Composers

1.3. Features of a Procedural Oriented Composing-Aid System

- Modularity: based on a very simple syntax.

- Compactness: the maximal compression of procedural information.

- Extensibility: subsets and supersets of the grammar that can be easily handled.

- Readability: both abstract and human-readable formats are safely interchangeable.

- Repeatability: the same initial conditions always generate the same output.

- Self-referenceable: support for compression and iterative processes based on internal references.

- Extensibility: easily adaptable to other contexts and domains.

1.4. Knowledge Representation Optimized for Machine Learning by Design

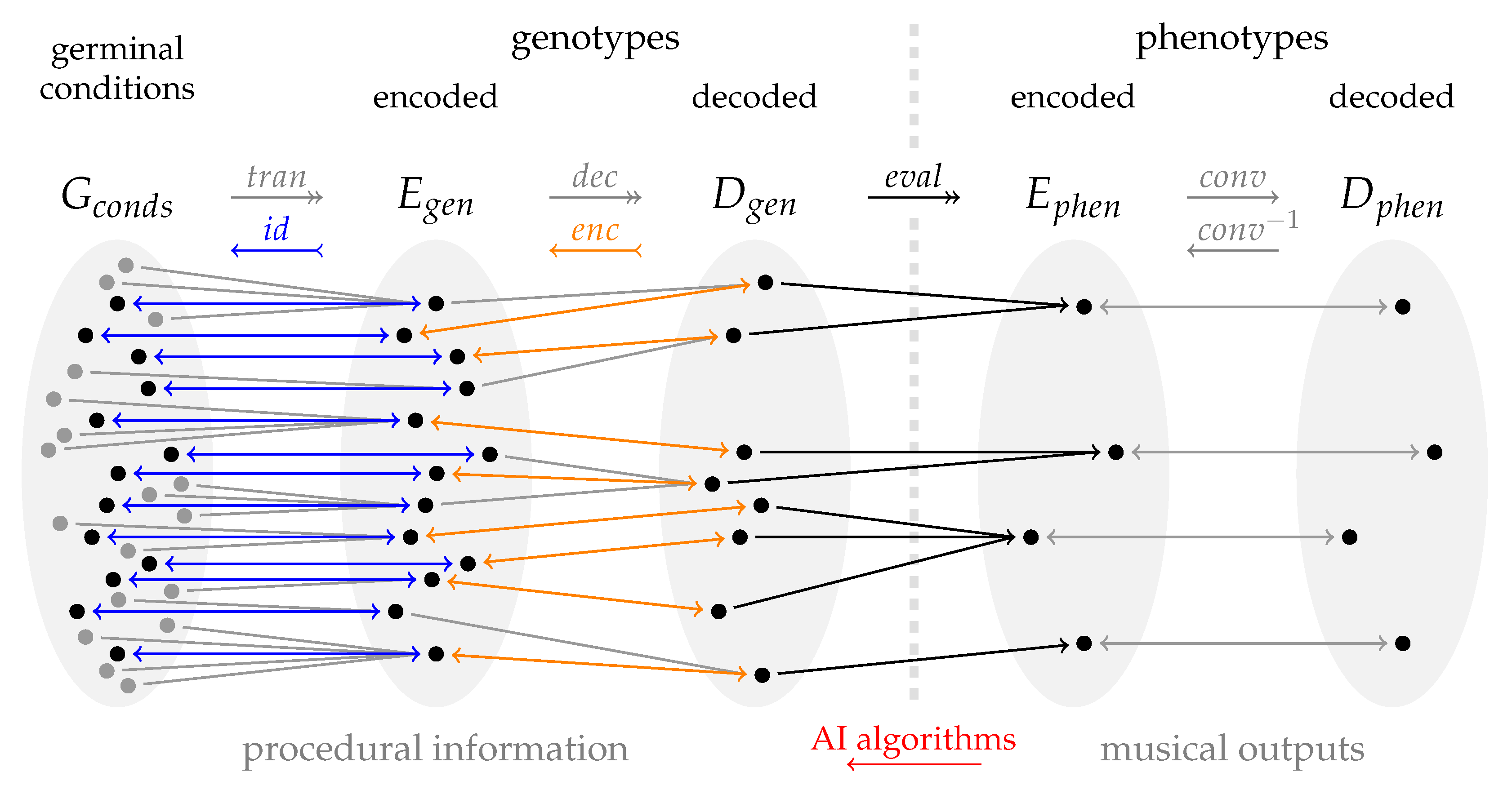

2. Conceptual Framework

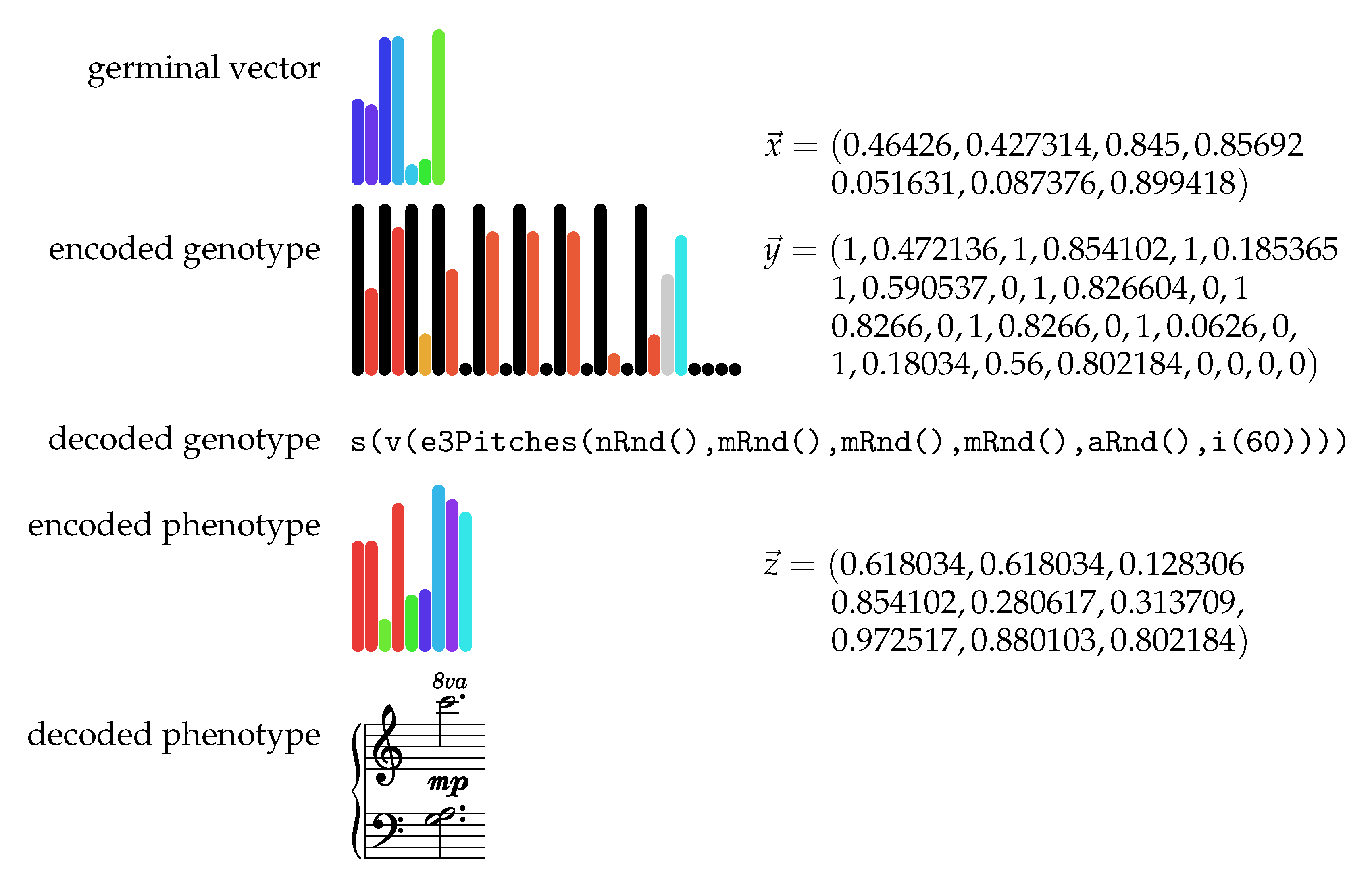

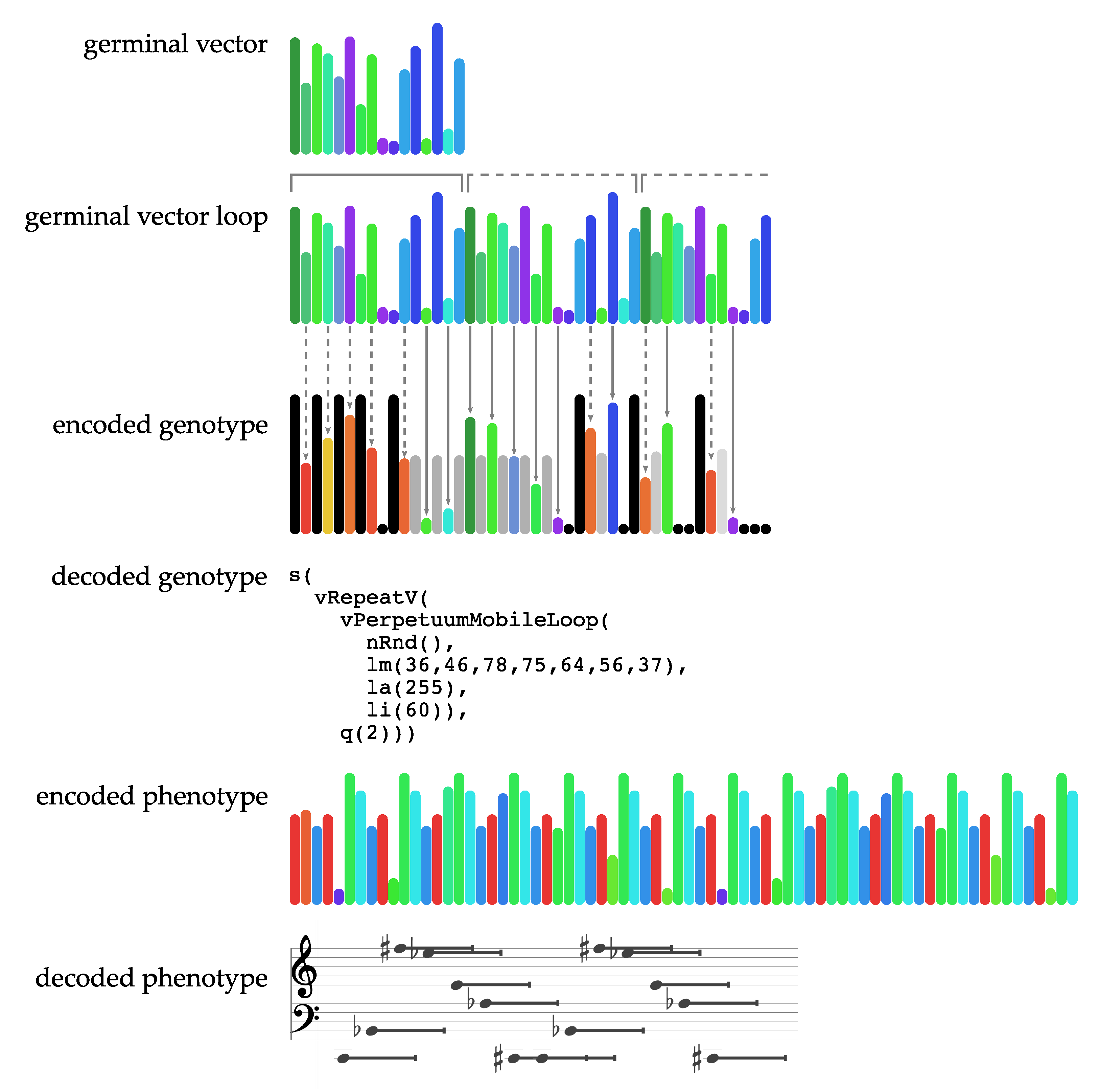

2.1. Definitions and Analogies

- Genotype function: Minimal computable unit representing a musical procedure, designed in a modular way to enable taking other genotype functions as arguments.

- Genotype: Computable tree of genotype functions.

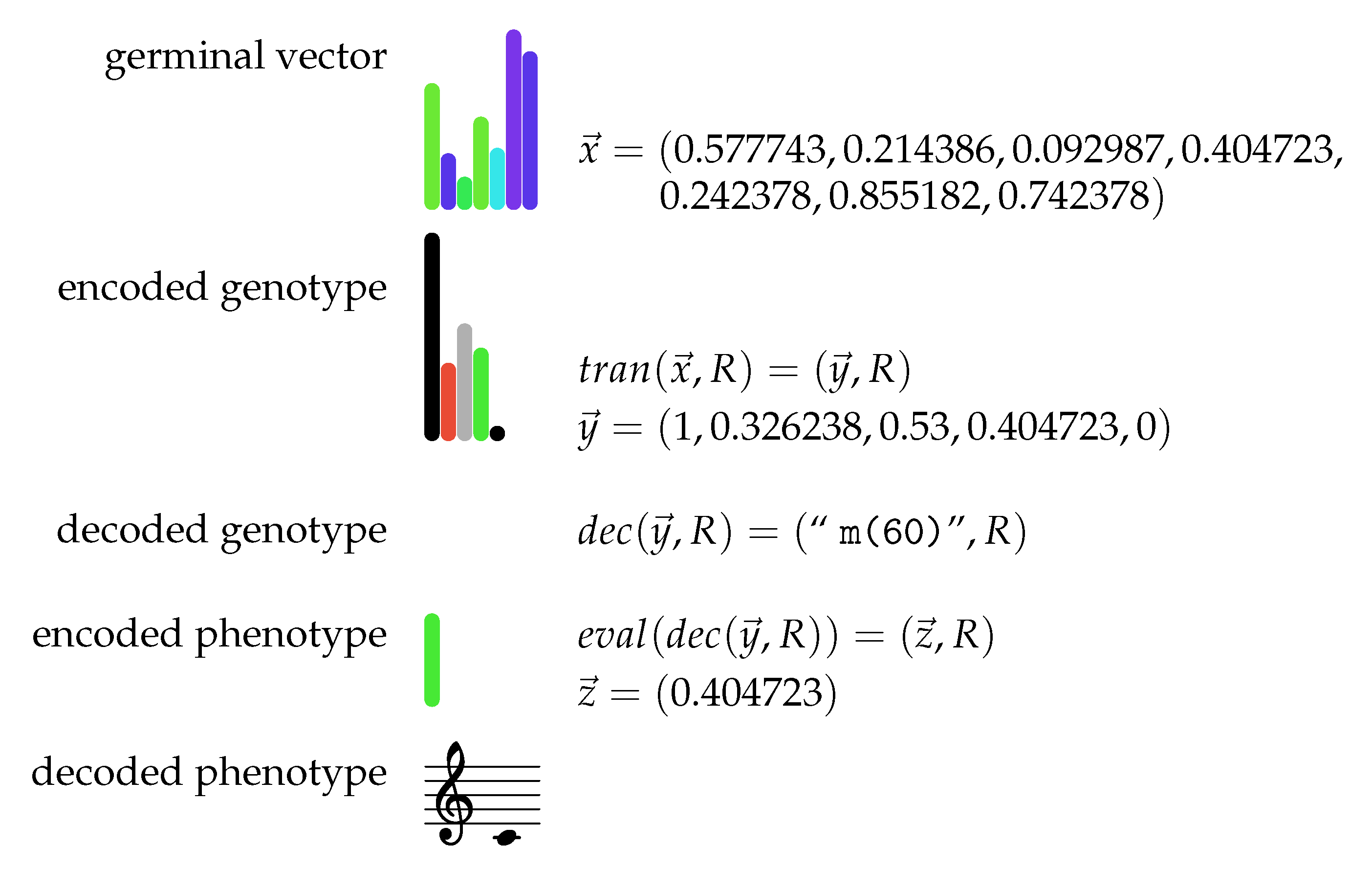

- Encoded genotype: Genotype coded as an array of floats.

- Decoded genotype: Genotype coded as a human-readable evaluable string.

- Leaf: Single numeric value or list of values at the end of a genotype branch.

- Phenotype: Music generated by a genotype.

- Encoded phenotype: Phenotype coded as an array of floats.

- Decoded phenotype: Phenotype converted to a format suitable for third-party music software.

- Germinal vector: An array of floats used as an initial decision tree to build a genotype.

- Germinal conditions: Minimal data required to deterministically construct a genotype, consisting of a germinal vector and several constraints to handle the generative process.

- Event: Simplest musical element. An event is a wrapper for the parameters (leaves) that characterize it.

- Voice: Line (or layer) of music. A voice is a wrapper for a sequence of one or more events, or a wrapper for one or more voices sequentially concatenated, without overlapping.

- Score: Piece (or excerpt) of music. A score can be a wrapper for one or more voices, or a wrapper for one or more scores together. Scores can be concatenated sequentially (one after another) or simultaneously (sounding together).

- Species: A specific configuration of the internal parametric structure that constitutes an event.

- Specimen: A genotype/phenotype pair belonging to a species that produces a piece of music. Data characterizing a specimen are stored in a dictionary containing the germinal conditions, along with metadata and additional analytical information.

2.2. Formal Definition of the GenoMus Framework

- is the set of parameter types integrating a genotype functional tree,

- is the vector of parameter types that constitutes an event data structure,

- is the set of conversion functions mapping human-readable specific formats of each parameter type into numbers , and their correspondent inverse functions,

- is the set of genotype functions defined and indexed in a specific library, which take and return data structures , and

- is the set of functions covering all required transformations to compute phenotypes from germinal conditions.

- is the subset of eligible functions encoded indices,

- is the output type of the genotype function tree,

- is a depth limit to the branching of the genotype function tree,

- is the maximal length for lists of parameters, and

- is a seed state used to produce deterministic results with random processes inside a genotype.

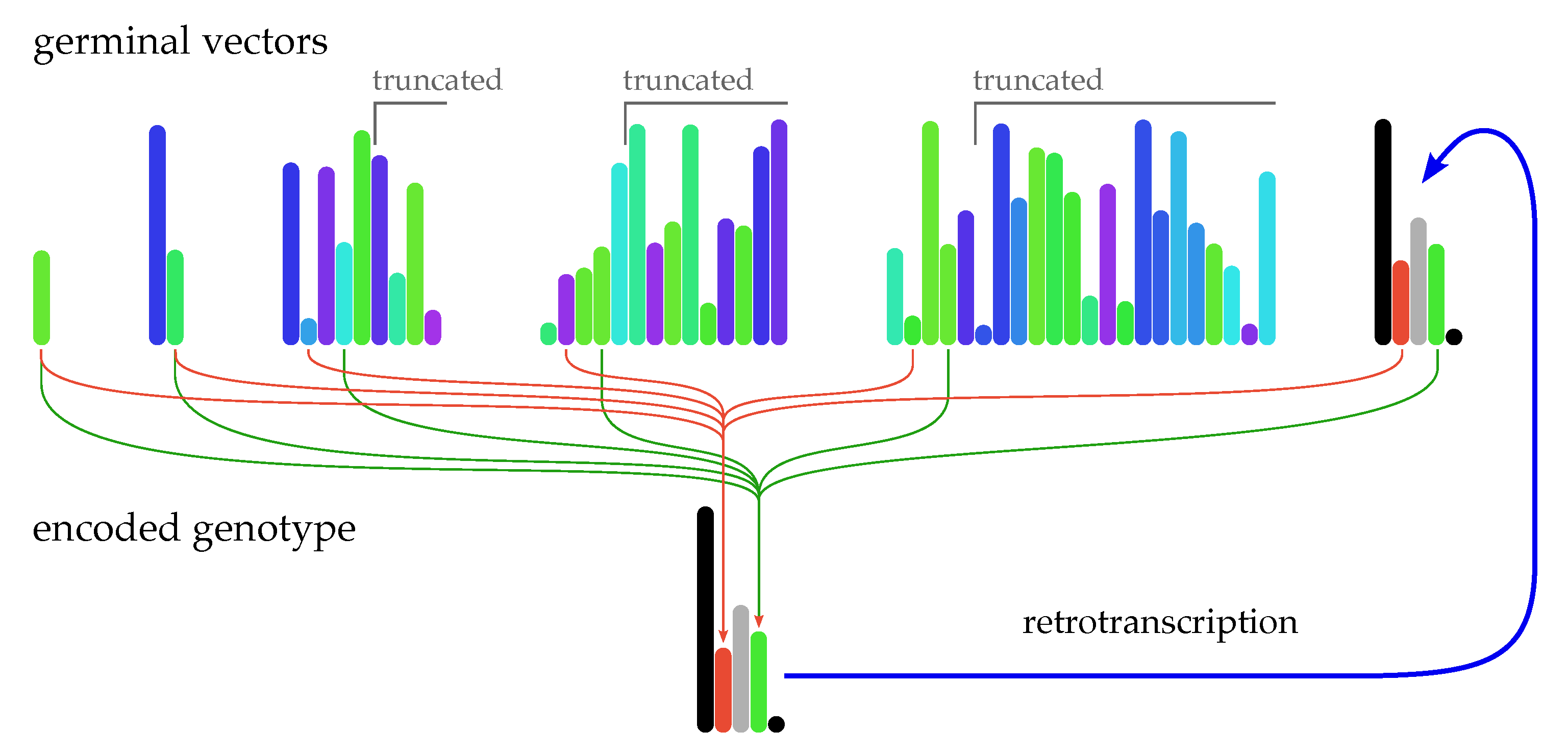

2.3. From Germinal Vectors to Musical Outputs

- If has more items than needed, they are ignored, effectively acting as a truncation of the remaining unused part of .

- If needs more elements than those supplied by , reads elements repeatedly from the beginning as a circular array, until reaching a closure, to complete a valid vector . That implies that germinal vectors with even a single value are valid inputs to be transformed into functional expressions.

- To avoid infinite recursion when loops occur while applying , the restrictions R introduce some limits to the depth of functional trees and the length of parameter lists.
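The reading rules above (unused trailing items are ignored, and a vector that is too short is read again from its beginning) can be illustrated with a minimal sketch. The class name `GerminalReader` and its methods are hypothetical and are not part of GenoMus; the sketch only models how values might be consumed on demand, not how they are turned into functions.

```python
from typing import List, Sequence


class GerminalReader:
    """Hypothetical helper: serves values from a germinal vector on demand,
    wrapping around to the start when the vector is exhausted."""

    def __init__(self, germinal: Sequence[float]) -> None:
        if len(germinal) == 0:
            raise ValueError("a germinal vector needs at least one value")
        self.values = list(germinal)
        self.position = 0  # total number of values consumed so far

    def next_value(self) -> float:
        # Circular read: index modulo the vector length.
        value = self.values[self.position % len(self.values)]
        self.position += 1
        return value

    def take(self, count: int) -> List[float]:
        """Consume `count` values; anything left unread is simply ignored."""
        return [self.next_value() for _ in range(count)]


# A longer vector is effectively truncated; a shorter one is looped.
print(GerminalReader([0.1, 0.2, 0.3]).take(2))  # [0.1, 0.2]
print(GerminalReader([0.1, 0.2]).take(5))       # [0.1, 0.2, 0.1, 0.2, 0.1]
print(GerminalReader([0.7]).take(3))            # [0.7, 0.7, 0.7]
```

In a real construction process the number of values needed is not known in advance, which is why the sketch serves values one at a time rather than slicing the vector up front.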

2.4. Retrotranscription of Decoded Genotypes as Germinal Vectors

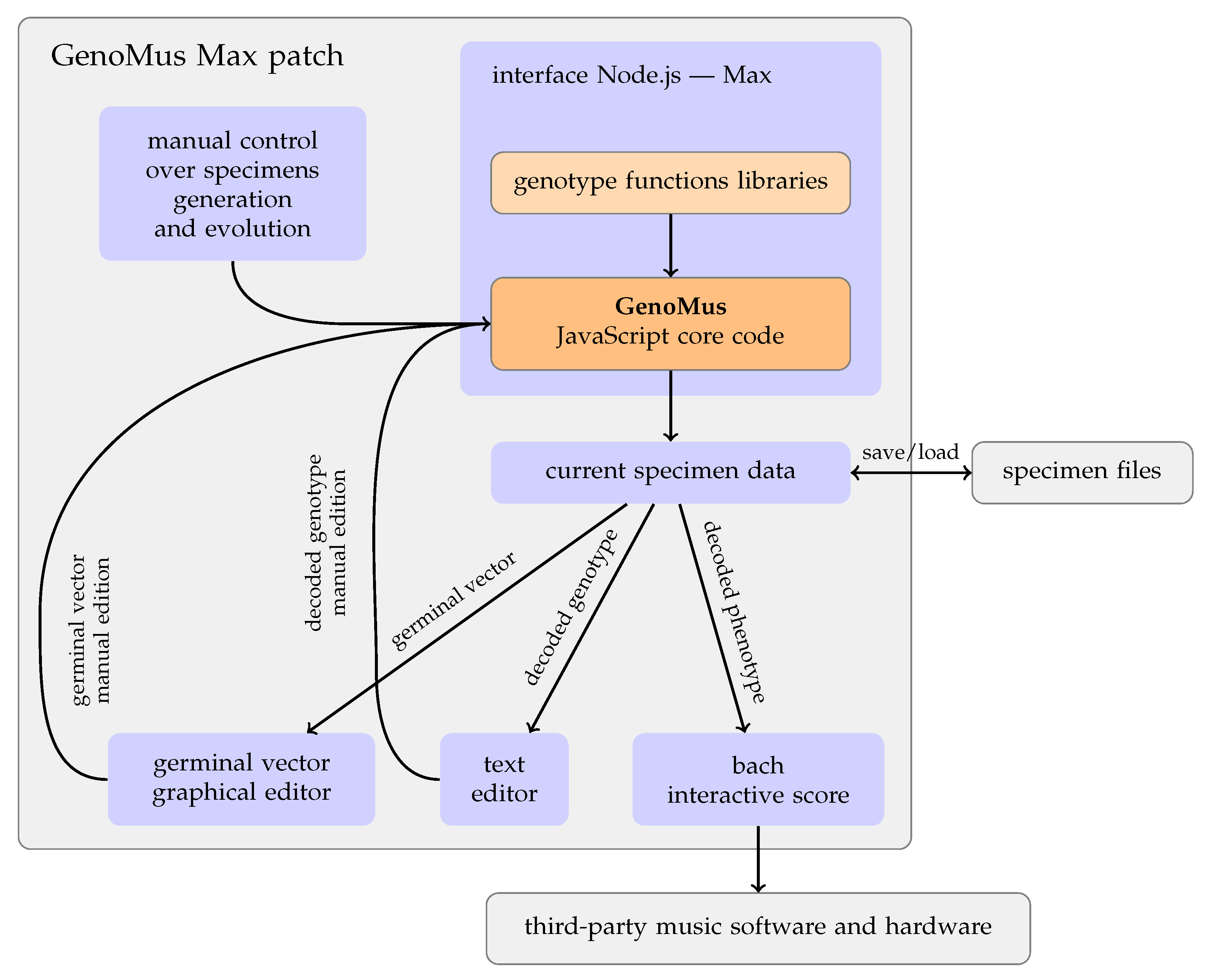

3. Implementation

3.1. Model Overview

- Display the generated music as an editable and interactive score, whose data can be converted to sound inside Max, or sent to external software via MIDI, OSC, or custom formats.

- Mutate the current specimen by stochastically changing only the leaf values of the functional tree, applying new seeds for random processes, replacing complete branches, etc.

- Display decoded genotypes as editable text, so they can be manually edited from scratch.

- Set constraints to the generated specimens, limiting the length, polyphony, depth of the functional tree, forcing the inclusion of a function, etc.

- Define conditions and limitations for the search process.

- Edit germinal conditions, including graphical editing of the germinal vector.

- Save and load specimens and germinal conditions.

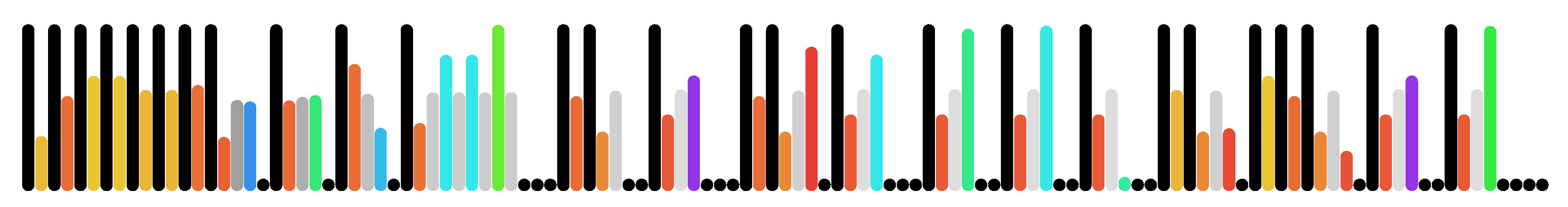

- Export specimens as colored barcodes, according to the rules explained in Section 3.5.

3.2. Anatomy of a Genotype Function

3.3. Encoding and Decoding Strategies for Data Normalization and Retrotranscription

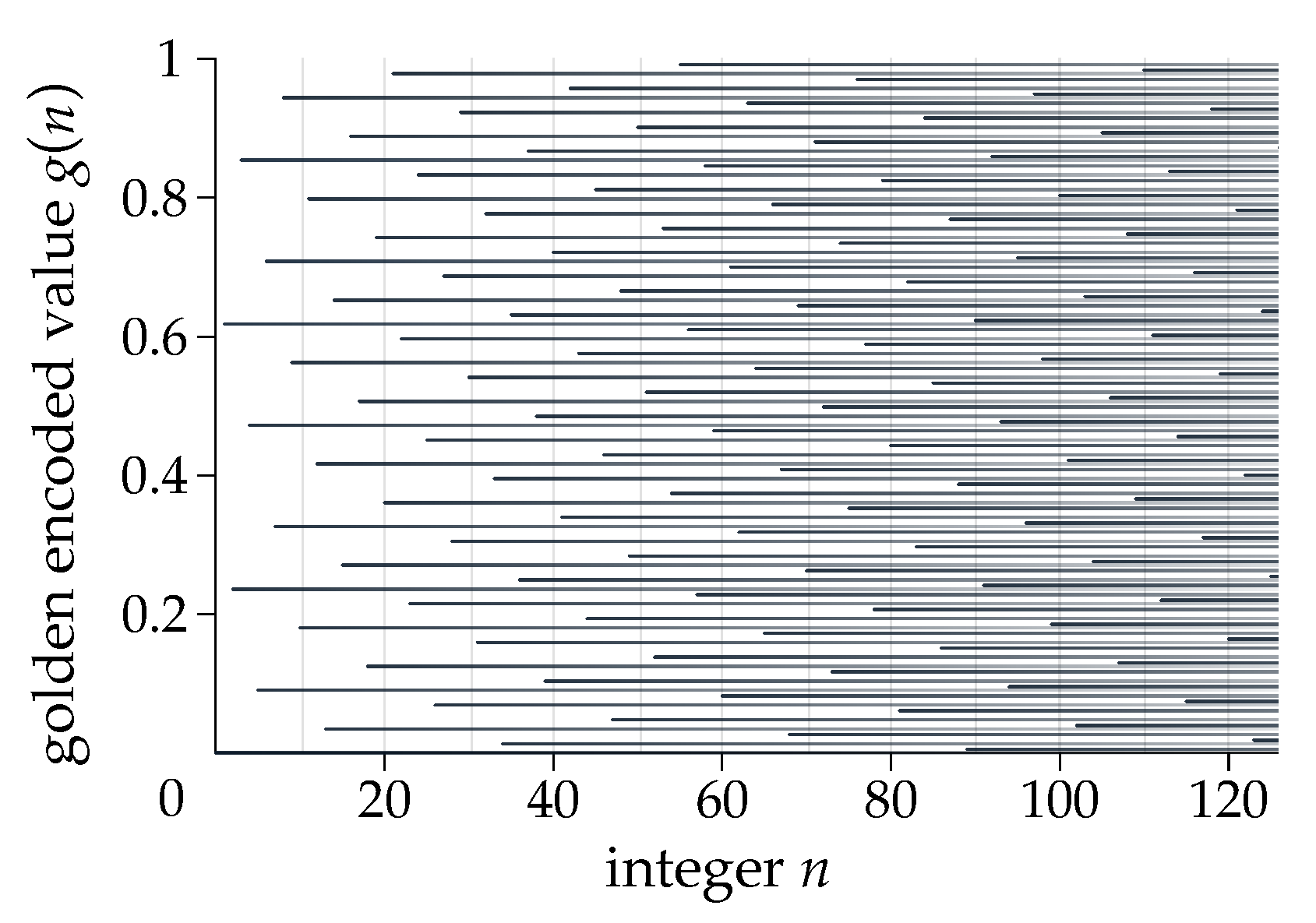

3.3.1. Leaf Type Identifiers

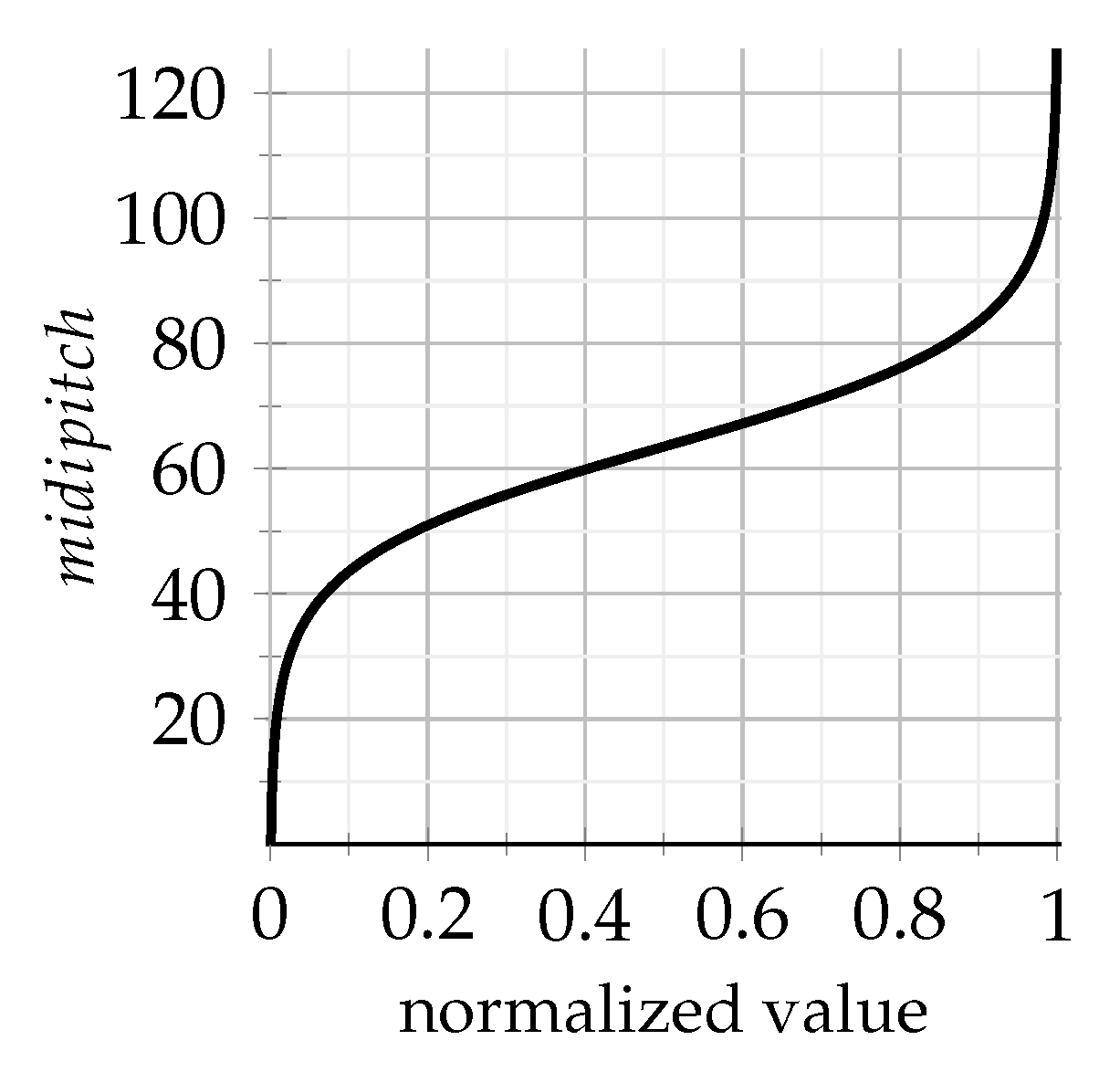

3.3.2. Leaf Values

- A wide range of values is covered (for instance, for parameters regarding rhythm and articulation, very short and long durations are available).

- For each parameter type, a central value of is assigned to a midpoint among the typical values, interval covers the usual range, and values and are reserved for extreme values rarely used in common music scores.

- To favor the predominance of ordinary values, a previous conversion similar to the lognormal function is applied to each encoded parameter value x supplied by a germinal vector:

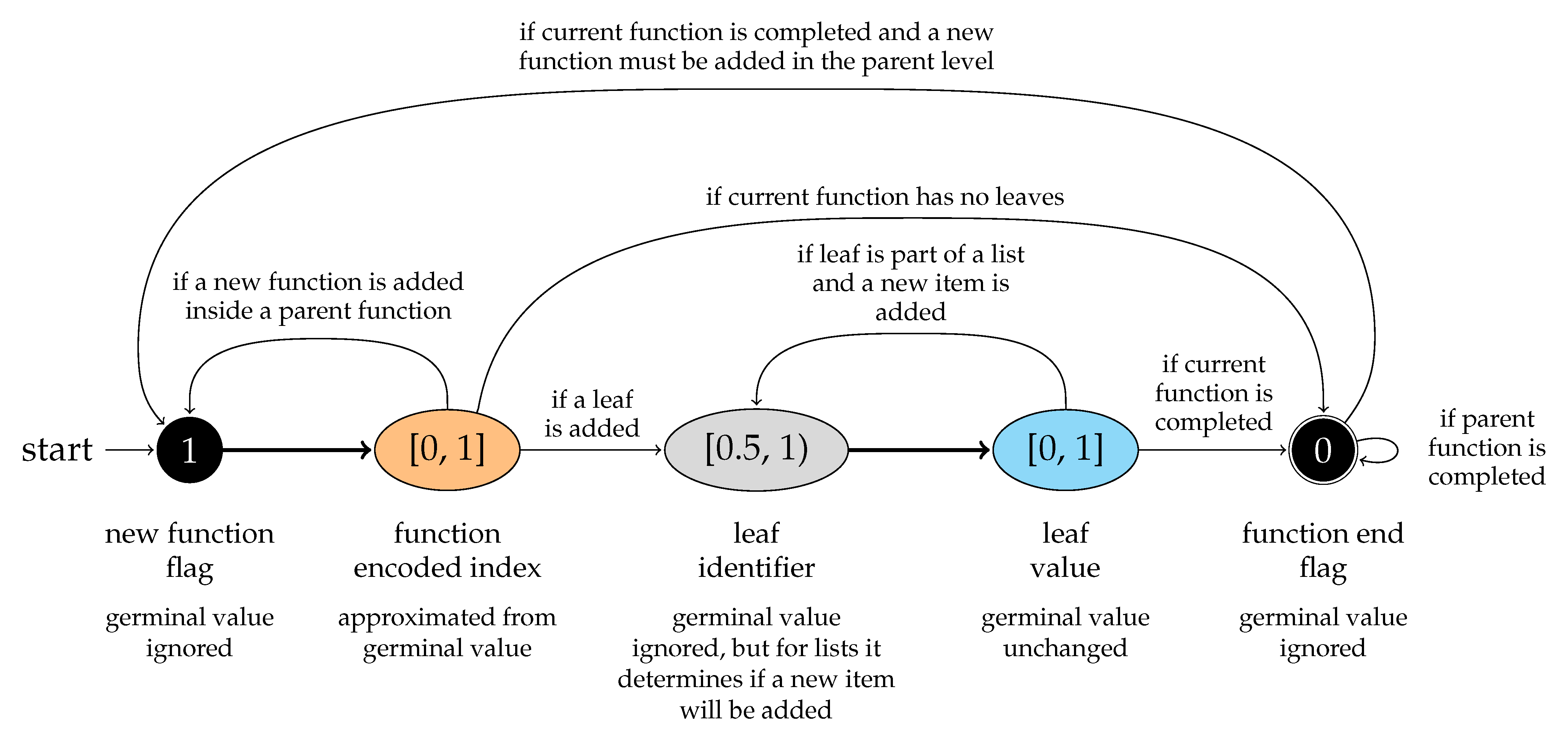

3.3.3. Expression Structure Flags

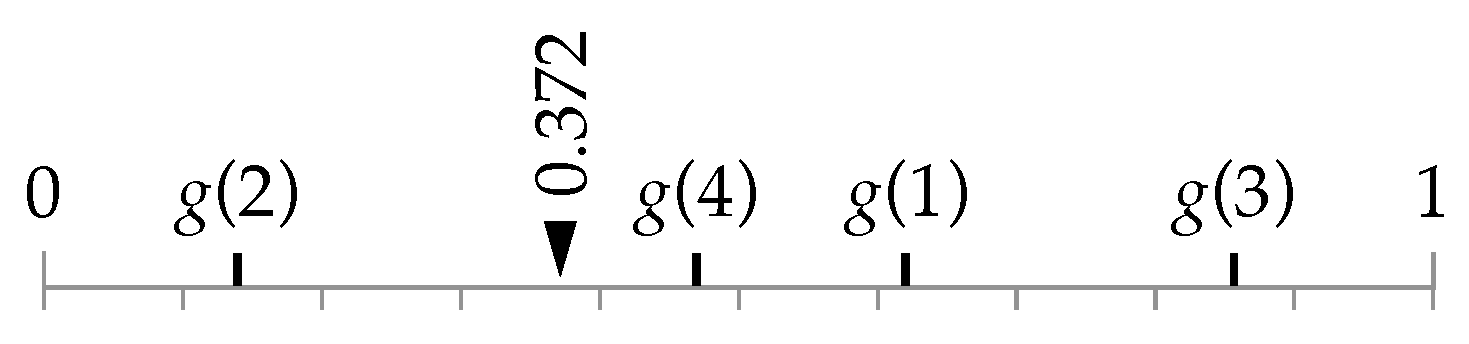

3.3.4. Genotype Function Indices

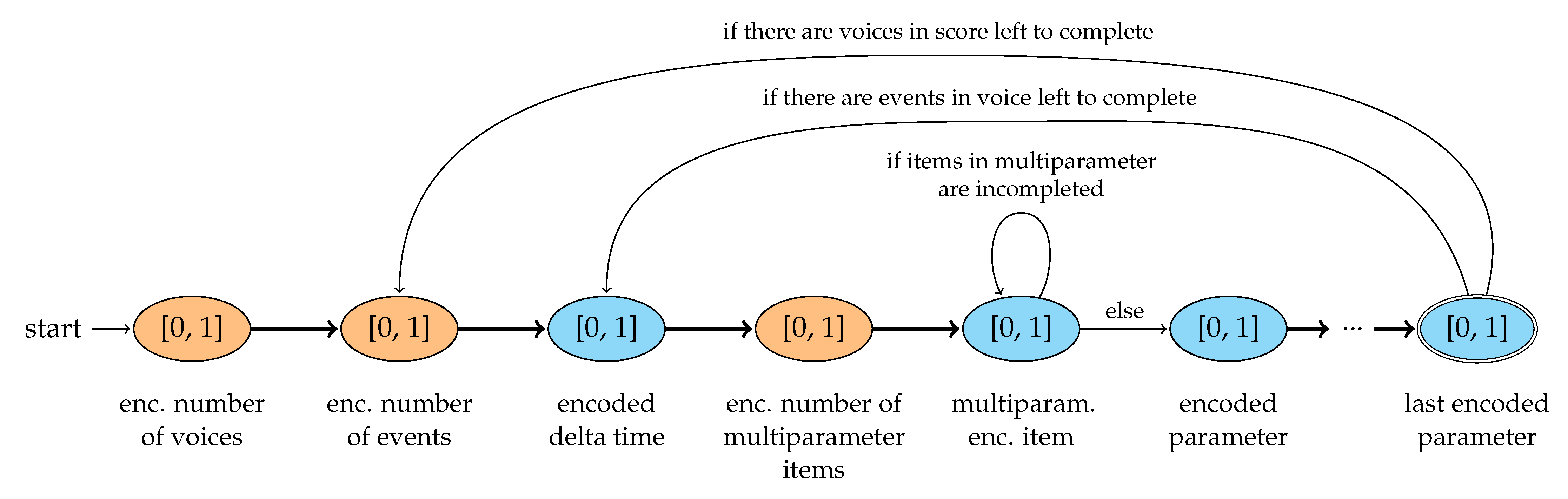

3.4. Encoding Genotypes and Phenotypes Vectors

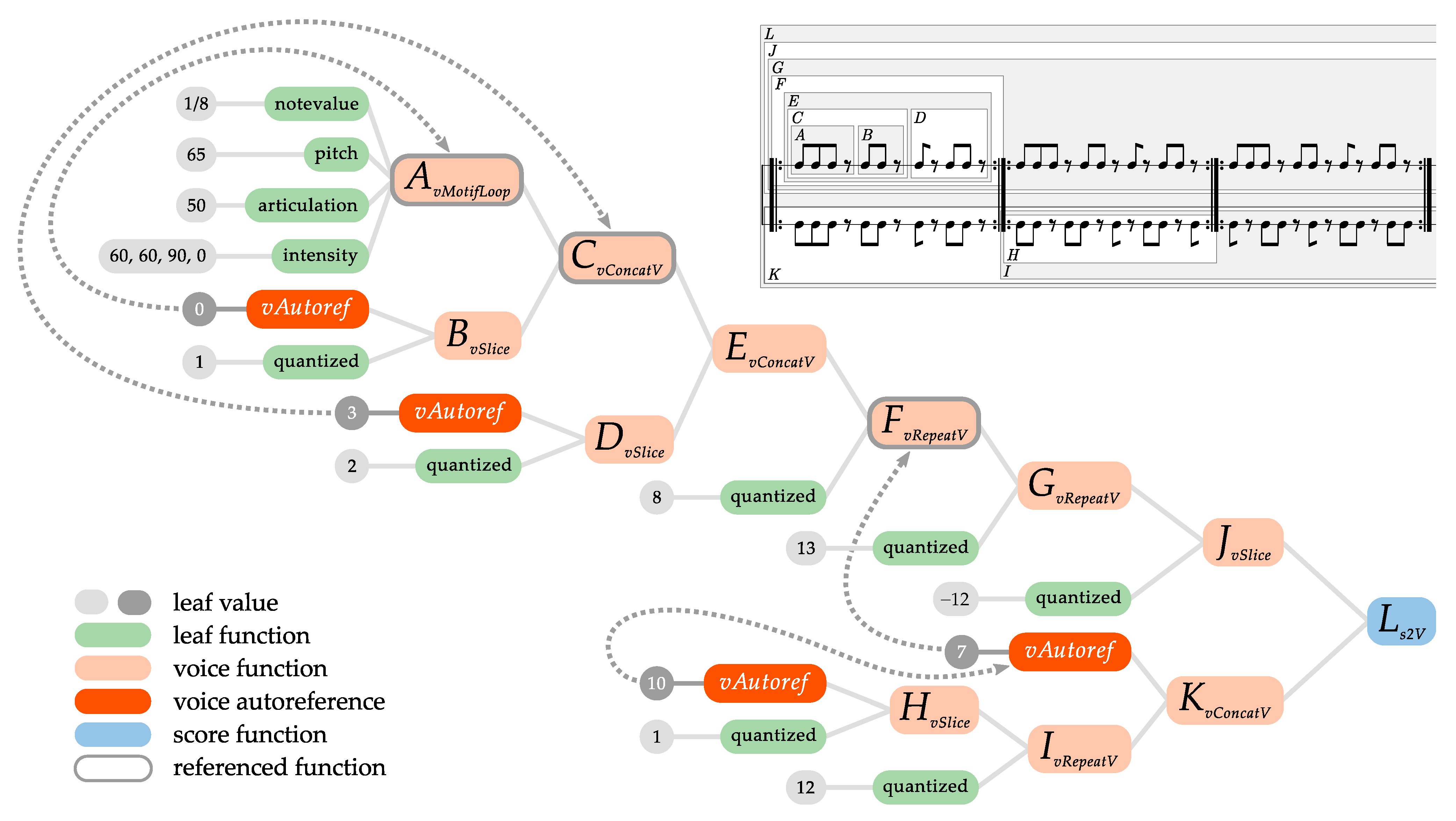

3.5. Minimal Examples Visualized

4. Results

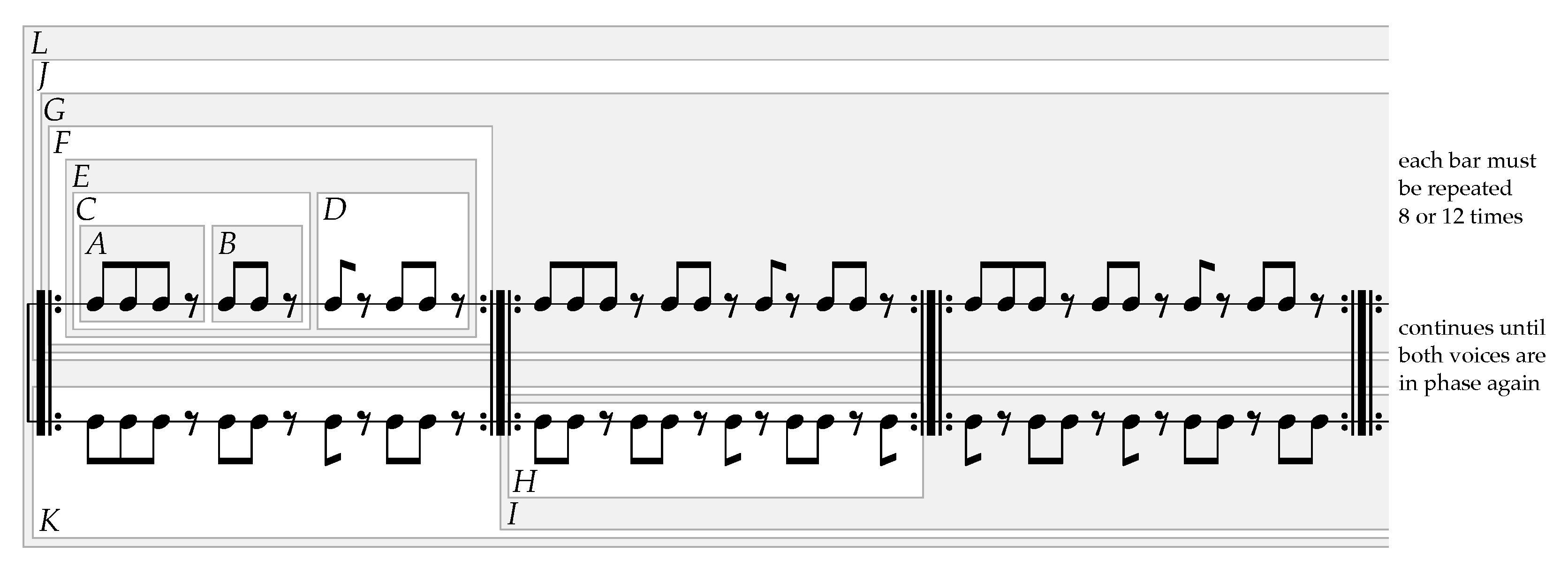

4.1. Clapping Music as a GenoMus Specimen

4.2. Pure Procedural Representation

    s2V(                                // score L: joins the 2 voices vertically
      vSlice(                           // voice J: slices last cycle due to phase shift
        vRepeatV(                       // phase G: F 13 times
          vRepeatV(                     // cycle F: E 8 times
            vConcatV(                   // pattern E: C + D
              vConcatV(                 // motif C: A + B
                vMotifLoop(             // core motif A: 3 8th-notes and a silence
                  ln(1/8),              // note values
                  lm(65),               // pitch (irrelevant for this piece)
                  la(50),               // articulation
                  li(60,60,90,0)),      // intensities (last note louder for clarity)
                vSlice(                 // motif B: A with 1st note sliced
                  vAutoref(0),
                  q(1))),
              vSlice(                   // motif D: C with first two notes sliced
                vAutoref(3),
                q(2))),
            q(8)),
          q(13)),
        q(-12)),
      vConcatV(                         // voice K: F + H
        vAutoref(7),
        vRepeatV(                       // phase I: H 12 times
          vSlice(                       // cycle H: F without 1st note, for phase shift
            vAutoref(10),
            q(1)),
          q(12))))
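The expression above can be checked with a small stand-in evaluator. This is only a sketch under stated assumptions, not the GenoMus implementation: events are modeled as plain tuples (note value, pitch, articulation, intensity), an intensity of 0 is read as a rest, and every voice function appends its result to a list (`pool`) so that `vAutoref` can point back to it. The helpers `_register`, `_leaf_list`, `pattern` and the name `pool` are hypothetical. Because Python evaluates arguments left to right before calling a function, the pool fills in the same order as the subexpression index listed for this example (indices 0 to 14).

```python
pool = []  # every evaluated voice subexpression, in evaluation order


def _register(voice):
    """Store a voice so later vAutoref calls can reference it by index."""
    pool.append(voice)
    return voice


def q(n):
    return n


def _leaf_list(*values):
    return list(values)


ln = lm = la = li = _leaf_list  # leaf lists: note values, pitches, etc.


def vMotifLoop(notevalues, pitches, articulations, intensities):
    # The longest list sets the number of events; shorter lists loop.
    lists = (notevalues, pitches, articulations, intensities)
    count = max(len(x) for x in lists)
    return _register([tuple(x[i % len(x)] for x in lists) for i in range(count)])


def vConcatV(a, b):
    return _register(a + b)


def vRepeatV(voice, times):
    return _register(voice * times)


def vSlice(voice, n):
    # Positive n drops events at the start, negative n at the end.
    return _register(voice[n:] if n >= 0 else voice[:n])


def vAutoref(index):
    return _register(list(pool[index]))


def s2V(a, b):
    return [a, b]  # two simultaneous voices


score = s2V(
    vSlice(
        vRepeatV(
            vRepeatV(
                vConcatV(
                    vConcatV(
                        vMotifLoop(ln(1/8), lm(65), la(50), li(60, 60, 90, 0)),
                        vSlice(vAutoref(0), q(1))),
                    vSlice(vAutoref(3), q(2))),
                q(8)),
            q(13)),
        q(-12)),
    vConcatV(
        vAutoref(7),
        vRepeatV(vSlice(vAutoref(10), q(1)), q(12))))


def pattern(voice):
    """Render a voice as claps (x) and rests (_), using intensity only."""
    return "".join("x" if event[3] else "_" for event in voice)


print(pattern(pool[6]))                # xxx_xx_x_xx_
print(len(score[0]), len(score[1]))    # 1236 1236
```

Pattern E comes out as the twelve-step Clapping Music cell, and both voices end up with the same number of events, which is what the final `q(-12)` slice on the first voice is there to ensure.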

4.3. Internal Autoreferences

4.4. Creating New Functions from Musical Examples

5. Discussion and Conclusions

5.1. Development and Specification of the Grammar Key Characteristics

- Grammar based on a symbolic and generative approach to music composition and analysis. GenoMus is focused on the correspondences between compositional procedures and musical results. Like many similar approaches, it employs the genotype/phenotype metaphor, but in a very specific way. Tree-based representations of musical analysis have also been used by Ando et al. [37] to represent classical pieces.

- Style-independent grammar, able to integrate and combine traditional and contemporary techniques. In any approach to artificial creativity, a representation system is a precondition that restricts the search space and imposes aesthetic biases a priori, either consciously or unconsciously. The design of algorithms to generate music can be ultimately seen as an act of composition itself. With this in mind, our proposal seeks to be as open and generic as possible, to represent virtually any style, and to enclose any procedure. The purpose of the project is not to imitate styles, but to create results of certain originality, worthy of being qualified as creative. A smooth integration of modern and traditional techniques is one of the purposes of our grammar. GenoMus allows for the inclusion and interaction of any compositional procedure, even those from generative techniques that imply iterative subprocesses, such as recursive formulas, automata, chaos, constraint-based and heuristic searches, L-systems, etc. This simple architecture achieves the integration of the three different paradigms described by Wooller et al. [38]: analytic, transformational, and generative. So, a genotype can be viewed as a multiagent tree.

- Optimized modularity for metaprogramming. Each musical excerpt is generated by a function tree made with a palette of procedures attending all dimensions: events, motifs, rhythmic and harmonic structures, polyphony, global form, etc. All function categories share the same input/output data structures, which eases the implementation of the metaprogramming routines encompassing all time scales and polyphonic layers of a composition, from expressive details to the overall form. This follows the advice of Jacobs [39] and Herremans et al. [40], who suggest working with larger building blocks to capture longer music structures.

- Support for internal autoreferences. In almost any composition, some essential procedures require the reuse of previously heard patterns. As many pieces consist of transformations and derivations of motifs presented at the very beginning, our framework enables pointing to preceding patterns. At execution time, each subexpression is stored and indexed, being available to be referenced by the subsequent functions of the evaluation chain. Beyond the benefit of avoiding internal redundancy when there are repeated patterns, the possibility of creating internal autoreferences to nodes inside a function tree is an indispensable precondition for the inclusion of procedures that demand the recursion and reevaluation of subexpressions. This kind of reuse of genotype excerpts is also observed in genomics [41].

- Consistency of the correspondences among procedures and musical outputs. To build a growing knowledge base, the correspondences between expressions, encoded representations, and the resulting music must always be the same, regardless of the future evolution of the grammar and the progressive addition of new procedures by different users. To encode musical procedures, each function name is assigned a number, but to keep the encoded vectors as different as possible, function name indices are scattered across the interval [0, 1] and are registered in a library containing all available functions.

- Possibility of generating music using subsets of the complete library of compositional procedures. Before the automatic composition process begins, users can select which specific procedures should be included or excluded from it. It can also be used to set the mandatory functions to be used in all the results proposed by the algorithm.

- Applicability to other creative disciplines beyond music. Although this framework is presented for the automatic composition of music, the model is easily adaptable to other areas where creative solutions are sought. Whenever it is possible to decompose a result into nested procedures, a library of such compound procedures can be created, taking advantage of their encoding as numerical vectors that serve as input data for machine learning algorithms.
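The scattering of function indices mentioned in the list above can be sketched numerically. The text refers to an "encoded golden value" and a `norm2goldenvalue` converter without giving the formula here, so the mapping below (fractional part of the integer index times the golden ratio) is an assumption. It is at least consistent with the example encoded genotype of `lm(60,62)`, whose second element is 0.506578, if `lm` is taken to have index 17; that index is inferred from the number, not stated in the text. The function names `golden_encode` and `golden_decode` are hypothetical.

```python
import math

PHI = (1 + math.sqrt(5)) / 2  # golden ratio


def golden_encode(index: int) -> float:
    """Assumed mapping: fractional part of index * PHI, a value in [0, 1)."""
    return (index * PHI) % 1.0


def golden_decode(value: float, library_size: int) -> int:
    """Brute-force inverse: the index whose encoding lies closest to value."""
    return min(range(library_size), key=lambda n: abs(golden_encode(n) - value))


print(round(golden_encode(17), 6))   # 0.506578
print(golden_decode(0.506578, 100))  # 17

# Consecutive indices land far apart instead of clustering together.
print([round(golden_encode(n), 3) for n in range(1, 6)])
# [0.618, 0.236, 0.854, 0.472, 0.09]
```

Under this assumption, adding new functions to the library never moves the values already assigned, which matches the requirement that encodings stay stable as the grammar grows.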

5.2. Perspectives for Training, Testing, and Validation of the Model

- Multiple strategies of evolution in parallel: Starting from a given genotype, a wide range of manipulations can be combined, mutating and crossing leaves and branches of the functional tree, and also introducing previously learned patterns at any time scale. The architecture of germinal vectors as universally computable inputs has been designed to enable high flexibility for any manipulation of preexisting material as simple numeric manipulations.

- Specimen autoanalysis: Some genotype functions can return an objective analysis of a set of musical characteristics, such as variability, rhythmic complexity, tonal stability, global dissonance index, level of inner autoreference, etc. These genotype metadata are very helpful for reducing the search space when looking for some specific styles, and allow any AI system to measure the relative distance and similarities to other specimens, classify results, and drive evolution processes.

- Human supervised evaluations: subjective ratings made by human users, attending to aesthetic value, originality, mood, and emotional intensity, can be stored and classified to build a database of interesting germinal conditions to be taken as starting points for new interactions with each user profile.

- Analysis of existing music: selected excerpts recreated as decoded genotypes (as shown in Section 4), either manually or automatically, can enrich the corpus of learned specimens in a general database.

Supplementary Materials

Author Contributions

Funding

Institutional Review Board Statement

Data Availability Statement

Acknowledgments

Conflicts of Interest

References

1. Fernández, J.D.; Vico, F.J. AI Methods in Algorithmic Composition: A Comprehensive Survey. J. Artif. Intell. Res. 2013, 48, 513–582.

2. López-Rincón, O.; Starostenko, O.; Martín, G.A.S. Algoritmic music composition based on artificial intelligence: A survey. In Proceedings of the 2018 International Conference on Electronics, Communications and Computers, Cholula, Mexico, 21–23 February 2018.

3. Pearce, M.; Meredith, D.; Wiggins, G. Motivations and Methodologies for Automation of the Compositional Process. Music. Sci. 2002, 6, 119–147.

4. Nierhaus, G. Algorithmic Composition: Paradigms of Automated Music Generation, 1st ed.; Springer Publishing Company, Incorporated: New York, NY, USA, 2008.

5. Boden, M.A. What Is Creativity? In Dimensions of Creativity; The MIT Press: Cambridge, MA, USA, 1996; pp. 75–118.

6. Rowe, J.; Partridge, D. Creativity: A survey of AI approaches. Artif. Intell. Rev. 1993, 7, 43–70.

7. Papadopoulos, G.; Wiggins, G. AI Methods for Algorithmic Composition: A Survey, a Critical View and Future Prospects. AISB Symp. Music. Creat. 1999, 124, 110–117.

8. Crawford, R. Algorithmic Music Composition: A Hybrid Approach; Northern Kentucky University: Highland Heights, KY, USA, 2015.

9. López de Mántaras, R. Making Music with AI: Some Examples. In Proceedings of the 2006 Conference on Rob Milne: A Tribute to a Pioneering AI Scientist, Entrepreneur and Mountaineer, Amsterdam, The Netherlands, 20 May 2006; IOS Press: Amsterdam, The Netherlands, 2006; pp. 90–100.

10. Schaathun, A. Formula-composition modernism in music made audible. In Inspirator–Tradisjonsbærer–Rabulist; Edition Norsk Musikforlag: Oslo, Norway, 1996; pp. 132–147.

11. Xenakis, I. Formalized Music: Thought and Mathematics in Composition; Indiana University Press: Bloomington, IN, USA, 1971.

12. Jacob, B.L. Algorithmic composition as a model of creativity. Organised Sound 1996, 1, 157–165.

13. Buchanan, B.G. Creativity at the Metalevel (AAAI-2000 Presidential Address). AI Mag. 2001, 22, 13–28.

14. McCormack, J. Open Problems in Evolutionary Music and Art. In Proceedings of the Applications of Evolutionary Computing, EvoWorkshops 2005: EvoBIO, EvoCOMNET, EvoHOT, EvoIASP, EvoMUSART, and EvoSTOC, Proceedings, Lausanne, Switzerland, 30 March–1 April 2005; pp. 428–436.

15. Hofmann, D.M. A Genetic Programming Approach to Generating Musical Compositions. In Evolutionary and Biologically Inspired Music, Sound, Art and Design; Springer International Publishing: Cham, Switzerland, 2015; pp. 89–100.

16. de la Puente, A.O.; Alfonso, R.S.; Moreno, M.A. Automatic composition of music by means of grammatical evolution. In Proceedings of the 2002 Conference on APL Array Processing Languages: Lore, Problems, and Applications-APL ’02, Madrid, Spain, 22–25 July 2002; ACM Press: New York, NY, USA, 2002.

17. Ariza, C. An Open Design for Computer-Aided Algorithmic Music Composition: athenaCL; Dissertation.com: Boca Raton, FL, USA, 2005.

18. Shao, J.; Mcdermott, J.; O’Neill, M.; Brabazon, A. Jive: A Generative, Interactive, Virtual, Evolutionary Music System. In Proceedings of the EvoApplications 2010: EvoCOMNET, EvoENVIRONMENT, EvoFIN, EvoMUSART, and EvoTRANSLOG, Istanbul, Turkey, 7–9 April 2010; Volume 6025, pp. 341–350.

19. Burton, A.R.; Vladimirova, T. Generation of Musical Sequences with Genetic Techniques. Comput. Music J. 1999, 23, 59–73.

20. Dostál, M. Evolutionary Music Composition. In Handbook of Optimization; Springer: Berlin/Heidelberg, Germany, 2013; pp. 935–964.

21. de Lemos Almada, C. Gödel-Vector and Gödel-Address as Tools for Genealogical Determination of Genetically-Produced Musical Variants. In Computational Music Science; Springer International Publishing: Cham, Switzerland, 2017; pp. 9–16.

22. Sulyok, C.; Harte, C.; Bodó, Z. On the impact of domain-specific knowledge in evolutionary music composition. In Proceedings of the Genetic and Evolutionary Computation Conference on GECCO’19, Prague, Czech Republic, 13–17 July 2019; ACM Press: New York, NY, USA, 2019.

23. Quintana, C.S.; Arcas, F.M.; Molina, D.A.; Fernández, J.D.; Vico, F.J. Melomics: A Case-Study of AI in Spain. AI Mag. 2013, 34, 99.

24. Bod, R. The Data-Oriented Parsing Approach: Theory and Application. In Computational Intelligence: A Compendium; Fulcher, J.F.J., Jain, L.C., Eds.; Springer: Berlin/Heidelberg, Germany, 2008; pp. 330–342.

25. López-Montes, J. Microcontrapunctus: Metaprogramación con GenoMus aplicada a la síntesis de sonido. Espacio Sonoro 2016. Available online: http://espaciosonoro.tallersonoro.com/2016/05/15/microcontrapunctus-metaprogramacion-con-genomus-aplicada-a-la-sintesis-de-sonido/ (accessed on 15 August 2022).

26. Agostini, A.; Ghisi, D. A Max Library for Musical Notation and Computer-Aided Composition. Comput. Music J. 2015, 39, 11–27.

27. Agostini, A.; Ghisi, D. Gestures, events and symbols in the bach environment. In Proceedings of the Journées d’Informatique Musicale (JIM 2012), Mons, Belgium, 9–11 May 2012; pp. 247–255.

28. Burton, A.R. A Hybrid Neuro-Genetic Pattern Evolution System Applied to Musical Composition. Ph.D. Thesis, University of Surrey, Guildford, UK, 1998.

29. Laine, P.; Kuuskankare, M. Genetic algorithms in musical style oriented generation. In Proceedings of the First IEEE Conference on Evolutionary Computation, IEEE World Congress on Computational Intelligence, Orlando, FL, USA, 27–29 June 1994.

30. Drewes, F.; Högberg, J. An Algebra for Tree-Based Music Generation. In Proceedings of the 2nd International Conference on Algebraic Informatics, Lecture Notes in Computer Science, Thessaloniki, Greece, 21–25 May 2007; Volume 4728, pp. 172–188.

31. Spector, L.; Alpern, A. Induction and Recapitulation of Deep Musical Structure. In Proceedings of the IJCAI-95 Workshop on Artificial Intelligence and Music, Montreal, QC, Canada, August 1995; pp. 41–48.

32. Mheich, Z.; Wen, L.; Xiao, P.; Maaref, A. Design of SCMA Codebooks Based on Golden Angle Modulation. IEEE Trans. Veh. Technol. 2019, 68, 1501–1509.

33. Hofmann, D.M. Music Processing Suite: A Software System for Context-Based Symbolic Music Representation, Visualization, Transformation, Analysis and Generation. Ph.D. Thesis, University of Music, Karlsruhe, Germany, 2018.

34. López-Montes, J. GenoMus como aproximación a la creatividad asistida por computadora. Espacio Sonoro 2015. Available online: http://espaciosonoro.tallersonoro.com/2015/01/17/genomus-como-aproximacion-a-la-creatividad-asistida-por-computadora/ (accessed on 15 August 2022).

35. López-Montes, J. Ada+Babbage-Capricci, for cello and piano. Espacio Sonoro 2015. Available online: http://espaciosonoro.tallersonoro.com/2015/01/19/ada-babbage-capricci-for-cello-and-piano/ (accessed on 15 August 2022).

36. López-Montes, J.; Miralles, P. Tiento: Creatividad artificial con GenoMus para la composición colaborativa de música electrónica. In FACBA’21: Seminario La Variación Infinita; Editorial Universidad de Granada: Granada, Spain, 2021; pp. 62–69.

37. Ando, D.; Dahlsted, P.; Nordahl, M.G.; Iba, H. Interactive GP with Tree Representation of Classical Music Pieces. In Lecture Notes in Computer Science; Springer: Berlin/Heidelberg, Germany, 2007; pp. 577–584.

38. Wooller, R.; Brown, A.R.; Miranda, E.; Diederich, J.; Berry, R. A framework for comparison of process in algorithmic music systems. In Generative Arts Practice; David, B., Ernest, E., Eds.; Creativity and Cognition Studios: Sydney, Australia, 2005; pp. 109–124.

39. Jacob, B.L. Composing with Genetic Algorithms. In Proceedings of the 1995 International Computer Music Conference, ICMC 1995, Banff, AB, Canada, 3–7 September 1995.

40. Herremans, D.; Chuan, C.H.; Chew, E. A Functional Taxonomy of Music Generation Systems. ACM Comput. Surv. 2017, 50, 1–30.

41. Stanley, K.O.; Miikkulainen, R. A Taxonomy for Artificial Embryogeny. Artif. Life 2003, 9, 93–130.

| Property Name | Description | Example |
|---|---|---|
| funcType | output type of the function, a property used to create a pool of expressions that can be referenced as an argument for other functions | 'lmidipitchF' |
| encGen | encoded genotype | [1,0.506578,0.53,0.404723,0.53,0.458756,0] |
| decGen | decoded genotype; that is, the expression of the evaluated function that returns itself | 'lm(60,62)' |
| encPhen | encoded phenotype | [0.404723,0.458756] |
| (other optional properties) | specific properties generated after evaluation, containing useful information for the parent function | (not returned by function lm) |

| Value | Meaning | Colors |
|---|---|---|
| 1 | new function | black |
| 0 | end of function | black |
| | function type identifier | gray tones |
| | encoded golden value | red–orange–yellow |
| rest of values | leaf value | green–blue–purple |
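The flag values in this table can be read against the example encoded genotype of `lm(60,62)`, `[1,0.506578,0.53,0.404723,0.53,0.458756,0]`. The following is a minimal sketch of a tokenizer that labels each number. The layout it assumes (a 1 is followed by a function index, a 0 closes a function, and any other value is a type identifier followed by one leaf value) is inferred from that single example and from the table, so it may not cover the full encoding. The function name `tokenize_encoded_genotype` and the label strings are hypothetical.

```python
from typing import List, Tuple


def tokenize_encoded_genotype(encoded: List[float]) -> List[Tuple[str, float]]:
    """Label each value of a well-formed encoded genotype (assumed layout)."""
    tokens = []
    i = 0
    while i < len(encoded):
        value = encoded[i]
        if value == 1:
            # Flag 1 opens a function; the next value is its encoded index.
            tokens.append(("new function", value))
            tokens.append(("function index", encoded[i + 1]))
            i += 2
        elif value == 0:
            # Flag 0 closes the innermost open function.
            tokens.append(("end of function", value))
            i += 1
        else:
            # Assumed: a type identifier is always followed by one leaf value.
            tokens.append(("type identifier", value))
            tokens.append(("leaf value", encoded[i + 1]))
            i += 2
    return tokens


example = [1, 0.506578, 0.53, 0.404723, 0.53, 0.458756, 0]
for label, value in tokenize_encoded_genotype(example):
    print(f"{value:<10} {label}")
```

Reading the example this way, the two leaf values 0.404723 and 0.458756 are exactly the encoded phenotype reported for `lm(60,62)`, each preceded by the same identifier 0.53.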

| Function Name | Arguments Type | Output Type | Description |
|---|---|---|---|
| vMotifLoop | (lnotevalue, lmidipitch, larticulation, lintensity) | voice | Creates a sequence of events based on repeating lists. The number of events is determined by the longest list. Shorter lists are treated as loops. |
| vConcatV | (voice, voice) | voice | Concatenates two voices sequentially. |
| vRepeatV | (voice, quantized) | voice | Repeats a voice a number of times. |
| vSlice | (voice, quantized) | voice | Removes a number of events at the beginning or at the end of a voice. |
| vAutoref | (quantized) | voice | Returns a copy of a previous voice branch of the functional tree, referenced by an index. |
| s2V | (voice, voice) | score | Joins two voices simultaneously. |

| Component | Members |
|---|---|
| Parameter types | {notevalue, midipitch, articulation, intensity, quantized, lnotevalue, lmidipitch, larticulation, lintensity, event, voice, score} |
| Event structure | (notevalue, midipitch, articulation, intensity) |
| Conversion functions | (norm2notevalue, norm2midipitch, norm2articulation, norm2intensity, norm2quantized, norm2goldenvalue) and inverse converters |
| Genotype functions | {q, ln, lm, la, li, vMotifLoop, vConcatV, vRepeatV, vSlice, vAutoref, s2V} |
| Transformation functions | {tran, dec, enc, eval, conv} |

| Index | Subexpression |
|---|---|
| 0 | "vMotifLoop(ln(1/8),lm(65),la(50),li(60,60,90,0))" |
| 1 | "vAutoref(0)" |
| 2 | "vSlice(vAutoref(0),q(1))" |
| 3 | "vConcatV(vMotifLoop(ln(1/8),lm(65),la(50),li(60,60,90,0)),vSlice(vAutoref(0),q(1)))" |
| 4 | "vAutoref(3)" |
| 5 | "vSlice(vAutoref(3),q(2))" |
| 6 | "vConcatV(vConcatV(vMotifLoop(ln(1/8),lm(65),la(50),li(60,60,90,0)),vSlice(vAutoref(0),q(1))),vSlice(vAutoref(3),q(2)))" |
| 7 | "vRepeatV(vConcatV(vConcatV(vMotifLoop(ln(1/8),lm(65),la(50),li(60,60,90,0)),vSlice(vAutoref(0),q(1))),vSlice(vAutoref(3),q(2))),q(8))" |
| 8 | "vRepeatV(vRepeatV(vConcatV(vConcatV(vMotifLoop(ln(1/8),lm(65),la(50),li(60,60,90,0)),vSlice(vAutoref(0),q(1))),vSlice(vAutoref(3),q(2))),q(8)),q(13))" |
| 9 | "vSlice(vRepeatV(vRepeatV(vConcatV(vConcatV(vMotifLoop(ln(1/8),lm(65),la(50),li(60,60,90,0)),vSlice(vAutoref(0),q(1))),vSlice(vAutoref(3),q(2))),q(8)),q(13)),q(-12))" |
| 10 | "vAutoref(7)" |
| 11 | "vAutoref(10)" |
| 12 | "vSlice(vAutoref(10),q(1))" |
| 13 | "vRepeatV(vSlice(vAutoref(10),q(1)),q(12))" |
| 14 | "vConcatV(vAutoref(7),vRepeatV(vSlice(vAutoref(10),q(1)),q(12)))" |

| Function Name | Arguments Type | Output Type | Description |
|---|---|---|---|
| sClapping | (lnotevalues, lmidipitch, larticulation, lintensity, quantized, quantized, quantized, quantized, quantized, quantized, quantized) | score | Creates two voices with a repeated pattern with a progressive phase shift of the second voice created by slicing notes at the beginning of the pattern. |

Publisher’s Note: MDPI stays neutral with regard to jurisdictional claims in published maps and institutional affiliations.

© 2022 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (https://creativecommons.org/licenses/by/4.0/).

Share and Cite

López-Montes, J.; Molina-Solana, M.; Fajardo, W. GenoMus: Representing Procedural Musical Structures with an Encoded Functional Grammar Optimized for Metaprogramming and Machine Learning. Appl. Sci. 2022, 12, 8322. https://doi.org/10.3390/app12168322
