A VR Truck Docking Simulator Platform for Developing Personalized Driver Assistance

Abstract

1. Introduction

1.1. Background and Previous Work

1.2. Content and Goals of the VISTA Project

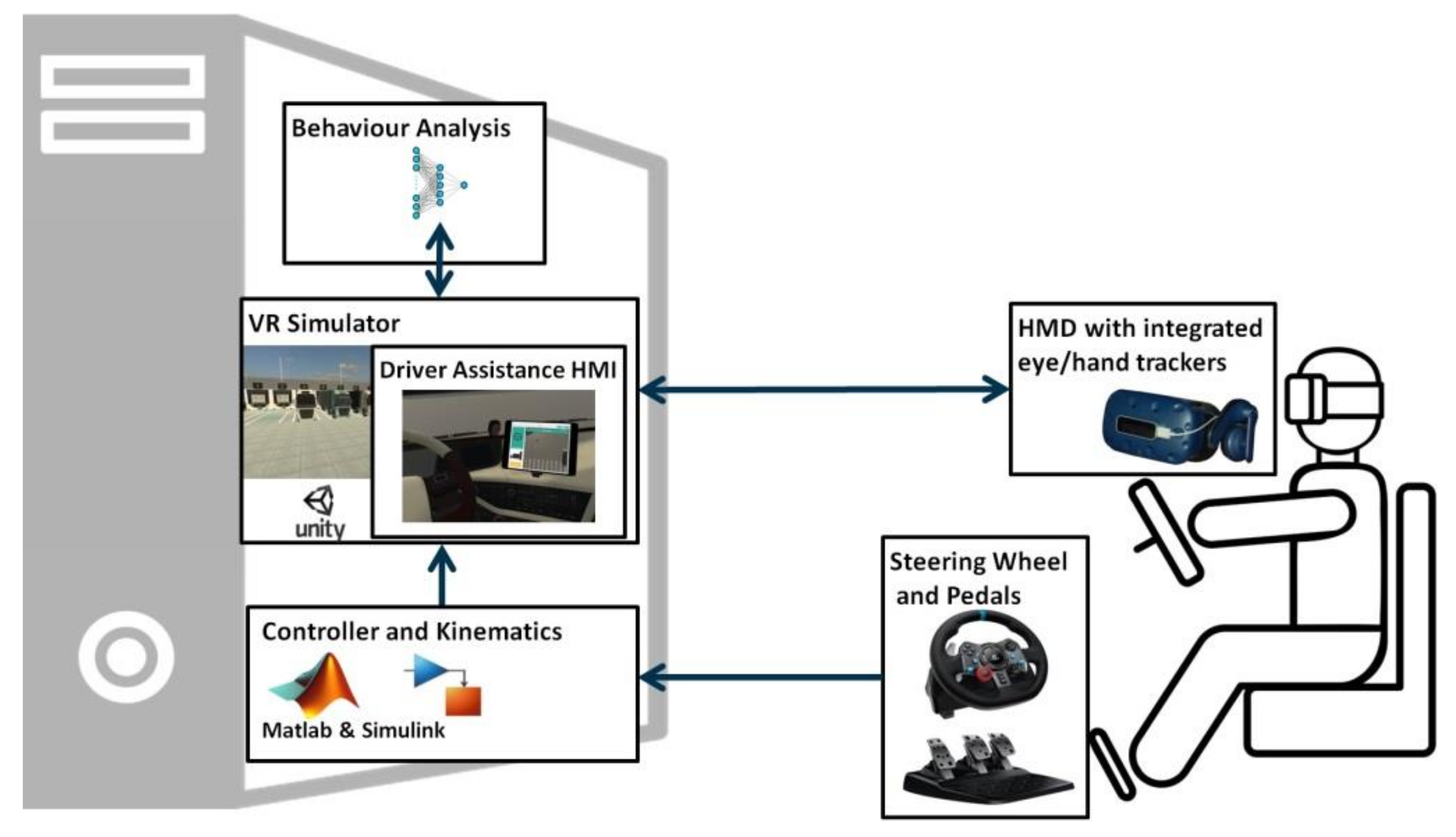

2. VISTA VR-Simulator Platform (VISTA-Sim)

2.1. VISTA-Sim Hardware

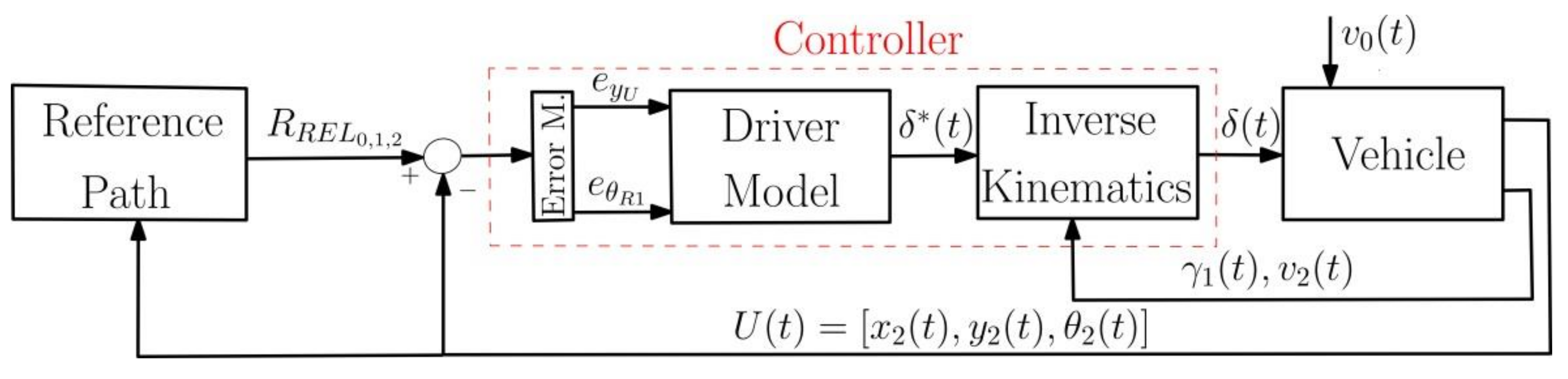

2.2. Path Planner and Path Tracking Controller

2.2.1. Path Planner

2.2.2. Path Tracking Controller

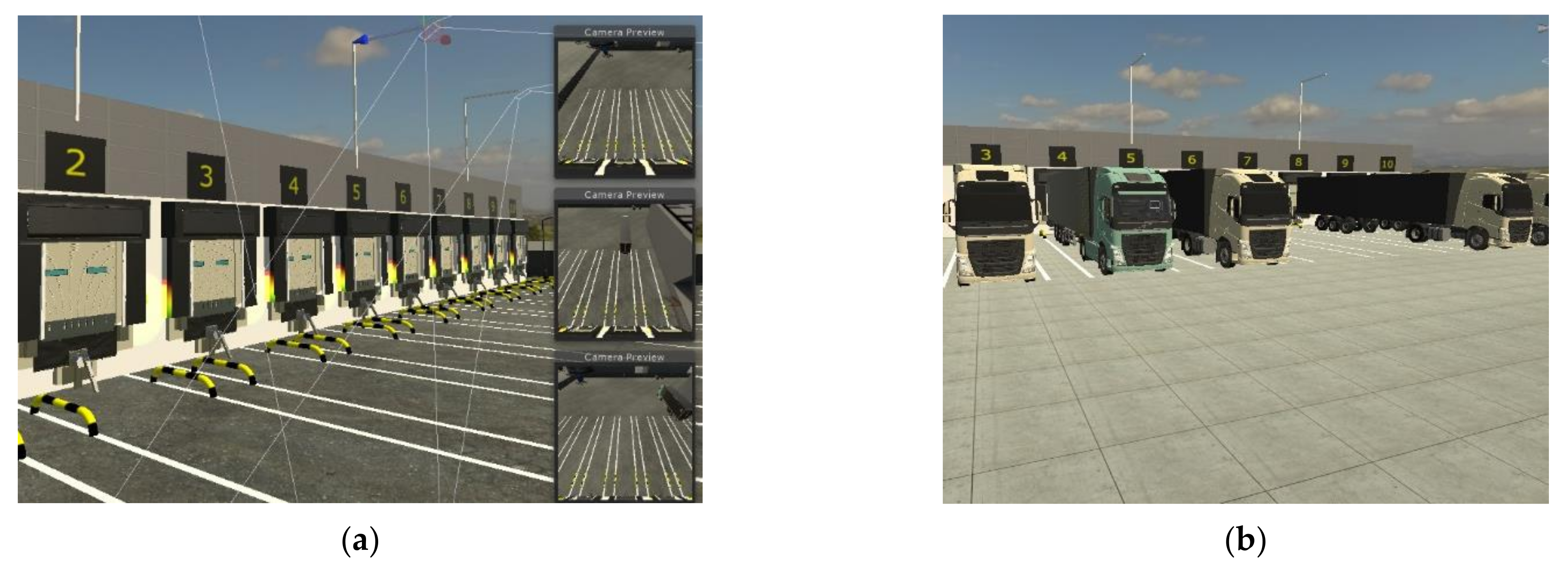

2.3. Simulation Environment

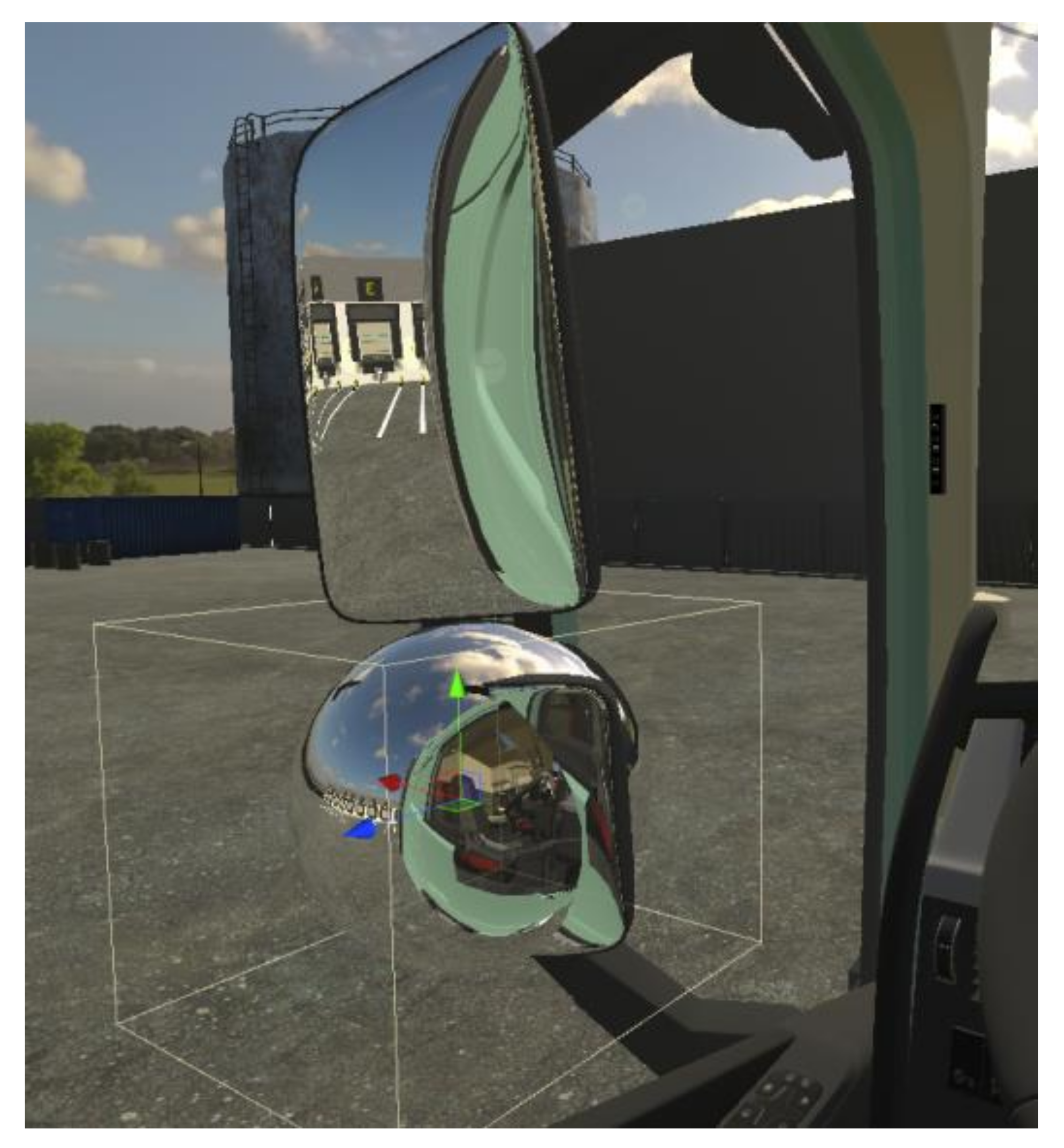

2.3.1. The Virtual Semi-Trailer Truck and the Distribution Centre

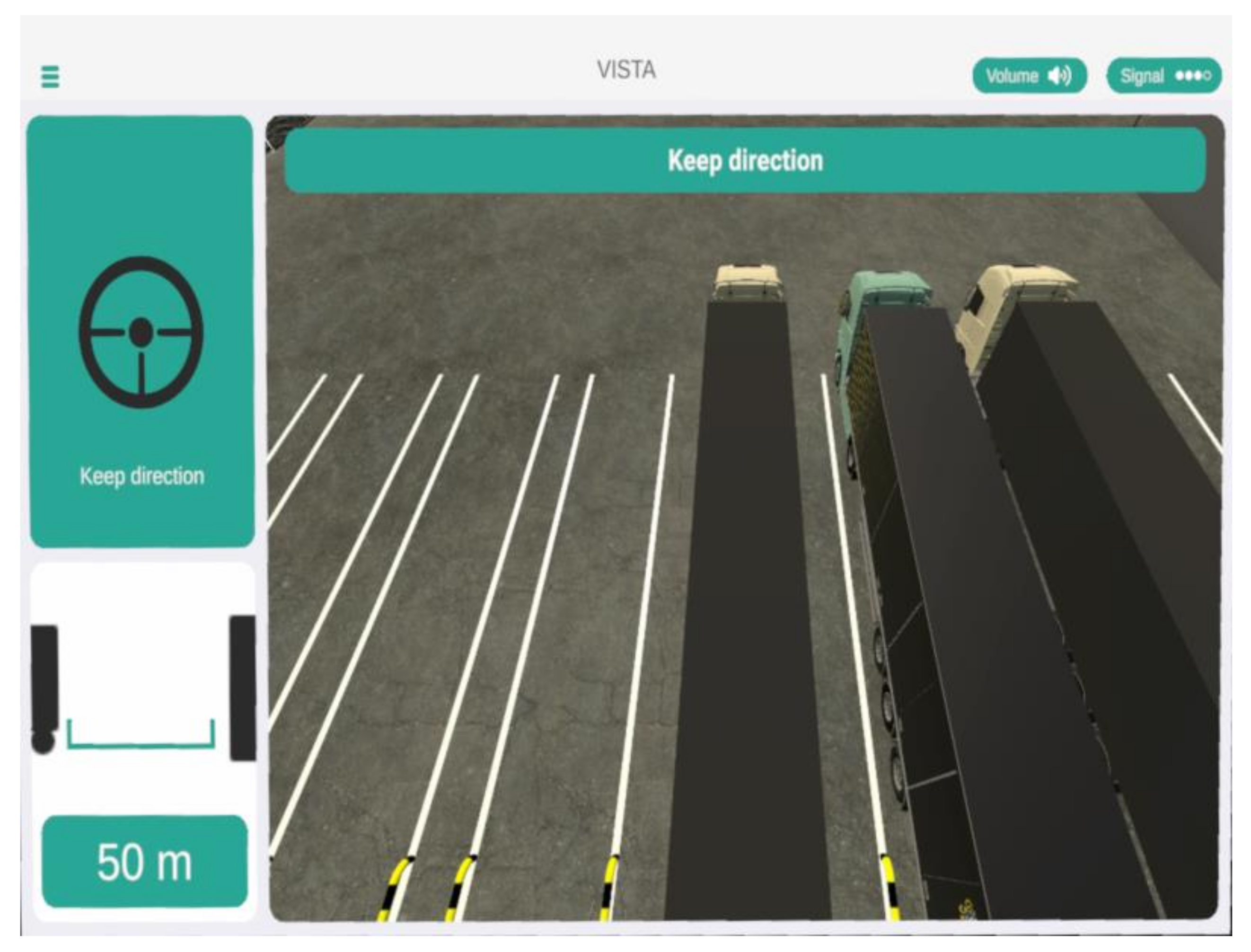

2.3.2. Driver Assistance Human-Machine Interface (HMI)

2.3.3. Integration with Path Tracking Controller

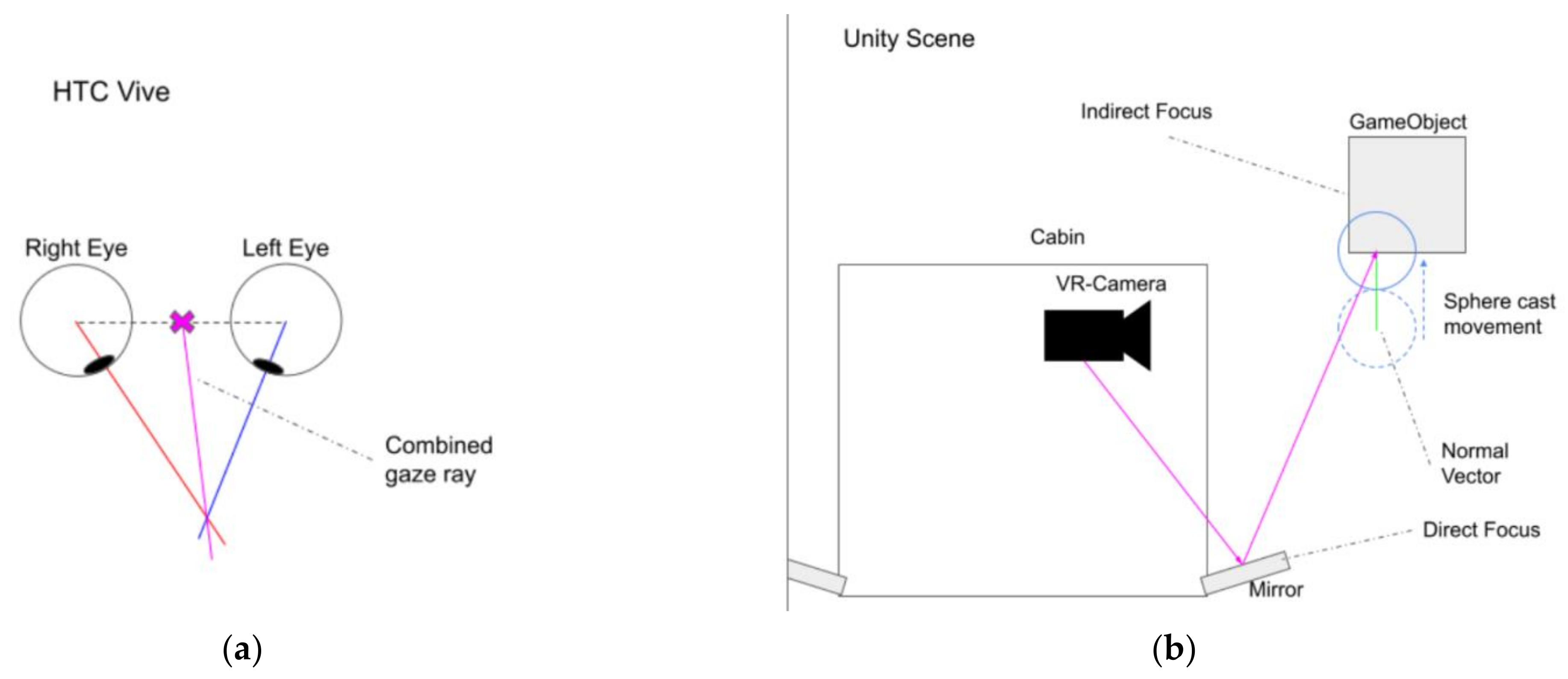

2.3.4. Tracking Systems

2.3.5. Data Recorder and Player

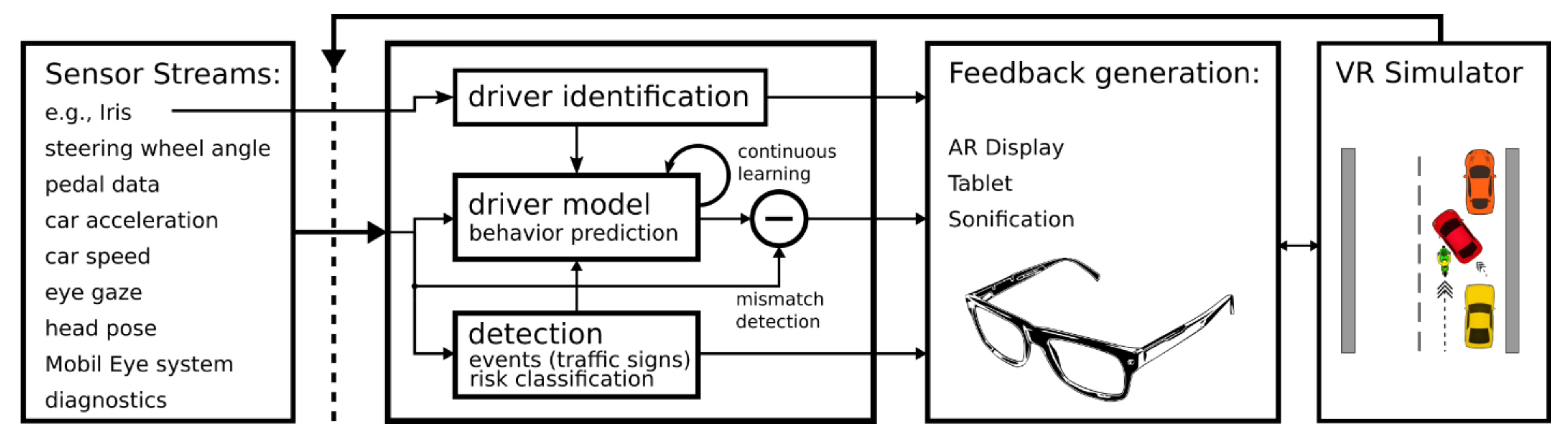

3. Driver Behaviour Analysis

3.1. Driver Expert Interview

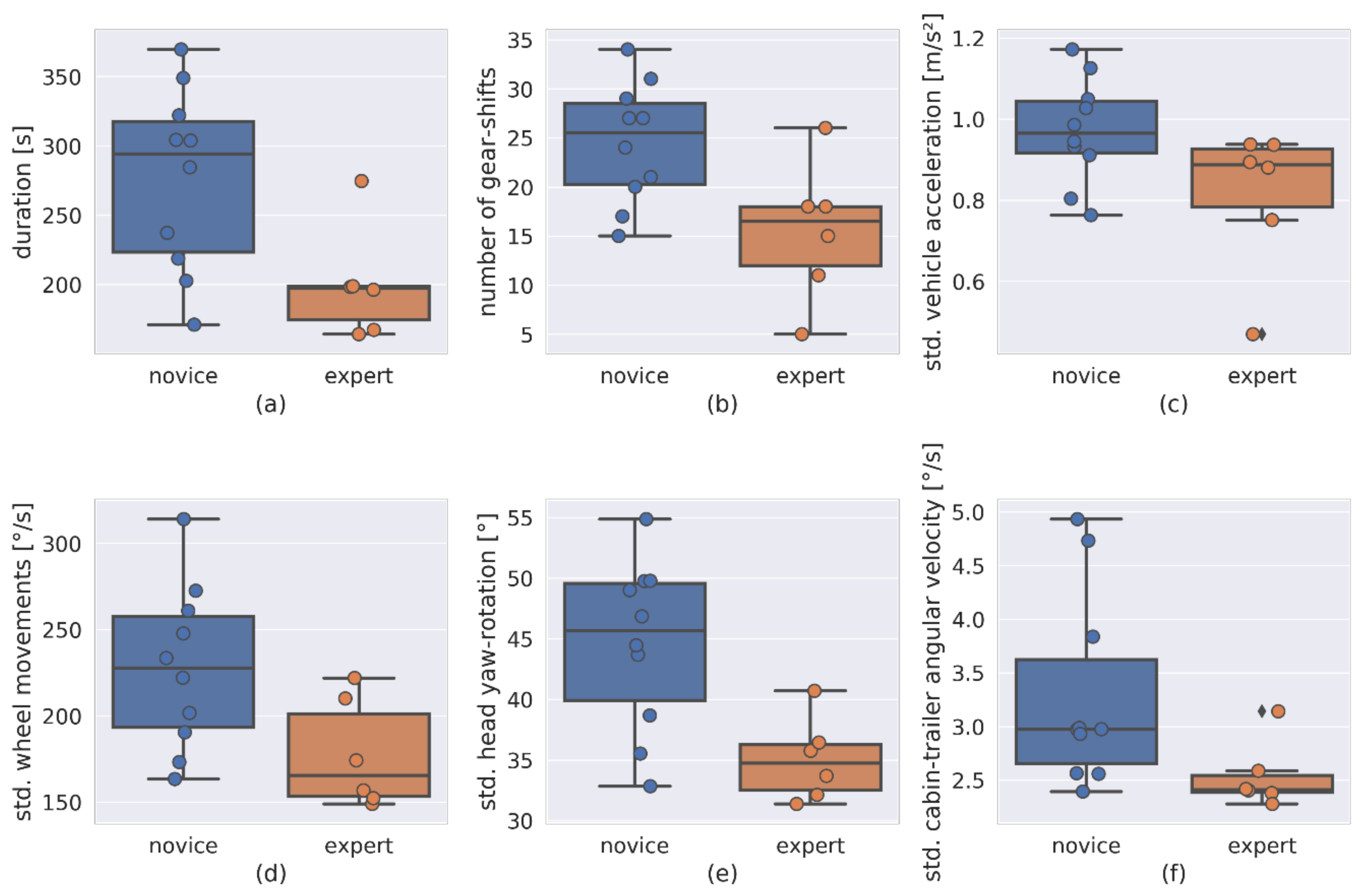

3.2. Visualization of Expertise Performance Indicators

3.3. Expertise Estimation Using Machine Learning

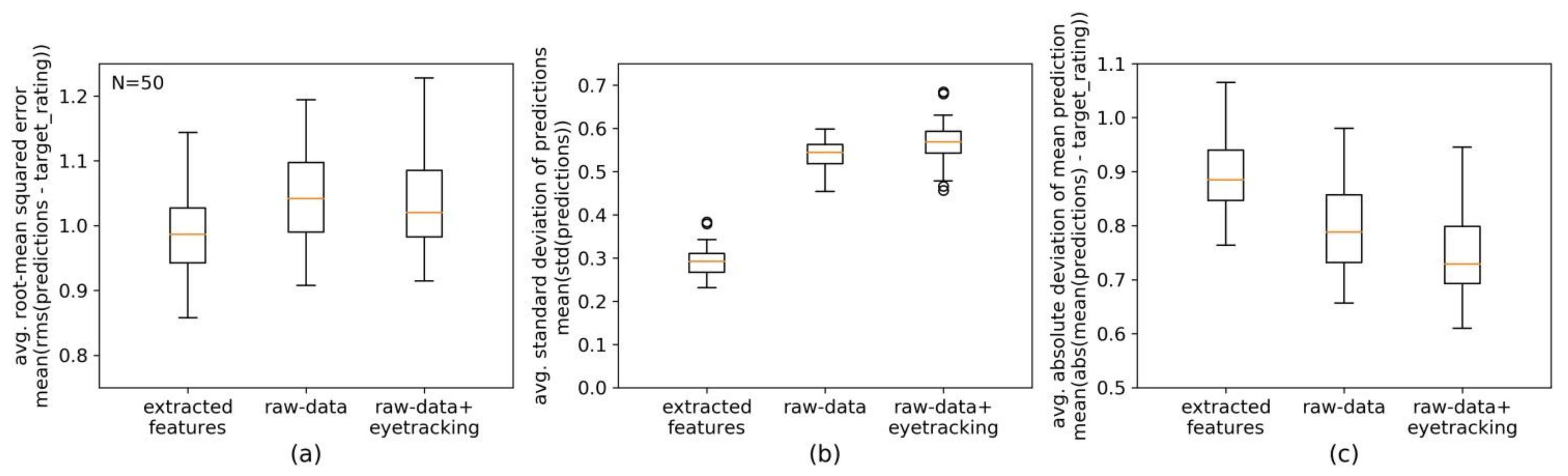

3.4. First Evaluation of the Behaviour Analysis Module

4. Outcomes and Conclusions

5. Future Work

Author Contributions

Funding

Institutional Review Board Statement

Informed Consent Statement

Data Availability Statement

Conflicts of Interest

Publisher’s Note: MDPI stays neutral with regard to jurisdictional claims in published maps and institutional affiliations.

© 2021 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (https://creativecommons.org/licenses/by/4.0/).


Ribeiro, P.; Krause, A.F.; Meesters, P.; Kural, K.; van Kolfschoten, J.; Büchner, M.-A.; Ohlmann, J.; Ressel, C.; Benders, J.; Essig, K. A VR Truck Docking Simulator Platform for Developing Personalized Driver Assistance. Appl. Sci. 2021, 11, 8911. https://doi.org/10.3390/app11198911
