AR in the Architecture Domain: State of the Art

Abstract

Featured Application

1. Introduction

- Defining AR framed in the path of architectural knowledge;

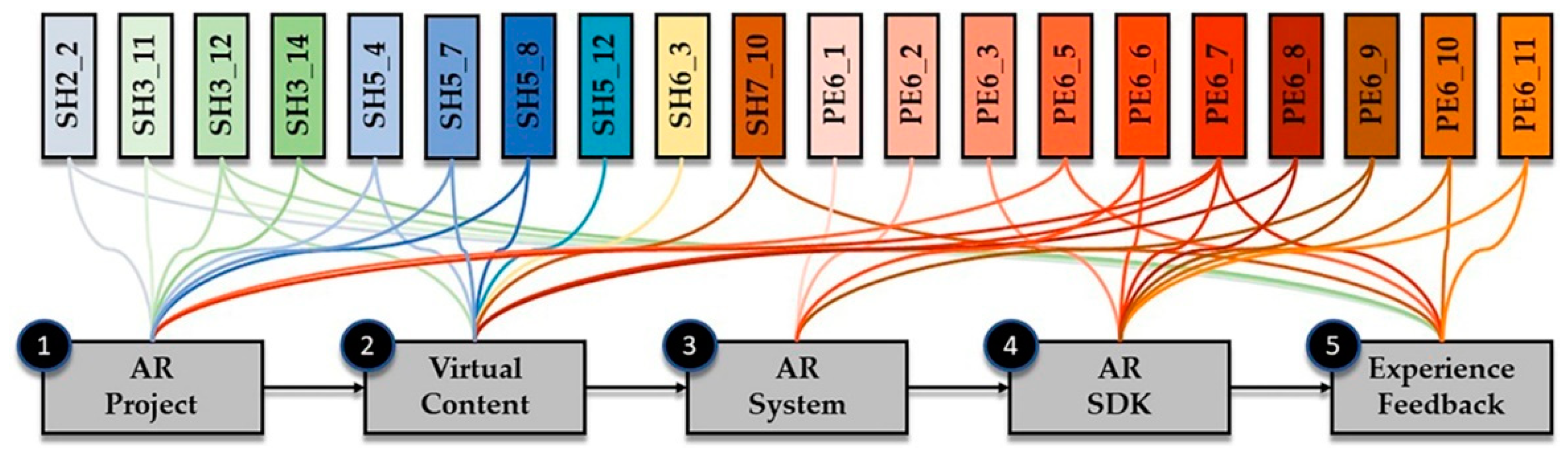

- Organizing the AR pipeline, highlighting the main aspects of the chain;

- Outlining the state of the art of AR in the architecture domain;

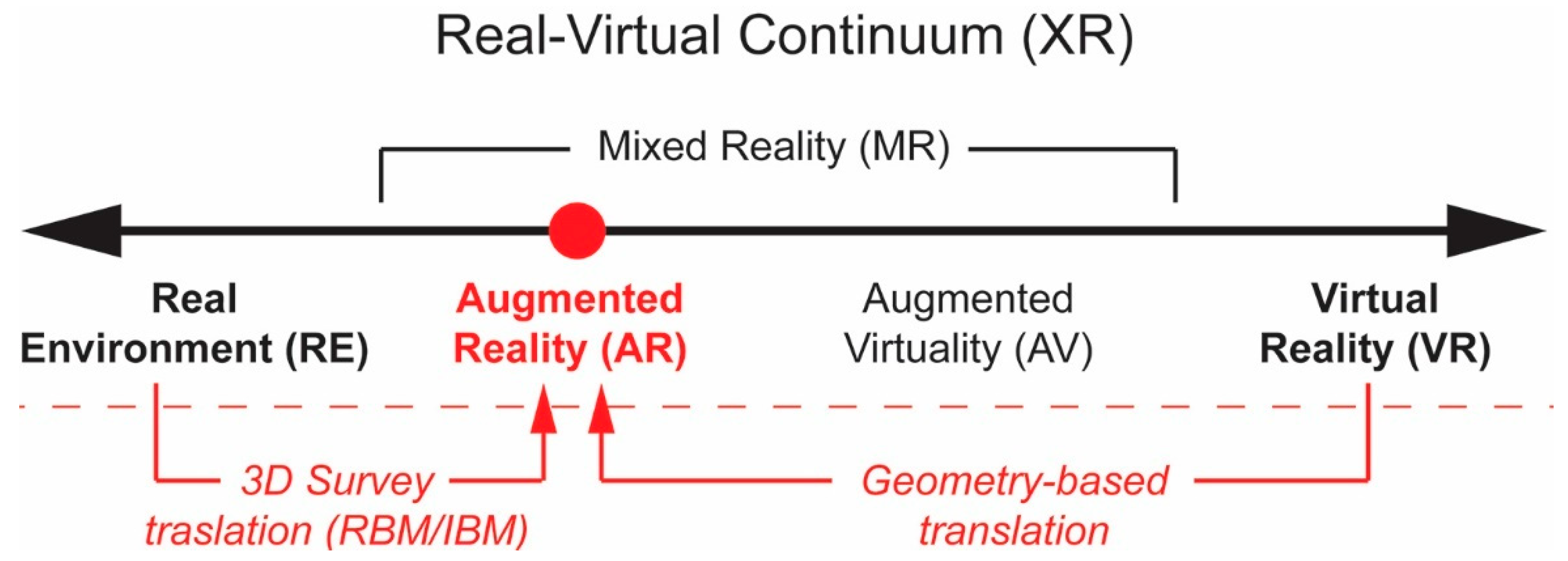

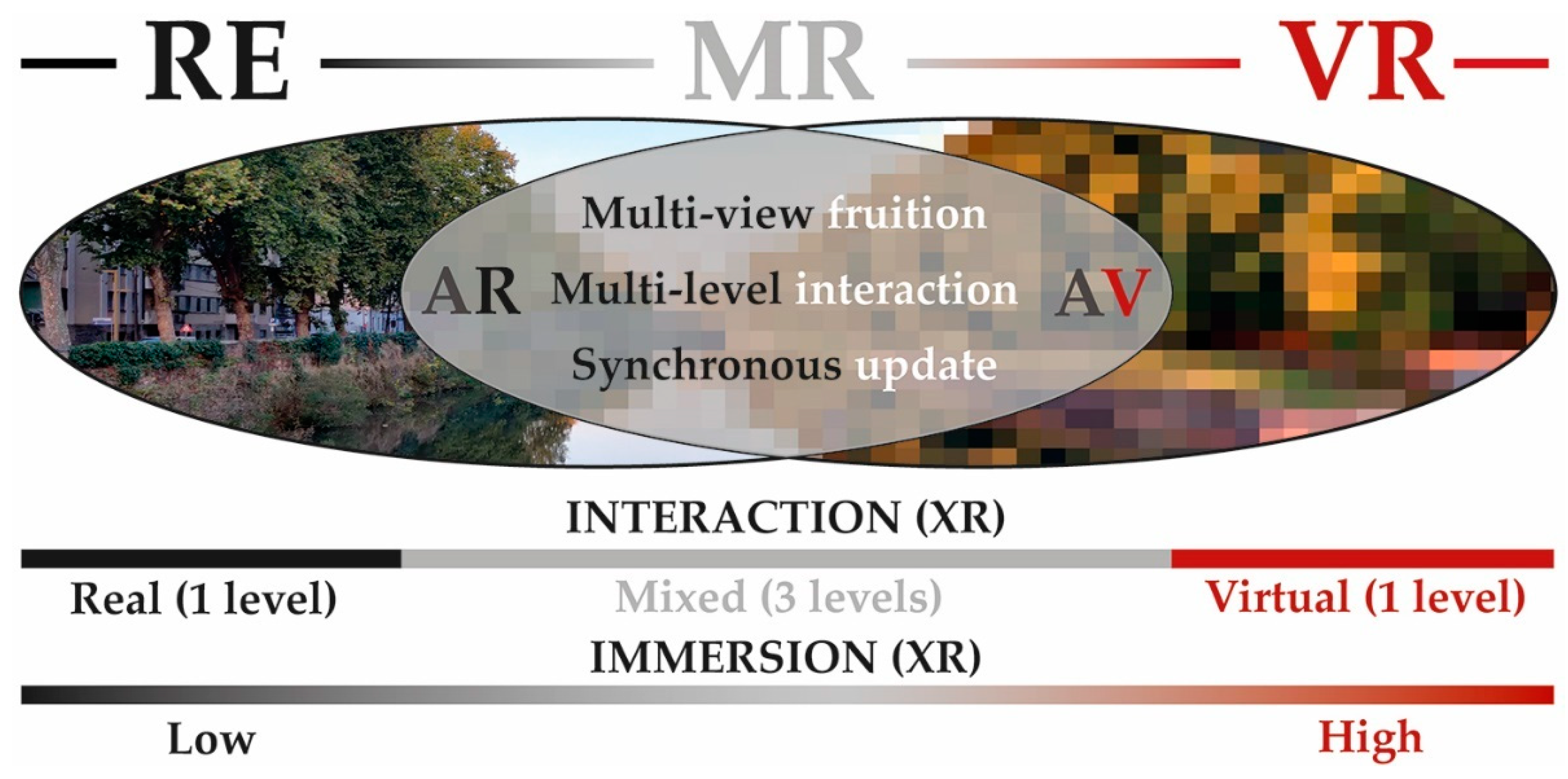

2. AR in the Real-Virtual Continuum

- It must merge the real architectural environment, or a portion of it, with virtual data in a common visualization platform on the device;

- It must work in real time, reaching a synchronous update between the movements in the real space and the simulated virtual one;

- It must allow a high level of engagement, not constrained to a single point of view, moving freely in the architectonic space or around it;

- It must allow a variable level of interaction between the users, the real world, and the virtual contents.

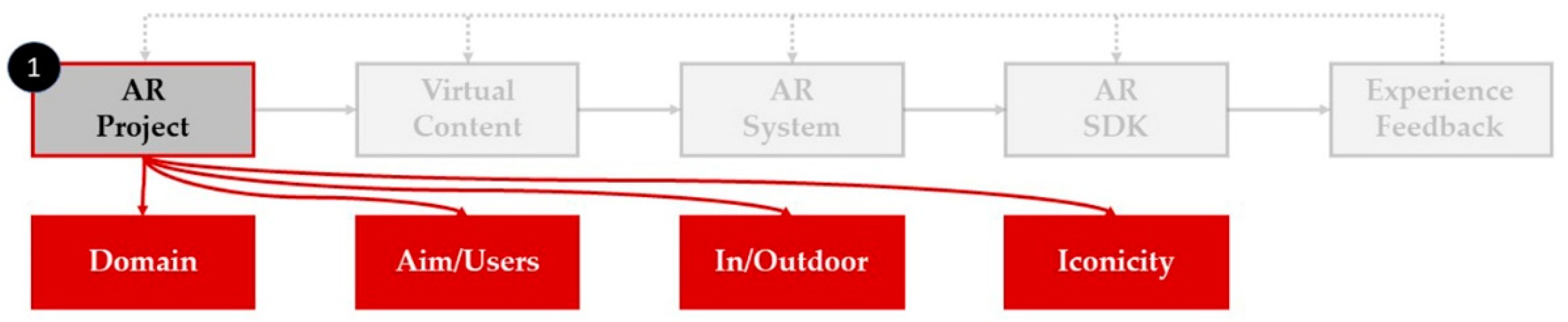

3. AR Project

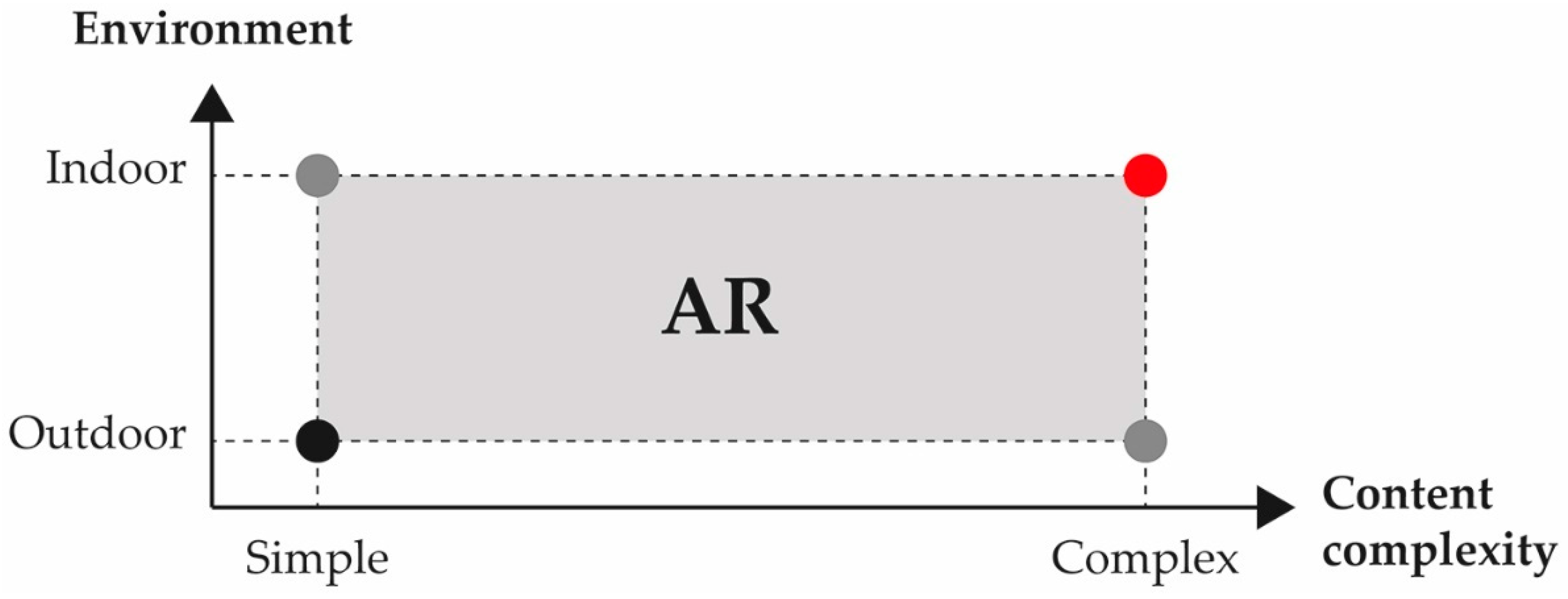

3.1. AR Domain

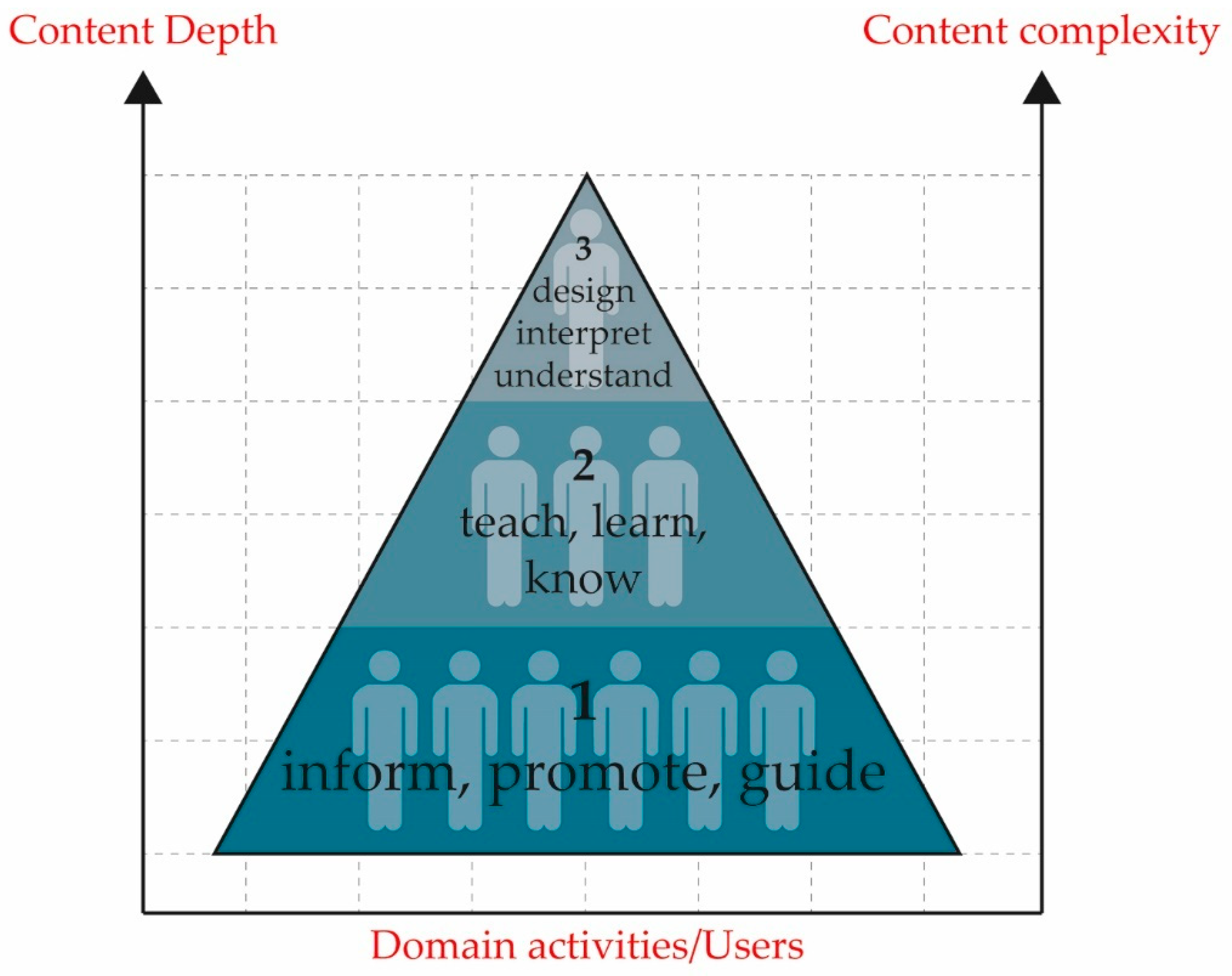

3.2. AR Aim and Users

- Inform, promote, guide (Level I of knowledge transfer);

- Teach, learn, know (Level II of knowledge transfer);

- Design, interpret, understand (Level III of knowledge transfer).

3.3. Outdoor/Indoor Applications

3.4. AR Iconicity

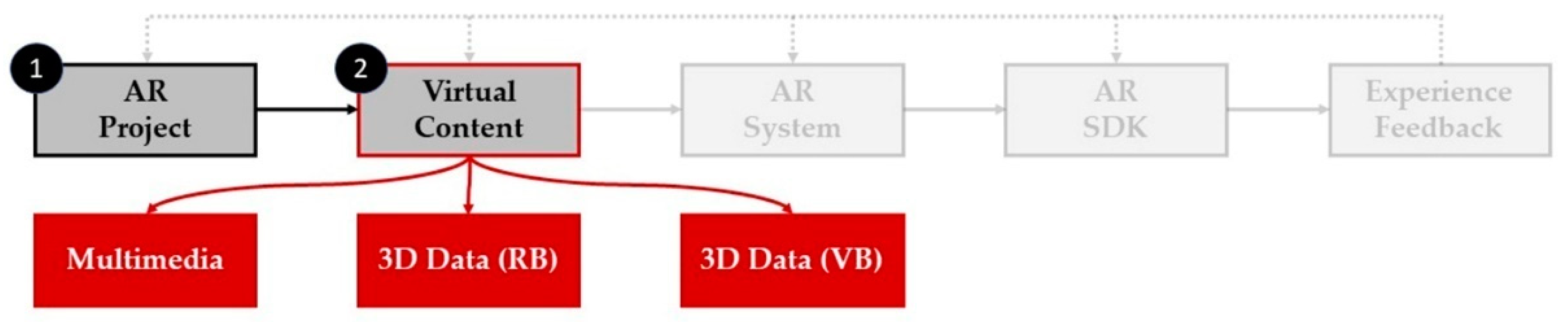

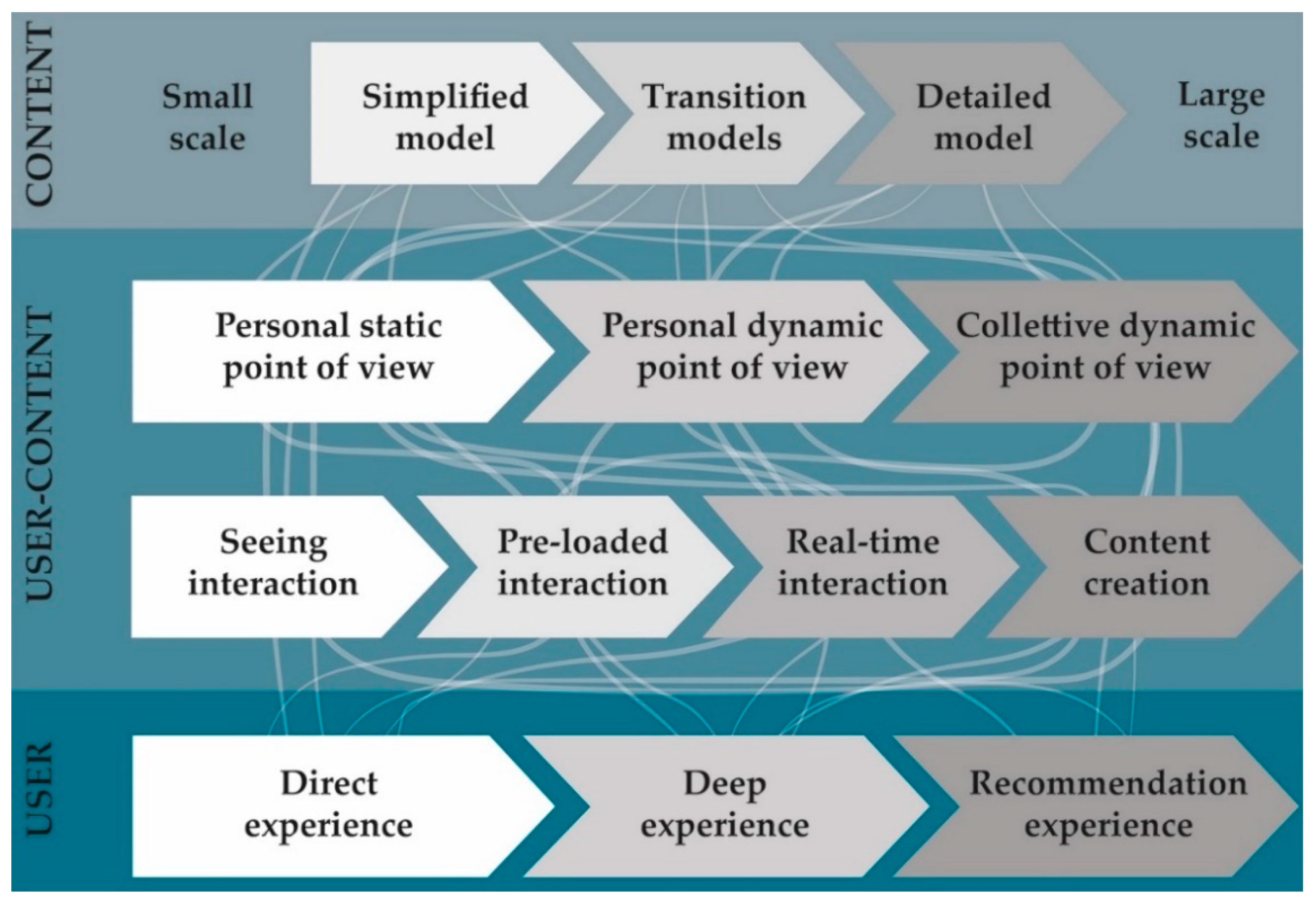

4. AR Virtual Content

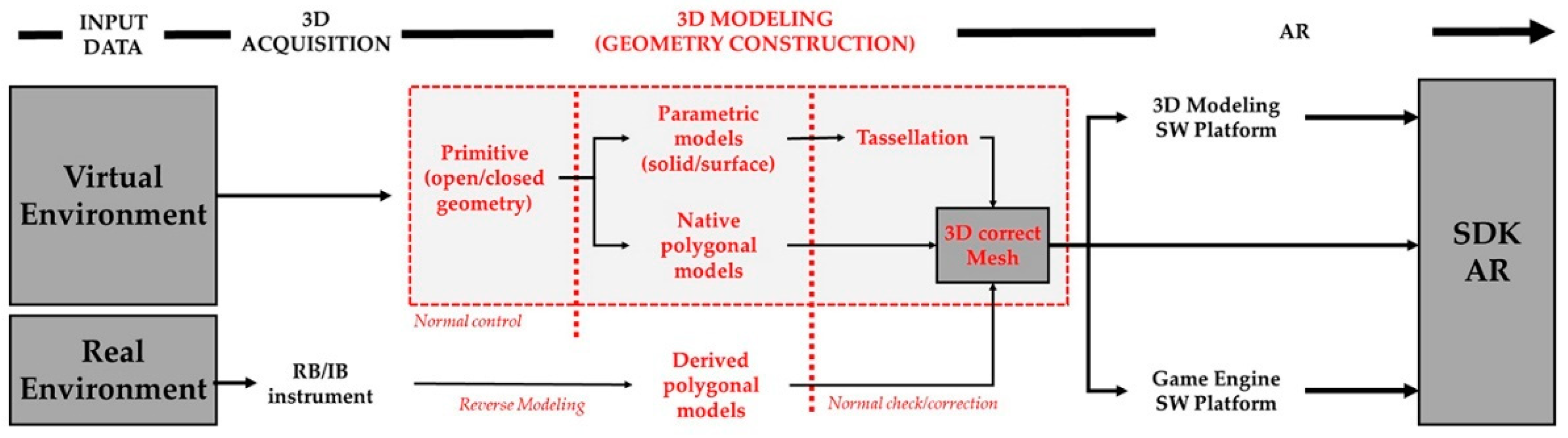

4.1. Reality-Based 3D Models

4.2. Virtual-Based 3D Models

4.3. Extension of 3D Models to AR

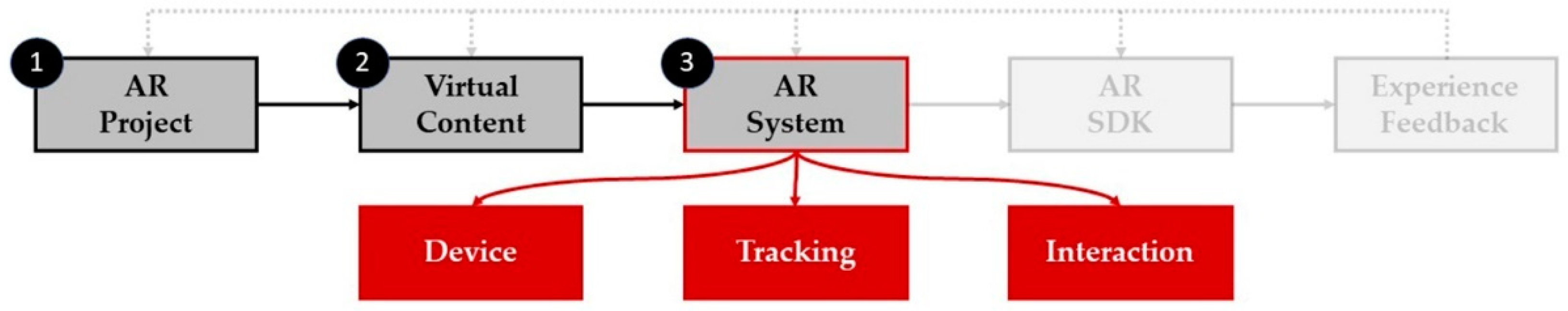

5. AR System

- Devices: the HW system through which AR is used, analyzing instrument capacities concerning AR aims;

- Tracking and registration: related to the device, real environment, and virtual model reference systems;

- Interaction interfaces: the relation between the virtual/real data and the user, crucial to how AR content is visualized and used.
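The registration item above, which relates the device, real environment, and virtual model reference systems, can be illustrated with a minimal sketch. It assumes each pose is a rigid transform (a yaw rotation about the vertical axis plus a translation) expressed in a shared world frame; the function names and the yaw-only simplification are illustrative assumptions, not taken from any AR SDK.

```python
import math


def rigid_transform(yaw_deg, tx, ty, tz):
    """4x4 homogeneous transform: rotation about the vertical z axis, then translation."""
    c = math.cos(math.radians(yaw_deg))
    s = math.sin(math.radians(yaw_deg))
    return [
        [c, -s, 0.0, tx],
        [s, c, 0.0, ty],
        [0.0, 0.0, 1.0, tz],
        [0.0, 0.0, 0.0, 1.0],
    ]


def mat_mul(a, b):
    """Product of two 4x4 matrices stored as nested lists."""
    return [[sum(a[i][k] * b[k][j] for k in range(4)) for j in range(4)]
            for i in range(4)]


def invert_rigid(m):
    """Inverse of a rigid transform: rotation R^T, translation -R^T t."""
    r_t = [[m[j][i] for j in range(3)] for i in range(3)]
    t = [m[i][3] for i in range(3)]
    new_t = [-sum(r_t[i][k] * t[k] for k in range(3)) for i in range(3)]
    return [r_t[i] + [new_t[i]] for i in range(3)] + [[0.0, 0.0, 0.0, 1.0]]


def apply(m, point):
    """Apply a 4x4 transform to a 3D point."""
    v = [point[0], point[1], point[2], 1.0]
    return tuple(sum(m[i][k] * v[k] for k in range(4)) for i in range(3))


def model_to_device(model_in_world, device_in_world, point_model):
    """Express a point of the virtual model in the device reference system."""
    world_to_device = invert_rigid(device_in_world)
    return apply(mat_mul(world_to_device, model_in_world), point_model)


# Hypothetical poses: model placed 10 m along world x; device at (10, -5, 0),
# rotated 90 degrees so that its x axis points toward the model.
model_pose = rigid_transform(0.0, 10.0, 0.0, 0.0)
device_pose = rigid_transform(90.0, 10.0, -5.0, 0.0)
print(model_to_device(model_pose, device_pose, (0.0, 0.0, 0.0)))
```

In this sketch, tracking would update `device_pose` every frame, while registration fixes `model_pose` once with respect to the real environment.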

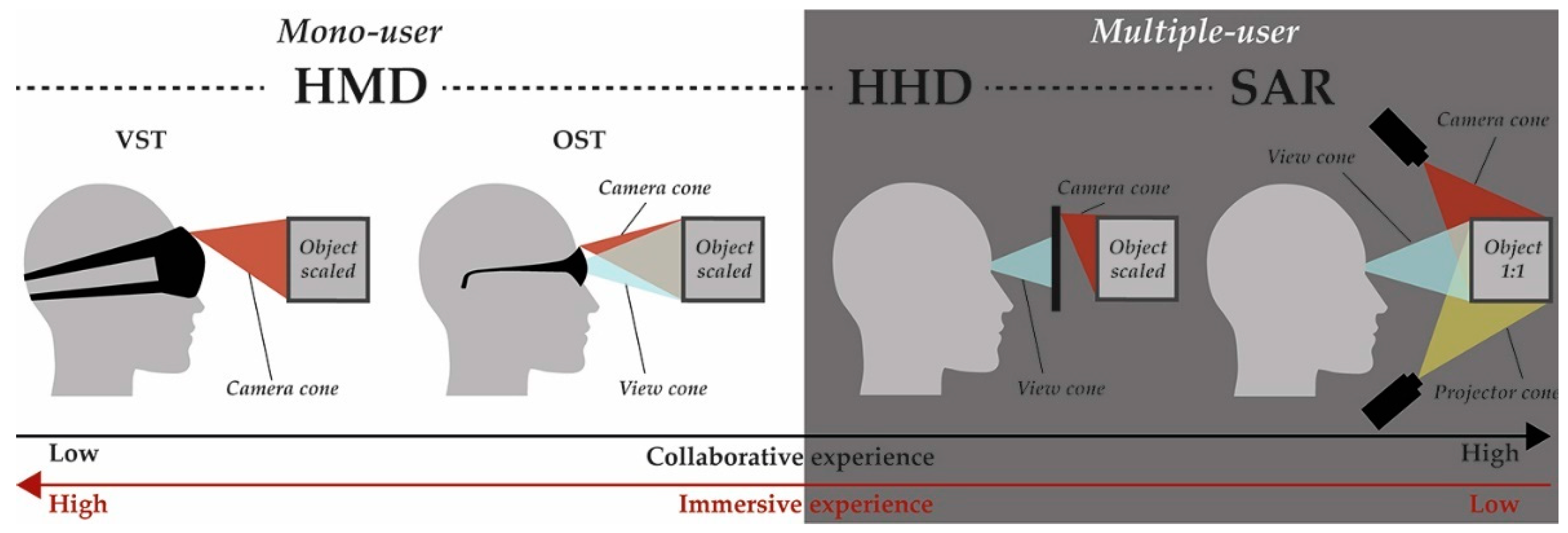

5.1. Devices

5.1.1. Display

5.1.2. Cameras and Tracking Devices

5.1.3. Computer

5.1.4. Network Connectivity

5.1.5. Input Devices

5.1.6. Social Acceptance

5.2. Tracking and Registration

5.2.1. Marker Tracking

- The relationship established between the marker and the real scene;

- The possibility of preserving its location;

- The lighting conditions.

5.2.2. Markerless Tracking

5.3. Interface Interaction

5.3.1. Tangible Interfaces

5.3.2. Sensor-Based Interfaces

5.3.3. Collaborative Interfaces

5.3.4. Hybrid Interfaces

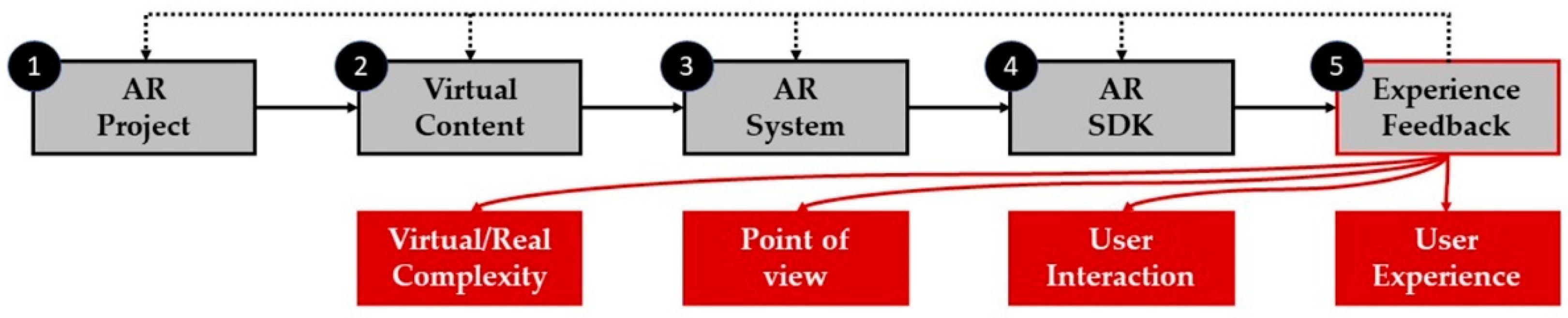

6. AR Development Tools

6.1. Open-Source SDKs

6.2. Free/Proprietary SDKs

6.3. Commercial SDKs

7. AR User Experience

- How this AR visualization transmits content;

- How this content is rooted in personal experience;

- How AR experience is translated into a better understanding of the real phenomena.

8. Applications

- Area 1: Cultural heritage communication/valorization, built heritage enhancement/management, building storytelling, cultural tourism, monument exploration;

- Area 2: AEC, design process, project planning, co-design, monitoring construction, building process, BIM pipeline, collaborative process;

- Area 3: Education, training activities, knowledge transmission, EdTech, professional skills, civil engineering study, assembling learning.

8.1. AR for Build Deepening and Enhancement

- Applications to improve the visitor experience in contexts in which heritage is placed, distributed, or collected. Cultural tourism is an essential economic and social contributor worldwide. This area is closely related to education [170], valorization, and tourist routes in general, improving the accessibility of information on both existing and no-longer-existing urban places [171,172] or architecture [150]. The VALUE project [173] highlights user location, multimodal interaction, user understanding, and gamification as the four main pillars of innovative use of built heritage. The authors of [167,174] analyze the experiential aspect of in situ AR communication in cultural heritage and heritage management. The complexity of shapes and their interaction with ambient light play a dominant role in AR visualization, increasing or decreasing the sense of presence [175]. Some studies investigate the impact of AR technology on the visitor’s learning experience, drawing on both the cultural tourism and consumer psychology domains [176]. Although many studies have focused on mobile AR (MAR) in the cultural tourism domain, few have explored the adoption of augmented reality smart glasses (ARSGs); Han et al. [177] helped to highlight the adoption of this technology in cultural tourism. The ability of mobile devices to combine location instruments such as GPS with AR is helpful in guided tours and tourist storytelling, furthering the revivification of cultural heritage sites. Hincapié et al. [178] highlight the differences in learning and user perception with and without mobile apps, underlining the improvement in the cultural experience. This aspect has also been explored by Han et al. [4], who studied the experiential value of AR on destination-related behavior, investigating how the multidimensional components of AR’s experiential value support behavior through AR satisfaction and experiential authenticity.
In general, AR contributes to cultural dissemination and the perception of tangible remains, while offering virtual reconstructions and complementary information, sounds, images, etc. This capacity, well known and applied in the cultural heritage field, can be extended to other domains and territories, e.g., attracting people to rural areas and improving their social and economic situation [179];

- To visualize reconstructive interventions, or simulations of interventions, on the artifacts. This second domain is addressed to experts or technicians in the field, who are called to read the built environment and manage it in the best possible way, planning any intervention operations. This activity can be conducted at the urban scale by introducing decision-making and participatory processes, as the SquareAR project has shown [180], or at the architectonic scale, using AR as a tool for reading the building to support the restoration process [181];

- To optimize and enrich the management and exploration of the monuments. This last strand is aimed at the managers or owners of the assets, using AR to manage the heritage and its main features better, increasing its value in terms of visibility and induction [182]. Starting from the impact of the surrounding architectural heritage [183], some articles investigate the value of AR in providing a practical guide to the existing structures, increasing the accessibility and value of the asset [184]. Other research analyzes AR as a technology applied through devices and wearables for the promotion and marketing/advertising of tourist destinations [185], replacing the traditional tourist channels of explanation of monuments and architectures in situ [186].
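The combination of GPS and AR mentioned above for guided tours can be sketched as follows. This is a minimal illustration, assuming a spherical Earth and a device that reports its latitude, longitude, and compass heading; the function names and the coordinates used are hypothetical and do not come from any of the cited projects.

```python
import math

EARTH_RADIUS_M = 6371000.0  # mean Earth radius (spherical approximation)


def distance_and_bearing(lat1, lon1, lat2, lon2):
    """Great-circle distance (m) and initial bearing (degrees from north)
    from the user position (lat1, lon1) to a point of interest (lat2, lon2)."""
    phi1, phi2 = math.radians(lat1), math.radians(lat2)
    dphi = phi2 - phi1
    dlmb = math.radians(lon2 - lon1)

    # Haversine formula for the distance.
    a = (math.sin(dphi / 2) ** 2
         + math.cos(phi1) * math.cos(phi2) * math.sin(dlmb / 2) ** 2)
    distance = 2 * EARTH_RADIUS_M * math.asin(math.sqrt(a))

    # Initial bearing, normalized to [0, 360).
    y = math.sin(dlmb) * math.cos(phi2)
    x = (math.cos(phi1) * math.sin(phi2)
         - math.sin(phi1) * math.cos(phi2) * math.cos(dlmb))
    bearing = (math.degrees(math.atan2(y, x)) + 360.0) % 360.0
    return distance, bearing


def relative_heading(bearing_deg, device_heading_deg):
    """Signed angle in [-180, 180) between the device heading and the target;
    positive values mean the target lies to the right of the view direction."""
    return (bearing_deg - device_heading_deg + 180.0) % 360.0 - 180.0


# Hypothetical user and monument positions (decimal degrees).
dist, brg = distance_and_bearing(41.8902, 12.4922, 41.8925, 12.4853)
print(round(dist), round(brg), round(relative_heading(brg, 270.0)))
```

An AR guide could use the relative heading to decide whether a monument label falls inside the camera field of view, and the distance to scale or hide it.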

8.2. AR for the Architectural Design and Building Process

- In project planning and co-design activities, the user can preview and share the building and urban context simulation. Urban/architecture planning requires a hierarchy of decision-making activities, enhanced and optimized by collaborative interfaces. Co-design activities may involve both specialists and citizens, giving them a decisive role in the urban and architecture pre-organization choices. Augmented reality can assume the role of a co-working platform. At the urban scale, AR is considered a practical design and collaboration tool within design teams. One of the first examples was the ARUDesigner project, which evaluated virtual urban projects in a real, natural, and familiar workspace [194]. Urban areas represent a meeting point between stakeholders’ needs and public needs. For that reason, participatory processes are necessary, and AR can increase the range of participants and the intensity of participation, engaging citizens in collaborative urban planning processes [195]. At the architectural scale, before construction, virtual walks around the future buildings may identify changes that must be applied without causing problems and delays in the building execution phase. In this way, communication delays can be minimized, optimizing project management processes [196]. Some applications can overlay actual design alternatives onto drawings through MARs, allowing for flexible wall placement and application of interior and exterior wall materials, developing new, engaging architectural co-design processes [197]. Multitouch user interfaces (MTUIs) on mobile devices can be effective tools for improving co-working practices by providing new ways for designers to interact on the same project, from the cognitive and representation points of view [198];

- In the construction monitoring process: the real-time overlap between the design and the as-built model allows for immediate validation of the progress of construction. It can also highlight the inconsistencies between the design documentation, 3D model, and the actual state [199], assessing their effects and allowing for rapid response, reducing project risk. Structural elements can be monitored from the early construction stages to their maintenance [190]. Monitoring and controlling construction progress, and comparing as-built with as-planned project data, require quick access to easily understandable project information; the authors of [200,201] present a model that overlays progress and planned data on the user’s view of the physical environment. Moreover, in recent years RPAS platforms integrated with AR visualization have been applied to the monitoring and inspection of buildings [202];

- In the reading and progress of AEC work, AR allows for supplemental or predictive information at the construction site—for example, by superimposing the structural layout with the architectural and plant systems in space, reducing errors during construction. An example is described by the D4AR project [203]. This ability to incorporate information on multiple levels can help workers to process complex information in order to document and monitor the in-progress construction [201]; it can also help workers to identify any on-site problems and avoid rework, quickly optimizing productivity;

- AR technology supports some phases of reading, construction, and assembly on-site, for example by facilitating the reading of plans, construction details, and the positioning of structural elements, minimizing errors caused by misinterpretation [204]. In on-site AR, however, this technology should be used carefully to avoid distraction caused by the screen and loss of awareness of the surrounding context.
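The comparison between as-planned and as-built data described in the list above can be sketched as a simple deviation check. This is a minimal illustration, assuming each element is reduced to a single reference point in a shared site coordinate system; the element identifiers, the coordinates, and the 2 cm tolerance are hypothetical values, not figures from the cited studies.

```python
import math


def find_deviations(as_planned, as_built, tolerance_m=0.02):
    """Compare as-built element positions with the as-planned model.

    Both arguments map an element id to an (x, y, z) position in metres.
    Returns the elements whose deviation exceeds the tolerance, sorted from
    the largest deviation down, and the planned elements not yet surveyed.
    """
    out_of_tolerance = []
    missing = []
    for element_id, planned_pos in as_planned.items():
        built_pos = as_built.get(element_id)
        if built_pos is None:
            missing.append(element_id)
            continue
        deviation = math.dist(planned_pos, built_pos)
        if deviation > tolerance_m:
            out_of_tolerance.append((element_id, deviation))
    out_of_tolerance.sort(key=lambda item: item[1], reverse=True)
    return {"out_of_tolerance": out_of_tolerance, "missing": missing}


# Hypothetical columns C1, C2 and beam B1 (positions in metres).
planned = {"C1": (0.0, 0.0, 0.0), "C2": (5.0, 0.0, 0.0), "B1": (0.0, 0.0, 3.0)}
built = {"C1": (0.005, 0.0, 0.0), "C2": (5.03, 0.04, 0.0)}
report = find_deviations(planned, built)
print(report)
```

In an AR overlay, elements listed as out of tolerance could be highlighted on the user's view of the site, while missing ones indicate work that has not yet been built or surveyed.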

8.3. AR for Architectural Training and Education

9. Conclusions

Funding

Conflicts of Interest

References

- Rabbi, I.; Ulla, S. A Survey on Augmented Reality Challenges and Tracking. Acta Graph. 2013, 24, 29–46.

- Van Krevelen, D.W.F.; Poelman, R. A survey of augmented reality technologies, applications and limitations. Int. J. Virtual Real. 2010, 9, 1–20.

- Carmigniani, J.; Furht, B.; Anisetti, M.; Ceravolo, P.; Damiani, E.; Ivkovic, M. Augmented reality technologies, systems and applications. Multimed. Tools Appl. 2011, 51, 341–377.

- Billinghurst, M.; Clark, A.; Lee, G. A survey of augmented reality. Found. Trends Hum. Comput. Interact. 2015, 8, 73–272.

- Broschart, D.; Zeile, P. ARchitecture: Augmented Reality in Architecture and Urban Planning. In Proceedings of the Digital Landscape Architecture 2015, Dessau, Germany, 4–6 June 2015; Buhmann, E., Ervin, S.M., Pietsch, M., Eds.; Anhalt University of Applied Sciences: Bernburg, Germany; VDE VERLAG GMBH: Berlin/Offenbach, Germany, 2015; pp. 111–118.

- Osorto, C.; Moisés, D.; Chen, P.-H. Application of mixed reality for improving architectural design comprehension effectiveness. Autom. Constr. 2021, 126, 103677.

- Bekele, M.K.; Pierdicca, R.; Frontoni, E.; Malinverni, E.S.; Gain, J. A Survey of Augmented, Virtual, and Mixed Reality for Cultural Heritage. J. Comput. Cult. Herit. 2018, 11, 1–36.

- Wang, F.Y. Is culture computable? IEEE Intell. Syst. 2009, 24, 2–3.

- Haydar, M.; Roussel, D.; Maïdi, M.; Otmane, S.; Mallem, M. Virtual and augmented reality for cultural computing and heritage: A case study of virtual exploration of underwater archaeological sites (preprint). Virtual Real. 2011, 15, 311–327.

- Zhou, F.; Been-Lirn Duh, H.; Billinghurst, M. Trends in augmented reality tracking, interaction and display: A review of ten years of ISMAR. In Proceedings of the 7th IEEE/ACM International Symposium on Mixed and Augmented Reality, Cambridge, UK, 15–18 September 2008; pp. 193–202.

- Costanza, E.; Kunz, A.; Fjeld, M. Mixed reality: A Survey. In Human Machine Interaction. Lecture Notes in Computer Science; Lalanne, D., Kohlas, J., Eds.; Springer: Berlin/Heidelberg, Germany, 2009; Volume 5440, pp. 47–68.

- Sanna, A.; Manuri, F. A survey on applications of augmented reality. Adv. Comput. Sci. 2016, 5, 18–27.

- Milovanovic, J.; Guillaume, M.; Siret, D.; Miguet, F. Virtual and Augmented Reality in Architectural Design and Education: An Immersive Multimodal Platform to Support Architectural Pedagogy. In Proceedings of the 17th International Conference, CAAD Futures 2017, Istanbul, Turkey, 12 July 2017; Çağdaş, G., Özkar, M., Gül, L.F., Gürer, E., Eds.; HAL Archives-Ouvertes. pp. 1–20.

- Clini, P.; Quattrini, R.; Frontoni, E.; Pierdicca, R.; Nespeca, R. Real/Not Real: Pseudo-Holography and Augmented Reality Applications for Cultural Heritage. In Handbook of Research on Emerging Technologies for Digital Preservation and Information Modeling; Ippolito, A., Cigola, M., Eds.; IGIGLOBAL: Pennsylvania, PA, USA, 2017; pp. 201–227.

- del Amo, I.F.; Erkoyuncu, J.A.; Roy, R.; Palmarini, R.; Onoufriou, D. A systematic review of Augmented Reality content-related techniques for knowledge transfer in maintenance applications. Comput. Ind. 2018, 103, 47–71.

- Milgram, P.; Kishino, F. A taxonomy of mixed reality visual displays. IEICE Trans. Inf. Syst. 1994, E77, 1321–1329.

- Milgram, P.; Takemura, H.; Utsumi, A.; Kishino, F. Augmented reality: A class of displays on the reality-virtuality continuum. In Proceedings of the SPIE 2351, Telemanipulator and Telepresence Technologies, Photonics for Industrial Applications, Boston, MA, USA, 31 October–4 November 1994; Das, H., Ed.; SPIE: Bellingham, WA, USA, 1995; pp. 282–292.

- Russo, M.; Menconero, S.; Baglioni, L. Parametric surfaces for augmented architecture representation. Int. Arch. Photogramm. Remote Sens. Spat. Inf. Sci. 2019, XLII-2/W9, 671–678.

- Azuma, R.T. A survey of augmented reality. Presence Teleoperat. Virtual Environ. 1997, 6, 355–385.

- Casella, G.; Coelho, M. Augmented heritage: Situating augmented reality mobile apps in Cultural Heritage communication. In Proceedings of the 2013 International Conference on Information Systems and Design of Communication, Lisboa, Portugal, 11 July 2013; Costa, C.J., Aparicio, M., Eds.; Association for Computing Machinery (ACM): New York, NY, USA, 2013; pp. 138–140.

- Skarbez, R.; Missie, S.; Whitton, M.C. Revisiting Milgram and Kishino’s Reality-Virtuality Continuum. Front. Virtual Real. 2021, 2, 1–8.

- Azuma, R.T.; Baillot, Y.; Behringer, R.; Feiner, S.; Julier, S.; MacIntyre, B. Recent advances in augmented reality. IEEE Trans. Comput. Graph. Appl. 2001, 21, 34–47.

- Zhao, Q. A survey on virtual reality. Sci. Chin. Ser. F Inf. Sci. 2009, 52, 348–400.

- Bruder, G.; Steinicke, F.; Valkov, D.; Hinrichs, K. Augmented Virtual Studio for Architectural Exploration. In Proceedings of the Virtual Reality International Conference (VRIC 2010), Laval, France, 7–9 April 2010; Richir, S., Shirai, A., Eds.; Laval Virtual. pp. 1–8.

- Wang, X.; Schnabel, M.A. (Eds.) Mixed Reality in Architecture, Design, And Construction; Springer: Berlin, Germany, 2009.

- Quint, F.; Sebastian, K.; Gorecky, D. A Mixed-reality Learning Environment. Procedia Comput. Sci. 2015, 75, 43–48.

- Krüger, J.M.; Bodemer, D. Different types of interaction with augmented reality learning material. In Proceedings of the 6th International Conference of the Immersive Learning Research Network (iLRN 2020), San Luis Obispo, CA, USA, 21–25 June 2020; Economou, D., Klippel, A., Dodds, H., Peña-Rios, A., Lee, M., Beck, M.J.W., Pirker, J., Dengel, A., Peres, T.M., Richter, J., Eds.; IEEE: Piscataway, NJ, USA, 2020; pp. 78–85.

- Sidani, A.; Matoseiro Dinis, F.; Duarte, J.; Sanhudo, L.; Calvetti, D.; Santos Baptista, J.; Poças Martins, J.; Soeiro, A. Recent Tools and Techniques of BIM-Based Augmented Reality: A Systematic Review. J. Build. Eng. 2021, 42, 102500.

- Kornberger, M.; Clegg, S. The Architecture of Complexity. Cult. Organ. 2003, 9, 75–91.

- Carnevali, L.; Russo, M. CH representation between Monge’s projections and Augmented Reality. In Proceedings of the Art Collections: Cultural Heritage, Safety & Digital Innovation (ARCO), Firenze, Italy, 21–23 September 2020. Pending Publication.

- Yuan, Q.; Cong, G.; Ma, Z.; Sun, A.; Magnenat-Thalmann, N. Time-aware point-of-interest recommendation. In Proceedings of the 36th international ACM SIGIR conference on Research and development in information retrieval (SIGIR ‘13), Dublin, Ireland, 28 July–1 August 2013; Association for Computing Machinery (ACM): New York, NY, USA, 2013; pp. 363–372.

- Bryan, A. The New Digital Storytelling: Creating Narratives with New Media: Creating Narratives with New Media—Revised and Updated Edition; Praeger Pub: Westport, CT, USA, 2017.

- Schmalstieg, D.; Langlotz, T.; Billinghurst, M. Augmented Reality 2.0. In Virtual Realities; Brunnett, G., Coquillart, S., Welch, G., Eds.; Springer: Berlin/Heidelberg, Germany, 2011; pp. 13–37.

- Becheikh, N.; Ziam, S.; Idrissi, O.; Castonguay, Y.; Landry, R. How to improve knowledge transfer strategies and practices in education? Answers from a systematic literature review. Res. High. Educ. J. 2009, 7, 1–21.

- Tomasello, M.; Kruger, A.C.; Ratner, H.H. Cultural Learning. Behav. Brain Sci. 1993, 16, 495–511.

- Sheehy, K.; Ferguson, R.; Clough, G. Augmented Education: Bringing Real and Virtual Learning Together; Palgrave Macmillan Editor: London, UK, 2014.

- Papagiannakis, G.; Singh, G.; Magnenat-Thalmann, N. A survey of mobile and wireless technologies for augmented reality systems. Comput. Anim. Virtual Worlds 2008, 19, 3–22.

- Kiyokawa, K.; Billinghurst, M.; Hayes, S.E.; Gupta, A.; Sannohe, Y.; Kato, H. Communication behaviors of co-located users in collaborative AR interfaces. In Proceedings of the International Symposium on Mixed and Augmented Reality, Darmstadt, Germany, 1 October 2002; IEEE: Piscataway, NJ, USA, 2002; pp. 139–148.

- Wang, P.; Bai, X.; Billinghurst, M.; Zhang, S.; Zhang, X.; Wang, S.; He, W.; Yan, Y.; Ji, H. AR/MR Remote Collaboration on Physical Tasks: A Review. Robot. Comput. Integr. Manuf. 2021, 72, 102071.

- Vlahakis, V.; Karigiannis, J.; Tsotros, M.; Gounaris, M.; Almeida, L.; Stricker, D.; Gleue, T.; Christou, I.T.; Carlucci, R.; Ioannidis, N. Archeoguide: First results of an augmented reality, mobile computing system in Cultural Heritage sites. In Proceedings of the 2001 Conference on Virtual Reality, Archeology, and Cultural Heritage (VAST ‘01), Glyfada, Greece, 28–30 November 2001; Arnold, D., Chalmers, A., Fellner, D., Eds.; Association for Computing Machinery (ACM): New York, NY, USA, 2001; pp. 131–140.

- Zoellner, M.; Keil, J.; Drevensek, T.; Wuest, H. Cultural Heritage layers: Integrating historic media in augmented reality. In Proceedings of the 15th International Conference on Virtual Systems and Multimedia, 2009 (VSMM’09), Vienna, Austria, 9–12 September 2009; Sablatnig, R., Kampe, M., Eds.; IEEE: Piscataway, NJ, USA, 2009; pp. 193–196.

- Seo, B.K.; Kim, K.; Park, J.; Park, J.-I. A tracking framework for augmented reality tours on Cultural Heritage sites. In Proceedings of the 9th ACM SIGGRAPH Conference on Virtual-Reality Continuum and Its Applications in Industry (VRCAI’10), Seoul, Korea, 12–13 December 2010; Magnenat-Thalmann, N., Chen, J., Eds.; Association for Computing Machinery (ACM): New York, NY, USA, 2010; pp. 169–174.

- Angelopoulou, A.; Economou, D.; Bouki, V.; Psarrou, A.; Jin, L.; Pritchard, C.; Kolyda, F. Mobile augmented reality for Cultural Heritage. In Mobile Wireless Middleware, Operating Systems, and Applications; Venkatasubramanian, N., Getov, V., Steglich, S., Eds.; Springer: Berlin/Heidelberg, Germany, 2011; Volume 93, pp. 15–22.

- Mohammed-Amin, R.K.; Levy, R.M.; Boyd, J.E. Mobile augmented reality for interpretation of archaeological sites. In Proceedings of the 2nd International ACM Workshop on Personalized Access to Cultural Heritage, Nara, Japan, 2 November 2012; Oomen, J., Aroyo, L., Marchand-Maillet, S., Douglass, J., Eds.; Association for Computing Machinery (ACM): New York, NY, USA, 2012; pp. 11–14.

- Kang, J. AR teleport: Digital reconstruction of historical and cultural-heritage sites for mobile phones via movement-based interactions. Wirel. Pers. Commun. 2013, 70, 1443–1462.

- Han, J.G.; Park, K.-W.; Ban, K.J.; Kim, E.K. Cultural Heritage sites visualization system based on outdoor augmented reality. AASRI Procedia 2013, 4, 64–71.

- Cirulis, A.; Brigmanis, K.B. 3D Outdoor Augmented Reality for Architecture and Urban Planning. Procedia Comput. Sci. 2013, 25, 71–79.

- Caggianese, G.; Neroni, P.; Gallo, L. Natural interaction and wearable augmented reality for the enjoyment of the Cultural Heritage in outdoor conditions. In Augmented and Virtual Reality; De Paolis, L., Mongelli, A., Eds.; Springer: Manhattan, NY, USA, 2014; Volume 8853, pp. 267–282.

- Panou, C.; Ragia, L.; Dimelli, D.; Mania, K. An Architecture for Mobile Outdoors Augmented Reality for Cultural Heritage. ISPRS Int. J. Geo-Inf. 2018, 7, 463.

- Kim, K.; Seo, B.-K.; Han, J.-H.; Park, J.-I. Augmented reality tour system for immersive experience of Cultural Heritage. In Proceedings of the 8th ACM SIGGRAPH Conference on Virtual-Reality Continuum and Its Applications in Industry (VRCAI’09), Yokohama, Japan, 14–15 December 2009; Spencer, S.N., Ed.; Association for Computing Machinery (ACM): New York, NY, USA, 2009; pp. 323–324.

- Rehman, U.; Cao, S. Augmented-Reality-Based Indoor Navigation: A Comparative Analysis of Handheld Devices Versus Google Glass. IEEE Trans. Hum. Mach. Syst. 2017, 47, 140–151.

- Choudary, O.; Charvillat, V.; Grigoras, R.; Gurdjos, P. MARCH: Mobile augmented reality for Cultural Heritage. In Proceedings of the 17th ACM International Conference on Multimedia (MM ‘09), Beijing, China, 19–24 October 2009; Gao, W., Rui, Y., Hanjalic, A., Eds.; Association for Computing Machinery (ACM): New York, NY, USA, 2009; pp. 1023–1024.

- Ridel, B.; Reuter, P.; Laviole, J.; Mellado, N.; Couture, N.; Granier, X. The revealing flashlight: Interactive spatial augmented reality for detail exploration of Cultural Heritage artifacts. J. Comput. Cult. Herit. 2014, 7, 1–18.

- Delail, B.A.; Weruaga, L.; Zemerly, M.J. CAViAR: Context Aware Visual Indoor Augmented Reality for a University Campus. In Proceedings of the 2012 IEEE/WIC/ACM International Conferences on Web Intelligence and Intelligent Agent Technology, Macau, China, 4–7 December 2012; Li, Y., Zhang, Y., Zhong, N., Eds.; IEEE: Piscataway, NJ, USA, 2012; pp. 286–290.

- Koch, C.; Neges, M.; König, M.; Abramovici, M. Natural markers for augmented reality-based indoor navigation and facility maintenance. Autom. Constr. 2014, 48, 18–30.

- Bostanci, E.; Kanwal, N.; Clark, A.F. Augmented reality applications for Cultural Heritage using Kinect. Hum. Cent. Comput. Inf. Sci. 2015, 5, 1–18.

- Moles, A. Teoria Dell’informazione e Percezione Estetica; Lerici: Milan, Italy, 1969.

- Cavallo, M.; Rhodes, G.A.; Forbes, A.G. Riverwalk: Incorporating Historical Photographs in Public Outdoor Augmented Reality Experiences. In Proceedings of the 2016 IEEE International Symposium on Mixed and Augmented Reality (ISMAR-Adjunct), Merida, Mexico, 19–23 September 2016; IEEE: Piscataway, NJ, USA, 2016; pp. 160–165.

- Giordano, A.; Friso, I.; Borin, P.; Monteleone, C.; Panarotto, F. Time and Space in the History of Cities. In Digital Research and Education in Architectural Heritage. UHDL 2017, DECH 2017. Communications in Computer and Information Science; Münster, S., Friedrichs, K., Niebling, F., Seidel-Grzesińska, A., Eds.; Springer: Cham, Switzerland; Manhattan, NY, USA, 2018; Volume 817, pp. 47–62.

- Russo, M.; Guidi, G. Reality-based and reconstructive models: Digital media for Cultural Heritage valorization. SCIRES-IT-SCIentific RESearch Inf. Technol. 2011, 2, 71–86.

- Bianchini, C.; Russo, M. Massive 3D acquisition of CH. In Proceedings of the Digital Heritage 2018: New Realities: Authenticity & Automation in the Digital Age, San Francisco, CA, USA, 26–30 October 2018; Addison, A.C., Thwaites, H., Eds.; IEEE: Piscataway, NJ, USA, 2018; pp. 482–490.

- Münster, S.; Apollonio, F.I.; Bell, P.; Kuroczynski, P.; Di Lenardo, I.; Rinaudo, F.; Tamborrino, R. Digital Cultural Heritage meets Digital Humanities. Int. Arch. Photogramm. Remote Sens. Spat. Inf. Sci. 2019, XLII-2/W15, 813–820.

- Beraldin, J.-A.; Blais, F.; Cournoyer, L.; Rioux, M.; El-Hakim, S.H.; Rodella, R.; Bernier, F.; Harrison, N. Digital 3D imaging system for rapid response on remote sites. In Proceedings of the Second International Conference on 3-D Digital Imaging and Modeling (3DIMM), Ottawa, ON, Canada, 8 October 1999; IEEE: Piscataway, NJ, USA, 1999; pp. 34–43.

- Gaiani, M.; Balzani, M.; Uccelli, F. Reshaping the Coliseum in Rome: An integrated data capture and modeling method at heritage sites. Comput. Graph. Forum 2000, 19, 369–378.

- Levoy, M.; Pulli, K.; Curless, B.; Rusinkiewicz, S.; Koller, D.; Pereira, L.; Ginzton, M.; Anderson, S.; Davis, J.; Ginsberg, J.; et al. The Digital Michelangelo Project: 3D scanning of large statues. In Proceedings of the 27th Annual Conference on Computer Graphics and Interactive Techniques (SIGGRAPH ‘00), New York, NY, USA, 1 July 2000; Brown, J.R., Akeley, K., Eds.; Association for Computing Machinery (ACM): New York, NY, USA, 2000; pp. 131–144.

- Bernardini, F.; Rushmeier, H. The 3D Model Acquisition Pipeline. Comput. Graph. Forum 2002, 21, 149–172.

- Guidi, G.; Russo, M.; Magrassi, G.; Bordegoni, M. Low cost characterization of TOF range sensors resolution. In Proceedings of the SPIE—Three-Dimensional Imaging, Interaction, and Measurement, San Francisco, CA, USA, 23–27 January 2011; Beraldin, J.-A., Cheok, G.S., McCarthy, M.B., Neuschaefer-Rube, U., Baskurt, A.M., McDowall, I.E., Dolinsky, M., Eds.; SPIE: Bellingham, WA, USA; Washington, DC, USA, 2011; Volume 7864, p. 78640D.

- Guidi, G.; Remondino, F.; Russo, M.; Menna, F.; Rizzi, A.; Ercoli, S. A multi-resolution methodology for the 3D modeling of large and complex archeological areas. Spec. Issue Int. J. Archit. Comput. 2009, 7, 39–55.

- Apollonio, F.; Gaiani, M.; Benedetti, B. 3D reality-based artefact models for the management of archaeological sites using 3D Gis: A framework starting from the case study of the Pompeii Archaeological area. J. Archaeol. Sci. 2012, 39, 1271–1287.

- Guidi, G.; Russo, M.; Angheleddu, D. 3D Survey and virtual reconstruction of archaeological sites. Digit. Appl. Archaeol. Cult. Herit. 2014, 1, 55–69.

- Stylianidis, E.; Remondino, F. (Eds.) 3D Recording, Documentation and Management of Cultural Heritage; Whittles Publishing: Dunbeath, Caithness, UK, 2016.

- Pollefeys, M.; van Gool, L.; Vergauwen, M.; Cornelis, K.; Verbiest, F.; Tops, J. Image-based 3D Recording for Archaeological Field Work. Comput. Graph. Appl. 2003, 23, 20–27.

- Remondino, F.; El-Hakim, S. Image-based 3D Modelling: A Review. Photogramm. Rec. 2006, 21, 269–291.

- Velios, A.; Harrison, J.P. Laser Scanning and digital close range photogrammetry for capturing 3D archeological objects: A comparison of quality and practicality. In Proceedings of the Conference in Computer Applications & Quantitative Methods in Archaeology, Beijing, China, 12–16 April 2001; Oxford Press: Oxford, UK, 2001; pp. 267–574.

- Remondino, F.; Spera, M.G.; Nocerino, E.; Menna, F.; Nex, F.; Gonizzi-Barsanti, S. Dense image matching: Comparisons and analyses. In Proceedings of the 2013 Digital Heritage International Congress (DigitalHeritage), Marseille, France, 28 October–1 November 2013; Addison, A.C., De Luca, L., Pescarin, S., Eds.; IEEE: Piscataway, NJ, USA, 2013; Volume 1, pp. 47–54.

- Manferdini, A.M.; Russo, M. Multi-scalar 3D digitization of Cultural Heritage using a low-cost integrated approach. In Proceedings of the 2013 Digital Heritage International Congress (DigitalHeritage), Marseille, France, 28 October–1 November 2013; Addison, A.C., De Luca, L., Pescarin, S., Eds.; IEEE: Piscataway, NJ, USA, 2013; Volume 1, pp. 153–160.

- Toschi, I.; Ramos, M.M.; Nocerino, E.; Menna, F.; Remondino, F.; Moe, K.; Poli, D.; Legat, K.; Fassi, F. Oblique photogrammetry supporting 3D urban reconstruction of complex scenarios. Int. Arch. Photogramm. Remote Sens. Spat. Inf. Sci. 2017, XLII-1/W1, 519–526.

- Fernández-Hernandez, J.; González-Aguilera, D.; Rodríguez-Gonzálvez, P.; Mancera-Taboada, J. Image-based modelling from unmanned aerial vehicle (UAV) photogrammetry: An effective, low-cost tool for archaeological applications. Archaeometry 2015, 57, 128–145.

- Camagni, F.; Colaceci, S.; Russo, M. Reverse modeling of Cultural Heritage: Pipeline and bottlenecks. Int. Arch. Photogramm. Remote Sens. Spat. Inf. Sci. 2019, XLII-2/W9, 197–204.

- Teruggi, S.; Grilli, E.; Russo, M.; Fassi, F.; Remondino, F. A Hierarchical Machine Learning Approach for Multi-Level and Multi-Resolution 3D Point Cloud Classification. Remote Sens. 2020, 12, 2598.

- Marschner, S.; Shirley, P. Fundamentals of Computer Graphics, 4th ed.; A K Peters/CRC Press: Florida, FL, USA, 2016.

- Smith, D.K.; Tardif, M. Building Information Modeling: A Strategic Implementation Guide for Architects, Engineers, Constructors, and Real Estate Asset Managers; Wiley: Hoboken, NJ, USA, 2009.

- Baglivo, A.; Delli Ponti, F.; De Luca, D.; Guidazzoli, A.; Liguori, M.C.; Fanini, B. X3D/X3DOM, blender game engine and OSG4WEB: Open source visualisation for Cultural Heritage environments. In Proceedings of the 2013 Digital Heritage International Congress (DigitalHeritage), Marseille, France, 28 October–1 November 2013; Addison, A.C., De Luca, L., Pescarin, S., Eds.; IEEE: Piscataway, NJ, USA, 2013; Volume 2, pp. 711–718.

- Carulli, M.; Bordegoni, M. Multisensory Augmented Reality Experiences for Cultural Heritage Exhibitions. In Design Tools and Methods in Industrial Engineering; ADM 2019. Lecture Notes in Mechanical Engineering; Rizzi, C., Andrisano, A., Leali, F., Gherardini, F., Pini, F., Vergnano, A., Eds.; Springer: Manhattan, NY, USA, 2020; pp. 140–151.

- Rolland, J.; Hua, H. Head-Mounted Display Systems. In Encyclopedia of Optical Engineering; Taylor & Francis: Oxfordshire, UK, 2005.

- Rolland, J.P.; Fuchs, H. Optical versus video see-through head-mounted displays in medical visualization. Presence 2000, 9, 287–309.

- Čejka, J.; Mangeruga, M.; Bruno, F.; Skarlatos, D.; Liarokapis, F. Evaluating the Potential of Augmented Reality Interfaces for Exploring Underwater Historical Sites. IEEE Access 2021, 9, 45017–45031.

- Tonn, C.; Petzold, F.; Bimber, O.; Grundhöfer, A.; Donath, D. Spatial Augmented Reality for Architecture—Designing and Planning with and within Existing Buildings. Int. J. Archit. Comput. 2008, 6, 41–58.

- Gervais, R.; Frey, G.; Hachet, M. Pointing in Spatial Augmented Reality from 2D Pointing Devices. In Human-Computer Interaction—INTERACT 2015. Lecture Notes in Computer Science; Abascal, J., Barbosa, S., Fetter, M., Gross, T., Palanque, P., Winckler, M., Eds.; Springer: Manhattan, NY, USA, 2015; Volume 9299, pp. 381–389.

- Bekele, M.K. Walkable Mixed Reality Map as interaction interface for Virtual Heritage. Digit. Appl. Archaeol. Cult. Herit. 2019, 15, 1–11. [Google Scholar] [CrossRef]

- Maniello, D. Improvements and implementations of the spatial augmented reality applied on scale models of cultural goods for visual and communicative purpose. In Augmented Reality, Virtual Reality, and Computer Graphics; AVR 2018. Lecture Notes in Computer Science; De Paolis, L., Bourdot, P., Eds.; Springer: Manhattan, NY, USA, 2018; Volume 10851, pp. 303–319. [Google Scholar] [CrossRef]

- Jin, Y.; Seo, J.; Lee, J.G.; Ahn, S.; Han, S. BIM-Based Spatial Augmented Reality (SAR) for Architectural Design Collaboration: A Proof of Concept. Appl. Sci. 2020, 10, 5915. [Google Scholar] [CrossRef]

- Hamasaki, T.; Itoh, Y.; Hiroi, Y.; Iwai, D.; Sugimoto, M. HySAR: Hybrid Material Rendering by an Optical See-Through Head-Mounted Display with Spatial Augmented Reality Projection. IEEE Trans. Vis. Comput. Graph. 2018, 24, 1457–1466. [Google Scholar] [CrossRef] [PubMed]

- Stanco, F.; Battiato, S.; Gallo, G. Digital Imaging for Cultural Heritage Preservation: Analysis, Restoration, and Reconstruction of Ancient Artworks; CRC Press: Boca Raton, FL, USA, 2017. [Google Scholar]

- Costantino, D.; Pepe, M.; Alfio, V.S. Point Cloud accuracy of Smartphone Images: Applications in Cultural Heritage Environment. Int. J. Adv. Trends Comput. Sci. Eng. 2020, 9, 6259–6267. [Google Scholar] [CrossRef]

- Yilmazturk, F.; Gurbak, A.E. Geometric Evaluation of Mobile-Phone Camera Images for 3D Information. Int. J. Opt. 2019, 2019, 8561380. [Google Scholar] [CrossRef]

- Kolivand, H.; El Rhalibi, A.; Abdulazeez, S.; Praiwattana, P. Cultural Heritage in Marker-Less Augmented Reality: A Survey. In Advanced Methods and New Materials for Cultural Heritage Preservation; Turcanu-Carutiu, D., Ion, R.-M., Eds.; Intechopen: London, UK, 2018. [Google Scholar] [CrossRef]

- Arth, C.; Grasset, R.; Gruber, L.; Langlotz, T.; Mulloni, A.; Wagner, D. The history of mobile augmented reality. arXiv 2015, arXiv:1505.01319. [Google Scholar]

- Chatzopoulos, D.; Bermejo, C.; Huang, Z.; Hui, P. Mobile Augmented Reality Survey: From Where We are to Where We Go. IEEE Access 2017, 5, 6917–6950. [Google Scholar] [CrossRef]

- Chiabrando, F.; Sammartano, G.; Spanò, A.; Spreafico, A. Hybrid 3D Models: When Geomatics Innovations Meet Extensive Built Heritage Complexes. ISPRS Int. J. Geo-Inf. 2019, 8, 124. [Google Scholar] [CrossRef]

- Jurado, D.; Jurado, J.M.; Ortega, L.; Feito, F.R. GEUINF: Real-Time Visualization of Indoor Facilities Using Mixed Reality. Sensors 2021, 21, 1123. [Google Scholar] [CrossRef] [PubMed]

- Siriwardhana, Y.; Porambage, P.; Liyanage, M.; Ylianttila, M. A Survey on Mobile Augmented Reality with 5G Mobile Edge Computing: Architectures, Applications and Technical Aspects. IEEE Commun. Surv. Tutor. 2021, 23, 1160–1192. [Google Scholar] [CrossRef]

- D’Agnano, F.; Balletti, C.; Guerra, F.; Vernier, P. Tooteko: A case study of augmented reality for an accessible Cultural Heritage. Digitization, 3D printing and sensors for an audio-tactile experience. Int. Arch. Photogramm. Remote Sens. Spat. Inf. Sci. 2015, 40, 207–213. [Google Scholar] [CrossRef]

- Bermejo, C.; Hui, P. A survey on haptic technologies for mobile augmented reality. arXiv 2017, arXiv:1709.00698. [Google Scholar]

- Pham, D.-M.; Stuerzlinger, W. Is the Pen Mightier than the Controller? A Comparison of Input Devices for Selection in Virtual and Augmented Reality. In Proceedings of the 25th ACM Symposium on Virtual Reality Software and Technology (VRST ’19), Parramatta, NSW, Australia, 12–15 November 2019; Trescak, T., Simoff, S., Richards, D., Eds.; Association for Computing Machinery (ACM): New York, NY, USA, 2019; Volume 35, pp. 1–11. [Google Scholar] [CrossRef]

- Kim, J.J.; Wang, Y.; Wang, H.; Lee, S.; Yokota, T.; Someya, T. Skin Electronics: Next-Generation Device Platform for Virtual and Augmented Reality. Adv. Funct. Mater. 2021, 2009602. [Google Scholar] [CrossRef]

- Pfeuffer, K.; Abdrabou, Y.; Esteves, A.; Rivu, R.; Abdelrahman, Y.; Meitner, S.; Saadi, A.; Alt, F. ARtention: A design space for gaze-adaptive user interfaces in augmented reality. Comput. Graph. 2021, 95, 1–12. [Google Scholar] [CrossRef]

- Langner, R.; Satkowski, M.; Büschel, W.; Dachselt, R. MARVIS: Combining Mobile Devices and Augmented Reality for Visual Data Analysis. In Proceedings of the 2021 CHI Conference on Human Factors in Computing Systems (CHI ’21), Yokohama, Japan, 8–13 May 2021; Association for Computing Machinery (ACM): New York, NY, USA, 2021; Volume 468, pp. 1–17. [Google Scholar] [CrossRef]

- Rauschnabel, P.A.; Ro, Y.K. Augmented reality smart glasses: An investigation of technology acceptance drivers. Int. J. Technol. Mark. 2016, 11, 1–26. [Google Scholar] [CrossRef]

- Dalim, C.S.C.; Kolivand, H.; Kadhim, H.; Sunar, M.S.; Billinghurst, M. Factors influencing the acceptance of augmented reality in education: A review of the literature. J. Comput. Sci. 2017, 13, 581–589. [Google Scholar] [CrossRef]

- Rigby, J.; Smith, S.P. Augmented Reality Challenges for Cultural Heritage; Working Paper Series; University of Newcastle: Callaghan, NSW, Australia, 2013; Volume 5. [Google Scholar]

- Brito, P.Q.; Stoyanova, J. Marker versus Markerless Augmented Reality. Which Has More Impact on Users? Int. J. Hum. Comput. Interact. 2018, 34, 819–833. [Google Scholar] [CrossRef]

- Singh, G.; Mantri, A. Ubiquitous hybrid tracking techniques for augmented reality applications. In Proceedings of the 2nd International Conference on Recent Advances in Engineering & Computational Sciences (RAECS), Chandigarh, India, 21–22 December 2015; IEEE: Piscataway, NJ, USA, 2015; pp. 1–5. [Google Scholar] [CrossRef]

- Tzima, S.; Styliaras, G.; Bassounas, A. Augmented Reality in Outdoor Settings: Evaluation of a Hybrid Image Recognition Technique. J. Comput. Cult. Herit. 2021, 14, 1–17. [Google Scholar] [CrossRef]

- Reitmayr, G.; Drummond, T.W. Going out: Robust model-based tracking for outdoor augmented reality. In Proceedings of the IEEE/ACM International Symposium on Mixed and Augmented Reality (ISMAR), Santa Barbara, CA, USA, 22–25 October 2006; IEEE: Piscataway, NJ, USA, 2006; pp. 109–118. [Google Scholar] [CrossRef]

- Imottesjo, H.; Thuvander, L.; Billger, M.; Wallberg, P.; Bodell, G.; Kain, J.-H.; Nielsen, S.A. Iterative Prototyping of Urban CoBuilder: Tracking Methods and User Interface of an Outdoor Mobile Augmented Reality Tool for Co-Designing. Multimodal Technol. Interact. 2020, 4, 26. [Google Scholar] [CrossRef]

- Hockett, P.; Ingleby, T. Augmented Reality with Hololens: Experiential Architectures Embedded in the Real World. arXiv 2016, arXiv:1610.04281. [Google Scholar]

- Teng, Z.; Hanwu, H.; Yueming, W.; He’en, C.; Yongbin, C. Mixed Reality Application: A Framework of Markerless Assembly Guidance System with Hololens Glass. In Proceedings of the International Conference on Virtual Reality and Visualization (ICVRV), Zhengzhou, China, 21–22 October 2017; IEEE: Piscataway, NJ, USA, 2017; pp. 433–434. [Google Scholar] [CrossRef]

- Kan, T.-W.; Teng, C.-H.; Chen, M.Y. QR Code Based Augmented Reality Applications. In Handbook of Augmented Reality; Furht, B., Ed.; Springer: New York, NY, USA, 2011; pp. 339–354. [Google Scholar] [CrossRef]

- Kan, T.-W.; Teng, C.-H.; Chou, W.-C. Applying QR code in augmented reality applications. In Proceedings of the 8th International Conference on Virtual Reality Continuum and its Applications in Industry (VRCAI ’09), Yokohama, Japan, 14–15 December 2009; Spencer, N.S., Ed.; Association for Computing Machinery (ACM): New York, NY, USA, 2009; pp. 253–257. [Google Scholar] [CrossRef]

- Calabrese, C.; Baresi, L. Outdoor Augmented Reality for Urban Design and Simulation. In Urban Design and Representation; Piga, B., Salerno, R., Eds.; Springer: Manhattan, NY, USA, 2017; pp. 181–190. [Google Scholar] [CrossRef]

- Cirafici, A.; Maniello, D.; Amoretti, V. The magnificent adventure of a ‘Fragment’. Block NXLVI Parthenon North Frieze in Augmented Reality. SCiRES 2015, 5, 129–142. [Google Scholar] [CrossRef]

- Park, H.; Park, J.-I. Invisible Marker–Based Augmented Reality. Int. J. Hum. Comput. Interact. 2010, 26, 829–848. [Google Scholar] [CrossRef]

- Geiger, P.; Schickler, M.; Pryss, R.; Schobel, J.; Reichert, M. Location-based mobile augmented reality applications: Challenges, examples, lessons learned. In Proceedings of the 10th Int’l Conference on Web Information Systems and Technologies (WEBIST’14), Special Session on Business Apps, Barcelona, Spain, 3–5 April 2014; pp. 383–394. [Google Scholar]

- Mutis, I.; Ambekar, A. Challenges and enablers of augmented reality technology for in situ walkthrough applications. J. Inf. Technol. Constr. 2020, 25, 55–71. [Google Scholar] [CrossRef]

- Uchiyama, H.; Marchand, E. Object detection and pose tracking for augmented reality: Recent approaches. In Proceedings of the 18th Korea-Japan Joint Workshop on Frontiers of Computer Vision (FCV’12), Kawasaki, Japan, 20–22 February 2012. [Google Scholar]

- Sato, Y.; Fukuda, T.; Yabuki, N.; Michikawa, T.; Motamedi, A. A Marker-less Augmented Reality System using Image Processing Techniques for Architecture and Urban Environment. In Living Systems and Micro-Utopias: Towards Continuous Designing, Proceedings of the 21st International Conference on Computer-Aided Architectural Design Research in Asia, Melbourne, Australia, 30 March–2 April 2016; Chien, S., Choo, S., Schnabel, M.A., Nakapan, W., Kim, M.J., Roudavski, S., Eds.; The Association for Computer-Aided Architectural Design Research in Asia (CAADRIA): Hong Kong, China, 2016; pp. 713–722. [Google Scholar]

- Maidi, M.; Lehiani, Y.; Preda, M. Open Augmented Reality System for Mobile Markerless Tracking. In Proceedings of the IEEE International Conference on Image Processing (ICIP), Abu Dhabi, United Arab Emirates, 25–28 October 2020; IEEE: Piscataway, NJ, USA, 2020; pp. 2591–2595. [Google Scholar] [CrossRef]

- Liarokapis, F.; Greatbatch, I.; Mountain, D.; Gunesh, A.; Brujic-Okretic, V.; Raper, J. Mobile augmented reality techniques for geovisualisation. In Proceedings of the 9th International Conference on Information Visualisation, London, UK, 6–8 July 2005; Banissi, E., Ursyn, A., Eds.; IEEE: Piscataway, NJ, USA, 2005; pp. 745–751. [Google Scholar] [CrossRef]

- Paolocci, G.; Baldi, T.L.; Barcelli, D.; Prattichizzo, D. Combining Wristband Display and Wearable Haptics for Augmented Reality. In Proceedings of the IEEE Conference on Virtual Reality and 3D User Interfaces Abstracts and Workshops (VRW), Atlanta, GA, USA, 22–26 March 2020; O’Conner, L., Ed.; IEEE: Piscataway, NJ, USA, 2020; pp. 632–633. [Google Scholar] [CrossRef]

- Zhao, Q. The Application of Augmented Reality Visual Communication in Network Teaching. Int. J. Emerg. Technol. Learn. 2018, 13, 1–14. [Google Scholar] [CrossRef]

- Shaer, O.; Hornecker, E. Tangible user interfaces: Past, present, and future directions. Found. Trends Hum. Comput. Interact. 2010, 3, 4–137. [Google Scholar] [CrossRef]

- Rau, L.; Horst, R.; Liu, Y.; Dörner, R.; Spierling, U. A Tangible Object for General Purposes in Mobile Augmented Reality Applications. In INFORMATIK 2020. (S. 947–954); Reussner, R.H., Koziolek, A., Heinrich, R., Eds.; Gesellschaft für Informatik: Bonn, Germany, 2021; pp. 1–9. [Google Scholar] [CrossRef]

- Kobeisse, S. Touching the Past: Developing and Evaluating a Heritage kit for Visualizing and Analyzing Historical Artefacts Using Tangible Augmented Reality. In Proceedings of the Fifteenth International Conference on Tangible, Embedded, and Embodied Interaction (TEI ’21), Salzburg, Austria, 14–17 February 2021; Wimmer, R., Ed.; Association for Computing Machinery (ACM): New York, NY, USA, 2021; Volume 73, pp. 1–3. [Google Scholar] [CrossRef]

- Yue, S. Human motion tracking and positioning for augmented reality. J. Real Time Image Proc. 2021, 18, 357–368. [Google Scholar] [CrossRef]

- Jayamanne, R.M.; Shaminda, D. Technological Review on Integrating Image Processing, Augmented Reality and Speech Recognition for Enhancing Visual Representations. In Proceedings of the 2020 International Conference on Image Processing and Robotics (ICIP), Negombo, Sri Lanka, 6–8 March 2020; IEEE: Piscataway, NJ, USA, 2020; pp. 1–6. [Google Scholar] [CrossRef]

- Kharroubi, A.; Billen, R.; Poux, F. Marker-less mobile augmented reality application for massive 3d point clouds and semantics. Int. Arch. Photogramm. Remote Sens. Spat. Inf. Sci. 2020, XLIII-B2–2020, 255–261. [Google Scholar] [CrossRef]

- Sereno, M.; Wang, X.; Besancon, L.; Mcguffin, M.J.; Isenberg, T. Collaborative Work in Augmented Reality: A Survey. IEEE Trans. Vis. Comput. Graph. 2020, 1–20. [Google Scholar] [CrossRef]

- Platinsky, L.; Szabados, M.; Hlasek, F.; Hemsley, R.; Del Pero, L.; Pancik, A.; Baum, B.; Grimmett, H.; Ondruska, P. Collaborative Augmented Reality on Smartphones via Life-long City-scale Maps. In Proceedings of the IEEE International Symposium on Mixed and Augmented Reality (ISMAR), Porto de Galinhas, Brazil, 9–13 November 2020; Teichrieb, V., Duh, H., Lima, J.P., Simões, F., Eds.; IEEE: Piscataway, NJ, USA, 2020; pp. 533–541. [Google Scholar] [CrossRef]

- García-Pereira, I.; Portalés, C.; Gimeno, J.; Casas, S. A collaborative augmented reality annotation tool for the inspection of prefabricated buildings. Multimed. Tools Appl. 2020, 79, 6483–6501. [Google Scholar] [CrossRef]

- Grubert, J.; Grasset, R.; Reitmayr, G. Exploring the design of hybrid interfaces for augmented posters in public spaces. In Proceedings of the 7th Nordic Conference on Human-Computer Interaction: Making Sense through Design (NordiCHI ’12), Copenhagen, Denmark, 14–17 October 2012; Malmborg, L., Pederson, T., Eds.; Association for Computing Machinery (ACM): New York, NY, USA, 2012; pp. 238–246. [Google Scholar] [CrossRef]

- Wang, X.; Besançon, L.; Rousseau, D.; Sereno, M.; Ammi, M.; Isenberg, T. Towards an Understanding of Augmented Reality Extensions for Existing 3D Data Analysis Tools. In Proceedings of the 2020 CHI Conference on Human Factors in Computing Systems (CHI ’20), Honolulu, HI, USA, 25–30 April 2020; Bernhaupt, R., Mueller, F.F., Verweij, D., Andres, J., Eds.; Association for Computing Machinery (ACM): New York, NY, USA, 2020; pp. 1–13. [Google Scholar] [CrossRef]

- Pulcrano, M.; Scandurra, S.; Minin, G.; di Luggo, A. 3D cameras acquisitions for the documentation of Cultural Heritage. Int. Arch. Photogramm. Remote Sens. Spat. Inf. Sci. 2019, XLII-2/W9, 639–646. [Google Scholar] [CrossRef]

- Dunston, P.S.; Wang, X. Mixed Reality-Based Visualization Interfaces for Architecture, Engineering, and Construction Industry. J. Constr. Eng. Manag. 2005, 131, 1301–1309. [Google Scholar] [CrossRef]

- Krakhofer, S.; Kaftan, M. Augmented reality design decision support engine for the early building design stage. In Emerging Experience in Past, Present and Future of Digital Architecture, Proceedings of the 20th International Conference of the Association for Computer-Aided Architectural Design Research in Asia CAADRIA 2015, Daegu, Korea, 20–22 May 2015; Ikeda, Y., Herr, C.M., Holzer, D., Kaijima, S., Kim, M.J., Schnabel, M.A., Eds.; The Association for Computer-Aided Architectural Design Research in Asia (CAADRIA): Hong Kong, China, 2015; pp. 231–240. [Google Scholar]

- Albers, B.; de Lange, N.; Xu, S. Augmented citizen science for environmental monitoring and education. Int. Arch. Photogramm. Remote Sens. Spat. Inf. Sci. 2017, XLII-2/W7, 1–4. [Google Scholar] [CrossRef]

- Ioannidis, C.; Verykokou, S.; Soile, S.; Boutsi, A.-M. A multi-purpose Cultural Heritage data platform for 4D visualization and interactive information services. Int. Arch. Photogramm. Remote Sens. Spat. Inf. Sci. 2020, XLIII-B4–2020, 583–590. [Google Scholar] [CrossRef]

- Pohlmann, M.; Pinto da Silva, F. Use of Virtual Reality and Augmented Reality in Learning Objects: A case study for technical drawing teaching. Int. J. Educ. Res. 2019, 7, 21–32. [Google Scholar]

- Goyal, S.; Khan, N.; Chattopadhyay, C.; Bhatnagar, G. LayART: Generating indoor layout using ARCore Transformations. In Proceedings of the IEEE Sixth International Conference on Multimedia Big Data (BigMM), New Delhi, India, 24–26 September 2020; Kankanhalli, M., Ed.; IEEE: Piscataway, NJ, USA, 2020; pp. 272–276. [Google Scholar] [CrossRef]

- Palma, V.; Spallone, R.; Vitali, M. Digital Interactive Baroque Atria in Turin: A Project Aimed to Sharing and Enhancing Cultural Heritage. In Proceedings of the 1st International and Interdisciplinary Conference on Digital Environments for Education, Arts and Heritage. EARTH 2018, Advances in Intelligent Systems and Computing, Brixen, Italy, 5–6 July 2018; Luigini, A., Ed.; Springer: Manhattan, NY, USA, 2018; Volume 919, pp. 314–325. [Google Scholar] [CrossRef]

- Skov, M.B.; Kjeldskov, J.; Paay, J.; Husted, N.; Nørskov, J.; Pedersen, K. Designing on-site: Facilitating participatory contextual architecture with mobile phones. Pervasive Mob. Comput. 2013, 9, 216–227. [Google Scholar] [CrossRef]

- Sánchez Riera, A.; Redondo, E.; Fonseca, D. Geo-located teaching using handheld augmented reality: Good practices to improve the motivation and qualifications of architecture students. Univ. Access Inf. Soc. 2015, 14, 363–374. [Google Scholar] [CrossRef]

- Meily, S.O.; Putra, I.K.G.D.; Buana, P.W. Augmented Reality Using Real-Object Tracking Development. J. Sist. Inf. 2021, 17, 20–29. [Google Scholar] [CrossRef]

- Empler, T. Traditional Museums, virtual Museums. Dissemination role of ICTs. DisegnareCON 2018, 11, 13.1–13.19. [Google Scholar]

- Peng, F.; Zhai, J. A mobile augmented reality system for exhibition hall based on Vuforia. In Proceedings of the 2nd International Conference on Image, Vision and Computing (ICIVC), Chengdu, China, 2–4 June 2017; IEEE: Piscataway, NJ, USA, 2017; pp. 1049–1052. [Google Scholar] [CrossRef]

- Ioannidi, A.; Gavalas, D.; Kasapakis, V. Flaneur: Augmented exploration of the architectural urbanscape. In Proceedings of the IEEE Symposium on Computers and Communications (ISCC), Heraklion, Greece, 3–6 July 2017; Ammar, R., Askoxylakis, I., Eds.; IEEE: Piscataway, NJ, USA, 2017; pp. 529–533. [Google Scholar] [CrossRef]

- Canciani, M.; Conigliaro, E.; Del Grasso, M.; Papalini, P.; Saccone, M. 3D survey and augmented reality for Cultural Heritage. The case study of aurelian wall at castra praetoria in Rome. Int. Arch. Photogramm. Remote Sens. Spat. Inf. Sci. 2016, XLI-B5, 931–937. [Google Scholar] [CrossRef]

- Garbett, J.; Hartley, T.; Heesom, D. A multi-user collaborative BIM-AR system to support design and construction. Autom. Constr. 2021, 122, 103487. [Google Scholar] [CrossRef]

- Sridhar, S.; Sanagavarapu, S. Instant Tracking-Based Approach for Interior Décor Planning with Markerless AR. In Proceedings of the Zooming Innovation in Consumer Technologies Conference (ZINC), Novi Sad, Serbia, 26–27 May 2020; IEEE: Piscataway, NJ, USA, 2020; pp. 103–108. [Google Scholar] [CrossRef]

- Chao, K.-H.; Lan, C.-H.; Kinshuk; Chao, S.; Chang, K.-E.; Sung, Y.-T. Implementation of a mobile peer assessment system with augmented reality in a fundamental design course. Knowl. Manag. E Learn. Int. J. 2014, 6, 123–139. [Google Scholar] [CrossRef]

- Junyent, E.; Aguilo, C.; Lores, J. Enhanced Cultural Heritage Environments by Augmented Reality Systems. In Proceedings of the Seventh International Conference on Virtual Systems and MultiMedia Enhanced Realities: Augmented and Unplugged, Berkeley, CA, USA, 25–27 October 2001; IEEE: Washington, DC, USA, 2001; Volume 1, p. 357. [Google Scholar] [CrossRef]

- Mourkoussis, N.; Liarokapis, F.; Darcy, J.; Pettersson, M.; Petridis, P.; Lister, P.F.; White, M.; Pierce, B.C.; Turner, D.N. Virtual and Augmented Reality Applied to Educational and Cultural Heritage Domains. In Proceedings of the BIS 2002, Poznan, Poland, 24–25 April 2002; Abramowicz, W., Ed.; Poznań University of Economics and Business Press: Poznań, Poland, 2002; pp. 367–372. [Google Scholar]

- Adhani, N.I.; Rohaya Awang, R.D. A survey of mobile augmented reality applications. In Proceedings of the 1st International Conference on Future Trends in Computing and Communication Technologies, Malacca, Malaysia, 13–15 December 2012; pp. 89–96. [Google Scholar]

- Calisi, D.; Tommasetti, A.; Topputo, R. Architectural historical heritage: A tridimensional multilayered cataloguing method. Int. Arch. Photogramm. Remote Sens. Spat. Inf. Sci. 2011, XXXVIII-5/W16, 599–606. [Google Scholar] [CrossRef]

- Rattanarungrot, S.; White, M.; Patoli, Z.; Pascu, T. The application of augmented reality for reanimating Cultural Heritage. In Virtual, Augmented and Mixed Reality. Applications of Virtual and Augmented Reality. VAMR 2014. Lecture Notes in Computer Science; Shumaker, R., Lackey, S., Eds.; Springer: Manhattan, NY, USA, 2014; Volume 8526, pp. 85–95. [Google Scholar] [CrossRef]

- Albourae, A.T.; Armenakis, C.; Kyan, M. Architectural heritage visualization using interactive technologies. Int. Arch. Photogramm. Remote Sens. Spat. Inf. Sci. 2017, XLII-2/W5, 7–13. [Google Scholar] [CrossRef]

- Osello, A.; Lucibello, G.; Morgagni, F. HBIM and Virtual Tools: A New Chance to Preserve Architectural Heritage. Buildings 2018, 8, 12. [Google Scholar] [CrossRef]

- Banfi, F.; Brumana, R.; Stanga, C. Extended reality and informative models for architectural heritage: From scan-to-bim process to virtual and augmented reality. Virtual Archaeol. Rev. 2019, 10, 14–30. [Google Scholar] [CrossRef]

- Brusaporci, S.; Graziosi, F.; Franchi, F.; Maiezza, P.; Tata, A. Mixed Reality Experiences for the Historical Storytelling of Cultural Heritage. In From Building Information Modelling to Mixed Reality. Springer Tracts in Civil Engineering; Bolognesi, C., Villa, D., Eds.; Springer: Manhattan, NY, USA, 2021; pp. 33–46. [Google Scholar] [CrossRef]

- Moorhouse, N.; tom Dieck, M.; Jung, T. Augmented Reality to enhance the Learning Experience in Cultural Heritage Tourism: An Experiential Learning Cycle Perspective. Ereview Tour. Res. 2017, 8, 1–5. [Google Scholar]

- Meschini, A.; Rossi, D.; Feriozzi, R. Disclosing Documentary Archives: AR interfaces to recall missing urban scenery. In Proceedings of the 2013 Digital Heritage International Congress (DigitalHeritage), Marseille, France, 28 October–1 November 2013; Addison, A.C., De Luca, L., Pescarin, S., Eds.; IEEE: Piscataway, NJ, USA, 2013; Volume 2, pp. 371–374. [Google Scholar] [CrossRef]

- Durand, E.; Merienne, F.; Pere, C.; Callet, P. Ray-on, an On-Site Photometric Augmented Reality Device. J. Comput. Cult. Herit. 2014, 7, 1–13. [Google Scholar] [CrossRef]

- Augello, A.; Infantino, I.; Pilato, G.; Vitale, G. Site Experience Enhancement and Perspective in Cultural Heritage Fruition—A Survey on New Technologies and Methodologies Based on a “Four-Pillars” Approach. Future Internet 2021, 13, 92. [Google Scholar] [CrossRef]

- Han, S.; Yoon, J.-H.; Kwon, J. Impact of Experiential Value of Augmented Reality: The Context of Heritage Tourism. Sustainability 2021, 13, 4147. [Google Scholar] [CrossRef]

- Huzaifah, S.; Kassim, S.J. Using Augmented Reality in the Dissemination of Cultural Heritage: The Issue of Evaluating Presence. In Proceedings of the Conference ICIDM, Johor Bahru, Malaysia, 30 July–1 August 2021. [Google Scholar]

- Han, D.I.D.; Weber, J.; Bastiaansen, M.; Mitas, O.; Lub, X. Virtual and Augmented Reality Technologies to Enhance the Visitor Experience in Cultural Tourism. In Augmented Reality and Virtual Reality. Progress in IS; Tom Dieck, M.C., Jung, T., Eds.; Springer: Manhattan, NY, USA, 2019; pp. 113–128. [Google Scholar] [CrossRef]

- Han, D.I.D.; tom Dieck, M.C.; Jung, T. Augmented Reality Smart Glasses (ARSG) visitor adoption in cultural tourism. Leis. Stud. 2019, 38, 618–633. [Google Scholar] [CrossRef]

- Hincapié, M.; Díaz, C.; Zapata-Cárdenas, M.I.; Toro Rios, H.J.; Valencia, D.; Güemes-Castorena, D. Augmented reality mobile apps for cultural heritage reactivation. Comput. Electr. Eng. 2021, 93, 107281. [Google Scholar] [CrossRef]

- Merchán, M.J.; Merchán, P.; Pérez, E. Good Practices in the Use of Augmented Reality for the Dissemination of Architectural Heritage of Rural Areas. Appl. Sci. 2021, 11, 2055. [Google Scholar] [CrossRef]

- Anagnostou, K.; Vlamos, P. Square AR: Using Augmented Reality for Urban Planning. In Proceedings of the Third International Conference on Games and Virtual Worlds for Serious Applications, Athens, Greece, 4–6 May 2011; IEEE: Piscataway, NJ, USA, 2011; pp. 128–131. [Google Scholar] [CrossRef]

- Azmin, A.K.; Kassim, M.H.; Abdullah, F.; Sanusi, A.N.Z. Architectural Heritage Restoration of Rumah Datuk Setia via Mobile Augmented Reality Restoration. J. Malays. Inst. Plan. 2017, 15, 139–150. [Google Scholar] [CrossRef]

- De Fino, M.; Ceppi, C.; Fatiguso, F. Virtual tours and informational models for improving territorial attractiveness and the smart management of architectural heritage: The 3d-imp-act project. Int. Arch. Photogramm. Remote Sens. Spat. Inf. Sci. 2020, XLIV-M-1–2020, 473–480. [Google Scholar] [CrossRef]

- Li, S.-S.; Tai, N.-C. Development of an Augmented Reality Application for Protecting the Perspectives and Views of Architectural Heritages. In Proceedings of the IEEE International Conference on Consumer Electronics—Taiwan (ICCE-Taiwan), Taoyuan, Taiwan, 28–30 September 2020; IEEE: Piscataway, NJ, USA, 2020; pp. 1–2. [Google Scholar] [CrossRef]

- Tscheu, F.; Buhalis, D. Augmented Reality at Cultural Heritage sites. In Information and Communication Technologies in Tourism; Inversini, A., Schegg, R., Eds.; Springer: Manhattan, NY, USA, 2016; pp. 607–619. [Google Scholar] [CrossRef]

- Feng, Y.; Mueller, B. The State of Augmented Reality Advertising around the Globe: A Multi-Cultural Content Analysis. J. Promot. Manag. 2018, 25, 453–475. [Google Scholar] [CrossRef]

- Rahimi, R.; Hassan, A. Augmented Reality Apps for Tourism Destination Promotion. In Destination Management and Marketing: Breakthroughs in Research and Practice; IGI Global: Hershey, PA, USA, 2020. [Google Scholar] [CrossRef]

- Wang, X. Augmented reality in architecture and design: Potentials and challenges for application. Int. J. Archit. Comput. 2009, 7, 309–326. [Google Scholar] [CrossRef]

- Nassereddine, H.; Veeramani, A.; Veeramani, D. Exploring the Current and Future States of Augmented Reality in the Construction Industry. In Collaboration and Integration in Construction, Engineering, Management and Technology. Advances in Science, Technology & Innovation; IEREK Interdisciplinary Series for Sustainable Development; Ahmed, S.M., Hampton, P., Azhar, S.D., Saul, A., Eds.; Springer: Manhattan, NY, USA, 2021; pp. 185–189. [Google Scholar] [CrossRef]

- Khalek, I.A.; Chalhoub, J.M.; Ayer, S.K. Augmented Reality for Identifying Maintainability Concerns during Design. Adv. Civ. Eng. 2019, 12, 8547928. [Google Scholar] [CrossRef]

- Calderon-Hernandez, C.; Xavier, B. Lean, BIM and Augmented Reality Applied in the Design and Construction Phase: A Literature Review. Int. J. Innov. Manag. Technol. 2018, 9, 60–63. [Google Scholar] [CrossRef]

- Meža, S.; Turk, Ž.; Dolenc, M. Measuring the potential of augmented reality in civil engineering. Adv. Eng. Softw. 2015, 90, 1–10. [Google Scholar] [CrossRef]

- Wang, X.; Love, P.E.D.; Kim, M.J.; Park, C.-S.; Sing, C.-P.; Hou, L. A conceptual framework for integrating building information modelling with augmented reality. Autom. Constr. 2013, 34, 37–44. [Google Scholar] [CrossRef]

- Noghabaei, M.; Heydarian, A.; Balali, V.; Han, K. Trend Analysis on Adoption of Virtual and Augmented Reality in the Architecture, Engineering, and Construction Industry. Data 2020, 5, 26. [Google Scholar] [CrossRef]

- Xiangyu, W.; Rui, C. An experimental study on collaborative effectiveness of augmented reality potentials in urban design. CoDesign 2009, 5, 229–244. [Google Scholar] [CrossRef]

- Hofmann, M.; Münster, S.; Noennig, J.R. A Theoretical Framework for the Evaluation of Massive Digital Participation Systems in Urban Planning. J. Geovis. Spat. Anal. 2020, 4, 1–12.

- Rodrigues, M. Civil Construction Planning Using Augmented Reality. In Sustainability and Automation in Smart Constructions. Advances in Science, Technology & Innovation (IEREK Interdisciplinary Series for Sustainable Development); Rodrigues, H., Gaspar, F., Fernandes, P., Mateus, A., Eds.; Springer: Manhattan, NY, USA, 2021; pp. 211–217.

- Shouman, B.; Othman, A.A.E.; Marzouk, M. Enhancing users involvement in architectural design using mobile augmented reality. Eng. Constr. Archit. Manag. 2021. Pending Publication.

- Gül, L.F. Studying gesture-based interaction on a mobile augmented reality application for co-design activity. J. Multimodal User Interfaces 2018, 12, 109–124.

- Chalhoub, J.; Ayer, S.K.; McCord, K.H. Augmented Reality to Enable Users to Identify Deviations for Model Reconciliation. Buildings 2021, 11, 77.

- Zaher, M.; Greenwood, D.; Marzouk, M. Mobile augmented reality applications for construction projects. Constr. Innov. 2018, 18, 152–166.

- Zollmann, S.; Hoppe, C.; Kluckner, S.; Poglitsch, C.; Bischof, H.; Reitmayr, G. Augmented Reality for Construction Site Monitoring and Documentation. Proc. IEEE 2014, 102, 137–154.

- Liu, D.; Xia, X.; Chen, J.; Li, S. Integrating Building Information Model and Augmented Reality for Drone-Based Building Inspection. J. Comput. Civ. Eng. 2021, 35.

- Golparvar-Fard, M.; Peña-Mora, F.; Savarese, S. Application of D4AR—A 4-Dimensional augmented reality model for automating construction progress monitoring data collection, processing and communication. J. Inf. Technol. Constr. 2009, 14, 129–153.

- Sabzevar, M.F.; Gheisari, M.; Lo, L.J. Improving Access to Design Information of Paper-Based Floor Plans Using Augmented Reality. Int. J. Constr. Educ. Res. 2021, 17, 178–198.

- Avila-Garzon, C.; Bacca-Acosta, J.; Kinshuk, D.J.; Betancourt, J. Augmented Reality in Education: An Overview of Twenty-Five Years of Research. Contemp. Educ. Technol. 2021, 13, 1–29.

- Ummihusna, A.; Zairul, M. Investigating immersive learning technology intervention in architecture education: A systematic literature review. J. Appl. Res. High. Educ. 2021.

- Chen, P.; Liu, X.; Cheng, W.; Huang, R. A Review of using Augmented Reality in Education from 2011 to 2016. In Innovations in Smart Learning, Lecture Notes in Educational Technology; Popescu, E., Khribi, M.K., Huang, R., Jemni, M., Chen, N.-S., Sampson, D.G., Eds.; Springer: Singapore, 2017; pp. 13–18.

- Arduini, G. La realtà aumentata e nuove prospettive educative. Educ. Sci. Soc. 2012, 3, 209–216.

- Dunleavy, M.; Dede, C. Augmented reality teaching and learning. In Handbook of Research on Educational Communications and Technology; Spector, J., Merrill, M., Elen, J., Bishop, M., Eds.; Springer: New York, NY, USA, 2014; pp. 735–745.

- Radu, I. Augmented reality in education: A meta-review and crossmedia analysis. Pers. Ubiquitous Comput. 2014, 18, 1533–1543.

- Yuen, S.C.-Y.; Yaoyuneyong, G.; Johnson, E. Augmented Reality: An Overview and Five Directions for AR in Education. J. Educ. Technol. Dev. Exch. 2011, 4, 119–140.

- Luna, U.; Rivero, P.; Vicent, N. Augmented Reality in Heritage Apps: Current Trends in Europe. Appl. Sci. 2019, 9, 2756.

- Ibáñez-Etxeberria, A.; Fontal, O.; Rivero, P. Educación Patrimonial y TIC en España: Marco normativo, variables estructurantes y programas referentes. Arbor 2018, 194, a448.

- González Vargas, J.C.; Fabregat, R.; Carrillo-Ramos, A.; Jové, T. Survey: Using Augmented Reality to Improve Learning Motivation in Cultural Heritage Studies. Appl. Sci. 2020, 10, 897.

- Barlozzini, P.; Carnevali, L.; Lanfranchi, F.; Menconero, S.; Russo, M. Analisi di opere non realizzate: Rinascita virtuale di una architettura di Pier Luigi Nervi. In Proceedings of the Drawing as (In)tangible Representation XV Congresso UID, Milano, Italy, 13–15 September 2018; Salerno, R., Ed.; Gangemi Editore: Rome, Italy, 2018; pp. 397–404.

- Rankohi, S.; Waugh, L. Review and analysis of augmented reality literature for construction industry. Vis. Eng. 2013, 1, 1–18.

- Wang, X.; Kim, M.J.; Love, P.E.D.; Kang, S.-C. Augmented Reality in built environment: Classification and implications for future research. Autom. Constr. 2013, 32, 1–13.

- Chen, R.; Wang, X. Tangible Augmented Reality for Design Learning: An Implementation Framework. In Proceedings of the 13th International Conference on Computer-Aided Architectural Design Research in Asia, Chiang Mai, Thailand, 9–12 April 2008; The Association for Computer-Aided Architectural Design Research in Asia (CAADRIA): Hong Kong, China, 2008; pp. 350–356.

- Morton, D. Augmented Reality in Architectural Studio Learning: How Augmented Reality can be used as an Exploratory Tool in the Design Learning Journey. In Proceedings of the 32nd eCAADe Conference, Newcastle upon Tyne, UK, 10–12 September 2014; Thompson, E.M., Ed.; The Association for Computer-Aided Architectural Design Research in Asia (CAADRIA): Hong Kong, China, 2014; pp. 343–356.

- Ben-Joseph, E.; Ishii, H.; Underkoffler, J.; Piper, B.; Yeung, L. Urban Simulation and The Luminous Planning Table Bridging The Gap Between The Digital And The Tangible. J. Plan. Educ. Res. 2001, 21, 196–203.

- Markusiewicz, J.; Slik, J. From Shaping to Information Modeling in Architectural Education: Implementation of Augmented Reality Technology in Computer-Aided Modeling. In Proceedings of the 33rd eCAADe Conference, Vienna, Austria, 16–18 September 2015; Martens, B., Wurzer, G., Grasl, T., Lorenz, W.E., Schaffranek, R., Eds.; The Association for Computer-Aided Architectural Design Research in Asia (CAADRIA): Hong Kong, China, 2015; Volume 2, pp. 83–90.

- Chen, C.-T.; Chang, T.-W. 1:1 Spatially Augmented Reality Design Environment. In Innovations in Design & Decision Support Systems in Architecture and Urban Planning; Van Leeuwen, J.P., Timmermans, H.J.P., Eds.; Springer: Dordrecht, The Netherlands, 2006; pp. 487–499.

- Lee, J.G.; Joon Oh, S.; Ali, A.; Minji, C. End-Users’ Augmented Reality Utilization for Architectural Design Review. Appl. Sci. 2020, 10, 5363.

- Shirazi, A.; Behzadan, A.H. Design and Assessment of a Mobile Augmented Reality-Based Information Delivery Tool for Construction and Civil Engineering Curriculum. J. Prof. Issues Eng. Educ. Pract. 2015, 141.

- Li, W.; Nee, A.; Ong, S. A State-of-the-Art Review of Augmented Reality in Engineering Analysis and Simulation. Multimodal Technol. Interact. 2017, 1, 17.

- Wang, P.; Wu, P.; Wang, J.; Chi, H.L.; Wang, X. A Critical Review of the Use of Virtual Reality in Construction Engineering Education and Training. Int. J. Environ. Res. Public Health 2018, 15, 1204.

- Alsafouri, S.; Ayer, S.K. Mobile Augmented Reality to Influence Design and Constructability Review Sessions. J. Archit. Eng. 2019, 25, 04019016.

- Park, C.-S.; Lee, D.-Y.; Kwon, O.-S.; Wang, X. A framework for proactive construction defect management using BIM, augmented reality and ontology-based data collection template. Autom. Constr. 2013, 33, 61–71.

- Li, X.; Yi, W.; Chi, H.-L.; Wang, X.; Chan, A.P.C. A critical review of virtual and augmented reality (VR/AR) applications in construction safety. Autom. Constr. 2018, 86, 150–162.

| Interface | Content Use | AR Experience: On-Site | AR Experience: Remote |
|---|---|---|---|
| Tangible | Personal | YES | NO |
| Sensor-Based | Personal | YES | NO |
| Collaborative | Group | YES | YES |
| Hybrid | Personal/Group | YES | YES |

| SDK | Typology | Platform | Tracking | Domain |
|---|---|---|---|---|
| ARToolkit | GPL | Multiplatform | Marker | Generic |
| DroidAR | GPL | Android | Location/Marker | Generic |
| AR.js | GPL | Multiplatform | Location/Marker | Generic |
| EasyAR Sense | GPL/Commercial | Android | Marker/Markerless | Generic |
| Apple ARKit | Free/Proprietary | iOS | Location/Markerless | Generic |
| Google ARCore | Free/Proprietary | Android | Location/Markerless | Generic |
| ARloopa | Free/Proprietary | Multiplatform | Location/Marker/Markerless | Graphic |
| Archi-Lens | Free/Proprietary | Multiplatform | Marker | AEC/Design |
| Layar | Commercial | Multiplatform | Location/Marker/Markerless | Generic |
| Wikitude | Free/Commercial | Multiplatform | Location/Marker/Markerless | Generic |
| Vuforia | Free/Commercial | Multiplatform | Location/Marker/Markerless | Generic |
| MAXST | Commercial | Multiplatform | Marker/Markerless | Generic |
| AkulAR | Free/Commercial | Multiplatform | Location/Marker | Architecture |
| Augment | Commercial | Multiplatform | Marker/Markerless | eCommerce/AEC |
| ARki | Free/Commercial | iOS (Android soon) | Location/Markerless | AEC |
| Fuzor | Commercial | Windows | Markerless | AEC |
| GammaAR | Commercial | Multiplatform | Markerless | AEC |
| Dalux TwinBIM | Commercial | Multiplatform | Markerless | AEC |
| Fologram | Commercial | Multiplatform | Marker | Generic |

Publisher’s Note: MDPI stays neutral with regard to jurisdictional claims in published maps and institutional affiliations.

© 2021 by the author. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (https://creativecommons.org/licenses/by/4.0/).

Share and Cite

Russo, M. AR in the Architecture Domain: State of the Art. Appl. Sci. 2021, 11, 6800. https://doi.org/10.3390/app11156800
