1. Introduction

Applications of human–robot collaboration (HRC) have already made their way into industrial practice in the context of the Industry 4.0 revolution [1], allowing human operators and robots to coexist in the same space [2,3]. HRC applications offer a number of advantages over non-collaborative ones, as they combine the benefits of both actors, namely humans and robots. Robots offer high accuracy and repeatability, can lift heavy weights, and can reduce the cycle time compared to purely manual production. Humans, on the other hand, show high levels of cognition and dexterity. Safety concerns arise in HRC applications, however, as robots have to share space or tasks with humans, and without proper implementation human safety may be compromised [4].

The driving force for such investigation has been the pursuit of manufacturing flexibility, which allows companies to efficiently align their production with the demand for low-volume, highly customized products [5]. Robots have proven very capable of being reconfigured and repurposed even between cycles within the same shift; however, their productivity potential is hindered when humans are present in their workspace. Coexistence in a workplace does not automatically result in productivity gains, as seamless collaboration requires intelligence and autonomy on both sides.

Speaking of intelligence, researchers have been utilizing AI in various research fields, such as health care [6,7], education [8], transportation [9], engineering [10], and industry. Specifically, in industry, AI has been utilized in human–robot collaborative applications, in topics such as cognition to enable autonomy [4], task planning and allocation of tasks among humans and robots [11], and operator support [12].

Humans can perform the most delicate and dexterous processes by employing their intelligence and senses. Robots, on the other hand, are powerful, fast, and accurate machines with limited interaction capabilities [13]. Efficient collaboration calls for a reduction of the interfaces required to exchange information between them, and this can be achieved by increasing the perception and cognition capabilities of robots to emulate those of humans [14]. However, in order to achieve conditions similar to human-to-human collaboration, the triggering of alternative actions on the robot side is merely the first step. A human counterpart would:

Reason upon the evolution of a task by combining observations of both his/her co-worker (movement, posture, intention) and the working environment (positioning of parts, tools, machine state indicators, etc.).

Increase the efficiency of collaboration by adapting his/her actions to both directly communicated requirements and indirect observations and intuition.

A case of collaborative behavior would include a change of the assembly task sequence initiated arbitrarily by one operator and followed by the compliance of the second, without verbal communication. The instinctive adaptation of the holding height during the co-manipulation of a large part, to better distribute the weight according to the operator’s physiology, is another example.

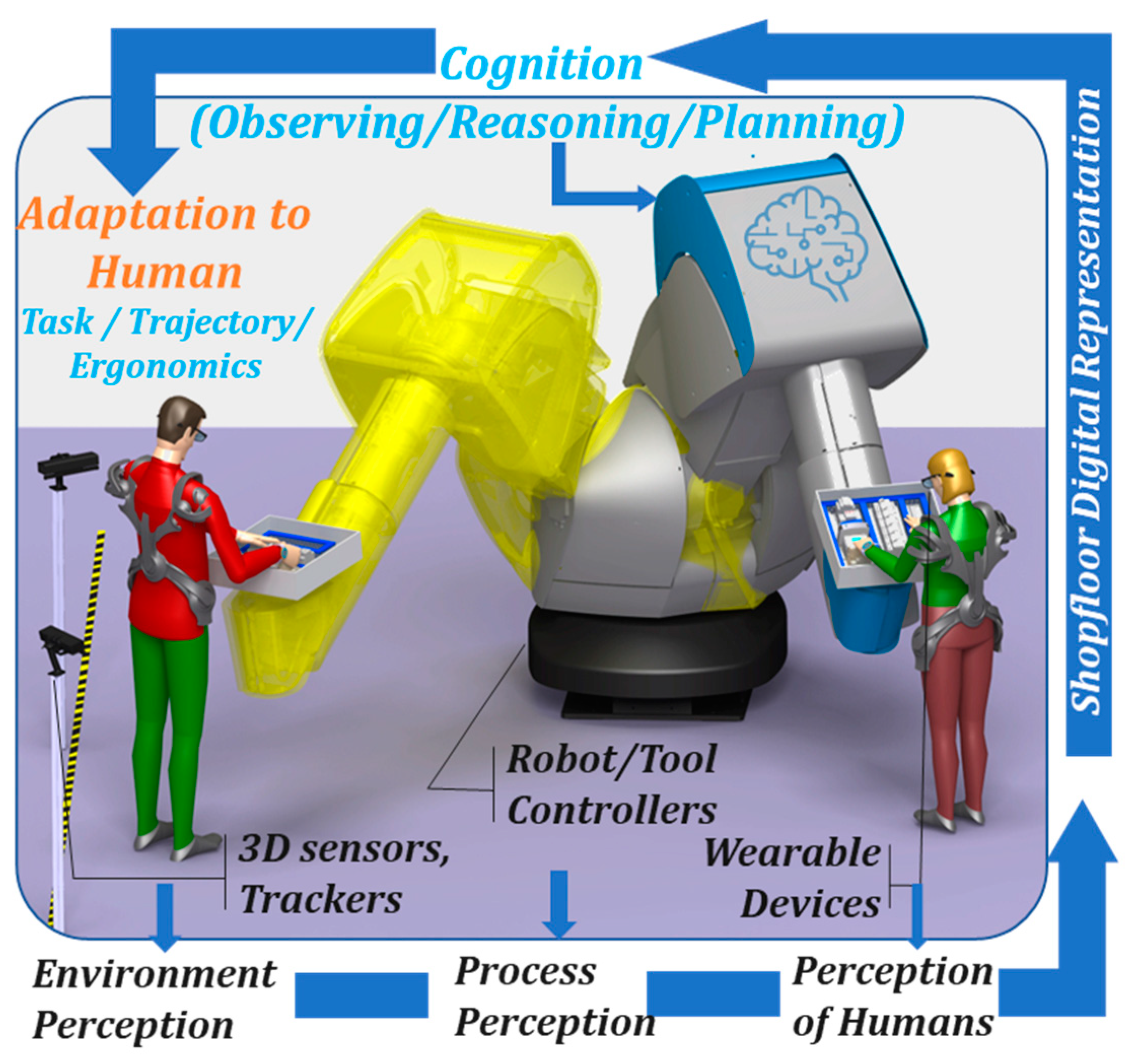

Transferring this notion to the case of HRC (Figure 1), a high payload collaborative robot would need to continuously assess the workflow execution status using information from equipment controllers, process sensors, and direct human input, as well as data from wearable devices, which have been widely used in other sectors such as health care [15]. All data can be hosted in a Shopfloor Digital Representation (Digital Twin [16,17]) of the system and made available to all cognitive functions to achieve the following:

Detection of parts or tools to be manipulated by human operators and localization of their position. Although this field has been fairly well researched in the past using both RGB and 3D sensors [18], the use of data coming from wearable devices is one of the novelties of this work.

Identification of tasks being executed by human operators and validation of the assembly progress. Recent research has mainly focused on tracking position and posture [19], and only a few approaches perform intention prediction [20,21]. This work advances further by exploiting human and environment tracking to identify the workflow execution status, which allows for the triggering of task-optimized support strategies.

Online calculation of ergonomic conditions for each task as it evolves. Tools for online assessment of ergonomics using depth sensors and wearable devices have been provided [22] to support workplace layout optimization and operator support [23]. Their use is extended in this paper to give the robotic cognition module the ability to consider ergonomic aspects when devising operator support actions.

Adaptation of robot posture to both human actions (direct input to the robot) and needs (ergonomics assessment) using learning strategies and trajectory optimization techniques [24]. The approach extends beyond switching between predefined states to real-time adaptation of the robot-provided support to each operator.

The novelty of the proposed solution lies in the fact that it enhances the cognitive capabilities of the robots, performing real-time tracking as well as optimization of ergonomics by automatic adjustment of the robot pose, going one step beyond existing implementations that focus on providing suggestions for ergonomics improvement. Moreover, it allows automatic adaptation of the robot behavior to the needs and preferences of the operators. In a nutshell, it promotes the seamless coexistence of humans and robots.

In this work, the combination of these advancements has been applied for the first time to high payload collaborative manipulators which, unlike cobots, are far more capable of supplementing humans in performing strenuous tasks.

Section 2 describes the approach and its architecture, detailing the functionality and interrelationships of its modules; Section 3 presents the implementation of the approach, and its application to an elevator assembly case study is outlined in Section 4. Section 5 discusses the results and outlines future work.

2. Approach and Architecture

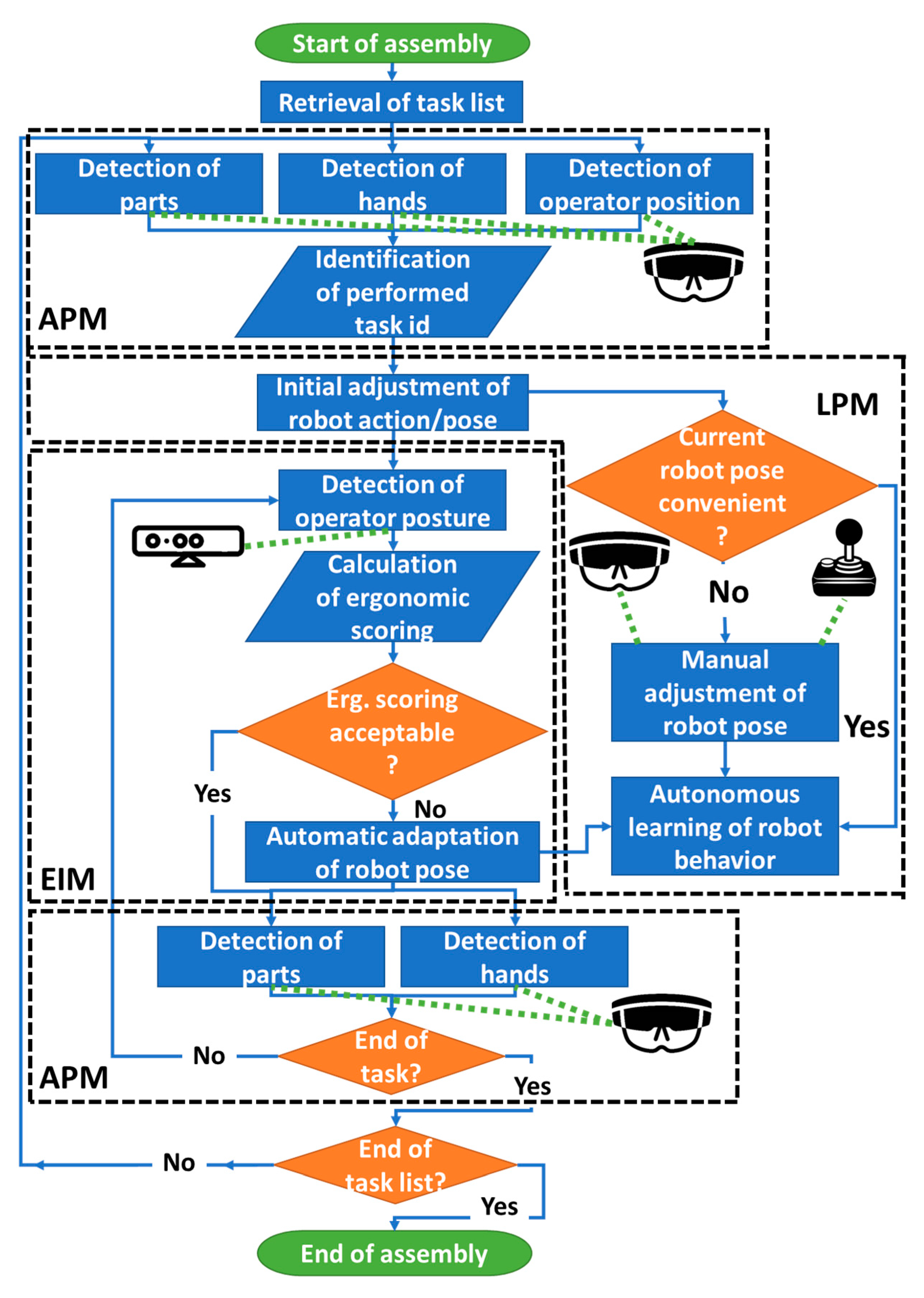

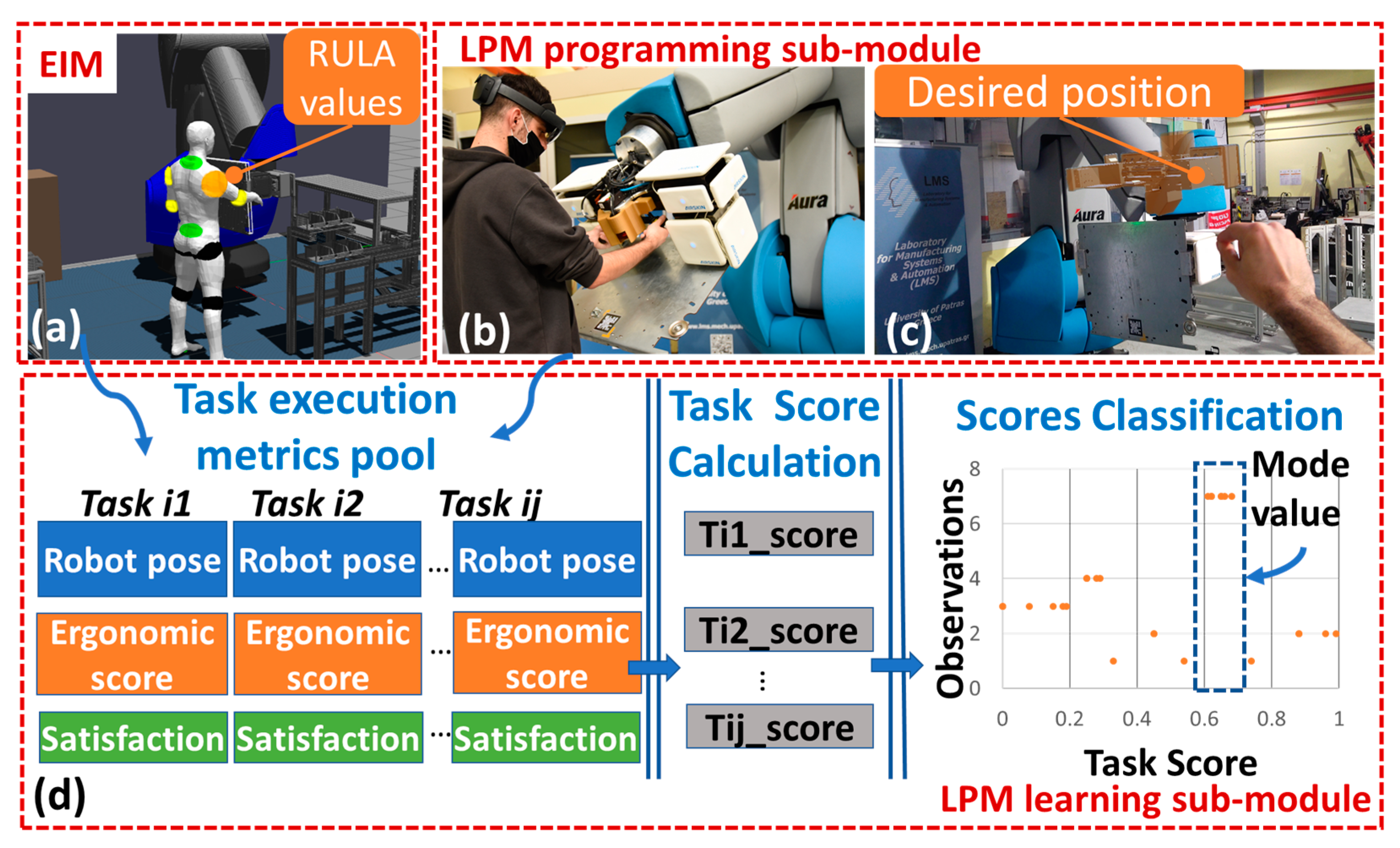

The proposed method supports the seamless and non-intrusive collaboration of human and robotic resources. This is achieved through three distinct but interoperating modules: (i) the action perception module (APM), (ii) the ergonomics improvement module (EIM), and (iii) the learning and programming module (LPM). The workflow and the functionalities implemented by each are shown in Figure 2.

As indicated by their names, the modules contain functions for perceiving the state of the work cell and its resources, for monitoring and correcting working conditions, and for the human-centered adjustment of the robot’s behavior. The individual functions of each module are presented in detail in Section 3.

All three modules communicate with each other as well as with the rest of the software/hardware modules of the work cell using a ROS-based architecture. A common pre-existing simulation environment, named Shopfloor Digital Representation (SDR) and enhanced to support the new functionalities needed, is used by the modules to simulate various scenarios. The SDR is a 1:1 replica of the real environment, able to simulate robot as well as human motion. It is built around, among others, the Gazebo simulation software [25] and the MoveIt! planning framework [26].

3. Implementation

3.1. Actions Perception Module (APM)

APM acts as the main means of capturing and interpreting the scene where the robot and operator are engaged in collaboration. It uses a convolutional neural network to detect the presence and position of the parts to be assembled as well as the hands of the operator. The TensorFlow machine learning framework [18] was used, supplemented by a custom object detector for the parts of interest and the operator’s hands. The real-time detection requirement was met with the use of a single shot detector (SSD), which provides satisfactory accuracy.

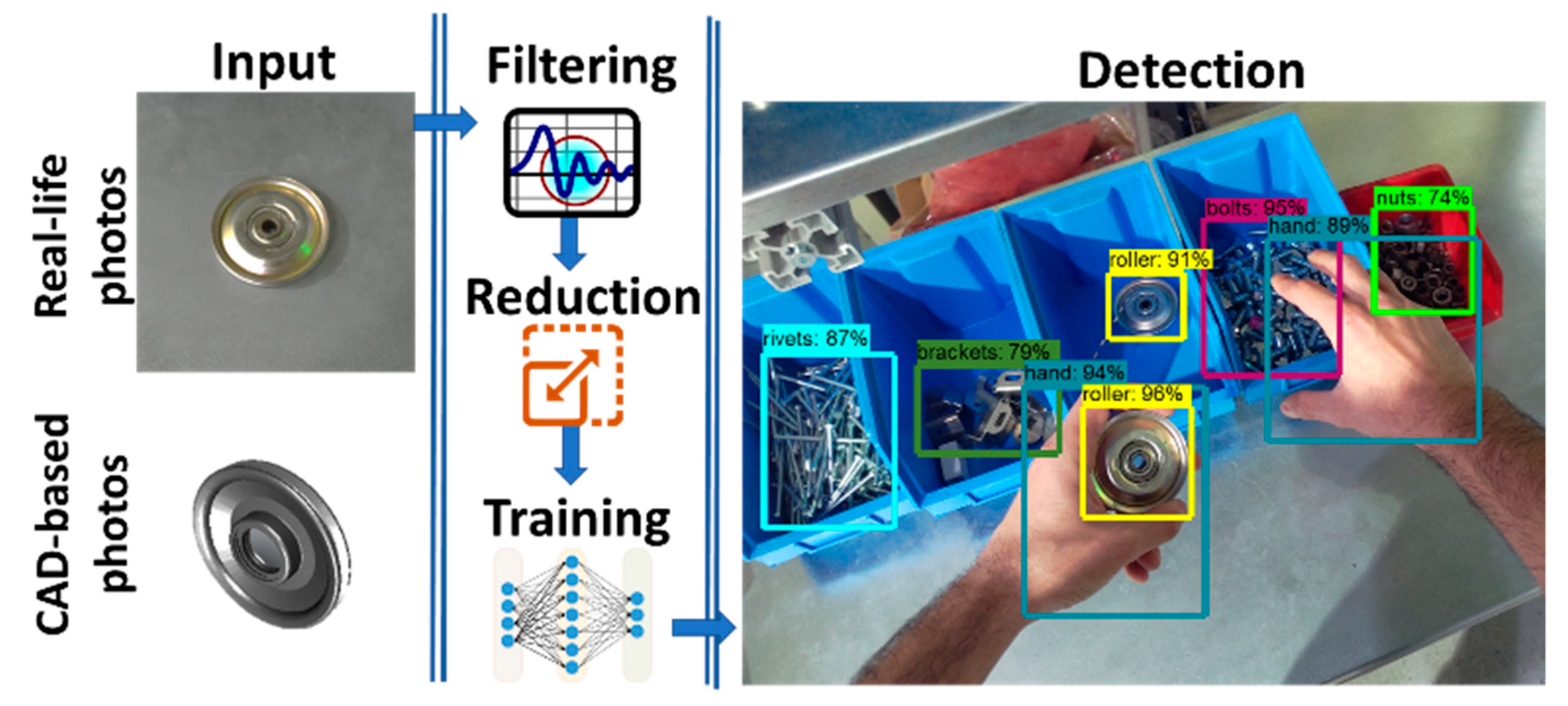

To increase the detection accuracy of the NN, training with multiple datasets was tested:

- (i) Real-life photos: short videos of the objects of interest were shot under diverse lighting conditions and backgrounds. Single frames were extracted, followed by a manual annotation procedure.

- (ii) CAD-based photos: CAD files of the objects were imported into Unity3D, textures were applied, and a script was used to automatically vary the background, viewing angles, and lighting conditions of the virtual scene and to capture and label photos.

- (iii) Synthetic dataset: a combination of real-life photos and CAD-based photos.

Augmentation of the datasets using HSV filters and reduction of the images to 300 × 300 pixels were applied to increase the training and execution speeds. The NN trained with the synthetic data demonstrated increased accuracy compared to the use of real-life photos (12% increase) or CAD photos (26% increase). The overall methodology is summarized in Figure 3.

For the APM detection algorithm to work, an input in the form of RGB photos must be provided. To avoid installing multiple stationary RGB cameras to achieve high coverage of the shopfloor, the necessary video stream is extracted from the built-in cameras of the AR headset that the operator is already using as a supporting device (Microsoft HoloLens 2 [27]). This allows the operator to move freely from one station to another while ensuring that his/her actions are constantly tracked. The video feed of the headset is wirelessly transmitted to the APM controller, so that low-spec mobile hardware can be used on the operator side while the resource-intensive tasks are performed on a remote machine with higher processing power. To achieve low latency in the HoloLens live video stream, the Mixed Reality Companion Kit library [28] was used. As soon as a part is detected, the application draws a bounding box around it, indicating its position within the frame. To identify the parts being manipulated, the percentage of overlap between the bounding boxes of the operator’s hands and the objects of interest, as well as the amount of time for which the overlap occurs, is calculated (Figure 3). A number of experiments involving the manipulation of objects of various sizes were carried out, allowing overlap percentages and time thresholds to be set according to the size of the parts and their bounding boxes.
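The overlap-and-duration check described above can be illustrated with a minimal sketch. The names `Box`, `overlap_ratio`, and `ManipulationDetector` are hypothetical, and measuring the overlap as the fraction of the part's box covered by the hand's box is an assumption, since the text does not state how the percentage is normalized.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class Box:
    """Axis-aligned bounding box in pixel coordinates (hypothetical type)."""
    x_min: float
    y_min: float
    x_max: float
    y_max: float

    def area(self) -> float:
        return max(0.0, self.x_max - self.x_min) * max(0.0, self.y_max - self.y_min)


def overlap_ratio(hand: Box, part: Box) -> float:
    """Fraction of the part's box covered by the hand's box (assumed normalization)."""
    width = min(hand.x_max, part.x_max) - max(hand.x_min, part.x_min)
    height = min(hand.y_max, part.y_max) - max(hand.y_min, part.y_min)
    if width <= 0 or height <= 0 or part.area() == 0:
        return 0.0
    return (width * height) / part.area()


class ManipulationDetector:
    """Reports a part as manipulated once the overlap has persisted long enough."""

    def __init__(self, min_overlap: float, min_duration_s: float):
        self.min_overlap = min_overlap        # size-dependent overlap threshold
        self.min_duration_s = min_duration_s  # size-dependent time threshold
        self._overlap_start: Optional[float] = None

    def update(self, hand: Box, part: Box, timestamp_s: float) -> bool:
        if overlap_ratio(hand, part) >= self.min_overlap:
            if self._overlap_start is None:
                self._overlap_start = timestamp_s
            return timestamp_s - self._overlap_start >= self.min_duration_s
        # Overlap lost: restart the timer.
        self._overlap_start = None
        return False
```

In such a scheme, one detector instance per part class would carry the thresholds tuned for that part size, and `update` would be called once per video frame with the latest detections.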

This information is cross-checked against the task list of the specific assembly scenario to identify the active task (including its completion status) as well as the operator’s intention regarding which task to carry out next. The position of the operator on the shopfloor is constantly monitored and used to supplement the scene analysis. The built-in IMU sensor of HoloLens is utilized for this purpose. Prior to the beginning of the assembly scenario, the operator puts on the HoloLens headset, moves to a specific location, and initializes/calibrates his/her position on the shopfloor. In this way, the initial position of the operator coincides with the initial position in the virtual world (SDR). To avoid the drift that usually occurs when calculating position from IMU data, an on-the-fly recalibration of the position of the operator is implemented. Several markers are placed on the shopfloor at well-known, predefined positions. Whenever a marker is detected via the RGB camera of HoloLens, the position of the operator is recalibrated according to the predefined position of the marker.
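A minimal sketch of the marker-based recalibration idea is given below. It assumes that the IMU pipeline delivers incremental displacements and that a marker detection yields the marker's offset relative to the operator; the class `OperatorLocalizer` and its methods are hypothetical names and not part of the HoloLens API.

```python
from typing import Dict, Tuple

Vec3 = Tuple[float, float, float]


class OperatorLocalizer:
    """Dead reckoning from IMU displacements with marker-based correction."""

    def __init__(self, start: Vec3, markers: Dict[str, Vec3]):
        self.position: Vec3 = start  # calibrated start position in the SDR frame
        self.markers = markers       # predefined marker positions on the shopfloor

    def integrate(self, displacement: Vec3) -> None:
        """Add one IMU-derived displacement; errors accumulate over time (drift)."""
        self.position = tuple(p + d for p, d in zip(self.position, displacement))

    def on_marker(self, marker_id: str, offset_to_marker: Vec3 = (0.0, 0.0, 0.0)) -> bool:
        """Overwrite the drifting estimate using a known marker position.

        offset_to_marker is the marker position relative to the operator
        (an assumed input); unknown marker ids are ignored.
        """
        known = self.markers.get(marker_id)
        if known is None:
            return False
        self.position = tuple(m - o for m, o in zip(known, offset_to_marker))
        return True
```

The design choice illustrated here is that the marker observation replaces the accumulated estimate instead of being blended with it, which matches the recalibration described in the text.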

3.2. Ergonomics Improvement Module (EIM)

EIM’s goal is to quantify the physical effort that the operator exerts while actively collaborating with the robot and to reduce the muscle strain to an acceptable level. Kinect Azure [29] sensors installed around the shopfloor track the operator’s body and joints. Using the RULA assessment [22], the EIM processes joint data on the fly to score the ergonomic risk factors associated with upper extremity musculoskeletal disorders (MSDs). The outcome of this process is a grand score for each frame. In this way, the ergonomic values are monitored for the whole duration of the assembly. Whenever a user-defined threshold is exceeded, the EIM tries to find an alternative robot pose that will allow the operator to adopt an ergonomically correct posture.
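The per-frame monitoring logic can be sketched as follows. The function name `monitor_rula` and the callback signature are hypothetical; the callback stands in for the step in which the EIM searches for an alternative robot pose.

```python
from typing import Callable, Iterable


def monitor_rula(
    grand_scores: Iterable[int],
    threshold: int,
    on_exceeded: Callable[[int, int], None],
) -> int:
    """Scan per-frame RULA grand scores and report threshold violations.

    Calls on_exceeded(frame_index, score) for every frame whose score is
    above the user-defined threshold and returns the worst score observed.
    """
    worst = 0
    for frame, score in enumerate(grand_scores):
        worst = max(worst, score)
        if score > threshold:
            on_exceeded(frame, score)
    return worst
```

In a running system the scores would arrive as a stream, one per tracked frame, and the returned maximum corresponds to the worst ergonomic value of the assembly cycle.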

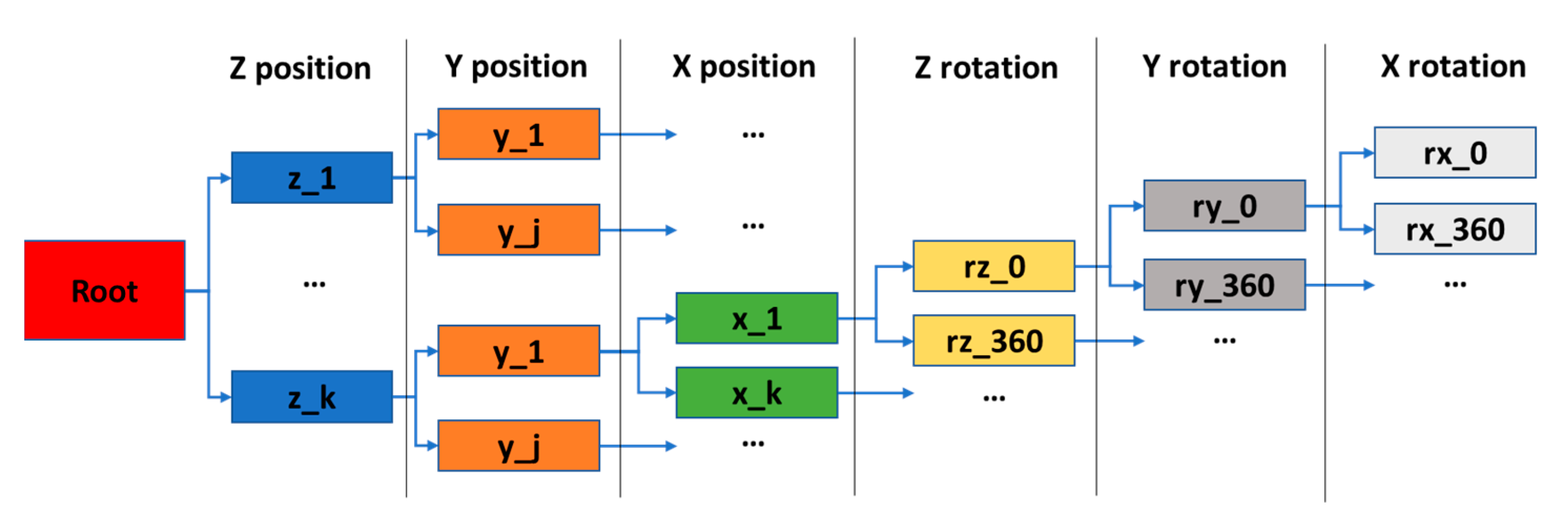

To achieve this, an AI-based algorithm [30] comprising search and heuristic functions generates the alternative positions of the end-effector. Its main foundation lies in the selective search of the solution space, instead of an exhaustive method. As the number of possible end-effector positions increases, the solution space becomes excessively large, which vastly increases the computational and memory requirements of an exhaustive search. It is important to note that the solution space referred to here is the set of all possible end-effector positions that lead to feasible robot pose configurations. The operator ergonomic scores for each alternative are calculated using the SDR simulation tools. To generate the alternatives, EIM divides the virtual 3D workspace into a grid with user-adjustable discretization. Each point of the grid is a possible new position for the robot’s end-effector. Points that lie outside the working envelope of the robot, as well as points that interfere with the surroundings, are automatically discarded. The user can limit the solution space to a specific volume around the current position of the end-effector. By viewing the 3D space as tree nodes (x, y, z coordinates) (Figure 4), the algorithm iteratively creates random, yet valid, groups of such nodes, referred to as branches. It then extends, or ‘reproduces’, the best of the currently generated branches. The selection of the fittest branch is performed by estimating the utility value of each one, first calculating the utility value of its node sequence and then adding the average utility value of some of its random extensions, or ‘samples’. A branch’s sample is a random sequence of nodes that completes said branch, so that together they form a full sequence of nodes, i.e., 3D points. This procedure is configured using three adjustable parameters:

Decision horizon (DH): The size in nodes of each generated branch. The higher its value the more complex each iteration step is, as more assignments are considered and evaluated each time.

Maximum number of alternatives (MNA): The number of branches created in each iteration. The higher its value, the closer the generated robot positions converge to the optimal solution.

Sample rate (SR): The number of samples examined per generated branch. The higher its value, the better the accuracy of the predicted final utility value of the extended branch.
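The grid generation and the role of the three parameters can be illustrated with a simplified sketch. It is not the authors' implementation: `build_grid`, `selective_search`, the validity predicate, and the utility function are hypothetical stand-ins (in the described system, validity would come from reachability and collision checks and utility from the ergonomic score simulated in the SDR).

```python
import itertools
import random
from typing import Callable, List, Optional, Tuple

Node = Tuple[float, float, float]


def build_grid(lo: Node, hi: Node, step: float,
               is_valid: Callable[[Node], bool]) -> List[Node]:
    """Discretize the box [lo, hi] and keep only nodes accepted by is_valid."""
    axes = []
    for a, b in zip(lo, hi):
        count = int(round((b - a) / step)) + 1
        axes.append([a + i * step for i in range(count)])
    return [node for node in itertools.product(*axes) if is_valid(node)]


def selective_search(nodes: List[Node], sequence_length: int,
                     utility: Callable[[Node], float],
                     dh: int, mna: int, sr: int,
                     rng: random.Random) -> List[Node]:
    """Build a node sequence by repeatedly committing the fittest random branch.

    dh:  decision horizon, nodes per generated branch
    mna: maximum number of alternatives, branches generated per iteration
    sr:  sample rate, random completions averaged per branch
    """
    if not nodes or dh < 1 or mna < 1 or sr < 0:
        raise ValueError("need a non-empty grid, dh >= 1, mna >= 1 and sr >= 0")
    chosen: List[Node] = []
    while len(chosen) < sequence_length:
        horizon = min(dh, sequence_length - len(chosen))
        remaining = sequence_length - len(chosen) - horizon
        best_branch: Optional[List[Node]] = None
        best_value = float("-inf")
        for _ in range(mna):
            branch = [rng.choice(nodes) for _ in range(horizon)]
            value = sum(utility(n) for n in branch)
            if remaining > 0 and sr > 0:
                # Average utility of sr random completions of this branch.
                totals = [sum(utility(rng.choice(nodes)) for _ in range(remaining))
                          for _ in range(sr)]
                value += sum(totals) / sr
            if best_branch is None or value > best_value:
                best_branch, best_value = branch, value
        chosen.extend(best_branch)  # extend ("reproduce") the fittest branch
    return chosen
```

Only `mna` branches and `mna * sr` samples are evaluated per iteration, so the cost grows with these parameters and not with the full number of possible node sequences, which is the point of the selective search.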

For each of the alternative configurations, the expected ergonomic value is calculated by the SDR, using a virtual mannequin that is able to simulate human motion (Figure 5a). EIM generates a robot pose that provides a close-to-optimal ergonomic score, which is then adopted by the robot in real time. It is worth noting that the virtual mannequin has, at least for now, a fixed size and cannot be adapted to the size of the operators.

3.3. Learning and Programming Module (LPM)

To advance the collaboration scheme, predefined program execution needs to be replaced by automated continuous learning and adaptation of the robot motion, based on the operator’s perceived needs and preferences. For this purpose, the sequence of tasks to be executed is inserted into the SDR simulation environment, and MoveIt is used to generate collision-free motion plans for each task.

When the operator is executing a collaborative task, he/she may choose to work with the robot pose proposed by the system or move the robot to a pose that is more convenient for him/her. The LPM module is divided into two sub-modules: (i) the programming sub-module and (ii) the learning sub-module.

- (i) Programming sub-module: The programming sub-module of LPM supports two ways of achieving such functionality: (a) direct movement of the end-effector using manual guidance (Figure 5b), where the operator grabs the dedicated handles installed at the gripper of the robot and moves the end-effector to his/her preferred position; and (b) indirect, AR-based control of the position of the end-effector (Figure 5c), where the operator uses gestures to manipulate a virtual end-effector whose position represents the target position of the physical end-effector. In both cases, the target position of the end-effector is communicated to the SDR and the robot path required to reach that pose is generated, ensuring the avoidance of collisions with the surroundings.

- (ii) Learning sub-module: Each execution of a specific assembly step provides a new input to the learning sub-module of LPM, associated with a score (Tij_score) computed from two KPIs: operator satisfaction (oper_sat) and ergonomic score (erg_sc). The resulting collection of inputs, referred to as the learning funnel, is specific to each operator’s habits and ways of driving the robot; thus, such a funnel needs to be learned for each operator. The relevant data (operator id, task id, robot pose, Tij_score, oper_sat, erg_sc) for each execution of a specific assembly step are stored in a MongoDB database. Prior to the execution of each assembly step, the learning sub-module determines the mode of the Tij_score values in the dataset and instructs the robot to adopt the pose corresponding to that mode (Figure 5d).

To calculate the score for the execution of a specific task (Tij_score), the following formula is used:

$$\text{Tij\_score} = w_1 \cdot \text{oper\_sat} + w_2 \cdot \text{erg\_sc} \qquad (1)$$

where:

- (a) Tij_score: the score for task i, where j is a counter that increases each time a new input for task i is inserted into the learning funnel.

- (b) oper_sat: the operator’s satisfaction. It takes binary values: 1 if the operator uses the proposed robot configuration, or 0 if the operator manually adjusts the position of the end-effector.

- (c) erg_sc: the ergonomic score calculated by the EIM using the RULA assessment. The value is inverse normalized, meaning that an erg_sc equal to 1 indicates a good ergonomic score.

- (d) w1 and w2: weighting factors for the oper_sat and erg_sc values, respectively.

If the erg_sc is above a predefined threshold, the Tij_score is set to 0. The various Tij_score values are stored in a database, along with the corresponding robot pose and erg_sc, and are grouped based on their value in 0.1 increments.
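The scoring and mode-based pose selection can be sketched as below, under several loudly stated assumptions: the score is taken to be a weighted sum of the two KPIs, as the weighting factors w1 and w2 suggest; erg_sc is derived from the RULA grand score (which ranges from 1 to 7) by a linear inverse normalization; the threshold check is applied to the raw RULA score, which is one possible reading of the text; scores are binned to the nearest 0.1; and the most recent pose in the modal bin is returned. All function and class names are hypothetical.

```python
from collections import Counter
from dataclasses import dataclass
from typing import List, Optional, Tuple

Pose = Tuple[float, ...]


def erg_score(rula_grand_score: int) -> float:
    """Inverse-normalize a RULA grand score (1 best, 7 worst) to [0, 1] (assumed mapping)."""
    return (7 - rula_grand_score) / 6


def task_score(oper_sat: int, rula_grand_score: int,
               w1: float, w2: float, rula_threshold: int) -> float:
    """Weighted sum of operator satisfaction and ergonomic score (assumed form)."""
    if rula_grand_score > rula_threshold:
        return 0.0  # ergonomically unacceptable execution (assumed interpretation)
    return w1 * oper_sat + w2 * erg_score(rula_grand_score)


def score_bin(score: float) -> int:
    """Index of the 0.1-wide group a score falls into (nearest-bin assumption)."""
    return int(round(score * 10))


@dataclass
class Execution:
    """One stored execution of a task by one operator."""
    pose: Pose
    score: float


def preferred_pose(history: List[Execution]) -> Optional[Pose]:
    """Return the most recent pose from the most frequent score group."""
    if not history:
        return None
    counts = Counter(score_bin(e.score) for e in history)
    modal_bin = counts.most_common(1)[0][0]
    for execution in reversed(history):
        if score_bin(execution.score) == modal_bin:
            return execution.pose
    return None
```

Here `history` is assumed to be already filtered to a single operator and task, mirroring the per-operator, per-task records kept in the database.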

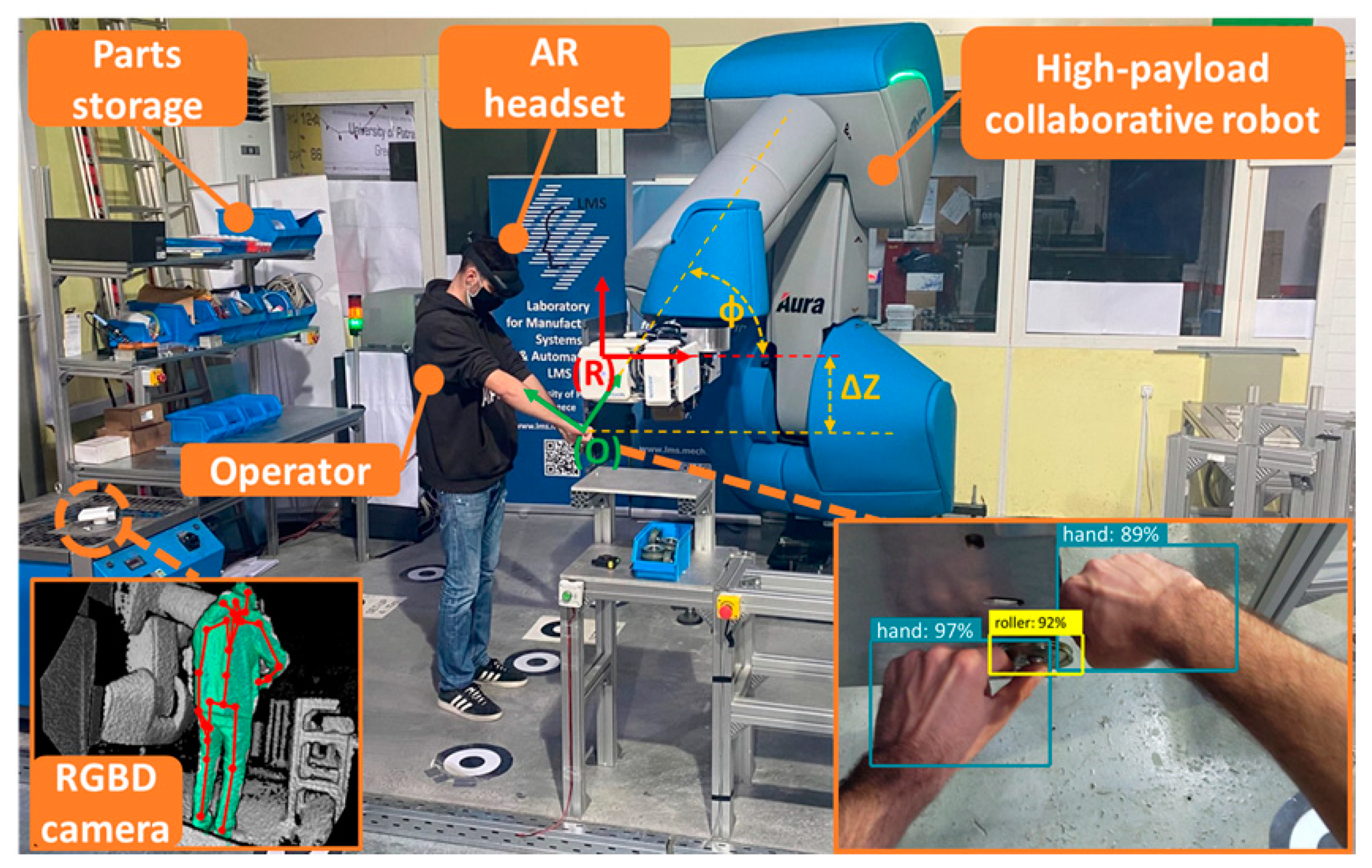

4. Case Study

To demonstrate the aforementioned approach, a use case derived from the elevator production sector was selected. Specifically, at the workstation of interest, the cab door panel hangers and 11 subcomponents are pre-assembled. Currently, all material handling and assembly operations are performed manually by an operator multiple times per shift. The heavy weight of the hangers (up to 22 kg) and the need for two-sided access cause extensive ergonomic issues and symptoms such as back pain, hand tendonitis, and others. This is the primary reason why operators with restrictions cannot work in this station.

The first high payload (170 kg) collaborative robot on the market (COMAU AURA [31]) is used as a smart work-holding device, taking care of the arduous task of positioning the assembly. This allows operators to perform delicate assembly actions in an effective, pleasant, and ergonomic way. Based on the operator’s position and pace and on the sequence of assembly operations, the robot automatically adapts its position, rotating the hanger in space and presenting it to the operator in a convenient way (variation of the programmed frame (R) by Φ and ΔΖ to frame (O), shown in Figure 6). In other words, a change of the current paradigm, in which the part is static and the operator moves and bends around it to perform the different operations, is proposed, putting the human at the center of the operation with the robot presenting the part to him/her.

To evaluate the effectiveness of our approach, an experiment involving 5 operators in total (4 males, 1 female) was conducted, with the operators varying in height from 1.56 to 1.92 m and in age from 23 to 44 years. Each subject was asked to assemble the hanger in collaboration with the high-payload robot in two experimental configurations: (a) without AI support, where the robot holds the panel at a pre-programmed stationary position while the operator performs the assembly with the help of AR instructions, confirming the completion of each step through HMIs and hand gestures; and (b) with the APM, EIM, and LPM modules, where the robot automatically adapts its pose to the operator’s needs and preferences when needed, without the operator having to provide feedback on his/her actions. Each operator repeated each experimental configuration 10 times (a total of 100 tries for the 5 operators). For each repetition, the cycle time and ergonomic score KPIs were monitored, while at the end of the experimentation the operators filled in questionnaires to capture their subjective feedback regarding the effectiveness of each of the modules of our approach. The results are summarized in Table 1.

The described approach had a positive impact on the cycle time, as most operators achieved a better cycle time when using the modules of this study (reduction of ~6%). Regarding ergonomics, the EIM improved the maximum ergonomic values for 4 of the 5 operators, the exception being the first subject, whose height was well suited to the pre-programmed pose of the robot. All the subjects indicated above-average satisfaction with the developed technology. Finally, for most of the subjects, the LPM was able to adapt to the operator’s needs and preferences after four cycles, as limited intervention was observed afterward, either by humans or by the EIM.

5. Conclusions and Future Work

This work discusses an AI system that recognizes the actions being performed by operators inside a human–robot collaborative cell, analyzes the ergonomics, and adapts the robot behavior according to the needs of the task and the preferences of the operator, improving ergonomics and operator satisfaction. The demonstration in an industrial case study from the elevator production sector has revealed a possible improvement in the cycle time (by 6%), amelioration of ergonomic factors in 80% of the samples, and quite high operator acceptance. Finally, the successful use of the not yet widespread high payload collaborative robots has been demonstrated; unlike low payload cobots, these are more capable of supplementing humans in performing strenuous tasks.

Nowadays, the industry is slowly moving from either manual production or totally automated production to hybrid solutions. The current implementations utilize mainly low payload robots and offer limited cognition of process and surroundings, impacting negatively the operator’s acceptance of such technologies. The proposed solution aspires to bring industry one step closer to wide adoption of human–robot collaborative solutions, by having a robot working seamlessly next to a human. According to the proposed paradigm, the human has the leading role while the robot assists him/her non-intrusively, bending its behavior around him/her. Such an approach leads to higher satisfaction rates, and consequently to higher acceptance of hybrid production solutions. The methodology can be applied in low payload as well as high payload applications.

Future work will aim at the implementation of algorithms to capture more complex—non-assembly-based—human actions in order for the system to either ignore them or plan for countering their effects. Additionally, the virtual mannequin used for simulations will have the capability to adapt to the exact dimensions of the operator, so more accurate simulations of ergonomics can be achieved. Moreover, exploration of additional wearable hardware, such as IMUs on a wristband, for highly granular detection of human posture is needed. Finally, extensions to guarantee the operator’s safety in a certified way must also be implemented.

Author Contributions

Conceptualization, N.D., G.M. and S.M.; methodology, N.D., G.M. and S.M.; software, T.T. and N.Z.; validation, N.D., T.T., N.Z., G.M. and S.M.; writing—original draft preparation, N.D. and G.M.; writing—review and editing, N.D. and G.M. All authors have read and agreed to the published version of the manuscript.

Funding

This research has been supported by the EU project “SHERLOCK Seamless and safe human-centered robotic applications for novel collaborative workshops”. This project has received funding from the European Union’s Horizon 2020 research and innovation program under grant agreement No 820689.

Institutional Review Board Statement

Not applicable.

Informed Consent Statement

Not applicable.

Data Availability Statement

Data is contained within the article.

Conflicts of Interest

The authors declare no conflict of interest.

References

1. Papakostas, N.; Constantinescu, C.; Mourtzis, D. Novel Industry 4.0 Technologies and Applications. Appl. Sci. 2020, 10, 6498.

2. Wang, X.V.; Kemeny, Z.; Vancza, J.; Wang, L. Human–robot collaborative assembly in cyber-physical production: Classification framework and implementation. Cirp Ann. 2017, 66, 5–8.

3. Matheson, E.; Minto, R.; Zampieri, E.G.; Faccio, M.; Rosati, G. Human–robot collaboration in manufacturing applications: A review. Robotics 2019, 8, 100.

4. Dimitropoulos, N.; Michalos, G.; Makris, S. An outlook on future hybrid assembly systems-the Sherlock approach. Procedia Cirp 2021, 97, 441–446.

5. Chryssolouris, G. Manufacturing Systems: Theory and Practice, 2nd ed.; Springer: New York, NY, USA, 2006.

6. Fischetti, C.; Bhatter, P.; Frisch, E.; Sidhu, A.; Helmy, M.; Lungren, M.; Duhaime, E. The Evolving Importance of Artificial Intelligence and Radiology in Medical Trainee Education. Acad. Radiol. 2021.

7. Vaishya, R.; Javaid, M.; Khan, I.H.; Haleem, A. Artificial Intelligence (AI) applications for COVID-19 pandemic. Diabetes Metab. Syndr. Clin. Res. Rev. 2020, 14, 337–339.

8. Olaf, Z.R. Systematic review of research on artificial intelligence applications in higher education–where are the educators? Int. J. Educ. Technol. High. Educ. 2019, 161, 1–27.

9. Abduljabbar, R.; Dia, H.; Liyanage, S.; Bagloee, S.A. Applications of artificial intelligence in transport: An overview. Sustainability 2019, 11, 189.

10. Pham, D.T.; Pham, P.T.N. Artificial intelligence in engineering. Int. J. Mach. Tools Manuf. 1999, 39, 937–949.

11. Evangelou, G.; Dimitropoulos, N.; Michalos, G.; Makris, S. An approach for task and action planning in Human–Robot Collaborative cells using AI. Procedia Cirp 2021, 97, 476–481.

12. Dimitropoulos, N.; Togias, T.; Michalos, G.; Makris, S. Operator support in human–robot collaborative environments using AI enhanced wearable devices. Procedia Cirp 2021, 97, 464–469.

13. Krueger, J.; Lien, T.K.; Verl, A. Cooperation of human and machines in assembly lines. Cirp Ann. 2009, 58, 628–646.

14. Makris, S.; Tsarouchi, P.; Matthaiakis, A.S.; Athanasatos, A.; Chatzigeorgiou, X.; Stefos, M.; Giavridis, K.; Aivaliotis, S. Dual arm robot in cooperation with humans for flexible assembly. Cirp Ann. 2017, 66, 13–16.

15. Nasiri, S.; Khosravani, M.R. Progress and challenges in fabrication of wearable sensors for health monitoring. Sens. Actuators A Phys. 2020, 312, 112105.

16. Bilberg, A.; Malik, A.A. Digital twin driven human–robot collaborative assembly. Cirp Ann. 2019, 68, 499–502.

17. Blume, C.; Blume, S.; Thiede, S.; Herrmann, C. Data-Driven Digital Twins for Technical Building Services Operation in Factories: A Cooling Tower Case Study. J. Manuf. Mater. Process. 2020, 4, 97.

18. Andrianakos, G.; Dimitropoulos, N.; Michalos, G.; Makris, S. An approach for monitoring the execution of human based assembly operations using machine learning. Procedia Cirp 2019, 86, 198–203.

19. Prabhu, V.A.; Song, B.; Thrower, J.; Tiwari, A.; Webb, P. Digitisation of a moving assembly operation using multiple depth imaging sensors. Int. J. Adv. Mfg. Tech. 2016, 85, 163–184.

20. Zhang, J.; Liu, H.; Chang, Q.; Wang, L.; Gao, R.X. Recurrent neural network for motion trajectory prediction in human robot collaborative assembly. Cirp Ann. 2020, 69, 9–12.

21. Pellegrinelli, S.; Orlandini, A.; Pedrocchi, N.; Umbrico, A.; Tolio, T. Motion planning and scheduling for human and industrial-robot collaboration. Cirp Ann. 2017, 66, 1–4.

22. Michalos, G.; Karvouniari, A.; Dimitropoulos, N.; Togias, T.; Makris, S. Workplace analysis and design using virtual reality techniques. Cirp Ann. 2018, 67, 141–144.

23. Makris, S.; Karagiannis, P.; Koukas, S.; Matthaiakis, A.S. Augmented reality system for operator support in human–robot collaborative assembly. Cirp Ann. 2016, 65, 61–64.

24. Gai, S.N.; Sun, R.; Chen, S.J.; Ji, S. 6-DOF Robotic Obstacle Avoidance Path Planning Based on Artificial Potential Field Method. In Proceedings of the 2019 16th International Conference on Ubiquitous Robots (UR), Jeju, Korea, 24–27 June 2019; pp. 165–168.

25. Malus, A.; Kozjek, D. Real-time order dispatching for a fleet of autonomous mobile robots using multi-agent reinforcement learning. Cirp Ann. 2020, 69, 397–400.

26. MoveIt. Available online: https://moveit.ros.org/ (accessed on 28 May 2021).

27. Microsoft HoloLens. Available online: https://www.microsoft.com/en-us/hololens (accessed on 28 May 2021).

28. Mixed Reality Companion Kit library. Available online: https://github.com/microsoft/MixedRealityCompanionKit/tree/master/MixedRemoteViewCompositor/Samples/LowLatencyMRC (accessed on 28 May 2021).

29. Kinect Azure. Available online: https://azure.microsoft.com/en-us/services/kinect-dk/ (accessed on 28 May 2021).

30. Tsarouchi, P.; Spiliotopoulos, J.; Michalos, G.; Koukas, S.; Athanasatos, A.; Makris, S.; Chryssolouris, G. A Decision Making Framework for Human Robot Collaborative Workplace Generation. Procedia Cirp 2016, 44, 228–232.

31. COMAU AURA. Available online: https://www.comau.com/en/our-competences/robotics/automation-products/collaborativerobotsaura (accessed on 28 May 2021).

Publisher’s Note: MDPI stays neutral with regard to jurisdictional claims in published maps and institutional affiliations.

© 2021 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (https://creativecommons.org/licenses/by/4.0/).