Preparatory Experiments Regarding Human Brain Perception and Reasoning of Image Complexity for Synthetic Color Fractal and Natural Texture Images via EEG

Abstract

1. Introduction

2. Experiment

2.1. Rationale

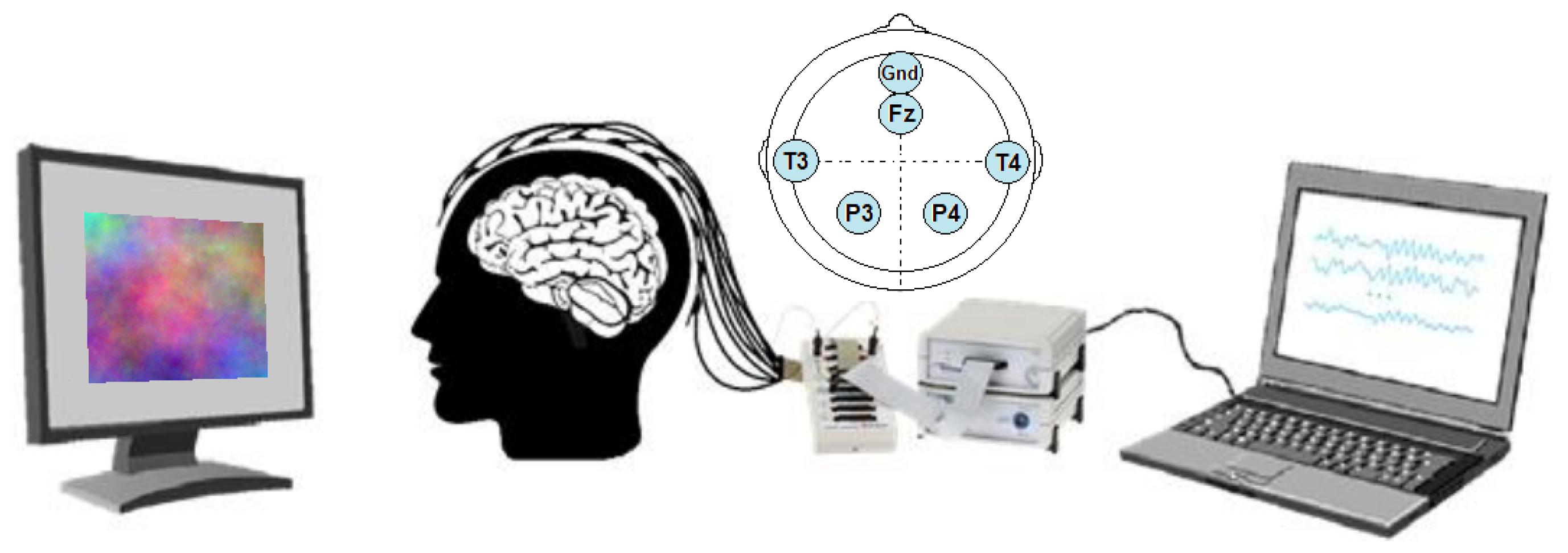

2.2. Setup

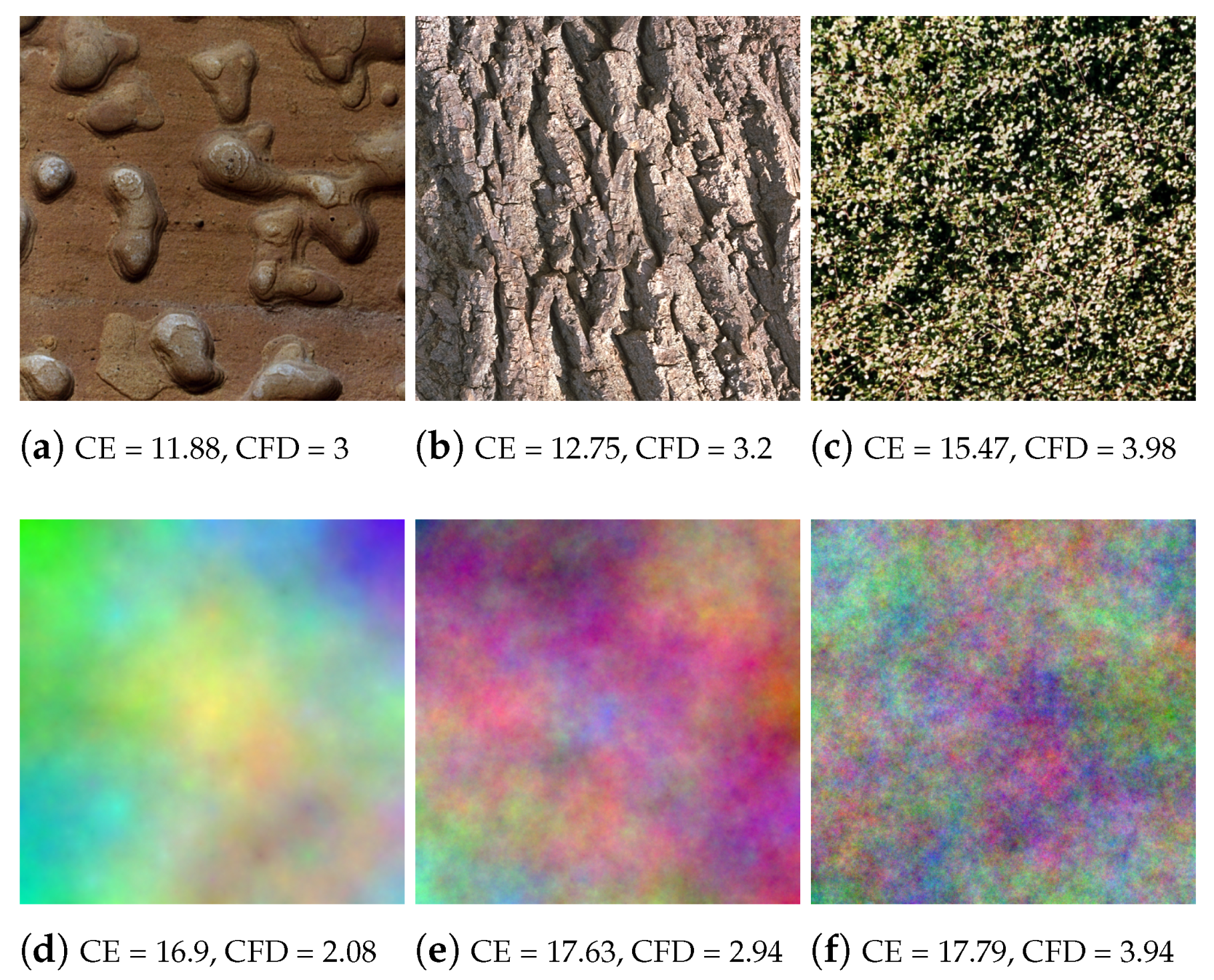

2.3. Stimuli

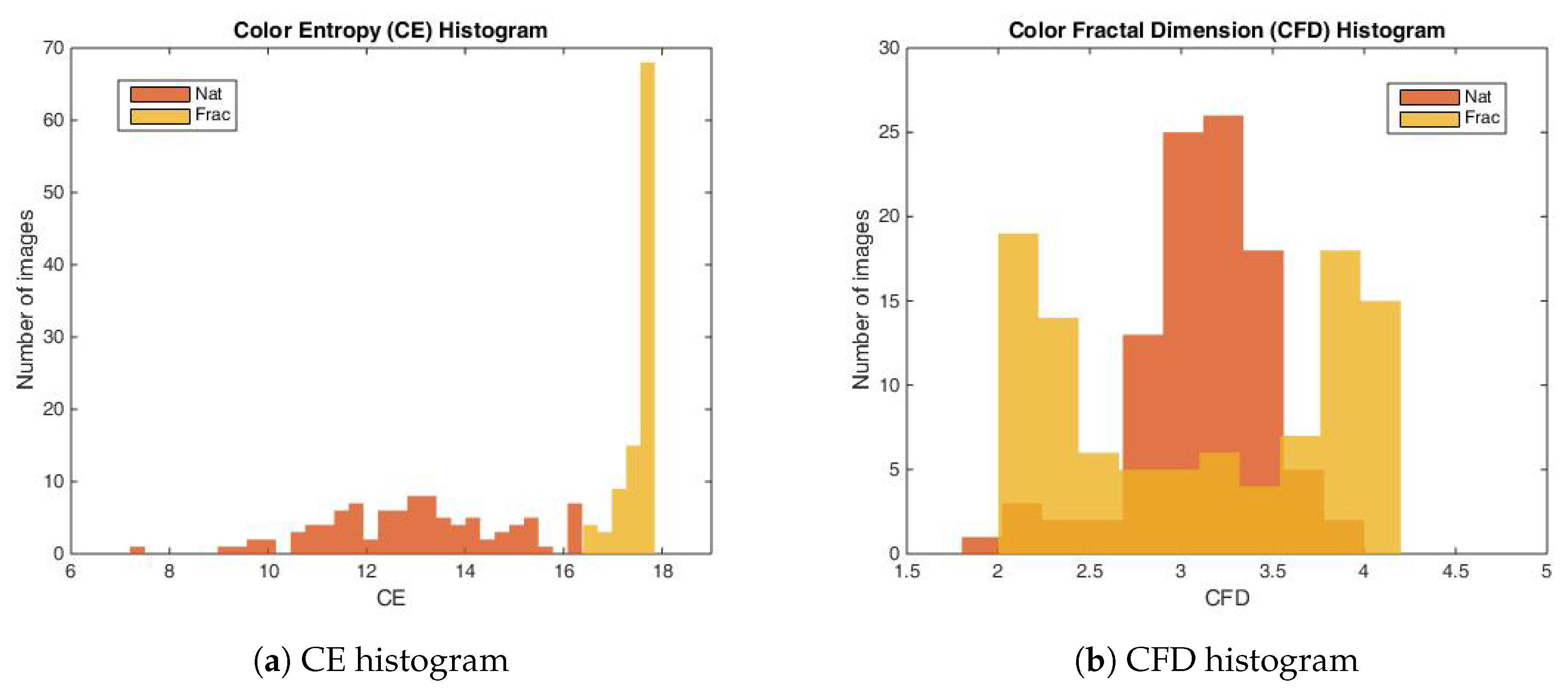

2.4. Color Image Complexity Measures

2.4.1. The Color Entropy

2.4.2. Color Fractal Dimension

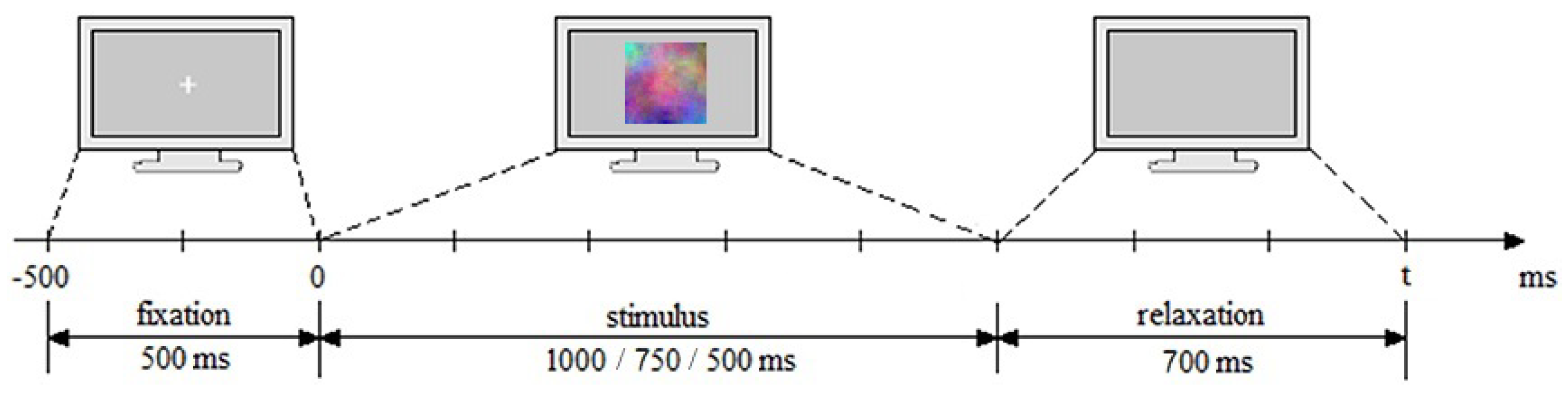

2.5. Experimental Session

2.6. Material and Equipment

2.7. Participants

3. EEG Analysis

- Bad channel rejection (participant-specific bad channels and low-quality channels): First, channels with bad signal quality due to poor conductance (e.g., signal amplitude > 300 µV) were removed from further analysis. Next, channels were checked for variance dropping to zero and removed if this was the case (criterion: variance < 0.5 in more than 10% of trials) [58,59].

- Filtering: We applied low-pass and high-pass filtering. The low-pass filter, used for anti-aliasing, was a Chebyshev type II filter of order 10 with a 42 Hz pass-band edge frequency and 3 dB ripple, and a 49 Hz stop-band edge with 50 dB attenuation. The high-pass filter, used to reduce drifts, was a 1 Hz FIR filter of order 300, designed by least-squares error minimization and applied with reverse digital filtering (zero-phase effect) so as not to introduce phase delays.

- Artifact rejection: To reject components of non-EEG origin (ocular, muscular, cardiovascular, etc.), Independent Component Analysis (ICA) was used together with the Multiple Artifact Rejection Algorithm (MARA), which is based on feature selection [60].

- Segmentation: Data were segmented into epochs, where one epoch corresponds to one stimulation sequence.

- Epoch rejection: Noisy trials were removed based on a variance criterion, i.e., trials in which 20% of the channels show excessive variance (greater than or equal to a per-channel trial threshold). Further, artifactual trials were rejected based on a max-min criterion: the difference between the maximum and minimum peak should not exceed a threshold, e.g., 150 µV.

- Baseline correction: For each epoch, the mean of the final part of the attentional period (on the order of hundreds of milliseconds) is subtracted from the epoch, in either the time or the frequency domain, to diminish background neural noise activity [61].

- Grand Average (GA): All trials were averaged over all participants for neurophysiological interpretation and investigated in the temporal and frequency domains. Scalp map distributions of the brain signals are also presented, using a shading method based on linear interpolation between neighboring channels to obtain smooth plots (available via the BBCI Toolbox [55]).

- (a) Event-Related Potential (ERP) analysis: For ERP analysis, the temporal signals are investigated and averaged over all signals and all participants. For baseline correction, the last 100 ms of the attentional period are used.
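The max-min epoch rejection and the baseline correction described in the steps above can be sketched in a few lines of NumPy. This is only an illustrative sketch on synthetic data: the function names, the array layout, the 200 Hz sampling step and the rule that every channel must pass the 150 µV test are assumptions, not details taken from the processing toolbox used in the study.

```python
import numpy as np

def reject_max_min(epochs_uv, threshold_uv=150.0):
    """Drop epochs whose max-min amplitude exceeds the threshold on any channel.

    epochs_uv has shape (n_epochs, n_channels, n_samples), in microvolts.
    Requiring every channel to pass is an assumption of this sketch.
    """
    peak_to_peak = epochs_uv.max(axis=2) - epochs_uv.min(axis=2)
    keep = (peak_to_peak <= threshold_uv).all(axis=1)
    return epochs_uv[keep], keep

def baseline_correct(epochs_uv, times_ms, window_ms=(-100.0, 0.0)):
    """Subtract the per-epoch, per-channel mean of the pre-stimulus window."""
    in_window = (times_ms >= window_ms[0]) & (times_ms < window_ms[1])
    baseline = epochs_uv[:, :, in_window].mean(axis=2, keepdims=True)
    return epochs_uv - baseline

# Synthetic demo data (hypothetical: 8 epochs, 5 channels, 5 ms sampling step).
rng = np.random.default_rng(0)
times_ms = np.arange(-550.0, 1350.0, 5.0)
epochs = 20.0 + 5.0 * rng.standard_normal((8, 5, times_ms.size))
epochs[3, 2, 100] += 400.0  # inject one large artifact into epoch 3

clean, keep = reject_max_min(epochs)
corrected = baseline_correct(clean, times_ms)
print(keep)             # boolean mask of retained epochs
print(corrected.shape)  # epochs left after rejection
```

The 100 ms window matches the ERP baseline stated above; a 200 ms window would be passed as `window_ms=(-200.0, 0.0)`.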

- (b)

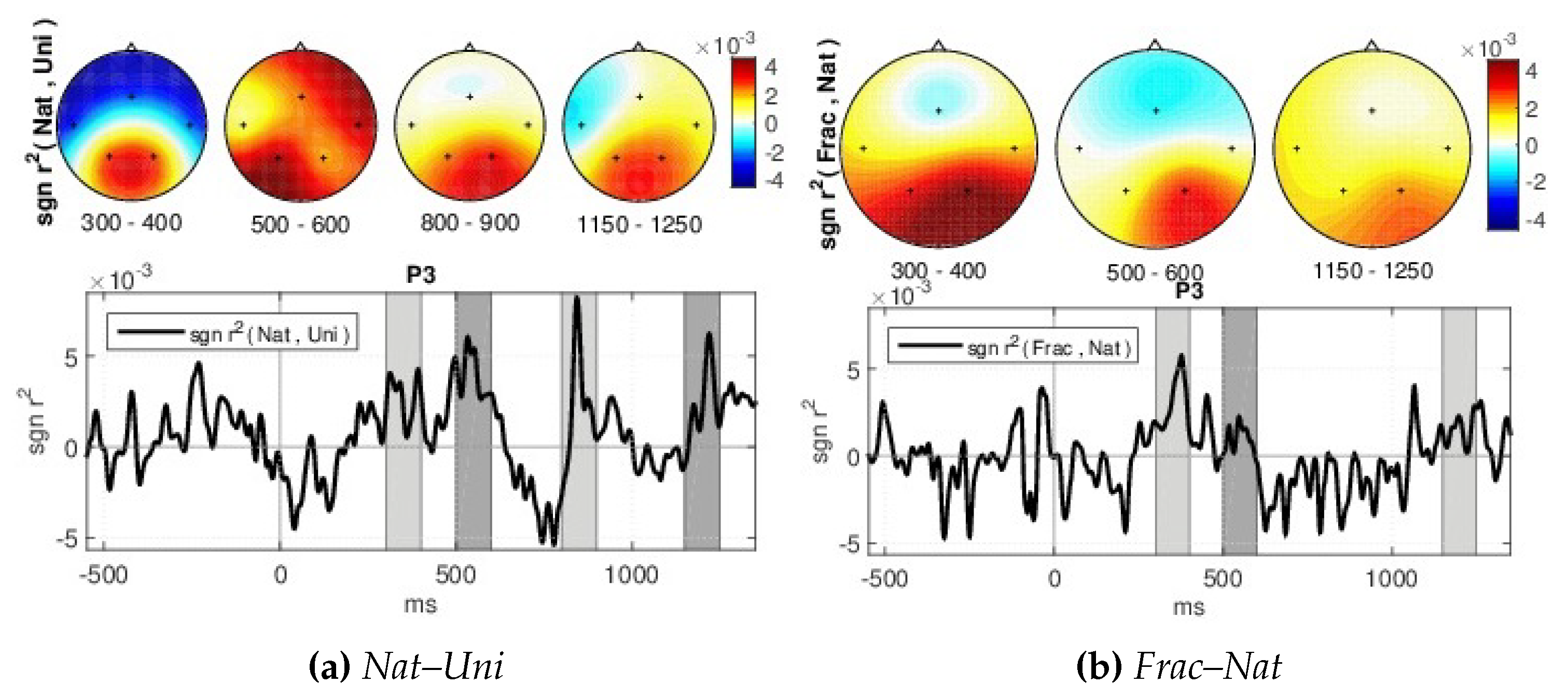

- Signed and squared point biserial correlation coefficient measure (signed )—For details on the association strength between the brain responses for different perceptions, the signed and squared point biserial correlation coefficient (signed ) [62] is computed separately for each pair of channel and time point (x), over all epochs, as in [63], being proposed by [64] (see Equation (3)).where n1 and n2—the numbers of samples in class 1 and class 2, respectively, i,1 and i,2 the class means and the standard deviation, while the signed values are sgn-r(x) = sign(x) r(x). It is a measure of how much variance of the joint distribution can be explained by class membership.
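A minimal NumPy sketch of the signed $r^2$ measure follows, assuming the standard point biserial definition $r = \frac{\sqrt{n_1 n_2}}{n_1+n_2}\frac{\mu_1-\mu_2}{\sigma}$ with $\sigma$ taken as the standard deviation of the pooled samples. The function name and the synthetic epochs are hypothetical and only illustrate the per-channel, per-time-point computation.

```python
import numpy as np

def signed_r_squared(class1, class2):
    """Signed r^2 for every (channel, time) point.

    class1, class2: arrays of shape (n_epochs, n_channels, n_samples).
    sigma is the population standard deviation of the pooled epochs.
    """
    n1, n2 = len(class1), len(class2)
    mu1, mu2 = class1.mean(axis=0), class2.mean(axis=0)
    sigma = np.concatenate([class1, class2], axis=0).std(axis=0)
    r = np.sqrt(n1 * n2) / (n1 + n2) * (mu1 - mu2) / sigma
    return np.sign(r) * r ** 2

# Synthetic epochs (hypothetical sizes): 40 "Frac" and 30 "Nat" trials.
rng = np.random.default_rng(1)
frac = rng.standard_normal((40, 5, 60))
nat = rng.standard_normal((30, 5, 60))
frac[:, 3, 20:30] += 1.0  # class difference on channel 3, samples 20-29

sgn_r2 = signed_r_squared(frac, nat)
print(sgn_r2.shape)
```

With this choice of $\sigma$, $r$ coincides with the Pearson correlation between the signal value and a binary class label, which is why the values stay within [−1, 1].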

- (c)

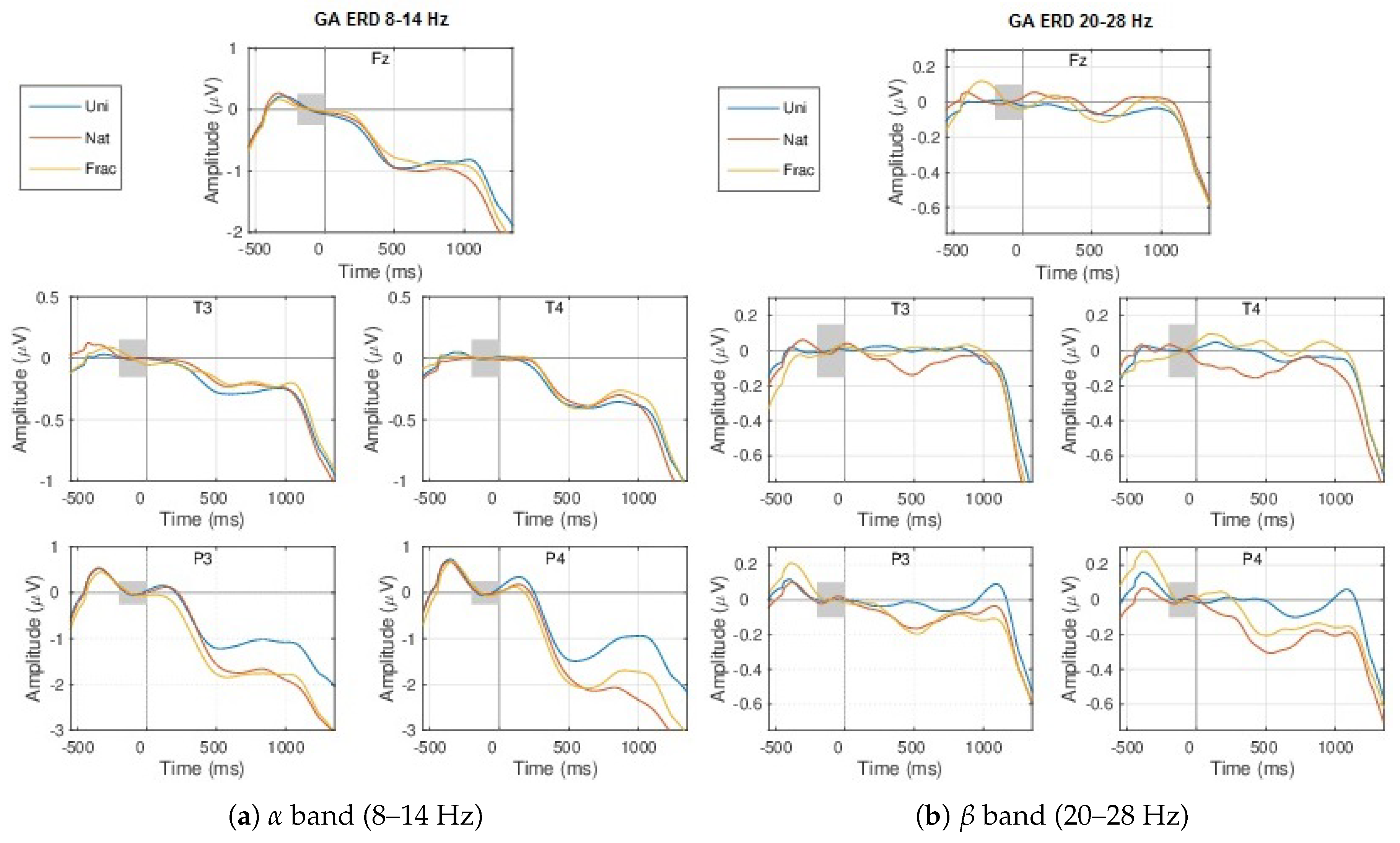

- Event-Related (De)Synchronization, ERD/ERS analysis—The neural modulations in different frequency bands, such as the (de)synchronization (ERD/ERS) effects [43], are outlined by the modulation of the amplitudes in the temporal domain, such as the signals envelopes within specific chosen bands. We used the upper envelope computed based on the Hilbert Transform [65] and then smoothed with a moving average filter based on the Root Mean Square (RMS) with a 200 ms sliding window. The envelope is baseline corrected using an interval of 200 ms from the fixation period.
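The envelope computation described in this item can be illustrated as follows. The sketch assumes the signal has already been band-pass filtered to the band of interest, builds the analytic signal with an FFT-based Hilbert transform, and takes the baseline as the 200 ms immediately before stimulus onset. The 250 Hz sampling rate, the function names and the test signal are assumptions made for the example.

```python
import numpy as np

def hilbert_envelope(x):
    """Upper envelope as the magnitude of the FFT-based analytic signal."""
    n = x.shape[-1]
    weights = np.zeros(n)
    weights[0] = 1.0
    if n % 2 == 0:
        weights[n // 2] = 1.0
        weights[1:n // 2] = 2.0
    else:
        weights[1:(n + 1) // 2] = 2.0
    return np.abs(np.fft.ifft(np.fft.fft(x, axis=-1) * weights, axis=-1))

def rms_smooth(x, n_window):
    """Moving RMS over n_window samples (zero-padded at the edges)."""
    kernel = np.ones(n_window) / n_window
    return np.sqrt(np.convolve(x ** 2, kernel, mode="same"))

# Synthetic 10 Hz rhythm whose amplitude halves at stimulus onset (an ERD).
fs = 250.0  # hypothetical sampling rate in Hz
times_ms = np.arange(-550.0, 1350.0, 1000.0 / fs)
amplitude = np.where(times_ms < 0.0, 2.0, 1.0)
signal = amplitude * np.sin(2.0 * np.pi * 10.0 * times_ms / 1000.0)

envelope = rms_smooth(hilbert_envelope(signal), int(round(0.2 * fs)))
baseline = envelope[(times_ms >= -200.0) & (times_ms < 0.0)].mean()
erd = envelope - baseline
post_mean = erd[(times_ms >= 400.0) & (times_ms <= 1000.0)].mean()
print(post_mean)  # negative: amplitude dropped relative to baseline
```

A negative baseline-corrected envelope corresponds to desynchronization (ERD), a positive one to synchronization (ERS).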

- (d)

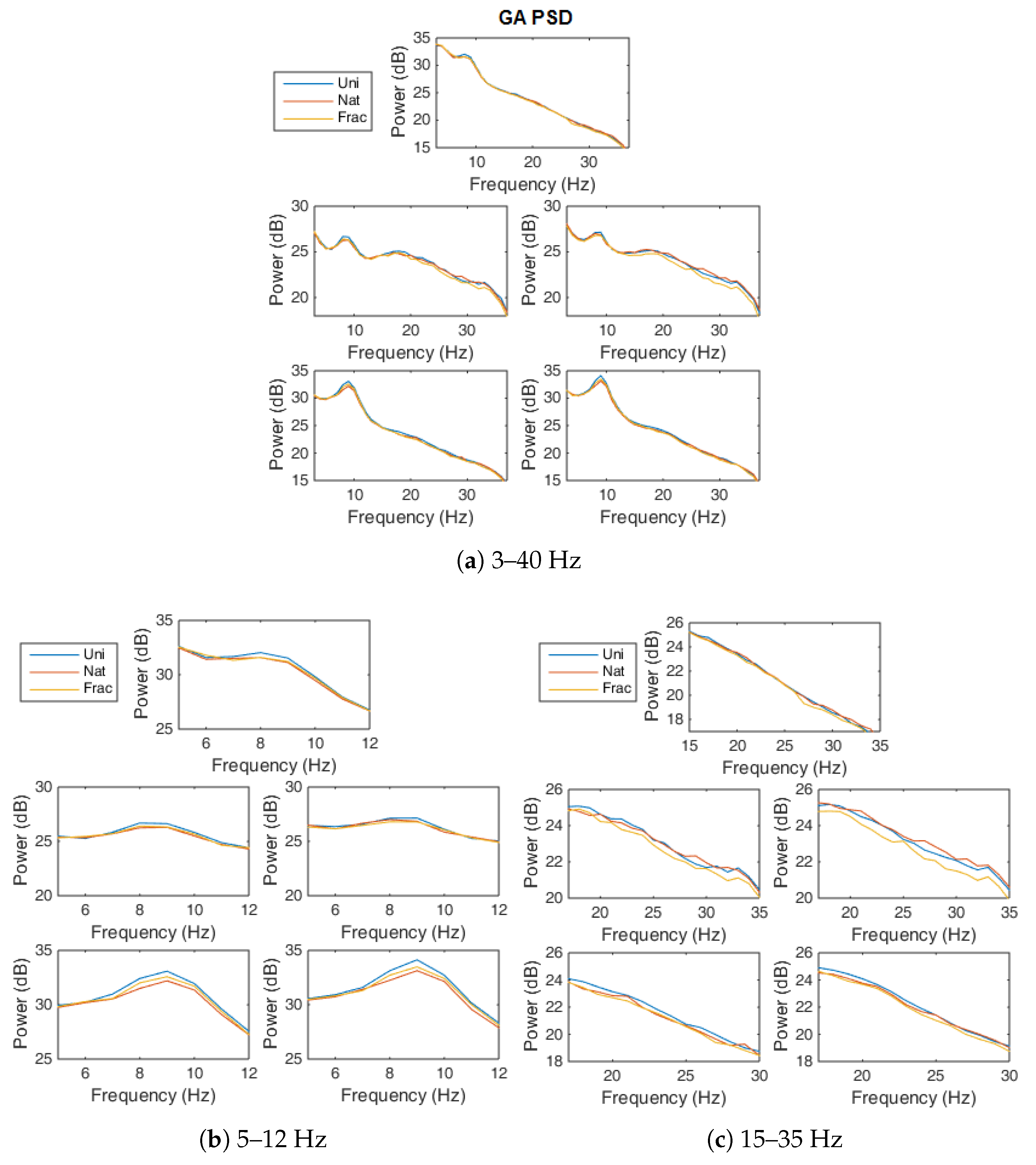

- Power spectral density (PSD) analysis—The power spectrum from 3 to 40 Hz, is computed on the trial interval (0–1350 ms), based on the Fourier transform with Kaiser window (Smith, 1997) and the logarithmic spectral power is presented as .
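As an illustration of this step, the sketch below computes a Kaiser-windowed power spectrum per trial, averages it over trials and restricts it to 3–40 Hz. The Kaiser shape parameter `beta`, the 200 Hz sampling rate and the `10*log10` (dB) scaling are assumptions of the sketch; the source does not state them.

```python
import numpy as np

def log_power_spectrum(epochs, fs, beta=8.0, band_hz=(3.0, 40.0)):
    """Trial-averaged, Kaiser-windowed power spectrum in dB.

    epochs has shape (n_epochs, n_samples). beta and the dB scaling are
    assumed values; the factor of two for a one-sided spectrum is omitted.
    """
    n = epochs.shape[-1]
    window = np.kaiser(n, beta)
    spectrum = np.fft.rfft(epochs * window, axis=-1)
    power = np.abs(spectrum) ** 2 / (fs * np.sum(window ** 2))
    freqs = np.fft.rfftfreq(n, d=1.0 / fs)
    in_band = (freqs >= band_hz[0]) & (freqs <= band_hz[1])
    mean_power = power[:, in_band].mean(axis=0)
    return freqs[in_band], 10.0 * np.log10(mean_power)

# Synthetic trials of 1350 ms: a 10 Hz rhythm plus white noise.
fs = 200.0                  # hypothetical sampling rate in Hz
t = np.arange(270) / fs     # 270 samples = 1350 ms
rng = np.random.default_rng(2)
epochs = 5.0 * np.sin(2.0 * np.pi * 10.0 * t) + rng.standard_normal((20, t.size))

freqs, log_power = log_power_spectrum(epochs, fs)
peak_hz = freqs[np.argmax(log_power)]
print(peak_hz)  # frequency bin closest to the 10 Hz rhythm
```

With a 1350 ms trial the frequency resolution is about 0.74 Hz, so neighbouring bins within a band are not independent once the window is applied.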

- (e)

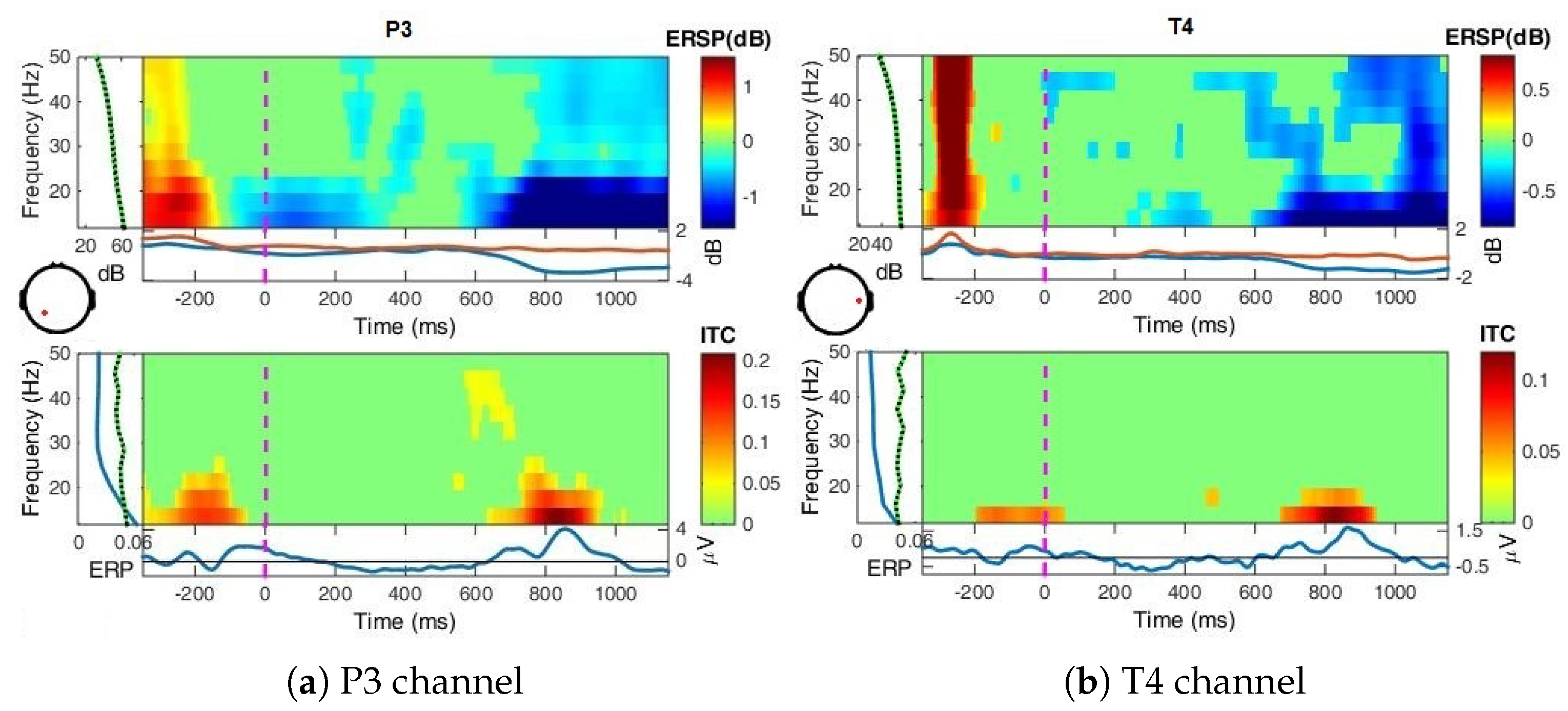

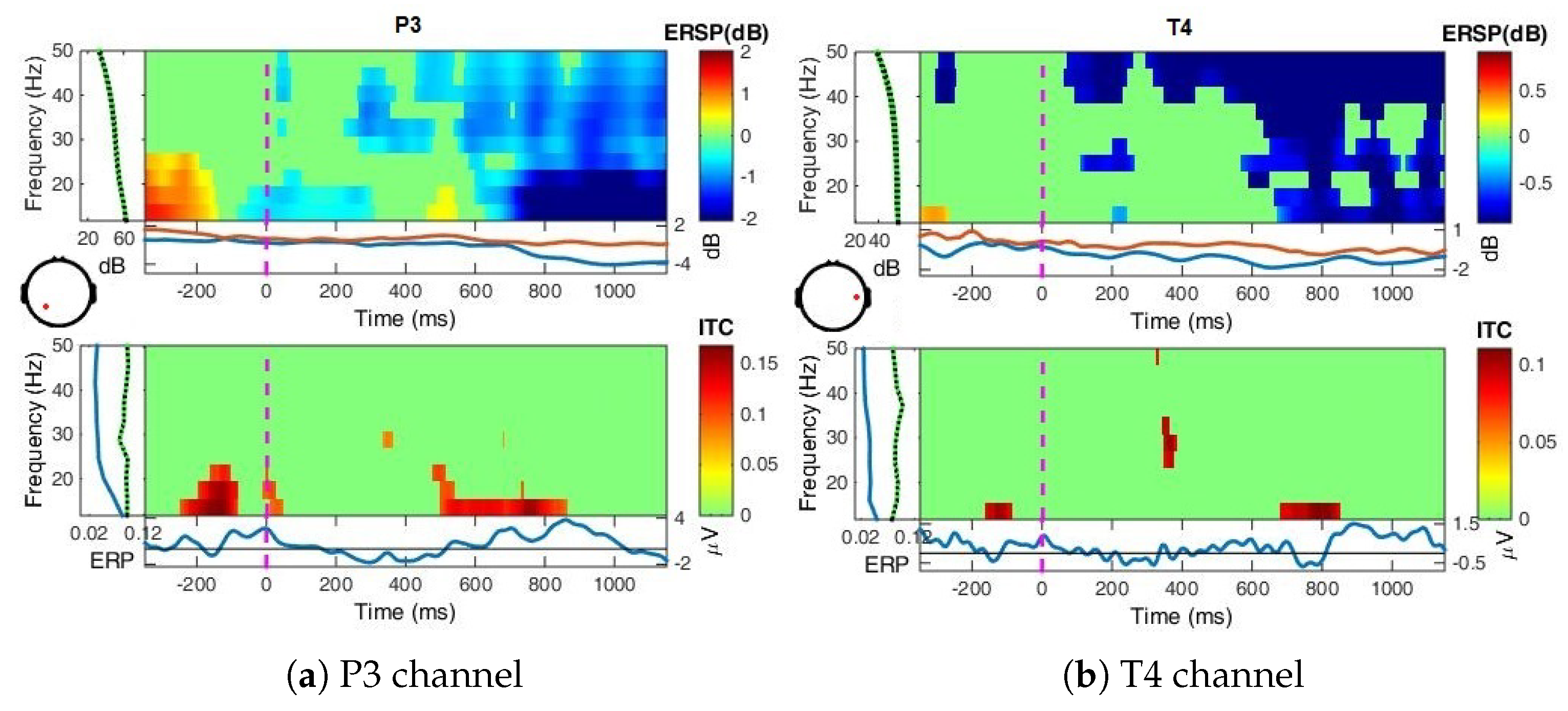

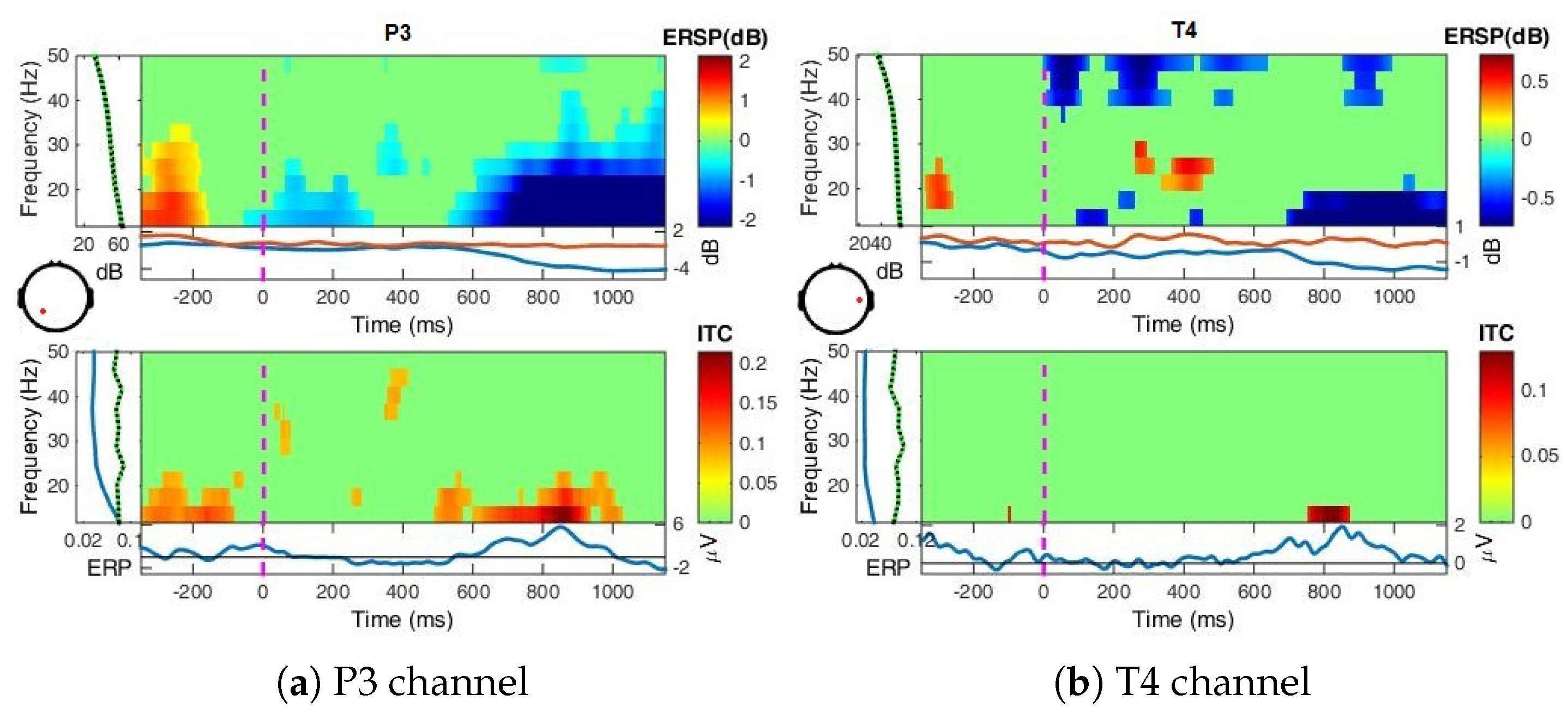

- Event-Related Spectral Perturbation (ERSP) analysis—In addition to the narrow-bands ERD curves, the Event-Related Spectral Perturbations (ERSPs) method allows the simultaneous investigation of the full spectrum [57,66,67,68]. Computed here (with EEGLab) based Short-Time Fourier analysis using Morlet wavelet transform with three cycles wide windows at each 0.5 frequency, within 0–50 Hz, on the interval −550 ms to 1350 ms, relative to the baseline period (−200, 0 ms).

- (f)

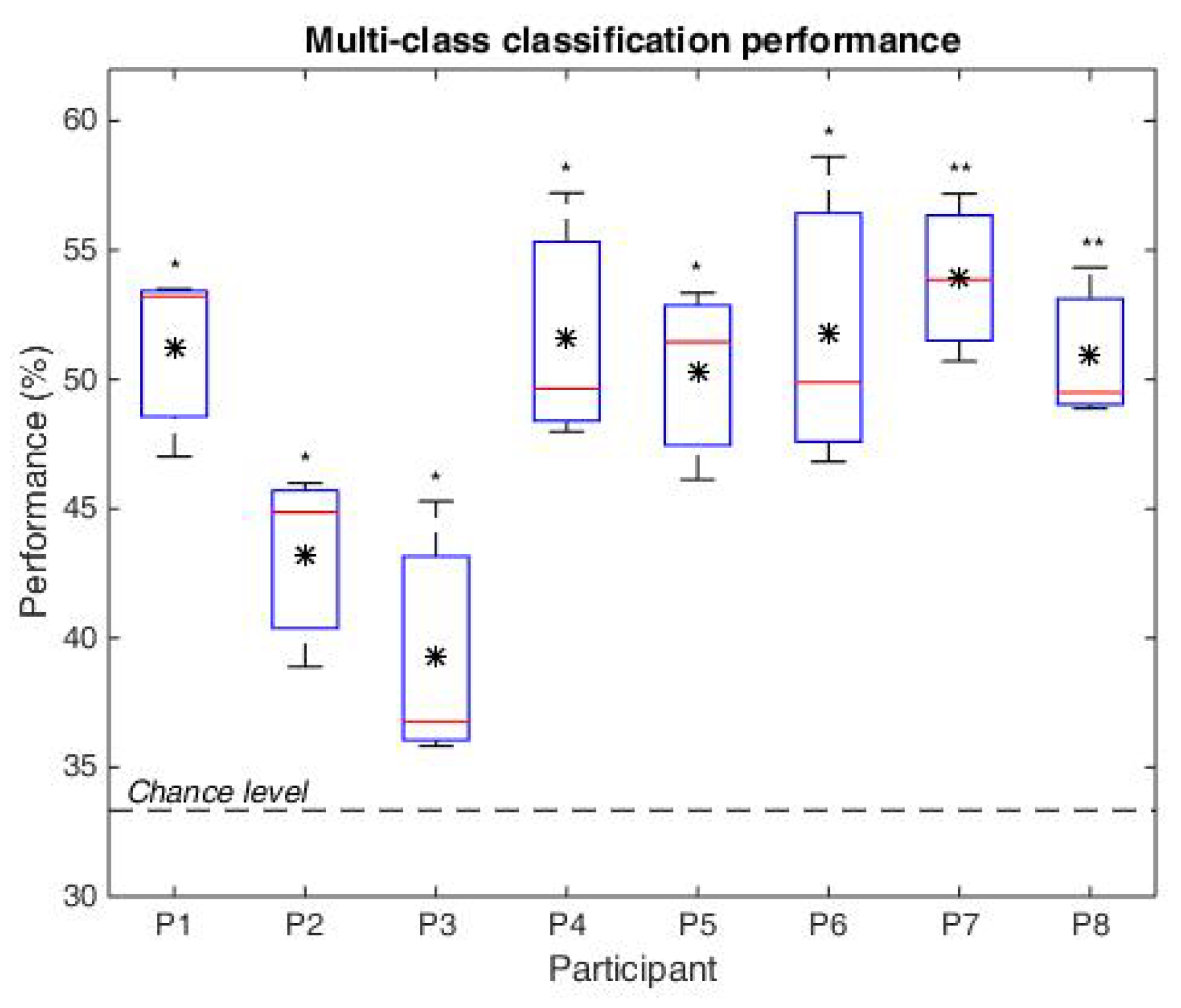

- Classification—We are interested to investigate if the brain responses can be discriminated in accordance to the image perceived, namely the synthetic fractal texture, Frac, the natural texture Nat, or the reference image, Uni. The estimation is performed using single-trial classification, by Regularized Linear Discriminant Analysis [71], in multi-class form. The three class labels are given by the stimuli images: Uni, Nat or Frac. Spatio-temporal features (channels and time, extracted as in [30,64]) are considered from intervals with highest discriminations between classes based on the signed . Namely, the signed discriminability is computed between Nat–Uni and Frac–Nat classes on the temporal signals (0–1200 ms) for all channels, and three short intervals of up to 150 ms are heuristically selected for each discrimination pair (Nat–Uni and Frac–Nat) where the discriminability is highest across all channels (see Section 4). The short temporal intervals detected are comprised within the 200–400 ms and 480–1200 ms ranges. The averaged value of the temporal signals within these short intervals considering each channel and each discrimination pair is further selected for each trial, giving a concatenated vector as spatio-temporal features of 6 × 5 dimension: 3 averaged values for Nat–Uni pair, 3 for Frac–Nat pair, for all 5 channels and all trials. Separately, also multi-modal classification is investigated considering frequency features along with the temporal features (spatio-tempo-spectral features). Similarly, the spectral features are detected as averaged values of the power spectrum (0–30 Hz) within three frequency intervals with maximum signed discriminability over the power spectrum (3–40 Hz) for Nat–Uni and Frac–Nat pairs. The frequency range intervals selected vary around 8–14 Hz and 17–39 Hz, consistent with the highest spectrum differences as observed in spectrum analysis in Section 4. 
The multi-modal features consider temporal features from the parietal area (P3, P4) and spectral features from the temporal area (T3, T4), giving a concatenated feature vector of 6 × 4 dimension: 3 temporal averaged values for Nat–Uni, 3 temporal for Frac–Nat, 3 spectral averaged values for Nat–Uni, 3 spectral for Frac–Nat, considering 4 channels (T3, T4, P3, P4). For validation, 3-folds cross-validation is used, where the data set is split in 3 parts, one used for training and 2 for testing, and the classification is repeated until each part has been used as training [72]. The classifications are evaluated with normalized loss (Equation (4)), which helps with weighting for unbalanced classes. The normalized loss is a ratio out of 1, therefore the performance (the accuracy, Acc) is given by: Acc = 1–loss. The final classification performance is computed as the average accuracy over all folds.where n is the number of classes such as , is the number of wrongly estimated samples in class i, and the number of samples in class i.
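The classification and evaluation scheme can be sketched with a small NumPy implementation. This is an illustration under stated assumptions, not the toolbox code used in the study: the covariance is regularized by shrinkage toward a scaled identity with a fixed, hypothetical `gamma`, the 24-dimensional features are synthetic stand-ins for the 6 × 4 multi-modal vector, and the normalized loss is taken as the mean per-class error rate. Each fold trains on one part and tests on the other two, as stated in the text.

```python
import numpy as np

def fit_rlda(X, y, gamma=0.1):
    """Multi-class LDA with a shrinkage-regularized pooled covariance."""
    classes, idx = np.unique(y, return_inverse=True)
    means = np.array([X[idx == k].mean(axis=0) for k in range(classes.size)])
    centered = X - means[idx]
    cov = centered.T @ centered / (len(X) - classes.size)
    dim = X.shape[1]
    cov_reg = (1.0 - gamma) * cov + gamma * (np.trace(cov) / dim) * np.eye(dim)
    weights = np.linalg.solve(cov_reg, means.T)  # shape (dim, n_classes)
    priors = np.bincount(idx) / len(X)
    bias = -0.5 * np.sum(means.T * weights, axis=0) + np.log(priors)
    return classes, weights, bias

def predict_rlda(model, X):
    classes, weights, bias = model
    return classes[np.argmax(X @ weights + bias, axis=1)]

def normalized_loss(y_true, y_pred):
    """Mean over classes of (wrong samples in class i) / (samples in class i)."""
    classes = np.unique(y_true)
    return float(np.mean([np.mean(y_pred[y_true == c] != c) for c in classes]))

# Synthetic features (hypothetical): 60 trials per class, 24 dimensions.
rng = np.random.default_rng(3)
codes = np.repeat(np.arange(3), 60)
labels = np.array(["Uni", "Nat", "Frac"])[codes]
X = rng.normal(0.0, 1.0, (3, 24))[codes] + rng.standard_normal((180, 24))

folds = np.array_split(rng.permutation(len(X)), 3)
accuracies = []
for k in range(3):
    train = folds[k]  # one part for training, two for testing
    test = np.concatenate([folds[j] for j in range(3) if j != k])
    model = fit_rlda(X[train], labels[train])
    loss = normalized_loss(labels[test], predict_rlda(model, X[test]))
    accuracies.append(1.0 - loss)
mean_acc = float(np.mean(accuracies))
print(mean_acc)
```

Because the loss averages per-class error rates, a classifier that always predicts one class scores an accuracy of 1/3 on three classes regardless of how unbalanced they are.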

4. Experimental Results

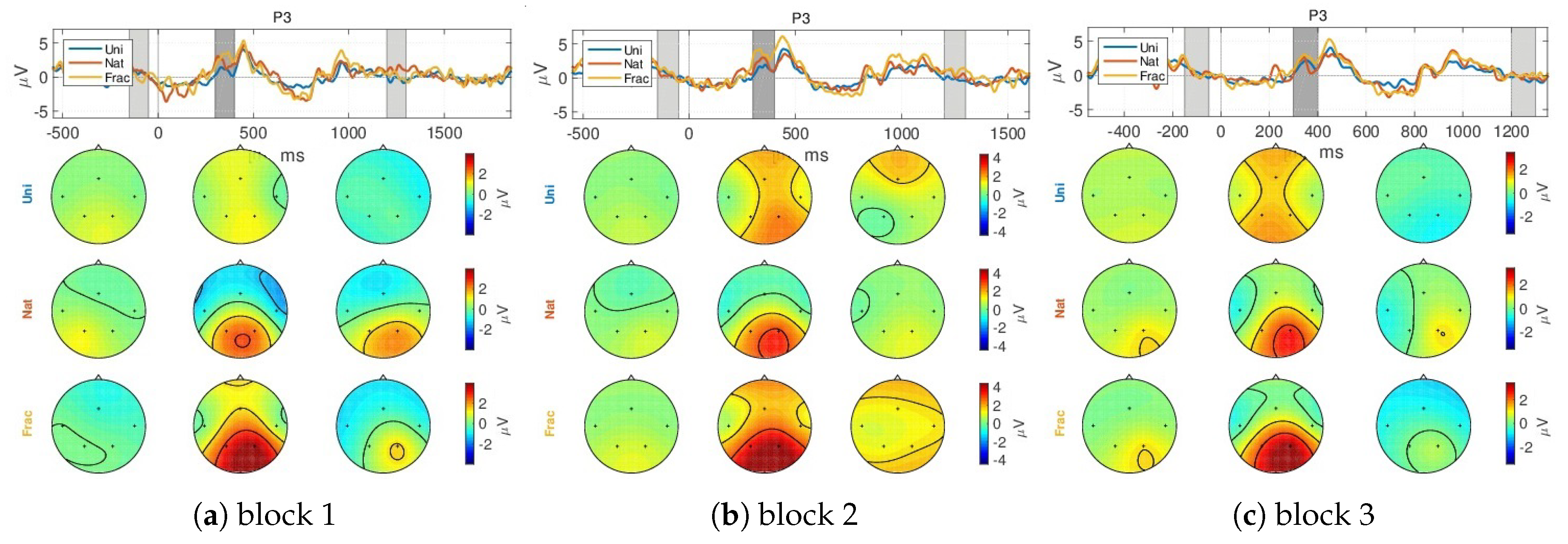

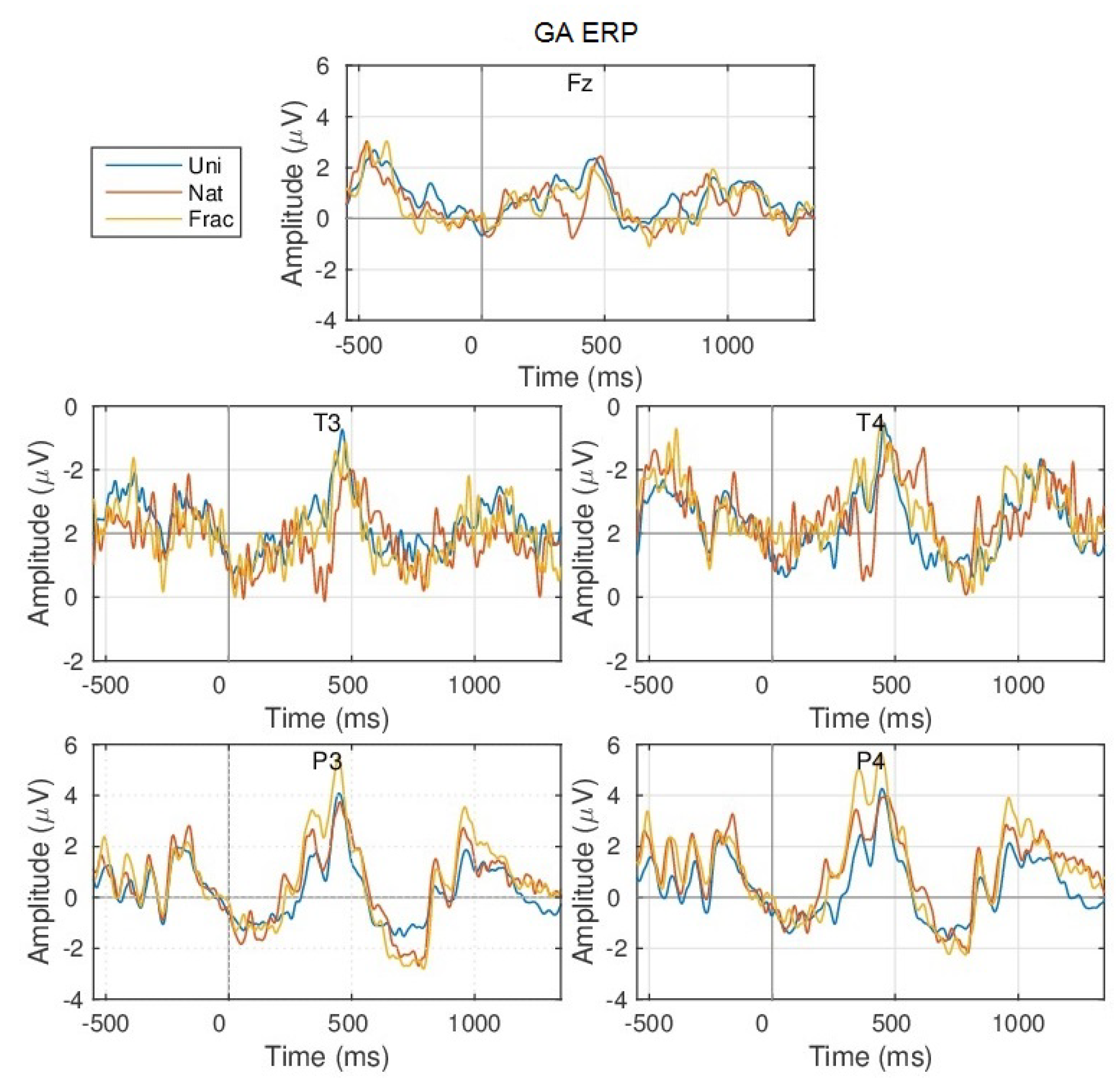

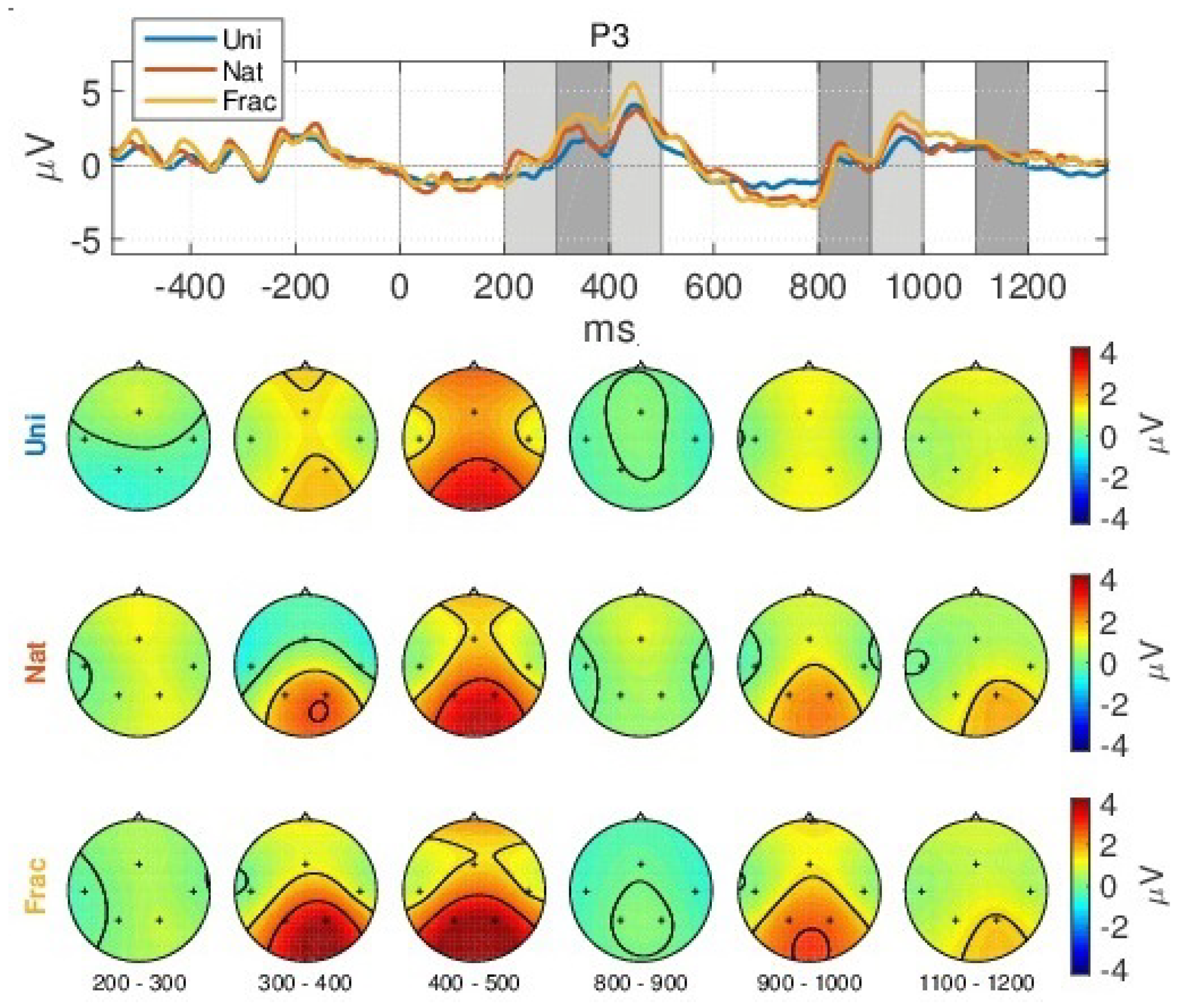

4.1. Event-Related Potentials (ERPs)

- (i) a slightly decreased N200 peak for Uni images (at 250 ms), relating to visual perception [73];

- (ii) a gradually increasing amplitude already from the P200 (350 ms), in response to the increase in perceived image complexity from Uni to Nat and Frac images, observed also spatially (see scalp plots in Figure 5) as increased activity in the parietal area (P3, P4 channels), highest for fractal image perception (3 µV);

- (iii) an even higher amplitude for the P300 (at 450 ms), approaching 4 µV in the parietal area, as compared to the P200;

- (iv) a second group of peaks around 800–1100 ms, with amplitude and spatial distribution similar to the P200 in the parietal area (at 850 ms, appearing 350 ms after the grey image presentation), followed by another peak (at 950 ms, 450 ms after the grey image presentation) with gradually increasing amplitude for Uni, Nat and Frac images, up to 2–3 µV, focused in the right parietal area. This relates to an extended reasoning process, since participants stated that they were unintentionally still thinking about the image complexity after the stimulus interval, even though they had been asked only to relax during the relaxation period.

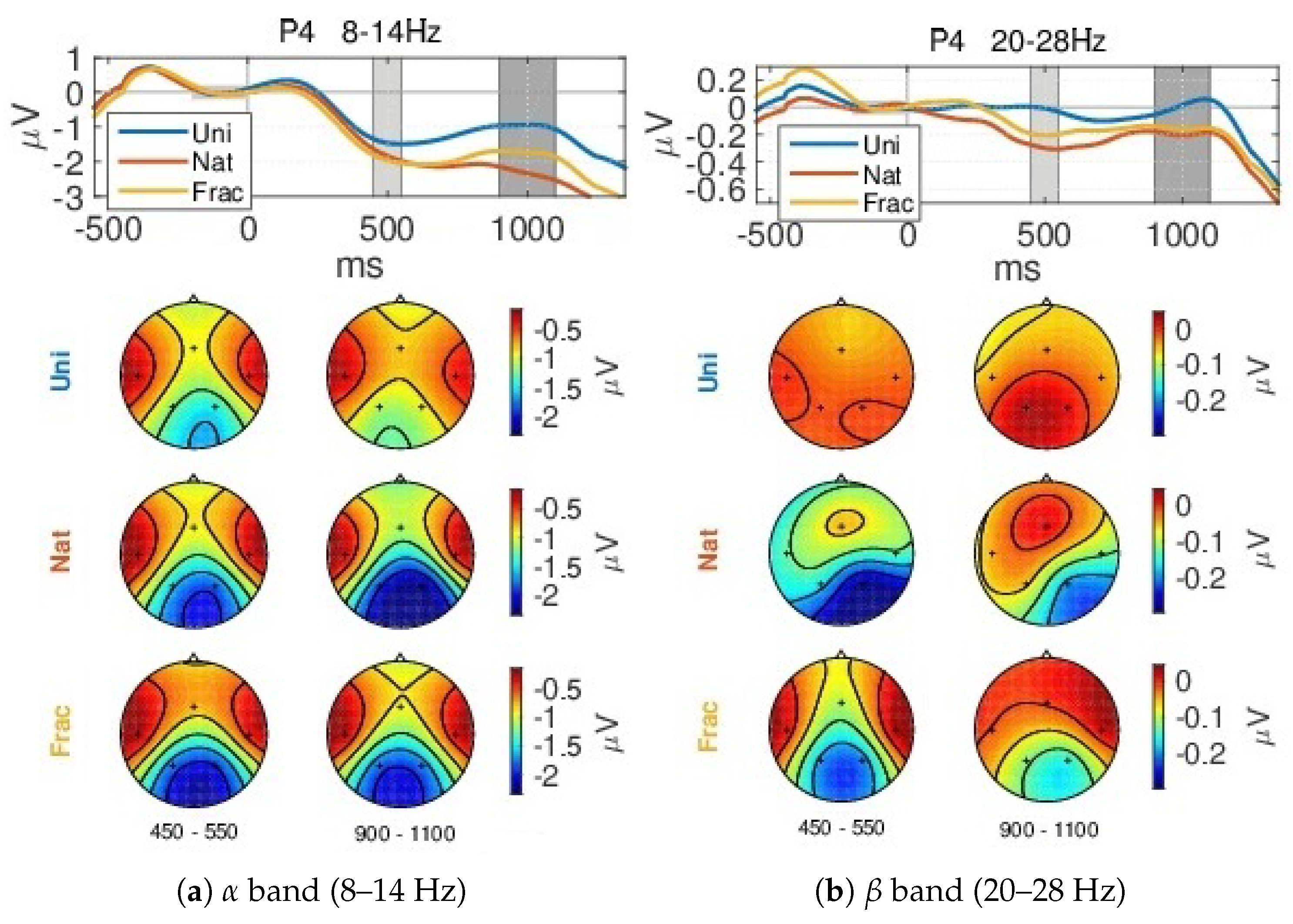

4.2. Event-Related (De)Synchronizations, ERDs/ERSs

4.3. Power Spectrum

4.4. Classification

5. Discussion, Conclusions and Future Work

Author Contributions

Funding

Institutional Review Board Statement

Informed Consent Statement

Data Availability Statement

Acknowledgments

Conflicts of Interest

Appendix A

Appendix A.1. Additional Graphs

Appendix A.1.1. GA ERPs

Appendix A.1.2. Event-Related (De)Synchronization, ERD/ERS

Appendix A.1.3. Event-Related Spectral Perturbations, ERSP

References

1. Zoefel, B.; VanRullen, R. Oscillatory mechanisms of stimulus processing and selection in the visual and auditory systems: State-of-the-art, speculations and suggestions. Front. Neurosci. 2017, 11, 296.

2. Yadav, A.K.; Roy, R.; Kumar, A.P. Survey on content-based image retrieval and texture analysis with applications. Int. J. Signal Process. Image Process. Pattern Recognit. 2014, 7, 41–50.

3. Ivanovici, M.; Richard, N. Entropy versus fractal complexity for computer-generated color fractal images. In Proceedings of the 4th CIE Expert Symposium on Colour and Visual Appearance, Prague, Czech Republic, 6–7 September 2016; pp. 432–437.

4. Kisan, S.; Mishra, S.; Mishra, D. A novel method to estimate fractal dimension of color images. In Proceedings of the 2016 11th International Conference on Industrial and Information Systems (ICIIS), Roorkee, India, 3–4 December 2016; pp. 692–697.

5. Zunino, L.; Ribeiro, H.V. Discriminating image textures with the multiscale two-dimensional complexity-entropy causality plane. Chaos Solitons Fractals 2016, 91, 679–688.

6. Forsythe, A.; Nadal, M.; Sheehy, N.; Cela-Conde, C.J.; Sawey, M. Predicting beauty: Fractal dimension and visual complexity in art. Br. J. Psychol. 2011, 102, 49–70.

7. Ivanovici, M.; Richard, N. Fractal dimension of color fractal images. IEEE Trans. Image Process. 2011, 20, 227–235.

8. Jones-Smith, K.; Mathur, H. Revisiting Pollock’s drip paintings. Nature 2006, 444, E9–E10.

9. Ivanovici, M.; Coliban, R.M.; Hatfaludi, C.; Nicolae, I.E. Color Image Complexity versus Over-Segmentation: A Preliminary Study on the Correlation between Complexity Measures and Number of Segments. J. Imaging 2020, 6, 16.

10. Morabito, F.C.; Cacciola, M.; Occhiuto, G. Creative brain and abstract art: A quantitative study on Kandinskij paintings. In Proceedings of the 2011 International Joint Conference on Neural Networks, San Jose, CA, USA, 31 July–5 August 2011; pp. 2387–2394.

11. Morabito, F.C.; Morabito, G.; Cacciola, M.; Occhiuto, G. The Brain and Creativity. In Springer Handbook of Bio-/Neuroinformatics; Springer: Berlin/Heidelberg, Germany, 2014; pp. 1099–1109.

12. Donderi, D.C. An information theory analysis of visual complexity and dissimilarity. Perception 2006, 35, 823–835.

13. Donderi, D.C. Visual complexity: A review. Psychol. Bull. 2006, 132, 73.

14. Yu, H.; Winkler, S. Image complexity and spatial information. In Proceedings of the 2013 Fifth International Workshop on Quality of Multimedia Experience (QoMEX), Klagenfurt am Wörthersee, Austria, 3–5 July 2013; pp. 12–17.

15. Ciocca, G.; Corchs, S.; Gasparini, F. Complexity perception of texture images. In International Conference on Image Analysis and Processing; Springer: Berlin/Heidelberg, Germany, 2015; pp. 119–126.

16. Corchs, S.E.; Ciocca, G.; Bricolo, E.; Gasparini, F. Predicting complexity perception of real world images. PLoS ONE 2016, 11, e0157986.

17. Gartus, A.; Leder, H. Predicting perceived visual complexity of abstract patterns using computational measures: The influence of mirror symmetry on complexity perception. PLoS ONE 2017, 12, e0185276.

18. Matveev, N.; Sherstobitova, A.; Gerasimova, O. Fractal analysis of the relationship between the visual complexity of laser show pictures and a human psychophysiological state. SHS Web Conf. 2018, 43, 01009.

19. Guo, X.; Asano, C.M.; Asano, A.; Kurita, T.; Li, L. Analysis of texture characteristics associated with visual complexity perception. Opt. Rev. 2012, 19, 306–314.

20. Madan, C.R.; Bayer, J.; Gamer, M.; Lonsdorf, T.B.; Sommer, T. Visual complexity and affect: Ratings reflect more than meets the eye. Front. Psychol. 2018, 8, 2368.

21. Babiloni, C.; Vecchio, F.; Bultrini, A.; Luca Romani, G.; Rossini, P.M. Pre-and poststimulus alpha rhythms are related to conscious visual perception: A high-resolution EEG study. Cereb. Cortex 2006, 16, 1690–1700.

22. Demiralp, T.; Bayraktaroglu, Z.; Lenz, D.; Junge, S.; Busch, N.A.; Maess, B.; Ergen, M.; Herrmann, C.S. Gamma amplitudes are coupled to theta phase in human EEG during visual perception. Int. J. Psychophysiol. 2007, 64, 24–30.

23. Busch, N.A.; Dubois, J.; VanRullen, R. The phase of ongoing EEG oscillations predicts visual perception. J. Neurosci. 2009, 29, 7869–7876.

24. Hagerhall, C.M.; Laike, T.; Taylor, R.P.; Küller, M.; Küller, R.; Martin, T.P. Investigations of human EEG response to viewing fractal patterns. Perception 2008, 37, 1488–1494.

25. Acqualagna, L.; Bosse, S.; Porbadnigk, A.K.; Curio, G.; Müller, K.R.; Wiegand, T.; Blankertz, B. EEG-based classification of video quality perception using steady state visual evoked potentials (SSVEPs). J. Neural Eng. 2015, 12, 026012.

26. Grummett, T.; Leibbrandt, R.; Lewis, T.; DeLosAngeles, D.; Powers, D.; Willoughby, J.; Pope, K.; Fitzgibbon, S. Measurement of neural signals from inexpensive, wireless and dry EEG systems. Physiol. Meas. 2015, 36, 1469.

27. Mustafa, M.; Guthe, S.; Magnor, M. Single-trial EEG classification of artifacts in videos. ACM Trans. Appl. Percept. (TAP) 2012, 9, 1–15.

28. Portella, C.; Machado, S.; Arias-Carrión, O.; Sack, A.T.; Silva, J.G.; Orsini, M.; Leite, M.A.A.; Silva, A.C.; Nardi, A.E.; Cagy, M.; et al. Relationship between early and late stages of information processing: An event-related potential study. Neurol. Int. 2012, 4, e16.

29. Polich, J. Updating P300: An integrative theory of P3a and P3b. Clin. Neurophysiol. 2007, 118, 2128–2148.

30. Nicolae, I.E.; Acqualagna, L.; Blankertz, B. Assessing the depth of cognitive processing as the basis for potential user-state adaptation. Front. Neurosci. 2017, 11, 548.

31. Ivanovici, M. Fractal Dimension of Color Fractal Images With Correlated Color Components. IEEE Trans. Image Process. 2020, 29, 8069–8082.

32. Tononi, G.; Edelman, G.M.; Sporns, O. Complexity and coherency: Integrating information in the brain. Trends Cognit. Sci. 1998, 2, 474–484.

33. Wang, Z. Applications of objective image quality assessment methods [applications corner]. IEEE Signal Process. Mag. 2011, 28, 137–142.

34. Kim, H.G.; Lim, H.T.; Ro, Y.M. Deep virtual reality image quality assessment with human perception guider for omnidirectional image. IEEE Trans. Circuits Syst. Video Technol. 2019, 30, 917–928.

35. Ratti, E.; Waninger, S.; Berka, C.; Ruffini, G.; Verma, A. Comparison of medical and consumer wireless EEG systems for use in clinical trials. Front. Hum. Neurosci. 2017, 11, 398.

36. De Vos, M.; Kroesen, M.; Emkes, R.; Debener, S. P300 speller BCI with a mobile EEG system: Comparison to a traditional amplifier. J. Neural Eng. 2014, 11, 036008.

37. Huang, X.; Yin, E.; Wang, Y.; Saab, R.; Gao, X. A mobile EEG system for practical applications. In Proceedings of the 2017 IEEE Global Conference on Signal and Information Processing (GlobalSIP), Montreal, QC, Canada, 14–16 November 2017; pp. 995–999.

38. Luck, S.J. An Introduction to the Event-Related Potential Technique; MIT Press: Cambridge, MA, USA, 2014.

39. Kim, K.H.; Kim, J.H.; Yoon, J.; Jung, K.Y. Influence of task difficulty on the features of event-related potential during visual oddball task. Neurosci. Lett. 2008, 445, 179–183.

40. Basile, L.; Sato, J.R.; Alvarenga, M.Y.; Henrique, N., Jr.; Pasquini, H.A.; Alfenas, W.; Machado, S.; Velasques, B.; Ribeiro, P.; Piedade, R.; et al. Lack of systematic topographic difference between attention and reasoning beta correlates. PLoS ONE 2013, 8, e59595.

41. Nakata, H.; Sakamoto, K.; Otsuka, A.; Yumoto, M.; Kakigi, R. Cortical rhythm of No-go processing in humans: An MEG study. Clin. Neurophysiol. 2013, 124, 273–282.

42. Thut, G.; Nietzel, A.; Brandt, S.; Pascual-Leone, A. α-Band electroencephalographic activity over occipital cortex indexes visuospatial attention bias and predicts visual target detection. J. Neurosci. 2006, 26, 9494–9502.

43. Yordanova, J.; Kolev, V.; Polich, J. P300 and alpha event-related desynchronization (ERD). Soc. Psychophysiol. Res. 2001, 38, 143–152.

44. Buzsáki, G.; Draguhn, A. Neuronal oscillations in cortical networks. Science 2004, 304, 1926–1929.

45. Sheth, B.; Sandkühler, S.; Bhattacharya, J. Posterior beta and anterior gamma oscillations predict cognitive insight. J. Cognit. Neurosci. 2009, 21, 1269–1279.

46. Nicolae, I.E.; Ivanovici, M. Wirelessly scanning Human Brain Perception of Image Complexity—image analysis measures versus human Reasoning. 2020. in preparation.

47. Panigrahy, C.; Seal, A.; Mahato, N. Fractal dimension of synthesized and natural color images in Lab space. Pattern Anal. Appl. 2019, 23, 819–836.

48. Shannon, C. Mathematical theory of communication. Bell Syst. Tech. J. 1948, 27, 379–423.

49. Mandelbrot, B.B. The Fractal Geometry of Nature; WH Freeman: New York, NY, USA, 1983; Volume 173.

50. Barnsley, M.F.; Devaney, R.L.; Mandelbrot, B.B.; Peitgen, H.O.; Saupe, D.; Voss, R.F.; Fisher, Y.; McGuire, M. The Science of Fractal Images; Springer: Berlin, Germany, 1988.

51. Fisher, Y.; McGuire, M.; Voss, R.F.; Barnsley, M.F.; Devaney, R.L.; Mandelbrot, B.B. The Science of Fractal Images; Springer Science & Business Media: Berlin, Germany, 2012.

52. Falconer, K. Fractal Geometry: Mathematical Foundations and Applications, 1st ed.; John Wiley & Sons: Hoboken, NJ, USA, 1990; p. 288.

53. Falconer, K. Fractal Geometry: Mathematical Foundations and Applications, 3rd ed.; John Wiley & Sons: Hoboken, NJ, USA, 2004; p. 398.

54. Voss, R.F. Random fractals: Characterization and measurement. In Scaling Phenomena in Disordered Systems; Springer: Berlin, Germany, 1991; pp. 1–11.

55. Blankertz, B.; Acqualagna, L.; Dähne, S.; Haufe, S.; Schultze-Kraft, M.; Sturm, I.; Ušćumlic, M.; Wenzel, M.A.; Curio, G.; Müller, K.R. The Berlin Brain-Computer Interface: Progress Beyond Communication and Control. Front. Neurosci. 2016, 10, 530.

56. Delorme, A.; Makeig, S. EEGLAB: An open source toolbox for analysis of single-trial EEG dynamics including independent component analysis. J. Neurosci. Methods 2004, 134, 9–21.

57. Makeig, S.; Debener, S.; Onton, J.; Delorme, A. Mining event-related brain dynamics. Trends Cognit. Sci. 2004, 8, 204–210.

58. Alotaiby, T.; El-Samie, F.; Alshebeili, S.; Ahmad, I. A review of channel selection algorithms for EEG signal processing. EURASIP J. Adv. Signal Process. 2015, 2015, 66.

59. El-Sayed, A.; El-Sherbeny, A.; Shoka, A.; Dessouky, M. Rapid Seizure Classification Using Feature Extraction and Channel Selection. Am. J. Biomed. Sc. Res. 2020, 7, 237–244.

60. Winkler, I.; Haufe, S.; Tangermann, M. Automatic classification of artifactual ICA-components for artifact removal in EEG signals. Behav. Brain Funct. 2011, 7, 30.

61. Kronegg, J.; Chanel, G.; Voloshynovskiy, S.; Pun, T. EEG-based synchronized brain-computer interfaces: A model for optimizing the number of mental tasks. IEEE Trans. Neur. Syst. Rehab. Eng. 2007, 15, 50–58.

62. Glass, G.; Hopkins, K. Statistical Methods in Education and Psychology, 3rd ed.; Allyn & Bacon: Boston, MA, USA, 1995.

63. Nicolae, I.E.; Acqualagna, L.; Blankertz, B. Neural indicators of the depth of cognitive processing for user-adaptive neurotechnological applications. In Proceedings of the 2015 37th Annual International Conference of the IEEE Engineering in Medicine and Biology Society (EMBC), Milan, Italy, 25–29 August 2015; pp. 1484–1487.

64. Blankertz, B.; Lemm, S.; Treder, M.; Haufe, S.; Müller, K. Single-trial analysis and classification of ERP components—A tutorial. NeuroImage 2011, 56, 814–825.

65. Bracewell, R. The Fourier Transform and Its Applications, 3rd ed.; McGraw-Hill: New York, NY, USA, 1999.

66. Rossi, A.; Parada, F.; Kolchinsky, A.; Puce, A. Neural correlates of apparent motion perception of impoverished facial stimuli: A comparison of ERP and ERSP activity. NeuroImage 2014, 98, 442–459.

67. Rozado, D.; Dunser, A. Combining EEG with Pupillometry to Improve Cognitive Workload Detection. Computer 2015, 48, 18–25.

68. Nicolae, I.E.; Ungureanu, M.; Acqualagna, L.; Strungaru, R.; Blankertz, B. Spectral Perturbations of the Depth of Cognitive Processing for Brain-Computer Interface Systems. In Proceedings of the 2015 E-Health and Bioengineering Conference (EHB), Iasi, Romania, 19–21 November 2015; pp. 1–4.

69. Gysels, E.; Celka, P. Phase synchronization for the recognition of mental tasks in a brain-computer interface. IEEE Trans. Neural Syst. Rehab. Eng. 2004, 12, 406–415.

70. Tallon-Baudry, C.; Bertrand, O.; Delpuech, C.; Pernier, J. Stimulus specificity of phase-locked and non-phase-locked 40 Hz visual responses in human. J. Neurosci. 1996, 16, 4240–4249.

71. Friedman, J. Regularized discriminant analysis. J. Am. Stat. Assoc. 1989, 84, 165–175.

72. Lemm, S.; Blankertz, B.; Dickhaus, T.; Müller, K. Introduction to machine learning for brain imaging. NeuroImage 2011, 56, 387–399.

73. Rutiku, R.; Aru, J.; Bachmann, T. General markers of conscious visual perception and their timing. Front. Hum. Neurosci. 2016, 10, 23.

74. Myers, N.; Walther, L.; Wallis, G.; Stokes, M.; Nobre, A. Temporal dynamics of attention during encoding versus maintenance of working memory: Complementary views from event-related potentials and alpha-band oscillations. J. Cognit. Neurosci. 2015, 27, 492–508.

75. Babb, S.; Johnson, R. Object, spatial, and temporal memory: A behavioral analysis of visual scenes using a what, where, and when paradigm. Curr. Psychol. Lett. Behav. Brain Cognit. 2011, 26, 12.

76. Mühl, C.; Jeunet, C.; Lotte, F. EEG-based workload estimation across affective contexts. Front. Neurosci. 2014, 8, 114.

77. Huo, J. An image complexity measurement algorithm with visual memory capacity and an EEG study. In Proceedings of the 2016 SAI Computing Conference (SAI), London, UK, 13–15 July 2016; pp. 264–268.

78. Krigolson, O.; Williams, C.; Norton, A.; Hassall, C.; Colino, F. Choosing MUSE: Validation of a low-cost, portable EEG system for ERP research. Front. Neurosci. 2017, 11, 109.

79. Lopez-Gordo, M.A.; Sanchez-Morillo, D.; Valle, F.P. Dry EEG electrodes. Sensors 2014, 14, 12847–12870.

80. Kaspar, K.; König, P. Overt attention and context factors: The impact of repeated presentations, image type, and individual motivation. PLoS ONE 2011, 6, e21719.

81. van de Meerendonk, N.; Chwilla, D.J.; Kolk, H.H. States of indecision in the brain: ERP reflections of syntactic agreement violations versus visual degradation. Neuropsychologia 2013, 51, 1383–1396.

82. Petersen, G.K.; Saunders, B.; Inzlicht, M. The conflict negativity: Neural correlate of value conflict and indecision during financial decision making. bioRxiv 2017, 174136.

83. David, S.V.; Vinje, W.E.; Gallant, J.L. Natural stimulus statistics alter the receptive field structure of V1 neurons. J. Neurosci. 2004, 24, 6991–7006.

84. Schultz, W.; Dickinson, A. Neuronal coding of prediction errors. Annu. Rev. Neurosci. 2000, 23, 473–500.

85. Forsythe, A.; Mulhern, G.; Sawey, M. Confounds in pictorial sets: The role of complexity and familiarity in basic-level picture processing. Behav. Res. Methods 2008, 40, 116–129.

86. Nicolae, I.E. PerPlex EEG.zip. figshare. Dataset 2020.

87. Buzsáki, G. Rhythms of the Brain; Oxford University Press: Oxford, UK, 2006.

| Target \ Output | Uni | Nat | Frac |
|---|---|---|---|
| Uni | 55.3 | 21.7 | 23 |
| Nat | 21.5 | 53.5 | 25.1 |
| Frac | 23.5 | 26.9 | 49.6 |
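As a worked check of the relation Acc = 1 − loss against the confusion matrix above, assume that its rows are the target classes and that its entries are percentages of each target class (each row sums to roughly 100). Under the normalized loss, the accuracy is then the mean of the diagonal entries:

$$\mathrm{Acc} \approx \frac{55.3 + 53.5 + 49.6}{3} = \frac{158.4}{3} = 52.8\%$$

For comparison, the chance level under this measure is about 33.3% for three classes. The value is derived here from the table under the stated assumptions rather than quoted from the results.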

Publisher’s Note: MDPI stays neutral with regard to jurisdictional claims in published maps and institutional affiliations.

© 2020 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).

Nicolae, I.E.; Ivanovici, M. Preparatory Experiments Regarding Human Brain Perception and Reasoning of Image Complexity for Synthetic Color Fractal and Natural Texture Images via EEG. Appl. Sci. 2021, 11, 164. https://doi.org/10.3390/app11010164
