Efficient Melody Extraction Based on Extreme Learning Machine

Abstract

1. Introduction

2. Preliminaries

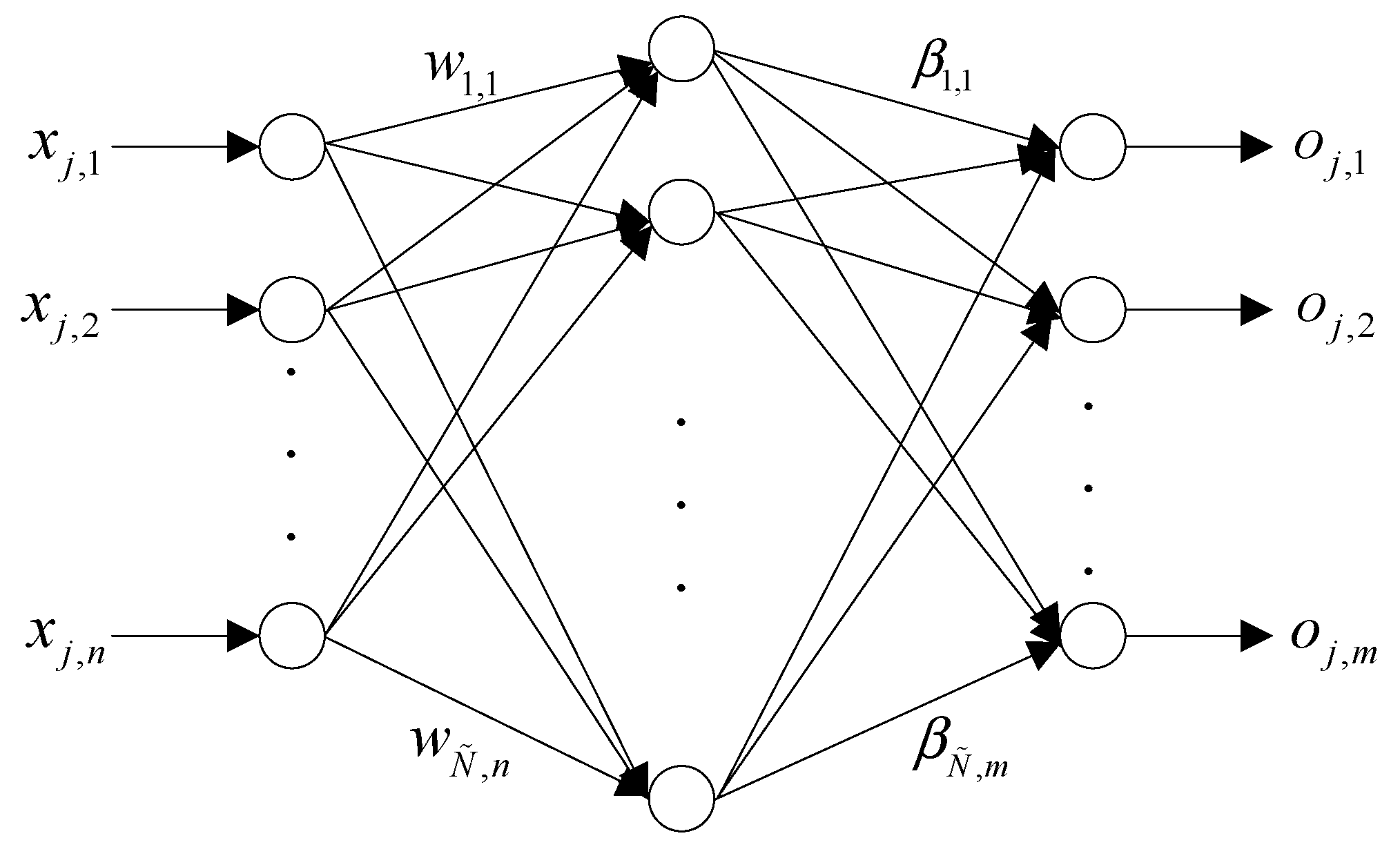

2.1. Extreme Learning Machine

| Algorithm 1. Procedure of ELM training |
|---|
| Input: Training set, activation function, and hidden node number. |
| Output: Output weights. |
| Steps |
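A minimal sketch of ELM training as it is commonly described: the hidden-layer weights and biases are drawn at random and kept fixed, the hidden-layer output matrix is computed, and the output weights are solved with a Moore-Penrose pseudo-inverse. The sigmoid activation, the uniform initialisation range, the toy data, and the names `elm_train` and `elm_predict` are assumptions made for illustration, not the authors' implementation.

```python
import numpy as np


def elm_train(X, T, n_hidden, seed=None):
    """Train a single-hidden-layer ELM and return (W, b, beta)."""
    rng = np.random.default_rng(seed)
    n_features = X.shape[1]
    # Step 1: draw input weights and biases at random; they are never updated.
    W = rng.uniform(-1.0, 1.0, size=(n_features, n_hidden))
    b = rng.uniform(-1.0, 1.0, size=n_hidden)
    # Step 2: hidden-layer output matrix H (sigmoid activation assumed).
    H = 1.0 / (1.0 + np.exp(-(X @ W + b)))
    # Step 3: output weights from the Moore-Penrose pseudo-inverse of H.
    beta = np.linalg.pinv(H) @ T
    return W, b, beta


def elm_predict(X, W, b, beta):
    """Apply a trained ELM to new inputs."""
    H = 1.0 / (1.0 + np.exp(-(X @ W + b)))
    return H @ beta


# Toy usage: four 2-D points with one-hot XOR labels (hypothetical data).
X = np.array([[0.0, 0.0], [0.0, 1.0], [1.0, 0.0], [1.0, 1.0]])
T = np.array([[1.0, 0.0], [0.0, 1.0], [0.0, 1.0], [1.0, 0.0]])
W, b, beta = elm_train(X, T, n_hidden=20, seed=0)
pred = elm_predict(X, W, b, beta).argmax(axis=1)
```

Because only `beta` is learned, training reduces to one linear least-squares solve, which is the usual argument for the efficiency of ELM compared with iteratively trained networks.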

2.2. Constant-Q Transform

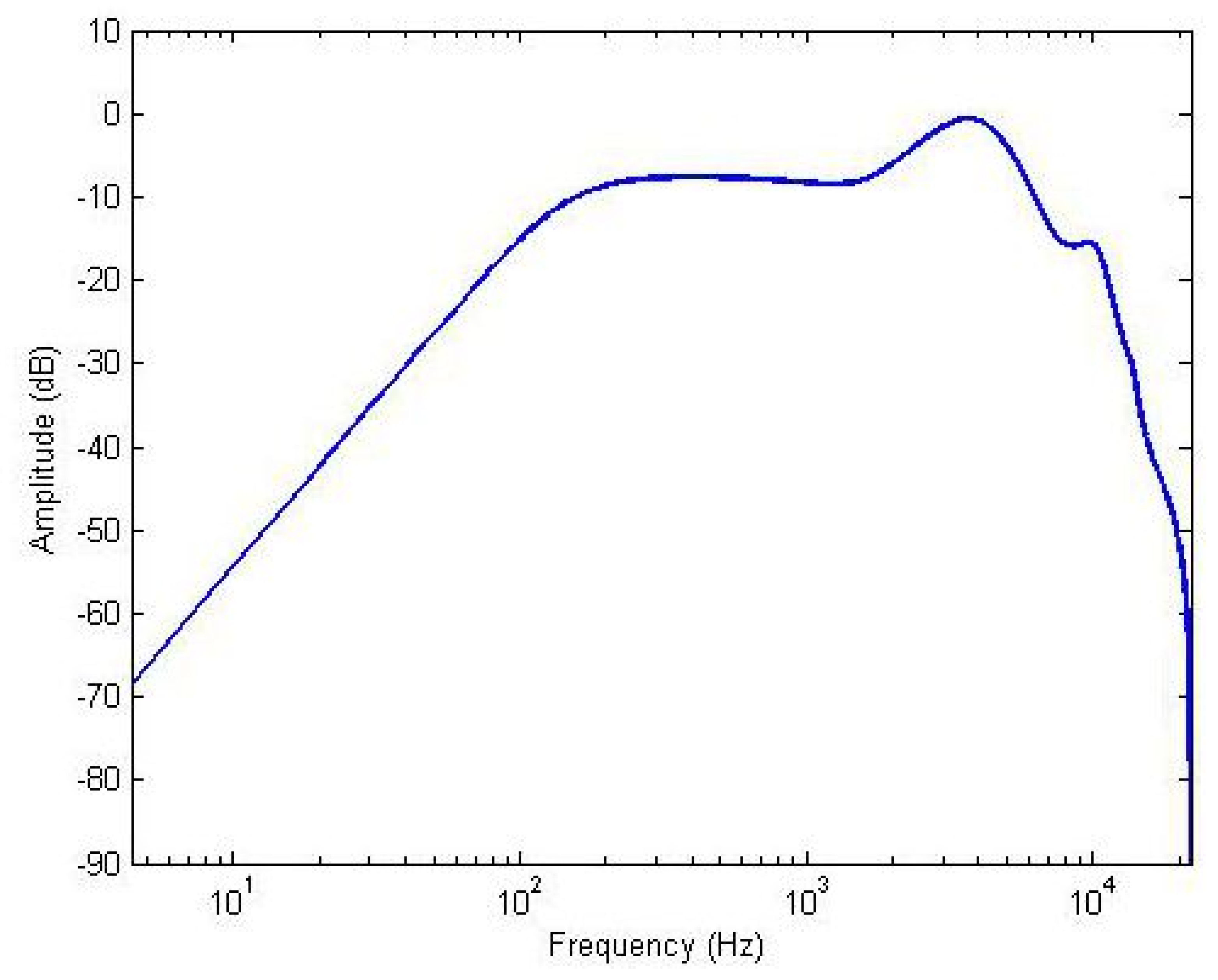

2.3. Equal Loudness Filter

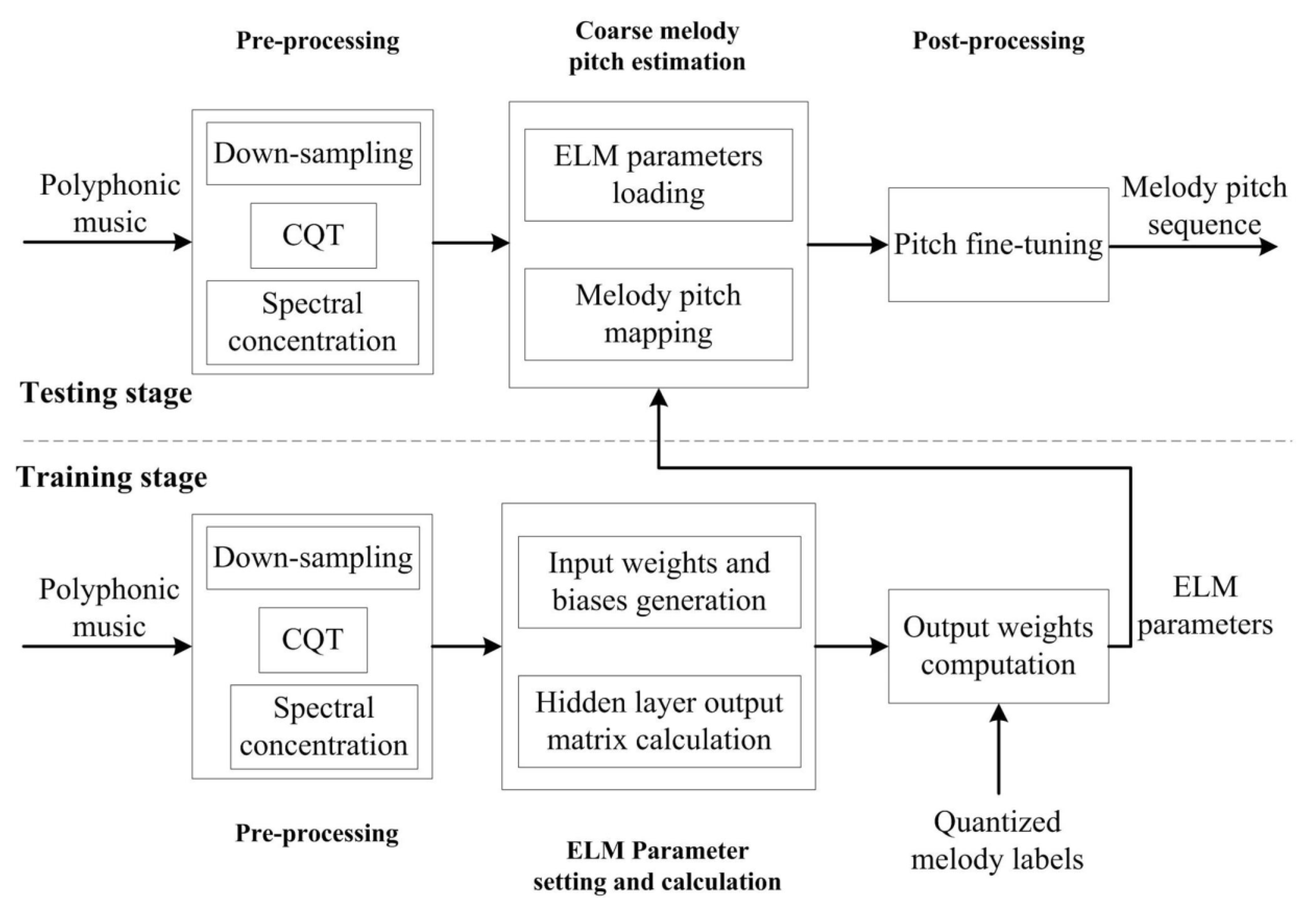

3. Extreme Learning Machine-Based Melody Extraction

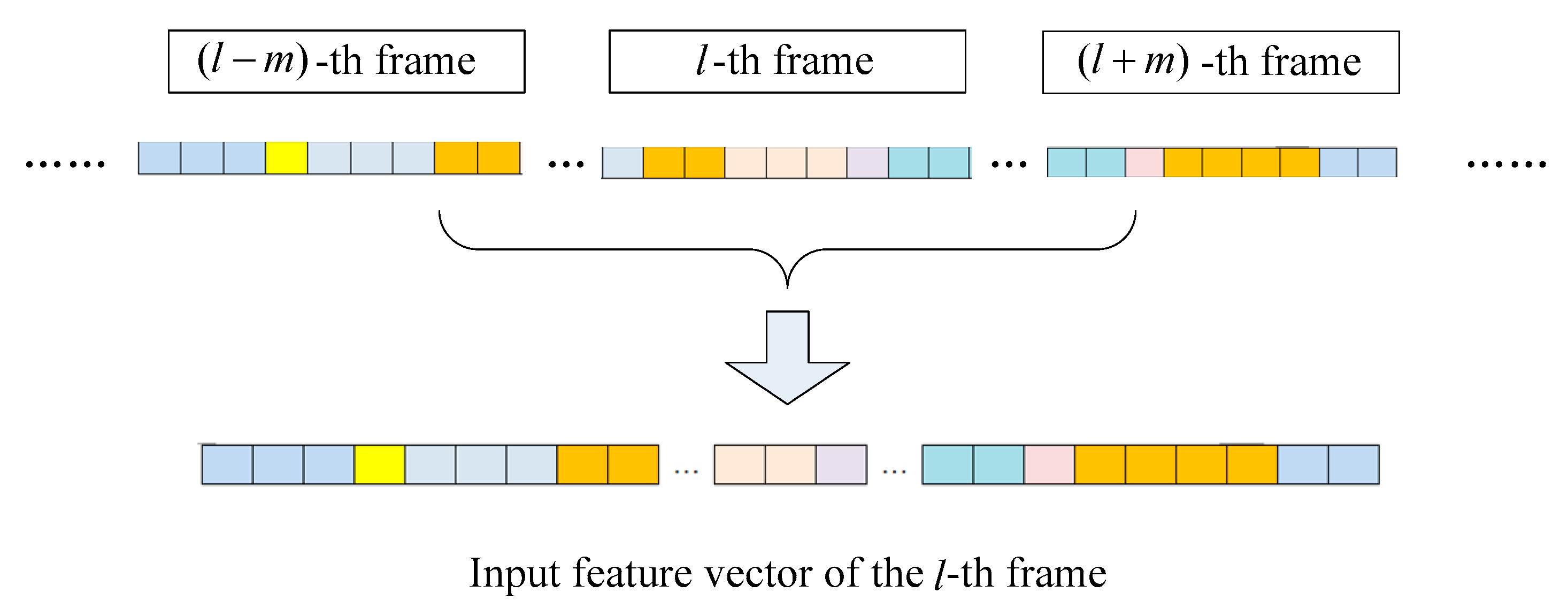

3.1. Pre-Processing

3.2. Coarse Melody Pitch Estimation

3.3. Pitch Fine-Tuning

3.4. Computational Complexity Analysis

4. Experimental Results and Discussions

4.1. Evaluation Metrics and Collections

4.1.1. Evaluation Metrics

4.1.2. Evaluation Collections

4.2. Parameter Setting

4.3. Experimental Results on Test Sets

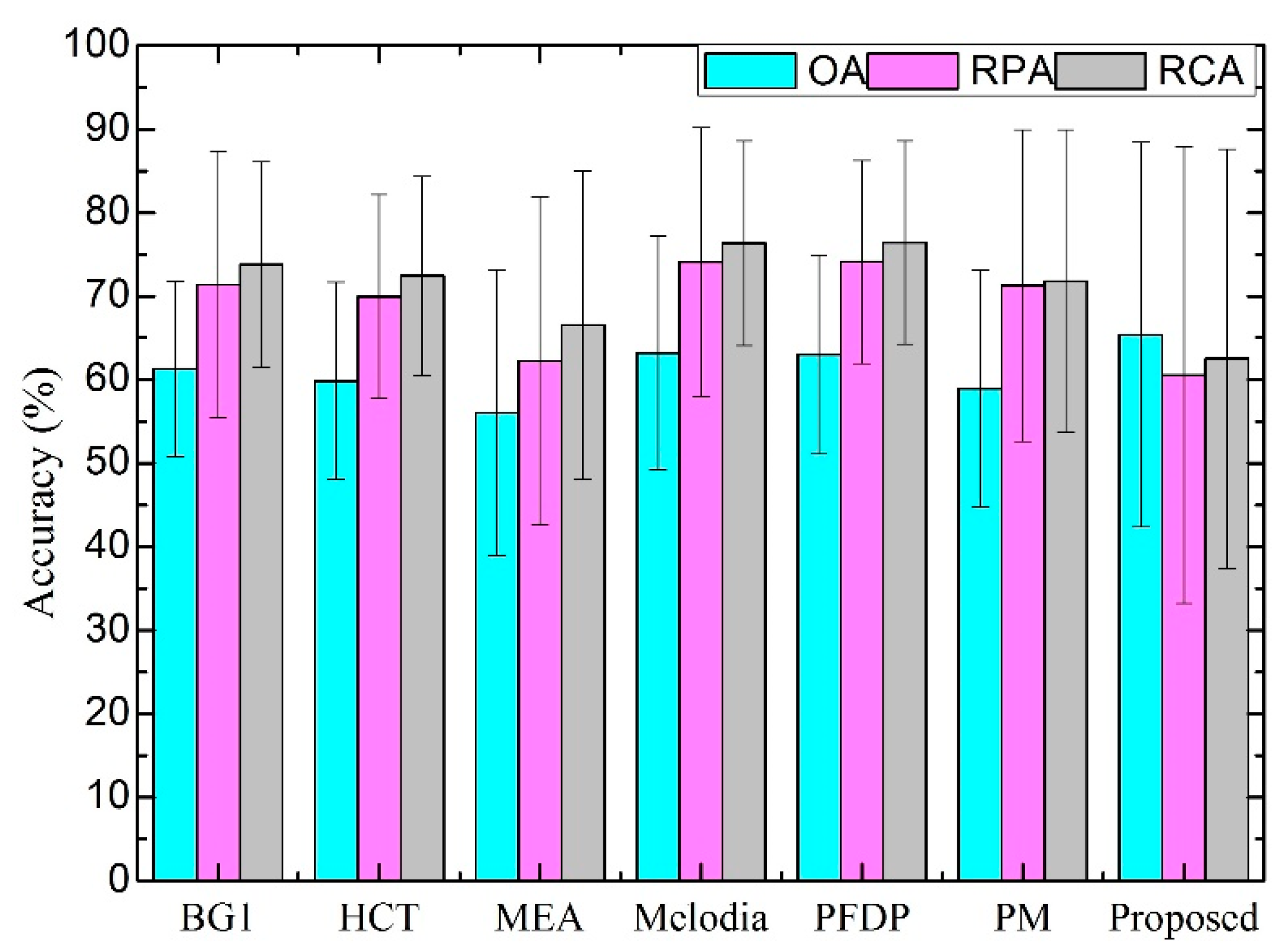

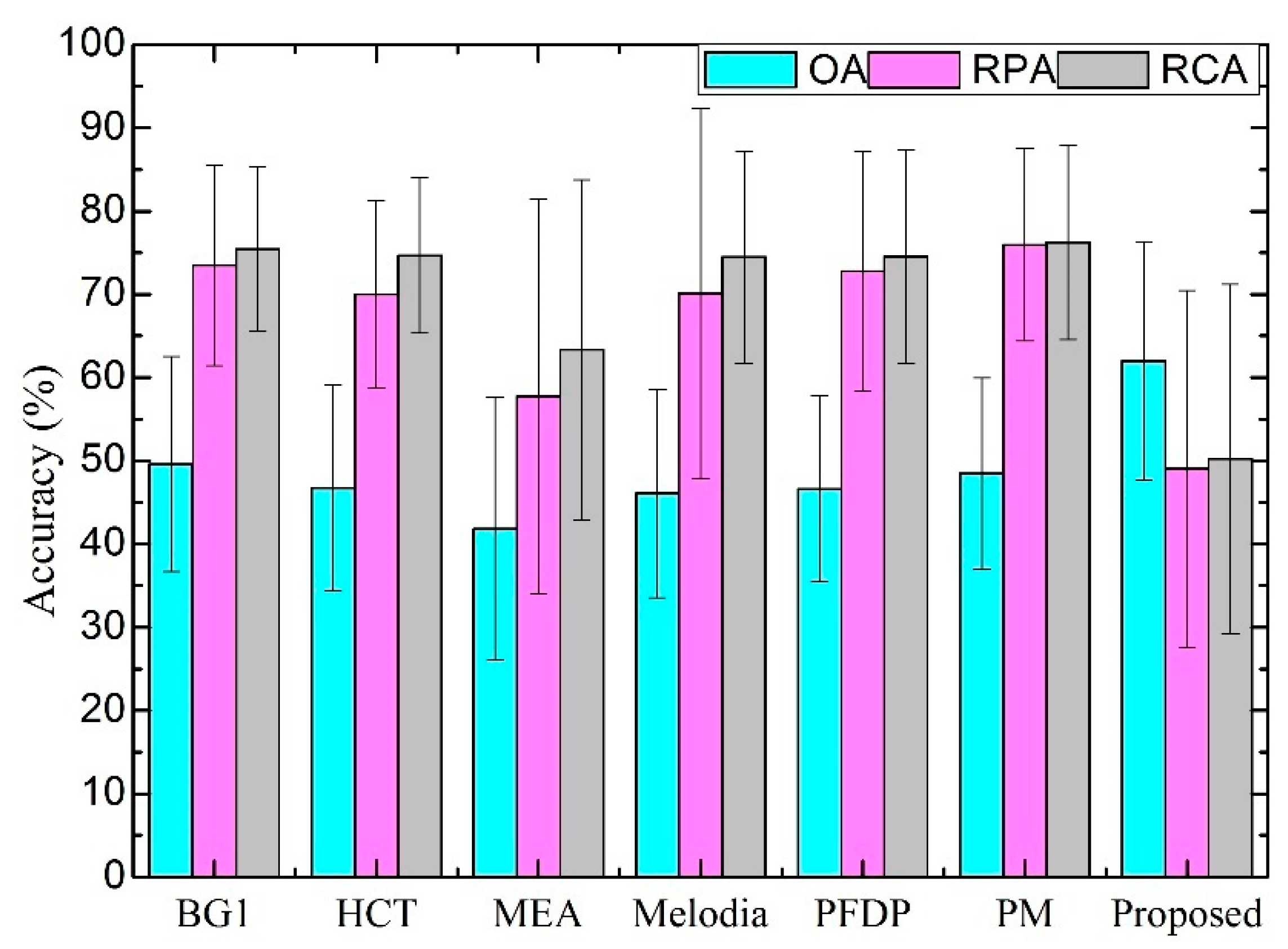

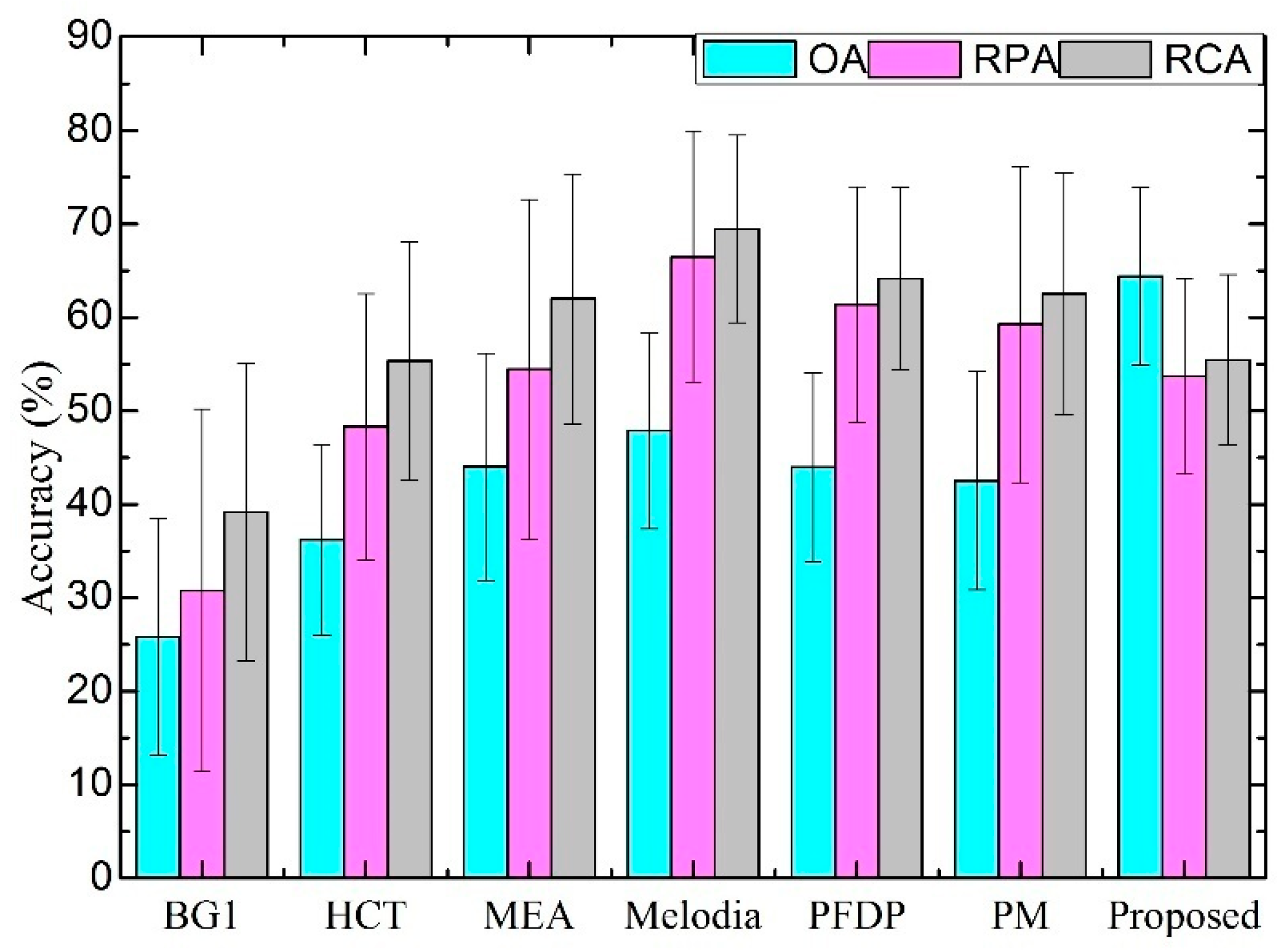

4.3.1. Accuracies on Different Collections

4.3.2. Statistical Significance Analysis

4.3.3. Discussions

5. Conclusions

Author Contributions

Funding

Acknowledgments

Conflicts of Interest

| Collection | Training | Validation | Testing | Total |
|---|---|---|---|---|
| ISMIR2004 | 4 | 1 | 15 | 20 |
| MIREX05 | 1 | 0 | 12 | 13 |
| MIR-1K | 145 | 99 | 756 | 1000 |

| Neuron Number | Training Accuracy (%) | Validation Accuracy (%) | Training Time (s) | Validation Time (s) |
|---|---|---|---|---|
| 1000 | 73.56 | 67.40 | 193.16 | 11.77 |
| 2000 | 77.79 | 68.80 | 594.48 | 24.73 |
| 3000 | 80.20 | 69.45 | 1236.82 | 37.34 |
| 4000 | 83.58 | 69.87 | 2300.94 | 50.33 |
| 5000 | 84.91 | 70.04 | 3786.51 | 63.95 |
| 6000 | 85.90 | 69.87 | 5698.43 | 71.48 |
| 7000 | 86.35 | 69.59 | 8112.62 | 81.07 |
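One way to read the neuron-number table is as a model-selection sweep. The sketch below assumes the hidden node number is chosen by validation accuracy; the table itself does not state the selection criterion, so that rule is an assumption. The values are copied from the table.

```python
# (neuron number, validation accuracy in %, training time in s), copied from the table.
rows = [
    (1000, 67.40, 193.16),
    (2000, 68.80, 594.48),
    (3000, 69.45, 1236.82),
    (4000, 69.87, 2300.94),
    (5000, 70.04, 3786.51),
    (6000, 69.87, 5698.43),
    (7000, 69.59, 8112.62),
]

# Assumed criterion: keep the setting with the highest validation accuracy.
best_neurons, best_val_acc, best_train_time = max(rows, key=lambda r: r[1])
print(best_neurons, best_val_acc)  # 5000 70.04
```

Under this criterion the sweep peaks at 5000 hidden nodes: training accuracy keeps rising beyond that point while validation accuracy falls, which is the usual sign of overfitting.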

| Datasets | Pairs | Mean | t statistic | p-Value |
|---|---|---|---|---|
| ISMIR2004 | Proposed-BG1 | 4.08 | 0.67 | 0.51 |
| ISMIR2004 | Proposed-HCT | 5.56 | 1.08 | 0.30 |
| ISMIR2004 | Proposed-MEA | 9.34 | 1.04 | 0.06 |
| ISMIR2004 | Proposed-Melodia | 2.20 | 0.52 | 0.62 |
| ISMIR2004 | Proposed-PFDP | 2.35 | 0.51 | 0.62 |
| ISMIR2004 | Proposed-PM | 6.45 | 0.97 | 0.35 |
| MIREX05 | Proposed-BG1 | 12.44 | 2.20 | 0.05 |
| MIREX05 | Proposed-HCT | 15.28 | 3.00 | 0.01 |
| MIREX05 | Proposed-MEA | 20.22 | 2.79 | 0.02 |
| MIREX05 | Proposed-Melodia | 15.97 | 3.27 | 0.01 |
| MIREX05 | Proposed-PFDP | 11.39 | 2.60 | 0.01 |
| MIREX05 | Proposed-PM | 13.57 | 2.89 | 0.02 |
| MIR-1K | Proposed-BG1 | 38.55 | 66.95 | 0.00 |
| MIR-1K | Proposed-HCT | 28.17 | 56.31 | 0.00 |
| MIR-1K | Proposed-MEA | 20.36 | 38.31 | 0.00 |
| MIR-1K | Proposed-Melodia | 16.52 | 32.55 | 0.00 |
| MIR-1K | Proposed-PFDP | 15.38 | 31.24 | 0.00 |
| MIR-1K | Proposed-PM | 21.55 | 43.63 | 0.00 |

| Datasets | Pairs | Mean | t statistic | p-Value |
|---|---|---|---|---|
| ISMIR2004 | Proposed-BG1 | 10.85 | 1.33 | 0.20 |
| ISMIR2004 | Proposed-HCT | 9.39 | 1.39 | 0.19 |
| ISMIR2004 | Proposed-MEA | 1.70 | 0.28 | 0.78 |
| ISMIR2004 | Proposed-Melodia | 13.53 | 2.35 | 0.03 |
| ISMIR2004 | Proposed-PFDP | 13.54 | 2.27 | 0.04 |
| ISMIR2004 | Proposed-PM | 10.72 | 1.26 | 0.23 |
| MIREX05 | Proposed-BG1 | 24.47 | 3.47 | 0.01 |
| MIREX05 | Proposed-HCT | 21.00 | 3.50 | 0.01 |
| MIREX05 | Proposed-MEA | 8.74 | 0.94 | 0.37 |
| MIREX05 | Proposed-Melodia | 21.08 | 3.42 | 0.01 |
| MIREX05 | Proposed-PFDP | 23.77 | 4.04 | 0.00 |
| MIREX05 | Proposed-PM | 26.92 | 5.28 | 0.00 |
| MIR-1K | BG1-Proposed | −22.93 | −28.23 | 0.00 |
| MIR-1K | HCT-Proposed | −5.42 | −8.34 | 0.00 |
| MIR-1K | MEA-Proposed | 0.74 | 0.99 | 0.32 |
| MIR-1K | Melodia-Proposed | 12.74 | 20.23 | 0.00 |
| MIR-1K | PFDP-Proposed | 7.63 | 12.77 | 0.00 |
| MIR-1K | PM-Proposed | 6.01 | 11.08 | 0.00 |
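The "Pairs", "Mean", and "t statistic" columns of the significance tables appear consistent with a paired-sample t-test on per-recording score differences, although the tables do not name the test. The sketch below shows how such a statistic could be computed under that assumption. The function name `paired_t_statistic` and the four-recording scores are hypothetical and are not taken from the paper.

```python
import math
import statistics


def paired_t_statistic(scores_a, scores_b):
    """Return (mean difference, t statistic, degrees of freedom) for paired samples."""
    if len(scores_a) != len(scores_b) or len(scores_a) < 2:
        raise ValueError("need two equal-length samples with at least two pairs")
    diffs = [a - b for a, b in zip(scores_a, scores_b)]
    n = len(diffs)
    mean_diff = statistics.fmean(diffs)
    sd = statistics.stdev(diffs)  # sample standard deviation (n - 1 denominator)
    t = mean_diff / (sd / math.sqrt(n))
    return mean_diff, t, n - 1


# Hypothetical per-recording accuracies (%) for two methods on four recordings.
proposed = [70.0, 65.0, 80.0, 75.0]
baseline = [60.0, 66.0, 70.0, 71.0]
mean_diff, t_stat, dof = paired_t_statistic(proposed, baseline)
print(f"mean={mean_diff:.2f}, t={t_stat:.2f}, dof={dof}")
```

The p-value then follows from Student's t distribution with `dof` degrees of freedom (two-sided); `scipy.stats.ttest_rel` is one readily available routine that reports both the statistic and the p-value.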

| Methods | OA (%) | RPA (%) | RCA (%) |
|---|---|---|---|
| BG1 | 45.58 | 58.56 | 62.79 |
| HCT | 47.60 | 62.76 | 67.47 |
| MEA | 45.18 | 57.49 | 63.48 |
| Melodia | 52.96 | 69.01 | 71.77 |
| PFDP | 54.22 | 69.41 | 71.71 |
| PM | 42.67 | 59.45 | 62.72 |
| Proposed | 63.93 | 54.43 | 56.08 |
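For readers unfamiliar with the column headings, the sketch below computes overall accuracy (OA), raw pitch accuracy (RPA), and raw chroma accuracy (RCA) in the way these melody-extraction metrics are usually defined: a pitch counts as correct when it lies within 50 cents of the reference, RCA additionally ignores octave errors, and OA also credits frames correctly labelled as unvoiced. The frame encoding (0 Hz for unvoiced), the function name `melody_metrics`, and the example frames are assumptions for illustration and may differ in detail from the evaluation code used in the paper.

```python
import math


def melody_metrics(ref_hz, est_hz, tol_cents=50.0):
    """Return (OA, RPA, RCA) as fractions; an F0 of 0 marks an unvoiced frame."""
    if len(ref_hz) != len(est_hz) or not ref_hz:
        raise ValueError("need two non-empty sequences of equal length")
    voiced = pitch_ok = chroma_ok = overall_ok = 0
    for ref, est in zip(ref_hz, est_hz):
        if ref <= 0:
            # Unvoiced reference frame: correct only if the estimate is unvoiced too.
            if est <= 0:
                overall_ok += 1
            continue
        voiced += 1
        if est <= 0:
            continue  # missed voiced frame counts against all three metrics
        diff = 1200.0 * math.log2(est / ref)             # deviation in cents
        folded = diff - 1200.0 * round(diff / 1200.0)    # map onto one octave
        if abs(diff) <= tol_cents:
            pitch_ok += 1
            overall_ok += 1
        if abs(folded) <= tol_cents:
            chroma_ok += 1
    rpa = pitch_ok / voiced if voiced else 0.0
    rca = chroma_ok / voiced if voiced else 0.0
    oa = overall_ok / len(ref_hz)
    return oa, rpa, rca


# Hypothetical five-frame example: one octave error and one false alarm.
ref = [0.0, 220.0, 220.0, 440.0, 0.0]
est = [0.0, 220.0, 440.0, 445.0, 100.0]
oa, rpa, rca = melody_metrics(ref, est)
```

Because OA also rewards correctly detected unvoiced frames, a method can score higher on OA than on RPA, as the "Proposed" row of the table does.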

© 2020 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).

Zhang, W.; Zhang, Q.; Bi, S.; Fang, S.; Dai, J. Efficient Melody Extraction Based on Extreme Learning Machine. Appl. Sci. 2020, 10, 2213. https://doi.org/10.3390/app10072213
