1. Introduction

In Traditional Chinese Medicine (TCM), the tongue conveys abundant valuable information about the health status of the human body; that is, disorders or even pathological changes of internal organs can be reflected by the tongue and observed by medical practitioners [1]. Tongue image analysis is the main direction of the objectification of tongue diagnosis [2,3]. Tongue color is one of the most important characteristics in tongue diagnosis. However, even for the same tongue, the color appearance of digital tongue images captured with different cameras under different lighting conditions and displayed on different monitors can differ, which can lead to different diagnostic results. Therefore, color correction of the tongue image is indispensable for computer-assisted tongue image analysis.

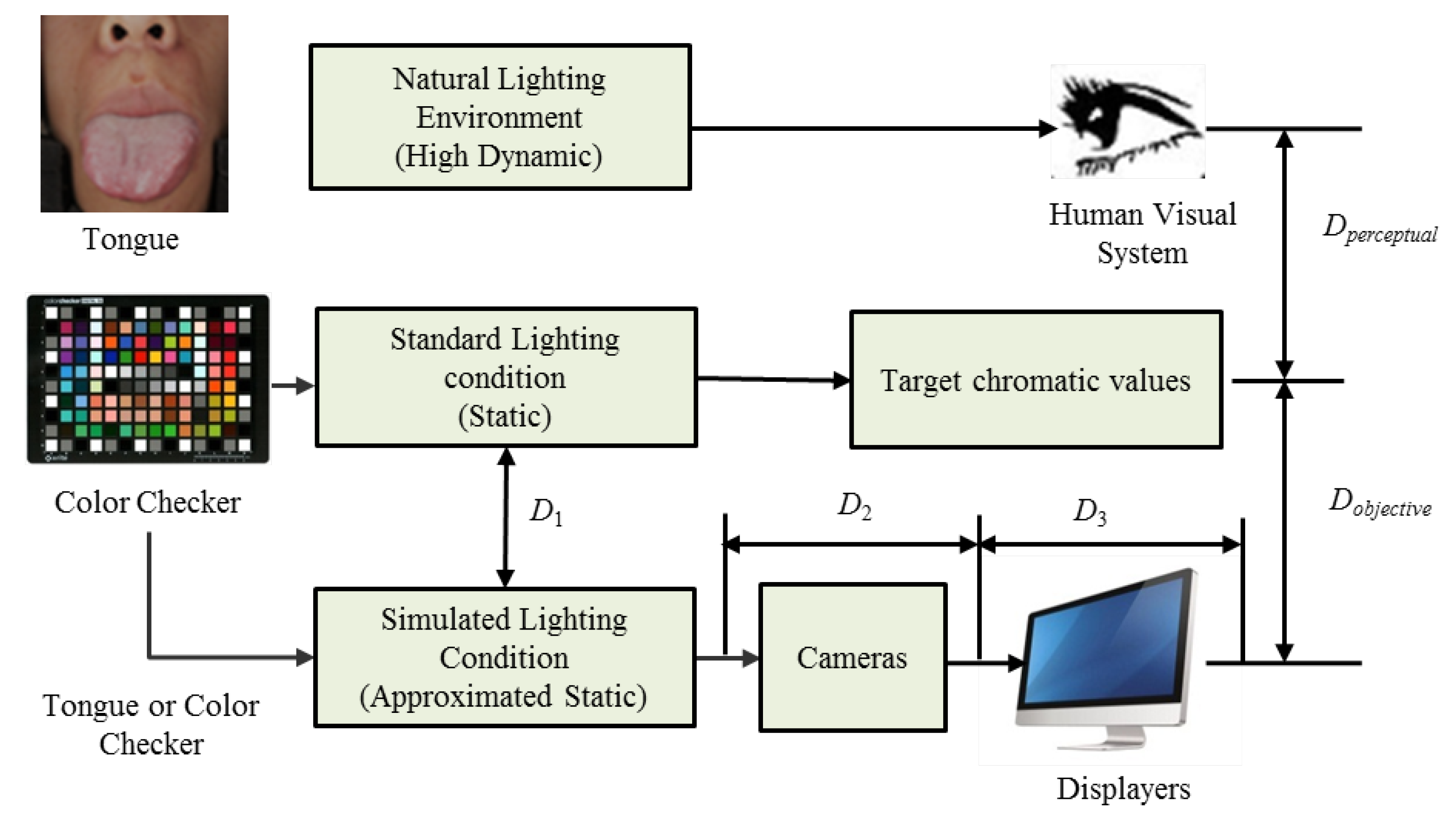

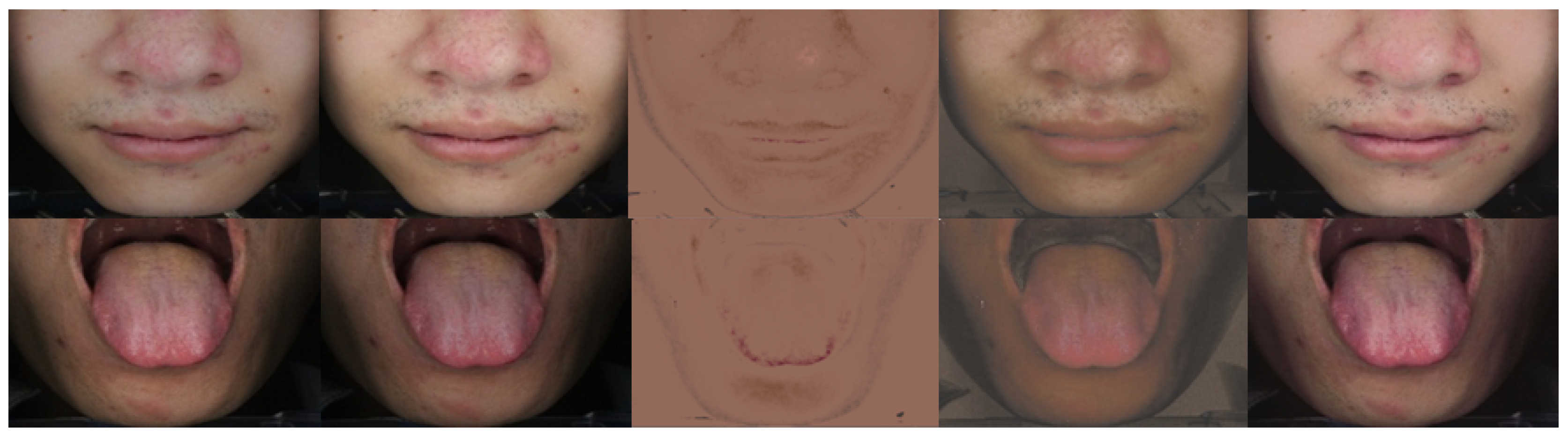

The color distortion of the captured tongue image usually results from lighting conditions, cameras, and monitors. In this paper, we formulate color distortion as the sum of the objective distortion and the perceptual distortion, as shown in Figure 1. The objective distortion represents the quantitative difference between the captured image and the target chrominance value. The perceptual distortion reflects the difference between the tongue as perceived directly on the patient and the tongue image as perceived on a monitor. As shown in Figure 1, a doctor traditionally examines a patient's tongue under natural lighting. The human visual system can respond to various dynamic lighting conditions through adaptive mechanisms. To quantitatively record and analyze color information, the color checker [4] is a useful tool that provides chromaticity values for specific colors under standard (static) lighting conditions. As shown in the bottom row of Figure 1, several color distortions arise during tongue image capture and display, including differences between simulated lighting conditions and standard light sources, differences introduced by cameras, and differences introduced by displays. Note that these distortions also include inconsistencies between different devices.

There are several approaches to reduce color distortion. We can simulate standard lighting conditions with specially designed devices. According to the recommendations of the International Commission on Illumination (CIE), a D65 light source with a color temperature of 6500 K is selected as the standard lighting environment, which can simulate daylight. Distortions introduced by the camera can be significantly alleviated by the gray card correction, which is suitable for some specific types of cameras. We can also correct the color distortion of the monitor with professional tools like X-Rite i1 Display Pro or Spyder5 Express. Color distortion from different cameras and inconsistencies with different capture devices should be corrected by a color correction algorithm.

As shown in Figure 1, in addition to objective distortions, perceptual distortions, such as the variation in an open working environment and the subjective preferences of doctors, are also difficult to handle and have been ignored by most color correction methods.

Generally, the lighting in a doctor's consultation room varies widely due to many factors, such as windows, sunlight, and artificial lighting, and it differs from the simulated lighting conditions in our capture device. Some doctors are not accustomed to the differences between standard lighting conditions and the environments that they are familiar with, so the accuracy of their diagnosis may be affected when they observe tongue images obtained by the imaging system. Therefore, it is necessary to provide a flexible solution that adjusts the perceived color appearance of the tongue image according to the doctor's preferences while keeping the objective chromaticity values of the captured tongue image unchanged for further quantitative analysis.

In this paper, a Two-phase Deep-Color Correction Network (TDCCN) for TCM tongue images is proposed, which extends our previous conference work [5] to handle objective and subjective color correction. In our method, the color correction of the tongue image is divided into two phases, namely objective color correction (OCC) for computer analysis and perceptual color correction (PCC) for doctor observation. The contributions of this paper are as follows:

A novel two-phase color correction framework for tongue images is proposed in this paper for the first time. The framework handles both objective color consistency and perceptual flexibility. Its output supports computer-assisted tongue diagnosis as well as the subjective preferences of doctors.

To correct the objective color distortion of the tongue image, a simple and effective convolutional neural network is designed in the first phase, where the number of layers is determined through experimental performance.

To provide flexibility in dealing with various working environments and the personal preferences of doctors, a color-transformation-based perceptual color adjustment scheme is provided in the second phase.

Extensive experiments show that the proposed TDCCN achieves better performance than several existing color correction methods for TCM tongue images and can effectively handle both objective and subjective color correction.

The remainder of this paper is organized as follows. Section 2 briefly reviews related work. Section 3 details the proposed TDCCN. Section 4 presents comprehensive experiments and a discussion. Finally, Section 5 provides the conclusions.

2. Related Work

Color correction has become one of the most critical issues in tongue image processing and computational color constancy. Thus far, many methods have been investigated, such as polynomial-based methods [6,7,8,9,10,11], SVR-based methods [12], and neural network-based color correction methods [13,14].

Most existing color correction methods map the three-color (RGB) response to a standard color space. Graham et al. [8] employ the alternating least squares technique for color correction under nonuniform intensity. Their method estimates both the intensity variation and the transformation matrix from a single image of the color checker. Finlayson et al. [9] employ root-polynomial regression to handle color correction as the exposure changes. These two methods are proposed for general color correction applications. Regarding tongue images, David Zhang's research group at the Hong Kong Polytechnic University has done excellent research. In their group, Wang et al. [11] classify the patches of the 24-patch color checker into tongue-related and tongue-independent categories to calculate the color difference between the target value and the corrected value. They further designed a new color checker for the tongue color space to improve the accuracy of correction by a polynomial regression algorithm [10]. In addition to polynomial-based methods, Zhang et al. [12] use support vector regression (SVR) for tongue image color correction. Our group, the Signal and Information Processing Lab at Beijing University of Technology, has been researching tongue image analysis systems for more than 20 years. In our group, Wei et al. [15] apply Partial Least Squares Regression (PLSR) in their algorithm. To improve the effectiveness of the PLSR-based color correction algorithm, Zhuo et al. [16] proposed a K-PLSR-based color correction method for TCM tongue images under different lighting conditions. Zhuo et al. [13] further propose an SA-GA-BP (Simulated Annealing-Genetic Algorithm-Back Propagation) neural network. They used several colors similar to the tongue body, tongue coating, and skin to improve the accuracy of the correction.

In addition to the contributions of these two groups, there are several other papers on this topic. Zhang et al. [14] applied mind evolutionary computation and the AdaBoost algorithm to the conventional BP neural network. Sui et al. [17] established a mapping between the collected RGB tongue images and the standard RGB values through calibration with the X-Rite Color Checker.
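As a rough illustration of the polynomial-based family surveyed above, the following sketch fits a second-order polynomial mapping from captured RGB values to reference RGB values by ordinary least squares. The chosen term set, the function names, and the synthetic patches with their distortion are assumptions made for illustration only; they do not reproduce the exact formulation of any cited work.

```python
import numpy as np

def poly_features(rgb):
    """Expand N x 3 RGB rows into second-order polynomial terms (assumed term set)."""
    r, g, b = rgb[:, 0], rgb[:, 1], rgb[:, 2]
    return np.stack(
        [r, g, b, r * g, r * b, g * b, r**2, g**2, b**2, np.ones_like(r)], axis=1
    )

def fit_poly_correction(captured, reference):
    """Least-squares fit of a 10 x 3 coefficient matrix from color-checker patches."""
    coeffs, *_ = np.linalg.lstsq(poly_features(captured), reference, rcond=None)
    return coeffs

def apply_poly_correction(rgb, coeffs):
    """Map captured RGB rows to corrected RGB rows."""
    return poly_features(rgb) @ coeffs

# Synthetic stand-in for 140 color-checker patches (not real measurements).
rng = np.random.default_rng(0)
reference = rng.uniform(0.05, 0.95, size=(140, 3))
captured = 0.8 * reference**1.1 + 0.05  # hypothetical camera/lighting distortion

coeffs = fit_poly_correction(captured, reference)
corrected = apply_poly_correction(captured, coeffs)

err_before = np.mean((captured - reference) ** 2)
err_after = np.mean((corrected - reference) ** 2)
print(f"MSE before: {err_before:.6f}, after: {err_after:.6f}")
```

Because the identity mapping is contained in this term set, the fitted correction cannot have a larger training error than the uncorrected patches; how well it generalizes to tongue colors outside the checker gamut is a separate question.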

The above-mentioned methods have made great progress in the development of tongue image color correction. However, the existing methods have two drawbacks. Firstly, most of them ignore the issue of perceptual adaptation. They mainly aim to reduce objective chromatic errors to facilitate further machine analysis rather than reduce perceptual color distortion. Secondly, due to the limitations of traditional regression models, there is still room to further improve the regression accuracy of objective color correction.

Objective color correction of images is a regression problem, and we attempt to solve it with a convolutional neural network (CNN). A CNN is a special form of feed-forward neural network (FNN) trained by back-propagation [18]. Hornik et al. [19] proved that FNNs are capable of approximating any measurable function to any desired accuracy. In addition, many advances have been made in learning methods and regularization for training CNNs, such as the Rectified Linear Unit (ReLU) [20]. Furthermore, the training process can be accelerated by powerful GPUs. Generally, CNNs are used to recognize visual patterns directly from pixel images. Gou et al. [21] corrected large-scale remote sensing images based on a Wasserstein CNN. However, CNNs are rarely used for accurate color correction of tongue images. Motivated by Hornik et al. [19], we established a CNN model to embed the relationship between the distorted colors of the captured tongue image and the target chromaticity values under standard lighting conditions.

As for perceptual color correction, directly adjusting the RGB channels is complicated in practice because the channels are correlated. We should choose an appropriate color space where each component is independent [22]. When a typical three-channel image is represented in many of the most well-known color spaces, there are correlations between the different channels. For example, in RGB space, if the blue channel is large, most pixels will also have large values for the red and green channels. This means that, if we want to change the appearance of a pixel's color in a coherent way, we must carefully fine-tune all the color channels in tandem, which makes color modification more complicated.

Motivated by the color transfer method, we choose an appropriate color space and then apply simple operations in it. We want an orthogonal color space without correlation between the axes. Ruderman et al. developed a color space called $l\alpha\beta$, which minimizes the correlation between the channels for many natural scenes [23]. We modified the color transfer method to provide a simple parameter-based adjustment scheme that handles perceptual distortion in our method.

In order to meet the requirements of quantitative analysis and perceptual color adaptability, we formulate the color distortion of the tongue image acquisition and analysis system as objective distortion and perceptual distortion. Minimizing objective distortion yields chromaticity consistency, which means that similar tongue colors are represented by similar chromatic values. This is a prerequisite for automatic image analysis. Reducing perceptual distortion helps display a tongue image that is as close as possible to the real tongue, so that TCM practitioners can make a correct diagnosis from tongue images with low perceptual distortion.

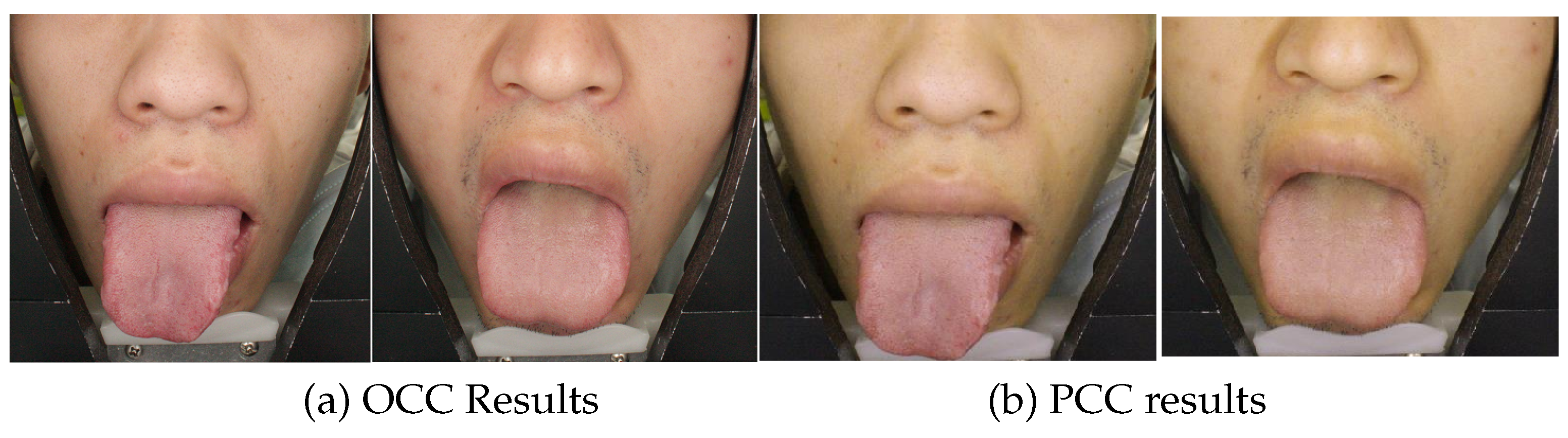

In our algorithm, a two-phase framework is designed to reduce objective distortion and perceptual distortion, respectively. These two phases are objective color correction (OCC) and perceptual color correction (PCC). In the first phase, a simple but effective deep neural network was designed to correct the tongue image to standard lighting conditions. In the second phase, a manual adjustment scheme is provided to adapt the perceptual tongue color images to the color perceived by humans under different lighting conditions. The two phases are cascaded together.

3. The Proposed Algorithm

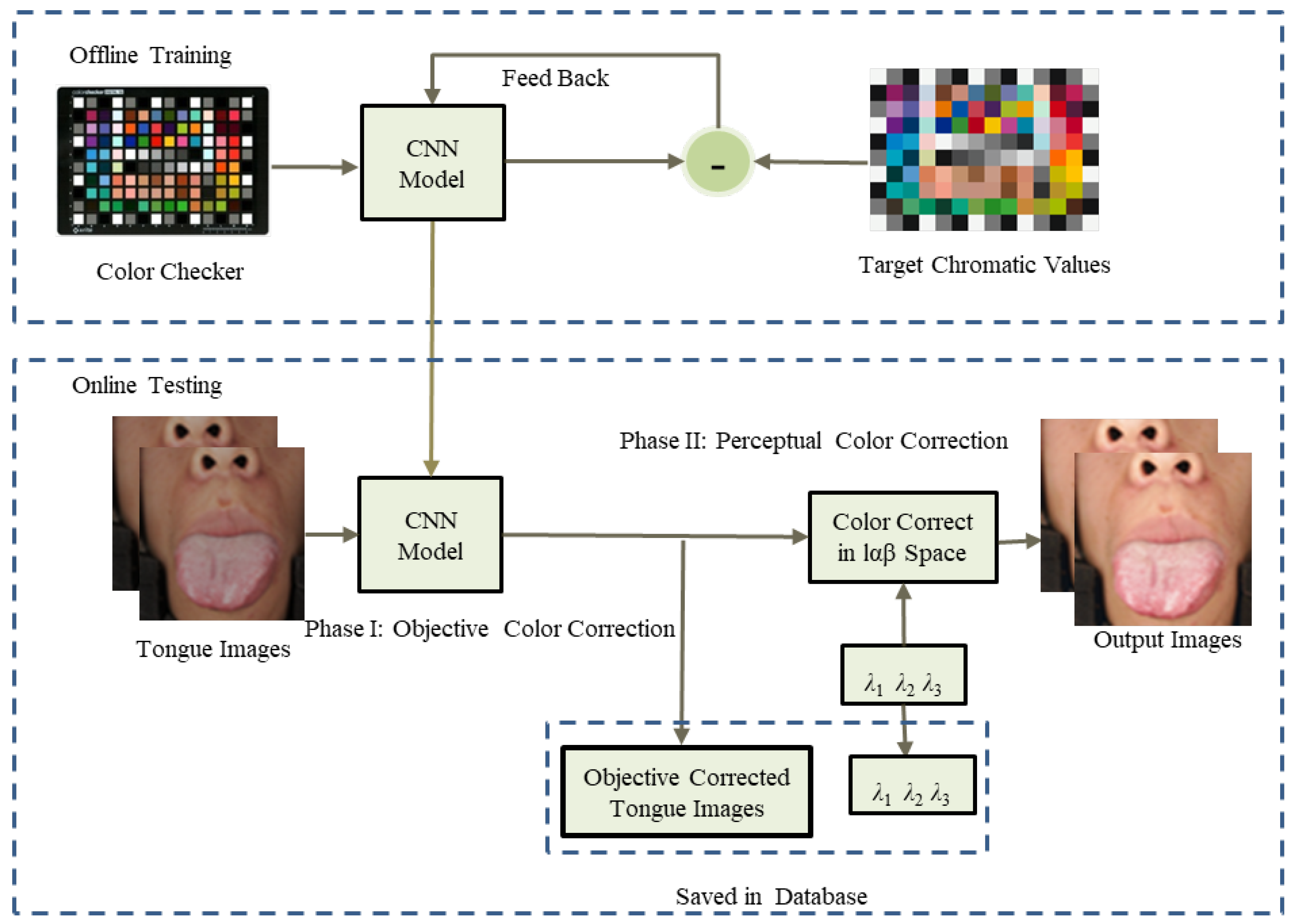

The framework of our proposed algorithm is shown in Figure 2. It can be divided into offline training and online testing stages. During the offline training stage, a simple convolutional neural network is designed and trained using a color checker. During the online testing stage, the trained convolutional neural network is used to objectively correct the captured tongue images to a standard lighting condition. The output images of the first phase are stored in a database for further automatic analysis. Then, the second phase provides a flexible way to adjust the color appearance of the tongue image displayed on the screen through three parameters. These parameters are also saved so that the stored objectively corrected tongue images can be converted into perceptually adjusted images. We also define a set of default parameters for several typical lighting conditions.

We will describe our algorithm in detail in the rest of this section.

3.1. Phase I: Objective Color Correction (OCC)

3.1.1. The Architecture of the OCC Network

The architecture of the OCC network is shown in Figure 3. The OCC network consists of three parts: an input layer, nonlinear transform layers, and an output layer. They work together to learn the mapping between the distorted color map and the original color map. Because this is a regression model, we use only convolutional layers, without pooling, in our network.

In the input layer, the first operation is feature extraction. The convolutional layers transfer the image from the spatial domain to the feature domain. To train the OCC network for color correction, the input images are the captured color patches of the color checker, which are tiled into pixel patches. The target output is the standard chroma value of the color checker. In the input layer, the feature map is composed of a set of three-dimensional tensors. There are 64 filters for generating 64 feature maps. The nonlinear activation function is ReLU. The input layer can be expressed as the following equation:

$$F_1(X) = \max(0, W_1 * X + B_1), \tag{1}$$

where $X$ is the input patch, $*$ denotes convolution, and $W_1$ and $B_1$ are the filters and biases, respectively. Subsequent feature learning plays an important role in learning the nonlinear model, which is essential for color recovery. In the nonlinear transformation layers, ReLU is used for nonlinearity. They can be expressed as

$$F_i(X) = \max(0, W_i * F_{i-1}(X) + B_i), \quad i = 2, 3, 4, \tag{2}$$

where $W_i$ and $B_i$ are the convolution filters and biases in the 2nd to 4th layers. Except for the output layer, the feature map of each layer has 64 channels. All feature maps have the same spatial resolution as the input image.

In the output layer, the filters reconstruct the output by a convolutional operation given by Equation (3):

$$F(X) = W_5 * F_4(X) + B_5. \tag{3}$$

The parameter details of the OCC network are listed in Table 1. For all convolutional layers, the padding is zero, and the stride is set to 1.

The number of hidden layers of the OCC network is determined by experimental performance. We tested 1 to 10 hidden layers and chose the best-performing number. For more details, see Section 4.1.
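The layer structure described in this subsection can be sketched as a plain NumPy forward pass. This is a minimal sketch under stated assumptions, not the trained network: the 3 × 3 kernel size, the zero padding used to keep the spatial resolution, the 8 × 8 patch size, the He-style random initialization, and all function names are hypothetical choices made for illustration, since the corresponding values are given in Table 1 rather than in this text.

```python
import numpy as np

def conv2d_same(x, w, b):
    """Stride-1 convolution (cross-correlation) that preserves spatial size.

    x: (C_in, H, W), w: (C_out, C_in, k, k), b: (C_out,)
    Zero padding of k // 2 pixels is an assumption of this sketch.
    """
    c_out, _, k, _ = w.shape
    pad = k // 2
    _, h, wd = x.shape
    xp = np.pad(x, ((0, 0), (pad, pad), (pad, pad)))
    out = np.zeros((c_out, h, wd))
    # Accumulate the contribution of each kernel offset.
    for dy in range(k):
        for dx in range(k):
            out += np.einsum("oc,chw->ohw", w[:, :, dy, dx], xp[:, dy:dy + h, dx:dx + wd])
    return out + b[:, None, None]

def init_occ_params(rng, n_layers=5, width=64, k=3):
    """Random weights: one input layer, three nonlinear layers, one output layer."""
    channels = [3] + [width] * (n_layers - 1) + [3]
    params = []
    for c_in, c_out in zip(channels[:-1], channels[1:]):
        scale = np.sqrt(2.0 / (c_in * k * k))  # He-style scaling (assumed)
        params.append((rng.normal(0.0, scale, (c_out, c_in, k, k)), np.zeros(c_out)))
    return params

def occ_forward(x, params):
    """ReLU after every layer except the last, which is a linear reconstruction."""
    for w, b in params[:-1]:
        x = np.maximum(0.0, conv2d_same(x, w, b))
    w, b = params[-1]
    return conv2d_same(x, w, b)

rng = np.random.default_rng(0)
params = init_occ_params(rng)
patch = rng.uniform(0.0, 1.0, (3, 8, 8))  # hypothetical RGB color patch
output = occ_forward(patch, params)
print(output.shape)
```

With untrained weights the output is meaningless as a color; the sketch only shows that every layer keeps the spatial resolution of the input and that the final layer maps 64 feature channels back to three color channels.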

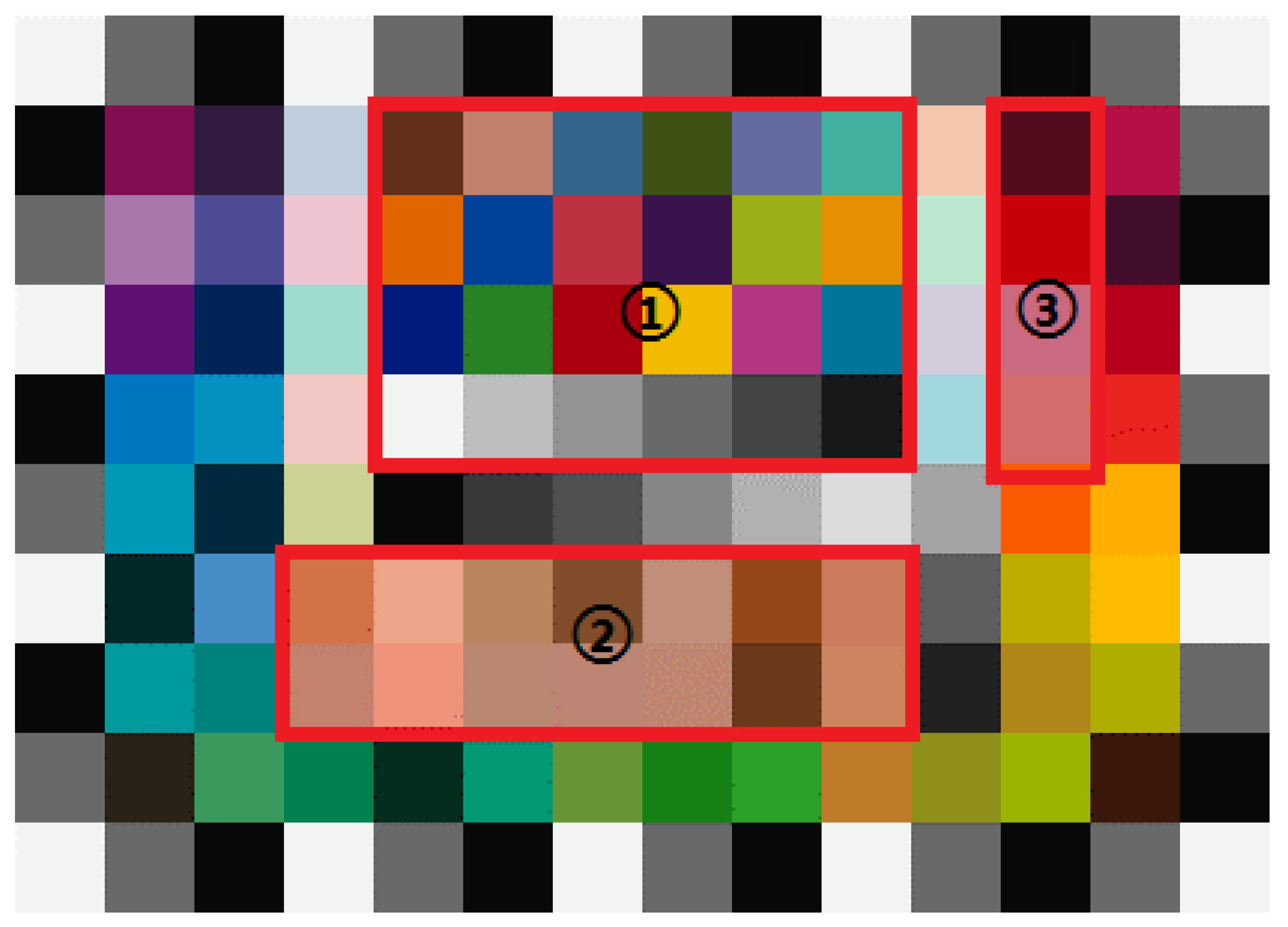

3.1.2. Network Training

For network training, a training set is collected. In general, color correction works better if the color checker has more color patches with a wider color gamut. Therefore, we chose the Color Checker SG [4], with 140 color patches, to train our model. Standard reference chromaticity values are available on X-Rite's official website [4]. In this phase, the sRGB color space is chosen because of its device-independent property.

The loss function is the Mean Squared Error (MSE), given by Equation (4):

$$L(W, B) = \frac{1}{N} \sum_{i=1}^{N} \left\| F(X_i; W, B) - Y_i \right\|^2, \tag{4}$$

where $\{(X_i, Y_i)\}_{i=1}^{N}$ represents $N$ training samples, $Y_i$ contains the R, G, B color channels, and $F(X_i; W, B)$ is the output of the OCC network, with $X_i$ as the input and parameters $W$ and $B$. To train the network parameters, the loss function is optimized using the Adam algorithm [24].
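To make the training objective concrete, the sketch below minimizes an MSE loss with hand-written Adam updates. For brevity it fits a single 3 × 3 linear color map instead of the OCC network; the synthetic patches, the linear distortion, the learning rate, and the function names are all assumptions of this illustration, and the Adam hyperparameters are the commonly used defaults rather than values reported for TDCCN.

```python
import numpy as np

def mse_loss(pred, target):
    """Mean over samples of the squared error summed over the R, G, B channels."""
    return np.mean(np.sum((pred - target) ** 2, axis=1))

def adam_step(param, grad, m, v, t, lr=1e-2, beta1=0.9, beta2=0.999, eps=1e-8):
    """One Adam update with bias-corrected first and second moment estimates."""
    m = beta1 * m + (1 - beta1) * grad
    v = beta2 * v + (1 - beta2) * grad**2
    m_hat = m / (1 - beta1**t)
    v_hat = v / (1 - beta2**t)
    return param - lr * m_hat / (np.sqrt(v_hat) + eps), m, v

# Synthetic stand-in for 140 color-checker patches (not real measurements).
rng = np.random.default_rng(0)
targets = rng.uniform(0.0, 1.0, (140, 3))
distortion = np.array([[0.90, 0.10, 0.00],
                       [0.05, 0.85, 0.10],
                       [0.00, 0.10, 0.80]])  # hypothetical channel mixing
inputs = targets @ distortion

W = np.eye(3)  # stand-in model: corrected = inputs @ W
m, v = np.zeros_like(W), np.zeros_like(W)
initial_loss = mse_loss(inputs @ W, targets)

for t in range(1, 2001):
    residual = inputs @ W - targets
    grad = 2.0 * inputs.T @ residual / len(inputs)  # gradient of the MSE w.r.t. W
    W, m, v = adam_step(W, grad, m, v, t)

final_loss = mse_loss(inputs @ W, targets)
print(f"loss: {initial_loss:.6f} -> {final_loss:.6f}")
```

In a real setup the gradient would come from back-propagation through all convolutional layers, typically via a deep-learning framework, but the loss and the update rule have the same shape.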

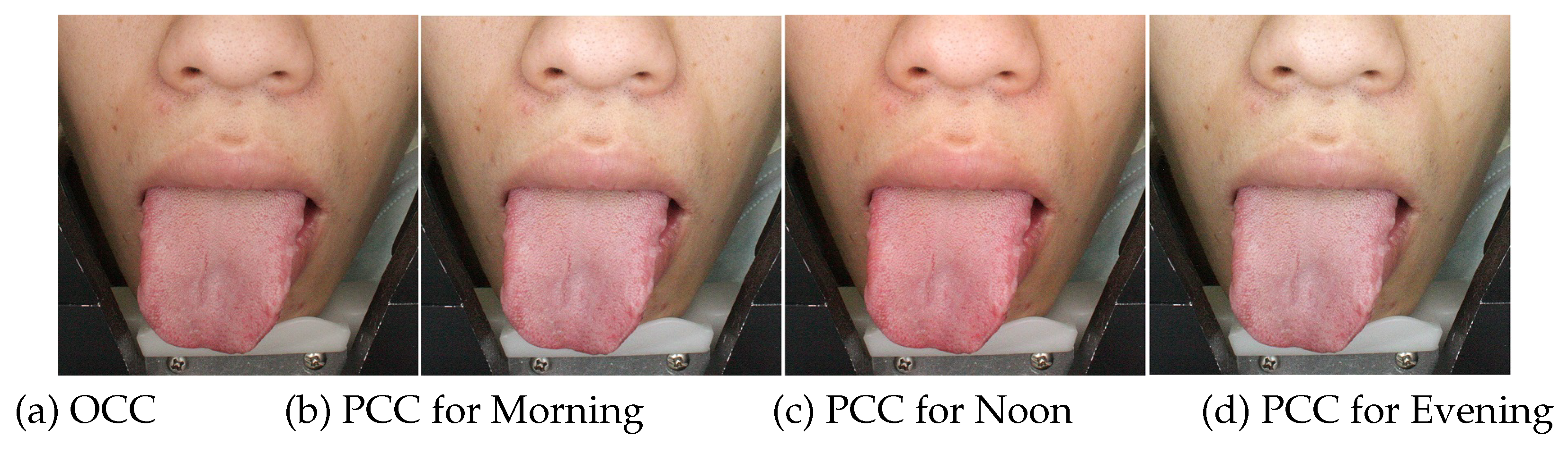

3.2. Phase II: Perceptual Color Correction (PCC)

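Inspired by Reinhard et al.'s method of color transfer between images [22], we propose an adjustable scheme for the perceptual color correction phase. The original color transfer imposes the color characteristics of an example image on the target image through a simple statistical analysis. It uses the $l\alpha\beta$ color space [22], which minimizes the correlations between the channels for many natural scenes. In the $l\alpha\beta$ color space, $l$ is the achromatic channel, $\alpha$ is the yellow-blue channel, and $\beta$ is the red-green channel. In the color transfer method, both the source and reference images are first converted to the $l\alpha\beta$ color space. Then, the mean of each channel of the source image is subtracted, and the centered data are scaled by the ratio of the standard deviations of the two images. Finally, the mean of the reference image in each channel is added to the scaled data, and the result is converted back to the RGB color space. This procedure in the $l\alpha\beta$ space is given by Equations (5)–(7):

$$l' = \frac{\sigma_r^{l}}{\sigma_s^{l}} \left( l_s - \bar{l}_s \right) + \bar{l}_r, \tag{5}$$

$$\alpha' = \frac{\sigma_r^{\alpha}}{\sigma_s^{\alpha}} \left( \alpha_s - \bar{\alpha}_s \right) + \bar{\alpha}_r, \tag{6}$$

$$\beta' = \frac{\sigma_r^{\beta}}{\sigma_s^{\beta}} \left( \beta_s - \bar{\beta}_s \right) + \bar{\beta}_r, \tag{7}$$

where the subscripts $s$ and $r$ denote the source and reference images, a bar denotes the channel mean, and $\sigma$ denotes the channel standard deviation.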
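The color transfer procedure described above can be sketched as follows. The RGB-to-LMS matrix and the logarithmic $l\alpha\beta$ transform follow the values published by Reinhard et al.; whether TDCCN uses exactly these matrices is an assumption, as are the function names, the small clamping constant, and the random test images.

```python
import numpy as np

# RGB -> LMS matrix and LMS -> l-alpha-beta transform as published by Reinhard et al.
RGB2LMS = np.array([[0.3811, 0.5783, 0.0402],
                    [0.1967, 0.7244, 0.0782],
                    [0.0241, 0.1288, 0.8444]])
LMS2LAB = np.diag([1 / np.sqrt(3), 1 / np.sqrt(6), 1 / np.sqrt(2)]) @ np.array(
    [[1.0, 1.0, 1.0], [1.0, 1.0, -2.0], [1.0, -1.0, 0.0]]
)

def rgb_to_lalphabeta(rgb, eps=1e-6):
    """Convert an (H, W, 3) RGB image to l-alpha-beta (log10 of LMS, then rotation)."""
    lms = np.maximum(rgb @ RGB2LMS.T, eps)  # clamp to avoid log of zero
    return np.log10(lms) @ LMS2LAB.T

def lalphabeta_to_rgb(lab):
    """Inverse conversion; the matrices are inverted numerically."""
    log_lms = lab @ np.linalg.inv(LMS2LAB).T
    return (10.0 ** log_lms) @ np.linalg.inv(RGB2LMS).T

def color_transfer(source_rgb, reference_rgb, eps=1e-12):
    """Match the per-channel mean and standard deviation of source to reference."""
    src = rgb_to_lalphabeta(source_rgb)
    ref = rgb_to_lalphabeta(reference_rgb)
    src_mean, ref_mean = src.mean(axis=(0, 1)), ref.mean(axis=(0, 1))
    src_std, ref_std = src.std(axis=(0, 1)), ref.std(axis=(0, 1))
    scaled = (src - src_mean) * (ref_std / np.maximum(src_std, eps)) + ref_mean
    # The result is not clipped here; clip to the display range before showing it.
    return lalphabeta_to_rgb(scaled)

rng = np.random.default_rng(0)
source = rng.uniform(0.1, 0.9, (16, 16, 3))     # hypothetical source image
reference = rng.uniform(0.1, 0.9, (16, 16, 3))  # hypothetical reference image
result = color_transfer(source, reference)
```

After the transfer, the per-channel statistics of the result in $l\alpha\beta$ space match those of the reference image, which is exactly why the mapping depends on image content.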

During our perceptual color correction phase, no reference image is available. Therefore, we adapted this method into a single-image color correction method controlled by three user adjustment parameters. The method takes the values of the input color in $l\alpha\beta$ space, $l$, $\alpha$, and $\beta$, together with the averages of each channel, $\bar{l}$, $\bar{\alpha}$, and $\bar{\beta}$. The specific effects on the tongue image and the range of the user parameters will be discussed later.

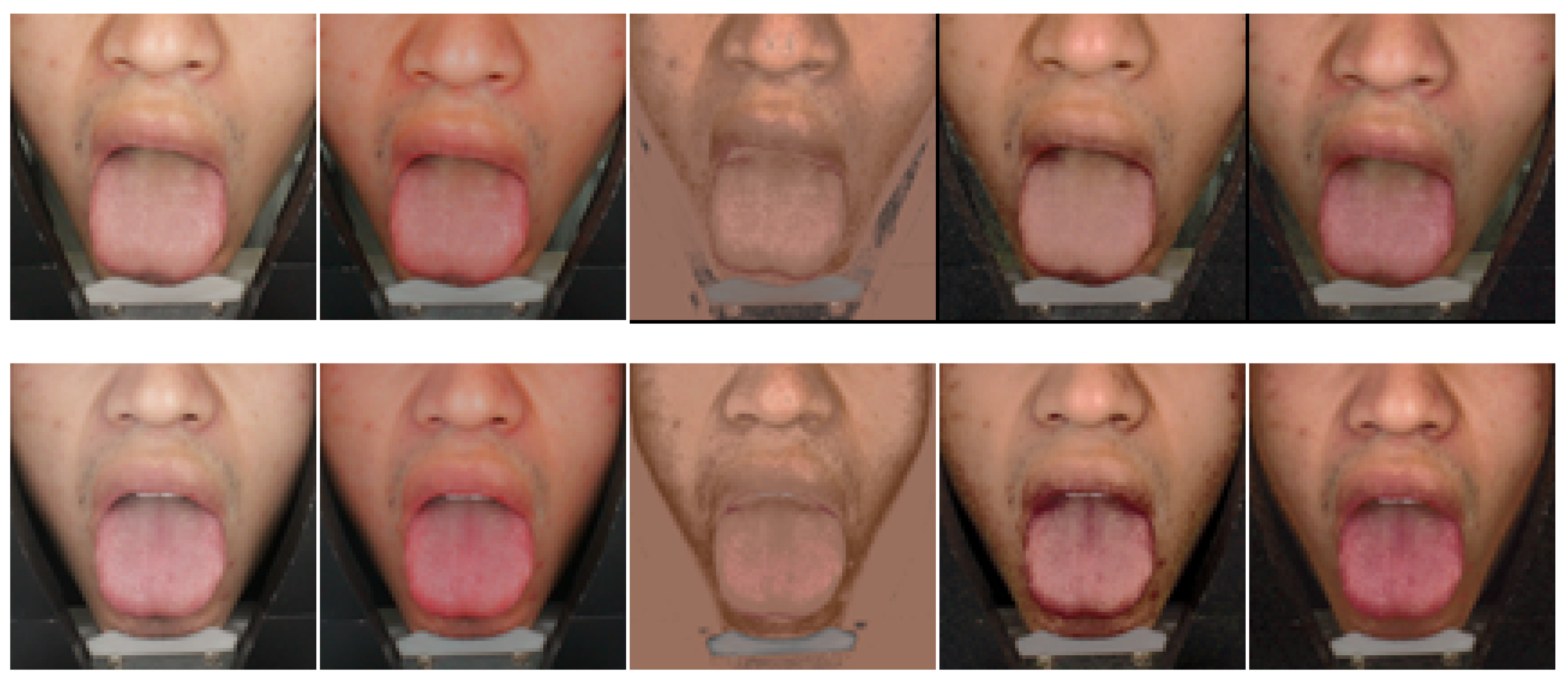

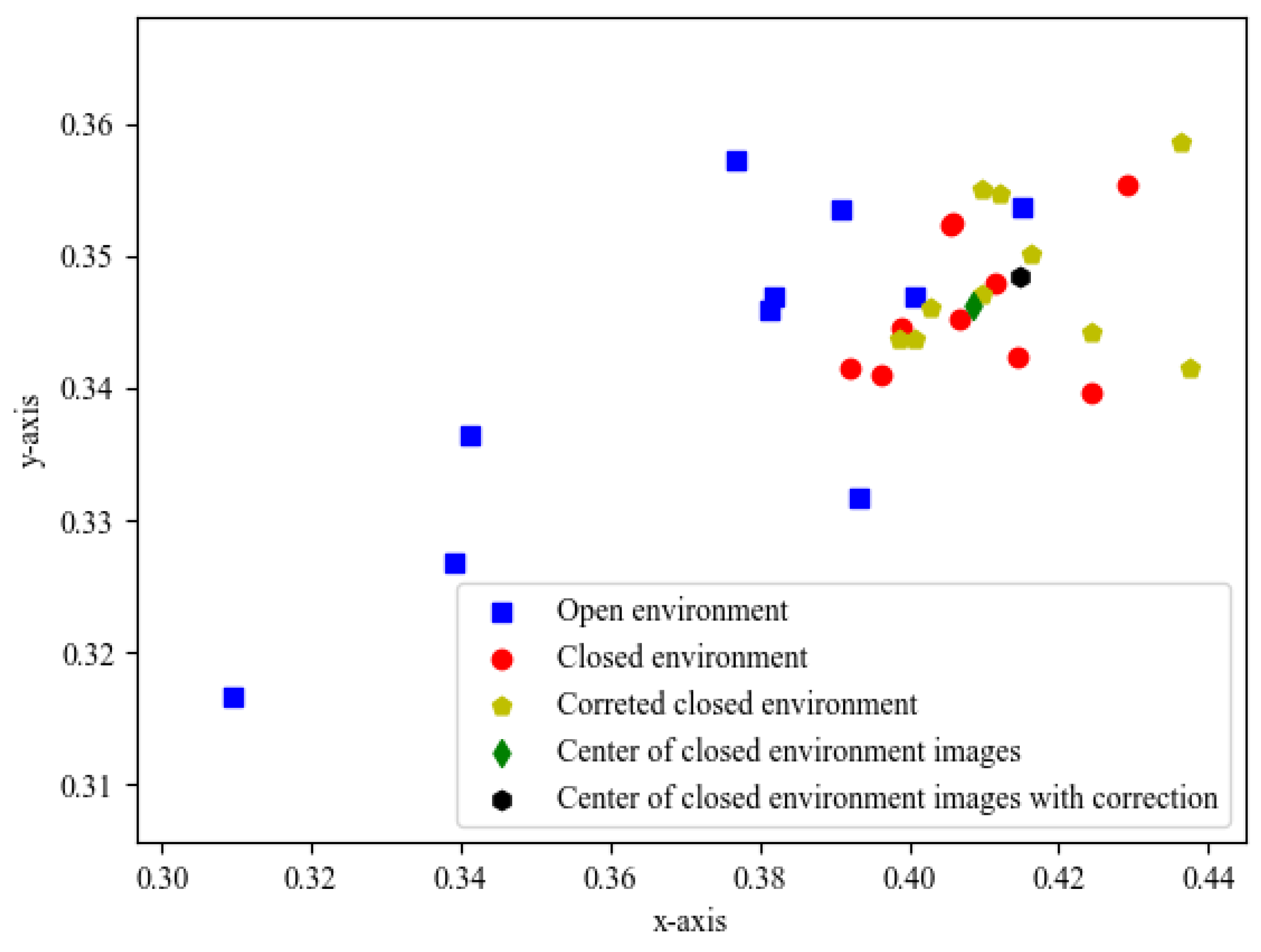

The range of each adjustable parameter is determined from a collection of tongue images captured under open lighting conditions. We capture tongue images at different times of the day, e.g., 9:00 a.m., 1:00 p.m., and 6:00 p.m., and calculate the mean and variance of each channel in the $l\alpha\beta$ color space for the collected images. The range of each channel is used to ensure that the adjusted parameters are reasonable.

One may ask why we do not use the color transfer scheme for objective color correction as well. The reason is that the mean and standard deviation of an image depend on its content. Therefore, although the scheme is efficient for perceptual color correction, image content, such as texture and objects, changes the mapping function, which makes it unsuitable for objective color correction.

In practice, we capture an image of the tongue in the consultation room, and doctors can adjust the image to their personal preferences so that it looks as if it were captured in the room rather than in the imaging system. This yields a set of parameters that can be used to adjust the captured image. Note that we only need to save the corrected output image of the first phase and the three perceptual parameters. Perceptually corrected images can then be easily restored from the stored tongue images and their parameters.
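The storage scheme described above, in which only the objectively corrected image and three parameters are kept, can be sketched as follows. The exact adjustment equations are not reproduced in this text, so the form used here is purely an assumption: each of the three parameters is treated as the target mean of one $l\alpha\beta$ channel, and the image is shifted so that its channel means equal those targets. The conversion matrices follow Reinhard et al., and all function and variable names are hypothetical.

```python
import numpy as np

# Conversion matrices as published by Reinhard et al. (assumed to apply here).
RGB2LMS = np.array([[0.3811, 0.5783, 0.0402],
                    [0.1967, 0.7244, 0.0782],
                    [0.0241, 0.1288, 0.8444]])
LMS2LAB = np.diag([1 / np.sqrt(3), 1 / np.sqrt(6), 1 / np.sqrt(2)]) @ np.array(
    [[1.0, 1.0, 1.0], [1.0, 1.0, -2.0], [1.0, -1.0, 0.0]]
)

def rgb_to_lalphabeta(rgb, eps=1e-6):
    """Convert an (H, W, 3) RGB image to l-alpha-beta."""
    return np.log10(np.maximum(rgb @ RGB2LMS.T, eps)) @ LMS2LAB.T

def lalphabeta_to_rgb(lab):
    """Convert an (H, W, 3) l-alpha-beta image back to RGB."""
    return (10.0 ** (lab @ np.linalg.inv(LMS2LAB).T)) @ np.linalg.inv(RGB2LMS).T

def perceptual_adjust(objective_rgb, target_means):
    """Shift each channel so that its mean equals the user-chosen target (assumed form)."""
    lab = rgb_to_lalphabeta(objective_rgb)
    adjusted = lab - lab.mean(axis=(0, 1)) + np.asarray(target_means)
    return np.clip(lalphabeta_to_rgb(adjusted), 0.0, 1.0)  # clip for display

rng = np.random.default_rng(0)
objective_image = rng.uniform(0.2, 0.6, (16, 16, 3))  # stand-in for a phase-one output

# Parameters equal to the current channel means leave the image unchanged.
current_means = rgb_to_lalphabeta(objective_image).mean(axis=(0, 1))
unchanged = perceptual_adjust(objective_image, current_means)

# Only the objective image and these three numbers need to be stored;
# the perceptual image is regenerated on demand.
stored_params = current_means + np.array([0.1, 0.0, 0.0])  # raise the achromatic mean
brighter = perceptual_adjust(objective_image, stored_params)
```

Under this assumed form the objectively corrected image is never overwritten, so the quantitative analysis and the doctor-facing display can use different renderings of the same stored data.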