DeepSOCIAL: Social Distancing Monitoring and Infection Risk Assessment in COVID-19 Pandemic

Abstract

1. Introduction

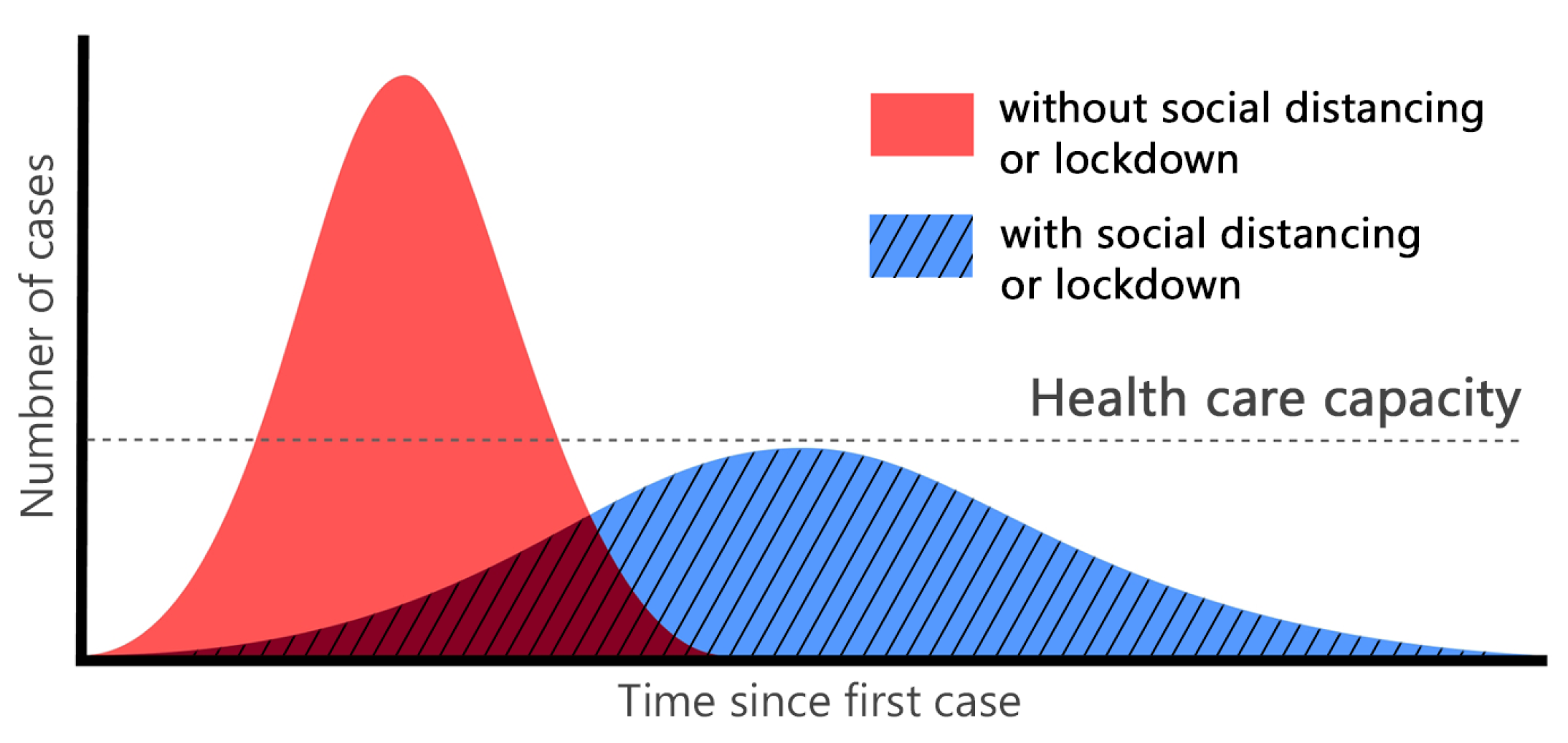

“There is emerging evidence that COVID-19 is an airborne disease that can be spread by tiny particles suspended in the air after people talk or breathe, especially in crowded, closed environments or poorly ventilated settings” [2].

- This study aims to support the reduction of the coronavirus spread and its economic costs by providing an AI-based solution to automatically monitor and detect violations of social distancing among individuals.

- We develop a robust deep neural network (DNN) model, called DeepSOCIAL, for people detection, tracking, and distance estimation (Sections 3.1–3.3). Compared with recent works in this area, such as [15], it offers faster and more accurate results.

- We perform a live, dynamic risk assessment through statistical analysis of spatio-temporal data on people's movements in the scene (Section 4.4). This enables us to track people's trajectories and behaviours, to analyse the ratio of social distancing violations to the total number of people in the scene, and to detect high-risk zones over short- and long-term periods.

- We validate our experimental results through extensive tests and assessments on a diverse set of indoor and outdoor datasets, on which the model outperforms the state of the art (Table 3, Figure 11).

- The developed model can serve as a generic human detection and tracking system, not limited to social-distancing monitoring, and can be applied to various real-world applications such as pedestrian detection in autonomous vehicles, human action recognition, anomaly detection, and security systems.

2. Related Works

2.1. Medical Research

2.2. Tracking Technologies

2.3. AI-Based Research

3. Methodology

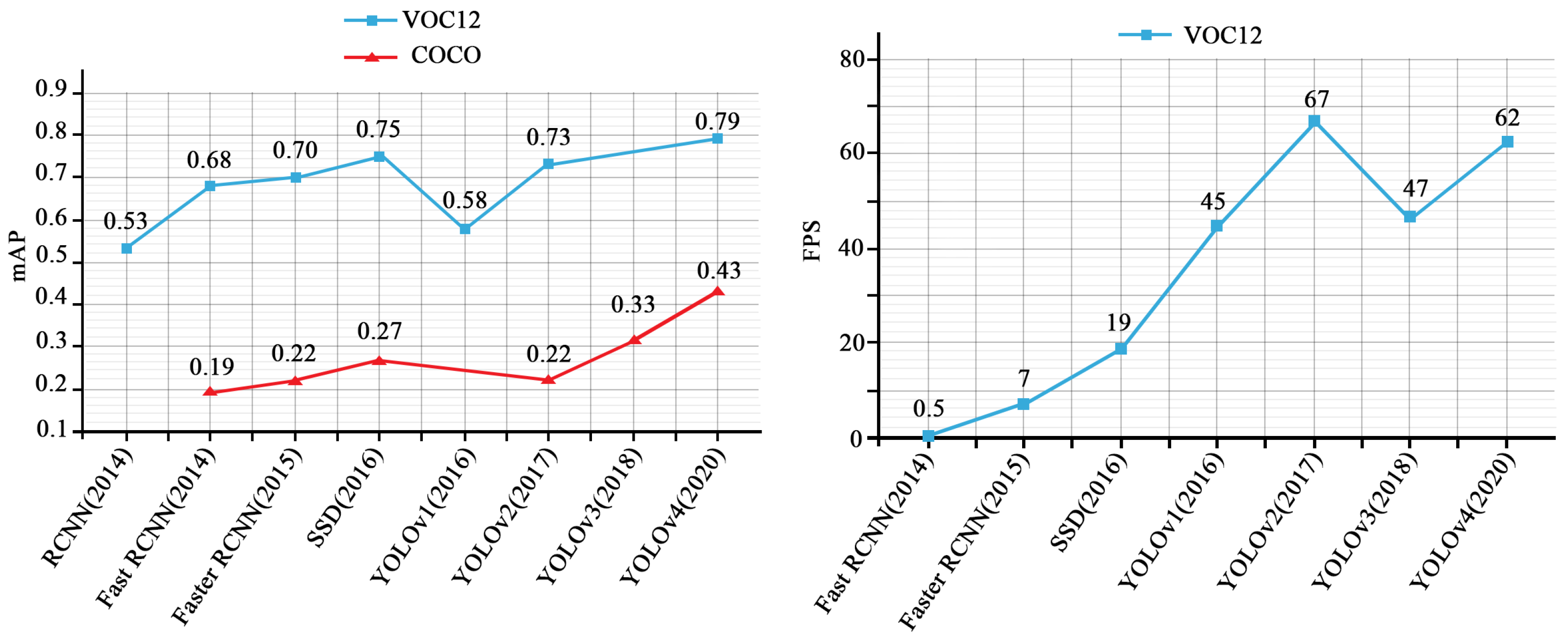

3.1. People Detection

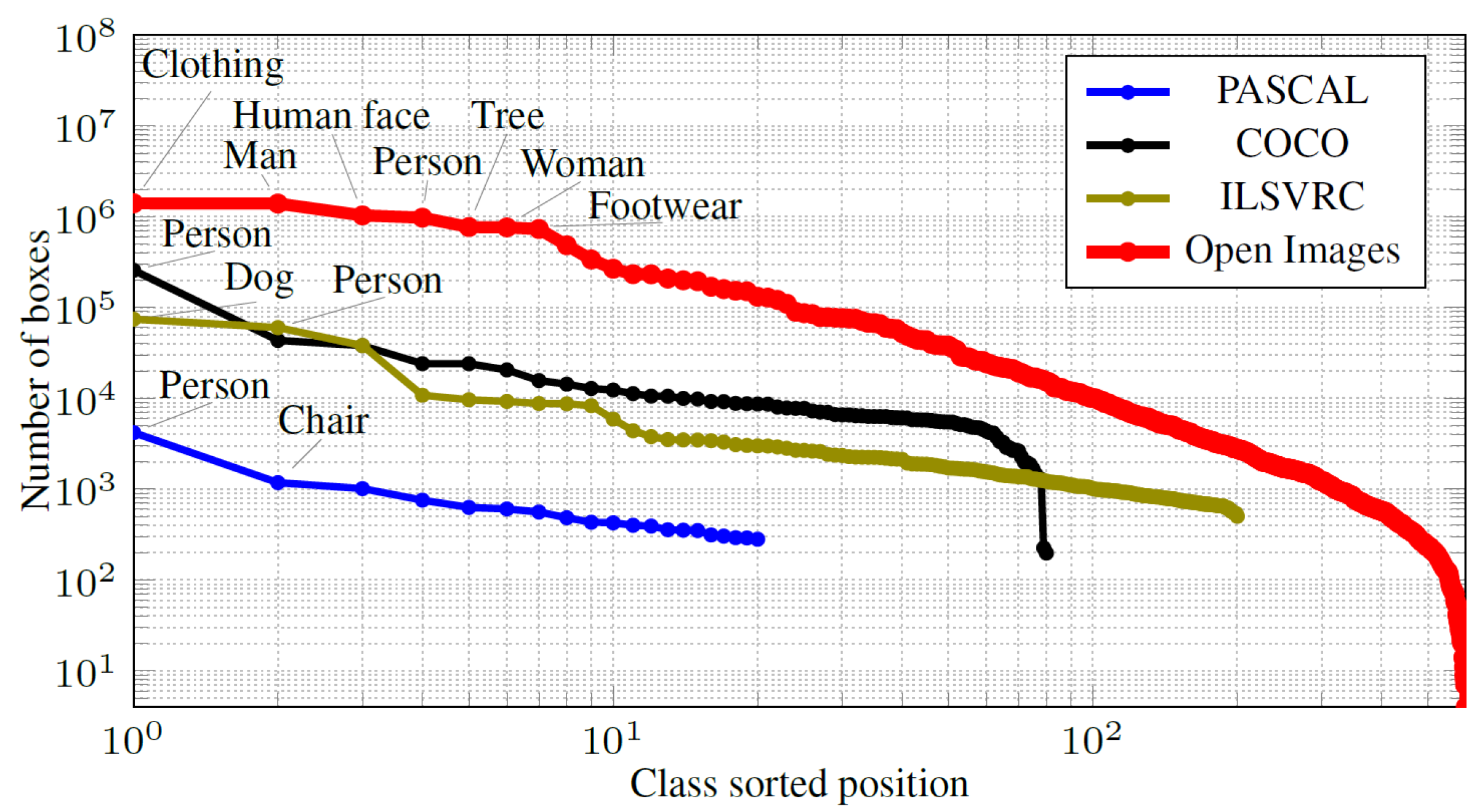

3.1.1. Inputs and Training Datasets

3.1.2. Backbone Architecture

3.1.3. Neck Module

3.1.4. Head Module

3.2. People Tracking

3.3. Inter-Distance Estimation

4. Model Training and Experimental Results

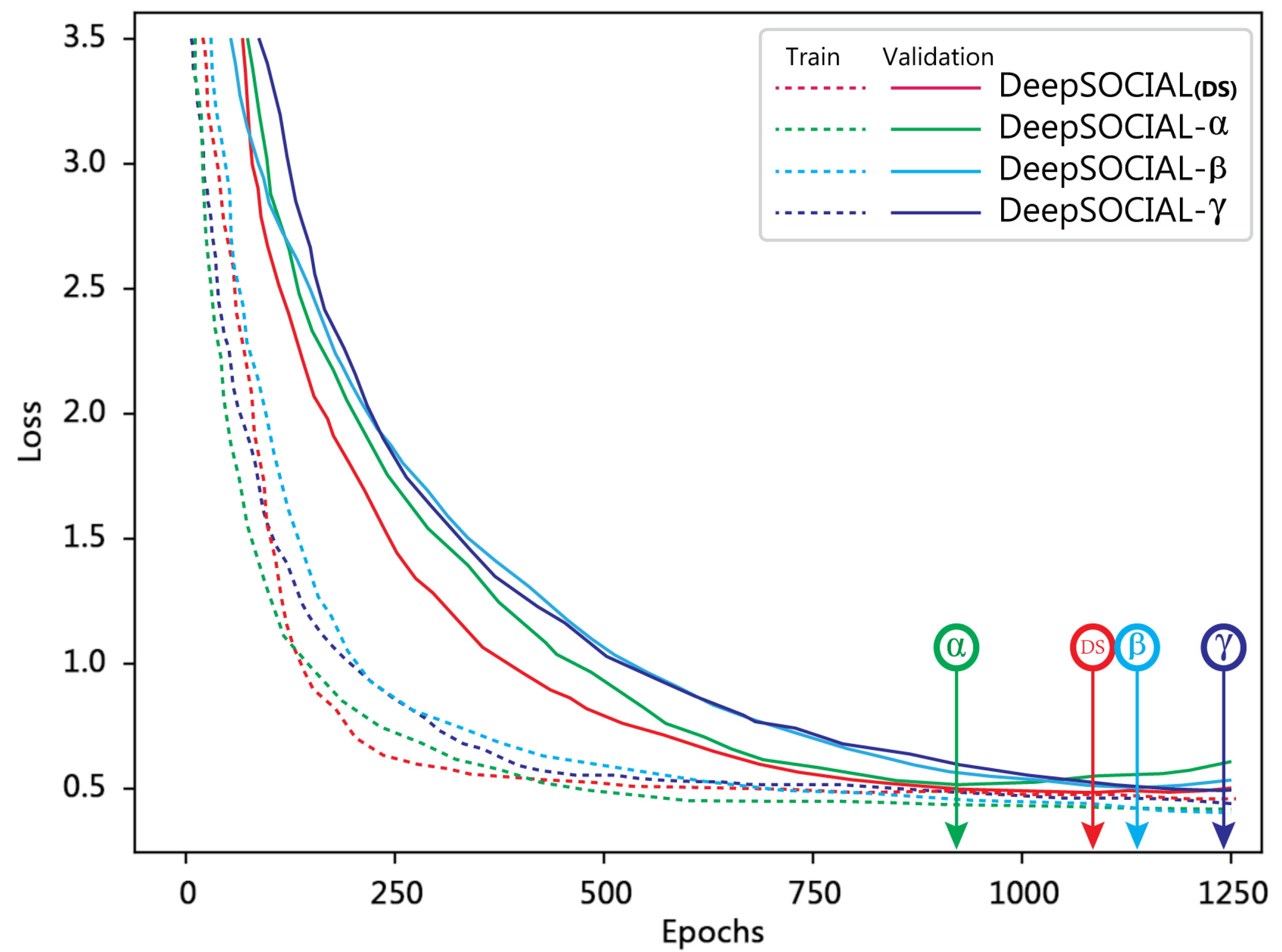

4.1. Model Training

4.2. Performance Evaluation

4.3. Social Distancing Evaluations

4.4. Zone-Based Risk Assessment

- Safe: All people who observed social distancing (green circles).

- High-risk: All people who violated social distancing (red circles).

- Potentially risky: People who moved together (yellow circles) and were identified as coupled. Any two people in a coupled group were treated as one identity as long as they did not breach the social distancing measures with respect to their neighbours.
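The three categories above can be illustrated with a minimal Python sketch. It is not the authors' implementation: the function names `classify_people` and `violation_ratio`, the 2.0 m default threshold, and the input format (bird's-eye-view positions in metres plus a list of already identified coupled pairs) are all assumptions made for illustration. How couples are detected is not covered here, and the sketch assumes that a breach by one member of a couple marks both members as high-risk.

```python
from itertools import combinations
from math import dist


def classify_people(positions, coupled_pairs, min_distance=2.0):
    """Label each person as 'safe', 'high-risk', or 'potentially risky'.

    positions: dict mapping person id -> (x, y) in metres (assumed format).
    coupled_pairs: iterable of (id_a, id_b) pairs already identified as coupled.
    min_distance: assumed social-distancing threshold in metres (hypothetical value).
    """
    coupled = {frozenset(pair) for pair in coupled_pairs}
    in_couple = {pid for pair in coupled for pid in pair}

    violators = set()
    for a, b in combinations(positions, 2):
        if frozenset((a, b)) in coupled:
            continue  # partners count as one identity, so their closeness is ignored
        if dist(positions[a], positions[b]) < min_distance:
            violators.update((a, b))

    # Assumption: if one partner breaches the distance to a neighbour,
    # the whole couple is treated as high-risk.
    for pair in coupled:
        if pair & violators:
            violators.update(pair)

    labels = {}
    for pid in positions:
        if pid in violators:
            labels[pid] = "high-risk"          # red
        elif pid in in_couple:
            labels[pid] = "potentially risky"  # yellow
        else:
            labels[pid] = "safe"               # green
    return labels


def violation_ratio(labels):
    """Ratio of high-risk people to the total number of people in the scene."""
    if not labels:
        return 0.0
    return sum(1 for v in labels.values() if v == "high-risk") / len(labels)
```

For example, calling `classify_people({1: (0, 0), 2: (0.5, 0), 3: (5, 5), 4: (5.5, 5)}, [(1, 2)])` would label people 1 and 2 as potentially risky and people 3 and 4 as high-risk, since only the latter pair is both uncoupled and closer than the assumed threshold.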

5. Conclusions

Supplementary Materials

Author Contributions

Funding

Conflicts of Interest

References

1. World Health Organisation. WHO Corona-Viruses Disease Dashboard. August 2020. Available online: https://covid19.who.int/table (accessed on 22 October 2020).

2. WHO Generals and Directors Speeches. Opening Remarks at the Media Briefing on COVID-19; WHO Generals and Directors Speeches: Geneva, Switzerland, 2020.

3. Olsen, S.J.; Chang, H.L.; Cheung, T.Y.Y.; Tang, A.F.Y.; Fisk, T.L.; Ooi, S.P.L.; Kuo, H.W.; Jiang, D.D.S.; Chen, K.T.; Lando, J.; et al. Transmission of the severe acute respiratory syndrome on aircraft. N. Engl. J. Med. 2003, 349, 2416–2422.

4. Adlhoch, C.; Baka, A.; Ciotti, M.; Gomes, J.; Kinsman, J.; Leitmeyer, K.; Melidou, A.; Noori, T.; Pharris, A.; Penttinen, P. Considerations Relating to Social Distancing Measures in Response to the COVID-19 Epidemic; Technical Report; European Centre for Disease Prevention and Control: Solna Municipality, Sweden, 2020.

5. Ferguson, N.M.; Cummings, D.A.; Fraser, C.; Cajka, J.C.; Cooley, P.C.; Burke, D.S. Strategies for mitigating an influenza pandemic. Nature 2006, 442, 448–452.

6. Thu, T.P.B.; Ngoc, P.N.H.; Hai, N.M. Effect of the social distancing measures on the spread of COVID-19 in 10 highly infected countries. Sci. Total Environ. 2020, 140430.

7. Morato, M.M.; Bastos, S.B.; Cajueiro, D.O.; Normey-Rico, J.E. An Optimal Predictive Control Strategy for COVID-19 (SARS-CoV-2) Social Distancing Policies in Brazil. Ann. Rev. Control 2020.

8. Fong, M.W.; Gao, H.; Wong, J.Y.; Xiao, J.; Shiu, E.Y.; Ryu, S.; Cowling, B.J. Nonpharmaceutical measures for pandemic influenza in nonhealthcare settings—Social distancing measures. Emerg. Infect. Dis. 2020, 26, 976.

9. Ahmedi, F.; Zviedrite, N.; Uzicanin, A. Effectiveness of workplace social distancing measures in reducing influenza transmission: A systematic review. BMC Public Health 2018, 1–13.

10. Australian Government Department of Health. Deputy Chief Medical Officer Report on COVID-19; Department of Health, Social Distancing for Coronavirus: Canberra, Australia, 2020.

11. Nguyen, C.T.; Saputra, Y.M.; Van Huynh, N.; Nguyen, N.T.; Khoa, T.V.; Tuan, B.M.; Nguyen, D.N.; Hoang, D.T.; Vu, T.X.; Dutkiewicz, E.; et al. Enabling and Emerging Technologies for Social Distancing: A Comprehensive Survey. arXiv 2020, arXiv:2005.02816.

12. Punn, N.S.; Sonbhadra, S.K.; Agarwal, S. COVID-19 Epidemic Analysis using Machine Learning and Deep Learning Algorithms. medRxiv 2020.

13. Shi, F.; Wang, J.; Shi, J.; Wu, Z.; Wang, Q.; Tang, Z.; He, K.; Shi, Y.; Shen, D. Review of artificial intelligence techniques in imaging data acquisition, segmentation and diagnosis for COVID-19. IEEE Rev. Biomed. Eng. 2020.

14. Gupta, R.; Pandey, G.; Chaudhary, P.; Pal, S.K. Machine Learning Models for Government to Predict COVID-19 Outbreak. Int. J. Digit. Gov. Res. Pract. 2020, 1.

15. Punn, N.S.; Sonbhadra, S.K.; Agarwal, S. Monitoring COVID-19 social distancing with person detection and tracking via fine-tuned YOLO v3 and Deepsort techniques. arXiv 2020, arXiv:2005.01385.

16. Rezaei, M.; Shahidi, M. Zero-shot Learning and its Applications from Autonomous Vehicles to COVID-19 Diagnosis: A Review. SSRN Mach. Learn. J. 2020, 3, 1–27.

17. Toğaçar, M.; Ergen, B.; Cömert, Z. COVID-19 detection using deep learning models to exploit Social Mimic Optimization and structured chest X-ray images using fuzzy color and stacking approaches. Comput. Biol. Med. 2020, 103805.

18. Ulhaq, A.; Khan, A.; Gomes, D.; Paul, M. Computer Vision For COVID-19 Control: A Survey. Image Video Process. 2020.

19. Nguyen, T.T. Artificial intelligence in the battle against coronavirus (COVID-19): A survey and future research directions. ArXiv Prepr. 2020, 10.

20. Choi, W.; Shim, E. Optimal Strategies for Vaccination and Social Distancing in a Game-theoretic Epidemiological Model. J. Theor. Biol. 2020, 110422.

21. Eksin, C.; Paarporn, K.; Weitz, J.S. Systematic biases in disease forecasting—The role of behavior change. J. Epid. 2019, 96–105.

22. Kermack, W.O.; McKendrick, A.G. A Contributions to the Mathematical Theory of Epidemics—I; The Royal Society Publishing: London, UK, 1991.

23. Heffernan, J.M.; Smith, R.J.; Wahl, L.M. Perspectives on the basic reproductive ratio. J. R. Soc. Interface 2005, 2, 281–293.

24. Reluga, T.C. Game theory of social distancing in response to an epidemic. PLoS Comput. Biol. 2010, e1000793.

25. Ainslie, K.E.; Walters, C.E.; Fu, H.; Bhatia, S.; Wang, H.; Xi, X.; Baguelin, M.; Bhatt, S.; Boonyasiri, A.; Boyd, O.; et al. Evidence of initial success for China exiting COVID-19 social distancing policy after achieving containment. Wellcome Open Res. 2020, 5.

26. Vidal-Alaball, J.; Acosta-Roja, R.; Pastor Hernández, N.; Sanchez Luque, U.; Morrison, D.; Narejos Pérez, S.; Perez-Llano, J.; Salvador Vèrges, A.; López Seguí, F. Telemedicine in the face of the COVID-19 pandemic. Aten. Primaria 2020, 52, 418–422.

27. Sonbhadra, S.K.; Agarwal, S.; Nagabhushan, P. Target specific mining of COVID-19 scholarly articles using one-class approach. J. Chaos Solitons Fractals 2020, 140.

28. Punn, N.S.; Agarwal, S. Automated diagnosis of COVID-19 with limited posteroanterior chest X-ray images using fine-tuned deep neural networks. arXiv 2020, arXiv:2004.11676.

29. Jhunjhunwala, A. Role of Telecom Network to Manage COVID-19 in India: Aarogya Setu. Trans. Indian Natl. Acad. Eng. 2020, 1–5.

30. Robakowska, M.; Tyranska-Fobke, A.; Nowak, J.; Slezak, D.; Zuratynski, P.; Robakowski, P.; Nadolny, K.; Ładny, J. The use of drones during mass events. Disaster Emerg. Med. J. 2017, 2, 129–134.

31. Harvey, A.; LaPlace, J. Origins, Ethics, and Privacy Implications of Publicly Available Face Recognition Image Datasets; MegaPixels: London, UK, 2019.

32. Xin, T.; Guo, B.; Wang, Z.; Wang, P.; Lam, J.C.; Li, V.; Yu, Z. FreeSense. ACM Interact. Mob. Wearable Ubiq. Technol. 2018, 2, 1–23.

33. Hossain, F.A.; Lover, A.A.; Corey, G.A.; Reich, N.G.; Rahman, T. FluSense: A contactless syndromic surveillance platform for influenza-like illness in hospital waiting areas. In Proceedings of the ACM on Interactive, Mobile, Wearable and Ubiquitous Technologies, 18 March 2020; pp. 1–28.

34. Sindagi, V.A.; Patel, V.M. A survey of recent advances in cnn-based single image crowd counting and density estimation. Pattern Recognit. Lett. 2018, 107, 3–16.

35. Brighente, A.; Formaggio, F.; Di Nunzio, G.M.; Tomasin, S. Machine Learning for In-Region Location Verification in Wireless Networks. IEEE J. Sel. Areas Commun. 2019, 37, 2490–2502.

36. Liu, L.; Ouyang, W.; Wang, X.; Fieguth, P.; Chen, J.; Liu, X.; Pietikäinen, M. Deep learning for generic object detection: A survey. Int. J. Comput. Vis. 2020, 128, 261–318.

37. Rezaei, M.; Sarshar, M.; Sanaatiyan, M.M. Toward next generation of driver assistance systems: A multimodal sensor-based platform. In Proceedings of the 2010 2nd International Conference on Computer and Automation Engineering (ICCAE), Singapore, 26–28 February 2010; Volume 4, pp. 62–67.

38. Sabzevari, R.; Shahri, A.; Fasih, A.; Masoumzadeh, S.; Ghahroudi, M.R. Object detection and localization system based on neural networks for Robo-Pong. In Proceedings of the 2008 5th International Symposium on Mechatronics and Its Applications, Amman, Jordan, 27–29 May 2008; pp. 1–6.

39. Nguyen, D.T.; Li, W.; Ogunbona, P.O. Human detection from images and videos: A survey. Int. J. Pattern Recognit. 2016, 51, 148–175.

40. Serpush, F.; Rezaei, M. Complex Human Action Recognition in Live Videos Using Hybrid FR-DL Method. arXiv 2020, arXiv:2007.02811.

41. Gawande, U.; Hajari, K.; Golhar, Y. Pedestrian Detection and Tracking in Video Surveillance System: Issues, Comprehensive Review, and Challenges. In Recent Trends in Computational Intelligence; Intech Open Publisher: London, UK, 2020.

42. Wojke, N.; Bewley, A.; Paulus, D. Simple online and realtime tracking with a deep association metric. In Proceedings of the IEEE International Conference on Image Processing (ICIP), Beijing, China, 17–20 September 2017; pp. 3645–3649.

43. Khandelwal, P.; Khandelwal, A.; Agarwal, S.; Thomas, D.; Xavier, N.; Raghuraman, A. Using Computer Vision to enhance Safety of Workforce in Manufacturing in a Post COVID World. Comput. Vis. Pattern Recognit. 2020.

44. Sandler, M.; Howard, A.; Zhu, M.; Zhmoginov, A.; Chen, L. MobileNetV2: Inverted residuals and linear bottlenecks. In Proceedings of the IEEE Conference on Computer Vision and Pattern Recognition (CVPR), Salt Lake City, UT, USA, 18–22 June 2018; pp. 4510–4520.

45. Yang, D.; Yurtsever, E.; Renganathan, V.; Redmill, K.; Özgüner, U. A Vision-based Social Distancing and Critical Density Detection System for COVID-19. Image Video Process. 2020, arXiv:2007.03578.

46. Girshick, R.; Donahue, J.; Darrell, T.; Malik, J. Rich feature hierarchies for accurate object detection and semantic segmentation. In Proceedings of the IEEE Conference on Computer Vision and Pattern Recognition (CVPR), Columbus, OH, USA, 23–28 June 2014; pp. 580–587.

47. Girshick, R. Fast R-CNN. In Proceedings of the 2015 IEEE International Conference on Computer Vision, Las Condes, Chile, 11–18 December 2015; pp. 1440–1448.

48. Ren, S.; He, K.; Girshick, R.; Sun, J. Faster R-CNN: Towards Real-Time Object Detection with Region Proposal Networks. IEEE Trans. Pattern Anal. Mach. Intell. 2017, 39, 1137–1149.

49. Liu, W.; Anguelov, D.; Erhan, D.; Szegedy, C.; Reed, S.; Fu, C.; Berg, A. SSD: Single Shot MultiBox Detector. Eur. Conf. Comput. Vis. 2016, 21–37.

50. Redmon, J.; Divvala, S.; Girshick, R.; Farhadi, A. You only look once: Unified, real-time object detection. In Proceedings of the IEEE Conference on Computer Vision and Pattern Recognition (CVPR), Las Vegas, NV, USA, 26 June–1 July 2016; pp. 779–788.

51. Redmon, J.; Farhadi, A. YOLO9000: Better, faster, stronger. In Proceedings of the IEEE Conference on Computer Vision and Pattern Recognition (CVPR), Honolulu, HI, USA, 21–26 July 2017; pp. 6517–6525.

52. Redmon, J.; Farhadi, A. YOLOv3: An Incremental Improvement. arXiv 2018, arXiv:1804.02767.

53. Bochkovskiy, A.; Wang, C.Y.; Liao, H.Y.M. YOLOv4: Optimal Speed and Accuracy of Object Detection. arXiv 2020, arXiv:2004.10934.

54. Chen, X.; Fang, H.; Lin, T.; Vedantam, R.; Dollar, P.; Zitnick, C. Microsoft COCO Captions: Data Collection and Evaluation Server. arXiv 2015, arXiv:1504.00325.

55. Everingham, M.; Van Gool, L.; Williams, C.; Winn, J.; Zisserman, A. The PASCAL Visual Object Classes Challenge 2010 (VOC2010) Results. Int. J. Comput. Vis. 2010, 88, 303–338.

56. DeVries, T.; Taylor, G.W. Improved regularization of convolutional neural networks with cutout. arXiv 2017, arXiv:1708.04552.

57. Nair, V.; Hinton, G.E. Rectified Linear Units Improve Restricted Boltzmann Machines; ICML: Haifa, Israel, 2010.

58. Simonyan, K.; Zisserman, A. Very deep convolutional networks for large-scale image recognition. arXiv 2014, arXiv:1409.1556.

59. He, K.; Zhang, X.; Ren, S.; Sun, J. Spatial Pyramid Pooling in Deep Convolutional Networks for Visual Recognition. In European Conference on Computer Vision (ECCV); Springer Science+Business Media: Zurich, Switzerland, 2014; pp. 346–361.

60. Ng, A.Y. Feature selection, L1 vs. L2 regularization, and rotational invariance. In Proceedings of the Twenty-First International Conference on Machine Learning, New York, NY, USA, 4 July 2004; p. 78.

61. Zhang, H.; Cisse, M.; Dauphin, Y.N.; Lopez-Paz, D. mixup: Beyond empirical risk minimization. arXiv 2017, arXiv:1710.09412.

62. Maas, A.L.; Hannun, A.Y.; Ng, A.Y. Rectifier nonlinearities improve neural network acoustic models. Proc. ICML 2013, 30, 3.

63. He, K.; Zhang, X.; Ren, S.; Sun, J. Deep residual learning for image recognition. In Proceedings of the IEEE Conference on Computer Vision and Pattern Recognition (CVPR), Las Vegas, NV, USA, 27–30 June 2016; pp. 770–778.

64. Chen, L.C.; Papandreou, G.; Kokkinos, I.; Murphy, K.; Yuille, A.L. Deeplab: Semantic image segmentation with deep convolutional nets, atrous convolution, and fully connected crfs. IEEE Trans. Pattern Anal. Mach. Intell. 2017, 40, 834–848.

65. Srivastava, N.; Hinton, G.; Krizhevsky, A.; Sutskever, I.; Salakhutdinov, R. Dropout: A simple way to prevent neural networks from overfitting. J. Mach. Learn. Res. 2014, 15, 1929–1958.

66. Yun, S.; Han, D.; Oh, S.J.; Chun, S.; Choe, J.; Yoo, Y. Cutmix: Regularization strategy to train strong classifiers with localizable features. In Proceedings of the IEEE International Conference on Computer Vision, Seoul, Korea, 27–28 October 2019; pp. 6023–6032.

67. He, K.; Zhang, X.; Ren, S.; Sun, J. Delving deep into rectifiers: Surpassing human-level performance on imagenet classification. In Proceedings of the IEEE International Conference on Computer Vision, Las Condes, Chile, 11–18 December 2015; pp. 1026–1034.

68. Du, X.; Lin, T.Y.; Jin, P.; Ghiasi, G.; Tan, M.; Cui, Y.; Le, Q.V.; Song, X. SpineNet: Learning scale-permuted backbone for recognition and localization. In Proceedings of the 2020 IEEE CVF Conference on Computer Vision and Pattern Recognition Workshops (CVPRW), Seattle, WA, USA, 14–19 June 2020; pp. 11592–11601.

69. Liu, S.; Qi, L.; Qin, H.; Shi, J.; Jia, J. Path aggregation network for instance segmentation. In Proceedings of the IEEE Conference on Computer Vision and Pattern Recognition (CVPR), Salt Lake City, UT, USA, 18–22 June 2018; pp. 8759–8768.

70. Larsson, G.; Maire, M.; Shakhnarovich, G. Fractalnet: Ultra-deep neural networks without residuals. arXiv 2016, arXiv:1605.07648.

71. Lin, T.Y.; Goyal, P.; Girshick, R.; He, K.; Dollár, P. Focal loss for dense object detection. In Proceedings of the 2017 IEEE International Conference on Computer Vision (ICCV), Venice, Italy, 22–29 October 2017; pp. 2980–2988.

72. Howard, A.G.; Zhu, M.; Chen, B.; Kalenichenko, D.; Wang, W.; Weyand, T.; Andreetto, M.; Adam, H. Mobilenets: Efficient convolutional neural networks for mobile vision applications. arXiv 2017, arXiv:1704.04861.

73. Wang, C.Y.; Mark Liao, H.Y.; Wu, Y.H.; Chen, P.Y.; Hsieh, J.W.; Yeh, I.H. CSPNet: A new backbone that can enhance learning capability of CNN. In Proceedings of the 2020 IEEE CVF Conference on Computer Vision and Pattern Recognition Workshops (CVPRW), Seattle, WA, USA, 14–19 June 2020; pp. 390–391.

74. Lin, T.Y.; Dollár, P.; Girshick, R.; He, K.; Hariharan, B.; Belongie, S. Feature pyramid networks for object detection. In Proceedings of the 2017 IEEE Conference on Computer Vision and Pattern Recognition (CVPR), Honolulu, HI, USA, 21–26 July 2017; pp. 2117–2125.

75. Tompson, J.; Goroshin, R.; Jain, A.; LeCun, Y.; Bregler, C. Efficient object localization using convolutional networks. In Proceedings of the 2015 IEEE Conference on Computer Vision and Pattern Recognition (CVPR), Boston, MA, USA, 7–12 June 2015; pp. 648–656.

76. Pang, J.; Chen, K.; Shi, J.; Feng, H.; Ouyang, W.; Lin, D. Libra r-cnn: Towards balanced learning for object detection. In Proceedings of the IEEE Conference on Computer Vision and Pattern Recognition, Long Beach, CA, USA, 16–20 June 2019; pp. 821–830.

77. Klambauer, G.; Unterthiner, T.; Mayr, A.; Hochreiter, S. Self-normalizing neural networks. Adv. Neural Inf. Process. Syst. 2017, 971–980.

78. Tan, M.; Pang, R.; Le, Q.V. Efficientdet: Scalable and efficient object detection. In Proceedings of the 2020 IEEE CVF Conference on Computer Vision and Pattern Recognition Workshops (CVPRW), Seattle, WA, USA, 14–19 June 2020; pp. 10781–10790.

79. Law, H.; Deng, J. Cornernet: Detecting objects as paired keypoints. In Proceedings of the 2018 15th European Conference on Computer Vision (ECCV), Munich, Germany, 8–14 September 2018; pp. 734–750.

80. He, K.; Gkioxari, G.; Dollár, P.; Girshick, R. Mask r-cnn. In Proceedings of the IEEE International Conference on Computer Vision, Venice, Italy, 22–29 October 2017; pp. 2961–2969.

81. Ramachandran, P.; Zoph, B.; Le, Q.V. Searching for activation functions. arXiv 2017, arXiv:1710.05941.

82. Tan, M.; Le, Q. EfficientNet: Rethinking model scaling for convolutional neural networks. In Proceedings of the Thirty-Sixth International Conference on Machine Learning (ICML), Long Beach, CA, USA, 10–15 June 2018; pp. 1–10.

83. Liu, S.; Huang, D.; Wang, Y. Learning spatial fusion for single-shot object detection. arXiv 2019, arXiv:1911.09516.

84. Rashwan, A.; Kalra, A.; Poupart, P. Matrix Nets: A new deep architecture for object detection. In Proceedings of the IEEE International Conference on Computer Vision Workshops, Seoul, Korea, 27 October–2 November 2019.

85. Duan, K.; Bai, S.; Xie, L.; Qi, H.; Huang, Q.; Tian, Q. Centernet: Keypoint triplets for object detection. In Proceedings of the IEEE International Conference on Computer Vision, Seoul, Korea, 27 October–2 November 2019; pp. 6569–6578.

86. Misra, D. Mish: A self regularized non-monotonic neural activation function. arXiv 2019, arXiv:1908.08681.

87. Liu, S.; Huang, D.; Wang, Y. Receptive field block net for accurate and fast object detection. In Proceedings of the European Conference on Computer Vision (ECCV), Munich, Germany, 8–14 September 2018; pp. 385–400.

88. Dai, J.; Li, Y.; He, K.; Sun, J. R-fcn: Object detection via region-based fully convolutional networks. Adv. Neural Inf. Process. Syst. 2016, 379–387.

89. Yang, Z.; Liu, S.; Hu, H.; Wang, L.; Lin, S. Reppoints: Point set representation for object detection. In Proceedings of the IEEE International Conference on Computer Vision, Seoul, Korea, 27 October–2 November 2019; pp. 9657–9666.

90. Szegedy, C.; Vanhoucke, V.; Ioffe, S.; Shlens, J.; Wojna, Z. Rethinking the inception architecture for computer vision. In Proceedings of the IEEE Conference on Computer Vision and Pattern Recognition, Las Vegas, NV, USA, 26 June–1 July 2016; pp. 2818–2826.

91. Szegedy, C.; Ioffe, S.; Vanhoucke, V.; Alemi, A. Inception-v4, inception-resnet and the impact of residual connections on learning. arXiv 2016, arXiv:1602.07261.

92. Zhao, Q.; Sheng, T.; Wang, Y.; Tang, Z.; Chen, Y.; Cai, L.; Ling, H. M2det: A single-shot object detector based on multi-level feature pyramid network. In Proceedings of the AAAI Conference on Artificial Intelligence, Honolulu, HI, USA, 27 January–1 February 2019; Volume 33, pp. 9259–9266.

93. Tian, Z.; Shen, C.; Chen, H.; He, T. Fcos: Fully convolutional one-stage object detection. In Proceedings of the IEEE International Conference on Computer Vision, Seoul, Korea, 27 October–2 November 2019; pp. 9627–9636.

94. Ghiasi, G.; Lin, T.Y.; Le, Q.V. Nas-fpn: Learning scalable feature pyramid architecture for object detection. In Proceedings of the IEEE Conference on Computer Vision and Pattern Recognition, Long Beach, CA, USA, 16–20 June 2019; pp. 7036–7045.

95. Yao, Z.; Cao, Y.; Zheng, S.; Huang, G.; Lin, S. Cross-Iteration Batch Normalization. Mach. Learn. 2020, arXiv:2002.05712.

96. Huang, G.; Liu, Z.; Van Der Maaten, L.; Weinberger, K.Q. Densely connected convolutional networks. In Proceedings of the IEEE Conference on Computer Vision and Pattern Recognition (CVPR), Honolulu, HI, USA, 21–26 July 2017; pp. 2261–2269.

97. Huang, Z.; Wang, J.; Fu, X.; Yu, T.; Guo, Y.; Wang, R. DC-SPP-YOLO: Dense connection and spatial pyramid pooling based YOLO for object detection. Inf. Sci. 2020.

98. Woo, S.; Park, J.; Lee, J.Y.; Kweon, I.S. CBAM: Convolutional Block Attention Module. Eur. Conf. Comput. Vision 2018, 4–19.

99. Sharifi, A.; Zibaei, A.; Rezaei, M. DeepHAZMAT: Hazardous Materials Sign Detection and Segmentation with Restricted Computational Resources. Eng. Res. 2020.

100. Zheng, Z.; Wang, P.; Liu, W.; Li, J.; Ye, R.; Ren, D. Distance-IoU loss: Faster and better learning for bounding box regression. In Proceedings of the AAAI Conference on Artificial Intelligence, Honolulu, HI, USA, 27 January–1 February 2019; pp. 1–8.

101. Ghiasi, G.; Lin, T.Y.; Le, Q.V. DropBlock: A regularization method for convolutional networks. arXiv 2018, arXiv:1810.12890.

102. Müller, R.; Kornblith, S.; Hinton, G.E. When does label smoothing help? Adv. Neural Inf. Process. Syst. 2019, arXiv:1906.02629, 4694–4703.

103. Bewley, A.; Ge, Z.; Ott, L.; Ramos, F.; Upcroft, B. Simple online and realtime tracking. In Proceedings of the 2016 IEEE International Conference on Image Processing (ICIP), Phoenix, AZ, USA, 25–28 September 2016; pp. 3464–3468.

104. Rezaei, M.; Klette, R. Computer Vision for Driver Assistance; Springer International Publishing: Cham, Switzerland, 2017.

105. Saleem, N.H.; Chien, H.J.; Rezaei, M.; Klette, R. Effects of Ground Manifold Modeling on the Accuracy of Stixel Calculations. IEEE Trans. Intell. Transp. Syst. 2018, 20, 3675–3687.

106. Russakovsky, O.; Deng, J.; Su, H.; Krause, J.; Satheesh, S.; Ma, S.; Huang, Z.; Karpathy, A.; Khosla, A.; Bernstein, M.; et al. ImageNet Large Scale Visual Recognition Challenge. Int. J. Comput. Vis. 2015, 115, 211–252.

107. Kuznetsova, A.; Rom, H.; Alldrin, N.; Uijlings, J.; Krasin, I.; Pont-Tuset, J.; Kamali, S.; Popov, S.; Malloci, M.; Kolesnikov, A.; et al. The open images dataset v4. Int. J. Comput. Vis. 2020, 1–26.

108. Loshchilov, I.; Hutter, F. SGDR: Stochastic gradient descent with warm restarts. In Proceedings of the International Conference on Learning Representations, San Juan, Puerto Rico, 2–4 May 2016; pp. 1–16.

109. Chen, K.; Loy, C.C.; Gong, S.; Xiang, T. Feature mining for localised crowd counting. BMVC 2012, 1, 1–11.

110. Zhou, B.; Wang, X.; Tang, X. Understanding collective crowd behaviors: Learning a mixture model of dynamic pedestrian-agents. In Proceedings of the 2012 IEEE Conference on Computer Vision and Pattern Recognition, Providence, RI, USA, 16–21 June 2012; pp. 2871–2878.

| Input | Detection Core → | | | | Head → Output | |
|---|---|---|---|---|---|---|
| Augmentation | Activation | Backbone | Neck | Regularisation | Dense | Sparse |
| CutOut [56] | ReLU [57] | VGG [58] | SPP [59] | L1, L2 [60] | YOLO [50] | R-CNN [46] |
| MixUp [61] | Leaky-ReLU [62] | ResNet [63] | ASPP [64] | DropOut (DO) [65] | SSD [49] | Fast-RCNN [47] |
| CutMix [66] | Param-ReLU [67] | SpineNet [68] | PAN [69] | DropPath [70] | RetinaNet [71] | Faster-RCNN [48] |
| Mosaic [53] | ReLU6 [72] | CSPResNeXt50 [73] | FPN [74] | Spatial DO [75] | RPN [48] | Libra R-CNN [76] |
| | SELU [77] | CSPDarknet53 [73] | BiFPN [78] | DropBlock [53] | CornerNet [79] | Mask R-CNN [80] |
| | Swish [81] | EfficientNet [82] | ASFF [83] | | MatrixNet [84] | CenterNet [85] |
| | Mish [86] | Darknet53 [52] | RFB [87] | | R-FCN [88] | RepPoints [89] |
| | | Inception [90,91] | SFAM [92] | | FCOS [93] | |
| | | | NAS-FPN [94] | | | |

| Backbone Model | Input Resolution | Number of Parameters | Speed (fps) |
|---|---|---|---|
| CSPResNeXt50 | | 20.6 M | 62 |
| CSPDarknet53 | | 27.6 M | 66 |
| EfficientNet-B3 | | 12.0 M | 26 |

| Method | Backbone | Precision (%) | Recall (%) | FPS |
|---|---|---|---|---|
| DeepSOCIAL- | CSPResNeXt50-PANet-SPP-SAM | 99.8 | 96.7 | 23.8 |
| DeepSOCIAL- | CSPDarkNet53-PANet-SPP | 99.5 | 97.1 | 24.1 |
| DeepSOCIAL- | CSPResNeXt50-PANet-SPP | 99.6 | 96.7 | 23.8 |
| DeepSOCIAL (DS) | CSPDarkNet53-PANet-SPP-SAM | 99.8 | 97.6 | 24.1 |
| YOLOv3 | DarkNet53 | 84.6 | 68.2 | 23 |
| SSD | VGG-16 | 69.1 | 60.5 | 10 |
| Faster R-CNN | ResNet-50 | 96.9 | 83.0 | 3 |

Publisher’s Note: MDPI stays neutral with regard to jurisdictional claims in published maps and institutional affiliations.

© 2020 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).

Rezaei, M.; Azarmi, M. DeepSOCIAL: Social Distancing Monitoring and Infection Risk Assessment in COVID-19 Pandemic. Appl. Sci. 2020, 10, 7514. https://doi.org/10.3390/app10217514
