1. Introduction

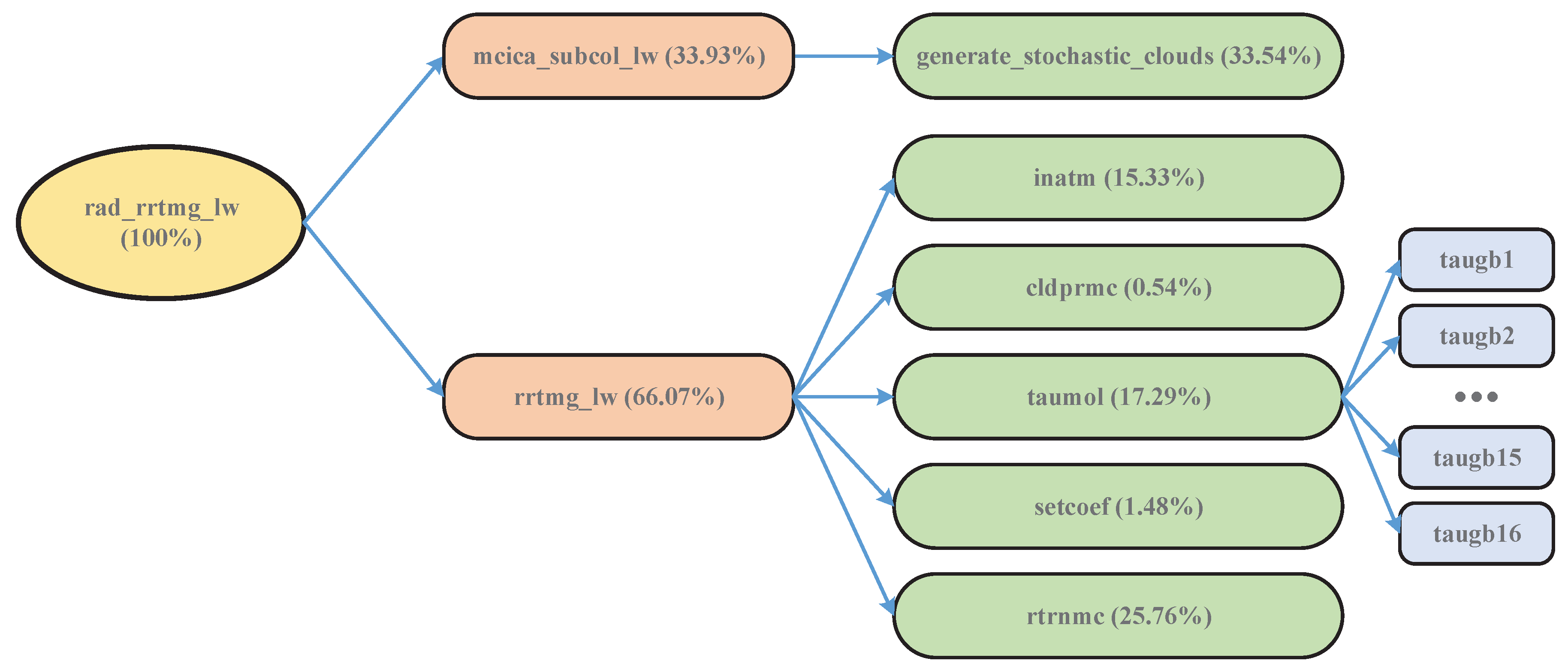

The radiative process, one of the important atmospheric physics processes, is often used for calculating atmospheric radiative fluxes and heating rates [

1]. To simulate the radiative process, several radiative transfer models were developed, such as the line-by-line radiative transfer model (LBLRTM) [

2], and the rapid radiative transfer model (RRTM) [

3]. The RRTM that is a validated model computing longwave and shortwave radiative fluxes and heating rates, uses the correlated-k method to provide the required accuracy and computing efficiency [

4], but it still demands enormous computing resources for long-term climatic simulation. To address this issue, as an accelerated version of RRTM, the rapid radiative transfer model for general circulation models (RRTMG) provides improved efficiency with minimal loss of accuracy for atmospheric general circulation models (GCMs) [

5]. As a coupled climate system model comprising eight separate component models and one central coupler, the Chinese Academy of Sciences–Earth System Model (CAS-ESM) [

6,

7] was developed by the Institute of Atmospheric Physics (IAP) of Chinese Academy of Sciences (CAS). As the atmospheric component model of the CAS-ESM, the IAP Atmospheric General Circulation Model Version 4.0 (IAP AGCM4.0) [

8,

9] used the RRTMG as its radiative parameterization scheme.

Radiative transfer is relatively time-consuming [

10,

11]. The RRTMG improves the computing efficiency of radiative transfer, but it is still so computationally expensive that it cannot be performed with shorter time steps or finer grid resolutions in operational models [

12]. To greatly improve the computational performance, it is beneficial to use high-performance computing (HPC) technology to accelerate the RRTMG.

At present, HPC is widely employed in earth climate system models [

13,

14,

15]. With the rapid development of HPC technology, due to the features of multithreaded many-core processor, high parallelism, high memory bandwidth, and low cost, the modern graphics processing unit (GPU) has substantially outpaced its central processing unit (CPU) counterparts in dealing with data- and computing-intensive problems [

16,

17,

18,

19]. Currently, increasing numbers of atmospheric applications were accelerated by the GPUs [

20,

21]. For example, the WSM5 microphysics scheme from the Weather Research and Forecasting (WRF) model obtained a 206× speedup on a GPU [

22].

In view of the booming GPU capability, our previous study used a GPU for accelerating the RRTMG longwave radiation scheme (RRTMG_LW). In this study, the GPU-based acceleration algorithms with one-dimensional (1D) and two-dimensional (2D) domain decompositions for the RRTMG_LW were proposed [

23]. However, the RRTMG_LW did not achieve an excellent speedup on a GPU. Therefore, the present paper focuses on the implementation of better GPU-based accelerating methods for the RRTMG_LW. To further accelerate the RRTMG_LW in the CAS-ESM, a GPU-based acceleration algorithm in the

g-

dimension is proposed. The proposed algorithm enables massively parallel calculation of the RRTMG_LW in the

g-

dimension. Then, an optimized version of the RRTMG_LW is built by successfully adopting GPU technology, resulting in G-RRTMG_LW. The experimental results demonstrated that the G-RRTMG_LW on one K40 GPU obtained a 30.98× speedup.

The main contributions of this study are as follows:

- (1)

To further accelerate the RRTMG_LW with a massively parallel computing technology, a GPU-based accelerating algorithm in the g- dimension is proposed. The aim is to explore the parallelization of the RRTMG_LW in the g- dimension. The proposed algorithm adapts well to the advances in multi-threading computing technology of GPUs and can be generalized to accelerate the RRTMG shortwave radiation scheme (RRTMG_SW).

- (2)

The G-RRTMG_LW was implemented in CUDA (NVIDIA’s Compute Unified Device Architecture) Fortran and shows excellent computational capability. To some extent, the more efficient computation of the G-RRTMG_LW supports real-time computing of the CAS-ESM. Moreover, the heterogeneous computing of the CAS-ESM is implemented.

The remainder of this paper is organized as follows.

Section 2 presents representative approaches that aim at improving the computational efficiency of physical parameterization schemes.

Section 3 introduces the RRTMG_LW model and GPU environment.

Section 4 details the CUDA-based parallel algorithm in the

g-

dimension for the RRTMG_LW.

Section 5 describes the parallelization implementation of the G-RRTMG_LW.

Section 6 evaluates the performance of the G-RRTMG_LW in terms of runtime efficiency and speedup, and discusses some of the problems arising in the experiment. The last section concludes the paper with a summary and proposal for future work.

2. Related Work

There were many successful attempts at using GPUs to accelerate physical parameterization schemes and climatic system models. This section describes the most salient work along this direction.

The WRF Goddard shortwave radiance was accelerated on GPUs using CUDA C [

24]. Via double precision arithmetic and with data I/O, the shortwave radiance obtained a 116× speedup on two NVIDIA GTX 590 s [

25]. The WRF five-layer thermal diffusion scheme was accelerated using CUDA C, and a 311× speedup was obtained on one Tesla K40 GPU [

26]. The WRF Single Moment 6-class microphysics scheme was also accelerated using CUDA C, and obtained a 216× speedup on one Tesla K40 GPU [

27].

The WRF long-wave RRTM code was ported to GPUs using CUDA Fortran [

28]. The RRTM on Tesla C1060 GPUs attained a 10× speedup [

29]. The RRTM longwave radiation scheme (RRTM_LW) on the GTX480 obtained a 27.6× speedup compared with the baseline wall-clock time [

1]. The CUDA Fortran version of the RRTM_LW in the GRAPES_Meso model was developed. It adopted some optimization methods for enhancing the computational efficiency, and obtained a 14.3× speedup [

10].

The Fortran code of the WRF RRTMG_LW was rewritten in the C programming language, and then its GPU parallelization was implemented using CUDA C. With I/O transfer, the RRTMG_LW achieved a 123× speedup on one Tesla K40 GPU [

30]. The Fortran code of the RRTMG_SW was also rewritten in the C programming language. Furthermore, the RRTMG_SW achieved a 202× speedup on one Tesla K40 GPU compared with its single-threaded Fortran counterpart running on Intel Xeon E5-2603 [

31].

In a significantly different approach from the previous work, this study first proposes a new and detailed parallel algorithm in the g- dimension for the CAS-ESM RRTMG_LW. Rewriting the original Fortran code of the RRTMG_LW would take considerable time, so CUDA Fortran rather than CUDA C was adopted in the parallelization implementation. The major concerns addressed by the proposed algorithm include the following: (a) runtime efficiency, and (b) common processes and technologies of GPU parallelization.

4. GPU-Enabled Acceleration Algorithm

In this section, the 2D acceleration algorithm of the RRTMG_LW is introduced. Then, the parallel strategy of the RRTMG_LW in the g- dimension is described. Finally, a CUDA-based acceleration algorithm is proposed.

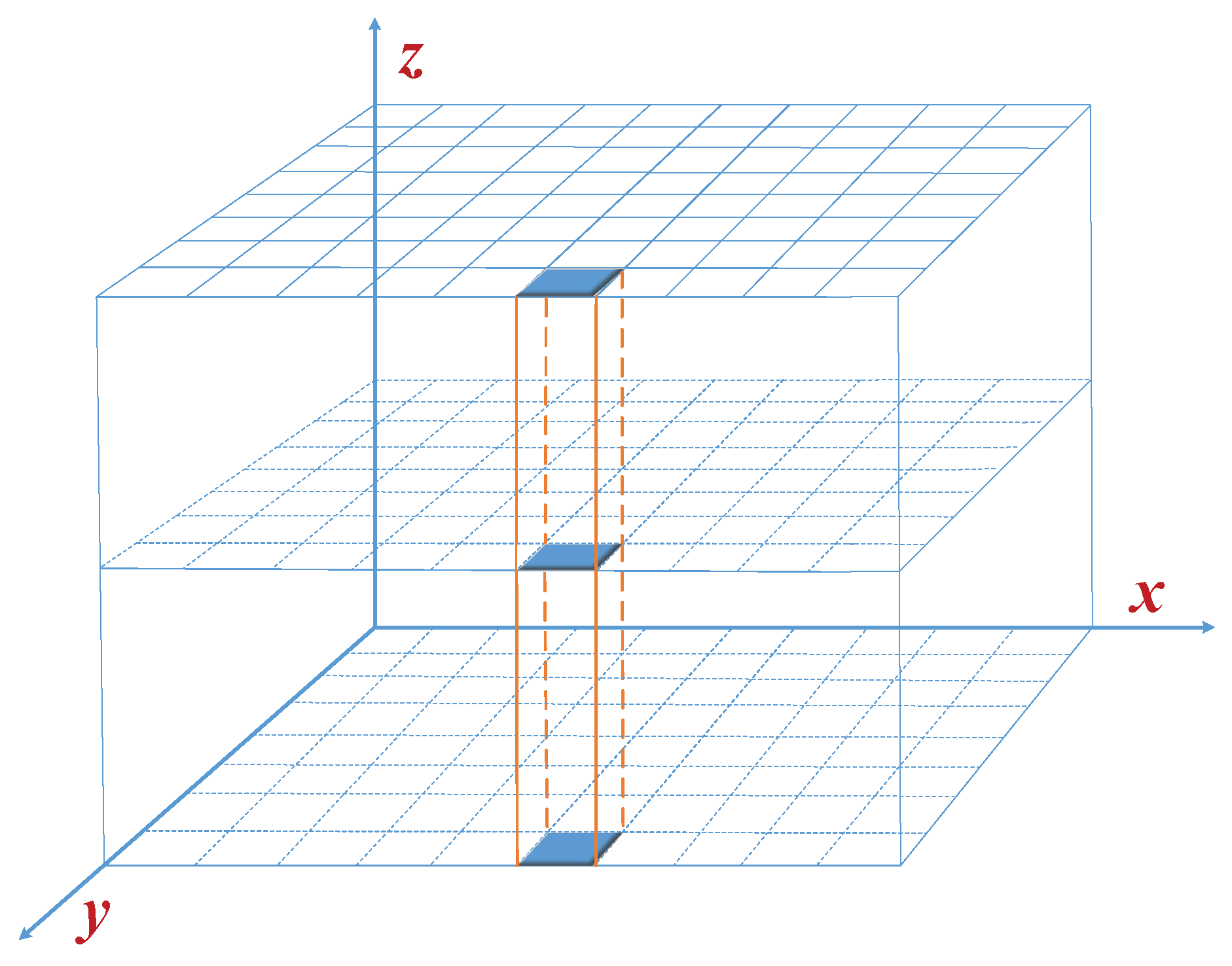

4.1. 2D Acceleration Algorithm

The RRTMG_LW uses a collection of three-dimensional (3D) cells to describe the atmosphere. Its 1D acceleration algorithm with a domain decomposition in the horizontal direction assigns the workload of one “column,” shown in

Figure 2, to each CUDA thread. Here, the

x-axis represents longitude, the

y-axis represents latitude, and the

z-axis represents the vertical direction. The RRTMG_LW in spatial structure has three dimensions, but the

x and

y dimensions in its CUDA code implementation are merged into one dimension to easily write the code. In the CAS-ESM, the IAP AGCM4.0 has a

horizontal resolution and 51 levels in the vertical direction, so the RRTMG_LW has

×

=

horizontal grid points. Thus, the first dimension of 3D arrays in its code has

elements at most.

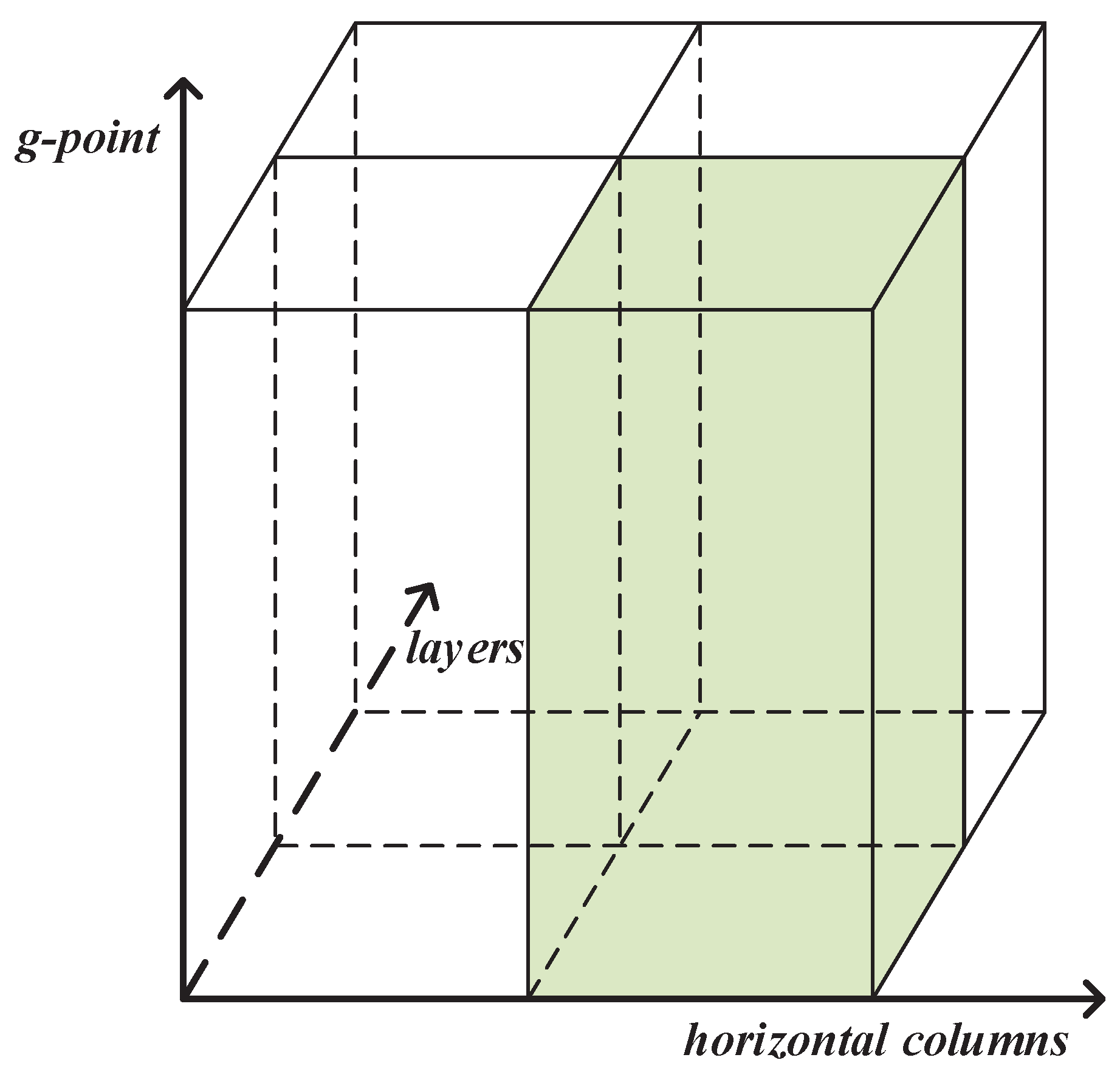

To make full use of the GPU performance, the 2D acceleration algorithm with a domain decomposition in the horizontal and vertical directions for the RRTMG_LW was proposed in our previous study [

23].

Figure 3 illustrates the 2D domain decomposition for the RRTMG_LW accelerated on the GPU. The 2D acceleration algorithm is illustrated in Algorithm 2. Because of data dependency, the acceleration of

and

in the vertical direction is unsuitable, while

,

, and

are able to accelerate in the vertical direction. In the 1D acceleration,

n is the number of threads in each thread block, while

is the number of blocks used in each kernel grid. In the 2D acceleration,

defines the number of threads used in each thread block of the

x,

y, and

z dimensions by the derived type

. Furthermore,

defines the number of blocks in the

x,

y, and

z dimensions by

.

| Algorithm 2: 2D acceleration algorithm. |

subroutine rrtmg_lw_d2(parameters)Copy input data to GPU device //Call inatm_d1 with 2D decomposition callinatm_d1(parameters) //Call inatm_d2 with 2D decomposition callinatm_d2(parameters) //Call inatm_d3 with 1D decomposition callinatm_d3(parameters) //Call inatm_d4 with 2D decomposition callinatm_d4(parameters) //Call cldprmc_d with 1D decomposition callcldprmc_d(parameters) //Call setcoef_d1 with 2D decomposition callsetcoef_d1(parameters) //Call setcoef_d2 with 1D decomposition callsetcoef_d2(parameters) //Call taumol_d with 2D decomposition calltaumol_d(parameters) //Call rtrnmc_d with 1D decomposition callrtrnmc_d(parameters) Copy result to host //Judge whether atmospheric horizontal profile data is completed if it is not completed goto 1

end subroutine |

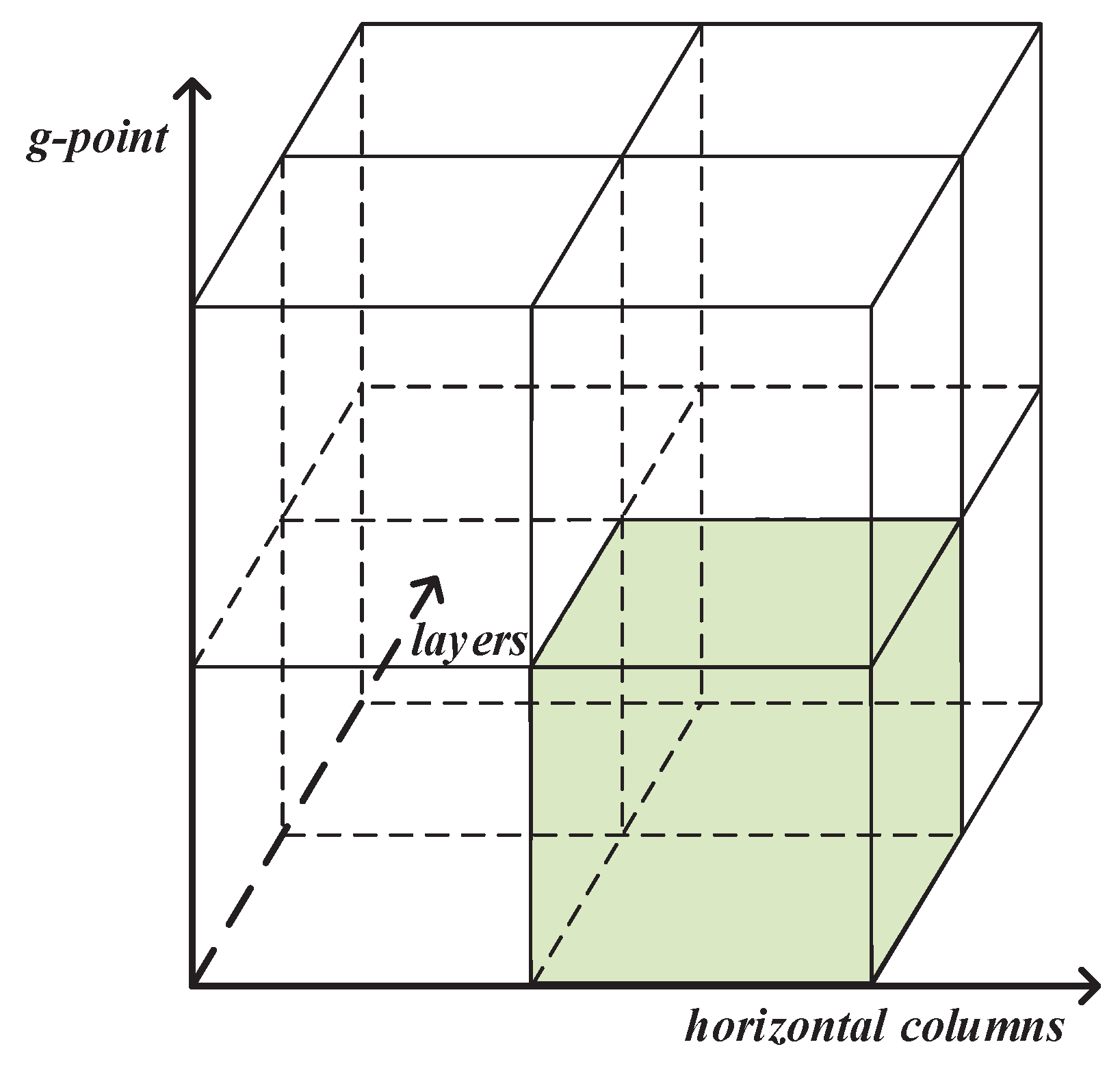

4.2. Parallel Strategy

In the RRTMG_LW, the total number of g points, , is 140. Therefore, there are iterative computations for each g point in , , and . For example, the computation of 140 g points is executed by a do-loop in the GPU-based acceleration implementation of 1D , as illustrated in Algorithm 3. To achieve more fine-grained parallelism, 140 CUDA threads can be assigned to run the kernels , , and . Thus, the parallel strategy is further accelerating , , and in the g- dimension. Moreover, the parallelization between the kernels should also be considered in addition to that within the kernels.

It is noteworthy that the first dimension of 3D arrays in the CUDA code represents the number of horizontal columns, the second dimension represents the number of model layers, and the third dimension represents the number of g points. If one GPU is applied, in theory, × × × = CUDA threads will be required for each kernel in the new parallel method.

4.3. Acceleration Algorithm

Figure 4 illustrates the domain decomposition in the

g-

dimension for the RRTMG_LW accelerated on the GPU. The acceleration algorithm in the

g-

dimension for the RRTMG_LW, is illustrated in Algorithm 4. The algorithm is described as follows:

- (1)

In the acceleration algorithm, consists of five kernels (, , , , and ). Due to data dependency, a piece of code in can be parallel only in the horizontal or vertical direction, so the kernel uses a 1D decomposition. The kernels , , and use a 2D decomposition in the horizontal and vertical directions. The kernel uses a composite decomposition in the horizontal and vertical directions and g- dimension. Due to the requirement of data synchronization, and cannot be merged into one kernel.

- (2)

The kernel still uses a 1D decomposition.

- (3)

Similarly, the kernel uses a 2D decomposition, and the kernel uses a 1D decomposition.

- (4)

The kernel uses a composite decomposition in the horizontal and vertical directions and g- dimension. In , 16 subroutines with the device attribute are invoked.

- (5)

Similarly, consists of 11 kernels (–). Here, , , , , and use a 1D decomposition. Furthermore, and use a 2D decomposition in the horizontal and vertical directions. In addition, and use a 2D decomposition in the horizontal direction and g- dimension. Finally, and use a composite decomposition in the horizontal and vertical directions and g- dimension.

| Algorithm 3: Implementation of 1D rtrnmc_d. |

attributes(global) subroutine rtrnmc_d(parameters)iplon=(blockIdx%x-1)×blockDim%x+threadIdx%x if (iplon≥1 .and. iplon≤ncol) then Initialize variable arrays do lay = 1, nlayers do ig = 1, ngptlw do some corresponding work end do end do //Loop over frequency bands //istart is beginning band of calculation //iend is ending band of calculation do iband = istart, iend //Downward radiative transfer loop do lay = nlayers, 1, -1 do some corresponding work end do end do end if

end subroutine |

| Algorithm 4: Acceleration in g- dimension. |

subroutine rrtmg_lw_d3(parameters)Copy input data to GPU device //Call inatm_d1 with 2D decomposition callinatm_d1(parameters) //Call inatm_d2 with 2D decomposition callinatm_d2(parameters) //Call inatm_d3 with g- acceleration callinatm_d3(parameters) //Call inatm_d4 with 1D decomposition callinatm_d4(parameters) //Call inatm_d5 with 2D decomposition callinatm_d5(parameters) //Call cldprmc_d with 1D decomposition callcldprmc_d(parameters) //Call setcoef_d1 with 2D decomposition callsetcoef_d1(parameters) //Call setcoef_d2 with 1D decomposition callsetcoef_d2(parameters) //Call taumol_d with g- acceleration calltaumol_d(parameters) //Call rtrnmc_d1 with 1D decomposition callrtrnmc_d1(parameters) //Call rtrnmc_d2 with 2D decomposition callrtrnmc_d2(parameters) //Call rtrnmc_d3 with g- acceleration callrtrnmc_d3(parameters) //Call rtrnmc_d4 with 1D decomposition callrtrnmc_d4(parameters) //Call rtrnmc_d5 with 2D decomposition in horizonal and g- dimensions callrtrnmc_d5(parameters) //Call rtrnmc_d6 with 2D decomposition in horizonal and g- dimensions callrtrnmc_d6(parameters) //Call rtrnmc_d7 with g- acceleration callrtrnmc_d7(parameters) //Call rtrnmc_d8 with 1D decomposition callrtrnmc_d8(parameters) //Call rtrnmc_d9 with 2D decomposition callrtrnmc_d9(parameters) //Call rtrnmc_d10 with 1D decomposition callrtrnmc_d10(parameters) //Call rtrnmc_d11 with 1D decomposition callrtrnmc_d11(parameters) Copy result to host //Judge whether atmospheric horizontal profile data is completed if it is not completed goto 1

end subroutine |

5. Algorithm Implementation

In this section, the acceleration algorithm implementation is described. The implementations of 1D and 2D were described in our previous paper, so this section only considers the implementations of , , and with an acceleration in the g- dimension.

5.1. Inatm_d

The implementations of CUDA-based 2D , , and are similar to the procedure in Algorithm 5. Here, identifies a unique thread inside a thread block in the x dimension, identifies a unique thread block inside a kernel grid in the x dimension, and identifies the number of threads in a thread block in the x dimension. In the same way, , , and identify the corresponding configuration in the y dimension. Please note that the “%” symbol in Fortran is the access to fields of a structure and not the modulus operator. In addition, , the coordinate of the horizontal grid points, represents the ID of a global thread in the x dimension, which can be expressed as =(-1)×+. The coordinate of the vertical direction, , also represents the ID of a global thread in the y dimension, which can be expressed as =(-1)×+. Thus, a grid point in the horizontal and vertical directions can be identified by the and variables as 2D linear data.

The implementation of is illustrated in Algorithm 6. Here, identifies a unique thread inside a thread block in the z dimension, identifies a unique thread block inside a kernel grid in the z dimension, and identifies the number of threads in a thread block in the z dimension. The coordinate of the g points, , also represents the ID of a global thread in the x dimension, which can be expressed as =(-1)×+. Furthermore, is used to assign a value to the four 3D arrays.

The implementation of 1D

is illustrated in Algorithm 7.

| Algorithm 5: Implementation of 2D inatm_d. |

attributes(global) subroutine inatm_d(parameters)iplon=(blockIdx%x-1)×blockDim%x+threadIdx%x lay=(blockIdx%y-1)×blockDim%y+threadIdx%y //nlayers=nlay + 1, nlay is number of model layers if ((iplon≥1 .and. iplon≤ncol) .and. (lay≥1 .and. lay≤nlayers-1 or nlayers or nlayers+1)) then Initialize variable arrays or do some computational work for them end if

end subroutine |

| Algorithm 6: Implementation of inatm_d3. |

attributes(global) subroutine inatm_d3(parameters) ig=(blockIdx%x-1)×blockDim%x+threadIdx%x iplon=(blockIdx%y-1)×blockDim%y+threadIdx%y lay=(blockIdx%z-1)×blockDim%z+threadIdx%z //ngptlw is total number of reduced g-intervals if ((iplon≥1 .and. iplon≤ncol) .and. (lay≥1 .and. lay≤nlayers-1) .and. (ig≥1 .and. ig≤ngptlw)) then cldfmc(ig, iplon, lay) = cldfmcl_d(ig, iplon, nlayers-lay) taucmc(ig, iplon, lay) = taucmcl_d(ig, iplon, nlayers-lay) ciwpmc(ig, iplon, lay) = ciwpmcl_d(ig, iplon, nlayers-lay) clwpmc(ig, iplon, lay) = clwpmcl_d(ig, iplon, nlayers-lay) end if

end subroutine |

5.2. taumol_d

The implementation of

is illustrated in Algorithm 8. Here, the implementations of

and

with the device attribute are also described. The implementations of the other 14 subroutines (

–

) are similar to those of

and

.

| Algorithm 7: Implementation of 1D inatm_d4. |

attributes(global) subroutine inatm_d4(parameters)iplon=(blockIdx%x-1)×blockDim%x+threadIdx%x if (iplon≥1 .and. iplon≤ncol) then Initialize variable arrays do lay = 1, nlayers-1 or nlayers do some corresponding computational work end do end if

end subroutine |

| Algorithm 8: Implementation of taumol_d. |

attributes(global) subroutine taumol_d(parameters) iplon=(blockIdx%x-1)×blockDim%x+threadIdx%x lay=(blockIdx%y-1)×blockDim%y+threadIdx%y ig=(blockIdx%z-1)×blockDim%z+threadIdx%z if ((iplon≥1 .and. iplon≤ncol) .and. (lay≥1 .and. lay≤nlayers) .and. (ig≥1 .and. ig≤ngptlw)) then call taugb1_d(parameters) call taugb2_d(parameters) call taugb3_d(parameters) call taugb4_d(parameters) call taugb5_d(parameters) call taugb6_d(parameters) call taugb7_d(parameters) call taugb8_d(parameters) call taugb9_d(parameters) call taugb10_d(parameters) call taugb11_d(parameters) call taugb12_d(parameters) call taugb13_d(parameters) call taugb14_d(parameters) call taugb15_d(parameters) call taugb16_d(parameters) end if

end subroutine |

| attributes(device) subroutine taugb1_d(parameters) |

| //Lower atmosphere loop |

//laytrop is tropopause layer index, ngs1 is an integer parameter used for 140 g-point modelif ((iplon≥1 .and. iplon≤ncol) .and. (lay≥1 .and. lay≤laytrop(iplon)) .and. (ig≥1 .and. ig≤ngs1)) then do some computational work end if //Upper atmosphere loop if ((iplon≥1 .and. iplon≤ncol) .and. (lay≥laytrop(iplon)+1 .and. lay≤nlayers) .and. (ig≥1 .and. ig≤ngs1)) then do some computational work end if

end subroutine |

| attributes(device) subroutine taugb2_d(parameters) |

//ngs2 is an integer parameter used for 140 g-point modelif ((iplon≥1 .and. iplon≤ncol) .and. (lay≥1 .and. lay≤laytrop(iplon)) .and. (ig≥ngs1+1 .and. ig≤ngs2)) then do some computational work end if if ((iplon≥1 .and. iplon≤ncol) .and. (lay≥laytrop(iplon)+1 .and. lay≤nlayers) .and. (ig≥ngs1+1 .and. ig≤ngs2)) then do some computational work end if

end subroutine |

5.3. rtrnmc_d

The implementations of 1D

,

,

,

, and

are similar to the procedure in Algorithm 3. The 2D

and

are used to assign a value to some arrays; their implementations are not described further here. The implementations of

, 2D

, and 2D

are illustrated in Algorithm 9. The implementation of

is similar to that of

.

| Algorithm 9: Implementation of rtrnmc_d. |

attributes(global) subroutine rtrnmc_d3(parameters)iplon=(blockIdx%x-1)×blockDim%x+threadIdx%x igc=(blockIdx%y-1)×blockDim%y+threadIdx%y lay=(blockIdx%z-1)×blockDim%z+threadIdx%z if ((iplon≥1 .and. iplon≤ncol) .and. (lay≥0 .and. lay≤nlayers) .and. (igc≥1 .and. igc≤ngptlw)) then urad(iplon, igc, lay) = 0.0 drad(iplon, igc, lay) = 0.0 clrurad(iplon, igc, lay) = 0.0 clrdrad(iplon, igc, lay) = 0.0 end if

end subroutine |

attributes(global) subroutine rtrnmc_d5(parameters)iplon=(blockIdx%x-1)×blockDim%x+threadIdx%x igc=(blockIdx%y-1)×blockDim%y+threadIdx%y if ((iplon≥1 .and. iplon≤ncol) .and. (igc≥1 .and. igc≤10)) then //Loop over frequency bands iband=1 //Downward radiative transfer loop do lay = nlayers, 1, -1 do some corresponding work end do end if if ((iplon≥1 .and. iplon≤ncol) .and. (igc≥11 .and. igc≤22)) then iband=2 do lay = nlayers, 1, -1 do some corresponding work end do end if When iband=, the algorithms are similar to that in the case of iband=1 or 2.

end subroutine |

attributes(global) subroutine rtrnmc_d6(parameters)iplon=(blockIdx%x-1)×blockDim%x+threadIdx%x igc=(blockIdx%y-1)×blockDim%y+threadIdx%y if ((iplon≥1 .and. iplon≤ncol) .and. (igc≥109 .and. igc≤114)) then iband=10 do lay = nlayers, 1, -1 do some corresponding work end do end if When iband=, the algorithms are similar to that in the case of iband=10.

end subroutine |

7. Conclusions and Future Work

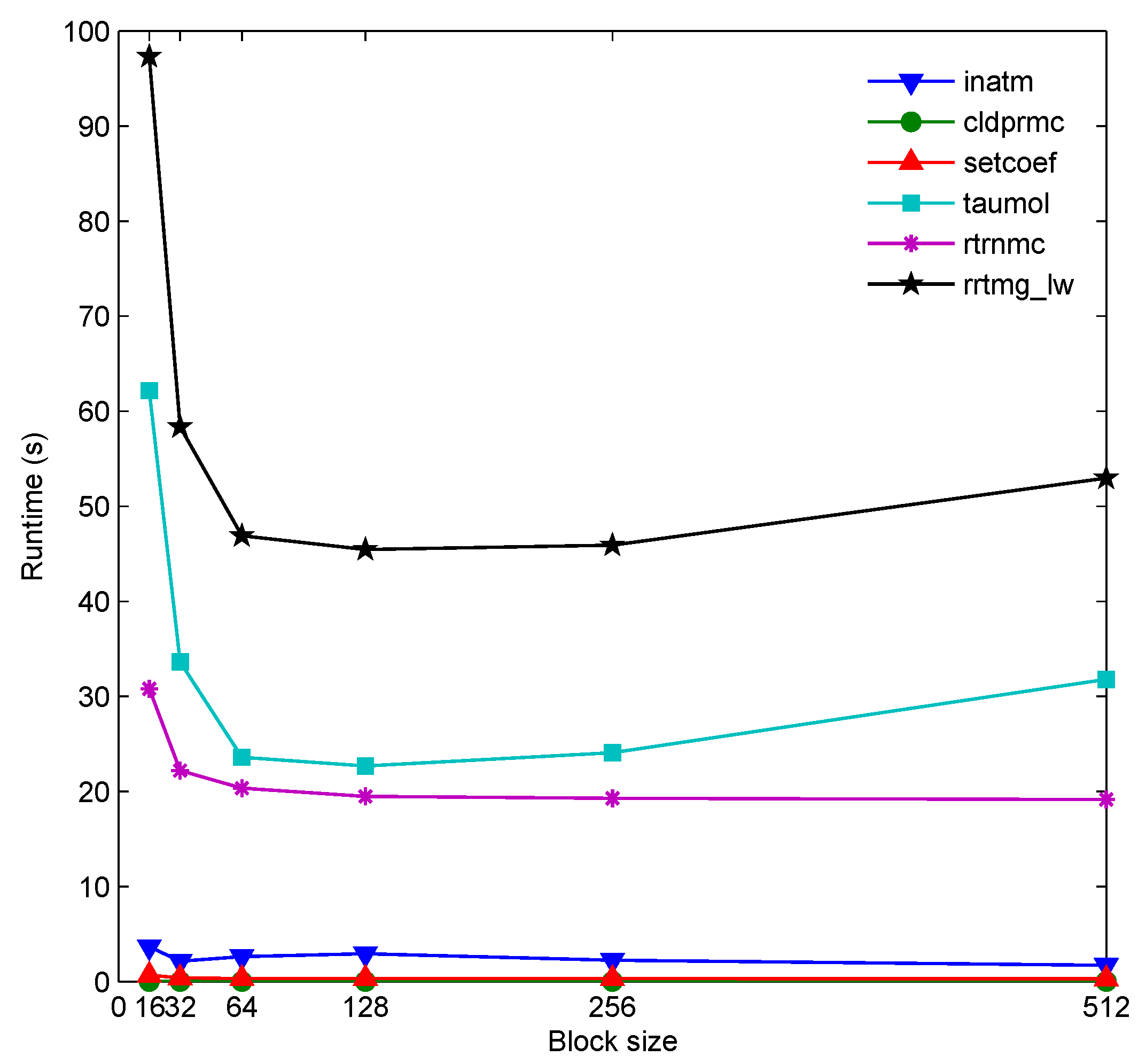

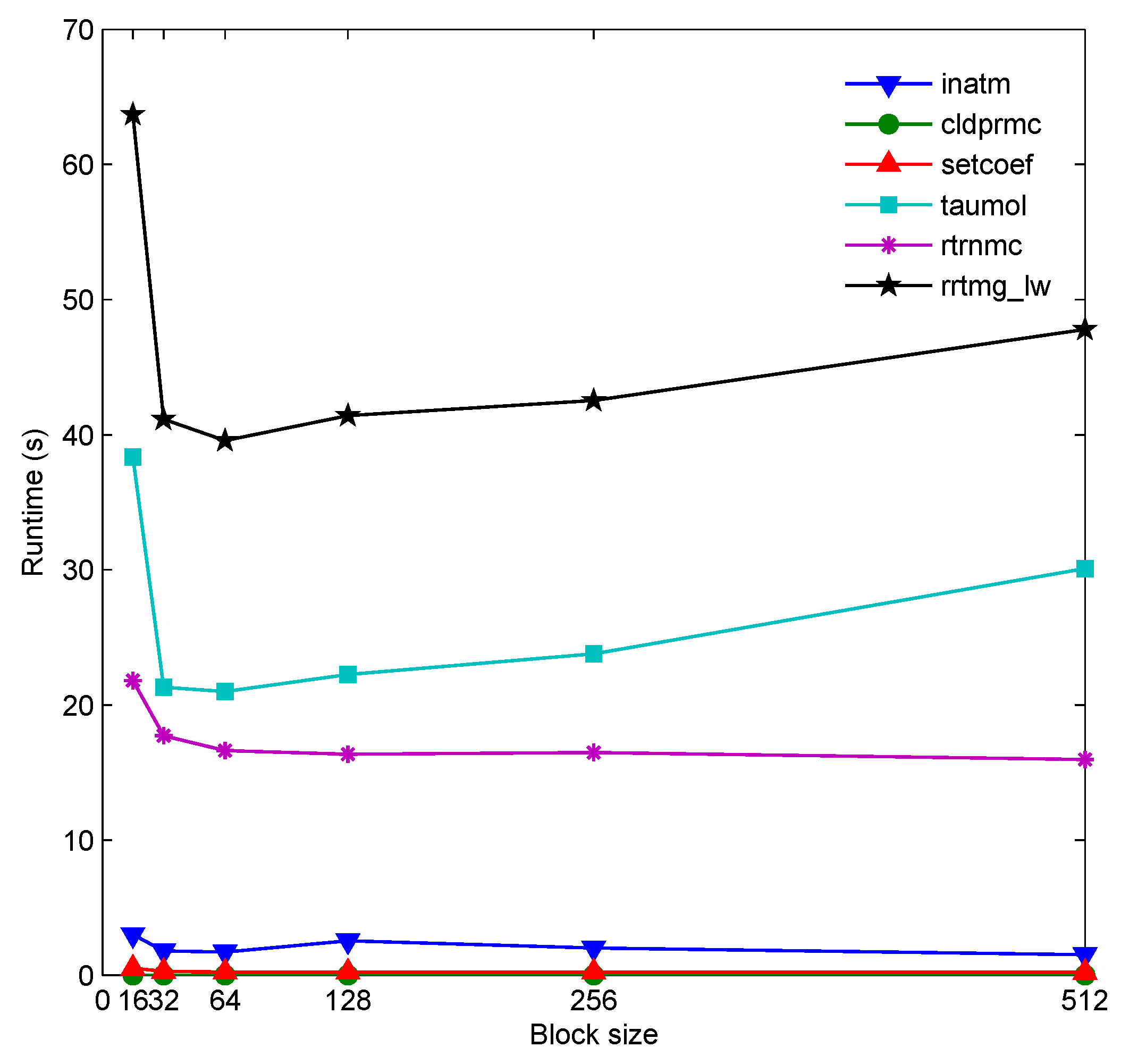

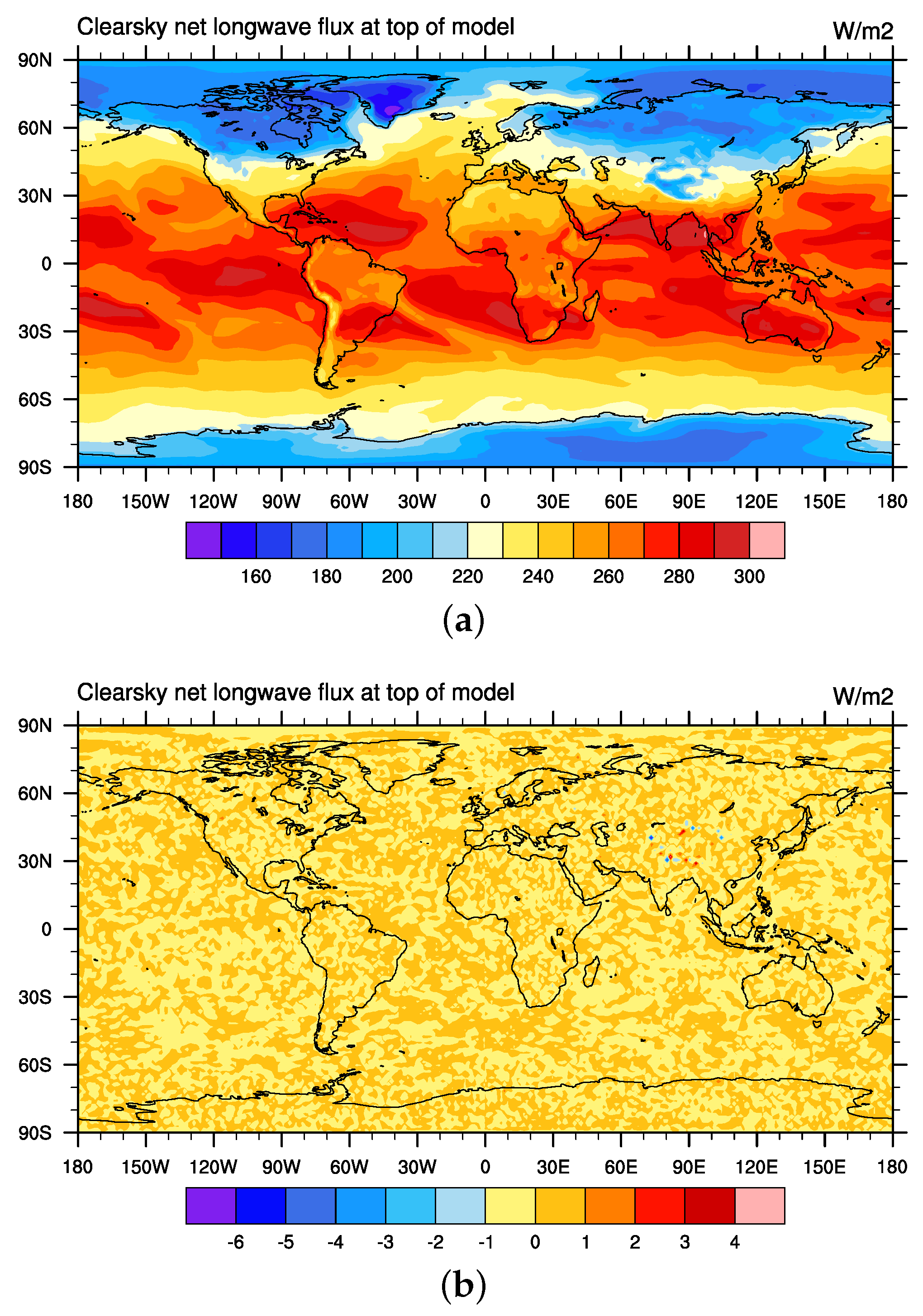

It is exceedingly challenging to use GPUs to accelerate radiation physics. In this work, a GPU-based acceleration algorithm of the RRTMG_LW in the g- dimension was proposed. Then, the acceleration algorithm was implemented using CUDA Fortran. Finally, G-RRTMG_LW, the GPU version of the RRTMG_LW, was developed. The results indicated that the algorithm was effective. During the climate simulation for one model day, the G-RRTMG_LW on one K40 GPU achieved a speedup of 30.98× as compared with a single Intel Xeon E5-2680 CPU-core counterpart. Its runtime decreased from 647.12 s to 20.8876 s. Running the G-RRTMG_LW on one GPU presented a better computing performance than on a CPU with multiple cores. Furthermore, the current acceleration algorithm of the RRTMG_LW displayed better calculation performance than its 2D algorithm did.

The future work will include three aspects. First, the proposed algorithm will be further optimized. For example, read-only arrays in CUDA code will be considered for inclusion in the CUDA texture memory, rather than in the global memory. Second, the I/O transfer in the current G-RRTMG_LW still costs a great deal of time, so the methods of reducing the I/O transfer between the CPU and GPU will be studied. Third, the G-RRTMG_LW currently only runs on one GPU. The CAS-ESM often runs on several CPUs and nodes, so the acceleration algorithm on multiple GPUs will be studied. An MPI+CUDA hybrid paradigm will be adopted to run the G-RRTMG_LW on multiple GPUs.