An Analytical Game for Knowledge Acquisition for Maritime Behavioral Analysis Systems

Abstract

1. Introduction

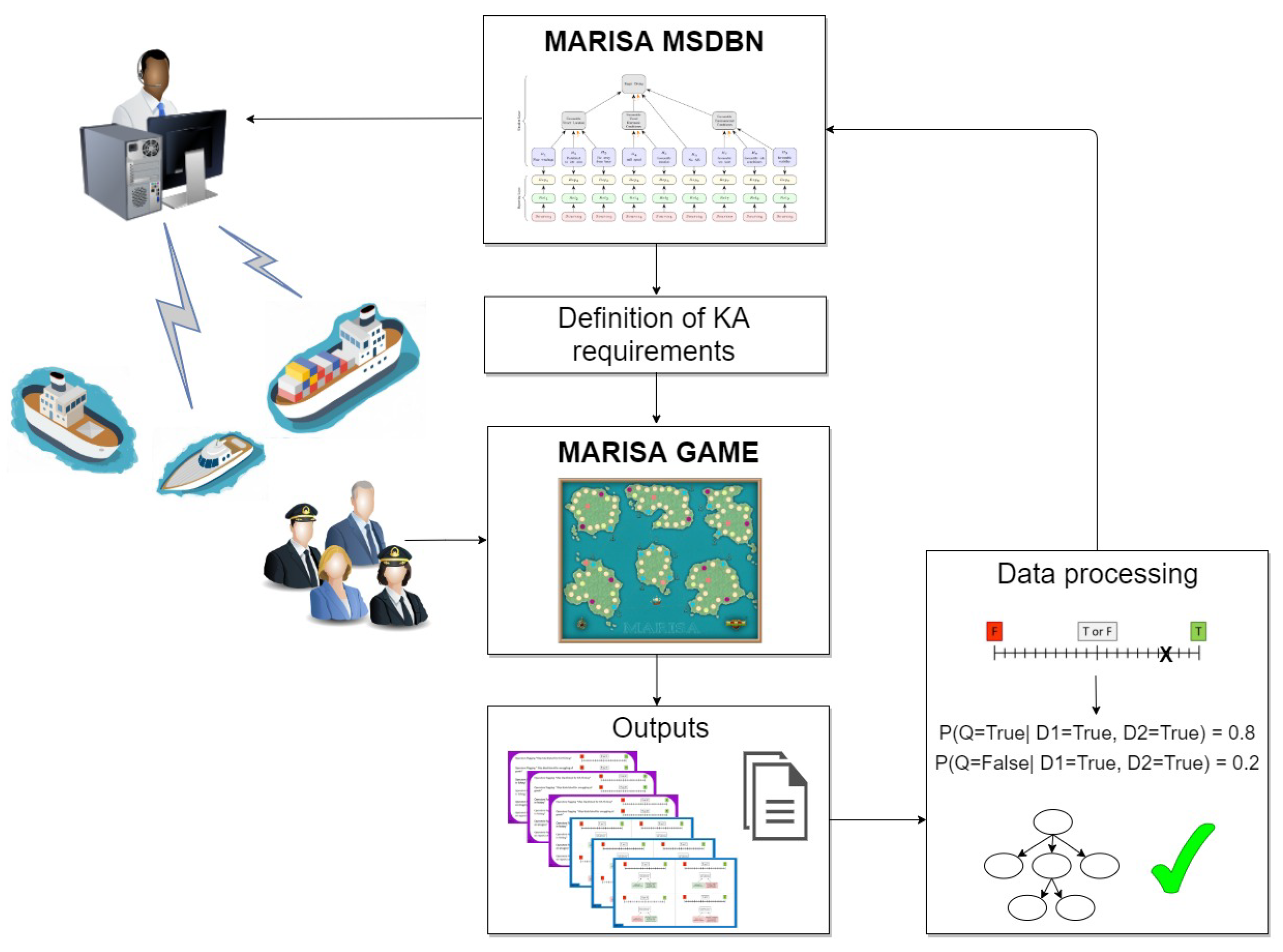

2. The Knowledge Engineering Problem

2.1. Dynamic Bayesian Networks

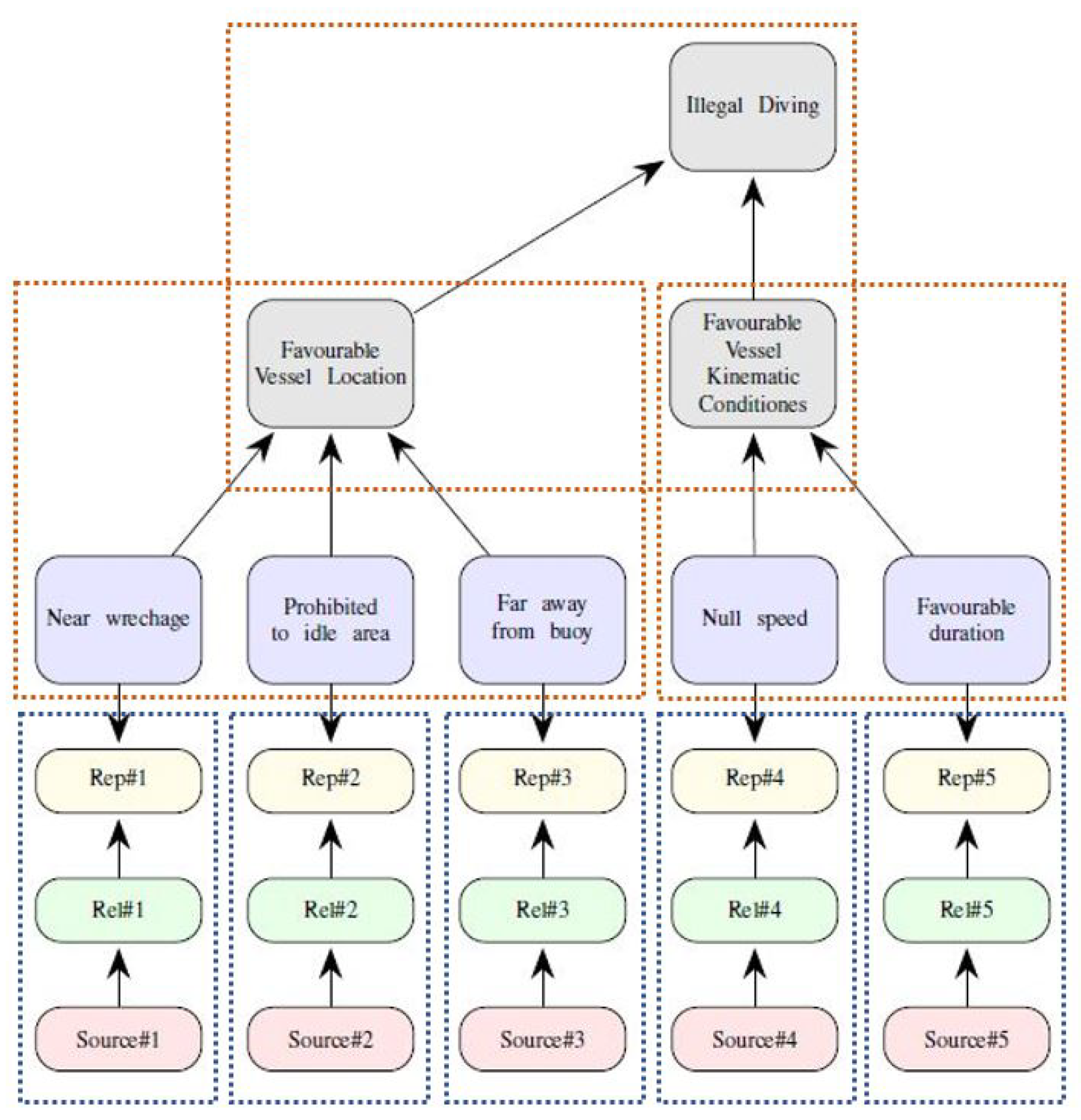

2.2. A Multi-Source Bayesian Network for Behavioral Analysis

2.3. The Knowledge Acquisition for the MSDBN

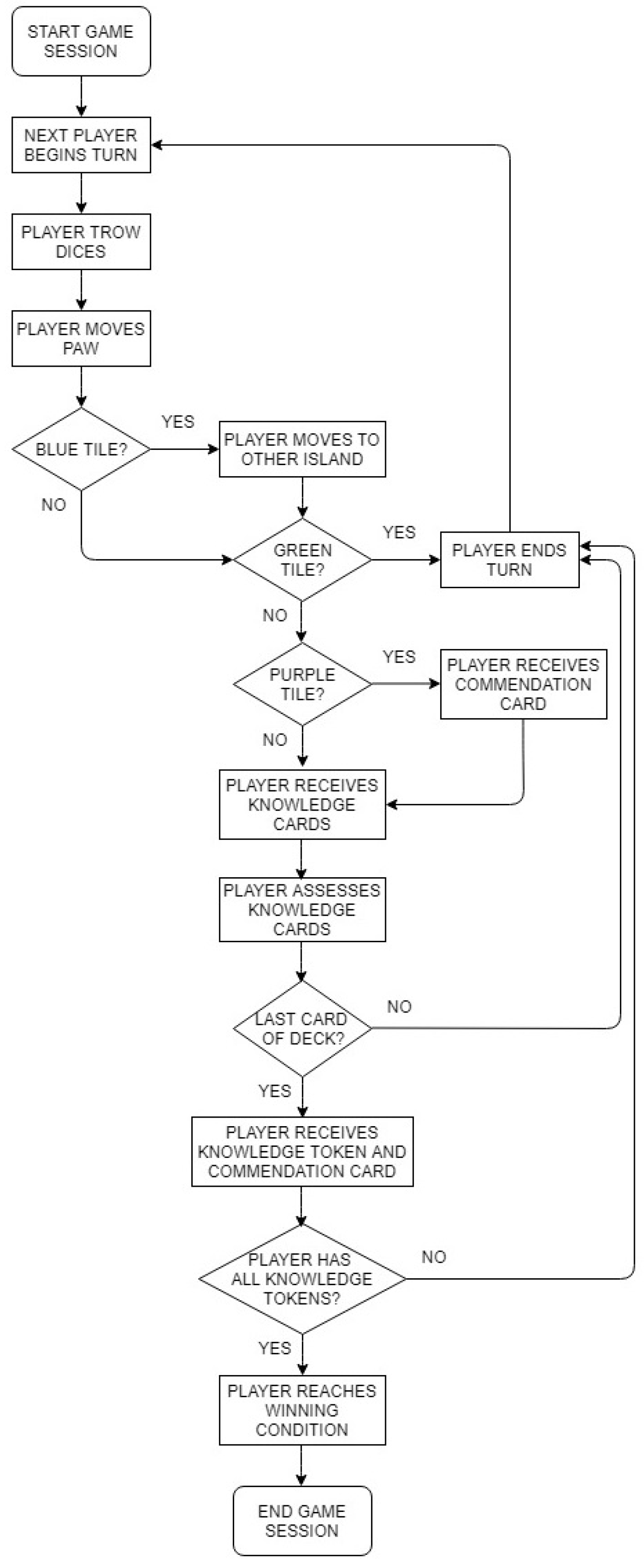

3. Method for KA: MARISA Game

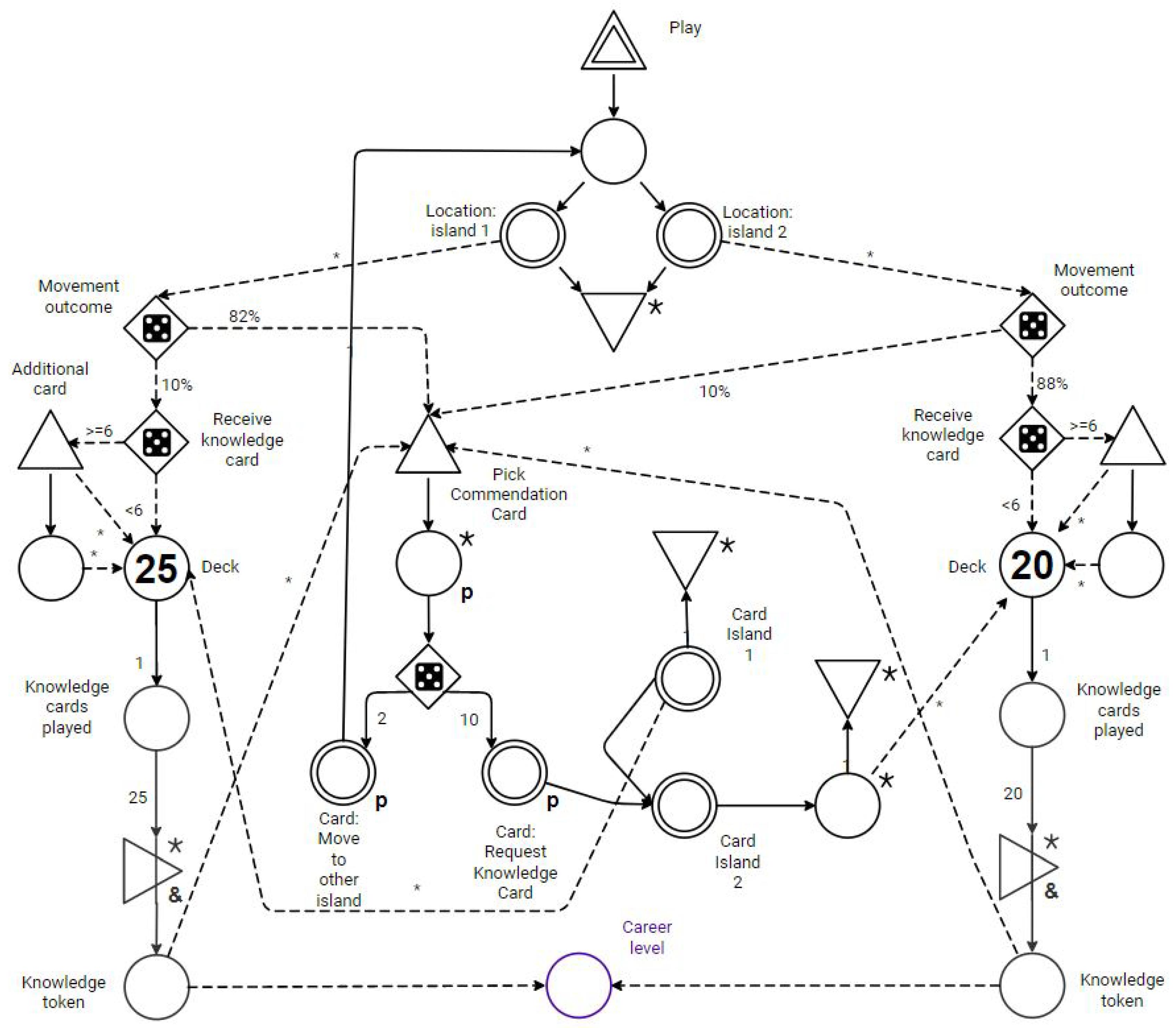

3.1. MARISA Game Design

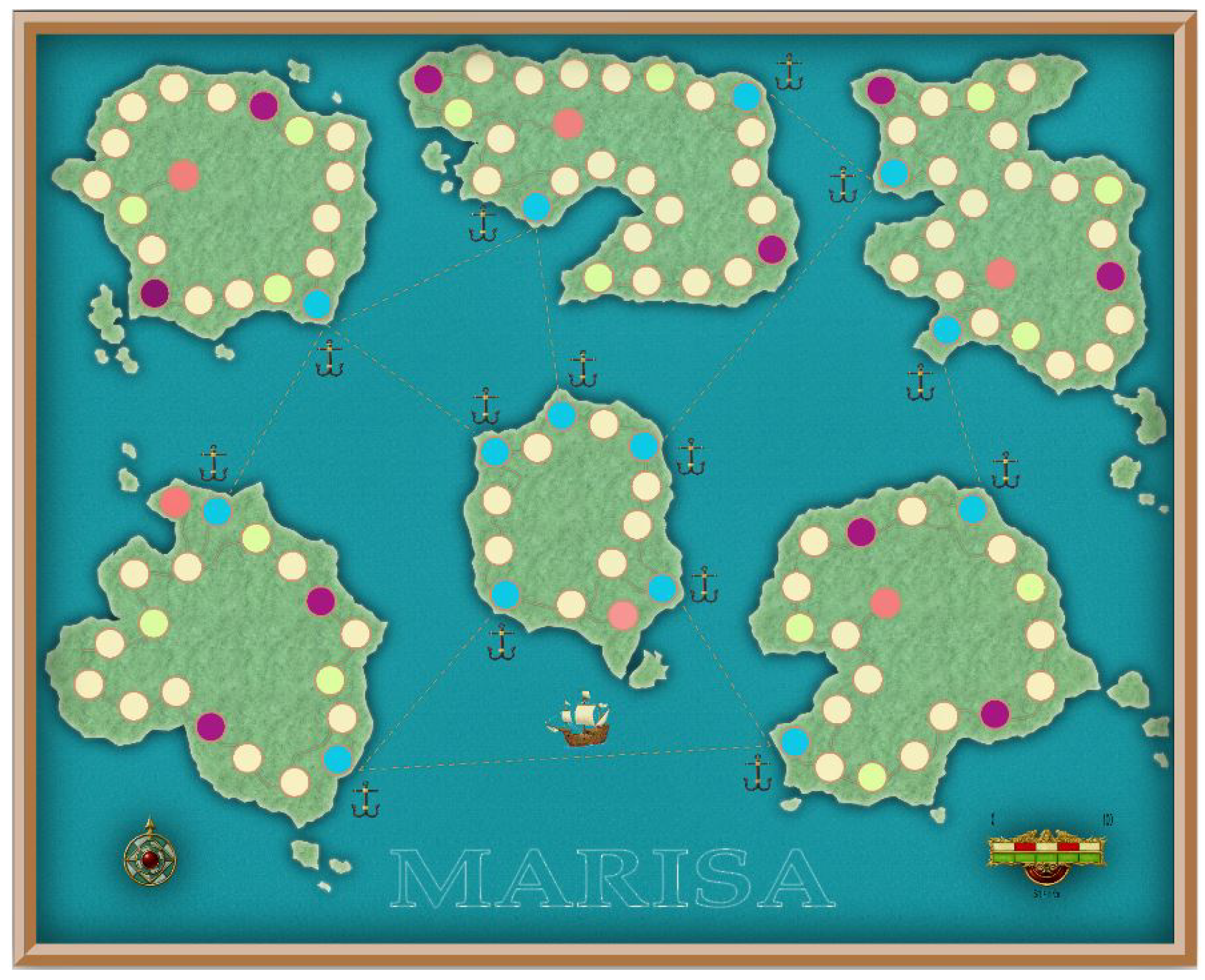

3.2. World Design

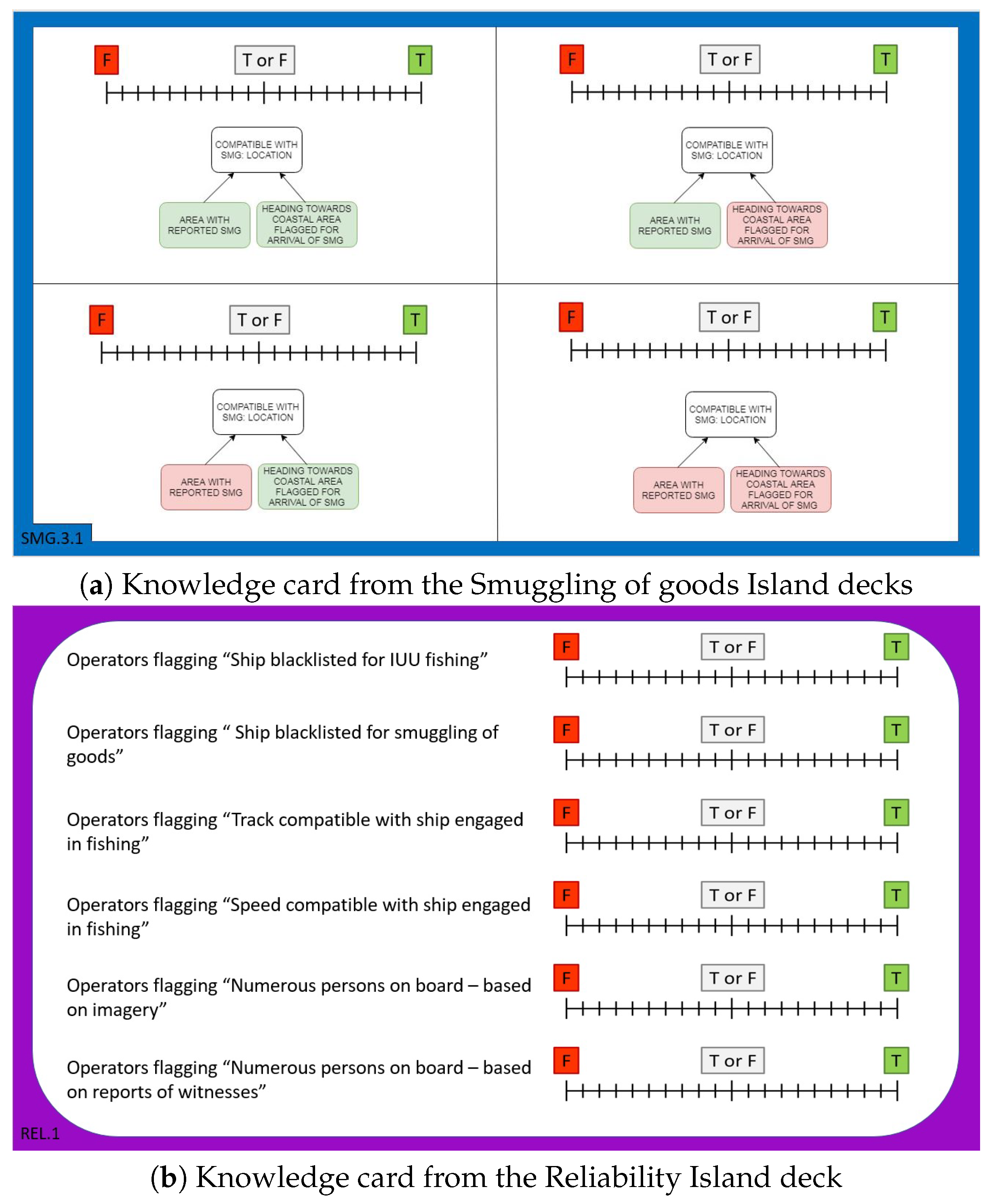

3.3. Content Design

3.4. System Design

- Green: no action associated;

- Beige: lets the player look at the knowledge cards determined by the roll of another die;

- Purple: allows picking one bonus card from the commendation card deck;

- Red: start tile and arrival area when a player changes island by means of a commendation card;

- Blue: harbors to move between islands.

- the assessment of hypotheses relative to maritime anomalies;

- the use of cards to communicate messages to the player;

- the investigation component;

- the rating of the player beliefs related to the knowledge constructs provided through cards;

- the collection of knowledge tokens.

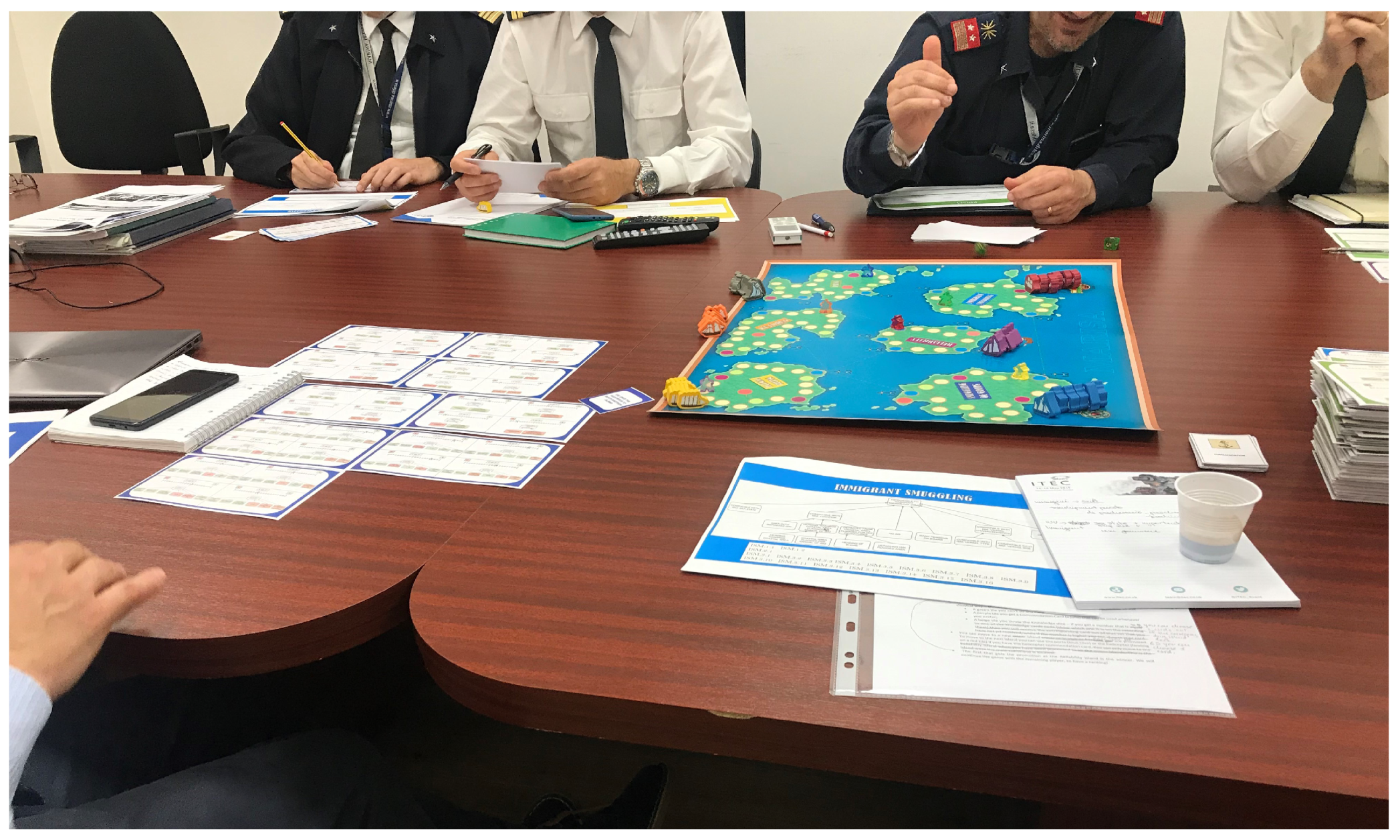

3.5. Knowledge Acquisition Experiments

- collection of the players' beliefs, to be used to define the MSDBN conditional probability tables;

- validation of the MSDBN structure;

- validity of the MARISA Game.

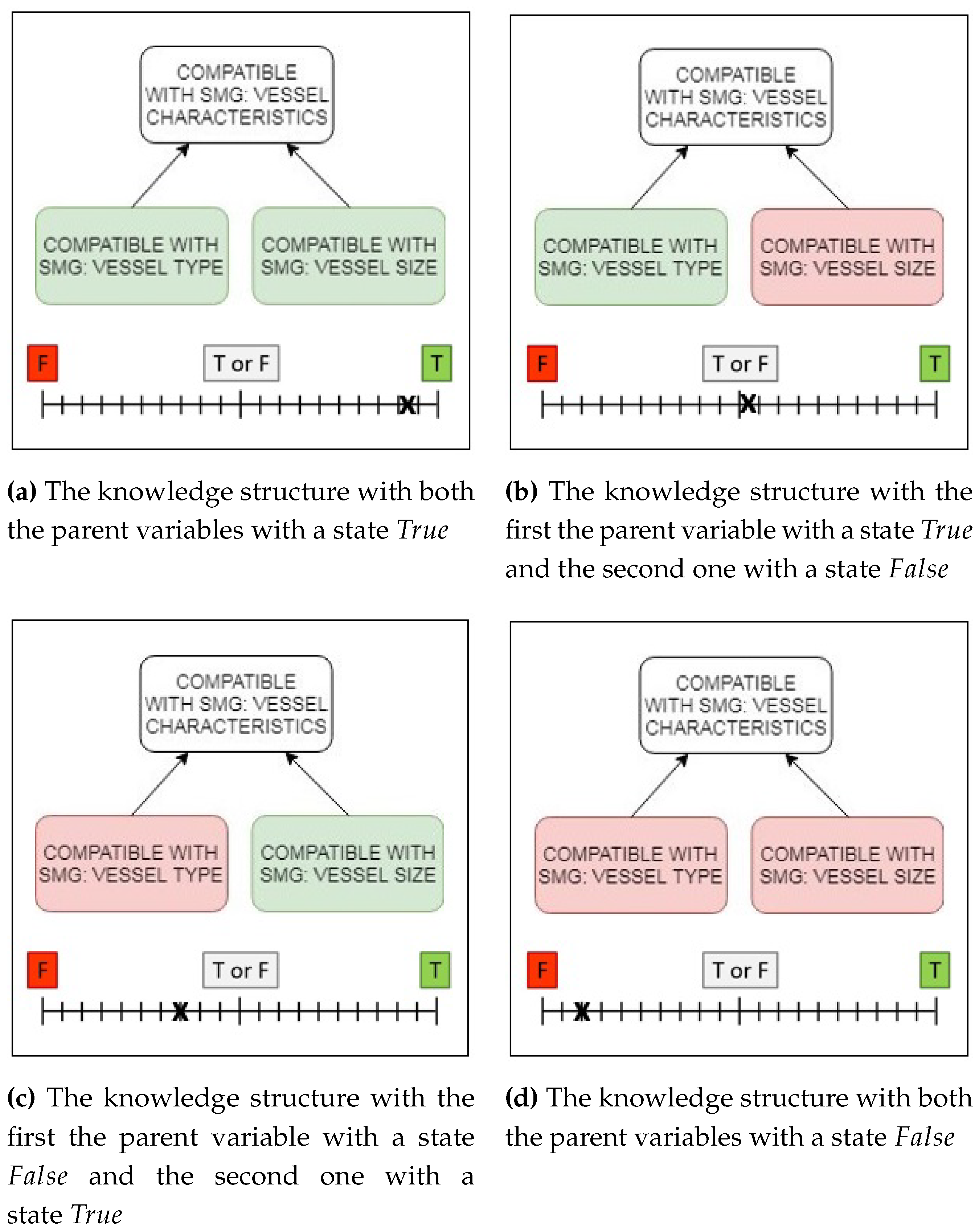

4. Results and Discussion

- Compatible with SMG: vessel characteristics;

- Compatible with SMG: vessel type;

- Compatible with SMG: vessel size.

- the usability of the game as a system;

- the facilitation process;

- the sensory and imaginative immersion;

- the players’ satisfaction.

5. Conclusions

Author Contributions

Funding

Acknowledgments

Conflicts of Interest

Abbreviations

| BN | Bayesian network |

| CISE | Common information sharing environment |

| CPT | Conditional probability table |

| DAG | Directed acyclic graph |

| DBN | Dynamic Bayesian network |

| EC | European Commission |

| H2020 | Horizon 2020 |

| IUU | Illegal, unreported and unregulated |

| KA | Knowledge acquisition |

| MARISA | Maritime integrated surveillance awareness |

| MARISA Game | MARItime Surveillance knowledge Acquisition Game |

| MSDBN | Multi-source dynamic Bayesian network |

| NASA TLX | NASA task load index |

| PX | Player experience |

| QUIS | Questionnaire for user interaction satisfaction |

| SAW | Situational awareness |

| SLOC | Sea line of communication |

| SMG | Smuggling of goods |

Appendix A. Results of the K2AGQ

| Challenge Sub-Dimension | 1 (%) | 2 (%) | 3 (%) | 4 (%) | 5 (%) | RR (%) | Mo | IQR |

|---|---|---|---|---|---|---|---|---|

| It was easy | 0.0 | 0.0 | 14.3 | 57.1 | 28.6 | 77.8 | 4 | 0.50 |

| The game does not become monotonous as it progresses | 12.5 | 12.5 | 37.5 | 25.0 | 12.5 | 88.9 | 3 | 1.25 |

| The game is appropriately challenging for me | 0.0 | 25.0 | 37.5 | 25.0 | 12.5 | 88.9 | 3 | 1.25 |

| The game provides new challenges at an appropriate pace | 0.0 | 25.0 | 37.5 | 25.0 | 12.5 | 88.9 | 3 | 1.25 |

| Confidence Sub-Dimension | 1 (%) | 2 (%) | 3 (%) | 4 (%) | 5 (%) | RR (%) | Mo | IQR |

|---|---|---|---|---|---|---|---|---|

| The content and the structure of the game helped me to become confident that I would support the stated goal | 12.5 | 0.0 | 12.5 | 62.5 | 12.5 | 88.9 | 4 | 0.25 |

| The facilitation approach of the game helped me to become confident that I would support the stated goal | 12.5 | 0.0 | 25.0 | 50.0 | 12.5 | 88.9 | 4 | 1.00 |

| When I first looked at the game I had the impression that it would be easy | 25.0 | 0.0 | 62.5 | 12.5 | 0.0 | 88.9 | 3 | 0.50 |

| Flow Sub-Dimension | 1 (%) | 2 (%) | 3 (%) | 4 (%) | 5 (%) | RR (%) | Mo | IQR |

|---|---|---|---|---|---|---|---|---|

| I forgot about my immediate surroundings while playing the game | 50.0 | 0.0 | 37.5 | 12.5 | 0.0 | 88.9 | 1 | 2.00 |

| I was deeply concentrated in the game | 12.5 | 0.0 | 12.5 | 62.5 | 12.5 | 88.9 | 4 | 0.25 |

| I was fully occupied with the game | 12.5 | 0.0 | 25.0 | 25.0 | 37.5 | 88.9 | 5 | 2.00 |

| I was so concentrated in the game that I lost track of time | 37.5 | 12.5 | 37.5 | 0.0 | 12.5 | 88.9 | 1 | 2.00 |

| Overall Attitude Sub-Dimension | 1 (%) | 2 (%) | 3 (%) | 4 (%) | 5 (%) | RR (%) | Mo | IQR |

|---|---|---|---|---|---|---|---|---|

| I enjoyed the game | 0.0 | 12.5 | 12.5 | 37.5 | 37.5 | 88.9 | 4 | 1.25 |

| I felt annoyed | 75.0 | 12.5 | 0.0 | 12.5 | 0.0 | 88.9 | 1 | 0.25 |

| I felt bored | 37.5 | 50.0 | 12.5 | 0.0 | 0.0 | 88.9 | 2 | 1.00 |

| I felt content | 0.0 | 12.5 | 12.5 | 37.5 | 37.5 | 88.9 | 4 | 1.50 |

| I felt good | 0.0 | 12.5 | 0.0 | 75.0 | 12.5 | 88.9 | 4 | 0.00 |

| I felt pressured | 62.5 | 25.0 | 12.5 | 0.0 | 0.0 | 88.9 | 1 | 1.00 |

| I had fun | 0.0 | 0.0 | 25.0 | 75.0 | 0.0 | 88.9 | 4 | 0.25 |

| It gave me a bad mood | 87.5 | 12.5 | 0.0 | 0.0 | 0.0 | 88.9 | 1 | 0.00 |

| Relevance Sub-Dimension | 1 (%) | 2 (%) | 3 (%) | 4 (%) | 5 (%) | RR (%) | Mo | IQR |

|---|---|---|---|---|---|---|---|---|

| I prefer providing support to projects with games to supporting it with other means (e.g., interviews) | 0.0 | 28.6 | 14.3 | 14.3 | 42.9 | 77.8 | 5 | 2.25 |

| It is clear how the game contents are related to the stated goal | 0.0 | 12.5 | 12.5 | 50.0 | 25.0 | 88.9 | 4 | 2.00 |

| The game contents are relevant to my overall interests | 0.0 | 12.5 | 0.0 | 62.5 | 25.0 | 88.9 | 4 | 0.25 |

| The game is an adequate experimentation method for the project | 0.0 | 0.0 | 25.0 | 62.5 | 12.5 | 88.9 | 4 | 0.25 |

| Satisfaction Sub-Dimension | 1 (%) | 2 (%) | 3 (%) | 4 (%) | 5 (%) | RR (%) | Mo | IQR |

|---|---|---|---|---|---|---|---|---|

| Completing the game gave me a satisfying feeling of accomplishment | 12.5 | 0.0 | 25.0 | 50.0 | 12.5 | 88.9 | 4 | 1.00 |

| I feel satisfied with the experience (e.g., supporting through the game the project with expertise) | 12.5 | 0.0 | 12.5 | 37.5 | 37.5 | 88.9 | 4 | 1.25 |

| I felt competent | 0.0 | 25.0 | 25.0 | 37.5 | 12.5 | 88.9 | 4 | 1.25 |

| I felt skillful | 0.0 | 12.5 | 37.5 | 50.0 | 0.0 | 88.9 | 4 | 1.00 |

| I would recommend this game to my colleagues | 12.5 | 0.0 | 25.0 | 37.5 | 25.0 | 88.9 | 4 | 2.00 |

| Sensory and Imaginative Immersion Sub-Dimension | 1 (%) | 2 (%) | 3 (%) | 4 (%) | 5 (%) | RR (%) | Mo | IQR |

|---|---|---|---|---|---|---|---|---|

| I felt I could explore things | 0.0 | 12.5 | 0.0 | 75.0 | 12.5 | 88.9 | 4 | 0.00 |

| I felt imaginative | 0.0 | 12.5 | 37.5 | 37.5 | 12.5 | 88.9 | 3 | 1.00 |

| I found it impressive | 12.5 | 12.5 | 37.5 | 37.5 | 0.0 | 88.9 | 3 | 1.25 |

| I was interested in the game story | 0.0 | 12.5 | 25.0 | 37.5 | 25.0 | 88.9 | 4 | 1.25 |

| It felt like a rich experience | 0.0 | 12.5 | 37.5 | 25.0 | 25.0 | 88.9 | 2 | 1.50 |

| There was something interesting at the beginning of the game that captured my attention | 0.0 | 0.0 | 25.0 | 37.5 | 37.5 | 88.9 | 4 | 1.25 |

| Workload Sub-Dimension | 1 (%) | 2 (%) | 3 (%) | 4 (%) | 5 (%) | RR (%) | Mo | IQR |

|---|---|---|---|---|---|---|---|---|

| How hard did you have to work (mentally and physically) to accomplish your level of performance? | 0.0 | 12.5 | 62.5 | 25.0 | 0.0 | 88.9 | 3 | 0.25 |

| How irritated, stressed, and annoyed versus content, relaxed, and complacent did you feel during the task? | 50.0 | 12.5 | 37.5 | 0.0 | 0.0 | 88.9 | 1 | 2.00 |

| How much mental and perceptual activity was required (e.g., thinking, remembering, calculating, searching, etc.)? Was the task easy or demanding, simple or complex? | 12.5 | 12.5 | 37.5 | 25.0 | 12.5 | 88.9 | 3 | 1.25 |

| How much physical activity was required (e.g., pushing, pulling, controlling, etc.)? Was the task easy or demanding, slack or strenuous? | 37.5 | 0.0 | 37.5 | 25.0 | 0.0 | 88.9 | 1 | 2.25 |

| How much time pressure did you feel due to the pace at which the tasks or task elements occurred? Was the pace slow or rapid? | 12.5 | 50.0 | 25.0 | 12.5 | 0.0 | 88.9 | 2 | 1.00 |

| How successful were you in performing the task? How satisfied were you with your performance? | 12.5 | 0.0 | 25.0 | 37.5 | 25.0 | 88.9 | 4 | 1.25 |

| Usability Sub-Dimension | 1 (%) | 2 (%) | 3 (%) | 4 (%) | 5 (%) | RR (%) | Mo | IQR |

|---|---|---|---|---|---|---|---|---|

| How clear are the requests for input by the player? | 12.5 | 0.0 | 0.0 | 62.5 | 25.0 | 88.9 | 4 | 0.25 |

| How clear are the supplemental reference materials? | 0.0 | 12.5 | 25.0 | 37.5 | 25.0 | 88.9 | 4 | 1.25 |

| How clear was the organization of the overall layout? | 12.5 | 0.0 | 25.0 | 12.5 | 50.0 | 88.9 | 5 | 2.00 |

| How clear was the sequence of “screens” presented? | 12.5 | 0.0 | 12.5 | 37.5 | 37.5 | 88.9 | 4 | 1.25 |

| How consistent was the use of terms throughout system? | 12.5 | 0.0 | 12.5 | 62.5 | 12.5 | 88.9 | 4 | 0.25 |

| How easy is it to correct your mistakes? | 0.0 | 12.5 | 37.5 | 37.5 | 12.5 | 88.9 | 3 | 1.00 |

| How easy is it to explore new features by trial and error? | 12.5 | 12.5 | 25.0 | 50.0 | 0.0 | 88.9 | 4 | 1.25 |

| How easy is it to learn to play? | 0.0 | 12.5 | 0.0 | 37.5 | 50.0 | 88.9 | 5 | 1.00 |

| How easy is it to perform the task in a straight-forward manner? | 12.5 | 12.5 | 37.5 | 12.5 | 25.0 | 88.9 | 3 | 1.50 |

| How easy is it to remember names and use of commands? | 12.5 | 0.0 | 25.0 | 50.0 | 12.5 | 88.9 | 4 | 1.00 |

| How easy it was to interpret (e.g., read and understand) the game items? | 0.0 | 12.5 | 12.5 | 37.5 | 37.5 | 88.9 | 4 | 1.25 |

| How fast is the game? | 12.5 | 12.5 | 62.5 | 0.0 | 12.5 | 88.9 | 3 | 0.25 |

| How good are the feedback received during the game? | 0.0 | 0.0 | 12.5 | 50.0 | 37.5 | 88.9 | 4 | 1.00 |

| How good are the game messages and reports? | 0.0 | 25.0 | 12.5 | 37.5 | 25.0 | 88.9 | 4 | 1.50 |

| How good are the use of colors and sounds? | 0.0 | 0.0 | 25.0 | 50.0 | 25.0 | 88.9 | 4 | 0.50 |

| How helpful are the help messages during the game? | 0.0 | 12.5 | 37.5 | 25.0 | 25.0 | 88.9 | 3 | 1.25 |

| How helpful are the instructions that you receive when you make an error? | 0.0 | 12.5 | 12.5 | 37.5 | 37.5 | 88.9 | 4 | 1.25 |

| How much are experienced and inexperienced users’ needs taken into consideration? | 0.0 | 25.0 | 25.0 | 37.5 | 12.5 | 88.9 | 4 | 1.25 |

| How much are the game clutter and interface “noise”? | 25.0 | 37.5 | 12.5 | 25.0 | 0.0 | 88.9 | 2 | 1.50 |

| How much are you kept informed of what the facilitator is doing? | 0.0 | 12.5 | 0.0 | 50.0 | 37.5 | 88.9 | 4 | 1.00 |

| How much is the position of messages consistent on the game layout? | 12.5 | 0.0 | 12.5 | 50.0 | 25.0 | 88.9 | 4 | 0.50 |

| How much the game terminology is related to the task you are doing? | 0.0 | 25.0 | 0.0 | 50.0 | 25.0 | 88.9 | 4 | 0.75 |

| How pleasant are the game response to errors? | 0.0 | 12.5 | 25.0 | 25.0 | 37.5 | 88.9 | 5 | 2.00 |
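The Mo (mode) and IQR columns in the tables above can be recomputed from the percentage columns. The following is a minimal sketch, not the authors' procedure: it assumes the number of respondents for an item is the response rate applied to the nine participants (88.9% gives 8, 77.8% gives 7), and it assumes quartiles are obtained by linear interpolation between data points (the `inclusive` method of Python's `statistics.quantiles`). The function names are hypothetical. Under these assumptions the sketch reproduces the reported values for the three rows shown; it is not guaranteed to match every row.

```python
from statistics import mode, quantiles


def expand_responses(percentages, respondents):
    """Rebuild the sorted list of 1-5 ratings from the per-rating percentages."""
    ratings = []
    for rating, pct in enumerate(percentages, start=1):
        # Assumption: each percentage is a whole number of respondents, rounded.
        count = round(pct / 100 * respondents)
        ratings.extend([rating] * count)
    return ratings


def summarize(percentages, respondents):
    """Return (mode, interquartile range) for one questionnaire item."""
    ratings = expand_responses(percentages, respondents)
    # Assumption: quartiles use linear interpolation over the observed data.
    q1, _, q3 = quantiles(ratings, n=4, method="inclusive")
    return mode(ratings), q3 - q1


# "I felt good": 8 respondents (88.9% of 9 participants)
print(summarize([0.0, 12.5, 0.0, 75.0, 12.5], 8))  # (4, 0.0)
# "I had fun": 8 respondents
print(summarize([0.0, 0.0, 25.0, 75.0, 0.0], 8))   # (4, 0.25)
# "It was easy": 7 respondents (77.8% of 9 participants)
print(summarize([0.0, 0.0, 14.3, 57.1, 28.6], 7))  # (4, 0.5)
```

When two ratings are equally frequent, `statistics.mode` returns the first one encountered, which for a sorted list is the lower rating.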

References

1. Korb, K.B.; Nicholson, A.E. Bayesian Artificial Intelligence, 2nd ed.; Chapman & Hall: London, UK, 2010.

2. Duda, R.; Shortliffe, E. Expert Systems Research. Science 1983, 202, 261–268.

3. Endsley, M.R. The application of human factors to the development of expert systems for advanced cockpits. In Proceedings of the Human Factors Society 31st Annual Meeting. Human Factor Society, Santa Monica, CA, USA, 87–83 August 1987; pp. 1388–1392.

4. Shang, Y. Expert Systems. In The Electrical Engineering Handbook; Academic Press: Cambridge, MA, USA, 2001.

5. Van Harmelen, F.; Lifschitz, V.; Porter, B.W. (Eds.) Handbook of Knowledge Representation, 3rd ed.; Foundations of Artificial Intelligence; Elsevier: Amsterdam, The Netherlands, 2007.

6. Boose, J.H. A Survey of Knowledge Acquisition Techniques and Tools. Knowl. Acquis. 1989, 1, 3–37.

7. Meyer, M.A.; Booker, J.M. Eliciting and Analyzing Expert Judgment: A Practical Guide; Society for Industrial and Applied Mathematics: Philadelphia, PA, USA, 2001.

8. Schreiber, G.; Akkermans, H.; Anjewierden, A.; de Hoog, R.; Shadbolt, N.; Van de Velde, W.; Wielinga, B. Knowledge Engineering and Management: The CommonKADS Methodology; MIT Press: Cambridge, MA, USA, 2000.

9. Gavrilova, T.; Andreeva, T. Knowledge Elicitation Techniques in a Knowledge Management Context. J. Knowl. Manag. 2012, 16, 523–537.

10. Studer, R.; Benjamins, V.R.; Fensel, D. Knowledge Engineering: Principles and Methods. Data Knowl. Eng. 1998, 25, 161–197.

11. Renooij, S.; Witteman, C. Probability elicitation for belief networks: Issues to consider. Int. J. Approx. Reason. 1999, 22, 169–194.

12. Van der Gaag, L.; Renooij, S.; Witteman, C.; Aleman, B.; Taal, B. How to elicit many probabilities. In Proceedings of the Fifteenth Conference on Uncertainty in Artificial Intelligence, Stockholm, Sweden, 30 July–1 August 1999; pp. 647–654.

13. Das, B. Generating conditional probabilities for Bayesian Networks: Easing the knowledge acquisition problem. arXiv 2004, arXiv:cs/0411034.

14. Kemp-Benedict, E. Elicitation Techniques for Bayesian Network Models; Number Working Paper WP-US-0804; Stockholm Environment Institute: Stockholm, Sweden, 2008.

15. Wisse, B.; Van Gosliga, S.; Van Elst, N.; Barros, A. Relieving the elicitation burden of Bayesian Belief Networks. In Proceedings of the Sixth UAI Bayesian Modelling Applications Workshop, Helsinki, Finland, 9 July 2008.

16. Renooij, S. Probability elicitation for belief networks: Issues to consider. Knowl. Eng. Rev. 2001, 16, 255–269.

17. Wang, H.; Dash, D.; Druzdzel, M. A Method for Evaluating Elicitation Schemes for Probabilistic Models. IEEE Trans. Syst. Man Cybern. Part B Cybern. 2002, 32, 38–43.

18. Witteman, C.; Renooij, S. Evaluation of a verbal-numerical probability scale. Int. J. Approx. Reason. 2003, 33, 117–131.

19. Aebischer, D. Bayesian Networks for Descriptive Analytics in Military Equipment Applications; CRC Press: Taylor & Francis Group: Boca Raton, FL, USA, 2018.

20. De Rosa, F.; Jousselme, A.L.; De Gloria, A. A Reliability Game for Source Factors and Situational Awareness Experimentation. Int. J. Serious Games 2018, 5, 45–64.

21. Geurts, J.L.; Duke, R.D.; Vermeulen, P.A. Policy Gaming for Strategy and Change. Long Range Plan. 2007, 40, 535–558.

22. Deterding, S.; Sicart, M.; Nacke, L.; O’Hara, K.; Dixon, D. Gamification. Using Game-design Elements in Non-gaming Contexts. In Proceedings of the CHI ’11 Extended Abstracts on Human Factors in Computing Systems, Vancouver, BC, Canada, 7–12 May 2011; ACM: New York, NY, USA, 2011; pp. 2425–2428.

23. Ašeriškis, D.; Damaševičius, R. Gamification of a project management system. In Proceedings of the Conference on Advances in Computer-Human Interactions, Barcelona, Spain, 9 July 2014.

24. Abt, C. Serious Games; The Viking Press: New York, NY, USA, 1970.

25. Perla, P.; McGrady, E. Why Wargaming Works. Nav. War Coll. Rev. 2011, 64, 111–130.

26. Djaouti, D.; Alvarez, J.; Jessel, J.P. Classifying serious games: The G/P/S model. Handb. Res. Improv. Learn. Motiv. Through Educ. Games Multidiscip. Approaches 2011, 1, 118–136.

27. Graafland, M.; Schijven, M.P. A serious game to improve Situation Awareness in laparoscopic surgery. In Games for Health; Schouten, B., Fedtke, S., Bekker, T., Schijven, M., Gekker, A., Eds.; Springer Fachmedien Wiesbaden: Wiesbaden, Germany, 2013; pp. 173–182.

28. Sawaragi, T.; Fujii, K.; Horiguchi, Y.; Nakanishi, H. Analysis of Team Situation Awareness Using Serious Game and Constructive Model-Based Simulation. IFAC-PapersOnLine 2016, 49, 537–542.

29. Cooper, S.; Khatib, F.; Treuille, A.; Barbero, J.; Lee, J.; Beenen, M.; Leaver-Fay, A.; Baker, D.; Popovic, Z.; Players, F. Predicting protein structures with a multiplayer online game. Nature 2010, 466, 756–760.

30. Ren, Y.; Bayrak, A.E.; Papalambros, P.Y. EcoRacer: Game-based optimal electric vehicle design and driver control using human players. J. Mech. Des. 2016, 138, 061407.

31. Jousselme, A.L.; Pallotta, G.; Locke, J. Risk Game: Capturing impact of information quality on human belief assessment and decision-making. Int. J. Serious Games 2018, 5, 23–44.

32. Dean, T.; Kanazawa, K. A model for reasoning about persistence and causation. Artif. Intell. 1989, 93, 1–27.

33. Murphy, K.P. Dynamic Bayesian Networks: Representation, Inference and Learning. Ph.D. Thesis, University of California, Berkeley, Berkeley, CA, USA, 2002.

34. Koller, D.; Friedman, N. Probabilistic Graphical Models: Principles and Techniques; MIT Press: Cambridge, MA, USA, 2009.

35. Anneken, M.; de Rosa, F.; Kröker, A.; Jousselme, A.L.; Robert, S.; Beyerer, J. Detecting illegal diving and other suspicious activities in the North Sea: Tale of a successful trial. In Proceedings of the 20th International Radar Symposium, Ulm, Germany, 26–28 June 2019.

36. Margarit, G.; Nunes, A. NEREIDS D.440.2—Simulation Element Definition for Anomal Analysis; Technical Report, Deliverable of the NEREIDS Project Funded under the European Union Research Framework Programme 7; 2012.

37. Camossi, E. A Reasoned Survey of Anomaly Detection Methods for Early Maritime Domain Awareness; Technical Report JRC80902; European Commission—Joint Research Centre: Brussels, Belgium, 2013.

38. Brathwaite, B.; Schreiber, I. Challenges for Game Designers; Charles River Media: Newton, MA, USA, 2008.

39. Nacke, L.; Drachen, A.; Goebel, S. Methods for Evaluating Gameplay Experience in a Serious Gaming Context. Int. J. Comput. Sci. Sport 2010, 9, 1–12.

40. Wiemeyer, J.; Nacke, L.; Moser, C.; ‘Floyd’ Mueller, F. Player Experience. In Serious Games: Foundations, Concepts and Practice; Dörner, R., Göbel, S., Effelsberg, W., Wiemeyer, J., Eds.; Springer International Publishing: Cham, Switzerland, 2016; pp. 243–271.

41. IJsselsteijn, W.A.; de Kort, Y.A.W.; Poels, K. Game Experience Questionnaire; Technische Universiteit Eindhoven: Eindhoven, The Netherlands, 2007.

42. Petri, G.; Gresse von Wangenheim, C.; Borgatto, A.F. MEEGA+, Systematic Model to Evaluate Educational Games. In Encyclopedia of Computer Graphics and Games; Lee, N., Ed.; Springer: Cham, Switzerland, 2019.

43. Chin, J.P.; Diehl, V.A.; Norman, K.L. Development of an instrument measuring user satisfaction of the human-computer interface. In Proceedings of the SIGCHI 1988; ACM/SIGCHI: New York, NY, USA, 1988; pp. 213–218.

44. Harper, B.D.; Norman, K.L. Improving User Satisfaction: The Questionnaire for User Interaction Satisfaction Version 5.5. In Proceedings of the 1st Annual Mid-Atlantic Human Factors Conference, Virginia Beach, VA, USA, 25–26 February 1993; pp. 224–228.

45. Human Performance Research Group. NASA Task Load Index; NASA Ames Research Center: Mountain View, CA, USA, 1986.

46. Smith, G.F.; Benson, P.G.; Curley, S.P. Belief, knowledge, and uncertainty: A cognitive perspective on subjective probability. Organ. Behav. Hum. Decis. Process. 1991, 48, 291–321.

47. Game Mechanics. Available online: https://boardgamegeek.com/browse/boardgamemechanic (accessed on 19 December 2019).

48. Machinations. Available online: https://machinations.io/ (accessed on 30 December 2019).

49. Adams, E.; Dormans, J. Game Mechanics: Advanced Game Design; New Riders: San Francisco, CA, USA, 2012.

50. Rubel, R.C. The epistemology of war gaming. Nav. War Coll. Rev. 2006, 59, 108–128.

51. Peters, V.; Westelaken, M. Simulation Games—A Concise Introduction to Game Design; Samenspraak Advies: Nijmegen, The Netherlands, 2014.

52. Kurapati, S.; Kourounioti, I.; Lukosch, H.; Tavasszy, L.; Verbraeck, A. Fostering Sustainable Transportation Operations through Corridor Management: A Simulation Gaming Approach. Sustainability 2018, 10, 455.

53. Wilson, D.W.; Jenkins, J.; Twyman, N.; Jensen, M.; Valacich, J.; Dunbar, N.; Wilson, S.; Miller, C.; Adame, B.; Lee, Y.H.; et al. Serious Games: An Evaluation Framework and Case Study. In Proceedings of the 49th Hawaii International Conference on System Sciences (HICSS), Koloa, HI, USA, 5–8 January 2016.

54. Joint Systems Analysis (JSA) Group. Methods and Approaches for Warfighting Experimentation Action Group 12 (AG-12). In Guide for Understanding and Implementing Defence Experimentation (GUIDEx); Technical Cooperation Program (TTCP): Ottawa, Canada, 2006.

| Variable | Description | Frame |

|---|---|---|

| M | Message conveyed by a knowledge card | {, …, …, } |

| | Knowledge structure conveyed by a message | {, …, , …, |

| D | Variable with dependency with Q state | {, } |

| Q | Query Variable state (i.e., hypothesis) | {, } = {, } |

| Variable | Description | View |

|---|---|---|

| M | Message conveyed by a knowledge card | Provided |

| | Knowledge structure conveyed by a message | Provided |

| D | Variable with dependency with Q state | Provided |

| Q | Query Variable state (i.e., hypothesis) | Assessed |

| Game Mechanic | Description |

|---|---|

| Dice Rolling | Dice are rolled to move on the board and to determine which knowledge cards are received |

| Elapsed Real Time Ending | The game ends after a specific time has passed [47] |

| Move Through Deck | Players move through a deck of cards to reach the bottom [47] |

| Point to Point Movement | Movements on the game board can happen only between connected points [47] |

| Role Playing | Players embody characters that improve over time [47] |

| Set Collection | The players need to collect sets of items (i.e., knowledge tokens) [47] |

| Command | Players have cards that allow them to activate and perform actions (i.e., commendation cards) [47] |

| Lose a Turn | A meta-mechanism that implies that the player skips a turn [47] |

| Feature | Specification | EXP1 | EXP2 |
|---|---|---|---|
| Participants | Number (n) | 4 | 5 |
| Gender | Male | | |
| | Female | | |
| Age | Average | years | years |
| | Standard Dev. | years | years |
| Status | Law enforcement / military | | |
| | Civilian | | |
| Nationality | Italian | | |
| | Spanish | | |

| Compatible with SMG: Vessel Type | True | True | False | False |
|---|---|---|---|---|
| Compatible with SMG: Vessel Size | True | False | True | False |
| True | 0.825 | 0.525 | 0.35 | 0.1 |
| False | 0.175 | 0.475 | 0.65 | 0.9 |
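The conditional probability table above can be encoded and queried in a few lines. This is a minimal sketch under stated assumptions, not the MSDBN implementation: the child variable is taken to be "Compatible with SMG: vessel characteristics" (inferred from the variable list in Section 4), the two parents are treated as independent, and the prior values `p_type` and `p_size` are hypothetical placeholders, since no priors are given here. Function and variable names are illustrative.

```python
from itertools import product

# P(child = True | vessel_type, vessel_size), copied from the table above.
# Keys are (vessel type compatible, vessel size compatible).
P_TRUE = {
    (True, True): 0.825,
    (True, False): 0.525,
    (False, True): 0.35,
    (False, False): 0.1,
}


def child_probability(child, vessel_type, vessel_size):
    """Look up P(child | vessel_type, vessel_size) for a Boolean child state."""
    p_true = P_TRUE[(vessel_type, vessel_size)]
    return p_true if child else 1.0 - p_true


def marginal_true(p_type=0.5, p_size=0.5):
    """Marginal P(child = True), summing over both parents.

    Assumption: the parents are independent and their priors (p_type,
    p_size) are hypothetical values chosen only for illustration.
    """
    total = 0.0
    for v_type, v_size in product([True, False], repeat=2):
        weight = (p_type if v_type else 1.0 - p_type) * (
            p_size if v_size else 1.0 - p_size
        )
        total += weight * child_probability(True, v_type, v_size)
    return total


print(round(marginal_true(), 3))  # 0.45 with uniform placeholder priors
```

Storing only the `True` row is sufficient because each column of the table sums to 1, so the `False` row follows as the complement.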

© 2020 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).

Share and Cite

de Rosa, F.; De Gloria, A. An Analytical Game for Knowledge Acquisition for Maritime Behavioral Analysis Systems. Appl. Sci. 2020, 10, 591. https://doi.org/10.3390/app10020591
