The Application of Artificial Intelligence in Prostate Cancer Management—What Improvements Can Be Expected? A Systematic Review

Abstract

1. Introduction

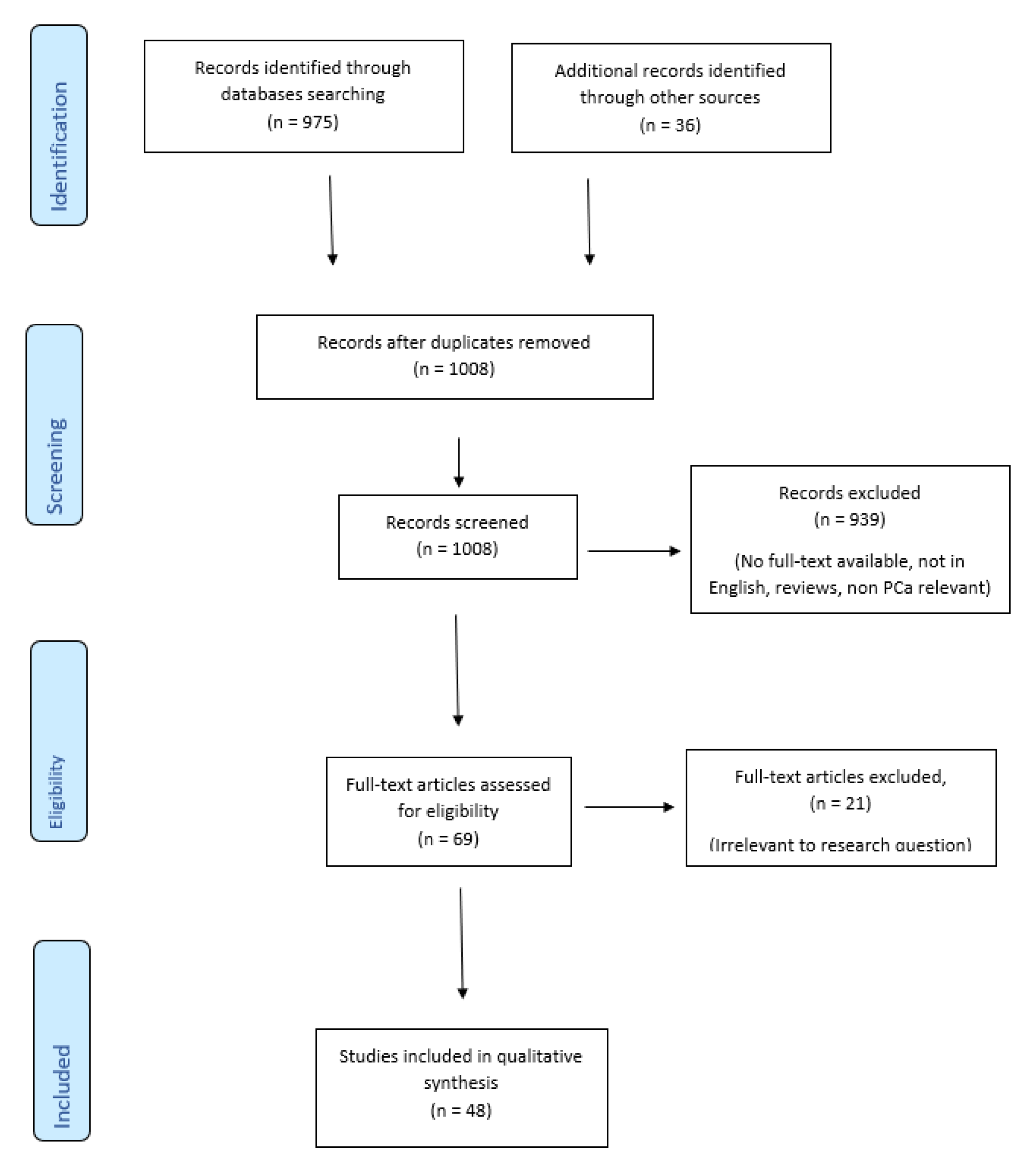

2. Materials and Methods

2.1. Ethical Consideration

2.2. Study Selection

2.3. Search Strategy

3. Results

3.1. Diagnosis

3.1.1. Genomics

3.1.2. Imaging and Radiomics

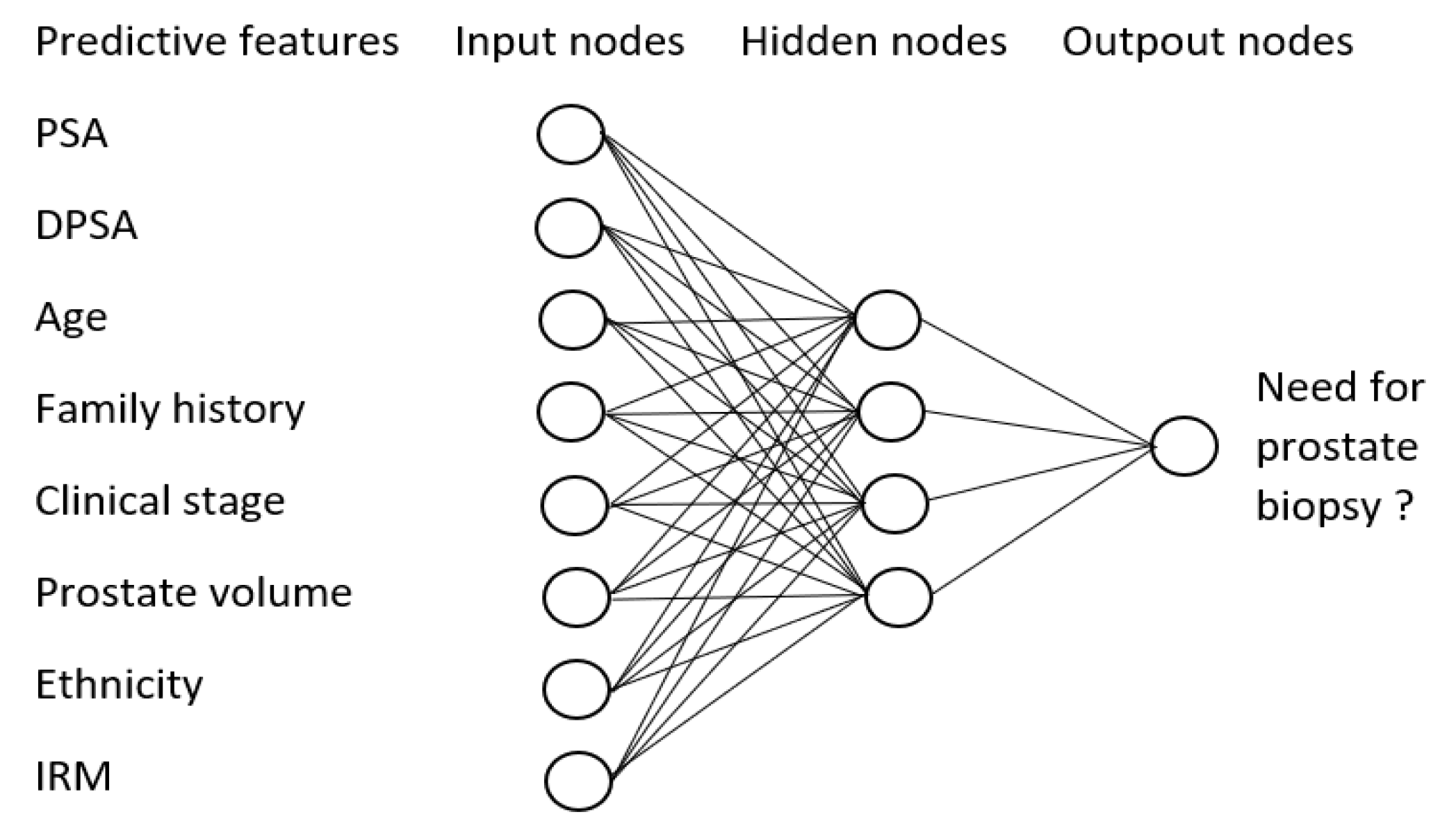

3.1.3. Biopsies

3.2. Pathology

3.3. Treatment

3.3.1. Decision Making

3.3.2. Surgery

3.3.3. Radiotherapy

3.3.4. Medication

3.4. Oncological Outcomes

4. Discussion

Review Limitation

5. Conclusions and Perspectives

Author Contributions

Funding

Conflicts of Interest

Ethical Approval

References

- Kaplan, A.M.; Haenlein, M. Siri, Siri, in my hand: Who’s the fairest in the land? On the interpretations, illustrations, and implications of artificial intelligence. Bus. Horiz. 2019, 62, 15–25. [Google Scholar] [CrossRef]

- Tran, B.X.; Vu, G.T.; Ha, G.H.; Vuong, Q.H.; Ho, T.M.; Vuong, T.T.; La, V.P.; Nghiem, K.C.P.; Le, H.T.; Latkin, C.A.; et al. Global evolution of research in artificial intelligence in health and medicine: A bibliometric study. J. Clin. Med. 2019, 8, 360. [Google Scholar] [CrossRef] [PubMed]

- Appenzeller, T. The AI Revolution in Science; Science AAAS: Washington, DC, USA, 2017; Available online: https://www.sciencemag.org/news/2017/07/ai-revolution-science (accessed on 9 January 2020).

- Ghahramani, Z. Probabilistic machine learning and artificial intelligence. Nature 2015, 521, 452–459. [Google Scholar] [CrossRef] [PubMed]

- Jordan, M.I.; Mitchell, T.M. Machine learning: Trends, perspectives, and prospects. Science 2015, 349, 255–260. [Google Scholar] [CrossRef] [PubMed]

- Noble, W.S. What is a support vector machine? Nat. Biotechnol. 2006, 24, 1565–1567. [Google Scholar] [CrossRef] [PubMed]

- Bishop, C.M. Pattern Recognition and Machine Learning; CERN Document Server: New York, NY, USA, 2006; Available online: https://cds.cern.ch/record/998831 (accessed on 30 January 2020).

- Breiman, L. Random forests. Mach. Learn. 2001, 45, 5–32. [Google Scholar] [CrossRef]

- Anagnostou, T.; Remzi, M.; Lykourinas, M.; Djavan, B. Artificial neural networks for decision-making in urologic oncology. Eur. Urol. 2003, 43, 596–603. [Google Scholar] [CrossRef][Green Version]

- LeCun, Y.; Bengio, Y.; Hinton, G. Deep learning. Nature 2015, 521, 436–444. [Google Scholar] [CrossRef] [PubMed]

- Karimi, D.; Samei, G.; Kesch, C.; Nir, G.; Salcudean, T. Prostate segmentation in MRI using a convolutional neural network architecture and training strategy based on statistical shape models. Int. J. Comput. Assist. Radiol. Surg. 2018, 13, 1211–1219. [Google Scholar] [CrossRef] [PubMed]

- Brinker, T.J.; Hekler, A.; Utikal, J.; Grabe, N.; Schadendorf, D.; Klode, J.; Berking, C.; Steeb, T.; Enk, A.H.; Von Kalle, C.; et al. Skin cancer classification using convolutional neural networks: Systematic review. J. Med. Internet Res. 2018, 20, e11936. [Google Scholar] [CrossRef] [PubMed]

- Abbod, M.F.; Catto, J.; Linkens, D.A.; Hamdy, F.C. Application of artificial intelligence to the management of urological cancer. J. Urol. 2007, 178, 1150–1156. [Google Scholar] [CrossRef]

- Goldenberg, S.L.; Nir, G.; Salcudean, S.E. A new era: Artificial intelligence and machine learning in prostate cancer. Nat. Rev. Urol. 2019, 16, 391–403. [Google Scholar] [CrossRef] [PubMed]

- Suarez-Ibarrola, R.; Hein, S.; Reis, G.; Gratzke, C.; Miernik, A. Current and future applications of machine and deep learning in urology: A review of the literature on urolithiasis, renal cell carcinoma, and bladder and prostate cancer. World J. Urol. 2019. [Google Scholar] [CrossRef] [PubMed]

- Moher, D.; Shamseer, L.; Clarke, M.J.; Ghersi, D.; Liberati, A.; Petticrew, M.P.; Shekelle, P.G.; Stewart, L. Preferred reporting items for systematic review and meta-analysis protocols (PRISMA-P) 2015 statement. Syst. Rev. 2015, 4, 1. [Google Scholar] [CrossRef] [PubMed]

- Brönimann, S.; Pradere, B.; Karakiewicz, P.; Abufaraj, M.; Briganti, A.; Shariat, S.F. An overview of current and emerging diagnostic, staging and prognostic markers for prostate cancer. Expert Rev. Mol. Diagn. 2020, 1–10. [Google Scholar] [CrossRef]

- MacInnis, R.J.; Schmidt, D.F.; Makalic, E.; Severi, G.; Fitzgerald, L.M.; Reumann, M.; Kapuscinski, M.K.; Kowalczyk, A.; Zhou, Z.; Goudey, B.; et al. Use of a novel nonparametric version of DEPTH to identify genomic regions associated with prostate cancer risk. Cancer Epidemiol. Biomark. Prev. 2016, 25, 1619–1624. [Google Scholar] [CrossRef]

- Hou, Q.; Bing, Z.T.; Hu, C.; Li, M.Y.; Yang, K.H.; Mo, Z.; Xie, X.W.; Liao, J.L.; Lu, Y.; Horie, S.; et al. RankProd combined with genetic algorithm optimized artificial neural network establishes a diagnostic and prognostic prediction model that revealed C1QTNF3 as a biomarker for prostate cancer. EBioMedicine 2018, 32, 234–244. Available online: https://www.ncbi.nlm.nih.gov/pubmed/29861410 (accessed on 5 December 2019). [CrossRef]

- Ishioka, J.; Matsuoka, Y.; Uehara, S.; Yasuda, Y.; Kijima, T.; Yoshida, S.; Yokoyama, M.; Saito, K.; Kihara, K.; Numao, N.; et al. Computer-aided diagnosis of prostate cancer on magnetic resonance imaging using a convolutional neural network algorithm. BJU Int. 2018, 122, 411–417. [Google Scholar] [CrossRef]

- Vos, P.C.; Hambrock, T.; Barenstz, J.O.; Huisman, H.J. Computer-assisted analysis of peripheral zone prostate lesions using T2-weighted and dynamic contrast enhanced T1-weighted MRI. Phys. Med. Biol. 2010, 55, 1719–1734. [Google Scholar] [CrossRef]

- Niaf, E.; Rouvière, O.; Mège-Lechevallier, F.; Bratan, F.; Lartizien, C. Computer-aided diagnosis of prostate cancer in the peripheral zone using multiparametric MRI. Phys. Med. Biol. 2012, 57, 3833–3851. [Google Scholar] [CrossRef]

- Giannini, V.; Mazzetti, S.; Vignati, A.; Russo, F.; Bollito, E.; Porpiglia, F.; Stasi, M.; Regge, D. A fully automatic computer aided diagnosis system for peripheral zone prostate cancer detection using multi-parametric magnetic resonance imaging. Comput. Med. Imaging Graph. 2015, 46, 219–226. [Google Scholar] [CrossRef] [PubMed]

- Rampun, A.; Chen, Z.; Malcolm, P.; Tiddeman, B.P.; Zwiggelaar, R. Computer-aided diagnosis: Detection and localization of prostate cancer within the peripheral zone. Int. J. Numer. Method Biomed. Eng. 2015, 32, e02745. [Google Scholar] [CrossRef]

- Wang, X.; Yang, W.; Weinreb, J.; Han, J.; Li, Q.; Kong, X.; Yan, Y.; Ke, Z.; Luo, B.; Liu, T.; et al. Searching for prostate cancer by fully automated magnetic resonance imaging classification: Deep learning versus non-deep learning. Sci. Rep. 2017, 7, 15415. [Google Scholar] [CrossRef]

- Song, Y.; Yan, X.; Liu, H.; Zhou, M.; Hu, B.; Yang, G.; Zhang, Y.-D. Computer-aided diagnosis of prostate cancer using a deep convolutional neural network from multiparametric MRI. J. Magn. Reson. Imaging 2018, 48, 1570–1577. [Google Scholar] [CrossRef]

- Matulewicz, L.; Jansen, J.; Bokacheva, L.; Vargas, H.A.; Akin, O.; Fine, S.W.; Shukla-Dave, A.; Eastham, J.A.; Hricak, H.; Koutcher, J.A.; et al. Anatomic segmentation improves prostate cancer detection with artificial neural networks analysis of1H magnetic resonance spectroscopic imaging. J. Magn. Reson. Imaging 2013, 40, 1414–1421. [Google Scholar] [CrossRef]

- Zhao, K.; Wang, C.; Hu, J.; Yang, X.; Wang, H.; Li, F.Y.; Zhang, X.; Zhang, J.; Wang, X. Prostate cancer identification: Quantitative analysis of T2-weighted MR images based on a back propagation artificial neural network model. Sci. Chin. Life Sci. 2015, 58, 666–673. [Google Scholar] [CrossRef]

- Betrouni, N.; Makni, N.; Lakroum, S.; Mordon, S.; Villers, A.; Puech, P. Computer-aided analysis of prostate multiparametric MR images: An unsupervised fusion-based approach. Int. J. Comput. Assist. Radiol. Surg. 2015, 10, 1515–1526. [Google Scholar] [CrossRef]

- Bonekamp, D.; Kohl, S.; Wiesenfarth, M.; Schelb, P.; Radtke, J.P.; Götz, M.; Kickingereder, P.; Yaqubi, K.; Hitthaler, B.; Gählert, N.; et al. Radiomic machine learning for characterization of prostate lesions with MRI: Comparison to ADC values. Radiology 2018, 289, 128–137. [Google Scholar] [CrossRef]

- Viswanath, S.; Chirra, P.V.; Yim, M.C.; Rofsky, N.M.; Purysko, A.; Rosen, M.; Bloch, B.N.; Madabhushi, A. Comparing radiomic classifiers and classifier ensembles for detection of peripheral zone prostate tumors on T2-weighted MRI: A multi-site study. BMC Med. Imaging 2019, 19, 22–28. [Google Scholar] [CrossRef]

- Le, M.H.; Chen, J.; Wang, L.; Wang, Z.; Liu, W.; Cheng, K.T.; Yang, X. Automated diagnosis of prostate cancer in multi-parametric MRI based on multimodal convolutional neural networks. Phys. Med. Biol. 2017, 62, 6497–6514. [Google Scholar] [CrossRef]

- Li, J.; Weng, Z.; Xu, H.; Zhang, Z.; Miao, H.; Chen, W.; Liu, Z.; Zhang, X.; Wang, M.; Xu, X.; et al. Support Vector Machines (SVM) classification of prostate cancer Gleason score in central gland using multiparametric magnetic resonance images: A cross-validated study. Eur. J. Radiol. 2018, 98, 61–67. [Google Scholar] [CrossRef] [PubMed]

- Wang, J.; Wu, C.J.; Bao, M.L.; Zhang, J.; Wang, X.N.; Zhang, Y.D. Machine learning-based analysis of MR radiomics can help to improve the diagnostic performance of PI-RADS v2 in clinically relevant prostate cancer. Eur. Radiol. 2017, 27, 4082–4090. [Google Scholar] [CrossRef] [PubMed]

- Weinreb, J.; Barentsz, J.O.; Choyke, P.L.; Cornud, F.; Haider, M.A.; Macura, K.J.; Margolis, D.; Schnall, M.D.; Shtern, F.; Tempany, C.M.; et al. PI-RADS prostate imaging—Reporting and data system: 2015, Version 2. Eur. Urol. 2015, 69, 16–40. [Google Scholar] [CrossRef]

- Fehr, D.; Veeraraghavan, H.; Wibmer, A.; Gondo, T.; Matsumoto, K.; Vargas, H.A.; Sala, E.; Hricak, H.; Deasy, J. Automatic classification of prostate cancer Gleason scores from multiparametric magnetic resonance images. Proc. Natl. Acad. Sci. USA 2015, 112, E6265–E6273. [Google Scholar] [CrossRef]

- Min, X.; Li, M.; Dong, D.; Feng, Z.; Zhang, P.; Ke, Z.; You, H.; Han, F.; Ma, H.; Tian, J.; et al. Multi-parametric MRI-based radiomics signature for discriminating between clinically significant and insignificant prostate cancer: Cross-validation of a machine learning method. Eur. J. Radiol. 2019, 115, 16–21. [Google Scholar] [CrossRef]

- Azizi, S.; Bayat, S.; Yan, P.; Tahmasebi, A.; Kwak, J.T.; Xu, S.; Turkbey, B.; Choyke, P.; Pinto, P.; Wood, B.; et al. Deep recurrent neural networks for prostate cancer detection: Analysis of temporal enhanced ultrasound. IEEE Trans. Med. Imaging 2018, 37, 2695–2703. [Google Scholar] [CrossRef]

- Koizumi, M.; Motegi, K.; Koyama, M.; Terauchi, T.; Yuasa, T.; Yonese, J. Diagnostic performance of a computer-assisted diagnosis system for bone scintigraphy of newly developed skeletal metastasis in prostate cancer patients: Search for low-sensitivity subgroups. Ann. Nucl. Med. 2017, 31, 521–528. [Google Scholar] [CrossRef]

- Acar, E.; Leblebici, A.; Ellidokuz, B.E.; Başbınar, Y.; Kaya, G. Çapa Machine learning for differentiating metastatic and completely responded sclerotic bone lesion in prostate cancer: A retrospective radiomics study. Br. J. Radiol. 2019, 92, 20190286. [Google Scholar] [CrossRef]

- Lawrentshuk, N.; Lockwood, G.; Davies, P.; Evans, A.; Sweet, J.; Toi, A.; Fleshner, N.E. Predicting prostate biopsy outcome: Artificial neural networks and polychotomous regression are equivalent models. Int. Urol. Nephrol. 2010, 43, 23–30. [Google Scholar] [CrossRef] [PubMed]

- Takeuchi, T.; Hattori-Kato, M.; Okuno, Y.; Iwai, S.; Mikami, K. Prediction of prostate cancer by deep learning with multilayer artificial neural network. Can. Urol. Assoc. J. 2018, 13, E145–E150. [Google Scholar] [CrossRef]

- Kim, B.J.; Merchant, M.; Zheng, C.; Thomas, A.A.; Contreras, R.; Jacobsen, S.J.; Chien, G.W. Second prize: A natural language processing program effectively extracts key pathologic findings from radical prostatectomy reports. J. Endourol. 2014, 28, 1474–1478. [Google Scholar] [CrossRef] [PubMed]

- Nir, G.; Hor, S.; Karimi, D.; Fazli, L.; Skinnider, B.F.; Tavassoli, P.; Turbin, D.; Villamil, C.F.; Wang, G.; Wilson, R.S.; et al. Automatic grading of prostate cancer in digitized histopathology images: Learning from multiple experts. Med. Image Anal. 2018, 50, 167–180. [Google Scholar] [CrossRef] [PubMed]

- Gorelick, L.; Veksler, O.; Gaed, M.; Gomez, J.A.; Moussa, M.; Bauman, G.S.; Fenster, A.; Ward, A.D. Prostate histopathology: Learning tissue component histograms for cancer detection and classification. IEEE Trans. Med. Imaging 2013, 32, 1804–1818. [Google Scholar] [CrossRef] [PubMed]

- Nguyen, T.H.; Sridharan, S.; Macias, V.; Kajdacsy-Balla, A.; Melamed, J.; Do, M.N.; Popescu, G. Automatic Gleason grading of prostate cancer using quantitative phase imaging and machine learning. J. Biomed. Opt. 2017, 22, 36015. [Google Scholar] [CrossRef]

- Lucas, M.; Jansen, I.; Savci-Heijink, C.D.; Meijer, S.L.; De Boer, O.J.; Van Leeuwen, T.G.; De Bruin, D.M.; Marquering, H.A. Deep learning for automatic Gleason pattern classification for grade group determination of prostate biopsies. Virchows Archiv. 2019, 475, 77–83. [Google Scholar] [CrossRef]

- Arvaniti, E.; Fricker, K.S.; Moret, M.; Rupp, N.; Hermanns, T.; Fankhauser, C.D.; Wey, N.; Wild, P.J.; Rueschoff, J.H.; Claassen, M. Automated Gleason grading of prostate cancer tissue microarrays via deep learning. Sci. Rep. 2018, 8, 12054. [Google Scholar] [CrossRef]

- Kim, S.Y.; Moon, S.K.; Jung, D.C.; Hwang, S.I.; Sung, C.K.; Cho, J.Y.; Kim, S.H.; Lee, J.; Lee, H.J. Pre-operative prediction of advanced prostatic cancer using clinical decision support systems: Accuracy comparison between support vector machine and artificial neural network. Korean J. Radiol. 2011, 12, 588–594. [Google Scholar] [CrossRef]

- Tsao, C.W.; Liu, C.Y.; Cha, T.L.; Wu, S.T.; Sun, G.H.; Yu, D.S.; Chen, H.I.; Chang, S.Y.; Chen, S.C.; Hsu, M.H. Artificial neural network for predicting pathological stage of clinically localized prostate cancer in a Taiwanese population. J. Chin. Med. Assoc. 2014, 77, 513–518. [Google Scholar] [CrossRef][Green Version]

- Wang, J.; Wu, C.J.; Bao, M.L.; Zhang, J.; Shi, H.B.; Zhang, Y.D. Using support vector machine analysis to assess PartinMR: A new prediction model for organ-confined prostate cancer. J. Magn. Reson. Imaging 2018, 48, 499–506. [Google Scholar] [CrossRef]

- Auffenberg, G.B.; Ghani, K.R.; Ramani, S.; Usoro, E.; Denton, B.; Rogers, C.; Stockton, B.; Miller, D.C.; Singh, K. askMUSIC: Leveraging a clinical registry to develop a new machine learning model to inform patients of prostate cancer treatments chosen by similar men. Eur. Urol. 2019, 75, 901–907. [Google Scholar] [CrossRef]

- Ukimura, O.; Aroni, M.; Nakamoto, M.; Shoji, S.; Abreu, A.L.; Matsugasumi, T.; Berger, A.; Desai, M.; Gill, I.S. Three-dimensional surgical navigation model with TilePro display during robot-assisted radical prostatectomy. J. Endourol. 2014, 28, 625–630. [Google Scholar] [CrossRef] [PubMed]

- Hung, A.J.; Chen, J.; Jarc, A.; Hatcher, D.; Djaladat, H.; Gill, I.S. Development and validation of objective performance metrics for robot-assisted radical prostatectomy: A pilot study. J. Urol. 2018, 199, 296–304. [Google Scholar] [CrossRef] [PubMed]

- Hung, A.J.; Chen, J.; Che, Z.; Nilanon, T.; Jarc, A.; Titus, M.; Oh, P.; Gill, I.S.; Liu, Y. Utilizing machine learning and automated performance metrics to evaluate robot-assisted radical prostatectomy performance and predict outcomes. J. Endourol. 2018, 32, 438–444. [Google Scholar] [CrossRef] [PubMed]

- Hung, A.J.; Chen, J.; Ghodoussipour, S.; Oh, P.J.; Liu, Z.; Nguyen, J.; Purushotham, S.; Gill, I.S.; Liu, Y. A deep-learning model using automated performance metrics and clinical features to predict urinary continence recovery after robot-assisted radical prostatectomy. BJU Int. 2019, 124, 487–495. [Google Scholar] [CrossRef]

- Ranasinghe, W.K.B.; De Silva, D.; Bandaragoda, T.; Adikari, A.; Alahakoon, D.; Persad, R.; Lawrentshuk, N.; Bolton, D. Robotic-assisted vs. open radical prostatectomy: A machine learning framework for intelligent analysis of patient-reported outcomes from online cancer support groups. Urol. Oncol. Semin. Orig. Investig. 2018, 36, 529.e1–529.e9. [Google Scholar] [CrossRef]

- Nicolae, A.; Morton, G.; Chung, H.; Loblaw, A.; Jain, S.; Mitchell, D.; Lu, L.; Helou, J.; Al-Hanaqta, M.; Heath, E.; et al. Evaluation of a machine-learning algorithm for treatment planning in prostate low-dose-rate brachytherapy. Int. J. Radiat. Oncol. 2017, 97, 822–829. [Google Scholar] [CrossRef]

- Kajikawa, T.; Kadoya, N.; Ito, K.; Takayama, Y.; Chiba, T.; Tomori, S.; Takeda, K.; Jingu, K. Automated prediction of dosimetric eligibility of patients with prostate cancer undergoing intensity-modulated radiation therapy using a convolutional neural network. Radiol. Phys. Technol. 2018, 11, 320–327. [Google Scholar] [CrossRef]

- Lee, S.; Kerns, S.; Ostrer, H.; Rosenstein, B.; Deasy, J.; Oh, J.H. Machine learning on a genome-wide association study to predict late genitourinary toxicity after prostate radiation therapy. Int. J. Radiat. Oncol. 2018, 101, 128–135. [Google Scholar] [CrossRef]

- Oh, J.H.; Kerns, S.; Ostrer, H.; Powell, S.N.; Rosenstein, B.; Deasy, J. Computational methods using genome-wide association studies to predict radiotherapy complications and to identify correlative molecular processes. Sci. Rep. 2017, 7, 43381. [Google Scholar] [CrossRef]

- Pella, A.; Cambria, R.; Riboldi, M.; Jereczek-Fossa, B.A.; Fodor, C.; Zerini, D.; Torshabi, A.E.; Cattani, F.; Garibaldi, C.; Pedroli, G.; et al. Use of machine learning methods for prediction of acute toxicity in organs at risk following prostate radiotherapy. Med. Phys. 2011, 38, 2859–2867. [Google Scholar] [CrossRef]

- Heintzelman, N.H.; Taylor, R.J.; Simonsen, L.; Lustig, R.; Anderko, D.; Haythornthwaite, J.A.; Childs, L.C.; Bova, G.S. Longitudinal analysis of pain in patients with metastatic prostate cancer using natural language processing of medical record text. J. Am. Med. Inform. Assoc. 2013, 20, 898–905. [Google Scholar] [CrossRef] [PubMed]

- Zhang, Y.D.; Wang, J.; Wu, C.J.; Bao, M.L.; Li, H.; Wang, X.N.; Tao, J.; Shi, H.B. An imaging-based approach predicts clinical outcomes in prostate cancer through a novel support vector machine classification. Oncotarget 2016, 7, 78140–78151. [Google Scholar] [CrossRef] [PubMed]

- Wong, N.C.; Lam, C.J.; Patterson, L.; Shayegan, B. Use of machine learning to predict early biochemical recurrence after robot-assisted prostatectomy. BJU Int. 2018, 123, 51–57. [Google Scholar] [CrossRef] [PubMed]

- Abdollahi, H.; Mofid, B.; Shiri, I.; Razzaghdoust, A.; Saadipoor, A.; Mahdavi, A.; Galandooz, H.M.; Mahdavi, S.R.; Moid, B. Machine learning-based radiomic models to predict intensity-modulated radiation therapy response, Gleason score and stage in prostate cancer. Radiol. Med. 2019, 124, 555–567. [Google Scholar] [CrossRef]

- Golub, T.; Slonim, D.K.; Tamayo, P.; Huard, C.; Gaasenbeek, M.; Mesirov, J.P.; Coller, H.; Loh, M.L.; Downing, J.R.; Caligiuri, M.A.; et al. Molecular classification of cancer: Class discovery and class prediction by gene expression monitoring. Science 1999, 286, 531–537. [Google Scholar] [CrossRef]

- Fogel, A.L.; Kvedar, J.C. Artificial intelligence powers digital medicine. NPJ Digit. Med. 2018, 1, 1–4. [Google Scholar] [CrossRef]

- Libbrecht, M.W.; Noble, W.S. Machine learning applications in genetics and genomics. Nat. Rev. Genet. 2015, 16, 321–332. [Google Scholar] [CrossRef]

- Xu, J.; Yang, P.; Xue, S.; Sharma, B.; Sanchez-Martin, M.; Wang, F.; Beaty, K.A.; Dehan, E.; Parikh, B. Translating cancer genomics into precision medicine with artificial intelligence: Applications, challenges and future perspectives. Qual. Life Res. 2019, 138, 109–124. [Google Scholar] [CrossRef]

- Golkov, V.; Skwark, M.J.; Golkov, A.; Dosovitskiy, A.; Brox, T.; Meiler, J.; Cremers, D. Protein contact prediction from amino acid co-evolution using convolutional networks for graph-valued images. In Advances in Neural Information Processing Systems, Proceedings of the 30th Conference on Neural Information Processing Systems (NIPS 2016); Lee, D.D., Sugiyama, M., Luxburg, U.V., Guyon, I., Garnett, R., Eds.; Curran Associates Inc.: Barcelona, Spain, 2016; pp. 4222–4230. [Google Scholar]

- Theofilatos, K.; Pavlopoulou, N.; Papasavvas, C.A.; Likothanassis, S.; Dimitrakopoulos, C.; Georgopoulos, E.; Moschopoulos, C.; Mavroudi, S. Predicting protein complexes from weighted protein–protein interaction graphs with a novel unsupervised methodology: Evolutionary enhanced Markov clustering. Artif. Intell. Med. 2015, 63, 181–189. [Google Scholar] [CrossRef]

- Rapakoulia, T.; Theofilatos, K.; Kleftogiannis, D.; Likothanasis, S.; Tsakalidis, A.; Mavroudi, S. EnsembleGASVR: A novel ensemble method for classifying missense single nucleotide polymorphisms. Bioinformatics 2014, 30, 2324–2333. [Google Scholar] [CrossRef]

- Fairweather, M.; Raut, C.P. To biopsy, or not to biopsy: Is there really a question? Ann. Surg. Oncol. 2019, 26, 4182–4184. [Google Scholar] [CrossRef] [PubMed]

- Heidenreich, A.; Bellmunt, J.; Bolla, M.; Joniau, S.; Mason, M.; Matveev, V.; Mottet, N.; Schmid, H.-P.; Van Der Kwast, T.; Wiegel, T.; et al. EAU guidelines on prostate cancer. Part 1: Screening, diagnosis, and treatment of clinically localised disease. Eur. Urol. 2011, 59, 61–71. [Google Scholar] [CrossRef] [PubMed]

- Doyle, S.; Hwang, M.; Shah, K.; Madabhushi, A.; Feldman, M.; Tomaszeweski, J. Automated grading of prostate cancer using architectural and textural image features. In Proceedings of the 4th IEEE International Symposium on Biomedical Imaging: From Nano to Macro, Arlington, VA, USA, 12–15 April 2007; pp. 1284–1287. [Google Scholar] [CrossRef]

- Vennalaganti, P.R.; Kanakadandi, V.N.; Gross, S.A.; Parasa, S.; Wang, K.K.; Gupta, N.; Sharma, P. Inter-observer agreement among pathologists using wide-area transepithelial sampling with computer-assisted analysis in patients with Barrett’s esophagus. Am. J. Gastroenterol. 2015, 110, 1257–1260. [Google Scholar] [CrossRef]

- Bejnordi, B.E.; Veta, M.; Van Diest, P.J.; Van Ginneken, B.; Karssemeijer, N.; Litjens, G.; Van Der Laak, J.A.W.M. The CAMELYON16 Consortium Diagnostic assessment of deep learning algorithms for detection of lymph node metastases in women with breast cancer. JAMA 2017, 318, 2199–2210. [Google Scholar] [CrossRef] [PubMed]

- Kumar, V.; Gu, Y.; Basu, S.; Berglund, A.; Eschrich, S.A.; Schabath, M.B.; Forster, K.; Aerts, H.J.; Dekker, A.; Fenstermacher, D.; et al. Radiomics: The process and the challenges. Magn. Reson. Imaging 2012, 30, 1234–1248. [Google Scholar] [CrossRef] [PubMed]

- Peeken, J.C.; Bernhofer, M.; Wiestler, B.; Goldberg, T.; Cremers, D.; Rost, B.; Wilkens, J.J.; Combs, S.E.; Nüsslin, F. Radiomics in radiooncology—Challenging the medical physicist. Phys. Med. 2018, 48, 27–36. [Google Scholar] [CrossRef]

- Gillies, R.J.; Kinahan, P.E.; Hricak, H. Radiomics: Images are more than pictures, they are data. Radiology 2016, 278, 563–577. [Google Scholar] [CrossRef]

- Stoyanova, R.; Takhar, M.; Tschudi, Y.; Ford, J.C.; Solórzano, G.; Erho, N.; Balagurunathan, Y.; Punnen, S.; Davicioni, E.; Gillies, R.J.; et al. Prostate cancer radiomics and the promise of radiogenomics. Transl. Cancer Res. 2016, 5, 432–447. [Google Scholar] [CrossRef]

- Lambin, P.; Van Stiphout, R.G.P.M.; Starmans, M.H.W.; Rios-Velazquez, E.; Nalbantov, G.; Aerts, H.J.W.L.; Roelofs, E.; Van Elmpt, W.; Boutros, P.C.; Granone, P.; et al. Predicting outcomes in radiation oncology—Multifactorial decision support systems. Nat. Rev. Clin. Oncol. 2012, 10, 27–40. [Google Scholar] [CrossRef]

- Fave, X.; Zhang, L.; Yang, J.; Mackin, D.; Balter, P.; Gomez, D.; Followill, D.; Jones, A.K.; Stingo, F.C.; Liao, Z.; et al. Delta-radiomics features for the prediction of patient outcomes in non-small cell lung cancer. Sci. Rep. 2017, 7, 1–11. [Google Scholar] [CrossRef]

- Porpiglia, F.; Fiori, C.; Checcucci, E.; Amparore, D.; Bertolo, R. Augmented reality robot-assisted radical prostatectomy: Preliminary experience. Urology 2018, 115, 184. [Google Scholar] [CrossRef] [PubMed]

- Porpiglia, F.; Checcucci, E.; Amparore, D.; Autorino, R.; Piana, A.; Bellin, A.; Piazzolla, P.; Massa, F.; Bollito, E.; Gned, D.; et al. Augmented-reality robot-assisted radical prostatectomy using hyper-accuracy three-dimensional reconstruction (HA3D™) technology: A radiological and pathological study. BJU Int. 2018, 123, 834–845. [Google Scholar] [CrossRef] [PubMed]

- Fida, B.; Cutolo, F.; Di Franco, G.; Ferrari, M.; Ferrari, V. Augmented reality in open surgery. Updat. Surg. 2018, 70, 389–400. [Google Scholar] [CrossRef] [PubMed]

- Feußner, H.; Ostler, D.; Wilhelm, D. Robotics and augmented reality: Current state of development and future perspectives. Chirurg 2018, 89, 760–768. [Google Scholar] [CrossRef]

- Wake, N.; Bjurlin, M.A.; Rostami, P.; Chandarana, H.; Huang, W.C. Three-dimensional printing and augmented reality: Enhanced precision for robotic assisted partial nephrectomy. Urology 2018, 116, 227–228. [Google Scholar] [CrossRef]

- Pessaux, P.; Diana, M.; Soler, L.; Piardi, T.; Mutter, D.; Marescaux, J. Robotic duodenopancreatectomy assisted with augmented reality and real-time fluorescence guidance. Surg. Endosc. 2014, 28, 2493–2498. [Google Scholar] [CrossRef]

- Pessaux, P.; Diana, M.; Soler, L.; Piardi, T.; Mutter, D.; Marescaux, J. Towards cybernetic surgery: Robotic and augmented reality-assisted liver segmentectomy. Langenbecks Arch. Surg. 2014, 400, 381–385. [Google Scholar] [CrossRef]

- Tang, R.; Ma, L.F.; Rong, Z.X.; Li, M.D.; Zeng, J.P.; Wang, X.D.; Liao, H.; Dong, J. Augmented reality technology for preoperative planning and intraoperative navigation during hepatobiliary surgery: A review of current methods. HBPD Int. 2018, 17, 101–112. [Google Scholar] [CrossRef]

- Lin, L.; Shi, Y.; Tan, A.; Bogari, M.; Zhu, M.; Xin, Y.; Xu, H.; Zhang, Y.; Xie, L.; Chai, G. Mandibular angle split osteotomy based on a novel augmented reality navigation using specialized robot-assisted arms—A feasibility study. J. Cranio Maxillofac. Surg. 2016, 44, 215–223. [Google Scholar] [CrossRef]

- Pratt, P.; Arora, A. Transoral robotic surgery: Image guidance and augmented reality. ORL J. 2018, 80, 204–212. [Google Scholar] [CrossRef]

- Ewurum, C.H.; Guo, Y.; Pagnha, S.; Feng, Z.; Luo, X. Surgical navigation in orthopedics: Workflow and system review. Adv. Exp. Med. Biol. 2018, 1093, 47–63. [Google Scholar] [CrossRef] [PubMed]

- Fard, M.J.; Ameri, S.; Ellis, R.D.; Chinnam, R.B.; Pandya, A.K.; Klein, M.D. Automated robot-assisted surgical skill evaluation: Predictive analytics approach. Int. J. Med. Robot. Comput. Assist. Surg. 2018, 14, e1850. [Google Scholar] [CrossRef] [PubMed]

- Wang, Z.; Fey, A.M. Deep learning with convolutional neural network for objective skill evaluation in robot-assisted surgery. Int. J. Comput. Assist. Radiol. Surg. 2018, 13, 1959–1970. [Google Scholar] [CrossRef]

- Fawaz, H.I.; Forestier, G.; Weber, J.; Idoumghar, L.; Muller, P.A. Accurate and interpretable evaluation of surgical skills from kinematic data using fully convolutional neural networks. Int. J. Comput. Assist. Radiol. Surg. 2019, 14, 1611–1617. [Google Scholar] [CrossRef] [PubMed]

- Kassahun, Y.K.; Yu, B.; Tibebu, A.T.; Stoyanov, D.; Giannarou, S.; Metzen, J.H.; Poorten, E.V. Surgical robotics beyond enhanced dexterity instrumentation: A survey of machine learning techniques and their role in intelligent and autonomous surgical actions. Int. J. Comput. Assist. Radiol. Surg. 2015, 11, 553–568. [Google Scholar] [CrossRef]

- Hutson, M. Missing Data Hinder Replication of Artificial Intelligence Studies; Science AAAS: Washington, DC, USA, 2018; Available online: https://www.sciencemag.org/news/2018/02/missing-data-hinder-replication-artificial-intelligence-studies (accessed on 9 January 2020).

- Lipton, Z.C. The doctor just won’t accept that! arXiv 2017, arXiv:1711.08037. [Google Scholar]

- Watson, D.S.; Krutzinna, J.; Bruce, I.N.; Griffiths, C.E.; McInnes, I.B.; Barnes, M.R.; Floridi, L. Clinical applications of machine learning algorithms: Beyond the black box. BMJ 2019, 364, l886. [Google Scholar] [CrossRef]

- Cussenot, O.; Valeri, A.; Berthon, P.; Fournier, G.; Mangin, P. Hereditary prostate cancer and other genetic predispositions to prostate cancer. Urol. Int. 1998, 60, 30–34. [Google Scholar] [CrossRef]

- Keane, P.; Topol, E.J. With an eye to AI and autonomous diagnosis. NPJ Digit. Med. 2018, 1, 40. [Google Scholar] [CrossRef]

- Eifler, J.B.; Feng, Z.; Lin, B.M.; Partin, M.T.; Humphreys, E.B.; Han, M.; Epstein, J.I.; Walsh, P.C.; Trock, B.J.; Partin, A.W. An updated prostate cancer staging nomogram (Partin tables) based on cases from 2006 to 2011. BJU Int. 2012, 111, 22–29. [Google Scholar] [CrossRef]

- Leyh-Bannurah, S.R.; Gazdovich, S.; Budäus, L.; Zaffuto, E.; Dell’Oglio, P.; Briganti, A.; Abdollah, F.; Montorsi, F.; Schiffmann, J.; Menon, M.; et al. Population-based external validation of the updated 2012 partin tables in contemporary North American prostate cancer patients. Prostate 2016, 77, 105–113. [Google Scholar] [CrossRef] [PubMed]

- Esteva, A.; Kuprel, B.; Novoa, R.A.; Ko, J.; Swetter, S.M.; Blau, H.M.; Thrun, S. Dermatologist-level classification of skin cancer with deep neural networks. Nature 2017, 542, 115–118. [Google Scholar] [CrossRef]

- Gulshan, V.; Peng, L.; Coram, M.; Stumpe, M.C.; Wu, D.; Narayanaswamy, A.; Venugopalan, S.; Widner, K.; Madams, T.; Cuadros, J.; et al. Development and validation of a deep learning algorithm for detection of diabetic retinopathy in retinal fundus photographs. JAMA 2016, 316, 2402–2410. [Google Scholar] [CrossRef] [PubMed]

- De Fauw, J.; Ledsam, J.R.; Romera-Paredes, B.; Nikolov, S.; Tomasev, N.; Blackwell, S.; Askham, H.; Glorot, X.; O’Donoghue, B.; Visentin, D.; et al. Clinically applicable deep learning for diagnosis and referral in retinal disease. Nat. Med. 2018, 24, 1342–1350. [Google Scholar] [CrossRef]

- Korbar, B.; Olofson, A.M.; Miraflor, A.P.; Nicka, C.M.; Suriawinata, M.A.; Torresani, L.; Suriawinata, A.A.; Hassanpour, S. Deep learning for classification of colorectal polyps on whole-slide images. J. Pathol. Inform. 2017, 8, 30. [Google Scholar] [CrossRef]

- Komeda, Y.; Handa, H.; Watanabe, T.; Nomura, T.; Kitahashi, M.; Sakurai, T.; Okamoto, A.; Minami, T.; Kono, M.; Arizumi, T.; et al. Computer-aided diagnosis based on convolutional neural network system for colorectal polyp classification: Preliminary experience. Oncology 2017, 93, 30–34. [Google Scholar] [CrossRef]

- Christodoulou, E.; Ma, J.; Collins, G.S.; Steyerberg, E.W.; Verbakel, J.Y.; Van Calster, B.; Evangelia, C.; Jie, M. A systematic review shows no performance benefit of machine learning over logistic regression for clinical prediction models. J. Clin. Epidemiol. 2019, 110, 12–22. [Google Scholar] [CrossRef]

- Dreiseitl, S.; Ohno-Machado, L. Logistic regression and artificial neural network classification models: A methodology review. J. Biomed. Inform. 2003, 35, 352–359. [Google Scholar] [CrossRef]

- Cui, Z.; Gong, G. The effect of machine learning regression algorithms and sample size on individualized behavioral prediction with functional connectivity features. NeuroImage 2018, 178, 622–637. [Google Scholar] [CrossRef]

- Shaikhina, T.; Lowe, D.; Daga, S.; Briggs, D.C.; Higgins, R.; Khovanova, N. Machine Learning for predictive modelling based on small data in biomedical engineering. IFAC PapersOnLine 2015, 48, 469–474. [Google Scholar] [CrossRef]

- Hutson, M. Artificial intelligence faces reproducibility crisis. Science 2018, 359, 725–726.

- Bizzego, A.; Bussola, N.; Chierici, M.; Maggio, V.; Francescatto, M.; Cima, L.; Cristoforetti, M.; Jurman, G.; Furlanello, C. Evaluating reproducibility of AI algorithms in digital pathology with DAPPER. PLoS Comput. Biol. 2019, 15, e1006269.

- Codari, M.; Schiaffino, S.; Sardanelli, F.; Trimboli, R.M. Artificial intelligence for breast MRI in 2008–2018: A systematic mapping review. Am. J. Roentgenol. 2019, 212, 280–292.

- Yassin, N.I.; Omran, S.; El Houby, E.M.F.; Allam, H. Machine learning techniques for breast cancer computer aided diagnosis using different image modalities: A systematic review. Comput. Methods Programs Biomed. 2018, 156, 25–45.

- Nindrea, R.D.; Aryandono, T.; Lazuardi, L.; Dwiprahasto, I. Diagnostic accuracy of different machine learning algorithms for breast cancer risk calculation: A meta-analysis. Asian Pac. J. Cancer Prev. 2018, 19, 1747–1752.

- Murphy, A.; Skalski, M.R.; Gaillard, F. The utilisation of convolutional neural networks in detecting pulmonary nodules: A review. Br. J. Radiol. 2018, 91, 20180028.

- Rudie, J.D.; Rauschecker, A.M.; Bryan, R.N.; Davatzikos, C.; Mohan, S. Emerging applications of artificial intelligence in neuro-oncology. Radiology 2019, 290, 607–618.

- Nguyen, A.; Blears, E.E.; Ross, E.; Lall, R.R.; Ortega-Barnett, J. Machine learning applications for the differentiation of primary central nervous system lymphoma from glioblastoma on imaging: A systematic review and meta-analysis. Neurosurg. Focus 2018, 45, E5.

- De Souza, L.A.; Palm, C.; Mendel, R.; Hook, C.; Ebigbo, A.; Probst, A.; Messmann, H.; Weber, S.A.T.; Papa, J.P. A survey on Barrett’s esophagus analysis using machine learning. Comput. Biol. Med. 2018, 96, 203–213.

- Sharma, R. The burden of prostate cancer is associated with human development index: Evidence from 87 countries, 1990–2016. EPMA J. 2019, 10, 137–152.

- Golubnitschaja, O.; Baban, B.; Boniolo, G.; Wang, W.; Bubnov, R.; Kapalla, M.; Krapfenbauer, K.; Mozaffari, M.S.; Costigliola, V. Medicine in the early twenty-first century: Paradigm and anticipation—EPMA position paper 2016. EPMA J. 2016, 7, 23.

- Lu, M.; Zhan, X. The crucial role of multiomic approach in cancer research and clinically relevant outcomes. EPMA J. 2018, 9, 77–102.

| Author | AI Model | Patients (Training/Validation/Testing) | Parameters | Predicted Outcomes |
|---|---|---|---|---|
| Ishioka et al. BJUI 2018 [20] | CNN algorithm (U-Net and ResNet50) | 335 PCa patients (301/34) | T2-weighted (T2-w) MR images labeled as ‘cancer’ or ‘no cancer’ | The CAD algorithm for PCa detection on MRI needed 5.5 h of training to learn to analyze 2 million images. It then ran at approximately 30 ms per image and reached AUCs of 0.645 and 0.636. In the two validation datasets, 16/17 and 7/17 patients were mistakenly diagnosed as having PCa. |
| Vos et al. Phys Med Biol 2010 [21] | SVM | 34 PCa patients | T2-w and dynamic contrast-enhanced (DCE) T1-w MR images labeled as malignant, benign, or normal regions | T2 values alone achieved a diagnostic AUC of 0.85 (0.77–0.92). Combining them with DCE T1-w features significantly improved this to 0.89 (0.81–0.95). |
| Niaf et al. Phys Med Biol 2012 [22] | SVM (MATLAB) | 30 RARP | 42 cancer ROIs, 49 suspicious ROIs, and 124 benign ROIs | AUC was 0.89 (0.81–0.94) for discriminating malignant from nonmalignant tissue and 0.82 (0.73–0.90) for discriminating malignant from suspicious tissue. |
| Giannini et al. Comput Med Imaging Graph 2015 [23] | Computer vision and SVM | 56 patients with 65 PCa lesions | MR images with anatomical and pharmacokinetic features | For discriminating malignant from nonmalignant tissue, AUC was 0.91 (1st–3rd quartile: 0.83–0.98). Median sensitivity was 0.84 (1st–3rd quartile: 0.77–0.93) and median specificity was 0.86 (1st–3rd quartile: 0.76–0.95). The algorithm detected 62/65 lesions, and per-lesion sensitivity rose to 98% when only CS PCa was considered. Two of the 3 false-negative lesions were NS PCa. |
| Rampun et al. Int J Numer Method Biomed Eng 2016 [24] | Unsupervised ML algorithm | 37 PCa patients | 4 image features on T2-w MRI | CAD detected and localized PCa within the peripheral zone with 86% accuracy, 87% sensitivity, and 86% specificity. |
| Wang et al. Sci Rep 2017 [25] | DCNN vs. a non-DL method with SIFT image features and bag-of-words (BoW) | 172 PCa patients | Clinicopathological and MR imaging datasets with 2602 morphologic images | The area under the receiver operating characteristic curve (AUC) was significantly higher for DCNN than for the non-DL method (p = 0.0007): 0.84 (95% CI 0.78–0.89) vs. 0.70 (95% CI 0.63–0.77). |
| Song et al. J Magn Reson Imaging 2018 [26] | DCNN (Python scikit-learn and R software) | 195 (159/17/19) patients with localized PCa | MR images labeled as ‘cancer’ or ‘no cancer’ | DCNN performed strongly in distinguishing PCa from non-cancerous tissue, with an AUC of 0.944 (95% CI: 0.876–0.994), sensitivity of 87.0%, specificity of 90.6%, PPV of 87.0%, and NPV of 90.6%. Combining PI-RADS and DCNN provided additional net benefit compared with either alone. |
| Matulewicz et al. J Magn Reson Imaging 2014 [27] | 2 ANN models (STATISTICA) | 18 PCa patients; 5308 voxels (3716/796/796) | Model 1: 256 MRI variables; Model 2: 256 MRI variables + 4 anatomical variables (percentage of PZ, TZ, U, and O) | With anatomical segmentation added to training, model 2 performed significantly better than model 1. AUC was 0.968 vs. 0.949 (p = 0.03), sensitivity 62.5% vs. 50%, and specificity 99.0% vs. 98.7%. |
| Zhao et al. Sci China Life Sci 2015 [28] | ANN (in-house software) | 71 patients (35 with PCa and 36 without PCa) | 12 radiomic features on T2-w | In the PZ, 10/12 radiomic features differed significantly between PCa and non-PCa (p < 0.01). In the CG, 5/12 radiomic features differed significantly: sum average, minimum value, standard deviation, 10th percentile, and entropy. |
| Betrouni et al. Int J Comput Assist Radiol Surg 2015 [29] | Unsupervised ML algorithm | 15 RARP | Radiomic features extracted from T2-w, DCE T1-w, and ADC MR images | Mean sensitivity and specificity were 65% (0–100%) and 81% (50–100%), respectively. Scores were poorest for lesions smaller than 0.5 cc, the volume threshold currently used to define CS PCa on MR images. When only tumors of significant size were considered, mean sensitivity and specificity rose to 70% and 88%, respectively. |
| Bonekamp et al. Eur Radiol 2018 [30] | ML algorithm (Python scikit-learn) | 316 men with MRI-TRUS fusion biopsy (183/133) | Lesion segmentation and analysis of 846 radiomic features | For radiomic characterization of prostate lesions on MRI, ML (AUC global = 0.88, p = 0.176; AUC zone-specific ≤ 0.89, p ≥ 0.493) did not perform significantly differently from mean ADC (AUC global = 0.84; AUC zone-specific ≤ 0.87). |
| Viswanath et al. BMC Med Imaging 2019 [31] | Comparison of 12 radiomic classifiers derived from 4 ML families (MATLAB) | 85 RARP | 116 MRI features of 4 different texture types | The boosted QDA classifier was the most accurate, fast, and robust for voxel-wise detection of PCa, with AUCs of 0.735, 0.683, and 0.768 across the 3 sites. These results suggest that simpler classifiers may be more robust, accurate, and efficient for PCa detection, especially in multi-site validation. |
| Le et al. Phys Med Biol 2017 [32] | CNN algorithm (ResNet) | 364 patients with a total of 463 PCa lesions and 450 identified non-cancerous images | MRI quantitative features | For distinguishing cancer from non-cancerous tissue, sensitivity was 89.85%, specificity 95.83%, and accuracy 91.46%. For distinguishing NS PCa from CS PCa, sensitivity was 100%, specificity 76.92%, and accuracy 88.75%. |
| Li et al. Eur J Radiol 2018 [33] | SVM algorithms (MATLAB) | 152 CG cancerous ROIs from 63 PCa patients | 6 significant mpMRI variables, selected from 55 variables by feature selection and variation testing | For classifying NS vs. CS PCa in the CG, the SVM was trained on dataset A2 and validated on B2. It reached an AUC of 0.91 (95% CI: 0.85–0.95), accuracy of 84.1%, sensitivity of 82.3%, and specificity of 87.5%. With the datasets reversed (trained on B2, validated on A2), AUC was 0.90 (95% CI: 0.85–0.95), accuracy 81.1%, sensitivity 90.5%, and specificity 79.7%. |
| Wang et al. Eur Radiol 2017 [34] | SVM algorithm | 54 PCa patients | PI-RADS v2 and 8 radiomic features | For PCa vs. normal TZ, the ML algorithm trained with radiomic features had a significantly higher AUC than PI-RADS: 0.955 [95% CI 0.923–0.976] vs. 0.878 [0.834–0.914], p < 0.001. The difference was not significant for PCa vs. normal PZ: 0.972 [0.945–0.988] vs. 0.940 [0.905–0.965], p = 0.097. Adding radiomic features significantly improved PI-RADS performance, to an AUC of 0.983 [0.960–0.995] for PCa vs. PZ and 0.968 [0.940–0.985] for PCa vs. TZ. |
| Fehr et al. Proc Natl Acad Sci USA 2015 [36] | ML algorithm (in-house software in MATLAB) | 147 RARP | Haralick features on T2-w and ADC | Combining T2-w and ADC, the ML model stratified NS vs. CS PCa with 93% accuracy for lesions in both the PZ and TZ and 92% in the PZ alone. It distinguished GS 7 (3 + 4) from 7 (4 + 3) with 92% accuracy for cancers in both the PZ and TZ and 93% in the PZ alone. By comparison, a model using only mean ADC reached 58% (PZ and TZ) and 63% (PZ alone) accuracy for NS vs. CS PCa. For GS 7 (3 + 4) vs. 7 (4 + 3), the same ADC-only model reached 59% (PZ and TZ) and 60% (PZ alone). |
| Min et al. Eur J Radiol 2019 [37] | ML algorithm (SMOTE) | 280 (187/93) PCa patients | 9 radiomic features (out of 918 extracted) on T2-w, ADC, and DWI | Radiomic features differed significantly between the CS PCa and NS PCa groups in both the training and test cohorts (p < 0.01 for both). In the training cohort, the radiomic signature had an AUC of 0.872, sensitivity of 0.883, and specificity of 0.753. In the test cohort, AUC was 0.823, sensitivity 0.841, and specificity 0.727. |
| Azizi et al. IEEE 2018 [38] | ANN (Python; Keras or TensorFlow) | 255 (84/171) prostate biopsy cores from 157 patients | Temporal enhanced ultrasound image analysis; 172 cores labeled as benign and 83 as cancerous | Temporal enhanced US analyzed with an ANN significantly improved cancer detection. It achieved an AUC of 0.96, sensitivity of 76%, specificity of 98%, and accuracy of 93%. |
| Koizumi et al. Ann Nucl Med 2017 [39] | ANN algorithm (BONENAVI) | 226 PCa patients (124 with skeletal metastasis and 101 without) | Bone scintigraphy (BS) images | For metastasis detection, BONENAVI showed a sensitivity of 82% and specificity of 83%, with an AUC of 0.888 (95% CI 0.843–0.932). Mean ANN values were 0.78 (SD = 0.29) in patients with skeletal metastasis and 0.22 (SD = 0.30) in patients without. False-negative cases often had a solitary lesion in the pelvis. |
| Acar et al. Br J Radiol 2019 [40] | 3 ML algorithms (MATLAB) | 75 patients with metastatic PCa | 35 radiomic features on 68Ga-PSMA PET/CT images | The weighted KNN algorithm differentiated metastatic sclerotic lesions from completely responded sclerotic lesions with an AUC of 0.76, sensitivity of 73.5%, specificity of 73.7%, PPV of 86.9%, and NPV of 53.8%. GLZLM_SZHGE and histogram-based kurtosis were the most important parameters. Overall, 28/35 radiomic features were significant in differentiating metastatic from completely responded sclerotic lesions. |
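
Most studies in the table above report threshold-dependent metrics (sensitivity, specificity, PPV, NPV, accuracy) alongside the threshold-independent AUC. The snippet below is a minimal illustrative sketch, not code from any cited study. It derives the threshold-dependent metrics from hypothetical 2 × 2 confusion-matrix counts. It computes the AUC from hypothetical classifier scores using the rank-based (Mann-Whitney) interpretation, with ties counted as one half.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ConfusionCounts:
    """Hypothetical 2 x 2 counts for a binary classifier vs. a reference standard."""
    tp: int  # cancer correctly called cancer
    fp: int  # non-cancer wrongly called cancer
    tn: int  # non-cancer correctly called non-cancer
    fn: int  # cancer missed by the classifier


def diagnostic_metrics(c: ConfusionCounts) -> dict:
    """Threshold-dependent metrics commonly reported for diagnostic models."""
    total = c.tp + c.fp + c.tn + c.fn
    return {
        "sensitivity": c.tp / (c.tp + c.fn),  # true-positive rate
        "specificity": c.tn / (c.tn + c.fp),  # true-negative rate
        "ppv": c.tp / (c.tp + c.fp),          # proportion of positive calls that are cancer
        "npv": c.tn / (c.tn + c.fn),          # proportion of negative calls that are non-cancer
        "accuracy": (c.tp + c.tn) / total,
    }


def auc_mann_whitney(pos_scores, neg_scores) -> float:
    """AUC as P(score of a random positive > score of a random negative).

    Ties count as one half, which matches the trapezoidal area under the ROC curve.
    """
    wins = 0.0
    for p in pos_scores:
        for n in neg_scores:
            if p > n:
                wins += 1.0
            elif p == n:
                wins += 0.5
    return wins / (len(pos_scores) * len(neg_scores))


if __name__ == "__main__":
    counts = ConfusionCounts(tp=80, fp=10, tn=90, fn=20)  # hypothetical counts
    print(diagnostic_metrics(counts))
    print(auc_mann_whitney([0.9, 0.8, 0.4], [0.7, 0.3, 0.4]))  # hypothetical scores
```

Sensitivity and specificity do not depend on how common cancer is in the cohort, but PPV and NPV do. PPV and NPV are therefore not directly comparable across studies with different case mixes. The pairwise AUC loop scales as the product of the positive and negative counts. That is adequate for illustration, but a sort-based rank computation is preferable for large datasets.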

| Author | AI Model | Patients (Training/Validation/Testing) | Parameters | Predicted Outcomes |
|---|---|---|---|---|
| Nir et al. Med Image Anal 2018 [44] | Supervised ML algorithm (U-Net) | 287 RARP (231/56) with 563 (333/230) TMA cores | 23 weighted histological features on TMA cores | Inter-annotator agreement between the ML model and pathologists was κ = 0.51. Overall agreement among the 6 pathologists ranged from 0.45 to 0.62 (0.56 ± 0.07). The classifier detected cancer with 90.5% accuracy. For distinguishing NS from CS PCa, accuracy was 79.2%, sensitivity 79.2%, and specificity 79.1%. For cancer detection, manual grading by pathologists yielded a mean inter-observer accuracy of 97.2%, sensitivity of 98.4%, and specificity of 86.2%. For NS vs. CS PCa, the corresponding figures were 78.8%, 79.2%, and 79.3%. The ML model required 18.5 h for the training and validation steps. |
| Gorelick et al. IEEE Trans Med Imaging 2013 [45] | ML algorithm (MATLAB 7.12.0) | 15 RARP with 991 sub-images extracted from digital pathology images of 50 whole-mount tissue sections | 7 morphometric features, 100 geometric features, and 9 tissue component labels | The algorithm distinguished cancer from non-cancer with 90% accuracy and high-grade from low-grade with 85% accuracy. False-negative rates were 12% for cancer detection and 5% for classification. |
| Nguyen et al. J Biomed Opt 2017 [46] | Unsupervised ML algorithm (RF on MATLAB) | 368 TMA cores with PCa consensus diagnosis | Morphological features combined with quantitative information from TMA cores | For separating GS 3 from GS 4, the AUC was 0.82, which is within the range of human error given inter-observer variability. AUC decreased from 0.98 to 0.87 as GS increased from 6 to 10. |
| Lucas et al. Virchows Arch 2019 [47] | CNN (Inception-v3, MATLAB) | 96 TRUS biopsies from 38 patients | Digitized slides annotated into 4 groups using histology and simplified Gleason patterns (GP) | Differentiating benign from PCa (GP ≥ 3) areas achieved 92% accuracy, 90% sensitivity, and 93% specificity. Differentiating NS PCa from CS PCa achieved 90% accuracy, 77% sensitivity, and 94% specificity. The CNN and a genito-urinary pathologist agreed in 65% of cases (κ = 0.70). |
| Arvaniti et al. Sci Rep 2018 [48] | CNN (Python 3; Keras or TensorFlow) | 886 PCa patients (641/245) | GP annotation on TMA cores | For automated GS grading, inter-annotator agreement between the CNN and pathologists was κ = 0.75 and κ = 0.71. This was comparable with the inter-pathologist agreement (κ = 0.71). For disease-specific survival, the CNN stratified patients into prognostically distinct groups with greater statistical significance than the pathologists, achieving at least pathologist-level survival stratification. |
| Kim et al. Korean J Radiol 2011 [49] | ANN vs. SVM | 532 PCa patients (300/232) | 3 clinical and 5 biopsy variables | SVM significantly outperformed ANN, with AUCs of 0.805 and 0.719, respectively (p = 0.020). For preoperative prediction of > pT3a disease, SVM had 77% accuracy, 67% sensitivity, and 79% specificity. ANN had 63% sensitivity, 81% specificity, and 78% accuracy. |
| Tsao et al. J Chin Med Assoc 2014 [50] | ANN (STATISTICA, StatSoft Inc) vs. LR (Partin tables) | 299 ORP or RARP | 7 clinical and pathological variables | For predicting pathological stage (T2 or T3) after surgery, ANN was significantly better than LR (0.795 ± 0.023 vs. 0.746 ± 0.025, p = 0.016). ANN had 83% sensitivity and 56% specificity; LR had 70% sensitivity and 56% specificity. PSA and BMI were significantly associated with T3 stage. |
| Wang et al. J Magn Reson Imaging 2018 [51] | SVM vs. LR (Partin tables) (R software v.3.3.4) | 541 PCa patients | Clinical, pathological, and MRI variables | For OC PCa, the PartinMR model (Partin tables + mpMRI staging + SVM) outperformed the other models in this study. It had an AUC of 0.891 (95% CI: 0.884–0.899, p < 0.001), sensitivity of 79.3%, specificity of 75.7%, PPV of 79%, and NPV of 76.0%. Adding MR staging to the Partin table (mPartin table, AUC 0.814, 95% CI: 0.779–0.846, p = 0.001) made it significantly better than the Partin table alone (AUC 0.730, 95% CI: 0.690–0.767). For extracapsular extension, MR stage was the most influential factor (HR 2.77, 95% CI: 1.54–3.33). It was followed by D-max (2.01, 95% CI: 1.31–2.68), biopsy GS (1.64, 95% CI: 1.35–2.12), and PI-RADS score (1.21, 95% CI: 1.01–1.98). |
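
Several pathology studies in the table above (e.g., Nir et al., Lucas et al., Arvaniti et al.) report agreement between the algorithm and pathologists as Cohen's κ. This statistic corrects raw agreement p_o for the agreement p_e expected by chance: κ = (p_o − p_e) / (1 − p_e). The sketch below computes unweighted κ for two raters on hypothetical labels. It illustrates the definition only. The cited studies may have used weighted κ variants suited to ordinal Gleason grades.

```python
from collections import Counter
from collections.abc import Hashable, Sequence


def cohens_kappa(rater_a: Sequence[Hashable], rater_b: Sequence[Hashable]) -> float:
    """Unweighted Cohen's kappa for two raters labeling the same items."""
    if len(rater_a) != len(rater_b) or len(rater_a) == 0:
        raise ValueError("both raters must label the same non-empty set of items")
    n = len(rater_a)
    # p_o: observed proportion of items on which both raters agree.
    p_observed = sum(a == b for a, b in zip(rater_a, rater_b)) / n
    # p_e: agreement expected by chance from each rater's label frequencies.
    freq_a, freq_b = Counter(rater_a), Counter(rater_b)
    p_expected = sum(freq_a[label] * freq_b[label] for label in freq_a) / (n * n)
    if p_expected == 1.0:
        raise ValueError("kappa is undefined when chance agreement equals 1")
    return (p_observed - p_expected) / (1.0 - p_expected)


if __name__ == "__main__":
    # Hypothetical labels for 10 biopsy cores.
    pathologist = ["benign", "GP3", "GP3", "GP4", "GP4", "GP4", "benign", "GP3", "GP4", "benign"]
    algorithm = ["benign", "GP3", "GP4", "GP4", "GP4", "GP3", "benign", "GP3", "GP4", "GP3"]
    print(round(cohens_kappa(pathologist, algorithm), 3))
```

In this hypothetical example, raw agreement is 0.70 and chance agreement is 0.34, which gives κ ≈ 0.55. This is why κ values are usually lower than the corresponding percentage agreement.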

| Treatment Modality | Author | AI Model | Patients (Training/Validation/Testing) | Parameters | Predicted Outcomes |
|---|---|---|---|---|---|
| Surgery | Auffenberg et al. Eur Urol 2019 [52] | ML algorithm (askMUSIC) | 7543 PCa patients | Clinical and pathological variables | Patients chose RP in 3413 cases (45%), active surveillance in 2289 (30%), RT in 1280 (17%), ADT in 422 (5.6%), and watchful waiting in 139 (1.8%). Personalized ML prediction of treatment choice, intended to inform patients about PCa treatments, was highly accurate (AUC = 0.81). Age, number of positive cores, and GS were the parameters that most influenced treatment choice. |
| Surgery | Ukimura et al. J Endourol 2014 [53] | Virtual reality 3D surgical navigation (TilePro system) | 10 RARP | 5 anatomic structures (prostate, image-visible biopsy-proven ‘index’ cancer lesion, neurovascular bundles, urethra, and recorded biopsy trajectories) | The 3D model allowed careful surgical dissection close to the biopsy-proven index lesion. Surgical margins were negative in 90% of cases. There were no intraoperative complications and no additional operative time. The index lesion's location on the final pathology report correlated well with the 3D model. At 3 months, PSA was undetectable (<0.03 ng/mL) in 100% of patients. TRUS underestimated index lesion volume compared with MR-based modeling. Adding multi-parametric MR and digital biopsy-proven data brought the modeled volume to 90% of the actual pathologic volume. |
| Surgery | Hung et al. J Urol 2018 [54] | Surgeon performance metrics recorder (dVLogger) | 20 urological surgeons (10 experts and 10 novices) | Automated performance metrics (APM) | The mean number of cases was 35 (5–80) for novices and 810 (100–2000) for experts. Experts moved more efficiently, completing operative steps faster (p < 0.001). They also had shorter instrument travel distance (p < 0.01), less aggregate instrument idle time (p < 0.001), shorter camera path length (p < 0.001), and more frequent camera movements (p < 0.03). Experts had a greater ratio of dominant to non-dominant instrument path distance for all steps (p < 0.04) except anterior vesicourethral anastomosis. |
| Surgery | Hung et al. J Endourol 2018 [55] | 3 ML algorithms (Python scikit-learn 0.19.0 package) | 78 RARP | Hospital length of stay (LoS) and APM | The ML algorithm predicted LoS with 87.2% accuracy, rising to 88.5% when trained with APM. Camera-related metrics were the main predictors of surgery time, LoS, and Foley catheter duration. Patient outcomes predicted by the algorithm were significantly associated with the ‘ground truth’ for surgery time, LoS, and Foley catheter duration. |
| Surgery | Hung et al. BJUI 2019 [56] | ML algorithm (DeepSurv) | 100 RARP cases | APM and patient clinicopathological and continence data | Urinary continence was achieved in 79% of patients after a median of 126 days. For predicting continence, the ML model achieved a C-index of 0.6 and a mean absolute error of 85.9. Three of the top-ranked APM features were recorded during vesico-urethral anastomosis and one during prostatic apical dissection. The ML model ranked APM higher than clinicopathological features for predicting urinary continence recovery after RARP. |
| Surgery | Ranasinghe et al. Urol Oncol 2018 [57] | NLP algorithm (PRIME-2) | 5157 RARP and 579 ORP | Pre- and post-operative clinical data | Surgeon experience and erectile function preservation (p < 0.01) were important factors in treatment choice. Urinary, sexual, and bowel symptoms did not differ significantly between RARP and ORP during the 12-month follow-up period. Patients who underwent RARP expressed more positive emotions. ORP patients expressed more negative emotions immediately and 3 months after surgery (p < 0.05), related to pain and discomfort. At 9 months, ORP patients' negative emotions related to fear and anxiety about pending PSA tests and sexual side effects. |
| Radiotherapy | Nicolae et al. Int J Radiat Oncol Biol Phys 2017 [58] | ML algorithm | 100 PCa patients treated with low-dose-rate (LDR) brachytherapy | 6 key measures of clinical quality of LDR brachytherapy | Average planning time was significantly shorter for the ML algorithm than for the expert planner (0.84 ± 0.57 min vs. 17.88 ± 8.76 min, p = 0.020). Average prostate V150% was 4% higher for brachytherapist plans than for ML plans, a difference not considered clinically significant. Only 2 brachytherapists could distinguish the ML plans from those of an expert. |
| Radiotherapy | Kajikawa et al. Radiol Phys Technol 2018 [59] | 2 CNN algorithms (AlexNet) | 60 PCa patients treated with IMRT | Planning CT images and structure labels | The CNN model using structure labels had 70% accuracy, 94.6% sensitivity, and 31% specificity. The CNN using planning CT had 56.7% accuracy, 70% sensitivity, and 11.3% specificity. These models showed moderate performance in predicting dosimetric eligibility. |
| Radiotherapy | Lee et al. Int J Radiat Oncol Biol Phys 2018 [60] | ML (PRFR model) | 324 PCa patients treated with RT, genotyped for 606,563 germline single nucleotide polymorphisms (SNPs) | 14 clinical variables and genome-wide association study (GWAS) data | The ML model's predictive accuracy differed across urinary symptoms. It achieved a significant AUC only for the weak stream endpoint: 0.70 (95% CI 0.54–0.86; p = 0.01). Gene ontology analysis highlighted 7 interconnected proteins already known to be associated with LUTS. |
| Radiotherapy | Oh et al. Sci Rep 2017 [61] | ML (PRFR model) | 368 PCa patients treated with RT, genotyped for 606,571 germline SNPs | 9 clinical variables and GWAS data | AUCs were 0.70 for rectal bleeding and 0.69 for erectile dysfunction. Predicted and observed incidences agreed well (chi-square test p = 0.95 for rectal bleeding and 0.93 for erectile dysfunction). Ten biological processes/genes were highly associated with both complications, with an estimated false discovery rate (FDR) below 0.05. |
| Radiotherapy | Pella et al. Med Phys 2011 [62] | SVM and ANN (MATLAB) | 321 PCa patients treated with conformal prostate RT | 13 clinical and RT variables | ANN- and SVM-based models predicted gastrointestinal and genitourinary toxicity after RT with similar accuracy. The SVM model had an AUC of 0.717, sensitivity of 84.6%, specificity of 58.8%, and accuracy of 70%. The ANN had an AUC of 0.697 and accuracy of 69%. |
| Medication | Heintzelman et al. J Am Med Inform Assoc 2013 [63] | NLP (ClinREAD, Lockheed Martin, Bethesda, Maryland, USA) | 33 patients with metastatic PCa and 4409 clinical encounters | 4 pain severity groups with 42 pain severity contextual rules, 16 semantic types | NLP identified 6387 pain mentions and 13,827 drug mentions in medical records. In all but 2 patients who died from metastatic PCa, pain increased sharply during the last 2 years of life. Severe pain was associated with opioid prescription (OR = 6.6, p < 0.0001) and palliative radiation (OR = 3.4, p = 0.0002). Five factors were significantly associated with severe pain: receipt of chemotherapy, receipt of opioids, receipt of palliative radiotherapy, being in the last year of life, and the number of medical appointments. The 5 African American patients clustered at the high end of the pain index spectrum, although this was not statistically significant. |
| Surgery (outcomes) | Zhang et al. Oncotarget 2016 [64] | SVM vs. LR analysis | 205 PCa patients treated by surgery | Clinicopathologic and MR imaging datasets | For predicting PCa BCR, SVM outperformed LR analysis on AUC (0.959 vs. 0.886; p = 0.007) and sensitivity (93.3% vs. 83.3%; p = 0.025). It also outperformed LR on specificity (91.7% vs. 77.2%; p = 0.009) and accuracy (92.2% vs. 79.0%; p = 0.006). Adding MRI-derived variables improved D’Amico classification on AUC (0.970 vs. 0.859, p < 0.001) and sensitivity (91.7% vs. 86.7%, p = 0.031). Specificity (94.5% vs. 78.6%, p = 0.001) and accuracy (93.7% vs. 81.0%, p = 0.007) also improved. ADC (HR = 0.149, p = 0.035) was the only imaging predictor of time to PSA failure. The other predictors were GS (HR = 1.560, p = 0.008), surgical T3b (HR = 4.525, p < 0.001), and positive surgical margin (HR = 1.314, p = 0.007). |
| Surgery (outcomes) | Wong et al. BJUI 2019 [65] | 3 supervised ML algorithms vs. LR analysis | 338 RARP | 19 variables (demographic, clinical, imaging, and operative data) | For predicting biochemical recurrence at 1 year, the 3 ML models (K-nearest neighbor, random forest, and logistic regression) achieved prediction accuracies of 0.976, 0.953, and 0.976, respectively. All 3 ML models outperformed the conventional statistical regression model for predicting early biochemical recurrence after RARP (AUC 0.903, 0.924, and 0.940, respectively, vs. 0.865). |
| Radiotherapy (outcomes) | Abdollahi et al. Radiol Med 2019 [66] | ML algorithm (in-house Python code) | 33 PCa patients treated with intensity-modulated radiation therapy (IMRT) | Radiomic features | For GS prediction, T2-w radiomic models performed best, with a mean AUC of 0.739. For stage prediction, ADC models were more effective, with an AUC of 0.675. For treatment response after IMRT, 22 radiomic features were strongly correlated, with performance ranging widely from 0.55 to 0.78. |
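
Hung et al. (BJUI 2019) summarize their DeepSurv continence model with a C-index of 0.6. Harrell's concordance index considers comparable patient pairs and asks how often the patient predicted to reach the event sooner actually does. A value of 0.5 corresponds to random ordering and 1.0 to perfect ordering. The following is a simplified sketch on hypothetical data, not the authors' implementation. It treats continence recovery as the event, assumes a higher score means earlier predicted recovery, and skips pairs with tied follow-up times.

```python
from collections.abc import Sequence


def harrell_c_index(times: Sequence[float], events: Sequence[bool], scores: Sequence[float]) -> float:
    """Harrell's C-index for right-censored data (simplified: tied times are skipped).

    times  -- follow-up time for each patient (e.g. days after surgery)
    events -- True if the event (e.g. continence recovery) was observed, False if censored
    scores -- predicted risk; a higher score means the event is expected sooner
    """
    if not (len(times) == len(events) == len(scores)):
        raise ValueError("times, events and scores must have the same length")
    concordant = 0.0
    comparable = 0
    for i in range(len(times)):
        if not events[i]:
            continue  # a censored patient cannot anchor a comparable pair
        for j in range(len(times)):
            if times[i] < times[j]:
                # Patient i had the event before patient j's time, so the pair is comparable.
                comparable += 1
                if scores[i] > scores[j]:
                    concordant += 1.0
                elif scores[i] == scores[j]:
                    concordant += 0.5
    if comparable == 0:
        raise ValueError("no comparable pairs")
    return concordant / comparable


if __name__ == "__main__":
    # Hypothetical: days to continence, whether recovery was observed, and model scores.
    days = [20, 45, 60, 90]
    recovered = [True, True, False, True]
    predicted = [0.9, 0.7, 0.8, 0.1]
    print(harrell_c_index(days, recovered, predicted))
```

In this hypothetical example, 4 of the 5 comparable pairs are correctly ordered, giving C = 0.8. The one discordant pair involves the patient censored at day 60, who received a higher score than the patient who recovered at day 45.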

© 2020 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).

Thenault, R.; Kaulanjan, K.; Darde, T.; Rioux-Leclercq, N.; Bensalah, K.; Mermier, M.; Khene, Z.-e.; Peyronnet, B.; Shariat, S.; Pradère, B.; et al. The Application of Artificial Intelligence in Prostate Cancer Management—What Improvements Can Be Expected? A Systematic Review. Appl. Sci. 2020, 10, 6428. https://doi.org/10.3390/app10186428

APA Style: Thenault, R., Kaulanjan, K., Darde, T., Rioux-Leclercq, N., Bensalah, K., Mermier, M., Khene, Z.-e., Peyronnet, B., Shariat, S., Pradère, B., & Mathieu, R. (2020). The Application of Artificial Intelligence in Prostate Cancer Management—What Improvements Can Be Expected? A Systematic Review. Applied Sciences, 10(18), 6428. https://doi.org/10.3390/app10186428