1. Introduction

Spoken language processing combines knowledge from the interdisciplinary areas of natural language processing, cognitive sciences, dialogue systems, and information access. Speech Emotion Recognition (SER) and text-to-speech synthesis (TTS), including voice and style conversion, as parts of human–machine spoken dialogue systems, correspond to certain cognitive aspects underlying the human language processing system [1]. In the last few decades, there has been growing interest in developing human–machine interfaces that are more adaptive and responsive to a user’s behavior [2]. In that sense, the use of emotion in speech synthesis and the recognition of emotion in speech play an important role in attempts to improve the naturalness of human–machine interaction (HMI) [3]. As to TTS, different applications such as smart environments, virtual assistants, intelligent robots, and call centers have set requirements for different speech styles identified with corresponding emotional expressions [4]. Recognition of emotions in HMI is not restricted to speech analysis; it also draws on image analysis (facial expression recognition, eye-tracking data) and physiological signals (pulse rate, skin conductance, facial electromyography, electroencephalography (EEG) signal) [5]. Emotion recognition in spoken dialogue systems such as call centers makes it possible to respond to callers according to the detected emotional state or to pass control over to human operators [2,6,7,8].

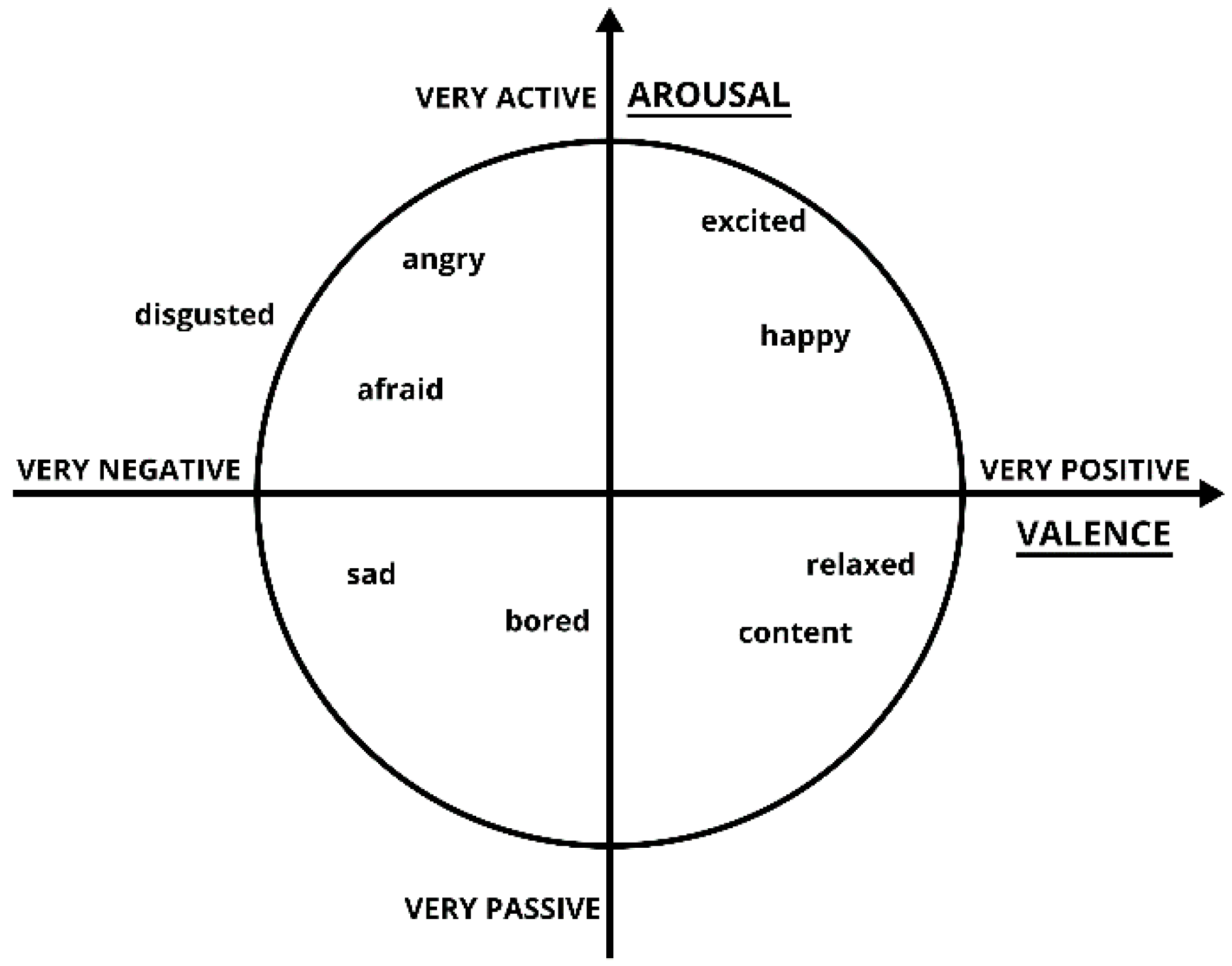

In SER research, two main approaches are used to describe the emotional space. The first approach describes the emotional space with a finite number of prototypical emotions, in accordance with a categorical emotion model. The second approach uses dimensions (typically arousal and valence) to determine possible emotional states in the space defined by the chosen dimensions, and corresponds to dimensional emotion models. Dimensional emotion models mostly use two or three dimensions (e.g., valence, arousal, and sometimes dominance). The emotional variability is determined to the greatest extent by the first two dimensions, which are therefore used as a basis for research in the field of SER [9]. The valence dimension describes the pleasantness of an emotion and ranges from positive (e.g., joy) to negative (e.g., anger). The arousal dimension indicates the level of activation during an emotional experience and ranges from passive (e.g., sleepiness) to active (e.g., high excitement). The position of some basic, categorical emotions in the valence–arousal space is shown in Figure 1.

The dimensional models allow the use of emotional categories (appropriately positioned in a two-dimensional emotional space) among which it is possible to determine a distance metric [10]. Goncalves et al. utilized four dimensions (namely, valence, arousal, sense of control, and ease in achieving a goal) to describe a user’s emotional state while interacting with an electronic game [11]. Landowska proposed a procedure to obtain new mappings with mapping matrices for estimating the dimensions of a valence-arousal-dominance model from Ekman’s six basic emotions [12]. The procedure, as well as the proposed metrics, might be used not only in the evaluation of the mappings between representation models, but also in a comparison of emotion recognition and annotation results. Emotion valence classification in a self-assessed affect challenge is reported in [13]. Detecting the degree of a speaker’s sleepiness can also help in recognizing his/her emotional arousal [14]. Sometimes both approaches, emotion category and valence-arousal classification, are utilized for comparison, as in the INTERSPEECH Emotion Sub-Challenge on an acted speech corpus [15].

When all call center operators are busy and unable to answer a new call, the system puts that call on hold regardless of its urgency. By way of illustration, if a call is the fifth call in the queue at a given moment, a caller who is terrified, angry, or upset is left to wait for a certain period of time before his/her call is considered. This period is equal to the time needed to answer all the preceding calls. This classical approach in call centers does not take the urgency of a call into consideration, and calls are processed in the order in which they are received. Petrushin utilized emotion recognition as a part of a decision support system for prioritizing telephone voice messages in a call center and assigning a proper agent to respond to the message [6]. His goal was to recognize two possible states: “agitation”, which includes anger, happiness, and fear, and “calm”, which includes normal state and sadness. The average recognition accuracy was in the range of 73–77%.

In this research, the first presumption was that some calls are more urgent than others and should be processed faster. The second presumption was that the urgency of a call correlates with the caller’s emotional state reflected through speech. The motivation behind this research was to improve the effectiveness of call center service by giving first-level priority to callers who are experiencing a negative valence emotional state (fear and anger), second-level priority to callers in a sad or neutral emotional state, and third-level priority to callers in a joyful emotional state. The proposed approach consists of recognition of the caller’s emotional speech and redistribution of the calls according to the proposed emotion ranking. In this way, faster processing and a decrease in waiting time are achieved for callers estimated as more urgent.

The paper is organized as follows: Section 2 covers related work, including acoustic modeling of emotional speech and the underlying emotional speech corpus, as well as methods for emotion classification. The proposed algorithm for redistribution of calls is described in detail in Section 3. The simulation and experimental results are reported and discussed in Section 4. Finally, concluding remarks and future research directions are summarized in Section 5.

3. Algorithm for Call Redistribution Based on Speech Emotion Recognition

As mentioned earlier, in this research, one presumption was that the urgency of the call correlates with the caller’s emotional state reflected through speech. Our focus was on emergency call centers and health care centers for elderly people. Aiming to recognize more urgent callers among them, we have proposed the ranking of five basic emotions.

Basic emotions with negative valence (fear, anger, and sadness) reflect the speaker’s discomfort, and our presumption was that such speakers have a health problem or some other more urgent problem. On the other hand, positive valence emotions (e.g., joy) and neutral valence (neutral state) are supposed to reflect a less urgent state of the speaker, so those calls could be processed later.

The proposed ranking of five basic emotions is:

1. Fear (f)
2. Anger (a)
3. Sadness (s)
4. Neutral (n)
5. Joy (j).

In the proposed ranking, fear is put first because it is an emotion that people experience when facing a serious problem (serious injuries, heart attack, accidents, etc.). In the research conducted on the CEMO corpus, which contains dialogues recorded in a real-world medical call center, it was pointed out that patients had often expressed stress, pain, fear of being sick, or even real panic [8]. Fear is the most common emotion in the CEMO corpus, with different levels of intensity and many variations [7]. Anger is the second negative, high-arousal emotion, expressed in various stressful and disturbing situations. Sadness is in third place. It is an emotion with negative valence which is typical for elderly and lonely people. Holmen et al. reported that experiencing loneliness had a negative influence on mood, so loneliness and sad mood prevailed especially among elderly subjects with cognitive difficulties [46]. Joy is in last place because it is considered to reflect full satisfaction and good mood, which are not indicators of urgent states.

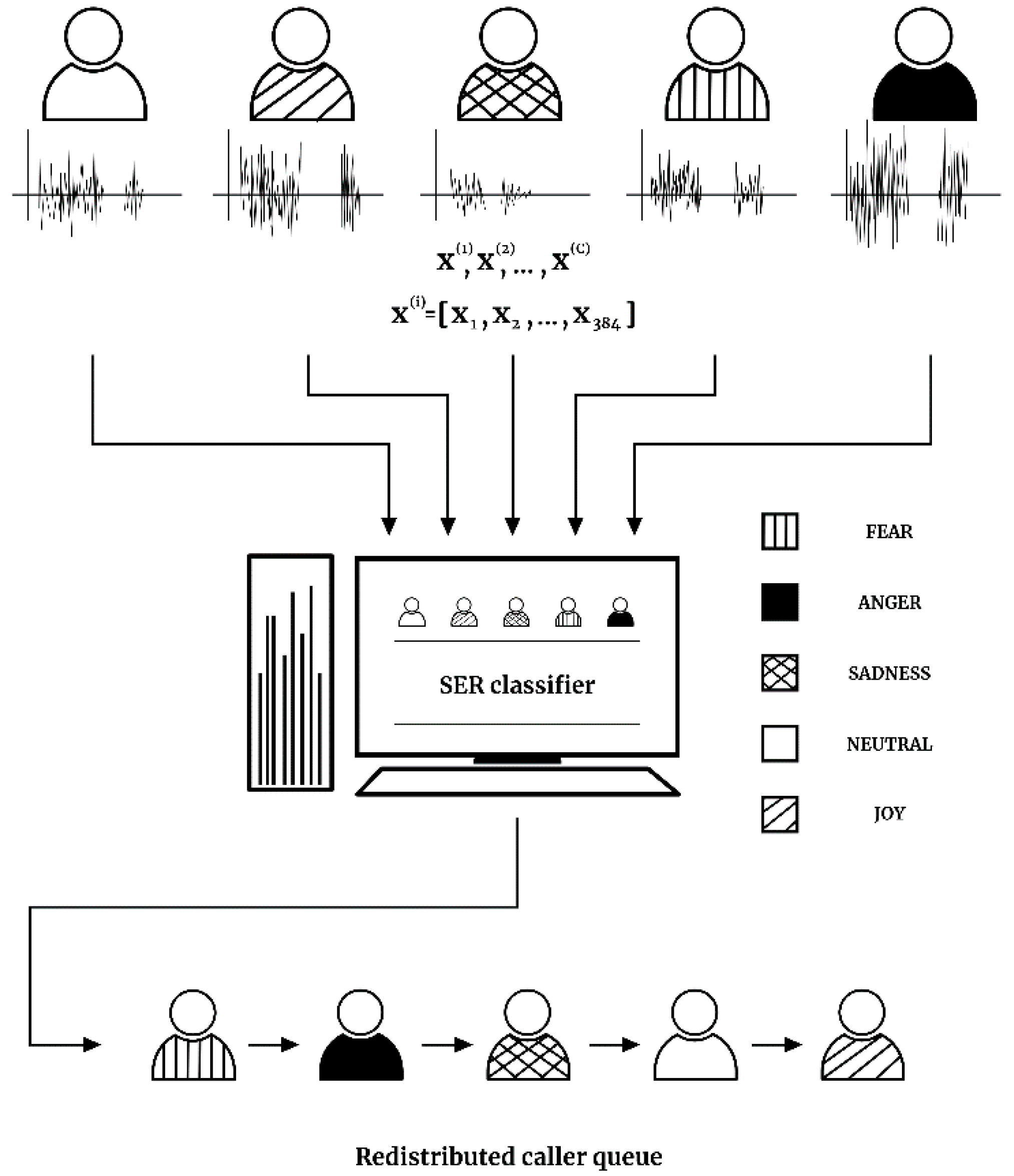

The research setting is explained using an example of five calls received at the same moment, while all operators are busy. For each call, the initial part of the caller’s speech is recorded. This recording is further processed and the feature vector is extracted. The feature vector is forwarded to a classifier, which assigns one of the five emotion labels (anger, joy, fear, sadness, and neutral) to the input speech. Finally, after SER, those five calls are redistributed according to the recognized emotions and the proposed emotion ranking. The proposed framework of call processing is shown in Figure 2. For example, in the scenario shown in Figure 2, the original call order was neutral, joyful, sad, afraid, and angry; after SER and the proposed call redistribution, the system first processes the call featuring fear, then the call featuring anger, then the sad caller, then the neutral caller, and finally the call featuring joy.
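The reordering in this scenario can be sketched as a stable sort on the emotion rank. The snippet below is only a minimal illustration and not the authors' implementation: the names `PRIORITY`, `RANK`, and `redistribute` are hypothetical, and keeping the arrival order among calls with the same label is an assumption that the text does not specify.

```python
# Proposed emotion ranking: a lower index means a higher priority.
PRIORITY = ["fear", "anger", "sadness", "neutral", "joy"]
RANK = {emotion: rank for rank, emotion in enumerate(PRIORITY)}


def redistribute(labels):
    """Return call indices reordered by emotion rank.

    ASSUMPTION: calls with the same label keep their arrival order
    (sorted() is stable, so ties are not reshuffled).
    """
    return sorted(range(len(labels)), key=lambda i: RANK[labels[i]])


# Scenario from Figure 2: arrival order of the five recognized emotions.
arrival = ["neutral", "joy", "sadness", "fear", "anger"]
order = redistribute(arrival)
print([arrival[i] for i in order])
# → ['fear', 'anger', 'sadness', 'neutral', 'joy']
```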

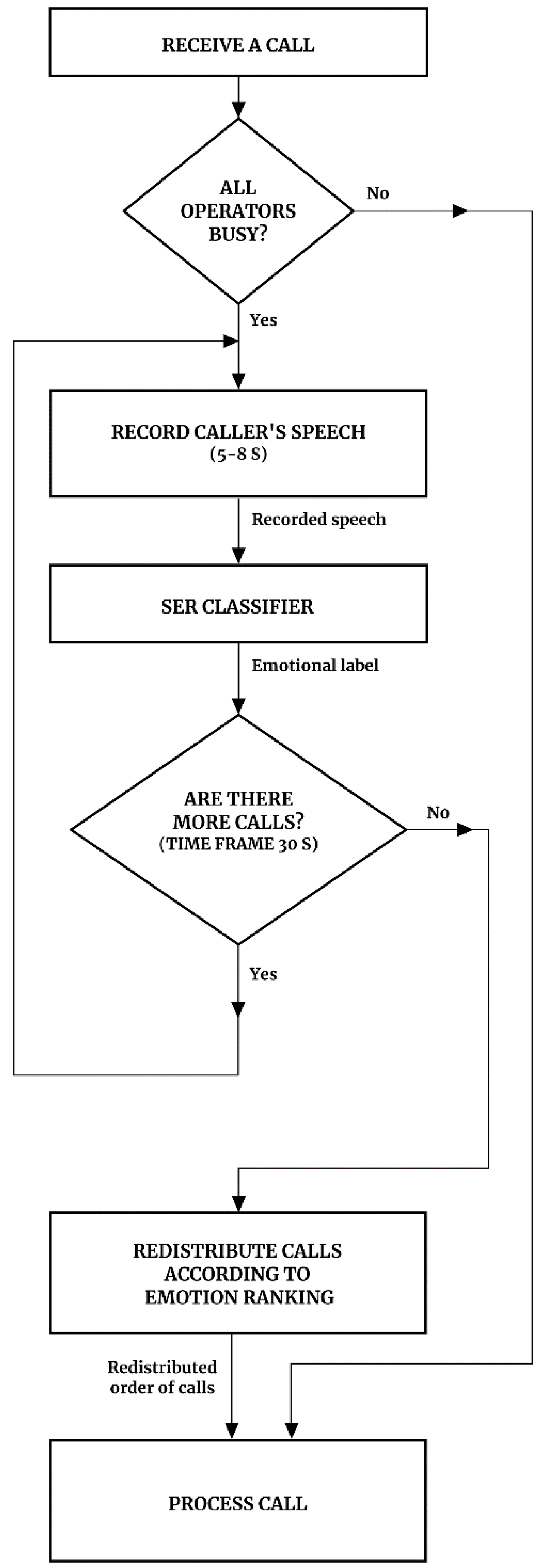

The proposed algorithm, whose block diagram is shown in Figure 3, has the following steps:

1. When a call is received while all operators are busy, the system asks for the reason for the call and records the caller’s speech for about 5–8 s. This recording contains about 1–2 sentences, depending on the dialogue strategy, and is processed quickly by SER while the call is on hold. For each recording, a feature vector consisting of 384 features is extracted.

2. The extracted feature vector is input to our trained SER classifier. The classifier assigns one of the five emotion labels (fear, anger, sadness, neutral, and joy) to the input speech. Keywords recognized by automatic speech recognition (ASR) can also be used for sentiment analysis, but this depends on both the language and the type of call center.

3. If there are several calls on hold at the same time, they are redistributed based on the associated emotion label. Redistribution is done according to the introduced priority vector $P$:

   $$P = [\,f,\ a,\ s,\ n,\ j\,] \qquad (1)$$

   where $f$ represents fear, i.e., it denotes the speaker recognized as being in a state of fear, $a$ denotes the speaker recognized as angry, $s$ marks the speaker recognized as sad, $n$ refers to a neutral state, and $j$ to a joyful state of the speaker. The introduced priority vector, i.e., the emotion ranking represented in Equation (1), is proposed with application in emergency call centers and health care centers for elderly people in mind. The proposed algorithm is not restricted to this priority vector; for a specific domain of application, a new emotion ranking can be adopted.

4. Calls are processed in the new order, which is obtained after their emotion labeling based on SER (and ASR) and redistribution according to the proposed emotion ranking, i.e., the priority vector. First, all callers recognized as afraid are processed, then angry callers, and so on. Callers recognized as joyful are processed last. The final goal of the redistribution is a reduction in waiting time for the callers recognized as the priority. Let $t_i$ denote the waiting time of a caller $c_i$ without SER and call redistribution, where $i = 1, \dots, N$ and $N$ is the number of calls received at the same moment. Then, $t_i^{\mathrm{SER}}$ denotes the waiting time of the caller $c_i$ after SER and call redistribution (after application of the proposed algorithm). The objective function is:

   $$\max \left( t_i - t_i^{\mathrm{SER}} \right) \qquad (2)$$

   according to the priority vector $P$. The objective function is formulated so as to maximize the waiting time reduction for the callers recognized as the priority with respect to the priority vector $P$. So, the goal of call redistribution is to maximize the waiting time reduction for the caller $c_i$, if the caller $c_i$ is set as the priority with respect to the vector $P$. In our experiments this is the case for the caller recognized as being afraid, since fear is in first place in the priority vector $P$. Afterwards, the objective function maximizes the waiting time reduction for the caller recognized as being angry, since anger is in second position in the priority vector $P$.
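To make the waiting-time bookkeeping behind step 4 concrete, the following minimal sketch computes each caller's waiting time before and after redistribution for a single operator. It is an illustration under stated assumptions rather than the authors' code: the call durations are invented, the emotion labels are taken as already assigned by the classifier, and `waiting_times` is a hypothetical helper.

```python
PRIORITY = ["fear", "anger", "sadness", "neutral", "joy"]
RANK = {emotion: rank for rank, emotion in enumerate(PRIORITY)}


def waiting_times(durations, order):
    """Waiting time (s) of each call when one operator serves calls in `order`.

    A caller waits for the total duration of all calls served before it.
    """
    waits = [0] * len(durations)
    elapsed = 0
    for idx in order:
        waits[idx] = elapsed
        elapsed += durations[idx]
    return waits


labels = ["neutral", "joy", "sadness", "fear", "anger"]  # recognized emotions
durations = [60, 120, 90, 45, 150]  # ASSUMPTION: made-up call durations in seconds

before = waiting_times(durations, range(len(labels)))            # arrival order
new_order = sorted(range(len(labels)), key=lambda i: RANK[labels[i]])
after = waiting_times(durations, new_order)                      # redistributed
reduction = [b - a for b, a in zip(before, after)]

print(before)     # → [0, 60, 180, 270, 315]
print(after)      # → [285, 345, 195, 0, 45]
print(reduction)  # → [-285, -285, -15, 270, 270]
```

In this invented example the callers labeled with fear and anger each gain 270 s, while the neutral and joyful callers each wait 285 s longer, which is the trade-off the priority vector is designed to make.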

The call processing and the proposed algorithm are intended to be part of a client-server application based on computer-telephone integration (CTI). The main part of the application runs on the server side, located on a remote computer, while the client side is located at a call center. When a call is received and at least one operator is free, the call is answered immediately. If all operators are busy at that moment, the client side initiates a connection with the server, which is waiting for clients. After the connection is established, a new session is started and the client sends a recorded speech sample of the call. On the server side, the feature vector is extracted from the received speech recording and forwarded to the SER module, which classifies it into one of the predefined emotion categories. If a new call is received at the call center within a period of 30 s, the client sends the recorded speech sample of that call, and steps 1 and 2 of the algorithm are performed again. The established session lasts as long as new calls arrive within the time frame of 30 s, which is chosen as the overlapping time between two consecutive calls. When there are no more calls within the specified time period, all calls processed during the current session of client-server communication are redistributed according to the proposed emotion ranking.

The call redistribution is intended to be applied to a finite number of calls received in a short period of time while all the operators are busy. In the experiments, situations with three, five, and seven simultaneously received calls were considered; we refer to each such set as a group of calls. For example, when a call center simultaneously receives seven calls, those seven calls are redistributed according to the SER system output and processed in the new order. If a new call arrives while operators are answering those seven calls, it is put in a new group of calls to be redistributed. The proposed emotion ranking can be specified after the connection is established, so that the server adapts the system response to the specific type of call center (the client). At the end of the session, the server sends the client the list of redistributed calls, which are then processed in the redistributed order.
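One possible reading of the 30 s session rule is sketched below: a call joins the current group if it arrives no more than 30 s after the previous call, and otherwise starts a new group. This is an interpretation, not a description of the actual CTI application; `group_calls` and the arrival times are hypothetical, and the sketch ignores the condition that all operators must be busy.

```python
def group_calls(arrival_times, window=30.0):
    """Split sorted arrival times (s) into groups of calls.

    ASSUMPTION: a session stays open while the gap between two
    consecutive calls is at most `window` seconds.
    """
    groups = []
    for t in arrival_times:
        if groups and t - groups[-1][-1] <= window:
            groups[-1].append(t)   # still inside the current session
        else:
            groups.append([t])     # gap too large: start a new session
    return groups


print(group_calls([0, 12, 35, 80, 95, 200]))
# → [[0, 12, 35], [80, 95], [200]]
```

Each resulting group would then be redistributed on its own, according to the emotion ranking.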

4. Simulation and Experimental Results

The research was designed as a set of experiments in a simulated call center receiving a different number of calls simultaneously, i.e., during a short period of time when all operators are busy. The experiments focused on: (i) the redistribution of calls based on the emotion label assigned by the speech emotion recognition task, and (ii) the evaluation of the time a call spent on hold without and with speech emotion recognition applied for call redistribution. The number of simultaneously received calls was 3, 5, or 7. In all experiments, a prosodic and spectral feature set was used, and the linear Bayes classifier and kNN were considered as classification techniques, as described in Section 2.

The average waiting time for each emotional state, without and with the redistribution, is evaluated as the mean waiting time over 50 experimental iterations in the simulated call center with one ideal active human operator (the human factor is not considered). This procedure is repeated for each experimental setting (3, 5, and 7 simultaneously received calls). The waiting time reduction is estimated under assumptions about the underlying distribution of emotions in the input calls and the distribution of call duration. We assumed that the emotions were uniformly distributed and that call durations were uniformly distributed across the chosen range (from 30 s to 3 min 50 s). This range was chosen on the assumption that it is wide enough to cover shorter, medium, and longer phone calls. Since only one operator is simulated, the waiting time after call redistribution in a call center with several active operators may be shorter for every caller, in proportion to the number of active operators.

A pseudo-random number generator is used to generate the emotion labels (a random choice of emotion for each input call) as well as the duration of each input call. The order of the calls in the queue (the order of call arrival) has, both in simulation and in a real-world call center, the biggest influence on the estimated waiting time a caller could spend in the callers’ queue. In our simulations, the arrival order of calls featuring a specific emotion is also unknown and is therefore determined by a generated pseudo-random number. Thus, in every iteration of the simulation, the random number of occurrences of each emotion class, the associated random call durations, and the random order of calls (emotions) in the callers’ queue jointly influence the variations of the estimated average waiting time, before and after call redistribution. Additionally, the recognition rate of each emotional state influences the average waiting time after call redistribution.

Simulation of call redistribution in a call center is explained using an example with three simultaneously received calls. Each call is represented by one utterance from the GEES corpus. First, the vector of randomized emotion labels for the three input calls was generated. According to this input emotion label vector, three utterances belonging to the chosen emotion classes were randomly selected from the corpus (with respect to the speaker) and provided as input to SER. As an initial part of the simulation, a call duration, generated as a random value between 30 s and 3 min 50 s, was assigned to each of these utterances. Knowing the initial order of the simulated calls (determined by the input emotion label vector), the initial waiting time in the callers’ queue is calculated for each caller as the sum of the call durations of all preceding callers in the queue. Every caller is represented by the input utterance determined by the input emotion label. Thus, the initial waiting time for every emotion class is evaluated. Second, based on the classifier output, each input utterance gets one of the five emotion labels, which yields the output emotion label vector. Given the output emotion labels, calls are redistributed according to the priority vector. A new waiting time is calculated for each caller based on the new position in the redistributed callers’ queue. Accordingly, the new waiting time for every emotion class is evaluated.
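The simulation procedure can be approximated by the short sketch below. It follows the assumptions stated in the text (uniformly distributed emotions, call durations uniform between 30 s and 230 s, one operator), but it replaces the corpus utterances and the trained classifier with an ideal classifier that always returns the true label. This is a simplification introduced here, so the numbers it prints are not the results reported in the tables. The function names and the seed are hypothetical.

```python
import random

PRIORITY = ["fear", "anger", "sadness", "neutral", "joy"]
RANK = {emotion: rank for rank, emotion in enumerate(PRIORITY)}


def waits(durations, order):
    """Waiting time of each call when one operator serves calls in `order`."""
    result, elapsed = [0.0] * len(durations), 0.0
    for i in order:
        result[i] = elapsed
        elapsed += durations[i]
    return result


def simulate(n_calls, iterations, seed=0):
    """Average waiting time (s) per emotion, before and after redistribution."""
    rng = random.Random(seed)
    before = {e: [] for e in PRIORITY}
    after = {e: [] for e in PRIORITY}
    for _ in range(iterations):
        labels = [rng.choice(PRIORITY) for _ in range(n_calls)]
        durations = [rng.uniform(30, 230) for _ in range(n_calls)]  # 30 s to 3 min 50 s
        predicted = labels  # ASSUMPTION: ideal SER, no misclassification
        fifo = waits(durations, range(n_calls))
        order = sorted(range(n_calls), key=lambda i: RANK[predicted[i]])
        redistributed = waits(durations, order)
        for i, emotion in enumerate(labels):
            before[emotion].append(fifo[i])
            after[emotion].append(redistributed[i])
    # Emotions that never occurred are skipped to avoid dividing by zero.
    return {
        e: (sum(before[e]) / len(before[e]), sum(after[e]) / len(after[e]))
        for e in PRIORITY if before[e]
    }


for emotion, (t_before, t_after) in simulate(n_calls=3, iterations=50).items():
    print(f"{emotion:8s} before={t_before:6.1f} s  after={t_after:6.1f} s")
```

Replacing the line `predicted = labels` with the output of a real classifier would reintroduce the effect of recognition errors on the waiting time after redistribution.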

Table 2 shows the average waiting time of a caller whose call is among three calls received at the same moment while all operators are busy, before and after the application of SER and call redistribution. There is a significant waiting time reduction for callers recognized as being in a state of fear: initially, they waited for about 2 min 40 s, and after SER and the proposed call redistribution they had to wait only 8 s. For an angry caller, there is also a noticeable reduction: the initial waiting time was 2 min 19 s, and after redistribution only about 1 min. For a sad caller, the time saving is small: the initial waiting time was 2 min, reduced to 1 min 45 s after redistribution. For neutral and joyful callers, the waiting time increases after SER and call redistribution: by about 1 min for a neutral caller and by about 2 min for a joyful caller. This increase was expected, as the redistribution always places callers with these emotions at the end of the callers’ queue.

The average waiting time of a caller whose call is among five calls received in a short period of time while all operators are busy, before and after the application of the proposed algorithm, is shown in Table 3. For fear, which is first in the emotion ranking, the decrease in waiting time is the largest: from 4 min 17 s to 25 s after SER and redistribution. There is also a significant decrease in waiting time for angry callers: from 4 min 36 s to 1 min 57 s.

Unlike in the experiment with three simultaneous calls, in the experiment with five calls, callers recognized as being sad achieved a waiting time that is nearly 1 min shorter after SER and redistribution. In the case of a neutral state, the waiting time increased by about 1 min. For callers recognized as being joyful, the increase is larger and amounts to about 4 min.

Table 4 shows the average waiting time of a caller whose call is among 7 calls received simultaneously, i.e., in a short period of time while all operators are busy. As in the two previous experimental settings, the three prioritized emotions (fear, anger, and sadness) show a decrease in waiting time. Calls featuring fear have the biggest waiting time reduction, about 5 min 40 s. Calls featuring anger achieved a 2 min 20 s reduction in waiting time. For a sad caller, the decrease in waiting time is about 1 min. Neutral and joyful callers have an increase in waiting time: 2 min 37 s and 5 min 30 s, respectively.

The comparative results of average waiting time in all three experimental settings (3, 5, and 7 simultaneously received calls) for callers in all five emotional states are shown in Figure 4. As the callers in the state of fear have the highest priority, their average waiting time is significantly reduced in all experimental settings: by a factor of about twenty in the case of three simultaneously received calls, about ten in the case of five calls, and about six in the case of seven calls. Angry callers are given the second priority in redistribution, so a decrease in their average waiting time is achieved in all experiments. In the case of three and five simultaneously received calls, the waiting time after redistribution is reduced to less than half of the waiting time before redistribution. In the case of seven simultaneously received calls, the waiting time is reduced by one third of the initial waiting time.
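As a quick check of these ratios, the waiting times quoted in the text for three and five simultaneous calls can be compared directly (times converted to seconds; the value for anger after redistribution with three calls is the rounded "about 1 min", so that ratio is approximate):

```latex
\begin{align*}
\text{fear, 3 calls:}  &\quad \frac{160\,\text{s}}{8\,\text{s}} = 20 \\
\text{fear, 5 calls:}  &\quad \frac{257\,\text{s}}{25\,\text{s}} \approx 10.3 \\
\text{anger, 3 calls:} &\quad \frac{60\,\text{s}}{139\,\text{s}} \approx 0.43 \\
\text{anger, 5 calls:} &\quad \frac{117\,\text{s}}{276\,\text{s}} \approx 0.42
\end{align*}
```

The first two lines give the reduction factor for fear (waiting time before divided by waiting time after), and the last two give the fraction of the initial waiting time that remains for anger, which is below one half in both settings.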

From the experimental results shown in Figure 4, it can be seen that sad callers have a moderate decrease in waiting time after the proposed call redistribution. The absolute value of the waiting time reduction is the biggest in the case of seven simultaneously received calls, but the relative reduction is the biggest in the case of five calls, where it amounts to about 18% of the initial waiting time.

As can be observed from Figure 4, callers in a neutral state have an increased waiting time after call redistribution: an increase of about 1 min in the case of three and five simultaneously received calls, and about 2 min in the case of seven simultaneously received calls. Joy is marked as the emotion with the lowest priority, which is why callers featuring joy are put at the end of the callers’ queue. This causes a significant increase in waiting time for callers in a state of joy: the waiting time after the proposed call redistribution is about twice as long in all experimental settings.

Table 5 shows the waiting time reduction for the five emotional states after SER and the proposed call redistribution are applied, in all experimental settings (with 3, 5, and 7 simultaneously received calls) in a simulated call center. The time reduction is calculated as the difference between the average waiting time without call redistribution and the average waiting time after application of SER and the proposed call redistribution:

$$\Delta t_e = t_e - t_e^{\mathrm{SER}} \qquad (3)$$

where $t_e$ denotes the average waiting time for a caller in the emotional state $e$ without SER and call redistribution, $e$ denotes one of the five emotional states (fear, anger, sadness, neutral, and joy), and $t_e^{\mathrm{SER}}$ denotes the average waiting time for a caller in the emotional state $e$ after application of SER and call redistribution.
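As a worked illustration of this difference, the approximate waiting times quoted in the text for three simultaneously received calls can be plugged in. The symbol $\Delta t_e$ is notation assumed here for the reduction, and the inputs are the rounded figures from the discussion of Table 2, so the results are approximate as well:

```latex
\begin{align*}
\Delta t_{\text{fear}}    &\approx 160\,\text{s} - 8\,\text{s}   = 152\,\text{s} \\
\Delta t_{\text{anger}}   &\approx 139\,\text{s} - 60\,\text{s}  = 79\,\text{s} \\
\Delta t_{\text{sadness}} &\approx 120\,\text{s} - 105\,\text{s} = 15\,\text{s}
\end{align*}
```

For neutral and joyful callers the text reports increases of about 1 min and 2 min, which correspond to negative reductions of roughly $-60$ s and $-120$ s.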

The positive values of waiting time reduction in Table 5 indicate a real reduction in waiting time after call redistribution, which is the case for callers recognized as being in a state of fear, anger, or sadness. Negative values indicate that the waiting time after call redistribution has actually increased, which is the case for callers recognized as being in a neutral or joyful state. From the results presented in Table 5, it can be observed that, as the number of simultaneously received calls grows, the calls featuring the three recognized emotions considered as indicators of a more urgent state of the caller, namely fear, anger, and sadness, tend to have a larger decrease in waiting time after the proposed call redistribution. On the other hand, the calls featuring recognized neutral speech and joy tend to have a larger increase in waiting time as the number of simultaneously received calls grows, but this is considered justified as long as more urgent calls are processed before less urgent ones.

To examine the results for a larger number of iterations, the simulations were performed using 200, 500, and 1000 iterations in all three experimental settings (3, 5, and 7 simultaneously received calls). For each experimental setting, the obtained results are presented in Table 6, Table 7, and Table 8, respectively. Regarding the initial average waiting time, even with 1000 iterations there are differences across the five emotional states, due to the combination of the random order of emotions in the callers’ queue and the random duration of each call in the queue. Similar to the experiments with 50 iterations, after application of SER and call redistribution, calls featuring fear and anger achieved a significant reduction in waiting time. Unlike in the simulation with 50 iterations, calls featuring sadness achieved a slight increase in some cases and a slight decrease in others. This can be explained by the fact that sad callers are placed in the middle of the priority ranking, so it was expected that, with an increased number of iterations, their waiting time after redistribution would differ only slightly from the initial average value. As can be observed from Table 6, Table 7, and Table 8, callers recognized as being in neutral and joyful states have an increased waiting time, similar to the results obtained in the simulation with 50 iterations.

Experimental results show a decrease in waiting time for the prioritized emotions. Admittedly, there is a small probability of misrecognizing anger as joy (both are characterized by high arousal, but lie at opposite valence poles) and thus placing that caller at the end of the callers’ queue. The possible negative effect depends on the position of such a call in the original queue and on the emotional states of the other callers in it. Overall, the experimental results show a substantial decrease in waiting time for the prioritized emotions with negative valence.

In real-world emergency call centers, it is unlikely that all emotions are equally distributed, as was the case in our simulation experiments. It is more likely that more calls feature fear and fewer calls feature joy, as reported for the CEMO corpus recorded in a real-world medical call center [7]. Although the results of the proposed SER might be somewhat lower in a real-world emergency call center, we consider, based on the high recognition accuracy for fear, sadness, and neutral, that the proposed approach to SER and the call redistribution based on it would improve the effectiveness of such a call center service.

5. Conclusions

The presented research has addressed the problem occurring in emergency call centers when there are several incoming calls in a short period of time while all operators are busy. The proposed solution takes into account a caller’s emotional state, by recognizing emotion in speech and giving priority to the caller with negative valence emotion (fear, anger and sadness). The research aims to improve efficiency of emergency call centers based on recognition of more urgent callers. Utilizing the proposed emotion ranking and call redistribution, there is a significant reduction in waiting time for the callers recognized as being in the state of fear. A noticeable waiting time reduction is also achieved in the case of callers recognized to be angry, and a slight reduction in the case of callers recognized to be sad. On the other hand, the algorithm puts neutral and joyful callers at the end of the call queue, so those callers will have an increased waiting time. This is the price to be paid, and it has been considered that less urgent callers are more capable of bearing a longer waiting time.

Additionally, the waiting time for the most urgent calls can be shortened by giving the signal to operators who process lower priority calls that there is an emergency call on hold. Depending on the dialogue strategy in a call center, the current call will be ended faster or put on hold, so that an emergency call would be received immediately.

Although there are evident differences between the emotional speech corpus recorded in a real call center and the acted emotional speech corpus recorded under controlled conditions, the experimental results in the simulated call center give a promising sign that the proposed approach to SER and call redistribution based on it would improve effectiveness of a real call center service. The proposed algorithm is a basis for detecting critical users in the specific type of call centers considered in the research.

Other SER techniques can be used instead of the proposed one, with similar results regarding the improvement of call center effectiveness. The proposed SER based on hand-crafted features (such as those provided by the openSMILE toolkit) could be faster and more robust in real conditions than a DNN or end-to-end based SER system, particularly given the rather small GEES corpus, the only one available in Serbian that was suitable for the presented research. Due to the lack of available data, a DNN- or end-to-end-based SER system for Serbian could not be trained well, and there is a high risk of model over-fitting. The only emotional speech corpus for under-resourced Serbian (GEES) contains just 1800 utterances, which is not enough for state-of-the-art NN-based approaches.

Further research should consider “in the wild” recordings from real-world call centers (emergency call centers or health care centers for elderly people), so that the proposed approach could be tested on realistic data and its efficiency verified. Further research may also be directed toward combining paralinguistic and linguistic information. Recordings of the initial part of a call (1–2 sentences with duration of 5–8 s) in human–machine dialogue can be used as input not only into SER, but also into ASR. After ASR, recognized keywords can be used as an additional indicator of certain emotional states and thus priorities. It could increase reliability of the emotion estimation and utility of the proposed algorithm, even in the case of a lower arousal, i.e., more passive levels of emotion activation. Of course, a possible fusion of SER and ASR depends on the dialogue strategy, and the language and vocabulary expected in particular human–machine interactions.