1. Introduction

The movement intention of the human body originates in the brain and is transmitted to the muscle cells through the nerves. The form and amplitude of the electrical signal of a muscle directly reflect the movement pattern of the human body [1,2,3]. To enable wearable devices such as prostheses and exoskeletons to switch smoothly between multiple movement modes, many researchers have worked on recognizing the human movement state. An effective way to identify human movement patterns and movement intentions is to collect human electromyography (EMG) signals and use them to control the movement of an exoskeleton in real time [4,5]. Young et al. [6,7] trained a model to recognize the motion modes of amputees from mechanical and EMG signals. The lower-limb prosthetic system is expected to switch seamlessly among motion modes, and the overall recognition rate can be increased to 86%. Joshi et al. [8] used the Bayesian information criterion (BIC), standard feature extraction methods, and a linear discriminant analysis (LDA) classifier to separate eight gait phases based on lower-limb EMG signals. Simon et al. [9] used pattern recognition to switch seamlessly and naturally among five motion modes of a prosthesis. Collecting and analyzing EMG signals can help the exoskeleton accurately identify the current movement state of the human body and match the best movement mode [10,11,12,13]. Liu et al. [14] trained a model on data obtained from angle sensors to achieve better recognition results. Liu et al. [15] collected data with myoelectric sensors, gyroscopes, and pressure sensors and used a hidden Markov model (HMM) to identify motion states in real time. In this way, the user’s intention to walk on five different terrains can be distinguished. Capturing images of human motion to analyze motion patterns is a very mature method, but it requires bulky image acquisition equipment and cannot be integrated with wearable devices such as an exoskeleton [16].

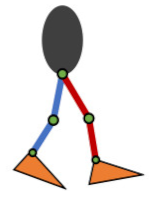

We have tried installing a gyroscope on each joint of the lower limbs and pressure sensors at selected positions on the foot, and analyzing the human movement pattern by multi-source information fusion, which showed a good recognition effect [17]. Because the angle information of the lower limbs and the pressure information of the sole are generated only after the human body has actually moved, they lag behind the movement intention; this approach is therefore more suitable for identifying the current movement mode of the human body or exoskeleton system. The surface EMG signal of the muscle has the advantage of not being restricted by physical constraints and, thus, not disturbing the actual movement of the human body. Therefore, to improve the accuracy of recognizing common human movement patterns in the daily use of exoskeletons, we designed a wearable EMG acquisition system that collects EMG signals on the surface of the human body and identifies common human lower-limb movement patterns. A supervised machine-learning method is used to train a motion pattern classifier on the surface EMG signals, and the effects of different feature parameters on human motion pattern recognition are studied using multilayer perceptrons and the long short-term memory (LSTM) neural network.

4. Discussion

When a person wears an exoskeleton, accurate recognition of the human movement mode is what allows the exoskeleton to switch to the correct movement mode during mixed movement control. Researchers have tried various methods to identify the movement state of the human body and obtain its movement intention. Young et al. [6,7] trained an algorithm to recognize the motion modes of amputees from mechanical and EMG signals. The overall recognition rate using EMG can be increased to 86%. Joshi et al. [8] used the Bayesian information criterion (BIC), standard feature extraction methods, and a linear discriminant analysis (LDA) classifier to separate eight gait phases using lower-limb EMG signals; the maximum recognition accuracy for the left and right leg motion patterns was 93.83% and 91.60%, respectively. Simon et al. [9] used pattern recognition to switch control seamlessly and naturally among multiple motion modes of a prosthesis. Across five movement modes, the recognition error rate was less than 5%. Liu et al. [14] used angle sensors to obtain human motion data, and the trained model achieved an average accuracy of 87.22% on the test set. Liu et al. [15] collected data with myoelectric sensors, gyroscopes, and pressure sensors and used a hidden Markov model (HMM) to identify motion states in real time. The prosthesis user’s intention to walk on different terrains can be inferred, and the intent pattern recognizer recognizes five typical terrain patterns with an accuracy of 95.8%. We have tried installing gyroscopes on the joints of the lower limbs and pressure sensors at key positions on the sole of the foot. The accuracy of identifying mixed human movement patterns was 92.7% [17].

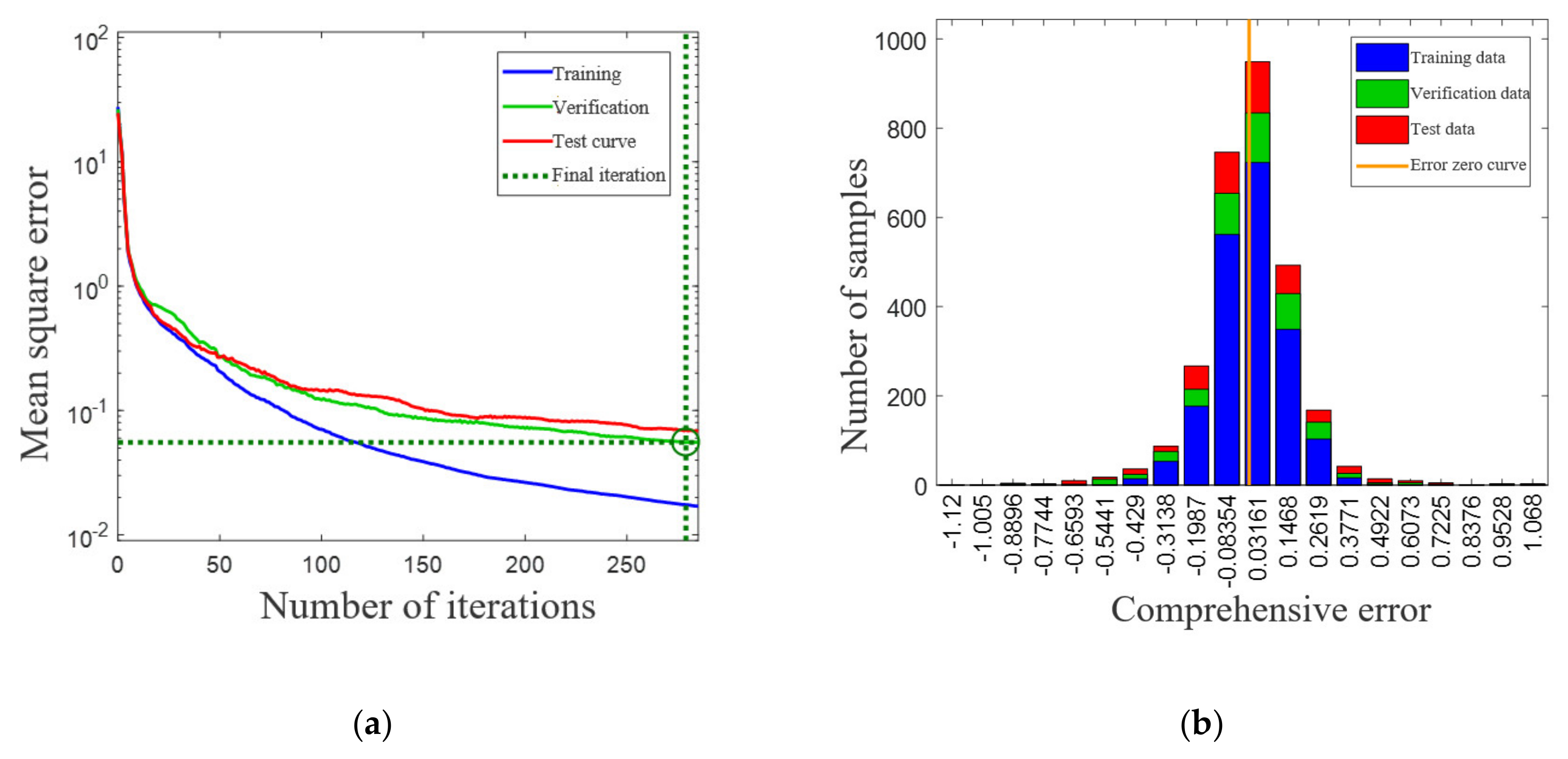

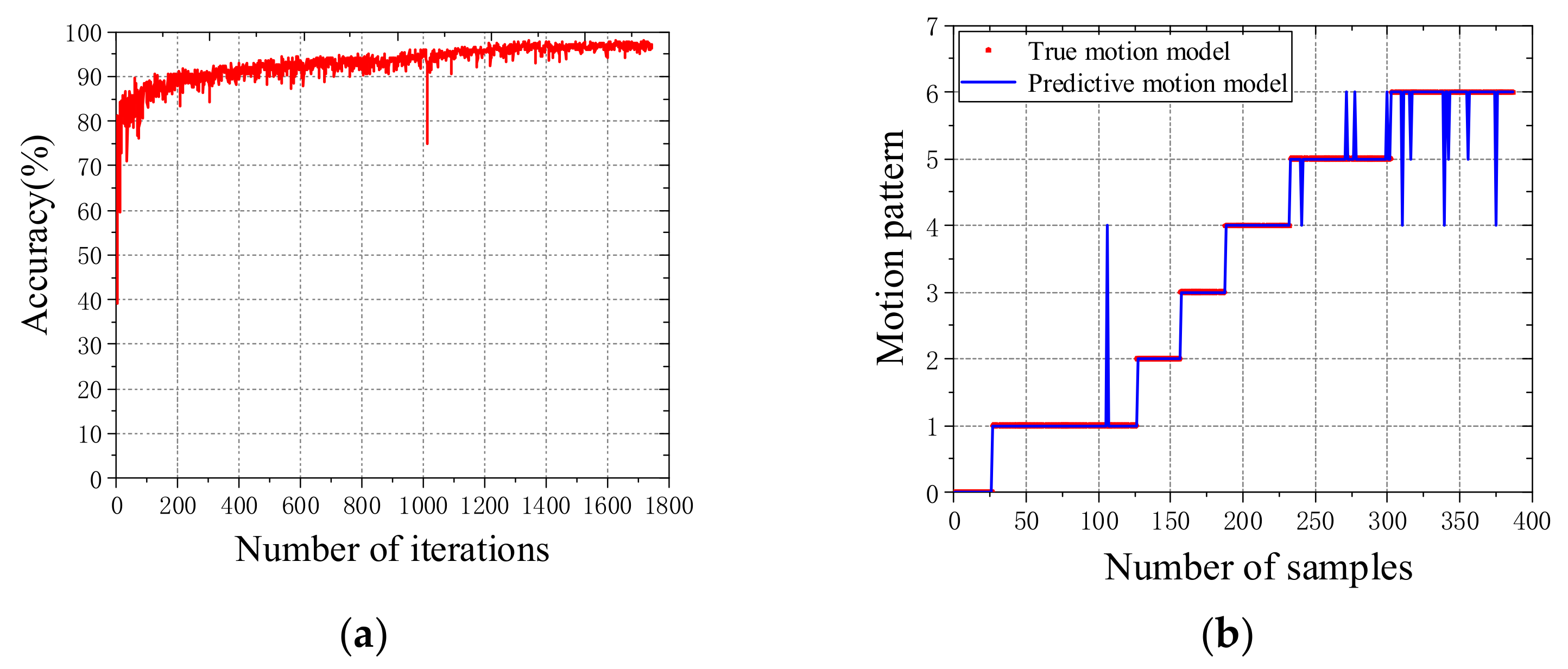

In this paper, different feature parameters of human surface EMG signals are extracted in seven daily motion modes. Two common neural networks, multilayer perceptrons and LSTM neural networks, are trained separately to identify human motion patterns. During training, different feature parameters and different classifier model parameters were used to compare the accuracy of motion pattern recognition and to analyze the impact of errors. The best recognition rate achieved by the multilayer perceptrons was 95.53%, and the best recognition rate achieved by the LSTM neural network was 96.57%. Compared with the recognition rates reported in the studies cited above, this is a slight advantage. At the same time, the motion data acquisition system is compact and easy to integrate with the exoskeleton.

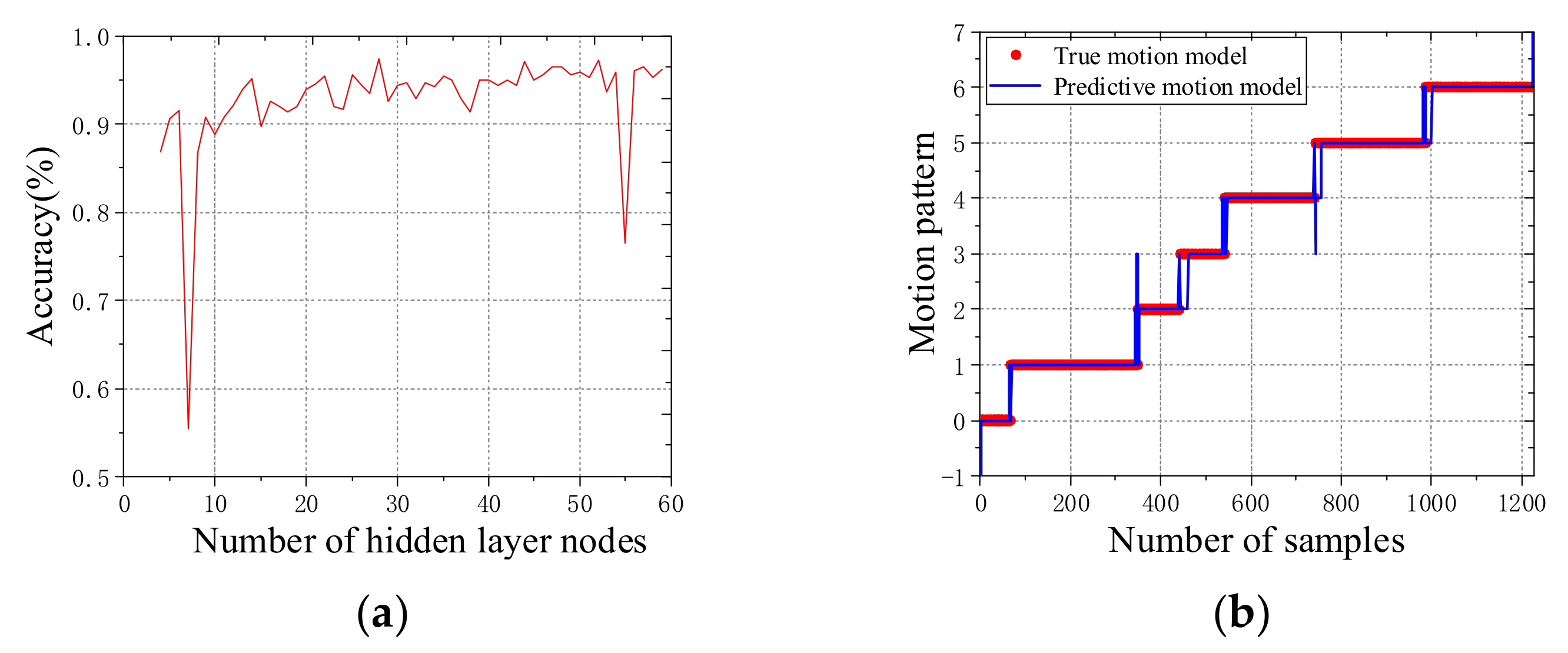

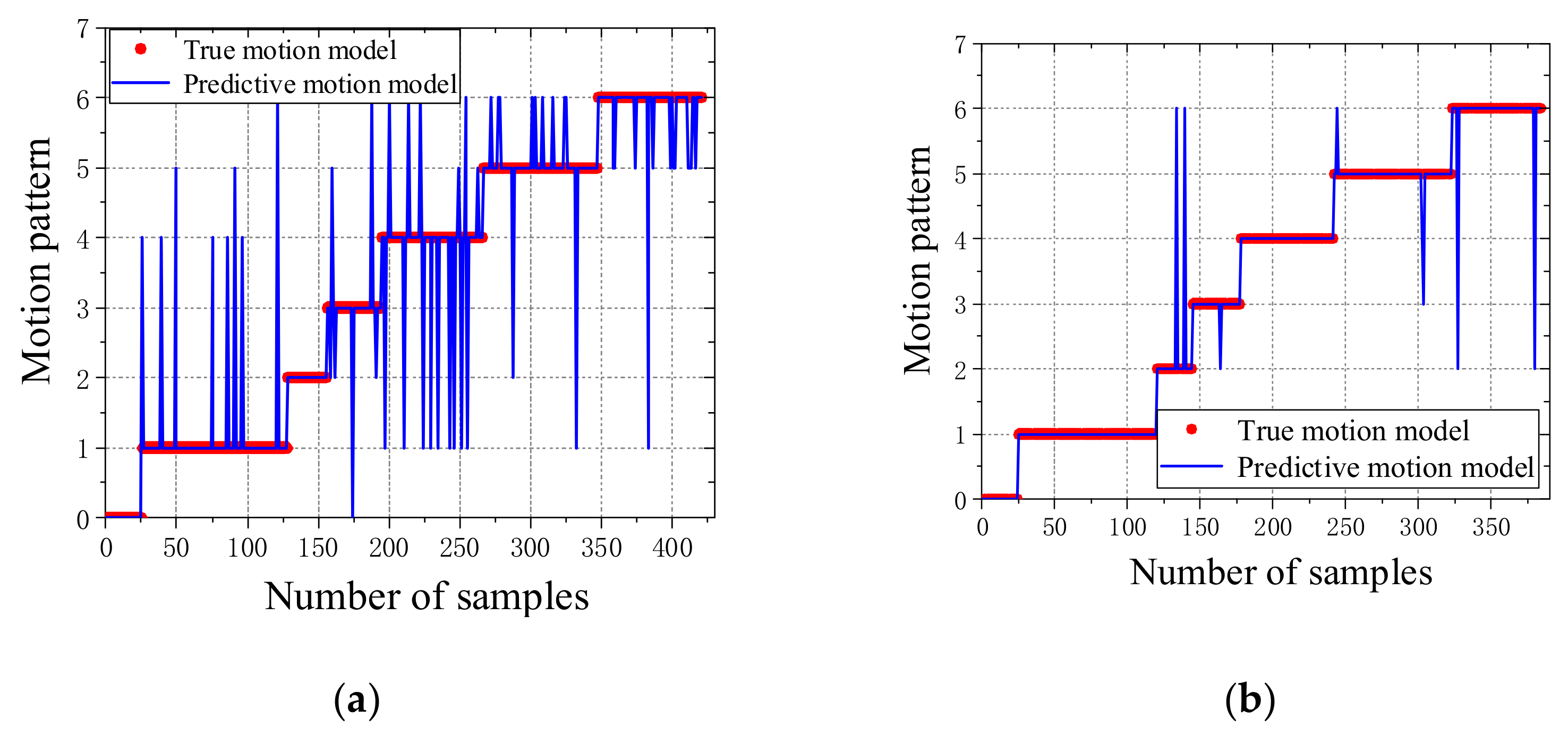

In this study, the lower accuracy of some training results may be due to the dataset not being very large. For example, the local minimum in Figure 5a suggests that certain "cursed" numbers of hidden neurons perform poorly. In our next work, we therefore intend to add more data for training to eliminate this problem. While building the feature parameter dataset, we found that the mean absolute value (MAV) and the root mean square (RMS) of the data are remarkably similar, so one of them can be removed for dimensionality reduction. RMS is generally considered the more expensive of the two to compute, but we removed MAV and kept RMS because the amount of data processed was not very large. The same limited amount of data is also a likely reason why the final motion pattern recognition rate is lower than it could be. When processing larger volumes of data in the future, we will address this and reconsider the feature selection used to build the feature parameter dataset.
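The similarity between MAV and RMS can be illustrated with a short sketch. The Python below is not the authors' code: it uses synthetic zero-mean Gaussian noise as a stand-in for surface EMG, and the number of windows, the window length, and the amplitude range are arbitrary assumptions. It computes both features per window and then their Pearson correlation across windows.

```python
import math
import random


def mav(window):
    """Mean absolute value of one window of samples."""
    return sum(abs(x) for x in window) / len(window)


def rms(window):
    """Root mean square of one window of samples."""
    return math.sqrt(sum(x * x for x in window) / len(window))


def pearson(a, b):
    """Pearson correlation coefficient between two equal-length sequences."""
    mean_a, mean_b = sum(a) / len(a), sum(b) / len(b)
    cov = sum((x - mean_a) * (y - mean_b) for x, y in zip(a, b))
    var_a = sum((x - mean_a) ** 2 for x in a)
    var_b = sum((y - mean_b) ** 2 for y in b)
    return cov / math.sqrt(var_a * var_b)


# Synthetic stand-in for EMG (assumption): noise whose amplitude differs per window,
# loosely mimicking different contraction levels.
rng = random.Random(0)
windows = []
for _ in range(50):
    amplitude = rng.uniform(0.1, 1.0)
    windows.append([rng.gauss(0.0, amplitude) for _ in range(256)])

mav_values = [mav(w) for w in windows]
rms_values = [rms(w) for w in windows]
correlation = pearson(mav_values, rms_values)
print(f"MAV/RMS correlation across windows: {correlation:.4f}")
```

On data of this kind the two features are nearly proportional, so keeping both adds little information for a classifier. For any given window, RMS is never smaller than MAV.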

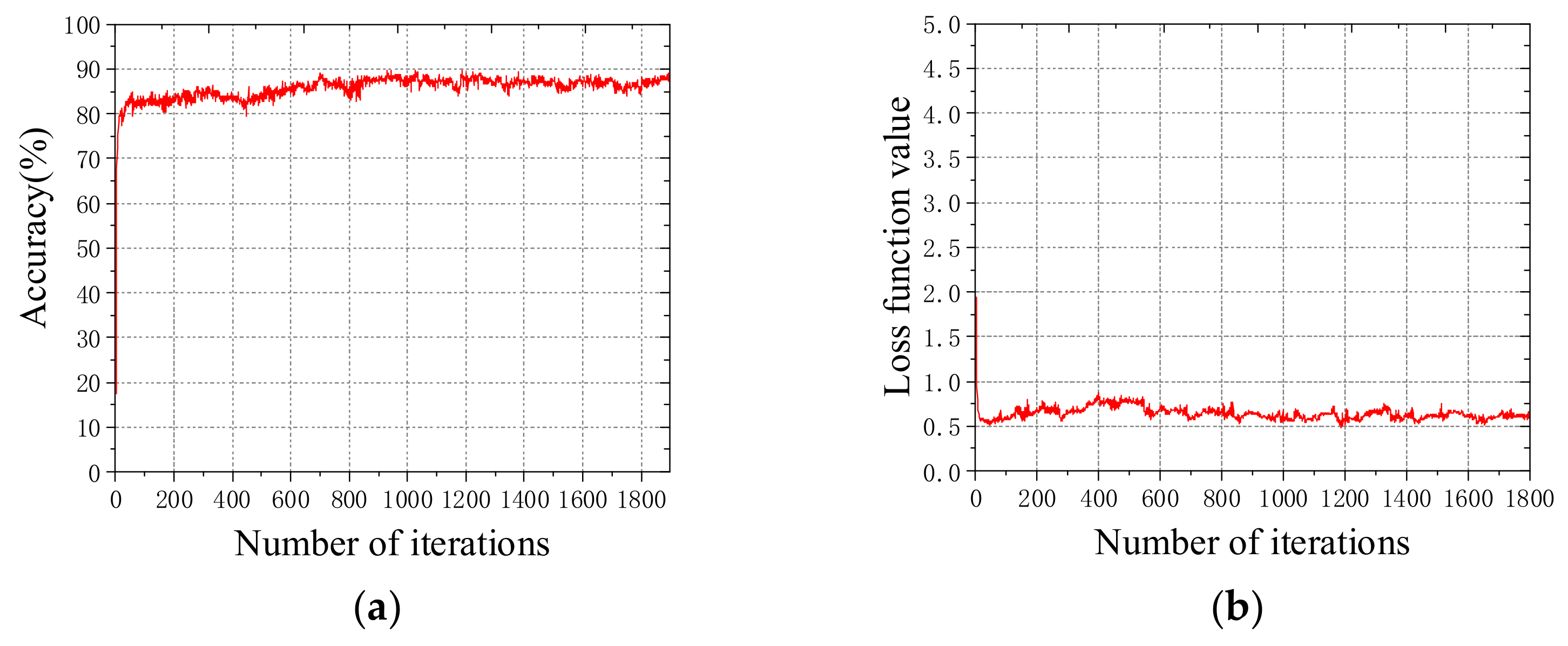

A typical LSTM neural network contains five activation functions: three sigmoid and two tanh. In Section 3.2.2, we modified the tanh that is applied to the cell state and then multiplied by the sigmoid of the input (the output gate) to produce the output. The purpose of modifying this activation function was to look for a way to improve the recognition accuracy. We compared the accuracy obtained with sigmoid and with ReLU in this position against the accuracy obtained with tanh. The results confirmed that tanh gives better accuracy. Among the three functions tested, tanh was therefore the best activation function for the LSTM in this classification task.
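To make the position of the swapped activation concrete, here is a minimal sketch of one LSTM time step, assuming the standard LSTM formulation. It is deliberately reduced to a single hidden unit with a scalar input, and the weights in `params` are arbitrary illustrative values, not a trained model from this paper. The `output_activation` argument (a hypothetical name) is the second tanh, the one applied to the cell state before it is scaled by the output gate.

```python
import math


def sigmoid(x):
    return 1.0 / (1.0 + math.exp(-x))


def relu(x):
    return max(0.0, x)


def lstm_step(x, h_prev, c_prev, params, output_activation=math.tanh):
    """One LSTM time step for a single hidden unit with a scalar input.

    params maps each gate name ('f', 'i', 'o', 'g') to (w_x, w_h, bias).
    """
    def pre(gate):
        w_x, w_h, b = params[gate]
        return w_x * x + w_h * h_prev + b

    f = sigmoid(pre("f"))          # forget gate    (sigmoid 1)
    i = sigmoid(pre("i"))          # input gate     (sigmoid 2)
    o = sigmoid(pre("o"))          # output gate    (sigmoid 3)
    g = math.tanh(pre("g"))        # candidate cell (tanh 1)
    c = f * c_prev + i * g         # updated cell state
    h = o * output_activation(c)   # tanh 2 by default; the function being swapped
    return h, c


# Arbitrary illustrative weights and input sequence (assumptions).
params = {
    "f": (0.5, 0.1, 0.0),
    "i": (0.6, -0.2, 0.0),
    "o": (0.4, 0.3, 0.0),
    "g": (1.0, 0.5, 0.0),
}
sequence = [0.2, -0.7, 1.1]

final_h = {}
for name, act in [("tanh", math.tanh), ("sigmoid", sigmoid), ("relu", relu)]:
    h, c = 0.0, 0.0
    for x in sequence:
        h, c = lstm_step(x, h, c, params, output_activation=act)
    final_h[name] = h
    print(f"{name:8s} final hidden state: {h:+.4f}")
```

The sketch only shows how the output range changes with the choice: tanh keeps the hidden state in (-1, 1) and preserves the sign of the cell state, sigmoid maps it to (0, 1), and ReLU discards negative cell states. It says nothing about accuracy, which depends on training.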

It must be noted that the data collection used in this paper may introduce errors, because the sensor cannot remain in close contact with the skin at all times, which lowers the final recognition rate. The sensor harness may also interfere with human motion; the sensor system therefore needs to be further optimized, which should improve the recognition rate of human motion patterns. In this article, seven selected movement modes were identified, and the errors most likely occurred during the transitions between movement modes. In future work, we therefore intend to fuse the EMG information of human motion with information obtained from other mechanical sensors and to strengthen the training on the transition states between motion modes to obtain better recognition results.

The wearable EMG acquisition device designed in this paper is compact and can be integrated with a lower-limb exoskeleton. The purpose of identifying human movement patterns from EMG information is to give the exoskeleton the basic information it needs to switch between movement modes while it is being worn. The research in this article is a first step toward judging human movement patterns and intentions for exoskeletons. Next, we intend to combine the wearable EMG acquisition device and the data processing methods with a lower-limb exoskeleton, aiming for intelligent switching of its movement modes.

5. Conclusions

In response to the need for the exoskeleton to quickly recognize the wearer’s movement state during mixed movement control, this paper studies how different feature parameters extracted from surface EMG signals affect the accuracy with which multilayer perceptrons and the LSTM neural network recognize human motion patterns. EMG signals are collected during seven common human motion patterns in daily life, and their time-domain and frequency-domain features are extracted to build a feature parameter dataset for training the classifiers. Multilayer perceptrons and the LSTM neural network are then used to recognize the motion patterns of the human lower limbs, with different feature parameters and different classifier model parameters, to compare the accuracy of motion pattern recognition and analyze the impact of errors.
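As an illustration of combining time-domain and frequency-domain features into one feature vector, the sketch below computes RMS (named in this paper) and the power-weighted mean frequency for a single window. Mean frequency is only an assumed example of a frequency-domain feature, since the exact feature set is not restated here; the sampling rate `fs` and the test signal are likewise assumptions. A naive DFT is used to keep the example free of external libraries; it is only suitable for short windows.

```python
import cmath
import math


def power_spectrum(window, fs):
    """Naive DFT power spectrum for frequencies 0..fs/2 (O(n^2), short windows only)."""
    n = len(window)
    freqs, power = [], []
    for k in range(n // 2 + 1):
        coeff = sum(x * cmath.exp(-2j * math.pi * k * t / n)
                    for t, x in enumerate(window))
        freqs.append(k * fs / n)
        power.append(abs(coeff) ** 2)
    return freqs, power


def mean_frequency(window, fs):
    """Power-weighted mean frequency of the window, in Hz."""
    freqs, power = power_spectrum(window, fs)
    return sum(f * p for f, p in zip(freqs, power)) / sum(power)


def feature_vector(window, fs):
    """Concatenate one time-domain and one frequency-domain feature."""
    rms = math.sqrt(sum(x * x for x in window) / len(window))
    return [rms, mean_frequency(window, fs)]


fs = 1000.0  # assumed sampling rate in Hz
# Test signal (assumption): a pure 50 Hz sine covering exactly 10 periods.
window = [math.sin(2 * math.pi * 50.0 * t / fs) for t in range(200)]
features = feature_vector(window, fs)
print(f"RMS = {features[0]:.4f}, mean frequency = {features[1]:.2f} Hz")
```

For a pure sine the mean frequency coincides with the sine frequency and the RMS equals the amplitude divided by the square root of two, which makes the sketch easy to sanity-check before applying it to real EMG windows.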

When offline supervised learning on the surface EMG dataset is carried out with the multilayer perceptrons, a sliding window length of 1024 ms, and all the time-domain and frequency-domain feature parameters, the accuracy of human motion pattern recognition is high, reaching 95.53%. The trend term of the surface EMG has a great impact on the recognition accuracy and should be removed before the data are used. The time-domain features contribute more to the recognition accuracy of the model than the frequency-domain features. However, to achieve the best recognition accuracy, the time-domain and frequency-domain features should be combined. When the sliding window length is shortened, the recognition accuracy decreases rapidly, for example, to 65.98% at 512 ms, which does not satisfy the needs of human motion pattern recognition.
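The windowing and trend-removal steps mentioned above can be sketched as follows. This is an illustration under stated assumptions rather than the procedure used in this paper: the sampling rate (1000 Hz, so that 1024 ms corresponds to 1024 samples), the step between windows, and the use of a least-squares straight line as the trend term are all assumptions, and the signal is synthetic.

```python
import math


def sliding_windows(signal, window_len, step):
    """Split a sample sequence into overlapping fixed-length windows."""
    last_start = len(signal) - window_len
    return [signal[s:s + window_len] for s in range(0, last_start + 1, step)]


def remove_linear_trend(window):
    """Subtract the least-squares straight line from a window."""
    n = len(window)
    t_mean = (n - 1) / 2.0
    x_mean = sum(window) / n
    slope_num = sum((t - t_mean) * (x - x_mean) for t, x in enumerate(window))
    slope_den = sum((t - t_mean) ** 2 for t in range(n))
    slope = slope_num / slope_den
    return [x - (x_mean + slope * (t - t_mean)) for t, x in enumerate(window)]


fs = 1000.0                          # assumed sampling rate in Hz
window_len = int(round(1.024 * fs))  # 1024 ms window
step = 256                           # assumed step between window starts, in samples

# Synthetic signal (assumption): an 80 Hz oscillation plus a slow baseline drift.
signal = [math.sin(2 * math.pi * 80.0 * t / fs) + 0.001 * t for t in range(4096)]

windows = sliding_windows(signal, window_len, step)
cleaned = [remove_linear_trend(w) for w in windows]
print(f"{len(windows)} windows of {window_len} samples each")
```

Halving the window to 512 ms halves the number of samples each feature is computed from, which is one plausible reason why window length affects accuracy so strongly.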

When offline supervised learning on the surface EMG dataset is carried out with the LSTM classifier, a sliding window length of 1024 ms, and all the time-domain and frequency-domain feature parameters, the accuracy of human motion pattern recognition is slightly higher than that of the multilayer perceptrons, reaching 96.57%. The ReLU function is not suitable as the activation function of the model input layer; the recognition accuracy is higher when the sigmoid or tanh function is used as the activation function. When the sliding window is shortened, the accuracy remains high, e.g., 88.84% at 512 ms, so the LSTM classifier performs better than the multilayer perceptrons in recognizing human motion patterns.