Snake Oil or Panacea? How to Misuse AI in Scientific Inquiries of the Human Mind

Abstract

1. Introduction

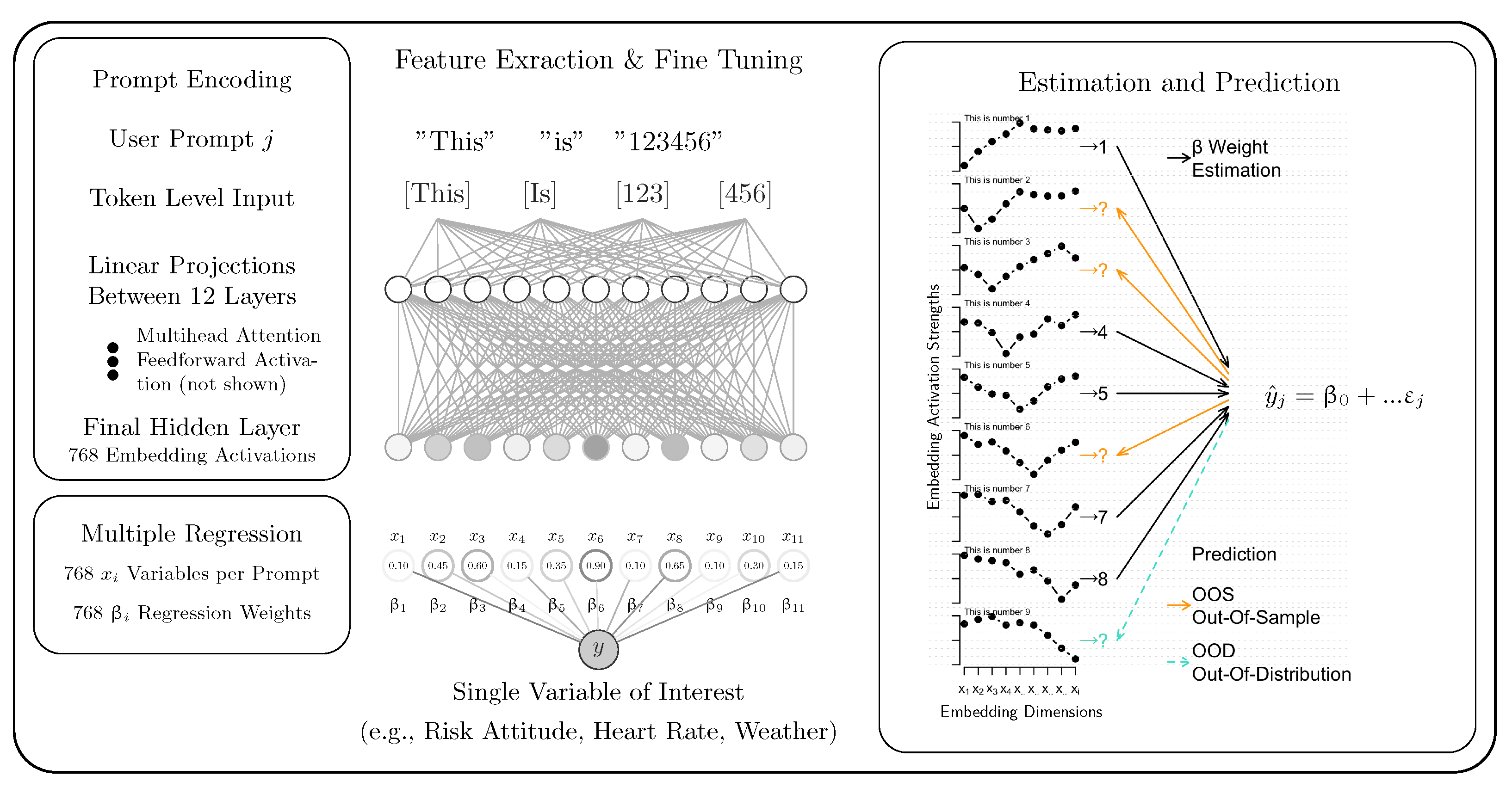

2. The Embedding-Based Regression Framework

3. Generalization, Metrics, and Severe Ordinal Tests

4. Applications and Simulations

4.1. Simulation Methods

SD Score

4.2. Use Case 1: Interpolation Versus Extrapolation in Synthetic Data

4.3. Use Case 2: Assessment of Disease Severity from Textual Descriptions

4.4. Use Case 3: Individual Neural Correlations

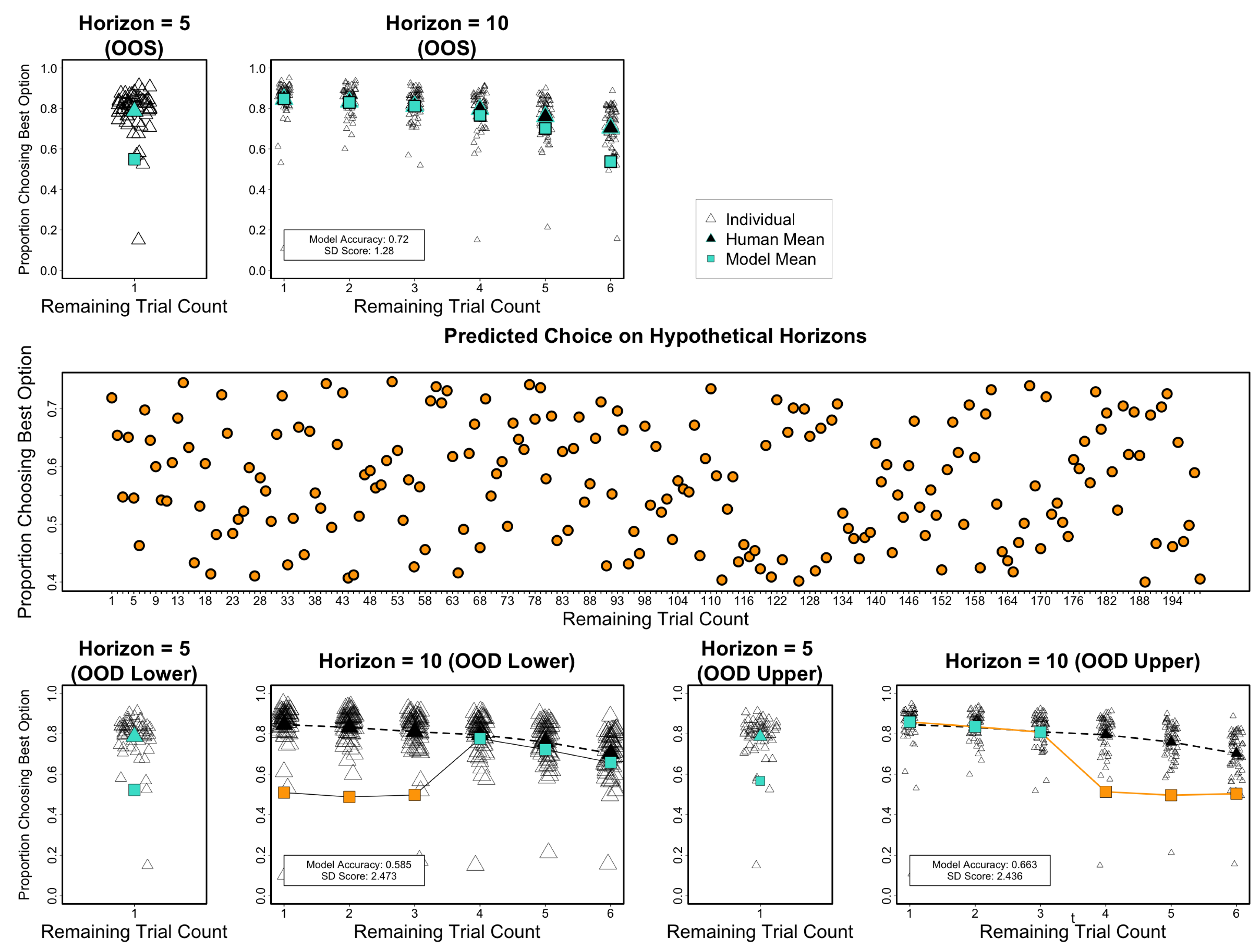

4.5. Use Case 4: Choice Variability Modulates Explore–Exploitation Tradeoff

5. Discussion

6. Conclusions

Author Contributions

Funding

Institutional Review Board Statement

Informed Consent Statement

Data Availability Statement

Conflicts of Interest

1. The broadest claim for use cases in experimental studies is the detection of unacknowledged sources of explained variance (e.g., Binz et al., 2025), ostensibly yielding “explainable” variance for theory. Yet this term is ill-defined: it conflates confounds with meaningful signals and correlation with causation, as elaborated below.

2. Constant prompt prefixes may enhance encoding of varying elements, but using only unique information often improves fit here, suggesting that extraneous text can dilute the signal (a point beyond this review’s scope).

3. These outcomes are in line with a broader range of critiques of theoretical interpretability (Cummins, 2025; Guest & Martin, 2023, 2025; Orr et al., 2025).

4. We do not disclose the exact prompt engineering because doing so would make severe misuse too easy, and we do not want that misuse to be replicated.

5. In this quote, Skinner refers to earlier studies that addressed binary choices under uncertainty (then still called ‘guessing’) via the concept of telepathy instead of a principled set of explicitly stated psychological hypotheses.

References

- Aka, A., & Bhatia, S. (2022). Machine learning models for predicting, understanding, and influencing health perception. Journal of the Association for Consumer Research, 7(2), 142–153.

- Battleday, R. M., Peterson, J. C., & Griffiths, T. L. (2020). Capturing human categorization of natural images by combining deep networks and cognitive models. Nature Communications, 11(1), 5418.

- Bhatia, S. (2017). Associative judgment and vector space semantics. Psychological Review, 124(1), 1–20.

- Bhatia, S., & Richie, R. (2024). Transformer networks of human conceptual knowledge. Psychological Review, 131(1), 271–306.

- Bhatia, S., & Walasek, L. (2023). Predicting implicit attitudes with natural language data. Proceedings of the National Academy of Sciences of the United States of America, 120(17), e2301239120.

- Binz, M., Akata, E., Bethge, M., Brändle, F., Callaway, F., Coda-Forno, J., Dayan, P., Demircan, C., Eckstein, M. K., Éltető, N., Griffiths, T. L., Haridi, S., Jagadish, A. K., Ji-An, L., Kipnis, A., Kumar, S., Ludwig, T., Mathony, M., Mattar, M., … Schulz, E. (2025). A foundation model to predict and capture human cognition. Nature, 644, 1002–1009.

- Binz, M., & Schulz, E. (2023). Turning large language models into cognitive models. arXiv, arXiv:2306.03917.

- Bowers, J. S., Malhotra, G., Dujmović, M., Llera Montero, M., Tsvetkov, C., Biscione, V., Puebla, G., Adolfi, F., Hummel, J. E., Heaton, R. F., Evans, B. D., Mitchell, J., & Blything, R. (2023). Deep problems with neural network models of human vision. Behavioral and Brain Sciences, 46, e385.

- Bowers, J. S., Puebla, G., Thorat, S., Tsetsos, K., & Ludwig, C. (2025). Centaur: A model without a theory. OSF.

- Cummins, J. (2025). The threat of analytic flexibility in using large language models to simulate human data: A call to attention. arXiv, arXiv:2509.13397.

- Dome, L., & Wills, A. J. (2025). G-Distance: On the comparison of model and human heterogeneity. Psychological Review, 132(3), 632–655.

- Farrell, S., & Lewandowsky, S. (2018). Computational modeling of cognition and behavior. Cambridge University Press.

- Feher da Silva, C., Lombardi, G., Edelson, M., & Hare, T. A. (2023). Rethinking model-based and model-free influences on mental effort and striatal prediction errors. Nature Human Behaviour, 7(6), 956–969.

- Feher da Silva, C., Victorino, C. G., Caticha, N., & Baldo, M. V. C. (2017). Exploration and recency as the main proximate causes of probability matching: A reinforcement learning analysis. Scientific Reports, 7(1), 15326.

- Friedman, J. H., Hastie, T., & Tibshirani, R. (2010). Regularization paths for generalized linear models via coordinate descent. Journal of Statistical Software, 33, 1–22.

- Gao, Y., Lee, D., Burtch, G., & Fazelpour, S. (2025). Take caution in using LLMs as human surrogates: Scylla ex machina. arXiv, arXiv:2410.19599.

- Guest, O., & Martin, A. E. (2023). On logical inference over brains, behaviour, and artificial neural networks. Computational Brain & Behavior, 6(2), 213–227.

- Guest, O., & Martin, A. E. (2025). A metatheory of classical and modern connectionism. Psychological Review.

- Guest, O., & van Rooij, I. (2025). Critical artificial intelligence literacy for psychologists. PsyArXiv, preprint.

- Hastie, T., Tibshirani, R., & Friedman, J. (2009). The elements of statistical learning: Data mining, inference, and prediction. Springer.

- Hendrycks, D., Liu, X., Wallace, E., Dziedzic, A., Krishnan, R., & Song, D. (2020). Pretrained transformers improve out-of-distribution robustness. arXiv, arXiv:2004.06100.

- Hussain, Z., Binz, M., Mata, R., & Wulff, D. U. (2024). A tutorial on open-source large language models for behavioral science. Behavior Research Methods, 56(8), 8214–8237.

- James, G., Witten, D., Hastie, T., & Tibshirani, R. (2013). An introduction to statistical learning: With applications in R. Springer.

- Khromov, G., & Singh, S. P. (2023). Some fundamental aspects about Lipschitz continuity of neural networks. arXiv, arXiv:2302.10886.

- Liu, P., Yuan, W., Fu, J., Jiang, Z., Hayashi, H., & Neubig, G. (2021). Pre-train, prompt, and predict: A systematic survey of prompting methods in natural language processing. arXiv, arXiv:2107.13586.

- Liu, Y., Ott, M., Goyal, N., Du, J., Joshi, M., Chen, D., Levy, O., Lewis, M., Zettlemoyer, L., & Stoyanov, V. (2019). RoBERTa: A robustly optimized BERT pretraining approach. arXiv, arXiv:1907.11692.

- Luo, X., Mok, R. M., Roads, B. D., & Love, B. C. (2025). Coordinating multiple mental faculties during learning. Scientific Reports, 15(1), 5319.

- Naveed, H., Khan, A. U., Qiu, S., Saqib, M., Anwar, S., Usman, M., Akhtar, N., Barnes, N., & Mian, A. (2024). A comprehensive overview of large language models. arXiv, arXiv:2307.06435.

- Nosofsky, R. M., Meagher, B. J., & Kumar, P. (2022). Contrasting exemplar and prototype models in a natural-science category domain. Journal of Experimental Psychology: Learning, Memory, and Cognition, 48(12), 1970–1994.

- Orr, M., Cranford, D., Ford, K., Gluck, K., Hancock, W., Lebiere, C., Pirolli, P., Ritter, F., & Stocco, A. (2025). Not even wrong: On the limits of prediction as explanation in cognitive science. arXiv, arXiv:2510.03311.

- Peters, M. E., Neumann, M., Zettlemoyer, L., & Yih, W.-T. (2018). Dissecting contextual word embeddings: Architecture and representation. arXiv, arXiv:1808.08949.

- Pitt, M. A., Kim, W., Navarro, D. J., & Myung, J. I. (2006). Global model analysis by parameter space partitioning. Psychological Review, 113(1), 57–83.

- Popper, K. R. (1959). The logic of scientific discovery. Routledge.

- Requeima, J., Bronskill, J., Choi, D., Turner, R. E., & Duvenaud, D. (2024). LLM processes: Numerical predictive distributions conditioned on natural language. Advances in Neural Information Processing Systems, 37, 109609–109671.

- Richie, R., Zou, W., & Bhatia, S. (2019). Predicting high-level human judgment across diverse domains using word embeddings. Collabra: Psychology, 5(1), 50.

- Roberts, S., & Pashler, H. (2000). How persuasive is a good fit? A comment on theory testing. Psychological Review, 107(2), 358–367.

- Sanh, V., Debut, L., Chaumond, J., & Wolf, T. (2020). DistilBERT, a distilled version of BERT: Smaller, faster, cheaper and lighter. arXiv, arXiv:1910.01108.

- Schröder, S., Morgenroth, T., Kuhl, U., Vaquet, V., & Paaßen, B. (2025). Large language models do not simulate human psychology. arXiv, arXiv:2508.06950.

- Shanks, D. R., Tunney, R. J., & McCarthy, J. D. (2002). A re-examination of probability matching and rational choice. Journal of Behavioral Decision Making, 15(3), 233–250.

- Shuttleworth, R., Andreas, J., Torralba, A., & Sharma, P. (2024). LoRA vs full fine-tuning: An illusion of equivalence. arXiv, arXiv:2410.21228.

- Singh, P., Peterson, J. C., Battleday, R. M., & Griffiths, T. L. (2020). End-to-end deep prototype and exemplar models for predicting human behavior. arXiv, arXiv:2007.08723.

- Skinner, B. F. (1942). The processes involved in the repeated guessing of alternatives. Journal of Experimental Psychology, 30(6), 495–503.

- Stone, M. (1974). Cross-validatory choice and assessment of statistical predictions. Journal of the Royal Statistical Society: Series B (Methodological), 36(2), 111–147.

- Tang, E., Yang, B., & Song, X. (2024). Understanding LLM embeddings for regression. Transactions on Machine Learning Research, 2025(2), 1–7.

- Tartaglini, A. R., Vong, W. K., & Lake, B. (2021). Modeling artificial category learning from pixels: Revisiting Shepard, Hovland, and Jenkins (1961) with deep neural networks. Proceedings of the Annual Meeting of the Cognitive Science Society, 43(43), 2464–2470.

- Wills, A. J., & Pothos, E. M. (2012). On the adequacy of current empirical evaluations of formal models of categorization. Psychological Bulletin, 138(1), 102–125.

- Wilson, R. C., & Collins, A. G. (2019). Ten simple rules for the computational modeling of behavioral data. eLife, 8, e49547.

- Wilson, R. C., Geana, A., White, J. M., Ludvig, E. A., & Cohen, J. D. (2014). Humans use directed and random exploration to solve the explore–exploit dilemma. Journal of Experimental Psychology: General, 143(6), 2074–2081.

- Xie, H., & Zhu, J.-Q. (2025). Centaur may have learned a shortcut that explains away psychological tasks. OSF.

- Zhou, S., Xu, Z., Zhang, M., Xu, C., Guo, Y., Zhan, Z., Fang, Y., Ding, S., Wang, J., Xu, K., Lin, M., Melton, G. B., & Zhang, R. (2025). Large language models for disease diagnosis: A scoping review. NPJ Artificial Intelligence, 1(1), 9.

| # | Test Prompt | Sample Type | Expected Behavior | Embedding Shift |
|---|---|---|---|---|
| 1 | “This is number 37” (seen in training) | In-sample | Perfect prediction; reproduces training example | None: seen input |
| 2 | “This is number 42” (unseen in 1–100) | Out-of-sample, in-distribution | Accurate; generalizes within seen structure | Very low |
| 3 | “This is number 150” | Out-of-distribution | Model may fail or invert trend | Moderate–high: numeric embeddings misaligned beyond range |
| 4 | “This is NOT disease 42” | Out-of-sample, in-distribution, mild out-of-domain | Degraded or biased output; changed prompt alters reasoning | Moderate: semantic framing shifts attention |
| 5 | “Xylozyme rank 42 is peak flux” | Out-of-sample, in-distribution, strong out-of-domain | Output unpredictable; input is nonsensical or adversarial | Very high: embedding distant from training manifold |
| 6 | “Warning: number 150 detected” | Full out-of-distribution (prompt domain and dependent variable new) | Highly unstable or default-biased prediction | High: both prompt and value unfamiliar |
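The contrast between the first three rows of the table can be illustrated with a small, self-contained sketch. It is not the framework used in the article: `toy_embed` is a hypothetical one-dimensional stand-in for an LLM embedding (a saturating tanh of the number), and the predictor is a simple distance-weighted nearest-neighbour regression chosen only because it needs no external libraries. Under these assumptions, the sketch reports the prediction error and a crude "embedding shift" (distance to the closest training embedding) for an in-sample, an in-distribution, and an out-of-distribution number.

```python
import math


def toy_embed(n: int) -> float:
    """Hypothetical 1-D 'embedding' of a number (assumption, not a real LLM embedding)."""
    return math.tanh(n / 100.0)


def knn_predict(x: float, train: list[tuple[float, float]], k: int = 2) -> float:
    """Inverse-distance-weighted k-nearest-neighbour regression in embedding space."""
    nearest = sorted(train, key=lambda pair: abs(pair[0] - x))[:k]
    for emb, target in nearest:
        if emb == x:  # exact match: reproduce the stored training target
            return target
    weights = [1.0 / abs(emb - x) for emb, _ in nearest]
    return sum(w * t for w, (_, t) in zip(weights, nearest)) / sum(weights)


def embedding_shift(x: float, train: list[tuple[float, float]]) -> float:
    """Distance from x to the closest training embedding."""
    return min(abs(emb - x) for emb, _ in train)


# Training set: numbers 1..100, with 42 held out as the unseen in-distribution case.
train = [(toy_embed(n), float(n)) for n in range(1, 101) if n != 42]

cases = {
    "in-sample": 37,
    "out-of-sample, in-distribution": 42,
    "out-of-distribution": 150,
}

errors = {}
shifts = {}
for label, n in cases.items():
    x = toy_embed(n)
    pred = knn_predict(x, train)
    errors[label] = abs(pred - n)
    shifts[label] = embedding_shift(x, train)
    print(f"{label:32s} n={n:3d} pred={pred:7.2f} "
          f"abs_error={errors[label]:6.2f} shift={shifts[label]:.4f}")
```

In this toy setting the seen number is reproduced exactly, the held-out number inside the training range is recovered almost exactly by interpolation, and the number beyond the range is pulled back toward the edge of the training data. Rows 4 to 6 of the table involve changes in wording rather than in the number alone, which a one-dimensional numeric stand-in like this cannot represent.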


© 2026 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license.

Share and Cite

Schlegelmilch, R.; Dome, L. Snake Oil or Panacea? How to Misuse AI in Scientific Inquiries of the Human Mind. Behav. Sci. 2026, 16, 219. https://doi.org/10.3390/bs16020219
