1. Introduction

The accelerated digitization of organizational practices has profoundly transformed the ways in which employee performance is monitored, assessed, and managed (Leavitt et al., 2024; Kalischko & Riedl, 2021). Notably, the integration of artificial intelligence (AI) and algorithmic decision-making systems is gaining traction as a means of delivering performance feedback, a role previously associated with leaders, though emerging evidence shows that employee responses to AI feedback can differ systematically from responses to human evaluation (Tong et al., 2021; Qin et al., 2023; Hoffmann & Thommes, 2020). Companies such as Unilever and Cogito, for instance, have introduced AI-driven platforms that evaluate employee behaviors, track customer interactions, and generate tailored suggestions for improvement (Marr, 2018; De La Garza, 2019). Similarly, Enaible has developed an AI program that remotely monitors workflows, assigns productivity scores, and recommends efficiency adjustments (Heaven, 2020). Overall, these examples underscore the growing reliance on AI feedback in the workplace, which is commonly justified by its efficiency, objectivity, and broad applicability.

Yet performance feedback is not always positive. Negative performance feedback (NPF) conveys critical or corrective information that strongly shapes how employees think, feel, and behave at work (Ilgen & Davis, 2000; Belschak & Den Hartog, 2009). It has long been recognized as a means of guiding employee development and enhancing organizational effectiveness (Choi et al., 2018). However, some scholars argue that negative performance feedback can also provoke defensive reactions and undermine motivation. Scholars have therefore increasingly explored whether negative performance feedback originating from AI elicits distinct employee responses compared with feedback generated by leaders (Tong et al., 2021; Li et al., 2025; Liao et al., 2024). Within this stream of work, leader negative performance feedback has been widely investigated. By contrast, AI negative performance feedback is generated through data-driven processes, often relying on standardized metrics, performance analytics, or algorithmic scoring systems (Pei et al., 2024; Liao et al., 2024).

Regardless of whether negative performance feedback is generated by leaders or AI, existing studies have primarily focused on its behavioral outcomes, such as performance, fairness perceptions, and retreat behaviors (Tong et al., 2021; Eggers & Suh, 2019), while devoting less attention to the emotional mechanisms. In fact, emotions play an essential role in how employees process performance feedback, as they influence not only immediate reactions but also longer-term attitudes and behaviors in the workplace (Van Kleef et al., 2015; Sufi et al., 2024). Among the emotions elicited by negative performance feedback, shame and anger emerge as particularly salient, given their strong implications for employees’ attitudes and behaviors (Bonner et al., 2017; Xing et al., 2021; Gibson & Callister, 2010; Fitness, 2000). This raises a pressing question: how do employees emotionally respond to negative performance feedback when it comes from an AI rather than from a leader?

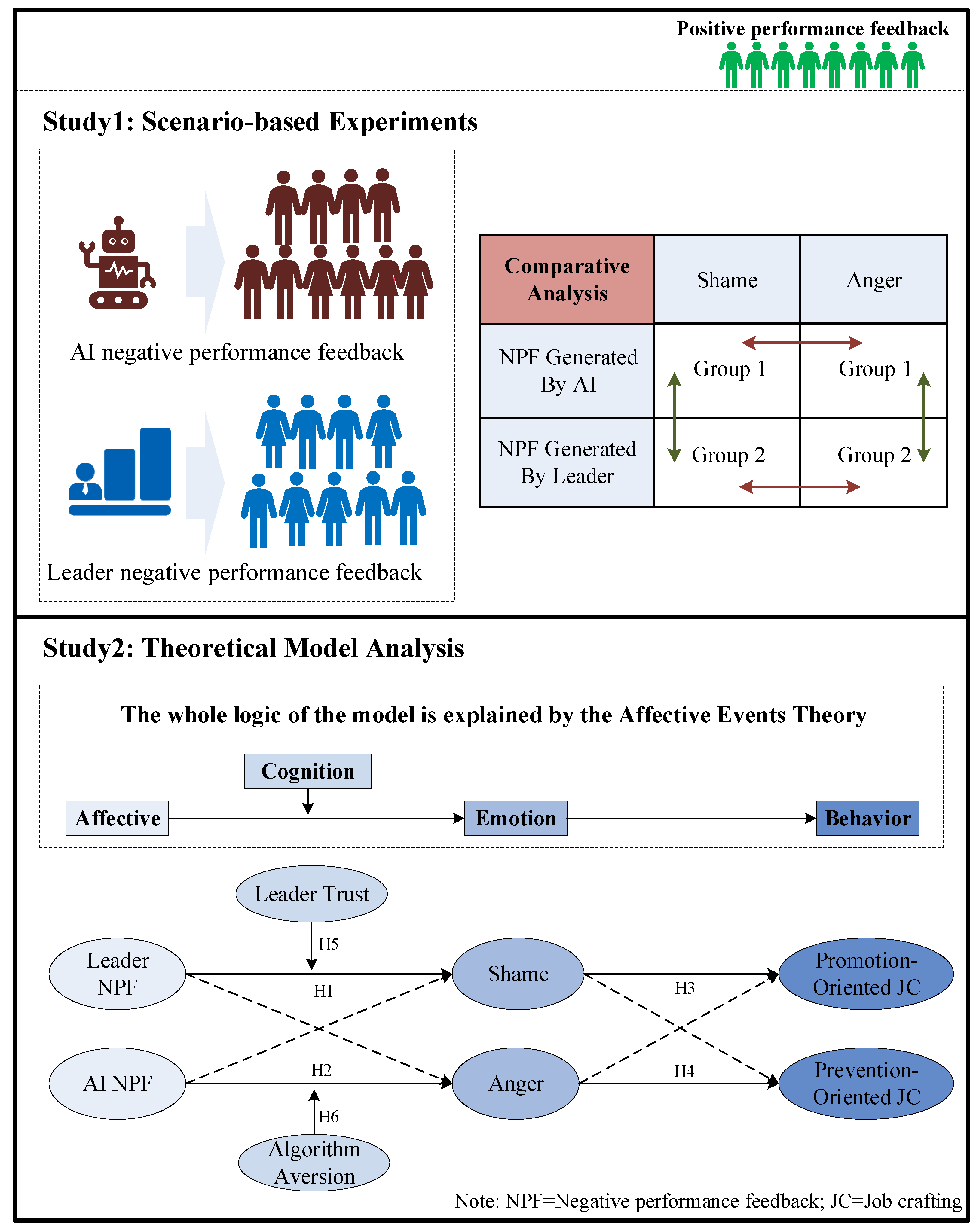

To address this gap, we draw on Affective Events Theory (AET; Weiss & Cropanzano, 1996) to develop a theoretical framework that explains how negative performance feedback from leader vs. AI differentially elicits employees’ shame and anger. According to AET, workplace events give rise to affective responses that subsequently shape employees’ attitudes and behaviors. In the context of performance management, leader negative performance feedback and AI negative performance feedback can be conceptualized as two types of affective events that differ in their social-interactional and data-driven nature, thereby triggering shame and anger in different ways. Moreover, such emotional differences may lead to distinct behavioral outcomes. Specifically, this study focuses on job crafting, which refers to employees’ proactive changes to the task, relational, and cognitive boundaries of their work (Tims et al., 2012; Zhang & Parker, 2019). Prior research suggests that job crafting comprises two dimensions (Bindl et al., 2019; Boehnlein & Baum, 2022). Promotion-oriented job crafting refers to behaviors such as expanding responsibilities, seeking additional resources, or building constructive relationships, whereas prevention-oriented job crafting involves reducing work demands, avoiding challenging tasks, or minimizing exposure to negative interactions (Chen et al., 2024). Given that shame tends to foster an inward focus on self-improvement (Xing et al., 2021), whereas anger reflects an outward focus on blame and resistance (Gibson & Callister, 2010), we propose that negative performance feedback from different sources may give rise to distinct forms of job crafting. Moreover, these emotional mechanisms may vary depending on boundary conditions. From a trust perspective, this study further examines whether leader trust (Burke et al., 2007; Campagna et al., 2020) and algorithm aversion (Jussupow et al., 2020; Mahmud et al., 2022; Burton et al., 2020) moderate the relationship between negative performance feedback and employees’ emotional responses.

This study makes several contributions. First, it advances the feedback literature by demonstrating that the source of negative performance feedback (leader vs. AI) triggers distinct emotional responses (shame and anger) that in turn drive different forms of job crafting. In doing so, it moves beyond the dominant focus on feedback content and fairness to highlight the importance of feedback source as an affective trigger. Second, by integrating Affective Events Theory with the job crafting perspective, this study enriches our understanding of how discrete emotions channel employee reactions into promotion- versus prevention-oriented behaviors, thereby offering a more nuanced account of the feedback–behavior link. Third, this research identifies boundary conditions, namely leader trust and algorithm aversion, that shape the intensity and direction of emotional reactions, extending knowledge on when and why employees accept or resist feedback in digitized contexts. Finally, the findings provide timely insights for organizations navigating the growing use of algorithmic management, underscoring the need to balance efficiency with emotional and motivational employee dynamics in performance evaluation practices.

The rest of this study is organized as follows. Section 2 reviews the literature and develops the theoretical framework and hypotheses. Section 3 outlines the overall research methodology. Section 4 and Section 5 present the design and results of Study 1 and Study 2, respectively. Section 6 discusses the theoretical and practical implications of the findings. Finally, Section 7 concludes the paper and highlights limitations and directions for future research.

4. Study 1

4.1. Scenario-Based Experiment

This study employed a scenario-based experimental approach (Kim & Jang, 2014) and designed a series of negative feedback scenarios to examine whether different sources of feedback would lead to distinct emotional reactions. The experimental materials consisted of scenario descriptions involving negative performance feedback. Both scenarios were presented from a third-person perspective to avoid directly asking participants about their emotional reactions, thereby reducing social desirability bias and enhancing the external validity of the scenarios.

The experiment adopted a single-factor, two-group between-subjects design (negative feedback source: leader vs. AI). Each scenario described employees’ failure to meet work expectations, specifically under-performance in task completion. The feedback source was manipulated through two groups: “leader negative performance feedback” and “AI negative performance feedback.” The leader group emphasized personalized and interpersonal evaluation, highlighting leaders’ assessment of employees’ performance. In contrast, the AI group emphasized systematized, data-driven evaluation. The scenarios were constructed as follows:

The reading materials for the leader group and the AI group were identical, with the only variation being the source of negative performance feedback: Li Ming for the leader group and the “AX-300” AI evaluation system for the AI group. Refer to Appendix A for more details of the reading materials.

After reading the scenario materials, participants were asked to complete a questionnaire. This questionnaire measured two variables, shame and anger, using well-validated scales that have been widely applied in top journals. Shame was assessed with a 4-item scale developed by Watson et al. (1996), which we adapted into a situational format to fit the research design. Specifically, participants were asked to evaluate the extent to which the focal character, “Chen Yu,” would experience each affective state. A sample item is, “Chen Yu would feel embarrassed.” The Cronbach’s α for this scale was 0.75. Anger was measured with a 3-item scale from the same source, with a representative item such as, “Chen Yu would feel upset.” The Cronbach’s α for this scale was 0.83, indicating good internal consistency. All items were rated on a 5-point Likert scale, ranging from “1 = strongly disagree” to “5 = strongly agree”.
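The reliability coefficients reported above can be illustrated with a minimal sketch of how Cronbach’s α is computed from item-level scores. The response matrix below is hypothetical (it is not the study data), and the function name `cronbach_alpha` is an illustrative assumption; the formula is the standard one based on item variances and the variance of the summed scale score.

```python
from statistics import variance


def cronbach_alpha(rows):
    """Cronbach's alpha for a list of respondents, each a list of k item scores."""
    k = len(rows[0])
    # Sample variance of each item (column) across respondents.
    item_variances = [variance(column) for column in zip(*rows)]
    # Sample variance of each respondent's summed scale score.
    total_variance = variance([sum(row) for row in rows])
    return k / (k - 1) * (1 - sum(item_variances) / total_variance)


# Hypothetical 4-item responses on a 5-point Likert scale (NOT the study data).
hypothetical_responses = [
    [4, 4, 3, 4],
    [2, 3, 2, 2],
    [5, 4, 5, 5],
    [3, 3, 3, 2],
    [1, 2, 1, 2],
    [4, 5, 4, 4],
]

alpha = cronbach_alpha(hypothetical_responses)
print(f"Cronbach's alpha = {alpha:.3f}")
```

Higher values indicate that the items covary strongly relative to their individual variances; the coefficient says nothing by itself about whether the scale measures the intended construct.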

4.2. Manipulations and Samples

The required sample size for this experiment was calculated using G*Power 3.1. For an independent samples t-test, assuming a medium effect size (d = 0.5) and a significance level (α = 0.05), the results indicated that a minimum of 172 participants would be needed to achieve 90% statistical power. Data were collected between November 2024 and January 2025. During this period, participants were recruited through Credamo (https://www.credamo.com/, accessed on 8 November 2024), a professional online data collection platform in China. Eligibility criteria required participants to be at least 20 years old, employed full-time, and to maintain a questionnaire completion rate of over 90%. All participants were randomly assigned to one of two groups and asked to read the corresponding scenario materials. Then, they completed the questionnaire, which measured the extent to which they believed the focal character would experience shame and anger in the given scenario.
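The sample-size reasoning can be sketched with the usual normal approximation for a two-sided, two-group comparison of means. This is not the G*Power routine itself: G*Power works with the noncentral t distribution, so the approximation below is expected to land slightly under the reported 172. The function name `n_per_group` is an illustrative assumption.

```python
from math import ceil
from statistics import NormalDist


def n_per_group(d, alpha=0.05, power=0.90):
    """Approximate per-group n for a two-sided independent-samples t-test.

    Uses the normal approximation n = 2 * (z_{1-alpha/2} + z_{power})^2 / d^2.
    """
    standard_normal = NormalDist()
    z_alpha = standard_normal.inv_cdf(1 - alpha / 2)
    z_power = standard_normal.inv_cdf(power)
    return ceil(2 * (z_alpha + z_power) ** 2 / d ** 2)


per_group = n_per_group(0.5)
total = 2 * per_group
print(f"approx. n per group = {per_group}, total = {total}")
```

The small gap between this approximation and the G*Power figure reflects the extra uncertainty from estimating the standard deviation, which the t-based calculation accounts for.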

In total, 350 questionnaires were distributed. After excluding 47 questionnaires that failed the attention check, 303 responses were retained, yielding a response rate of 86.57%. An additional 38 questionnaires were removed due to missing data, excessively repetitive responses, or careless or patterned answering. The final dataset consisted of 265 participants (overall valid response rate = 75.71%). Among them, 139 were male (52.45%) and 126 were female (47.55%). In terms of age distribution, 34 participants (12.83%) were between 20 and 25 years old, 101 (38.11%) were between 26 and 35, 78 (29.43%) were between 36 and 45, 32 (12.08%) were between 46 and 55, and 20 (7.55%) were above 55 years. Regarding educational background, 57 participants (21.51%) held a junior college degree or below, 156 (58.87%) had a bachelor’s degree, and 52 (19.62%) possessed a master’s degree or higher. With respect to organizational tenure, 62 participants (23.40%) reported less than 3 years of work experience, 106 (40.00%) had 3–10 years, 61 (23.02%) had 11–20 years, 21 (7.92%) had 21–25 years, and 15 (5.66%) reported more than 26 years of experience. In terms of marital status, 120 participants (45.28%) were unmarried, 111 (41.89%) were married, and 34 (12.83%) reported other statuses. Participants were distributed across a wide range of industries, ensuring variability in occupational contexts. Of the total sample, 141 participants were assigned to the leader negative performance feedback group and 124 participants to the AI negative performance feedback group. All participants voluntarily took part in the study, provided informed consent, and received appropriate compensation upon completion.
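The sample flow described above is simple arithmetic, and a short sketch makes the two response rates and the group split easy to re-derive. All counts are taken from the paragraph; the variable names and the helper `pct` are illustrative assumptions.

```python
def pct(part, whole):
    """Percentage of `whole` represented by `part`, rounded to two decimals."""
    return round(100 * part / whole, 2)


distributed = 350
failed_attention_check = 47
removed_for_data_quality = 38

after_attention_check = distributed - failed_attention_check
final_sample = after_attention_check - removed_for_data_quality

leader_group, ai_group = 141, 124

print(after_attention_check, pct(after_attention_check, distributed))
print(final_sample, pct(final_sample, distributed))
print(leader_group + ai_group == final_sample)
```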

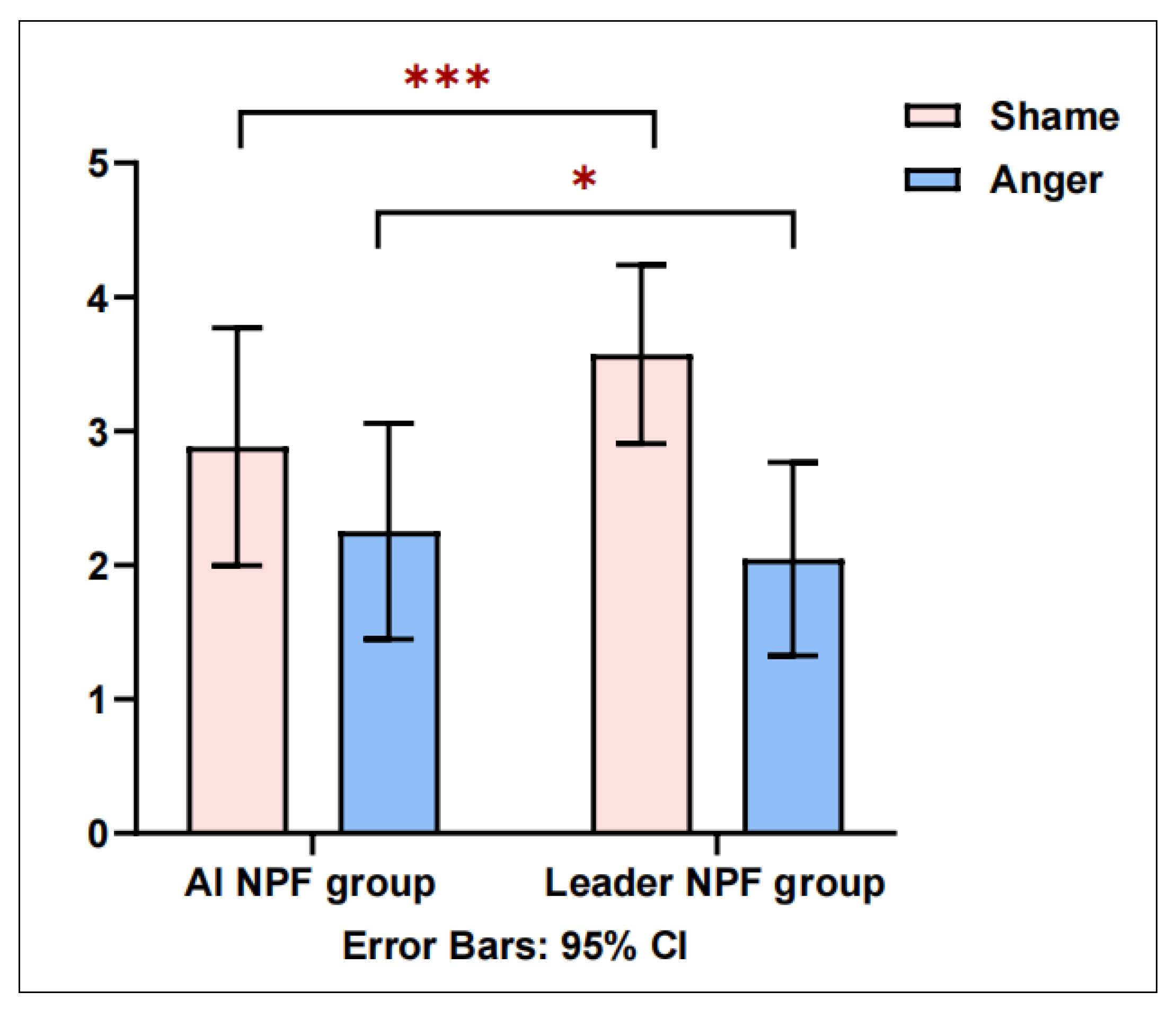

4.3. Experimental Results

Independent-samples t-tests were conducted using GraphPad Prism 10.1 to examine the differences between the AI negative performance feedback group and the leader negative performance feedback group. As shown in Figure 2, employees in the AI negative performance feedback group reported significantly lower levels of shame (Mean = 2.883, SD = 0.885) compared with those in the leader negative performance feedback group (Mean = 3.573, SD = 0.667), t = −7.086, p < 0.001, Cohen’s d = 0.777. However, employees in the AI negative performance feedback group reported significantly higher levels of anger (Mean = 2.253, SD = 0.806) than those in the leader negative performance feedback group (Mean = 2.045, SD = 0.722), t = 2.214, p < 0.05, Cohen’s d = 0.762. To further assess the joint effects of feedback source on employees’ emotional responses, a multivariate analysis of variance was conducted with shame and anger as dependent variables. Results indicated a significant main effect of feedback source (Wilks’ λ = 0.813, F = 30.198, p < 0.001, η² = 0.187). These findings suggest that the source of negative performance feedback (leader vs. AI) exerts a significant influence on employees’ shame and anger. Therefore, H1 and H2 were supported.
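The group comparisons can be approximately re-derived from the reported means, standard deviations, and group sizes (AI group n = 124, leader group n = 141). The sketch below is a recomputation from rounded summary statistics, not the original GraphPad analysis, so small deviations from the reported t values are expected. It assumes Welch’s unequal-variance t statistic and a pooled-SD definition of Cohen’s d; the function names are illustrative. Under these assumptions the pooled standard deviations come out at roughly 0.777 (shame) and 0.762 (anger), and the pooled-SD Cohen’s d at roughly 0.89 and 0.27, so readers may wish to check which quantity the reported effect sizes refer to.

```python
from math import sqrt


def pooled_sd(s1, n1, s2, n2):
    """Pooled standard deviation of two independent groups."""
    return sqrt(((n1 - 1) * s1**2 + (n2 - 1) * s2**2) / (n1 + n2 - 2))


def cohens_d(m1, s1, n1, m2, s2, n2):
    """Cohen's d using the pooled standard deviation (group 1 minus group 2)."""
    return (m1 - m2) / pooled_sd(s1, n1, s2, n2)


def welch_t(m1, s1, n1, m2, s2, n2):
    """Welch's t statistic for unequal variances (group 1 minus group 2)."""
    return (m1 - m2) / sqrt(s1**2 / n1 + s2**2 / n2)


# (mean, SD, n) as reported: AI group first, leader group second.
shame = (2.883, 0.885, 124, 3.573, 0.667, 141)
anger = (2.253, 0.806, 124, 2.045, 0.722, 141)

for label, stats in (("shame", shame), ("anger", anger)):
    m1, s1, n1, m2, s2, n2 = stats
    print(
        label,
        f"t = {welch_t(*stats):.3f}",
        f"pooled SD = {pooled_sd(s1, n1, s2, n2):.3f}",
        f"d = {cohens_d(*stats):.3f}",
    )
```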

5. Study 2

5.1. Measures

Study 2 employed a survey-based research design to further investigate the mechanisms through which different sources of negative performance feedback influence employees’ emotions and behaviors. All constructs were measured using well-established scales published in top-tier international journals. Responses were recorded on a 5-point Likert scale, ranging from “1 = strongly disagree” to “5 = strongly agree”. To ensure measurement validity and accuracy, the original English items were translated into Chinese following a double-blind translation and back-translation procedure. Subsequently, 4 professors in management and 3 doctoral candidates specializing in organizational behavior were invited to review the translated items. Their feedback was incorporated to refine wording and guarantee contextual appropriateness for Chinese respondents.

Negative performance feedback. Leader negative performance feedback was measured using the 4-item scale developed by Steelman & Rutkowski (2004). A representative item is “When I do not complete a task on time, my leader lets me know.” The Cronbach’s α of this scale was 0.903, suggesting high internal consistency. To measure AI negative performance feedback, the same items were adapted by replacing the feedback source with an AI. For example: “When I do not complete a task on time, the organization’s performance feedback system/AI reminds me.” The Cronbach’s α of this scale was 0.893, indicating good reliability.

Shame and anger. The measures for shame and anger were identical to those used in Study 1, adapted from Watson et al. (1996). In the present study, shame (4 items) yielded a Cronbach’s α of 0.810, while anger (3 items) yielded a Cronbach’s α of 0.824.

Job crafting. This was measured using the validated scale developed by Tims et al. (2012), which has been widely applied in organizational research. Promotion-oriented job crafting was measured with 15 items. Representative items include: “I make an effort to improve my job-related abilities,” “I ask my colleagues for feedback,” and “When an interesting project arises, I proactively request to be involved.” Prevention-oriented job crafting was measured with 6 items, such as: “I try to reduce the emotional demands of my work” and “I organize my work so as to avoid continuous strain.” The Cronbach’s α values were 0.928 and 0.907, respectively.

Algorithm aversion. Based on previous research (Liao et al., 2024), algorithm aversion was assessed across three dimensions: permissibility, liking, and utilization intention. Permissibility was measured using the scale developed by Bigman and Gray (2018); liking for algorithms was measured using Jago’s (2019) scale; and utilization intention was measured with items from Cadario et al. (2021). The overall scale comprised 6 items, such as: “Is the appraisal decision made by the algorithm system appropriate?” The Cronbach’s α of the scale was 0.716.

Leader trust. This was measured using the 5-item scale by Podsakoff et al. (1990). A representative item is: “I completely trust my leader to be honest and truthful.” The Cronbach’s α of the scale was 0.764.

Control variables. Prior research suggests that employees’ demographic characteristics, such as education, gender, and age, may influence their emotions and work behaviors. To reduce potential bias, this study included gender, age, education, marital status, and organizational tenure as control variables.

5.2. Samples

Data for Study 2 were collected between February 2025 and June 2025 through WeChat and email surveys distributed across 9 enterprises located in Beijing, Guangdong, Jiangxi, and Hebei provinces. This multi-site data collection strategy ensured diversity in both industry background and regional distribution. Prior to distributing the questionnaires, we contacted the human resources directors of each company to explain the academic purpose of the study and to obtain organizational consent. The HR directors assisted in identifying full-time employees and in disseminating the survey link internally. All participants were informed of the voluntary nature of participation and were assured of the anonymity and confidentiality of their responses.

A total of 732 questionnaires were distributed. After excluding 28 questionnaires that failed attention checks and 45 questionnaires with excessive missing data, 659 valid responses were retained, yielding an effective response rate of 90.0%. The final sample consisted of 349 males (52.96%) and 310 females (47.04%). Regarding age, 109 participants (16.54%) were between 20 and 25 years old, 267 (40.52%) were between 26 and 35, 168 (25.49%) were between 36 and 45, 85 (12.90%) were between 46 and 55, and 30 (4.55%) were above 55 years old. With respect to educational background, 191 participants (28.98%) held an associate degree or below, 327 (49.62%) held a bachelor’s degree, and 141 (21.40%) possessed a master’s degree or higher. In terms of organizational tenure, 106 participants (16.09%) had less than 3 years of work experience, 317 (48.10%) had 3–10 years, 131 (19.88%) had 11–20 years, 68 (10.32%) had 21–25 years, and 37 (5.61%) had more than 26 years. Concerning marital status, 314 participants (47.65%) were unmarried, 275 (41.73%) were married, and 70 (10.62%) were categorized as other. All respondents voluntarily participated and received modest compensation upon survey completion.

5.3. Descriptive Analysis

Descriptive and correlation analyses were conducted using SPSS 27.0. The means, standard deviations (SD), and correlation coefficients of all variables are presented in Table 1. Results indicated that leader negative performance feedback and shame were both positively associated with promotion-oriented job crafting (r = 0.669 and r = 0.862, respectively; p < 0.01). Leader negative performance feedback was positively related to shame (r = 0.549, p < 0.01). In addition, AI negative performance feedback and anger were both positively associated with prevention-oriented job crafting (r = 0.325 and r = 0.880, respectively; p < 0.01). Algorithm aversion was negatively associated with prevention-oriented job crafting (r = −0.254, p < 0.01), while AI negative performance feedback was positively related to anger (r = 0.326, p < 0.01). These results provide preliminary evidence for the proposed model.

5.4. Confirmatory Factor Analyses

To assess the discriminant validity of the theoretical model, confirmatory factor analyses (CFA) were conducted using Mplus 8.3. As shown in Table 2, a four-factor model consisting of leader negative performance feedback, shame, leader trust, and promotion-oriented job crafting was developed. The results indicated that the four-factor model 1 (χ²/df = 2.963, CFI = 0.914, TLI = 0.894, RMSEA = 0.060, SRMR = 0.131) demonstrated the best fit indices compared with the alternative models. For instance, the one-factor model 1 (all items combined into a single factor) demonstrated a poorer fit (χ²/df = 9.192, CFI = 0.815, TLI = 0.774, RMSEA = 0.111, SRMR = 0.128), as did the two-factor model 1 (χ²/df = 7.342, CFI = 0.857, TLI = 0.825, RMSEA = 0.098, SRMR = 0.128).

Similarly, the four-factor model 2, including AI negative performance feedback, anger, algorithm aversion, and prevention-oriented job crafting, was developed. As reported in Table 3, this four-factor model 2 showed good fit indices (χ²/df = 2.851, CFI = 0.942, TLI = 0.933, RMSEA = 0.059, SRMR = 0.046), which were significantly better than those of the alternative models (e.g., three-factor model 3: χ²/df = 12.687, CFI = 0.787, TLI = 0.754, RMSEA = 0.133, SRMR = 0.128). In sum, these results provide strong evidence of discriminant validity among the study variables.
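One way to read the fit statistics above is to screen each index against a conventional rule-of-thumb cutoff. The sketch below does this for the two four-factor models. The cutoffs (χ²/df ≤ 3, CFI ≥ 0.90, TLI ≥ 0.90, RMSEA ≤ 0.08, SRMR ≤ 0.08) are commonly used heuristics assumed here for illustration; they are not stated in the source, and other thresholds are also in use. The fit values are those reported in the text.

```python
# Assumed rule-of-thumb cutoffs (not from the source): (direction, threshold).
CUTOFFS = {
    "chi2_df": ("max", 3.0),
    "CFI": ("min", 0.90),
    "TLI": ("min", 0.90),
    "RMSEA": ("max", 0.08),
    "SRMR": ("max", 0.08),
}

# Fit indices as reported for the two hypothesized four-factor models.
MODELS = {
    "four-factor model 1": {
        "chi2_df": 2.963, "CFI": 0.914, "TLI": 0.894, "RMSEA": 0.060, "SRMR": 0.131,
    },
    "four-factor model 2": {
        "chi2_df": 2.851, "CFI": 0.942, "TLI": 0.933, "RMSEA": 0.059, "SRMR": 0.046,
    },
}


def screen(fit):
    """Return {index: True/False} for whether each index meets its assumed cutoff."""
    result = {}
    for name, (direction, threshold) in CUTOFFS.items():
        value = fit[name]
        result[name] = value <= threshold if direction == "max" else value >= threshold
    return result


for model_name, fit in MODELS.items():
    flags = screen(fit)
    unmet = [name for name, ok in flags.items() if not ok]
    print(model_name, "unmet cutoffs:", unmet or "none")
```

Such screening is only a reading aid; model comparison in the text rests on the relative fit of the hypothesized model against the alternative models in Tables 2 and 3.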

5.5. Tests of Hypotheses

This study employed hierarchical regression analyses using PROCESS in SPSS 27.0 to test Hypotheses 3 through 6. The results are presented in Table 4 and Table 5. After controlling for the demographic variables, the results in Models 2 and 5 (Table 4) demonstrated that leader negative performance feedback was significantly positively associated with shame (β = 0.557, p < 0.001) and promotion-oriented job crafting (β = 0.672, p < 0.001), respectively. Model 6 further revealed that, after controlling for shame, the effect of leader negative performance feedback on promotion-oriented job crafting was reduced but remained significant (β = 0.282, p < 0.001), indicating that shame partially mediated the relationship. Thus, H3 was supported. In Table 5, after controlling for demographic variables, Models 8 and 11 showed that AI negative performance feedback was significantly positively related to anger (β = 0.282, p < 0.001) and prevention-oriented job crafting (β = 0.281, p < 0.001), respectively. The results in Model 12 demonstrated that, after controlling for anger, the relationship between AI negative performance feedback and prevention-oriented job crafting was non-significant (β = 0.038, p > 0.05), indicating that anger fully mediated the relationship. Thus, H4 was supported.

To estimate the moderating roles of leader trust and algorithm aversion, interaction terms were constructed in Model 3 and Model 9. The results in Model 3 showed that the interaction between leader negative performance feedback and leader trust was significantly negatively associated with shame (β = −0.402, p < 0.001). Model 9 demonstrated that the interaction between AI negative performance feedback and algorithm aversion was significantly positively associated with anger (β = 0.239, p < 0.001). Thus, H5 and H6 were supported.

This study employed simple slope analyses (Aiken et al., 1991) to examine the moderating effects of leader trust and algorithm aversion at different levels. As illustrated in Figure 3, compared with low leader trust (Mean − 1 SD), high leader trust (Mean + 1 SD) significantly weakened the positive effect of leader negative performance feedback on shame. Thus, H5 was further supported. In addition, the bootstrap method of Hayes and Scharkow (2013), with 5000 resamples, was applied to test the conditional indirect effect of shame in mediating the relationship between leader negative performance feedback and promotion-oriented job crafting across varying levels of leader trust. As shown in Table 6, the mediating effects of shame were 0.453, 0.311, and 0.170 under low (Mean − 1 SD), medium (Mean), and high (Mean + 1 SD) leader trust, respectively. The corresponding 95% confidence intervals were [0.397, 0.510], [0.264, 0.355], and [0.112, 0.223]. None of these intervals includes zero, so the indirect effect is significant at all three levels. These results indicate that leader negative performance feedback increases employees’ shame, but this positive relationship is significantly weakened at higher levels of leader trust. Moreover, the positive indirect effect of leader negative performance feedback on promotion-oriented job crafting through employees’ shame also decreases when leader trust is high.
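The logic of the conditional indirect effect can be written out explicitly. The equations below are a sketch that assumes a first-stage moderated mediation specification, in which the moderator W (leader trust) alters only the path from the predictor X (leader negative performance feedback) to the mediator M (shame); the source does not state the exact model form, and all symbols are introduced here for illustration.

```latex
\begin{align}
M &= a_0 + a_1 X + a_2 W + a_3 (X \times W) + e_M \\
Y &= b_0 + c' X + b_1 M + e_Y \\
\omega(W) &= (a_1 + a_3 W)\, b_1
\end{align}
```

Under this specification the indirect effect \(\omega(W)\) is linear in W. With a negative interaction coefficient \(a_3\) and a positive \(b_1\), \(\omega(W)\) shrinks as leader trust rises, which matches the ordering of the reported estimates at low, medium, and high leader trust.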

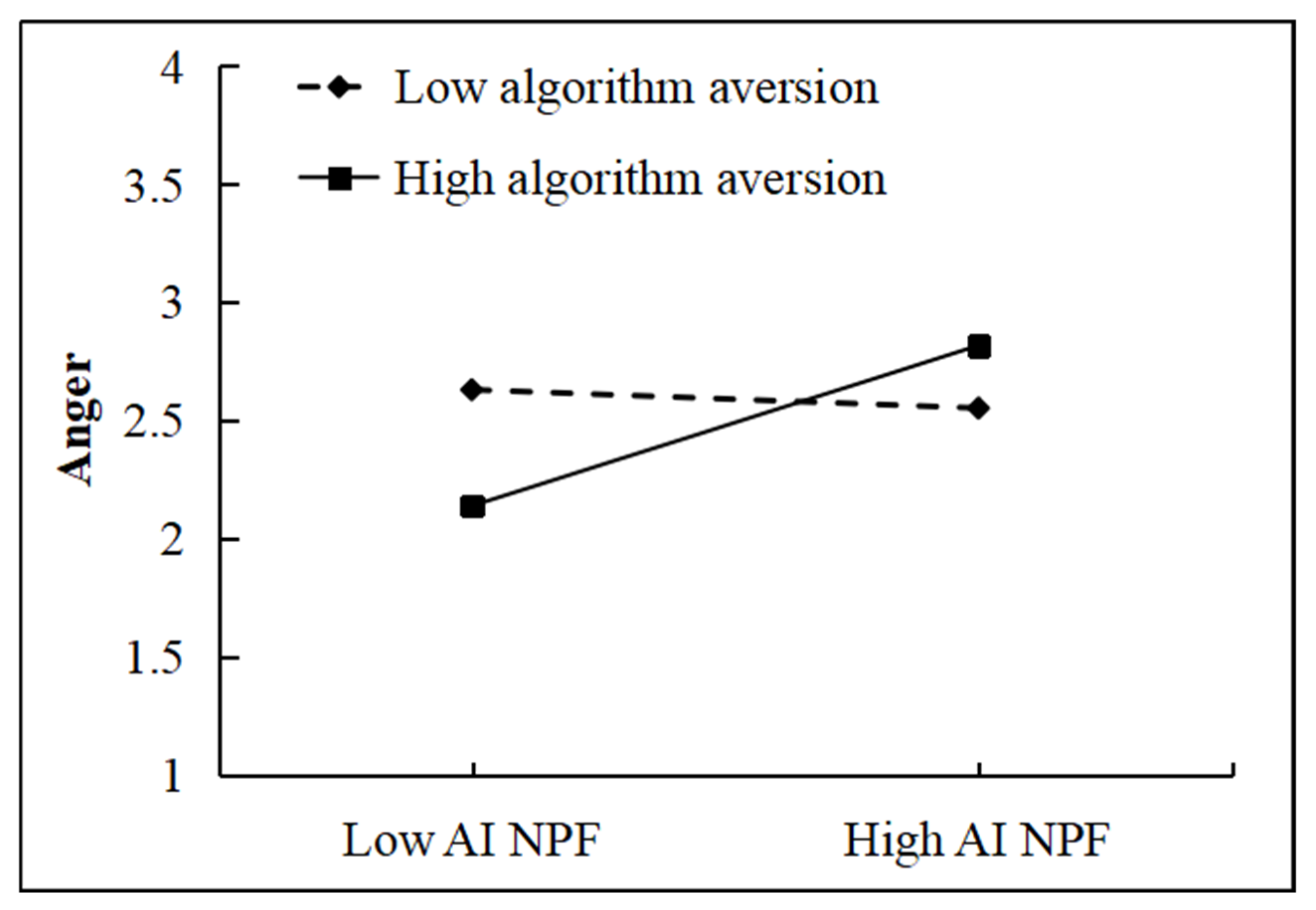

As shown in Figure 4, compared to low algorithm aversion (Mean − 1 SD), high algorithm aversion (Mean + 1 SD) significantly strengthened the positive effect of AI negative performance feedback on anger. Thus, H6 was further supported. Furthermore, the conditional indirect effect of anger in mediating the relationship between AI negative performance feedback and prevention-oriented job crafting was examined under different levels of algorithm aversion (Table 7). The results demonstrated that the mediating effects of anger were −0.033, 0.129, and 0.290 under low (Mean − 1 SD), medium (Mean), and high (Mean + 1 SD) algorithm aversion, respectively, with the 95% confidence intervals being [−0.137, 0.067], [0.044, 0.209], and [0.199, 0.378]. Because the interval at low algorithm aversion includes zero, the indirect effect is not significant at that level, whereas it is significant at medium and high levels. These findings suggest that AI negative performance feedback elicits employees’ anger, and this effect intensifies as the degree of algorithm aversion increases. In addition, the indirect effect of AI negative performance feedback on prevention-oriented job crafting through employees’ anger also increases under higher levels of algorithm aversion.
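The conditional indirect effects reported in Tables 6 and 7 can be read with two simple checks: whether each bootstrap confidence interval excludes zero, and whether the estimates change by an approximately constant step from low to medium to high moderator levels, as would be expected if the indirect effect is linear in the moderator. The sketch below uses only the estimates and intervals quoted in the text; the function and variable names are illustrative assumptions.

```python
def excludes_zero(interval):
    """True when a confidence interval lies entirely above or below zero."""
    lower, upper = interval
    return lower > 0 or upper < 0


# (estimate, 95% CI) at low, medium, and high moderator levels, as reported.
shame_by_leader_trust = [
    (0.453, (0.397, 0.510)),
    (0.311, (0.264, 0.355)),
    (0.170, (0.112, 0.223)),
]
anger_by_algorithm_aversion = [
    (-0.033, (-0.137, 0.067)),
    (0.129, (0.044, 0.209)),
    (0.290, (0.199, 0.378)),
]


def summarize(rows):
    """Return significance flags and the two steps between adjacent levels."""
    flags = [excludes_zero(ci) for _, ci in rows]
    estimates = [estimate for estimate, _ in rows]
    steps = [round(b - a, 3) for a, b in zip(estimates, estimates[1:])]
    return flags, steps


shame_flags, shame_steps = summarize(shame_by_leader_trust)
anger_flags, anger_steps = summarize(anger_by_algorithm_aversion)

print("shame via leader trust:", shame_flags, shame_steps)
print("anger via algorithm aversion:", anger_flags, anger_steps)
```

The near-equal steps in each table are consistent with an indirect effect that changes linearly across the moderator, and the first flag for anger reflects the interval at low algorithm aversion that spans zero.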

6. Discussion

6.1. Theoretical Implications

This research makes several theoretical contributions to the literature on negative performance feedback, emotions, and job crafting in the digital era. First, this study advances the literature on feedback and algorithmic management by generating new insights into employees’ perceptual differences toward different feedback sources, namely, leader vs. AI negative performance feedback. While prior research on negative feedback has primarily emphasized informational characteristics such as accuracy, fairness, and credibility (Tong et al., 2021; Newman et al., 2020), relatively little is known about how employees evaluate the perceived appropriateness of the negative feedback and its emotional consequences. Our findings extend this line of work to reveal that employees attribute leader negative performance feedback to interpersonal evaluation, thereby experiencing heightened shame, whereas AI negative performance feedback is perceived as less relational but more detrimental to employees’ confidence in their ability to meet performance expectations, thereby increasing anger. This study aligns with existing scholarship indicating that leader negative performance feedback tends to elicit stronger feelings of shame in employees (Li et al., 2025; Xing et al., 2021). This distinction contributes to a deeper theoretical understanding of how feedback source influences discrete emotional responses. Moreover, it extends the literature on algorithmic management by positioning AI feedback not merely as a technical tool (Olan et al., 2022; Agrawal et al., 2019), but as a social signal that triggers psychological processes.

Second, building on AET, this study enriches the job crafting literature by identifying discrete emotions as psychological mechanisms that translate the impact of negative performance feedback (leader vs. AI) into divergent behavioral strategies. Previous studies have mainly focused on cognitive factors such as appraisal and self-regulation (Zhang & Parker, 2022; Bakker & Oerlemans, 2019; Demerouti et al., 2024) or on motivational orientations (Niessen et al., 2016; Rofcanin et al., 2019) as the drivers of job crafting (Zhang & Parker, 2019; Lazazzara et al., 2020; Lichtenthaler & Fischbach, 2019), while the role of emotions has been less systematically examined. Our results demonstrate that shame, typically triggered by leader negative performance feedback, facilitates promotion-oriented job crafting by motivating employees to restore competence and maintain a positive professional identity. In contrast, anger, often elicited by AI negative performance feedback, promotes prevention-oriented job crafting by encouraging employees to adopt defensive strategies to reduce risk and protect resources. Building on prior research that has predominantly examined single-source feedback (Cheng et al., 2023; He et al., 2024; Liu et al., 2024), this study uncovers the differentiated mechanisms through which distinct feedback sources influence job crafting. By highlighting the mediating role of shame and anger, it provides a nuanced understanding of why the different sources of negative performance feedback can lead to either promotion-oriented or prevention-oriented job crafting, thereby enriching existing perspectives that have primarily focused on cognitive or motivational processes.

Finally, by incorporating leader trust and algorithm aversion as boundary conditions, this study advances the feedback and emotion literature by specifying for whom negative performance feedback exerts stronger or weaker effects. Prior research has predominantly focused on the direct influence of negative performance feedback (Qin et al., 2023; Pei et al., 2024), with relatively limited attention given to how AI-related or leader-related factors moderate the link between feedback sources and employee behavior (Li et al., 2025; Liao et al., 2024). Extending this stream of research, the present study highlights that employees’ trust in leaders or in AI influences their emotional and behavioral responses. The results indicate that leader trust weakens the mediating role of shame between leader feedback and promotion-oriented job crafting, whereas algorithm aversion strengthens the mediating role of anger between AI feedback and prevention-oriented job crafting. This perspective not only enriches research on negative performance feedback and job crafting but also contributes to the emerging literature on algorithmic management by revealing how employees’ trust, whether directed toward leaders or toward algorithmic systems, shapes their reactions to leader versus AI sources of authority.

6.2. Practical Implications

This research also provides several practical insights for organizational feedback practices in the era of digitalization. First, the findings suggest that organizations should strategically align the source of negative feedback with their intended outcomes. Leader negative performance feedback, although often evoking shame, can transform this emotion into constructive motivation, thereby encouraging promotion-oriented job crafting aimed at enhancing competence and performance. Accordingly, leaders may consider delivering critical feedback personally when the objective is employee development and growth. In contrast, AI negative performance feedback is more likely to elicit anger, an outward-directed emotion associated with perceived unfairness and lack of control, which may foster defensive, prevention-oriented job crafting. Organizations should therefore be cautious about over-reliance on AI-based feedback systems, particularly in sensitive performance evaluation contexts.

Second, the results highlight the importance of cultivating leader trust as a critical buffer in feedback processes. When employees trust their leaders, the shame evoked by negative performance feedback is weakened, reducing the risk that it becomes harmful while preserving its motivational benefits. In practice, this suggests that organizations should invest in leadership development programs that emphasize not only technical competence but also interpersonal credibility, integrity, and fairness. Specifically, training interventions that enhance leaders’ communication skills, empathy, and relational transparency can strengthen trust with subordinates, thereby ensuring that feedback is interpreted as supportive rather than punitive. Moreover, organizational cultures that promote psychological safety, fairness, and consistent leader behavior may further reinforce employee trust, thereby facilitating the constructive reception of negative feedback.

Third, when implementing AI performance management systems, organizations must proactively address employees’ algorithm aversion. Because AI feedback is often perceived as rigid, opaque, and detached from contextual nuances, employees may experience anger and defensiveness in response to such feedback. To mitigate these risks, organizations should increase transparency by clearly communicating the logic, criteria, and data sources underlying AI assessments. It is equally important to provide opportunities for employees to share opinions and seek explanations. These methods not only enhance perceptions of procedural justice but also empower employees with a sense of agency, thereby reducing negative emotional reactions. Such practices may lessen resistance to AI negative performance feedback and strengthen perceived fairness, which in turn increases employees’ willingness to accept and learn from AI feedback.