Building Instance Extraction via Multi-Scale Hybrid Dual-Attention Network

Abstract

1. Introduction

- 1. We propose a Multi-Scale Hybrid Dual-Attention Network (MS-HDAN) that integrates local detail modeling with global semantic reasoning for accurate building instance segmentation.

- 2. We introduce the Gated Dual-Attention BiFormer Block (GDABB) for dynamic global feature selection, and the Local-Global Collaborative Perception Enhancement Module (LG-CPEM) for adaptive feature alignment under geometric variations.

- 3. We design a dual-attention guided reconstruction module to refine building boundaries and enhance spatial detail consistency.
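The contributions above rely on gating to select dynamically between global and local features. The sketch below illustrates the general idea of a sigmoid gate blending two per-channel feature vectors. It is not the authors' GDABB or LG-CPEM: the function name `gated_fusion`, the per-channel gate logits, and the convex-combination form are all assumptions made for illustration.

```python
import math


def sigmoid(x: float) -> float:
    """Standard logistic function."""
    return 1.0 / (1.0 + math.exp(-x))


def gated_fusion(local_feat, global_feat, gate_logits):
    """Blend per-channel local and global features with a sigmoid gate.

    Hypothetical illustration: each channel gets a gate a in (0, 1);
    the output is a * global + (1 - a) * local.
    """
    if not (len(local_feat) == len(global_feat) == len(gate_logits)):
        raise ValueError("all inputs must have the same number of channels")
    fused = []
    for loc, glob, logit in zip(local_feat, global_feat, gate_logits):
        a = sigmoid(logit)  # a near 1 favours the global feature, near 0 the local one
        fused.append(a * glob + (1.0 - a) * loc)
    return fused


if __name__ == "__main__":
    # A zero logit gives an equal blend of the two pathways.
    print(gated_fusion([1.0, 2.0], [3.0, 4.0], [0.0, 0.0]))
```

In a trained network the gate logits would be predicted from the features themselves rather than passed in by hand; they are explicit arguments here only to keep the example self-contained.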

2. Related Work

2.1. Deep Learning and Attention Mechanisms for Building Extraction

2.2. Deformable Convolutions and Hybrid Architectures for Building Extraction

3. Method

3.1. Dual-Stream Encoder

3.1.1. Global Context Modeling Pathway

3.1.2. Local Feature Extraction Pathway

3.1.3. Local-Global Collaborative Perception Enhancement Module

3.2. Attention-Guided Decoder

4. Experiments

4.1. Dataset Details

4.2. Evaluation Metrics
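The result tables report Accuracy, F1-Score, and mIoU. As a minimal sketch, assuming the standard binary (building vs. background) definitions computed from pixel-level confusion counts, these metrics could be obtained as follows. The function name `binary_segmentation_metrics` is hypothetical, and the averaging scheme actually used for the reported F1-Score is not stated in this text, so the per-class F1 below may differ from it.

```python
def _safe_div(num: float, den: float) -> float:
    """Return num / den, or 0.0 when the denominator is zero."""
    return num / den if den else 0.0


def binary_segmentation_metrics(tp: int, fp: int, fn: int, tn: int) -> dict:
    """Compute accuracy, building-class F1, and two-class mean IoU.

    tp, fp, fn, tn are pixel counts with "building" as the positive class.
    """
    accuracy = _safe_div(tp + tn, tp + fp + fn + tn)
    precision = _safe_div(tp, tp + fp)
    recall = _safe_div(tp, tp + fn)
    f1 = _safe_div(2 * precision * recall, precision + recall)
    iou_building = _safe_div(tp, tp + fp + fn)
    iou_background = _safe_div(tn, tn + fp + fn)
    miou = (iou_building + iou_background) / 2  # mean over the two classes
    return {"accuracy": accuracy, "f1": f1, "miou": miou}


if __name__ == "__main__":
    # Made-up counts, chosen only to exercise the formulas.
    print(binary_segmentation_metrics(tp=80, fp=10, fn=20, tn=890))
```

Because background pixels usually dominate remote sensing tiles, accuracy tends to sit well above mIoU, which is consistent with the gap between those two columns in the tables.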

4.3. Performance Evaluation

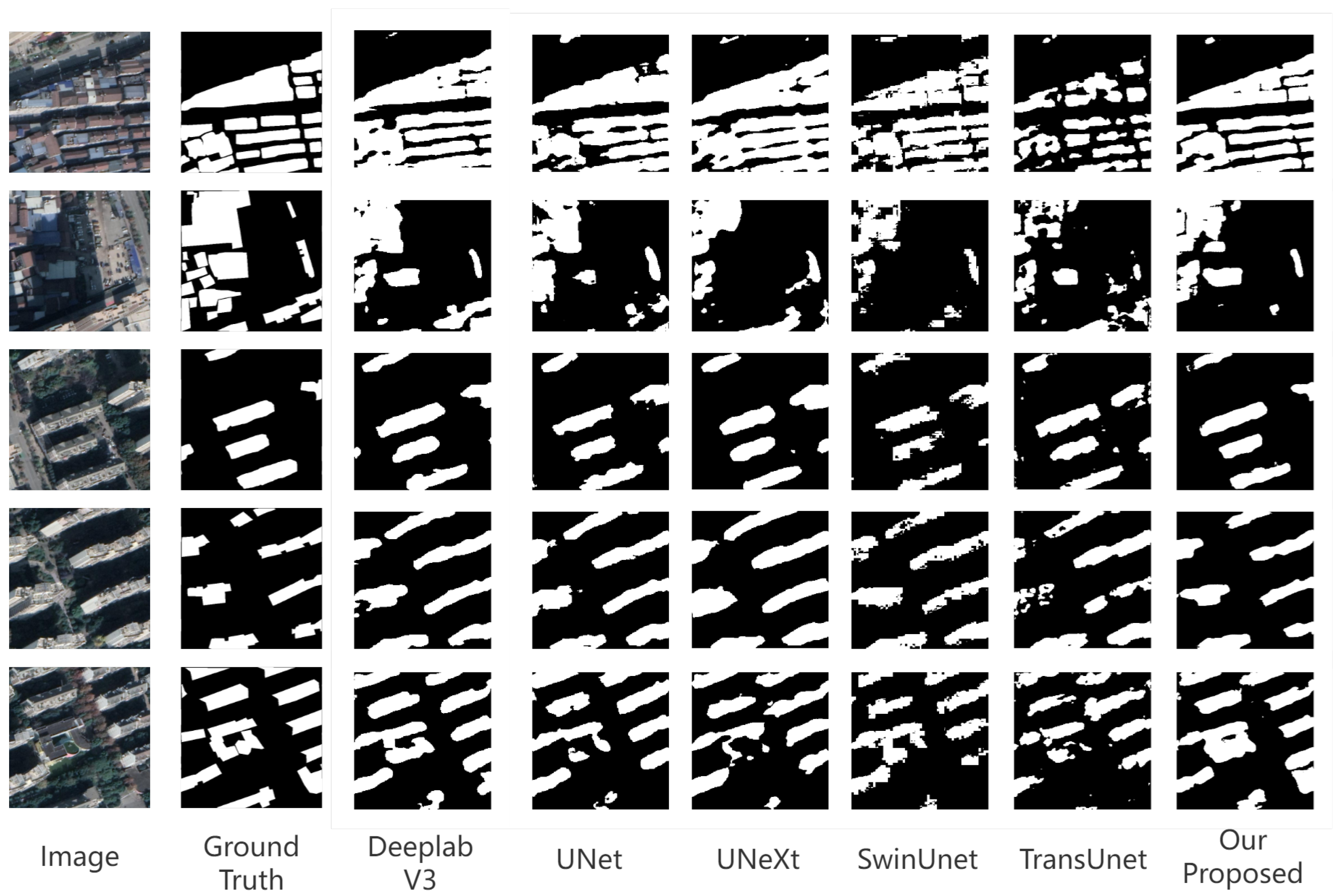

4.4. Visualization Results

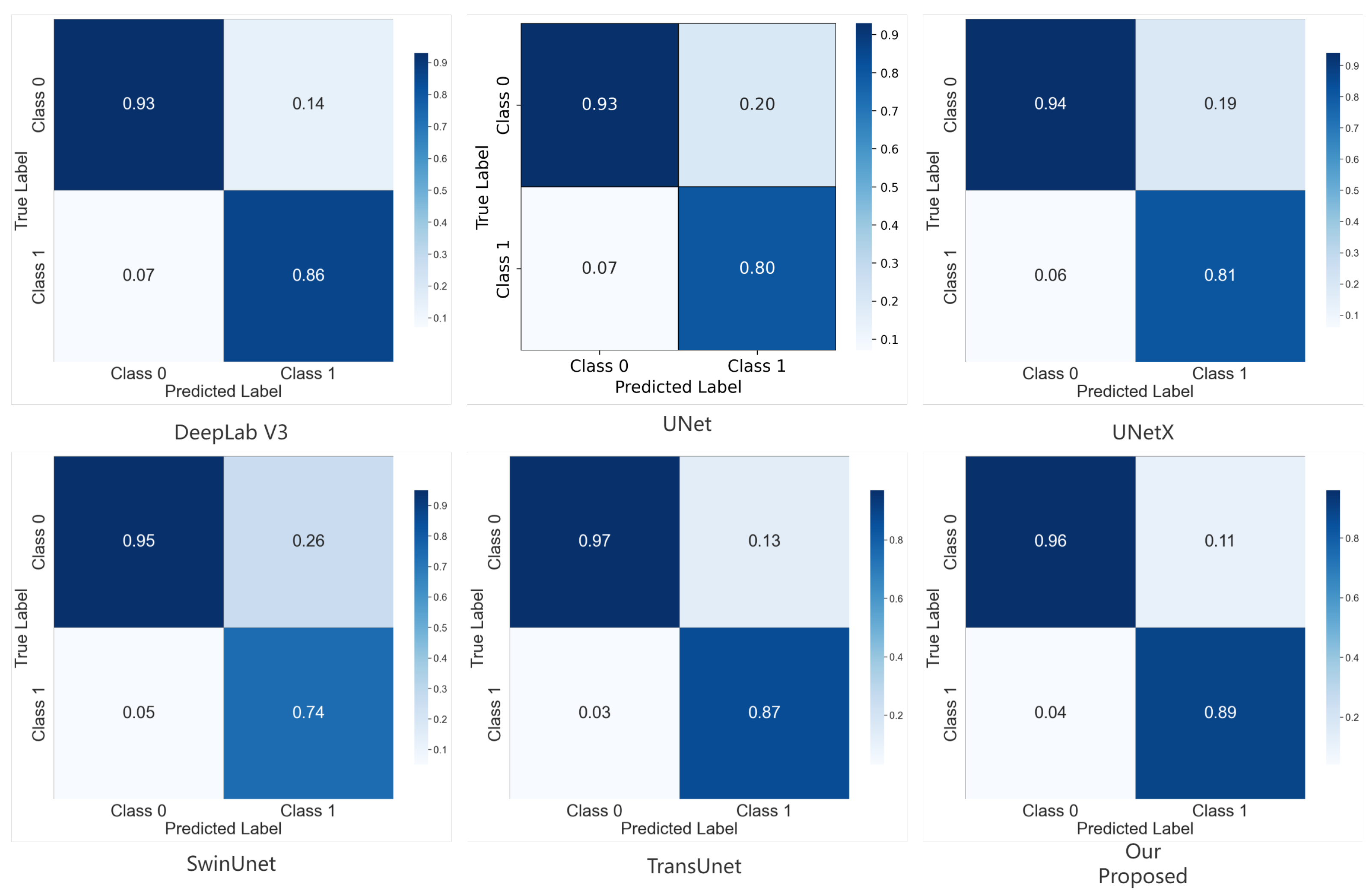

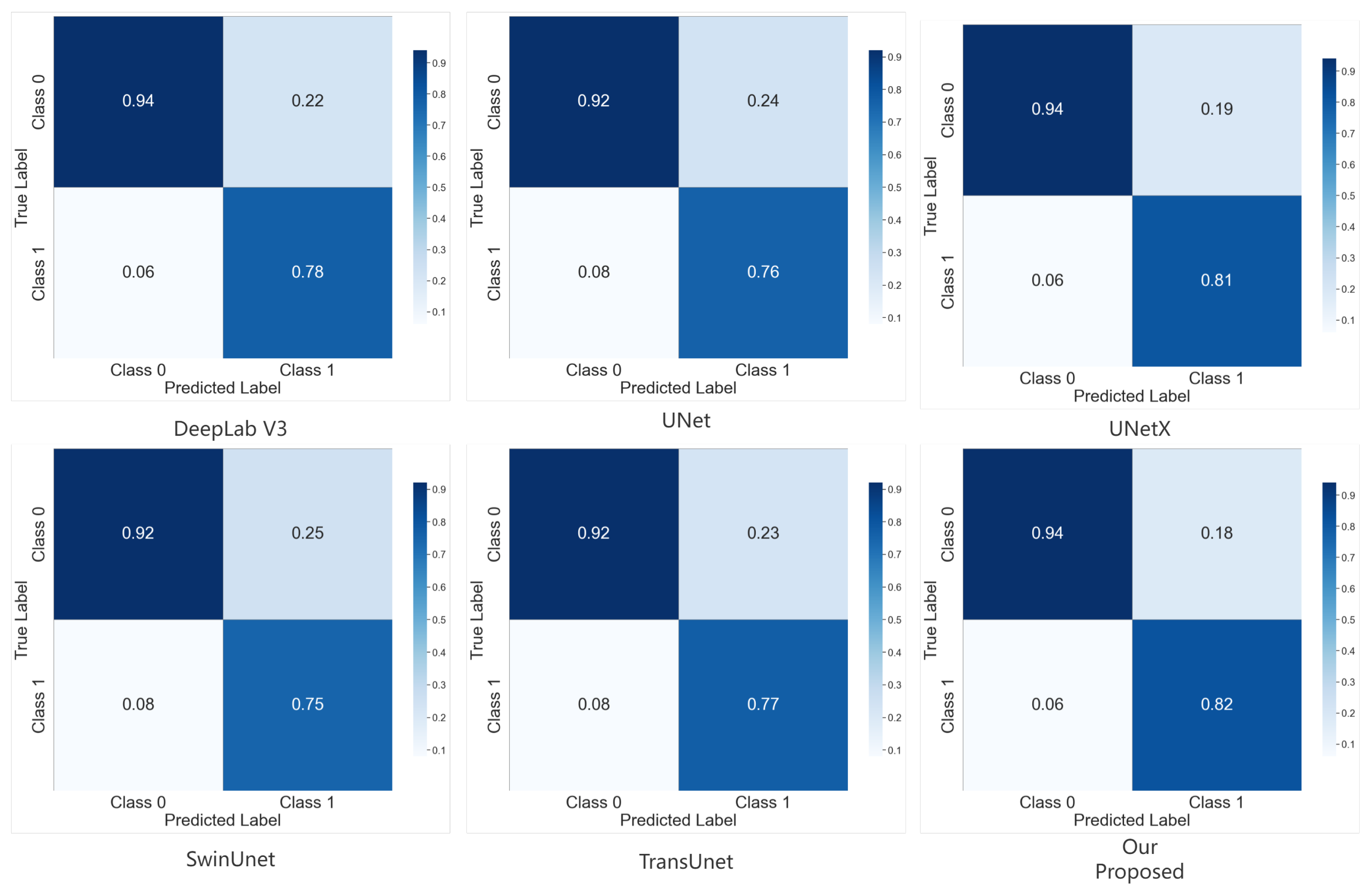

4.5. Confusion Matrices

4.6. Ablation Experiment

5. Discussion

5.1. Model Effectiveness in Complex Urban Scenes

5.2. Potential Impact on Urban Decision Making

5.3. Limitations and Future Work

6. Conclusions

Author Contributions

Funding

Data Availability Statement

Conflicts of Interest

References

1. Raghavan, R.; Verma, D.C.; Pandey, D.; Anand, R.; Pandey, B.K.; Singh, H. Optimized building extraction from high-resolution satellite imagery using deep learning. Multimed. Tools Appl. 2022, 81, 42309–42323.
2. Tolstikhin, I.O.; Houlsby, N.; Kolesnikov, A.; Beyer, L.; Zhai, X.; Unterthiner, T.; Yung, J.; Steiner, A.; Keysers, D.; Uszkoreit, J.; et al. MLP-Mixer: An all-MLP architecture for vision. Adv. Neural Inf. Process. Syst. 2021, 34, 24261–24272.
3. Liu, Z.; Lin, Y.; Cao, Y.; Hu, H.; Wei, Y.; Zhang, Z.; Lin, S.; Guo, B. Swin transformer: Hierarchical vision transformer using shifted windows. In Proceedings of the IEEE/CVF International Conference on Computer Vision, Montreal, QC, Canada, 11–17 October 2021.
4. Fu, J.; Liu, J.; Tian, H.; Li, Y.; Bao, Y.; Fang, Z.; Lu, H. Dual attention network for scene segmentation. In Proceedings of the IEEE Conference on Computer Vision and Pattern Recognition, Long Beach, CA, USA, 15–20 June 2019.
5. Dai, J.; Qi, H.; Xiong, Y.; Li, Y.; Zhang, G.; Hu, H.; Wei, Y. Deformable convolutional networks. In Proceedings of the IEEE International Conference on Computer Vision, Venice, Italy, 22–29 October 2017.
6. Zhao, H.; Shi, J.; Qi, X.; Wang, X.; Jia, J. Pyramid scene parsing network. In Proceedings of the IEEE Conference on Computer Vision and Pattern Recognition, Honolulu, HI, USA, 21–26 July 2017.
7. Long, J.; Shelhamer, E.; Darrell, T. Fully convolutional networks for semantic segmentation. In Proceedings of the IEEE Conference on Computer Vision and Pattern Recognition, Boston, MA, USA, 7–12 June 2015.
8. Ronneberger, O.; Fischer, P.; Brox, T. U-Net: Convolutional networks for biomedical image segmentation. In Proceedings of the International Conference on Medical Image Computing and Computer-Assisted Intervention, Munich, Germany, 5–9 October 2015; Springer: Berlin/Heidelberg, Germany, 2015; pp. 234–241.
9. Liu, Y.; Shen, J.; Yang, L.; Bian, G.; Yu, H. ResDO-UNet: A deep residual network for accurate retinal vessel segmentation from fundus images. Biomed. Signal Process. Control 2023, 79, 104087.
10. Oktay, O.; Schlemper, J.; Folgoc, L.L.; Lee, M.; Heinrich, M.; Misawa, K.; Mori, K.; McDonagh, S.; Hammerla, N.Y.; Kainz, B.; et al. Attention U-Net: Learning where to look for the pancreas. arXiv 2018, arXiv:1804.03999.
11. He, X.; Zhou, Y.; Zhao, J.; Zhang, D.; Yao, R.; Xue, Y. Swin transformer embedding UNet for remote sensing image semantic segmentation. IEEE Trans. Geosci. Remote Sens. 2022, 60, 4408715.
12. Yin, M.; Chen, Z.; Zhang, C. A CNN-transformer network combining CBAM for change detection in high-resolution remote sensing images. Remote Sens. 2023, 15, 2406.
13. Han, C.; Wu, C.; Guo, H.; Hu, M.; Chen, H. HANet: A hierarchical attention network for change detection with bitemporal very-high-resolution remote sensing images. IEEE J. Sel. Top. Appl. Earth Obs. Remote Sens. 2023, 16, 3867–3878.
14. Dai, L.; Zhang, G.; Zhang, R. RADANet: Road Augmented Deformable Attention Network for Road Extraction From Complex High-Resolution Remote-Sensing Images. IEEE Trans. Geosci. Remote Sens. 2023, 61, 5602213.
15. Hu, J.; Shen, L.; Sun, G. Squeeze-and-excitation networks. In Proceedings of the IEEE Conference on Computer Vision and Pattern Recognition, Salt Lake City, UT, USA, 18–22 June 2018.
16. Woo, S.; Park, J.; Lee, J.; Kweon, I.S. CBAM: Convolutional block attention module. In Proceedings of the European Conference on Computer Vision, Munich, Germany, 8–14 September 2018.
17. Qiu, W.; Gu, L.; Gao, F.; Jiang, T. Building Extraction From Very High-Resolution Remote Sensing Images Using Refine-UNet. IEEE Geosci. Remote Sens. Lett. 2023, 20, 6002905.
18. Liu, X.; Peng, Y.; Lu, Z.; Li, W.; Yu, J.; Ge, D.; Xiang, W. Feature-Fusion Segmentation Network for Landslide Detection Using High-Resolution Remote Sensing Images and Digital Elevation Model Data. IEEE Trans. Geosci. Remote Sens. 2023, 61, 4500314.
19. Rajagopal, A.; Nirmala, V. Convolutional gated MLP: Combining convolutions and gMLP. In Proceedings of the International Conference on Big Data, Machine Learning and Applications, Orlando, FL, USA, 6–9 December 2021.
20. Zuo, R.; Zhang, G.; Zhang, R.; Jia, X. A deformable attention network for high-resolution remote sensing images semantic segmentation. IEEE Trans. Geosci. Remote Sens. 2021, 60, 4406314.
21. Chen, J.; Yi, J.; Chen, A.; Jin, Z. EFCOMFF-Net: A multiscale feature fusion architecture with enhanced feature correlation for remote sensing image scene classification. IEEE Trans. Geosci. Remote Sens. 2023, 61, 5604917.
22. Wu, K.; Zheng, D.; Chen, Y.; Zeng, L.; Zhang, J.; Chai, S.; Xu, W.; Yang, Y.; Li, S.; Liu, Y.; et al. A dataset of building instances of typical cities in China. Chin. Sci. Data 2021, 6, 182–190.
23. You, D.; Wang, S.; Wang, F.; Zhou, Y.; Wang, Z.; Wang, J.; Xiong, Y. EfficientUNet+: A building extraction method for emergency shelters based on deep learning. Remote Sens. 2022, 14, 2207.
24. Valanarasu, J.M.J.; Patel, V.M. UNeXt: MLP-based rapid medical image segmentation network. In Proceedings of the International Conference on Medical Image Computing and Computer-Assisted Intervention, Singapore, 18–22 September 2022; Springer: Berlin/Heidelberg, Germany, 2022; pp. 23–33.
25. Chen, L.C.; Papandreou, G.; Schroff, F.; Adam, H. Rethinking atrous convolution for semantic image segmentation. arXiv 2017, arXiv:1706.05587.
26. Chen, J.; Lu, Y.; Yu, Q.; Luo, X.; Adeli, E.; Wang, Y.; Lu, L.; Yuille, A.L.; Zhou, Y. TransUNet: Transformers make strong encoders for medical image segmentation. arXiv 2021, arXiv:2102.04306.
27. Cao, H.; Wang, Y.; Chen, J.; Jiang, D.; Zhang, X.; Tian, Q.; Wang, M. Swin-Unet: Unet-like pure transformer for medical image segmentation. In Proceedings of the European Conference on Computer Vision, Tel Aviv, Israel, 23–27 October 2022.
28. Al Shafian, S.; Hu, D. Integrating machine learning and remote sensing in disaster management: A decadal review of post-disaster building damage assessment. Buildings 2024, 14, 2344.
29. Dabove, P.; Daud, M.; Olivotto, L. Revolutionizing urban mapping: Deep learning and data fusion strategies for accurate building footprint segmentation. Sci. Rep. 2024, 14, 13510.
30. Arulananth, T.; Kuppusamy, P.; Ayyasamy, R.K.; Alhashmi, S.M.; Mahalakshmi, M.; Vasanth, K.; Chinnasamy, P. Semantic segmentation of urban environments: Leveraging U-Net deep learning model for cityscape image analysis. PLoS ONE 2024, 19, e0300767.

| Model | Accuracy | F1-Score | mIoU |
|---|---|---|---|
| U-Net [8] | 0.9034 | 0.9014 | 0.7466 |
| UNeXt [24] | 0.9121 | 0.9111 | 0.7693 |
| DeepLabV3 [25] | 0.9208 | 0.9187 | 0.7841 |
| TransUNet [26] | 0.9329 | 0.9325 | 0.8325 |
| SwinUNet [27] | 0.9046 | 0.9031 | 0.7513 |
| Our proposed method | 0.9451 | 0.9445 | 0.8445 |

| Model | Accuracy | F1-Score | mIoU |
|---|---|---|---|
| U-Net [8] | 0.8972 | 0.8922 | 0.6919 |
| UNeXt [24] | 0.8789 | 0.8762 | 0.6613 |
| DeepLabV3 [25] | 0.9139 | 0.9121 | 0.7427 |
| TransUNet [26] | 0.9017 | 0.8971 | 0.7033 |
| SwinUNet [27] | 0.9025 | 0.8993 | 0.7056 |
| Our proposed method | 0.9232 | 0.9221 | 0.7638 |

| Method | Accuracy | F1-Score | mIoU |
|---|---|---|---|
| Our proposed | 0.9451 | 0.9447 | 0.8458 |
| w/o GDAB | 0.9363 | 0.9354 | 0.8217 |
| w/o DA | 0.9344 | 0.9345 | 0.8216 |

| Method | Accuracy | F1-Score | mIoU |
|---|---|---|---|
| Our proposed | 0.9232 | 0.9221 | 0.7638 |
| w/o GDAB | 0.9182 | 0.9161 | 0.7515 |
| w/o DA | 0.9191 | 0.9177 | 0.7571 |
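To make the ablation results easier to compare, the following sketch computes the mIoU drop caused by removing each component, using only the values in the two ablation tables above. The dictionary keys `first ablation table` and `second ablation table` are placeholder labels, since the dataset names do not appear alongside the tables here, and the helper name `miou_drops` is hypothetical.

```python
# mIoU values copied from the two ablation tables above.
ABLATION_MIOU = {
    "first ablation table": {"Our proposed": 0.8458, "w/o GDAB": 0.8217, "w/o DA": 0.8216},
    "second ablation table": {"Our proposed": 0.7638, "w/o GDAB": 0.7515, "w/o DA": 0.7571},
}


def miou_drops(rows: dict, full_key: str = "Our proposed") -> dict:
    """Return the mIoU lost by each ablated variant relative to the full model."""
    full = rows[full_key]
    return {
        name: round(full - value, 4)  # round to the tables' four decimals
        for name, value in rows.items()
        if name != full_key
    }


if __name__ == "__main__":
    for table, rows in ABLATION_MIOU.items():
        print(table, miou_drops(rows))
```

Read this way, removing either component costs roughly 2.4 mIoU points in the first table and between about 0.7 and 1.2 points in the second.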


© 2025 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (https://creativecommons.org/licenses/by/4.0/).

Share and Cite

Hu, Q.; Peng, Y.; Zhang, C.; Lin, Y.; U, K.; Chen, J. Building Instance Extraction via Multi-Scale Hybrid Dual-Attention Network. Buildings 2025, 15, 3102. https://doi.org/10.3390/buildings15173102
