Change Detection in Unmanned Aerial Vehicle Images for Progress Monitoring of Road Construction

Abstract

1. Introduction

2. Methodology

2.1. Orthoimage Generation

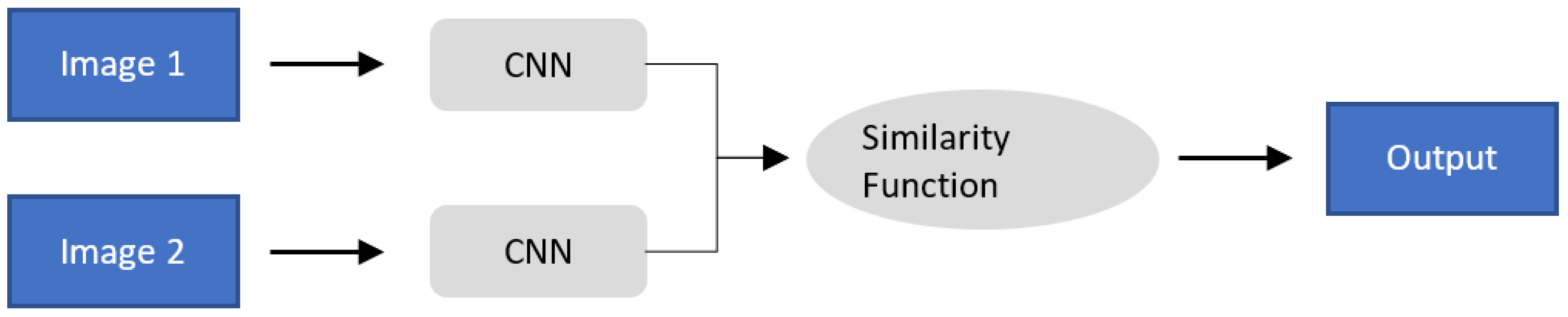

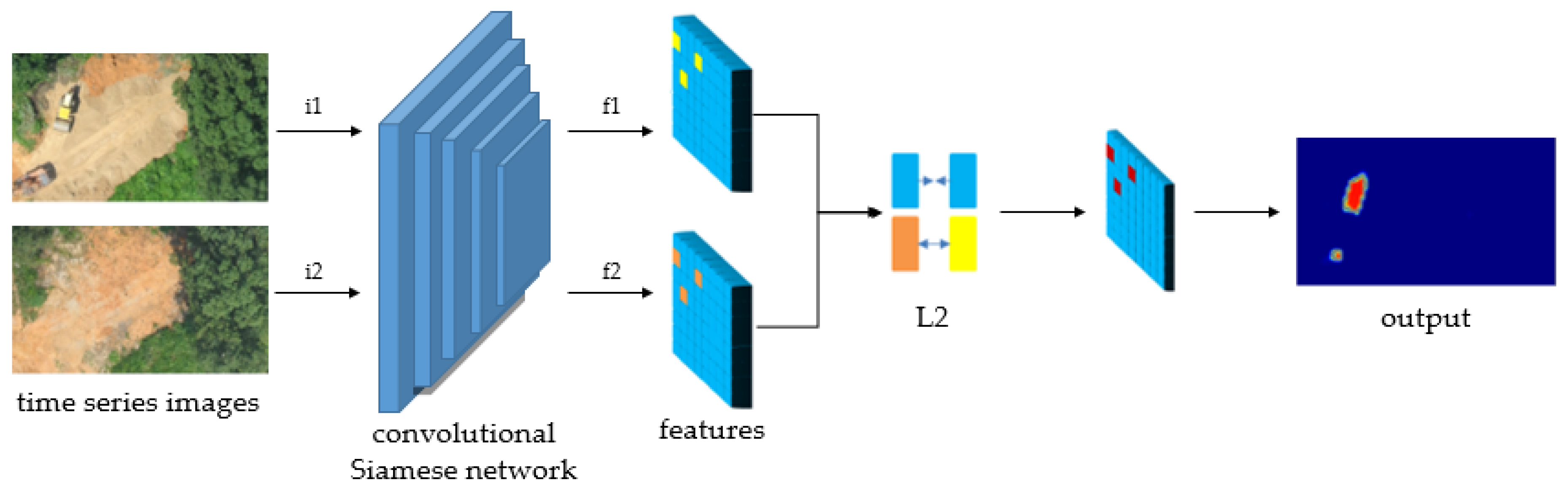

2.2. Introduction to Convolutional Siamese Metric Networks

2.3. Image Change Detection

2.4. Evaluation Metrics

3. Experiment

3.1. Study Area and Devices

3.2. Data Acquisition

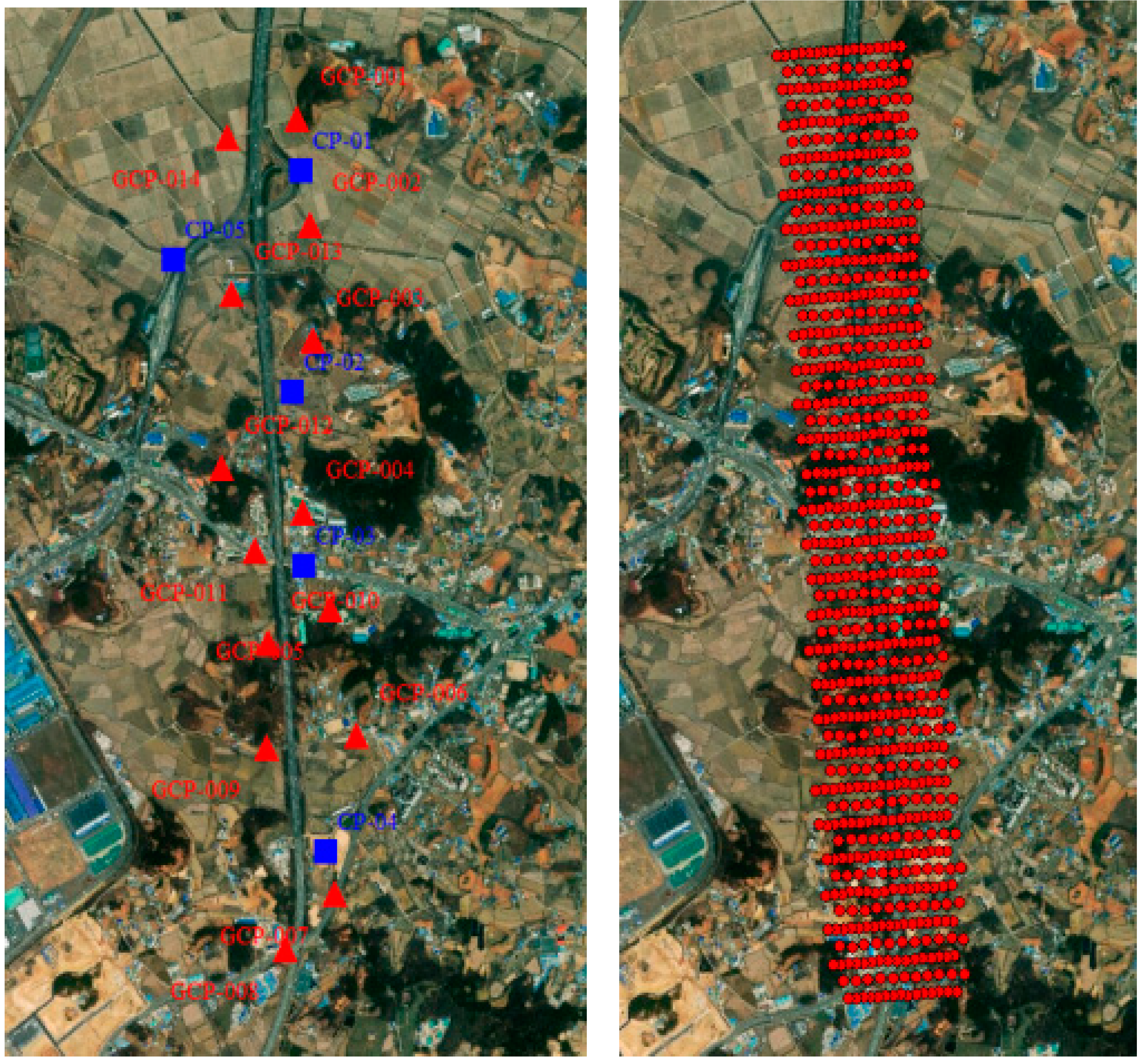

3.3. Creation of an Orthoimage

3.4. Change Detection Implementation

4. Results

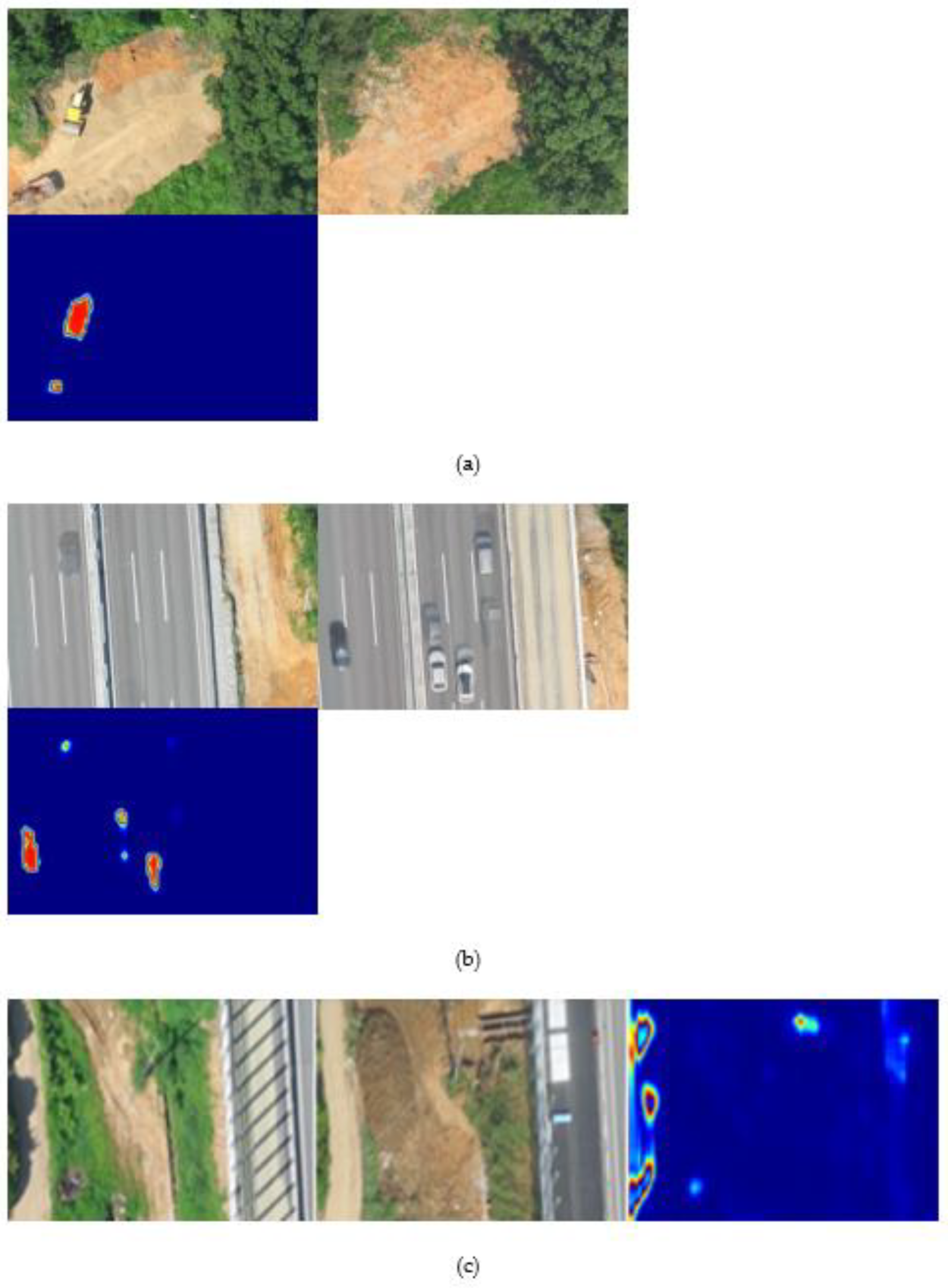

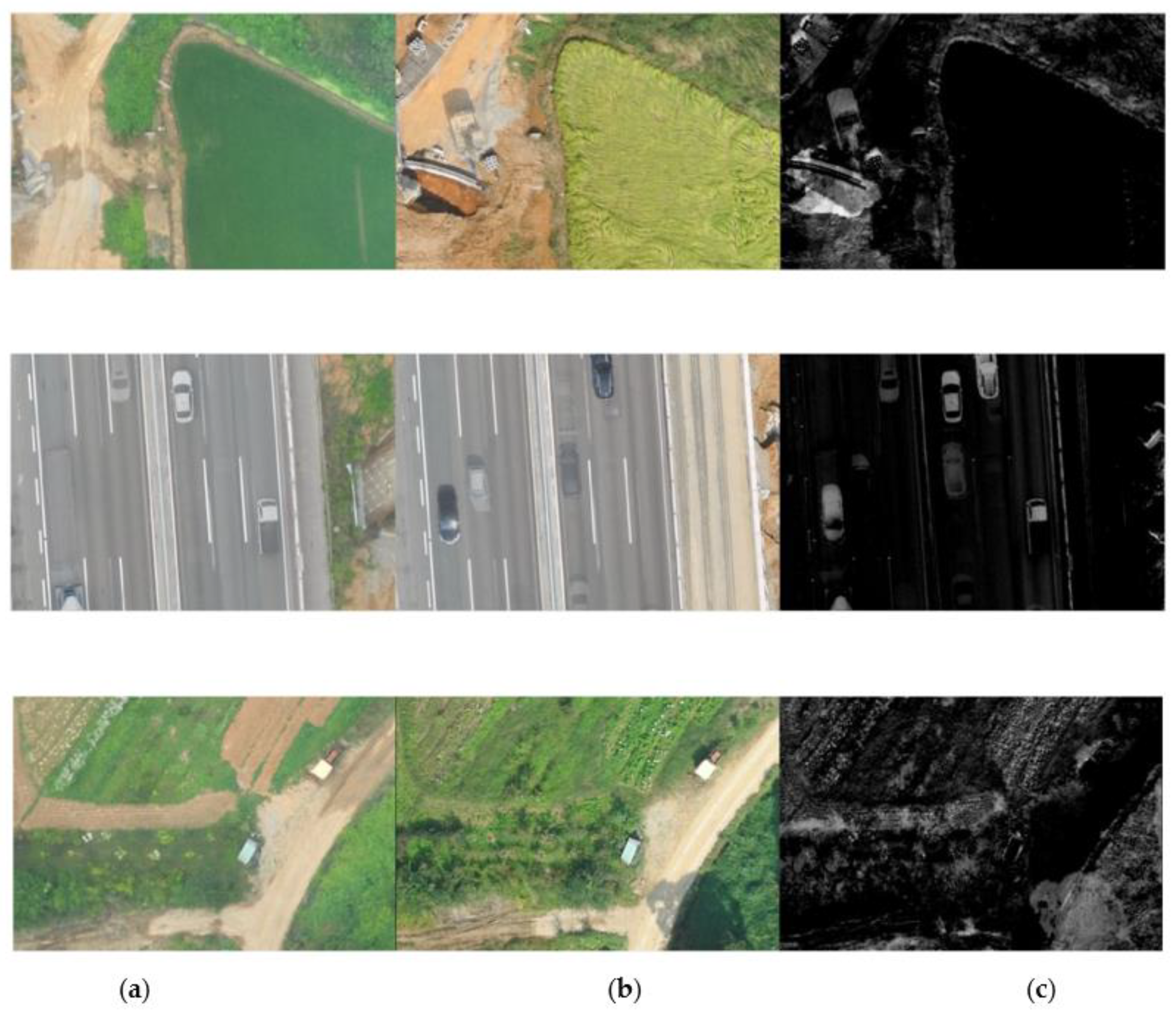

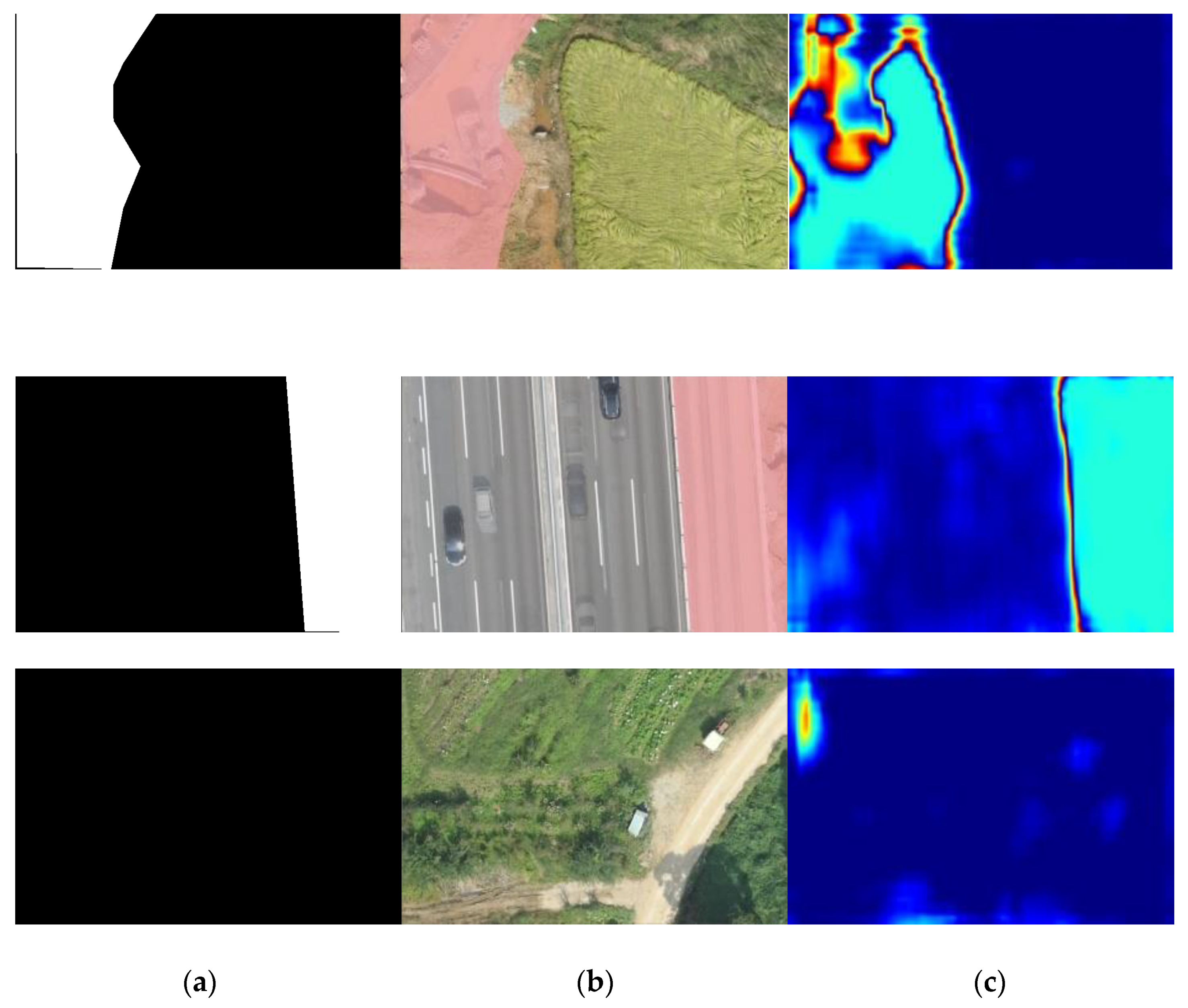

4.1. Change Detection

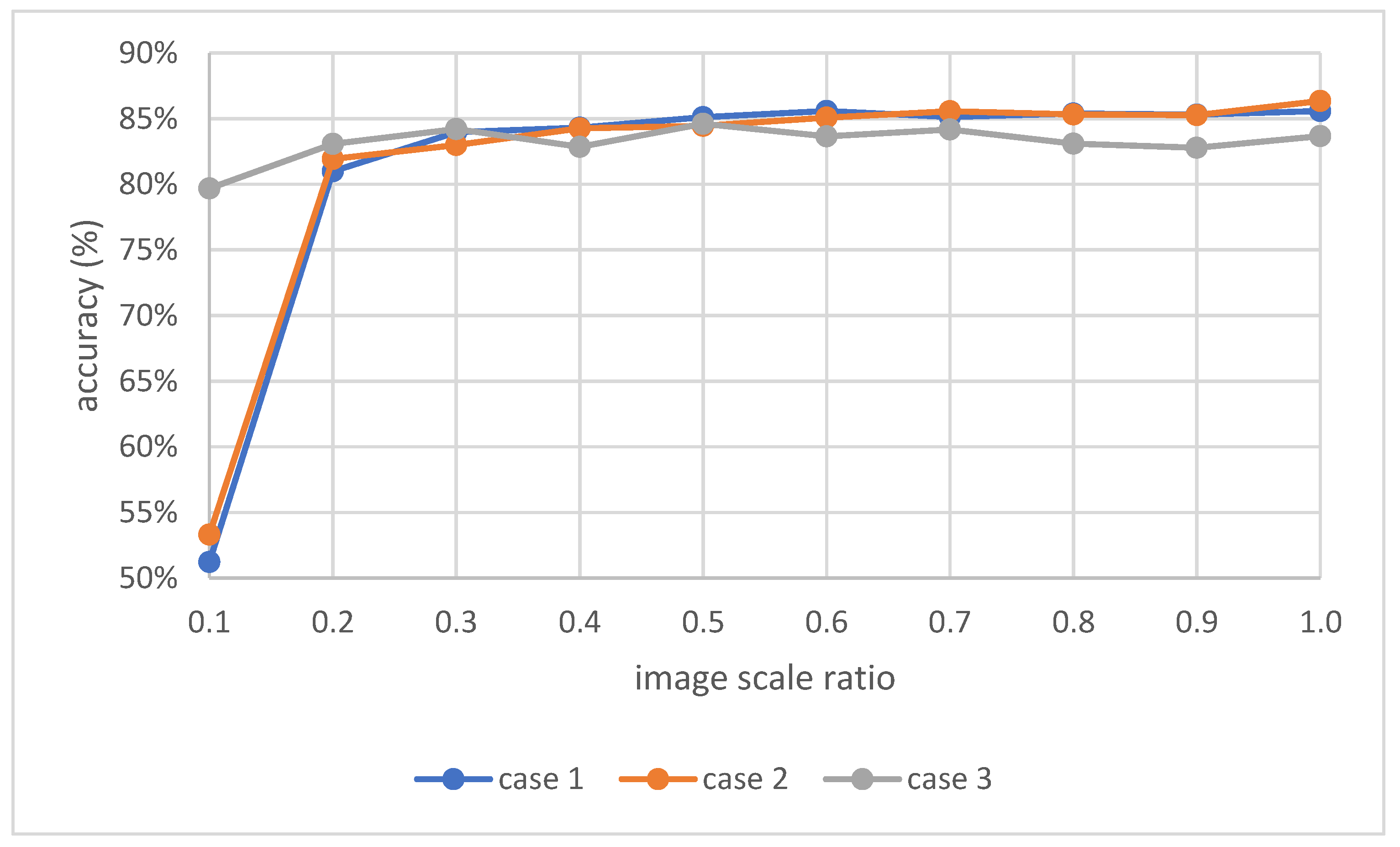

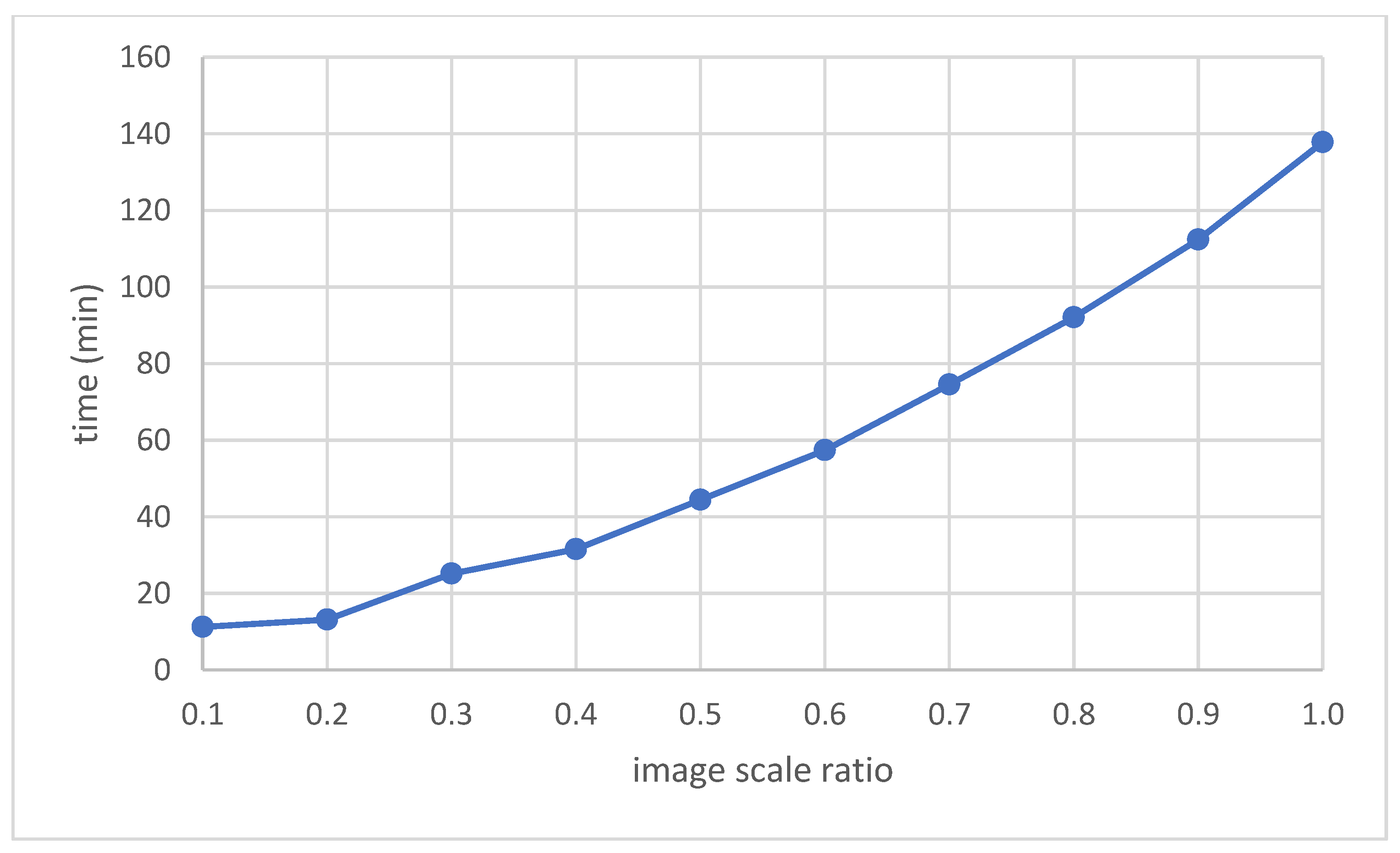

4.2. Image Size Effect

5. Conclusions

Author Contributions

Funding

Institutional Review Board Statement

Informed Consent Statement

Data Availability Statement

Acknowledgments

Conflicts of Interest


| Parameter | Value |
|---|---|
| Wingspan | 110 cm |
| Weight | Approx. 1.1 kg |
| Maximum flight time | 59 min |
| Oblique imagery | 0° to −50° |
| GNSS positioning | Real-time kinematic (RTK) and post-processed kinematic (PPK) |
| Camera | senseFly S.O.D.A., 20 MP (5472 × 3648 pixels) |
| Ground sampling distance at 100 m altitude | Down to 2.3 cm/pixel |
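The ground sampling distance (GSD) and image size in the table together fix how much ground a single image covers. The sketch below works this out using only the tabulated values: the best-case GSD of 2.3 cm/pixel at 100 m and the 5472 × 3648 pixel frame. It assumes a nadir (straight-down) view over flat terrain, where GSD for a fixed camera and lens scales linearly with flying height. The function names are illustrative only and are not part of the authors' processing chain.

```python
# Worked example from the specification table only:
#   GSD = 2.3 cm/pixel at 100 m flying height, image = 5472 x 3648 pixels.
# Assumption: nadir view over flat terrain, so GSD grows linearly with height.

REF_ALTITUDE_M = 100.0  # flying height at which the table quotes the GSD
REF_GSD_M = 0.023       # best-case GSD from the table, in metres per pixel
IMAGE_W_PX = 5472       # image width in pixels
IMAGE_H_PX = 3648       # image height in pixels


def gsd_at(altitude_m: float) -> float:
    """Ground sampling distance (m/pixel) at a given flying height."""
    return REF_GSD_M * altitude_m / REF_ALTITUDE_M


def footprint(altitude_m: float) -> tuple[float, float]:
    """Ground coverage (width, height) in metres of a single nadir image."""
    gsd = gsd_at(altitude_m)
    return IMAGE_W_PX * gsd, IMAGE_H_PX * gsd


if __name__ == "__main__":
    for h in (50.0, 100.0, 120.0):
        w, ht = footprint(h)
        print(f"{h:5.0f} m: GSD {gsd_at(h) * 100:.2f} cm/px, "
              f"footprint {w:.1f} m x {ht:.1f} m")
```

At 100 m this gives a footprint of roughly 125.9 m × 83.9 m per image. Halving the flying height halves the GSD but also quarters the area covered by each frame, so more images are needed to cover the same stretch of road.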


© 2021 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (https://creativecommons.org/licenses/by/4.0/).


Han, D.; Lee, S.B.; Song, M.; Cho, J.S. Change Detection in Unmanned Aerial Vehicle Images for Progress Monitoring of Road Construction. Buildings 2021, 11, 150. https://doi.org/10.3390/buildings11040150