Glaucoma Classification Using a NFNet-Based Deep Learning Model with a Customized Hybrid Attention Mechanism

Abstract

1. Introduction

- Propose a hybrid attention module that combines spatial and channel attention to calibrate features for glaucoma classification from fundus images.

- Explore normalization-free networks as feature extractors in combination with hybrid attention modules, pairing several hybrid attention variants with different normalization-free ResNet (NF-ResNet) [11] models.

- Further evaluate the proposed attention modules using five-fold cross-validation on the combined LAG, BrG, and EyePACS datasets and conduct comparisons with state-of-the-art (SOTA) models.
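
The five-fold cross-validation named in the last contribution can be sketched as follows. This is a minimal illustration, not the authors' protocol: it assumes the folds are stratified by class label, and the function name `stratified_kfold`, the seed, and the toy label list are all hypothetical.

```python
import random
from collections import defaultdict


def stratified_kfold(labels, k=5, seed=0):
    """Yield (train_idx, test_idx) pairs whose class balance matches the full set."""
    by_class = defaultdict(list)
    for idx, label in enumerate(labels):
        by_class[label].append(idx)

    rng = random.Random(seed)
    folds = [[] for _ in range(k)]
    for indices in by_class.values():
        rng.shuffle(indices)
        # Deal shuffled indices round-robin so per-class counts differ by at most one.
        for pos, idx in enumerate(indices):
            folds[pos % k].append(idx)

    for i in range(k):
        test_idx = sorted(folds[i])
        train_idx = sorted(
            idx for j, fold in enumerate(folds) if j != i for idx in fold
        )
        yield train_idx, test_idx


# Hypothetical pooled labels: 1 = glaucoma, 0 = non-glaucoma.
labels = [1] * 40 + [0] * 60
splits = list(stratified_kfold(labels, k=5))
for fold_id, (train_idx, test_idx) in enumerate(splits):
    positives = sum(labels[i] for i in test_idx)
    print(f"fold {fold_id}: {len(train_idx)} train, {len(test_idx)} test, {positives} positive")
```

When several datasets are pooled, stratifying on a (dataset, label) pair instead of the label alone would keep each source represented in every fold; whether the authors did so is not stated here.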

2. Materials and Methods

2.1. Datasets

2.2. Deep Learning Architecture

2.2.1. Backbone

2.2.2. Hybrid Attention Module
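
As a rough illustration of what "combining spatial and channel attention" can look like, the sketch below follows the CBAM-style ordering [16] (channel gates first, then spatial gates). It is not the authors' module. Assumptions: `r` is read as the channel-reduction ratio of the bottleneck, the spatial gate is a per-pixel weighted sum of the channel-wise mean and max (CBAM itself uses a 7 x 7 convolution), and all weights are arbitrary constants rather than learned parameters.

```python
import math


def sigmoid(x):
    return 1.0 / (1.0 + math.exp(-x))


def channel_gates(fmap, w_down, w_up):
    """One gate per channel: global average pool -> bottleneck MLP (ReLU) -> sigmoid."""
    pooled = [sum(map(sum, ch)) / (len(ch) * len(ch[0])) for ch in fmap]
    hidden = [max(0.0, sum(w * p for w, p in zip(row, pooled))) for row in w_down]
    return [sigmoid(sum(w * h for w, h in zip(row, hidden))) for row in w_up]


def spatial_gates(fmap, w_mean, w_max):
    """One gate per pixel from the channel-wise mean and max at that pixel."""
    channels, height, width = len(fmap), len(fmap[0]), len(fmap[0][0])
    gates = []
    for i in range(height):
        row = []
        for j in range(width):
            column = [fmap[c][i][j] for c in range(channels)]
            row.append(sigmoid(w_mean * sum(column) / channels + w_max * max(column)))
        gates.append(row)
    return gates


def hybrid_attention(fmap, w_down, w_up, w_mean, w_max):
    """Rescale a C x H x W feature map by channel gates, then by spatial gates."""
    cg = channel_gates(fmap, w_down, w_up)
    scaled = [[[v * g for v in row] for row in ch] for ch, g in zip(fmap, cg)]
    sg = spatial_gates(scaled, w_mean, w_max)
    return [
        [[v * sg[i][j] for j, v in enumerate(row)] for i, row in enumerate(ch)]
        for ch in scaled
    ]


# Toy input: C = 4 channels on a 2 x 2 grid, reduction ratio r = 2 (hidden width C // r).
C, r = 4, 2
fmap = [[[float(c + i + j) for j in range(2)] for i in range(2)] for c in range(C)]
w_down = [[0.1] * C for _ in range(C // r)]  # shape (C // r) x C, hypothetical values
w_up = [[0.2] * (C // r) for _ in range(C)]  # shape C x (C // r), hypothetical values
out = hybrid_attention(fmap, w_down, w_up, w_mean=0.5, w_max=0.5)
print(len(out), len(out[0]), len(out[0][0]))
```

Under this reading, a larger `r` narrows the bottleneck and reduces the number of channel-attention weights, which is one plausible reason to compare r = 2 against r = 4.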

2.2.3. Classification Head

3. Results

3.1. Training Setting

3.2. Test Results

4. Discussion

5. Conclusions

Author Contributions

Funding

Informed Consent Statement

Data Availability Statement

Acknowledgments

Conflicts of Interest

Abbreviations

| AI | Artificial Intelligence |

| CNN | Convolutional Neural Network |

| NFNet | Normalizer-Free Neural Network |

References

- Kingman, S. Glaucoma is second leading cause of blindness globally. Bull. World Health Organ. 2004, 82, 887–888.

- Tham, Y.C.; Li, V.; Wong, T.Y.; Quigley, H.A.; Aung, T.; Cheng, C.Y. Global prevalence of glaucoma and projections of glaucoma burden through 2040: A systematic review and meta-analysis. Ophthalmology 2014, 121, 2081–2090.

- Lima, A.; Maia, L.B.; dos Santos, P.T.C.; Braz Junior, G.; de Almeida, J.D.S.; de Paiva, A.C. Evolving Convolutional Neural Networks for Glaucoma Diagnosis. Braz. J. Health Rev. 2020, 3, 1873–1882.

- Das, D.; Nayak, D.R.; Pachori, R.B. CA-Net: A Novel Cascaded Attention-Based Network for Multistage Glaucoma Classification Using Fundus Images. IEEE Trans. Instrum. Meas. 2023, 72, 1–10.

- Latif, J.; Tu, S.; Xiao, C.; Ur Rehman, S.; Imran, A.; Latif, Y. ODGNet: A deep learning model for automated optic disc localization and glaucoma classification using fundus images. SN Appl. Sci. 2022, 4, 98.

- Fan, R.; Alipour, K.; Bowd, C.; Christopher, M.; Brye, N.; Proudfoot, J.A.; Goldbaum, M.H.; Belghith, A.; Girkin, C.A.; Fazio, M.A.; et al. Detecting Glaucoma from Fundus Photographs Using Deep Learning without Convolutions: Transformer for Improved Generalization. Ophthalmol. Sci. 2023, 3, 100233.

- Da Silva, M.C.; Silva, C.M.; Matos, A.G.; Da Silva, S.P.P.; Sarmento, R.M.; Rebouças Filho, P.P.; Nascimento, N.M.N.; de Santiago, R.V.C.; Benevides, C.A.; Henrique Cunha, C.C. A New Diabetic Retinopathy Classification Approach Based on Normalizer Free Network. In Proceedings—IEEE Symposium on Computer-Based Medical Systems; Institute of Electrical and Electronics Engineers Inc.: Piscataway, NJ, USA, 2024; pp. 164–169.

- Yang, B.; Zhang, Z.; Yang, P.; Zhai, Y.; Zhao, Z.; Zhang, L.; Zhang, R.; Geng, L.; Ouyang, Y.; Yang, K.; et al. MobilenetV2-RC: A lightweight network model for retinopathy classification in retinal OCT images. J. Phys. D Appl. Phys. 2024, 57, 505401.

- Chakraborty, S.; Roy, A.; Pramanik, P.; Valenkova, D.; Sarkar, R. A Dual Attention-aided DenseNet-121 for Classification of Glaucoma from Fundus Images. In Proceedings of the 2024 13th Mediterranean Conference on Embedded Computing (MECO), Budva, Montenegro, 11–14 June 2024.

- Das, D.; Nayak, D.R.; Pachori, R.B. AES-Net: An adapter and enhanced self-attention guided network for multi-stage glaucoma classification using fundus images. Image Vis. Comput. 2024, 146, 105042.

- Brock, A.; De, S.; Smith, S.L.; Simonyan, K. High-Performance Large-Scale Image Recognition Without Normalization. Proc. Mach. Learn. Res. 2021, 139, 1059–1071.

- Li, L.; Xu, M.; Wang, X.; Jiang, L.; Liu, H. Attention based glaucoma detection: A large-scale database and CNN model. In Proceedings of the IEEE Computer Society Conference on Computer Vision and Pattern Recognition, Long Beach, CA, USA, 16–20 June 2019.

- Bragança, C.P.; Torres, J.M.; Soares, C.P.D.A.; Macedo, L.O. Detection of Glaucoma on Fundus Images Using Deep Learning on a New Image Set Obtained with a Smartphone and Handheld Ophthalmoscope. Healthcare 2022, 10, 2345.

- De Vente, C.; Vermeer, K.A.; Jaccard, N.; Wang, H.; Sun, H.; Khader, F.; Truhn, D.; Aimyshev, T.; Zhanibekuly, Y.; Le, T.D.; et al. AIROGS: Artificial Intelligence for Robust Glaucoma Screening Challenge. IEEE Trans. Med. Imaging 2024, 43, 542–557.

- He, K.; Zhang, X.; Ren, S.; Sun, J. Deep residual learning for image recognition. In Proceedings of the IEEE Computer Society Conference on Computer Vision and Pattern Recognition, Las Vegas, NV, USA, 27–30 June 2016.

- Woo, S.; Park, J.; Lee, J.Y.; Kweon, I.S. CBAM: Convolutional block attention module. In Proceedings of the European Conference on Computer Vision (ECCV), Munich, Germany, 8–14 September 2018; pp. 3–19.

- Singh, P.B.; Singh, P.; Dev, H.; Batra, D.; Chaurasia, B.K. HViTML: Hybrid vision transformer with machine learning-based classification model for glaucomatous eye. Multimed. Tools Appl. 2025, 84, 33609–33632.

- Yu, F.; Wu, C.; Zhou, Y. DG2Net: A MLP-Based Dynamixing Gate and Depthwise Group Norm Network for Classification of Glaucoma. In International Conference on Pattern Recognition; Springer Nature: Cham, Switzerland, 2024.

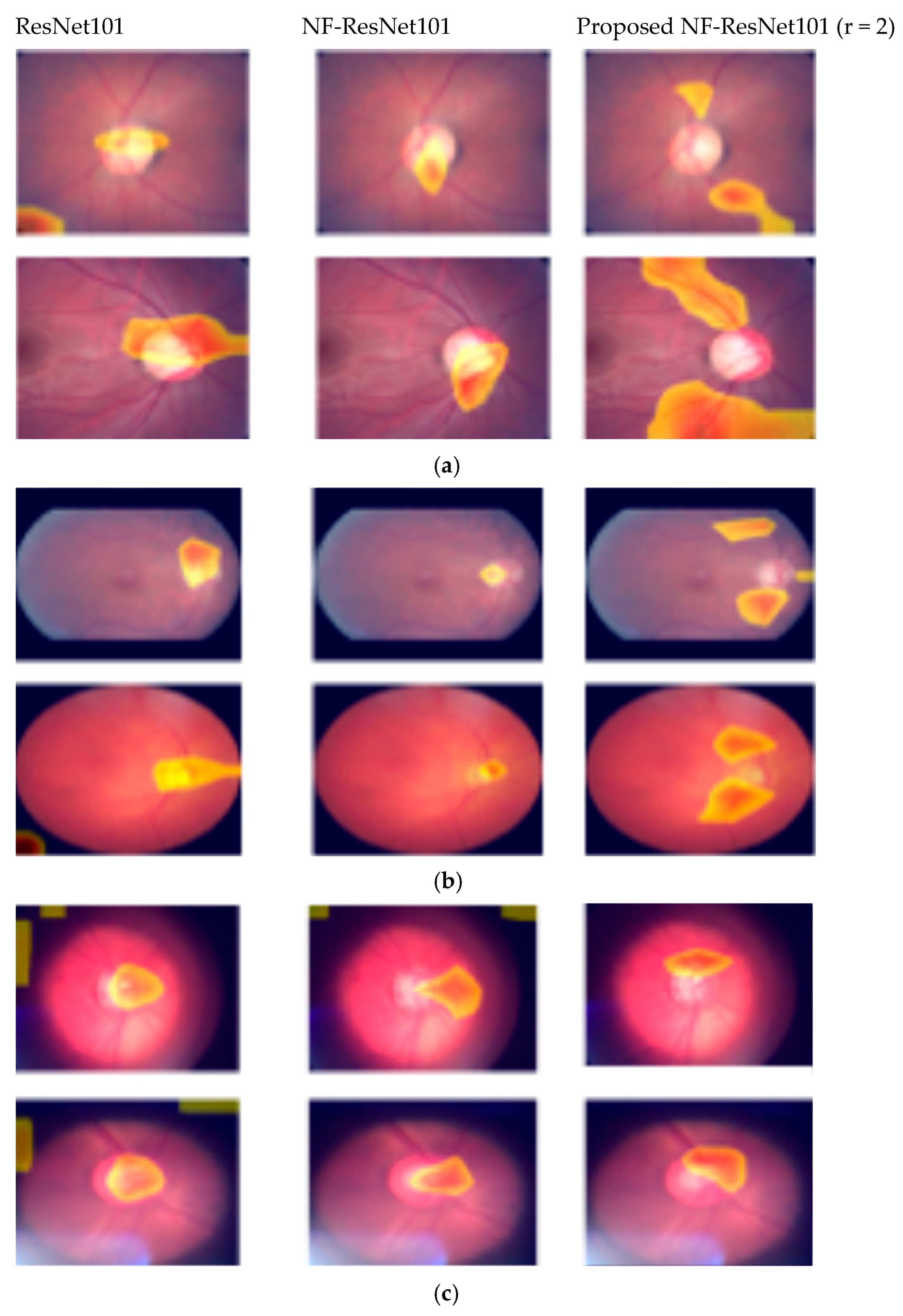

- Chattopadhay, A.; Sarkar, A.; Howlader, P.; Balasubramanian, V.N. Grad-CAM++: Generalized gradient-based visual explanations for deep convolutional networks. In Proceedings of the 2018 IEEE Winter Conference on Applications of Computer Vision, WACV, Lake Tahoe, NV, USA, 12–15 March 2018.

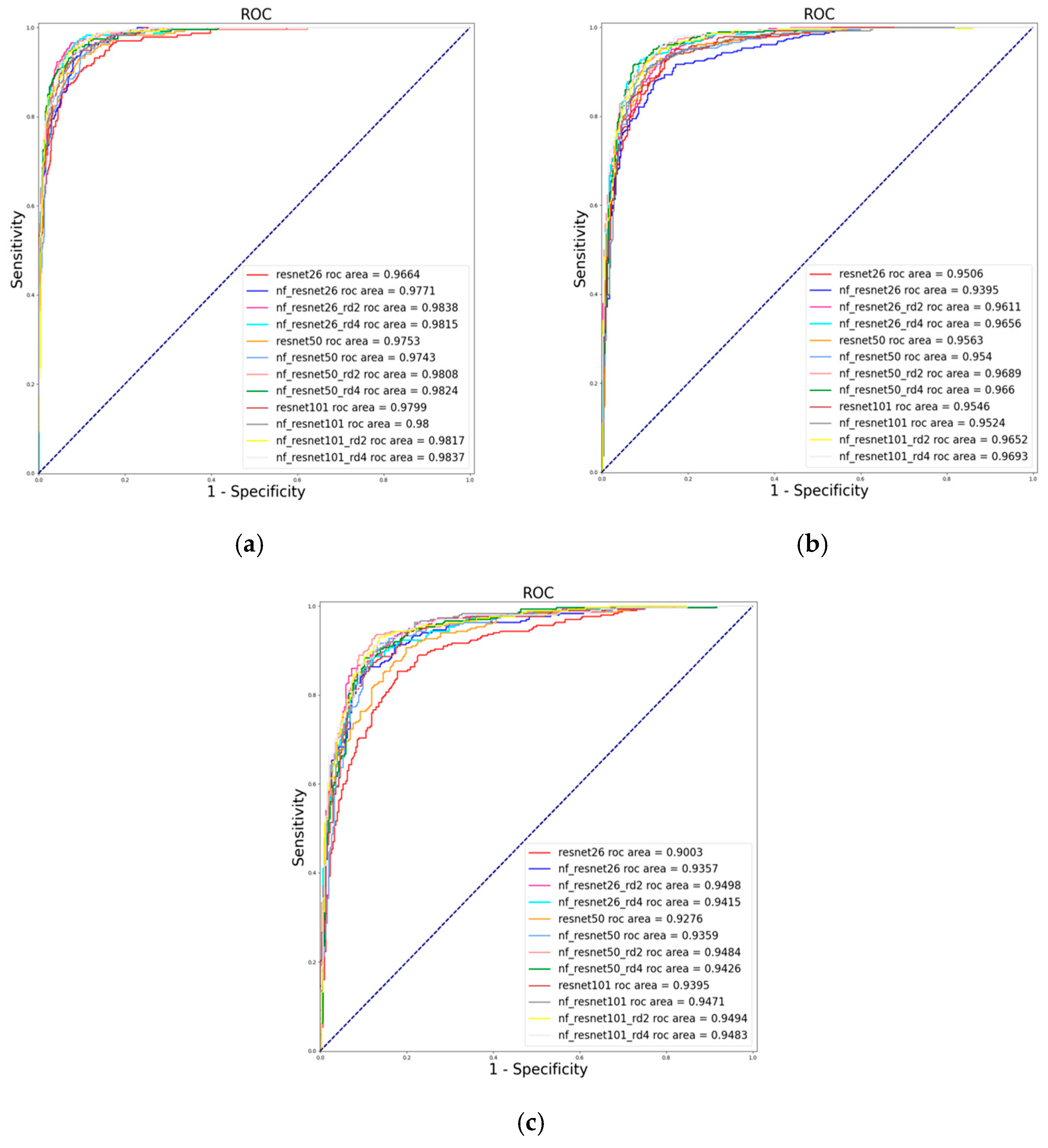

| Model | Hybrid Attention (r) | Accuracy | Sensitivity | Specificity | F1 | AUC | Kappa |

|---|---|---|---|---|---|---|---|

| ResNet26 Variants | |||||||

| ResNet26 | No | 0.9081 | 0.8761 | 0.9180 | 0.9215 | 0.9664 | 0.7572 |

| NF_ResNet26 | No | 0.9192 | 0.8889 | 0.9286 | 0.9303 | 0.9771 | 0.7850 |

| NF_ResNet26 | Yes (2) | 0.9343 | 0.9615 | 0.9259 | 0.9690 | 0.9838 | 0.8299 |

| NF_ResNet26 | Yes (4) | 0.9364 | 0.9103 | 0.9444 | 0.9445 | 0.9815 | 0.8290 |

| ResNet50 Variants | |||||||

| ResNet50 | No | 0.9162 | 0.8974 | 0.9220 | 0.9340 | 0.9753 | 0.7792 |

| NF_ResNet50 | No | 0.9232 | 0.8846 | 0.9352 | 0.9289 | 0.9743 | 0.7940 |

| NF_ResNet50 | Yes (2) | 0.9253 | 0.9615 | 0.9140 | 0.9672 | 0.9808 | 0.8087 |

| NF_ResNet50 | Yes (4) | 0.9323 | 0.9103 | 0.9392 | 0.9437 | 0.9824 | 0.8192 |

| ResNet101 variants | |||||||

| ResNet101 | No | 0.9232 | 0.9231 | 0.9233 | 0.9483 | 0.9799 | 0.7992 |

| NF_ResNet101 | No | 0.9273 | 0.9017 | 0.9352 | 0.9384 | 0.9800 | 0.8060 |

| NF_ResNet101 | Yes (2) | 0.9374 | 0.9274 | 0.9405 | 0.9532 | 0.9817 | 0.8334 |

| NF_ResNet101 | Yes (4) | 0.9394 | 0.9231 | 0.9444 | 0.9515 | 0.9837 | 0.8379 |

| Model | Hybrid Attention (r) | Accuracy | Sensitivity | Specificity | F1 | AUC | Kappa |

|---|---|---|---|---|---|---|---|

| ResNet26 Variants | |||||||

| ResNet26 | No | 0.8805 | 0.9013 | 0.8597 | 0.8829 | 0.9506 | 0.7610 |

| NF_ResNet26 | No | 0.8766 | 0.8753 | 0.8779 | 0.8764 | 0.9395 | 0.7532 |

| NF_ResNet26 | Yes (2) | 0.8896 | 0.9160 | 0.8623 | 0.8920 | 0.9611 | 0.7792 |

| NF_ResNet26 | Yes (4) | 0.9130 | 0.9403 | 0.8857 | 0.9153 | 0.9656 | 0.8260 |

| ResNet50 Variants | |||||||

| ResNet50 | No | 0.8948 | 0.8883 | 0.9013 | 0.8941 | 0.9563 | 0.7896 |

| NF_ResNet50 | No | 0.8974 | 0.8753 | 0.9195 | 0.8951 | 0.9540 | 0.7948 |

| NF_ResNet50 | Yes (2) | 0.9065 | 0.9091 | 0.9039 | 0.9067 | 0.9689 | 0.8130 |

| NF_ResNet50 | Yes (4) | 0.9130 | 0.9351 | 0.8909 | 0.9149 | 0.9660 | 0.8260 |

| ResNet101 variants | |||||||

| ResNet101 | No | 0.8792 | 0.8883 | 0.8701 | 0.8803 | 0.9546 | 0.7584 |

| NF_ResNet101 | No | 0.9000 | 0.9091 | 0.8909 | 0.9009 | 0.9524 | 0.8000 |

| NF_ResNet101 | Yes (2) | 0.9039 | 0.9039 | 0.9039 | 0.9039 | 0.9652 | 0.8078 |

| NF_ResNet101 | Yes (4) | 0.9130 | 0.9221 | 0.9039 | 0.9138 | 0.9693 | 0.8260 |

| Model | Hybrid Attention (r) | Accuracy | Sensitivity | Specificity | F1 | AUC | Kappa |

|---|---|---|---|---|---|---|---|

| ResNet26 variants | |||||||

| ResNet26 | No | 0.8355 | 0.8500 | 0.8212 | 0.8384 | 0.9003 | 0.6711 |

| NF_ResNet26 | No | 0.8621 | 0.8967 | 0.8278 | 0.8673 | 0.9357 | 0.7243 |

| NF_ResNet26 | Yes (2) | 0.8821 | 0.8733 | 0.8907 | 0.8813 | 0.9498 | 0.7641 |

| NF_ResNet26 | Yes (4) | 0.8771 | 0.8600 | 0.8940 | 0.8752 | 0.9415 | 0.7541 |

| ResNet50 variants | |||||||

| ResNet50 | No | 0.8488 | 0.8600 | 0.8377 | 0.8510 | 0.9276 | 0.6977 |

| NF_ResNet50 | No | 0.8854 | 0.9033 | 0.8675 | 0.8878 | 0.9359 | 0.7708 |

| NF_ResNet50 | Yes (2) | 0.8887 | 0.8633 | 0.9139 | 0.8860 | 0.9484 | 0.7774 |

| NF_ResNet50 | Yes (4) | 0.8870 | 0.8767 | 0.8974 | 0.8862 | 0.9426 | 0.7741 |

| ResNet101 variants | |||||||

| ResNet101 | No | 0.8721 | 0.8567 | 0.8874 | 0.8704 | 0.9395 | 0.7442 |

| NF_ResNet101 | No | 0.8787 | 0.8667 | 0.8907 | 0.8775 | 0.9471 | 0.7575 |

| NF_ResNet101 | Yes (2) | 0.8920 | 0.8933 | 0.8907 | 0.8925 | 0.9494 | 0.7841 |

| NF_ResNet101 | Yes (4) | 0.8854 | 0.8767 | 0.8940 | 0.8847 | 0.9483 | 0.7707 |

| Model | Hybrid Attention (r) | Accuracy | Sensitivity | Specificity | F1 | AUC | Kappa |

|---|---|---|---|---|---|---|---|

| ResNet26 variants | |||||||

| ResNet26 | No | 0.8976 | 0.8956 | 0.8994 | 0.8887 | 0.9605 | 0.7940 |

| NF_ResNet26 | No | 0.9002 | 0.8893 | 0.9094 | 0.8905 | 0.9612 | 0.7989 |

| NF_ResNet26 | Yes (2) | 0.9154 | 0.9153 | 0.9155 | 0.9080 | 0.9698 | 0.8297 |

| NF_ResNet26 | Yes (4) | 0.9110 | 0.9100 | 0.9118 | 0.9032 | 0.9684 | 0.8208 |

| ResNet50 variants | |||||||

| ResNet50 | No | 0.9003 | 0.8869 | 0.9116 | 0.8903 | 0.9601 | 0.7990 |

| NF_ResNet50 | No | 0.9072 | 0.8996 | 0.9135 | 0.8984 | 0.9653 | 0.8129 |

| NF_ResNet50 | Yes (2) | 0.9193 | 0.9182 | 0.9202 | 0.9122 | 0.9723 | 0.8375 |

| NF_ResNet50 | Yes (4) | 0.9155 | 0.9187 | 0.9128 | 0.9085 | 0.9709 | 0.8300 |

| ResNet101 variants | |||||||

| ResNet101 | No | 0.9019 | 0.8880 | 0.9135 | 0.8919 | 0.9623 | 0.8020 |

| NF_ResNet101 | No | 0.9104 | 0.9059 | 0.9142 | 0.9022 | 0.9673 | 0.8195 |

| NF_ResNet101 | Yes (2) | 0.9164 | 0.9158 | 0.9169 | 0.9090 | 0.9709 | 0.8317 |

| NF_ResNet101 | Yes (4) | 0.9164 | 0.9143 | 0.9181 | 0.9089 | 0.9708 | 0.8316 |

| Model | Dataset | Pretrained Weight | Accuracy | Sensitivity | Specificity | F1 | AUC |

|---|---|---|---|---|---|---|---|

| Ensemble using 5 CNNs [13] | BrG | Yes | 0.9050 | 0.8500 | 0.9600 | 0.8990 | 0.9650 |

| ResNet50 [13] | BrG | Yes | 0.8810 | 0.9530 | 0.8100 | 0.8890 | 0.9560 |

| ResNet101 [13] | BrG | Yes | 0.8800 | 0.9100 | 0.8500 | 0.8830 | 0.9490 |

| DenseNet121 [9] | LAG | Yes | 0.9381 | - | - | 0.93049 | - |

| HViTML [17] | LAG | Yes | 0.9300 | - | - | - | - |

| DeiT [6] | LAG | Yes | - | - | - | - | 0.8800 |

| DG2Net [18] | EyePACS-AIROGS-light-V2 | Yes | 0.9180 | 0.9183 | 0.9190 | - | - |

| MaXViT [18] | EyePACS-AIROGS-light-V2 | Yes | 0.9325 | 0.9324 | 0.9325 | - | - |

| Proposed Hybrid Attention based on NF_ResNet | |||||||

| NF_ResNet101 HA (r = 4) | LAG | No | 0.9394 | 0.9231 | 0.9444 | 0.9515 | 0.9837 |

| NF_ResNet50 HA (r = 4) | EyePACS | No | 0.9130 | 0.9351 | 0.8909 | 0.9149 | 0.9660 |

| NF_ResNet101 HA (r = 2) | BrG | No | 0.8920 | 0.8933 | 0.8907 | 0.8925 | 0.9494 |

| NF_ResNet50 HA (r = 2) | LAG, BrG, EyePACS | No | 0.9193 | 0.9182 | 0.9202 | 0.9122 | 0.9723 |

| Model | Hybrid Attention (r) | Accuracy | Sensitivity | Specificity | F1 | AUC | Kappa |

|---|---|---|---|---|---|---|---|

| ConvNext_Small | - | 0.8146 | 0.8004 | 0.8264 | 0.7975 | 0.8979 | 0.6265 |

| ConvNext_Base | - | 0.8163 | 0.7987 | 0.8310 | 0.7987 | 0.8989 | 0.6297 |

| DenseNet121 | - | 0.9098 | 0.9107 | 0.9090 | 0.9021 | 0.9680 | 0.8185 |

| EfficientNetB0 | - | 0.8079 | 0.7913 | 0.8218 | 0.7899 | 0.8941 | 0.6130 |

| EfficientNetB4 | - | 0.8398 | 0.8292 | 0.8486 | 0.8252 | 0.9212 | 0.6773 |

| EfficientNetB7 | - | 0.8248 | 0.8155 | 0.8325 | 0.8108 | 0.9007 | 0.6477 |

| ViT_Small | - | 0.7220 | 0.6869 | 0.7513 | 0.6928 | 0.8002 | 0.4389 |

| ViT_Base | - | 0.7373 | 0.7127 | 0.7579 | 0.7118 | 0.8131 | 0.4705 |

| Proposed Hybrid Attention based on NF_ResNet | |||||||

| NF_ResNet26 | Yes (2) | 0.9154 | 0.9153 | 0.9155 | 0.9080 | 0.9698 | 0.8297 |

| NF_ResNet26 | Yes (4) | 0.9110 | 0.9100 | 0.9118 | 0.9032 | 0.9684 | 0.8208 |

| NF_ResNet50 | Yes (2) | 0.9193 | 0.9182 | 0.9202 | 0.9122 | 0.9723 | 0.8375 |

| NF_ResNet50 | Yes (4) | 0.9155 | 0.9187 | 0.9128 | 0.9085 | 0.9709 | 0.8300 |

| NF_ResNet101 | Yes (2) | 0.9164 | 0.9158 | 0.9169 | 0.9090 | 0.9709 | 0.8317 |

| NF_ResNet101 | Yes (4) | 0.9164 | 0.9143 | 0.9181 | 0.9089 | 0.9708 | 0.8316 |
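
Every column in the tables above except AUC can be derived from a binary confusion matrix. The sketch below shows the standard definitions, assuming glaucoma is the positive class and F1 is computed for that class; the source states neither convention, and the counts are hypothetical. AUC is omitted because it needs per-image scores rather than thresholded predictions.

```python
def binary_metrics(tp, fn, fp, tn):
    """Return threshold-based metrics from confusion-matrix counts."""
    total = tp + fn + fp + tn
    accuracy = (tp + tn) / total
    sensitivity = tp / (tp + fn)  # recall on the positive class
    specificity = tn / (tn + fp)  # recall on the negative class
    f1 = 2 * tp / (2 * tp + fp + fn)  # positive-class F1 (an assumption)
    # Agreement expected by chance, from the true and predicted class marginals.
    p_expected = ((tp + fn) * (tp + fp) + (tn + fp) * (tn + fn)) / total**2
    kappa = (accuracy - p_expected) / (1 - p_expected)
    return {
        "accuracy": accuracy,
        "sensitivity": sensitivity,
        "specificity": specificity,
        "f1": f1,
        "kappa": kappa,
    }


# Hypothetical balanced test set: 100 glaucoma and 100 non-glaucoma images.
m = binary_metrics(tp=90, fn=10, fp=14, tn=86)
for name, value in m.items():
    print(f"{name}: {value:.4f}")
```

When the two true classes are equally frequent, chance agreement is exactly 0.5, so kappa reduces to 2 × accuracy − 1. Several rows above satisfy this relation (for example, accuracy 0.8805 with kappa 0.7610), which is consistent with, but does not prove, a balanced test split for those tables.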

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2026 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license.

Share and Cite

Angara, S.; Tran, L.; Kim, J. Glaucoma Classification Using a NFNet-Based Deep Learning Model with a Customized Hybrid Attention Mechanism. Diagnostics 2026, 16, 815. https://doi.org/10.3390/diagnostics16050815
