Diagnostic Accuracy and Stability of Multimodal Large Language Models for Hand Fracture Detection: A Multi-Run Evaluation on Plain Radiographs

Abstract

1. Introduction

2. Materials and Methods

2.1. Study Design and Objectives

- To quantify diagnostic accuracy across multiple independent inference runs

- To assess intra-model reliability using Fleiss’ kappa (κ)

- To characterize the relationship between accuracy and consistency across models

2.2. Dataset and Case Selection

- (1) availability of standardized radiographic projections, specifically comprising standard two-view protocols (anteroposterior/posteroanterior and oblique/lateral) for phalangeal and metacarpal fractures, and a dedicated scaphoid series (comprising at least three projections, preferably a four-view protocol) for scaphoid fractures [31];

- (2) absence of superimposed annotations (e.g., arrows, circles, or text overlays) that could serve as visual cues for the model, with the exception of standard anatomical side markers (L/R);

- (3) diagnostic-quality images in web-optimized formats (JPEG/PNG), with sufficient native resolution (e.g., on the order of ~600 × 600 pixels) to allow visual assessment of cortical margins, trabecular bone structure, and fracture lines;

- (4) availability of basic demographic metadata, including patient age and sex, as provided in the case description; and

- (5) representation of common, clinically typical fracture patterns without rare variants, syndromic associations, or atypical imaging presentations.

2.3. Models Under Evaluation

- GPT-5 Pro (OpenAI, San Francisco, CA, USA; proprietary)

- Gemini 2.5 Pro (Google DeepMind, Mountain View, CA, USA; proprietary)

- Claude Sonnet 4.5 (Anthropic, San Francisco, CA, USA; proprietary)

- Mistral Medium 3.1 (Mistral AI, Paris, France; included to represent a developer with an open-source heritage)

2.4. Prompting Strategy and Inference Protocol

- A specific diagnostic classification (stating either “No fracture” or “Yes” followed by the specific fracture type, e.g., “metacarpal fracture”)

- An estimated patient age

- An inferred patient sex

- Each run was conducted in a fresh session without conversational context

- No conversational memory, feedback, or adaptive prompting was permitted
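The three requested output elements lend themselves to automated parsing before scoring. The following is a minimal sketch only: the line-based `Diagnosis:` / `Age:` / `Sex:` layout, the `ParsedResponse` class, and the `parse_response` function are hypothetical and are not taken from the study protocol, which may have used a different response format.

```python
import re
from dataclasses import dataclass
from typing import Optional


@dataclass
class ParsedResponse:
    fracture_reported: bool
    fracture_type: Optional[str]
    estimated_age: Optional[int]
    inferred_sex: Optional[str]


def parse_response(text: str) -> ParsedResponse:
    """Parse a reply in the hypothetical 'Diagnosis/Age/Sex' line layout."""
    fields = {}
    for line in text.splitlines():
        if ":" in line:
            key, value = line.split(":", 1)
            fields[key.strip().lower()] = value.strip()

    diagnosis = fields.get("diagnosis", "")
    fracture_reported = diagnosis.lower().startswith("yes")
    fracture_type = None
    if fracture_reported:
        # Everything after the leading "Yes" is treated as the fracture type.
        remainder = diagnosis[3:].strip(" ,;:-.")
        fracture_type = remainder or None

    age_match = re.search(r"\d+", fields.get("age", ""))
    estimated_age = int(age_match.group()) if age_match else None
    inferred_sex = fields.get("sex", "").lower() or None
    return ParsedResponse(fracture_reported, fracture_type, estimated_age, inferred_sex)


# Illustrative reply, not an actual model output from the study.
example = "Diagnosis: Yes, metacarpal fracture\nAge: 34\nSex: male"
parsed = parse_response(example)
```

Because each run is stateless, a parser of this kind can be applied to every response independently, with unparseable replies flagged for manual review rather than silently scored.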

2.5. Outcome Definitions

- Diagnostic accuracy, defined as the mean proportion of correct classifications across five runs

- Intra-model reliability, quantified using Fleiss’ kappa (κ) to assess agreement across repeated runs

- Case-level agreement profiles, describing how often a case was correctly classified across five runs (0/5 to 5/5 correct)

- Descriptive subgroup performance, stratified by fracture localization
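The first and third outcome definitions can be illustrated with a short sketch. It assumes each case-run pair has already been scored as correct (1) or incorrect (0); the matrix below is toy data chosen for illustration, not study data, and the variable names are hypothetical.

```python
from collections import Counter

# Toy data (not from the study): rows are cases, columns are five runs;
# 1 = correct classification, 0 = incorrect.
correct = [
    [1, 1, 1, 1, 1],
    [0, 0, 0, 0, 0],
    [1, 0, 1, 1, 0],
    [0, 1, 0, 0, 0],
]

n_cases = len(correct)
n_runs = len(correct[0])

# Accuracy of each run: share of cases classified correctly in that run.
run_accuracy = [sum(case[r] for case in correct) / n_cases for r in range(n_runs)]

# Diagnostic accuracy as defined above: mean of the run-wise proportions.
mean_accuracy = sum(run_accuracy) / n_runs

# Case-level agreement profile: number of cases with k of 5 correct runs.
counts = Counter(sum(case) for case in correct)
profile = {f"{k}/{n_runs}": counts.get(k, 0) for k in range(n_runs + 1)}
```

With equal case counts in every run, the mean of run-wise accuracies equals the pooled share of correct case-run pairs, which is why the two quantities can be cross-checked against each other.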

2.6. Statistical Analysis

2.7. Visualization Strategy

- Run-wise accuracy dot plots to assess diagnostic stability

- Accuracy–consistency dissociation matrix to relate correctness to reliability

- Heatmaps summarizing descriptive performance by fracture subtype

- Stacked bar charts illustrating case-level agreement profiles

2.8. Exploratory Demographic Analyses

3. Results

3.1. Overall Diagnostic Accuracy and Stability

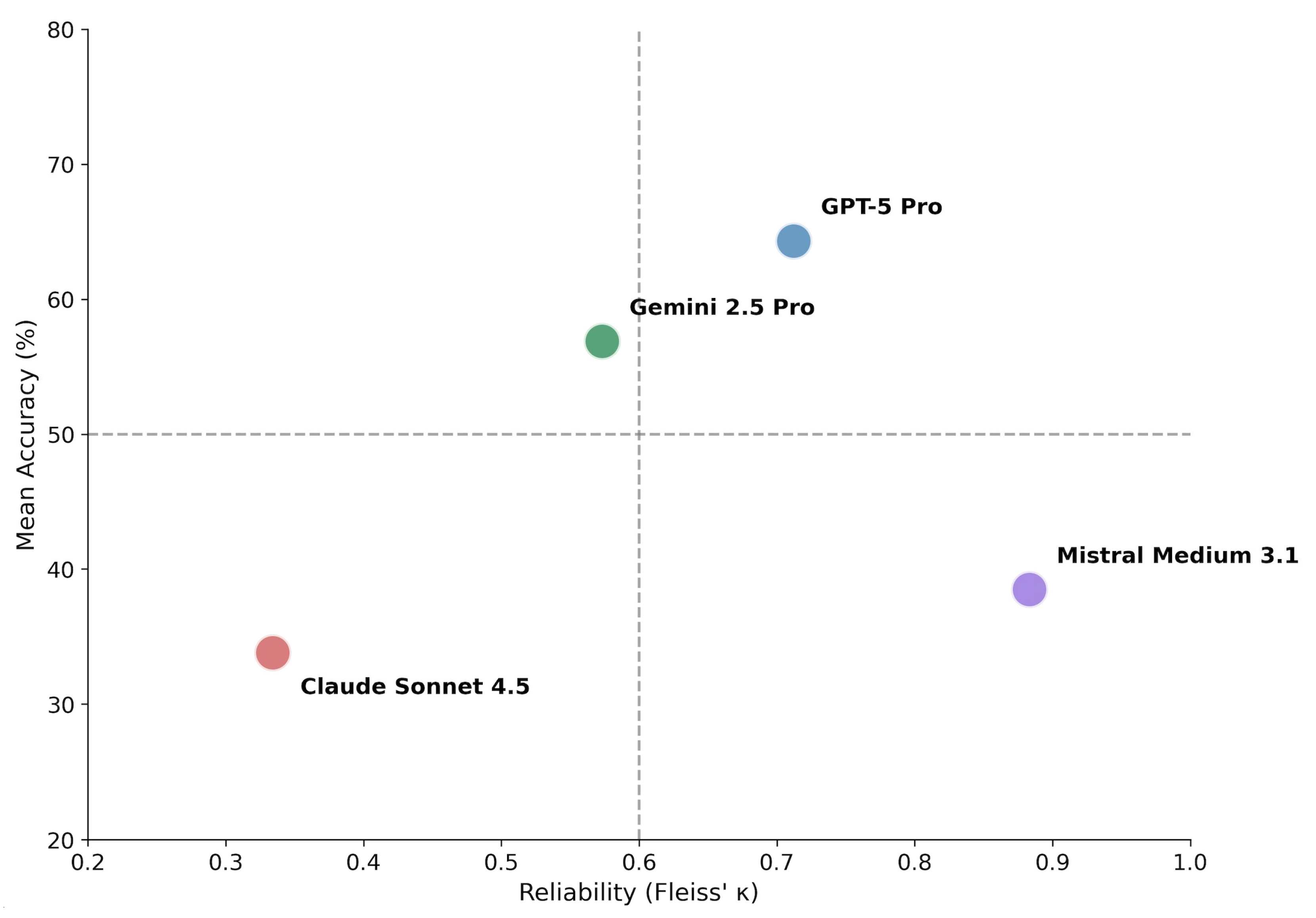

3.2. Accuracy–Consistency Dissociation

3.3. Descriptive Subgroup Performance

3.4. Case-Level Agreement Profiles

3.5. Exploratory Demographic Attribute Inference

4. Discussion

4.1. Accuracy–Consistency Dissociation as a Core Behavioral Property

4.2. Diagnostic Stability Beyond Single-Run Accuracy

4.3. Anatomical Complexity and Task-Specific Limitations

4.4. Demographic Attribute Inference and Diagnostic Performance

4.5. Limitations and Future Directions

1. The radiographic cases were derived from heterogeneous sources [30] and were not acquired under standardized imaging protocols. Differences in imaging devices, acquisition parameters, projection angles, and post-processing settings reflect real-world variability but limit strict control over image quality and comparability. Consequently, model performance may be influenced by uncontrolled technical factors inherent to the source material.

2. The study design exclusively included fracture-positive cases. While this approach was intentionally chosen to enable focused analysis of diagnostic behavior, consistency, and error patterns under conditions of confirmed pathology, it necessarily limits the scope of inference. In particular, specificity, false-positive rates, and population-level screening performance could not be assessed. Future investigations should therefore incorporate substantially larger and balanced datasets that include both fracture-positive and fracture-negative cases.

3. The sample size, particularly for anatomically complex subgroups such as scaphoid fractures, was limited. As a result, subgroup analyses were descriptive in nature and should not be interpreted as definitive performance estimates. Larger, pathology-stratified cohorts will be required to robustly assess model behavior across less frequent but clinically critical fracture types.

4. This study evaluated MLLMs in isolation and did not include direct comparisons with human experts or with specialized CNN-based fracture detection systems. Benchmarking against experienced radiologists and task-specific CNN tools represents an important next step to contextualize the observed MLLM behavior within established clinical workflows. Comparative evaluations involving human experts, CNN-based systems, and MLLMs would allow assessment of complementary strengths, failure modes, and potential hybrid decision-support strategies.

5. The present analysis focused on diagnostic accuracy and intra-model consistency across repeated inference runs. While these metrics capture important aspects of reliability, they do not fully reflect clinical utility. Future benchmarking frameworks should incorporate additional dimensions, including time-to-decision, workflow integration, cognitive load reduction, and robustness under realistic deployment constraints. In particular, comparisons between human experts, CNN-based systems, and MLLMs should consider diagnostic performance in relation to time expenditure and operational efficiency rather than accuracy alone.

6. Generative models introduce unique sources of variability related to stochastic decoding processes. Parameters such as temperature, sampling strategies, and system-level nondeterminism may substantially influence output stability. In many real-world deployments, especially web-based interfaces, such parameters are not fully transparent or controllable by the user. This limits reproducibility and complicates fair benchmarking across systems. Future studies should therefore prioritize controlled evaluation environments that allow systematic regulation of stochastic inference settings.

7. Binary fracture detection alone captures only a limited aspect of clinical decision-making. From a clinical perspective, additional descriptive elements, including fracture classification, displacement, extent, and contextual interpretation, would likely be required before such systems could be considered for meaningful decision support.

8. Model performance represents a temporal snapshot tied to specific model versions and deployment configurations. Given the rapid evolution of multimodal architectures, frequent updates, and opaque system changes, longitudinal assessments will be necessary to determine whether observed behavioral patterns remain stable over time or shift with subsequent model iterations.

9. In addition, evolving model-level safety and moderation policies introduce an external source of variability that may affect the reproducibility of diagnostic evaluations across model versions and time.

4.6. Practical Considerations: Terms of Use, Cost Structure, and Workflow Constraints

5. Conclusions

- Dissociation of Accuracy and Consistency: High intra-model agreement must not be equated with diagnostic correctness, as reproducibility may conceal consistently erroneous reasoning or unstable probabilistic behavior.

- Task-Dependent Performance: Model performance varied substantially by anatomical complexity, with relatively robust detection of phalangeal fractures but persistent limitations in complex carpal anatomy such as scaphoid fractures.

- Demographic Independence: Diagnostic performance was independent of implicit age or sex inference, indicating that fracture detection was not driven by incidental demographic visual cues.

- Reliability-Aware Evaluation: Future studies should routinely incorporate repeated-run analyses and reliability metrics alongside single-run accuracy to distinguish robust reasoning from stable or unstable error patterns.

- Dataset Expansion: Larger and balanced datasets including fracture-negative cases are required to assess specificity and population-level screening performance.

- Clinical Benchmarking: Systematic comparisons with task-specific CNN-based systems and human experts, ideally using controlled API-based evaluation environments, are necessary to contextualize MLLM behavior within real-world clinical workflows.

- Longitudinal Stability Monitoring: Given the rapid evolution of multimodal architectures, longitudinal assessments are necessary to determine if diagnostic behavior remains stable over subsequent model iterations and changing safety policies.

Supplementary Materials

Author Contributions

Funding

Institutional Review Board Statement

Informed Consent Statement

Data Availability Statement

Acknowledgments

Conflicts of Interest

Abbreviations

| Abbreviation | Definition |
|---|---|
| AI | Artificial Intelligence |
| API | Application Programming Interface |
| CNN | Convolutional Neural Network |
| κ | Fleiss’ kappa (agreement coefficient for multiple raters; here, repeated runs of the same model) |
| LLM | Large Language Model |
| MAE | Mean Absolute Error |
| MLLM | Multimodal Large Language Model |
| NLP | Natural Language Processing |
| SD | Standard Deviation |

References

1. Cheah, A.E.; Yao, J. Hand Fractures: Indications, the Tried and True and New Innovations. J. Hand Surg. Am. 2016, 41, 712–722.

2. Vasdeki, D.; Barmpitsioti, A.; De Leo, A.; Dailiana, Z. How to Prevent Hand Injuries—Review of Epidemiological Data is the First Step in Health Care Management. Injury 2024, 55, 111327.

3. Karl, J.W.; Olson, P.R.; Rosenwasser, M.P. The Epidemiology of Upper Extremity Fractures in the United States, 2009. J. Orthop. Trauma 2015, 29, e242–e244.

4. Flores, D.V.; Gorbachova, T.; Mistry, M.R.; Gammon, B.; Rakhra, K.S. From Diagnosis to Treatment: Challenges in Scaphoid Imaging. Radiographics 2026, 46, e250050.

5. Krastman, P.; Mathijssen, N.M.; Bierma-Zeinstra, S.M.A.; Kraan, G.; Runhaar, J. Diagnostic accuracy of history taking, physical examination and imaging for phalangeal, metacarpal and carpal fractures: A systematic review update. BMC Musculoskelet. Disord. 2020, 21, 12.

6. Torabi, M.; Lenchik, L.; Beaman, F.D.; Wessell, D.E.; Bussell, J.K.; Cassidy, R.C.; Czuczman, G.J.; Demertzis, J.L.; Khurana, B.; Klitzke, A.; et al. ACR Appropriateness Criteria® Acute Hand and Wrist Trauma. J. Am. Coll. Radiol. 2019, 16, S7–S17.

7. Amrami, K.K.; Frick, M.A.; Matsumoto, J.M. Imaging for Acute and Chronic Scaphoid Fractures. Hand Clin. 2019, 35, 241–257.

8. Catalano, L.W., 3rd; Minhas, S.V.; Kirby, D.J. Evaluation and Management of Carpal Fractures Other Than the Scaphoid. J. Am. Acad. Orthop. Surg. 2020, 28, e651–e661.

9. Pinto, A.; Berritto, D.; Russo, A.; Riccitiello, F.; Caruso, M.; Belfiore, M.P.; Papapietro, V.R.; Carotti, M.; Pinto, F.; Giovagnoni, A.; et al. Traumatic fractures in adults: Missed diagnosis on plain radiographs in the Emergency Department. Acta Biomed. 2018, 89, 111–123.

10. Zhang, A.; Zhao, E.; Wang, R.; Zhang, X.; Wang, J.; Chen, E. Multimodal large language models for medical image diagnosis: Challenges and opportunities. J. Biomed. Inform. 2025, 169, 104895.

11. Nam, Y.; Kim, D.Y.; Kyung, S.; Seo, J.; Song, J.M.; Kwon, J.; Kim, J.; Jo, W.; Park, H.; Sung, J.; et al. Multimodal Large Language Models in Medical Imaging: Current State and Future Directions. Korean J. Radiol. 2025, 26, 900–923.

12. Bradshaw, T.J.; Tie, X.; Warner, J.; Hu, J.; Li, Q.; Li, X. Large Language Models and Large Multimodal Models in Medical Imaging: A Primer for Physicians. J. Nucl. Med. 2025, 66, 173–182.

13. Fang, M.; Wang, Z.; Pan, S.; Feng, X.; Zhao, Y.; Hou, D.; Wu, L.; Xie, X.; Zhang, X.Y.; Tian, J.; et al. Large models in medical imaging: Advances and prospects. Chin. Med. J. 2025, 138, 1647–1664.

14. Han, T.; Adams, L.C.; Nebelung, S.; Kather, J.N.; Bressem, K.K.; Truhn, D. Multimodal large language models are generalist medical image interpreters. medRxiv 2023.

15. Urooj, B.; Fayaz, M.; Ali, S.; Dang, L.M.; Kim, K.W. Large Language Models in Medical Image Analysis: A Systematic Survey and Future Directions. Bioengineering 2025, 12, 818.

16. Reith, T.P.; D’Alessandro, D.M.; D’Alessandro, M.P. Capability of multimodal large language models to interpret pediatric radiological images. Pediatr. Radiol. 2024, 54, 1729–1737.

17. Gosai, A.; Kavishwar, A.; McNamara, S.L.; Samineni, S.; Umeton, R.; Chowdhury, A.; Lotter, W. Beyond diagnosis: Evaluating multimodal LLMs for pathology localization in chest radiographs. arXiv 2025.

18. da Silva, N.B.; Harrison, J.; Minetto, R.; Delgado, M.R.; Nassu, B.T.; Silva, T.H. Multimodal LLMs see sentiment. arXiv 2025.

19. Lee, J.; Park, S.; Shin, J.; Cho, B. Analyzing evaluation methods for large language models in the medical field: A scoping review. BMC Med. Inform. Decis. Mak. 2024, 24, 366.

20. Chang, Y.; Wang, X.; Wang, J.; Wu, Y.; Yang, L.; Zhu, K.; Chen, H.; Yi, X.; Wang, C.; Wang, Y.; et al. A survey on evaluation of large language models. ACM Trans. Intell. Syst. Technol. 2024, 15, 39:1–39:45.

21. Shool, S.; Adimi, S.; Saboori Amleshi, R.; Bitaraf, E.; Golpira, R.; Tara, M. A systematic review of large language model (LLM) evaluations in clinical medicine. BMC Med. Inform. Decis. Mak. 2025, 25, 117.

22. Lieberum, J.L.; Toews, M.; Metzendorf, M.I.; Heilmeyer, F.; Siemens, W.; Haverkamp, C.; Böhringer, D.; Meerpohl, J.J.; Eisele-Metzger, A. Large language models for conducting systematic reviews: On the rise, but not yet ready for use-a scoping review. J. Clin. Epidemiol. 2025, 181, 111746.

23. Shen, Y.; Xu, Y.; Ma, J.; Rui, W.; Zhao, C.; Heacock, L.; Huang, C. Multi-modal large language models in radiology: Principles, applications, and potential. Abdom. Radiol. 2025, 50, 2745–2757.

24. Yi, Z.; Xiao, T.; Albert, M.V. A survey on multimodal large language models in radiology for report generation and visual question answering. Information 2025, 16, 136.

25. Nakaura, T.; Ito, R.; Ueda, D.; Nozaki, T.; Fushimi, Y.; Matsui, Y.; Yanagawa, M.; Yamada, A.; Tsuboyama, T.; Fujima, N.; et al. The impact of large language models on radiology: A guide for radiologists on the latest innovations in AI. Jpn. J. Radiol. 2024, 42, 685–696.

26. Park, J.S.; Hwang, J.; Kim, P.H.; Shim, W.H.; Seo, M.J.; Kim, D.; Shin, J.I.; Kim, I.H.; Heo, H.; Suh, C.H. Accuracy of Large Language Models in Detecting Cases Requiring Immediate Reporting in Pediatric Radiology: A Feasibility Study Using Publicly Available Clinical Vignettes. Korean J. Radiol. 2025, 26, 855–866.

27. Lacaita, P.G.; Galijasevic, M.; Swoboda, M.; Gruber, L.; Scharll, Y.; Barbieri, F.; Widmann, G.; Feuchtner, G.M. The Accuracy of ChatGPT-4o in Interpreting Chest and Abdominal X-Ray Images. J. Pers. Med. 2025, 15, 194.

28. Sarangi, P.K.; Datta, S.; Swarup, M.S.; Panda, S.; Nayak, D.S.K.; Malik, A.; Datta, A.; Mondal, H. Radiologic Decision-Making for Imaging in Pulmonary Embolism: Accuracy and Reliability of Large Language Models-Bing, Claude, ChatGPT, and Perplexity. Indian J. Radiol. Imaging 2024, 34, 653–660.

29. Mongan, J.; Moy, L.; Kahn, C.E., Jr. Checklist for Artificial Intelligence in Medical Imaging (CLAIM): A Guide for Authors and Reviewers. Radiol. Artif. Intell. 2020, 2, e200029.

30. Radiopaedia.org. License. Available online: https://radiopaedia.org/licence (accessed on 25 December 2025).

31. Han, S.M.; Cao, L.; Yang, C.; Yang, H.-H.; Wen, J.-X.; Guo, Z.; Wu, H.-Z.; Wu, W.-J.; Gao, B.-L. Value of the 45-degree reverse oblique view of the carpal palm in diagnosing scaphoid waist fractures. Injury 2022, 53, 1049–1056.

32. Schober, P.; Mascha, E.J.; Vetter, T.R. Statistics from A (Agreement) to Z (z Score): A Guide to Interpreting Common Measures of Association, Agreement, Diagnostic Accuracy, Effect Size, Heterogeneity, and Reliability in Medical Research. Anesth. Analg. 2021, 133, 1633–1641.

33. Cohen, J. A coefficient of agreement for nominal scales. Educ. Psychol. Meas. 1960, 20, 37–46.

34. Landis, J.R.; Koch, G.G. The measurement of observer agreement for categorical data. Biometrics 1977, 33, 159–174.

35. Aydin, C.; Duygu, O.B.; Karakas, A.B.; Er, E.; Gokmen, G.; Ozturk, A.M.; Govsa, F. Clinical Failure of General-Purpose AI in Photographic Scoliosis Assessment: A Diagnostic Accuracy Study. Medicina 2025, 61, 1342.

36. Das, A.B.; Sakib, S.K.; Ahmed, S. Trustworthy medical imaging with large language models: A study of hallucinations across modalities. arXiv 2025.

37. Ahmed, S.; Sakib, S.K.; Das, A.B. Can large language models challenge CNNs in medical image analysis? arXiv 2025.

| Model | Run 1 (%) | Run 2 (%) | Run 3 (%) | Run 4 (%) | Run 5 (%) | Mean (Range) (%) |
|---|---|---|---|---|---|---|
| GPT-5 Pro | 61.5 | 69.2 | 66.2 | 67.7 | 56.9 | 64.3 (56.9–69.2) |
| Gemini 2.5 Pro | 61.5 | 50.8 | 56.9 | 60.0 | 55.4 | 56.9 (50.8–61.5) |
| Claude Sonnet 4.5 | 12.3 | 55.4 | 35.4 | 12.3 | 53.8 | 33.8 (12.3–55.4) |
| Mistral Medium 3.1 | 38.5 | 41.5 | 36.9 | 36.9 | 38.5 | 38.5 (36.9–41.5) |
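The summary column of the run-wise accuracy table can be recomputed from the five run values. The sketch below is an illustrative check, not the authors' analysis code; the total of 65 cases is an assumption inferred from the subgroup sizes reported in the tables (30 + 30 + 5), and the helper names are hypothetical.

```python
N_CASES = 65  # assumption: 30 finger + 30 metacarpal + 5 scaphoid cases

# Run-wise accuracies (%) as reported in the table.
runs = {
    "GPT-5 Pro": [61.5, 69.2, 66.2, 67.7, 56.9],
    "Gemini 2.5 Pro": [61.5, 50.8, 56.9, 60.0, 55.4],
    "Claude Sonnet 4.5": [12.3, 55.4, 35.4, 12.3, 53.8],
    "Mistral Medium 3.1": [38.5, 41.5, 36.9, 36.9, 38.5],
}


def summarize(values):
    """Return (mean rounded to one decimal, minimum, maximum)."""
    mean = sum(values) / len(values)
    return round(mean, 1), min(values), max(values)


def implied_correct_cases(accuracy_pct, n_cases=N_CASES):
    """Number of correct cases implied by a run-wise percentage."""
    return round(accuracy_pct / 100 * n_cases)


summary = {model: summarize(values) for model, values in runs.items()}
```

Under the 65-case assumption, a run accuracy of 61.5% corresponds to 40 correct cases and 12.3% to 8 correct cases, which gives a sense of how many individual cases separate the best and worst runs of a model.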

| Model | Fleiss’ κ | Interpretation | Accuracy Range (%) |
|---|---|---|---|
| GPT-5 Pro | 0.712 | Substantial | 56.9–69.2 |
| Gemini 2.5 Pro | 0.573 | Moderate | 50.8–61.5 |
| Claude Sonnet 4.5 | 0.334 | Fair | 12.3–55.4 |
| Mistral Medium 3.1 | 0.883 | Almost perfect | 36.9–41.5 |
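The interpretation labels in this table match the conventional agreement bands of Landis and Koch [34]. A minimal sketch of that mapping follows; the function name `landis_koch` is hypothetical, and the band boundaries are the commonly cited ones rather than values quoted from the study text.

```python
def landis_koch(kappa: float) -> str:
    """Map a kappa value to the conventional Landis-Koch agreement label."""
    if not -1.0 <= kappa <= 1.0:
        raise ValueError("kappa must lie in [-1, 1]")
    if kappa < 0:
        return "Poor"
    # Upper bound of each band, checked in ascending order.
    bands = [
        (0.20, "Slight"),
        (0.40, "Fair"),
        (0.60, "Moderate"),
        (0.80, "Substantial"),
        (1.00, "Almost perfect"),
    ]
    for upper, label in bands:
        if kappa <= upper:
            return label
    return "Almost perfect"


reported = {
    "GPT-5 Pro": 0.712,
    "Gemini 2.5 Pro": 0.573,
    "Claude Sonnet 4.5": 0.334,
    "Mistral Medium 3.1": 0.883,
}
labels = {model: landis_koch(k) for model, k in reported.items()}
```

The label describes agreement only. Mistral Medium 3.1 reaches the highest band while its accuracy stays below 42% in every run, which is the dissociation the table is meant to expose.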

| Model | Finger (n = 30), % | Metacarpal (n = 30), % | Scaphoid (n = 5), % |
|---|---|---|---|
| GPT-5 Pro | 83.3 | 44.7 | 68.0 |
| Gemini 2.5 Pro | 66.0 | 46.7 | 64.0 |
| Claude Sonnet 4.5 | 40.7 | 32.7 | 0.0 |
| Mistral Medium 3.1 | 52.0 | 30.7 | 4.0 |
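Because the three subgroups differ in size, the overall accuracy is a case-weighted average of the subgroup values rather than their simple mean. The sketch below illustrates this with the reported figures; it assumes the subgroup percentages are pooled over all five runs, and `pooled_accuracy` is a hypothetical helper.

```python
# Subgroup sizes and accuracies (%) as reported in the table.
subgroup_n = {"Finger": 30, "Metacarpal": 30, "Scaphoid": 5}
subgroup_acc = {
    "GPT-5 Pro": {"Finger": 83.3, "Metacarpal": 44.7, "Scaphoid": 68.0},
    "Gemini 2.5 Pro": {"Finger": 66.0, "Metacarpal": 46.7, "Scaphoid": 64.0},
    "Claude Sonnet 4.5": {"Finger": 40.7, "Metacarpal": 32.7, "Scaphoid": 0.0},
    "Mistral Medium 3.1": {"Finger": 52.0, "Metacarpal": 30.7, "Scaphoid": 4.0},
}

# Mean accuracies (%) reported in the run-wise accuracy table.
reported_mean = {
    "GPT-5 Pro": 64.3,
    "Gemini 2.5 Pro": 56.9,
    "Claude Sonnet 4.5": 33.8,
    "Mistral Medium 3.1": 38.5,
}


def pooled_accuracy(acc_by_group, sizes=subgroup_n):
    """Case-weighted average of subgroup accuracies."""
    total = sum(sizes.values())
    return sum(acc_by_group[g] * n for g, n in sizes.items()) / total


pooled = {model: pooled_accuracy(acc) for model, acc in subgroup_acc.items()}
```

The pooled values agree with the reported means to within about 0.1 percentage points, the residual being attributable to rounding of the subgroup figures. The scaphoid subgroup contributes only 5 of 65 cases, so even a 0.0% result there shifts the overall mean by a few points at most.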

| Model | 0/5 | 1/5 | 2/5 | 3/5 | 4/5 | 5/5 |
|---|---|---|---|---|---|---|
| GPT-5 Pro | 13 | 7 | 5 | 2 | 4 | 34 |
| Gemini 2.5 Pro | 15 | 6 | 6 | 8 | 7 | 23 |
| Claude Sonnet 4.5 | 19 | 21 | 2 | 11 | 8 | 4 |
| Mistral Medium 3.1 | 34 | 7 | 0 | 0 | 2 | 22 |
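The case-level agreement profile contains enough information to compute Fleiss' kappa when each run is treated as a rater assigning one of two labels (correct or incorrect). The sketch below makes exactly that assumption, which the outline does not state explicitly; under it, the computed values match the reported κ values to three decimals. The function name `fleiss_kappa_binary` is hypothetical.

```python
def fleiss_kappa_binary(profile, n_runs=5):
    """Fleiss' kappa for two categories from a k-of-n agreement profile.

    profile[k] is the number of cases with exactly k correct runs.
    Undefined (division by zero) if every run gives the same label everywhere.
    """
    n_cases = sum(profile)
    pairs = n_runs * (n_runs - 1)

    # Observed agreement: mean share of agreeing run pairs per case.
    agreeing = sum(
        count * (k * (k - 1) + (n_runs - k) * (n_runs - k - 1))
        for k, count in enumerate(profile)
    )
    p_obs = agreeing / (n_cases * pairs)

    # Chance agreement from the overall share of correct labels.
    p_correct = sum(k * count for k, count in enumerate(profile)) / (n_cases * n_runs)
    p_exp = p_correct**2 + (1 - p_correct) ** 2

    return (p_obs - p_exp) / (1 - p_exp)


# Profiles (0/5 ... 5/5) as reported in the table.
profiles = {
    "GPT-5 Pro": [13, 7, 5, 2, 4, 34],
    "Gemini 2.5 Pro": [15, 6, 6, 8, 7, 23],
    "Claude Sonnet 4.5": [19, 21, 2, 11, 8, 4],
    "Mistral Medium 3.1": [34, 7, 0, 0, 2, 22],
}
kappas = {model: fleiss_kappa_binary(p) for model, p in profiles.items()}
```

The Mistral Medium 3.1 profile shows why agreement and accuracy diverge: 56 of 65 cases fall in the 0/5 or 5/5 bins, so the runs almost always agree with each other, yet 34 of those cases are unanimously wrong.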

| Model | Age MAE (Years) | Sex Accuracy (%) |
|---|---|---|
| GPT-5 Pro | 12.98 ± 9.81 | 62.8 |
| Gemini 2.5 Pro | 16.00 ± 12.02 | 48.9 |
| Claude Sonnet 4.5 | 18.28 ± 12.98 | 49.2 |
| Mistral Medium 3.1 | 17.72 ± 14.07 | 49.2 |

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2026 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license.


Güler, I.; Grieb, G.; Kraus, A.; Lautenbach, M.; Stelling, H. Diagnostic Accuracy and Stability of Multimodal Large Language Models for Hand Fracture Detection: A Multi-Run Evaluation on Plain Radiographs. Diagnostics 2026, 16, 424. https://doi.org/10.3390/diagnostics16030424
