Will AI Replace Physicians in the Near Future? AI Adoption Barriers in Medicine

Abstract

1. Introduction

2. Methods

2.1. Eligibility Criteria

2.2. Study Selection and Synthesis

2.3. Data Sources and Their Presentation

3. Phenomena Supporting the Potential Replacement of Humans by AI in Medicine

3.1. Empirical Evidence That AI Models Encode Latent, Clinically Relevant Features Beyond Human Annotations

3.2. When Convolutional Neural Networks May Outperform the Human Eye

3.3. Technical Performance and Real-World Validation

4. Barriers to the Full Replacement of Humans by AI Systems in Medical Practice

4.1. Regulatory and Legal Issues as Barriers for AI in Medical Practice

4.2. Human–Machine Interaction Gaps

4.3. Statistical and Out-of-Distribution Generalization Barriers in Medical AI

4.4. Embodied Cognition as a Structural Boundary to Physician Replacement

5. Machine Learning in Radiology—Limitations Implied by Statistics

6. Reliability and Trust in AI-Based Diagnosis and the Role of Physicians

6.1. Hallucinations and the Necessity of Physician Oversight

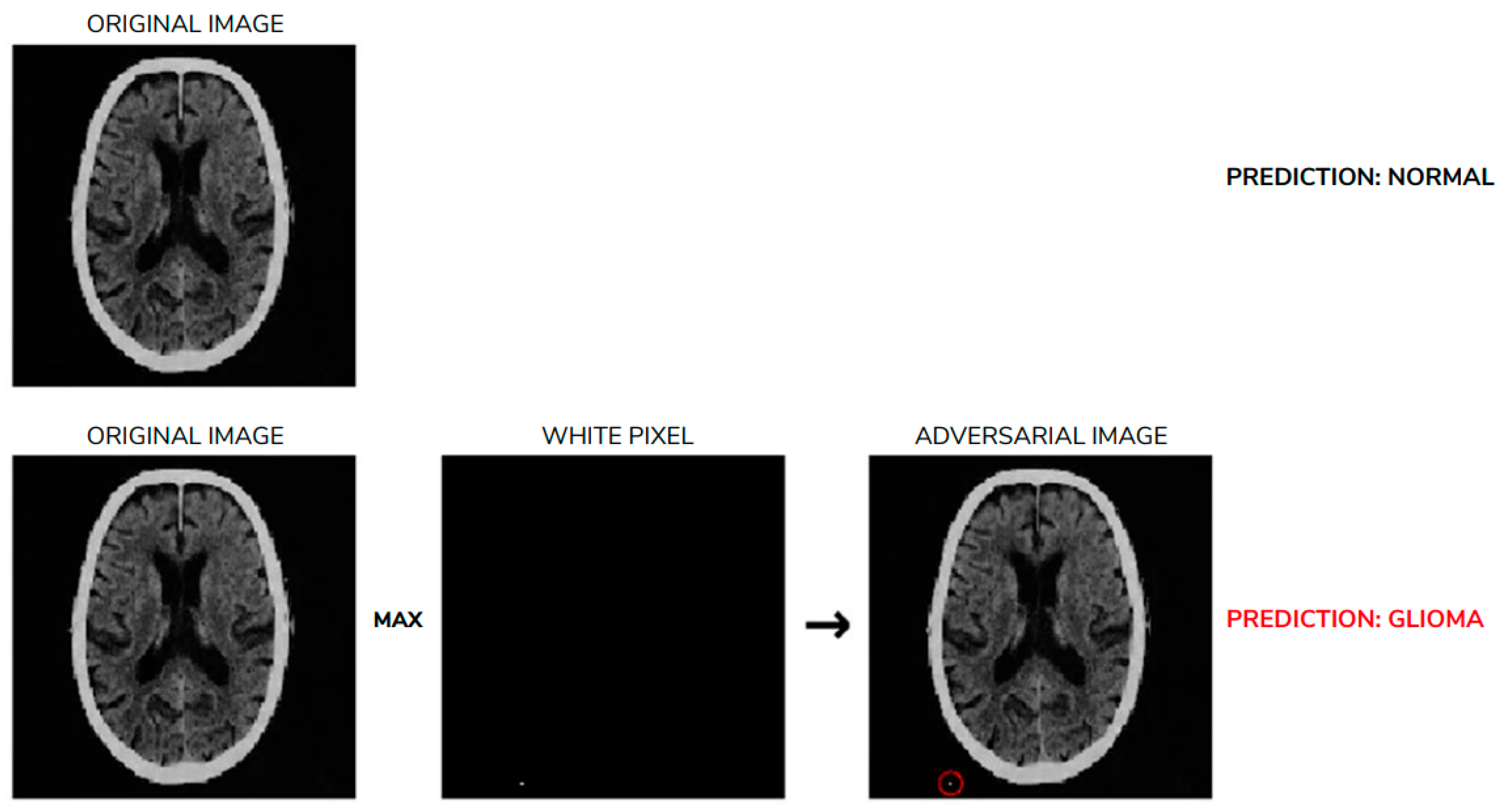

6.2. Heightened Sensitivity to Perturbations in AI-Generated Diagnosis

6.3. Disappointed Hopes

| Clinical Domain | AI Task | Representative System/Study (as cited) | Dataset/Setting | Key Performance Metrics | Interpretation in Context of Physician Replacement |
|---|---|---|---|---|---|
| Radiology (CT) | Intracranial hemorrhage detection | Titano et al., 2018 [2] | Multicenter CT scans | Sensitivity ≈ 0.90–1.00; AUC > 0.90 | High sensitivity supports triage and prioritization; false positives and out-of-distribution risk necessitate radiologist oversight |
| Radiology (CT) | Pulmonary embolism detection | Ardila et al., 2019; FDA-cleared triage tools [8] | CT pulmonary angiography | Sensitivity up to ~0.95; AUC ≈ 0.90–0.94 | Effective for workflow acceleration; not validated for autonomous diagnosis |
| Mammography | Breast cancer detection | Rodríguez-Ruiz et al., 2019 [3]; Salim et al., 2020 [4] | Screening mammography | AUC ≈ 0.94–0.97; sensitivity comparable to expert radiologists | Demonstrates near-expert performance in a narrowly defined task, supporting second-reader or triage use |
| Radiology (X-ray) | Pneumonia and lung pathology detection | Zech et al., 2018 [30] | Single- vs. multi-institution datasets | AUC > 0.90 (internal validation); marked performance drop on external data | Illustrates limited generalization and dependence on training distribution |
| MRI reconstruction | Accelerated image reconstruction | fastMRI challenge (Knoll et al., 2020; Muckley et al., 2021) [96,97] | Multivendor MRI datasets | SSIM often > 0.95; localized hallucinations reported | High quantitative image quality; hallucinated or missing structures preclude unsupervised clinical use |
| Large language models | Medical examinations and diagnostic reasoning | Kung et al., 2023 [23] | USMLE-style examinations | Accuracy > 0.85 on MCQs; misdiagnosis rate ~10–15% in case analyses | Strong associative reasoning; error rate incompatible with autonomous clinical responsibility |
| Colonoscopy | Polyp and adenoma detection | Budzyń et al., 2025 [105] | Multicenter real-world endoscopy | Improved detection during AI assistance; reduced vigilance after withdrawal (20%) | Benefit with documented risk of physician deskilling |
| Sepsis prediction | Clinical deterioration prediction | Spittal et al., 2025 [104] | EHR-based predictive models | AUROC moderate; limited clinical benefit | Illustrates gap between predictive performance and outcome improvement |
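
The interpretation column above notes that false positives keep a radiologist in the loop even when sensitivity is high. A minimal sketch of why, using Bayes' rule: the positive predictive value (PPV) of a triage flag depends strongly on disease prevalence. The sensitivity of 0.95 lies within the range reported in the table; the specificity of 0.90 and the three prevalence values are illustrative assumptions, not figures from the cited studies, and the function name is hypothetical.

```python
def positive_predictive_value(sensitivity, specificity, prevalence):
    """Return P(disease | positive flag) via Bayes' rule.

    All three arguments are probabilities in [0, 1].
    """
    for name, value in (("sensitivity", sensitivity),
                        ("specificity", specificity),
                        ("prevalence", prevalence)):
        if not 0.0 <= value <= 1.0:
            raise ValueError(f"{name} must lie in [0, 1], got {value}")

    true_positive_mass = sensitivity * prevalence
    false_positive_mass = (1.0 - specificity) * (1.0 - prevalence)
    total_flagged = true_positive_mass + false_positive_mass
    if total_flagged == 0.0:
        raise ValueError("no case would be flagged; PPV is undefined")
    return true_positive_mass / total_flagged


if __name__ == "__main__":
    # Assumed operating point: sensitivity from the table's range,
    # specificity and prevalence values chosen only for illustration.
    for prevalence in (0.01, 0.05, 0.20):
        ppv = positive_predictive_value(0.95, 0.90, prevalence)
        print(f"prevalence={prevalence:.2f}  PPV={ppv:.3f}")
```

Under these assumed values, most flags raised at low prevalence would be false alarms, which is consistent with the triage role (rather than autonomous diagnosis) described in the table.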

| Imaging Modality | Clinical Task | AI Approach (as Reported) | Representative Performance Metrics * | Clinical Interpretation |
|---|---|---|---|---|
| MRI | Brain tumor detection and classification | Convolutional neural networks (CNNs) | AUC ≈ 0.88–0.97; F1-score ≈ 0.88–0.97 | Near-expert performance in well-defined neuro-oncologic tasks under controlled conditions |
| MRI | Accelerated image reconstruction | Deep learning reconstruction networks | SSIM > 0.95; PSNR improvement reported | High quantitative image quality, with a documented risk of hallucinated or missing structures |
| CT | Lung cancer screening and nodule detection | 3D CNN-based detection models | AUC ≈ 0.90–0.94 | Effective for detection and triage; performance sensitive to dataset composition and domain shift |
| CT | Intracranial hemorrhage detection | CNN-based classification and triage systems | Sensitivity ≈ 0.90–1.00; AUC > 0.90 | High sensitivity supports emergency triage but does not eliminate false positives |
| X-ray (Chest) | Pneumonia, COVID-19, and tuberculosis detection | CNN architectures (e.g., ResNet, VGG) | F1-score ≈ 0.95–0.99 (internal validation) | Excellent in-distribution performance with reduced robustness across institutions |
| Mammography | Breast cancer detection | Deep learning CAD systems | AUC ≈ 0.94–0.97 | Comparable to expert radiologists in screening settings; typically used as a second reader |
| Ultrasound | Musculoskeletal and joint assessment | CNN-based segmentation and classification | F1-score ≈ 0.84–0.91 | Promising results, but limited generalization and operator dependence |
| Histopathology (WSI) | Tumor classification and mutation prediction | CNN and weakly supervised deep learning | AUC ≈ 0.90–0.99 (task-dependent) | Strong performance in subtype classification; translation limited by domain shift |
| Multimodal (Imaging + text) | Diagnostic reasoning and reporting | LLM-assisted systems | Misdiagnosis rate ≈ 0.10–0.15 | Useful for documentation and decision support, not autonomous diagnosis |
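
Several rows above report F1-scores, and the abbreviation list distinguishes accuracy (ACC) from balanced accuracy (BACC). As a rough illustration of how these summary metrics relate to the same confusion matrix, the sketch below computes all three. The counts are hypothetical and chosen only to show an imbalanced test set; they do not come from any study cited here, and the function names are illustrative.

```python
def f1_score(tp, fp, fn):
    """Harmonic mean of precision and recall from raw counts."""
    if tp == 0:
        return 0.0  # no true positives: precision and recall are both 0
    precision = tp / (tp + fp)
    recall = tp / (tp + fn)
    return 2 * precision * recall / (precision + recall)


def accuracy(tp, fp, tn, fn):
    """Fraction of all cases classified correctly."""
    return (tp + tn) / (tp + fp + tn + fn)


def balanced_accuracy(tp, fp, tn, fn):
    """Mean of sensitivity and specificity; less affected by class imbalance."""
    sensitivity = tp / (tp + fn)
    specificity = tn / (tn + fp)
    return (sensitivity + specificity) / 2


if __name__ == "__main__":
    # Hypothetical imbalanced test set: 100 diseased, 900 healthy cases.
    tp, fn, fp, tn = 90, 10, 30, 870
    print(f"ACC  = {accuracy(tp, fp, tn, fn):.3f}")
    print(f"BACC = {balanced_accuracy(tp, fp, tn, fn):.3f}")
    print(f"F1   = {f1_score(tp, fp, fn):.3f}")
```

With these assumed counts the three metrics differ noticeably, which is one reason a single headline figure is hard to compare across the rows of the table.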

7. Discussion

7.1. Accuracy Is Not Equivalence: Statistical Performance Versus Clinical Responsibility

7.2. Generalization and the Problem of the Unknown

7.3. Embodiment as a Non-Negotiable Dimension of Medical Practice

7.4. Legal and Ethical Constraints Are Not Externalities

7.5. Augmentation, Not Replacement, as the Clinically Coherent Trajectory

7.6. Relative Clinical Maturity of Imaging AI and Large Language Models

7.7. Patient-Centered Clinical Outcomes When AI Is Used

7.8. Technological and Implementation Challenges

8. Conclusions

8.1. Implications Supporting the Clinical Use of AI

8.2. Constraints Limiting Full Physician Replacement

8.3. Future Directions

Author Contributions

Funding

Institutional Review Board Statement

Informed Consent Statement

Data Availability Statement

Conflicts of Interest

Abbreviations

| Abbreviation | Definition |
|---|---|
| ACC | Accuracy |
| AI | Artificial Intelligence |
| AUC | Area Under the Curve (ROC) |
| AUROC | Area Under the Receiver Operating Characteristic Curve |
| BACC | Balanced Accuracy |
| BERT | Bidirectional Encoder Representations from Transformers |
| CDSS | Clinical Decision Support System(s) |
| CE (marking) | Conformité Européenne |
| CNN/CNNs | Convolutional Neural Network(s) |
| CT | Computed Tomography |
| ECG | Electrocardiogram |
| EU | European Union |
| F1Score/F1 | Harmonic Mean of Precision and Recall |
| GPT | Generative Pretrained Transformer |
| HbA1c | Glycated Hemoglobin A1c |
| IT | Information Technology |
| LLM/LLMs | Large Language Model(s) |
| MAE | Mean Absolute Error |
| MSKCC | Memorial Sloan Kettering Cancer Center |
| MRI/MR | Magnetic Resonance Imaging/Magnetic Resonance |
| OOD | Out of Distribution |
| PACS | Picture Archiving and Communication Systems |
| PET | Positron Emission Tomography |
| ROI | Return on Investment |
| RIS | Radiology Information System |
| TCAV | Testing with Concept Activation Vectors |
| USMLE | United States Medical Licensing Examination |
| WER | Word Error Rate |
| XAI | Explainable AI |

References

- Price, W.N., II; Gerke, S.; Cohen, I.G. Potential Liability for Physicians Using Artificial Intelligence. JAMA 2019, 322, 1765–1766.

- Titano, J.J.; Badgeley, M.; Schefflein, J.; Pain, M.; Su, A.; Cai, M.; Swinburne, N.; Zech, J.; Kim, J.; Bederson, J.; et al. Automated deep-neural-network surveillance of cranial images for acute neurologic events. Nat. Med. 2018, 24, 1337–1341.

- Rodríguez-Ruiz, A.; Lång, K.; Gubern-Mérida, A.; Broeders, M.; Gennaro, G.; Clauser, P.; Helbich, T.H.; Chevalier, M.; Tan, T.; Mertelmeier, T.; et al. Stand-alone artificial intelligence for breast cancer detection in mammography: Comparison with 101 radiologists. J. Natl. Cancer Inst. 2019, 111, 916–922.

- Salim, M.; Wåhlin, E.; Dembrower, K.; Azavedo, E.; Foukakis, T.; Liu, Y.; Smith, K.; Eklund, M.; Strand, F. External Evaluation of 3 Commercial Artificial Intelligence Algorithms for Independent Assessment of Screening Mammograms. JAMA Oncol. 2020, 6, 1581–1588.

- Kim, H.-E.; Kim, H.H.; Han, B.-K.; Kim, K.H.; Han, K.; Nam, H.; Lee, E.H.; Kim, E.-K. Changes in cancer detection and false-positive recall in mammography using artificial intelligence: A retrospective, multireader study. Lancet Digit. Health 2020, 2, e138–e148.

- Litjens, G.; Kooi, T.; Bejnordi, B.E.; Setio, A.A.A.; Ciompi, F.; Ghafoorian, M.; van der Laak, J.A.W.M.; van Ginneken, B.; Sánchez, C.I. A survey on deep learning in medical image analysis. Med. Image Anal. 2017, 42, 60–88.

- Choy, G.; Khalilzadeh, O.; Michalski, M.; Do, S.; Samir, A.E.; Pianykh, O.S.; Geis, J.R.; Pandharipande, P.V.; Brink, J.A.; Dreyer, K.J. Current applications and future impact of machine learning in radiology. Radiology 2018, 288, 318–328.

- Ardila, D.; Kiraly, A.P.; Bharadwaj, S.; Choi, B.; Reicher, J.J.; Peng, L.; Tse, D.; Etemadi, M.; Ye, W.; Corrado, G.; et al. End-to-end lung cancer screening with three-dimensional deep learning on low-dose chest computed tomography. Nat. Med. 2019, 25, 954–961.

- Yoon, S.H.; Kim, K.W.; Goo, J.M.; Kim, D.W.; Hahn, S. Observer variability in RECIST-based tumour burden measurements: A meta-analysis. Eur. J. Cancer 2016, 53, 5–15.

- Dahm, I.C.; Kolb, M.; Altmann, S.; Nikolaou, K.; Gatidis, S.; Othman, A.E.; Hering, A.; Moltz, J.H.; Peisen, F. Reliability of Automated RECIST 1.1 and Volumetric RECIST Target Lesion Response Evaluation in Follow-Up CT—A Multi-Center, Multi-Observer Reading Study. Cancers 2024, 16, 4009.

- Pyrros, A.; Borstelmann, S.M.; Mantravadi, R.; Zaiman, Z.; Thomas, K.; Price, B.; Greenstein, E.; Siddiqui, N.; Willis, M.; Shulhan, I.; et al. Opportunistic detection of type 2 diabetes using deep learning from frontal chest radiographs. Nat. Commun. 2023, 14, 4039.

- Yang, C.-Y.; Pan, Y.-J.; Chou, Y.; Yang, C.-J.; Kao, C.-C.; Huang, K.-C.; Chang, J.-S.; Chen, H.-C.; Kuo, K.-H. Using Deep Neural Networks for Predicting Age and Sex in Healthy Adult Chest Radiographs. J. Clin. Med. 2021, 10, 4431.

- Ieki, H.; Ito, K.; Saji, M.; Kawakami, R.; Nagatomo, Y.; Takada, K.; Kariyasu, T.; Machida, H.; Koyama, S.; Yoshida, H.; et al. Deep learning-based age estimation from chest X-rays indicates cardiovascular prognosis. Commun. Med. 2022, 2, 159.

- Adleberg, J.; Wardeh, A.; Doo, F.X.; Marinelli, B.; Cook, T.S.; Mendelson, D.S.; Kagen, A. Predicting Patient Demographics From Chest Radiographs With Deep Learning. J. Am. Coll. Radiol. 2022, 19, 1151–1161.

- Gichoya, J.W.; Banerjee, I.; Bhimireddy, A.R.; Burns, J.L.; Celi, L.A.; Chen, L.-C.; Correa, R.; Dullerud, N.; Ghassemi, M.; Huang, S.-C.; et al. AI recognition of patient race in medical imaging: A modelling study. Lancet Digit. Health 2022, 4, e406–e414.

- Poplin, R.; Varadarajan, A.V.; Blumer, K.; Liu, Y.; McConnell, M.V.; Corrado, G.S.; Peng, L.; Webster, D.R. Prediction of cardiovascular risk factors from retinal fundus photographs via deep learning. Nat. Biomed. Eng. 2018, 2, 158–164.

- Mitani, A.; Liu, Y.; Huang, A.; Corrado, G.S.; Peng, L.; Webster, D.R.; Hammel, N.; Varadarajan, A.V. Detecting anemia from retinal fundus images. arXiv 2019, arXiv:1904.06435.

- Brix, M.A.K.; Järvinen, J.; Bode, M.K.; Nevalainen, M.; Nikki, M.; Niinimäki, J.; Lammentausta, E. Financial impact of incorporating deep learning reconstruction into magnetic resonance imaging routine. Eur. J. Radiol. 2024, 175, 111434.

- Devlin, J.; Chang, M.W.; Lee, K.; Toutanova, K. BERT: Pre-training of deep bidirectional transformers for language understanding. In Proceedings of the 2019 Conference of the North American Chapter of the Association for Computational Linguistics: Human Language Technologies (NAACL-HLT 2019), Minneapolis, MN, USA, 2–7 June 2019; pp. 4171–4186.

- Nori, H.; King, N.; McKinney, S.M.; Carignan, D.; Horvitz, E. Capabilities of GPT-4 on medical challenge problems. Sci. Rep. 2023, 13, 43436.

- Lucas, H.C.; Upperman, J.S.; Robinson, J.R. A systematic review of large language models and their implications in medical education. Med. Educ. 2024, 58, 1276–1285.

- Ayers, J.W.; Poliak, A.; Dredze, M.; Leas, E.C.; Zhu, Z.; Kelley, J.B.; Faix, D.J.; Goodman, A.M.; Longhurst, C.A.; Hogarth, M.; et al. Comparing physician and artificial intelligence chatbot responses to patient questions posted to a public social media forum. JAMA Intern. Med. 2023, 183, 589–596.

- Kung, T.H.; Cheatham, M.; Medenilla, A.; Sillos, C.; De Leon, L.; Elepaño, C.; Madriaga, M.; Aggabao, R.; Diaz-Candido, G.; Maningo, J.; et al. Performance of ChatGPT on USMLE: Potential for AI-assisted medical education using large language models. PLoS Digit. Health 2023, 2, e0000198.

- De Fauw, J.; Ledsam, J.R.; Romera-Paredes, B.; Nikolov, S.; Tomasev, N.; Blackwell, S.; Askham, H.; Glorot, X.; O’Donoghue, B.; Visentin, D.; et al. Clinically applicable deep learning for diagnosis and referral in retinal disease. Nat. Med. 2018, 24, 1342–1350.

- Rim, T.H.; Lee, C.J.; Tham, Y.C.; Cheung, N.; Yu, M.; Lee, G.; Kim, Y.; Ting, D.S.W.; Chong, C.C.Y.; Choi, Y.S.; et al. Deep-learning-based cardiovascular risk stratification using coronary artery calcium scores predicted from retinal photographs. Lancet Digit. Health 2021, 3, e360–e370.

- Coudray, N.; Ocampo, P.S.; Sakellaropoulos, T.; Narula, N.; Snuderl, M.; Fenyö, D.; Moreira, A.L.; Razavian, N.; Tsirigos, A. Classification and mutation prediction from non–small cell lung cancer histopathology images using deep learning. Nat. Med. 2018, 24, 1559–1567.

- Kather, J.N.; Heij, L.R.; Grabsch, H.I.; Loeffler, C.; Echle, A.; Muti, H.S.; Krause, J.; Niehues, J.M.; Sommer, K.A.J.; Bankhead, P.; et al. Pan-cancer image-based detection of clinically actionable genetic alterations. Nat. Cancer 2020, 1, 789–799.

- Mobadersany, P.; Yousefi, S.; Amgad, M.; Gutman, D.A.; Barnholtz-Sloan, J.S.; Velázquez Vega, J.E.; Brat, D.J.; Cooper, L.A.D. Predicting cancer outcomes from histology and genomics using convolutional networks. Proc. Natl. Acad. Sci. USA 2018, 115, E2970–E2979.

- Lu, M.Y.; Williamson, D.F.K.; Chen, T.Y.; Chen, R.J.; Barbieri, M.; Mahmood, F. Data-efficient and weakly supervised computational pathology on whole slide images. Nat. Biomed. Eng. 2021, 5, 555–570.

- Zech, J.R.; Badgeley, M.A.; Liu, M.; Costa, A.B.; Titano, J.J.; Oermann, E.K. Variable generalization performance of deep learning models for detecting pneumonia on chest radiographs: A cross-domain study. PLoS Med. 2018, 15, e1002683.

- Obuchowicz, R.; Strzelecki, M.; Piórkowski, A. Clinical Applications of Artificial Intelligence in Medical Imaging and Image Processing—A Review. Cancers 2024, 16, 1870.

- Azizi, S.; Culp, L.; Freyberg, J.; Mustafa, B.; Baur, S.; Kornblith, S.; Chen, T.; Tomasev, N.; Mitrović, J.; Strachan, P.; et al. Robust and efficient medical imaging with self-supervision. Nat. Biomed. Eng. 2023, 7, 545–557.

- He, K.; Fan, H.; Wu, Y.; Xie, S.; Girshick, R. Momentum contrast for unsupervised visual representation learning. In Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition, Seattle, WA, USA, 13–19 June 2020; pp. 9729–9738.

- Raghu, M.; Zhang, C.; Kleinberg, J.; Bengio, S. Transfusion: Understanding transfer learning for medical imaging. In Proceedings of the 33rd Conference on Neural Information Processing Systems (NeurIPS 2019), Vancouver, BC, Canada, 8–14 December 2019; Volume 32.

- Seyyed-Kalantari, L.; Zhang, H.; McDermott, M.B.A.; Chen, I.Y.; Ghassemi, M. Underdiagnosis bias of AI algorithms in chest radiograph interpretation. Nat. Med. 2021, 27, 2172–2176.

- Selvaraju, R.R.; Cogswell, M.; Das, A.; Vedantam, R.; Parikh, D.; Batra, D. Grad-CAM: Visual Explanations from Deep Networks via Gradient-Based Localization. In Proceedings of the 2017 IEEE International Conference on Computer Vision (ICCV), Venice, Italy, 22–29 October 2017; pp. 618–626.

- Adebayo, J.; Gilmer, J.; Muelly, M.; Goodfellow, I.; Hardt, M.; Kim, B. Sanity checks for saliency maps. In Proceedings of the 32nd Conference on Neural Information Processing Systems (NeurIPS 2018), Montreal, QC, Canada, 2–8 December 2018; Volume 3.

- Arun, N.; Gaw, N.; Singh, P.; Chang, K.; Aggarwal, M.; Chen, B.; Hoebel, K.; Gupta, S.; Patel, J.; Gidwani, M.; et al. Assessing the trustworthiness of saliency maps for localizing abnormalities in medical imaging. Radiol. Artif. Intell. 2021, 3, e210001.

- Kim, B.; Wattenberg, M.; Gilmer, J.; Cai, C.; Wexler, J.; Viegas, F.; Sayres, R. Interpretability beyond feature attribution: Testing with Concept Activation Vectors (TCAV). In Proceedings of the International Conference on Machine Learning, Stockholm, Sweden, 10–15 July 2018; pp. 2668–2677.

- Ghorbani, A.; Wexler, J.; Zou, J.Y.; Kim, B. Towards automatic concept-based explanations. In Proceedings of the 33rd Conference on Neural Information Processing Systems (NeurIPS 2019), Vancouver, BC, Canada, 8–14 December 2019; Volume 32.

- Dalton-Brown, S. The ethics of medical AI and the physician-patient relationship. Camb. Q. Healthc. Ethics 2020, 29, 115–121.

- Bleher, H.; Braun, M. Diffused responsibility: Attributions of responsibility in the use of AI-driven clinical decision support systems. AI Ethics 2022, 2, 747–761.

- European Parliament. European Parliament resolution of 16 February 2017 with recommendations to the Commission on Civil Law Rules on Robotics (2015/2103(INL)). Off. J. Eur. Union 2018, 252, 239–257.

- Regulation (EU) 2024/1689 of 13 June 2024 Laying Down Harmonised Rules on Artificial Intelligence (Artificial Intelligence Act). Available online: https://eur-lex.europa.eu/legal-content/EN/TXT/PDF/?uri=OJ:L_202401689 (accessed on 27 September 2025).

- Evans, J.S.B.; Stanovich, K.E. Dual-process theories of higher cognition: Advancing the debate. Perspect. Psychol. Sci. 2013, 8, 223–241.

- Obermeyer, Z.; Powers, B.; Vogeli, C.; Mullainathan, S. Dissecting racial bias in an algorithm used to manage the health of populations. Science 2019, 366, 447–453.

- Brooks, R.A. Intelligence without representation. Artif. Intell. 1991, 47, 139–159.

- Liu, Y.; Cao, X.; Chen, T.; Jiang, Y.; You, J.; Wu, M.; Wang, X.; Feng, M.; Jin, Y.; Chen, J. A survey of embodied ai in healthcare: Techniques, applications, and opportunities. arXiv 2025, arXiv:2501.07468.

- Verghese, A.; Charlton, B.; Kassirer, J.P.; Ramsey, M.; Ioannidis, J.P.A. Inadequacies of physical examination as a cause of medical errors and adverse events. JAMA 2011, 306, 372–378.

- Patel, V.L.; Shortliffe, E.H.; Stefanelli, M.; Szolovits, P.; Berthold, M.R.; Bellazzi, R.; Abu-Hanna, A. The coming of age of artificial intelligence in medicine. Artif. Intell. Med. 2015, 65, 5–17.

- Okamura, A.M. Haptic feedback in robot-assisted minimally invasive surgery. Curr. Opin. Urol. 2009, 19, 102–107.

- Zhu, Y.; Moyle, W.; Hong, M.; Aw, K. From Sensors to Care: How Robotic Skin Is Transforming Modern Healthcare—A Mini Review. Sensors 2025, 25, 2895.

- Flanagan, J.R.; Bowman, M.C.; Johansson, R.S. Control strategies in object manipulation tasks. Curr. Opin. Neurobiol. 2006, 16, 650–659.

- Pfeifer, R.; Bongard, J. How the Body Shapes the Way We Think: A New View of Intelligence; MIT Press: Cambridge, MA, USA, 2007.

- Mohan, V.G.; Ameedeen, M.A.; Mubarak-Ali, A.F. Navigating the Divide: Exploring the Boundaries and Implications of Artificial Intelligence Compared to Human Intelligence. 2024. Available online: https://ssrn.com/abstract=4788544 (accessed on 27 September 2025).

- Riek, L.D.; Howard, D. A code of ethics for the human-robot interaction profession. Proc. IEEE 2014, 102, 703–716.

- Vasiliuk, A.; Frolova, D.; Belyaev, M.; Shirokikh, B. Limitations of Out of Distribution Detection in 3D Medical Image Segmentation. J. Imaging 2023, 9, 191.

- Somashekhar, S.P.; Sepúlveda, M.J.; Puglielli, S.; Norden, A.D.; Shortliffe, E.H.; Kumar, C.R.; Rauthan, A.; Kumar, N.A.; Patil, P.; Rhee, K.; et al. Watson for Oncology and Breast Cancer Treatment Recommendations: Agreement With an Expert Multidisciplinary Tumor Board. Ann. Oncol. 2018, 29, 418–423.

- Zhu, E.; Muneer, A.; Zhang, J.; Xia, Y.; Li, X.; Zhou, C.; Heymach, J.V.; Wu, J.; Le, X. Progress and challenges of artificial intelligence in lung cancer clinical translation. NPJ Precis. Oncol. 2025, 9, 210.

- Wang, Y.; Zhong, W.; Li, L.; Mi, F.; Zeng, X.; Huang, W.; Shang, L.; Jiang, X.; Liu, Q. Aligning large language models with human: A survey. arXiv 2023, arXiv:2307.12966.

- Pacheco Barrios, K.; Ortega Márquez, J.; Fregni, F. Haptic technology: Exploring its underexplored clinical applications—A systematic review. Biomedicines 2024, 12, 2802.

- Colan, J.; Davila, A.; Hasegawa, Y. Tactile feedback in robot assisted minimally invasive surgery: A systematic review. Int. J. Med. Robot. 2024, 20, e70019.

- Leszczyńska, A.; Obuchowicz, R.; Strzelecki, M.; Seweryn, M. The Integration of Artificial Intelligence into Robotic Cancer Surgery: A Systematic Review. J. Clin. Med. 2025, 14, 6181.

- Hulleck, A.A.; Menoth Mohan, D.; Abdallah, N.; El Rich, M.; Khalaf, K. Present and future of gait assessment in clinical practice: Towards the application of novel trends and technologies. Front. Med. Technol. 2022, 4, 901331.

- Chai, P.R.; Dadabhoy, F.Z.; Huang, H.W.; Chu, J.N.; Feng, A.; Le, H.M.; Collins, J.; da Silva, M.; Raibert, M.; Hur, C.; et al. Assessment of the acceptability and feasibility of using mobile robotic systems for patient evaluation. JAMA Netw. Open 2021, 4, e210667.

- Chow, W.; Mao, J.; Li, B.; Seita, D.; Guizilini, V.; Wang, Y. Physbench: Benchmarking and enhancing vision-language models for physical world understanding. arXiv 2025, arXiv:2501.16411.

- Rudman, W.; Golovanevsky, M.; Bar, A.; Che, W.; Nabende, J.; Shutova, E.; Pilehvar, M.T. Forgotten polygons: Multimodal large language models are shape-blind. In Findings of the Association for Computational Linguistics: ACL 2025; Association for Computational Linguistics: Vienna, Austria, 2025; pp. 11983–11998.

- Weihs, L.; Yuile, A.; Baillargeon, R.; Fisher, C.; Marcus, G.; Mottaghi, R.; Kembhavi, A. Benchmarking progress to infant-level physical reasoning in AI. Trans. Mach. Learn. Res. 2022, 2022.

- Bansal, G.; Chamola, V.; Hussain, A.; Guizani, M.; Niyato, D. Transforming conversations with AI—A comprehensive study of ChatGPT. Cogn. Comput. 2024, 16, 2487–2510.

- Moravec, H.P. Sensor fusion in certainty grids for mobile robots. AI Mag. 1988, 9, 61.

- Lake, B.M.; Ullman, T.D.; Tenenbaum, J.B.; Gershman, S.J. Building machines that learn and think like people. Behav. Brain Sci. 2017, 40, e253.

- Luo, X.; Liu, D.; Dang, F.; Luo, H. Integration of LLMs and the physical world: Research and application. In Proceedings of the ACM Turing Award Celebration Conference, Changsha, China, 1–5 July 2024.

- Xu, H.; Han, L.; Yang, Q.; Li, M.; Srivastava, M. Penetrative AI: Making LLMs comprehend the physical world. In Proceedings of the 25th International Workshop on Mobile Computing Systems and Applications, San Diego, CA, USA, 1–7 February 2024.

- Zhang, C.; Wong, L.; Grand, G.; Tenenbaum, J. Grounded physical language understanding with probabilistic programs and simulated worlds. In Proceedings of the Annual Meeting of the Cognitive Science Society, Sydney, Australia, 26–29 July 2023; Volume 45.

- Bakhtin, A.; van der Maaten, L.; Johnson, J.; Gustafson, L.; Girshick, R. Phyre: A new benchmark for physical reasoning. In Proceedings of the 33rd Conference on Neural Information Processing Systems (NeurIPS 2019), Vancouver, BC, Canada, 8–14 December 2019; Volume 32.

- Cherian, A.; Corcodel, R.; Jain, S.; Romeres, D. LLMphy: Complex physical reasoning using large language models and world models. arXiv 2024, arXiv:2411.08027.

- Brown, T.; Mann, B.; Ryder, N.; Subbiah, M.; Kaplan, J.; Dhariwal, P.; Neelakantan, A.; Shyam, P.; Sastry, G.; Askell, A.; et al. Language models are few-shot learners. In Proceedings of the 34th Conference on Neural Information Processing Systems (NeurIPS 2020), Vancouver, BC, Canada, 6–12 December 2020; Volume 33.

- Lin, S.; Hilton, J.; Evans, O. TruthfulQA: Measuring how models mimic human falsehoods. In Proceedings of the 60th Annual Meeting of the Association for Computational Linguistics (ACL 2022), Dublin, Ireland, 22–27 May 2022.

- Rohatgi, V.K. Statistical Inference; John Wiley & Sons: New York, NY, USA, 1984; ISBN 978-0-471-87126-2.

- Asok, C.; Sukhatme, B.V. On Sampford’s procedure of unequal probability sampling without replacement. J. Am. Stat. Assoc. 1976, 87, 912–918.

- Bielecki, A.; Nieszporska, S. The proposal of philosophical basis of the health care system. Med. Health Care Philos. 2016, 20, 23–35.

- Joel, M.Z.; Umaro, S.; Chang, E.; Choi, R.; Yang, D.X.; Duncan, J.S. Using adversarial images to assess the robustness of deep learning models trained on diagnostic images in oncology. JCO Clin. Cancer Inform. 2022, 6, e2100170.

- Kilim, O.; Olar, A.; Joó, T.; Palicz, T.; Pollner, P.; Csabai, I. Physical imaging parameter variation drives domain shift. Sci. Rep. 2022, 12, 21302.

- Bielecka, M.; Bielecki, A.; Suchorab, A.; Wojnicki, I. Hierarchical structural information—Theory and applications. In Proceedings of the 25th International Conference on Computational Science ICCS 2025, Singapore, 7–9 July 2025; Lecture Notes in Computer Science. Volume 15905, pp. 48–59.

- Huang, S.-C.; Pareek, A.; Seyyedi, S.; Banerjee, I.; Lungren, M.P. Fusion of medical imaging and electronic health records using deep learning: A systematic review and implementation guidelines. NPJ Digit. Med. 2020, 3, 136.

- Simon, B.D.; Ozyoruk, K.B.; Gelikman, D.G.; Harmon, S.A.; Türkbey, B. The future of multimodal artificial intelligence models for integrating imaging and clinical metadata: A narrative review. Diagn. Interv. Radiol. 2025, 31, 303–312.

- Tjoa, E.; Guan, C. A survey on explainable artificial intelligence (XAI): Toward medical XAI. IEEE Trans. Neural Netw. Learn. Syst. 2021, 32, 4793–4813.

- Oakden-Rayner, L.; Dunnmon, J.; Carneiro, G.; Ré, C. Hidden stratification causes clinically meaningful failures in machine learning for medical imaging. NPJ Digit. Med. 2020, 3, 75.

- Raisuddin, A.M.; Vaattovaara, E.; Nevalainen, M.; Nikki, M.; Järvenpää, E.; Makkonen, K.; Pinola, P.; Palsio, T.; Niemensivu, A.; Tervonen, O.; et al. Critical evaluation of deep neural networks for wrist fracture detection. Sci. Rep. 2021, 11, 6006.

- Koçak, B.; Ponsiglione, A.; Stanzione, A.; Bluethgen, C.; Santinha, J.; Ugga, L.; Huisman, M.; Klontzas, M.E.; Cannella, R.; Cuocolo, R. Bias in artificial intelligence for medical imaging: Fundamentals, detection, avoidance, mitigation, challenges, ethics, and prospects. Diagn. Interv. Radiol. 2025, 31, 75–88.

- Till, T.; Scherkl, M.; Stranger, N.; Singer, G.; Hankel, S.; Flucher, C.; Hržić, F.; Štajduhar, I.; Tschauner, S. Impact of test set composition on AI performance in pediatric wrist fracture detection in X-rays. Eur. Radiol. 2025, 35, 6853–6864.

- Ramiah, D.; Mmereki, D. Synthesizing Efficiency Tools in Radiotherapy to Increase Patient Flow: A Comprehensive Literature. Clin. Med. Insights Oncol. 2024, 18, 11795549241303606.

- Zweibel, S.L.; Bertsimas, D.; Ning, C.; McMahon, S. PO-05-166 AI-enabled multi-class diagnosis of left ventricular ejection fraction from electrocardiograms. Heart Rhythm 2025, 22, S540.

- Zang, P.; Wang, C.; Hormel, T.T.; Bailey, S.T.; Hwang, T.S.; Jia, Y. Clinically explainable disease diagnosis based on biomarker activation map. IEEE Trans. Bio-Med. Eng. 2025. Online ahead of print.

- Smith, A.L.; Greaves, F.; Panch, T. Hallucination or confabulation? Neuroanatomy as metaphor in large language models. PLoS Digit. Health 2023, 2, e0000388. [Google Scholar] [CrossRef]

- Knoll, F.; Murrell, T.; Sriram, A.; Yakubova, N.; Zbontar, J.; Rabbat, M.; Defazio, A.; Muckley, M.J.; Sodickson, D.K.; Zitnick, C.L.; et al. Advancing machine learning for MR image reconstruction with an open competition: Overview of the 2019 fastMRI challenge. Magn. Reson. Med. 2020, 84, 3054–3070. [Google Scholar] [CrossRef]

- Muckley, M.J.; Riemenschneider, B.; Radmanesh, A.; Kim, S.; Jeong, G.; Ko, J.; Jun, Y.; Shin, H.; Hwang, D.; Mostapha, M.; et al. Results of the 2020 fastMRI Challenge for Machine Learning MR Image Reconstruction. IEEE Trans. Med. Imaging 2021, 40, 2306–2317. [Google Scholar] [CrossRef] [PubMed] [PubMed Central]

- Bhadra, S.; Kelkar, V.A.; Brooks, F.J.; Anastasio, M.A. On hallucinations in tomographic image reconstruction. IEEE Trans. Med. Imaging 2021, 40, 3249–3260. [Google Scholar] [CrossRef]

- Hauptmann, A.; Arridge, S.; Lucka, F.; Muthurangu, V.; Steeden, J.A. Real-time cardiovascular MR with spatio-temporal artifact suppression using deep learning–proof of concept in congenital heart disease. Magn. Reson. Med. 2019, 81, 1143–1156. [Google Scholar] [CrossRef]

- Chung, T.; Dillman, J.R. Deep learning image reconstruction: A tremendous advance for clinical MRI but be careful…. Pediatr. Radiol. 2023, 53, 2157–2158. [Google Scholar] [CrossRef]

- Mirzadeh, I.; Alizadeh, K.; Shahrokhi, H.; Tuzel, O.; Bengio, S.; Farajtabar, M. GSM-Symbolic: Understanding the Limitations of Mathematical Reasoning in Large Language Models. arXiv 2024, arXiv:2410.05229. [Google Scholar] [CrossRef]

- Tsai, M.-J.; Lin, P.-Y.; Lee, M.-E. Adversarial Attacks on Medical Image Classification. Cancers 2023, 15, 4228.

- Tajak, W.; Nurzynska, K.; Piórkowski, A. Vulnerability to One-Pixel Attacks of Neural Network Architectures in Medical Image Classification. Bio-Algorithms Med. Syst. 2025, 21, 58–70.

- Spittal, M.J.; Guo, X.A.; Kang, L.; Kirtley, O.J.; Clapperton, A.; Hawton, K.; Kapur, N.; Pirkis, J.; Carter, G. Machine learning algorithms and their predictive accuracy for suicide and self-harm: Systematic review and meta-analysis. PLoS Med. 2025, 22, e1004581.

- Budzyń, K.; Romańczyk, M.; Kitala, D.; Kołodziej, P.; Bugajski, M.; Adami, H.O.; Blom, J.; Buszkiewicz, M.; Halvorsen, N.; Hassan, C.; et al. Endoscopist deskilling risk after exposure to artificial intelligence in colonoscopy: A multicentre, observational study. Lancet Gastroenterol. Hepatol. 2025, 10, 896–903.

- Nurzynska, K.; Piórkowski, A.; Strzelecki, M.; Kociołek, M.; Banyś, R.P.; Obuchowicz, R. Differentiating age and sex in vertebral body CT scans–Texture analysis versus deep learning approach. Biocybern. Biomed. Eng. 2024, 44, 20–30.

- Nurzynska, K.; Wodzinski, M.; Piórkowski, A.; Strzelecki, M.; Obuchowicz, R.; Kamiński, P. Automated determination of hip arthrosis on the Kellgren-Lawrence scale in pelvic digital radiographs scans using machine learning. Comput. Methods Programs Biomed. 2025, 266, 108742.

- Nurzynska, K.; Strzelecki, M.; Piórkowski, A.; Obuchowicz, R. AI in Medical Imaging and Image Processing. J. Clin. Med. 2025, 14, 4153.

- Topol, E.J. High-performance medicine: The convergence of human and artificial intelligence. Nat. Med. 2019, 25, 44–56.

- Sauerbrei, A.; Kerasidou, A.; Lucivero, F.; Hallowell, N. The impact of artificial intelligence on the person-centred, doctor-patient relationship: Some problems and solutions. BMC Med. Inform. Decis. Mak. 2023, 23, 73.

- Marcus, G.; Davis, E. Rebooting AI: Building Artificial Intelligence We Can Trust; Vintage: New York, NY, USA, 2019; pp. 111–127.

- Ghassemi, M.; Oakden-Rayner, L.; Beam, A.L. The false hope of current approaches to explainable artificial intelligence in health care. Lancet Digit. Health 2021, 3, e745–e750.

- Marcus, G. Deep learning: A critical appraisal. arXiv 2018, arXiv:1801.00631. Available online: https://arxiv.org/abs/1801.00631 (accessed on 27 September 2025).

- Muneer, A.; Waqas, M.; Saad, M.B.; Showkatian, E.; Bandyopadhyay, R.; Xu, H.; Li, W.; Chang, J.Y.; Liao, Z.; Haymaker, C.; et al. From classical machine learning to emerging foundation models: Review on multimodal data integration for cancer research. arXiv 2025, arXiv:2507.09028.

- Habli, I.; Lawton, T.; Porter, Z. Artificial intelligence in health care: Accountability and safety. J. Law Biosci. 2020, 7, lsaa012.

- Topol, E. Deep Medicine: How Artificial Intelligence Can Make Healthcare Human Again; Basic Books: New York, NY, USA, 2019; pp. 20–28.

- Muneer, A.; Zhang, K.; Hamdi, I.; Qureshi, R.; Waqas, M.; Fouad, S.; Ali, H.; Anwar, S.M.; Wu, J. Foundation Models in Biomedical Imaging: Turning Hype into Reality. arXiv 2025, arXiv:2512.15808.

- Eisemann, N.; Bunk, S.; Mukama, T.; Baltus, H.; Elsner, S.A.; Gomille, T.; Hecht, G.; Heywang-Köbrunner, S.; Rathmann, R.; Siegmann-Luz, K.; et al. Nationwide real-world implementation of AI for cancer detection in population-based mammography screening. Nat. Med. 2025, 31, 917–924.

- Mohan, B.P.; Facciorusso, A.; Khan, S.R.; Chandan, S.; Kassab, L.L.; Gkolfakis, P.; Tziatzios, G.; Triantafyllou, K.; Adler, D.G. Real-time computer-aided colonoscopy vs. standard colonoscopy: Meta-analysis of randomized controlled trials. Endosc. Int. Open 2020, 8, E1297–E1306.

- Wallace, M.B.; Sharma, P.; Bhandari, P.; East, J.; Antonelli, G.; Lorenzetti, R.; Vieth, M.; Speranza, I.; Spadaccini, M.; Desai, M.; et al. Impact of artificial intelligence on miss rate of colorectal neoplasia. Gastroenterology 2022, 163, 295–304.e5.

- Martinez-Gutierrez, J.C.; Kim, Y.; Salazar-Marioni, S.; Tariq, M.B.; Abdelkhaleq, R.; Niktabe, A.; Ballekere, A.N.; Iyyangar, A.S.; Le, M.; Azeem, H.; et al. Automated large-vessel occlusion detection software and workflow optimization. JAMA Neurol. 2023, 80, 1182–1190.

- Hassan, A.E.; Ringheanu, V.M.; Tekle, W.G. The implementation of artificial intelligence significantly reduces door-in-door-out times in a primary care center prior to transfer. Interv. Neuroradiol. 2023, 29, 631–636.

- Wong, A.; Otles, E.; Donnelly, J.P.; Krumm, A.; Ghous, M.; McCullough, J.; DeTroyer-Cooley, O.; Pestrue, J.; Phillips, M.; Konye, J.; et al. External validation of a widely implemented proprietary sepsis prediction model. JAMA Intern. Med. 2021, 181, 1065–1070.

- Ozcan, B.B.; Dogan, B.E.; Xi, Y.; Knippa, E.E. Patient perception of artificial intelligence use in interpretation of screening mammograms: A survey study. Radiol. Imaging Cancer 2025, 7, e240290.

- Pesapane, F.; Giambersio, E.; Capetti, B.; Monzani, D.; Grasso, R.; Nicosia, L.; Rotili, A.; Sorce, A.; Meneghetti, L.; Carriero, S.; et al. Patients’ Perceptions and Attitudes to the Use of Artificial Intelligence in Breast Cancer Diagnosis: A Narrative Review. Life 2024, 14, 454.

- Abràmoff, M.D.; Lavin, P.T.; Birch, M.; Shah, N.; Folk, J.C. Pivotal trial of an autonomous AI-based diagnostic system for detection of diabetic retinopathy. NPJ Digit. Med. 2018, 1, 39.

- Obuchowicz, R.; Lasek, J.; Wodziński, M.; Piórkowski, A.; Strzelecki, M.; Nurzynska, K. Artificial Intelligence-Empowered Radiology-Current Status and Critical Review. Diagnostics 2025, 15, 282.

- Steiner, D.F.; Chen, P.H.C.; Mermel, C.H. Closing the translation gap: AI applications in digital pathology. Am. J. Pathol. 2020, 190, 1056–1066.

- Ullah, E.; Parwani, A.; Baig, M.M.; Singh, R. Challenges and barriers of using large language models (LLM) such as ChatGPT for diagnostic medicine with a focus on digital pathology–a recent scoping review. Diagn. Pathol. 2024, 19, 43.

- van Diest, P.J.; Flach, R.N.; van Dooijeweert, C.; Makineli, S.; Breimer, G.E.; Stathonikos, N.; Pham, P.; Nguyen, T.Q.; Veta, M. Pros and cons of artificial intelligence implementation in diagnostic pathology. Histopathology 2024, 84, 924–934.

- Serbánescu, M.S. Best Practices and Pitfalls of Deep Learning in Pathology. Appl. Med. Inform. 2025, 47, S60.

- Smiley, A.; Reategui-Rivera, C.M.; Villarreal-Zegarra, D.; Escobar-Agreda, S.; Finkelstein, J. Exploring artificial intelligence biases in predictive models for cancer diagnosis. Cancers 2025, 17, 407.

- Kolla, L. Uses and limitations of artificial intelligence for oncology. Cancer 2024, 130, 2101–2107.

- Fehrmann, R.S.N.; van Kruchten, M.; de Vries, E.G.E. How to critically appraise and direct the trajectory of AI in oncology. ESMO Real World Data Digit. Oncol. 2024, 5, 100066.

- Biondi-Zoccai, G.; Mahajan, A.; Powell, D.; Peruzzi, M.; Carnevale, R.; Frati, G. Advancing cardiovascular care through actionable AI innovation. NPJ Digit. Med. 2025, 8, 249.

- Arends, B.; McCormick, J.; Heus, P.; Van Der Harst, P.; Van Es, R. Barriers and facilitators for implementation of AI algorithms for ECG analysis and triage in patients with chest pain. Eur. Heart J. 2024, 45, ehae666.3487.

- He, J.; Baxter, S.L.; Xu, J.; Xu, J.; Zhou, X.; Zhang, K. The practical implementation of artificial intelligence technologies in medicine. Nat. Med. 2019, 25, 30–36.

- Adams, R.; Henry, K.E.; Sridharan, A.; Soleimani, H.; Zhan, A.; Rawat, N.; Johnson, L.; Hager, D.N.; Cosgrove, S.E.; Markowski, A.; et al. Prospective, multi-site study of patient outcomes after implementation of the TREWS machine learning-based early warning system for sepsis. Nat. Med. 2022, 28, 1455–1460.

- Frank, X. Is Watson for Oncology per se unreasonably dangerous? Making a case for how to prove products liability based on a flawed artificial intelligence design. Am. J. Law Med. 2019, 45, 273–294.

- Cai, C.J.; Jongejan, J.; Holbrook, J. The effects of example-based explanations in a machine learning interface. In Proceedings of the 24th International Conference on Intelligent User Interfaces [IUI ’19], New York, NY, USA, 17–20 March 2019; Association for Computing Machinery: New York, NY, USA, 2019; pp. 258–262.

- van Leeuwen, K.G.; Schalekamp, S.; Rutten, M.J.C.M.; van Ginneken, B.; de Rooij, M. Artificial intelligence in radiology: 100 commercially available products and their scientific evidence. Eur. Radiol. 2021, 31, 3797–3804.

- Goddard, K.; Roudsari, A.; Wyatt, J.C. Automation bias: Empirical results assessing influencing factors. Int. J. Med. Inform. 2014, 83, 368–375.

- Gaube, S.; Suresh, H.; Raue, M.; Merritt, A.; Berkowitz, S.J.; Lermer, E. Do as AI say: Susceptibility in deployment of clinical decision support systems. NPJ Digit. Med. 2021, 4, 31.

| Category | Physician Function Type | Representative Examples | Current AI Capability | Principal Limitations |
|---|---|---|---|---|
| Largely replaceable (task-level automation) | Highly standardized, data-driven analytic tasks | Image triage, lesion detection, quantitative measurements, structured reporting, documentation drafting | Near-human or superhuman performance in narrowly defined tasks | Limited generalization beyond training data; vulnerability to out-of-distribution cases; requirement for human supervision |
| Augmentable (human-in-the-loop) | Context-dependent cognitive and interpretive tasks | Diagnostic reasoning support, risk stratification, differential diagnosis assistance, treatment planning support | Decision support and recommendation generation | Lack of embodied cognition; limited uncertainty awareness; dependence on clinician judgment and accountability |
| Not automatable (structural human functions) | Embodied, ethical, and responsibility-bearing clinical tasks | Physical examination, palpation, procedural and surgical skills, empathic communication, informed consent, final clinical responsibility | Not achievable with current AI systems | Absence of sensorimotor embodiment, multisensory integration, moral agency, and legal responsibility |

| Clinical Task | Modality | AI Model Type | Representative Study (Ref.) | Reported Performance (Typical) | Key Limitation(s) |
|---|---|---|---|---|---|
| Intracranial hemorrhage detection | CT | Convolutional neural network | Titano et al., 2018 [2] | Sensitivity ≈ 0.90–1.00; AUC > 0.90 | Limited generalization across scanners and institutions; false positives require radiologist oversight |
| Pulmonary embolism detection | CT pulmonary angiography | CNN-based triage systems | Ardila et al., 2019 [8] | AUC ≈ 0.90–0.94; sensitivity up to ~0.95 | Not validated for autonomous diagnosis; performance sensitive to data distribution |
| Breast cancer detection | Mammography | Deep learning CAD systems | Rodríguez-Ruiz et al., 2019 [3]; Salim et al., 2020 [4] | AUC ≈ 0.94–0.97; comparable to expert radiologists | Screening-only context; limited applicability to symptomatic patients |
| Pneumonia detection | Chest X-ray | CNN architectures | Zech et al., 2018 [30] | AUC > 0.90 (internal validation) | Marked performance degradation on external datasets (domain shift) |
| MRI image reconstruction | MRI | Deep learning reconstruction networks | Knoll et al., 2020; fastMRI [96] | SSIM often > 0.95 | Risk of hallucinated or missing anatomical structures |
| Medical knowledge assessment | Text (MCQs) | Large language models | Kung et al., 2023 [23] | Accuracy > 0.85 on USMLE-style exams | Reflects pattern recognition; not equivalent to real-world clinical reasoning |
| Patient-facing written communication | Text | Large language models | Ayers et al., 2023 [22] | Responses rated as detailed and empathetic | Susceptibility to hallucinations; lack of accountability |

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2026 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license.


Obuchowicz, R.; Piórkowski, A.; Nurzyńska, K.; Obuchowicz, B.; Strzelecki, M.; Bielecka, M. Will AI Replace Physicians in the Near Future? AI Adoption Barriers in Medicine. Diagnostics 2026, 16, 396. https://doi.org/10.3390/diagnostics16030396
