Clinical Effectiveness of an Artificial Intelligence-Based Prediction Model for Cardiac Arrest in General Ward-Admitted Patients: A Non-Randomized Controlled Trial

Abstract

1. Introduction

2. Methods

2.1. Study Design and Population

2.2. Intervention

2.2.1. AI-SaMD

2.2.2. AI-SaMD Integration in Clinical Flow

2.3. Study Outcomes

2.4. Data Collection and Preprocessing

2.5. Statistical Analysis

2.6. Secondary Analysis

3. Results

3.1. Study Population

3.2. Primary Analysis

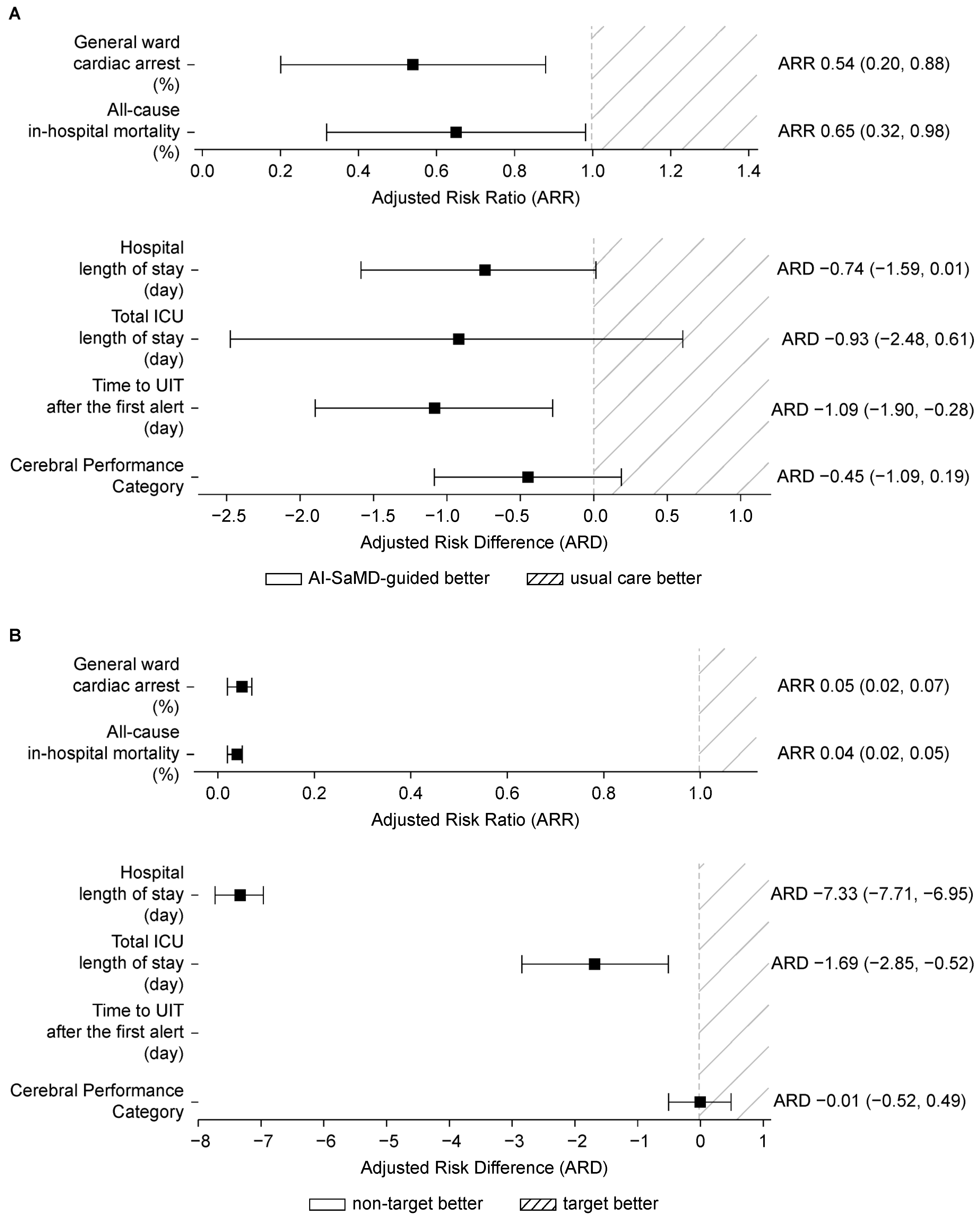

3.2.1. Key Aspect 1: Patient Outcomes Based on AI-SaMD-Guided Intervention

3.2.2. Key Aspect 2: Patient Outcomes Based on AI-SaMD Alert

3.3. Secondary Analysis

3.3.1. Key Aspect 3: Survival Analysis

3.3.2. Key Aspect 4: Effect of Timely and Continuous Compliance

4. Discussion

5. Conclusions

Supplementary Materials

Author Contributions

Funding

Institutional Review Board Statement

Informed Consent Statement

Data Availability Statement

Acknowledgments

Conflicts of Interest

Abbreviations

| Abbreviation | Definition |
|---|---|
| AAM | Advanced Alert Monitor |
| AHA | American Heart Association |
| AI | Artificial intelligence |
| AI-EWS | Artificial intelligence-based early warning system |
| AI-SaMD | Artificial intelligence–software as a medical device |
| ARD | Adjusted risk difference |
| ARR | Adjusted risk ratio |
| CCI | Charlson comorbidity index |
| CE-MDR | Conformité Européenne–Medical Device Regulation (EU) |
| CI | Confidence interval |
| CPC | Cerebral Performance Category |
| CPR | Cardiopulmonary resuscitation |
| CRIS | Clinical Research Information Service |
| DeepCARS™ | Deep learning-based cardiac arrest risk management system |
| DNR | Do-not-resuscitate |
| EMR | Electronic medical record |
| EWS | Early warning score |
| FDA | U.S. Food and Drug Administration |
| HCPs | Healthcare professionals |
| ICMJE | International Committee of Medical Journal Editors |
| ICU | Intensive care unit |
| IHCA | In-hospital cardiac arrest |
| IRB | Institutional Review Board |
| JAMA | Journal of the American Medical Association |
| MACPD | Mean alarm count per day |
| MEWS | Modified Early Warning Score |
| MFDS | Ministry of Food and Drug Safety (Republic of Korea) |
| NEWS | National Early Warning Score |
| NPV | Negative predictive value |
| PaCO2 | Arterial partial pressure of carbon dioxide |
| PaO2 | Arterial partial pressure of oxygen |
| PPV | Positive predictive value |
| PSM | Propensity score matching |
| RR | Risk ratio |
| RRS | Rapid response system |
| SaMD | Software as a medical device |
| SMD | Standardized mean difference |
| SOFA | Sequential organ failure assessment |
| SPTTS | Single-parameter track-and-trigger system |
| tCO2 | Total carbon dioxide |
| TREND | Transparent Reporting of Evaluations with Non-randomized Designs |
| TTS | Track-and-trigger system |
| UIT | Unplanned intensive care unit transfer |
| WHO ICTRP | World Health Organization International Clinical Trials Registry Platform |
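
Several abbreviations above (EWS, MEWS, NEWS, SPTTS, TTS) refer to conventional track-and-trigger scores that the tables use as comparators. To illustrate how such an aggregate score works, the sketch below maps each observation to 0 to 3 points and sums them. It assumes the published NEWS thresholds with SpO2 scale 1 and omits the NEWS2 hypercapnia scale and "new confusion" criterion; the table and function names are hypothetical, and the study's NEWS version and EMR data handling are not described in this section, so this is not presented as the authors' implementation.

```python
# Hypothetical band tables: (inclusive upper bound, points) pairs; any value above
# the last bound scores `top`. Thresholds follow the original NEWS (SpO2 scale 1).
RESP_RATE = ([(8, 3), (11, 1), (20, 0), (24, 2)], 3)              # breaths/min
SPO2 = ([(91, 3), (93, 2), (95, 1)], 0)                           # %
TEMPERATURE = ([(35.0, 3), (36.0, 1), (38.0, 0), (39.0, 1)], 2)   # degrees C
SYSTOLIC_BP = ([(90, 3), (100, 2), (110, 1), (219, 0)], 3)        # mmHg
HEART_RATE = ([(40, 3), (50, 1), (90, 0), (110, 1), (130, 2)], 3)  # beats/min


def band_points(value, table):
    """Return the points of the first band whose upper bound is >= value."""
    bands, top = table
    for upper, points in bands:
        if value <= upper:
            return points
    return top


def news_score(resp_rate, spo2, on_oxygen, temperature, systolic_bp, heart_rate, alert):
    """Sum the NEWS parameter points for one set of observations."""
    return (
        band_points(resp_rate, RESP_RATE)
        + band_points(spo2, SPO2)
        + (2 if on_oxygen else 0)  # any supplemental oxygen scores 2
        + band_points(temperature, TEMPERATURE)
        + band_points(systolic_bp, SYSTOLIC_BP)
        + band_points(heart_rate, HEART_RATE)
        + (0 if alert else 3)  # responds only to voice or pain, or unresponsive
    )


print(news_score(22, 94, True, 38.5, 105, 115, True))  # prints 9
```

Band tables keep the thresholds as data, so a different score version could be swapped in without touching the summing logic.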


| Variables | AI-SaMD-Guided Cohort (n = 1409) | Usual Care Cohort (n = 1497) | p-Value | Target Cohort (n = 2906) | Non-Target Cohort (n = 32,721) | p-Value |
|---|---|---|---|---|---|---|
| Cohort | ||||||
| Number of hospital admissions (n) | 1409 | 1497 | - | 2906 | 32,721 | - |
| Number of patients (n) | 1313 | 1213 | - | 2526 | 20,381 | - |
| Demographics | ||||||
| Age (years) | 73.04 ± 12.46 | 75.08 ± 11.66 | ** | 74.09 ± 12.09 | 59.65 ± 16.46 | ** |
| Sex, male (n) | 738 (52.38%) | 780 (52.10%) | 0.912 | 1518 (52.24%) | 16,533 (50.53%) | 0.081 |
| Vital signs, mean | ||||||
| Heart rate (/min) | 87.57 ± 11.24 | 89.87 ± 10.83 | ** | 88.76 ± 11.09 | 75.91 ± 10.48 | ** |
| Respiratory rate (/min) | 18.85 ± 1.58 | 19.15 ± 1.37 | ** | 19.01 ± 1.49 | 17.91 ± 1.26 | ** |
| Systolic blood pressure (mmHg) | 125.76 ± 15.14 | 124.99 ± 16.51 | 0.188 | 125.36 ± 15.86 | 127.05 ± 15.27 | ** |
| Body temperature (°C) | 36.70 ± 0.32 | 36.77 ± 0.58 | ** | 36.74 ± 0.47 | 36.62 ± 0.32 | ** |
| NEWS | 1.34 ± 0.62 | 1.41 ± 0.74 | ** | 1.38 ± 0.69 | 0.69 ± 0.53 | ** |
| SPTTS > 0 (%) | 7.04 ± 7.94 | 6.85 ± 9.64 | 0.561 | 6.94 ± 8.86 | 1.99 ± 5.44 | ** |
| AI-SaMD (DeepCARS™) | 63.42 ± 16.26 | 68.01 ± 15.00 | ** | 65.79 ± 15.79 | 31.43 ± 17.48 | ** |
| Vital signs at admission | ||||||
| Heart rate (/min) | 89.88 ± 18.46 | 92.80 ± 18.47 | ** | 91.38 ± 18.52 | 81.13 ± 14.44 | ** |
| Respiratory rate (/min) | 19.10 ± 2.56 | 19.46 ± 3.60 | ** | 19.29 ± 3.14 | 18.30 ± 1.99 | ** |
| Systolic blood pressure (mmHg) | 132.63 ± 23.80 | 132.11 ± 26.38 | 0.575 | 132.36 ± 25.16 | 132.03 ± 20.75 | 0.487 |
| Body temperature (°C) | 36.74 ± 0.52 | 36.76 ± 0.58 | 0.299 | 36.75 ± 0.55 | 36.68 ± 0.50 | ** |
| NEWS | 1.39 ± 1.61 | 1.71 ± 1.71 | ** | 1.55 ± 1.67 | 0.66 ± 0.92 | ** |
| SPTTS > 0 (n) | 231 (16.39%) | 345 (23.05%) | ** | 576 (19.82%) | 1802 (5.51%) | ** |
| AI-SaMD (DeepCARS™) | 64.17 ± 21.22 | 70.34 ± 18.65 | ** | 67.34 ± 20.17 | 39.72 ± 22.72 | ** |
| Vital signs at first AI-SaMD alert | ||||||
| Heart rate (/min) | 113.92 ± 27.79 | 115.61 ± 24.27 | 0.081 | 114.79 ± 26.04 | - | - |
| Respiratory rate (/min) | 22.51 ± 7.40 | 21.69 ± 5.54 | ** | 22.09 ± 6.52 | - | - |
| Systolic blood pressure (mmHg) | 123.49 ± 37.62 | 125.65 ± 34.39 | 0.107 | 124.60 ± 36.00 | - | - |
| Body temperature (°C) | 36.70 ± 0.99 | 37.02 ± 1.29 | ** | 36.86 ± 1.16 | - | - |
| NEWS | 4.11 ± 1.95 | 3.76 ± 1.90 | ** | 3.93 ± 1.93 | - | - |
| SPTTS > 0 (n) | 730 (51.81%) | 603 (40.28%) | ** | 1333 (45.87%) | - | - |
| AI-SaMD (DeepCARS™) | 96.38 ± 1.36 | 96.33 ± 1.34 | 0.319 | 96.36 ± 1.35 | - | - |
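
This table compares the cohorts with p-values, while the abbreviation list also includes SMD, the balance diagnostic usually reported for matched or weighted cohorts. A minimal sketch, assuming the common pooled-SD definitions for continuous and binary variables (function names are hypothetical), recomputes SMDs for two baseline rows from the reported summary statistics. A widely used rule of thumb treats |SMD| < 0.1 as negligible imbalance; whether the authors computed SMDs exactly this way is an assumption.

```python
import math


def smd_continuous(mean1, sd1, mean2, sd2):
    """Standardized mean difference using the pooled SD sqrt((sd1^2 + sd2^2) / 2)."""
    pooled_sd = math.sqrt((sd1 ** 2 + sd2 ** 2) / 2)
    return (mean1 - mean2) / pooled_sd


def smd_binary(p1, p2):
    """SMD for a proportion, using the Bernoulli variance p * (1 - p) in each group."""
    pooled_sd = math.sqrt((p1 * (1 - p1) + p2 * (1 - p2)) / 2)
    return (p1 - p2) / pooled_sd


# Baseline rows from the table: AI-SaMD-guided vs usual care cohort.
age_smd = smd_continuous(73.04, 12.46, 75.08, 11.66)  # age, mean +/- SD (years)
sex_smd = smd_binary(0.5238, 0.5210)                   # proportion male
print(f"age SMD = {age_smd:.3f}, sex SMD = {sex_smd:.3f}")
```

With these inputs the age difference gives an SMD of about −0.17, above the 0.1 rule of thumb, whereas the sex difference is close to zero.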

| Variables | AI-SaMD-Guided Cohort (n = 1409) | Usual Care Cohort (n = 1497) | Adjusted Risk Ratio or Adjusted Risk Difference (95% CI) | p-Value |
|---|---|---|---|---|
| Primary outcome | ||||
| General ward cardiac arrest (n) | 15 (1.06%) | 31 (2.07%) | ARR 0.54 (0.20, 0.88) | ** |
| Secondary outcomes | ||||
| All-cause in-hospital mortality (n) | 24 (1.70%) | 41 (2.74%) | ARR 0.65 (0.32, 0.98) | * |
| Hospital length of stay (days) | 9.71 (4.83, 17.71) | 10.46 (5.61, 18.15) | ARD −0.73 (−1.56, 0.11) | 0.089 |
| Total ICU length of stay (days) | 4.70 (2.64, 9.81) | 5.81 (3.93, 10.52) | ARD −0.93 (−2.48, 0.61) | 0.235 |
| Time to UIT after the first alert (days) | 0.73 (0.26, 2.48) | 1.82 (0.51, 6.95) | ARD −1.09 (−1.90, −0.28) | ** |
| Cerebral Performance Category | 4.00 ± 0.96 | 4.45 ± 0.99 | ARD −0.45 (−1.09, 0.19) | 0.168 |
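
The event counts in this table are enough to recover the unadjusted (crude) effect sizes. The sketch below, with hypothetical class and function names, divides and subtracts the two cohorts' event proportions for the primary outcome. It applies no covariate adjustment, so it corresponds to the crude-analysis column of the sensitivity table rather than to the adjusted estimates shown here.

```python
from __future__ import annotations

from dataclasses import dataclass


@dataclass
class ArmCounts:
    events: int  # admissions with the outcome
    total: int   # admissions in the cohort

    @property
    def risk(self) -> float:
        return self.events / self.total


def crude_effects(treated: ArmCounts, control: ArmCounts) -> tuple[float, float]:
    """Return the unadjusted risk ratio and risk difference (treated vs control)."""
    return treated.risk / control.risk, treated.risk - control.risk


guided = ArmCounts(events=15, total=1409)  # general ward cardiac arrest, AI-SaMD-guided
usual = ArmCounts(events=31, total=1497)   # general ward cardiac arrest, usual care
rr, rd = crude_effects(guided, usual)
print(f"crude RR = {rr:.2f}, crude RD = {rd * 100:.2f} percentage points")
```

Substituting the mortality counts (24 of 1409 vs. 41 of 1497) gives a crude RR of about 0.62, which also agrees with the crude-analysis column.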

| Variables | Multivariable Regression Analysis (Main Result) | Crude Analysis (Unadjusted) | PSM Analysis | Exclusion of Post-ICU Reallocation | E-Value |
|---|---|---|---|---|---|
| Primary outcome | |||||
| General ward cardiac arrest (n) | ARR 0.54 (0.20, 0.88) | RR 0.51 (0.20, 0.88) | ARR 0.51 (0.19, 0.83) | ARR 0.54 (0.20, 0.88) | 3.11 (1.54) |
| Secondary outcomes | |||||
| All-cause in-hospital mortality (n) | ARR 0.65 (0.32, 0.98) | RR 0.62 (0.32, 0.98) | ARR 0.52 (0.26, 0.79) | ARR 0.66 (0.32, 0.99) | 2.45 (1.29) |
| Hospital length of stay (days) | ARD −0.73 (−1.56, 0.11) | RD −0.75 (−1.51, 0.05) | ARD −0.81 (−1.80, 0.17) | ARD −0.42 (−1.20, 0.36) | 1.39 (1.00) |
| Total ICU length of stay (days) | ARD −0.93 (−2.48, 0.61) | RD −1.12 (−2.83, 0.34) | ARD −0.53 (−2.10, 1.05) | ARD −0.37 (−2.11, 1.36) | 1.78 (1.00) |
| Time to UIT after the first alert (days) | ARD −1.09 (−1.90, −0.28) | RD −1.09 (−2.08, −0.28) | ARD −0.75 (−1.78, 0.28) | ARD −3.15 (−5.71, −0.59) | 5.48 (1.78) |
| Cerebral Performance Category | ARD −0.45 (−1.09, 0.19) | RD −0.45 (−1.09, 0.19) | ARD −0.72 (−1.23, −0.21) | ARD −0.50 (−1.11, 0.11) | 1.52 (1.00) |
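
For the two binary outcomes, the point-estimate E-values in this table can be reproduced with the VanderWeele and Ding formula for a risk ratio, E = RR + sqrt(RR × (RR − 1)), after inverting protective ratios (RR < 1). The sketch below assumes the main-result ARRs were the inputs; the function names are hypothetical. The second function applies the usual convention for a confidence limit (use the limit closest to 1, and return 1 if the interval includes 1), but the sketch does not attempt to reproduce the parenthesized values, nor the E-values for the difference-scale rows, which require an approximate conversion to the ratio scale.

```python
import math


def e_value_rr(rr: float) -> float:
    """E-value for a risk ratio: RR + sqrt(RR * (RR - 1)), inverting RR < 1 first."""
    if rr <= 0:
        raise ValueError("risk ratio must be positive")
    rr = 1 / rr if rr < 1 else rr
    return rr + math.sqrt(rr * (rr - 1))


def e_value_ci(lower: float, upper: float) -> float:
    """E-value for the confidence limit closest to the null; 1.0 if the CI includes 1."""
    if lower <= 1 <= upper:
        return 1.0
    return e_value_rr(upper if upper < 1 else lower)


for label, rr in [("ward cardiac arrest", 0.54), ("in-hospital mortality", 0.65)]:
    print(f"{label}: E-value = {e_value_rr(rr):.2f}")
```

An E-value of about 3.1 means an unmeasured confounder would need a risk-ratio association of roughly that size with both group assignment and the outcome to fully explain away the observed ARR.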

| Variables | Target Cohort (n = 2906) | Non-Target Cohort (n = 32,721) | Adjusted Risk Ratio or Adjusted Risk Difference (95% CI) | p-Value |
|---|---|---|---|---|
| Primary outcome | ||||
| General ward cardiac arrest (n) | 46 (1.58%) | 24 (0.07%) | ARR 0.05 (0.02, 0.07) | ** |
| Secondary outcomes | ||||
| All-cause in-hospital mortality (n) | 65 (2.24%) | 27 (0.08%) | ARR 0.04 (0.02, 0.05) | ** |
| Hospital length of stay (days) | 9.87 (5.47, 17.77) | 2.68 (1.29, 4.91) | ARD −7.33 (−7.71, −6.95) | ** |
| Total ICU length of stay (days) | 5.31 (2.95, 9.83) | 3.69 (1.62, 7.01) | ARD −1.69 (−2.85, −0.52) | ** |
| Time to UIT after the first alert (days) | 1.02 (0.33, 3.22) | - | - | - |
| Cerebral Performance Category | 4.30 ± 0.99 | 4.27 ± 0.98 | ARD −0.01 (−0.52, 0.49) | 0.960 |
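
The adjusted risk ratios and risk differences in these tables are reported without the estimation procedure in this section. One common way to obtain both from a logistic model is marginal standardization: predict every admission's risk with the group indicator set to 1 and then to 0, average each set of predictions, and take their ratio and difference. The sketch below assumes that method; the coefficients and covariate rows are hypothetical, and the authors may instead have used another model (for example, a log-binomial or linear model for the length-of-stay differences).

```python
import math
from typing import List, Sequence, Tuple


def logistic_risk(intercept: float, treat_coef: float, covariate_coefs: Sequence[float],
                  covariates: Sequence[float], treated: int) -> float:
    """Predicted probability from a fitted logistic model for one admission."""
    z = intercept + treat_coef * treated
    z += sum(b * x for b, x in zip(covariate_coefs, covariates))
    return 1.0 / (1.0 + math.exp(-z))


def standardized_effects(intercept: float, treat_coef: float,
                         covariate_coefs: Sequence[float],
                         rows: List[Sequence[float]]) -> Tuple[float, float]:
    """Set the group flag to 1 and then 0 for every row, average the predicted
    risks, and return (adjusted risk ratio, adjusted risk difference)."""
    n = len(rows)
    risk_if_treated = sum(
        logistic_risk(intercept, treat_coef, covariate_coefs, row, 1) for row in rows) / n
    risk_if_untreated = sum(
        logistic_risk(intercept, treat_coef, covariate_coefs, row, 0) for row in rows) / n
    return risk_if_treated / risk_if_untreated, risk_if_treated - risk_if_untreated


# Hypothetical fitted model: intercept, group coefficient, and coefficients for
# (age centred at 75 years, NEWS at admission); the rows are made-up admissions.
rows = [(-5.0, 1.0), (0.0, 2.0), (5.0, 0.0), (10.0, 3.0)]
arr, ard = standardized_effects(-4.2, -0.6, (0.03, 0.25), rows)
print(f"ARR = {arr:.2f}, ARD = {ard:.4f}")
```

Averaging predictions over the observed covariate mix yields population-level ratios and differences, which an odds ratio from the same model does not directly provide.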

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2026 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license.


Park, M.H.; Kim, M.; Lee, M.-J.; Kim, A.J.; Cho, K.-J.; Jang, J.; Jung, J.; Chang, M.; Yoo, D.; Kim, J.S. Clinical Effectiveness of an Artificial Intelligence-Based Prediction Model for Cardiac Arrest in General Ward-Admitted Patients: A Non-Randomized Controlled Trial. Diagnostics 2026, 16, 335. https://doi.org/10.3390/diagnostics16020335
