Diagnosing Melanomas in Dermoscopy Images Using Deep Learning

Abstract

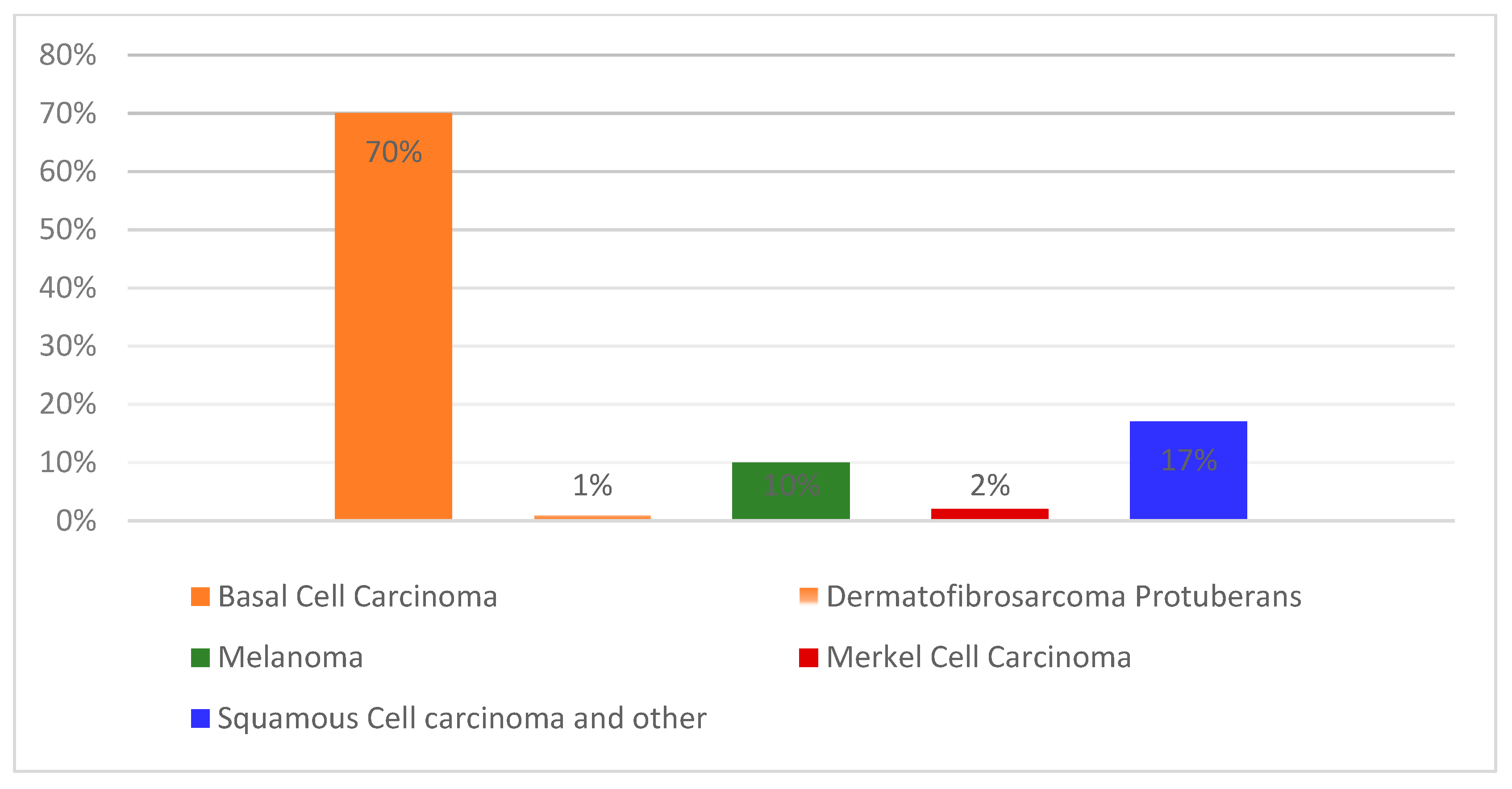

1. Introduction

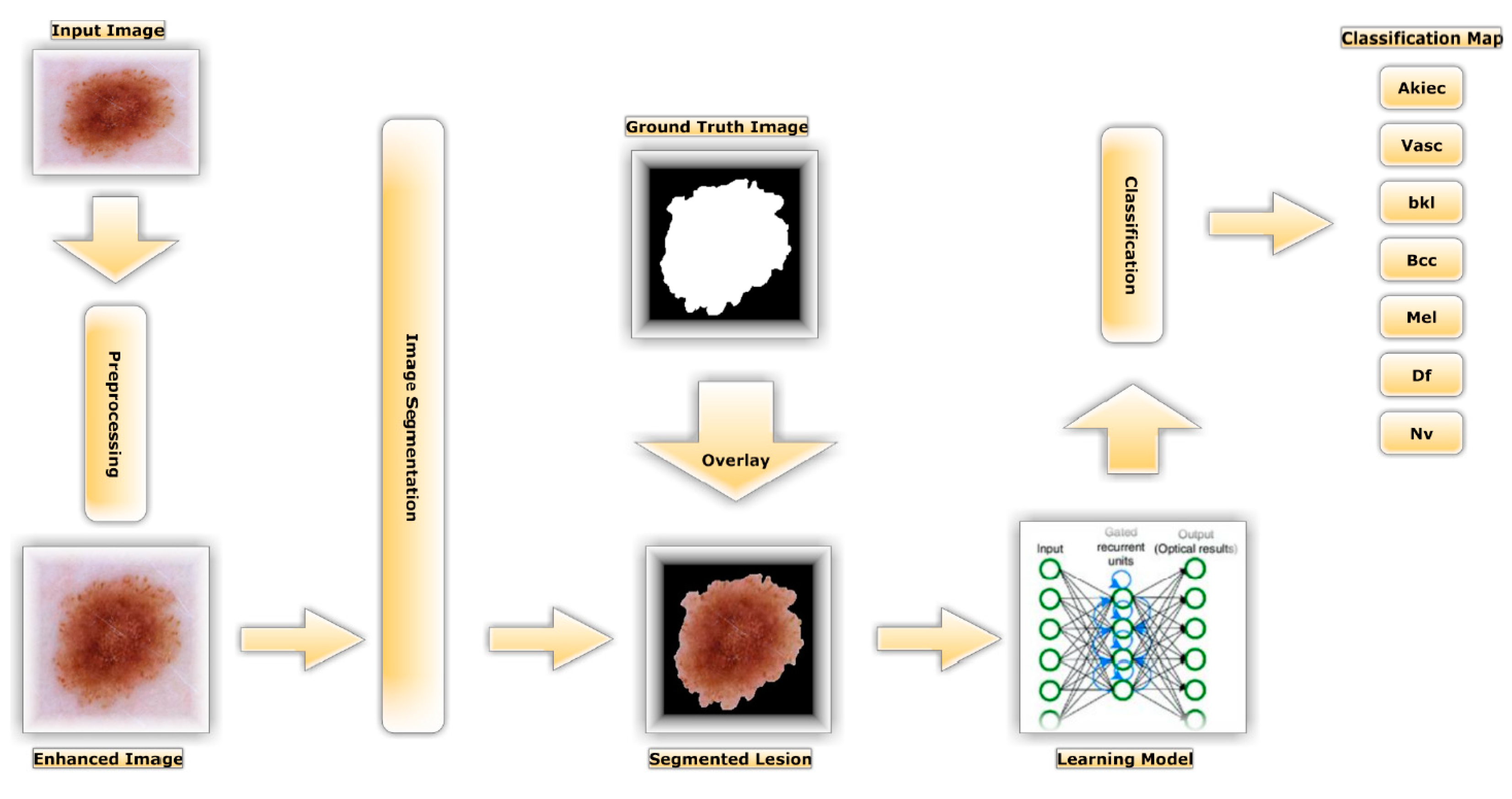

- An enhanced super-resolution generative adversarial network (ESRGAN) was employed to generate high-quality images for the Human Against Machine with 10000 training images dataset (HAM10000 [20]).

- Because the HAM10000 dataset is class-imbalanced, we used data augmentation to balance the number of images across all classes.
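The class-balancing step can be sketched as oversampling each under-represented class with label-preserving geometric transforms until it reaches a target size. This is a minimal sketch under stated assumptions: images are modeled as 2-D lists of pixel values, only flips and 90-degree rotations are used, and the names `balance_by_augmentation`, `TRANSFORMS`, and the toy data are hypothetical. The paper's actual augmentation operations and target counts are not specified here.

```python
import random
from typing import Callable, Dict, List

# Assumption: each image is a 2-D grid of pixel values (rows of ints).
Image = List[List[int]]


def hflip(img: Image) -> Image:
    """Mirror the image left to right."""
    return [row[::-1] for row in img]


def vflip(img: Image) -> Image:
    """Mirror the image top to bottom."""
    return [list(row) for row in img[::-1]]


def rot90(img: Image) -> Image:
    """Rotate the image 90 degrees clockwise."""
    return [list(row) for row in zip(*img[::-1])]


# Hypothetical set of label-preserving transforms (not the paper's exact list).
TRANSFORMS: List[Callable[[Image], Image]] = [hflip, vflip, rot90]


def balance_by_augmentation(
    dataset: Dict[str, List[Image]], target: int, seed: int = 0
) -> Dict[str, List[Image]]:
    """Oversample each class with random transforms until it holds `target` images.

    Classes that already contain `target` or more images are returned unchanged.
    """
    rng = random.Random(seed)
    balanced: Dict[str, List[Image]] = {}
    for label, images in dataset.items():
        if not images:
            raise ValueError(f"class {label!r} has no images to augment")
        out = list(images)
        while len(out) < target:
            source = rng.choice(images)         # sample an original image
            transform = rng.choice(TRANSFORMS)  # sample a transform
            out.append(transform(source))
        balanced[label] = out
    return balanced


if __name__ == "__main__":
    toy = {"df": [[[1, 2], [3, 4]]], "nv": [[[0, 0], [0, 0]]] * 5}
    result = balance_by_augmentation(toy, target=4)
    print({label: len(images) for label, images in result.items()})  # {'df': 4, 'nv': 5}
```

Sampling from the original images only (rather than from already-augmented copies) keeps every synthetic image one transform away from a real lesion.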

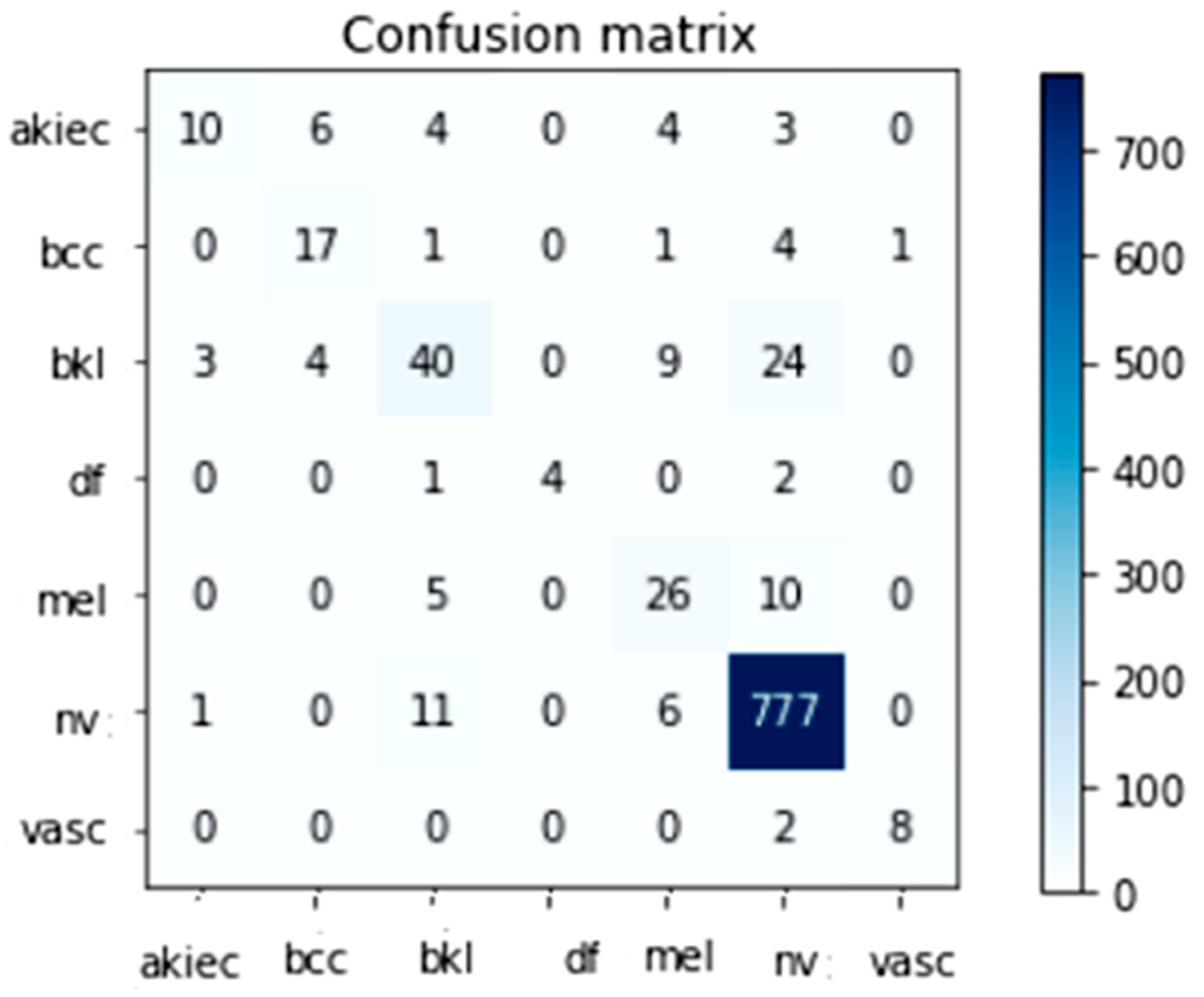

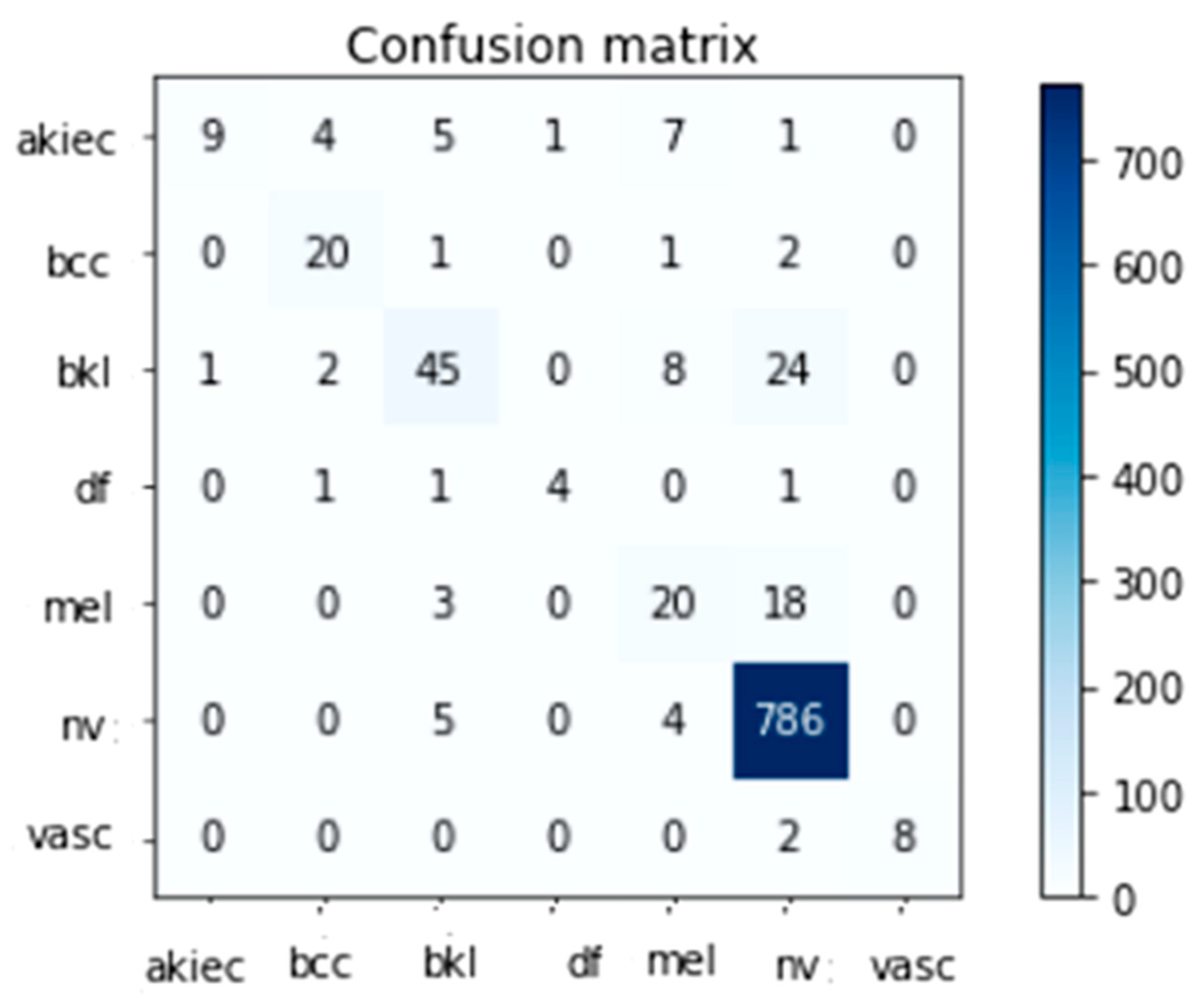

- A thorough comparative evaluation using several assessment metrics, namely accuracy, specificity, sensitivity, the F1-score, and the confusion matrix, established the feasibility of the proposed system.

- Inception-V3 and InceptionResnet-V2 networks were fine-tuned, with their weights adapted by training on HAM10000.

- To improve the proposed method's scalability and guard against overfitting, we used a supplementary training procedure that varied several training strategies (e.g., data augmentation, learning rate, batch size, and validation patience).
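"Validation patience" is commonly the patience parameter of early stopping: training halts once the validation loss has not improved for a fixed number of consecutive epochs, and the weights from the best epoch are kept. The sketch below assumes that interpretation; the `PatienceMonitor` class, its `min_delta` threshold, and the loss values are hypothetical and not taken from the paper.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class PatienceMonitor:
    """Signal a stop when validation loss has not improved for `patience` epochs."""

    patience: int = 5
    min_delta: float = 0.0        # smallest decrease that counts as an improvement
    best_loss: float = float("inf")
    best_epoch: Optional[int] = None
    wait: int = 0                 # epochs since the last improvement

    def update(self, epoch: int, val_loss: float) -> bool:
        """Record one epoch's validation loss; return True if training should stop."""
        if val_loss < self.best_loss - self.min_delta:
            self.best_loss = val_loss
            self.best_epoch = epoch   # a real loop would checkpoint weights here
            self.wait = 0
        else:
            self.wait += 1
        return self.wait >= self.patience


if __name__ == "__main__":
    monitor = PatienceMonitor(patience=2)
    for epoch, loss in enumerate([0.9, 0.7, 0.72, 0.71, 0.5]):
        if monitor.update(epoch, loss):
            print(f"stopped at epoch {epoch}; best epoch {monitor.best_epoch}")
            break
```

In this toy run the loss stalls for two epochs after epoch 1, so training stops at epoch 3 even though a later epoch would have improved. A larger patience trades extra training time for robustness against noisy validation curves.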

2. Related Work

3. Proposed Methodology

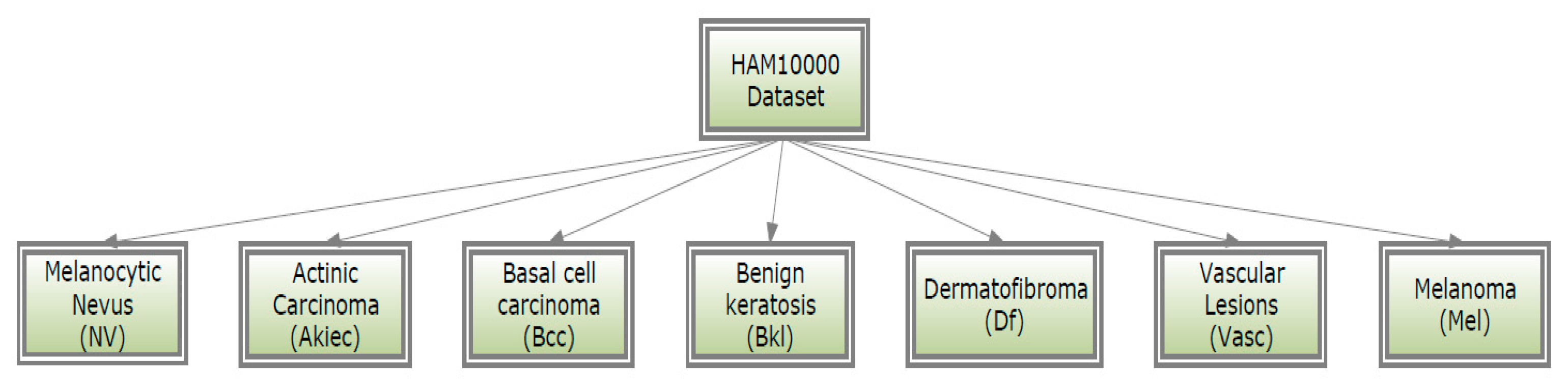

3.1. HAM10000 Dataset

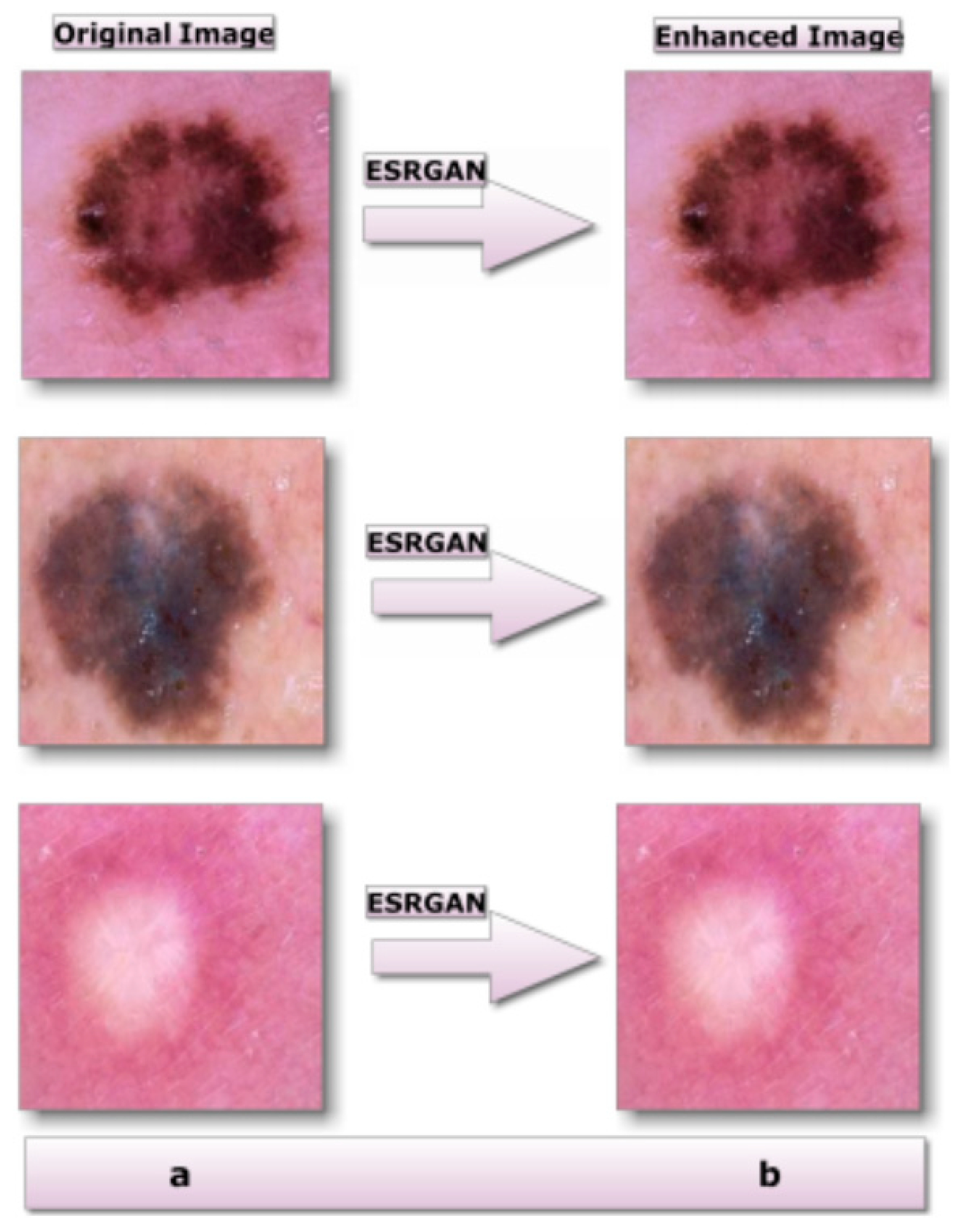

3.2. Image Pre-Processing Step

3.3. ESRGAN

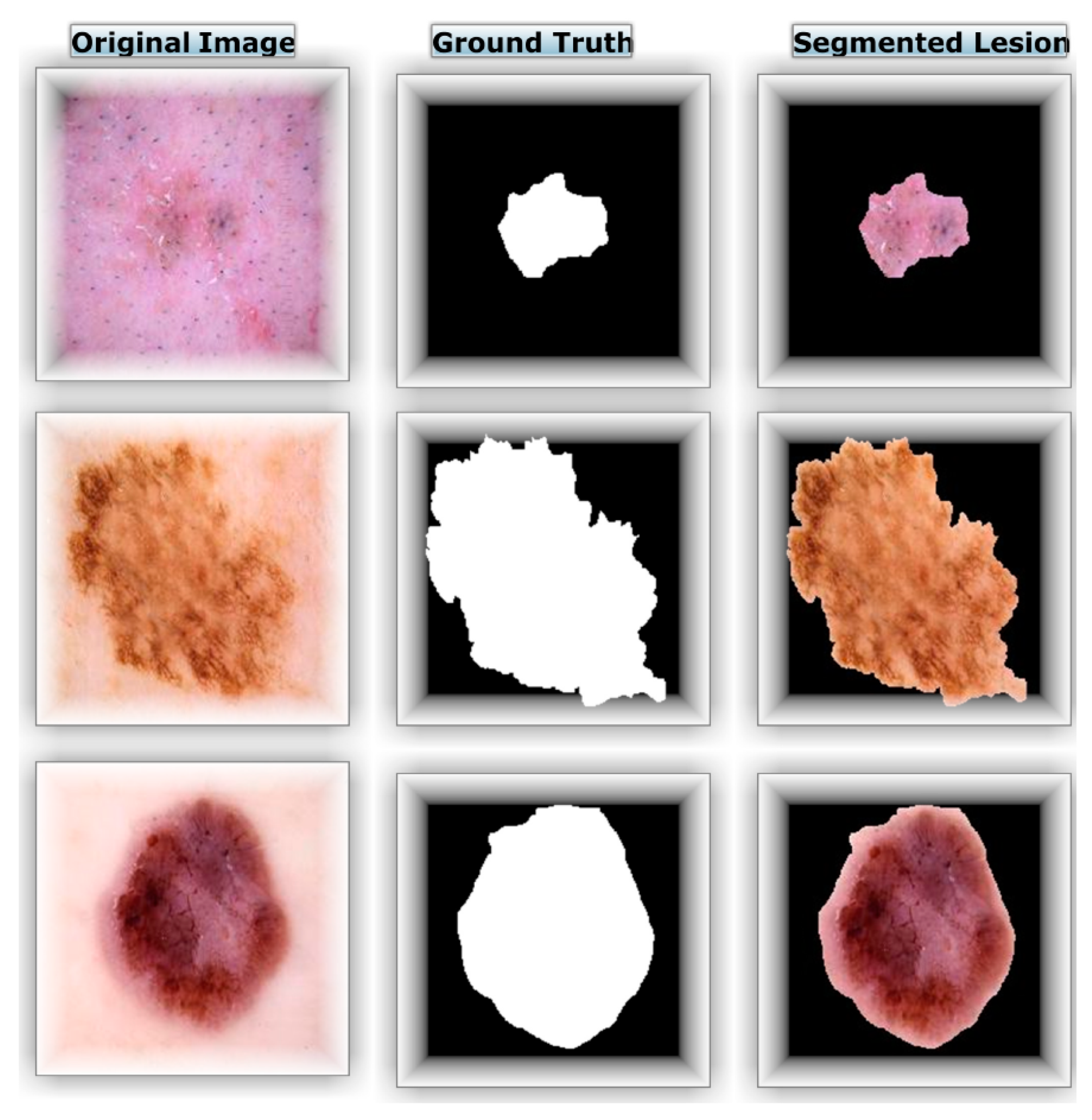
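For reference, the standard ESRGAN formulation replaces the usual GAN discriminator with a relativistic average discriminator $D_{Ra}$, which estimates how much more realistic a real image is than the average generated one, and trains the generator on a weighted sum of perceptual, adversarial, and pixel-wise losses. Here $x_r$ is a real high-resolution image, $x_f = G(x_i)$ is the generator's output for a low-resolution input $x_i$, $C(\cdot)$ is the raw (pre-sigmoid) discriminator output, $\sigma$ is the sigmoid function, and $L_1 = \mathbb{E}_{x_i}\lVert G(x_i) - y \rVert_1$ measures the distance to the ground-truth image $y$. The weights $\lambda$ and $\eta$ used in this study are not specified here.

```latex
\begin{aligned}
D_{Ra}(x_r, x_f) &= \sigma\big(C(x_r) - \mathbb{E}_{x_f}[C(x_f)]\big) \\
L_D^{Ra} &= -\mathbb{E}_{x_r}\big[\log D_{Ra}(x_r, x_f)\big]
            - \mathbb{E}_{x_f}\big[\log\big(1 - D_{Ra}(x_f, x_r)\big)\big] \\
L_G^{Ra} &= -\mathbb{E}_{x_r}\big[\log\big(1 - D_{Ra}(x_r, x_f)\big)\big]
            - \mathbb{E}_{x_f}\big[\log D_{Ra}(x_f, x_r)\big] \\
L_G &= L_{\text{percep}} + \lambda L_G^{Ra} + \eta L_1
\end{aligned}
```

The generator loss $L_G^{Ra}$ mirrors $L_D^{Ra}$ with the roles of real and generated images swapped, so the generator benefits from gradients on both real and generated data.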

3.4. Segmentation

3.5. Data Augmentation

3.6. Transfer Learning Models

3.7. Model Training Utilizing Inception-V3

3.8. Model Training Utilizing Inception-Resnet

4. Experiments and Results

4.1. Training and Deployment of Inception-V3 and Inception-Resnet-V2

4.2. Criteria for Assessment
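The assessment metrics named in the introduction (accuracy, specificity, sensitivity, and F1-score) can all be derived from a multi-class confusion matrix by treating each class one-versus-rest. The sketch below assumes rows are true classes and columns are predicted classes; the function names and the 3-class matrix are hypothetical toy values, not data from the study.

```python
from typing import Dict, List


def per_class_metrics(cm: List[List[int]]) -> List[Dict[str, float]]:
    """One-vs-rest specificity, sensitivity (recall), precision and F1 per class.

    cm[i][j] counts images whose true class is i and predicted class is j.
    """
    n = len(cm)
    total = sum(sum(row) for row in cm)
    results = []
    for k in range(n):
        tp = cm[k][k]
        fn = sum(cm[k]) - tp                        # class k predicted as another class
        fp = sum(cm[i][k] for i in range(n)) - tp   # other classes predicted as k
        tn = total - tp - fn - fp
        sensitivity = tp / (tp + fn) if tp + fn else 0.0
        specificity = tn / (tn + fp) if tn + fp else 0.0
        precision = tp / (tp + fp) if tp + fp else 0.0
        f1 = (2 * precision * sensitivity / (precision + sensitivity)
              if precision + sensitivity else 0.0)
        results.append({"specificity": specificity, "sensitivity": sensitivity,
                        "precision": precision, "f1": f1})
    return results


def accuracy(cm: List[List[int]]) -> float:
    """Fraction of all images on the diagonal (correctly classified)."""
    return sum(cm[i][i] for i in range(len(cm))) / sum(sum(row) for row in cm)


if __name__ == "__main__":
    cm = [[5, 1, 0],
          [2, 3, 1],
          [0, 0, 8]]
    print(f"accuracy = {accuracy(cm):.3f}")
    for k, m in enumerate(per_class_metrics(cm)):
        print(k, {name: round(value, 3) for name, value in m.items()})
```

Per-class values can then be summarized either as a macro average (every class weighted equally) or as a support-weighted average (each class weighted by its number of test images).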

4.3. Effectiveness of Several DNN Models

4.4. Comparison to Alternative Approaches

5. Discussion

6. Conclusions

Author Contributions

Funding

Institutional Review Board Statement

Informed Consent Statement

Data Availability Statement

Acknowledgments

Conflicts of Interest

References

- Saeed, J.; Zeebaree, S. Skin Lesion Classification Based on Deep Convolutional Neural Networks Architectures. J. Appl. Sci. Technol. Trends 2021, 2, 41–51.

- Albahar, M.A. Skin Lesion Classification Using Convolutional Neural Network With Novel Regularizer. IEEE Access 2019, 7, 38306–38313.

- Khan, I.U.; Aslam, N.; Anwar, T.; Aljameel, S.S.; Ullah, M.; Khan, R.; Rehman, A.; Akhtar, N. Remote Diagnosis and Triaging Model for Skin Cancer Using EfficientNet and Extreme Gradient Boosting. Complexity 2021, 2021, 5591614.

- Gouda, W.; Sama, N.U.; Al-Waakid, G.; Humayun, M.; Jhanjhi, N.Z. Detection of Skin Cancer Based on Skin Lesion Images Using Deep Learning. Healthcare 2022, 10, 1183.

- Nikitkina, A.I.; Bikmulina, P.Y.; Gafarova, E.R.; Kosheleva, N.V.; Efremov, Y.M.; Bezrukov, E.A.; Butnaru, D.V.; Dolganova, I.N.; Chernomyrdin, N.V.; Cherkasova, O.P.; et al. Terahertz radiation and the skin: A review. J. Biomed. Opt. 2021, 26, 043005.

- Xu, H.; Lu, C.; Berendt, R.; Jha, N.; Mandal, M. Automated analysis and classification of melanocytic tumor on skin whole slide images. Comput. Med. Imaging Graph. 2018, 66, 124–134.

- Alwakid, G.; Gouda, W.; Humayun, M.; Sama, N.U. Melanoma Detection Using Deep Learning-Based Classifications. Healthcare 2022, 10, 2481.

- Namozov, A.; Cho, Y.I. Convolutional neural network algorithm with parameterized activation function for melanoma classification. In Proceedings of the 2018 International Conference on Information and Communication Technology Convergence (ICTC), Jeju, Republic of Korea, 17–19 October 2018.

- Ozkan, I.A.; Koklu, M. Skin lesion classification using machine learning algorithms. Int. J. Intell. Syst. Appl. Eng. 2017, 5, 285–289.

- Thamizhamuthu, R.; Manjula, D. Skin Melanoma Classification System Using Deep Learning. Comput. Mater. Contin. 2021, 68, 1147–1160.

- Stolz, W. ABCD rule of dermatoscopy: A new practical method for early recognition of malignant melanoma. Eur. J. Dermatol. 1994, 4, 521–527.

- Argenziano, G.; Fabbrocini, G.; Carli, P.; De Giorgi, V.; Sammarco, E.; Delfino, M. Epiluminescence microscopy for the diagnosis of doubtful melanocytic skin lesions: Comparison of the ABCD rule of dermatoscopy and a new 7-point checklist based on pattern analysis. Arch. Dermatol. 1998, 134, 1563–1570.

- Pehamberger, H.; Steiner, A.; Wolff, K. In vivo epiluminescence microscopy of pigmented skin lesions. I. Pattern analysis of pigmented skin lesions. J. Am. Acad. Dermatol. 1987, 17, 571–583.

- Reshma, G.; Al-Atroshi, C.; Nassa, V.K.; Geetha, B.; Sunitha, G.; Galety, M.G.; Neelakandan, S. Deep Learning-Based Skin Lesion Diagnosis Model Using Dermoscopic Images. Intell. Autom. Soft Comput. 2022, 31, 621–634.

- Litjens, G.; Kooi, T.; Bejnordi, B.E.; Setio, A.A.A.; Ciompi, F.; Ghafoorian, M.; van der Laak, J.A.W.M.; van Ginneken, B.; Sánchez, C.I. A survey on deep learning in medical image analysis. Med. Image Anal. 2017, 42, 60–88.

- Yao, P.; Shen, S.; Xu, M.; Liu, P.; Zhang, F.; Xing, J.; Shao, P.; Kaffenberger, B.; Xu, R.X. Single model deep learning on imbalanced small datasets for skin lesion classification. arXiv 2021, arXiv:2102.01284.

- Adegun, A.; Viriri, S. Deep learning techniques for skin lesion analysis and melanoma cancer detection: A survey of state-of-the-art. Artif. Intell. Rev. 2020, 54, 811–841.

- Yang, J.; Sun, X.; Liang, J.; Rosin, P.L. Clinical skin lesion diagnosis using representations inspired by dermatologist criteria. In Proceedings of the IEEE Conference on Computer Vision and Pattern Recognition, Salt Lake City, UT, USA, 18–22 June 2018.

- Satheesha, T.; Satyanarayana, D.; Prasad, M.N.G.; Dhruve, D.K. Melanoma is skin deep: A 3D reconstruction technique for computerized dermoscopic skin lesion classification. IEEE J. Transl. Eng. Health Med. 2017, 5, 1–17.

- Tschandl, P.; Rosendahl, C.; Kittler, H. The HAM10000 dataset, a large collection of multi-source dermatoscopic images of common pigmented skin lesions. Sci. Data 2018, 5, 180161.

- Lembhe, A.; Motarwar, P.; Patil, R.; Elias, S. Enhancement in Skin Cancer Detection using Image Super Resolution and Convolutional Neural Network. Procedia Comput. Sci. 2023, 218, 164–173.

- Balaha, H.M.; Hassan, A.E.-S. Skin cancer diagnosis based on deep transfer learning and sparrow search algorithm. Neural Comput. Appl. 2022, 35, 815–853.

- Brinker, T.J.; Hekler, A.; Enk, A.H.; Klode, J.; Hauschild, A.; Berking, C.; Schilling, B.; Haferkamp, S.; Schadendorf, D.; Fröhling, S.; et al. A convolutional neural network trained with dermoscopic images performed on par with 145 dermatologists in a clinical melanoma image classification task. Eur. J. Cancer 2019, 111, 148–154.

- Tembhurne, J.V.; Hebbar, N.; Patil, H.Y.; Diwan, T. Skin cancer detection using ensemble of machine learning and deep learning techniques. Multimedia Tools Appl. 2023, 1–24.

- Mazhar, T.; Haq, I.; Ditta, A.; Mohsan, S.A.H.; Rehman, F.; Zafar, I.; Gansau, J.A.; Goh, L.P.W. The Role of Machine Learning and Deep Learning Approaches for the Detection of Skin Cancer. Healthcare 2023, 11, 415.

- Haenssle, H.A.; Fink, C.; Schneiderbauer, R.; Toberer, F.; Buhl, T.; Blum, A.; Kalloo, A.; Hassen, A.B.H.; Thomas, L.; Enk, A.; et al. Man against machine: Diagnostic performance of a deep learning convolutional neural network for dermoscopic melanoma recognition in comparison to 58 dermatologists. Ann. Oncol. Off. J. Eur. Soc. Med. Oncol. 2018, 29, 1836–1842.

- Alenezi, F.; Armghan, A.; Polat, K. A multi-stage melanoma recognition framework with deep residual neural network and hyperparameter optimization-based decision support in dermoscopy images. Expert Syst. Appl. 2023, 215, 119352.

- Inthiyaz, S.; Altahan, B.R.; Ahammad, S.H.; Rajesh, V.; Kalangi, R.R.; Smirani, L.K.; Hossain, A.; Rashed, A.N.Z. Skin disease detection using deep learning. Adv. Eng. Softw. 2023, 175, 103361.

- Mendes, D.B.; da Silva, N.C. Skin lesions classification using convolutional neural networks in clinical images. arXiv 2018, arXiv:1812.02316.

- Aijaz, S.F.; Khan, S.J.; Azim, F.; Shakeel, C.S.; Hassan, U. Deep Learning Application for Effective Classification of Different Types of Psoriasis. J. Healthc. Eng. 2022, 2022, 7541583.

- Khan, M.A.; Akram, T.; Zhang, Y.-D.; Sharif, M. Attributes based skin lesion detection and recognition: A mask RCNN and transfer learning-based deep learning framework. Pattern Recognit. Lett. 2021, 143, 58–66.

- Zare, R.; Pourkazemi, A. DenseNet approach to segmentation and classification of dermatoscopic skin lesions images. arXiv 2021, arXiv:2110.04632.

- Ledig, C.; Theis, L.; Huszár, F.; Caballero, J.; Cunningham, A.; Acosta, A.; Aitken, A.P.; Tejani, A.; Totz, J.; Wang, Z.; et al. Photo-Realistic Single Image Super-Resolution Using a Generative Adversarial Network. In Proceedings of the 2017 IEEE Conference on Computer Vision and Pattern Recognition (CVPR), Honolulu, HI, USA, 21–26 July 2017.

- Li, S.; Zhao, M.; Fang, Z.; Zhang, Y.; Li, H. Image Super-Resolution Using Lightweight Multiscale Residual Dense Network. Int. J. Opt. 2020, 2020, 2852865.

- Xia, X.; Xu, C.; Nan, B. Inception-v3 for flower classification. In Proceedings of the 2017 2nd International Conference on Image, Vision and Computing (ICIVC), Chengdu, China, 2–4 June 2017.

- Ioffe, S.; Szegedy, C. Batch normalization: Accelerating deep network training by reducing internal covariate shift. In Proceedings of the International Conference on Machine Learning, Lille, France, 6–11 July 2015.

- Krause, J.; Sapp, B.; Howard, A.; Zhou, H.; Toshev, A.; Duerig, T.; Philbin, J.; Fei-Fei, L. The unreasonable effectiveness of noisy data for fine-grained recognition. In Proceedings of the European Conference on Computer Vision, Amsterdam, The Netherlands, 11–14 October 2016.

- Krizhevsky, A.; Sutskever, I.; Hinton, G.E. ImageNet classification with deep convolutional neural networks. In Proceedings of the 25th International Conference on Neural Information Processing Systems (NIPS’12), Lake Tahoe, NV, USA, 3–6 December 2012; pp. 1097–1105.

- Simonyan, K.; Zisserman, A. Very deep convolutional networks for large-scale image recognition. arXiv 2014, arXiv:1409.1556.

- Serre, T.; Wolf, L.; Bileschi, S.; Riesenhuber, M.; Poggio, T. Robust object recognition with cortex-like mechanisms. IEEE Trans. Pattern Anal. Mach. Intell. 2007, 29, 411–426.

- Lin, M.; Chen, Q.; Yan, S. Network in network. arXiv 2013, arXiv:1312.4400.

- Szegedy, C.; Liu, W.; Jia, Y.; Sermanet, P.; Reed, S.; Anguelov, D.; Erhan, D.; Vanhoucke, V.; Rabinovich, A. Going deeper with convolutions. In Proceedings of the IEEE Conference on Computer Vision and Pattern Recognition, Boston, MA, USA, 7–12 June 2015.

- Arora, S.; Bhaskara, A.; Ge, R.; Ma, T. Provable bounds for learning some deep representations. In Proceedings of the International Conference on Machine Learning, Beijing, China, 21–26 June 2014.

- Szegedy, C.; Ioffe, S.; Vanhoucke, V.; Alemi, A. Inception-v4, inception-resnet and the impact of residual connections on learning. In Proceedings of the Thirty-First AAAI Conference on Artificial Intelligence, San Francisco, CA, USA, 4–9 February 2017.

- Ameri, A. A Deep Learning Approach to Skin Cancer Detection in Dermoscopy Images. J. Biomed. Phys. Eng. 2020, 10, 801–806.

- Sae-Lim, W.; Wettayaprasit, W.; Aiyarak, P. Convolutional neural networks using MobileNet for skin lesion classification. In Proceedings of the 2019 16th International Joint Conference on Computer Science and Software Engineering (JCSSE), Chonburi, Thailand, 10–12 July 2019.

- Salian, A.C.; Vaze, S.; Singh, P.; Shaikh, G.N.; Chapaneri, S.; Jayaswal, D. Skin lesion classification using deep learning architectures. In Proceedings of the 2020 3rd International Conference on Communication System, Computing and IT Applications (CSCITA), Mumbai, India, 3–4 April 2020.

- Pham, T.C.; Tran, G.S.; Nghiem, T.P.; Doucet, A.; Luong, C.M.; Hoang, V.D. A comparative study for classification of skin cancer. In Proceedings of the 2019 International Conference on System Science and Engineering (ICSSE), Dong Hoi, Vietnam, 19–21 July 2019.

- Rahman, Z.; Ami, A.M. A transfer learning based approach for skin lesion classification from imbalanced data. In Proceedings of the 2020 11th International Conference on Electrical and Computer Engineering (ICECE), Dhaka, Bangladesh, 17–19 December 2020.

- Polat, K.; Koc, K.O. Detection of Skin Diseases from Dermoscopy Image Using the combination of Convolutional Neural Network and One-versus-All. J. Artif. Intell. Syst. 2020, 2, 80–97.

- Srinivasu, P.N.; SivaSai, J.G.; Ijaz, M.F.; Bhoi, A.K.; Kim, W.; Kang, J.J. Classification of skin disease using deep learning neural networks with MobileNet V2 and LSTM. Sensors 2021, 21, 2852.

- Kausar, N.; Hameed, A.; Sattar, M.; Ashraf, R.; Imran, A.S.; Abidin, M.Z.U.; Ali, A. Multiclass Skin Cancer Classification Using Ensemble of Fine-Tuned Deep Learning Models. Appl. Sci. 2021, 11, 10593.

| Work | Dataset | Used Techniques | Number of Testing Images | Number of Training Images | Number of Classes |
|---|---|---|---|---|---|
| D. Mendes and N. da Silva [29] | University Medical Center Groningen Dermofit Atlas | ResNet-152 | 956 | 3797 | 12 |
| S. Aijaz [30] | BFL NTU + Dermnet | Segmentation + VGG-19 + LSTM | 188 | 1468 | 6 |
| R. Zare and A. Pourkazemi [32] | HAM10000 | U-Net (segmentation) + DenseNet121 (classification) | - | - | 7 |

| Class | Training Set | Validation Set | Testing Set | Total Set |
|---|---|---|---|---|
| Akiec | 273 | 27 | 27 | 327 |
| Bcc | 451 | 31 | 32 | 514 |
| Mel | 1030 | 42 | 41 | 1113 |
| Vasc | 119 | 12 | 11 | 142 |
| Nv | 5115 | 795 | 795 | 6705 |
| Df | 101 | 7 | 7 | 115 |
| Bkl | 940 | 79 | 80 | 1099 |
| Total | 8029 | 993 | 993 | 10,015 |
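A per-class (stratified) split, in which each class is partitioned separately so that all seven lesion types appear in every subset, can be sketched as below. The function name and the 10% validation and test fractions are hypothetical; the partitions in the table do not follow one fixed ratio (for example, about 12% of Nv images but about 8% of Akiec images are held out for testing), so this sketch does not reproduce those exact counts.

```python
import random
from typing import Dict, List, Tuple

Split = Dict[str, List[str]]


def stratified_split(
    items_by_class: Dict[str, List[str]],
    val_frac: float = 0.1,
    test_frac: float = 0.1,
    seed: int = 42,
) -> Tuple[Split, Split, Split]:
    """Split each class independently into train / validation / test lists."""
    rng = random.Random(seed)
    train: Split = {}
    val: Split = {}
    test: Split = {}
    for label, items in items_by_class.items():
        shuffled = list(items)
        rng.shuffle(shuffled)                     # shuffle within this class only
        n_test = round(len(shuffled) * test_frac)
        n_val = round(len(shuffled) * val_frac)
        test[label] = shuffled[:n_test]
        val[label] = shuffled[n_test:n_test + n_val]
        train[label] = shuffled[n_test + n_val:]  # everything left over
    return train, val, test


if __name__ == "__main__":
    # Hypothetical image identifiers sized like two HAM10000 classes.
    data = {"df": [f"df_{i}" for i in range(115)],
            "nv": [f"nv_{i}" for i in range(6705)]}
    tr, va, te = stratified_split(data)
    for label in data:
        print(label, len(tr[label]), len(va[label]), len(te[label]))
```

Because HAM10000 contains several images of some lesions, a split keyed on a lesion identifier rather than on individual images may be preferable to keep near-duplicate images out of both training and test sets.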

| Class | Number of Training Images (After Augmentation) |
|---|---|
| Akiec | 5684 |
| Bcc | 5668 |
| Mel | 5886 |
| Vasc | 5570 |
| Nv | 5979 |
| Df | 4747 |
| Bkl | 5896 |
| All Classes | 39,430 |
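Comparing these counts with the training partition before augmentation shows how unevenly each class was expanded. The short calculation below uses only numbers reported in the two tables; the variable names are illustrative.

```python
# Training-set counts before augmentation (from the partition table)
original = {"Akiec": 273, "Bcc": 451, "Mel": 1030, "Vasc": 119,
            "Nv": 5115, "Df": 101, "Bkl": 940}
# Training-set counts after augmentation (from the augmented table)
augmented = {"Akiec": 5684, "Bcc": 5668, "Mel": 5886, "Vasc": 5570,
             "Nv": 5979, "Df": 4747, "Bkl": 5896}

# Growth factor of each class after augmentation
multipliers = {cls: augmented[cls] / original[cls] for cls in original}

for cls, factor in sorted(multipliers.items(), key=lambda kv: -kv[1]):
    print(f"{cls:>5}: {original[cls]:>5} -> {augmented[cls]:>5}  (x{factor:.1f})")

print("total:", sum(original.values()), "->", sum(augmented.values()))
```

The two smallest classes, Df and Vasc, grow roughly 47-fold, while Nv grows by only about 17%, which brings every class to a similar size of roughly 4,700 to 6,000 images.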

| Model | Accuracy | Top-2 Accuracy | Top-3 Accuracy | Specificity | Sensitivity | F1-Score |
|---|---|---|---|---|---|---|
| Inception-V3 | 0.897 | 0.960 | 0.981 | 0.89 | 0.90 | 0.89 |

| Model | Accuracy | Top-2 Accuracy | Top-3 Accuracy | Specificity | Sensitivity | F1-Score |
|---|---|---|---|---|---|---|
| InceptionResnet-V2 | 0.913 | 0.968 | 0.986 | 0.90 | 0.91 | 0.91 |
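Top-2 and Top-3 accuracy count a prediction as correct when the true class is among the two or three highest-scoring classes, which is why they exceed plain (top-1) accuracy in both tables. A minimal sketch, assuming per-image class scores (for example, softmax outputs) are available as lists; the function name and the scores are hypothetical toy values.

```python
from typing import Sequence


def top_k_accuracy(
    scores: Sequence[Sequence[float]], labels: Sequence[int], k: int
) -> float:
    """Fraction of samples whose true label is among the k highest-scoring classes."""
    if not labels:
        raise ValueError("no samples")
    hits = 0
    for row, label in zip(scores, labels):
        # Class indices sorted by descending score, truncated to the top k.
        top_k = sorted(range(len(row)), key=lambda c: row[c], reverse=True)[:k]
        if label in top_k:
            hits += 1
    return hits / len(labels)


if __name__ == "__main__":
    scores = [[0.1, 0.7, 0.2],   # true class 1 ranked first
              [0.5, 0.3, 0.2],   # true class 1 ranked second
              [0.2, 0.3, 0.5]]   # true class 0 ranked third
    labels = [1, 1, 0]
    for k in (1, 2, 3):
        print(f"top-{k} accuracy: {top_k_accuracy(scores, labels, k):.3f}")
```

With seven lesion classes, top-k accuracy reaches 1.0 at k = 7 by construction, so it is most informative for small k.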

| Class | Specificity | Sensitivity | F1-Score | Total Images |
|---|---|---|---|---|
| Akiec | 0.71 | 0.37 | 0.49 | 27 |
| Bcc | 0.63 | 0.71 | 0.67 | 24 |
| Bkl | 0.66 | 0.5 | 0.57 | 80 |
| Df | 1 | 0.57 | 0.73 | 7 |
| Mel | 0.57 | 0.63 | 0.6 | 41 |
| Nv | 0.95 | 0.98 | 0.96 | 795 |
| Vasc | 0.89 | 0.8 | 0.84 | 10 |
| Average | 0.89 | 0.9 | 0.89 | 984 |

| Class | Specificity | Sensitivity | F1-Score | Total Images |
|---|---|---|---|---|
| Akiec | 0.9 | 0.33 | 0.49 | 27 |
| Bcc | 0.74 | 0.83 | 0.78 | 24 |
| Bkl | 0.75 | 0.56 | 0.64 | 80 |
| Df | 0.8 | 0.57 | 0.67 | 7 |
| Mel | 0.5 | 0.49 | 0.49 | 41 |
| Nv | 0.94 | 0.99 | 0.97 | 795 |
| Vasc | 1 | 0.8 | 0.89 | 10 |
| Average | 0.9 | 0.91 | 0.9 | 984 |
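The Average rows in the two per-class tables appear consistent with support-weighted means (each class weighted by its number of test images) rather than unweighted macro means. The check below uses the Sensitivity and Total Images columns of the first per-class table; because the per-class values are already rounded, recomputed averages may differ from the reported ones in the last digit.

```python
# Sensitivity and support (Total Images) per class, from the first per-class table
sensitivity = {"Akiec": 0.37, "Bcc": 0.71, "Bkl": 0.5, "Df": 0.57,
               "Mel": 0.63, "Nv": 0.98, "Vasc": 0.8}
support = {"Akiec": 27, "Bcc": 24, "Bkl": 80, "Df": 7,
           "Mel": 41, "Nv": 795, "Vasc": 10}

n_total = sum(support.values())  # number of test images covered by the table

# Macro average: every class counts equally
macro = sum(sensitivity.values()) / len(sensitivity)
# Weighted average: each class counts in proportion to its support
weighted = sum(sensitivity[c] * support[c] for c in sensitivity) / n_total

print(f"n={n_total} macro={macro:.3f} weighted={weighted:.3f}")
```

The weighted mean (about 0.90) matches the reported 0.9, whereas the macro mean (about 0.65) is much lower because Nv, with 795 of the 984 test images, dominates the weighted figure while minority classes such as Akiec, Bkl, and Df have sensitivities between 0.37 and 0.57.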

| Reference | Model | Dataset | Accuracy |
|---|---|---|---|
| [45] | AlexNet | HAM10000 | 84% |
| [46] | MobileNet | HAM10000 | 83.9% |
| [47] | MobileNet, VGG-16 | HAM10000 | 80.61% |
| [48] | SVM | HAM10000 | 74.75% |
| [49] | ResNet | HAM10000 | 78% |
| [49] | Xception | HAM10000 | 82% |
| [49] | DenseNet | HAM10000 | 82% |
| [50] | CNN | HAM10000 | 77% |
| [51] | MobileNet and LSTM | HAM10000 | 85% |
| [16] | RegNetY-3.2GF | HAM10000 | 85.8% |
| [52] | Inception V3 | ISIC 2019 | 91% |
| Proposed | Inception-V3 | HAM10000 | 89.73% |
| Proposed | InceptionResnet-V2 | HAM10000 | 91.26% |

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2023 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (https://creativecommons.org/licenses/by/4.0/).


Alwakid, G.; Gouda, W.; Humayun, M.; Jhanjhi, N.Z. Diagnosing Melanomas in Dermoscopy Images Using Deep Learning. Diagnostics 2023, 13, 1815. https://doi.org/10.3390/diagnostics13101815
