Human Fall Detection Using 3D Multi-Stream Convolutional Neural Networks with Fusion

Abstract

1. Introduction

2. Related Works

2.1. Wearable-Sensors-Based Human Fall Detection Systems

2.2. Machine-Vision-Based Human Fall Detection Systems

2.3. Machine-Learning-Based Human Fall Detection Systems

2.4. Deep-Learning-Based Human Fall Detection Systems

3. Materials and Methods

3.1. Proposed Method

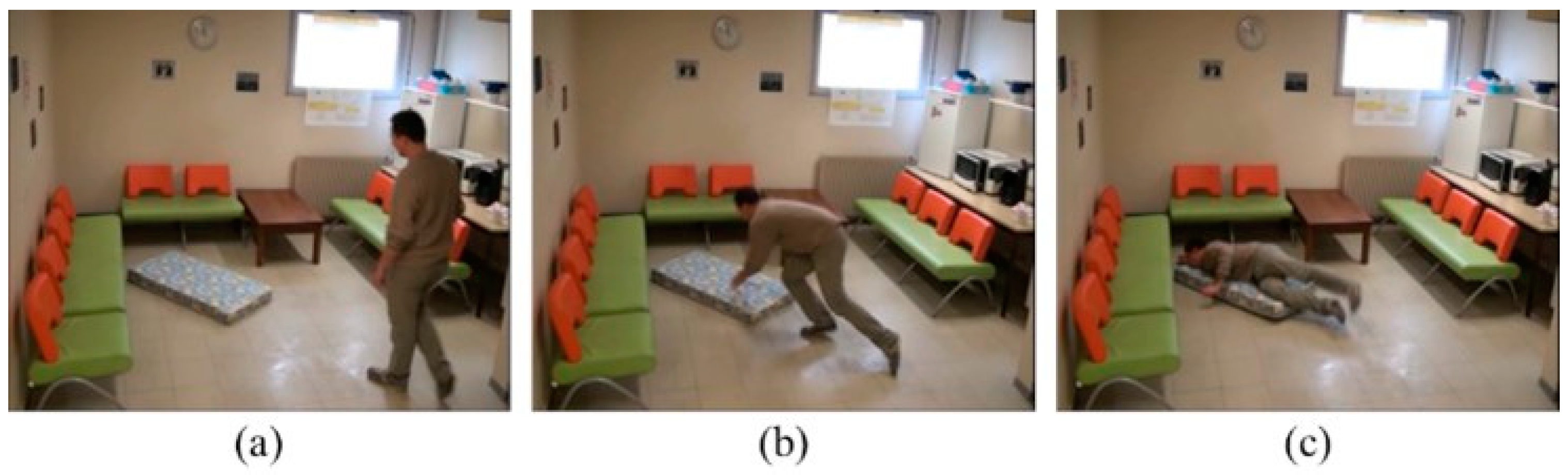

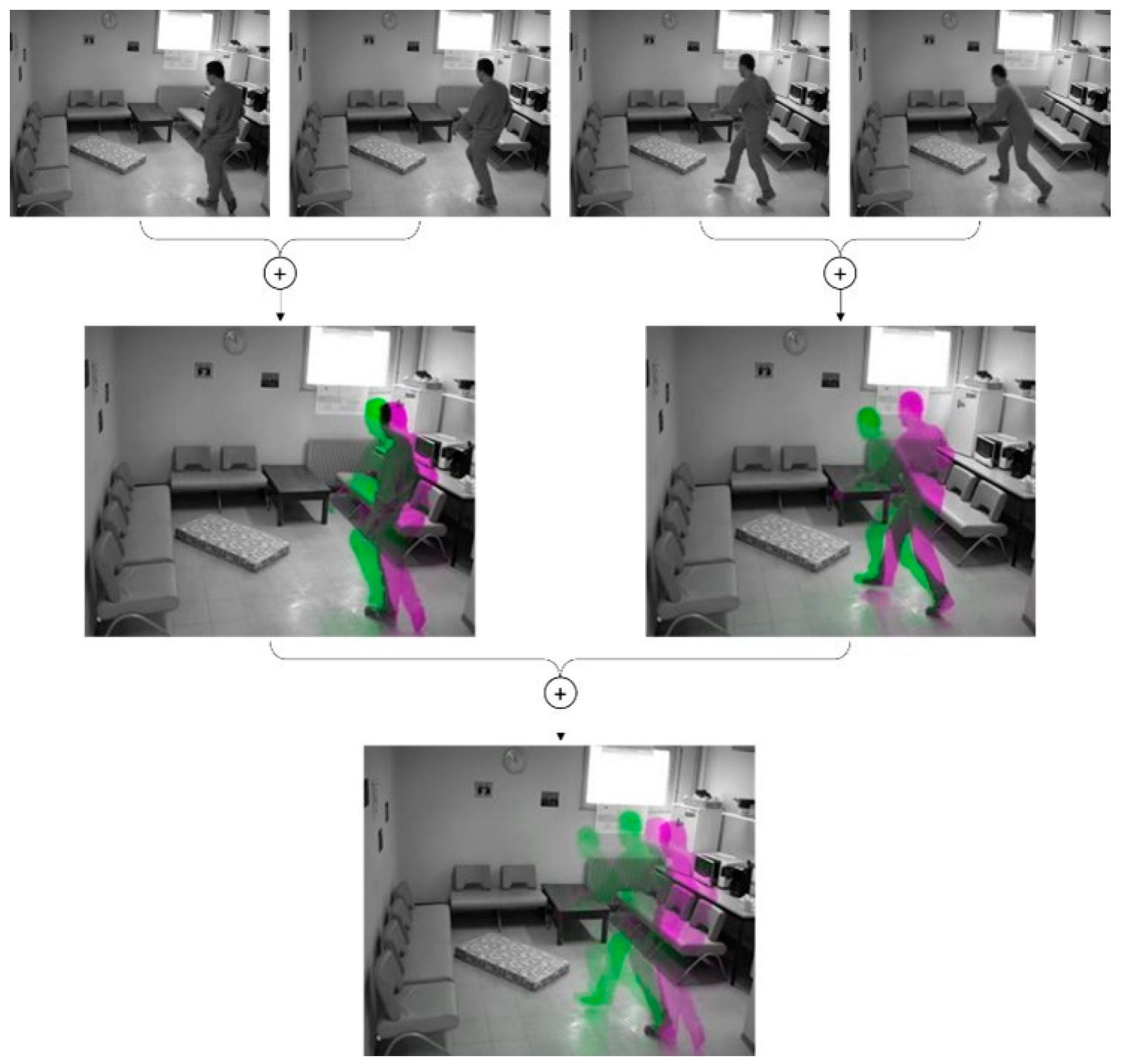

3.2. Pre-Processing of Video Sequences

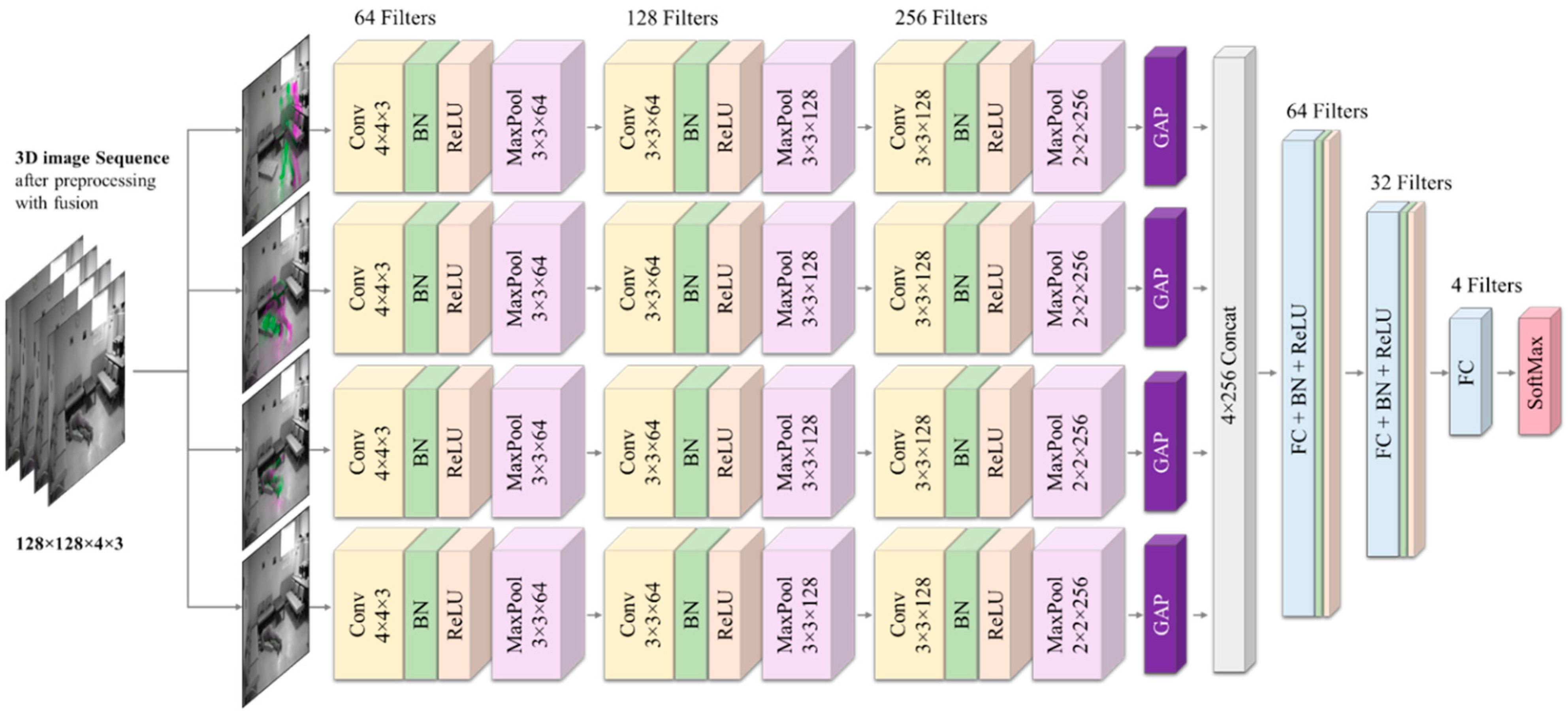

3.3. Model Architecture and Specifications

4. Experiments

4.1. Database Description

4.2. Dataset Preparation

4.3. Experiments and Evaluations

4.4. Discussion

| Model | Accuracy | Sensitivity | Specificity | Precision |
|---|---|---|---|---|
| Chamle et al. [73] | 79.30% | 84.30% | 73.07% | 79.40% |
| Núñez et al. [56] | 97.00% | 99.00% | 97.00% | - |
| Alaoui et al. [74] | 90.90% | 94.56% | 81.08% | 90.84% |
| Poonsri et al. [75] | 86.21% | 93.18% | 64.29% | 91.11% |
| Zou et al. [77] | 97.23% | 100.00% | 97.04% | - |
| Vishnu et al. [78] | 78.50% | 93.00% | - | 81.50% |
| Youssfi et al. [76] | 93.67% | 100.00% | 87.00% | 83.62% |
| Proposed 4S-3DCNN | 99.03% | 99.00% | 99.68% | 99.00% |

5. Conclusions

Author Contributions

Funding

Informed Consent Statement

Acknowledgments

Conflicts of Interest

References

- World Health Organization. Falls. 2021. Available online: https://www.who.int/news-room/fact-sheets/detail/falls (accessed on 10 October 2022).

- Yu, M.; Rhuma, A.; Naqvi, S.M.; Wang, L.; Chambers, J. A posture recognition-based fall detection system for monitoring an elderly person in a smart home environment. IEEE Trans. Inf. Technol. Biomed. 2012, 16, 1274–1286.

- WHO. WHO Global Report on Falls Prevention in Older Age; World Health Organization Ageing and Life Course Unit: Geneva, Switzerland, 2008.

- San-Segundo, R.; Echeverry-Correa, J.D.; Salamea, C.; Pardo, J.M. Human activity monitoring based on hidden Markov models using a smartphone. IEEE Instrum. Meas. Mag. 2016, 19, 27–31.

- Baek, J.; Yun, B.-J. Posture monitoring system for context awareness in mobile computing. IEEE Trans. Instrum. Meas. 2010, 59, 1589–1599.

- Tao, Y.; Hu, H. A novel sensing and data fusion system for 3-D arm motion tracking in telerehabilitation. IEEE Trans. Instrum. Meas. 2008, 57, 1029–1040.

- Mubashir, M.; Shao, L.; Seed, L. A survey on fall detection: Principles and approaches. Neurocomputing 2013, 100, 144–152.

- Shieh, W.-Y.; Huang, J.-C. Falling-incident detection and throughput enhancement in a multi-camera video-surveillance system. Med. Eng. Phys. 2012, 34, 954–963.

- Miaou, S.-G.; Sung, P.-H.; Huang, C.-Y. A customized human fall detection system using omni-camera images and personal information. In Proceedings of the 1st Transdisciplinary Conference on Distributed Diagnosis and Home Healthcare, Arlington, VA, USA, 2–4 April 2006.

- Jansen, B.; Deklerck, R. Context aware inactivity recognition for visual fall detection. In Proceedings of the 2006 Pervasive Health Conference and Workshops, Innsbruck, Austria, 29 November–1 December 2006.

- Voulodimos, A.; Doulamis, N.; Doulamis, A.; Protopapadakis, E. Deep Learning for Computer Vision: A Brief Review. Comput. Intell. Neurosci. 2018, 2018, 7068349.

- Islam, M.M.; Nooruddin, S.; Karray, F.; Muhammad, G. Human activity recognition using tools of convolutional neural networks: A state of the art review, data sets, challenges, and future prospects. Comput. Biol. Med. 2022, 149, 106060.

- Muhammad, G.; Alshehri, F.; Karray, F.; El Saddik, A.; Alsulaiman, M.; Falk, T.H. A comprehensive survey on multimodal medical signals fusion for smart healthcare systems. Inf. Fusion 2021, 76, 355–375.

- Altaheri, H.; Muhammad, G.; Alsulaiman, M.; Amin, S.U.; Altuwaijri, G.A.; Abdul, W.; Bencherif, M.A.; Faisal, M. Deep learning techniques for classification of electroencephalogram (EEG) motor imagery (MI) signals: A review. In Neural Computing and Applications; Springer: Berlin/Heidelberg, Germany, 2021.

- Pathak, A.R.; Pandey, M.; Rautaray, S. Application of Deep Learning for Object Detection. Procedia Comput. Sci. 2018, 132, 1706–1717.

- Blasch, E.; Zheng, Y.; Liu, Z. Multispectral Image Fusion and Colorization; SPIE Press: Washington, DC, USA, 2018.

- Masud, M.; Gaba, G.S.; Choudhary, K.; Hossain, M.S.; Alhamid, M.F.; Muhammad, G. Lightweight and Anonymity-Preserving User Authentication Scheme for IoT-Based Healthcare. IEEE Internet Things J. 2021, 9, 2649–2656.

- Muhammad, G.; Hossain, M.S. COVID-19 and Non-COVID-19 Classification using Multi-layers Fusion From Lung Ultrasound Images. Inf. Fusion 2021, 72, 80–88.

- Haghighat, M.B.A.; Aghagolzadeh, A.; Seyedarabi, H. Multi-focus image fusion for visual sensor networks in DCT domain. Comput. Electr. Eng. 2011, 37, 789–797.

- Haghighat, M.B.A.; Aghagolzadeh, A.; Seyedarabi, H. A non-reference image fusion metric based on mutual information of image features. Comput. Electr. Eng. 2011, 37, 744–756.

- Nafea, O.; Abdul, W.; Muhammad, G.; Alsulaiman, M. Sensor-Based Human Activity Recognition with Spatio-Temporal Deep Learning. Sensors 2021, 21, 2141.

- de Quadros, T.; Lazzaretti, A.E.; Schneider, F.K. A Movement Decomposition and Machine Learning-Based Fall Detection System Using Wrist Wearable Device. IEEE Sens. J. 2018, 18, 5082–5089.

- Biroš, O.; Karchnak, J.; Šimšík, D.; Hošovský, A. Implementation of wearable sensors for fall detection into smart household. In Proceedings of the 2014 IEEE 12th International Symposium on Applied Machine Intelligence and Informatics (SAMI), Herl’any, Slovakia, 23–25 January 2014.

- Özdemir, A.T.; Barshan, B. Detecting Falls with Wearable Sensors Using Machine Learning Techniques. Sensors 2014, 14, 10691–10708.

- Pernini, L.; Belli, A.; Palma, L.; Pierleoni, P.; Pellegrini, M.; Valenti, S. A High Reliability Wearable Device for Elderly Fall Detection. IEEE Sens. J. 2015, 15, 4544–4553.

- Yazar, A.; Erden, F.; Cetin, A.E. Multi-sensor ambient assisted living system for fall detection. In Proceedings of the IEEE International Conference on Acoustics, Speech, and Signal Processing (ICASSP), Florence, Italy, 4–9 May 2014.

- Santos, G.L.; Endo, P.T.; Monteiro, K.; Rocha, E.; Silva, I.; Lynn, T. Accelerometer-Based Human Fall Detection Using Convolutional Neural Networks. Sensors 2019, 19, 1644.

- Chelli, A.; Pätzold, M. A Machine Learning Approach for Fall Detection and Daily Living Activity Recognition. IEEE Access 2019, 7, 38670–38687.

- Muhammad, G.; Rahman, S.K.M.M.; Alelaiwi, A.; Alamri, A. Smart Health Solution Integrating IoT and Cloud: A Case Study of Voice Pathology Monitoring. IEEE Commun. Mag. 2017, 55, 69–73.

- Alshehri, F.; Muhammad, G. A comprehensive survey of the Internet of Things (IoT) and AI-based smart healthcare. IEEE Access 2021, 9, 3660–3678.

- Muhammad, G.; Alhussein, M. Security, trust, and privacy for the Internet of vehicles: A deep learning approach. IEEE Consum. Electron. Mag. 2022, 11, 49–55.

- Leone, A.; Diraco, G.; Siciliano, P. Detecting falls with 3D range camera in ambient assisted living applications: A preliminary study. Med. Eng. Phys. 2011, 33, 770–781.

- Jokanovic, B.; Amin, M.; Ahmad, F. Radar fall motion detection using deep learning. In Proceedings of the 2016 IEEE Radar Conference (RadarConf16), Philadelphia, PA, USA, 2–6 May 2016.

- Amin, M.G.; Zhang, Y.D.; Ahmad, F.; Ho, K.D. Radar Signal Processing for Elderly Fall Detection: The future for in-home monitoring. IEEE Signal Process. Mag. 2016, 33, 71–80.

- Yang, L.; Ren, Y.; Hu, H.; Tian, B. New Fast Fall Detection Method Based on Spatio-Temporal Context Tracking of Head by Using Depth Images. Sensors 2015, 15, 23004–23019.

- Ma, X.; Wang, H.; Xue, B.; Zhou, M.; Ji, B.; Li, Y. Depth-Based Human Fall Detection via Shape Features and Improved Extreme Learning Machine. IEEE J. Biomed. Health Inform. 2014, 18, 1915–1922.

- Angal, Y.; Jagtap, A. Fall detection system for older adults. In Proceedings of the IEEE International Conference on Advances in Electronics, Communication and Computer Technology (ICAECCT), Pune, India, 2–3 December 2016.

- Stone, E.E.; Skubic, M. Fall Detection in Homes of Older Adults Using the Microsoft Kinect. IEEE J. Biomed. Health Inform. 2015, 19, 290–301.

- Yang, L.; Ren, Y.; Zhang, W. 3D depth image analysis for indoor fall detection of elderly people. Digit. Commun. Netw. 2016, 2, 24–34.

- Adhikari, K.; Bouchachia, A.; Nait-Charif, H. Activity recognition for indoor fall detection using convolutional neural network. In Proceedings of the 2017 Fifteenth IAPR International Conference on Machine Vision Applications (MVA), Nagoya, Japan, 8–12 May 2017.

- Fan, K.; Wang, P.; Zhuang, S. Human fall detection using slow feature analysis. Multimed. Tools Appl. 2019, 78, 9101–9128.

- Xu, H.; Leixian, S.; Zhang, Q.; Cao, G. Fall Behavior Recognition Based on Deep Learning and Image Processing. Int. J. Mob. Comput. Multimed. Commun. 2018, 9, 1–15.

- Bian, Z.-P.; Hou, J.; Chau, L.-P.; Magnenat-Thalmann, N. Fall Detection Based on Body Part Tracking Using a Depth Camera. IEEE J. Biomed. Health Inform. 2015, 19, 430–439.

- Wang, S.; Chen, L.; Zhou, Z.; Sun, X.; Dong, J. Human Fall Detection in Surveillance Video Based on PCANet. Multimed. Tools Appl. 2016, 75, 11603–11613.

- Benezeth, Y.; Emile, B.; Laurent, H.; Rosenberger, C. Vision-Based System for Human Detection and Tracking in Indoor Environment. Int. J. Soc. Robot. 2009, 2, 41–52.

- Liu, H.; Zuo, C. An Improved Algorithm of Automatic Fall Detection. AASRI Procedia 2012, 1, 353–358.

- Lu, K.-L.; Chu, E.T.-H. An Image-Based Fall Detection System for the Elderly. Appl. Sci. 2018, 8, 1995.

- Debard, G.; Karsmakers, P.; Deschodt, M.; Vlaeyen, E.; Bergh, J.; Dejaeger, E.; Milisen, K.; Goedemé, T.; Tuytelaars, T.; Vanrumste, B. Camera Based Fall Detection Using Multiple Features Validated with Real Life Video. In Proceedings of the 7th International Conference on Intelligent Environments, Nottingham, UK, 25–28 July 2011; Volume 10.

- Shawe-Taylor, J.; Sun, S. Kernel Methods and Support Vector Machines. Acad. Press Libr. Signal Process. 2014, 1, 857–881.

- Cristianini, N.; Shawe-Taylor, J. An Introduction to Support Vector Machines and Other Kernel-Based Learning Methods; Cambridge University Press: London, UK, 2001; Volume 22.

- Muaz, M.; Ali, S.; Fatima, A.; Idrees, F.; Nazar, N. Human Fall Detection. In Proceedings of the 2013 16th International Multi Topic Conference, INMIC 2013, Lahore, Pakistan, 19–20 December 2013.

- Lu, N.; Wu, Y.; Feng, L.; Song, J. Deep Learning for Fall Detection: Three-Dimensional CNN Combined With LSTM on Video Kinematic Data. IEEE J. Biomed. Health Inform. 2019, 23, 314–323.

- Nafea, O.; Abdul, W.; Muhammad, G. Multi-Sensor Human Activity Recognition using CNN and GRU. Int. J. Multimed. Inf. Retr. 2022, 11, 135–147.

- Min, W.; Cui, H.; Rao, H.; Li, Z.; Yao, L. Detection of Human Falls on Furniture Using Scene Analysis Based on Deep Learning and Activity Characteristics. IEEE Access 2018, 6, 9324–9335.

- Kong, Y.; Huang, J.; Huang, S.; Wei, Z.; Wang, S. Learning Spatiotemporal Representations for Human Fall Detection in Surveillance Video. J. Vis. Commun. Image Represent. 2019, 59, 215–230.

- Núñez-Marcos, A.; Azkune, G.; Arganda-Carreras, I. Vision-Based Fall Detection with Convolutional Neural Networks. Wirel. Commun. Mob. Comput. 2017, 2017, 9474806.

- Fan, Y.; Levine, M.D.; Wen, G.; Qiu, S. A deep neural network for real-time detection of falling humans in naturally occurring scenes. Neurocomputing 2017, 260, 43–58.

- Taramasco, C.; Rodenas, T.; Martinez, F.; Fuentes, P.; Munoz, R.; Olivares, R.; De Albuquerque, V.H.C.; Demongeot, J. A Novel Monitoring System for Fall Detection in Older People. IEEE Access 2018, 6, 43563–43574.

- Nogas, J.; Khan, S.S.; Mihailidis, A. DeepFall: Non-Invasive Fall Detection with Deep Spatio-Temporal Convolutional Autoencoders. J. Healthc. Inform. Res. 2020, 4, 50–70.

- Gu, C.; Sun, C.; Ross, D.A.; Vondrick, C.; Pantofaru, C.; Li, Y.; Vijayanarasimhan, S.; Toderici, G.; Ricco, S.; Sukthankar, R.; et al. Ava: A video dataset of spatio-temporally localized atomic visual actions. In Proceedings of the IEEE Conference on Computer Vision and Pattern Recognition, Salt Lake City, UT, USA, 18–22 June 2018.

- Peng, X.; Schmid, C. Multi-region Two-Stream R-CNN for Action Detection. In European Conference on Computer Vision; Springer: Amsterdam, The Netherlands, 2016.

- Ren, S.; He, K.; Girshick, R.; Sun, J. Faster R-CNN: Towards Real-Time Object Detection with Region Proposal Networks. IEEE Trans. Pattern Anal. Mach. Intell. 2017, 39, 1137–1149.

- Carreira, J.; Zisserman, A. Quo Vadis, Action Recognition? A New Model and the Kinetics Dataset. In Proceedings of the 2017 IEEE Conference on Computer Vision and Pattern Recognition (CVPR), Honolulu, HI, USA, 21–26 July 2017.

- Muhammad, G.; Hossain, M.S.; Kumar, N. EEG-Based Pathology Detection for Home Health Monitoring. IEEE J. Sel. Areas Commun. 2021, 39, 603–610.

- Altuwaijri, G.A.; Muhammad, G. A Multibranch of Convolutional Neural Network Models for Electroencephalogram-Based Motor Imagery Classification. Biosensors 2022, 12, 22.

- Al-Hammadi, M.; Muhammad, G.; Abdul, W.; Alsulaiman, M.; Bencherif, M.A.; Alrayes, T.S.; Mathkour, H.; Mekhtiche, M.A. Deep Learning-Based Approach for Sign Language Gesture Recognition With Efficient Hand Gesture Representation. IEEE Access 2020, 8, 192527–192542.

- Tu, Z.; Xie, W.; Qin, Q.; Poppe, R.; Veltkamp, R.C.; Li, B.; Yuan, J. Multi-stream CNN: Learning representations based on human-related regions for action recognition. Pattern Recognit. 2018, 79, 32–43.

- Al-Hammadi, M.; Muhammad, G.; Abdul, W.; Alsulaiman, M.; Bencherif, M.A.; Mekhtiche, M.A. Hand Gesture Recognition for Sign Language Using 3DCNN. IEEE Access 2020, 8, 79491–79509.

- Gaur, L.; Bhatia, U.; Jhanjhi, N.Z.; Muhammad, G.; Masud, M. Medical Image-based Detection of COVID-19 using Deep Convolution Neural Networks. Multimed. Syst. 2022, 1–22.

- Altuwaijri, G.A.; Muhammad, G.; Altaheri, H.; AlSulaiman, M. A Multi-Branch Convolutional Neural Network with Squeeze-and-Excitation Attention Blocks for EEG-Based Motor Imagery Signals Classification. Diagnostics 2022, 12, 995.

- Musallam, Y.K.; AlFassam, N.I.; Muhammad, G.; Amin, S.U.; Alsulaiman, M.; Abdul, W.; Altaheri, H.; Bencherif, M.A.; Algabri, M. Electroencephalography-based motor imagery classification using temporal convolutional network fusion. Biomed. Signal Process. Control 2021, 69, 102826.

- Charfi, I.; Mitéran, J.; Dubois, J.; Atri, M.; Tourki, R. Optimised spatio-temporal descriptors for real-time fall detection: Comparison of SVM and Adaboost based classification. J. Electron. Imaging 2013, 22, 17.

- Chamle, M.; Gunale, K.G.; Warhade, K.K. Automated unusual event detection in video surveillance. In Proceedings of the 2016 International Conference on Inventive Computation Technologies (ICICT), Coimbatore, India, 26–27 August 2016.

- Alaoui, A.Y.; el Hassouny, A.; Thami, R.O.H.; Tairi, H. Human Fall Detection Using Von Mises Distribution and Motion Vectors of Interest Points. In Proceedings of the 2nd international Conference on Big Data, Cloud and Applications (BDCA’17), Tetouan, Morocco, 29–30 March 2017.

- Poonsri, A.; Chiracharit, W. Improvement of fall detection using consecutive-frame voting. In Proceedings of the 2018 International Workshop on Advanced Image Technology (IWAIT), Chiang Mai, Thailand, 7–9 January 2018.

- Alaoui, A.Y.; Tabii, Y.; Thami, R.O.H.; Daoudi, M.; Berretti, S.; Pala, P. Fall Detection of Elderly People Using the Manifold of Positive Semidefinite Matrices. J. Imaging 2021, 7, 109.

- Zou, S.; Min, W.; Liu, L.; Wang, Q.; Zhou, X. Movement Tube Detection Network Integrating 3D CNN and Object Detection Framework to Detect Fall. Electronics 2021, 10, 898.

- Vishnu, C.; Datla, R.; Roy, D.; Babu, S.; Mohan, C.K. Human Fall Detection in Surveillance Videos Using Fall Motion Vector Modeling. IEEE Sens. J. 2021, 21, 17162–17170.

| Layer Type | Number of Filters | Size of Kernel | Size of Stride | Size of Feature Map |
|---|---|---|---|---|
| Input Layer | | | | 128 × 128 × 4 × 3 |
| 1st Convolutional layer | | | | |
| Branch 1 | 64 | 4 × 4 × 3 | 2 × 2 × 3 | 63 × 63 × 1 × 64 |
| Branch 2 | 64 | 4 × 4 × 3 | 2 × 2 × 3 | 63 × 63 × 1 × 64 |
| Branch 3 | 64 | 4 × 4 × 3 | 2 × 2 × 3 | 63 × 63 × 1 × 64 |
| Branch 4 | 64 | 4 × 4 × 3 | 2 × 2 × 3 | 63 × 63 × 1 × 64 |
| 2nd Convolutional layer | | | | |
| Branch 1 | 128 | 3 × 3 × 64 | 2 × 2 × 64 | 15 × 15 × 1 × 128 |
| Branch 2 | 128 | 3 × 3 × 64 | 2 × 2 × 64 | 15 × 15 × 1 × 128 |
| Branch 3 | 128 | 3 × 3 × 64 | 2 × 2 × 64 | 15 × 15 × 1 × 128 |
| Branch 4 | 128 | 3 × 3 × 64 | 2 × 2 × 64 | 15 × 15 × 1 × 128 |
| 3rd Convolutional layer | | | | |
| Branch 1 | 256 | 3 × 3 × 128 | 1 × 1 × 128 | 5 × 5 × 1 × 256 |
| Branch 2 | 256 | 3 × 3 × 128 | 1 × 1 × 128 | 5 × 5 × 1 × 256 |
| Branch 3 | 256 | 3 × 3 × 128 | 1 × 1 × 128 | 5 × 5 × 1 × 256 |
| Branch 4 | 256 | 3 × 3 × 128 | 1 × 1 × 128 | 5 × 5 × 1 × 256 |
| 1st Max Pooling layer | | | | |
| Branch 1 | | 3 × 3 × 1 | 2 × 2 × 64 | 31 × 31 × 1 × 64 |
| Branch 2 | | 3 × 3 × 1 | 2 × 2 × 64 | 31 × 31 × 1 × 64 |
| Branch 3 | | 3 × 3 × 1 | 2 × 2 × 64 | 31 × 31 × 1 × 64 |
| Branch 4 | | 3 × 3 × 1 | 2 × 2 × 64 | 31 × 31 × 1 × 64 |
| 2nd Max Pooling layer | | | | |
| Branch 1 | | 3 × 3 × 1 | 2 × 2 × 128 | 7 × 7 × 1 × 128 |
| Branch 2 | | 3 × 3 × 1 | 2 × 2 × 128 | 7 × 7 × 1 × 128 |
| Branch 3 | | 3 × 3 × 1 | 2 × 2 × 128 | 7 × 7 × 1 × 128 |
| Branch 4 | | 3 × 3 × 1 | 2 × 2 × 128 | 7 × 7 × 1 × 128 |
| 3rd Max Pooling layer | | | | |
| Branch 1 | | 2 × 2 × 1 | 1 × 1 × 256 | 4 × 4 × 1 × 256 |
| Branch 2 | | 2 × 2 × 1 | 1 × 1 × 256 | 4 × 4 × 1 × 256 |
| Branch 3 | | 2 × 2 × 1 | 1 × 1 × 256 | 4 × 4 × 1 × 256 |
| Branch 4 | | 2 × 2 × 1 | 1 × 1 × 256 | 4 × 4 × 1 × 256 |
| Fully connected (FC) layer | | | | |
| 1st FC layer | 64 | | | 1 × 1 × 1 × 64 |
| 2nd FC layer | 32 | | | 1 × 1 × 1 × 32 |
| 3rd FC layer | 4 | | | 1 × 1 × 1 × 4 |
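
The spatial feature-map sizes in the architecture table can be checked with the usual unpadded output-size formula, floor((n − k) / s) + 1. The sketch below is only an illustration: it assumes no padding and assumes that each convolution is followed by its max-pooling layer (conv1, pool1, conv2, pool2, conv3, pool3), an ordering the table does not state but with which its sizes agree. The names `out_size`, `LAYERS`, and `trace_sizes` are hypothetical.

```python
def out_size(n: int, kernel: int, stride: int) -> int:
    """Output length along one axis for an unpadded convolution or pooling."""
    return (n - kernel) // stride + 1


# (layer name, spatial kernel, spatial stride), taken from the table above.
# The interleaved conv -> pool order is an assumption, not stated in the table.
LAYERS = [
    ("conv1", 4, 2),
    ("pool1", 3, 2),
    ("conv2", 3, 2),
    ("pool2", 3, 2),
    ("conv3", 3, 1),
    ("pool3", 2, 1),
]


def trace_sizes(n: int = 128) -> dict:
    """Propagate a square n x n frame through LAYERS and record each output size."""
    sizes = {}
    for name, kernel, stride in LAYERS:
        n = out_size(n, kernel, stride)
        sizes[name] = n
    return sizes


if __name__ == "__main__":
    for name, size in trace_sizes().items():
        print(f"{name}: {size} x {size}")
```

Starting from the 128 × 128 input, this trace yields 63, 31, 15, 7, 5, and 4, which matches the spatial dimensions listed for each branch.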

| Class | Generated Videos | Generated Sequences |
|---|---|---|
| Standing | 128 | 1498 |
| Falling | 104 | 1464 |
| Fallen | 103 | 1793 |
| Other | 120 | 1637 |

| Measurement | Equation | Equation No. |
|---|---|---|
| Accuracy | | (2) |
| Sensitivity | | (3) |
| Specificity | | (4) |
| Precision | | (5) |
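
The equation bodies for these four measurements did not survive in this copy. For orientation only, the standard confusion-matrix definitions are given below, with $TP$, $TN$, $FP$, and $FN$ denoting true positives, true negatives, false positives, and false negatives. These are the textbook forms and are an assumption here; the authors' exact formulation (for example, how per-class values are averaged across the four classes) may differ. The tags simply mirror the equation numbers in the table.

```latex
\begin{align}
\text{Accuracy}    &= \frac{TP + TN}{TP + TN + FP + FN} \tag{2} \\
\text{Sensitivity} &= \frac{TP}{TP + FN} \tag{3} \\
\text{Specificity} &= \frac{TN}{TN + FP} \tag{4} \\
\text{Precision}   &= \frac{TP}{TP + FP} \tag{5}
\end{align}
```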

| Model | Accuracy (%) | Sensitivity (%) | Specificity (%) | Precision (%) |
|---|---|---|---|---|
| GoogleNet | 97.06 ± 1.09 | 97.45 ± 1.18 | 99.19 ± 0.36 | 97.51 ± 1.05 |
| SqueezeNet | 97.62 ± 0.29 | 97.57 ± 0.31 | 99.22 ± 0.10 | 97.55 ± 0.30 |
| ResNet18 | 98.75 ± 0.09 | 98.73 ± 0.08 | 99.59 ± 0.03 | 98.71 ± 0.09 |
| DarkNet19 | 97.45 ± 0.12 | 97.35 ± 0.13 | 99.15 ± 0.04 | 97.39 ± 0.14 |
| 4S-3DCNN | 99.03 ± 0.44 | 99.00 ± 0.46 | 99.68 ± 0.15 | 99.00 ± 0.46 |

| Model | Accuracy (%) | No. of Parameters | No. of Layers | Information Density |
|---|---|---|---|---|
| GoogleNet | 97.06% | 14.5 M | 142 | 6.694 |
| SqueezeNet | 97.62% | 2.6 M | 68 | 37.546 |
| ResNet18 | 98.75% | 33.1 M | 71 | 2.983 |
| DarkNet19 | 97.45% | 57.9 M | 64 | 1.523 |
| Proposed 4S-3DCNN | 99.03% | 1.5 M | 67 | 66.020 |
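
The Information Density column appears to be the accuracy (in percent) divided by the number of parameters (in millions). That reading is inferred from the table values, not from a definition in this copy, so the sketch below should be taken as an assumption; `information_density` and `MODELS` are hypothetical names. Under this reading, four of the five rows reproduce the listed value to three decimals, while DarkNet19 comes out near 1.683 rather than the listed 1.523, so the authors' definition may differ in some detail.

```python
def information_density(accuracy_pct: float, params_millions: float) -> float:
    """Accuracy (%) per million parameters; an assumed reading of the table column."""
    return accuracy_pct / params_millions


# (accuracy in %, parameters in millions), copied from the table above.
MODELS = {
    "GoogleNet": (97.06, 14.5),
    "SqueezeNet": (97.62, 2.6),
    "ResNet18": (98.75, 33.1),
    "DarkNet19": (97.45, 57.9),
    "Proposed 4S-3DCNN": (99.03, 1.5),
}

if __name__ == "__main__":
    for name, (acc, params) in MODELS.items():
        print(f"{name}: {information_density(acc, params):.3f}")
```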


© 2022 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (https://creativecommons.org/licenses/by/4.0/).

Alanazi, T.; Muhammad, G. Human Fall Detection Using 3D Multi-Stream Convolutional Neural Networks with Fusion. Diagnostics 2022, 12, 3060. https://doi.org/10.3390/diagnostics12123060
