1. Introduction

Artificial intelligence (AI) technology has advanced significantly with the development of hybrid systems that combine neural networks, logic programming models and satisfiability (SAT) structures. The artificial neural network (ANN) is a robust analytical model that specialists and researchers have investigated and applied extensively, owing to its capacity to handle and represent complex problems. The Hopfield neural network (HNN), established by Hopfield & Tank [1], is one of many neural networks. The HNN is a recurrent network that behaves similarly to the human brain and can solve various challenging mathematical problems. A symbolic representation that governs the data stored in the HNN was produced using Wan Abdullah's (WA) approach, which describes logic rules [2]. Abdullah proposed one of the earliest approaches for incorporating SAT into the HNN, embedding the SAT structure into the HNN by minimising a cost function. Abdullah introduced logic programming as a symbolic rule for the HNN and obtained the synaptic weights by comparing the cost function of the logical symbols with the final Lyapunov energy function. The method thereby outperformed traditional learning methods such as Hebbian learning and could effectively compute the relationships within the logical reasoning.

The work was extended by Sathasivam using a specific SAT structure, Horn Satisfiability (HornSAT) [3], which demonstrated how well HornSAT converges to the minimum energy. Years later, Kasihmuddin et al. [4] pioneered 2-Satisfiability (2SAT), implementing the 2SAT structure in the HNN as a systematic logical rule. In that work, 2SAT in the HNN achieved a high global minima ratio within a reasonable computation time. Every clause of this logical structure contains two literals joined by disjunction, and the clauses are joined by conjunction. The logical rule was incorporated into the HNN by comparing the cost function with the Lyapunov energy function. Mansor et al. [5] then increased the order of the logical rule by proposing a higher-order systematic logical rule with three literals per clause, namely 3-Satisfiability (3SAT) in the HNN. The third-order synaptic weights are determined by comparing the third-order Lyapunov energy function with the cost function. Although the associative memory of the HNN with 3SAT grows exponentially, the study reported a superior global minima ratio. The proposed 3SAT increases the storage capacity of the HNN because the neurons' tendency to produce many local minima solutions remains limited. This research contributed substantially to the use of systematic SAT in ANNs. The drawback of any kSAT, however, is the rigidity of its logical composition, which contributes to overfitting in the HNN. As the number of neurons increases, the fixed number of literals per clause yields low synaptic weight values, which reduces the likelihood of finding global minimum solutions. A variety of candidate solutions in the search space is required to ensure thorough exploration of the neuron states. To overcome this drawback, we propose a hybridised network for 3SAT logic that uses fuzzy logic and a metaheuristic algorithm to widen the search space significantly and improve the representation of logic programming in the HNN.
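To make the idea of "minimising the cost function" concrete, the sketch below evaluates a 3SAT cost function in the style commonly associated with the WA method. It assumes bipolar neuron states S ∈ {−1, 1}, a hypothetical clause encoding as three signed, 1-based indices (positive for a literal, negative for its negation), and the per-literal term (1 − S)/2 for a positive literal and (1 + S)/2 for a negated one; the formulation in Sections 2 and 3 may use different notation. Each clause contributes 1 only when all its literals are false, so the cost is zero exactly when every clause is satisfied.

```python
from typing import List, Sequence, Tuple

# Hypothetical encoding: +i means literal S_i, -i means NOT S_i (1-based indices).
Clause = Tuple[int, int, int]


def literal_false(state: Sequence[int], lit: int) -> float:
    """Return 1.0 if the literal is false under the bipolar state, else 0.0."""
    s = state[abs(lit) - 1]  # bipolar value in {-1, 1}
    # A positive literal is false when s == -1; a negated literal when s == 1.
    return (1 - s) / 2 if lit > 0 else (1 + s) / 2


def cost_3sat(state: Sequence[int], clauses: List[Clause]) -> float:
    """Sum over clauses of the product of 'literal is false' terms.

    The result equals the number of unsatisfied clauses, so it is 0
    if and only if every clause is satisfied.
    """
    total = 0.0
    for clause in clauses:
        term = 1.0
        for lit in clause:
            term *= literal_false(state, lit)
        total += term
    return total


if __name__ == "__main__":
    # Hypothetical formula: (S1 v S2 v ~S3) AND (~S1 v S3 v S4)
    clauses = [(1, 2, -3), (-1, 3, 4)]
    print(cost_3sat([1, -1, 1, -1], clauses))   # both clauses satisfied -> 0.0
    print(cost_3sat([-1, -1, 1, -1], clauses))  # first clause unsatisfied -> 1.0
```

Using products of "literal is false" terms keeps the cost polynomial in the neuron states, which is what allows it to be matched term by term against a higher-order Lyapunov energy function.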

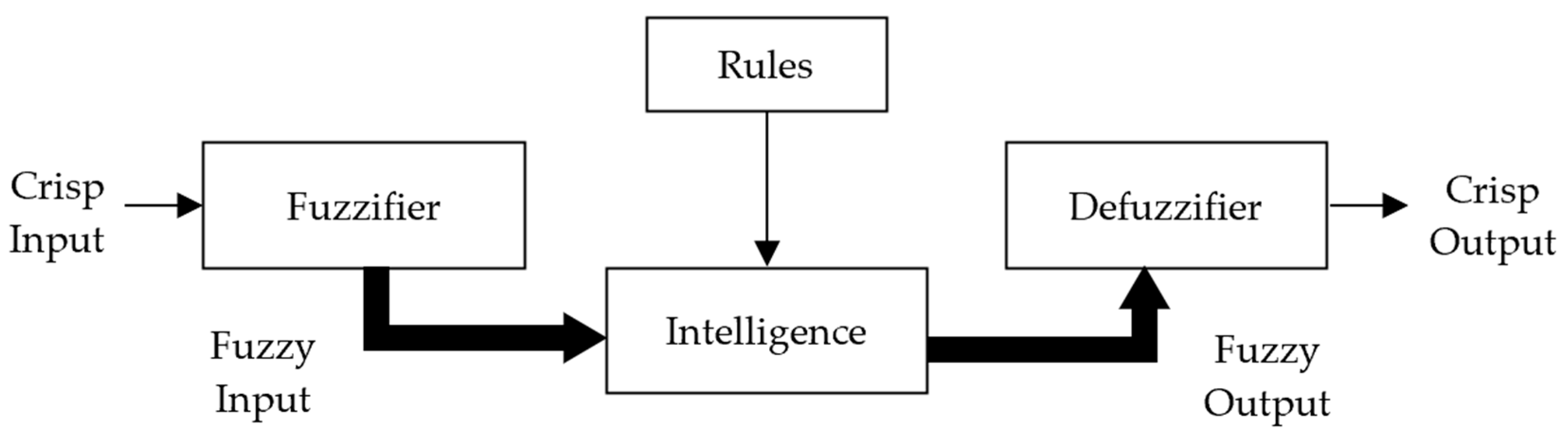

The word fuzzy refers to things that appear not to be adequate or ambiguous [

6]. Numerous-valued logic allows an idea to have some degree of reality [

7]. It can simulate human experience and decision-making. Fuzzy logic has been used in numerous scientific fields, including neural networks, machine learning, artificial intelligence, and data mining [

8,

9,

10,

11]. This method can handle insufficient, inaccurate, inconsistent, or uncertain data. Since then, a broad range of research has shown the benefits of this approach, including the fact that the fuzzy sets are straightforward to use, the method does not require a sophisticated mathematical model, and the cost of computing is little [

12]. Fuzzy logic builds the proper rules via a fuzzification and defuzzification process. Incorporating the fuzzy logic process with the HNN lowers the processing cost and enables the suggested scheme to accommodate more accurate neurons. As part of the defuzzifying procedure, any suboptimal neuron will be modified using the alpha-cut technique until the ideal neuron state is found. Then, we integrate the network with a metaheuristic algorithm, a Genetic Algorithm (GA). The Darwinian Theory of Survival of the Fittest is replicated by the GA, a form of natural selection. The GA was proposed by Holland [

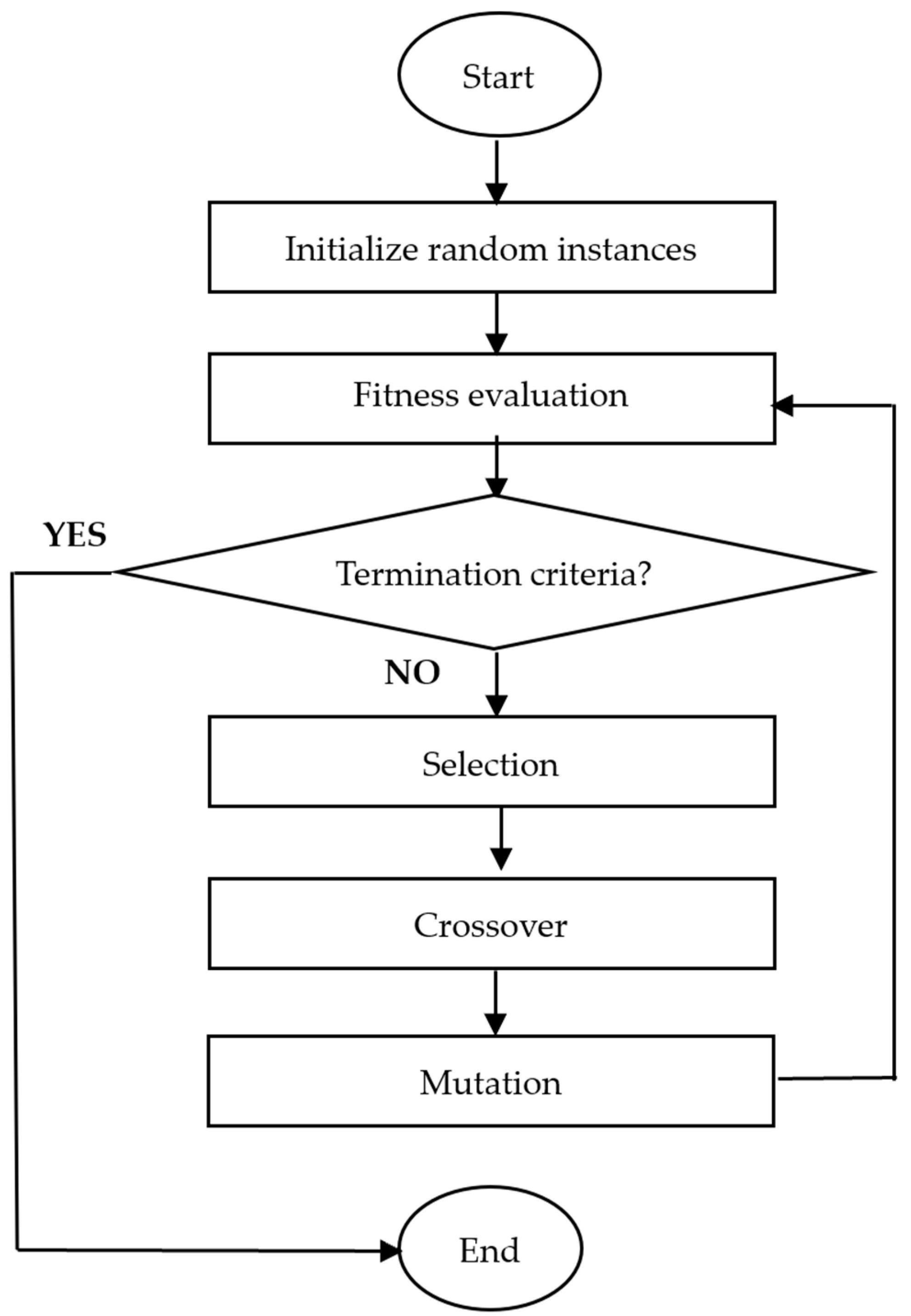

13] as a widely used optimisation technique founded on the population of metaheuristic algorithms. A population of potential solutions is improved over time as part of the implementation of the GA, which is an iterative process. The paradigm of computer intelligence known as metaheuristic algorithms is particularly effective at handling challenging optimisation problems.

Thus, the objectives of this research are as follows: (1) to construct a continuous search space by applying a fuzzy logic system in the training phase of 3-Satisfiability logic; (2) to design a structure that integrates a Genetic Algorithm to improve the logical combinations of reasoning so as to minimise the cost function; (3) to introduce a new performance metric that evaluates the method's efficiency in obtaining the maximum number of satisfied clauses in the learning phase; and (4) to conduct a thorough assessment of the proposed hybrid network with the Hopfield neural network model on the generated datasets. Our findings indicate that the proposed HNN-3SATFuzzyGA exhibits the best performance in terms of error analysis, efficiency evaluation, energy analysis, similarity index and computational time. The remainder of this article is organised as follows:

Section 2 outlines the structure of 3-Satisfiability. Section 3 describes the implementation of 3SAT in the Hopfield neural network. The general formulations of fuzzy logic and the Genetic Algorithm are explained in Section 4 and Section 5, respectively. Section 6 discusses the implementation of the proposed HNN-3SATFuzzyGA model. Section 7 lists the experimental setup and parameter settings, along with the performance evaluation metrics used. Section 8 presents and discusses the results of HNN-3SATFuzzyGA. Section 9 concludes the paper and offers suggestions for future research.

6. Fuzzy Logic System and Genetic Algorithm in 3-Satisfiability Logic Programming

In the HNN-SAT model, the training phase is optimal when it finds satisfying assignments of the embedded logical structure, that is, when the cost function reaches zero. Metaheuristic algorithms have been used as the training method to minimise the kSAT cost function in the HNN [14], and their optimisation operators enhance the network's convergence towards cost function minimisation [19].

In previous approaches, a binary neuron state is used as the instance representing each potentially satisfying assignment of 3SAT in the training phase. The drawback of these approaches is their reliance on binary representations. In this study, neuron states are expressed continuously so that every component of the search space can be evaluated by the fitness function. To this end, fuzzy logic is embedded in the first stage: fuzzy sets and membership functions are introduced so that the binary (Boolean) problem becomes continuous while retaining its logical nature. In the second stage, a metaheuristic algorithm is employed to obtain the best solution so that the system converges optimally. We cast the problem into a hybrid framework of the two techniques and then transform the solution back into its original binary structure. This section explains how fuzzy logic is combined with the GA in 3SAT, namely HNN-3SATFuzzyGA. The proposed technique consists of two stages: (1) the fuzzy logic system stage and (2) the Genetic Algorithm stage.

First, the fuzzy logic control system determines the output of each clause from three inputs, namely the clause's three literals. For example, the three literals of the first clause are the inputs, and the system produces the value of that clause as its output; the procedure is repeated for every clause. Three membership functions are defined for the inputs, and two membership functions are used for the output. In the first phase, each literal is assigned a random value, after which the fuzzy logic system evaluates the value of the first output clause. The first step of the fuzzy logic system is fuzzification, in which each literal is assigned to its membership function.

Fuzzy rules are then applied to the first clause block, which joins its three literals by disjunction. Allowing the literals to take any value in [0, 1], we employ Zadeh's operators: the logical operator AND is evaluated as $\min(x, y)$ and the logical operator OR as $\max(x, y)$. This conversion yields a fuzzy function that is logically consistent with the binary (Boolean) function. The process is repeated for all clauses.
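The consistency between the fuzzy and Boolean functions can be checked directly. The sketch below evaluates a three-literal disjunction with Zadeh's OR as max and AND as min, and, as an assumption not stated above, the standard Zadeh complement 1 − x for negated literals. At the crisp endpoints 0 and 1 it reproduces the Boolean truth table. The signed-index clause encoding is hypothetical.

```python
from itertools import product
from typing import Iterable, Sequence, Tuple

Clause = Tuple[int, int, int]  # hypothetical: +i is variable i, -i is its negation


def f_and(x: float, y: float) -> float:
    return min(x, y)  # Zadeh AND


def f_or(x: float, y: float) -> float:
    return max(x, y)  # Zadeh OR


def f_not(x: float) -> float:
    return 1.0 - x  # standard Zadeh complement (assumed)


def fuzzy_clause(values: Sequence[float], clause: Clause) -> float:
    """Truth degree in [0, 1] of a three-literal disjunction."""
    degrees = [
        values[abs(lit) - 1] if lit > 0 else f_not(values[abs(lit) - 1])
        for lit in clause
    ]
    return f_or(f_or(degrees[0], degrees[1]), degrees[2])


def fuzzy_formula(values: Sequence[float], clauses: Iterable[Clause]) -> float:
    """Zadeh AND over all clause degrees (the conjunctive formula)."""
    result = 1.0
    for clause in clauses:
        result = f_and(result, fuzzy_clause(values, clause))
    return result


if __name__ == "__main__":
    clause = (1, 2, -3)
    # Crisp inputs reproduce Boolean OR: only (0, 0, 1) falsifies (x1 v x2 v ~x3).
    for bits in product([0.0, 1.0], repeat=3):
        print(bits, fuzzy_clause(bits, clause))
    print(fuzzy_clause([0.2, 0.4, 0.7], clause))  # max(0.2, 0.4, 0.3) = 0.4
```

Because min and max coincide with AND and OR on {0, 1}, any crisp assignment gets the same truth value as in the Boolean formula, while intermediate values give a graded score that a search procedure can exploit.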

Defuzzification is then employed to convert the fuzzy values into crisp outputs. Through defuzzification, the alpha-cut method modifies the neuron clauses until the proper neuron state is attained [17], using an improved alpha-cut formula as its estimation model.
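The improved alpha-cut estimation model itself is not reproduced here. As a hedged illustration of the basic idea only, the sketch below applies a plain alpha-cut: a neuron whose membership degree reaches the threshold α is mapped to the crisp bipolar state +1, otherwise to −1, and successive α levels are tried until a satisfaction check accepts the crisp state. The α schedule and the `is_satisfied` callback are hypothetical, not the paper's formula.

```python
from typing import Callable, List, Optional, Sequence


def alpha_cut(degrees: Sequence[float], alpha: float) -> List[int]:
    """Crisp bipolar state: +1 where membership >= alpha, else -1."""
    return [1 if mu >= alpha else -1 for mu in degrees]


def defuzzify(
    degrees: Sequence[float],
    is_satisfied: Callable[[List[int]], bool],
    alphas: Sequence[float] = (0.5, 0.4, 0.6, 0.3, 0.7, 0.2, 0.8),  # hypothetical schedule
) -> Optional[List[int]]:
    """Try alpha levels in turn and return the first crisp state that
    `is_satisfied` accepts, or None if no level produces one."""
    for alpha in alphas:
        state = alpha_cut(degrees, alpha)
        if is_satisfied(state):
            return state
    return None


if __name__ == "__main__":
    # Hypothetical clause (S1 v S2 v ~S3) as a satisfaction check on bipolar states.
    sat = lambda s: s[0] == 1 or s[1] == 1 or s[2] == -1
    print(defuzzify([0.45, 0.2, 0.9], sat))  # alpha 0.5 fails, alpha 0.4 gives [1, -1, 1]
```

Starting at α = 0.5 and moving outwards keeps the first attempt close to the natural rounding of the membership degrees; other schedules are equally plausible.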

The second phase of the learning part applies the Genetic Algorithm (GA). The initial chromosomes are obtained from the previous phase (the solutions of the fuzzy logic system). The fitness of each chromosome is evaluated using a fitness function in which each clause contributes 1 if it is satisfied and 0 otherwise, so the sum over all clauses gives the chromosome's fitness, and the optimum fitness is reached when every clause is satisfied [18]. Equation (14) denotes the objective function of the GA, which is to maximise this fitness.
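The clause-counting fitness described above can be sketched in a few lines, assuming bipolar chromosomes and a hypothetical signed-index clause encoding. A chromosome has optimum fitness when the returned value equals the number of clauses.

```python
from typing import List, Sequence, Tuple

Clause = Tuple[int, int, int]  # hypothetical: +i is S_i, -i is NOT S_i (1-based)


def clause_satisfied(chrom: Sequence[int], clause: Clause) -> int:
    """Return 1 if at least one literal is true under the bipolar chromosome, else 0."""
    for lit in clause:
        s = chrom[abs(lit) - 1]
        if (lit > 0 and s == 1) or (lit < 0 and s == -1):
            return 1
    return 0


def fitness(chrom: Sequence[int], clauses: List[Clause]) -> int:
    """Number of satisfied clauses; the optimum equals len(clauses)."""
    return sum(clause_satisfied(chrom, c) for c in clauses)


if __name__ == "__main__":
    clauses = [(1, 2, -3), (-1, 3, 4)]
    chrom = [1, -1, 1, -1]
    print(fitness(chrom, clauses), fitness(chrom, clauses) == len(clauses))  # 2 True
```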

Crossover operators exchange the genetic information of two parent chromosomes to create offspring (new chromosomes). The crossover stage selects random crossover points, and the parents' genetic information is exchanged according to the resulting segments [20]. Mutation is one mechanism that maintains genetic diversity from one population to the next; in its simplest form, it flips a single gene. The displacement mutation operator moves a gene of a given chromosome to another position within the same chromosome, with the position selected at random so that both the result and the random displacement remain valid. The GA process is repeated until the optimum fitness is achieved. Algorithm 1 shows the pseudocode for FuzzyGA.
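The two operators described above can be sketched as follows. Two-point crossover swaps the segment between two random cut points, and displacement mutation, in its common textbook form, removes a random contiguous segment and reinserts it at a random position in the same chromosome, so the multiset of genes is preserved. These are generic forms chosen for illustration; the exact operators used in the paper may differ, and the seeded `random.Random` is only for reproducibility.

```python
import random
from typing import List, Sequence, Tuple


def two_point_crossover(
    p1: Sequence[int], p2: Sequence[int], rng: random.Random
) -> Tuple[List[int], List[int]]:
    """Swap the genes between two random cut points of equal-length parents."""
    n = len(p1)
    i, j = sorted(rng.sample(range(n + 1), 2))  # distinct cut points, i < j
    c1 = list(p1[:i]) + list(p2[i:j]) + list(p1[j:])
    c2 = list(p2[:i]) + list(p1[i:j]) + list(p2[j:])
    return c1, c2


def displacement_mutation(chrom: Sequence[int], rng: random.Random) -> List[int]:
    """Cut a random non-empty segment and reinsert it at a random position."""
    genes = list(chrom)
    n = len(genes)
    i, j = sorted(rng.sample(range(n + 1), 2))
    segment = genes[i:j]
    rest = genes[:i] + genes[j:]
    k = rng.randint(0, len(rest))  # insertion point among the remaining genes
    return rest[:k] + segment + rest[k:]


if __name__ == "__main__":
    rng = random.Random(0)
    c1, c2 = two_point_crossover([1] * 6, [-1] * 6, rng)
    print(c1, c2)
    print(displacement_mutation([1, -1, 1, 1, -1, -1], rng))
```

For bipolar chromosomes, displacement reorders genes and therefore changes the truth assignment, whereas bit-flip mutation changes individual neuron states; both keep the chromosome length fixed.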

| Algorithm 1: Pseudocode for FuzzyGA |

Begin the first stage

Step 1: Initialisation

Initialise the inputs, output, membership functions and rule lists.

Step 2: Fuzzification

Assign each literal to its membership function.

Step 3: Fuzzy rules

Apply the rule lists, starting with the first clause block.

Step 4: Defuzzification

Apply the defuzzification step and the estimation model.

end

Begin the second stage

Step 1: Initialisation

Initialise random populations of the instances (chromosomes).

Step 2: Fitness Evaluation

Evaluate the fitness of each instance in the population.

Step 3: Selection

Select the best instances and save them as parents.

Step 4: Crossover

Randomly select two bits (crossover points) from the parents.

Generate new solutions and save them as offspring.

Step 5: Mutation

Select a solution from the offspring.

Mutate the solution by randomly flipping bits to generate a new solution.

if the new solution has higher fitness,

Update the best solution.

end

Update the population.

end |
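A compact, runnable sketch of Algorithm 1 follows. It simplifies the first stage to random membership degrees defuzzified by a single alpha-cut at 0.5, then runs a GA with truncation (elitist) selection, two-point crossover and per-bit flip mutation, stopping once every clause is satisfied. The population size, mutation rate, generation limit, clause encoding and example instance are illustrative assumptions rather than the experimental settings of Section 7.

```python
import random
from typing import List, Sequence, Tuple

Clause = Tuple[int, int, int]  # hypothetical encoding: +i is S_i, -i is NOT S_i (1-based)


def fitness(chrom: Sequence[int], clauses: Sequence[Clause]) -> int:
    """Count clauses with at least one true literal under a bipolar chromosome."""
    return sum(
        any((lit > 0) == (chrom[abs(lit) - 1] == 1) for lit in clause)
        for clause in clauses
    )


def fuzzy_stage(n_vars: int, pop_size: int, rng: random.Random, alpha: float = 0.5) -> List[List[int]]:
    """Stage 1 (simplified): draw membership degrees in [0, 1] and defuzzify
    each with an alpha-cut (degree >= alpha -> +1, otherwise -1)."""
    return [
        [1 if rng.random() >= alpha else -1 for _ in range(n_vars)]
        for _ in range(pop_size)
    ]


def fuzzy_ga(
    clauses: Sequence[Clause],
    n_vars: int,
    pop_size: int = 20,
    n_parents: int = 10,
    p_mut: float = 0.1,
    max_gen: int = 500,
    seed: int = 0,
) -> Tuple[List[int], int]:
    """Stage 2: GA over the stage-1 population. Returns the best chromosome
    found and its fitness (equal to len(clauses) when all are satisfied)."""
    rng = random.Random(seed)
    target = len(clauses)  # optimum fitness: every clause satisfied
    pop = fuzzy_stage(n_vars, pop_size, rng)
    best = max(pop, key=lambda c: fitness(c, clauses))
    for _ in range(max_gen):
        if fitness(best, clauses) == target:
            break
        # Step 3: keep the fittest chromosomes as parents (elitist).
        pop.sort(key=lambda c: fitness(c, clauses), reverse=True)
        parents = pop[:n_parents]
        children = []
        while len(children) < pop_size - n_parents:
            p1, p2 = rng.sample(parents, 2)
            # Step 4: two random cut points; take the middle segment from p2.
            i, j = sorted(rng.sample(range(n_vars + 1), 2))
            child = p1[:i] + p2[i:j] + p1[j:]
            # Step 5: flip each bit independently with probability p_mut.
            child = [-g if rng.random() < p_mut else g for g in child]
            children.append(child)
        pop = parents + children
        best = max(pop, key=lambda c: fitness(c, clauses))
    return best, fitness(best, clauses)


if __name__ == "__main__":
    # Hypothetical satisfiable 3SAT instance over five variables.
    clauses = [(1, 2, -3), (-1, 3, 4), (2, -4, 5), (-2, -3, -5)]
    state, fit = fuzzy_ga(clauses, n_vars=5)
    print(state, fit, "of", len(clauses))
```

Keeping the parents in the next population means the best fitness never decreases between generations, which makes the stopping test on the optimum fitness safe to check at the top of each loop.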

8. Results and Discussion

This section discusses the results of three methods. HNN-3SAT uses a basic random search algorithm without any improvement in the training phase, HNN-3SATFuzzy employs fuzzy logic alone, and HNN-3SATFuzzyGA uses both fuzzy logic and a Genetic Algorithm in the training phase. The purpose of the training and testing phases in the HNN is to find satisfying assignments that minimise Equation (6). This strategy uses the WA method to obtain the appropriate synaptic weights [31]. The proposed model is simulated with different numbers of neurons to verify its predictions, and the number of neurons is restricted to a fixed range to assess the suggested model. We evaluated the training error, training efficiency, testing error, energy, similarity index and computational time. The results of the three HNN-3SAT methods in the training and testing phases are shown in Figures 3 to 9.

Figure 3 displays $\mathrm{RMSE}_{\text{train}}$, $\mathrm{MAE}_{\text{train}}$, $\mathrm{SSE}_{\text{train}}$ and $\mathrm{MAPE}_{\text{train}}$ during the training stage for the three methods: HNN-3SAT, HNN-3SATFuzzy and HNN-3SATFuzzyGA. According to Figure 3a,b, HNN-3SATFuzzyGA outperformed the other networks in $\mathrm{RMSE}_{\text{train}}$ and $\mathrm{MAE}_{\text{train}}$ during the training phase. Even as the number of neurons (NN) increased, HNN-3SATFuzzyGA recorded the lowest $\mathrm{RMSE}_{\text{train}}$ and $\mathrm{MAE}_{\text{train}}$ values, indicating that its solutions deviated least from the potential solutions. Initially, the outcomes of all networks differed little at low NN. At higher NN, however, $\mathrm{RMSE}_{\text{train}}$ and $\mathrm{MAE}_{\text{train}}$ began to diverge: HNN-3SATFuzzyGA remained low, while the other two methods, especially HNN-3SAT, increased considerably towards the end of the simulations. Based on $\mathrm{RMSE}_{\text{train}}$ and $\mathrm{MAE}_{\text{train}}$, HNN-3SATFuzzyGA achieved lower error values. The main reason is that fuzzy logic and the GA find better solutions in the training phase, so a satisfying assignment is reached in fewer cycles. The fuzzy logic system widens the search during training through fuzzification, which allows precise interpretations, and HNN-3SATFuzzyGA then takes a systematic approach through the defuzzification procedure. The GA further helps to optimise the solutions. Additionally, HNN-3SATFuzzyGA can efficiently verify the proper interpretation and handle more constraints than the other networks.

In another work, Abdullahi et al. [32] tested a method for extracting logical rules in the form of 3SAT to characterise the behaviour of particular medical data sets. The goal was to create a reliable algorithm for extracting data and insights from medical records, integrating 3SAT logic and the HNN as a single data mining paradigm. Medical data sets such as Statlog Heart (ST) and Breast Cancer Coimbra (BCC) were used to train and test the method, and the network recorded good RMSE and MAE values in simulations with various numbers of neurons. The results of Abdullahi et al. [32] indicate that the HNN-3SAT models have considerably lower sensitivity in error analysis, and the CPU time measured at various levels of complexity shows that the networks are also flexible.

Figure 3c,d shows that HNN-3SATFuzzyGA has lower $\mathrm{SSE}_{\text{train}}$ and $\mathrm{MAPE}_{\text{train}}$ values. Its lower $\mathrm{SSE}_{\text{train}}$ for all neuron counts indicates a more reliable ability to train on the simulated data set and consistently good-quality results. Although the $\mathrm{SSE}_{\text{train}}$ results of the three methods did not differ much at low NN, HNN-3SATFuzzyGA remained low until the end of the simulations, whereas the outcomes of HNN-3SAT increased drastically at the final NN. Figure 3d shows the $\mathrm{MAPE}_{\text{train}}$ of all networks, which provides further evidence of the compatibility of fuzzy logic and the GA in 3SAT. The $\mathrm{MAPE}_{\text{train}}$ of HNN-3SATFuzzyGA remained low even at higher NN, whereas that of HNN-3SAT rose as NN increased. As a result, HNN-3SATFuzzyGA performs noticeably better than the other two models. This effectiveness stems from the fuzzy logic and GA operators in the training phase, which improve the compatibility of the solutions, so HNN-3SATFuzzyGA can recover a more precise final state than the other networks.
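The exact definitions of these error measures are given in Section 7. As a hedged sketch, the functions below use the standard forms of RMSE, MAE, SSE and MAPE between a target series and an observed series; pairing the target with the maximum fitness and the observation with the fitness reached in each run, as is common in HNN-SAT studies, is an assumption here.

```python
import math
from typing import Sequence


def _check(target: Sequence[float], observed: Sequence[float]) -> None:
    if len(target) != len(observed) or not target:
        raise ValueError("inputs must be non-empty and of equal length")


def rmse(target: Sequence[float], observed: Sequence[float]) -> float:
    """Root mean squared error."""
    _check(target, observed)
    return math.sqrt(sum((t - o) ** 2 for t, o in zip(target, observed)) / len(target))


def mae(target: Sequence[float], observed: Sequence[float]) -> float:
    """Mean absolute error."""
    _check(target, observed)
    return sum(abs(t - o) for t, o in zip(target, observed)) / len(target)


def sse(target: Sequence[float], observed: Sequence[float]) -> float:
    """Sum of squared errors."""
    _check(target, observed)
    return sum((t - o) ** 2 for t, o in zip(target, observed))


def mape(target: Sequence[float], observed: Sequence[float]) -> float:
    """Mean absolute percentage error; every target must be non-zero."""
    _check(target, observed)
    return 100.0 * sum(abs((t - o) / t) for t, o in zip(target, observed)) / len(target)


if __name__ == "__main__":
    # Hypothetical runs: maximum fitness 4, fitness reached in four runs.
    target, observed = [4, 4, 4, 4], [4, 3, 4, 2]
    print(rmse(target, observed), mae(target, observed), sse(target, observed), mape(target, observed))
```

RMSE and SSE penalise large deviations more heavily than MAE, while MAPE expresses the error relative to the target, which is why the four measures can rank methods slightly differently.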

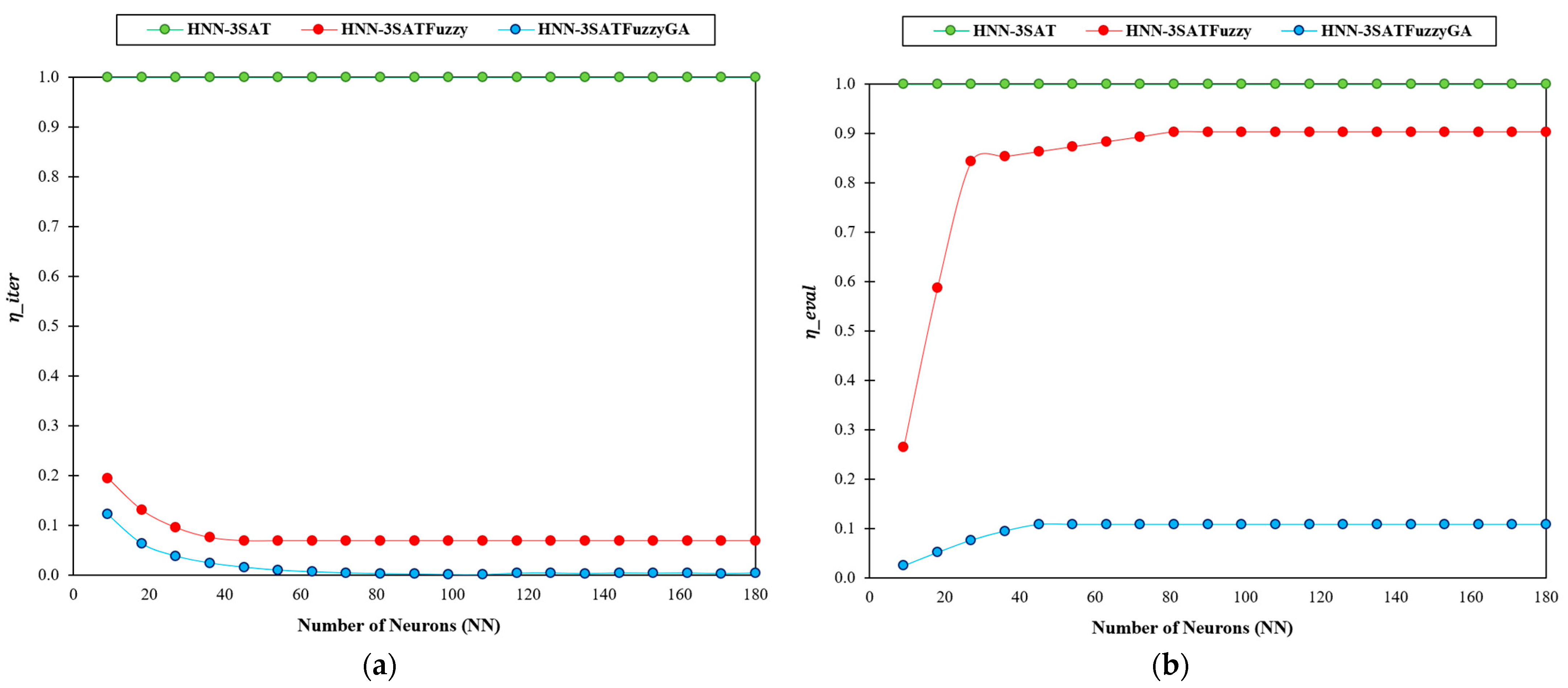

Figure 4a,b shows the iteration efficiency and the evaluation efficiency, respectively, of the methods in the training phase. For both measures, a lower score denotes higher efficiency. In Figure 4a, HNN-3SAT keeps a constant iteration efficiency because it has no optimisation operator to improve the learning phase, whereas HNN-3SATFuzzyGA and HNN-3SATFuzzy attain lower values. The reason is that most of their iterations are spent in the fuzzy logic and/or GA optimisation phase: to find the maximum fitness, the training must pass through these operators to locate the best solution. Figure 4b shows the evaluation efficiency, which reflects the number of successful iterations in obtaining the maximum number of satisfied clauses in the fuzzy-metaheuristic learning phase. HNN-3SAT remains at the same value at all levels because it has no optimiser, while HNN-3SATFuzzyGA obtains the lowest score because it achieves the most successful iterations in reaching maximum fitness. Fuzzy logic widens the search for an ideal state with satisfying assignments, and the addition of a metaheuristic algorithm such as the GA further improves HNN-3SATFuzzyGA through operators such as crossover and mutation. Crossover acts as a global search operator that broadens the search for potential solutions; here, crossover of chromosomes (possible satisfying assignments) leads to near-ideal states that can satisfy 3SAT. Mutation is vital for locating the best solution within a particular region of the search space. HNN-3SAT, by contrast, has no capability for exploration and exploitation because it relies on a random, trial-and-error search.

Figure 5 displays $\mathrm{RMSE}_{\text{test}}$, $\mathrm{MAE}_{\text{test}}$, $\mathrm{SSE}_{\text{test}}$ and $\mathrm{MAPE}_{\text{test}}$ for the testing error analysis. This analysis matters because it examines the behaviour of HNN-3SAT in terms of global or local minima solutions. Once HNN-3SAT clause satisfaction is complete (the cost function is minimised), the WA approach constructs the synaptic weights.

Figure 5a,b shows that HNN-3SAT has the highest $\mathrm{RMSE}_{\text{test}}$ and $\mathrm{MAE}_{\text{test}}$, indicating that the model cannot induce global minima solutions; the unsatisfied clauses cause it to overfit the test sets. An optimiser is therefore needed: fuzzy logic to widen the search space and the GA to optimise the solutions further. Whereas HNN-3SAT attains higher $\mathrm{RMSE}_{\text{test}}$ and $\mathrm{MAE}_{\text{test}}$, the two methods with an optimiser keep the error low. In Figure 5c,d, $\mathrm{SSE}_{\text{test}}$ and $\mathrm{MAPE}_{\text{test}}$ serve as two further performance indicators in the testing phase. Lower values of these measures indicate a higher likelihood of optimal solutions and a higher accuracy (fitness) level. For HNN-3SAT, $\mathrm{SSE}_{\text{test}}$ and $\mathrm{MAPE}_{\text{test}}$ rise noticeably beyond a certain number of neurons, so the model cannot reach the required fitness value during the testing phase. This behaviour results from the trial-and-error approach of HNN-3SAT, which leads to a less ideal testing phase.

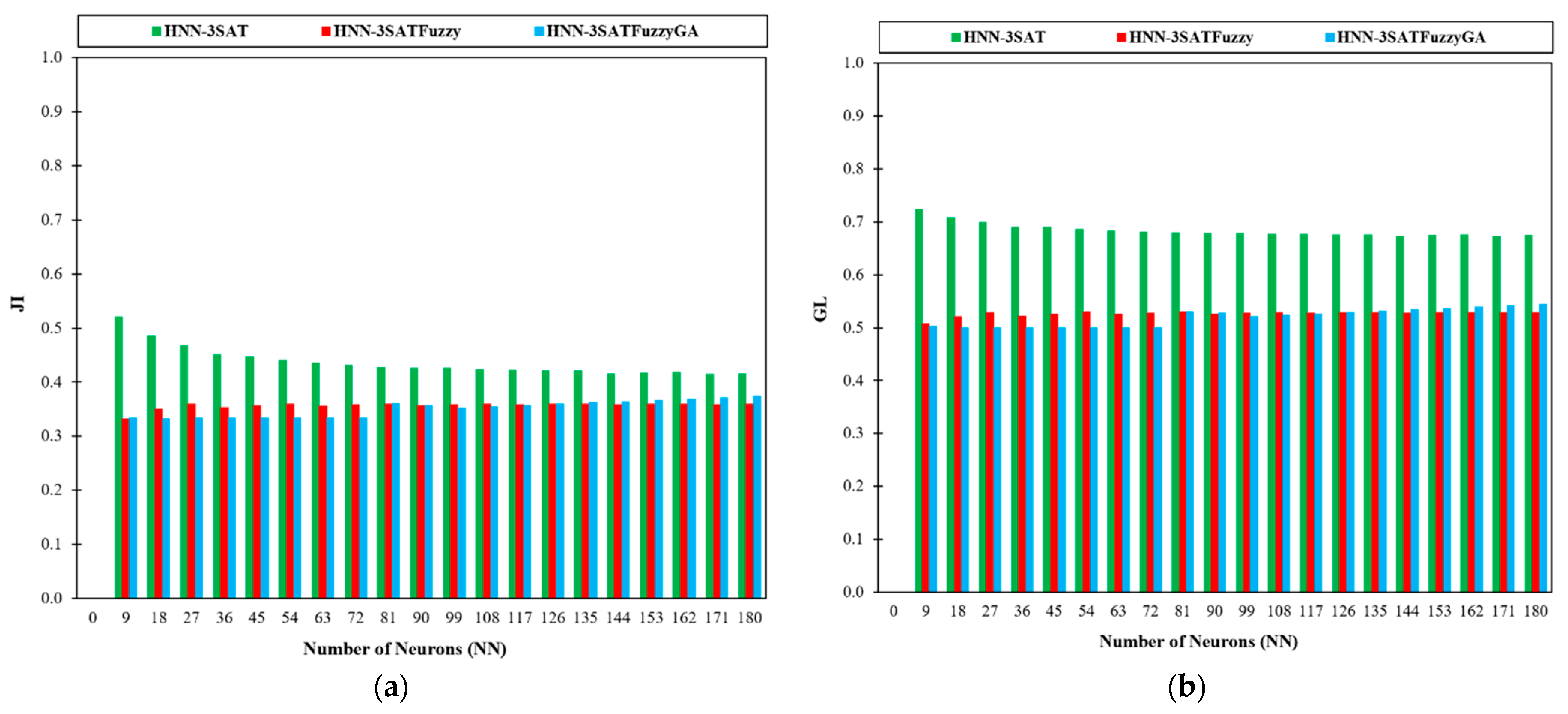

Next, we used similarity indices to analyse the similarity and dissimilarity of the generated final neuron states (Figure 6a,b). These indices are used for divergence analysis and are specific to binary variables [33]. Using Equations (25) and (26), we computed the Jaccard Index (JI) and the Gower-Legendre Index (GLI), which measure how closely the computed states resemble the benchmark state; Figure 6a,b compares the values obtained by the HNN-3SAT models. Lower JI and GLI values are preferable. In our investigation, HNN-3SATFuzzyGA recorded lower JI values at lower NN, and, according to Figure 6a,b, HNN-3SAT has the highest overall similarity index values. In HNN-3SATFuzzy and HNN-3SATFuzzyGA, fuzzy logic and/or GA optimisation in the training phase produces non-redundant neuron states that achieve the global minimum energy owing to the diversified search space. The final neuron states therefore differ considerably from the benchmark state, which lowers the index values. HNN-3SAT, by contrast, produces similar neuron states at the global minimum energy because of its limited neuron choices for each variable, which explains its higher JI and GLI values in Figure 6a,b, respectively.

Gao et al. [34] used a higher-order logical structure that exploits the random properties of first-, second- and third-order clauses. Alternative parameter settings, including varying clause orders, ratios of positive to negative literals, relaxation and different numbers of learning trials, were used to assess the logic in the HNN, and similarity indices were used to analyse the neuron variation caused by random 3SAT. The simulations indicated that the random logic was adaptable and offered various solutions. According to Karim et al. [29], the experimental results showed that random 3SAT can be implemented in the HNN and that each logical combination has unique properties. Random 3SAT can offer diverse neuron states across the entire solution space, and third-order clauses were added to it to increase the variability in the learning and retrieval stages of reasoning. Simulations were used to determine the best value of each parameter. This flexible architecture could therefore be combined with fuzzy logic and the GA to offer an alternative perspective on random dynamics for practical bioinformatics problems.
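Equations (25) and (26) give the definitions used in this study. A common form of these indices in HNN-SAT work compares each final bipolar state with a benchmark state through four counts: a (both +1), b (benchmark +1, final −1), c (benchmark −1, final +1) and d (both −1), giving JI = a/(a + b + c) and GLI = (a + d)/(a + (b + c)/2 + d). The sketch below assumes these definitions; the convention for an all −1 pair in the Jaccard case is also an assumption.

```python
from typing import Sequence, Tuple


def similarity_counts(benchmark: Sequence[int], final: Sequence[int]) -> Tuple[int, int, int, int]:
    """Return (a, b, c, d) for two equal-length bipolar states."""
    if len(benchmark) != len(final) or not benchmark:
        raise ValueError("states must be non-empty and of equal length")
    pairs = list(zip(benchmark, final))
    a = sum(1 for s, t in pairs if s == 1 and t == 1)
    b = sum(1 for s, t in pairs if s == 1 and t == -1)
    c = sum(1 for s, t in pairs if s == -1 and t == 1)
    d = sum(1 for s, t in pairs if s == -1 and t == -1)
    return a, b, c, d


def jaccard(benchmark: Sequence[int], final: Sequence[int]) -> float:
    a, b, c, _ = similarity_counts(benchmark, final)
    denom = a + b + c
    # If both states are all -1, treat them as identical (assumed convention).
    return a / denom if denom else 1.0


def gower_legendre(benchmark: Sequence[int], final: Sequence[int]) -> float:
    a, b, c, d = similarity_counts(benchmark, final)
    return (a + d) / (a + 0.5 * (b + c) + d)


if __name__ == "__main__":
    benchmark, final = [1, 1, -1, -1, 1], [1, -1, -1, 1, 1]
    print(similarity_counts(benchmark, final))  # (2, 1, 1, 1)
    print(jaccard(benchmark, final), gower_legendre(benchmark, final))  # 0.5 0.75
```

JI ignores matching −1 states, whereas GLI counts both kinds of agreement and half-weights the mismatches, so GLI is typically the larger of the two for the same pair of states.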

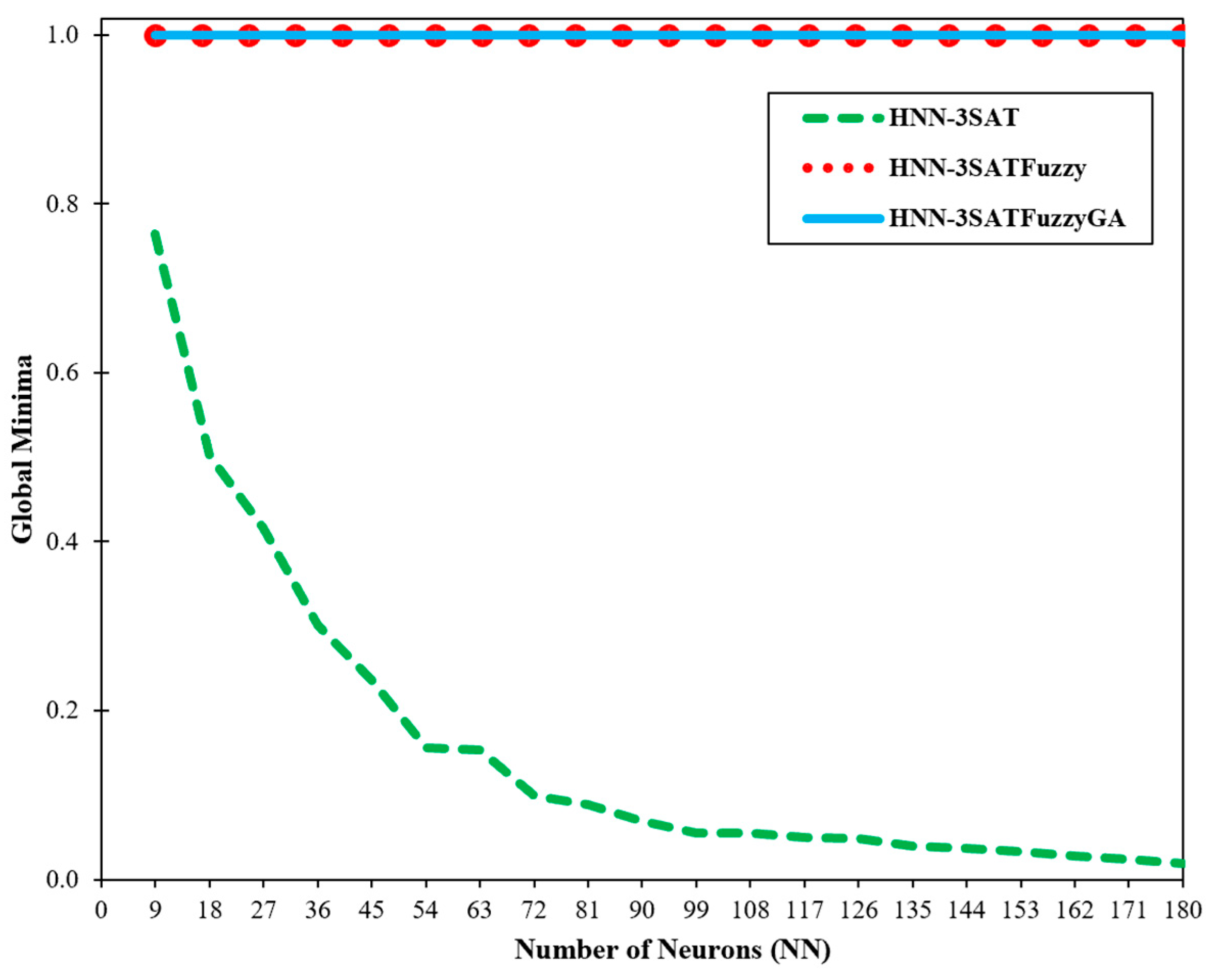

Figure 7 and

Figure 8 show the global and local minima ratio detected by the other two networks for different numbers of neurons. A correlation between the global and local minima values and the type of energy gained in the program’s final section was found by Sathasivam [

3]. The outcomes in the system have arguably reached global minima energy, except HNN-3SAT, given that the suggested hybrid network’s global minima ratio is approaching a value of 1. 3SATFuzzyGA certainly can offer more precise and accurate states when compared to the HNN. The outcome is due to how effectively the fuzzy logic algorithm and GA searches. The HNN-3SATFuzzyGA solution attained the best global minimum energy of value 1. The obtained results are from utilising the HNN-3SAT network with the fuzzy logic technique and the genetic algorithm. The fuzzy logic lessens computation load by fuzzifying and defuzzifying the state of the neurons to distinguish the right states. The suggested method can accept additional neurons. Additionally, unfulfilled neuron clauses are improved by utilising the alpha-cut process in the defuzzification stage until the proper neuron state is found. This property makes it possible for defuzzification and fuzzification algorithms to converge successfully to global minima compared to the other network. With the aid of the GA, the optimisation process is enhanced. When the number of neurons rises, the HNN-3SAT network gets stuck in a suboptimal state. It has been demonstrated that the fuzzy logic method makes the network less complex. From the results, HNN-3SATFuzzy and HNN-3SATFuzzyGA global minimum converged to ideal results with satisfactory outcomes. Calculating the time is crucial for assessing our proposed method’s efficacy. The robustness of our techniques can be roughly inferred from the effectiveness of the entire computation process. The computing time, also known as the CPU period, is the time it took for our system to complete all the calculations for our inquiry [

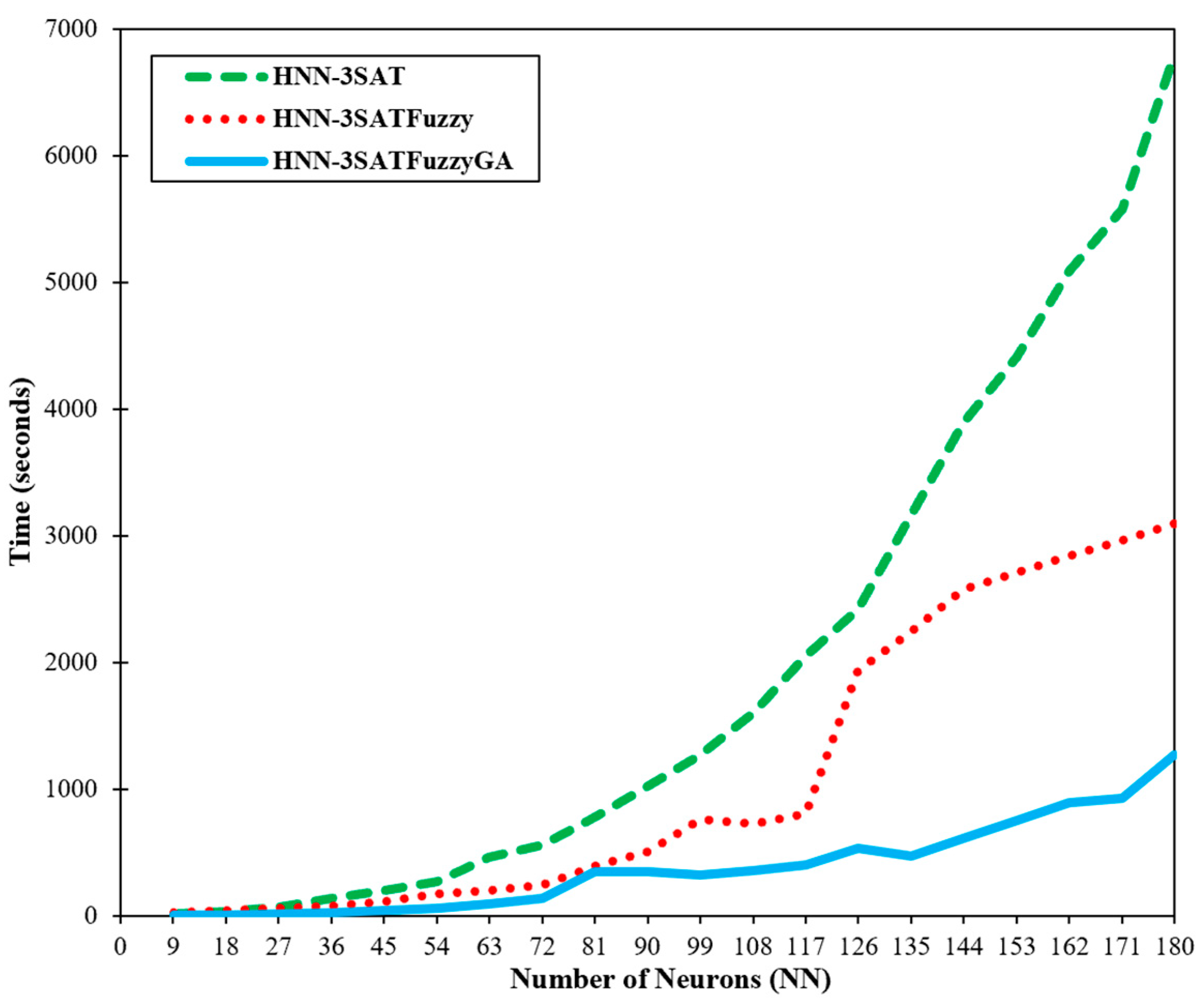

3]. The computation technique involves training and producing our framework’s most pleasing sentences.
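The global minima ratio discussed for Figures 7 and 8 is commonly computed as the fraction of retrieved final states whose energy lies within a small tolerance of the known global minimum energy. The sketch below assumes that definition, with the final energies and the minimum energy supplied by the caller; the tolerance value is illustrative.

```python
from typing import Sequence


def global_minima_ratio(final_energies: Sequence[float], min_energy: float, tol: float = 1e-3) -> float:
    """Fraction of runs whose final energy is within `tol` of the global minimum."""
    if not final_energies:
        raise ValueError("no runs supplied")
    hits = sum(1 for e in final_energies if abs(e - min_energy) <= tol)
    return hits / len(final_energies)


def local_minima_ratio(final_energies: Sequence[float], min_energy: float, tol: float = 1e-3) -> float:
    """Complement: fraction of runs that ended in a local minimum."""
    return 1.0 - global_minima_ratio(final_energies, min_energy, tol)


if __name__ == "__main__":
    # Hypothetical final energies from four retrieval runs; global minimum -2.0.
    energies = [-2.0, -2.0, -1.5, -2.0005]
    print(global_minima_ratio(energies, -2.0), local_minima_ratio(energies, -2.0))  # 0.75 0.25
```

A ratio close to 1 means almost every run settled in a global minimum, which is the sense in which the hybrid networks are described above as approaching a value of 1.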

Figure 9 shows the total computation time each network needed to complete the simulations. As the number of neurons grew, so did the likelihood of the same neuron appearing in other clauses [3]. Figure 9 clearly shows the superiority of HNN-3SATFuzzyGA over the other networks: as the number of clauses grew, HNN-3SATFuzzyGA completed the simulations faster than the other networks. For smaller numbers of clauses, the time taken by all techniques does not differ significantly. CPU time was shorter when fuzzy logic and the GA were applied, showing how effectively the methods work.

In another application, Mansor et al. [35] combined the HNN with 3SAT to optimise the pattern satisfiability (pattern-SAT) problem, examining the accuracy and CPU time of the algorithms. Mansor et al. [35] discussed the performance of HNN-3SAT in performing pattern-SAT in terms of global pattern and running time, applying logic programming in the HNN to prove pattern satisfiability with 3SAT. The simulation results clearly show how well HNN-3SAT performs pattern-SAT, as measured by the number of correctly recalled patterns and CPU time. As confirmed theoretically and experimentally, the network increased the probability of recovering accurate patterns as the complexity increased, and the close agreement between the global pattern and CPU time supports the performance analysis. In another work, Mansor et al. [36] used the HNN as a circuit verification tool for very large-scale integration (VLSI) circuit design by implementing the satisfiability problem. A VLSI circuit based on the HNN was built to detect potential errors earlier than human circuit design can. The method's effectiveness was assessed in terms of runtime, circuit accuracy and overall VLSI configuration. Owing to the early error detection, the VLSI circuits generated by the proposed HNN-3SAT design were observed to be superior to conventional circuits, and the model increased the accuracy of the VLSI circuit as the number of transistors rose. Mansor et al. [36] achieved excellent outcomes for global configuration, correctness and runtime, suggesting that the approach could also be combined with fuzzy logic and the GA.