In this part, we provide a theoretical foundation for our method and assess its effectiveness within a standard federated learning backdoor scenario. Prior studies [

15] have shown that the second-to-last layer of a model captures informative, high-level representations that reflect the model’s focus. Building on this understanding, we incorporate an auxiliary network to assist local training. If the client is benign, this additional model enhances the extraction of relevant features, thereby strengthening the quality of local representations. If the client is malicious, the auxiliary model is intentionally trained as a backdoored model

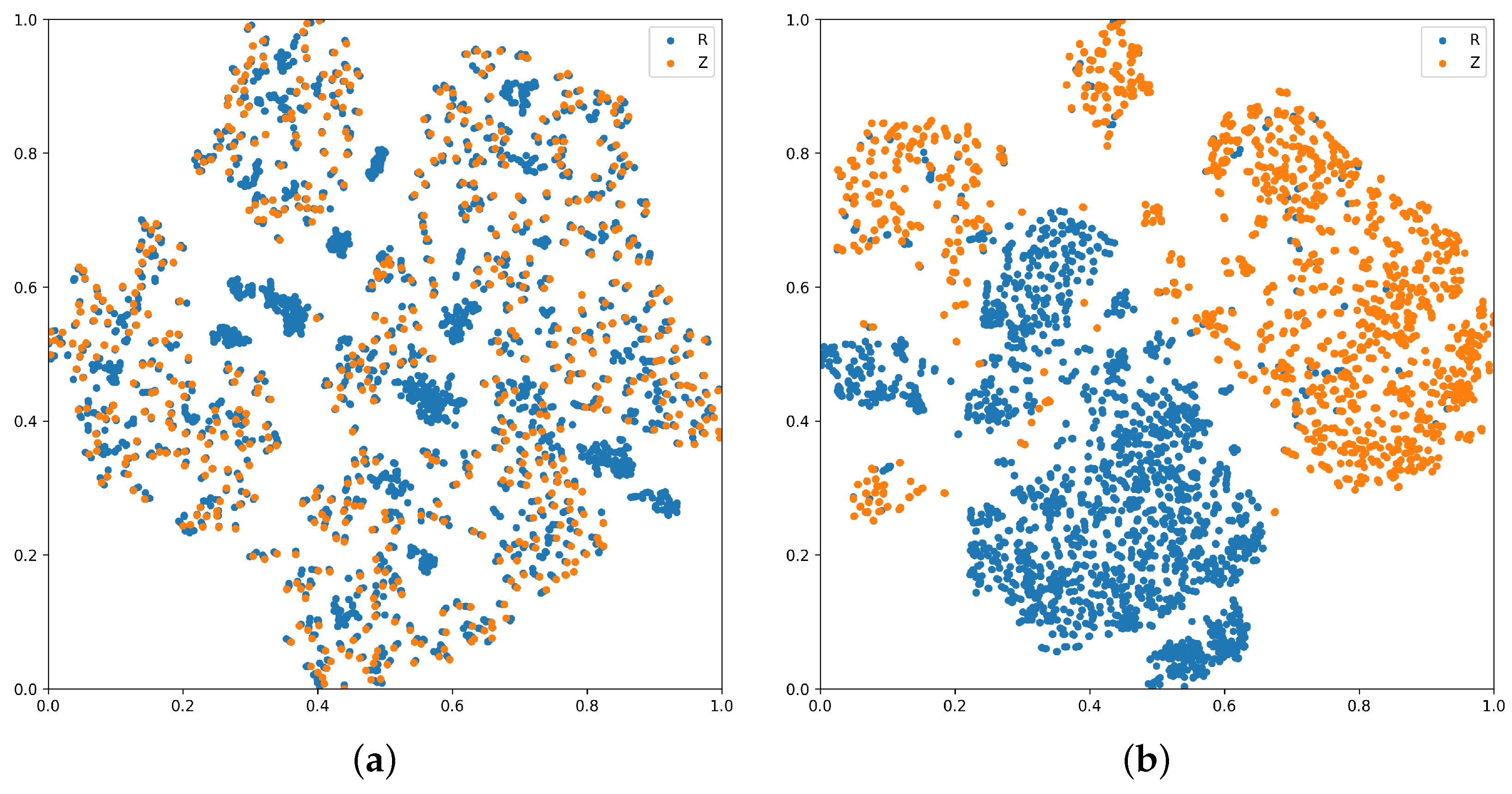

. Then, for the sample pairs

held by the malicious client, we extract the penultimate layer outputs of both

and the local model

as

R (backdoor features) and

Z (clean features), respectively. During training, contrastive learning is employed to decouple

R from

Z, followed by a sample-reweighting strategy to guide

toward learning clean features, thereby mitigating the backdoor effect. Upon completion, the purified model

is used in global aggregation. The subsequent sections elaborate on each implementation step in malicious clients.

4.2. Local Model

Building on the decoupling concept introduced by Huang [37], this work formulates the loss function by combining a variational information bottleneck with sample weighting. The loss function consists of three mutual-information terms: term ➀, $I(Z;X)$; term ➁, $I(Z;Y)$; and term ➂, $I(Z;R)$.

Here, $I(\cdot\,;\cdot)$ denotes mutual information. Terms ➀ and ➁ together form the information bottleneck loss. Term ➀ limits information in the input that does not contribute to the label, helping to filter out noise from unrelated features. Term ➁ encourages the latent representation $Z$ to retain the key information needed for accurate label prediction. Term ➂ measures the dependence between the backdoor feature $R$ and the clean feature $Z$. Reducing the mutual information between $Z$ and $R$ decreases their dependency, allowing $Z$ to focus on extracting features critical for the task. The detailed calculation process is presented below.
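For orientation, the three terms can be collected into a single objective. The form below is a sketch consistent with the description of terms ➀ to ➂, not a verbatim restatement of the loss: the signs follow from which terms are minimized or maximized, and placing a trade-off weight $\beta$ on the compression term is an assumption based on the regularization coefficient discussed at the end of this section.

```latex
\min \;
\underbrace{\beta\, I(Z;X)}_{\text{term 1}}
\;-\;
\underbrace{I(Z;Y)}_{\text{term 2}}
\;+\;
\underbrace{I(Z;R)}_{\text{term 3}}
```

Under this reading, terms 1 and 3 are driven down (compression and decoupling from $R$) while term 2 is driven up (label relevance).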

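Term ➀. To constrain the irrelevant and redundant information in the input that does not affect the label, and thus filter noise from unrelated features, $I(Z;X)$ can be rewritten using the definition of mutual information as follows:

$$I(Z;X) = \iint p(x,z)\,\log\frac{p(z\mid x)}{p(z)}\,dx\,dz \tag{2}$$

In real-world federated learning settings, calculating the marginal distribution $p(z)$ is challenging. Prior studies [38] suggest approximating $p(z)$ with a variational distribution $q(z)$. The Kullback–Leibler (KL) divergence measures the difference between $p(z)$ and $q(z)$ and is calculated as follows:

$$D_{\mathrm{KL}}\big(p(z)\,\|\,q(z)\big) = \int p(z)\,\log\frac{p(z)}{q(z)}\,dz \tag{3}$$

Since the KL divergence cannot be negative, the inequality below holds:

$$\int p(z)\,\log p(z)\,dz \;\ge\; \int p(z)\,\log q(z)\,dz \tag{4}$$

This inequality relates $\int p(z)\log p(z)\,dz$ to $\int p(z)\log q(z)\,dz$. Substituting it into Equation (2) yields an upper bound:

$$I(Z;X) \;\le\; \iint p(x)\,p(z\mid x)\,\log\frac{p(z\mid x)}{q(z)}\,dx\,dz = \mathbb{E}_{x}\Big[D_{\mathrm{KL}}\big(p(z\mid x)\,\|\,q(z)\big)\Big] \tag{5}$$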

Assuming the posterior $p(z \mid x)$ follows a Gaussian distribution with mean $\mu$ and diagonal covariance matrix $\Sigma$ whose diagonal elements are $\sigma_1^2$ to $\sigma_D^2$, and the prior $q(z)$ is a standard normal distribution with mean zero and identity covariance matrix $I$, the KL divergence between the two distributions can be formulated as follows:

$$D_{\mathrm{KL}}\big(p(z\mid x)\,\|\,q(z)\big) = \frac{1}{2}\Big(\|\mu\|_2^2 + \operatorname{tr}(\Sigma) - D - \log\det\Sigma\Big) \tag{6}$$

Here, $\|\mu\|_2^2$ is the squared $\ell_2$ norm of the mean vector, $\operatorname{tr}(\Sigma)$ is the sum of the diagonal elements of the covariance matrix, and $\log\det\Sigma$ is the logarithm of its determinant. $D$ is the dimensionality of the feature vector.

When the covariance matrix is set to zero, the posterior becomes deterministic, so $z$ equals $\mu$ and the embedding is fixed. In this scenario, reducing the mutual information between $Z$ and $X$ is equivalent to applying $\ell_2$-norm regularization to $z$.
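The closed-form KL divergence between a diagonal Gaussian and a standard normal can be checked numerically. The following is a minimal sketch in plain Python; the function name and the list-based inputs are illustrative choices, not part of the method.

```python
import math

def kl_diag_gaussian_to_standard_normal(mu, var):
    """KL( N(mu, diag(var)) || N(0, I) ) in closed form.

    mu  : list of D means
    var : list of D strictly positive variances (diagonal of the covariance)
    """
    if len(mu) != len(var):
        raise ValueError("mu and var must have the same length")
    d = len(mu)
    sq_norm = sum(m * m for m in mu)          # squared l2 norm of the mean
    trace = sum(var)                          # trace of the diagonal covariance
    log_det = sum(math.log(v) for v in var)   # log-determinant of a diagonal matrix
    return 0.5 * (sq_norm + trace - d - log_det)

# With unit variances the trace and the dimension cancel and the log-determinant
# is zero, so only the squared-norm penalty on the mean remains.
print(kl_diag_gaussian_to_standard_normal([0.0, 0.0], [1.0, 1.0]))  # 0.0
print(kl_diag_gaussian_to_standard_normal([1.0, 2.0], [1.0, 1.0]))  # 2.5
```

The second call shows the link to $\ell_2$ regularization: for a fixed covariance, the divergence grows with half the squared norm of the mean.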

Term ➁. The goal of maximizing $I(Z;Y)$ is to ensure that the latent representation $Z$ effectively encodes information relevant to predicting the label $Y$. This helps the model focus on extracting useful, task-relevant clean features, thereby improving classification performance and defense effectiveness while reducing the interference of backdoor features in the model's decisions. Since calculating $I(Z;Y)$ directly is challenging, we approximate its maximization by minimizing the cross-entropy loss. Specifically, a sample-weighted cross-entropy loss is applied to train the local model, where features from the intermediate layers of the backdoor model are used only for weighting, without backpropagation through the backdoor model.

The weight of each training sample is computed from its loss values: a low loss on the backdoor model results in a weight near 0, while a high loss drives the weight toward 1. A small constant is included in the denominator to prevent division by zero. This weighting mechanism directs the local model toward clean feature learning and diminishes the influence of backdoor feature extraction. Note that the features of the auxiliary model are computed only during the forward pass and do not participate in backpropagation.
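A minimal sketch of one weighting rule that matches this description follows. It is an assumption, not the exact formula of the method: the weight is taken as the backdoor model's per-sample cross-entropy divided by the sum of both models' cross-entropies plus a small constant. The function names and the value of `eps` are hypothetical.

```python
import math

def cross_entropy(probs, label):
    """Cross-entropy of one sample given predicted class probabilities."""
    return -math.log(max(probs[label], 1e-12))

def sample_weight(loss_backdoor, loss_local, eps=1e-8):
    """Hypothetical weight in [0, 1): a low backdoor-model loss gives a weight near 0."""
    return loss_backdoor / (loss_backdoor + loss_local + eps)

def weighted_cross_entropy(probs_local, probs_backdoor, label, eps=1e-8):
    """Weighted loss for the local model; the weight is treated as a constant."""
    loss_local = cross_entropy(probs_local, label)
    loss_backdoor = cross_entropy(probs_backdoor, label)
    weight = sample_weight(loss_backdoor, loss_local, eps)  # no gradient would flow here
    return weight * loss_local

# A sample the backdoor model fits almost perfectly (likely poisoned) is down-weighted.
w_suspicious = sample_weight(cross_entropy([0.01, 0.99], 1), cross_entropy([0.5, 0.5], 1))
# A sample the backdoor model gets wrong (likely clean) keeps a large weight.
w_clean = sample_weight(cross_entropy([0.9, 0.1], 1), cross_entropy([0.4, 0.6], 1))
```

In an automatic-differentiation framework, the weight would be detached from the computation graph so that only the local model receives gradients.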

Term ➂. Using the connection between mutual information and entropy, $I(Z;R)$ can be represented as follows:

$$I(Z;R) = H(Z) - H(Z \mid R) \tag{8}$$

Minimizing the mutual information $I(Z;R)$ aims to separate the clean features $Z$ from the backdoor features $R$, helping the model focus on task-relevant clean information while suppressing interference from backdoor features, thereby enhancing the model's defense against backdoor attacks. We use contrastive learning to shape the model's feature space by contrasting positive and negative pairs. Positive pairs consist of $z$ and $z^{+}$, where $z^{+}$ is generated by applying data augmentation to the input $x$ to form $x^{+}$, which is then processed by the local model to obtain its feature. Negative pairs are constructed from the local model's feature $z$ and the backdoor model's feature $r$.

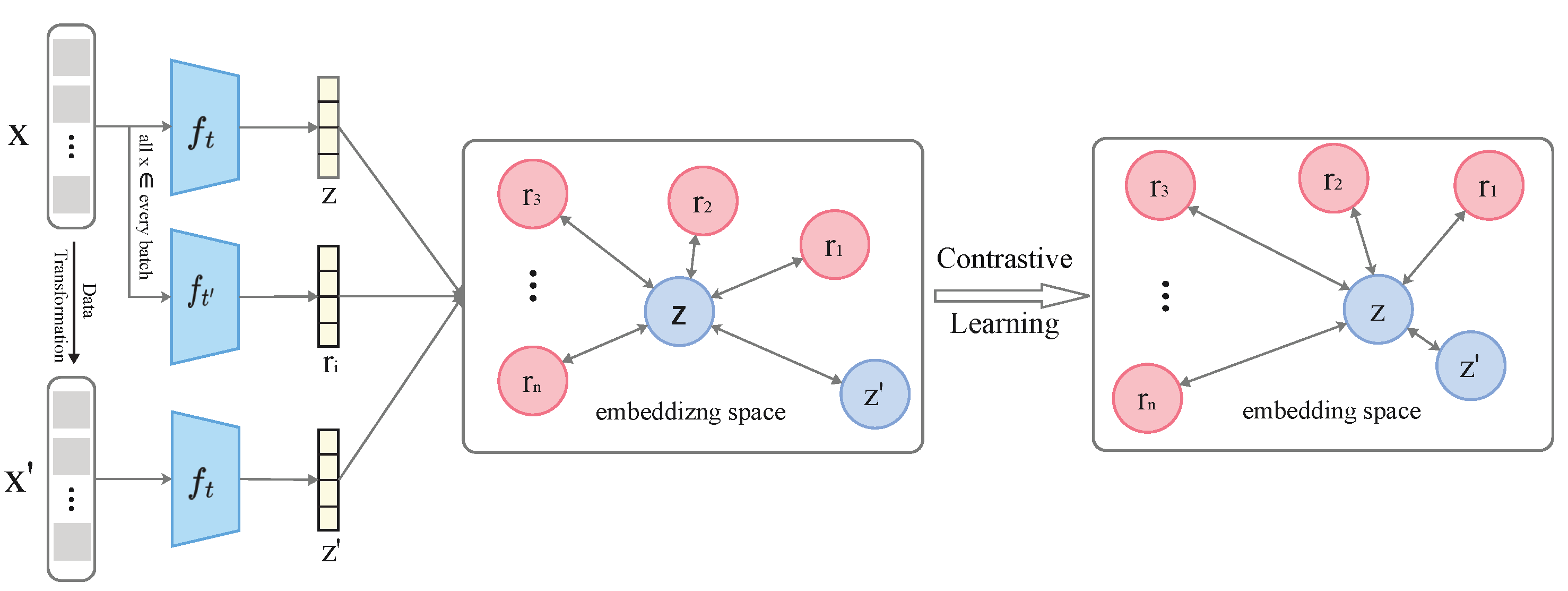

Figure 2 illustrates the overall contrastive learning framework. All colors are used for visual distinction only and have no practical meaning.

Within this framework, the local model and the backdoor model produce intermediate representations $z$ and $r$, respectively. These outputs are fed into the contrastive learning component to compute the contrastive loss. The loss function is based on the InfoNCE formulation. It relies on a similarity measure between sample pairs, commonly cosine similarity, which yields one similarity score for the positive pair and one for each negative pair; a temperature parameter $\tau$ regulates the sharpness of the similarity distribution, and $n$ denotes the total number of samples.
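A minimal sketch of an InfoNCE-style loss that matches this description follows. It assumes cosine similarity, a single positive pair per anchor, and a softmax denominator that contains the positive score and all negative scores. The function names, the default temperature of 0.5, and the per-anchor (unaveraged) form are illustrative assumptions.

```python
import math

def cosine_similarity(a, b):
    """Cosine similarity of two non-zero vectors given as lists."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)

def info_nce_loss(z, z_pos, negatives, tau=0.5):
    """-log( exp(s_pos/tau) / (exp(s_pos/tau) + sum_i exp(s_neg_i/tau)) )."""
    s_pos = cosine_similarity(z, z_pos) / tau
    s_negs = [cosine_similarity(z, r) / tau for r in negatives]
    logits = [s_pos] + s_negs
    # Log-sum-exp with the maximum subtracted for numerical stability.
    m = max(logits)
    log_denominator = m + math.log(sum(math.exp(v - m) for v in logits))
    return log_denominator - s_pos

# Clean feature aligned with its augmented view and opposed to the backdoor feature.
loss_separated = info_nce_loss([1.0, 0.0], [1.0, 0.0], [[-1.0, 0.0]])
# Clean feature aligned with the backdoor feature instead.
loss_entangled = info_nce_loss([1.0, 0.0], [-1.0, 0.0], [[1.0, 0.0]])
```

The loss is small when $z$ is close to its augmented view and far from the backdoor features, and large in the opposite case, which is the behavior the contrastive objective relies on.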

It is worth noting that minimizing the InfoNCE loss maximizes a lower bound on the mutual information between positive pairs [39,40]; thus, maximizing the similarity of positive pairs implicitly increases this mutual information. Meanwhile, by contrasting with negative samples $r$, the method encourages dissimilarity between $Z$ and $R$, effectively reducing the mutual information $I(Z;R)$ and promoting disentanglement. Although InfoNCE does not theoretically guarantee complete independence between $Z$ and $R$, prior work [41] demonstrates that with sufficient negative samples and appropriate temperature settings, it effectively enforces feature separation in practice. Therefore, we consider the InfoNCE-based contrastive loss a practical and efficient way to approximate the desired feature disentanglement in our framework.

Through optimizing this objective, the model increases similarity between positive pairs to strengthen the consistency of clean features and reduces similarity among negative pairs to lower the mutual information between $Z$ and $R$. Finally, integrating contrastive learning with sample weighting gives the total loss function for the local model, referred to as Equation (10).

The regularization coefficient in this loss controls the strength of regularization, balancing feature compression and model performance: too small a value weakens the defense, while too large a value harms accuracy. We set this coefficient to 0.1 in our experiments.

Based on the described approach, stochastic gradient descent optimizes the loss functions of both the backdoor model and the local model. Algorithm 1 presents the entire procedure. The contrastive learning process is described in Algorithm 2.

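| Algorithm 1 Decoupled contrastive learning for federated backdoor defense |
| --- |
| 1: **Global server's input:** global model, total communication rounds $T$, client sampling size per round $K$ |
| 2: **Local client's input:** backdoor model training iterations, local model training iterations |
| 3: **Global output:** global backdoor-free model $f$ |
| 4: **Initialize:** global model |
| 5: **for** each round $t = 1, \dots, T$ **do** |
| 6: &emsp;Server samples a set of $K$ clients |
| 7: &emsp;**for** each sampled client $k$ **do** |
| 8: &emsp;&emsp;**if** client $k$ is labeled as malicious **then** |
| 9: &emsp;&emsp;&emsp;▷ Train backdoor model |
| 10: &emsp;&emsp;&emsp;Initialize the backdoor model and the local model |
| 11: &emsp;&emsp;&emsp;**for** each backdoor model training iteration **do** |
| 12: &emsp;&emsp;&emsp;&emsp;Train the backdoor model using poisoned data via SGD |
| 13: &emsp;&emsp;&emsp;**end for** |
| 14: &emsp;&emsp;&emsp;**for** each local model training iteration **do** |
| 15: &emsp;&emsp;&emsp;&emsp;Sample poisoned data $(x, y)$ |
| 16: &emsp;&emsp;&emsp;&emsp;Extract the backdoor feature $r$ from the backdoor model |
| 17: &emsp;&emsp;&emsp;&emsp;Extract the feature $z$ from the local model ▷ Current feature from clean model |
| 18: &emsp;&emsp;&emsp;&emsp;Extract the feature of the augmented input from the local model ▷ Augmented view |
| 19: &emsp;&emsp;&emsp;&emsp;Compute contrastive loss (Algorithm 2) |
| 20: &emsp;&emsp;&emsp;&emsp;Compute instance weight |
| 21: &emsp;&emsp;&emsp;&emsp;Optimize the local model using SGD with total loss (e.g., Equation (10)) |
| 22: &emsp;&emsp;&emsp;**end for** |
| 23: &emsp;&emsp;**else** |
| 24: &emsp;&emsp;&emsp;▷ Benign client training |
| 25: &emsp;&emsp;&emsp;Initialize local model |
| 26: &emsp;&emsp;&emsp;Train the local model using clean data with SGD for the local model training iterations |
| 27: &emsp;&emsp;**end if** |
| 28: &emsp;&emsp;Client $k$ sends updated model to server |
| 29: &emsp;**end for** |
| 30: &emsp;Server aggregates models from clients to update $f$ |
| 31: **end for** |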
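The server side of Algorithm 1 can be sketched as follows. The algorithm does not state the aggregation rule, so this sketch assumes a plain unweighted average of client parameters (FedAvg-style). The names `aggregate` and `run_round`, and the representation of a client as a callable that returns updated parameters, are hypothetical.

```python
import random

def aggregate(client_params):
    """Coordinate-wise unweighted mean of client parameter vectors (assumed rule)."""
    if not client_params:
        raise ValueError("no client updates to aggregate")
    n = len(client_params)
    return [sum(values) / n for values in zip(*client_params)]

def run_round(global_params, clients, k, rng=random):
    """One communication round: sample k clients, train locally, aggregate.

    Each entry of `clients` is a callable that takes a copy of the global
    parameters and returns locally updated parameters. A benign client runs
    ordinary training; a malicious client runs the decoupled training and
    returns its purified local model.
    """
    sampled = rng.sample(clients, k)
    updates = [local_train(list(global_params)) for local_train in sampled]
    return aggregate(updates)

# Toy clients that each shift every parameter by a fixed amount.
toy_clients = [lambda p: [v + 1.0 for v in p] for _ in range(3)]
new_global = run_round([0.0, 0.0], toy_clients, k=2)
```

Because only the purified local model leaves a malicious client in this scheme, the server loop needs no knowledge of which branch a client executed.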

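| Algorithm 2 Contrastive loss calculation |
| --- |
| 1: **Input:** clean feature $z$, augmented clean feature $z^{+}$, set of backdoor features $\{r_i\}$, temperature parameter $\tau$ |
| 2: **Output:** contrastive loss |
| 3: **Initialize:** |
| 4: **function** Contrastive_L |
| 5: &emsp;Compute the positive similarity ▷ Similarity between $z$ and $z^{+}$ |
| 6: &emsp;**for** each backdoor feature $r_i$ **do** |
| 7: &emsp;&emsp;Compute the negative similarity ▷ Similarity between $z$ and $r_i$ |
| 8: &emsp;**end for** |
| 9–11: &emsp;Combine the temperature-scaled positive and negative similarities into the InfoNCE loss |
| 12: &emsp;**Return:** contrastive loss |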