An Improved Dark Channel Prior Method for Video Defogging and Its FPGA Implementation

Abstract

1. Introduction

- (1) Transmittance images are refined with an improved guided filtering algorithm to reduce the halo effect;

- (2) Gamma correction is applied during image restoration to enhance the restored image;

- (3) While improving defogging quality, the system stably processes 1280 × 720 video at 60 fps.
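To make the real-time target in contribution (3) concrete, the following minimal sketch works out the pixel throughput and per-frame time budget implied by 1280 × 720 at 60 fps. It counts active pixels only and ignores blanking intervals, which is a simplifying assumption rather than a description of the actual video timing used in the system.

```python
# Real-time budget implied by a 1280 x 720 @ 60 fps stream (active pixels only).
WIDTH, HEIGHT, FPS = 1280, 720, 60

pixels_per_frame = WIDTH * HEIGHT            # active pixels in one frame
pixels_per_second = pixels_per_frame * FPS   # sustained active-pixel throughput
frame_period_ms = 1000.0 / FPS               # time available to process one frame

print(f"pixels per frame : {pixels_per_frame}")
print(f"pixels per second: {pixels_per_second}")
print(f"frame period     : {frame_period_ms:.2f} ms")
```

Any per-frame processing time above the printed frame period would drop the output rate below 60 fps.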

2. Principle of Image Defogging Algorithm

2.1. Dark Channel Prior Theory
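For orientation, the standard dark channel defined by He et al. [4] takes the minimum over the three colour channels at each pixel and then the minimum over a local patch. The sketch below illustrates that baseline definition only; the function name, the `radius` parameter, and the border handling are assumptions made for illustration and are not taken from the improved method of this paper.

```python
def dark_channel(image, radius=1):
    """Baseline dark channel: per-pixel channel minimum, then a local minimum filter.

    image  : list of rows, each row a list of (r, g, b) tuples
    radius : half-width of the square patch (illustrative default)
    """
    height, width = len(image), len(image[0])
    # Step 1: minimum over the three colour channels at each pixel.
    channel_min = [[min(pixel) for pixel in row] for row in image]
    # Step 2: minimum over the (2*radius+1)^2 patch, clipped at the image borders.
    dark = [[0] * width for _ in range(height)]
    for y in range(height):
        for x in range(width):
            rows = range(max(0, y - radius), min(height, y + radius + 1))
            cols = range(max(0, x - radius), min(width, x + radius + 1))
            dark[y][x] = min(channel_min[j][i] for j in rows for i in cols)
    return dark


# Toy 3 x 3 image: uniform bright grey with one pixel that has a dark green channel.
img = [[(200, 200, 200)] * 3 for _ in range(3)]
img[1][1] = (200, 50, 200)
print(dark_channel(img, radius=0))
print(dark_channel(img, radius=1))
```

With `radius=0` only the centre pixel is dark; with `radius=1` the single dark value spreads to every patch that contains it, which is the blockiness that transmittance refinement is meant to smooth.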

2.2. Guided Filtering Theory

2.3. Gamma Correction
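As a generic illustration, gamma correction maps a normalized intensity v to v raised to the power gamma. A precomputed lookup table is one common way to realize such a power function on 8-bit data; whether the authors use a table, and which gamma value they choose, is not stated here, so the value 0.5 and the function names below are assumptions.

```python
def build_gamma_lut(gamma, levels=256):
    """Table mapping each input level v to round(max * (v / max) ** gamma)."""
    max_level = levels - 1
    return [round(max_level * (v / max_level) ** gamma) for v in range(levels)]


def apply_gamma(pixels, lut):
    """Apply the lookup table to a flat sequence of integer pixel values."""
    return [lut[v] for v in pixels]


# gamma < 1 brightens mid-tones; gamma > 1 darkens them (0.5 is an arbitrary example).
lut = build_gamma_lut(0.5)
print(apply_gamma([0, 64, 128, 255], lut))
```

The table keeps the end points fixed (0 stays 0, 255 stays 255) and only reshapes the intermediate levels.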

3. Systematic Implementation of Dark Channel Prior Algorithms

3.1. Dark Channel Image Research

3.2. Transmittance Image Research

3.3. Image Restoration Research

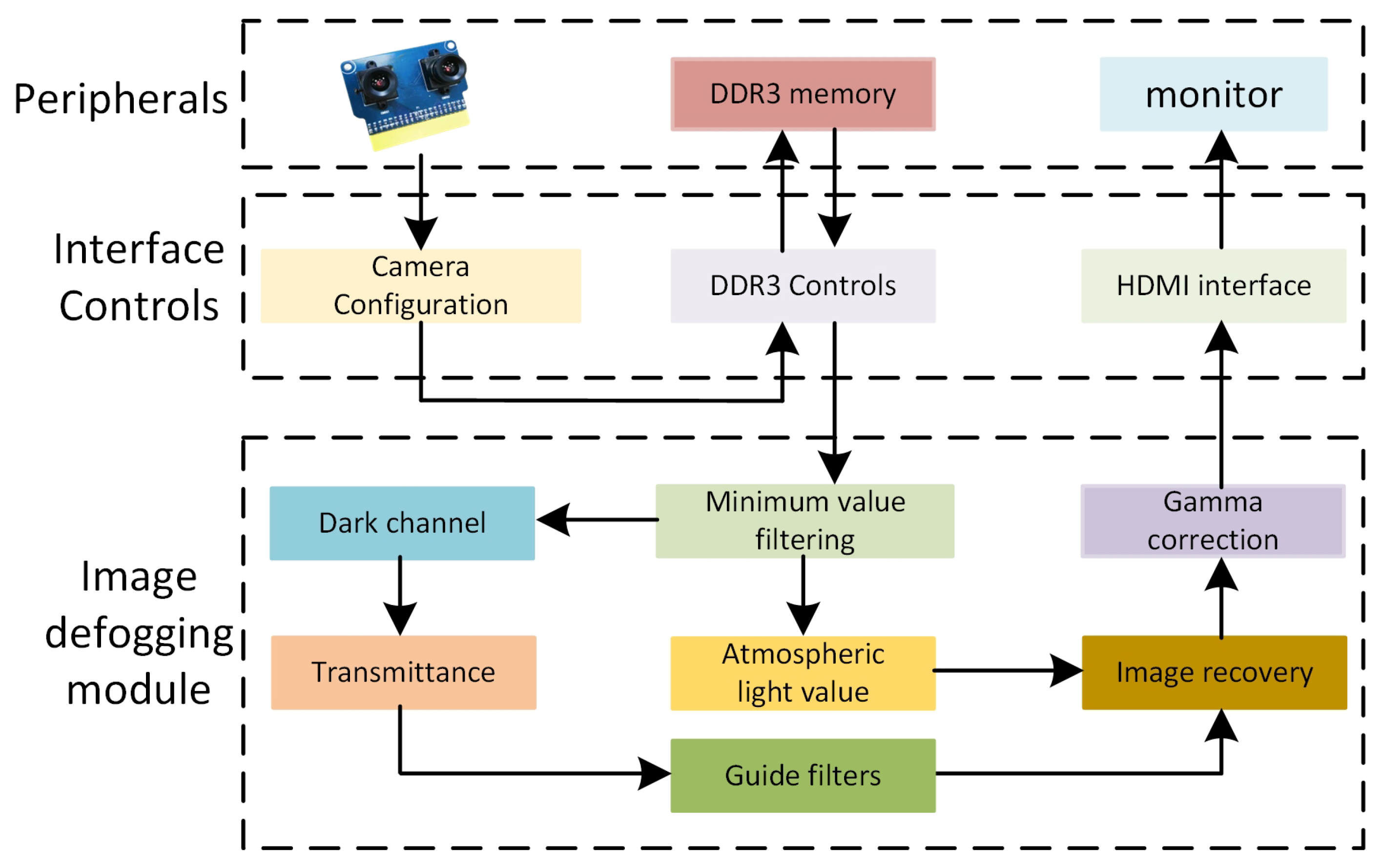

4. Analysis of ZYNQ-Based Experimental Platform

4.1. Experimental Platforms

4.2. ZYNQ System Architecture

5. Experimental Results and Data Analysis

5.1. Defogging Effect Analysis

5.2. Power Consumption and Resource Utilization Analysis

6. Conclusions

Author Contributions

Funding

Data Availability Statement

Conflicts of Interest

References

1. Wu, X.; Chen, X.; Wang, X.; Zhang, X.; Yuan, S.; Sun, B.; Huang, X.; Liu, L. A real-time framework for HD video defogging using modified dark channel prior. J. Real-Time Image Process. 2024, 21, 55.

2. Liu, S.; Chen, P.; Lan, J.; Li, J.; Shen, Z.; Wang, Z. Underwater image restoration via multiscale optical attenuation compensation and adaptive dark channel dehazing. Comput. Electr. Eng. 2025, 123, 110228.

3. Pandey, P.; Gupta, R.; Goel, N. Comprehensive review of single image defogging techniques: Enhancement, prior, and learning based approaches. Artif. Intell. Rev. 2025, 58, 116.

4. He, K.; Sun, J.; Tang, X. Single image haze removal using dark channel prior. IEEE Trans. Pattern Anal. Mach. Intell. 2010, 33, 2341–2353.

5. Zhang, T. Design of an FPGA-based Image Defogging Algorithm Using Dark Channel Prior. In Proceedings of the 2024 4th International Conference on Electronic Information Engineering and Computer Science (EIECS), Yanji, China, 27–29 September 2024; IEEE: New York, NY, USA, 2024; pp. 464–468.

6. Li, J.; Guo, Y.; Li, G. FPGA Implementation of Dark Channel Prior and Defogging for Video Images. In Electronic Engineering and Informatics; IOS Press: Amsterdam, The Netherlands, 2024; pp. 705–712.

7. Liu, B.; Wei, Q.; Ding, K. ZYNQ-Based Visible Light Defogging System Design Realization. Sensors 2024, 24, 2276.

8. Gang, H.; DaTang, Z.; LaiJun, Y.; WenXin, Y.; Kang, X.; Shuang, C.; ZhenGuo, C. Single image dehazing algorithm using complementary saturation prior. Signal Image Video Process. 2025, 19, 224.

9. Yoong, N.K.J. CLAHE HLS implementation on Zynq SoC FPGA. 2024. Available online: https://dr.ntu.edu.sg/handle/10356/176412 (accessed on 28 March 2025).

10. Sun, A.; Wang, Y.; Yang, Q. Welding Image Enhancement Based on CLAHE and Guided Filter. In Proceedings of the 2024 10th International Conference on Electrical Engineering, Control and Robotics (EECR), Guangzhou, China, 29–31 March 2024; IEEE: New York, NY, USA, 2024; pp. 285–290.

11. Munaf, S.; Bharathi, A.; Jayanthi, A. FPGA-based low-light image enhancement using Retinex algorithm and coarse-grained reconfigurable architecture. Sci. Rep. 2024, 14, 28770.

12. Lv, T.; Du, G.; Li, Z.; Wang, X.; Teng, P.; Ni, W.; Ouyang, Y. A fast hardware accelerator for nighttime fog removal based on image fusion. Integration 2024, 99, 102256.

13. Teng, P.; Du, G.; Li, Z.; Wang, X.; Yin, Y. High-speed hardware accelerator based on brightness improved by light-dehazenet. J. Real-Time Image Process. 2024, 21, 87.

14. Shen, M.; Lv, T.; Liu, Y.; Zhang, J.; Ju, M. A Comprehensive Review of Traditional and Deep-Learning-Based Defogging Algorithms. Electronics 2024, 13, 3392.

15. Kumari, A.; Sahoo, S.K. An effective and robust single-image dehazing method based on gamma correction and adaptive Gaussian notch filtering. J. Supercomput. 2024, 80, 9253–9276.

16. Zhang, S.; Tian, Y.; Shen, L.; Wang, H.; Du, Y.; Chen, H. Single image defogging method based on optimized double dark channel with gaussian weighting. In Proceedings of the 2022 6th International Conference on Electronic Information Technology and Computer Engineering, Xiamen, China, 21–23 October 2022; pp. 1489–1493.

17. Yadav, S.K.; Sarawadekar, K. Robust multi-scale weighting-based edge-smoothing filter for single image dehazing. Pattern Recognit. 2024, 149, 110137.

18. Vedavyas, Y.; Harsha, S.S.; Subhash, M.S.; Vasavi, S. Quality Enhancement for Drone Based Video using FPGA. In Proceedings of the 2022 International Conference on Electronics and Renewable Systems (ICEARS), Tuticorin, India, 16–18 March 2022; IEEE: New York, NY, USA, 2022; pp. 29–34.

19. He, K.; Sun, J.; Tang, X. Guided image filtering. IEEE Trans. Pattern Anal. Mach. Intell. 2012, 35, 1397–1409.

20. Bailey, D.G. Design for Embedded Image Processing on FPGAs; John Wiley & Sons: Hoboken, NJ, USA, 2023.

21. Rahmawati, L.; Rustad, S.; Marjuni, A.; Arief, M.; Soeleman, C.S.; Shidik, G.F. Transmission Map Refinement Using Laplacian Transform on Single Image Dehazing Based on Dark Channel Prior Approach. Cybern. Inf. Technol. 2024, 24, 126–142.

22. Xue, Q.; Hu, H.; Bai, Y.; Cheng, R.; Wang, P.; Song, N. Underwater image enhancement algorithm based on color correction and contrast enhancement. Vis. Comput. 2024, 40, 5475–5502.

23. Yu, J.; Zhang, J.; Li, B.; Ni, X.; Mei, J. Nighttime image dehazing based on bright and dark channel prior and gaussian mixture model. In Proceedings of the 2023 6th International Conference on Image and Graphics Processing, Chongqing, China, 6–8 January 2023; pp. 44–50.

24. Akash, E.; Ashokkumar, S.; Banupriya, N.; Thangam, K.S.; Kumar, N.S.; Jananeshwaran, M. Hardware Implementations of Dehazing Algorithms. In Proceedings of the 2024 11th International Conference on Computing for Sustainable Global Development (INDIACom), New Delhi, India, 28 February–1 March 2024; IEEE: New York, NY, USA, 2024; pp. 1654–1661.

25. Ngo, D.; Kang, B. A Symmetric Multiprocessor System-on-a-Chip-Based Solution for Real-Time Image Dehazing. Symmetry 2024, 16, 653.

26. Suo, H.; Guan, J.; Ma, M.; Huo, Y.; Cheng, Y.; Wei, N.; Zhang, L. Dynamic dark channel prior dehazing with polarization. Appl. Sci. 2023, 13, 10475.

27. Ehsan, S.M.; Imran, M.; Ullah, A.; Elbasi, E. A single image dehazing technique using the dual transmission maps strategy and gradient-domain guided image filtering. IEEE Access 2021, 9, 89055–89063.

28. Zhu, Q.; Mai, J.; Shao, L. A fast single image haze removal algorithm using color attenuation prior. IEEE Trans. Image Process. 2015, 24, 3522–3533.

29. Cong, H. Optimization and Implementation of ZYNQ-Based Dark Channel Prior Defogging Algorithm. Master’s Thesis, Sichuan Normal University, Chengdu, China, 2024.

30. Jianyu, H. Research on FPGA-Based Nighttime Image Enhancement Algorithm. Master’s Thesis, China University of Mining and Technology, Xuzhou, China, 2023.

| Image | Algorithm | IE (bit) | UIQM | AG | Running time, PC/FPGA (s) |
|---|---|---|---|---|---|
| (1) | Original | 7.2668 | 3.8923 | 21.5147 | -/- |
| | He [4] | 7.3827 | 6.8962 | 34.0108 | 4.8684/- |
| | Ehsan [27] | 7.0880 | 6.9383 | 33.2866 | 7.7856/- |
| | Zhu [28] | 7.5437 | 6.8943 | 31.9447 | 2.0293/- |
| | Proposed | 7.5463 | 7.3546 | 42.2765 | 3.1853/0.0138 |
| (2) | Original | 7.4554 | 3.0642 | 12.6117 | -/- |
| | He [4] | 6.9130 | 6.4483 | 14.4420 | 4.3005/- |
| | Ehsan [27] | 6.7227 | 5.4782 | 15.8163 | 7.9553/- |
| | Zhu [28] | 7.5427 | 5.3679 | 13.9631 | 1.8057/- |
| | Proposed | 7.4560 | 6.5842 | 27.7230 | 2.9842/0.0138 |
| (3) | Original | 7.2492 | 4.2847 | 22.3559 | -/- |
| | He [4] | 7.3827 | 7.4891 | 36.6422 | 4.4382/- |
| | Ehsan [27] | 7.2837 | 8.2204 | 38.3339 | 6.9987/- |
| | Zhu [28] | 7.4317 | 7.7548 | 31.7208 | 1.7906/- |
| | Proposed | 7.5903 | 8.0671 | 41.9183 | 3.0853/0.0138 |
| (4) | Original | 7.5295 | 4.7281 | 23.6353 | -/- |
| | He [4] | 6.9065 | 7.8931 | 29.8417 | 4.1062/- |
| | Ehsan [27] | 6.8268 | 8.0142 | 30.8351 | 7.1346/- |
| | Zhu [28] | 7.1068 | 7.0763 | 28.7505 | 2.3571/- |
| | Proposed | 7.1428 | 7.7149 | 36.9836 | 3.0356/0.0138 |
| (5) | Original | 7.2107 | 3.3648 | 12.2805 | -/- |
| | He [4] | 7.0103 | 6.0671 | 18.9904 | 4.7693/- |
| | Ehsan [27] | 6.7471 | 6.4837 | 21.4487 | 7.6148/- |
| | Zhu [28] | 7.3504 | 5.3301 | 16.4952 | 1.9250/- |
| | Proposed | 7.4335 | 6.6748 | 27.1644 | 3.0961/0.0138 |
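The running-time column above can be turned into a speed-up figure and checked against the 60 fps frame budget. The short sketch below uses only the "Proposed" rows of the table; the variable names are illustrative.

```python
# PC and FPGA running times of the proposed method, copied from the table (seconds).
pc_times_s = {1: 3.1853, 2: 2.9842, 3: 3.0853, 4: 3.0356, 5: 3.0961}
fpga_time_s = 0.0138
frame_period_s = 1 / 60  # time available per frame at 60 fps

speedups = {image_id: t / fpga_time_s for image_id, t in pc_times_s.items()}
for image_id, factor in speedups.items():
    print(f"image ({image_id}): FPGA speed-up over PC = {factor:.1f}x")

print(f"frame rate allowed by FPGA time: {1 / fpga_time_s:.1f} fps")
print(f"fits within the 60 fps budget  : {fpga_time_s < frame_period_s}")
```

The reported FPGA time per frame is shorter than the 1/60 s frame period, which is consistent with the stated 60 fps operation.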

| Type / Target | Clocks | Signals | Logic | BRAM | DSP | MMCM | I/O | PS7 | Overall |
|---|---|---|---|---|---|---|---|---|---|
| Dynamic | 0.088 W | 0.103 W | 0.093 W | 0.007 W | 0.009 W | 0.106 W | 0.133 W | 1.543 W | 2.082 W |
| Dynamic (share) | 4% | 5% | 4% | 1% | 1% | 5% | 6% | 74% | 93% |
| Static | - | - | - | - | - | - | - | - | 0.160 W |
| Static (share) | - | - | - | - | - | - | - | - | 7% |
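As a consistency check on the power table above, the sketch below sums the per-target dynamic power and recomputes the shares. The assignment of each value to a target follows the column order of the table and should be read as an assumption; the interpretation that the overall shares are fractions of total on-chip power is likewise inferred from the numbers.

```python
# Dynamic power per target in watts, in the column order of the table (assumed mapping).
dynamic_w = {
    "Clocks": 0.088, "Signals": 0.103, "Logic": 0.093, "BRAM": 0.007,
    "DSP": 0.009, "MMCM": 0.106, "I/O": 0.133, "PS7": 1.543,
}
static_w = 0.160

dynamic_total_w = sum(dynamic_w.values())
total_w = dynamic_total_w + static_w

print(f"dynamic total: {dynamic_total_w:.3f} W")
print(f"total power  : {total_w:.3f} W")
print(f"dynamic share: {100 * dynamic_total_w / total_w:.0f}%")
print(f"static share : {100 * static_w / total_w:.0f}%")
print(f"PS7 share of dynamic power: {100 * dynamic_w['PS7'] / dynamic_total_w:.0f}%")
```

Under these assumptions the per-target values add up to the reported 2.082 W, and the processing system (PS7) accounts for most of the dynamic power.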


© 2025 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (https://creativecommons.org/licenses/by/4.0/).


Wang, L.; Luo, Z.; Gao, L. An Improved Dark Channel Prior Method for Video Defogging and Its FPGA Implementation. Symmetry 2025, 17, 839. https://doi.org/10.3390/sym17060839
