1. Introduction

Direction of arrival (DOA) estimation is a common problem in array signal processing with extensive applications in various domains, including astronomical observations, indoor localization, sonar, radar, wireless communications [

1,

2,

3,

4], etc. The principal challenges in DOA estimation encompass the intricate task of devising integrated strategies that exhibit minimal hardware consumption [

5] while concurrently optimizing both performance and receiver cost. Additionally, there is a need to enhance the accuracy and super-resolution capabilities of DOA estimation methods in scenarios featuring multiple sources. Moreover, improving the adaptability of DOA estimation techniques in challenging environments characterized by limited snapshots and low signal-to-noise ratios (SNRs) is also a critical area of focus.

Typically, DOA estimation is mostly accomplished using model-driven methods [

6,

7,

8,

9,

10,

11,

12,

13], where the underlying principle involves constructing a forward parameter model from signal direction to array outputs, followed by leveraging the properties of predefined assumptions to estimate the direction. Subspace-based methods [

6,

7], as well as compressive sensing and sparse recovery methods, like singular value decomposition (SVD) [

8,

9], sparse Bayesian learning (SBL) [

10,

11], and orthogonal matching pursuit (OMP) [

12,

13], are commonly utilized in DOA estimation. The model-driven techniques depend on the accuracy of the pre-established model, making it challenging to achieve high accuracy under non-ideal conditions, e.g., coherent sources [

14] may lead to significant performance degradation of the algorithms mentioned above.

In recent years, machine learning (ML) [

15] approaches utilizing data-driven models [

16] have been widely adopted by researchers to address the source localization problem. Deep neural network (DNN)-based methods aim to directly learn the nonlinear relationship between array output and source location, facilitating an efficient mapping from the sensor output space to the arrival direction space. The rapid advancement of artificial intelligence has led researchers to incorporate radial basis function (RBF), support vector regression (SVR) [

17], and deep learning (DL) [

18,

19,

20,

21,

22] into DOA estimation, with the goal of enhancing both accuracy and computational efficiency. In [

19], a DL framework for DOA estimation is introduced, specifically designed to handle array imperfections effectively. In [

20], the authors demonstrate that the columns of array covariance matrix be formulated as undersampled noisy linear measurements of the spatial spectrum and presented a deep convolutional network (DCN) framework for estimating the DOAs. Additionally, in [

21], the authors propose a convolutional neural network (CNN) that effectively learns the number of sources and DOAs even in extreme SNR scenarios. Moreover, in [

22], a novel DOA estimation method based on dimensional alternating fully-connected (DAFC) block-based neural networks (NNs) is presented, specifically designed to address spatial spectrum estimation in multi-source scenarios where non-Gaussian spatial color interference is present, and the number of sources is unknown a priori. Ref. [

23] designs deep augmented (DA)-MUSIC neural architectures to overcome the limitations of traditional algorithms and combines model-/data-driven hybrid DOA estimators to improve the resolution of the signals. Ref. [

24] proposes a DNN-based DOA estimation framework for uniform circular array (UCA) to realize more efficient data transmission in high-capacity communication networks. In the field of sound source localization, Ref. [

25] proposes new frequency-invariant circular harmonic features as inputs to the network structure, utilizing CNN for self-adaptation to array defects. Nevertheless, the aforementioned methodologies [

16,

17,

18,

19,

20,

21,

22,

23,

24,

25] share a common prerequisite for accurate DOA estimation–-these sources are irrelevant. Regarding coherent signals, finding appropriate data characteristics and constructing a more efficient learning model is our motivation.

For coherent sources, model-driven methods for solving the coherent source problem require spatial smoothing (SS) techniques [

26,

27,

28], albeit at the expense of a reduced effective array aperture. On the contrary, data-driven methods are employed to improve the estimation accuracy and realize real-time updates. In [

29], two angle separation learning schemes (ASLs) are proposed to solve the coherent DOA estimation issue, taking into consideration the spatial sparsity of the array output regarding angle separation. A logarithmic eigenvalue-based classification network (LogECNet) is introduced in [

30] to achieve higher accuracy of signal number detection and angle estimation performance. However, the existing neural network-based methods for addressing coherent sources [

29,

30] require transforming signal statistics into a long vector input, thereby leading to the requirement for large-sized parameter matrices in the neural layers during the training process.

Existing methods for coherent signal estimation are often hindered by significant computational complexity [

26,

27,

28]. From the above analysis, we propose an effective data-driven method for improving the accuracy and resolution of coherent source estimation. Our main contributions are as follows:

We propose a novel signal space deep convolution (SSDC) network that learns angular features of coherent signals to address the coherent DOA estimation problem.

Since the conventional neural network frames are unable to effectively convey information in the complex domain, we divide the covariance matrix of the input signal space into real and imaginary parts and perform two-dimensional convolution operations separately, which can make the features of the input data fully utilized.

The proposed SSDC network also considers the spatial sparsity of the array output and performs a spectral peak search on the output to determine the interested DOAs.

Notations: Upper-case (lower-case) bold characters represent matrices (vectors). , and stand for the transpose, hermitian transpose, and pseudo-inverse operations, respectively. denotes the expectation operator, randn is a random number between 0 and 1, and represents the set of complex numbers. and represent real and imaginary parts, and j is the imaginary unit, defined as .

3. Proposed SSDC Network

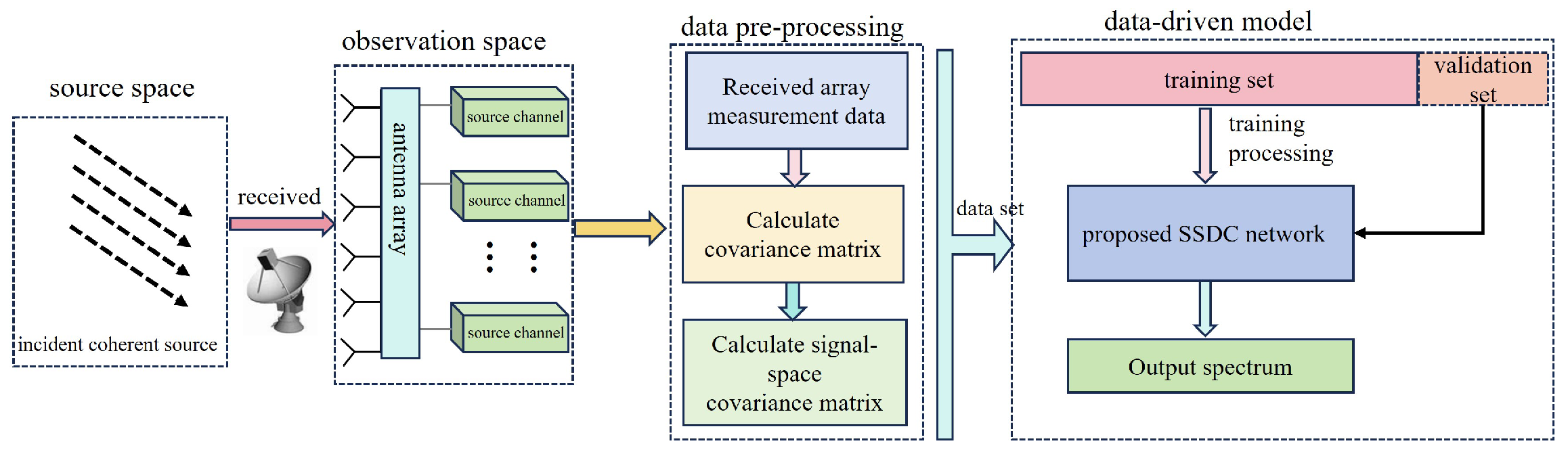

In this section, we propose a SSDC network that formulates the coherent signal DOA estimation as a multi-label classification task. The supervised learning method consists of two stages: the learning stage and the validation stage. The structure diagram of the SSDC network is illustrated in

Figure 2. We design a two-input system with

, which includes the real part and the imaginary part of the signal covariance matrix. The two-dimensional convolution is employed to perform feature extraction from the multi-channel input data. Subsequently, Fully connected (FC) layers concatenate the outputs from the real and imaginary parts of the convolutional layers. After one round of a Fully connected layer, a one-dimensional convolution is used for feature fitting. Finally, a pre-selected grid is used to infer the DOA estimation values. As the network runs deeper, to prevent excessive increase in network parameters and the risk of overfitting, we choose a five-layer hidden architecture to achieve a nonlinear representation of the network.

In the training stage of the SSDC network, we assume that the values of the elements in the vector sparse signal

are only one at the true source location and zero otherwise. For this, we need to find a relationship from

to sparse signal vector

, even though it is a

black box. According to the well-known universal approximation theorem [

31], a feedforward network with a single hidden layer can approximate continuous functions on compact subsets of

. For multi-layer networks, we define nonlinear function

f as a mapping from the input space to the output space, i.e.,

, and it is parametrized using a five-layer SSDC model, i.e.,

where

and

are the outputs of inputs

and

, respectively.

is the input of the third network layer and the

is the final output.

Functions

and

are based on 2D-convolution architectures and are performed in parallel depending on the real and imaginary parts, which consist of 10 and 5 filters, respectively. Then, they follow a Rectified Linear Unit (ReLU) layer that applies the activation function to the variables from the previous layer. The output of the

ith layer is given:

Kernel

is a 2D matrix of the size of

. We employ

for

and

for the second convolution layers

. The stride

s is set to

with no padding, and

represent the bias of the

ith layer. Subsequently, we flatten the results obtained from the double parallel convolutions and concatenate them into a single dataset, then enter the third layer, a Fully connected network, represented as

Here,

and

represent the weight and bias of the

ith layer.

is a dense layer with

L neurons, followed by a ReLU layer. Thereafter, the proposed

and

structures are based on the standard 1D convolution architecture, which consists of three and one filters, respectively, i.e.,

Kernel

is a vector of size

. We employ

for

and

for layers

. Stride

with padding operator

restores the output of activation function to the original input size by applying zero-padding at the borders. In addition,

represent the bias. The all activation function can be expressed as

The final output layer,

, consists of

L neurons in a Conv1D layer, followed by the sigmoid activation function. The sigmoid function, defined as

, is applied to the values from the preceding layer, returning values within

, representing the probability of each entry in the predicted label. We define the output layer as

. Like most supervised learning approaches, the SSDC network trains on the offline dataset

where

D denotes the batch size and parameters

with sets of weight

and bias

use backpropagation, minimizing the Mean Square Error (MSE) as the loss function between the reconstructed spectrum

and the original

, as shown in [

20], i.e.,

The MSE is an appropriate criterion for minimizing the error between the learning target and the true target. To continuously reduce the loss function

, backpropagation is used to update the weight and bias vectors. The update process is as follows:

and

and

are the partial derivatives of the parameters with respect to the

ith layer neuron, represented as

, reflecting the sensitivity of the final loss to the

ith layer neuron.

represents the learning rate.

In addition, this paper adopts an adaptive moment estimation algorithm called Adam [

32] to optimize the parameters of the SSDC network. Since the learning rate is a crucial parameter during neural network optimization, setting it too high may cause the loss function not to converge, while setting it too low may result in slow convergence of the loss function. Therefore, we employ a dynamically changing learning rate to adaptively adjust the convergence of the loss function. Assuming

represents the first moment of the partial derivative and

represents the second moment of the partial derivative, the Adam algorithm combines the RMSprop algorithm and momentum-based methods. To ensure that each update is related to historical values, it performs exponential moving average on both the gradient and the square of the gradient, as follows:

and

where

and

are the decay rates for the two moving averages, with this paper using

and

, and

m is the

mth of iterations. Then, the initial sliding value is corrected, that is,

and

Finally, the parameters are updated

and

is a very small number (usually

) to avoid having a denominator of zero.

Remark:Figure 3 depicts a fabricated prototype picture of the proposed SSDC network for coherent DOA estimation. When a coherent signal incident on a uniform linear array is considered, the received data need to be preprocessed first. This process requires the covariance matrix to be derived from the received data, and then the signal-space covariance matrix is calculated according to

. The data corresponding to the range of incident DOA are subjected to this preprocessing process; a random

of the signal spatial covariance and the corresponding spatially sparse signal data are used to enter the proposed SSDC network for training and

of the preprocessed data is randomly selected for validation.

4. Simulation Results

In this section, we carry out several simulations to discuss the performance of the proposed SSDC network, the SS-MUSIC method [

26], and DL-based DOA estimation methods, i.e., ASL (we use ASL2) [

29], DCN [

20], and DNN [

19]. The root mean square error (RMSE) and MSE are used to evaluate the performance of these algorithms, which is

where

and

denote the real and estimated DOAs in the

nth Monte Carlo (MC) simulation experiment, respectively.

The Cramér–Rao Bound (CRB) provides a lower bound on the covariance matrix of for any unbiased estimator [

33]. In this paper, the CRB is used in the case of large snapshots of the estimated variance approximation using the stochastic maximum likelihood algorithm [

33], represented as

with

and

where

is the first-order derivative of the direction vector,

is the covariance matrix calculated by Equation (

3),

is a singular matrix with a rank of one.

4.1. Experiment Setup

To train the proposed SSDC network, we consider the grid with resolution of and define a narrow grid scope with . For the simulations, we employ a ULA with sensors, , array spacing m, and signal carrier wavelength m.

For the training dataset, we select coherent sources with . The first and second sources are uniformly generated within the ranges and with a step of , and 10 groups of fixed snapshots are collected with SNR randomly distributed between −20 dB and 0 dB. Based on Python 3.10 and the Adam optimizer, we utilize Keras 2.12.0 and its embedded tools for gradient computation to implement the training of SSDC network. The data are generated by the operating environment “12th Gen Intel(R) Core(TM) i7-12700H 2.30 GHz processor with a 64-bit operating system MATLAB 2022a”, and the sample datasets consist of a total of 15,800 measurement vectors. In addition, we adopt mini-batch training with a batch size of 64 and conduct 500 training epochs.

4.2. MSE during Training and Validation

Figure 4 illustrates the changes in MSE during the training and validation of the DCN [

20], the DNN [

19], and the proposed SSDC methods.

The DNN-based framework consists of a multi-task autoencoder and a series of parallel multilayer classifiers [

19]. The spacing of the array elements of the ULA in the DNN model is half a wavelength, and the potential space is divided into six subregions of equal spatial extent. The number of hidden layers in each subregion is two, and the sizes of the hidden and output layers of each classifier are 30, 20, and 20, respectively. All the weights and biases of the DNN are randomly initialized with a uniform distribution between −0.1 and 0.1.

The DCN-based framework [

20] can learn inverse transformations from large training datasets, considering the spatial sparsity of the incident signal. The DOA estimation problem is transformed into a sparse linear inverse problem by introducing a spatial overcomplete formulation. Compared with the traditional iteration-based sparse recovery algorithms, the DCN-based method requires only feed-forward computation to realize real-time direction measurement. The DCN network has four hidden layers and one output layer with convolutional kernels of 25 × 12, 15 × 6, 5 × 3, 3 × 1, and an output dimension of 60 × 1, respectively.

As can be seen from

Figure 4, the proposed SSDC network exhibits lower MSE on both the training and validation sets compared to the other two methods. The generated data are randomly partitioned into two sets:

is allocated for the training set, while the remaining

is assigned to the validation set.

Figure 4a provides a clear visualization of the training process, where the initial higher loss values are attributed to the random initialization of model parameters and limited understanding of data patterns. As training progresses, the model gradually learns the data features, resulting in a steady reduction in the loss function.

To prevent overfitting during the training process, a validation dataset is used to evaluate the model’s performance on unused data.

Figure 4b displays the model’s loss function performance on the validation data as the training advances. While the training loss might fluctuate, the overall trend shows a decreasing pattern. Furthermore, the proposed SSDC network exhibits lower MSE values on both the training and validation sets compared to the other two methods.

To evaluate the computational complexity of the proposed SSDC algorithm, in

Table 1, we record the running time required by all the related algorithms (average of 50 tests). It can be seen that the running time of the proposed SSDC algorithm is only a fraction of the SS-MUSIC algorithm [

26]. Moreover, the ASL algorithm [

29] has the longest train time due to its very large number of parameters. Next, multiple DNNs (six DNNs in [

19]) need to be trained, so SSDC is also more efficient than [

19]. Furthermore, compared to the DCN algorithm [

20], although the proposed SSDC has more parameters, SSDC reduces the computational dimensions by processing the real and imaginary parts separately, and thus SSDC is more efficient than DCN.

4.3. Experiment with the Same Number of Coherent Sources in the Test Set

As in the Training Set

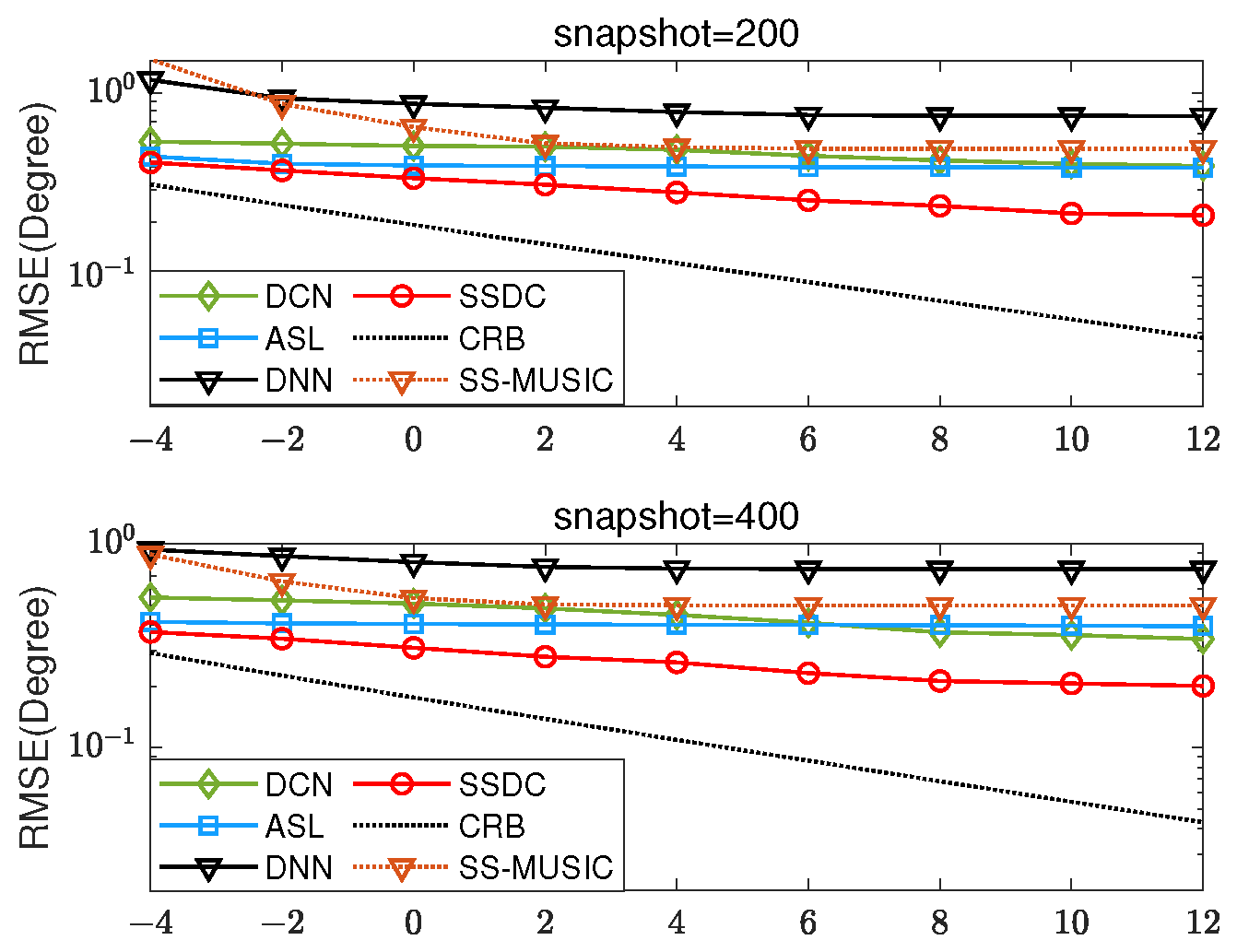

Firstly, two coherent sources with DOAs

and

and SNR within

dB are considered. As shown in

Figure 5, we conduct 500 independent MC simulation experiments with snapshot numbers set at 200 and 400. The proposed SSDC method has better estimation performance compared to the SS-MUSIC method and other three data-driven methods.

Figure 5 shows the RMSE’s variation of the proposed SSDC network at different SNR levels. As the SNR increases, the RMSE shows a decreasing trend, i.e., the RMSE is smaller at higher SNRs. This indicates that the SSDC network estimator performs better and can estimate the DOAs more accurately when the signal is relatively strong and the noise is weak. Therefore, there is a negative correlation between the SNR and the RMSE, and a high SNR usually corresponds to a low RMSE.

Table 2 provides the visualized RMSE values of

Figure 5. It can be more clearly observed that the proposed SSDC network has the lowest RMSE in the range of −4 to 12 dB.

For enhanced result visualization,

Figure 6 displays the corresponding estimated MSE for the two sources. The first source is characterized by direction

, while the second source is

with

in

Figure 6a and

in

Figure 6b. The snapshot is 256, SNR = 0 dB. We observe that the estimated MSE of the DOA estimation obtained by our method optimizes the estimation at both large and small angular intervals.

4.4. Experiment with a Different Number of Coherent Sources in the Test and Training Sets

Next, spectral peaks are tested in three coherent signals with DOAs of

, as shown in

Figure 7, where SNR = 10 dB, the number of snapshots is 256. When the number of signals

, the SS-MUSIC algorithm does not work well in this case due to the total number of array elements

, so it is not used for comparison. Even in the case of a mismatch between the number of test sources and the number of training sources, the proposed method could search for sharper peaks.

Three coherent signals located at

,

, and

with SNRs within

dB are considered, and for the RMSE of each SNR, we perform 500 independent MC simulation experiments, as shown in

Figure 8. Similarly, the snapshot numbers are set to 200 and 400, respectively. As the SNR and snapshot increase, the proposed SSDC method exhibits higher estimation accuracy compared to the ASL algorithm, which is also designed for coherent signal estimation. Moreover, it can be seen from

Figure 8 that the performance of the proposed SSDC network estimator decreases the RMSE as the SNR increases.

Table 3 provides the visualized RMSE values of

Figure 8. It can be more clearly observed that the proposed SSDC network has the lowest RMSE in the range of −14 to 2 dB. Despite the lower SNR, the proposed SSDC network still has the optimal performance compared to the other three methods.

Finally, we test snapshots with an interval of 50 in the range of

and perform 500 independent Monte Carlo (MC) simulations at SNRs of −5 dB and 0 dB, respectively. The RMSE results are shown in

Figure 9. As the number of snapshots increases, the proposed SSDC algorithm is significantly robust and performs optimally, even in scenarios where the number of test targets and training targets mismatch.