Program Behavior Dynamic Trust Measurement and Evaluation Based on Data Analysis

Abstract

1. Introduction

- Constructing a benchmark library for function call sequences based on sliding windows. Traditional security protection for industrial control terminal programs typically relies on signature-based or rule-based detection, whose coarse granularity makes it ineffective at detecting and preventing attacks. We measure the sequence of application layer function calls with a sliding window execution sequence measurement method and build a complete, lightweight benchmark library from the measurement values. This library is the foundation for the subsequent dynamic credibility evaluation of application layer function call sequences.

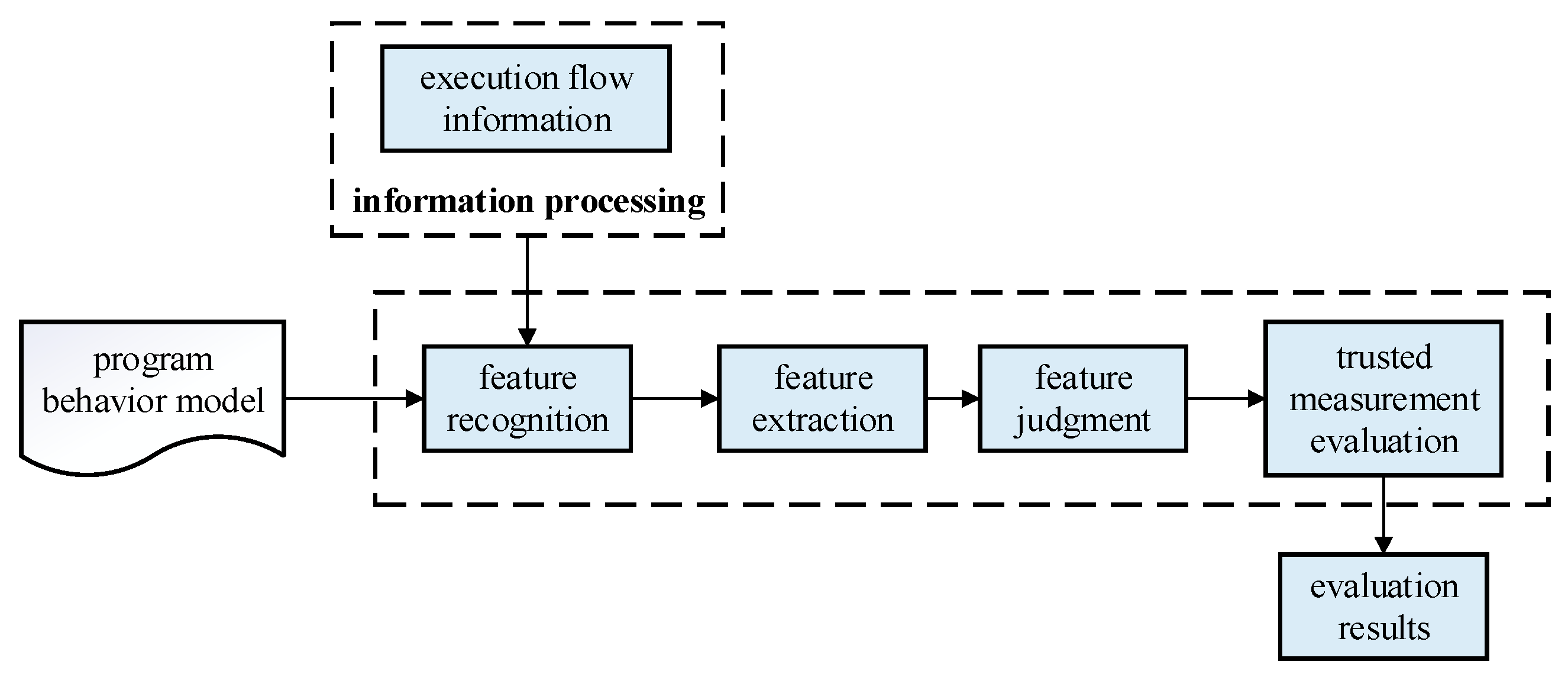

- Proposing a partitioned dynamic credibility evaluation mechanism. Traditional security detection for industrial control terminals usually relies on offline scanning, which is not real-time and cannot respond quickly to attack events. Moreover, the system calls generated during program execution are unstable and difficult to measure. We propose a partitioned dynamic credibility evaluation method that divides runtime program behavior into application layer function call behavior and system call behavior within function intervals. The application layer function call sequence is measured with the sliding window execution sequence method, and the real-time measurement results are evaluated against the benchmark library. For the system call sequence within each function interval, a maximum entropy system call model is constructed and used to evaluate the credibility of the sequence.
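The partitioning described above can be illustrated with a minimal sketch. It assumes a runtime trace is already available as a list of `(kind, name)` events, where `kind` is `"func"` for an application layer function entry and `"sys"` for a system call. The event format, the `partition_trace` name, and the sample trace are all hypothetical and are not taken from the paper.

```python
def partition_trace(events):
    """Split a trace into the function call sequence and per-function system call intervals.

    events: list of (kind, name) tuples; kind is "func" or "sys" (assumed format).
    Returns (func_seq, intervals), where intervals is a list of
    (function_name, [system calls issued before the next function entry]).
    """
    func_seq = []
    intervals = []
    for kind, name in events:
        if kind == "func":
            func_seq.append(name)
            intervals.append((name, []))
        elif kind == "sys":
            if intervals:  # system calls before the first function entry are skipped here
                intervals[-1][1].append(name)
        else:
            raise ValueError(f"unknown event kind: {kind!r}")
    return func_seq, intervals


# Hypothetical trace used only for illustration.
trace = [
    ("func", "main"), ("sys", "open"), ("sys", "read"),
    ("func", "parse"), ("sys", "mmap"),
    ("func", "send"), ("sys", "socket"), ("sys", "write"),
]
func_seq, intervals = partition_trace(trace)
```

In this sketch, `func_seq` would feed the sliding window measurement, while each entry of `intervals` would be scored separately by the system call model.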

2. Analysis of the Current State of Research

3. Research Content

3.1. Function Call Sequence Trust Measurement Evaluation

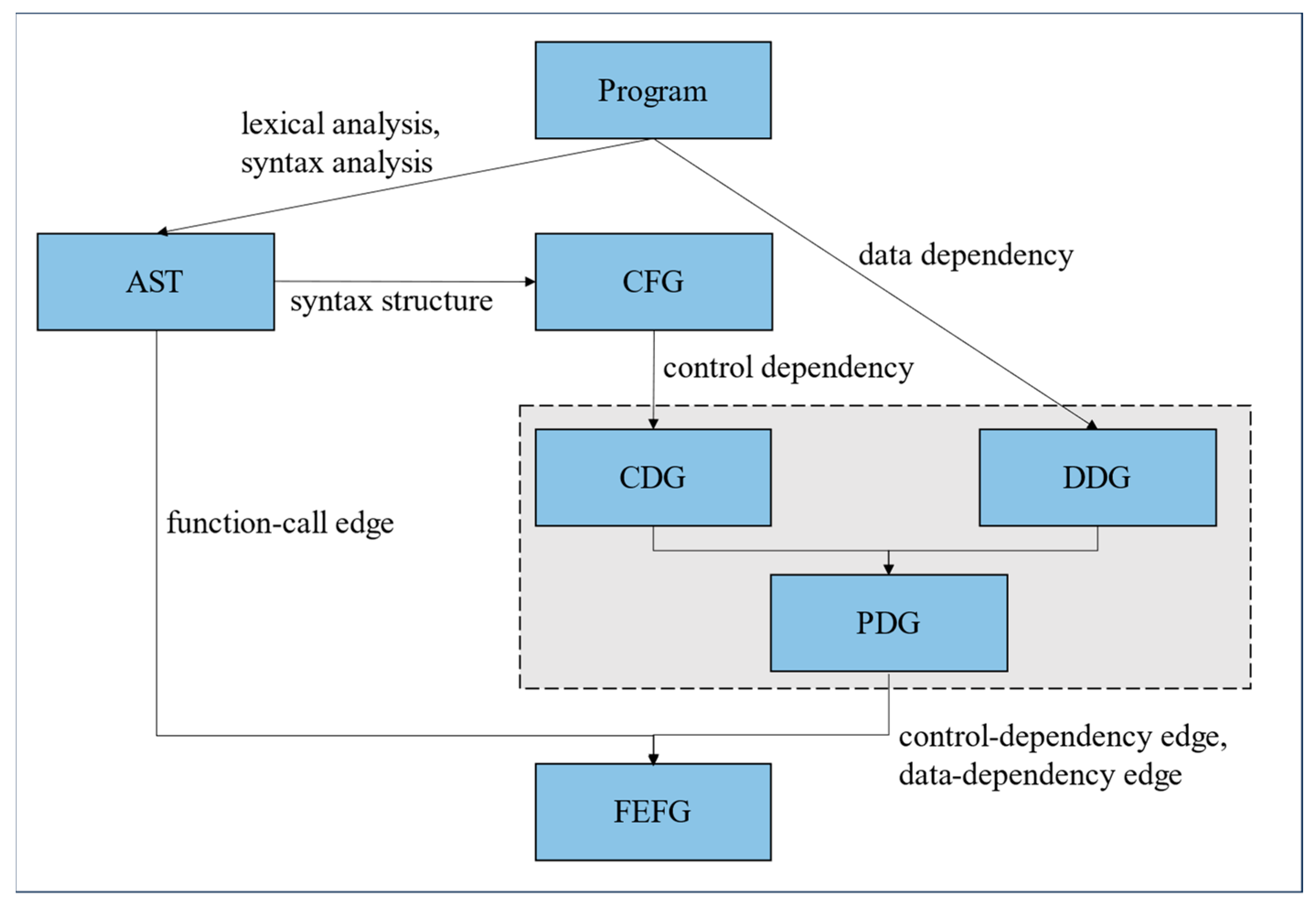

3.1.1. Construction of Function-Level Execution Flow Graph (FEFG)

1. Construction of Abstract Syntax Tree (AST)

2. Construction of Control Flow Graph (CFG)

| Algorithm 1: Program Control Flow Graph Construction Algorithm |
|---|
| Input: G_ast = (V, E, root), program source code |
| Output: G_cfg = (V, E, entry, exit) |

3. Construction of Program Dependency Graph (PDG)

| Algorithm 2: Control Dependency Graph Construction Algorithm |
|---|
| Input: G_cfg = (V, E, entry, exit) |
| Output: G_cdg = (V, E, entry) |

| Algorithm 3: Data Dependency Graph Construction Algorithm |
|---|
| Input: Collection N of variables, constants, functions, and statement blocks |
| Output: G_ddg = (V, E, var) |
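As a rough illustration of what a data dependency graph records, the sketch below derives def-use edges for straight-line code only. It is not the paper's Algorithm 3: the statement encoding `(label, defs, uses)` and the function name are assumptions, and branches, loops, and aliasing are deliberately ignored.

```python
def data_dependency_edges(statements):
    """Return def-use edges (defining_stmt, using_stmt, variable) for straight-line code.

    statements: list of (label, defs, uses), where defs and uses are
    sequences of variable names (hypothetical encoding).
    """
    last_def = {}  # variable -> label of the most recent statement defining it
    edges = []
    for label, defs, uses in statements:
        for var in uses:
            if var in last_def:
                edges.append((last_def[var], label, var))
        for var in defs:
            last_def[var] = label
    return edges


# s1: a = read(); s2: b = a + 1; s3: a = 0; s4: send(a, b)
program = [
    ("s1", ["a"], []),
    ("s2", ["b"], ["a"]),
    ("s3", ["a"], []),
    ("s4", [], ["a", "b"]),
]
ddg_edges = data_dependency_edges(program)
```

Here `s4` depends on `s3` for `a` rather than on `s1`, because `s3` redefines `a` before the use.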

| Algorithm 4: Program Dependency Graph Construction Algorithm |
|---|
| Input: G_cdg = (V, E, entry), G_ddg = (V, E, var) |
| Output: G_pdg = (V, E, entry, exit) |

4. Construction of Function-Level Execution Flow Graph (FEFG)

| Algorithm 5: Function-Level Execution Flow Graph Construction Algorithm |
|---|
| Input: G_ast-unite = (V, E, root), G_pdg = (V, E, entry, exit) |
| Output: G_fefg = (V, E, entry, exit) |
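A full FEFG combines the AST and the PDG, which is beyond a short example. As a much cruder stand-in, the sketch below extracts only caller-to-callee edges from an AST. It uses Python's standard `ast` module on Python source purely for illustration; the target language of the measured programs is not stated in this excerpt, and the function name and sample source are hypothetical.

```python
import ast


def function_call_edges(source):
    """Map each function defined in `source` to the sorted names of functions it calls.

    Only direct calls of the form name(...) are recorded; method calls and
    calls through variables are ignored in this sketch.
    """
    tree = ast.parse(source)
    edges = {}
    for node in ast.walk(tree):
        if isinstance(node, ast.FunctionDef):
            callees = {
                call.func.id
                for call in ast.walk(node)
                if isinstance(call, ast.Call) and isinstance(call.func, ast.Name)
            }
            edges[node.name] = sorted(callees)
    return edges


SAMPLE = '''
def read_sensor():
    return check(1)

def check(v):
    return v

def main():
    value = read_sensor()
    send(value)
'''
call_edges = function_call_edges(SAMPLE)
```

The result lists edges only; it carries no ordering or dependency information, which is what the CFG and PDG stages would contribute.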

3.1.2. Construction of Benchmark Library

| Algorithm 6: Measurement Algorithm for Function Call Sequences Based on Sliding Windows |
|---|
| Input: func_call_seq |
| Output: hashValue |
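A minimal sketch of a sliding window measurement is given below. The source specifies only the input `func_call_seq` and the output `hashValue`; the choice of SHA-256, the window size of 3, the NUL separator, and the helper names are assumptions made for illustration.

```python
import hashlib


def measure_windows(func_call_seq, window_size=3):
    """Hash every window of `window_size` consecutive function names."""
    if window_size <= 0:
        raise ValueError("window_size must be positive")
    hashes = []
    for start in range(len(func_call_seq) - window_size + 1):
        window = func_call_seq[start:start + window_size]
        # Join with a NUL byte so ("ab", "c") and ("a", "bc") hash differently.
        data = "\x00".join(window).encode("utf-8")
        hashes.append(hashlib.sha256(data).hexdigest())
    return hashes


def build_benchmark(trusted_runs, window_size=3):
    """Collect the window hashes of all trusted runs into one set."""
    library = set()
    for run in trusted_runs:
        library.update(measure_windows(run, window_size))
    return library


# Hypothetical trusted runs used only for illustration.
library = build_benchmark([
    ["init", "read", "check", "write", "close"],
    ["init", "read", "check", "close"],
])
```

Storing only fixed-length digests keeps the library small, which fits the lightweight goal stated for the benchmark library; a sequence shorter than the window yields no measurements in this sketch.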

3.1.3. Dynamic Credibility Measurement of Function Call Sequence Based on Sliding Windows

| Algorithm 7: Trust Measurement Evaluation Algorithm for Function Call Sequences Based on Sliding Windows |
|---|
| Input: Benchmark library, real-time measurement value |
| Output: Evaluation result |
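One plausible way to compare real-time measurements with the benchmark library is sketched below. The scoring rule (fraction of windows found in the library), the `threshold` parameter, and the result fields are assumptions; the source states only that the evaluation takes the library and the real-time measurement value as input.

```python
import hashlib


def window_hashes(seq, k):
    """SHA-256 digest of every k-length window (same assumed scheme as the benchmark)."""
    return [
        hashlib.sha256("\x00".join(seq[i:i + k]).encode("utf-8")).hexdigest()
        for i in range(len(seq) - k + 1)
    ]


def evaluate(benchmark, live_seq, k=3, threshold=1.0):
    """Score a live function call sequence against the benchmark set."""
    measured = window_hashes(live_seq, k)
    if not measured:
        # Too short to measure; treated as not trusted in this sketch.
        return {"score": None, "trusted": False, "unknown_windows": []}
    unknown = [i for i, h in enumerate(measured) if h not in benchmark]
    score = 1.0 - len(unknown) / len(measured)
    return {"score": score, "trusted": score >= threshold, "unknown_windows": unknown}


# Hypothetical data: one trusted run and one run with an unexpected call.
trusted_run = ["init", "read", "check", "write", "close"]
benchmark = set(window_hashes(trusted_run, 3))
result = evaluate(benchmark, ["init", "read", "check", "exec", "close"])
```

The indices in `unknown_windows` point at the windows that deviate from the benchmark, which localizes the anomaly to a few consecutive calls.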

3.2. Trust Measurement Evaluation for System Call Sequences

3.2.1. Maximum Entropy Model

3.2.2. System Call Credibility Measurement Model Based on Maximum Entropy

- (1) Feature extraction. Select features appropriate to the task and its requirements, such as call frequency and call time, and convert the original data into feature vectors.

- (2) Model training. Train the maximum entropy model on the feature vectors to obtain a model with high accuracy and strong generalization ability. Training yields the probability distribution.

- (3) Prediction and evaluation. Use the extracted feature vectors to predict the results for unknown data, calculate its probability distribution, and evaluate the safety of the program.
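The feature extraction step can be sketched as follows, using call frequency as the only feature. The fixed vocabulary, the function name, and the sample data are assumptions for illustration; call time and other features mentioned above are not modeled.

```python
from collections import Counter


def frequency_features(syscalls, vocabulary):
    """Convert a system call sequence into relative call frequencies.

    vocabulary: ordered list of system call names defining the vector layout
    (an assumed, fixed list; calls outside it are ignored).
    """
    total = len(syscalls)
    if total == 0:
        return [0.0] * len(vocabulary)
    counts = Counter(syscalls)
    return [counts[name] / total for name in vocabulary]


# Hypothetical vocabulary and interval.
VOCAB = ["open", "read", "write", "close"]
vector = frequency_features(["open", "read", "read", "close"], VOCAB)
```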

- (1)

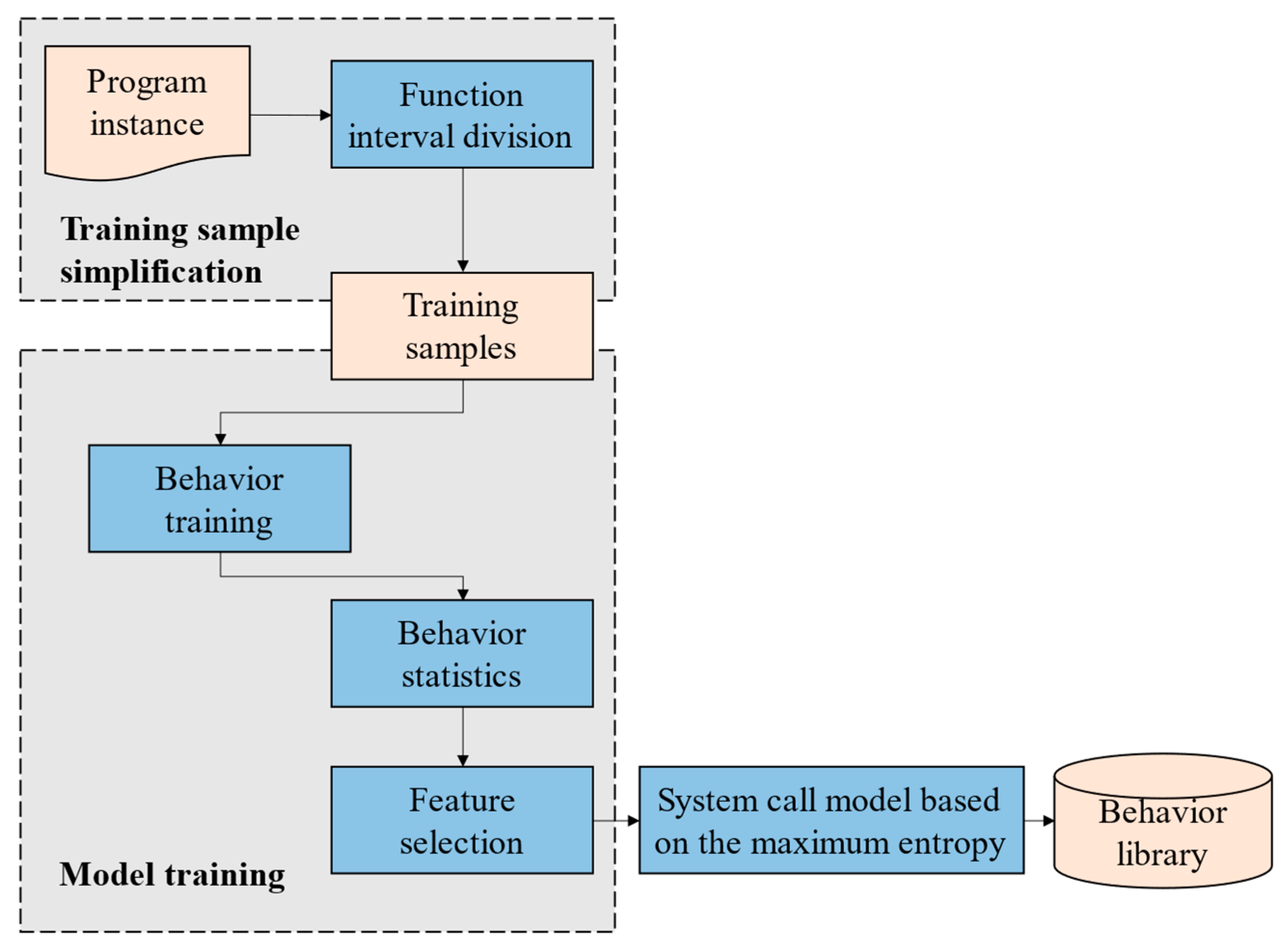

- Training sample simplification: It mainly simplifies the training data, divides the behavior intervals by function, obtains the key system calls and system calls with high security in the interval, and obtains the training samples of the maximum entropy model.

- (2)

- Model training: The training system trains the training samples, counts the behavior probability, extracts the characteristics of the maximum entropy model, establishes the system call model in the interval based on the maximum entropy, and stores it in the behavior database.

| Algorithm 8: Construction Algorithm for the Maximum Entropy System Call Model |
|---|
| Input: training dataset, feature functions |
| Output: the maximum entropy system call model P(y\|x) |
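The sketch below trains a small conditional maximum entropy model P(y|x) in pure Python. Several choices are assumptions rather than the paper's method: the context x is taken to be the previous system call and y the next one, the feature functions are simple bigram and unigram indicators, and the weights are fitted by plain gradient ascent with L2 regularization instead of an iterative scaling procedure.

```python
import math
from collections import defaultdict


def active_features(x, y):
    """Indicator features f_j(x, y) that fire for this (context, label) pair."""
    return [("bigram", x, y), ("unigram", y)]


def predict(weights, labels, x):
    """Return P(y | x) for every label as a dict."""
    scores = {y: sum(weights.get(f, 0.0) for f in active_features(x, y)) for y in labels}
    top = max(scores.values())  # subtract the maximum for numerical stability
    exps = {y: math.exp(s - top) for y, s in scores.items()}
    z = sum(exps.values())      # normalization factor Z(x)
    return {y: v / z for y, v in exps.items()}


def train_maxent(samples, epochs=200, lr=0.5, l2=0.01):
    """Fit feature weights so that model expectations approach empirical ones."""
    labels = sorted({y for _, y in samples})
    n = len(samples)
    empirical = defaultdict(float)
    for x, y in samples:
        for f in active_features(x, y):
            empirical[f] += 1.0 / n
    weights = defaultdict(float)
    for _ in range(epochs):
        expected = defaultdict(float)
        for x, _ in samples:
            for y, p in predict(weights, labels, x).items():
                for f in active_features(x, y):
                    expected[f] += p / n
        for f in set(empirical) | set(expected):
            # Gradient of the regularized log-likelihood for weight w_f.
            weights[f] += lr * (empirical[f] - expected[f] - l2 * weights[f])
    return dict(weights), labels


# Hypothetical trusted system call sequences within one function interval.
trusted = [["open", "read", "read", "close"], ["open", "read", "write", "close"]]
samples = [(s[i], s[i + 1]) for s in trusted for i in range(len(s) - 1)]
weights, labels = train_maxent(samples)
probs_after_open = predict(weights, labels, "open")
```

Because `open` is always followed by `read` in the sample data, the trained model should assign `read` the largest probability in that context.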

| Algorithm 9: Maximum Entropy-Based System Call Credibility Measurement Evaluation Algorithm |
|---|
| Input: the feature set F = { f1(X), f2(X), …, fn(X)}, the new function interval system call sequence F′ |
| Output: the credibility measure score of F′ |
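One way to turn model probabilities into a credibility score is sketched below. It assumes a model is available as a table of conditional probabilities P(next | previous), and it scores a sequence by the geometric mean of its transition probabilities. The scoring rule, the `floor` value for unseen transitions, and the sample table are assumptions, not the paper's Algorithm 9.

```python
import math


def credibility_score(model, syscalls, floor=1e-6):
    """Geometric mean of P(next | previous) over a system call sequence.

    model: dict mapping previous call -> dict of next call -> probability
    (a hypothetical stand-in for a trained maximum entropy model).
    Returns None when the sequence has no transitions to score.
    """
    pairs = list(zip(syscalls, syscalls[1:]))
    if not pairs:
        return None
    log_sum = 0.0
    for prev, nxt in pairs:
        p = model.get(prev, {}).get(nxt, floor)
        log_sum += math.log(max(p, floor))  # floor avoids log(0) for unseen transitions
    return math.exp(log_sum / len(pairs))


# Hypothetical probability table used only for illustration.
MODEL = {
    "open": {"read": 0.9, "close": 0.1},
    "read": {"read": 0.3, "write": 0.3, "close": 0.4},
    "write": {"close": 1.0},
}
normal_score = credibility_score(MODEL, ["open", "read", "write", "close"])
suspicious_score = credibility_score(MODEL, ["open", "read", "execve", "close"])
```

A sequence containing transitions the model has never seen is pulled sharply toward zero by the floor value, so a fixed threshold on the score separates the two sample sequences.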

4. Experimental Verification

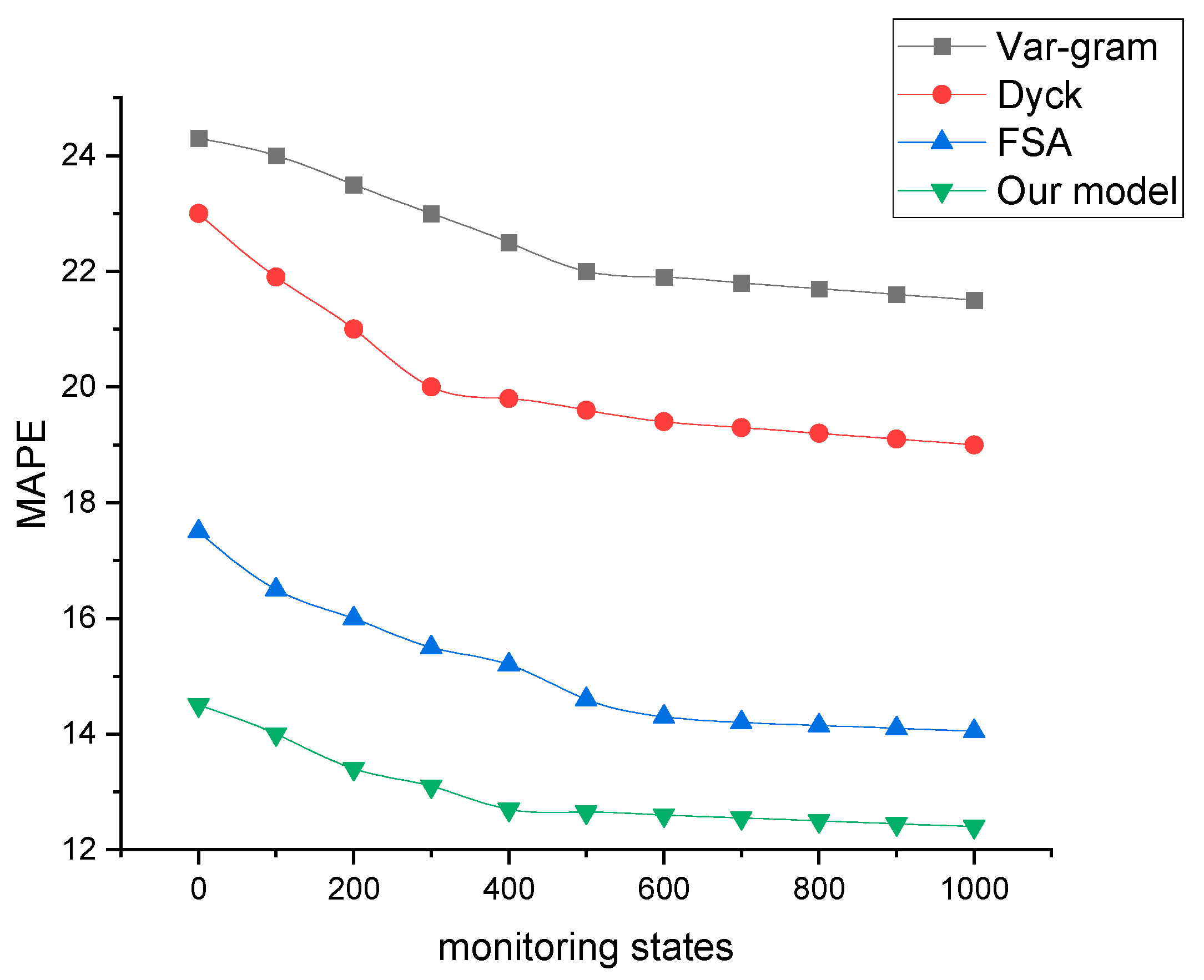

4.1. Evaluation of Model Effectiveness

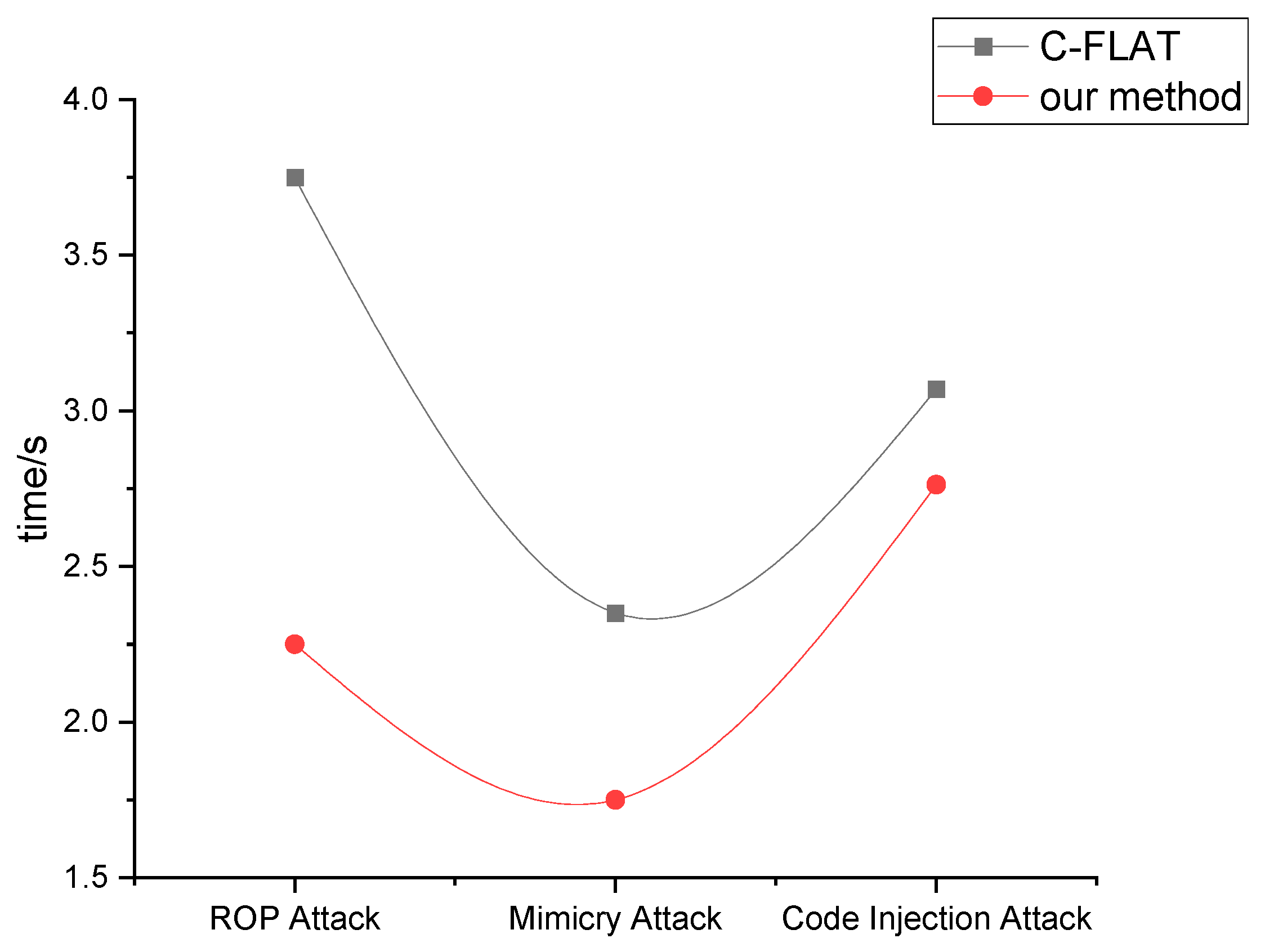

4.2. Comparative Experiment on Attack Detection

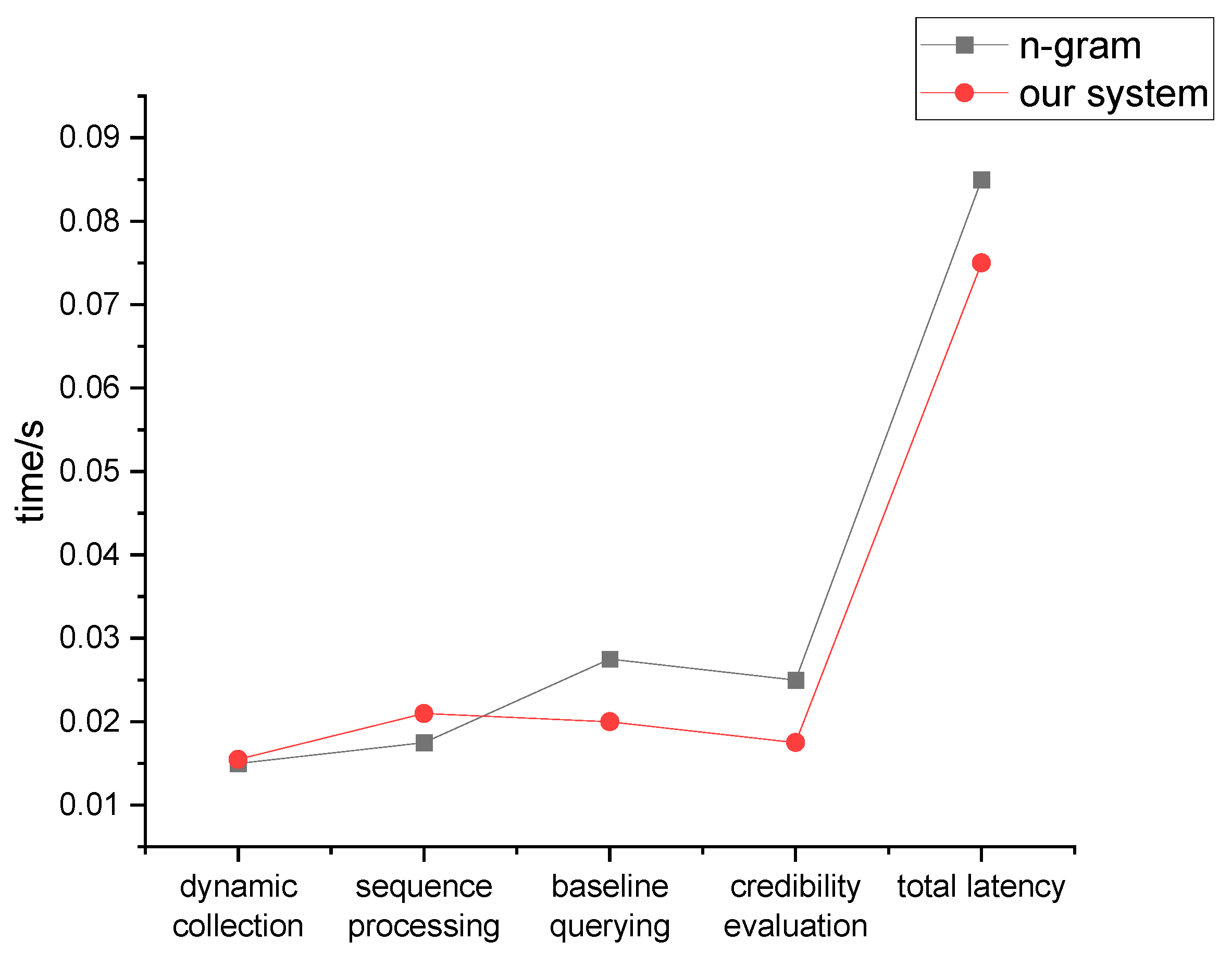

4.3. Evaluation of Model Performance

4.4. Discussion

5. Conclusions

Author Contributions

Funding

Data Availability Statement

Conflicts of Interest


| Variables and Symbols | Meanings |
|---|---|
| D | training dataset |
| x_i | input vector of the i-th sample |
| y_i | true output of the i-th sample |
| f_j(x, y) | feature function that satisfies the constraint condition h_j(y) when the input is x and the classification is y |
| h_j(y) | constraint function giving the value of the j-th feature when the input x is classified as y |
| w_j | weight of the j-th feature |
| φ(x, y) | feature vector of input vector x and classification y |
| P(y\|x) | conditional probability of being classified as y given input x |
| Z(x) | normalization factor that ensures the probability values sum to 1 |
| E_p(h_j) | expected value of h_j(y) under the current model |
| E_s(h_j) | expected value of h_j(y) over the training dataset D |
| λ | regularization parameter used to prevent overfitting |
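For orientation, the symbols in the table above fit the standard textbook form of a conditional maximum entropy model. The block below is a sketch of that general form under the assumption that a pair (x, y) is scored by the weighted sum of its feature functions; it is not a reconstruction of the paper's own equations.

```latex
P(y \mid x) = \frac{1}{Z(x)} \exp\Big( \sum_{j} w_j \, f_j(x, y) \Big),
\qquad
Z(x) = \sum_{y'} \exp\Big( \sum_{j} w_j \, f_j(x, y') \Big)
```

In this form, training adjusts the weights until the model expectation of each feature matches its expectation over the training data, that is, E_p(h_j) = E_s(h_j) in the table's notation, with λ controlling the strength of regularization.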


© 2024 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (https://creativecommons.org/licenses/by/4.0/).

Share and Cite

Wang, S.; Hu, A.; Li, T.; Lin, S. Program Behavior Dynamic Trust Measurement and Evaluation Based on Data Analysis. Symmetry 2024, 16, 249. https://doi.org/10.3390/sym16020249
