On the Alternative SOR-like Iteration Method for Solving Absolute Value Equations

Abstract

1. Introduction

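| Algorithm 1 ([42]) (The SOR-like iteration method) |
|---|
| Let the matrix $A$ be nonsingular. Given two initial guesses $x^{0}, y^{0} \in \mathbb{R}^{n}$, for $k = 0, 1, 2, \ldots$ until the generated sequence $\{(x^{k}, y^{k})\}$ is convergent, compute $x^{k+1} = (1-\omega)x^{k} + \omega A^{-1}(y^{k} + b)$ and $y^{k+1} = (1-\omega)y^{k} + \omega \lvert x^{k+1} \rvert$, where $\omega > 0$ is the iteration parameter. |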
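The following is a minimal sketch of the SOR-like iteration of Algorithm 1, not the authors' implementation. It assumes the absolute value equation has the standard form $Ax - \lvert x \rvert = b$ and uses the update of [42] as written in the code comments. The function name `sor_like`, the zero initial guesses, and the relative-residual stopping test are illustrative assumptions, since the stopping rule is not stated in the text above.

```python
import numpy as np


def sor_like(A, b, omega=1.0, tol=1e-10, max_iter=500):
    """Sketch of the SOR-like iteration for A x - |x| = b (assumed form).

    Update (as in [42]):
        x_{k+1} = (1 - omega) * x_k + omega * A^{-1} (y_k + b)
        y_{k+1} = (1 - omega) * y_k + omega * |x_{k+1}|
    Returns the last iterate, the number of iterations, and the residual norm.
    """
    A = np.asarray(A, dtype=float)
    b = np.asarray(b, dtype=float)
    x = np.zeros_like(b)  # initial guess x^0 (assumption: zero vector)
    y = np.zeros_like(b)  # initial guess y^0 (assumption: zero vector)
    res = np.inf
    for k in range(1, max_iter + 1):
        # Solve A z = y + b instead of forming A^{-1} explicitly.
        x = (1.0 - omega) * x + omega * np.linalg.solve(A, y + b)
        y = (1.0 - omega) * y + omega * np.abs(x)
        # Assumed stopping test: relative residual of the original equation.
        res = np.linalg.norm(A @ x - np.abs(x) - b)
        if res <= tol * np.linalg.norm(b):
            return x, k, res
    return x, max_iter, res
```

For a fixed matrix $A$, a practical implementation would factorize $A$ once and reuse the factors in every iteration; the repeated `np.linalg.solve` call here only keeps the sketch short.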

2. Preliminaries

- ;

- If , then ;

- Assume that P is a nonnegative matrix. If , then .

3. An Alternative SOR-like Iteration Method

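| Algorithm 2 (The ASOR-like iteration method) |
|---|
| Let the matrix $A$ be nonsingular. Given two initial guesses $x^{0}, y^{0} \in \mathbb{R}^{n}$, for $k = 0, 1, 2, \ldots$ until the generated sequence $\{(x^{k}, y^{k})\}$ is convergent, compute the next iterate by the iteration scheme (9). |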

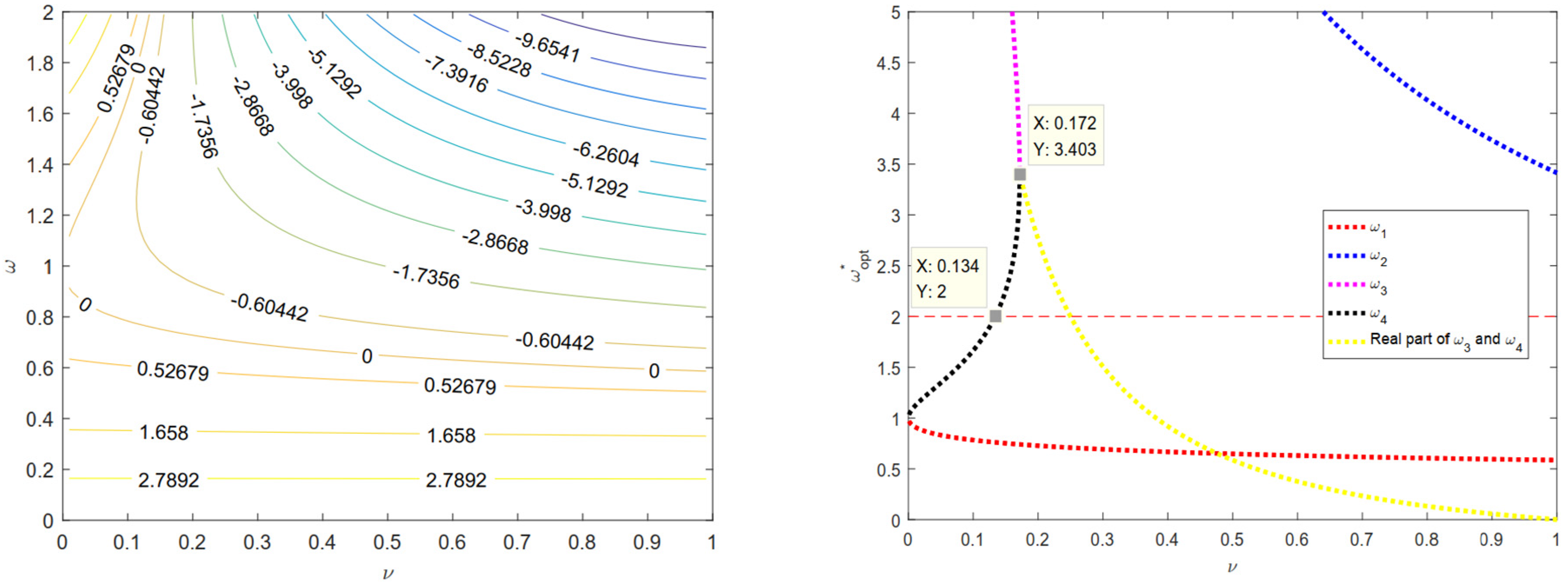

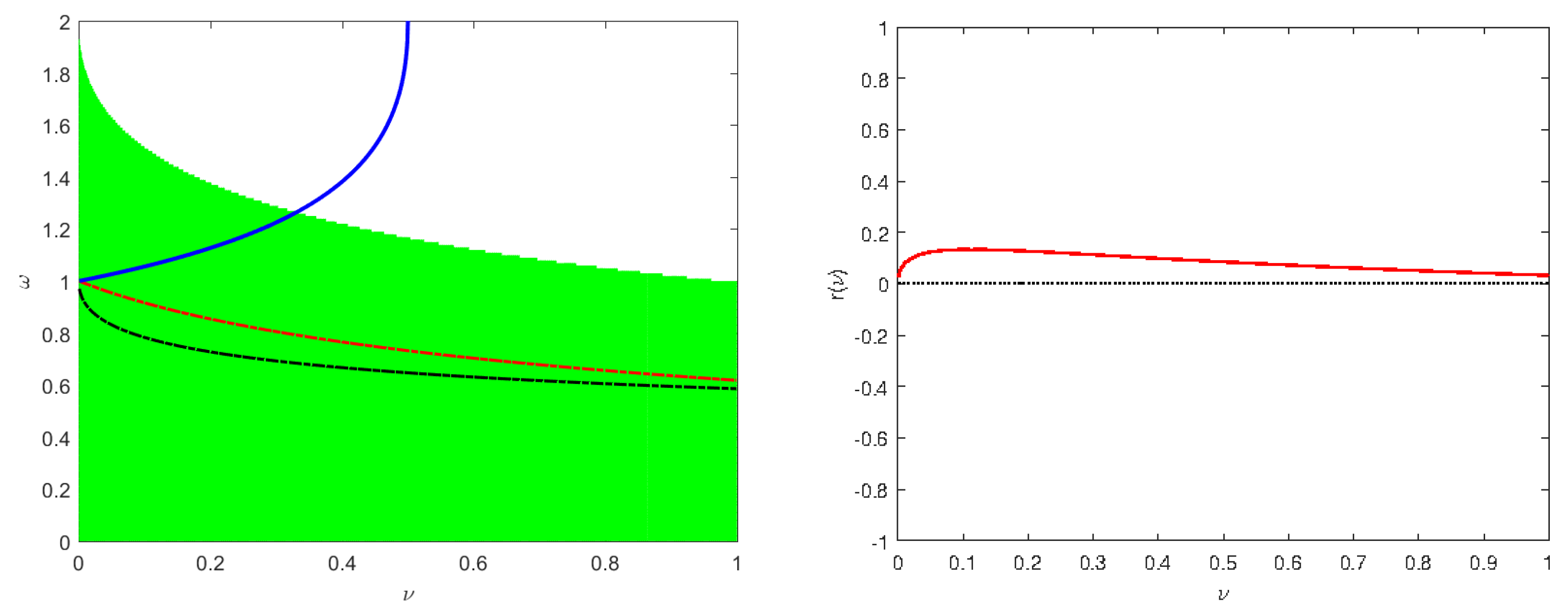

4. Optimal Parameter for the ASOR-like Iteration Method

5. Numerical Results

- ASOR-like-exp method: its iteration scheme is (9). Its parameter is selected in the same way as for the SOR-like-exp method.

- SOR-like-opt method [44]: its iteration scheme is the same as that of the SOR-like-exp method, with the parameter set to the theoretical optimal value given by (4). The required quantity can be calculated by the classical bisection method, terminated by a tolerance test or by a test on the updated ends of the interval; see [44] for the specific operations.

- ASOR-like-opt method: its iteration scheme is the same as that of the ASOR-like-exp method, and its parameter is calculated in accordance with (31).

- ASOR-like-aopt method: its iteration scheme is the same as that of the ASOR-like-exp method, and its parameter is calculated in accordance with (32).
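The classical bisection method mentioned for the SOR-like-opt method can be sketched generically as follows. The function whose root is sought is defined in [44] and is not reproduced here, so `f` is a hypothetical placeholder. The two stopping tests (a small function value or a short interval) and the tolerance `tol` are assumptions made for illustration, not the values used in the experiments.

```python
def bisect(f, lo, hi, tol=1e-10, max_iter=200):
    """Classical bisection for a root of f on [lo, hi].

    Assumes f is continuous and f(lo), f(hi) have opposite signs.
    Stops when |f(mid)| <= tol or the interval width is <= tol
    (both tests are illustrative assumptions).
    """
    f_lo = f(lo)
    f_hi = f(hi)
    if f_lo == 0.0:
        return lo
    if f_hi == 0.0:
        return hi
    if f_lo * f_hi > 0.0:
        raise ValueError("f(lo) and f(hi) must have opposite signs")
    for _ in range(max_iter):
        mid = 0.5 * (lo + hi)
        f_mid = f(mid)
        if abs(f_mid) <= tol or (hi - lo) <= tol:
            return mid
        if f_lo * f_mid < 0.0:
            hi = mid  # root lies in the left half
        else:
            lo, f_lo = mid, f_mid  # root lies in the right half
    return 0.5 * (lo + hi)
```

For example, `bisect(lambda t: t * t - 2.0, 1.0, 2.0)` approximates the square root of 2; in the setting of [44], `f` would be replaced by the scalar equation that characterizes the optimal parameter.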

6. Conclusions

Author Contributions

Funding

Data Availability Statement

Acknowledgments

Conflicts of Interest

References

1. Rohn, J. A theorem of the alternatives for the equation Ax + B|x| = b. Linear Multilinear Algebra 2004, 52, 421–426.

2. Mangasarian, O.L. Absolute value programming. Comput. Optim. Appl. 2007, 36, 43–53.

3. Mangasarian, O.L. Absolute value equation solution via concave minimization. Optim. Lett. 2019, 1, 3–8.

4. Mangasarian, O.L. A generalized Newton method for absolute value equations. Optim. Lett. 2009, 3, 101–108.

5. Bai, Z.-Z. Modulus-based matrix splitting iteration methods for linear complementarity problems. Numer. Linear Algebra Appl. 2010, 17, 917–933.

6. Cottle, R.W.; Pang, J.-S.; Stone, R.E. The Linear Complementarity Problem; Academic Press: New York, NY, USA, 1992.

7. Hu, S.-L.; Huang, Z.-H.; Zhang, Q. A generalized Newton method for absolute value equations associated with second order cones. J. Comput. Appl. Math. 2019, 39, 1490–1501.

8. Miao, X.-H.; Yang, J.-T.; Saheya, B.; Chen, J.-S. A smoothing Newton method for absolute value equation associated with second-order cone. Appl. Numer. Math. 2017, 120, 82–96.

9. Prokopyev, O. On equivalent reformulations for absolute value equations. Comput. Optim. Appl. 2009, 44, 363–372.

10. Mezzadri, F. On the solution of general absolute value equations. Appl. Math. Lett. 2020, 107, 106462.

11. Mangasarian, O.L.; Meyer, R.R. Absolute value equations. Linear Algebra Appl. 2006, 419, 359–367.

12. Rohn, J. On unique solvability of the absolute value equation. Optim. Lett. 2009, 3, 603–606.

13. Wu, S.-L.; Li, C.-X. A note on unique solvability of the absolute value equation. Optim. Lett. 2020, 14, 1957–1960.

14. Wu, S.-L.; Shen, S.-Q. On the unique solution of the generalized absolute value equation. Optim. Lett. 2021, 15, 2017–2024.

15. Cao, Y.; Shi, Q.; Zhu, S.-L. A relaxed generalized Newton iteration method for generalized absolute value equations. AIMS Math. 2021, 6, 1258–1275.

16. Cruz, J.Y.B.; Ferreira, O.P.; Prudente, L.F. On the global convergence of the inexact semi-smooth Newton method for absolute value equation. Comput. Optim. Appl. 2016, 65, 93–108.

17. Feng, J.; Liu, S. A new two-step iterative method for solving absolute value equations. J. Inequal. Appl. 2019, 39, 1–8.

18. Huang, B.-H.; Ma, C.-F. Convergent conditions of the generalized Newton method for absolute value equation over second order cones. Appl. Math. Lett. 2019, 92, 151–157.

19. Lian, Y.-Y.; Li, C.-X.; Wu, S.-L. Stronger convergent results of the generalized Newton method for the generalized absolute value equations. J. Comput. Appl. Math. 2018, 2018, 221–226.

20. Li, C.-X. A modified generalized Newton method for absolute value equations. J. Optim. Theory Appl. 2016, 170, 1055–1059.

21. Shi, L.; Iqbal, J.; Arif, M.; Khan, A. A two-step Newton-type method for solving system of absolute value equations. Math. Probl. Eng. 2020, 2020, 2798080.

22. Wang, A.; Cao, Y.; Chen, J.-X. Modified Newton-type iteration methods for generalized absolute value equations. J. Optim. Theory Appl. 2019, 2020, 216–230.

23. Wu, S.-L.; Li, C.-X.; Zhou, H.-Y. Newton-based matrix splitting method for generalized absolute value equation. J. Comput. Appl. Math. 2021, 394, 113578.

24. Salkuyeh, D.K. The Picard–HSS iteration method for absolute value equations. Optim. Lett. 2014, 8, 2191–2202.

25. Li, C.-X. A preconditioned AOR iterative method for the absolute value equations. Int. J. Comput. Methods 2017, 14, 1750016.

26. Edalatpour, V.; Hezari, D.; Salkuyeh, D.K. A generalization of the Gauss–Seidel iteration method for solving absolute value equations. Appl. Math. Comput. 2017, 293, 156–167.

27. Huang, B.-H.; Ma, C.-F. The Modulus-based Levenberg-Marquardt method for solving linear complementarity problem. Numer. Math. Theor. Meth. Appl. 2019, 12, 154–168.

28. Iqbal, J.; Iqbal, A.; Arif, M. Levenberg-Marquardt method for solving systems of absolute value equations. J. Comput. Appl. Math. 2015, 282, 134–138.

29. Chen, C.-R.; Yu, D.-M.; Han, D.-R. Exact and inexact Douglas-Rachford splitting methods for solving large-scale sparse absolute value equations. IMA J. Numer. Anal. 2022. online first.

30. Chen, C.-R.; Yu, D.-M.; Han, D.-R. An inverse-free dynamical system for solving the absolute value equations. Appl. Numer. Math. 2021, 168, 170–181.

31. Huang, X.-J.; Cui, B.-T. Neural network-based method for solving absolute value equations. ICIC Express Lett. 2017, 11, 853–861.

32. Mansoori, A.; Eshaghnezhad, M.; Effati, S. An efficient neural network model for solving the absolute value equations. IEEE Trans. Circuits Syst. II 2017, 65, 391–395.

33. Mansoori, A.; Erfanian, M. A dynamic model to solve the absolute value equations. J. Comput. Appl. Math. 2019, 333, 28–35.

34. Saheya, B.; Nguyen, C.-T.; Chen, J.-S. Neural network based on systematically generated smoothing functions for absolute value equation. J. Appl. Math. Comput. 2019, 61, 533–558.

35. Yu, D.-M.; Chen, C.-R.; Yang, Y.-N.; Han, D.-R. An inertial inverse-free dynamical system for solving absolute value equations. J. Ind. Manag. Optim. 2023, 19, 2549–2559.

36. Yu, Z.-S.; Li, L.; Yuan, Y. A modified multivariate spectral gradient algorithm for solving absolute value equations. Appl. Math. Lett. 2021, 121, 107461.

37. Li, Y.; Du, S. Modified HS conjugate gradient method for solving generalized absolute value equations. J. Inequal. Appl. 2019, 68, 68.

38. Rohn, J.; Hooshyarbakhsh, V.; Farhadsefat, R. An iterative method for solving absolute value equations and sufficient conditions for unique solvability. Optim. Lett. 2014, 8, 35–44.

39. Saheya, B.; Yu, C.-H.; Chen, J.-S. Numerical comparisons based on four smoothing functions for absolute value equation. J. Appl. Math. Comput. 2018, 56, 131–149.

40. Zamani, M.; Hladík, M. A new concave minimization algorithm for the absolute value equation solution. Optim. Lett. 2021, 15, 2241–2254.

41. Zamani, M.; Hladík, M. Error bounds and a condition number for the absolute value equations. Math. Program. 2022, 1–29.

42. Ke, Y.-F.; Ma, C.-F. SOR-like iteration method for solving absolute value equations. Appl. Math. Comput. 2017, 311, 195–202.

43. Guo, P.; Wu, S.-L.; Li, C.-X. On the SOR-like iteration method for solving absolute value equations. Appl. Math. Lett. 2019, 97, 107–113.

44. Chen, C.-R.; Yu, D.-M.; Han, D.-R. Optimal parameter for the SOR-like iteration method for solving the system of absolute value equations. arXiv 2020, arXiv:2001.05781.

45. Ke, Y.-F. The new iteration algorithm for absolute value equation. Appl. Math. Lett. 2020, 99, 105990.

46. Yu, D.-M.; Chen, C.-R.; Han, D.-R. A modified fixed point iteration method for solving the system of absolute value equations. Optimization 2022, 71, 449–461.

47. Dong, X.; Shao, X.-H.; Shen, H.-L. A new SOR-like method for solving absolute value equations. Appl. Numer. Math. 2020, 156, 410–421.

48. Horn, R.A.; Johnson, C.R. Matrix Analysis; Cambridge University Press: Cambridge, UK, 1990.

49. Berman, A.; Plemmons, R. Nonnegative Matrices in the Mathematical Sciences; Academic Press: New York, NY, USA, 1979.

50. Young, D.M. Iterative Solution for Large Linear Systems; Academic Press: New York, NY, USA, 1971.

51. Shams, N.N.; Jahromi, A.F.; Beik, F.P.A. Iterative schemes induced by block splittings for solving absolute value equations. Filomat 2020, 34, 4171–4188.

| Method | n | 256 | 512 | 1024 | 2048 | 4096 |
|---|---|---|---|---|---|---|
| SOR-like-exp | | | | | | |
| | IT | 15 | 34 | 26 | 29 | 25 |
| | CPU | | | | | |
| | RES | | | | | |
| ASOR-like-exp | | | | | | |
| | IT | 15 | 34 | 26 | 29 | 25 |
| | CPU | | | | | |
| | RES | | | | | |
| SOR-like-opt | | | | | | |
| | IT | 18 | 78 | 46 | 44 | 41 |
| | CPU | | | | | |
| | RES | | | | | |
| ASOR-like-opt | | | | | | |
| | IT | 36 | 86 | 59 | 67 | 62 |
| | CPU | | | | | |
| | RES | | | | | |
| SOR-like-aopt | | | | | | |
| | IT | 27 | 78 | 51 | 56 | 52 |
| | CPU | | | | | |
| | RES | | | | | |
| ASOR-like-aopt | | | | | | |
| | IT | 28 | 79 | 52 | 56 | 52 |
| | CPU | | | | | |
| | RES | | | | | |

| Method | n | 256 | 512 | 1024 | 2048 | 4096 |
|---|---|---|---|---|---|---|
| SOR-like-exp | | | | | | |
| | IT | 11 | 13 | 10 | 10 | 9 |
| | CPU | | | | | |
| | RES | | | | | |
| ASOR-like-exp | | | | | | |
| | IT | 11 | 13 | 10 | 10 | 9 |
| | CPU | | | | | |
| | RES | | | | | |
| SOR-like-opt | | | | | | |
| | IT | 19 | 30 | 23 | 17 | 23 |
| | CPU | | | | | |
| | RES | | | | | |
| ASOR-like-opt | | | | | | |
| | IT | 32 | 37 | 33 | 31 | 34 |
| | CPU | | | | | |
| | RES | | | | | |
| SOR-like-aopt | | | | | | |
| | IT | 25 | 32 | 27 | 24 | 28 |
| | CPU | | | | | |
| | RES | | | | | |
| ASOR-like-aopt | | | | | | |
| | IT | 26 | 33 | 29 | 25 | 29 |
| | CPU | | | | | |
| | RES | | | | | |

| Method | n | 256 | 512 | 1024 | 2048 | 4096 |
|---|---|---|---|---|---|---|
| SOR-like-exp | 1 | | | | | |
| | IT | 6 | 6 | 6 | 8 | 7 |
| | CPU | | | | | |
| | RES | | | | | |
| ASOR-like-exp | 1 | | | | | |
| | IT | 6 | 6 | 6 | 10 | 7 |
| | CPU | | | | | |
| | RES | | | | | |
| SOR-like-opt | | | | | | |
| | IT | 10 | 18 | 9 | 29 | 28 |
| | CPU | | | | | |
| | RES | | | | | |
| ASOR-like-opt | | | | | | |
| | IT | 25 | 29 | 25 | 34 | 34 |
| | CPU | | | | | |
| | RES | | | | | |
| SOR-like-aopt | | | | | | |
| | IT | 18 | 22 | 17 | 30 | 29 |
| | CPU | | | | | |
| | RES | | | | | |
| ASOR-like-aopt | | | | | | |
| | IT | 19 | 24 | 18 | 31 | 31 |
| | CPU | | | | | |
| | RES | | | | | |

| Method | q | 0 | 1 | 10 | 100 | 1000 |
|---|---|---|---|---|---|---|
| SOR-like-exp | 1 | | | | | |
| | IT | 14 | 14 | 13 | 10 | 7 |
| | CPU | | | | | |
| | RES | | | | | |
| ASOR-like-exp | 1 | | | | | |
| | IT | 14 | 14 | 13 | 10 | 7 |
| | CPU | | | | | |
| | RES | | | | | |
| SOR-like-opt | 1 | | | | | |
| | IT | 27 | 26 | 19 | 10 | 7 |
| | CPU | | | | | |
| | RES | | | | | |
| ASOR-like-opt | | | | | | |
| | IT | 39 | 38 | 35 | 26 | 23 |
| | CPU | | | | | |
| | RES | | | | | |
| SOR-like-aopt | | | | | | |
| | IT | 32 | 32 | 27 | 18 | 16 |
| | CPU | | | | | |
| | RES | | | | | |
| ASOR-like-aopt | | | | | | |
| | IT | 33 | 32 | 28 | 19 | 16 |
| | CPU | | | | | |
| | RES | | | | | |

| Method | q | 0 | 1 | 10 |
|---|---|---|---|---|
| SOR-like-exp | | | | |
| | IT | 12 | 12 | 12 |
| | CPU | | | |
| | RES | | | |
| ASOR-like-exp | | | | |
| | IT | 12 | 12 | 12 |
| | CPU | | | |
| | RES | | | |
| SOR-like-opt | | | | |
| | IT | 17 | 17 | 14 |
| | CPU | | | |
| | RES | | | |
| ASOR-like-opt | | | | |
| | IT | 33 | 33 | 30 |
| | CPU | | | |
| | RES | | | |
| SOR-like-aopt | | | | |
| | IT | 26 | 25 | 23 |
| | CPU | | | |
| | RES | | | |
| ASOR-like-aopt | | | | |
| | IT | 26 | 26 | 23 |
| | CPU | | | |
| | RES | | | |

| Method | | | | | | |
|---|---|---|---|---|---|---|
| SOR-like-exp | | | | | | |
| | IT | 15 | 15 | 17 | 10 | 10 |
| | CPU | | | | | |
| | RES | | | | | |
| ASOR-like-exp | | | | | | |
| | IT | 15 | 16 | 17 | 10 | 10 |
| | CPU | | | | | |
| | RES | | | | | |
| SOR-like-opt | | | | | | |
| | IT | 21 | 22 | 29 | 12 | 12 |
| | CPU | | | | | |
| | RES | | | | | |
| ASOR-like-opt | | | | | | |
| | IT | 33 | 33 | 39 | 23 | 23 |
| | CPU | | | | | |
| | RES | | | | | |


© 2023 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (https://creativecommons.org/licenses/by/4.0/).


Zhang, Y.; Yu, D.; Yuan, Y. On the Alternative SOR-like Iteration Method for Solving Absolute Value Equations. Symmetry 2023, 15, 589. https://doi.org/10.3390/sym15030589
