Abstract

Symmetries play a vital role in the study of physical systems. For example, microworld and quantum physics problems are modeled on the principles of symmetry. These problems are formulated as equations on suitable abstract spaces, and most such studies reduce to solving nonlinear equations in these spaces iteratively. In particular, the convergence of a sixth-order Cordero-type iterative method for solving nonlinear equations was previously studied using Taylor expansion and assumptions on the derivatives of order up to six. In this study, we obtain sixth-order convergence for the Cordero-type method using assumptions only on the first derivative. Moreover, we modify Cordero’s method and obtain an eighth-order iterative scheme. Further, we consider analogous iterative methods to solve an ill-posed problem in a Hilbert space setting.

1. Introduction

As mentioned in the abstract, the main goal is to obtain the convergence order of the method studied in [1] without using assumptions on the higher-order derivatives. Throughout this paper, $X$ and $Y$ denote Banach spaces and $D \subseteq X$ is a convex set. We are interested in approximating the solution of the equation

$$F(x) = 0, \qquad (1)$$

where $F : D \subseteq X \to Y$ is a nonlinear operator that is Fréchet differentiable. A considerable number of nonlinear problems of the form (1) that arise in physics, chemistry, biology, finance, and mathematics are modeled on principles of symmetry. In general, the classical Newton method of second order, defined by

$$x_{n+1} = x_n - F'(x_n)^{-1} F(x_n), \quad n = 0, 1, 2, \ldots, \qquad (2)$$

where $x_0 \in D$ is the initial point, is considered to be the most efficient iterative method to solve Equation (1). Cordero et al. [2] modified the classical Newton method by employing Adomian polynomial decomposition and obtained a fourth-order iterative scheme. The iterative scheme in [2] is defined by

where This new fourth-order Cordero method has better stability than the classical Newton method with higher-order convergence.
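As a point of reference for the higher-order schemes discussed here, the following is a minimal sketch of the classical Newton iteration in its standard form $x_{n+1} = x_n - F'(x_n)^{-1}F(x_n)$ for a finite-dimensional system. The test system, the function names `F` and `J`, the tolerance, and the starting point are illustrative assumptions and are not taken from the paper.

```python
import numpy as np

def newton(F, J, x0, tol=1e-12, max_iter=50):
    """Classical Newton iteration x_{n+1} = x_n - J(x_n)^{-1} F(x_n).

    Returns the last iterate and the number of iterations performed.
    """
    x = np.asarray(x0, dtype=float)
    for n in range(max_iter):
        # Solve J(x) * step = F(x) instead of forming the inverse explicitly.
        step = np.linalg.solve(J(x), F(x))
        x = x - step
        if np.linalg.norm(step) < tol:
            return x, n + 1
    return x, max_iter

# Hypothetical test system (assumption): x^2 + y^2 = 1 and x = y.
def F(v):
    x, y = v
    return np.array([x**2 + y**2 - 1.0, x - y])

def J(v):
    x, y = v
    return np.array([[2.0 * x, 2.0 * y], [1.0, -1.0]])

root, iters = newton(F, J, [1.0, 0.5])
print(root, iters)
```

The multi-step schemes of Cordero type reuse the same linear solves with additional function evaluations per iteration, which is what raises the order.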

A new technique was introduced by Cordero et al. in [1] to improve the convergence order of an iterative method from $q$ to $q+2$ by combining it with the classical Newton method. Using this technique, the authors extended the fourth-order iterative method (3) to a sixth-order iterative scheme, defined by

However, a disadvantage of the convergence analysis conducted by Cordero et al. [1] is that it relies on Taylor expansion, which involves Fréchet derivatives of the operator up to order six. More generally, the convergence analysis of iterative methods in Banach spaces is commonly conducted using Taylor expansion, which requires assumptions on the higher-order derivatives of the operator involved [1,3,4,5,6,7,8]. If the higher-order derivatives are unbounded, these schemes have limited applicability. For example, consider the equation where is defined by

Since the third-order derivative of this function is unbounded, a convergence analysis that depends on Taylor expansion is not applicable in this example.

In this study, we obtain sixth-order convergence for method (4) without using Taylor expansion. We employ only assumptions on the Fréchet derivative of order one. The novelty of our approach is that the convergence analysis requires neither higher-order Fréchet derivatives of the operator nor Taylor expansion, which enhances the method’s utility. We also modify the last step of method (4) and obtain a new eighth-order iterative scheme, defined by

where

In [9], Parhi and Sharma proved the convergence of the method (4) without using Taylor expansion. However, the authors could not obtain the sixth-order convergence theoretically for method (4).

In this study, we also estimate the radius of convergence of methods (4) and (5) under assumptions on the first-order Fréchet derivative and compute the efficiency indices. We numerically demonstrate that the radius of convergence obtained in our study is larger than the estimates of Parhi and Sharma. We also consider the analogues of these two iterative schemes for solving an ill-posed problem in a Hilbert space.

2. Convergence Analysis of (4) and (5)

We use notations and for some The following definition and assumptions are used to prove our results.

Definition 1.

A sequence $\{x_n\}$ is said to converge to a solution $x^*$ with order $q$ if there exists $C > 0$ such that

$$\|x_{n+1} - x^*\| \le C \|x_n - x^*\|^q.$$

Assumption A1.

such that

Assumption A2.

such that

The local convergence is based on functions which are defined as follows. Let be defined by

and

We observe that and as . So, by intermediate value theorem has a minimal zero Similarly, define by

and

Furthermore, let be the minimal zero of Let

Then, Let and

Theorem 1.

Proof.

(Existence Part) By induction, we shall prove the following inequalities:

For by (4) we have,

So by Assumption A1, we obtain,

By adding and subtracting the term we get,

Therefore, by (7), Assumptions A1 and A2, we obtain

Thus, By the third step of (4) we have,

Note that,

So by (9), we get,

Further, since we have The induction is complete, by replacing by , respectively, in the preceding arguments.

(Uniqueness Part) Let be another solution of the Equation (1) in the set

Let By using Assumption A1, we have

Therefore, by the Banach lemma [10], one can conclude that T is invertible.

Hence follows from □
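For reference, the Banach lemma on invertible operators invoked in the uniqueness part can be stated in its standard form as follows. The symbol $A$ is generic and is not notation from the paper; the choice $A = F'(x^*)^{-1}T$, which is typical in proofs of this kind, is an assumption about how the lemma is applied here.

$$\text{If } A \text{ is a bounded linear operator on } X \text{ with } \|I - A\| < 1, \text{ then } A^{-1} \text{ exists and } \|A^{-1}\| \le \frac{1}{1 - \|I - A\|}.$$

Under that assumed choice of $A$, invertibility of $A$ yields invertibility of $T$, since $F'(x^*)$ is itself invertible.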

Next, we prove the convergence of method (5). Let be defined by

Again, by intermediate value theorem has a minimal zero Let us define

Theorem 2.

Proof.

From (11), we get

The rest of the proof proceeds in the same manner as in Theorem 1. □

3. Estimation of Radius of Convergence and Computational Order

We estimate the radius of convergence and to validate the theoretical results.

Example 1.

Let and be defined by

We have, .

By using Banach Lemma,

So,

Therefore, .

Set we then get,

Furthermore, we have and Using the convergence analysis in [9], we obtain the radius

Example 2.

Let Define function on for by

Then,

Thus, and Furthermore, we get, and the radius of convergence Furthermore, we have and Parhi and Sharma [9] considered this example 2 and obtained the radius .

Remark 2.

We observe that, in the above examples. Furthermore, note that we can obtain a better radius of convergence than that of Parhi and Sharma’s convergence analysis in [9].

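To check that methods (4) and (5) attain the theoretical order of convergence computationally, we calculated the Approximate Computational Order of Convergence (ACOC) (Table 1), which is defined as [1]

$$\text{ACOC} = \frac{\ln\left(\|x_{n+1} - x_n\| / \|x_n - x_{n-1}\|\right)}{\ln\left(\|x_n - x_{n-1}\| / \|x_{n-1} - x_{n-2}\|\right)}.$$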

We considered the following functions and used the stopping criterion

Note that the oscillatory nature of the approximations and slow convergence in the initial stage present the main disadvantages in the computation of ACOC in higher-order iterative methods. In Table 1, we observe that the choice of a suitable initial approximation plays a vital role to achieve the maximum order of convergence (see Equations (12), (13) and (14)). Furthermore, it requires at least four iterations to compute ACOC (see Equation (15)). Specifically in Table 1, we provide ACOC for nonlinear equations using Newton method (NM) (2), Cordero’s fourth-order method (CM) (3), first extension (CM1) (4) and second extension (CM2) (5). Here, , and denote the number of iterations, root, and initial value, respectively.
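The ACOC computation can be sketched as follows, assuming the standard definition based on the differences of four consecutive iterates (which matches the remark that at least four iterations are needed). The helper names `acoc` and `newton_iterates`, the scalar test equation $x^2 - 2 = 0$, and the starting value are illustrative assumptions, and the Newton method is used only because its order is known to be two.

```python
import math

def acoc(iterates):
    """Approximate computational order of convergence from the last four
    scalar iterates x_{n-2}, x_{n-1}, x_n, x_{n+1} (standard definition)."""
    if len(iterates) < 4:
        raise ValueError("ACOC needs at least four iterates")
    x0, x1, x2, x3 = iterates[-4:]
    e1, e2, e3 = abs(x1 - x0), abs(x2 - x1), abs(x3 - x2)
    return math.log(e3 / e2) / math.log(e2 / e1)

def newton_iterates(f, df, x0, steps):
    """Return [x_0, x_1, ..., x_steps] produced by the scalar Newton method."""
    xs = [x0]
    for _ in range(steps):
        xs.append(xs[-1] - f(xs[-1]) / df(xs[-1]))
    return xs

# Hypothetical test equation (assumption): x^2 - 2 = 0, started at 1.5.
xs = newton_iterates(lambda x: x * x - 2.0, lambda x: 2.0 * x, 1.5, 3)
rho = acoc(xs)
print(rho)  # expected to be close to 2 for the Newton method
```

For systems, the absolute values would be replaced by norms. Running too many iterations drives the differences to zero in floating-point arithmetic, so the last few iterates before stagnation should be used.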

Remark 3.

The efficiency index is defined as $EI = q^{1/m}$, where $q$ is the order of convergence and $m$ is the number of function (and derivative) evaluations per iteration [11]. The informational efficiency is defined as $I = q/m$ [12]. The efficiency index and informational efficiency of the fourth-order Cordero method (3) are $4^{1/4} \approx 1.414$ and $1$, respectively, which coincide with those of the Newton method. Whereas for the sixth-order method (4) and for the eighth-order method (5).
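The two measures can be evaluated with a short sketch, assuming the standard definitions $q^{1/m}$ (efficiency index) and $q/m$ (informational efficiency). The evaluation counts used below ($m = 2$ for the Newton method and $m = 4$ for the fourth-order method) are assumptions chosen to be consistent with the statement that the two methods have the same indices.

```python
def efficiency_index(q, m):
    """Efficiency index q**(1/m) for order q and m evaluations per iteration."""
    return q ** (1.0 / m)

def informational_efficiency(q, m):
    """Informational efficiency q/m."""
    return q / m

# Assumed evaluation counts (not stated explicitly in the text).
newton_ei = efficiency_index(2, 2)
fourth_order_ei = efficiency_index(4, 4)
newton_ie = informational_efficiency(2, 2)
fourth_order_ie = informational_efficiency(4, 4)

print(newton_ei, fourth_order_ei)
print(newton_ie, fourth_order_ie)
```

A higher-order method improves on the Newton method by these measures only if the order grows faster than the number of evaluations per iteration.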

4. Application to Ill-Posed Problem

We implemented the analogous iterative methods (2), (3), (4) and (5) to solve the nonlinear ill-posed problem (see [13,14] for details).

Example 3.

Let be a constant. Consider the inverse problem of identifying the distributed-growth law , in the initial value problem

from the noisy data . One can reformulate the above problem as an ill-posed operator equation

with

The Fréchet derivative of is given by

It is proved in [15], that is positive type and spectrum of is the singleton set . We use the Lavrentiev regularization method with (see [14] for details), i.e.,

to approximate the exact solution of (18). To solve (19), we consider the analogous iterative methods (2), (3), (4) and (5) defined by

and

respectively.
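To illustrate how a Newton-type iteration is applied to a Lavrentiev-regularized equation, the following is a minimal finite-dimensional sketch. It assumes the standard regularized form $F(x) + \alpha(x - x_0) = y^\delta$ and uses a hypothetical monotone operator $F(x) = x + x^3$ (componentwise) in place of the operator of Example 3; the noise vector, $\alpha$, and all names are illustrative assumptions and do not reproduce the computations of the paper.

```python
import numpy as np

def lavrentiev_newton(F, J, y_delta, x0, alpha, tol=1e-12, max_iter=50):
    """Newton iteration for the regularized equation
    F(x) + alpha * (x - x0) = y_delta (assumed standard Lavrentiev form)."""
    x_init = np.asarray(x0, dtype=float).copy()
    x = x_init.copy()
    identity = np.eye(x.size)
    for _ in range(max_iter):
        residual = F(x) - y_delta + alpha * (x - x_init)
        # The linearized operator is J(x) + alpha * I.
        step = np.linalg.solve(J(x) + alpha * identity, residual)
        x = x - step
        if np.linalg.norm(step) < tol:
            break
    return x

# Hypothetical monotone operator and data (assumptions, not Example 3).
F = lambda x: x + x**3
J = lambda x: np.diag(1.0 + 3.0 * x**2)
x_true = np.array([0.5, -0.2, 1.0])
delta = 1e-3
y_delta = F(x_true) + delta * np.array([1.0, -1.0, 1.0]) / np.sqrt(3.0)

x0 = np.zeros(3)
alpha = 1e-2
x_alpha = lavrentiev_newton(F, J, y_delta, x0, alpha)
rel_err = np.linalg.norm(x_alpha - x_true) / np.linalg.norm(x_true)
print(x_alpha, rel_err)
```

The higher-order analogues replace the single Newton step with the corresponding multi-step update applied to the same regularized residual.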

Remark 4.

We choose the regularization parameter α a priori so that it satisfies the following condition:

for some with (see [13,14] for details).

For computation, we have taken and Table 2 provides the relative error of each iterative method, where CS is the computed solution. We choose α according to (20). The accuracy of reconstruction increases as the relative error decreases.

Table 2. Relative errors for Example 3.

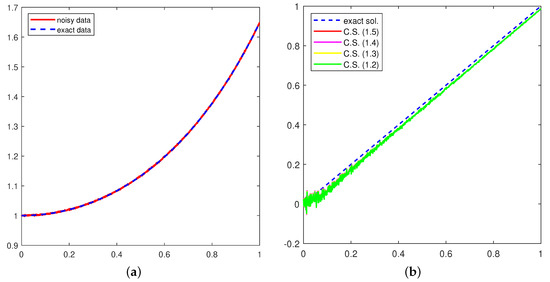

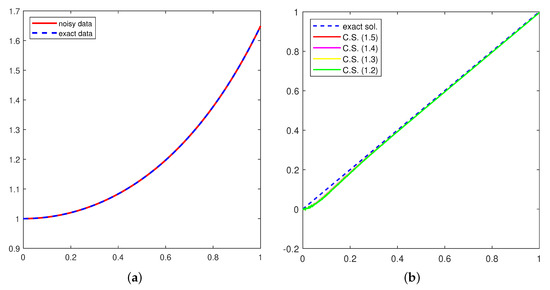

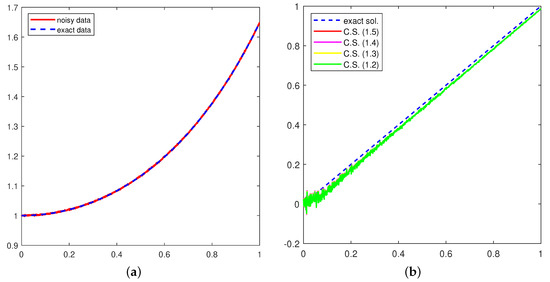

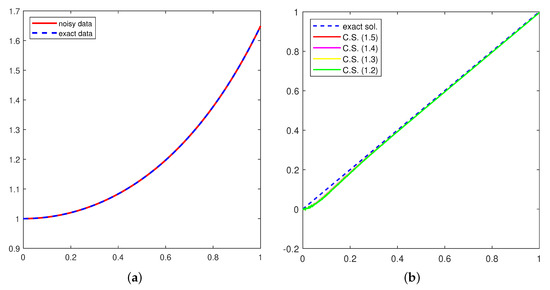

For , the exact and noisy data are shown in subfigure (a) and the computed solution is in subfigure (b), respectively, in both Figure 1 and Figure 2.

Figure 1. Data (a) and Solution (b) with δ = 0.001.

Figure 2. Data (a) and Solution (b) with δ = 0.0001.

5. Conclusions

We studied the convergence analysis of a three-step Cordero type method of order six and modified it to a new eighth-order iterative method. The convergence analysis of these methods was studied without using Taylor’s expansion. We use assumptions based only on the first-order Fréchet derivative. We computed the radius of convergence and computational efficiencies of these methods. Furthermore, we considered analogous iterative methods to solve an ill-posed problem in a Hilbert space. The developed process can also be applied to any other method using inverses of linear operators with the same benefits. This represents the topic of our future study.

Author Contributions

Conceptualization, K.R., I.K.A., M.S.K., S.G. and J.P.; methodology, K.R., I.K.A., M.S.K., S.G. and J.P.; software, K.R., I.K.A., M.S.K., S.G. and J.P.; validation, K.R., I.K.A., M.S.K., S.G. and J.P.; formal analysis, K.R., I.K.A., M.S.K., S.G. and J.P.; investigation, K.R., I.K.A., M.S.K., S.G. and J.P.; resources, K.R., I.K.A., M.S.K., S.G. and J.P.; data curation, K.R., I.K.A., M.S.K., S.G. and J.P.; writing—original draft preparation, K.R., I.K.A., M.S.K., S.G. and J.P.; writing—review and editing, K.R., I.K.A., M.S.K., S.G. and J.P.; visualization, K.R., I.K.A., M.S.K., S.G. and J.P.; supervision, K.R., I.K.A., M.S.K., S.G. and J.P.; project administration, K.R., I.K.A., M.S.K., S.G. and J.P.; funding acquisition, K.R., I.K.A., M.S.K., S.G. and J.P. All authors have read and agreed to the published version of the manuscript.

Funding

This research received no external funding.

Institutional Review Board Statement

Not applicable.

Informed Consent Statement

Not applicable.

Data Availability Statement

Not applicable.

Conflicts of Interest

The authors declare no conflict of interest.

References

- Cordero, A.; Hueso, J.L.; Martínez, E.; Torregrosa, J.R. Increasing the convergence order of an iterative method for nonlinear systems. Appl. Math. Lett. 2012, 25, 2369–2374.

- Cordero, A.; Martínez, E.; Torregrosa, J.R. Iterative methods of order four and five for systems of nonlinear equations. J. Comput. Appl. Math. 2009, 231, 541–551.

- Cordero, A.; Hueso, J.L.; Martínez, E.; Torregrosa, J.R. A modified Newton Jarratt’s composition. Numer. Algor. 2010, 55, 87–99.

- Cordero, A.; Ezquerro, J.A.; Hernández-Verón, M.A.; Torregrosa, J.R. On the local convergence of a fifth-order iterative method in Banach spaces. Appl. Math. Comput. 2015, 251, 396–403.

- Fang, L.; Sun, L.; He, G. An efficient Newton-type method with fifth-order convergence for solving nonlinear equations. Comput. Appl. Math. 2008, 227, 269–274.

- Grau-Sánchez, M.; Grau, A.; Noguera, M. On the computational efficiency index and some iterative methods for solving systems of nonlinear equations. J. Comput. Appl. Math. 2011, 236, 1259–1266.

- Sharma, J.R.; Gupta, P. An efficient fifth order method for solving systems of nonlinear equations. Comput. Math. Appl. 2014, 67, 591–601.

- Sharma, J.R.; Sharma, R.; Kalra, N. A novel family of composite Newton–Traub methods for solving systems of nonlinear equations. Appl. Math. Comput. 2015, 269, 520–535.

- Parhi, S.K.; Sharma, D. On the Local Convergence of a Sixth-Order Iterative Scheme in Banach Spaces. In New Trends in Applied Analysis and Computational Mathematics; Springer: Singapore, 2021; pp. 79–88.

- Argyros, I.K. The Theory and Applications of Iteration Methods, 2nd ed.; Engineering Series; CRC Press, Taylor and Francis Group: Boca Raton, FL, USA, 2022.

- Ostrowski, A.M. Solution of Equations in Euclidean and Banach Spaces; Elsevier: Amsterdam, The Netherlands, 1973.

- Traub, J.F. Iterative Methods for Solution of Equations; Prentice-Hall: Englewood Cliffs, NJ, USA, 1964.

- George, S.; Saeed, M.; Argyros, I.K.; Jidesh, P. An apriori parameter choice strategy and a fifth order iterative scheme for Lavrentiev regularization method. J. Appl. Math. Comput. 2022, 1–21.

- George, S.; Jidesh, P.; Krishnendu, R.; Argyros, I.K. A new parameter choice strategy for Lavrentiev regularization method for nonlinear ill-posed equations. Mathematics 2022, 10, 3365.

- Nair, M.T.; Ravishankar, P. Regularized versions of continuous Newton’s method and continuous modified Newton’s method under general source conditions. Numer. Funct. Anal. Optim. 2008, 29, 1140–1165.

Publisher’s Note: MDPI stays neutral with regard to jurisdictional claims in published maps and institutional affiliations.

© 2022 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (https://creativecommons.org/licenses/by/4.0/).